Abstract

This article introduces mobile infrared silhouette imaging and sparse representation-based pose recognition for building an elderly-fall detection system. The proposed imaging paradigm makes novel use of the pyroelectric infrared (PIR) sensor for body silhouette imaging. A mobile robot carrying a vertical column of multiple PIR detectors is used for silhouette acquisition. We then cast the fall detection problem as silhouette image-based pose recognition and solve it with a robust sparse representation-based method. The normal and fall poses are sparsely represented in the basis space spanned by the combination of a pose training template and an error template. ℓ1-norm minimization via linear programming (LP) and orthogonal matching pursuit (OMP) are used to find the sparsest solution, and the entry with the largest amplitude encodes the class of the test sample. The application of the proposed sensing paradigm to fall detection is addressed in three scenarios: ideal non-obstruction, simulated random pixel obstruction and simulated random block obstruction. Experimental studies are conducted to validate the effectiveness of the proposed method for nursing and home healthcare.

1. Introduction

In many countries, population ageing has become increasingly evident in recent years, driven by better quality of life and lower birth rates, and the trend is expected to continue for the foreseeable future. The proportion of the worldwide population over 65 years is growing [1], and increasing numbers of elderly people need not only advanced medical technologies for the treatment of disease, but also more healthcare services for independent living and a better quality of life. However, the decreasing number of nursing professionals and, in some countries, the decline in the working-age population will cause a serious shortfall in the healthcare services available to elderly people. The ageing problem has therefore motivated much research on automated and reliable healthcare systems.

Falling is common among elderly people and a major hindrance to daily living, especially independent living. According to [2], approximately one-third of those over 65 years old fall each year, and half of them are repeat fallers. Falls often lead to serious physical injuries and psychological stress. An elderly person may remain on the floor for a long time, leading to life-threatening situations, while the fear of falling can result in decreased activity, isolation and further functional decline. Falls should therefore be detected as early as possible to reduce the risk of morbidity and mortality [3].

To detect falls effectively and quickly, there has been a recent surge of interest in the automated detection of falls and other abnormal behaviours of elderly people. Existing methods can be divided into two categories according to the sensors used: wearable sensors and vision sensors. Wearable sensors, such as accelerometers [4–6], gyroscopes [7] and wireless button alarms [8], are usually mounted on the human body and are able to achieve accurate fall detection. However, these solutions can cause both physical and psychological discomfort to elderly people. Moreover, if the elderly person forgets to wear the device, the alarm system does not work. Automatic fall detection using a non-intrusive method is therefore an essential complementary sensing approach in certain circumstances.

Vision sensors can realize the healthcare objectives in a non-intrusive fashion. Several authors have used commercial cameras to capture video in home environments and extracted object features for fall detection [9, 10]. Although the existing methods achieve accurate fall detection, precise body pose extraction and robustness against changing lighting remain challenging issues in the computer vision community. Body pose extraction may be corrupted by cluttered clothing and shadows. In addition, camera-based methods intrude on the privacy of the elderly. Several alternative solutions have been proposed that analyse human motion using thermal cameras [11–13]. The human body is a natural emitter of infrared rays, and its temperature normally differs from that of its surroundings. This makes it easy to separate motion from the background regardless of lighting conditions and the colours of the body surface and surroundings, so this sensing pattern can extract meaningful information about human motion directly. However, thermal cameras are expensive and the information processing is still difficult.

To overcome the above limitations of data acquisition, we propose mobile infrared silhouette sensing with a pyroelectric infrared (PIR) sensor array for elderly pose acquisition, and robust fall detection via sparse representation. Building on well-established studies of intelligent mobile robots for home care [14, 15] and PIR sensor-based wireless networks for multiple human tracking [16, 17], we assume that the mobile service robot is able to detect where a person has been lying for a long time and to move close to the incident region. The robot can also patrol the region of interest periodically. A second assumption is that elderly people usually do not move after a fall, so a relatively static pose silhouette provides enough information for fall detection. We have designed a sensor array consisting of a single vertical column of PIR detectors for capturing human thermal radiation, and use a mobile service robot to implement silhouette imaging. The mobile robot performs rotary scanning, and the pose of the human body is recorded as a crude binary silhouette. Fall detection is then cast as image-based object recognition.

For data processing, we use a sparse representation-based method for fall recognition. In an ideal environment with no obstructions, simple aspect-ratio-based shape analysis is sufficient for detecting fall poses. In reality, however, object recognition encounters numerous corruptions, such as obstruction by furniture in real home environments, which render simple aspect-ratio-based shape recognition useless. For this reason, we propose sparse representation-based robust pose recognition, motivated by recent work on sparse representation and its application to robust face recognition and target tracking [18, 19]. The normal and fall poses are sparsely represented in the basis space spanned by the combination of a pose training template and an error template. The sparsest approximation is computed by ℓ1-minimization using linear programming (LP) and by orthogonal matching pursuit (OMP), and the candidate with the maximum amplitude determines the predicted pose class. We test this classification method in three scenarios: ideal non-obstruction, simulated random pixel obstruction and simulated random block obstruction.

Figure 1 presents the schematic diagram of the system. The fall detection system integrates a mobile infrared silhouette imaging sensor and a sparse representation-based pose recognition algorithm.

Figure 1. Schematic diagram of the proposed system

Experiments are conducted to demonstrate the effectiveness of the described sensing and robust pose recognition in elderly-fall detection. If a user's behaviour is detected as an abnormal event, the alarm is activated as soon as possible. This succinct sensing pattern and the robust recognition algorithms make it possible for elderly and disabled people to continue to live a free and independent life in their own homes while receiving reliable safety assistance.

The rest of this article is organized as follows. In Section 2, we give a brief review of PIR sensing and silhouette imaging, and then present the mobile infrared silhouette imaging. Section 3 describes the sparse representation-based robust pose recognition. Section 4 presents the experimental details and results. The summary and conclusions of the article are given in Section 5.

2. Mobile Robot Aided Infrared Silhouette Sensing

2.1. Related Work

Increasing attention has focused on PIR-based motion capture for human presence detection. The PIR detector has several advantages: it converts incident thermal radiation into an electrical signal and responds to wavelengths from 8 μm to 14 μm, which corresponds to the typical thermal radiation emitted by the human body [20]; commercially available sensors are extremely cheap; and its power consumption is low, making it suitable for wireless networks and mobile agent applications. Because of these advantages, the PIR detector has been applied to lightweight biometric detection [21, 22], human identification [23–25] and multiple human tracking [16, 17].

A recent surge of interest has focused on silhouette imaging sensors for electronic fence applications. A silhouette sensor is a crude imaging device that directly captures a pixelated silhouette of the monitored target. Sartain first introduced the concept of the silhouette imaging sensor and discussed a variety of approaches to realizing one [26]. The crude silhouettes generated from a sparse array of sensors offer sufficient information for the classification task, and the classification algorithms are tractable without complex image processing. Russomanno et al. designed a sparse array of sensors with near-infrared (IR) transmitters and receivers for border, perimeter and other intelligent electronic fence applications [27, 28]. However, that sensor is an active device and is difficult to deploy in a nursing home, which makes passive sensing a more attractive option. Jacobs et al. introduced the concept of passive PIR sensor-based silhouette imaging and gave a simulation based on thermal infrared video sequences [29]. Willianm et al. presented a pyroelectric linear array-based silhouette sensor for distinguishing humans from animals, but did not discuss its application to the analysis of human poses [30]. It should be noted that the field of view (FOV) of the above-mentioned solutions is fixed and is difficult to extend using sensor networks or mobile agents for home healthcare situations.

Our sensing device is motivated by the silhouette imaging work above. A crude silhouette of the human body preserves sufficient information for distinguishing between normal and abnormal poses. Because elderly people usually do not move after a fall, a mobile silhouette imaging device is preferable for sensing a relatively static object. We therefore mount a linear array of multi-cell PIR detectors on a mobile robot to capture the pose of the human body, and cast the fall detection problem as image-based object recognition.

2.2. Sensing Model

Figure 2 shows the sensing model of a PIR detector. The human body is a natural source of infrared radiation and exchanges heat with its surroundings. The PIR detector collects the incident thermal radiation, which changes the temperature of the pyroelectric material, and this change is converted into an electrical output. The pyroelectric sensing model can be briefly represented using a form of reference structure [31]:

Figure 2. Mobile pyroelectric infrared sensing model

In this article, commercially available D205b pyroelectric detectors [32] are employed for sensing changes in the thermal radiation in the object space, which is defined as the collection of thermal radiation fields of a human body. Fresnel lenses are used to match the FOV of each PIR detector to the motion sensing space. Because the PIR detector responds only to the thermal radiation emitted from the human body, interference from visible illumination is removed. When a sensing region moves continuously across the object space, the detector gives a large voltage output corresponding to the human body, as shown in Figure 2.

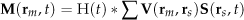

Once the voltage outputs are collected, they are transmitted wirelessly to the data processing centre, where the presence of the human body is determined via the short-time energy method. Figure 3 shows a typical response of a PIR detector when scanning across a human body and explains how the short-time energy method transforms the raw analogue signal into an “ON” or “OFF” state signal. First, the collected signal from a PIR detector is normalized by removing its direct-current component. Second, we calculate the squared absolute value of the normalized signal and divide it into overlapping frames. Third, the energy signal is obtained by accumulating the samples within each frame, and a predefined threshold is used to determine the current state.

Figure 3. Flowchart of the short-time energy method for transforming the analogue signals into a binary state signal
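As an illustration, the three steps of the short-time energy method can be sketched in Python as follows. The frame length, hop size and threshold are assumed values chosen for the example, not parameters reported in this article, and the input trace is synthetic.

```python
from statistics import mean

def short_time_energy_states(signal, frame_len=10, hop=5, threshold=1.0):
    """Convert a raw PIR voltage trace into a list of ON/OFF (True/False) states.

    frame_len, hop and threshold are illustrative assumptions.
    """
    # Step 1: remove the direct-current component.
    dc = mean(signal)
    centred = [v - dc for v in signal]
    # Step 2: square the signal and split it into overlapping frames.
    squared = [v * v for v in centred]
    frames = [squared[i:i + frame_len]
              for i in range(0, len(squared) - frame_len + 1, hop)]
    # Step 3: accumulate the energy in each frame and apply the threshold.
    energy = [sum(frame) for frame in frames]
    return [e > threshold for e in energy]

# Synthetic trace: quiet baseline, a burst while the body is scanned, quiet again.
trace = [2.5] * 20 + [2.5 + (1.0 if k % 2 else -1.0) for k in range(20)] + [2.5] * 20
states = short_time_energy_states(trace)
```

In this sketch the frames that overlap the burst are reported as “ON” and the remaining frames as “OFF”. In practice the threshold would need to be tuned to the noise floor of the detector.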

2.3. Mobile Silhouette Sensing

Figure 4 and Figure 5 show the proposed silhouette sensor, which consists of a single vertical column of multi-cell PIR detectors attached to an intelligent mobile robot. The sensor array arranges 20 PIR detectors in a vertical column to capture human pose information at different heights. The lowest detector is 6 cm above the ground, and the pitch between adjacent detectors is 6 cm, so the sensor array acquires a crude image over a region with a total height of 120 cm. The optical axis of each detector is perpendicular to the longitudinal axis of the object. For better resolution, both the horizontal and vertical FOVs of each detector are set to 10°.

Figure 4. The imaging sensor array, consisting of a single column of PIR detectors, installed on a mobile robot

Figure 5. Prototype of the mobile infrared silhouette sensor

The user tracking method used by the mobile robot is based on wireless distributed PIR sensors [16, 17]. The FOVs of the distributed sensors are cooperatively coded to support multiple human tracking and identification. The user's location and biometric information are transmitted wirelessly to the robot via the data centre. The robot then chooses a target of interest and moves close to him or her for scanning imaging.

Under the assumption that the location where a person has been lying for a long time is known, the intelligent mobile robot moves near the region of interest and performs a patrol task autonomously. With the help of the mobile robot, the PIR sensor array scans the scene by self-rotation. In our experiments, the rotary speed of the robot is approximately 9 degrees per second, and the distance between the object and the sensor is 1.5 m. For signal sampling and wireless communication, we use an ultra-low-power CC2430 micro-controller from Texas Instruments. The micro-controller samples the PIR sensor signal at a rate of 10 Hz and then transmits it wirelessly to the data centre. The wireless communication in this system follows the ZigBee (IEEE 802.15.4) protocol, which has a low data rate and low power consumption compared with other wireless protocols.

The time spent on a complete scan is approximately 20 seconds. This time is limited by the maximum rotation speed of the mobile robot and can be reduced by using a more agile and faster robot. If the user disappears from the scanning region, the robot queries the data centre to confirm the location of the user. If the user remains within the scanned region but the robot is not able to capture the body image, we infer that body sensing is not feasible from that position; scanning from another position or using other devices could compensate for this disadvantage. If the user moves away from the scanned region, the robot restarts the tracking program.
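The figures quoted above can be combined into a short worked calculation. The following sketch only restates the reported values (detector count, pitch, rotary speed, sampling rate, scan time, range and FOV) and derives a few quantities from them; the derived footprint assumes a simple cone model of the detector FOV, which is an illustrative simplification.

```python
import math

NUM_DETECTORS = 20         # detectors in the vertical column
PITCH_CM = 6               # spacing between adjacent detectors
LOWEST_CM = 6              # height of the lowest detector above the ground
ROTATION_DEG_PER_S = 9.0   # approximate rotary speed of the robot
SAMPLE_RATE_HZ = 10        # sampling rate of the micro-controller
SCAN_TIME_S = 20           # approximate duration of one complete scan
RANGE_M = 1.5              # distance between the object and the sensor
FOV_DEG = 10.0             # horizontal and vertical FOV of each detector

# Mounting height of each detector: 6 cm, 12 cm, ..., 120 cm.
heights_cm = [LOWEST_CM + k * PITCH_CM for k in range(NUM_DETECTORS)]
# Angle swept and samples collected per detector during one scan.
sweep_deg = ROTATION_DEG_PER_S * SCAN_TIME_S
samples_per_scan = SAMPLE_RATE_HZ * SCAN_TIME_S
deg_per_sample = ROTATION_DEG_PER_S / SAMPLE_RATE_HZ
# Width of one detector's FOV at the object (assumed simple cone geometry).
footprint_m = 2 * RANGE_M * math.tan(math.radians(FOV_DEG / 2))
```

Under these values one scan sweeps about 180° and yields about 200 raw samples per detector, one every 0.9°, with each detector covering roughly 0.26 m at the 1.5 m range. How the raw samples are mapped onto the normalized silhouette width is not specified in the text.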

To confirm the effectiveness of the proposed sensing method, we collected silhouettes of typical normal poses and falls in the laboratory as reference samples. According to research on sedentary living among elderly people [33, 34], they are accustomed to infrequent, low-intensity activities, and older adults often spend much of their time standing or sitting to work, eat, read or socialize. The samples therefore cover the three most common categories: standing, sitting and lying on the ground after a fall. Figure 6 presents the experimental scenarios and the acquired silhouettes. After the rotary scan, the pixelated silhouette images are recorded. All pose silhouettes are normalized to a constant dimension of 20 × 150, with the body centred in the horizontal direction. The raw silhouettes contain distortion, as shown in Figure 6(b), so we preprocess them with a 3 × 3 median filter. It should also be noted that the binary silhouettes protect the privacy of elderly people.

Figure 6. Illustration of the typical experimental scenario and the acquired silhouettes. (a) Scenarios with standing pose, sitting pose and fall pose. (b) Corresponding silhouettes generated by the mobile infrared silhouette sensor. (c) Refined silhouettes via median filter.
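The 3 × 3 median filtering of a binary silhouette can be sketched as below. For binary pixels the median of nine values reduces to a majority vote. Treating pixels outside the image as 0 is an assumption made for this example, and the small test image is synthetic.

```python
def median_filter_3x3(image):
    """Apply a 3 x 3 median filter to a binary image given as a list of rows."""
    rows, cols = len(image), len(image[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Collect the 3 x 3 neighbourhood; pixels outside the image count as 0
            # (an assumed border rule).
            window = [image[rr][cc] if 0 <= rr < rows and 0 <= cc < cols else 0
                      for rr in (r - 1, r, r + 1)
                      for cc in (c - 1, c, c + 1)]
            # For binary pixels the median of nine values is a majority vote.
            out[r][c] = 1 if sum(window) >= 5 else 0
    return out

raw = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 0, 1, 1, 0],   # a hole inside the body
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 1],   # an isolated noise pixel
]
clean = median_filter_3x3(raw)
```

The filter fills the single-pixel hole inside the body and removes the isolated noise pixel, at the cost of slightly eroding the corners of the shape.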

3. Sparse Representation-based Robust Pose Recognition

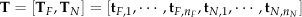

Recent developments in sparse representation-based classification show that a test sample can be linearly represented using an overcomplete dictionary whose base elements combine training templates and error-compensation templates [18, 19]. If the data processing centre holds sufficient reference samples, it can represent the test image with a sparse coefficient vector supported on the templates of the same class. Following this framework, we use sparse representation-based classification to perform pose recognition on binary silhouettes.

In the proposed fall detection system, there are two categories of poses including: normal pose and fall pose. We use the labelled training samples to build the template matrix. For all sample images, we first arrange each of them as a vector

Assuming the data centre collected enough training samples of the ith pose image

where the

Then, the linear representation of m can be modified as:

here

In many practical home scenarios, the silhouette image

where ε is the error vector, of which we assume only a small fraction of entries are nonzero. The nonzero entries of ε represent the errors or obstructions in the image

where

The problem of finding the sparsest solution of the equation

where || · ||0 is the ℓ0 norm of a vector, and

where || · ||1 is defined as

This is a convex optimization problem, and there are sophisticated methods with polynomial computational complexity for solving it. Two families of algorithms are representative for sparse recovery. The first is based on convex optimization, where the problem is solved via LP [35, 36]. The second is the family of greedy algorithms, which solve the problem by sequentially identifying the support of the recovered signal [37]. OMP is widely used in the greedy family owing to its simplicity and good performance. In this article, we first use CVX, a package for specifying and solving convex programs [38], to solve the ℓ1-norm optimization. We then find the sparse support of the recovered signal using standard OMP [37].
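For orientation, the formulation described in this section can be summarized in the standard form used in sparse representation-based classification [18]. The block below is a sketch under assumed notation, not a reproduction of the numbered equations of this article: m denotes the vectorized test silhouette, M the training template matrix, w the pose coefficients, ε the error vector, I the identity matrix acting as the error template, and c the stacked coefficient vector. The dimensions follow from the 20 × 150 silhouettes and the 90 training columns reported in Section 4.1.

```latex
% Assumed notation: m in R^{3000}, M in R^{3000 x 90}, I in R^{3000 x 3000},
% Phi = [M, I] in R^{3000 x 3090}, c = [w; epsilon] in R^{3090}.
\begin{align*}
  m &= M w + \varepsilon
     = \begin{bmatrix} M & I \end{bmatrix}
       \begin{bmatrix} w \\ \varepsilon \end{bmatrix}
     = \Phi c, \\
  \hat{c}_{0} &= \arg\min_{c} \, \|c\|_{0}
     \quad \text{subject to} \quad \Phi c = m, \\
  \hat{c}_{1} &= \arg\min_{c} \, \|c\|_{1}
     \quad \text{subject to} \quad \Phi c = m,
     \qquad \|c\|_{1} = \sum_{j} |c_{j}| .
\end{align*}
```

The ℓ0 problem is combinatorial, which is why it is relaxed to the convex ℓ1 problem solved by LP, or approximated greedily by OMP.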

For a newly obtained silhouette image, we first compute its sparsest solution following (8). Generally, nonzero entries in the

Figure 7. Illustration of the sparse representation-based pose recognition
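A minimal sketch of the OMP-based recognition step is given below, using NumPy. It is a toy illustration rather than the implementation used in the experiments: the 8-pixel templates, the function names `build_dictionary`, `omp` and `classify`, the stopping parameters, and the rule of taking the largest-amplitude entry among the template coefficients only are all assumptions made for this example. The LP-based variant is not shown.

```python
import numpy as np

def build_dictionary(templates):
    """Stack templates column-wise, append an identity error template,
    and scale every column to unit l2 norm."""
    M = np.asarray(templates, dtype=float).T        # shape (d, n)
    Phi = np.hstack([M, np.eye(M.shape[0])])        # shape (d, n + d)
    return Phi / np.linalg.norm(Phi, axis=0)

def omp(Phi, m, max_atoms=10, tol=1e-6):
    """Greedy orthogonal matching pursuit for Phi @ c ~= m."""
    residual = m.astype(float).copy()
    support, coef = [], None
    c = np.zeros(Phi.shape[1])
    for _ in range(max_atoms):
        if np.linalg.norm(residual) <= tol:
            break
        # Pick the column most correlated with the current residual.
        j = int(np.argmax(np.abs(Phi.T @ residual)))
        if j in support:
            break
        support.append(j)
        # Re-fit all selected columns by least squares, then update the residual.
        coef, *_ = np.linalg.lstsq(Phi[:, support], m, rcond=None)
        residual = m - Phi[:, support] @ coef
    if support:
        c[support] = coef
    return c

def classify(Phi, m, labels):
    """Return the label of the largest-amplitude template coefficient."""
    c = omp(Phi, np.asarray(m, dtype=float))
    w = c[:len(labels)]                              # template part of c
    return labels[int(np.argmax(np.abs(w)))], c

# Toy data: two orthogonal 8-pixel templates, test image with one extra pixel set.
templates = [[1, 1, 1, 1, 0, 0, 0, 0], [0, 0, 0, 0, 1, 1, 1, 1]]
labels = ["normal", "fall"]
Phi = build_dictionary(templates)
test_image = [1, 0, 0, 0, 1, 1, 1, 1]
label, c = classify(Phi, test_image, labels)
```

In this toy case the recovered vector has two nonzero entries: one on the fall template and one on the error column of the obstructed pixel, so the test image is labelled as a fall despite the corruption.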

4. Experimental Details and Results

4.1. Experimental Setup

The experimental acquisition of normal and fall poses involves 10 volunteers, two female and eight male, all of normal height, ranging from 160 cm to 180 cm. The data acquisition is based on a relatively frontal capture. For each category of activity, the participants are asked to perform a self-selected pose and strategy. For each kind of pose, we scan each participant 6 times with the proposed sensor array at the predefined rate. Thus, there are 60 samples each for the standing, sitting and fall poses.

The experimental data are divided into two sets: training templates and testing samples. At the initialization stage, we randomly select 30 samples from each pose to build the training templates, giving 30 columns that represent the fall pose and 60 columns that represent the normal pose. Each silhouette has 20 × 150 = 3000 pixels, so the extended template matrix Φ, which appends the error template to the 90 training columns, has size 3000 × 3090. The remaining samples are used for testing the recognition method. The average results reported below are computed over 10 rounds of cross-validation. All recognition experiments are run in Matlab on an Intel Pentium 4 2.8 GHz computer. The average time spent on OMP recovery is 0.5738 s, with a maximum of 0.6125 s, while the average time for LP is 1.8618 s, with a maximum of 2.0672 s.
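The data split and the resulting matrix size can be checked with a short sketch. The random seeds and the helper name `random_split` are hypothetical, and the identity-sized error template is inferred from the reported 3000 × 3090 size of Φ rather than stated explicitly in the text.

```python
import random

POSES = {"standing": "normal", "sitting": "normal", "fall": "fall"}
SAMPLES_PER_POSE = 60      # 10 volunteers x 6 scans
TRAIN_PER_POSE = 30        # randomly selected training templates per pose
IMAGE_SHAPE = (20, 150)    # normalized silhouette size

def random_split(seed):
    """Randomly pick training indices for each pose; the rest are test indices."""
    rng = random.Random(seed)
    split = {}
    for pose in POSES:
        train = sorted(rng.sample(range(SAMPLES_PER_POSE), TRAIN_PER_POSE))
        train_set = set(train)
        test = [i for i in range(SAMPLES_PER_POSE) if i not in train_set]
        split[pose] = (train, test)
    return split

pixels = IMAGE_SHAPE[0] * IMAGE_SHAPE[1]             # rows of Phi
train_columns = TRAIN_PER_POSE * len(POSES)          # pose template columns
phi_shape = (pixels, train_columns + pixels)         # templates + error template
fall_columns = sum(TRAIN_PER_POSE for cls in POSES.values() if cls == "fall")
normal_columns = train_columns - fall_columns
splits = [random_split(seed) for seed in range(10)]  # 10 repetitions
```

With these values, `phi_shape` evaluates to (3000, 3090), and each repetition leaves 30 test samples per pose.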

4.2. Recognition without Obstruction

We first test the proposed method for pose recognition without obstruction. Figure 8 illustrates representative results of Algorithm 1, with sparse recovery based on LP. Figure 8(b) gives the sparse coefficients spanned on the training template, while Figure 8(c) shows the error coefficients spanned on the error template. It can be seen that the blue entries of the sparse coefficients correspond to the true pose class and have larger amplitudes than the error coefficients. The largest amplitude among the estimated candidates is always assigned to the true class. The red or green circle in Figure 8(b) indicates the determined pose class. The proposed fall detection method achieves a 100% recognition rate with both LP and OMP recovery.

Figure 8. Illustration of the recognition without obstruction. (a) The test silhouette images. (b) Estimated sparse coefficients ŵ. (c) Estimated error coefficients ê.

Algorithm 1. Step 1: acquire the infrared silhouette image using the mobile PIR sensor. Step 2: extend the template matrix with the error template. Step 3: normalize each column of Φ to have unit ℓ2 norm. Step 4: solve the ℓ1-norm minimization problem:

4.3. Recognition with Random Pixel Obstruction

To account for possible errors caused by the PIR sensor and noise associated with the mobile robot or the sensing surroundings, we simulate random pixel obstruction on the silhouette images at various levels, from 10% to 50%. The obstruction is applied by a ‘bit-or’ operation between the raw images and a random pixel mask. Figure 9 illustrates representative results of Algorithm 1 with 20% obstruction, with sparse recovery based on LP. Figure 9(c) shows the images with random pixel obstruction. Figure 9(d) shows the amplitude of the coefficients on the training template, while Figure 9(e) shows the amplitude of the coefficients on the error template. It can be seen that the blue entries correspond to the true pose class, while a limited number of error coefficients are activated. In these representative examples, the estimated global candidates are sparse and have the largest amplitude at the associated class. The red or green circle in Figure 9(d) marks the determined pose class. In this test, the sparse representation-based pose recognition method detects the fall pose and the normal pose correctly even under severe random noise. Table 1 gives the average recognition rate at various levels of random pixel obstruction. The proposed fall detection method achieves similar performance with both LP and OMP recovery.
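The simulated random pixel obstruction can be sketched as follows. Interpreting the obstruction level as the fraction of image pixels set in the mask is an assumption, as are the function names, the random seed and the synthetic rectangular silhouette.

```python
import random

def random_pixel_mask(rows, cols, level, rng):
    """Binary mask with round(level * rows * cols) randomly placed 1-pixels."""
    count = round(level * rows * cols)
    chosen = set(rng.sample(range(rows * cols), count))
    return [[1 if r * cols + c in chosen else 0 for c in range(cols)]
            for r in range(rows)]

def bit_or(image, mask):
    """Pixel-wise OR of two binary images of equal size."""
    return [[p | q for p, q in zip(image_row, mask_row)]
            for image_row, mask_row in zip(image, mask)]

rng = random.Random(0)                      # fixed seed for repeatability
rows, cols = 20, 150                        # normalized silhouette size
# Synthetic silhouette: a body occupying the central columns of the image.
silhouette = [[1 if 60 <= c < 90 else 0 for c in range(cols)] for _ in range(rows)]
mask = random_pixel_mask(rows, cols, 0.20, rng)
obstructed = bit_or(silhouette, mask)
```

Because the masks are combined with OR, the obstruction can only add foreground pixels and never removes pixels from the body.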

Figure 9. Illustration of the recognition with random pixel obstruction. (a) The raw test silhouette images. (b) The masks used for simulating random pixel obstruction. (c) The silhouette images with random pixel obstruction. (d) Estimated sparse coefficients ŵ. (e) Estimated error coefficients ê.

Table 1. Recognition rate with random pixel obstruction

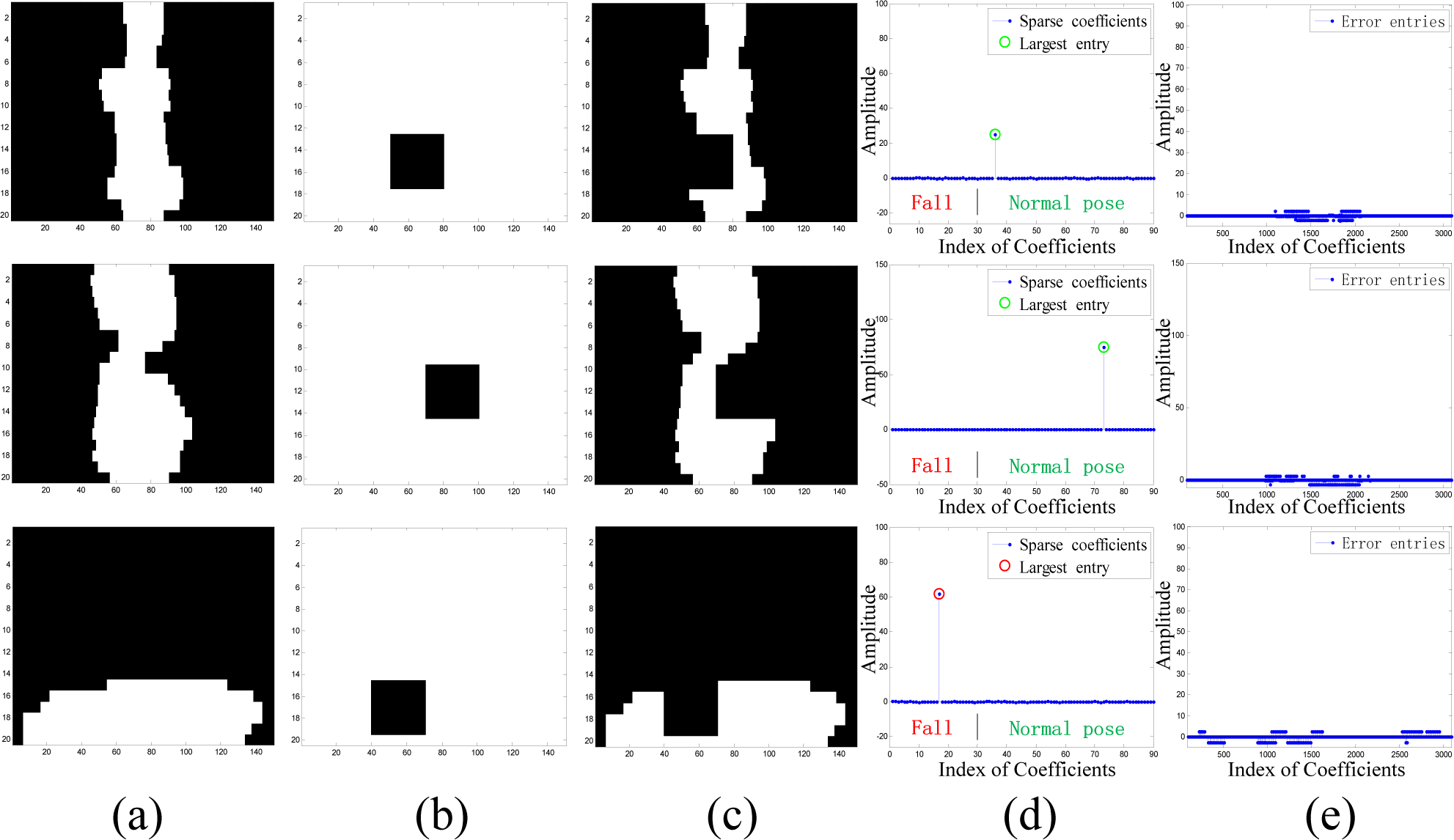

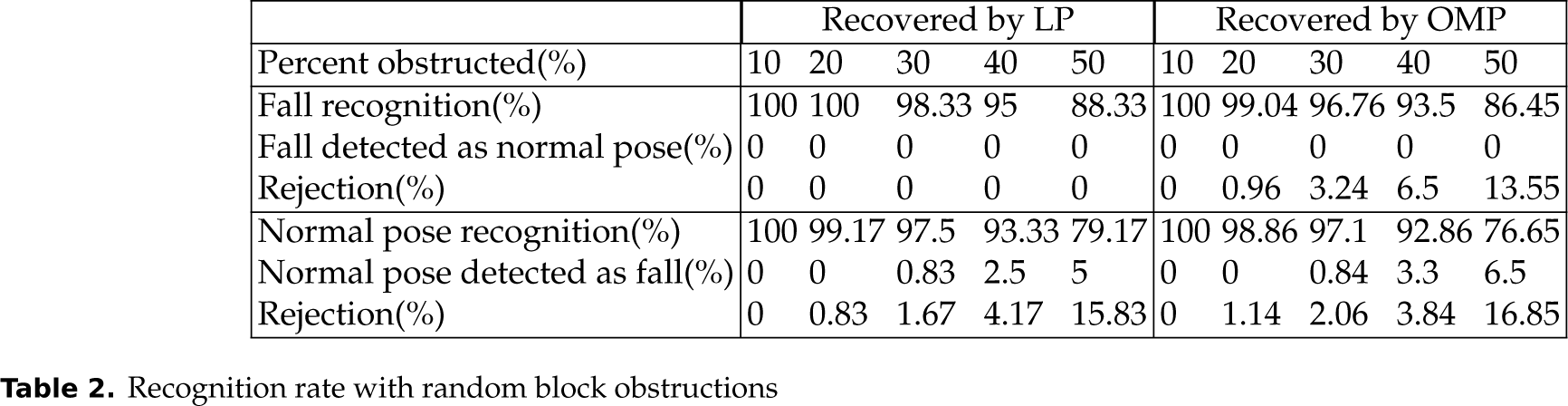

4.4. Recognition with Random Block Obstruction

To simulate a realistic scene containing furniture obstructions, we create random block obstructions on the silhouette images at various levels, from 10% to 50%. The obstruction is applied by masking the raw images with random rectangular blocks. Figure 10 illustrates representative results of Algorithm 1 with 20% obstruction, with sparse recovery based on LP. Figure 10(a) shows the raw images and Figure 10(b) shows the masks with the random block obstructions. Figure 10(c) is the simulated silhouette image obtained by the ‘bit-or’ operation between Figure 10(a) and Figure 10(b). It can be seen that the blue entries are associated with the true pose class. In these representative examples, the estimated candidates are sparse and have the largest amplitude at the true pose class. The red or green circle with the largest amplitude in Figure 10(d) indicates the determined pose class. In this test, the sparse representation-based pose recognition method detects the fall pose and the normal pose correctly even under severe block obstruction. Table 2 gives the average recognition rate at various levels of random block obstruction. The proposed fall detection method achieves similar performance with both LP and OMP recovery.
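A block obstruction of a given level can be simulated along the following lines. Using a single rectangle whose area approximates the requested fraction of the image is a simplifying assumption for this sketch, as are the function names, the random seed and the synthetic silhouette.

```python
import math
import random

def random_block_mask(rows, cols, level, rng):
    """Binary mask with one random rectangle covering about `level` of the image."""
    target = level * rows * cols
    # Choose a height tall enough that the required width still fits in the image.
    height = rng.randint(max(1, math.ceil(target / cols)), rows)
    width = max(1, round(target / height))
    top = rng.randint(0, rows - height)
    left = rng.randint(0, cols - width)
    return [[1 if top <= r < top + height and left <= c < left + width else 0
             for c in range(cols)] for r in range(rows)]

def bit_or(image, mask):
    """Pixel-wise OR of two binary images of equal size."""
    return [[p | q for p, q in zip(image_row, mask_row)]
            for image_row, mask_row in zip(image, mask)]

rng = random.Random(1)                      # fixed seed for repeatability
rows, cols = 20, 150                        # normalized silhouette size
# Synthetic silhouette: a body occupying the central columns of the image.
silhouette = [[1 if 60 <= c < 90 else 0 for c in range(cols)] for _ in range(rows)]
mask = random_block_mask(rows, cols, 0.20, rng)
obstructed = bit_or(silhouette, mask)
block_area = sum(map(sum, mask))
```

Because the width is rounded to a whole number of pixels, the block area matches the requested level only approximately, here to within 10 pixels of the 600-pixel target.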

Figure 10. Illustration of the recognition with random block obstructions. (a) The raw test silhouette images. (b) The masks used for simulating random block obstructions. (c) The silhouette images with block obstructions. (d) Estimated sparse coefficients ŵ. (e) Estimated error coefficients ê.

Table 2. Recognition rate with random block obstructions

5. Discussions and Conclusions

In this article, we integrate an effective infrared sensing method and a robust pose recognition algorithm for elderly-fall detection. For data acquisition, we use a single column of PIR detectors to implement infrared silhouette imaging, with a mobile robot agent providing the scanning motion. Fall detection is cast as binary silhouette-based pose recognition. The candidate pose is represented as a linear combination of training templates and error templates. A good pose can be approximated by the training templates, which leads to a sparse solution. Although some error coefficients are activated in the simulated practical scenarios, the combined coefficients are still sparse. ℓ1-norm minimization using LP and OMP are used to find the sparsest solution, and the entry with the largest amplitude indicates the class of the test sample. In the experimental results, both algorithms have similar recognition performance, but OMP takes less computational time than LP. Where computation time is limited, OMP is therefore the better choice.

However, our system has some limitations. First, the data acquisition is based on a relatively frontal capture, and for each category of activity the participants performed a self-selected pose and strategy. When a frontal acquisition is not possible, the posture may compromise the structure of the acquired data, which is a limitation of this fall detection method. If the robot has difficulty reaching the frontal position in real application scenarios, a similarly structured PIR array could be deployed horizontally on the ground to acquire a tangent silhouette, or a help button could be used to assist the fall detection. Second, in practical usage, heat sources at around body temperature in the service environment will interfere with the silhouette imaging process. The proposed system will therefore perform better in more controlled environments such as nursing homes.

While the proposed methods do not help to prevent falls or decrease the number of falls occurring in the home, they may provide a sense of comfort and reassurance to the elderly: if an emergency occurs, immediate assistance and care will be available to them. The proposed sensing model not only provides a low-cost, non-invasive motion sensing method that is unaffected by lighting conditions, but may also become a ubiquitous agent for healthcare applications.

6. Acknowledgements

The authors would like to thank the anonymous reviewers for their constructive comments and suggestions. They also wish to thank all the staff of the Information Processing & Human-Robot Systems lab in Sun Yat-sen University for their aid in conducting the measurement experiments. This work is partly supported by the National Natural Science Foundation of LiaoNing Province (grant no.2013020008) and the National Natural Science Foundation of China (grant no.61074167).