Abstract

This paper describes the National Research Council of Canada's submission to the 2011 i2b2 NLP challenge on the detection of emotions in suicide notes. In this task, each sentence of a suicide note is annotated with zero or more emotions, making it a multi-label sentence classification task. We employ two distinct large-margin models capable of handling multiple labels. The first uses one classifier per emotion, and is built to simplify label balance issues and to allow extremely fast development. This approach is very effective, scoring an F-measure of 55.22 and placing fourth in the competition, making it the best system that does not use web-derived statistics or re-annotated training data. Second, we present a latent sequence model, which learns to segment the sentence into a number of emotion regions. This model is intended to gracefully handle sentences that convey multiple thoughts and emotions. Preliminary work with the latent sequence model shows promise, resulting in comparable performance using fewer features.

Introduction

A suicide note is an important resource when attempting to assess a patient's risk of repeated suicide attempts.1,2 Task 2 of the 2011 i2b2 NLP Challenge examines the task of automatically partitioning a note into regions of emotion, instruction, and information, in order to facilitate downstream processing and risk assessment.

The provided corpus of emotion-annotated suicide notes is unique in many ways.3 The text is characterized by being emotionally-charged, personal, and raw. By definition, the writing is the product of a distressed mind, often containing spelling mistakes, asides, ramblings, and vague allusions. The text's unedited nature makes it difficult for basic NLP tools: optical character recognition, tokenization and sentence breaking errors abound. The provided emotion annotation also has some interesting properties. Annotation is given at the sentence level within complete documents, and each sentence can be assigned multiple labels. Where one would expect most of a suicide note to be rich with emotion, more than 73% of the training sentences are annotated with no label or with only

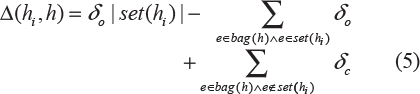

We experiment with two different large-margin learning algorithms, each using a similar, knowledge-light feature set. The first approach trains one classifier to detect each label, a strategy that has been applied successfully to other multi-label tasks.4–6 The second approach attempts to segment a sentence into multiple emotive regions using a latent sequence model. This novel method looks to maintain the strengths of a Support Vector Machine (SVM) classifier, while enabling the model to gracefully handle run-on sentences. However, because so much of the data bears no emotion at all, the issue of class balance becomes a dominating factor. This gives the advantage to the one-classifier-per-label system, which uses well-understood binary classifiers and produces our strongest result. It scores 55.22 in micro-averaged F-measure, finishing in fourth place. The latent sequence model scores 54.63 in post-competition analysis, and produces useful phrase-level emotion annotations, indicating that it warrants further exploration.

Related Work

Over the last decade, there has been considerable work in sentiment analysis, especially in determining whether a term, sentence, or passage has a positive or negative polarity.7 Work on classifying terms, sentences, and documents according to the emotions they express, such as anger, joy, sadness, fear, surprise, and disgust, is more recent and is now starting to attract significant attention.3,8–10

Emotion analysis can be applied to all kinds of text, but certain domains tend to have more overt expressions of emotions than others. Computational methods have not been applied to any significant degree to suicide notes, largely because of a paucity of digitized data. Work from the University of Cincinnati on distinguishing genuine suicide notes from either elicited notes or newsgroup articles is a notable exception.1,2 Neviarouskaya et al,9 Genereux and Evans,11 and Mihalcea and Liu12 analyzed web-logs. Alm et al,13 Francisco and Gervás,14 and Mohammad15 worked on fairy tales. Zhe and Boucouvalas,16 Holzman and Pottenger,17 and Ma et al18 annotated chat messages for emotions. Liu et al19 and Mohammad and Yang20 worked on email data. Much of this work focuses on the six emotions studied by Ekman21: joy, sadness, anger, fear, disgust, and surprise. Joy, sadness, anger, and fear are among the fifteen used in i2b2's suicide notes task.

Automatic systems for analyzing the emotional content of text follow many different approaches: a number of these systems look for specific emotion-denoting words,22 some determine the tendency of terms to co-occur with seed words whose emotions are known,23 some use hand-coded rules,9 and some use machine learning.8,13 Machine learning is also a dominant approach for general text classification.4 Tsoumakas and Katakis6 provide a good survey of machine-learning approaches for multi-label classification problems, including text classification.

One Classifier per Label

The provided training data consists of sentences annotated with zero or more labels, making it a multi-label classification problem. One popular framework for multi-label classification builds a separate binary classifier to detect the presence or absence of each label.4,5 The classifiers in our One-Classifier-Per-Label (1CPL) system are linear SVMs, each trained for one of the emotions by using as positive training material those sentences that bear the corresponding label, and as negative training material those sentences that do not. To counter the strong bias effects in this data, we adjust the weight of each class so that negative examples have less impact on learning.
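The one-classifier-per-label idea described above can be illustrated with a minimal sketch. It is not the in-house SVM used in this work: it substitutes a simple hinge-loss linear classifier trained by stochastic subgradient descent, and the names `train_binary`, `train_1cpl`, `predict`, `bag_of_words`, and the `neg_weight` value of 0.3 are all hypothetical choices made for illustration. The only point it aims to show is how each label gets its own binary problem, and how negative examples can be given a smaller cost than positive ones.

```python
import random


def dot(weights, feats):
    """Sparse dot product between a weight dict and a feature dict."""
    return sum(weights.get(name, 0.0) * value for name, value in feats.items())


def train_binary(examples, neg_weight=0.3, epochs=30, lr=0.1, reg=0.01, seed=0):
    """Weighted hinge-loss linear classifier (a stand-in for a linear SVM).

    examples: list of (feature_dict, y) pairs with y in {+1, -1}.
    neg_weight: illustrative cost multiplier (< 1) for negative examples,
                so that they have less impact on learning.
    """
    rng = random.Random(seed)
    data = list(examples)
    weights = {}
    for _ in range(epochs):
        rng.shuffle(data)
        for feats, y in data:
            violated = y * dot(weights, feats) < 1.0
            # L2 shrinkage is applied on every step.
            for name in weights:
                weights[name] *= 1.0 - lr * reg
            if violated:
                # Margin violation: move toward the example, scaled by its class cost.
                cost = 1.0 if y > 0 else neg_weight
                for name, value in feats.items():
                    weights[name] = weights.get(name, 0.0) + lr * cost * y * value
    return weights


def train_1cpl(sentences, labels, **kwargs):
    """Train one binary classifier per label.

    sentences: list of (feature_dict, gold_label_set) pairs.
    """
    models = {}
    for label in labels:
        # Sentences bearing the label are positive; all others are negative.
        examples = [(feats, 1 if label in gold else -1) for feats, gold in sentences]
        models[label] = train_binary(examples, **kwargs)
    return models


def predict(models, feats):
    """Return every label whose classifier scores the sentence above zero."""
    return {label for label, w in models.items() if dot(w, feats) > 0.0}


def bag_of_words(text):
    """Toy feature extractor: a bias feature plus lowercased unigram counts."""
    feats = {"<bias>": 1.0}
    for token in text.lower().split():
        feats[token] = feats.get(token, 0.0) + 1.0
    return feats
```

Because each classifier decides independently, `predict` may return an empty set, one label, or several labels for the same sentence, which is what makes this framework suitable for multi-label data.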

Model

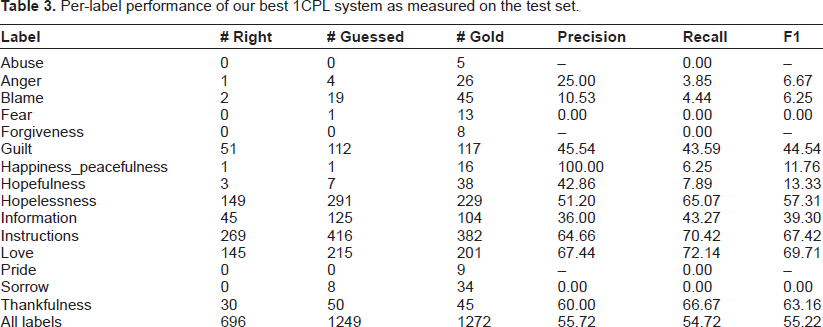

We view our training data as a set of training pairs

The 1CPL model builds a binary classifier for each of the |

where

We train each model using an in-house SVM that is similar to LIBLINEAR.24 Training is very fast, allowing us to test all 15 classifiers over 10 folds in less than a minute on a single processor. Our selection criterion for both feature design and hyper-parameter tuning is micro-averaged F-measure, as measured on the complete training set after 10-fold cross-validation. We now describe the feature templates used in our system, grouped into thematic categories:

Base

Our base feature set consists of a bias feature that is always on, along with a bag of lowercased word unigrams and bigrams. This base vector is normalized so that

Sentence

This template summarizes the sentence in broad terms, using features like its length in tokens. This includes features that check for the presence of manually-designed word classes, reporting whether the sentence contains any capitalized words, only capitalized words, any anonymized names (John, Jane), any future-tense verbs, and so on.
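A minimal sketch of what such sentence-level features might look like is given below. The exact word classes used in the system are not listed here, so every detail is an assumption: "capitalized" is taken to mean an initial upper-case letter, the anonymized names are limited to the two mentioned above, and the future-tense check is a crude lookup of a few auxiliary tokens. The function name `sentence_features` and the feature names are hypothetical.

```python
# Assumption: anonymized names are exactly the two examples given in the text.
ANON_NAMES = {"John", "Jane"}
# Assumption: a crude stand-in for future-tense detection.
FUTURE_MARKERS = {"will", "shall", "'ll", "wo"}


def sentence_features(tokens):
    """Summarize a tokenized sentence with a few broad indicator features."""
    feats = {"length": float(len(tokens))}
    # Tokens containing at least one letter (punctuation and digits are ignored).
    words = [t for t in tokens if any(c.isalpha() for c in t)]
    # Assumption: "capitalized" means the first character is upper-case.
    capitalized = [t for t in words if t[:1].isupper()]
    if capitalized:
        feats["has_capitalized"] = 1.0
    if words and len(capitalized) == len(words):
        feats["only_capitalized"] = 1.0
    if any(t in ANON_NAMES for t in tokens):
        feats["has_anonymized_name"] = 1.0
    if any(t.lower() in FUTURE_MARKERS for t in tokens):
        feats["has_future_marker"] = 1.0
    return feats
```

Features like these are dense and few in number, so they complement the sparse lexical features without adding much to training time.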

Thesaurus

We included two sources of hand-crafted word clusters. The first counts the words matching each category from the freely available

Character

This template returns the set of cased character 4-grams found in the sentence. These features are intended as a surrogate for stemming and spelling correction, but they also reintroduce case to the system, which otherwise uses only lowercased unigrams and bigrams.
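The following sketch illustrates how a set of cased character 4-grams could be extracted, and why it can act as a surrogate for spelling correction. Whether n-grams are allowed to span word boundaries is not stated above; this sketch assumes they are taken over the raw sentence string, spaces included. The function name `char_ngrams` is hypothetical.

```python
def char_ngrams(sentence, n=4):
    """Return the set of cased character n-grams in a sentence.

    Assumption: n-grams are taken over the raw string, so they may contain
    spaces and may span word boundaries.
    """
    return {sentence[i:i + n] for i in range(len(sentence) - n + 1)}


# A misspelling still shares some 4-grams with the intended word.
shared = char_ngrams("sorry") & char_ngrams("sorrry")

# Case is preserved, so these two sets differ.
cased_differs = char_ngrams("Love") != char_ngrams("love")
```

Here `shared` contains `"sorr"`, so a classifier that has learned a weight for that 4-gram can still respond to the misspelled form, while `cased_differs` shows that capitalization information is retained.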

Document

Four document features describe the document's length and its general upper/lower-case patterns. Document features are identical across each sentence in a document.

Experiments

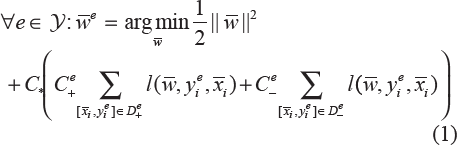

Table 1 reports the results of our 1CPL submissions to the competition in terms of micro-averaged precision, recall and F-measure. System 1 was intended to test our pipeline and output format, and was submitted before tuning was complete. System 2 uses all of the features listed above, with

Table 1. Test-set performance of our 1CPL systems.

Ablation

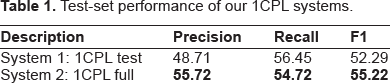

Table 2 shows the results of our ablation experiments. With each modification, we re-optimized C* in 10-fold cross-validation and then froze it for the corresponding test set run. First, we removed the class balance parameters

Table 2. Ablation experiments for our 1CPL model. Results exceeding the baseline are in bold. Cross-validation results are micro-averaged scores measured on the entire training set (4241 sentences, 600 documents, 2522 gold labels). Test results are on the official test set (1883 sentences, 300 documents, 1272 gold labels).
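For reference, micro-averaged precision, recall, and F-measure pool the label decisions of all sentences before computing the ratios, so frequent labels dominate the score. The sketch below shows one straightforward way to compute them from gold and predicted label sets; the function name `micro_prf` and the toy data are illustrative and do not come from the challenge's scoring script.

```python
def micro_prf(gold, pred):
    """Micro-averaged precision, recall and F-measure over label sets.

    gold, pred: parallel lists of sets, one set of labels per sentence.
    """
    true_pos = sum(len(g & p) for g, p in zip(gold, pred))
    n_pred = sum(len(p) for p in pred)
    n_gold = sum(len(g) for g in gold)
    precision = true_pos / n_pred if n_pred else 0.0
    recall = true_pos / n_gold if n_gold else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f_measure = 2.0 * precision * recall / (precision + recall)
    return precision, recall, f_measure


# Toy example: three sentences, the last of which bears no gold label.
gold = [{"love"}, {"guilt", "love"}, set()]
pred = [{"love"}, {"guilt"}, {"anger"}]
p, r, f = micro_prf(gold, pred)
```

In the toy example, two of the three predicted labels are correct and two of the three gold labels are found, so precision, recall, and F-measure all equal 2/3. Note that a label assigned to a sentence with no gold labels counts against precision, which is why unlabeled sentences matter so much on this data.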

Per-label performance

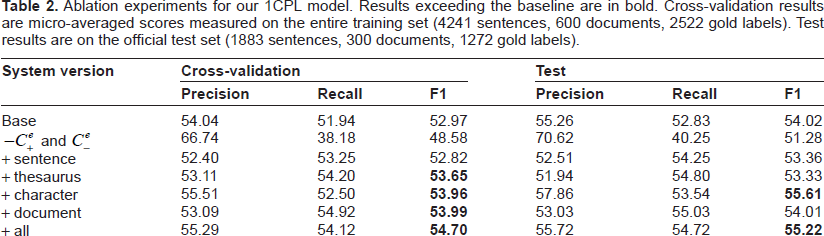

Table 3 shows our performance as decomposed over each label. With the exception of

Table 3. Per-label performance of our best 1CPL system as measured on the test set.

Label confusion

We also examined those cases where both the model and the gold standard agreed that a sentence should be labeled, but did not agree on which labels. We found only three sources of substantial confusion in the test set:

Discussion

The 1CPL model is very fast to train, and our automatically determined class weights

Unfortunately, 1CPL cannot reason about multiple label assignments simultaneously. No one classifier knows what other labels will be assigned, nor does it know how many labels will be assigned. This means that we cannot model when a sentence should be given no labels at all, nor can we model the fact that longer sentences tend to accept more labels. Our next approach attempts to address some of these weaknesses.

Latent Sequence Model

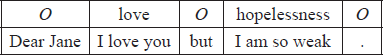

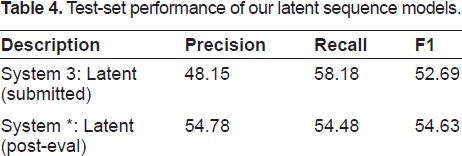

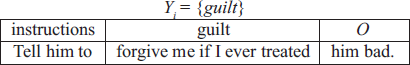

On many occasions, when a sentence expresses multiple emotions, it can be segmented into different parts, each devoted to one emotion. Take, for example, the following sentence, which was tagged with

Dear Jane I love you but I am so weak.

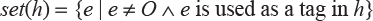

It is easy to see that this could be segmented into emotive regions. We represent these regions using a tag sequence, with tags drawn from

Inspired by latent subjective regions in movie reviews, 25 our latent sequence model assumes that each training sentence has a corresponding latent (or hidden) tag sequence that was omitted from the training data. Our latent sequence model attempts to recover these hidden sequences using only the standard training data, requiring no further annotation.
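To make the relationship between a tag sequence and a sentence-level label set concrete, the sketch below collapses a per-token tag sequence into contiguous regions and into the set of labels it implies. It assumes that tags are emotion labels plus a single neutral tag, written here as `"O"`; that symbol, the function names, and the example sentence with its tags are all invented for illustration and are not taken from the corpus.

```python
from itertools import groupby

# Assumption: one neutral tag marks tokens that carry no emotion.
NEUTRAL = "O"


def regions(tokens, tags):
    """Group a tagged sentence into contiguous (tag, tokens) regions."""
    if len(tokens) != len(tags):
        raise ValueError("tokens and tags must have the same length")
    out = []
    start = 0
    for tag, run in groupby(tags):
        length = len(list(run))
        out.append((tag, tokens[start:start + length]))
        start += length
    return out


def label_set(tags):
    """Sentence-level label set implied by a tag sequence."""
    return {tag for tag in tags if tag != NEUTRAL}


# Invented example: two emotive regions separated by a neutral token.
tokens = "thank you for everything , I am sorry".split()
tags = ["thankfulness"] * 4 + [NEUTRAL] + ["guilt"] * 3
segments = regions(tokens, tags)
labels = label_set(tags)
```

Under these assumptions, a hidden tag sequence is compatible with a training sentence exactly when `label_set(tags)` equals the sentence's annotated label set, which is the constraint a latent model can exploit when only sentence-level labels are available.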

By learning a tagger instead of a classifier, we hope to achieve three goals. First, we hope to learn more precisely from sentences that have multiple labels: a

Model

We view our training data as a set of training pairs

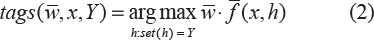

For example, for

Here

We now find ourselves in a circular situation. If we had a tagging model

The latent SVM alternates between building training sequences and learning sequence models. Given an initial

For each training point, transform label sets

Learn a new

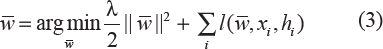

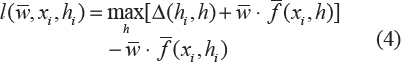

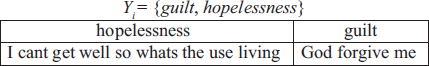

The structured SVM optimizes its weights according to the following objective:

where λ is a regularizing hyper-parameter and

and Δ (

where

The latent sequence model is substantially slower than 1CPL, but still quite manageable. We can train and test one inner loop in about 12 minutes, meaning all 10 folds can be tested in 2 hours. The system rarely requires more than

We adapt the baseline and thesaurus features from 1CPL to our latent sequence model. Recall that a tag sequence

Experiments

Test-set performance of our latent sequence models.

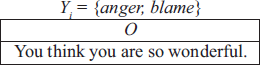

The current version is also prone to over-segmentation, and does not always split lines at reasonable positions:

This indicates that the system could benefit from more features that help it detect good split points. There are also cases where our one-emotion-per-region assumption breaks down, with two emotions existing simultaneously. Here, two emotions label one clause, and the system catches neither:

As with the 1CPL system, the majority of our errors correspond to problems with empty sentences: either leaving emotion-bearing sentences empty, or erroneously assigning an emotion to an empty sentence.

Conclusion

We have analyzed two machine learning approaches for multi-label text classification in the context of emotion annotation for suicide notes. Our one-classifier-per-label system provides a clean solution for class imbalance, allowing us to focus on feature design and hyper-parameter tuning. It scores 55.22 F1, placing fourth in the competition, without the use of web-derived statistics or re-annotated training data. Beyond basic word unigrams and bigrams, its most important features are character 4-grams.

We have also presented preliminary work on a promising alternative, which handles multiple labels by segmenting the text into latent emotive regions. This gracefully handles run-on sentences, and produces easily-interpretable system output. This approach needs more work to match its more mature competitors, but still scores favorably with respect to the other systems, receiving an F1 of 54.63 in post-competition analysis.

Disclosures

Author(s) have provided signed confirmations to the publisher of their compliance with all applicable legal and ethical obligations in respect to declaration of conflicts of interest, funding, authorship and contributorship, and compliance with ethical requirements in respect to treatment of human and animal test subjects. If this article contains identifiable human subject(s) author(s) were required to supply signed patient consent prior to publication. Author(s) have confirmed that the published article is unique and not under consideration nor published by any other publication and that they have consent to reproduce any copyrighted material. The peer reviewers declared no conflicts of interest.