Abstract

In cricket, all-rounders play an important role. A good all-rounder should be able to contribute to the team by both bat and ball as needed. However, these players still have their dominant role by which we categorize them as batting all-rounders or bowling all-rounders. Current practice is to do so by mostly subjective methods. In this study, the authors have explored different machine learning techniques to classify all-rounders into bowling all-rounders or batting all-rounders based on their observed performance statistics. In particular, logistic regression, linear discriminant function, quadratic discriminant function, naïve Bayes, support vector machine, and random forest classification methods were explored. Evaluation of the performance of the classification methods was done using the metrics accuracy and area under the ROC curve. While all the six methods performed well, logistic regression, linear discriminant function, quadratic discriminant function, and support vector machine showed outstanding performance suggesting that these methods can be used to develop an automated classification rule to classify all-rounders in cricket. Given the rising popularity of cricket, and the increasing revenue generated by the sport, the use of such a prediction tool could be of tremendous benefit to decision-makers in cricket.

Introduction

An all-rounder in cricket is a player who is capable of performing well at both batting and bowling. These all-rounders still have their dominant role by which one categorizes them as batting all-rounders or bowling all-rounders. To the knowledge of authors of this paper, currently, there is no precise method for a player to be categorized as a bowling all-rounder or a batting all-rounder. Therefore, the current practice of accomplishing this tends to be very much subjective. In this paper, authors attempt to find a precise method to categorize all-rounders using machine learning techniques. A review of the literature on the problem including a brief introduction to cricket is presented in the next section.

What is cricket?

Cricket is a field game played between two teams. Each team consists of eleven players that include several batsmen, several bowlers, and a wicket keeper. Cricket matches are played on a grass field, in the center of which is a flat strip of ground 20 m long and 3 m wide called the pitch. At both ends of the pitch, 20 m apart, wickets are placed. A wicket, usually made of wood, is used as a target of the bowler. The bowler bowls the ball from one end of the pitch, and the batsman who is on the other end (striker) tries to hit the ball with the bat while protecting his wicket. Once a batsman hits the ball sufficiently far, he runs to the (non-striker) wicket while the other batsman at the non-striker wicket simultaneously runs to the striker wicket, accumulating a single run. Manage and Scariano (2013) provides more details about scoring and other aspects of cricket.

There are three main types of cricket games, namely test cricket, One Day International (ODI), and Twenty20, which is rapidly becoming popular among cricket fans. In a cricket game, before play commences, the two team captains toss a coin to decide which team shall bat or bowl first. The captain who wins the toss makes his decision on the basis of tactical considerations, based on ground conditions and the strengths and weaknesses of the two teams.

One day international (ODI) cricket

An ODI cricket match is played on a single day. Each team gets only one innings, and that innings is restricted to 50 overs (six deliveries per over). In an innings, each bowler is restricted to bowling a maximum number of overs equal to one fifth (10 overs in ODI) of the total number of overs in the innings. If ten batsmen are out before the end of the 50

It is the responsibility of the selectors to strategically choose batsmen, bowlers, and a wicket keeper based on both teams– strengths and weaknesses. Furthermore, as we mentioned, each team must have at least five players who are capable of bowling. Cricket captains usually change the bowlers around to introduce variation and to prevent the batsmen building longer innings and partnerships. For this, even though a team must consist of a minimum of five bowlers, team captains usually take advantage of having more than five players to bowl during an innings. Consequently, team selectors place particular emphasis on having several players who can excel in both batting and bowling.

All-rounders

An all-rounder is a player who is capable of contributing to the team by both bat and ball as needed. Having several capable all-rounders in a team is a great asset to the captain. While it is not uncommon to have all-rounders as top-order batsmen, they usually play in the middle order. Their task is to carry on the momentum built by the top-order batsmen or to take control of building the innings if the top-order batsmen collapse early. Even though all-rounders are good at both batting and bowling, they still have their dominant role by which we categorize them as batting all-rounders or bowling all-rounders. In some situations, this classification can be a challenging task. To best of our knowledge, there is no standard method to do this classification. The goal of this paper is to determine an appropriate method for classifying all-rounders into bowling all-rounders or batting all-rounders, based on their performance parameters.

Applications of machine learning in cricket

Machine learning is becoming popular in statistical data analysis, especially as a classification technique. It has the potential to predict both game outcomes and player performance in cricket. Given the rising popularity of cricket, and the increasing revenue generated by the sport, the use of machine learning as a prediction tool could be of tremendous benefit to decision-makers in cricket.

Several studies have used machine learning techniques and other related statistical procedures for prediction and classification in sports. Saikia and Bhattacharjee (2011) used stepwise multinomial regression and naïve Bayes classification models to classify all-rounders in the Indian Premier League (IPL) tournament. In that article, the authors suggested four different classifications of cricket players as performer, underperformer, batting all-rounder, and bowling all-rounder. Akhtar and Scarf (2012) suggested a method to forecast the outcome probabilities of test matches using a sequence of multinomial logistic regression models. Davis et al. (2015) provided a methodology to investigate both career performances and current form of the players in Twenty20 cricket. Asif and McHale (2016) developed a dynamic logistic regression (DLR) model for forecasting the winner of ODI cricket matches at any point of the game. Pathak and Wadhwa (2016) used modern classification techniques such as naïve Bayes, support vector machines, and random forest to conduct a comparative study to predict the outcome of ODI cricket. Agarwal et al. (2017) have considered factors for the selection of 11 players from a pool of 16 players, based on relative team strengths between the competing teams. Jayalath (2018) discussed a machine learning approach to analyze ODI cricket games. Jayanth et al. (2018) proposed a supervised learning method using the SVM model with linear, polynomial, and RBF kernels to predict the outcome of cricket matches. They also introduced a player ranking system using the performance statistics. Khan et al. (2019) used logistic and log-linear regression models to explore the association of influential factors with match results of ODI cricket games. Wickramasinghe (2020) discussed a naïve Bayes approach to predict the winner of an ODI cricket game.

There have been numerous studies that apply machine learning techniques and other related methods in other sports data as well. Ofoghi et al. (2013) discussed the utilization of an unsupervised machine learning that assist cycling experts in the crucial decision-making processes for athlete selection, training, and strategic planning in the track cycling. Leung and Joseph (2014) presented a sports data mining approach to predict outcomes in sports such as college football. Rein and Memmert (2016) discussed how big data and modern machine learning technologies aid in developing a theoretical model for tactical decision making in team sports. Baboota and Kaur (2019) created a feature set for determining the most important factors for predicting the results of a football match using feature engineering and exploratory data analysis. They also created a highly accurate predictive system using machine learning. Thabtah et al. (2019) proposed a new intelligent machine learning framework for predicting game results in the National Basketball Association (NBA) by aiming to discover the influential features that affect the outcomes of NBA games. Yi and Wang (2019) used the advantages of machine learning techniques in data analysis and feature mining in the training of dragon boat sports. They proposed a machine learning-based safety mode control model for dragon boat sports physical fitness training. Cust et al. (2019) reviewed the literature on machine and deep learning for sport-specific movement recognition using inertial measurement units and computer vision data inputs. By analyzing some recent research on sports prediction, Bunker and Thabtah (2019) have also shown that machine learning techniques such as artificial neural network can be used as a prediction and classification technique in sports data.

Data set

We have included 149 players (all-rounders) in our study, most of whom are currently playing in international games. We have also included some players who have recently retired from international cricket to improve the applicability of our conclusions. Out of these, 83 were bowlers (bowling all-rounders) and 66 were batsmen (batting all-rounders). This was based on the categorization from the respective teams (countries). There were some players for whom that categorization was not listed. For those cases, we have searched through expert match commentaries and decided the category based on those expert comments. We have separated the data set into two sets, using random assignment as usual. Consequently, 112 players (75%) were used in model building; that was called the training data set. The remaining 37 players (25%) were set aside as the testing data set to validate the models. In the training data set, there were 62 bowlers and 50 batsmen. The testing data set consisted of 21 bowlers and 16 batsmen.

Classification of all-rounders is done based on their performance statistics. The authors have carefully selected the following statistics to accomplish this task.

Runs scored, batting average, and batting strike rate are batting statistics, for which a higher value indicates better performance. The number of wickets taken, bowling average, economy rate, and bowling strike rate are bowling statistics. Better performance is indicated by higher values for wickets taken, and lower values for the other three bowling statistics. As shown in Table 2.1, for the data set we considered, mean batting averages respectively for batting all-rounders and bowling all-rounders were 33.74 and 15.42. This indicates that a batting all-rounder scores 18.32 runs more than a bowling all-rounder on average. The batting strike rate for batting all-rounders was 87.63, while the same for bowling all-rounders was 76.32. This indicates that a batting all-rounder scores 11.31 runs more per hundred balls on average. The mean bowling averages for batting all-rounders and bowling all-rounders were 48.78 and 32.45 respectively. This shows that a batting all-rounder conceded 16.33 more runs per wicket on average. Based on mean bowling strike rates, we see that a typical batting all-rounder uses 14.68 more balls per wicket than a bowling all-rounder. Economy rates indicate that a batting all-rounder concedes 0.33 more runs per over on average. These statistics can be used as reference guidelines as we try to derive an automated classification mechanism for classifying all-rounders.

Descriptive Statistics of Performance Parameters

Descriptive Statistics of Performance Parameters

Furthermore, due to the nature of the predictor variables in our study, they usually have some inherited dependency among them. To overcome issues like multicollinearity that arise due to this dependency, we have converted these predictor variables to principal components and those principal components were used as the new predictor variables with model building. The first five principal components explain 97.6% of the total variability, and they can be expressed as linear combinations of the original predictors as below;

Next section presents results along with a brief introduction to the six different classification techniques that we used.

In this study, we have applied six different techniques that are being commonly used by practitioners for classification, to classify all-rounders into bowling all-rounders or batting all-rounders based on their performance parameters. Those techniques are: Logistic Regression (LR) Linear Discriminant Analysis (LDA) Quadratic Discriminant Analysis (QDA) Naíve Based Classifier (NB) Support Vector Machine (SVM) Random Forest (RF)

To quantify the performance of the different classification methods, we used two evaluation metrics; the first was accuracy, in which the classification strength is evaluated at a specific threshold. This is, in fact, the rate of correct classification based on that threshold. The second metric was the Area Under Curve (AUC) of the Receiver Operating Characteristic (ROC) curve, which is a more comprehensive measure. It is a tradeoff between the true positive rate and false positive rate of a particular classification method. AUC is a more aggregate measure that gives an overall summary of the performance based on multiple thresholds. Therefore, it is known that the AUC outranks accuracy measures when quantifying the performance of classification techniques. Nevertheless, we present the results of both accuracy and AUC for each of the six classification methods. Both of these measures were calculated for the training sample as well as for the testing sample. Performance based on testing sample is essential to evaluate the robustness of the techniques.

Logistic regression

Logistic Regression is a machine learning technique that is commonly used for classification. It is highly used in binary classification problems. A binary logistic regression model was fitted by taking the player’s grouping (bat or ball) as the response variable. The predicted probability of the

For the training sample, out of the 62 bowlers, the model misclassified 2 players (Mohammad Saifuddin and Imad Wasim). Out of the 50 batsmen, 4 were misclassified (Hardik Pandya, Dwayne Smith, Moises Henriques, and Mominul Haque). This gave a correct classification rate of 94.64% with an area under the ROC curve 0.9829. To assess the robustness, the model was used to predict the class of the players in the testing sample. Out of the 21 bowlers, none were misclassified. Furthermore, out of the 16 batsmen, 3 were misclassified (Dasun Shanaka, Stuart Binny, and Corey Anderson). The confusion matrix for logistic regression along with other confusion matrices for the rest of the techniques are provided in the Appendix. The model gave a correct classification rate of 91.89%, with an area under the curve 0.9912 for the testing sample. Fig. 4 shows the ROC curve for the testing sample.

Linear discriminant analysis

Linear Discriminant Analysis (LDA) is another simple and effective method for classification. LDA is commonly used as a dimensionality reduction technique as well. As a classification technique, LDA creates the axes for best class separability. The LDA algorithm divides the data into output categories using linear boundaries based on the given predictor variables. As usual, here we used the training sample to build the LDA classification function. The predictive performance was evaluated based on both training and testing samples.

In the training sample, out of the 62 bowlers, 4 were misclassified (Mohammad Saifuddin, James Faulkner, Andile Phehlukwayo, and Imad Wasim). Out of the 50 batsmen, 3 were misclassified (Dwayne Smith, Moises Henriques, and Mominul Haque). The model gave a correct classification rate of 93.75%, with an area under the curve 0.9729. In the testing sample, out of the 21 bowlers, only Andre Russell was misclassified. Furthermore, out of the 16 batsmen, none were misclassified. The correct classification rate for the testing sample was 97.3%, with an area under the curve 0.9792. Fig. 5 shows the ROC curve for testing sample.

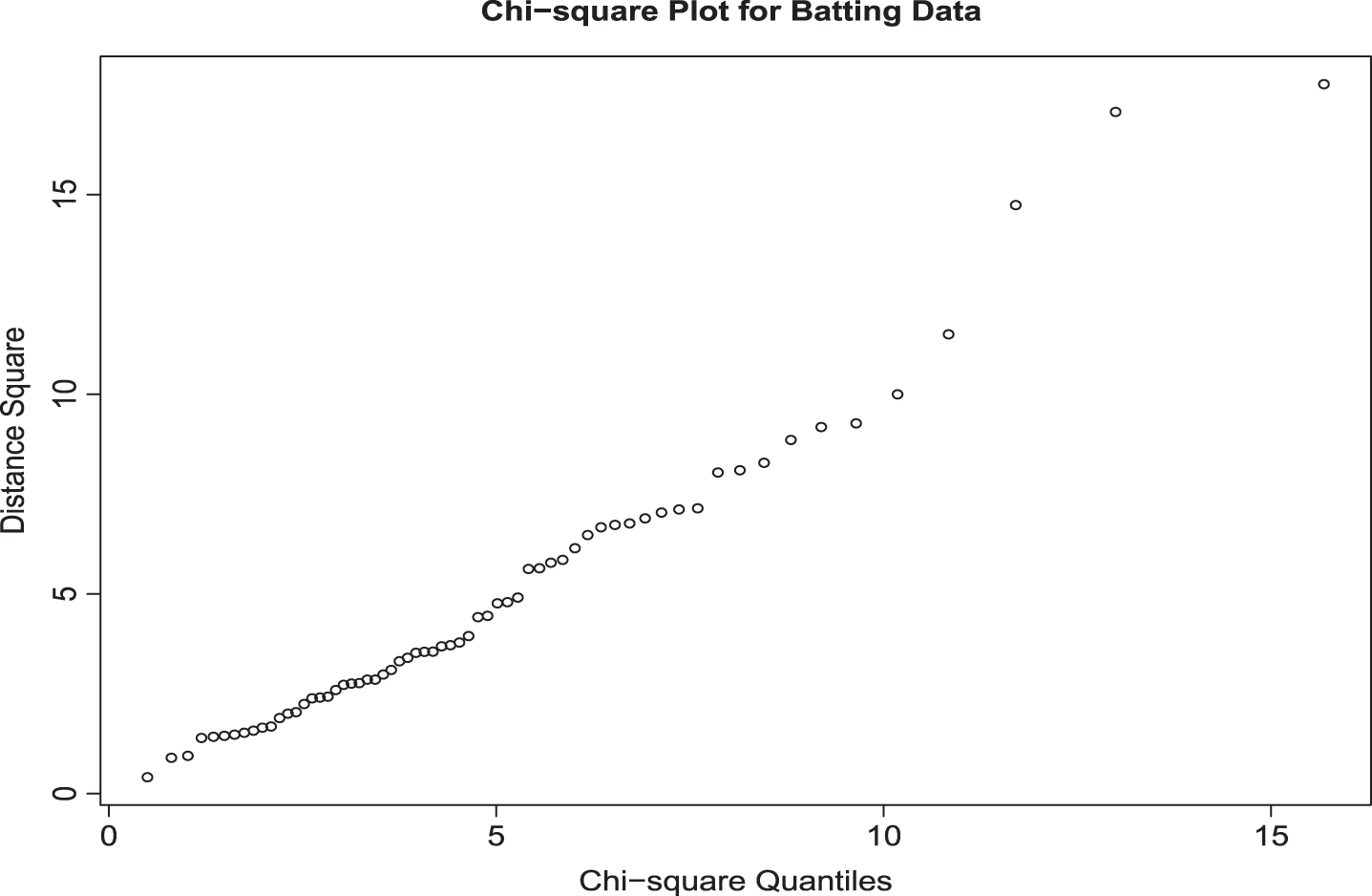

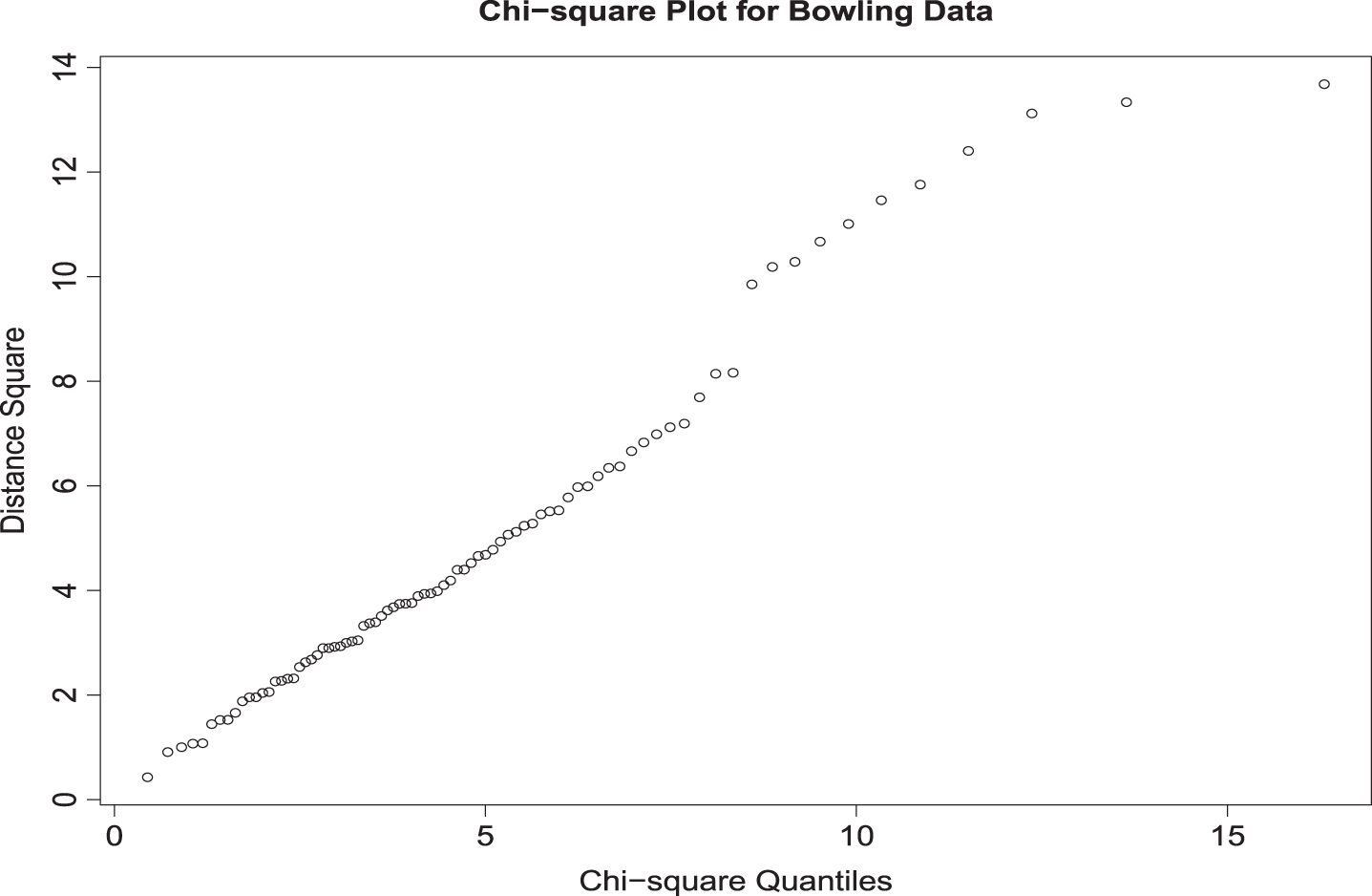

The assumption of normality is required for both LDA and QDA methods. Chi-square plots for batting and bowling data in Fig. 1 and 2 do not indicate any serious violations of this assumption. Homogeneity of the covariance matrices is an assumption for the LDA method. Box–s M test indicated that (Approximate chi-square value = 241.06 with degrees of freedom = 15) the two covariance matrices are not the same. However, since the Box–s M test is highly sensitive to normality, it not uncommon to see such outcomes.

Chi square Plot for Batting Data.

Chi square Plot for Bowling Data.

Quadratic Discriminant Analysis (QDA) is an extension of Linear Discriminant Analysis. QDA is more flexible than LDA and it is applied when the within-class covariance matrices are not equal. The flexibility is achieved due to the relaxation of the assumptions in the covariance structure of the classes. However, it requires a substantial amount of computation due to the large number of parameter estimations. The discriminant function for the QDA is given by;

The classification is done by categorizing the player in the category that gives the highest value of

In the training sample, the QDA model misclassified 4 out of the 62 bowlers (Seekkuge Prasanna, Mohammad Saifuddin, Andile Phehlukwayo, and Mohammad Nabi). Out of the 50 batsmen, 8 were misclassified (Hardik Pandya, Moeen Ali, Dwayne Smith, Moises Henriques, Nasir Hossain, Colin Grandhomme, Mosaddek Hossain, and Mominul Haque). Consequently, the model correctly classified 89.29% of the players, giving an area under the curve 0.9645. In the testing sample, the model correctly classified all 21 bowlers and only one of 16 batsmen was misclassified (Dhananjaya de Silva). The model possessed high robustness, giving a correct classification rate of 97.30%, with an area under the curve 0.9970 for the testing sample. Fig. 6 shows the ROC curve for testing sample.

As shown in the previous section, the assumption of normality can be verified by the chi-square plots(Fig. 1 and 2). Box–s M test results (Approximate chi-square value = 241.06 with degrees of freedom = 15) show that the covariance matrices are not equal which is the second assumption that should be satisfied for the QDA method.

Naïve Bayes classifier

Naïve Bayes is a probabilistic machine learning technique based on Bayes Theorem. It uses the probabilistic approach to classify a data set. Naïve Bayes assumes that the predictor variables of the classes are independent. Although this is a strong assumption, it is true in our data set, as our predictor variables are independent, being the principal components. In our data set, each feature variable

and

Given the prior

When we applied Naïve Bayes Classifier to the training sample, it misclassified 4 of the 62 bowlers (Seekkuge Prasanna, Mohammad Saifuddin, James Faulkner, and Imad Wasim) and 6 of the 50 batsmen (Elton Chigumbura, Dwayne Smith, Moises Henriques, Nasir Hossain, Colin Grandhomme, and Mominul Haque). The model had a correct classification rate of 91.07%, with an area under the ROC curve 0.9716. In the testing sample, the model incorrectly classified two of 21 bowlers (Andre Russell and Al-Amin Hossain) and 3 of 16 batsmen (Dhananjaya de Silva, Kevin O’Brien, and Stuart Binny). The model predicts the players 86.49% times correctly, giving an area under the ROC curve 0.9554. Fig. 7 shows the ROC curve for the testing sample.

Support vector machine

Support Vector Machine (SVM) is a machine learning technique that can be used for classification and regression. As in the other methods, here our focus was to use SVM as a classification technique to categorize all-rounders as batting all-rounders or bowling all-rounders. The goal in SVM is to find the optimal boundary to classify a data set. SVM maximizes the margin around the hyperplane that separates the classes. The support vectors are the subset of training samples that help to specify the decision function. Depending on the nature of the data set, an analyst has to select the appropriate kernel function for SVM. The task of a kernel function is to transform the data set into a form that facilitates a linear classification. Furthermore, these kernel functions are generalizations of the dot products. The simplest one is the linear kernel, which does not need any transformation. Several other non-linear kernel functions can be applied to transform the data, such as radial bases kernel (RBF), polynomial kernel and sigmoid kernel. An Introduction to Statistical Learning with Applications in R by James et al. (2013) provides an excellent introduction to SVM and other manchine learning teachniques.

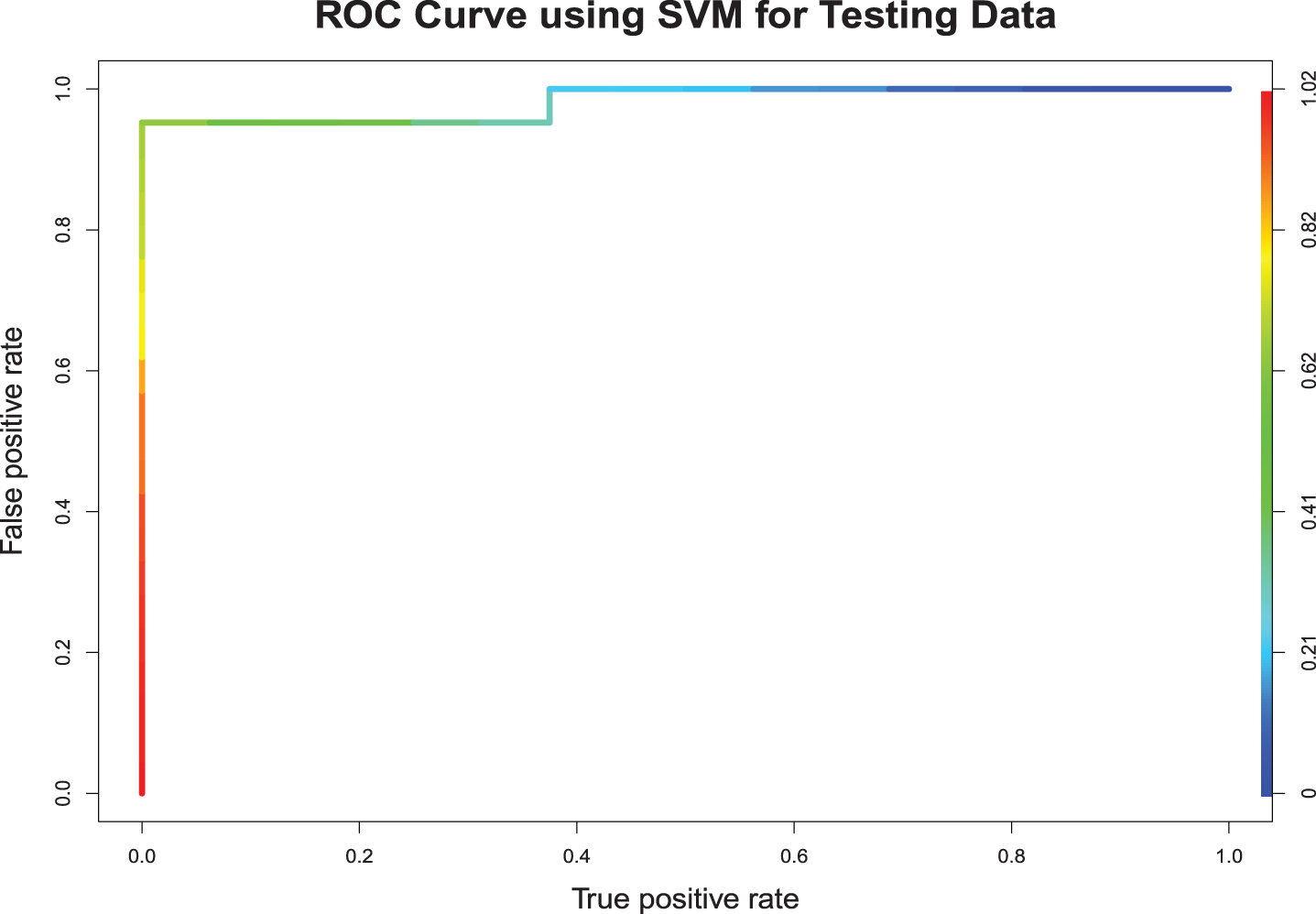

To find the appropriate kernel in our study, we have investigated four different kernels, namely linear kernel, radial base kernel (RBF), polynomial kernel, and sigmoid kernel. Based on the classification accuracy of both training and testing samples, we have decided to use the RBF kernel in our analysis. As shown in Fig. 3, the appropriate value of the cost (tuning parameter) is 2. In the training sample, out of the 62 bowlers, 3 were misclassified (Seekkuge Prasanna, Mohammad Saifuddin, and Imad Wasim). Out of the 50 batsmen in the training sample, 3 were misclassified (Moeen Ali, Dwayne Smith, and Moises Henriques). The model gave a correct classification rate of 94.64%, with an area under the curve 0.9458. In the testing sample, out of 21 bowlers, only Andre Russell was misclassified. Furthermore, out of the 16 batsmen, none were misclassified. The model predicted correctly 97.30% of the times, with an area under the ROC curve 0.9706. Fig. 8 shows the ROC curve for testing sample.

Performance of SVM.

Random Forest (RF) is another machine learning technique that is applied to both classification and regression. As in the other approaches applied above, our goal here was to use it for classification. Random forest is a meta estimator consisting of a large number of individual decision trees on different subsamples of the training data, and it aggregates the outcomes of these decision trees to decide the class of the subjects. It applies the bootstrap aggregating method called bagging with model building. Bagging is a technique in which the algorithm collects a large number of uncorrelated trees to boost the predictive performance of the model. In random forest, the model is decided based on an error rate called out-of-bag (OOB) error, which is calculated using the left-out samples.

Tuning was done to control the training process and to gain better accuracy in model prediction. In random forest, tuning was focused on two parameters; the number of variables randomly selected to be sampled at each split, and the number of trees to grow to derive the average prediction. In our case, the number of variables randomly selected to be sampled at each split was 2 and the number of trees to grow for taking the average prediction was 300.

Application of random forest for classifying all-rounders resulted the following outcomes. In the training sample, out of the 62 bowlers and 50 batsman, none were misclassified. The correct classification rate was 100%, with an area under the curve 1. In the random forest, since the model used the data points many times during the resampling process, it is not uncommon to have a higher accuracy of prediction for the training sample. In the testing sample, out of the 21 bowlers only 1 was misclassified (Andre Russell). However, out of the 16 batsmen, 5 were misclassified (Corey Anderson, Dhananjaya de Silva, Grant Elliott, Kevin O’Brien, and Stuart Binny). The model gave a correct classification rate of 83.78%, with an area under the curve 0.9479 for the testing sample. Fig. 9 shows the ROC curve for testing sample.

Discussion and conclusion

This paper focuses on utilizing the machine learning techniques to classify all-rounders in cricket. One can develop an automated classification rule based on the findings of this study, which can be used by cricket administrators with player selection. The remainder of this section summarizes the findings in detail.

Misclassified players based on different methods for the training and testing data sets are shown in Tables 4.1 and 4.2. Assessment of the performance of the methods were done using measures; accuracy and AUC. Fig. 4-9 give the ROC curves of the six classification techniques for testing data. Table 4.3 shows the accuracy of the methods separately for the training and testing samples. Table 4.4 gives the AUC values for the different classification methods. Evaluating the performance of different methods for testing sample is essential to assess the robustness of the models. Here, we start our discussion with the training sample and later conclude with the results for the testing sample.

Misclassified Players in Training Data Set

* Original classification.

Misclassified Players in Testing Data Set

* Original classification.

Logistics Regression.

Linear Discriminant Function.

Quadratic Discriminant Function.

Naíve Bayes.

Support Vector Machine.

Random Forest.

Accuracy-Correct Classification

Area Under the ROC Curve

For the training sample, random forest was the most accurate classification method, resulting in no misclassifications. However, as we discuss later, this method had serious overfitting issues. This is common for the random forest method due to the repeated resampling in the model building process. As we see from Table 4.4, AUC for all six methods is above 0.9458 (lowest, which was for SVM), which is an indication that these classifiers do an outstanding job in classifying all-rounders in the training sample. There were 3 players who were misclassified by all the methods except the random forest method. As we evaluate the classification methods, we scrutinized these players to see if there were any errors in the original classification. Those players were Mohammad Saifuddin, Dwayne Smith, and Moises Henriques.

Based on Tables 4.2 and 4.3, we see that for the testing sample, support vector machine, linear discriminant function, and quadratic discriminant function show significantly better performance than the other three methods. Each of these three methods misclassified only a single player giving a correct classification rate above 0.97. Logistic regression had an accuracy rate of 0.9189. Random forest and Naive Bayes method performed poorly relative to the other methods for the validation sample. As can be seen from the AUC values in Table 4.4, all six methods performed well for the testing sample which assures the robustness of the classification. Logistic regression, linear discriminant function, quadratic discriminant function, and support vector machine were all highly accurate, with AUC values 0.9912, 0.9792, and 0.9970, and 0.9706 respectively. Even the random forest and naive Bayes method that showed lower accuracy had considerably high AUC values (0.9479 and 0.9554 respectively). As a further investigation, we scrutinize three players who were missclassified by at least three methods we considered. These players were Andre Russell, Stuart Binny, and Dhananjaya Silva.

This study has shown the capability of machine learning techniques for classifying all-rounders in cricket. Based on the findings of this study, it is clear that logistic regression, linear discriminant function, quadratic discriminant function, and support vector machine can be used to develop an automated classification rule that can be used by cricket administrators and team managers with player selection. A classification rule that incorporates all the above four methods would be highly effective. Furthermore, the sample size (number of matches) could play a significant role in classification accuracy. It will be an interesting future study to investigate the effect of the sample size on the classification accuracy of the methods that we discussed in this article.

Footnotes

Appendix

Confusion Matrices for Logistic Regression Confusion Matrices for LDA Confusion Matrices for QDA Confusion Matrices for Naíve Bayes Confusion Matrices for SVM Confusion Matrices for Random Forest

(A) Training Data

Predicted

bowl

bat

Actual

bowl

60

02

bat

04

46

(B) Testing Data

Predicted

bowl

bat

Actual

bowl

21

00

bat

03

13

(A) Training Data

Predicted

bowl

bat

Actual

bowl

58

04

bat

03

47

(B) Testing Data

Predicted

bowl

bat

Actual

bowl

58

04

bat

03

47

(A) Training Data

Predicted

bowl

bat

Actual

bowl

58

04

bat

08

42

(B) Testing Data

Predicted

bowl

bat

Actual

bowl

21

00

bat

01

15

(A) Training Data

Predicted

bowl

bat

Actual

bowl

58

04

bat

06

44

(B) Testing Data

Predicted

bowl

bat

Actual

bowl

19

02

bat

03

13

(A) Training Data

Predicted

bowl

bat

Actual

bowl

59

03

bat

03

47

(B) Testing Data

Predicted

bowl

bat

Actual

bowl

20

01

bat

01

15

(A) Training Data

Predicted

bowl

bat

Actual

bowl

62

00

bat

00

50

(B) Testing Data

Predicted

bowl

bat

Actual

bowl

20

01

bat

05

11