Abstract

Pulmonary nodules are ubiquitously found on computed tomography (CT) imaging, either incidentally or via lung cancer screening, and require careful diagnostic evaluation and management both to diagnose malignancy when present and to avoid unnecessary biopsy of benign lesions. To engage in this complex decision-making, clinicians must first risk stratify pulmonary nodules to determine the best course of action. Recent developments in imaging technology, computer processing power, and artificial intelligence algorithms have yielded radiomics-based computer-aided diagnosis tools that use CT imaging data, including features invisible to the naked eye, to predict pulmonary nodule malignancy risk; these tools are designed to supplement routine clinical risk assessment. They vary widely in their algorithm construction, internal and external validation populations, intended-use populations, and commercial availability. While several clinical validation studies have been published, robust clinical utility and clinical effectiveness data are not yet available. However, there is reason for optimism, as ongoing and future studies aim to address this knowledge gap in the hope of improving the diagnostic process for patients with pulmonary nodules.

Introduction

The past four decades have seen a dramatic increase in thoracic computed tomography (CT) imaging, resulting in approximately 1.6 million adults in the U.S. being diagnosed with incidentally detected pulmonary nodules (PNs) annually [1,2]. Moreover, based on the 2013 U.S. Preventive Services Task Force (USPSTF) recommendations, an estimated 8.0 million adults in the U.S. are eligible for lung cancer screening with low-dose CT [3], and this population is anticipated to expand to 15.1 million with implementation of the 2021 USPSTF recommendations [4]. When PNs are detected, either incidentally or via screening, lung cancer is the primary concern, as it is the deadliest malignancy in the U.S. and worldwide [5]. A definitive diagnosis of lung cancer requires an invasive lung biopsy, which carries procedural costs and potentially significant risks, including respiratory failure, pneumothorax, myocardial infarction, and even death [6,7,8,9,10]. Therefore, malignancy risk stratification is the fundamental first step in guiding PN management decisions for clinicians, who seek to diagnose cancer in a timely manner while avoiding unnecessary procedures for those with benign PNs [11]. For suspicious PNs >8 mm in maximal diameter, clinical guidelines for both incidentally detected PNs (American College of Chest Physicians [12]; Fleischner Society [13]) and screen-detected PNs (American College of Radiology Lung Imaging Reporting and Data System [Lung-RADS] [14]) recommend surgical resection for high-risk PNs (>65% risk of malignancy) and conservative management with non-invasive serial CT surveillance for very low-risk PNs (<5% risk of malignancy). However, clinicians face a diagnostic dilemma with intermediate-risk PNs (5%–65% malignancy risk), as they must decide whether to pursue a lung biopsy or to surveil with serial imaging. This crucial decision has important implications for patients.
Inappropriately managing a malignant PN with imaging surveillance delays the cancer diagnosis and may even deny the patient the opportunity for curative treatment. Conversely, a patient with a benign PN who undergoes lung biopsy is unnecessarily exposed to the risks and costs of this invasive procedure.
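The guideline thresholds above can be expressed as a simple decision rule. The following is a minimal Python sketch, not a clinical tool: the function name `management_category` and its labels are hypothetical, treating exactly 5% and 65% as intermediate is an assumption, and actual guideline algorithms also weigh nodule size, morphology, and patient factors.

```python
# Hypothetical helper: maps an estimated malignancy probability to the broad
# management categories described above. Thresholds follow the text
# (<5% very low risk, 5%-65% intermediate, >65% high); counting exactly
# 5% or 65% as intermediate is an assumption.
VERY_LOW_MAX = 0.05
HIGH_MIN = 0.65


def management_category(p_malignancy: float) -> str:
    """Return a broad management category for a PN >8 mm given risk in [0, 1]."""
    if not 0.0 <= p_malignancy <= 1.0:
        raise ValueError("malignancy probability must lie in [0, 1]")
    if p_malignancy < VERY_LOW_MAX:
        return "very low risk: serial CT surveillance"
    if p_malignancy > HIGH_MIN:
        return "high risk: surgical resection"
    return "intermediate risk: biopsy vs. serial imaging decision"


for risk in (0.02, 0.30, 0.80):
    print(f"{risk:.0%} -> {management_category(risk)}")
```

The sketch also makes the central problem visible: across the wide intermediate band, the rule returns a decision still to be made rather than a recommendation.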

There is currently a significant misalignment between malignancy risk stratification processes and clinical management decisions [9,15,16,17,18]. As many as 45% of individuals undergoing lung biopsy for evaluation of a PN are ultimately found to have a benign diagnosis [15,16,17,18,19,20], meaning that a considerable proportion of patients are unnecessarily exposed to the potential complications and harms of lung biopsy procedures. While conventional regression-based risk prediction models incorporating a variety of clinical and PN characteristics have existed since the late 1990s (e.g., the Mayo Clinic and Brock models) [21,22,23,24], they do not reliably outperform routine clinician assessment of malignancy risk [15,25,26]. Moreover, only 18% of thoracic surgeons and 31% of pulmonologists regularly use any clinical risk prediction model [27], and clinicians do not consistently document a quantitative estimate of cancer risk [28]. Thus, a core focus of the thoracic oncology scientific and clinical community is to improve PN malignancy risk stratification to better guide subsequent management decisions [29,30]. A wide array of biomarkers has been developed recently [31], including blood-based [32,33,34,35,36], airway-based [19,37,38], breath-based [39,40,41], and imaging-based tests and devices [42,43]. This article focuses specifically on recent efforts to use radiomics and artificial intelligence (AI) technology for PN risk stratification and on the practical hurdles to clinical implementation.

Radiomics and artificial intelligence

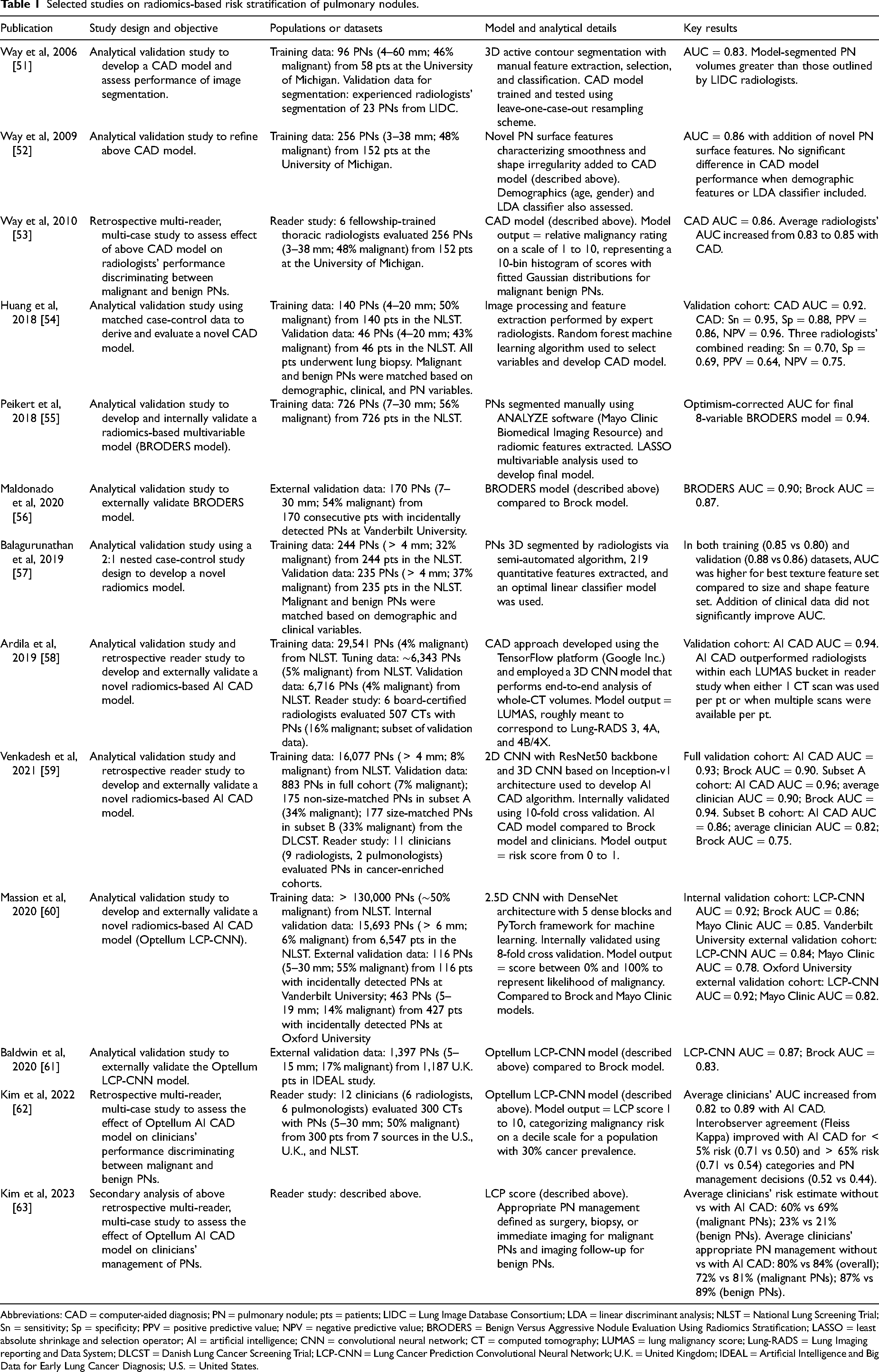

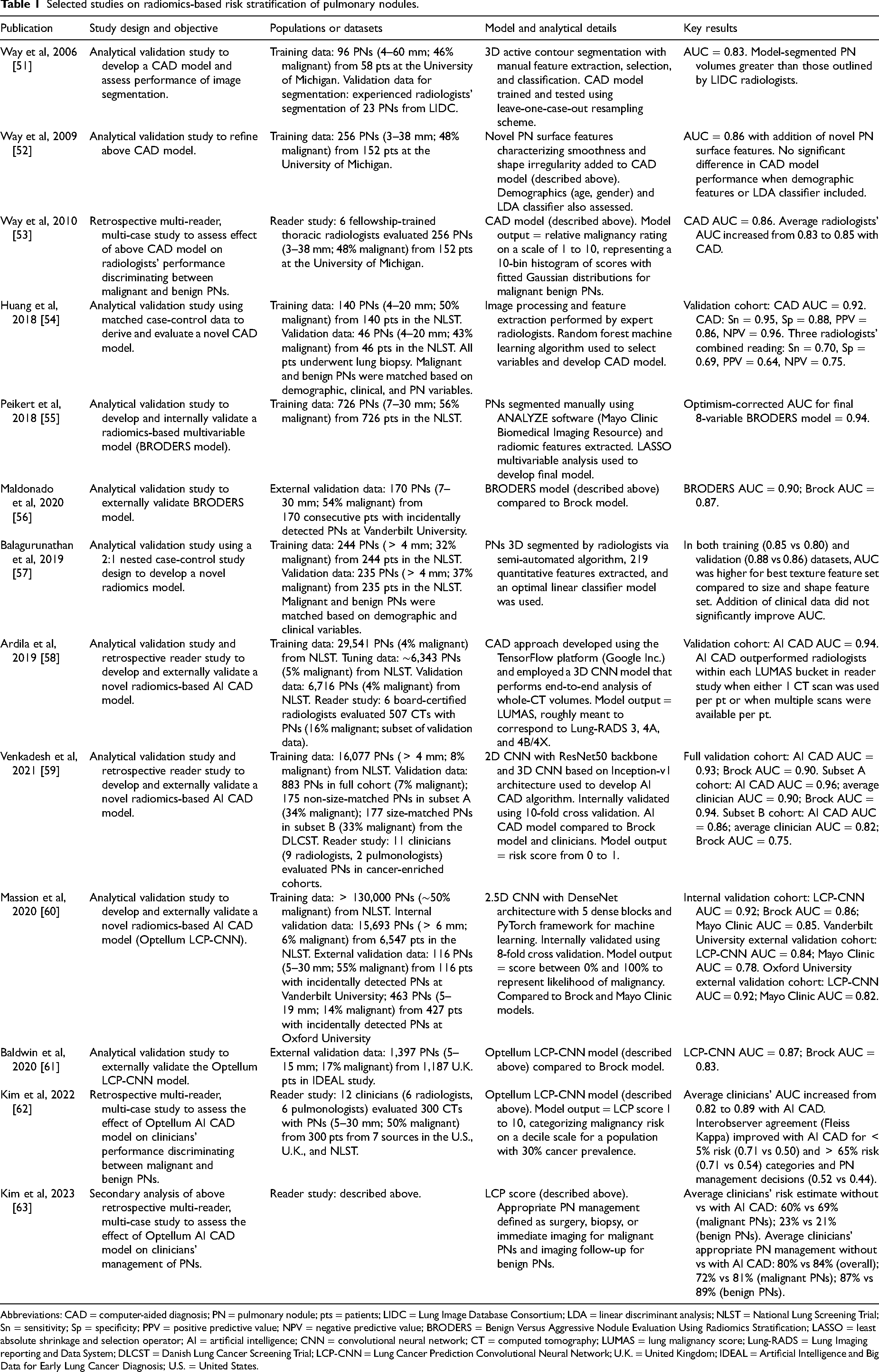

Table 1. Selected studies on radiomics-based risk stratification of pulmonary nodules.

Abbreviations: CAD = computer-aided diagnosis; PN = pulmonary nodule; pts = patients; LIDC = Lung Image Database Consortium; LDA = linear discriminant analysis; NLST = National Lung Screening Trial; Sn = sensitivity; Sp = specificity; PPV = positive predictive value; NPV = negative predictive value; BRODERS = Benign Versus Aggressive Nodule Evaluation Using Radiomics Stratification; LASSO = least absolute shrinkage and selection operator; AI = artificial intelligence; CNN = convolutional neural network; CT = computed tomography; LUMAS = lung malignancy score; Lung-RADS = Lung Imaging Reporting and Data System; DLCST = Danish Lung Cancer Screening Trial; LCP-CNN = Lung Cancer Prediction Convolutional Neural Network; U.K. = United Kingdom; IDEAL = Artificial Intelligence and Big Data for Early Lung Cancer Diagnosis; U.S. = United States.

Radiomics-based computer-aided diagnosis (CAD) tools show promise for noninvasive PN risk stratification using CT imaging data alone. CAD describes the automation of image review to assist clinicians in making diagnoses [44], and over the past two decades CAD has been paired with radiomics, which applies advanced mathematical analysis to imaging data to aid interpretation [45,46]. More recently, advances in AI have allowed deep learning with neural networks to enhance the development of radiomics-based CAD tools [47,48]. The potential benefit of such tools lies in their ability to analyze additional data invisible to the human eye (including shape, spatial complexity, texture, and wavelet transformations) and to provide information to clinicians beyond PN size, spiculation, and density [49]. Additionally, in contrast to traditional clinical risk prediction models, which require clinicians to enter discrete variables into a model to calculate a probability of malignancy, radiomics-based CAD tools automate this process, which theoretically could lower the threshold for clinical uptake. Numerous studies have described the development and validation of radiomics-based biomarkers for PN risk stratification [50,51,52,53,54,55,56,57,58,59,60,61,62,63]. An exhaustive systematic review of all radiomics-based CAD tools is outside the scope of this focused narrative review, which covers selected notable examples to date (Table 1).

Initial efforts to incorporate radiomics-based quantitative imaging data into models distinguishing malignant from benign PNs used conventional machine learning approaches, which rely on explicit, expert-defined feature parameters and classic multivariable model development techniques. In 2006, Way and colleagues first described a CAD system that was trained on clinical imaging data, evaluated using data from the Lung Image Database Consortium, and differentiated malignant from benign PNs using morphological and texture characteristics obtained via a three-dimensional active contour method, achieving an area under the receiver operating characteristic curve (AUC) of 0.83 [51]. This system was updated a few years later to include additional nodule characteristics, including surface smoothness and shape irregularity, achieving an AUC of 0.86 [52]. Next, this group performed a multi-reader, multi-case study using retrospective PN CT data from the University of Michigan to evaluate the effect of this CAD tool on radiologists' performance in discriminating between malignant and benign PNs; on average, radiologists' AUC increased from 0.83 to 0.85 (P < 0.01) [53]. In 2018, Huang and colleagues published the results of their CAD algorithm, which analyzed adjacent lung tissue in addition to PN texture features and was developed with random forest machine learning using National Lung Screening Trial (NLST) data [54]. In a matched case-control study, they reported a CAD AUC of 0.92, sensitivity of 0.95, specificity of 0.88, positive predictive value (PPV) of 0.86, and negative predictive value (NPV) of 0.96, outperforming three radiologists' collective evaluations (sensitivity: 0.70, specificity: 0.69, PPV: 0.64, NPV: 0.75).
Also in 2018, Peikert and colleagues used NLST data to develop a distinct radiomics-based model using manual software segmentation, features of both the PN and the adjacent lung tissue, and the least absolute shrinkage and selection operator (LASSO) method for multivariable model development, reporting an AUC of 0.94 on internal validation [55]. Subsequent external validation of this model using data from the Vanderbilt University Lung Nodule Registry yielded an AUC of 0.90 [56]. In 2019, Balagurunathan and colleagues published the results of their radiomics-based models, also trained on NLST data, reporting an AUC as high as 0.85 and noting the superior contribution of texture metrics compared with traditional size metrics [57]. The authors also found that incorporating clinical factors into their radiomics-based models did not improve discrimination.
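The sensitivity, specificity, PPV, and NPV reported for these CAD systems all derive from the same 2x2 table of model calls versus final diagnoses. The minimal Python sketch below uses hypothetical counts (not data from any cited study) to show the arithmetic and one caveat relevant to matched case-control designs: PPV and NPV depend on the cancer prevalence in the sample, so they can shift substantially when the same model is applied to a population with a different prevalence. The names `ConfusionCounts`, `diagnostic_metrics`, and `ppv_at_prevalence` are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    tp: int  # malignant PNs classified as malignant
    fp: int  # benign PNs classified as malignant
    tn: int  # benign PNs classified as benign
    fn: int  # malignant PNs classified as benign


def diagnostic_metrics(c: ConfusionCounts) -> dict:
    """Sensitivity, specificity, PPV, and NPV from a 2x2 confusion matrix."""
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


def ppv_at_prevalence(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV implied by Bayes' theorem when the same test is applied at another prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical counts from a 1:1 case-control sample (50% cancer prevalence).
counts = ConfusionCounts(tp=90, fp=20, tn=80, fn=10)
for name, value in diagnostic_metrics(counts).items():
    print(f"{name}: {value:.2f}")

# Same sensitivity and specificity, applied where only 10% of PNs are malignant.
print(f"ppv at 10% prevalence: {ppv_at_prevalence(0.90, 0.80, 0.10):.2f}")
```

Sensitivity and specificity are computed within the malignant and benign groups separately, whereas PPV and NPV mix the two groups, which is why they move with prevalence.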

An alternative method of harnessing and analyzing radiomics-based quantitative imaging data from CT scans to develop a predictive model uses AI [48,49]. Advancements in AI have ushered in deep learning algorithms that do not rely on explicit feature parameter inputs but are instead trained directly on the data, theoretically enhancing their problem-solving abilities. Convolutional neural networks (CNNs) are currently the most commonly used deep learning architecture in medical imaging. Broadly, these deep learning algorithms evaluate imaging data, extract and aggregate features, and integrate this information into higher-level representations to ultimately predict PN malignancy risk. Radiomics-based tools that use AI technology fundamentally differ from those that do not, as these algorithms "learn" independently, can potentially identify previously unknown imaging features, and can be iteratively updated with new training data. A small but growing number of radiomics-based AI tools have been developed to date. In 2019, Ardila and colleagues described the development of a CNN model designed by Google that was trained and validated on NLST imaging data. Notably, this model used full-volume imaging data (i.e., the entire axial series of images) to classify malignancy risk. They reported an AUC of 0.94, outperforming six radiologists [58]. The authors proposed a four-tier lung malignancy scoring (LUMAS) system, loosely intended to correspond to the estimated malignancy probabilities associated with American College of Radiology Lung-RADS categories, but emphasized that this scoring system had yet to be optimized for clinical practice.
Separately, in 2021, Venkadesh and colleagues published the results of their CNN-based algorithm, which was trained on NLST data and externally validated using data from the Danish Lung Cancer Screening Trial. Their deep learning algorithm outperformed the Brock (PanCan) clinical risk prediction model (AUC: 0.93 vs 0.90; P < 0.05) and performed similarly to thoracic radiologists (AUC: 0.96 vs 0.90; P = 0.11) [59]. The authors made their algorithm freely available to the public for a time and concluded that it could serve as an adjunct for radiologists evaluating screening CT scans in the future.
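Most of the comparisons above are expressed as AUCs. The AUC has a convenient interpretation: it equals the probability that a randomly chosen malignant PN receives a higher risk score than a randomly chosen benign PN, with ties counted as one half (the Mann-Whitney formulation). The Python sketch below computes it directly from hypothetical scores; the function name is illustrative, and the pairwise loop is quadratic in the number of cases, so it is meant only for illustration. Formal comparisons of two models scored on the same cases, such as those reported above, require paired methods (DeLong's test is a common choice), which this sketch does not implement.

```python
from itertools import product


def auc_mann_whitney(scores_malignant: list[float], scores_benign: list[float]) -> float:
    """AUC as the probability that a malignant PN outscores a benign PN (ties = 0.5)."""
    if not scores_malignant or not scores_benign:
        raise ValueError("need at least one malignant and one benign score")
    wins = 0.0
    for m, b in product(scores_malignant, scores_benign):
        if m > b:
            wins += 1.0
        elif m == b:
            wins += 0.5
    return wins / (len(scores_malignant) * len(scores_benign))


# Hypothetical model risk scores, for illustration only.
malignant = [0.91, 0.75, 0.62, 0.40]
benign = [0.10, 0.35, 0.40, 0.55, 0.05]
print(auc_mann_whitney(malignant, benign))  # 0.925
```

Because only the ordering of scores matters, any strictly increasing rescaling of a model's outputs leaves its AUC unchanged, which is one reason AUC alone says nothing about whether the predicted probabilities are accurate.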

To date, the only radiomics-based AI algorithm to have received both U.S. Food and Drug Administration 510(k) clearance (2021) and European Union CE marking (2022) is the Lung Cancer Prediction Convolutional Neural Network (LCP-CNN) developed by Optellum. This AI CAD tool was trained and internally validated on NLST data of screen-detected PNs (AUC: 0.92) and externally validated using imaging data of incidentally detected PNs from Vanderbilt University Medical Center (AUC: 0.84), Oxford University Hospitals National Health Service (NHS) Foundation Trust (AUC: 0.92), Leeds Teaching Hospitals NHS Trust (AUC: 0.88), and Nottingham University Hospitals NHS Trust (AUC: 0.89) [60,61]. Additionally, the LCP-CNN showed superior discrimination compared with both the Mayo Clinic and Brock (PanCan) clinical models. A commercially available version of the LCP-CNN generates a radiomics biomarker, the Lung Cancer Prediction (LCP) score, which represents an estimate of malignancy risk on a decile scale. In 2022, a retrospective multi-reader, multi-case study evaluated the effect of the LCP-CNN on clinicians' malignancy risk assessments [62]. Twelve clinicians (six pulmonologists and six radiologists) each evaluated 300 chest CT cases of PNs, providing an estimate of PN malignancy risk (0%–100%) and a management recommendation for each case before and after using the AI tool. With the tool, clinicians' average AUC improved from 0.82 to 0.89 (P < 0.001), and sensitivity and specificity at both the 5% and 65% malignancy risk thresholds also increased. Interobserver agreement for both clinically relevant malignancy risk categories (<5%, 5%–30%, 31%–65%, >65%) and management recommendations (no action, CT surveillance, diagnostic procedure) also increased with use of the AI tool.
Moreover, the average proportion of appropriately managed PN cases (defined as immediate imaging or biopsy for malignant PNs and no action or imaging surveillance for benign PNs) increased from 80% to 84% with use of the LCP-CNN in this retrospective study [63].
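Once each case's final diagnosis and recommendation are known, the outcome used in this reader study reduces to a simple proportion. The Python sketch below encodes the definition given above using the study's three recommendation options; the labels, the function name, and the mapping of "immediate imaging" onto the CT surveillance option are assumptions for illustration, and the reader data are invented.

```python
# Hypothetical encoding of the "appropriate management" definition above, using
# the three recommendation options from the reader study. Treating "immediate
# imaging" as the CT surveillance option is an assumption.
RECOMMENDATIONS = {"no_action", "ct_surveillance", "diagnostic_procedure"}
APPROPRIATE = {
    "malignant": {"ct_surveillance", "diagnostic_procedure"},
    "benign": {"no_action", "ct_surveillance"},
}


def proportion_appropriate(cases: list[tuple[str, str]]) -> float:
    """Fraction of (final diagnosis, recommendation) pairs that count as appropriate."""
    if not cases:
        raise ValueError("no cases supplied")
    hits = 0
    for diagnosis, recommendation in cases:
        if recommendation not in RECOMMENDATIONS:
            raise ValueError(f"unknown recommendation: {recommendation}")
        if recommendation in APPROPRIATE[diagnosis]:
            hits += 1
    return hits / len(cases)


# One hypothetical reader, five cases, before and after seeing an AI risk score.
before = [("malignant", "no_action"), ("malignant", "diagnostic_procedure"),
          ("benign", "diagnostic_procedure"), ("benign", "ct_surveillance"),
          ("benign", "no_action")]
after = [("malignant", "ct_surveillance"), ("malignant", "diagnostic_procedure"),
         ("benign", "diagnostic_procedure"), ("benign", "ct_surveillance"),
         ("benign", "no_action")]
print(proportion_appropriate(before), proportion_appropriate(after))  # 0.6 0.8
```

The study reported an average across readers; applying this function per reader and averaging the results would mirror that, although the exact aggregation used in the study is not specified here.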

Despite the wide variety of novel radiomics- and AI-based CAD tools that have been developed and the well-recognized need for improved PN risk stratification, this technology has not yet been widely adopted, even where it is commercially available. The reasons are likely multifaceted. First, while all of the aforementioned studies reported model performance metrics (i.e., AUC, sensitivity, and specificity), prospective clinical utility studies using real-world data have not yet been performed. Critically, high discrimination (i.e., a high AUC) does not guarantee that a model will perform well in clinical settings whose patient populations differ from those in which the model was originally trained and validated [64]. Specifically, differences in demographic characteristics and cancer prevalence could limit the generalizability of model performance to distinct populations. In fact, the more relevant measure of a model's applicability to specific patient care scenarios is calibration, that is, how closely predicted risks agree with observed malignancy rates [65]. Currently, there is no standardized approach for systematically evaluating AI in healthcare or for determining how best to evaluate the clinical utility of new technologies. However, several approaches to rigorously evaluating novel AI technologies have been proposed. For example, Park and colleagues have proposed an approach akin to the classic framework for new drug development, advancing scientific inquiry from phase 1 safety-focused studies to phase 4 clinical effectiveness studies [66]. Khera and colleagues have suggested a holistic approach to AI evaluation and implementation that emphasizes health quality, equity, generalizability, and medical education in addition to patient-centered outcomes [67]. Ultimately, the optimal method for evaluating any novel intervention is a prospective randomized controlled trial assessing patient-centered outcomes. To date, no such studies have been published.
Second, AI technology poses unique challenges in the medical setting. Because AI tools learn in an automated, independent manner, concerns have been raised about the "black-box" nature of the factors that drive AI decision-making and risk estimation [68]. This opacity regarding what is "under the hood" of AI algorithms has resulted in mistrust among clinicians [69]. Indeed, a recent survey of clinicians highlighted limited acceptance of and trust in AI technology as a significant perceived barrier to implementation [70]. This survey also revealed clinicians' concerns about safety, inconsistent technical performance, the absence of standardized guidelines, lack of technical knowledge, and loss of autonomy. Radiologists have additionally raised concerns regarding medicolegal liability, responsibility for the results of AI-generated recommendations, and how AI will be integrated into routine clinical workflow [71]. Third, because radiomics-based tools require high-resolution CT images and the upload of large imaging data files into CAD software platforms, practical barriers to clinical implementation include a lack of standardization of CT image acquisition across healthcare institutions and disruption of clinical workflow in already busy pulmonary nodule clinics. Finally, the medical community's overall wariness of AI technology is understandable given previous examples of unintended consequences of CAD on medical decision-making [72,73]. For example, a 2003 study assessing the effect of CAD on electrocardiogram (ECG) interpretation by inexperienced resident physicians demonstrated that when incorrect CAD interpretations were provided, residents were more likely to misinterpret an ECG than when CAD was not used [74]. In another study, use of CAD was associated with reduced discrimination of breast cancer on mammography among high-performing expert clinicians [75].
Subsequent studies reported either no significant impact of CAD on radiologists' decision-making [76] or a decrease in clinician discrimination when using CAD [77]. These examples underscore the need for high-quality studies assessing the effect of CAD tools on both clinical decision-making and patient outcomes.
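The distinction between discrimination and calibration drawn in this section can be shown with a small example. In the hypothetical Python sketch below, halving every predicted risk leaves the ranking of nodules, and therefore the AUC, unchanged, yet the model now underestimates risk by a factor of two. That miscalibration is visible in the observed-to-expected (O/E) ratio and in a binned calibration table comparing mean predicted risk with the observed malignancy rate. The equal-width binning, the function names, and the data are illustrative choices, not methods from the cited work.

```python
def observed_to_expected(predicted: list[float], observed: list[int]) -> float:
    """Observed cancers divided by the sum of predicted risks (1.0 = well calibrated overall)."""
    return sum(observed) / sum(predicted)


def calibration_table(predicted: list[float], observed: list[int], n_bins: int = 5):
    """Per equal-width risk bin: (bin_low, bin_high, n, mean predicted risk, observed rate)."""
    if len(predicted) != len(observed):
        raise ValueError("predicted and observed must have the same length")
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(predicted, observed):
        index = min(int(p * n_bins), n_bins - 1)  # a risk of exactly 1.0 goes in the top bin
        bins[index].append((p, y))
    rows = []
    for i, members in enumerate(bins):
        if members:  # skip empty bins
            mean_pred = sum(p for p, _ in members) / len(members)
            obs_rate = sum(y for _, y in members) / len(members)
            rows.append((i / n_bins, (i + 1) / n_bins, len(members), mean_pred, obs_rate))
    return rows


# Hypothetical risks and outcomes (1 = malignant, 0 = benign).
risk = [0.1, 0.2, 0.4, 0.6, 0.8, 0.9]
outcome = [0, 0, 0, 1, 1, 1]
halved = [p / 2 for p in risk]  # same ranking, so same AUC

print(f"O/E original: {observed_to_expected(risk, outcome):.2f}")
print(f"O/E halved:   {observed_to_expected(halved, outcome):.2f}")
for low, high, n, mean_pred, obs_rate in calibration_table(halved, outcome):
    print(f"{low:.1f}-{high:.1f}: n={n}, predicted={mean_pred:.2f}, observed={obs_rate:.2f}")
```

A model that transfers to a population with lower cancer prevalence can drift in exactly this way, so checking calibration in the target population is a natural step before relying on absolute risk estimates at fixed thresholds such as 5% and 65%.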

Future directions

Before widespread implementation of radiomics-based AI tools for PN risk stratification can be recommended, well-executed studies must assess the effect of such tools on medical decision-making and patient-centered outcomes and determine how best to integrate these devices into routine clinical practice. Importantly, AI algorithms have been developed and trained to discriminate between malignant and benign PNs, but they cannot account for the nuances of patient preferences or clinicians' assessments of the risks associated with various management approaches [68,69]. For a given indeterminate PN, clinicians have inconsistent approaches to risk assessment and variable malignancy probability thresholds above which they would recommend a lung biopsy [29,78,79,80,81]. For example, a more conservative clinician might not recommend a biopsy unless the PN diameter is greater than 10 mm or the estimated malignancy risk exceeds 20% or 30%, whereas a more aggressive clinician might recommend a biopsy for any PN larger than 8 mm or with an estimated risk greater than 10%. Beyond clinicians' variable perspectives on PN malignancy risk and management, individual patients can hold widely disparate views on acceptable risk and can experience varying degrees of anxiety about the uncertainty associated with a PN detected on CT [82,83,84]. For example, a patient who values not missing a cancer diagnosis and places high importance on timeliness of care might choose to pursue a biopsy upfront for an indeterminate PN even at the lower end of the malignancy risk range. In contrast, a patient with multiple comorbidities who is more concerned about the potential risks and complications of a lung biopsy might choose to avoid a biopsy initially and opt for surveillance with serial CT scans instead.
Thus, radiomics-based AI tools are not designed to replace clinicians' decision-making but, at best, could assist clinicians and patients in jointly making the challenging decision of whether to biopsy a given PN [85]. Accordingly, several decision analytic modeling approaches have been developed to estimate the clinical utility of diagnostic tests across a range of threshold probabilities for biopsy. The oldest and most widely used is decision curve analysis, developed by Vickers and colleagues in 2006 [86,87,88,89]. This technique plots net clinical benefit on the Y-axis against threshold probability (the malignancy probability above which biopsy would be recommended) on the X-axis; net benefit is the proportion of true positives for malignancy minus the proportion of false positives, with false positives weighted by the odds of the threshold probability, pt / (1 − pt). The technique has been used in multiple areas of research [90,91,92]. Notable alternative approaches include the relative clinical utility curve developed by Baker and colleagues [93,94,95,96] and the interventional probability curve developed by Kammer and colleagues [97]. A necessary first step toward understanding the potential effect of novel AI tools on PN management decisions will be the rigorous application of such clinical utility models to real-world patient data.
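Decision curve analysis reduces to a short calculation at each threshold probability pt: net benefit = TP/n − (FP/n) × pt / (1 − pt), compared against the reference strategies of biopsying every PN and biopsying none (whose net benefit is zero). The Python sketch below follows that standard definition; the risk scores and outcomes are hypothetical, the function names are illustrative, and treating a risk exactly equal to the threshold as "biopsy" is a convention chosen here.

```python
def net_benefit(predicted: list[float], observed: list[int], threshold: float) -> float:
    """Decision-curve net benefit of biopsying every PN with predicted risk >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(predicted)
    tp = sum(1 for p, y in zip(predicted, observed) if p >= threshold and y == 1)
    fp = sum(1 for p, y in zip(predicted, observed) if p >= threshold and y == 0)
    weight = threshold / (1.0 - threshold)  # odds at the threshold probability
    return tp / n - (fp / n) * weight


def net_benefit_biopsy_all(observed: list[int], threshold: float) -> float:
    """Reference strategy: biopsy every PN (the 'biopsy none' strategy has net benefit 0)."""
    prevalence = sum(observed) / len(observed)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)


# Hypothetical risk estimates and final diagnoses (1 = malignant).
risk = [0.05, 0.10, 0.20, 0.30, 0.50, 0.70, 0.85, 0.95]
outcome = [0, 0, 0, 1, 0, 1, 1, 1]

for pt in (0.10, 0.25, 0.50):
    model = net_benefit(risk, outcome, pt)
    everyone = net_benefit_biopsy_all(outcome, pt)
    print(f"pt={pt:.2f}: model={model:.3f}, biopsy all={everyone:.3f}, biopsy none=0.000")
```

Evaluating the model over a fine grid of thresholds and plotting the results yields the decision curve. Because each threshold encodes a different trade-off between missed cancers and unnecessary biopsies, a clinician who would biopsy at 10% risk and one who would wait for 30% are effectively reading different points on the same curve.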

Encouragingly, a growing number of studies have begun to estimate the clinical utility of radiomics-based tools retrospectively. For example, a recent publication from Paez and colleagues demonstrated the potential clinical utility of the Optellum LCP-CNN for longitudinal assessment of PNs, as malignancy risk estimates for malignant PNs increased over time while those for benign PNs remained relatively stable [98]. Separately, in 2021, Kammer and colleagues described the development of a novel combination biomarker incorporating clinical variables in addition to blood- and radiomics-based inputs and performed a clinical utility analysis to estimate the effect that using the biomarker would have had on clinical decision-making [99]. They found that use of this novel biomarker would theoretically have reduced both the proportion of individuals with benign PNs undergoing invasive procedures and the time to cancer diagnosis among those with malignant PNs.
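One simple way to summarize the longitudinal pattern described by Paez and colleagues (rising risk estimates for malignant PNs, stable estimates for benign PNs) is the least-squares slope of a nodule's risk score over time. The Python sketch below is a hypothetical illustration, not the method used in that study: the scores are invented values on a 1-to-10 scale chosen to echo a decile-style score, and the function name `risk_slope` is illustrative.

```python
def risk_slope(times_months: list[float], scores: list[float]) -> float:
    """Ordinary least-squares slope of risk score versus time (score units per month)."""
    n = len(times_months)
    if n < 2 or n != len(scores):
        raise ValueError("need two or more paired time points and scores")
    mean_t = sum(times_months) / n
    mean_s = sum(scores) / n
    sxx = sum((t - mean_t) ** 2 for t in times_months)
    if sxx == 0:
        raise ValueError("time points must not all be identical")
    sxy = sum((t - mean_t) * (s - mean_s) for t, s in zip(times_months, scores))
    return sxy / sxx


# Hypothetical serial scans at 0, 3, 6, and 12 months.
months = [0, 3, 6, 12]
rising_nodule = [4, 5, 7, 9]  # risk score climbing across scans
stable_nodule = [3, 3, 2, 3]  # risk score essentially flat

print(f"rising: {risk_slope(months, rising_nodule):+.3f} per month")
print(f"stable: {risk_slope(months, stable_nodule):+.3f} per month")
```

A single slope ignores measurement noise and irregular scan intervals; any clinical use of trajectory features would need its own validation.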

As previously mentioned, the gold standard method of evaluating any novel intervention is a prospective randomized controlled trial that directly assesses the impact of the intervention on patient-centered clinical outcomes. Multiple experts have urged the performance of such trials when evaluating any novel AI-based technology [69,72,100,101]. To date, no clinical trials evaluating the clinical effectiveness of a radiomics-based AI tool for PN risk stratification have been completed. However, a recent search of ClinicalTrials.gov revealed one such trial that is actively recruiting patients (NCT05968898). This pragmatic randomized controlled trial will compare usual care with an approach to PN risk stratification that incorporates the Optellum LCP-CNN tool. The primary outcome will be a composite: the proportion of malignant PNs managed with biopsy or empiric treatment and of benign PNs managed with imaging surveillance; secondary outcomes include timeliness of care, adverse events, diagnostic yield of biopsy procedures, and healthcare costs. Thus, much-needed efforts to carefully investigate AI technology are already underway.

Conclusions

In conclusion, recent advances in radiomics-based AI technology have yielded promising preliminary data suggesting that AI may serve a complementary role to routine clinical decision-making for PN management in the future. However, widespread adoption of such novel tools has not yet been observed despite commercial availability, and use of such technology is not currently recommended by any clinical guidelines due to a dearth of adequate clinical utility and prospective randomized controlled trial data. Future rigorously conducted clinical research studies are required to fully evaluate the clinical effectiveness of radiomics-based AI tools for PN risk stratification and to clearly define what role, if any, these tools should play within routine clinical practice.

Footnotes

Author contributions

R.Y.K. performed the literature review and wrote the manuscript.

Funding

R.Y.K. is supported by a National Cancer Institute Career Development Award (K08CA279881).

Conflict of interest

No relevant financial conflicts of interest to disclose.