Abstract

Developing students’ information problem solving (IPS) competence in higher education is imperative. However, existing theoretical frameworks describe IPS learning outcomes without guiding effective learning environment design. This systematic review and meta-analysis synthesized empirical evidence to formulate design principles for developing IPS competence. A systematic search across seven academic databases yielded 69 peer-reviewed articles from 2000–2023 with controlled pretest-posttest designs targeting (under)graduate students. Analysis of these studies yielded seven design principles: learning task, instruction, modeling, practice, learning activities, support, and feedback, with meta-analyses validating key relationships. The IPS educational design principles (IPS-EDP) model summarizes how these principles address learning outcomes, teaching and learning activities, and assessment strategies. While our review covered all IPS components, empirical evidence predominantly addressed information search and selection, potentially limiting generalizability to broader IPS competence. The IPS-EDP model provides a theoretical foundation for investigating and designing effective IPS learning environments in higher education, bridging research and practice.

Higher education institutions must prepare graduates with the necessary digital competencies to effectively address increasingly complex societal problems. This includes competence in information problem solving (IPS). IPS competence is commonly defined as the integrated knowledge, skills, and attitudes that enable individuals to search for, assess, and use online information to solve problems and construct knowledge (Brand-Gruwel et al., 2009; Eisenberg & Berkowitz, 1990). This competence is crucial in various contexts: professionally, individuals rely on IPS for effective decision-making (S. Campbell, 2004), lifelong learning (International Federation of Library Associations and Institutions [IFLA], 2005; Lau & Elliott, 2006), and active contribution to democratic knowledge societies (IFLA, 2005). Academically, IPS is critical for achieving success (Catalano & Phillips, 2016; Rowe et al., 2021) and earning higher grades across disciplines (Weber et al., 2019). It equips students to effectively find, evaluate, and synthesize digital information needed for coursework, research, or projects. Despite its importance, numerous studies highlight significant gaps in students’ IPS competence (e.g., Frerejean et al., 2016; Rosman et al., 2015). Consequently, lacking a robust foundation in IPS, higher education students may struggle to access vital information needed for their assignments or base academic and professional decisions on inaccurate, biased, or outdated information. Therefore, higher education institutions should find and apply effective ways to structurally teach IPS.

The need for IPS competence among students has become even more prominent given the rapid technological advancements, which increase online information production and consumption. Given this reality, a lack of IPS competence has significant consequences: It could amplify the digital divide, encompassing not only access to technology but also inequalities in effective digital information usage (Van Dijk, 2020). Recognizing its societal impact, both global policymakers (e.g., UNESCO, 2022) and higher education professionals (as reported by Van Laar et al., 2017; Yevelson-Shorsher & Bronstein, 2018) emphasize the vital role of IPS for graduates. This recognition is evident in qualification frameworks from various regions across the globe, including the Qualification Framework for Central American Higher Education (CSUCA, 2018), the Framework for Information Literacy for Higher Education (American College of Research Libraries [ACRL], 2016), the Canadian Degree Qualifications Framework (Council of Ministers of Education, 2007), the UK Quality Code for Higher Education (Quality Assurance Agency, 2024), and the DigComp Framework (Carretero et al., 2017), which the European Commission endorses. Nevertheless, although these frameworks highlight the broader educational objectives related to IPS, they do not provide detailed guidance for designing effective IPS learning environments within specific educational contexts. To address this gap, this literature review aims to identify evidence-based relationships between learning environment characteristics and IPS-related learning outcomes and to synthesize these findings into a comprehensive set of design principles for developing IPS in higher education.

Defining Information Problem Solving Competence

Until now, our discussion has focused on IPS from an educational science perspective. Yet, this competence is also researched in other disciplines, predominantly library and information sciences (LIS), where the term information literacy is frequently used interchangeably. The ACRL (2016) Framework defines information literacy as “the set of integrated abilities encompassing the reflective discovery of information, the understanding of how information is produced and valued, and the use of information in creating new knowledge and participating ethically in communities of learning” (p. 8). While we embrace this definition, we will consistently use the term IPS throughout this review, as it aligns with our focus on instructional design in online educational contexts (Brand-Gruwel et al., 2009).

An essential aspect of IPS competence is the interrelatedness of knowledge, skills, and attitudes. Rather than viewing the integrated abilities of IPS as mere skills, we consider them complex competencies, in line with Mulder’s (2014) definition: “a coherent cluster of knowledge, skills, and attitudes which can be utilized in real performance contexts” (p. 111). The complex interplay between knowledge, skills, and attitudes determines students’ IPS performance, which can be understood as the measurable outcome of their IPS competence in practical information problem solving scenarios. For instance, students’ performance in assessing source credibility is determined not only by their evaluation skills but also by their domain-specific knowledge (e.g., Lucassen & Schraagen, 2013; Salmerón et al., 2013) and critical attitude (e.g., Kammerer & Gerjets, 2012).

Building on this knowledge, skills, and attitudes perspective, the IPS-I model introduced by Brand-Gruwel et al. (2009) provides a comprehensive framework that delineates the set of interrelated IPS competencies. While they describe IPS competence as “constituent skills” for solving information problems using the internet, we will refer to these as “constituent competencies” throughout our review, in line with our earlier discussion. The five constituent competencies are: (a) define the problem, (b) search, (c) select, (d) process, and (e) present information, whereby regulation is integral to each of these. The constituent competencies are highly interrelated within an iterative process (Brand-Gruwel et al., 2009). Given the interconnectedness of these competencies, IPS learning environments should address not only the different aspects of knowledge, skills, and attitudes but also the synergy among the constituent competencies.

Information Problem Solving Competence in Higher Education

Integrating IPS systematically into academic programs, as advised by the ACRL (2016) Framework, proves a significant challenge for higher education institutions; in prevailing practice, the integration of IPS is frequently limited (Badke, 2011) or inadequate (Julien, 2016). Common methods for teaching IPS in higher education, such as online modules or one-off library orientation sessions, have shown limited effectiveness (e.g., Derakhshan & Singh, 2011; Probert, 2009). The divergence between the acknowledged need for structural integration and the limited actual practice is concerning, as complex competences like IPS require ample practice within meaningful contexts (Van Merriënboer et al., 2006). Yet, contemporary educational trends pressure students to acquire numerous competencies within a limited time (Fahimirad et al., 2019). This pressure leads educators to make misguided assumptions about students developing IPS competence naturally from existing online search tasks without explicit guidance (McGuinness, 2006; Walraven et al., 2008). Consequently, it pushes students toward peer-assisted or self-guided learning (S. U. Kim & Shumaker, 2015). However, research has consistently underscored the pitfalls of such minimal guidance for developing IPS and emphasizes the need for explicit training (e.g., Brand-Gruwel et al., 2005; Weber et al., 2019). To achieve the desired IPS educational objectives, curricula must be designed to balance efficient delivery with an effective learning environment.

Existing Literature on Developing Information Problem Solving Competence

Designing higher education curricula to develop generic competences like IPS requires careful management and coordination of various elements within the learning environment. Intended learning outcomes, assessment strategies, and teaching and learning activities should be purposefully connected, as emphasized by Biggs’s (1996) principle of constructive alignment. Despite the widespread adoption of this principle in educational practice, a preliminary literature review suggests research on IPS teaching and learning often treats these elements in isolation, with limited consideration of cultural and disciplinary contexts.

Concerning the intended learning outcomes, IPS research in higher education reveals three limitations in scope. First, most identified review-level evidence on IPS teaching and learning predominantly focuses on librarian-led instruction to teach (basic) library skills (e.g., Grabowsky & Weisbrod, 2020; Koufogiannakis & Wiebe, 2006; L. Zhang et al., 2007). The emphasis on search-and-find competencies aligns with the findings of a review conducted by Kuiper et al. (2005), who found that research on teaching IPS in K–12 education primarily concentrated on the searching phase while neglecting the processing phase. This narrow focus contrasts with evidence that IPS expertise requires substantial engagement in problem definition, processing, and presenting information (Brand-Gruwel et al., 2005). Second, review-level evidence predominantly reports on quantitative learning outcomes, such as knowledge (e.g., Grabowsky & Weisbrod, 2020; Koufogiannakis & Wiebe, 2006) or skills (e.g., Brante & Strømsø, 2018; L. Zhang et al., 2007). This focus has resulted in limited understanding of affective learning outcomes, such as attitudes and self-efficacy in IPS development. Third, IPS instruction research has primarily examined the development of IPS outcomes related to traditional academic writing tasks, such as research papers and literature reviews (e.g., Argelagos et al., 2022; Frerejean et al., 2019). This limits our understanding of how to develop IPS competence for diverse professional and disciplinary contexts. Consequently, the current focus on quantitative learning outcomes related to search skills in the context of academic writing tasks limits the understanding of how to develop IPS competence across different educational contexts.

As for the assessment strategies, IPS tests used as outcome measures in research exhibit two main biases. The primary bias observed in IPS tests is the so-called library bias—that is, a focus on assessing traditional information search-and-find competencies (Boh Podgornik et al., 2016; Garcia et al., 2021). This narrow focus on information location and access overlooks crucial competencies related to information creation and synthesis—skills that have become essential in today’s digital information environments (M. J. Anderson, 2016). The second bias concerns ecological validity, reflecting a significant mismatch between assessment tasks and real-world information challenges (Garcia et al., 2021). This validity concern stems from multiple methodological factors, including reliance on self-assessment tests (e.g., Rosman et al., 2015) and the quick obsoletion of IPS tests due to constant evolution of technologies and tools (Garcia et al., 2021). The library and ecological validity biases in IPS tests limit the generalizability of effectiveness studies to real-world settings.

Concerning the teaching and learning activities, previous review studies have investigated the effects of different teaching delivery methods. Early reviews examined the effects of face-to-face versus online instruction (Koufogiannakis & Wiebe, 2006; L. Zhang et al., 2007), whereas later reviews also compared this to blended instruction (Weightman et al., 2017). However, these investigations have primarily focused on research within the context of academic libraries in Anglo-Saxon countries, with a strong geographic bias toward the United States and limited representation from other regions. Review-level evidence encompassing diverse disciplinary and cultural contexts for promoting IPS competence remains limited (Wuyckens et al., 2022). Furthermore, while specific reviews have explored the effects of face-to-face, online, and blended formats on student perception (e.g., Morris, 2020), they failed to establish a clear connection between these preferences and actual improvements in IPS competence. Although recent studies have examined learning activities designed to foster IPS in online and hybrid learning environments (e.g., Argelagos et al., 2022; P. C. Campbell & Mischkowski, 2024; Liu, 2023; Wegener, 2022), no comprehensive review has yet synthesized their effects. Thus, broader review-level evidence is required to synthesize how diverse teaching activities influence IPS competence across diverse disciplinary contexts and cultural settings.

To conclude, prior research has examined IPS learning outcomes, assessment strategies, and teaching activities primarily in isolation. This results in a fragmented understanding of how these elements of constructive alignment within the learning environment interact to influence IPS competence development. While existing frameworks effectively decompose the IPS process (e.g., Brand-Gruwel et al., 2009) and describe IPS learning outcomes (e.g., ACRL, 2016), they primarily focus on defining IPS competence rather than guiding its development. This creates a gap between understanding what IPS competence entails and knowing how to design learning environments that foster its development. As a result, instructional designers struggle to translate these conceptual frameworks into educational actions (Wuyckens et al., 2022). Consequently, a comprehensive framework is needed that explicitly connects IPS learning environment characteristics with the development of IPS learning outcomes. Such a framework will advance both systematic investigation of IPS teaching effectiveness and evidence-informed design of aligned IPS learning environments.

Conceptual Framework

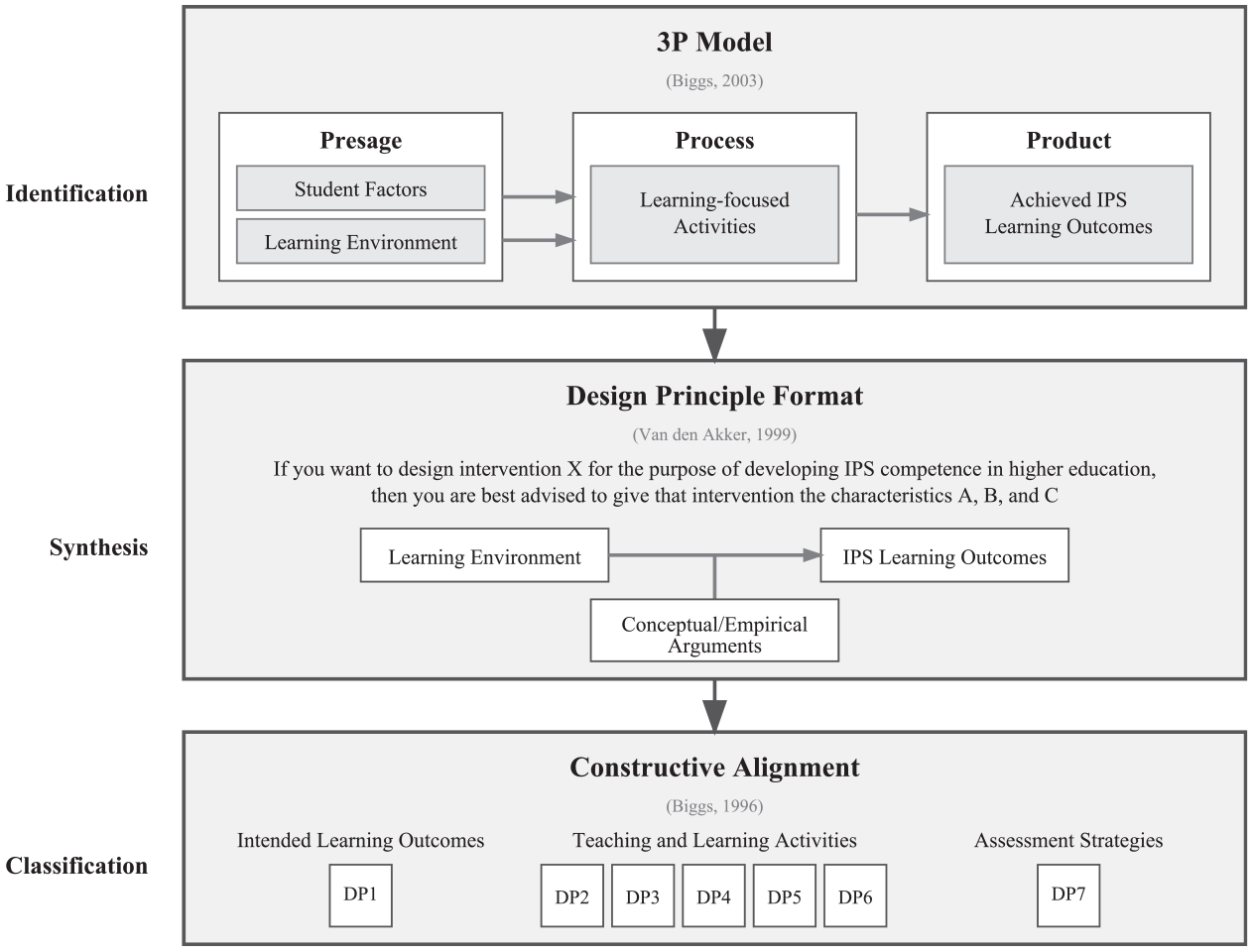

To develop such a comprehensive framework, we draw on three complementary theoretical perspectives to systematically analyze and synthesize research on IPS teaching and learning. First, Biggs’s (2003) 3P model provides a systematic framework for identifying relevant learning environment characteristics. Second, Van den Akker’s (1999) design principles format structures the synthesis of empirical evidence on the effectiveness of these characteristics for IPS competence development. Third, constructive alignment (Biggs, 1996) offers an organizing framework for classifying these principles, ensuring comprehensive coverage of intended learning outcomes, teaching and learning activities, and assessment strategies. Figure 1 illustrates how these three theoretical perspectives integrate to form our conceptual framework for constructing design principles for developing IPS competence.

Figure 1. Conceptual framework used for constructing a comprehensive set of design principles for IPS.

Biggs’s 3P model of teaching and learning in universities served as the foundational analytical framework. This model conceptualizes teaching and learning as an interactive system where student characteristics and learning environment (presage) influence learning-focused activities (process), which lead to the achievement of learning outcomes (product). In the context of teaching and learning IPS, the IPS learning environment concerns how teachers arrange educational settings to promote student learning. This component is particularly relevant for our analysis as it enables systematic identification of learning environment characteristics that influence IPS competence development. The achieved IPS learning outcomes component represents students’ development of specific aspects of IPS competence as a result of their engagement with the learning environment. This analytical approach builds on established methodologies from previous higher education review studies. These reviews have successfully used the 3P model to identify evidence linking learning environment characteristics to learning outcomes for specific competences, such as argumentation and oral presentation competence (Noroozi et al., 2012; Spelt et al., 2009; Van Ginkel et al., 2015).

Van den Akker’s design principle format extends this analytical foundation by providing a systematic structure for synthesizing empirical evidence into actionable educational design principles. Van den Akker (1999) proposes the following format: “If you want to design intervention X for the purpose Y in context Z, then you are best advised to give that intervention the characteristics A, B, and C, [. . .], because of arguments P, Q, and R” (p. 5). This format emphasizes that effective educational design principles must specify not only what works, but also why and under what conditions. Conceptually, this format aligns with the 3P model: the intervention characteristics (A, B, C) correspond to identified elements of the learning environment; the purpose (Y) relates to intended learning outcomes; supporting arguments (P, Q, R) emerge from evidence about effective learning-focused activities. Moreover, the explicit consideration of context (Z) aligns with the 3P model’s emphasis on student factors, enabling design principles that account for the generalizability of interventions across diverse teaching and learning contexts.

Objectives and Research Questions

This systematic literature review aims to identify, synthesize, and classify the available empirical evidence on learning environment characteristics that effectively foster IPS competence in higher education students. The main objective is to develop a comprehensive set of design principles that (1) directly relate learning environment characteristics to IPS competence or components thereof, (2) provide conceptual and empirical arguments for effective operationalization of these learning environment characteristics, (3) set the context in which the principle holds, and (4) address the intended learning outcomes, teaching and learning activities, and assessment strategies of the learning environment. By considering these three key areas of the learning environment, this systematic review aims to provide a comprehensive model for developing IPS in higher education that serves two purposes: providing researchers with theoretical grounding for systematically investigating IPS teaching effectiveness across diverse contexts, and offering instructional designers evidence-based guidance for developing aligned learning environments for IPS competence. To achieve these objectives, our research is guided by two complementary research questions:

What learning environment characteristics can be identified from empirical studies that demonstrate effects on higher education students’ development of IPS competence and its constituent components?

How can these empirically validated characteristics be synthesized and classified into a comprehensive framework of design principles for developing IPS competence?

Methods

This systematic review was prepared following a preregistered protocol (Boetje, Sichterman, Van Ginkel, & Smakman, 2023a) that adhered to the JBI Manual for Systematic Reviews of Effectiveness (Tufanaru et al., 2020), which is congruent with the PRISMA-P checklist (Moher et al., 2015). Our methodology consisted of six consecutive phases: (1) formulation of inclusion criteria, (2) development of a search strategy, (3) selection of studies, (4) critical appraisal, (5) data extraction, and (6) data synthesis. During the data synthesis phase, we employed narrative syntheses, complemented by meta-analyses, reflecting our mixed-methods approach. The quantitative analyses verified the impact of evidence-based relationships between learning environment characteristics and IPS-related learning outcomes, while the qualitative analyses offered a deeper understanding of the arguments underlying these relationships in various contexts. To ensure transparent reporting, the PRISMA-S statement (Rethlefsen et al., 2021) was used to guide the reporting of the first two phases. All protocols, datasets, scripts, outputs, and supplementary materials are made available via the Open Science Framework (https://osf.io/xp2ua), consistent with open science principles (Wilkinson et al., 2016).

Inclusion Criteria

To be included in the systematic review, a reported study had to meet the following seven criteria: (1) the study subjects are (under)graduate students at a higher education academic institution, since this educational context is the focus of the study; (2) the study report explicitly describes one or more characteristics of the learning environment and links these to the development of students’ IPS competence or components thereof; (3) the study employs a pretest-posttest design with a randomized or nonrandomized control group, because such designs give more robust evidence on the effectiveness of learning environment interventions for the development of IPS competence than other types of designs; (4) the statistical analyses either assess the treatment effect using pretest scores, statistically control for pretest differences using analyses of covariance, or permit the calculation of an effect size for the treatment effect based on reported data; (5) the article is published in a peer-reviewed journal, to ensure scholarly quality; (6) the report is published in English, to ensure conceptual consistency with dominant theoretical frameworks and facilitate reliable comparison of findings across studies; (7) the publication date is after 2000, corresponding with transformative shifts in information access through search engines and digital libraries and the formal integration of IPS into higher education curricula through widely adopted frameworks and standards (e.g., the ACRL Standards, 2000). Publications were excluded when they (1) did not focus on developing IPS as the dependent variable; (2) solely addressed the description of one or more teaching strategies without examining the effect on IPS competence; (3) purely focused on the relationship between student factors and IPS competence without taking learning environment characteristics into account.

Search Strategy

A three-step search strategy was developed, registered, and peer-reviewed with a senior information specialist using the PRESS guideline statement (McGowan et al., 2016), consisting of (1) identifying key papers, (2) database search, and (3) citation tracking. The first step in the search strategy aimed at identifying a set of key papers on IPS teaching and learning in higher education. This method involved asking 10 expert researchers in the field of IPS to suggest essential papers. From their suggestions, we compiled a list of eight key papers that met the inclusion criteria (see https://osf.io/s7ugy). Subsequently, we used these key papers to evaluate the viability, sensitivity, and specificity of our search string.

The second step in the search strategy involved searching seven academic databases for relevant literature (platforms listed in brackets): five specialized databases—that is, Education Resources Information Center (ERIC) [EBSCO], Education Research Complete (EBSCO), PsycInfo (EBSCO), Library and Information Science Abstracts (LISA) [ProQuest], and Library, Information Science & Technology Abstracts (LISTA) [EBSCO]—and two general databases—that is, Web of Science (WoS) and Scopus. The five specialized databases extensively cover research in library and information science (LIS), education, and behavioral sciences. Web of Science and Scopus were selected as the primary multidisciplinary databases for international academic literature.

Three sets of search terms were used based on the essential concepts of the review—namely “IPS competence” (outcome) in combination with “learning environment” (intervention) in the context of “higher education” (population). Synonyms, subject headings, and related terms were identified through keywords of related review studies (Grabowsky & Weisbrod, 2020; Koufogiannakis & Wiebe, 2006; Walraven et al., 2008), thesauri, and the authors’ knowledge of the subject area. Following Van Ginkel et al. (2015), action verbs focusing on the relation between intervention and outcome (e.g., develop, improve) were added to the learning environment search term set. Then, the related terms were combined with the Boolean operator OR. The resulting three search term groups were combined with AND to arrive at the final search queries. The search was restricted to title, abstract, and keywords in the databases to increase search precision. The search was limited to peer-reviewed articles published in English after 2000. The searches were conducted on 16 January 2023, and the search strategies for all seven databases were registered on searchRxiv (Boetje, Sichterman, Van Ginkel, Smakman, et al., 2023b). The third step in the search strategy was backward and forward citation searching for all relevant publications traced in the screening phase using the automated SpiderCite function of the SR-accelerator (version 2.0) (Rathbone et al., 2015).
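The Boolean logic described above (synonyms joined with OR within a concept, concept groups joined with AND) can be illustrated with a minimal Python sketch. The terms below are hypothetical placeholders, not the registered search strings, which are available on searchRxiv; the helper names `or_group` and `build_query` are likewise introduced only for illustration, and database-specific field tags and truncation syntax are left out.

```python
def or_group(terms):
    """Join the synonyms of one concept with OR, quoting multi-word phrases."""
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(*groups):
    """Combine the concept groups with AND to form one search query."""
    return " AND ".join(or_group(group) for group in groups)


# Hypothetical placeholder terms for the three concepts (not the authors' strings).
outcome = ["information problem solving", "information literacy"]
intervention = ["learning environment", "instruction", "develop*", "improv*"]
population = ["higher education", "undergraduate*"]

query = build_query(outcome, intervention, population)
print(query)
```

Keeping each concept as a separate list makes it straightforward to test the sensitivity of a single group against a set of key papers before the groups are combined.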

Selection of Studies

Selection of studies was performed in four stages: (1) data preparation, (2) preliminary screening, (3) screening, and (4) quality checks. First, all identified records were extracted from the databases and imported into Zotero (version 6.0.20). Missing DOIs were retrieved, verified, and cleaned using Zotero’s DOI Manager (version 4.1.2) to improve the deduplication process. After the identified duplicates had been checked manually, they were removed using the automated deduplication function in the SR-accelerator (version 2.0) (Rathbone et al., 2015).

Next, two authors independently conducted a preliminary screening of a random 1% sample of the dataset to ensure screening reliability and refine the inclusion criteria. The interrater reliability, calculated using the kappa2 function from the irr package in R (version 4.2.2), was sufficient (κ = .65). Discrepancies were resolved through discussion until agreement was reached.
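For readers less familiar with the statistic, Cohen’s kappa corrects the observed agreement between two screeners for the agreement expected by chance. The sketch below is an illustrative Python reimplementation for two raters and unweighted categories; it is not the authors’ script (which used `kappa2` from the `irr` package in R), and the screening decisions shown are invented for the example.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters labelling the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same, non-empty set of records.")
    n = len(rater_a)
    # Observed agreement: share of records with identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions per label.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        raise ValueError("Kappa is undefined when chance agreement equals 1.")
    return (observed - expected) / (1 - expected)


# Invented screening decisions for ten records (illustration only).
screener_1 = ["in", "in", "out", "out", "out", "in", "out", "out", "out", "out"]
screener_2 = ["in", "out", "out", "out", "out", "in", "out", "out", "in", "out"]
print(round(cohens_kappa(screener_1, screener_2), 2))
```

Because screening datasets are typically dominated by irrelevant records, raw percentage agreement tends to be high by chance alone, which is why a chance-corrected index is reported.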

Subsequently, the same two authors independently screened the remaining records based on the seven inclusion criteria, following a screening protocol (see https://osf.io/bsfj8). This approach aligns with Pigott and Polanin’s (2020) recommendation to conduct screening with two independent screeners to avoid the loss of eligible studies. Anticipating a large volume of papers, we employed priority screening using ASReview (Van de Schoot et al., 2021), version 1.1 (ASReview LAB Developers, 2022). Priority screening enhances screening efficiency while allowing for a sensitive search. This iterative machine learning-based process reassesses unscreened records for relevance, aiding in the sorting of abstracts based on their inclusion probability. In addition, ASReview helps to prevent screening bias, as it allows for anonymous screening, ensuring no author or journal names are visible during title and abstract screening. The SAFE procedure described by Boetje and Van de Schoot (2024) was followed to determine when to stop screening. The level of agreement between the two screeners was found to be sufficient (κ = .80). Disagreements on study eligibility were resolved through consensus or consultation with the third author if needed. Additionally, in cases where manuscripts lacked sufficient data for effect size computation, the original authors were contacted to obtain more comprehensive data.

Finally, two quality checks were performed. For the first quality check, the first author screened records labeled as irrelevant to identify any incorrect exclusions. For the second quality check, the complete author team inspected the records identified as relevant to check for incorrectly included but irrelevant records based on the inclusion criteria. Irrelevant records were marked and removed from the dataset, leaving only relevant records. The complete process of selecting studies was visualized in a PRISMA 2020-compliant flow diagram created with the Shiny app by Haddaway et al. (2022).

Critical Appraisal

To ensure the reliability, validity, and applicability of the results of the selected studies for this review, a risk of bias assessment was performed at the individual study level. Criteria for evaluating randomized controlled trials, nonrandomized studies, and mixed methods studies were adopted from the critical appraisal checklists from the JBI Manual for Evidence Synthesis (Aromataris & Munn, 2020). We conducted a pilot appraisal for each of the three study designs to clarify how each item of the checklist was met and to maximize the reliability of the appraisal. Subsequently, two authors independently appraised the methodological quality of each included study. The interrater agreement between the two authors was calculated and found sufficient (κ ranging from .66 to .86). Any disagreements were resolved by consensus. We did not confirm article-level data with the original authors but relied on the published records.

Data Extraction

The data extraction involved extracting key information from the selected studies. To ensure a rigorous and systematic approach, we followed four steps: (1) creating a preliminary data extraction sheet, (2) refining it through testing, (3) independently coding 10% of the publications with the finalized sheet, and (4) coding the remaining publications.

Initially, the first author created a preliminary data extraction sheet building on Biggs’s (2003) 3P model. This model informed the inclusion of variables in the categories of student factors, learning environment, learning-focused activities, and learning outcomes. The constituent competencies of the IPS-I model were used as a guiding framework for a more detailed analysis of the assessed learning outcomes. Additionally, a category was included to capture the conceptual and empirical arguments for the relation between intervention and outcome, facilitating the formulation of the rationale for the design principles. The entire author team discussed and further developed the first version of the identified categories.

Second, the data extraction sheet was refined through rigorous independent testing by the first and third authors on three key publications: Brooks (2014), Frerejean et al. (2016), and Levesque et al. (2018). Each of these publications represents a distinct tradition within this research field, building on the frameworks of information literacy, information problem solving, and evidence-based practice, respectively. Any differences in the extracted data were subsequently compared and discussed, leading to a final data extraction sheet containing a categorized list of data extraction elements. The final sheet contains 56 variables distributed over eight categories: (a) study description, (b) methods and procedures, (c) student factors, (d) learning environment, (e) learning-focused activities, (f) learning outcomes, (g) empirical and conceptual arguments, and (h) statistical data (see Table S1 [online only]).

Third, the first two authors independently coded 10% of the records using the final data extraction sheet. Any disagreements about data extraction were resolved by consensus or by the third reviewer’s decision. We contacted study authors to clarify existing data or to request missing or additional data. If data allowed, we computed effect sizes as standardized mean differences (ESsm), expressed as Hedges’s g and based on the posttest mean adjusted for the pretest, following the guidelines and formulas from Lipsey and Wilson (2001). If there were multiple reports (publications) for the same study, the newest one was used as a basis for data extraction. Interrater reliability was excellent (94.23% agreement). Consequently, the codes of the first author were used for interpretation.
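The effect size computation described above can be sketched as follows. This is a minimal illustration, assuming the commonly used pooled-standard-deviation form of the standardized mean difference with the small-sample correction and standard error as usually attributed to Lipsey and Wilson (2001). The function name and the input values are hypothetical, and the function expects means that have already been adjusted for the pretest, because this section does not specify how that adjustment was carried out.

```python
import math


def hedges_g(mean_t, mean_c, sd_t, sd_c, n_t, n_c):
    """Return (g, se) for two groups.

    mean_t / mean_c are assumed to be pretest-adjusted posttest means;
    the adjustment itself is outside this sketch.
    """
    # Pooled standard deviation across both groups.
    pooled_sd = math.sqrt(
        ((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / (n_t + n_c - 2)
    )
    d = (mean_t - mean_c) / pooled_sd
    # Small-sample correction factor: 1 - 3 / (4N - 9), with N = n_t + n_c.
    correction = 1 - 3 / (4 * (n_t + n_c) - 9)
    g = correction * d
    # Standard error of the corrected effect size.
    se = math.sqrt((n_t + n_c) / (n_t * n_c) + g ** 2 / (2 * (n_t + n_c)))
    return g, se


# Hypothetical input values, for illustration only.
g, se = hedges_g(mean_t=10.0, mean_c=8.0, sd_t=4.0, sd_c=4.0, n_t=20, n_c=20)
print(f"g = {g:.3f}, SE = {se:.3f}")
```

The correction factor shrinks the uncorrected difference slightly, and the shrinkage becomes negligible as the combined sample size grows.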

Last, the first author proceeded to code the remaining publications. All resulting data were discussed and refined with the third author. Following Alexander’s (2020) recommendation, we created a summary table to provide a concise overview of the characteristics of the included studies, highlighting the diversity in participant demographics, studied constructs, and intervention components within this research domain. Subsequently, we selected relevant data on the four components of the teaching system as defined by Biggs (2003) and combined these into one overall 3P model (Biggs, 2003). This model summarizes the key elements present in the reviewed articles, including student factors, learning environment, learning-focused activities, and learning outcomes.

Data Synthesis

Qualitative synthesis (narrative synthesis)

A narrative synthesis was prepared in four stages. In the first stage, the selection of learning environment characteristics was guided by two main criteria: high prevalence and methodological quality. Prevalence was determined using the 80/20 principle, where characteristics present in more than fourteen (i.e., 20%) of the reviewed publications were considered. This approach has been effectively employed in previous review studies focused on the formulation of design principles within the field of educational sciences (e.g., Van Ginkel et al., 2015). From this selection, characteristics were retained only if supported by evidence from studies meeting over 60% of the JBI quality appraisal criteria (deemed moderate to high quality). This selection process increases the generalizability and relevance of the selected characteristics, thereby enhancing the applicability and usefulness of the findings across diverse educational contexts.
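The two selection criteria in this first stage can be illustrated with a short sketch. The data structure, the function name, the JBI scores, and the "peer tutoring" entry are hypothetical. The publication counts for practice and modeling are the ones reported in the Results, and the rule that at least one supporting study must exceed the 60% cutoff is an assumed reading of "supported by evidence from studies meeting over 60%".

```python
import math

TOTAL_PUBLICATIONS = 69   # number of included articles reported in the review
PREVALENCE_SHARE = 0.20   # the 20% side of the 80/20 principle
JBI_CUTOFF = 0.60         # share of JBI appraisal criteria a study must exceed


def select_characteristics(records):
    """Keep characteristics that are prevalent and backed by quality evidence."""
    # 20% of 69 is 13.8, which rounds up to the fourteen publications in the text.
    threshold = math.ceil(PREVALENCE_SHARE * TOTAL_PUBLICATIONS)
    selected = []
    for name, info in records.items():
        # "More than fourteen" is read literally as a strict inequality.
        prevalent = info["n_publications"] > threshold
        # Assumption: one supporting study above the cutoff is sufficient.
        quality_ok = any(score > JBI_CUTOFF for score in info["jbi_scores"])
        if prevalent and quality_ok:
            selected.append(name)
    return selected


# Counts for practice and modeling come from the review; everything else is made up.
records = {
    "practice": {"n_publications": 53, "jbi_scores": [0.78, 0.55]},
    "modeling": {"n_publications": 35, "jbi_scores": [0.67]},
    "peer tutoring": {"n_publications": 9, "jbi_scores": [0.89]},
}
print(select_characteristics(records))
```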

In the second stage, these key learning environment characteristics, their effects on IPS competence or components thereof, and underlying arguments were synthesized into an elementary form of a design principle following the ideas of Van den Akker (1999). Given the purpose of this study, namely to relate learning environment characteristics to IPS competence and to support this relation with underlying arguments, the following aspects of the formula were explicitly included in the construction of the design principles: “characteristics of the intervention A, B and C,” “for the purpose Y,” based on “arguments P, Q, and R,” “in context Z.” To enhance readability, the authors synthesized the conceptual and empirical arguments that require deep elaboration into a separate explanatory paragraph, while the design principles focused on the learning environment characteristics (A, B, and C) and IPS-related learning outcomes (Y).

In the third stage, the research team thoroughly discussed the preliminary design principles. The discussions focused on (1) the strength of the underlying theoretical and empirical argumentations for each principle, (2) the distinctiveness of the principle, (3) the level of readability, and (4) the summative evaluation of the total strength of the body of evidence of each principle. For this last aspect, we created a rubric (see Table S2 [online only]) based on the six dimensions of the QUESTS approach for evaluating evidence in educational practice—that is, quality, utility, extent, strength, target, and setting of the evidence (Harden et al., 1999). This evaluation indicates the circumstances and contexts (Z) where the design principles most apply.

In the last stage, after consensus within the research team, we classified the design principles based on Biggs’s (1996) three components of constructive alignment—that is, intended learning outcomes, teaching and learning activities, and assessment strategies. This resulted in the final set of design principles for developing IPS competence in higher education.

Quantitative synthesis (meta-analyses)

To quantitatively support the impact of the specific learning environment characteristics outlined in the design principles, we conducted a meta-analysis for each design principle if (1) other aspects of the studies, such as measured IPS competencies, were also similar and (2) the studies reported enough information to calculate a standardized mean difference (SMD) for direct comparison. In only five cases did the studies meet these conditions. These five meta-analyses combined findings from studies with similar comparison groups to estimate the overall effect of specific learning environment characteristics. A random-effects meta-analysis was performed using the R package meta (Schwarzer, 2022) to account for variations in study designs. Hedges’s g was used to compute the standardized mean difference, as this effect size measure is suitable for meta-analyses with diverse sample sizes. Forest plots visually represented the research data, while Higgins’s I² was used to estimate heterogeneity. Publication bias was assessed using funnel plots, and selective reporting was evaluated by comparing the protocol or methods section to the results section of the published reports. Statistical details of the meta-analyses, including individual and average effect sizes for studies supporting each design principle, were summarized in the supplementary tables.
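To make the pooling step concrete, the sketch below implements a random-effects model in plain Python. It is not the R meta workflow used in the review. It assumes the DerSimonian-Laird estimator for the between-study variance, whereas the text does not state which estimator was applied, and the three effect sizes and variances are hypothetical.

```python
import math


def random_effects_meta(effects, variances):
    """Pool effect sizes with a DerSimonian-Laird random-effects model."""
    k = len(effects)
    # Inverse-variance (fixed-effect) weights and pooled estimate.
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * gi for wi, gi in zip(w, effects)) / sum_w
    # Cochran's Q measures the dispersion of effects around the fixed estimate.
    q = sum(wi * (gi - fixed) ** 2 for wi, gi in zip(w, effects))
    df = k - 1
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    # Between-study variance, truncated at zero.
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    # Random-effects weights add tau2 to each within-study variance.
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * gi for wi, gi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    # I^2: percentage of total variability attributed to heterogeneity.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {
        "pooled": pooled,
        "se": se,
        "ci": (pooled - 1.96 * se, pooled + 1.96 * se),
        "tau2": tau2,
        "q": q,
        "i2": i2,
    }


# Hypothetical Hedges's g values and sampling variances for three studies.
result = random_effects_meta([0.2, 0.5, 0.8], [0.04, 0.04, 0.04])
print(result)
```

When the observed dispersion does not exceed what sampling error alone would produce, the between-study variance is truncated to zero and the result coincides with a fixed-effect estimate.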

Results

This section first documents the systematic search and screening process that established our evidence base, followed by an analysis of identified learning environment characteristics and their synthesis and classification into a set of design principles.

Search and Screening Results

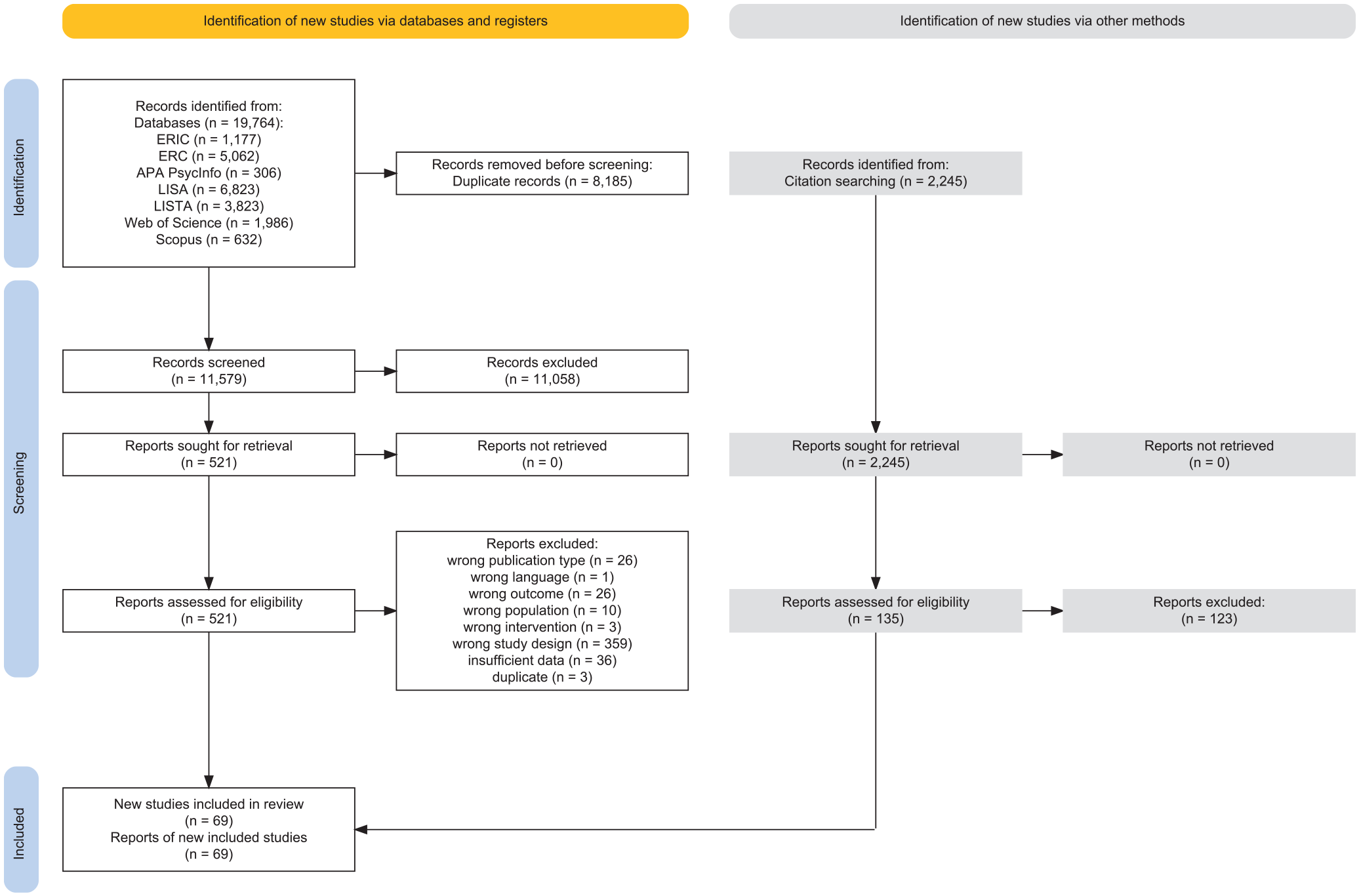

The PRISMA 2020 flow diagram (see Figure 2; based on Page et al., 2021) illustrates the systematic selection process employed in this review. The initial search across seven databases yielded 22,428 potentially eligible records. After limiting our search to English peer-reviewed journal articles from 2000 to 2023, 19,764 records remained; subsequently, we removed 8,185 duplicates and excluded 11,058 records based on title and abstract screening. We assessed the remaining 521 articles for eligibility in full text and excluded 464 based on the reasons detailed in Figure 2. The complete list of reasons for full-text exclusions can be found on OSF (see https://osf.io/mnwra). The first author did not identify any incorrect exclusions during the quality check. Consequently, we included 57 articles in the final analysis (see https://osf.io/s32ak). We further identified 12 additional articles from forward and backward citation searches (see https://osf.io/6ndcz). The final set of included articles comprised 69 articles—corresponding to 72 primary research studies—denoted by asterisks in the references. Together, these studies represent a pooled sample size of 9,267 students. Predominantly, the studies employed quantitative (94%) and quasi-experimental (77%) methods, referenced the ACRL standards or framework (43%), and were conducted in North America (62%) and Europe (28%). For a detailed quantitative summary, see Table S3 (online only).
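The record counts reported above can be checked with a few subtractions. The sketch below only restates the arithmetic of the selection process using the numbers given in this paragraph. The function and key names are hypothetical.

```python
def prisma_flow(after_limits, duplicates, excluded_screening,
                excluded_full_text, added_citation_search):
    """Recompute the record counts at each stage of the selection process."""
    screened = after_limits - duplicates
    full_text = screened - excluded_screening
    included_from_databases = full_text - excluded_full_text
    included_total = included_from_databases + added_citation_search
    return {
        "screened": screened,
        "full_text": full_text,
        "included_from_databases": included_from_databases,
        "included_total": included_total,
    }


flow = prisma_flow(
    after_limits=19_764,        # records left after language, type, and year limits
    duplicates=8_185,
    excluded_screening=11_058,  # removed on title and abstract
    excluded_full_text=464,
    added_citation_search=12,
)
print(flow["full_text"], flow["included_from_databases"], flow["included_total"])
# prints: 521 57 69
```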

PRISMA 2020-compliant flow diagram illustrating the study selection process in the systematic review.

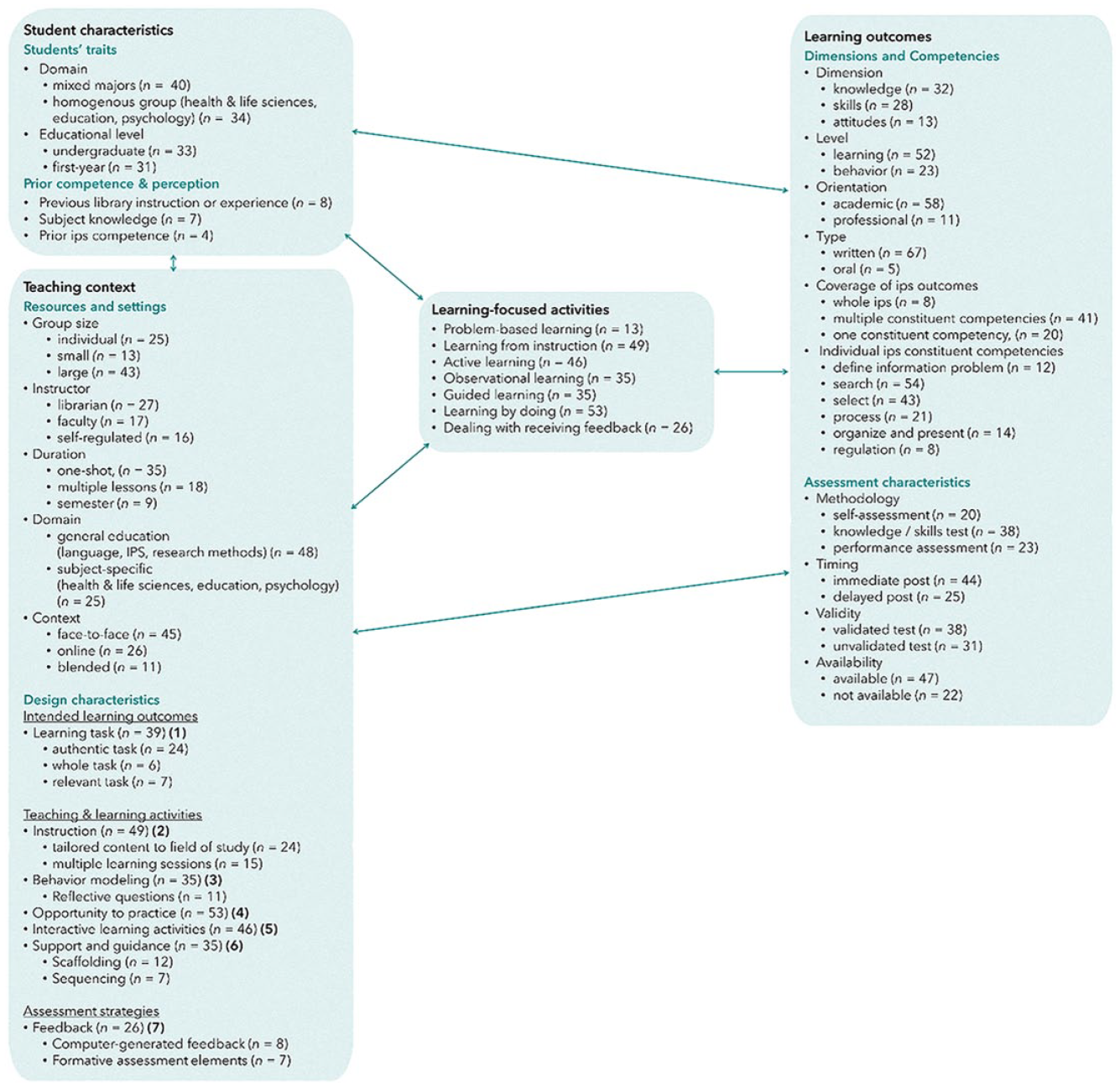

Learning Environment Characteristics for Developing IPS Learning Outcomes

Figure 3 presents the most prevalently studied characteristics of IPS teaching and learning identified in the included articles, organized according to the components of Biggs’s (2003) 3P model: student factors, learning environment, learning-focused activities, and learning outcomes. Expanding upon these descriptive statistics, we constructed the framework for developing IPS competence in higher education shown in that figure. Notably, participants were predominantly first-year or undergraduate students engaged in large-group (>15), librarian-led, one-shot, face-to-face instructional sessions, typically within the context of general education courses focusing on academic writing. The most frequently observed design characteristics of the learning environment were: (1) learning task, (2) instruction, (3) behavior modeling, (4) opportunity to practice, (5) interactive exercises, (6) support and guidance, and (7) feedback. Moreover, the included studies primarily focused on assessing short-term knowledge and skills in searching and selecting information, while other competencies critical to IPS, such as defining the problem, processing, and presenting information, were less emphasized. In the next subsection, we present and explain the seven design principles that we derived from this framework. For an in-depth look at the data informing these principles, see Table S4 (online only) for a summary of the main design characteristics of each study and the extracted data sheet for a detailed overview (see https://osf.io/q4txm).

Framework for developing IPS competence in higher education: A synthesis of reviewed studies (based on Biggs [2003] 3P model).

Design Principles for Developing Information Problem Solving Competence

Based on the most prevalent design characteristics of the learning environment identified in the reviewed literature, we formulated seven educational design principles for developing IPS competence in higher education. These principles address the design characteristics that instructional designers can calibrate to construct an effective IPS learning environment. Figure 3 illustrates the IPS educational design principles (IPS-EDP) model, which integrates these principles, encompassing (1) learning task, (2) instruction, (3) modeling, (4) practice, (5) learning activities, (6) support, and (7) feedback. Drawing upon Biggs’s (1996) aligned course design framework, the sequencing of these principles mirrors the stages of course development, starting with intended learning outcomes (principle 1), then moving to teaching and learning activities (principles 2, 3, 4, 5, 6), and ending with assessment strategies (principle 7). While the comprehensive application of each principle is not imperative, it is recommended to apply at least one principle from each component of constructive alignment within the learning environment.

Each design principle consists of three components: conceptual arguments, empirical arguments, and a summative evaluation of evidence. Every principle begins by outlining the conceptual arguments extracted from the included studies, which establish the theoretical foundation for the characteristic’s impact on IPS competence. Next, empirical evidence, including meta-analyses where possible, supports the principle’s effectiveness and illustrates its practical application. Finally, the summative evaluation assesses the overall strength of the evidence for each principle using the QUESTS dimensions (Harden et al., 1999). An example of such a QUESTS evaluation is presented visually for the first design principle, while the evaluations for the remaining principles are accessible in the online supplement.

Intended Learning Outcomes

Learning task

Design principle 1: Ensure that the learning task (a) reflects students’ relevance perception (academic or professional); (b) requires the integration of skills, knowledge, and attitudes; and (c) simulates authentic real-life practice scenarios to enhance IPS knowledge, skills, and performance

More than half (n = 39) of the reviewed studies identified specific characteristics of the learning task (the IPS assignment) for encouraging a range of outcomes, including IPS knowledge (e.g., Fitzpatrick & Meulemans, 2011; Gross & Latham, 2013), IPS skills (e.g., Catalano, 2015; Durieux et al., 2018), and IPS performance (Frerejean et al., 2019; Srisuwan & Panjaburee, 2020). Twenty publications focused on various aspects of the learning task in more detail, of which 15 referred to arguments grounded in educational theory. Of particular significance is the 4C/ID model (Van Merriënboer & Kirschner, 2018; e.g., Argelagos et al., 2022; Frerejean et al., 2016, 2019), which emphasizes the importance of authentic and whole learning tasks, a perspective that is echoed in other publications (e.g., Catalano, 2015; Ghali et al., 2000; Wopereis et al., 2008). Such authentic tasks, including case-based learning (e.g., Ku et al., 2007), problem-based learning (e.g., Dolničar et al., 2017), and project-based learning (e.g., Durieux et al., 2018), foster student motivation and stimulate active involvement by encouraging active sense-making and increased engagement (e.g., Fitzpatrick & Meulemans, 2011; Gross & Latham, 2013; Wishkoski et al., 2021). Concurrently, the complex, real-world nature of these tasks requires students to integrate knowledge, skills, and attitudes, thereby facilitating the development of IPS competence and its transfer (e.g., Frerejean et al., 2018; Ruzafa-Martínez et al., 2015; Wopereis et al., 2008).

Incorporating authentic learning tasks in IPS learning environments, aligning with students’ academic and professional interests, predominantly yields positive effects on IPS competence. For example, using authentic academic assignments as IPS learning tasks, such as writing a master’s thesis theoretical framework or an argumentative essay, significantly improves IPS performance (Argelagos et al., 2022; Luna et al., 2022) and self-efficacy (Argelagos et al., 2022). In a professional context, engaging students in (potential) professional scenarios also enhances IPS outcomes. For instance, life science students enrolled in a problem-based learning course showed increased IPS knowledge after devising solutions for real-world drinking water problems compared to peers receiving traditional IPS lectures (Dolničar et al., 2017). Similarly, education students who produced informational brochures on dyslexia as part of a course on the subject demonstrated improved IPS performance relative to peers who did not perform this learning task (Brand-Gruwel & Wopereis, 2006). Furthermore, students in an evidence-based medicine course applying literature to patient care questions from real-life clinical scenarios showed enhanced self-assessed IPS skills (Ghali et al., 2000; Ruzafa-Martínez et al., 2015) and were more inclined to apply these skills in clinical practice (Ghali et al., 2000), suggesting a positive transfer effect. In the absence of real-life scenarios, alternative approaches like analyzing hypothetical case studies have also led to modest but positive effects on IPS skills (Ku et al., 2007).

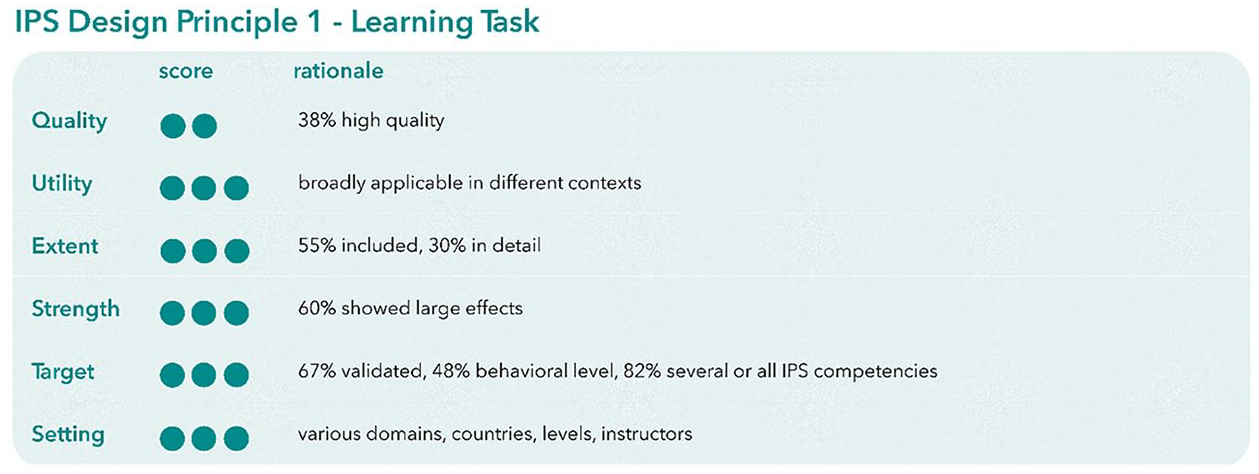

Both theoretical constructs and empirical evidence underscore the value of incorporating relevant, whole, and authentic tasks into learning environments to enhance IPS knowledge, self-assessed skills, and performance. This design principle shows broad applicability in diverse contexts, as demonstrated by high scores across QUESTS dimensions (see Figure 4). Nevertheless, the effectiveness of using authentic tasks is primarily documented in the setting of long-term courses, suggesting that these tasks require prolonged engagement.

Evaluation of evidence for design principle 1 “learning task” using the QUESTS dimensions (Harden et al., 1999).

Teaching and Learning Activities

Instruction

Design principle 2: Include multiple IPS instruction sessions in the curriculum and embed these within the content of the respective discipline to enhance IPS knowledge, skills, and performance

Over two-thirds of the included studies (n = 49) mention instruction as a critical element of the learning environment to improve IPS knowledge (e.g., Levesque et al., 2018; Silk et al., 2015), IPS skills (e.g., Ku et al., 2007; Shamsaee et al., 2021), IPS performance (e.g., Gruppen et al., 2005; Wopereis et al., 2008), and self-efficacy (e.g., Argelagos et al., 2022; Fuselier et al., 2017). Sixteen studies examining the effects of instruction in more detail theoretically underpinned their arguments, notably mentioning the concepts of embedded librarianship and contextualization. Embedded librarianship, manifested through increased librarian interaction, recognizes the need for extended engagement and practice to foster IPS competence (e.g., Mery et al., 2012; Meyer et al., 2008; Teagarden & Carlozzi, 2017). Contextualization (Perin, 2011; Van Merriënboer & Kirschner, 2018) involves integrating instruction with immediate relevance to real-world issues alongside domain-specific content (e.g., Frerejean et al., 2019; Fuselier et al., 2017), enabling students to connect academic content to practical applications within their domain. Several authors argued that contextualization enhances motivation, engagement, deep learning, and transfer of learning (e.g., Brand-Gruwel & Wopereis, 2006; Wopereis et al., 2008).

The empirical evidence demonstrates that IPS instruction extended over time, systematically embedded within domain-specific curricula, positively affects various IPS outcomes. A meta-analysis of three independent studies involving 812 participants quantitatively supports the benefits of extended semester-long IPS sessions, outperforming one-shot IPS instruction in developing students’ knowledge of searching, selecting, and citing information (Hedges’s g = 0.86, 95% CI [0.64–1.09], p < .001; see Table S5 and Figure S1 [online only]). Analysis of Higgins’s I² indicated no significant heterogeneity in intervention effects, and funnel plots showed no signs of publication bias. This pattern is mirrored in diverse educational domains, including education, psychology, and biology. In these fields, extended IPS instruction that is embedded throughout the curriculum significantly enhances a broad spectrum of IPS outcomes, from performance and self-evaluated skills to self-efficacy, when compared to no embedded IPS instruction (e.g., Brodsky et al., 2021; Fuselier et al., 2017; Ku et al., 2007). To illustrate, a target intervention involving 15 study hours of IPS instruction embedded within a teacher education course showed improvements in IPS performance, although the effects did not persist on a delayed post-test (Frerejean et al., 2019). Moreover, specialized additional IPS instruction—such as PICO training along with weekly clinical reasoning sessions embedded in an evidence-based medicine course—significantly enhances students’ IPS knowledge and self-assessed skills (Levesque et al., 2018). This contrasts with shorter embedded interventions, such as nursing students receiving a one-day IPS program and IPS assignments tailored to a nursing course, which lacked lasting impacts on IPS self-efficacy (Courey et al., 2006).
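As a plausibility check on the pooled estimate reported above, the standard error, z statistic, and p value can be recovered from the confidence interval. This sketch assumes a normal-based (Wald) 95% interval, which the text does not confirm; with only three studies, a t-based or adjusted interval would give somewhat different numbers. The function name is hypothetical.

```python
import math


def wald_check(estimate, ci_low, ci_high, z_crit=1.959964):
    """Back out SE, z, and a two-sided p value from a normal-based 95% CI."""
    se = (ci_high - ci_low) / (2 * z_crit)
    z = estimate / se
    # Two-sided p value under the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return se, z, p


# Values reported for extended versus one-shot IPS instruction.
se, z, p = wald_check(estimate=0.86, ci_low=0.64, ci_high=1.09)
print(f"SE = {se:.3f}, z = {z:.2f}, p < .001: {p < 0.001}")
```

Under that assumption, the interval width implies a standard error of roughly 0.11, which is consistent with the reported p < .001.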

In conclusion, theoretical arguments and empirical evidence suggest that extending instruction through increasing contact frequency and integrating IPS instruction within existing course content can improve IPS knowledge, skills, and performance. The combination of instruction that is extended over time and intertwined with domain-specific content seems especially effective. The summative evaluation of evidence in Figure S2 (online only) supports this principle’s effectiveness and potential for broad applicability in diverse contexts.

Modeling

Design principle 3: Provide opportunities for students to observe short fragments of peer-expert models, interspersed with instructional guidance, to increase IPS performance

Over half of the reviewed studies (n = 35) explicitly mention observing models of peers or experts as one of the key strategies to increase IPS knowledge (e.g., Cudiner & Harmon, 2001; Tewell, 2014), IPS performance (Frerejean et al., 2018; Mateos et al., 2018), and self-efficacy (Hostetler & Luo, 2021; Kelly, 2017). In the seven publications focusing on this aspect, Bandura & Walter’s (1977) social cognitive theory and Sweller’s (2006) cognitive load theory were commonly invoked to justify the use of behavioral modeling to promote IPS. According to social cognitive theory, observational learning from model demonstrations enhances the acquisition of complex skills, such as IPS, by revealing the underlying cognitive processes (e.g., Frerejean et al., 2018; Kelly, 2017). This insight enables learners to anticipate and navigate challenges in the IPS process, thereby strengthening self-efficacy beliefs (Kelly, 2017). Frerejean et al. (2018) further suggest that combining observational learning with practice and self-explanation prompts could enhance retention of IPS skills by automating the required skills independent of the model. According to cognitive load theory, modeling examples can help reduce cognitive load and facilitate the construction of knowledge schemas (e.g., Frerejean et al., 2018; Hostetler & Luo, 2021). By allowing learners to reallocate their cognitive resources from performing to understanding IPS tasks, behavioral modeling promotes both IPS competence and regulation of the IPS process (Mateos et al., 2018).

Regarding empirical evidence, studies can be broadly divided into two categories: those that use non-expert models, such as peers (Hostetler & Luo, 2021; Tewell, 2014), and those that use expert models, such as peers who demonstrate the task proficiently (Frerejean et al., 2016; Mateos et al., 2018) or librarians (Cudiner & Harmon, 2001; Kelly, 2017). Studies involving peer-expert videos that showed short fragments of each IPS process step, interspersed with reflective prompts (Frerejean et al., 2018) or explicit instruction (Mateos et al., 2018) and followed by practice, demonstrated a significant, medium to large, positive effect on IPS performance when compared to groups that only practiced. This positive effect persisted even after one week in a delayed posttest (Frerejean et al., 2018). However, Kelly (2017), who used librarian-expert videos, found no noticeable effect on self-efficacy toward IPS. The impact of non-expert models varied, showing either no effect on IPS knowledge (Hostetler & Luo, 2021) or a significant, large effect on understanding IPS concepts (Tewell, 2014). Notably, the modeling techniques used in these studies differed considerably: Tewell employed comedy excerpts on IPS-related topics, whereas Hostetler and Luo used direct in-class live peer demonstrations. This variance in the operationalization of modeling leads to reduced comparability between these studies. Coupled with the use of unvalidated outcome measures, their results on the effectiveness of non-expert models for teaching IPS should be approached with caution.

In conclusion, arguments grounded in social cognitive theory and cognitive load theory underscore the pivotal role of observational learning through modeling in fostering IPS competence and self-efficacy. Empirical evidence indicates positive effects from both non-expert and expert models. Nevertheless, peer-expert models show the most compelling evidence for improving IPS performance, especially when supplemented with instructional guidance. The summative evaluation in Figure S3 (online only) underscores this principle’s extensive applicability across diverse academic domains and countries, with a particular emphasis on first-year contexts.

Practice

Design principle 4: Provide opportunities for students to practice IPS in preparation for or following instruction or modeling to develop IPS knowledge, skills, and performance

Fifty-three of the reviewed publications adopted the opportunity to practice IPS as a crucial learning environment characteristic to improve IPS knowledge (e.g., K. Anderson & May, 2010; Fitzpatrick & Meulemans, 2011), IPS skills (e.g., Brooks, 2014; Ghali et al., 2000), and IPS performance (e.g., Frerejean et al., 2018; Leichner et al., 2014). Among the 18 publications focusing on practice in more detail, 14 deployed theoretical arguments to substantiate their approaches. These studies drew upon Vygotsky’s social constructivist theory (e.g., Engelmann et al., 2022; Fitzpatrick & Meulemans, 2011) and collaborative practice principles (e.g., Dolničar et al., 2017; Mateos et al., 2018) to assert that interactions with peers or the practice task promote active processing, fostering deep comprehension and internalization. Furthermore, proponents of the 4C/ID model (Van Merriënboer & Kirschner, 2018) (e.g., Argelagos et al., 2022; Frerejean et al., 2016) emphasized the benefits of part-task practice and repetition for routine IPS tasks to strengthen cognitive rules in long-term memory and free cognitive capacity for complex tasks. Moreover, a theme that repeatedly surfaced in the literature is the synergistic effect of combining deliberate practice with instruction or modeling examples to enhance skill retention and knowledge acquisition (e.g., Cudiner & Harmon, 2001; Frerejean et al., 2018; Wood et al., 2000). This argument is grounded in the premise that equipping students with effective strategies significantly amplifies the benefits of practice.

Empirical evidence strongly supports the combination of practice with other didactical interventions as crucial for enhancing IPS performance. Isolated practice, such as mere student-centered practice conducted in class, does not significantly enhance IPS knowledge of plagiarism compared to traditional instruction (Moniz et al., 2008). Yet, combining this hands-on practice with traditional lecture formats has been shown to be more effective. Out-of-class preparatory practice tasks performed before formal instruction significantly improve the ability to critique low-quality information (Lawson & Brown, 2018) as well as knowledge and skills in question formulation and search strategies (Brooks, 2014). Furthermore, in-class collaborative practice, especially when it follows tutor-supervised collaborative phases and self-study, yields large effects on IPS knowledge when compared to lecture-based learning (e.g., Dolničar et al., 2017), although this was not studied in isolation. Preparing students for practice with instruction and/or modeling demonstrations also results in medium to large improvements in IPS performance (Gruppen et al., 2005; C. Zhang, 2013) and medium effects on IPS knowledge (Cudiner & Harmon, 2001; Fitzpatrick & Meulemans, 2011) when compared to practice-only approaches. For instance, an intensive English program that combined traditional reading and writing practice with five instructional sessions significantly enhanced L2 English speakers’ synthesis writing skills, outperforming mere practice (C. Zhang, 2013). Additionally, the inclusion of short peer-expert modeling videos before engaging in practice enhances IPS performance (Frerejean et al., 2018; Mateos et al., 2018). In contrast, simultaneous hands-on practice during expert demonstrations appears less effective for knowledge of search strategies (Cudiner & Harmon, 2001), potentially due to cognitive overload, highlighting the importance of carefully timing and combining didactical interventions with practice for optimal IPS learning outcomes.

In conclusion, theoretical underpinnings, including Vygotsky’s social constructivist theory and principles of collaborative practice, indicate that interactive and deliberate practice fosters deeper understanding and better internalization of content. The empirical evidence substantiates these theoretical concepts but also highlights a caveat: engaging in IPS practice alone is insufficient. It is the combination of hands-on practice before or after lectures or modeling that is most effective for improving IPS knowledge and performance. The summative evaluation of evidence in Figure S4 (online only) demonstrates the broad applicability of this principle in diverse contexts.

Learning activities

Design principle 5: Ensure that learning activities actively engage students to increase short-term IPS knowledge and skills

Most of the reviewed studies (n = 46) used a form of active learning in the learning environment to encourage IPS knowledge (e.g., McCabe & Wise, 2009; Tewell & Angell, 2015), IPS skills (e.g., Hsieh et al., 2014; Moniz et al., 2008), IPS performance (e.g., Leichner et al., 2014; C. Zhang, 2013), and self-efficacy (e.g., Beile & Boote, 2004; Courey et al., 2006). Six studies provided a detailed examination of active learning, all referring to arguments grounded in educational theories. Recurring theoretical frameworks highlighted across these studies included social constructivism (Engelmann et al., 2022; Moniz et al., 2008), student-centered learning (e.g., Bonnet et al., 2018; Hsieh et al., 2014), and game-based learning (e.g., McCabe & Wise, 2009; Tewell & Angell, 2015). The central proposition of these studies was that active learning activities encourage active knowledge construction, leading to deeper internalization of knowledge, schema construction, and, ultimately, enhanced learning of IPS. Additionally, proponents of game-based active learning have argued that this pedagogical approach increases student engagement and motivation for IPS, leading to improved learning outcomes (McCabe & Wise, 2009; Tewell & Angell, 2015).

Empirical evidence supports the benefits of game-based and other types of active learning for IPS knowledge and skills. A meta-analysis of three independent studies involving 450 participants comparing game-based approaches to traditional IPS instruction reveals a small but significant improvement in short-term retention of IPS knowledge, specifically in information search, selection, and citation (Hedges’s g = 0.26, 95% CI [0.07–0.45], p = .007; see Table S5 and Figure S5 [online only]). Higgins’s I² indicated no significant heterogeneity in intervention effects, and funnel plots showed no signs of publication bias. These studies underscore the efficacy of both individual games, like Citation Tic Tac Toe, which focuses on IPS knowledge (McCabe & Wise, 2009; Tewell & Angell, 2015), and interactive group games, such as the “hot potato” game designed to spark discussions on resource credibility (Bonnet et al., 2018). Similarly, example-based learning, whether through explaining solutions to a partner or to oneself, significantly enhances students’ knowledge and skills in appraising scientific evidence (Engelmann et al., 2022). These findings suggest that the key to the effectiveness of active learning-based methods in IPS education lies in the active engagement they promote rather than the specific format of the activity (individual or group-based). However, the impact of interactive group exercises other than games varies. For instance, while a small group website evaluation exercise significantly improved source evaluation knowledge (Bonnet et al., 2018), the effects of role-playing exercises to simulate real-world plagiarism scenarios on knowledge of plagiarism were inconclusive (Moniz et al., 2008). These mixed outcomes hint at the possible influence of the concurrent presence of additional instructional strategies, such as modeling, within these learning activities. The role-playing exercise by Moniz et al. (2008), which did not incorporate modeling techniques, contrasts with the approach of Bonnet et al. (2018), who enriched interactive group activities with strategic source-finding demonstrations. This observation underscores the potential of applying active learning strategies and modeling in synergy to enhance IPS learning outcomes.

To conclude, different forms of active learning, including interactive exercises and game-based learning, have demonstrated noticeable effects on short-term IPS knowledge and skills as compared to traditional instruction. The theoretical arguments presented in the reviewed studies suggest that active learning facilitates active knowledge construction, leading to enhanced knowledge retention. As highlighted by the summative evaluation in Figure S6 (online only), this design principle demonstrates broad applicability, primarily drawing on evidence from one-shot sessions for undergraduate students.

Support

Design principle 6: Provide support both on the IPS task and the IPS process to enhance IPS performance

Over half of the reviewed studies (n = 35) incorporated instructional support into their learning environments as a key strategy to enhance IPS knowledge (Davis & Smith, 2009; Kavšek et al., 2016), IPS performance (e.g., Frerejean et al., 2016; Srisuwan & Panjaburee, 2020), and self-efficacy (e.g., Davis & Smith, 2009; Lawson & Brown, 2018). Among these studies, nine explicitly focused on the role of guided learning and support in enhancing IPS, particularly for complex tasks such as writing synthesis texts (e.g., Granado-Peinado et al., 2019, 2023; Luna et al., 2022; Mateos et al., 2018). Seven of these studies highlight two theoretical approaches to guide IPS learning. The first approach builds upon the writing instruction design principles by Rijlaarsdam et al. (2018) (e.g., Luna et al., 2020, 2022), advocating for explicit instructional support that clarifies underlying task processes to foster appropriate task representation, thereby enhancing self-regulation (Mateos et al., 2018). The second approach, emphasizing Ferretti & Lewis’s (2013) theory, posits that, especially for undergraduates, a scaffold that extends beyond mere explicit instruction is vital to acquire the self-regulation strategies needed to overcome the difficulties they face during the IPS process. Consequently, effective scaffolding of the IPS process entails a comprehensive pedagogical approach that includes discussion, modeling, and practice to promote self-regulation, understanding, and skill mastery (e.g., Granado-Peinado et al., 2023; Luna et al., 2020).

The empirical evidence can be categorized into studies focusing on task support (e.g., Luna et al., 2020; Long et al., 2016) and process support (e.g., Granado-Peinado et al., 2019, 2023). Task support on the linguistic aspects of an argumentative synthesis in an online learning environment significantly enhanced IPS performance as compared to mere practice (Luna et al., 2020). Conversely, a digital tool aimed at guiding health students in scientific literature appraisal to support evidence-based practice did not markedly improve self-assessed IPS skills as compared to mere practice (Long et al., 2016). This variance in the effectiveness of task support interventions underscores that simply providing tools may be insufficient; learners need task support, including explicit instruction and modeling, to be guided through the steps involved in IPS tasks. Regarding process support, evidence consistently shows a positive influence on IPS outcomes, where more support leads to better IPS performance. The inclusion of extensive process support, including explicit instruction with video modeling on processes in synthesis, has proven more effective than task support focusing on the final product (Luna et al., 2022). Meta-analyses of three independent studies involving 259 participants highlighted the added benefits of such extended process support over using just a written argument-mapping guide and reflective questions as a scaffold. The analyses revealed significant effects on both identifying arguments (Hedges’s g = 0.39, 95% CI [0.10–0.69], p = .009) and integrating arguments from conflicting sources (Hedges’s g = 0.65, 95% CI [0.40–0.90], p < .001), as shown in Table S5 and Figures S7 and S8 (online only). Funnel plots and Higgins’s I² showed no signs of publication bias or heterogeneity in intervention effects in either meta-analysis. Apart from whether support is focused on the task itself or the process involved, the timing of this support also seems to matter. Luna et al. (2022) found that providing process support during the synthesis writing process, as opposed to task support before task commencement, leads to better integration of conflicting arguments.

In conclusion, both theoretical perspectives and empirical evidence highlight the crucial role of task and process support in enhancing IPS performance, particularly for complex tasks such as synthesis writing. Providing extensive support during the IPS process appears most effective in enhancing IPS performance as it helps students identify and overcome problems that may arise. The summative evaluation in Figure S9 (online only) demonstrates both the broad applicability and strong empirical support for the effectiveness of this design principle across diverse contexts.

Assessment Strategies

Feedback

Design principle 7: Ensure timely feedback during IPS task performance and facilitate self-assessment to improve IPS knowledge, skills, and self-efficacy

Feedback on IPS is emphasized as a vital feature of an effective learning environment in 26 of the analyzed publications for developing IPS knowledge (e.g., Buhay et al., 2010; Petersohn, 2008), IPS skills (e.g., Catalano, 2015; Lerdpornkulrat et al., 2019), self-efficacy (Argelagos et al., 2022; Shaffer, 2011), and IPS performance (Luna et al., 2020; C. Zhang, 2013). The identified feedback types encompassed various sources of feedback, including computer-generated feedback (e.g., Germain & Jacobson, 2000; Gutierrez & Wang, 2001), peer feedback (e.g., S. M. Kim & Hannafin, 2016; Moniz et al., 2008), teacher feedback (e.g., Argelagos et al., 2022; Fitzpatrick & Meulemans, 2011), and self-assessment (Lerdpornkulrat et al., 2019). Six studies examined the role of feedback in depth, drawing from a range of theoretical perspectives. These studies emphasized the capacity of feedback to heighten engagement (e.g., Buhay et al., 2010; Petersohn, 2008), mitigate potentially detrimental emotions like boredom or frustration (Guo & Goh, 2016), and encourage the development of self-regulated learning habits (Lerdpornkulrat et al., 2019; Luna et al., 2020). In particular, the instantaneous evaluation of student understanding through digital feedback tools, such as clickers or learning management systems, allows educators to adjust their pedagogical strategies in real-time, thereby improving instructional efficacy and boosting learning outcomes (e.g., Buhay et al., 2010; Petersohn, 2008).

Despite the established potential of feedback, only five studies focused on its effect in isolation in the context of learning IPS. Among these, a meta-analysis of three independent studies involving 386 participants evaluated the impact of immediate whole-class feedback facilitated by digital response systems on short-term retention of knowledge of search strategies, revealing a small effect that approached significance when compared to traditional instruction (Hedges’s g = .22, 95% CI [−0.02–0.47], p = .07; see Table S5 and Figure S10 [online only]). However, the evidence is not robust enough to confirm its efficacy conclusively due to the marginally significant p-value and the moderate heterogeneity observed among the studies (I² = 33.6%). Delving into the formulation of feedback messages, Guo and Goh (2016) investigated computer-generated feedback within an educational library game, contrasting affective messages with neutral feedback messages. Although no significant gains were found regarding IPS knowledge, the affective feedback message significantly enhanced motivation, enjoyment, perceived usefulness, and behavioral intention. Further exploring these affective outcomes, Lerdpornkulrat et al. (2019) examined the influence of self-assessment rubrics on motivational goal orientation and engagement of first-year students in a literature assignment, observing no substantial impact. Yet, students trained to employ these self-assessment rubrics for in-class reviews of their work demonstrated enhanced self-efficacy and IPS skills compared to peers who relied solely on teacher feedback. Although not inherently motivating, self-assessment rubrics helped students to internalize quality expectations for academic work, thereby supporting students in regulating their own learning process and revising their assignments.
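To make the heterogeneity statistic reported above more concrete, the sketch below shows the standard relationship between Higgins’s I² and Cochran’s Q, and inverts it for the reported I² = 33.6% with three studies. Cochran’s Q is not reported in this section, so the recovered value is an inference under the assumption that I² was computed with the usual formula I² = (Q − df)/Q; the function names are hypothetical.

```python
import math


def i_squared(q: float, k: int) -> float:
    """Higgins's I² (in percent) from Cochran's Q and the number of studies k."""
    df = k - 1
    if q <= 0:
        return 0.0
    # Negative values are truncated to zero by convention.
    return max(0.0, (q - df) / q) * 100.0


def q_from_i_squared(i2_percent: float, k: int) -> float:
    """Invert the I² formula; only meaningful for 0 < I² < 100."""
    df = k - 1
    return df / (1.0 - i2_percent / 100.0)


# Reported values: I² = 33.6% across k = 3 studies (so df = 2).
q = q_from_i_squared(33.6, k=3)

# For df = 2 only, the chi-square survival function has the closed form exp(-Q / 2).
p_heterogeneity = math.exp(-q / 2.0)

print(f"Implied Q = {q:.2f}, heterogeneity p = {p_heterogeneity:.2f}")
```

Under these assumptions, the implied Q is about 3.01 on 2 degrees of freedom, which would not reach conventional significance in a test of heterogeneity. With only three studies, however, such a test has little power, which is one reason I² is usually interpreted descriptively.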

In summary, various feedback mechanisms play a crucial role in creating an effective learning environment for enhancing IPS competence through the theorized working mechanisms of promoting engagement, stimulating self-regulation, and enabling adaptive teaching strategies. Just-in-time feedback, whether computer-generated or obtained through self-assessment, has been shown to significantly improve IPS learning outcomes, including knowledge, skills, and self-efficacy. The summative evaluation in Figure S11 (online only) substantiates the applicability of this design principle across diverse educational domains, primarily drawing on evidence from one-shot sessions designed for first-year students.

Discussion

Summary of Findings

This systematic review has synthesized current scientific insights on developing IPS competence in higher education into a comprehensive set of seven interrelated design principles as visualized in the IPS-EDP model (Figure 5): (1) learning task, (2) instruction, (3) modeling, (4) practice, (5) learning activities, (6) support, and (7) feedback. These interrelated design principles are supported by conceptual arguments derived from theoretical frameworks and empirical evidence from the reviewed studies. Meta-analyses further substantiate the empirical validity of principles 2, 5, 6, and 7. Collectively, the seven identified design principles form a comprehensive, constructively aligned set, addressing intended learning outcomes (principle 1), teaching and learning activities (principles 2, 3, 4, 5, 6), and assessment strategies (principle 7). In contrast to general educational models for teaching complex competences, the IPS design principles are uniquely tailored to teaching and learning IPS in the context of higher education. They stand apart in two ways. First, they articulate specific design sub-characteristics based on empirical evidence from diverse educational settings in higher education. Second, the theoretical arguments underlying the design principles clarify the particular advantages of these characteristics for IPS learning. To summarize, the IPS-EDP model’s emphasis on specific design characteristics, supported by robust empirical and theoretical foundations, distinctly equips it to enhance IPS teaching and learning in higher education.

Figure 5. The IPS-EDP model of the seven interrelated educational design principles for developing information problem solving competence in higher education.

Implications

From the findings of this systematic review, we derive three main implications for higher education stakeholders: for researchers, the need for standardized design and reporting in IPS interventions; for practitioners, the limited impact of implementing design principles in isolation and the need for contextual adaptation; and for institutional policymakers, the indispensable role of institutional support.

For researchers, the findings point to a need for uniformity in the creation and documentation of IPS interventions. The observed diversity in IPS intervention designs, divergent operationalization of design characteristics, and unclear reporting significantly challenge the synthesis of research findings and the application of evidence-informed pedagogical strategies. By providing a concrete tool, the IPS-EDP model addresses these challenges, facilitating the standardization of design and reporting in IPS research and educational practice. It offers researchers a structured framework for reporting interventions, thereby enhancing the comparability, synthesis, and generalization of IPS research findings. This dynamic framework not only organizes current knowledge and practices but also highlights where further exploration and innovation are needed. It encourages future studies to refine these design principles and assess their effectiveness across various educational contexts. Considering these affordances, the IPS-EDP model enables a standardized methodology that improves the comparability of research outcomes and supports evidence-informed decision-making in educational practice.

For practitioners, the isolated implementation of the identified seven design principles within the IPS-EDP model is insufficient to effectively develop IPS competence. By contrast, applying multiple design principles in an integrated way strengthens their combined effectiveness. Empirical evidence demonstrates that integrating hands-on practice with explicit instruction or modeling is crucial for attaining optimal learning outcomes. Extending this argument, Frerejean et al. (2019) explicitly demonstrated that the integration of multiple design principles (embedded instruction and practicing authentic tasks supported by modeling examples and feedback) substantially improves IPS performance. In light of these findings, we encourage researchers, educators, and curriculum designers to apply the IPS design principles in tandem, ensuring that each principle complements and reinforces the others. In practice, this involves following the stages of constructively aligned course design (Biggs, 1996): (1) describe the intended learning outcomes; (2) create a conducive learning environment through appropriate teaching and learning activities; and (3) use assessment strategies that evaluate students’ performance. We recommend applying at least one design principle in each of these three steps to facilitate an effective, constructively aligned learning environment. We present an example to illustrate the application of the IPS design principles within each step. To begin, create authentic IPS learning tasks that align with students’ academic or professional goals. This will provide a clear foundation for the learning objectives. Next, design instruction, modeling of IPS processes, and engaging learning activities for the students to practice with IPS tasks. These activities will help concretize the teaching and learning process. Lastly, integrate (self-)assessment opportunities to reinforce and assess the learning process. Ultimately, thoughtfully combining the IPS design principles throughout the course design will optimize IPS teaching and learning.