Abstract

This article investigates youth judgments of the accuracy of truth claims tied to controversial public issues. In an experiment embedded within a nationally representative survey of youth ages 15 to 27 (N = 2,101), youth were asked to judge the accuracy of one of several simulated online posts. Consistent with research on motivated reasoning, youth assessments depended on (a) the alignment of the claim with one’s prior policy position and to a lesser extent on (b) whether the post included an inaccurate statement. To consider ways educators might improve judgments of accuracy, we also investigated the influence of political knowledge and exposure to media literacy education. We found that political knowledge did not improve judgments of accuracy but that media literacy education did.

Introduction

This study takes as a starting point the proposition that democracy works better when participants care about the accuracy of truth claims. This premise is hardly controversial. As Jennifer Hochschild and Katherine Levine Einstein (2015) write, “Almost no one disputes that citizens should acquire and use appropriate and accurate information when making political and policy choices” (p. 6). Indeed, the belief that accurate information will bolster democratic decision making and enable societal improvement is deeply embedded in the enlightenment paradigm, pragmatist beliefs, deliberative ideals, and other prominent conceptions of a strong, just, and productive democracy. The benefits of engagement with credible evidence stem from improving knowledge and understanding of issues, enabling assessment of varied viewpoints and policies, identification of one’s interests in relation to varied policies, and supporting formulation of more effective responses to societal issues (Delli Carpini & Keeter, 1996; Dewey, 1927; Mill, 1859/1956). Moreover, widespread use and circulation of misinformation can undermine the public’s ability to identify desirable policies, their desire to engage in politics, and their sense of the very legitimacy of democratic governance (see Delli Carpini & Keeter, 1996; Hochschild & Einstein, 2015). In short, there is a strong case for Daniel Patrick Moynihan’s oft-quoted statement that “Everyone is entitled to their own opinion, but not their own facts.”

Unfortunately, we often experience the kinds of political debates that frustrated Moynihan: ones in which highly partisan individuals and groups have their own facts. Studies show that Republicans and Democrats (or conservatives and liberals, depending on the study) differ in their beliefs about the fundamental facts that relate to many of the most important issues in recent years, including the Iraq War (Kull, Ramsay, & Lewis, 2003), income inequality (Bartels, 2009), and climate change (McCright & Dunlap, 2011). Moreover, these differences in beliefs regarding factual knowledge are not merely the product of innocent ignorance. Instead, the deliberate distribution of misinformation by some politicians, political organizations, and interest groups is common (Hochschild & Einstein, 2015; Lewandowsky, Ecker, Seifert, Schwarz, & Cook, 2012). In addition, partisan polarization among political officials in the United States has expanded dramatically over the past several decades (McCarty, Poole, & Rosenthal, 2006). And among the electorate, the affective ties associated with partisanship (the degree to which partisans dislike and impute negative traits to members of the other party) have also increased. To cite one stark example, in 1960, roughly 5% of Republicans and Democrats said they would be “displeased” if their child married someone from the other party. By 2010, these numbers had grown to 49% of Republicans and 33% of Democrats (Iyengar, Sood, & Lelkes, 2012). These trends, along with changes in the media environment such as the diminished role of gatekeepers and vastly expanded opportunities for circulation of both information and misinformation in the Digital Age, make exposure to inaccurate information both more common and more difficult to detect (Garrett, 2011; Lewandowsky et al., 2012; Rojecki & Meraz, 2016). As a result of these changes, the role of schools in promoting the capacity and commitment to use and identify accurate information will be ever more important in the Digital Age.

Judging Political Claims: An Educational Priority

Those pursuing the democratic purposes of schooling have long argued that educators should prepare youth to be informed about controversial issues, able to critically assess evidence and factual claims related to such issues, and able to judge and construct well-reasoned arguments (e.g., Merriam, 1934). As a means of pursuing these goals, civic education scholars have, for example, highlighted ways that controversial issue discussions can deepen understanding, promote political interest, and help students develop skills needed to craft well-warranted arguments (e.g., Campbell, 2008; Hess & McAvoy, 2015; Parker, 2006; Torney-Purta, 2002).

These educational priorities are also reflected in current educational standards and policies. Authors of the Common Core State Standards (CCSS), for example, write that evidence-based reasoning is “essential to both private deliberation and responsible citizenship in a democratic republic” (National Governors Association Center for Best Practices & Council of Chief State School Officers, 2010, p. 3). And the standards embedded in the CCSS attend to these concerns. Ninth and 10th graders, for example, are expected to demonstrate the ability to “identify false statements and fallacious reasoning” (p. 40).

Standard educational responses to these priorities often emphasize content knowledge and generic analytic abilities. However, a growing body of research demonstrates the limited value of knowledge and analytic abilities when it comes to making evidence-based judgments in highly partisan contexts. In particular, in a polarized environment, judgments of truth claims are often shaped more by whether or not individuals’ prior perspectives on the issue align with the claims than by how well informed the individuals are or their capacities to reason (Lavine, Johnston, & Steenbergen, 2012; Taber & Lodge, 2006).

Unfortunately, despite concern regarding these priorities, educational researchers have rarely examined whether and how school practices can counter these partisan biases. Since scholars from political science, psychology, and communications have done significant conceptual and empirical work on these issues (although they have not addressed the educational implications of their findings), we begin by reviewing that work. We then examine data from the Youth Participatory Politics (YPP) Survey, a large-scale nationally representative survey of youth ages 15 to 27, to examine the ways that prior beliefs shape judgment of the accuracy of political content and ways that actions by educators may influence these dynamics. Specifically, the survey includes an experiment that was designed to simulate the sorts of political content that circulate on social media. Participants were randomly assigned to read a post regarding economic inequality and tax policy that expressed either a conservative or a liberal perspective and that contained accurate or inaccurate information. They were then asked to rate the post’s accuracy. We assessed the degree to which youths’ judgments were influenced by the posts’ alignment with their prior political perspectives and whether the post contained misinformation. As detailed in the following, we found that youth tended to rate posts as “accurate” when the posts aligned with their prior views on the issue (irrespective of whether the post contained factual inaccuracies). In addition, we tested whether two potential supports (political knowledge and media literacy instruction) might help counteract these dynamics. In what follows, we discuss what we found and the implications of those findings for furthering the democratic aims of education.

Biased Judgments of Evidence and Arguments Are Common When It Comes to Controversial Issues

Social science research indicates that when individuals encounter highly partisan issues, numerous perceptual biases influence their judgments of truth claims. These biases also limit the degree to which individuals learn through exposure to information and from deliberation more generally. Scholars often emphasize the distinction between two fundamental motivations that affect the ways that individuals process information: directional motivation and accuracy motivation (Kunda, 1990; Taber & Lodge, 2006; for a review, see Druckman, 2012). When individuals are guided by directional motivation (the desire to justify conclusions that align with prior beliefs), information that is consistent with one’s prior preferences tends to be accepted uncritically and judged positively, whereas information that runs contrary to one’s preconceptions is subjected to greater scrutiny and judged less positively (Ditto, Scepansky, Munro, Apanovitch, & Lockhart, 1998). By contrast, when motivated by accuracy goals, “[Individuals] expend more cognitive effort on issue-related reasoning, attend to relevant information more carefully, and process it more deeply, often using more complex rules” (Kunda, 1990, p. 481).

Scholars find that directional motivation is especially common in the processing of political information. Prior research demonstrates that sociopolitical concepts are affect laden and that when they are invoked (even preconsciously), they trigger “hot cognition,” whereby these positive and negative feelings come to mind automatically and bias subsequent information processing (Lodge & Taber, 2005). This process of directional motivated reasoning leads individuals (regardless of ideological leaning) to seek out evidence that aligns with their preexisting views (confirmation bias), work to dismiss or find counterarguments for perspectives that contradict their beliefs (disconfirmation bias), and evaluate arguments that align with their views as stronger and more accurate than opposing arguments (prior attitude effect) (Kunda, 1990; Taber & Lodge, 2006). Moreover, rather than learning from exposure to new information, individuals who encounter new information that contradicts their prior perspective often become even more favorable to their prior beliefs (Redlawsk, 2002).

These dynamics related to directional motivation deserve careful attention from educators. They constrain individuals’ ability to learn, and in particular, they appear to limit learning from exposure to diverse viewpoints when it comes to politicized topics. Moreover, contrary to common assumptions, the biases associated with directional motivation are greatest among those with the most political knowledge. Taber and Lodge (2006) find that highly knowledgeable individuals—who possess the capacity to counterargue information and arguments that are contrary to their own views—tend to show a greater bias in favor of arguments consistent with their prior beliefs and take a longer time processing arguments that contradict their beliefs than they do arguments that align with their prior beliefs because the contradictory arguments are subjected to greater critical scrutiny.

At the same time, as Druckman (2012) notes, the dominance of directional motivation is not inevitable. A variety of contexts and interventions appear to foster accuracy motivation and diminish directional motivation. Such interventions and contexts include requiring individuals to justify their opinions, encouraging them to consider varied perspectives, and prompting reflection on one’s reasoning process. As we discuss in the following, these findings highlight priorities and norms that educators value and may have ways to promote.

Changes in the Media and Information Environment

As a result of changes in the news media environment, particularly the growing importance of cable TV and the Internet, it is now far easier for individuals to choose the source and partisan slant of the information and perspectives to which they are exposed (Prior, 2013). These changes have coincided with a dramatic increase in partisan polarization among both politicians and the electorate (Barber & McCarty, 2013). These dual dynamics make it far easier and more likely for individuals to act on confirmation biases and enter echo chambers (Sunstein, 2007) or be subjected to filter bubbles (Pariser, 2011) through which search engines guide exposure toward views and information that align with views and interests one already holds. And this process can create a reinforcing cycle. Studies show that exposure to views that align with one’s own fosters attitudinal polarization (Stroud, 2011). In sum, these changes in the media environment appear likely to increase individuals’ abilities to act in response to directional motivation and, by fostering more extreme partisan leanings, to increase the degree to which individuals’ judgments are driven by directional motivation.

Adding to these dynamics, a tremendous amount of political content now flows through social networking sites and other online platforms without being subject to vetting or credibility checks (Metzger, 2007). For example, an analysis of the 2011 YPP survey of youth ages 15 to 25 indicated that young people were roughly as likely to receive news on civic and political issues from Twitter and Facebook posts by family and friends as they were from newspapers and magazines read both online and offline (Cohen, Kahne, Bowyer, Middaugh, & Rogowski, 2012). In addition, the number of youth using social media to spread content is increasing dramatically. Pew Survey data indicate that the number of youth who posted political news on a social networking site grew from 13% in 2008 to 32% in 2012 (Rainie, Smith, Schlozman, Brady, & Verba, 2012; Smith, 2013).

Users are confronted with numerous challenges when assessing the credibility of the information accessed through these online sources of news, particularly given the widespread presence of political misinformation. Youth audiences evaluating a website’s credibility may rely on criteria such as the site’s surface characteristics (Sundar, 2008) or whether the site was the first result provided by a search engine (Hargittai, Fullerton, Menchen-Trevino, & Thomas, 2010). These heuristics for assessing the credibility of information sources are not adequate when evaluating political information. The combination of highly partisan sources, homophilous online networks, lessened influence of gatekeepers vetting the accuracy of truth claims, and the speed with which information can spread through digital media means that a great deal of political misinformation circulates online (Lewandowsky et al., 2012). Indeed, the prevalence of ideologically homophilous online networks can encourage the viral spread of politically motivated misinformation “among like-minded audiences who can now selectively attend to information based on their ideological beliefs and perspectives” (Rojecki & Meraz, 2016, p. 38). Moreover, as Lewandowsky et al. (2012) explain, both analytic and intuitive processing lead individuals to accept content that is consistent with their prior beliefs because it “feels right.” This can encourage both the acceptance of misinformation as true and the further spread of falsehoods through social media. Garrett (2011) finds that the effects of exposure to false rumors that circulated online during the 2008 presidential campaign were conditional on the political biases of those exposed to the rumors. Exposure to emails containing rumors about Barack Obama did not seem to affect the beliefs or behavior of supporters of the candidate; however, among nonsupporters, increased exposure was associated with a greater likelihood both of believing the false rumors about Obama and of forwarding the emails to others.

In addition, misinformation is particularly challenging because it is difficult to correct in the presence of directional motivated reasoning. Unlike the uninformed who are more likely to learn from exposure to new information, the misinformed are confident that they are correct, resist factually correct information, and use their misinformation to form their policy preferences (Kuklinski, Quirk, Jerit, Schwieder, & Rich, 2000). Furthermore, a “backfire effect” can occur where presenting the misinformed with correct information not only often fails to reduce their misperceptions but actually intensifies their commitment to their inaccurate “knowledge” (Nyhan & Reifler, 2010).

Directional motivated reasoning is not always problematic at the level of the individual. Individuals might be rational not to abandon prior perspectives to which they have given considerable prior thought and to be skeptical of new information that contradicts what they have already learned about an issue (Lodge & Taber, 2013). However, in the presence of misinformation, directional motivated reasoning has unambiguously negative implications for democratic deliberation. When individuals accept misinformation used to support policy arguments or, even worse, when they choose to trumpet that misinformation to justify their position on an issue, they may well lead others who are not aware that the information is inaccurate to adopt a position they would not otherwise hold. In addition, these actions may lead those who hold opposing views and who are aware that the claims being made are inaccurate to believe that those they disagree with are ignorant (perhaps willfully) and to doubt the likelihood of reasoned dialogue in a democracy.

Research Questions

Although educators value and work to promote attention to the accuracy of information and the quality of arguments, educational researchers have rarely conducted systematic studies of the degree to which youths’ partisan beliefs bias their judgments of arguments and of truth claims regarding controversial issues. As a result, we lack information regarding the degree to which particular educational experiences or educational outcomes influence the impact of partisan biases on judgments regarding politically charged arguments or truth claims. Educational researchers are, however, well positioned to undertake studies that address such concerns. Indeed, we see significant value in this research agenda. Such studies could provide much needed guidance to civic educators committed to supporting the oft-neglected democratic purposes of schools.

The study detailed in the following responds to this need. In particular, we conduct an experiment designed to test how directional motivation and accuracy motivation affect young people’s judgments of the sorts of truth claims made about political issues that circulate through social media. Next, we examine how two factors that educators could promote (students’ political knowledge and exposure to media literacy learning opportunities) might affect the degree to which directional and accuracy motivation shape students’ judgments of accuracy. Specifically, the experiment was designed to address the following research questions about the relative influence of directional and accuracy motivation:

Research Question 1: How does directional motivation influence assessments of truth claims?

Research Question 2: How does accuracy motivation influence assessments of truth claims?

Research Question 3: How are assessments of truth claims influenced by factors that schools can promote, such as students’ political knowledge and exposure to media literacy learning opportunities?

Research Question 3a: Do these factors influence the degree to which directional motivation shapes assessments of truth claims?

Research Question 3b: Do these factors affect the degree to which accuracy motivation affects assessments of truth claims?

Methods

Our examination of these questions draws on data from the Youth Participatory Politics Survey, a nationally representative survey of young people between the ages of 15 and 27 in the United States. Specifically, we embedded an experiment within this survey, which was administered by the GfK Group (formerly Knowledge Networks), a private company that maintains an online panel that is representative of the United States population. The sample for the YPP survey was drawn from two sources: GfK’s KnowledgePanel (KP)—a nationally representative, probability-based Internet panel 1 —and a supplemental Address-Based Sample (ABS) recruited specifically for this survey. The YPP survey included oversamples of African American, Asian American, and Latino youth, and the sampling frames were stratified by age and race. The KP was used to draw a direct sample of persons aged 18 to 27 as well as to draw a sample of parents with offspring between the ages of 15 and 27. From the latter group, the parent was asked to identify the race and ethnicity of each person aged 15 to 27 in the household, and if any individuals belonged to the target population and the parent consented to their participation, one eligible household member was asked to take the survey. 2

The ABS used the US Postal Service Delivery Sequence File as the sample frame. Selected households were sent a letter that described the study and invited one eligible household member to complete the survey online. The letter offered a monetary incentive for participation and provided a website address and unique password to complete the survey online. Initial nonresponders were sent a reminder postcard after about one week, and after about another two weeks, attempts were made to contact nonresponding households by telephone.

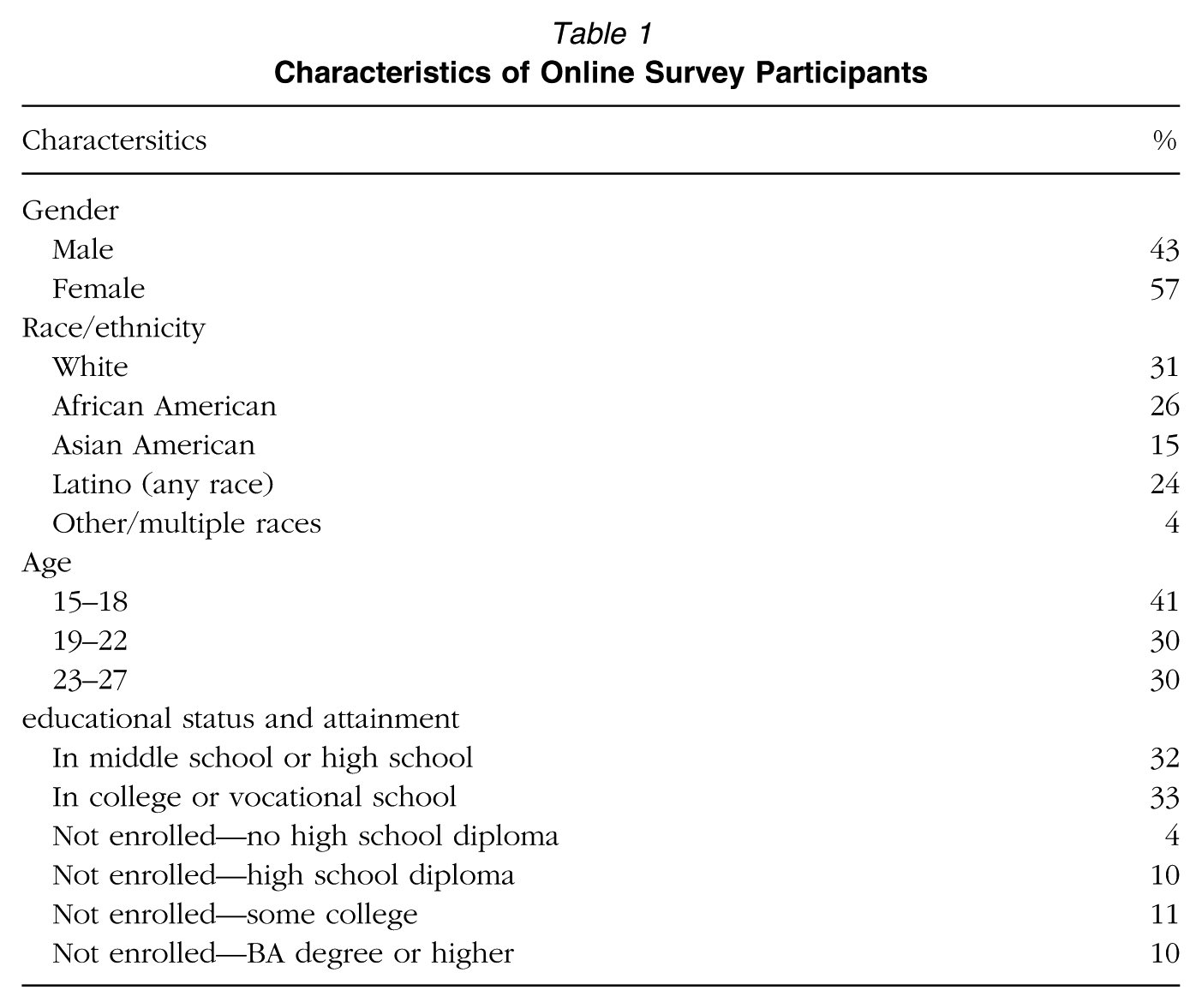

We analyze the responses of those participants who took the English-language survey online (N = 2,101). 3 Descriptive statistics, including demographic and educational variables, for this sample are reported in Table 1. 4 The median respondent completed the online survey in 30 minutes.

Table 1. Characteristics of Online Survey Participants

Experimental Design

In the midst of a survey about their online activity and political participation, respondents were exposed to the experimental treatment. This treatment was designed by the authors to incorporate relevant features of content that circulates online through social media, a major source of political information for young people (Cohen et al., 2012; Media Insight Project, 2015). We piloted the treatment to ensure that students were answering the questions in a manner consistent with the purpose of the items. 5

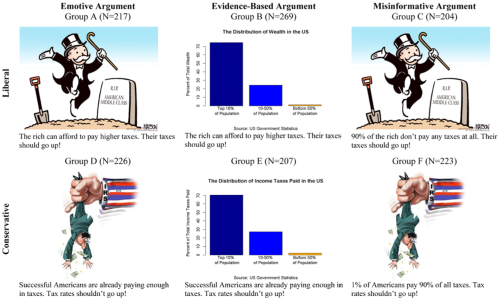

Survey participants were randomly assigned to see one of six “posts”—a picture (either a graph or a political cartoon) accompanied by a short comment—on the topics of economic inequality and tax policy. Figure 1 displays each of the posts as well as the number of participants assigned to each group, which ranged from 204 in Group C to 269 in Group B. 6 These topics were prominent and polarizing issues in political discussions at the time, following the 2011 Occupy movement and 2012 Presidential campaign.

Figure 1. “Posts” seen by experimental groups by ideology and type of argument.

As shown in Figure 1, these posts were manipulated to vary along two dimensions: political ideology 7 and the type of argument employed. By varying these two aspects of the posts simultaneously, we are able to gauge the relative influences of directional motivation and accuracy motivation on participants’ reactions. The comments were designed to be equivalent in style (two short, declarative sentences) while presenting opposite positions. Each comment was accompanied by a visual (graph or cartoon) that might be deployed by a supporter of that policy position. Participants assigned to Groups A, B, and C read a comment that presented a liberal position on the tax rates of wealthy individuals (the second sentence of the comment read, “Their taxes should go up!”). Those assigned to Groups D, E, and F were exposed to a conservative argument (the sentence read, “Tax rates shouldn’t go up!”).

In addition to their ideological position, the posts also varied in the type of argument that they employed. Groups A and D saw a post that was designed to present a subjective, emotive appeal without any empirical evidence. Those participants in the liberal condition (A) read, “The rich can afford to pay higher taxes,” while those in the conservative condition (D) read, “Successful Americans are already paying enough in taxes.” Both statements are inherently subjective and are phrased in such a way that supporters of the policy stance would be likely to accept the assertion while opponents would not. The statements were paired with a cartoon that was consistent with the opinion expressed in the post. Specifically, Group A viewed a cartoon of Mr. Monopoly dancing on the grave of the “American middle class,” and Group D saw a man being shaken upside down by the IRS. Neither cartoon presents evidence on behalf of the policy position; instead, both present emotive appeals on behalf of the purported victims of economic inequality (Condition A) or tax policy (Condition D). Both cartoons are characteristic of the kinds of cartoons used on the Internet to illustrate a perspective.

Groups B and E read the same two sentences as did Groups A and D, respectively, but the post that they viewed contained a graph rather than a cartoon. These two groups were presented with graphs containing accurate data that were constructed to be as equivalent as possible while presenting facts that were commonly cited by liberals (in Condition B) or by conservatives (in Condition E). “The Distribution of Wealth in the US” graph seen by Group B was based on data from the U.S. Census Bureau’s Current Population Survey, and “The Distribution of Income Taxes Paid in the US” seen by Group E was based on data from the Internal Revenue Service: Both were attributed to “US Government Statistics” in the graph to be consistent across conditions without being inaccurate. The two particular statistics were chosen because their distributions are remarkably similar: 10% of the U.S. population possess about 70% of the total wealth, and 10% pay about 70% of federal income taxes. All other details of the two graphs (e.g., color schemes and fonts) are identical. The main difference between these posts and those seen by Groups A and D is that while the latter provided strictly emotive arguments, the arguments viewed by Groups B and E were supported by evidence.

The final pair of experimental conditions was designed to test whether the presence of misinformation affects young people’s judgments of the accuracy of truth claims. Participants in Groups C and F viewed the same cartoons as did Groups A and D, respectively. However, instead of the subjective statements read by Groups A and D, the first sentences of the posts read by Groups C and F presented as fact a claim that was objectively false. Group C read that “90% of the rich don’t pay any taxes at all,” and Group F read that “1% of Americans pay 90% of all taxes.” Not only are these statements inaccurate, they are wildly so, erring by orders of magnitude, not mere percentage points. 8 Thus, the purpose of these two conditions is not to test young people’s knowledge of the details of the tax distribution but rather to see whether their judgments are influenced by inclusion of a substantial falsehood. 9 Overall, then, the six experimental conditions provide a liberal and conservative version of three types of arguments: an evidence-based argument, an emotive opinion statement, and an opinion backed by misinformation.
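The two-by-three design described above can be summarized as a small lookup table. The following is a minimal sketch in Python, not the authors' materials: the group letters and their attributes come from the text, while the names `Condition`, `CONDITIONS`, and `matched_pairs` are hypothetical labels introduced here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    """One experimental post (hypothetical container, not from the study)."""
    ideology: str  # "liberal" or "conservative"
    argument: str  # "emotive", "evidence", or "misinformation"
    visual: str    # "cartoon" or "graph"


# Group letters and attributes follow the description of Figure 1 in the text.
CONDITIONS = {
    "A": Condition("liberal", "emotive", "cartoon"),
    "B": Condition("liberal", "evidence", "graph"),
    "C": Condition("liberal", "misinformation", "cartoon"),
    "D": Condition("conservative", "emotive", "cartoon"),
    "E": Condition("conservative", "evidence", "graph"),
    "F": Condition("conservative", "misinformation", "cartoon"),
}


def matched_pairs(conditions):
    """Group the letters that share an argument type but differ in ideology."""
    pairs = {}
    for group, cond in sorted(conditions.items()):
        pairs.setdefault(cond.argument, []).append(group)
    return pairs


print(matched_pairs(CONDITIONS))
```

Laid out this way, each argument type has exactly one liberal and one conservative version, which is what allows ideology to be varied while the type of argument is held constant.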

Dependent and Independent Variables

Judgment of Accuracy

Respondents’ judgment of the accuracy of the post they saw is our dependent variable. Immediately after viewing the post, participants were asked to rate the accuracy of the comment. Specifically, respondents placed themselves on a 4-point scale from strongly disagree to strongly agree regarding the statement, “I think this comment is accurate.” In summarizing psychological studies of motivated reasoning in a variety of contexts, Ditto et al. (1998) conclude that research consistently demonstrates that “information one wants to believe is perceived as more valid or accurate than information one prefers not to believe” (p. 54).

Alignment With Political Beliefs

To operationalize whether a post aligned with a participant’s prior beliefs, we considered the ideology of the post in conjunction with participants’ responses to a question about the appropriate role for the government in reducing income inequality. This question, administered in the survey prior to the experiment, asked participants to place themselves along a scale according to their beliefs about what the “government in Washington” should do to address income differences, ranging from 1 (the government ought to reduce the income differences between rich and poor) to 7 (the government should not concern itself with reducing this income difference between the rich and the poor). 10 Those participants who placed themselves on the liberal end of the scale (points 1–3, N = 1,001) were coded as “ideologically aligned” if they were assigned to Groups A, B, or C and as “ideologically unaligned” if they were assigned to Groups D, E, or F. Participants who placed themselves at the conservative end of the attitude scale (points 5–7, N = 545) were coded as “ideologically unaligned” if they were assigned to Groups A, B, or C and as “ideologically aligned” if they were assigned to Groups D, E, or F. Respondents who placed themselves at the midpoint of the scale (4, N = 524) were excluded from the analyses as the hypotheses regarding the effects of ideological alignment do not apply to individuals who are ambivalent on the issue.
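The coding rule in this paragraph can be expressed as a short function. This is a sketch under stated assumptions: the cut points (1–3 liberal, 4 excluded, 5–7 conservative) and the group assignments are taken from the text, while the function name `code_alignment` and the use of `None` to mark excluded respondents are illustrative choices, not part of the original analysis.

```python
from typing import Optional

LIBERAL_GROUPS = {"A", "B", "C"}       # posts arguing that taxes should go up
CONSERVATIVE_GROUPS = {"D", "E", "F"}  # posts arguing that tax rates should not go up


def code_alignment(scale_position: int, group: str) -> Optional[str]:
    """Return "aligned", "unaligned", or None for midpoint respondents.

    scale_position is the 1-7 self-placement on the income-differences item
    (1 = government ought to reduce differences, 7 = should not concern itself).
    """
    if not 1 <= scale_position <= 7:
        raise ValueError("scale_position must be between 1 and 7")
    if group not in LIBERAL_GROUPS | CONSERVATIVE_GROUPS:
        raise ValueError("group must be one of A-F")
    if scale_position == 4:
        return None  # midpoint respondents are excluded from the analyses
    respondent_is_liberal = scale_position <= 3
    post_is_liberal = group in LIBERAL_GROUPS
    return "aligned" if respondent_is_liberal == post_is_liberal else "unaligned"


print(code_alignment(2, "B"))  # liberal respondent, liberal post
print(code_alignment(6, "B"))  # conservative respondent, liberal post
```

Returning `None` for the midpoint keeps the exclusion explicit, so that a later analysis step has to drop those respondents deliberately rather than silently.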

Nature of Argument

As described previously and shown in Figure 1, the experimental conditions also varied the nature of the argument employed in the post. We classify respondents according to the experimental group to which they were assigned. Namely, participants assigned to Groups A or D were exposed to an emotive argument, those assigned to Groups B or E saw an evidence-based argument, and those in Groups C or F read an argument with misinformation. In the multivariate analyses, these groupings are operationalized as dummy variables, with the misinformative argument serving as the baseline condition.
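Dummy coding with misinformation as the baseline means that two indicator variables are created and that respondents in Groups C or F score zero on both. A minimal sketch follows; the variable names `emotive` and `evidence` and the function `argument_dummies` are hypothetical, and the model in which the authors used these indicators is not reproduced here.

```python
# Argument type for each group, as described in the text.
ARGUMENT_BY_GROUP = {
    "A": "emotive", "D": "emotive",
    "B": "evidence", "E": "evidence",
    "C": "misinformation", "F": "misinformation",
}


def argument_dummies(group: str) -> dict:
    """Return two 0/1 indicators; misinformation is the omitted baseline."""
    argument = ARGUMENT_BY_GROUP[group]
    return {
        "emotive": int(argument == "emotive"),
        "evidence": int(argument == "evidence"),
    }


for g in "ABC":
    print(g, argument_dummies(g))
```

With this coding, a coefficient on either indicator would be read as a difference relative to the misinformation condition.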

Political Knowledge

We measure political knowledge by summing the number of correct responses a participant gave on a battery of three questions about the American political system. These three items are a subset of the battery of items shown by Delli Carpini and Keeter (1996) to be a reliable and valid measure of general political knowledge and sophistication. 11 This measure has also been shown to be strongly related to awareness of current events (Price & Zaller, 1993) and stability in political attitudes (Zaller, 1990). Thus, our measure of political knowledge serves as a proxy for being politically informed in general, not just a measure of knowledge of specific political facts.
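The knowledge measure is a simple count of correct answers, which yields a score from 0 to 3. The sketch below illustrates that scoring. The answer key shown is a placeholder (the actual items are not given in this section), and treating a skipped item as incorrect is an assumption made here, not a documented rule of the survey.

```python
# Placeholder key for three multiple-choice items; the real items and
# correct answers are not given in this section (hypothetical values).
HYPOTHETICAL_KEY = ["a", "c", "b"]


def political_knowledge_score(answers, answer_key=HYPOTHETICAL_KEY):
    """Count correct responses; None (skipped) is treated as incorrect (assumption)."""
    if len(answers) != len(answer_key):
        raise ValueError("one answer is required per item")
    return sum(
        1
        for given, correct in zip(answers, answer_key)
        if given is not None and given == correct
    )


print(political_knowledge_score(["a", "c", "d"]))
```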

Media Literacy Learning Opportunities

Given the increasing difficulty of judging political claims—especially online—many are advocating increasing provision of civic media literacy education (Hobbs, 2010). Our focus in this study is on media literacy learning opportunities that aim to promote accurate judgment of truth claims. Exposure to media literacy learning opportunities that aim to promote such accurate judgment is measured by a scale created by summing responses to two questions asking respondents to recall their experiences in school. Respondents were asked how often they had “Discussed how to tell if the information you find online is trustworthy” and “Discussed the importance of evaluating the evidence that backs up people’s opinions” in their classes. 12 These experiences tap into two primary ways by which an accuracy motivation might be instilled through media literacy education: by cultivating skills for judging accuracy and developing commitment to a norm of accuracy.

Hypotheses

The first phase of our study addresses our first two research questions regarding the relative effects of directional motivation and accuracy motivation on individuals’ judgments of accuracy. We examine these relationships by comparing accuracy ratings across the different experimental conditions. Specifically, in order to assess the influence of directional motivation, we compare the responses of participants who saw an ideologically aligned post to those of participants who were presented an ideologically unaligned post, controlling for the type and quality of argument employed in the post. This approach of varying the partisan or ideological direction of an argument while holding the quality of argument constant is consistent with other studies of motivated reasoning (Taber & Lodge, 2006). Since the posts in each pair of experimental conditions (A and D, B and E, C and F) are equivalent in their accuracy, the difference in ratings between the ideologically aligned and unaligned conditions reflects the effects of directional motivation. If participants are guided by directional motivation, then we hypothesize (Hypothesis 1) that those participants assigned to an ideologically aligned post will be more likely to judge the post as accurate than those who were assigned to a post that did not align with their predispositions (holding constant the kind of argument being made).

To assess the impact of accuracy motivation, we analyze the responses of participants who were assigned to an ideologically aligned condition only. By restricting this analysis to ideologically aligned conditions, we hold constant the influence of directional motivation. While the effect of directional motivation should be the same in all three ideologically aligned conditions, the effect of accuracy motivation should vary by the type of argument employed in the post. In particular, it is in the experimental conditions involving an ideologically aligned post that contains misinformation that directional motivation and accuracy motivation are manipulated to be at odds with one another. For liberals, this occurs if they were assigned to Group C, and for conservatives, it occurs if they were assigned to Group F. In these conditions, individuals who possess the will and capacity to act on accuracy motivation would be expected to identify their assigned post’s comment as being inaccurate in spite of their partisan motivation to agree with its policy stance. By contrast, the posts seen by Groups A, B, D, and E are subjective enough that someone who agreed with their point of view might be able to make a reasonable case for why they are accurate. 13 Consequently, we would expect (Hypothesis 2) that participants exposed to an ideologically aligned post will be less likely to rate the post as accurate if they were assigned to a misinformative post than if they were assigned to an evidence-based or emotive post.

After testing the main effects of the experimental manipulations, we turn our attention to the questions of how the educational characteristics and experiences of individual participants may influence these dynamics. We examine whether the relative effects of directional motivation and accuracy motivation on judgments of accuracy vary according to two characteristics of the participants: political knowledge and exposure to media literacy education. Prior research (e.g., Taber & Lodge, 2006) leads us to expect that the effects of directional motivation will be greatest for those individuals who possess the most political knowledge. Consequently, we expect that (Hypothesis 3) there will be an interaction effect between political knowledge and being exposed to an ideologically aligned post. Specifically, we expect that the difference in the accuracy ratings of participants who are assigned to an ideologically aligned post and those assigned to an unaligned post will be greater for participants with high levels of political knowledge than it is for participants with less political knowledge. In particular, since those individuals with more political knowledge “possess greater ammunition with which to counterargue incongruent facts, figures, and arguments” (Taber & Lodge, 2006, p. 757), we expect that participants with high levels of political knowledge will be less likely to rate ideologically unaligned posts as accurate than participants with less knowledge.

Similarly, media literacy learning opportunities may help students better understand the meaning of political communications. To the extent that this is true, individuals who received instruction in media literacy will be more likely to identify whether a political message to which they are exposed is consistent with their prior beliefs. Thus, we expect that (Hypothesis 4) there will be an interaction effect between media literacy learning opportunities and whether the post is ideologically aligned.

There are reasons to expect that the relative effect of accuracy motivation on judgments of the posts’ accuracy will be conditional on both an individual’s political knowledge and media literacy learning opportunities. Conventional wisdom would lead one to expect that those with more political knowledge would tend to have greater capacity to recognize if a post contained factual inaccuracies and to be able to make a judgment based on accuracy motivation. Thus, we hypothesize (Hypothesis 5) that there will be an interaction effect between political knowledge and the presence of misinformation in a post. We expect that highly knowledgeable individuals will be less likely to judge posts that contain misinformation as accurate than they are to judge the other posts as accurate. Moreover, we expect that highly knowledgeable individuals will be less likely to judge posts that contain misinformation as accurate than are individuals with less knowledge.

The central rationale for media literacy instruction is that it should increase individuals’ attentiveness to the accuracy of information (a norm of valuing accuracy) as well as the skills to assess accuracy. Thus, we hypothesize (Hypothesis 6) that there will be an interaction effect between media literacy learning opportunities and the presence of misinformation in a post. We expect that those youth who report having received the most media literacy learning opportunities will be less likely to judge posts containing misinformation as accurate relative to the posts without misinformation. In addition, we expect that those individuals with more media literacy education will be less likely to judge posts containing misinformation as accurate than are youth who did not receive any such learning opportunities.

Statistical Methods

To investigate the first research question regarding how directional motivation influences judgments of accuracy, we compare the average responses of participants exposed to an ideologically aligned post with those who were assigned to an unaligned post, holding constant the type and quality of argument. To address the second research question about the influence of accuracy motivation, we restrict our attention to those participants assigned to an ideologically aligned condition and compare the average responses across the three different argument types. For both of these analyses, responses to the question about whether the post is accurate are collapsed into a dummy variable (coded 0 for strongly disagree or disagree and 1 for agree or strongly agree). We employ t tests to evaluate whether there are statistically significant differences in the means of this dummy variable across the experimental groups. 14
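The two steps described here, collapsing the rating and comparing group means, can be sketched as below. This is an illustration under stated assumptions: the 1 to 4 numeric coding of the rating is assumed, the Welch (unequal-variance) form of the t statistic is one common choice and the text does not say which variant was used, and the ratings are made up rather than drawn from the study's data.

```python
import math
from statistics import mean, variance


def agrees(rating: int) -> int:
    """Collapse a 4-point rating to 0/1.

    Assumed coding: 1 = strongly disagree, 2 = disagree,
    3 = agree, 4 = strongly agree.
    """
    if rating not in (1, 2, 3, 4):
        raise ValueError("rating must be an integer from 1 to 4")
    return 1 if rating >= 3 else 0


def welch_t(sample_a: list[int], sample_b: list[int]) -> float:
    """Two-sample t statistic allowing unequal variances (assumed variant)."""
    standard_error = math.sqrt(
        variance(sample_a) / len(sample_a) + variance(sample_b) / len(sample_b)
    )
    return (mean(sample_a) - mean(sample_b)) / standard_error


# Invented ratings for illustration only.
aligned = [agrees(r) for r in [4, 3, 3, 2, 4, 3, 1, 3]]
unaligned = [agrees(r) for r in [2, 1, 3, 2, 1, 4, 2, 2]]
t_stat = welch_t(aligned, unaligned)
```

Because the outcome is a 0/1 indicator, each group mean is simply the proportion of participants who agreed that the post was accurate.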

To investigate the third research question regarding whether the effects of directional motivation and accuracy motivation on judgments of accuracy are conditional on political knowledge and media literacy learning, we estimate a series of multivariate models that include interactions between the experimental conditions and, respectively, political knowledge and media literacy learning opportunities. In these analyses, we use the full range of the four-category dependent variable, and since this is an ordinal variable, we employ ordered logit regression.

Specifically, Models I through III evaluate the hypotheses about the effects of directional motivation on accuracy ratings. Models I and II are estimated as preliminary steps prior to the tests of the conditional hypotheses. Model I includes a dummy variable to capture the effects of the ideological alignment of the post as well as controls for the type of argument employed in the post and demographic variables. 15 Model II adds political knowledge and media literacy learning opportunities. Model III provides our tests of Hypotheses 3 and 4 by adding interaction terms between political knowledge and media literacy learning opportunities, respectively, and the ideological alignment of the post.
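For readers who prefer notation, Model III can be written in the standard proportional-odds form of the ordered logit. The symbols below are our own shorthand rather than the article's: $Y_i$ is the four-category accuracy rating, $\tau_j$ are the cutpoints, and $\mathbf{x}_i$ collects the argument-type dummies and demographic controls.

```latex
\begin{align}
\Pr(Y_i \le j) &= \frac{1}{1 + \exp\!\bigl(-(\tau_j - \eta_i)\bigr)}, \qquad j = 1, 2, 3, \\
\eta_i &= \beta_1\,\mathrm{Aligned}_i + \beta_2\,\mathrm{Know}_i + \beta_3\,\mathrm{MediaLit}_i \\
&\quad + \beta_4\,(\mathrm{Aligned}_i \times \mathrm{Know}_i)
      + \beta_5\,(\mathrm{Aligned}_i \times \mathrm{MediaLit}_i)
      + \mathbf{x}_i^{\top}\boldsymbol{\gamma}
\end{align}
```

In this notation, Hypothesis 3 concerns $\beta_4$ and Hypothesis 4 concerns $\beta_5$. Model II corresponds to setting $\beta_4 = \beta_5 = 0$, and Model I additionally drops the $\mathrm{Know}_i$ and $\mathrm{MediaLit}_i$ terms.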

A similar logic is applied in Models IV through VI, which test the hypotheses regarding accuracy motivation, though these models are estimated for only those participants assigned to a pro-attitudinal condition. Model IV includes the dummy variables for the type of argument used in the post and control variables only, and Model V adds political knowledge and media literacy learning opportunities. Model VI includes the interaction terms between political knowledge and media literacy learning opportunities, respectively, and the type of argument dummy variables, in order to test Hypotheses 5 and 6.

Results

Directional Motivation and Judgments of Truth Claims

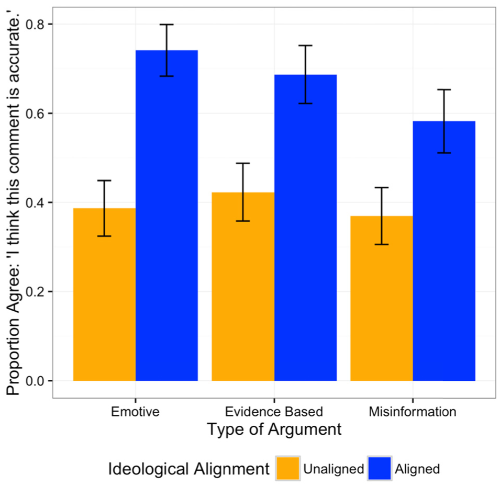

To investigate the first research question regarding how directional motivation influences judgments of accuracy, we compare the responses of participants exposed to an ideologically aligned post with those who were assigned to an unaligned post, holding constant the type and quality of argument. Figure 2 displays the proportion of participants who agreed that the post’s comment was accurate by the type of argument used in the post and whether the post was ideologically aligned with the participants’ prior attitudes. Comparing the average accuracy ratings of aligned posts and unaligned posts for each argument type, we find strong support for Hypothesis 1. On average, 67% of participants exposed to a post that aligned with their prior political views characterized the post as accurate compared to 39% of those who saw a post that did not align with their prior political perspective. Across all three types of arguments, there is a large and statistically significant difference (p < .001 for a two-tail t test) between those assigned to an ideologically aligned condition and those assigned to an unaligned condition: For all three argument types, a majority of participants assigned to an ideologically aligned post agreed that the statement was accurate while a majority of participants assigned to an unaligned post disagreed. 16 Thus, consistent with prior research, directional motivation appears to have a substantial effect on judgments of accuracy.

Figure 2. Ratings of post’s accuracy by ideological alignment and type of argument.

Accuracy Motivation and Judgments of Truth Claims

Comparing across the three different argument types in the ideologically aligned condition, we also find evidence of the influence of accuracy motivation as articulated in Hypothesis 2. Participants who saw an ideologically aligned post were less likely to rate the post as accurate if it contained misinformation. Fifty-eight percent of those who saw an ideologically aligned post that contained misinformation (Groups C and F) agreed that the post was accurate compared to 74% who saw an aligned emotive post (Groups A and D) and 69% of those who saw an aligned, evidence-based post (Groups B and E). Both of these differences between the misinformative posts and the two other conditions are statistically significant (p < .001 for a two-tail t test of the difference between the evidence-based and misinformation conditions; p = .03 for a two-tail test of the difference between the emotive-based and misinformation conditions). 17

Effects of Political Knowledge and Media Literacy Learning Opportunities on Judgments of Truth Claims

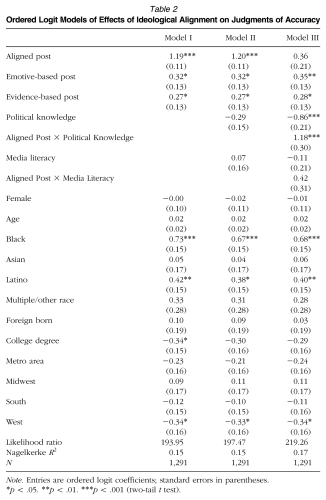

Having demonstrated that directional motivation and accuracy motivation appear to influence participants’ judgments of accuracy, on average, we turn to the third research question, which asks whether these effects of directional motivation and accuracy motivation are conditional on factors that schools can promote: political knowledge and exposure to media literacy education. The ordered logit regression models reported in Table 2 estimate the effects of ideological alignment on accuracy ratings, with Model III providing the tests of whether directional motivation is conditional on political knowledge and media literacy learning. The results of Model III are supportive of Hypothesis 3’s prediction that directional motivation will have a greater influence on those participants with the most political knowledge. Those with more knowledge are more likely than others to judge posts that align with their prior beliefs as accurate (regardless of the posts’ actual accuracy). The main effect of political knowledge is negative and statistically significant, indicating that in the ideologically unaligned conditions, greater political knowledge is associated with a lower likelihood of agreeing that the comment is accurate. In addition, the interaction between being assigned to an ideologically aligned post and political knowledge is positive and statistically significant, consistent with the hypothesis that the effects of directional motivation are greatest among those with the most political knowledge.

Table 2. Ordered Logit Models of Effects of Ideological Alignment on Judgments of Accuracy

Note. Entries are ordered logit coefficients; standard errors in parentheses.

*p < .05. **p < .01. ***p < .001 (two-tail t test).

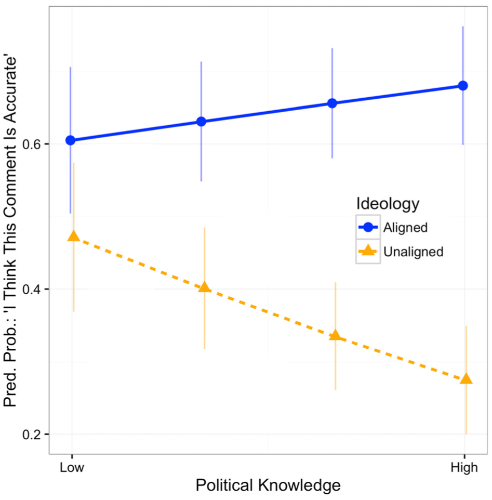

These conditional effects are illustrated in Figure 3, which plots the predicted probability of agreeing that the comment is accurate by the participants’ level of political knowledge. This predicted probability is calculated separately for those participants assigned to an ideologically aligned condition and those assigned to an unaligned condition, with all other variables included in Model III held constant. Political knowledge clearly has a large effect in the unaligned conditions. For an otherwise typical participant assigned to view an unaligned post, the predicted probability of agreeing that the comment is accurate declines from .47 for someone at the low end of the political knowledge spectrum to .27 at the high end. In the ideologically aligned condition, however, the difference in probabilities across the range of political knowledge is small (.60 at the low end to .68 at the high end) and not statistically significant. Put differently, among those with low levels of political knowledge, we see a modest difference between the ideologically aligned and unaligned conditions (.60 and .47, respectively). By contrast, among those with high levels of knowledge, we see a large gap between the aligned and unaligned conditions (.68 and .27, respectively).

Figure 3. Accuracy ratings by ideological alignment and political knowledge.
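The sketch below shows how predicted probabilities of this kind are obtained from an ordered logit with an alignment-by-knowledge interaction. All coefficient values and the cutpoint are invented for illustration; they are not the Table 2 estimates, and the function names are hypothetical. Controls are treated as held constant and absorbed into the cutpoint.

```python
import math


def prob_agree(eta: float, cut_agree: float) -> float:
    """P(rating is agree or strongly agree) under an ordered logit.

    cut_agree is the cutpoint separating 'disagree' from 'agree'.
    """
    return 1.0 / (1.0 + math.exp(-(eta - cut_agree)))


def linear_predictor(aligned: int, knowledge: int,
                     b_aligned: float, b_know: float, b_interact: float) -> float:
    """Linear index with an alignment-by-knowledge interaction."""
    return b_aligned * aligned + b_know * knowledge + b_interact * aligned * knowledge


# Invented coefficients: a negative main effect of knowledge and a
# positive interaction, matching only the signs reported for Model III.
COEFS = dict(b_aligned=0.5, b_know=-0.3, b_interact=0.4)
CUT = 0.0

predicted = {
    (aligned, knowledge): prob_agree(
        linear_predictor(aligned, knowledge, **COEFS), CUT
    )
    for aligned in (0, 1)
    for knowledge in (0, 3)
}
gap_low = predicted[(1, 0)] - predicted[(0, 0)]
gap_high = predicted[(1, 3)] - predicted[(0, 3)]
```

With a negative main effect and a positive interaction, the gap between aligned and unaligned conditions widens as knowledge increases, which is the qualitative pattern the figure displays.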

By contrast, the findings for Model III do not support Hypothesis 4. Neither the estimated effect of media literacy nor the interaction effect between ideological alignment and media literacy is statistically significant. This indicates that the effects of motivated reasoning are not conditional on the amount of media literacy education that an individual received. 18

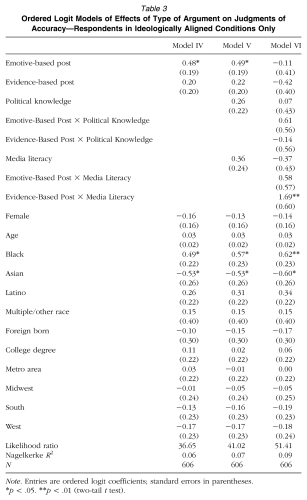

Table 3 presents the results of the set of models that investigate whether the influence of accuracy motivation on judgments of accuracy is conditional on individuals’ political knowledge and media literacy education. Contrary to Hypothesis 5, individuals with high levels of political knowledge do not appear to be influenced more by accuracy motivation than do other participants. Even though those with more knowledge might be expected to have greater capacity to recognize misinformation, having greater knowledge does not increase the likelihood of rating posts with misinformation as inaccurate relative to the two other ideologically aligned posts. That is, while Model III indicates that the effect of directional motivation on judgments of accuracy is conditional on political knowledge, Model VI suggests that political knowledge does not affect the relative influence of accuracy motivation.

Table 3. Ordered Logit Models of Effects of Type of Argument on Judgments of Accuracy: Respondents in Ideologically Aligned Conditions Only

Note. Entries are ordered logit coefficients; standard errors in parentheses.

*p < .05. **p < .01 (two-tail t test).

By contrast, there is support for Hypothesis 6’s prediction that accuracy motivation will have a greater influence on those individuals who reported having had the most media literacy learning opportunities. Specifically, there is a positive, statistically significant interaction effect between student reports of media literacy learning and viewing an evidence-based post (relative to seeing a post with misinformation). For those participants who reported receiving no media literacy training, there is no statistically significant difference across the three types of arguments employed in the posts. The presence of misinformation in a post does not appear to affect their judgments of the post’s accuracy. However, among those participants who reported the most media literacy learning experiences, there is a large, statistically significant difference in ratings of accuracy between those exposed to a post that employed misinformation and those who saw an evidence-based post. 19

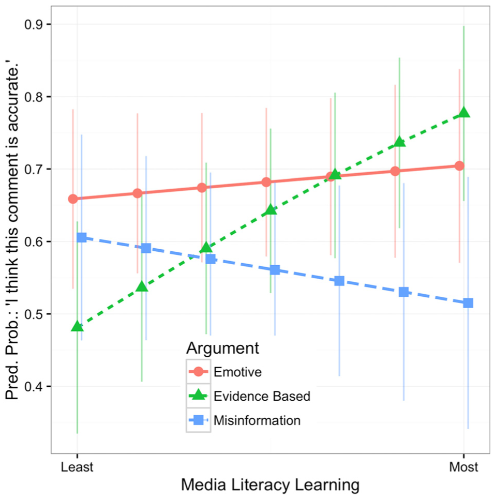

Figure 4 illustrates the substantive significance of this interaction by plotting the predicted probability of agreeing that the post is accurate while varying reports of media literacy training and the presence of misinformation, holding constant political knowledge and all the control variables. Participants who reported little media literacy education do not seem to make distinctions between the posts based on the type of argument employed. If anything, they are more likely to rate the (objectively false) post with misinformation as accurate (.60) than the evidence-based post (.48), though this difference is not statistically significant. Conversely, those individuals who reported having the most media literacy learning experiences appear to make a clear distinction between the evidence-based posts and the posts with misinformation. For otherwise typical participants who reported having the maximum amount of media literacy learning opportunities, the predicted probability of saying that the post is accurate declines from .78 if they were assigned to an evidence-based post to .52 if they saw a post with misinformation.

Figure 4. Accuracy ratings by argument type and media literacy learning opportunities.

Discussion

The democratic process suffers when individuals are inattentive to or unable to judge the factual accuracy of political content (Delli Carpini & Keeter, 1996). Acceptance and circulation of misinformation undermines reasoned decision making and informed action while delegitimizing the promise of deliberation. Moreover, increasing partisanship and dynamics associated with political engagement in the Digital Age (e.g., the diminished vetting of truth claims by gatekeepers and the prevalence of homophilous online networks) have increased both exposure to misinformation and the need to prepare youth to assess the accuracy of truth claims.

This study contributes to our understanding of how young people judge the factual accuracy of partisan claims tied to controversial societal issues and demonstrates clear reason for concern. To summarize, we found evidence that youth are guided by both directional motivation and accuracy motivation when making such judgments. But we also found that the impact of alignment with one’s prior beliefs was greater than the impact of whether a given statement is accurate. In addition, the study’s findings provide guidance regarding strategies that civic educators and others seeking to prepare youth for engagement in a democratic society can use to respond to these dynamics. In particular, we found that the influence of directional motivation was greatest for those with the most political knowledge and that, counter to our expectations, those with high levels of political knowledge were no more likely than others to correctly identify inaccurate truth claims. The findings with respect to media literacy were exactly the reverse. Youth who reported having media literacy learning opportunities were no more likely than others to be influenced by directional motivation but were significantly more likely to be influenced by accuracy motivation. In the following, we discuss the implications of these findings for those committed to promoting the democratic aims of education.

Educators Must Respond to the Problems Resulting From Directional Motivation

Consistent with prior research on directional motivation (Kunda, 1990; Lodge & Taber, 2013) and as noted previously, we find strong evidence of directional biases when young people are asked to evaluate the accuracy of a political claim. Even when presented with a grossly inaccurate statement, a clear majority of youth (58%) in the nationally representative YPP survey agreed that the statement was accurate when those claims were used to support perspectives that aligned with their ideological perspective. In a media environment in which political misinformation circulates widely and rapidly and in which individuals can easily seek out news and perspectives from sources that champion their beliefs, this psychological tendency of individuals to accept claims that align with their beliefs as true, even when the claims are not accurate, will undermine the quality and ultimate productivity of democratic deliberation. Thus, it is important for educators to identify ways to counteract the impact of directional motivation on judgments of partisan content.

Political Knowledge Is an Insufficient Support for Accurate Judgment

Contrary to conventional wisdom, this study also indicates that political knowledge is an insufficient support for accurate judgments of partisan claims. Motivation is also of central importance. In particular, we find that political knowledge appears to magnify the impact of directional motivation. Those youth who possess high levels of political knowledge are significantly more likely than less knowledgeable youth to judge content as inaccurate when that content does not align with their prior beliefs. As described by Taber and Lodge (2006), individuals with greater political knowledge may have an increased capacity to counterargue messages that contradict their partisan leanings. Those with more political knowledge may also have greater ability to recognize the political implications of varied posts and thus be better able to align their judgments of those posts with their prior beliefs (Bowyer, Kahne, & Middaugh, in press).

On their own, the dynamics associated with knowledge are not necessarily problematic. Knowledge may enable youth to better align their beliefs with their judgments. However, from the standpoint of preparing students for political deliberation, political knowledge is insufficient. It does not lead youth to effectively attend to the factual accuracy of claims—only to a claim’s alignment with their beliefs. Highly knowledgeable participants are just as likely as their less knowledgeable peers to accept an ideologically aligned post as accurate even when a post contains substantial falsehood. Thus, although it is common for those discussing the democratic aims of education to assume that promoting students’ political knowledge will help youth make reasoned and accurate judgments related to pressing policy issues, such knowledge does not appear to enhance the likelihood that individuals will identify misinformation in charged political contexts.

Media Literacy Education Is an Essential Support for Judgment in a Highly Partisan Digital Age

In contrast to these findings regarding political knowledge, we were heartened that media literacy learning experiences that aim to promote accurate judgment of truth claims appear to be helpful. Individuals who reported high levels of media literacy learning opportunities were considerably more likely to rate evidence-based posts as accurate than to rate posts containing misinformation as accurate, even when both posts aligned with their prior policy perspectives. Those who reported no exposure to media literacy education, in contrast, were no more likely to rate posts with evidence-based arguments as accurate than posts that contained misinformation. We believe this finding is important. It indicates that media literacy learning opportunities that aim to promote accurate judgments of truth claims may well advance a form of what Lavine et al. (2012) label critical loyalty. Those with critical loyalty still hold strong values and beliefs, but they adopt a critical stance when evaluating an argument, even when that argument aligns with their partisan preferences.

Future Educational Research Must Conceptualize and Test Specific Educational Responses to Motivated Reasoning so as to Enhance the Quality of Political Judgment

While these findings provide a clear indication of the potential potency of media literacy learning opportunities, much more work remains to be done. Clearly, one limitation of our data is that we rely on self-reports of receiving media literacy learning opportunities, and we lack details on the media literacy learning opportunities that students received. Studies of particular interventions (especially if structured as field experiments) would enable direct measurement of what students received and would avoid the potential biases associated with student reports of receiving media literacy education. Such an approach would also help to clarify the programmatic components of media literacy education that have the greatest impact on students’ will and capacity to make accurate judgments. In addition, since neither political knowledge nor media literacy learning opportunities were randomly assigned in our survey experiment, we cannot rule out the possibility that other, unobserved factors might explain the differences across participants in their judgments of accuracy. 20 Again, structured experiments tied to particular interventions would be especially helpful in establishing the causal impact of varied educational experiences.

More broadly, these findings highlight the potential payoff of educational responses to problematic dynamics related to motivated reasoning. As Deanna Kuhn (2011) has written, “Although people may use argument in self-serving ways that they are in limited command of, it doesn’t follow that they cannot achieve greater conscious command and come to draw on it in a way that will enhance their cognitive power” (p. 83).

When assessing exposure to media literacy, we asked youth if educators had discussed how important it was to evaluate evidence that backs up opinions (emphasizing the norm of accuracy motivation) and if they had provided skills (or capacities) that would help them judge the accuracy of information they find online (emphasizing the need for skills). It would be wise to test additional ways to promote the norm of accuracy motivation as well as the skills or capacities to act productively in response to this motivation.

Indeed, media literacy, while a fruitful strategy to pursue, is but one way that educators prepare students to care about and be able to assess truth claims in policy arguments. Frequently, for example, teachers engage students in debates of controversial issues and have students write research papers that examine controversial issues. In addition, scholars have studied ways that differing classroom discussion practices and discussion goals (i.e., prioritizing convincing others vs. prioritizing reaching consensus) can foster desired forms of argumentation (Felton, Garcia-Mila, Villarroel, & Gilabert, 2015; Kuhn & Crowell, 2011; Michaels, O’Connor, & Resnick, 2007). In one study, Kuhn and Crowell (2011) found that curricula engaging students in dialogues on social issues can increase the quality of argumentative reasoning, as exhibited in writing on topics that were not part of the curricular intervention (see also Hess & McAvoy, 2015). Fortunately, this priority aligns with broad school reform agendas. The ability to assess the quality of truth claims and arguments figures prominently in the Common Core State Standards (National Governors Association Center for Best Practices & Council of Chief State School Officers, 2010) and the National Assessment of Educational Progress (NAEP) Civics Test (National Center for Education Statistics, 2015), for example.

In short, the general concern for preparing youth to judge the accuracy of truth claims, like the broader concern for the democratic purposes of schooling, should not be confined to a single priority such as media literacy. Rather, we believe these findings highlight dynamics worthy of study in multiple domains. Indeed, educators still have much to learn about whether and when varied curricular experiences are shaped by and may influence the prevalence and impact of accuracy and directional motivation in real-world political contexts. Moreover, our study focused on identification of one kind of misinformation. If educators are to prepare youth to judge the quality of a policy argument, many qualities of political argument are worthy of attention (e.g., how partisan leanings influence assessments of whether an argument employs coherent reasoning).

The relationships between directional and accuracy motivation on the one hand and a range of curricular practices and capacities for judgment on the other deserve careful study. Such work can clarify the impact of varied approaches and deepen our conceptual understanding of ways to promote high-quality judgment of politically charged issues. These skills are essential given the dramatic expansion of choice when it comes to news media, the diminishing role of gatekeepers, and the widespread circulation of misinformation. Without the capacity for and the commitment to the accurate assessment of truth claims regarding controversial political issues, the links between rigorous thought and evidence on the one hand and democratic deliberation and informed policymaking on the other are severely compromised. The emphasis educators place on knowledge and analytic reasoning in non–politically charged contexts is not misplaced, but this focus is insufficient if we are to fully prepare youth for democratic participation in an increasingly partisan age.

Footnotes

Notes
