Abstract

For researchers working ev national security, nuclear weapons, and arms control, the Internet is an electronic embarrassment of riches. The Web surpasses all other media as a source for materials from government agencies, academics, nongovernmental organizations, special interest groups, corporations, and the news media. Government, military, and educational domains account for an estimated 12-15 percent of Internet content (some 300-400 million “pages”).

The Internet is also the leading source for so-called “gray” literature, which includes academic studies, position papers, reports by public policy institutes, government proceedings, and dissertations. Before the Web, this type of material rarely circulated outside the world of wonks, academics, and inside-the-Beltway policy-makers.

Yet information on the Internet is not comprehensive. Nor is it selective. Most online material has not been published by academics or deemed important by the news media. As a result, what is on line can be a mystery, which often makes it as difficult to retrieve quality material now as in the golden days before the Web.

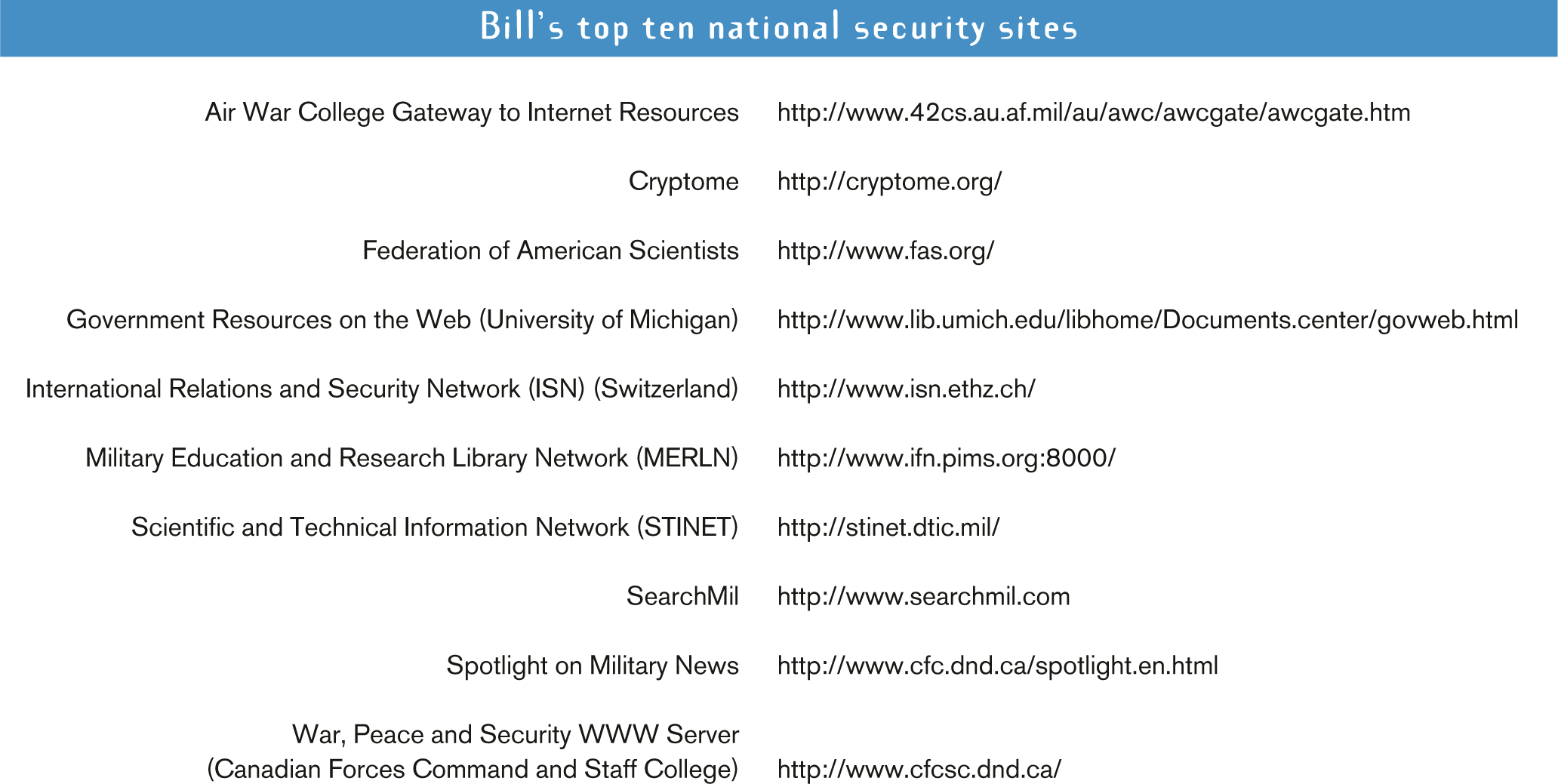

Even in a narrow field like national security, there is no methodical catalog of relevant Internet content and no comprehensive search service. To help students, researchers, and journalists maximize their use of the Web, the Center for Strategic Education at Johns Hopkins University published William Arkin's book National Security Research on the Internet (the second edition of which was published last year). The guide's online version (www.sais-jhu.edu/cse/) is supplemented by a nine-part meta-directory of national security resources, including search engines and directories, reference materials and data sources, libraries and archives, national security portals and links, national security media resources, U.S. government resources, foreign institutes and international private organizations, and regional and country studies.

It is common knowledge that the Internet emerged from a Pentagon network built to survive nuclear war. Other, less well-recognized precursors were the proprietary information networks that provided up-to-the-second feeds on the status of the stock and commodity markets. Using desktop “black box” systems, a financial manager could click on a ticker to obtain more and more detailed information. When the first graphical browser emerged in late 1994, hyperlinking was brought to the desktop of Internet users, and the World Wide Web was born.

The Web has now grown to include more than two billion pages, with content doubling in the past year alone. Add to this the tens of billions of database records now appended to the Web and you get some idea of the difficulty of cataloguing and finding material. Further complicating matters is the “Web's financial legacy, which has helped create a culture that favors immediacy and views all material as perishable. The Web has yet to develop any convention of permanence: Resources get posted ad hoc, sites go off line, and pages are moved around internally within a site. (There are, however, several meta-sites like that of the Federation of American Scientists, which collect hard-to-find material and create a permanent home for it.)

Search services–or “engines”–are not only tasked with cataloguing astronomical numbers of pages, they also have to be sustainable businesses. And their main clients are not national security specialists. A common complaint is that there is too much information on line, not too little. As search engine businesses have transitioned from being one-dimensional search services to “portals,” the size of their search engine databases has become less important. Other services like news bulletins, weather updates, and games that get the user to linger on the site have become more important than the search engines themselves, which only invite users to leave the site.

A further problem with search engines is their inability to keep up with the rapid pace of change. Even the best search engines (Google, FAST, HotBot, Northern Light) do not re-index material quickly enough to stay up-to-date. It is impossible, for example, to find new resources and databases that are triggered by breaking news stories by relying solely on search engines.

Proper search methods and query syntax can greatly enhance one's success at tracking down information. National Security Research on the Internet provides a tutorial on how to formulate effective queries when using general search engines.

For many subjects, the Internet contains tens or hundreds of thousands of individual pages, hundreds or thousands of relevant Web sites, and dozens or scores of meta-information sites to assist the researcher. Meta-information–information on how to find information–includes search engines, directories, and primary sources.

Many search services now include country and regional engines, as well as specialized engines–like GovBot or SearchMil–that index particular types of information. Directories, whether general in nature (like Yahoo!) or specialized (like the thematic directories of the www Virtual Library), catalog content by subject.

How does one find material when even a well-thought-out search fails? The answer is to go directly to the information source or to sites that typically catalog the information. It's called Internet intuition, which requires a good sense of the Web's landscape. In a library, material is organized according to strict rules. The “Web does not have a similar organizational structure, but Internet intuition can help one effectively navigate the terrain.

Serious online research requires looking for material on multiple search engines, using all of the meta-information tools available, and broadening and narrowing search terms as necessary. Finally, a lot of information is not on the Web. But one can always go to the library.