Abstract

The Turing Test is a method of testing whether a machine exhibits human-like intelligence. A novel brain–computer interface (BCI) Turing Test was proposed in the BCI Controlled Robot Contest in World Robot Contest 2022. Contestants developed algorithms that distinguish whether an instruction is issued by a human. Participants collaborated with an electroencephalogram (EEG)-based BCI to play a soccer game in a virtual scenario. Participants were asked either to perform steady-state visual evoked potential (SSVEP) tasks or motor imagery (MI) tasks to control the robots, or to remain in an idle state while the system gave instructions on their behalf. Several algorithms proposed in this competition were developed based on the concept that the idle state is one category in a multiclass classification problem. This paper details the algorithms of the five best-performing teams in the final, lists the popular classification models and algorithms for MI and SSVEP, and discusses the effectiveness of each approach in improving classification performance and reducing the computation time.

1 Introduction

A brain–computer interface (BCI) can establish direct communication between the human brain and computers. Guided by the National Natural Science Foundation of China and sponsored by the Information Science Department of the National Natural Science Foundation of China, the Chinese Society of Electronics, and Tsinghua University, the BCI Controlled Robot Contest, which is widely regarded as the “Olympics” of robotics, was held in Beijing Etrong International Exhibition and Convention Center from August 18 to 22, 2022. The contest was divided into two rounds, namely preliminary and final rounds. The preliminary round was held on the cloud, and the final round was held on site. In total, 250 teams from 94 colleges and universities participated in the preliminary round. Among these teams, 15 entered the final, and 11 first prizes were awarded based on team points and rankings in each competition task [1].

The Turing Test was proposed by English mathematician Alan Turing to determine, based on human judgment, whether a machine can mimic human behavior. The BCI Turing Test shares a similar concept with the Turing Test but uses algorithms instead of humans to determine, from EEG signals, whether the instructions output by the BCI system originate from a human’s real will or from machine assistance. With the rapid development of artificial intelligence (AI) technology, BCI technology and AI technology are becoming increasingly integrated. AI is used in many BCI systems to assist decision-making, allowing the BCI to perform tasks with AI to improve work efficiency. However, as a result, we may not be able to directly infer whether an instruction originated from a human or a machine. For example, when a disabled person uses a BCI to type, the outside world may not be able to determine whether the output characters originated from the real will of the user or from the AI system. Therefore, the BCI Turing Test was devised, in which EEG signals are used to determine whether a human is using a BCI system to send commands or an AI system is in control. The BCI Turing Test was added to the BCI Controlled Robot Contest for the first time in 2022.

In the BCI Turing Test, BCI is used to control robots in a 2 versus 2 soccer game through motor imagery (MI) and steady-state visual evoked potential (SSVEP). Each robot has two degrees of freedom of movement (left and right) and can also pass and shoot. For Robots 1 and 2, the system randomly selects one robot to be controlled by a human through BCI, and the other robot moves autonomously.

Motor imagery is a commonly used BCI paradigm through which users can generate brain signals to exchange information with external devices [2]. By decoding and analyzing raw EEG signals, an MI-based BCI system can assist patients in rehabilitating partial motor function. Furthermore, the applications of MI-based BCI include wheelchair control [3], robot arm operation [4], and quadcopter manipulation [5]. EEG signals are nonstationary, and because of volume conduction, the signal recorded by an electrode is typically attenuated and distorted [6]. Therefore, an MI-based BCI system requires advanced signal processing methods and machine learning algorithms to decode EEG signals. Conventional pattern recognition in BCI typically involves three steps, namely preprocessing, feature extraction, and classification. Specifically, the preprocessing stage removes artifacts and noise, the feature extraction step is designed based on the neural basis of the task and prior knowledge about the human brain, and the classification stage is typically implemented by popular machine learning methods, such as support vector machine (SVM) and linear discriminant analysis (LDA). Frequency and time–frequency features are popular in MI because of event-related synchronization (ERS) and event-related desynchronization (ERD) during MI [7]. The common spatial pattern (CSP) algorithm and its variants provide another type of feature by constructing optimal spatial filters [8]. Moreover, deep learning models, such as DeepConvNet and ShallowConvNet proposed by Schirrmeister et al. [9] and EEGNet proposed by Lawhern et al. [10], avoid manual feature extraction and achieve state-of-the-art performance in BCI.

Steady-state visual evoked potential is a BCI paradigm based on periodic brain responses caused by repeated visual stimuli. When subjects repeatedly receive visual stimuli at a certain frequency, SSVEP appears as brain activity whose energy is concentrated at the stimulation frequency and its harmonics [2]. Steady-state visual evoked potential is a popular paradigm in many practical applications, such as text input [11] and robot control [12], because of its high information transfer rate and signal-to-noise ratio. In a typical real-time SSVEP-based BCI, users are required to stare at the flickering target on the screen while the EEG signals are collected using EEG headsets and transmitted to computing equipment. After the algorithm processes the EEG signals and extracts the frequency features, the corresponding target is displayed on the screen. In SSVEP-based BCI, the critical step is to determine which frequency is the target frequency by analyzing EEG signals [13]. Early approaches performed spectrum analysis on single-electrode EEG signals, for example from Oz, which is simple but inefficient and imprecise. Subsequently, several multichannel frequency recognition algorithms, divided into calibration-free and calibration-based approaches, were developed. Calibration-free methods, such as canonical correlation analysis (CCA), do not require additional data and are easy to use. Calibration-based approaches, such as task-related component analysis (TRCA) and extended CCA (eCCA), typically exhibit superior performance because calibration data can be used to construct template data and spatial filters. However, these models require additional calibration time, which degrades user experience.

In this paper, we review the algorithms submitted by the top five teams (HDU_BMCI, HUST_XS_ZJ, Ecust_BCILab, UMBCI, XianLiGong) in the final of the 2022 WRC BCI Turing Test. The remainder of this paper is organized as follows. Section 2 introduces the paradigm and competition rules of the BCI Turing Test. Section 3 summarizes the algorithms and strategies used by each team. Section 4 reviews the results of the preliminary and final competitions and discusses the advantages and disadvantages of each approach. Section 5 presents the conclusion.

2 Paradigm and competition rules

This section first introduces the paradigm, including the scenario, task flow, and data format. Next, we present the algorithm interface, the evaluation metric used in the contest, and the corresponding scoring rule.

2.1 Paradigm

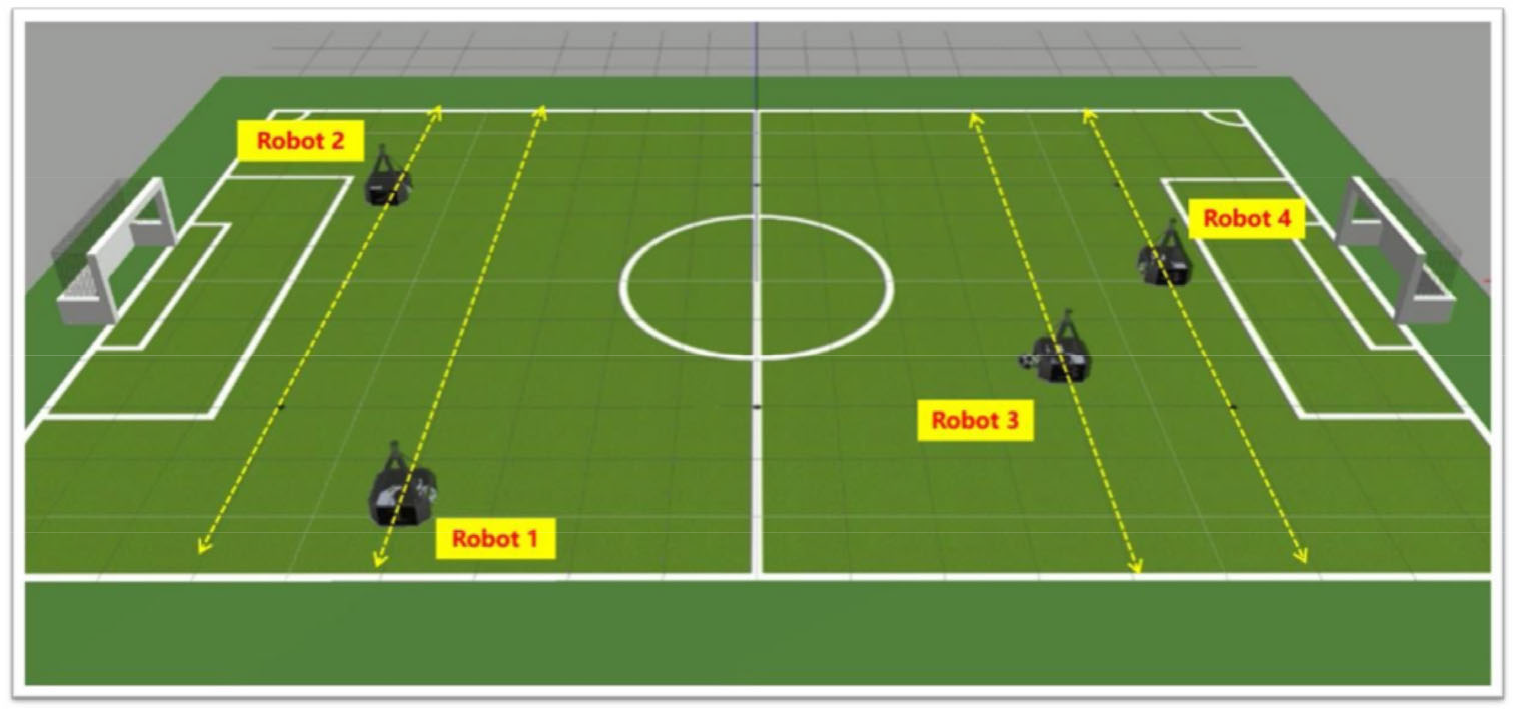

The BCI Turing Test scenario was a 2 versus 2 soccer robot confrontation in a simulation environment. As displayed in Fig. 1, two groups of robots were on opposite sides of the field. In a turn-based shooting competition, one side attacked and the other side defended. Each robot had two degrees of freedom of movement (left and right) and could also pass and shoot. For Robots 1 and 2, the system randomly selected one robot to be controlled by a human through BCI, and the other robot moved autonomously. Robots 3 and 4 moved completely autonomously.

Fig. 1 BCI Turing Test scenario.

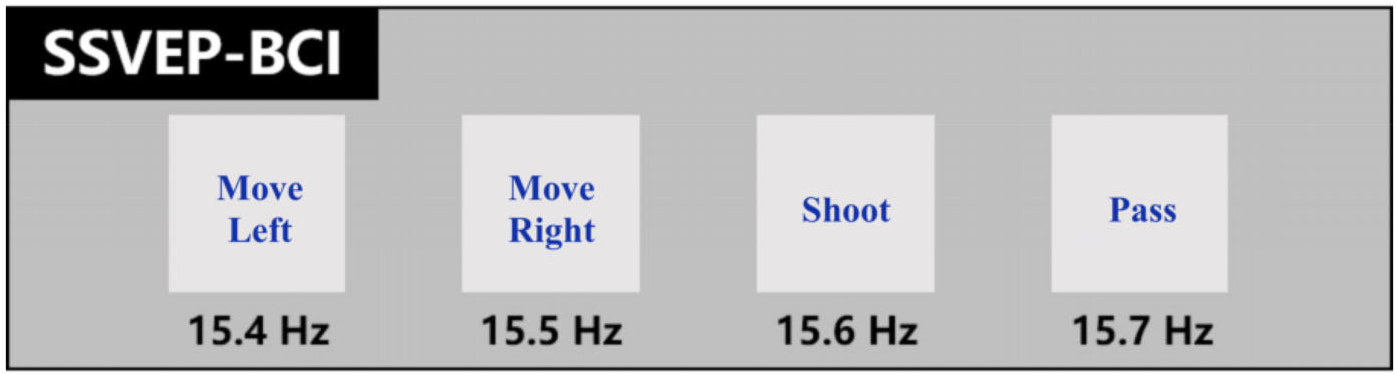

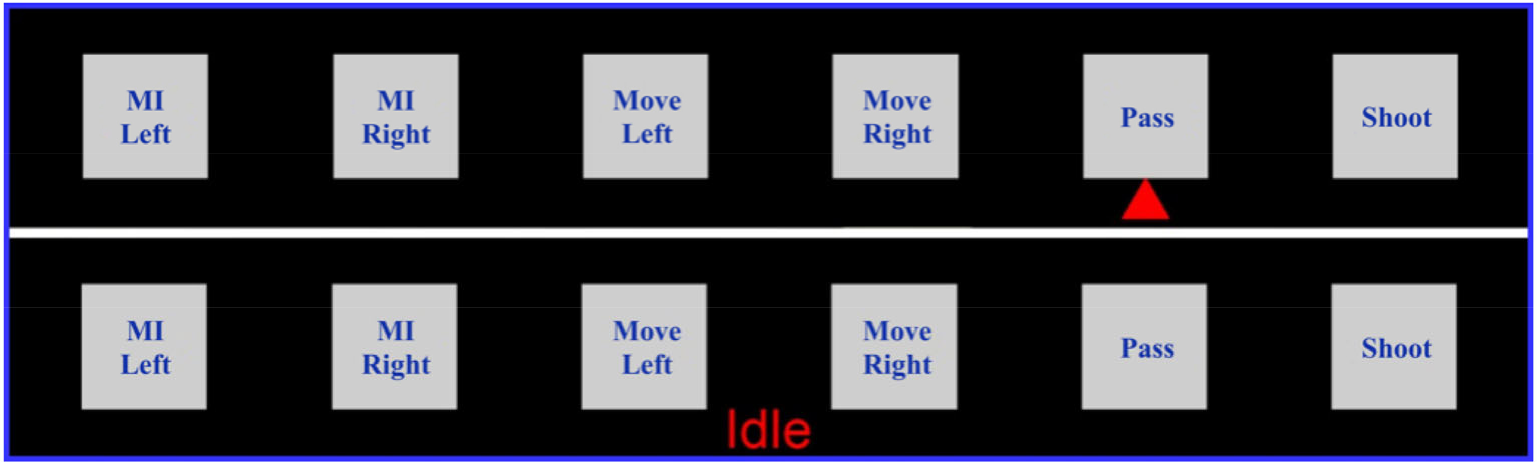

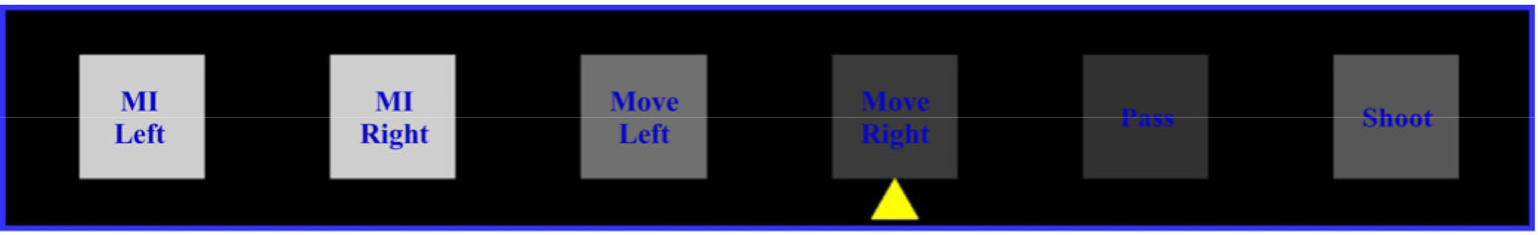

The participants in the soccer game used two BCI paradigms, namely MI and SSVEP. With MI, the direction of robot movement is controlled by left/right hand motor imagery: imagining left hand movement moves the robot left, and imagining right hand movement moves the robot right. The SSVEP-based control method provides four options, namely left movement, right movement, passing, and shooting. The corresponding stimulation frequencies were 15.4, 15.5, 15.6, and 15.7 Hz, respectively, and the initial phase was 0. The stimulation brightness was realized through sine modulation (grayscale range: [−0.618, 0.618], where 1 corresponds to RGB (255, 255, 255) and −1 corresponds to RGB (0, 0, 0)). The control panel for SSVEP is presented in Fig. 2. Left and right movements can be realized by both MI and SSVEP, whereas passing and shooting can only be realized by SSVEP. Apart from controlling the robots through BCI, participants may also be in an idle state, in which case the actions of the robot are assigned automatically by the program. Figures 3 and 4 present examples of the control panel when a task prompt and SSVEP stimuli are given.

Fig. 2 SSVEP-BCI control panel.

Fig. 3 Control panel when giving task prompts.

Fig. 4 Control panel when giving SSVEP stimuli.
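As an illustration of the sine-modulated stimulation described above, the following minimal sketch computes the grayscale value of each target for successive video frames and maps it to an 8-bit RGB level. The 60 Hz refresh rate, the linear grayscale-to-RGB mapping between the two stated end points, and all function names are assumptions made for this example; they are not taken from the contest software.

```python
import math

# Stimulation frequencies stated in the text (Hz), in the order
# left movement, right movement, passing, shooting.
FREQS_HZ = {"left": 15.4, "right": 15.5, "pass": 15.6, "shoot": 15.7}


def stimulus_gray(frame_idx, freq_hz, refresh_hz=60.0, phase=0.0, amplitude=0.618):
    """Grayscale value in [-amplitude, amplitude] for one video frame.

    refresh_hz is an assumed monitor refresh rate, not a contest parameter.
    """
    t = frame_idx / refresh_hz
    return amplitude * math.sin(2.0 * math.pi * freq_hz * t + phase)


def gray_to_rgb_level(gray):
    """Map grayscale in [-1, 1] linearly to 0..255 (-1 -> 0, 1 -> 255)."""
    return round(255 * (gray + 1.0) / 2.0)


# RGB levels of the first few frames for every target (initial phase 0).
levels = {
    name: [gray_to_rgb_level(stimulus_gray(i, f)) for i in range(4)]
    for name, f in FREQS_HZ.items()
}
```

Because the four frequencies differ by only 0.1 Hz, their waveforms stay almost identical during the first frames and separate only over longer windows, which is one reason frequency recognition is demanding in this paradigm.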

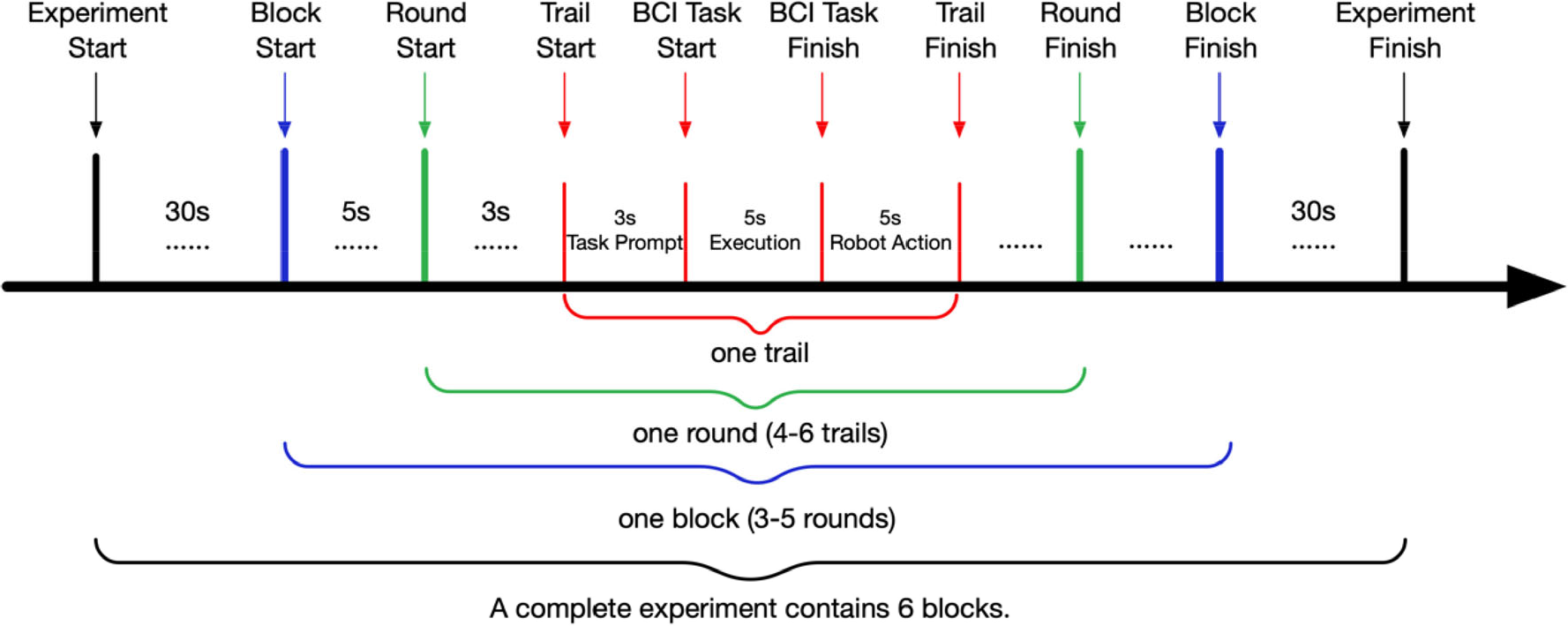

Each subject was required to complete 6 blocks, and each block consisted of 3 to 5 rounds with 4 to 6 trials in each round. The experiment process is displayed in Fig. 5. Between blocks, the subjects decided the rest duration themselves, whereas the process ran continuously within a block. The robot position on the field was randomly initialized when a round started. A trial is one complete robot behavior and consists of the task prompt stage (3 s), the execution stage according to the prompt (5 s), and the robot action stage (5 s).

Fig. 5 Experimental procedure.

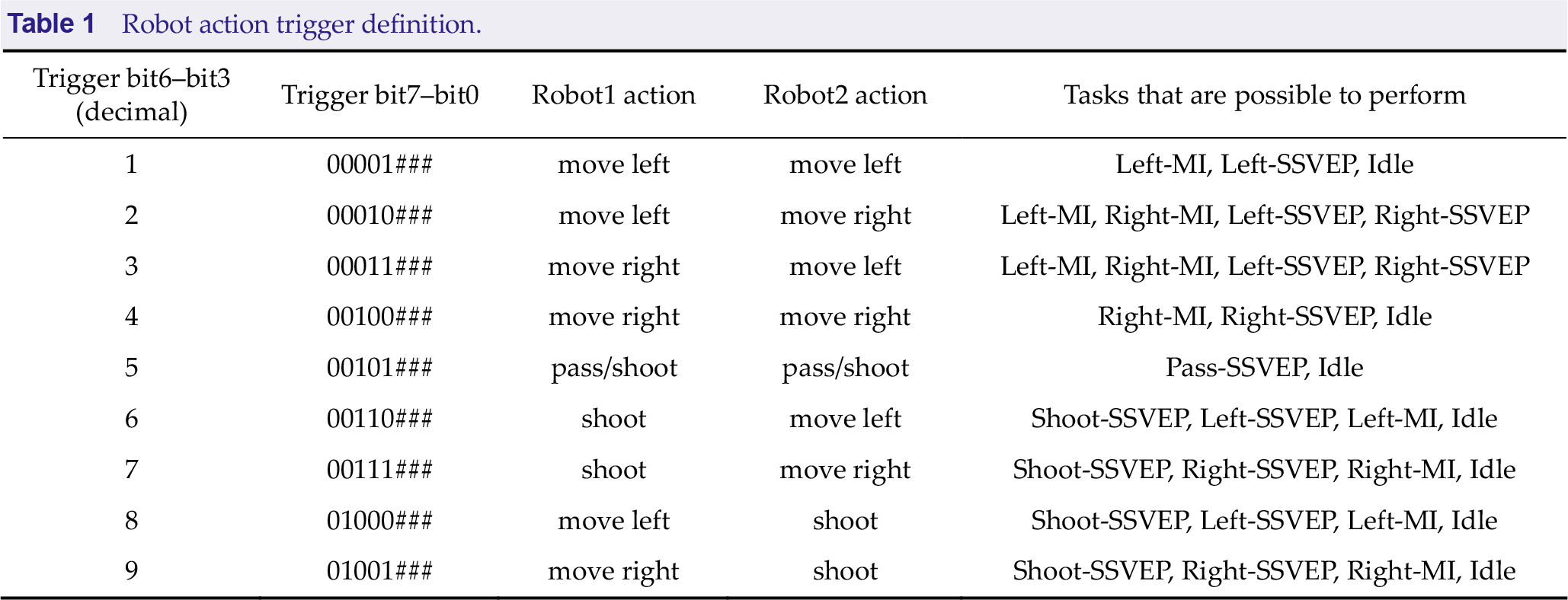

Task prompt stage: The system plans the actions of Robots 1 and 2 according to the situation in the field and randomly assigns a robot to be controlled by the subjects through BCI. Next, the corresponding BCI task is generated, and the subject is prompted to perform it. The BCI tasks that a robot behavior may correspond to are presented in Table 1. During this period, the robot in the field remains stationary.

Table 1 Robot action trigger definition.

Execution stage: The subject performs the corresponding BCI task according to the prompt. If the task is MI, then the subject performs the corresponding left/right hand motor imagery, and the SSVEP visual stimulus does not flicker. If the task is SSVEP, then the SSVEP visual stimulus flickers. If the task is idle, then the subject remains idle. During this period, the robot in the field remains stationary.

Robot action stage: The subject stops performing the BCI task, and the robot performs the action according to the plan.

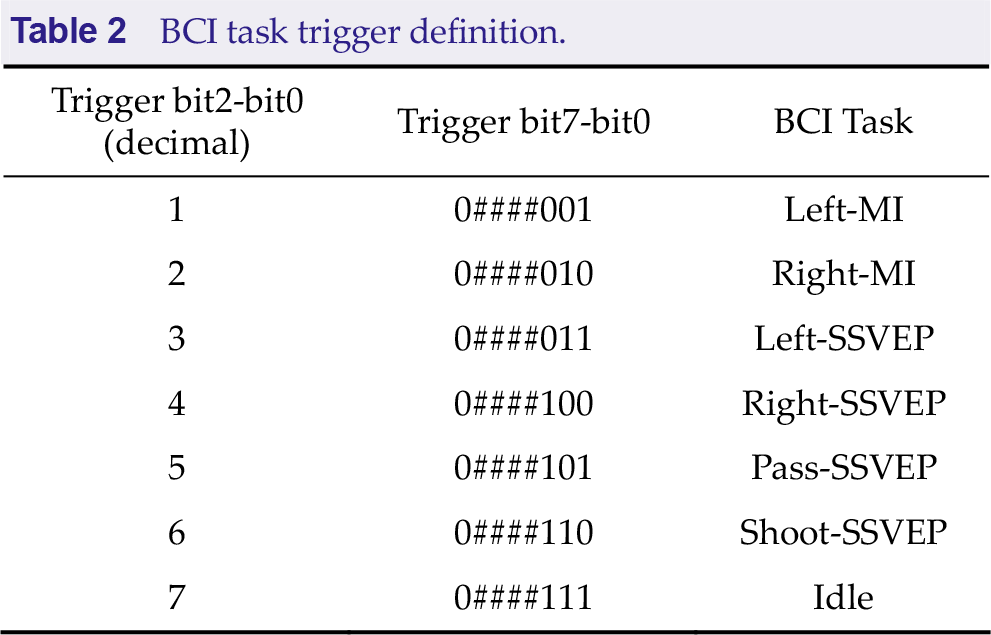

The system provides the EEG data of each subject and triggers containing action information in each trial. Contestants are required to develop algorithms that determine which BCI task was performed in each trial, or whether the subject was in the idle state, and report the corresponding number (1–7, see Table 2 for BCI tasks).

Table 2 BCI task trigger definition.

2.2 Algorithm Interface

Two interfaces are relevant to contestants, namely TaskInterface and AlgorithmInterface. TaskInterface is implemented by the organizers and includes the data acquisition and result feedback methods. The implementation class of this interface is injected into the competing algorithm implementation class before the algorithm runs. During algorithm execution, the interface can be called to obtain data and to report the results through the result feedback method. A comprehensive score is computed according to the number of times the data acquisition method is called and the correctness of the results. For AlgorithmInterface, contestants are required to implement their algorithm in the run function. During algorithm execution, the EEG data are obtained through the TaskInterface get_data() method, and the result is returned through the report() method. When finish_flag in the EEG data obtained through the get_data() method is true, data processing ends and the function should terminate by itself.
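The interaction between the two interfaces can be sketched as follows. Only the names TaskInterface, AlgorithmInterface, run, get_data(), report(), and finish_flag come from the description above; the packet layout, the set_task injection method, the ReplayTask stand-in, and the placeholder idle label are hypothetical and serve only to make the example runnable. The actual contest framework may differ.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DataPacket:
    """Hypothetical data container; only finish_flag is named in the text."""
    eeg: List[List[float]]      # channels x samples
    trigger: Optional[str]      # trigger string of the current trial, if any
    finish_flag: bool           # True when no more data will be delivered


class TaskInterface(ABC):
    """Implemented by the organizers: data acquisition and result feedback."""

    @abstractmethod
    def get_data(self) -> DataPacket: ...

    @abstractmethod
    def report(self, result: int) -> None: ...


class AlgorithmInterface(ABC):
    """Implemented by contestants: the algorithm lives in run()."""

    def __init__(self) -> None:
        self.task: Optional[TaskInterface] = None

    def set_task(self, task: TaskInterface) -> None:
        # Hypothetical injection hook for the TaskInterface implementation.
        self.task = task

    @abstractmethod
    def run(self) -> None: ...


class AlwaysIdleAlgorithm(AlgorithmInterface):
    """Toy algorithm that reports one fixed label for every packet."""

    IDLE_LABEL = 7  # placeholder; the real label numbers are given in Table 2

    def run(self) -> None:
        while True:
            packet = self.task.get_data()
            if packet.finish_flag:
                break               # the function must terminate by itself
            self.task.report(self.IDLE_LABEL)


class ReplayTask(TaskInterface):
    """Hypothetical stand-in for the organizers' implementation."""

    def __init__(self, packets: List[DataPacket]) -> None:
        self._packets = list(packets)
        self.reported: List[int] = []

    def get_data(self) -> DataPacket:
        return self._packets.pop(0)

    def report(self, result: int) -> None:
        self.reported.append(result)
```

A real submission would buffer the EEG returned by successive get_data() calls and report a label only once enough data have been accumulated, because the score depends on how much data are consumed.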

2.3 Scoring rule

In the BCI Turing Test, the ratio of the number of correct results to the length of the data consumed is used as the scoring standard, which means that algorithms with higher accuracy and shorter computation time are rewarded. The details are as follows:

For one block, the trials are successively tested. When the number of correctly reported trials reaches 80% of the total number of trials in the block (rounded up), the testing stops. If this requirement is not satisfied, then the score of this block is 0.

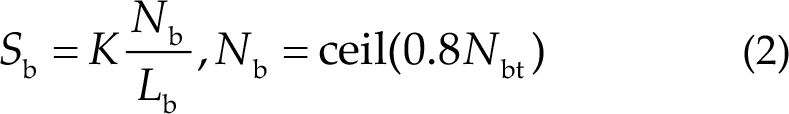

The real-time score within a block is calculated by the following formula, in which, from the first trial to the i-th trial, $N_{bi}$ represents the number of correct results, $L_{bi}$ represents the total computation time, and K is a fixed scoring coefficient, which is 200:

$$S_{bi} = K \cdot \frac{N_{bi}}{L_{bi}}$$

The final score of one block shares nearly the same formula as the real-time score. In the following formula, $N_{bt}$ represents the total number of trials and $L_{b}$ represents the total length of data when the test stops:

$$S_{b} = K \cdot \frac{N_{bt}}{L_{b}}$$
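The scoring rule can be illustrated with a short sketch. It assumes that the score is the scoring coefficient K multiplied by the ratio of a trial count to the consumed data length, as the description at the start of this section suggests, and it reads the final block score as using the total number of trials in the block. Both points are interpretations of the text rather than the organizers' reference implementation, and the function names are hypothetical.

```python
def realtime_score(n_correct, data_length, k=200.0):
    """Real-time score after some trials: K * (correct results / data length)."""
    if data_length <= 0:
        raise ValueError("data_length must be positive")
    return k * n_correct / data_length


def block_score(correct_flags, data_lengths, k=200.0):
    """Final score of one block under the stopping rule described above.

    correct_flags: per-trial booleans, in test order.
    data_lengths:  per-trial length of data consumed by the algorithm.
    Testing stops once the number of correct trials reaches 80% of the
    trials in the block (rounded up); otherwise the block scores 0.
    """
    n_trials = len(correct_flags)
    needed = (4 * n_trials + 4) // 5        # ceil(0.8 * n_trials) in integers
    n_correct = 0
    used = 0.0
    for ok, length in zip(correct_flags, data_lengths):
        used += length
        n_correct += bool(ok)
        if n_correct >= needed:
            # Assumed reading: numerator is the total number of trials.
            return k * n_trials / used
    return 0.0
```

For example, a block of five trials with 2 s of data per trial and four correct results stops after the fifth trial and scores 200 × 5 / 10 = 100 under these assumptions, whereas the same block with only three correct results scores 0.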

3 Method

This section introduces some methods that are frequently used by teams and participants in the BCI Turing Test, including preprocessing methods, algorithms for MI, algorithms for SSVEP and strategies that combine the results of MI and SSVEP.

3.1 Preprocessing methods

Most teams used a 50-Hz notch filter to remove powerline noise and a bandpass filter to remove artifacts and high-frequency noise. Furthermore, some teams added detrending to the preprocessing pipeline by applying high-pass filtering at a low cutoff frequency, such as 0.1 or 1 Hz. Almost all teams used open-source Python packages to preprocess the EEG data, such as MNE [14], SciPy [15], and Braindecode [9]. In MNE, filters can be applied directly to the Raw object, which is easy to implement: raw.notch_filter() removes powerline noise, and raw.filter() applies bandpass filtering. In SciPy, a signal is treated as a NumPy array. SciPy provides multiple interfaces for designing and applying finite impulse response (FIR) and infinite impulse response (IIR) filters. The associated functions can be found in the signal processing toolbox of SciPy (scipy.signal).
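A preprocessing chain of this kind can be sketched with SciPy as follows. The 250 Hz sampling rate, the filter order, the notch quality factor, and the function name are assumptions for illustration; the 6–90 Hz passband mirrors the baseline setting reported in Section 4.1, and individual teams used different values.

```python
import numpy as np
from scipy import signal


def preprocess(eeg, fs=250.0, band=(6.0, 90.0), notch_hz=50.0, order=4):
    """Detrend, notch filter, and bandpass filter multichannel EEG.

    eeg: array of shape (n_channels, n_samples).
    fs:  sampling rate in Hz (assumed value, not stated in the text).
    """
    # Remove the linear trend of every channel.
    eeg = signal.detrend(eeg, axis=-1, type="linear")

    # IIR notch at the powerline frequency, applied forward and backward
    # so that the filter introduces no phase shift.
    b_notch, a_notch = signal.iirnotch(notch_hz, Q=30.0, fs=fs)
    eeg = signal.filtfilt(b_notch, a_notch, eeg, axis=-1)

    # Butterworth bandpass in second-order sections for numerical stability.
    sos = signal.butter(order, band, btype="bandpass", fs=fs, output="sos")
    return signal.sosfiltfilt(sos, eeg, axis=-1)
```

Zero-phase filtering (filtfilt and sosfiltfilt) is a design choice suited to offline segments; a strictly causal online system would use forward-only filtering instead.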

3.2 Algorithms for MI

As described in the first section, MI-based BCI classification algorithms can be categorized into two categories, namely machine learning algorithms and deep learning models. The details of various algorithms used in the BCI Turing Test are described next.

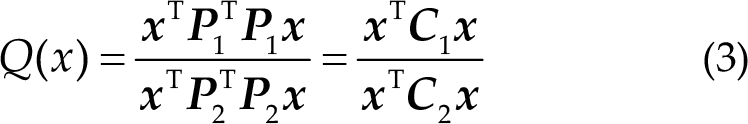

The classification of MI-based EEG signals can be considered a conventional pattern recognition process, including data acquisition, preprocessing, feature extraction, and classification. The feature extraction methods for MI-based EEG data typically focus on features in the time domain, frequency domain, and spatial domain because of the existence of the ERS and ERD phenomena. ERS/ERD are typically represented as time-variant spectral power in a joint time–frequency domain [16]. In the spatial domain, CSP has proven effective for MI classification [17]. Building on the CSP algorithm, Ang et al. developed the filter bank CSP (FBCSP) to improve classification accuracy [18]. CSP learns spatial filters that maximize the variance of the filtered signal for one class while minimizing it for the other class. The CSP determines the optimal spatial filters x by optimizing the following function:

$$J(x) = \frac{x^{T} C_{1} x}{x^{T} C_{2} x}$$

where T represents the matrix transpose, and $C_{1}$ and $C_{2}$ are the covariance matrices of the EEG signals of the two classes.

The features are extracted as the logarithm of the EEG signal variance after applying the spatial filters. The features of the i-th trial can be calculated as follows:

$$f_{i} = \log\left(\operatorname{var}\left(W^{T} E_{i}\right)\right)$$

where $E_{i}$ is the EEG signal of the i-th trial and W is the matrix whose columns are the selected spatial filters.
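The CSP procedure can be illustrated with a minimal NumPy sketch. It follows the standard two-class formulation, in which the filters extremize the ratio of the class covariances, and solves it by whitening the composite covariance. The trace normalization, the number of filter pairs, and the function names are choices made for this example and are not details reported by the teams.

```python
import numpy as np


def mean_covariance(trials):
    """Average trace-normalized spatial covariance.

    trials: array of shape (n_trials, n_channels, n_samples).
    """
    covs = []
    for x in trials:
        x = x - x.mean(axis=1, keepdims=True)
        c = x @ x.T
        covs.append(c / np.trace(c))
    return np.mean(covs, axis=0)


def fit_csp(trials_a, trials_b, n_pairs=1):
    """Return 2 * n_pairs spatial filters as rows.

    The first rows minimize the variance of class a (maximize class b);
    the last rows maximize the variance of class a (minimize class b).
    """
    c_a = mean_covariance(trials_a)
    c_b = mean_covariance(trials_b)

    # Whiten the composite covariance c_a + c_b.
    evals, evecs = np.linalg.eigh(c_a + c_b)
    whitening = evecs @ np.diag(evals ** -0.5) @ evecs.T

    # Eigenvectors of the whitened class-a covariance (ascending order).
    _, u = np.linalg.eigh(whitening @ c_a @ whitening.T)
    filters = u.T @ whitening

    picks = list(range(n_pairs)) + list(range(-n_pairs, 0))
    return filters[picks]


def csp_features(filters, trial):
    """Log-variance of the spatially filtered trial (n_channels, n_samples)."""
    projected = filters @ trial
    return np.log(projected.var(axis=1))
```

The resulting feature vectors can be passed to any of the classifiers mentioned in this section, such as LDA or SVM.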

After feature extraction, many teams chose traditional machine learning methods, such as LDA, linear regression (LR), and SVM. The advantages of these algorithms are simple implementation and low computational cost. However, their performance relies on the extracted features and the chosen hyperparameters.

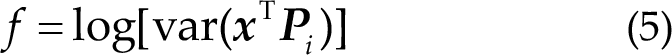

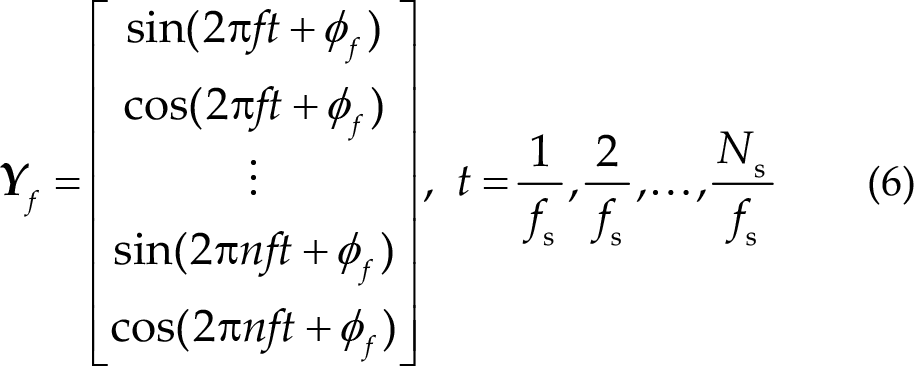

With the rapid progress of deep learning, transferring innovations in deep learning to EEG decoding and BCI is a critical topic of research. Convolutional neural networks (ConvNets) are a prominent technique, especially in computer vision, where they extract local, low-level features from images, and they are also applicable to the feature extraction of EEG signals. Schirrmeister et al. proposed DeepConvNet and ShallowConvNet for end-to-end MI classification, which exhibited superior performance [9]. Lawhern et al. proposed a compact neural network, namely EEGNet, for EEG-based BCI and achieved considerable performance on multiple EEG tasks [10]. ConvNets exhibit both merits and demerits compared with traditional machine learning methods. Their merits include support for end-to-end learning, which avoids manual feature extraction and selection that require prior knowledge. Their demerits are that they require considerable training data and longer training time, lack interpretability, and are vulnerable to adversarial attacks [19].

Table 3 presents a summary of the ShallowConvNet and EEGNet models used by participants in the BCI Turing Test. The parameters of the convolution layers are presented as “kernel numbers × kernel sizes”. TConv2d refers to convolution in the time dimension, SConv2d refers to convolution in the spatial dimension, and DWConv represents depthwise convolution, which has fewer trainable parameters. SepConv represents separable convolution, consisting of a depthwise convolution followed by a pointwise convolution whose kernel size is 1 × 1. BatchNorm indicates batch normalization, and ELU is the exponential linear unit activation function. AvgPool2d represents mean pooling, and the numbers that follow are its parameters.

Table 3 CNN models used in the BCI Turing Test.

3.3 Algorithms for SSVEP

As mentioned in Section 1, the algorithms for SSVEP can be categorized into two categories, namely calibration-free and calibration-based approaches. In this BCI Turing Test, SSVEP training data for calibration were not provided. Therefore, all teams applied a calibration-free approach, such as CCA and filter bank canonical correlation analysis (FBCCA), to determine the frequency of EEG signals.

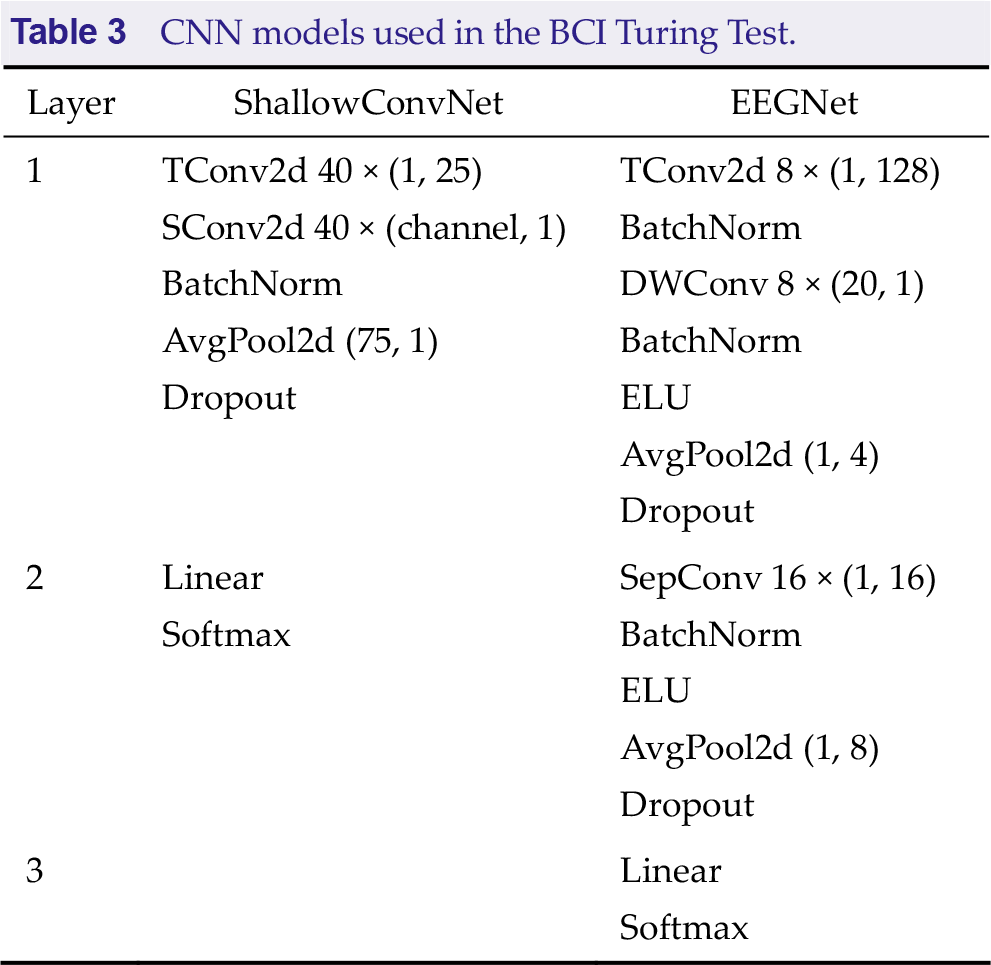

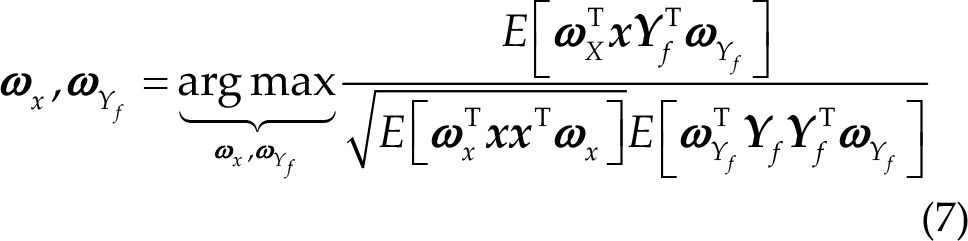

Canonical correlation analysis extracts the correlation between two sets of multichannel time-series signals by finding their optimal linear combination [20]. Lin et al. were the first to use CCA for the recognition of SSVEP signals [21]. The correlation coefficients between EEG signals and each frequency template signal can be calculated by CCA. The frequency with the highest correlation coefficient is the final classification result of the CCA algorithm. The template signal generally consists of sine and cosine waves corresponding to the stimulus frequency and their harmonics.

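Let $X \in \mathbb{R}^{C \times N}$ be the multichannel EEG signal with C channels and N sampling points, and let $Y_{f} \in \mathbb{R}^{2n \times N}$ be the template signal of stimulus frequency f:

$$Y_{f} = \begin{bmatrix} \sin(2\pi f t) \\ \cos(2\pi f t) \\ \vdots \\ \sin(2\pi n f t) \\ \cos(2\pi n f t) \end{bmatrix}, \quad t = \frac{1}{f_s}, \frac{2}{f_s}, \ldots, \frac{N}{f_s}$$

where f represents the frequency, n is the number of harmonics, and $f_s$ is the sampling frequency. Canonical correlation analysis aims to find two optimal weights $w_x$ and $w_y$ that maximize the correlation between the linear combinations $w_x^{T} X$ and $w_y^{T} Y_{f}$:

$$\tau_{f} = \max_{w_x, w_y} \frac{E\left[w_x^{T} X Y_{f}^{T} w_y\right]}{\sqrt{E\left[w_x^{T} X X^{T} w_x\right] E\left[w_y^{T} Y_{f} Y_{f}^{T} w_y\right]}}$$

Next, let K be the number of targets (K = 4 in the BCI Turing Test), and let $\tau_{k}$ denote the correlation coefficient obtained for the k-th stimulus frequency $f_{k}$. Finally, according to the correlation coefficients of each frequency, the frequency with the largest correlation coefficient is selected as the result as follows:

$$f_{\text{target}} = \arg\max_{f_{k}} \tau_{k}, \quad k = 1, 2, \ldots, K$$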
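The CCA-based frequency recognition can be sketched in NumPy as follows. The template consists of sine and cosine waves at the stimulus frequency and its harmonics, and the largest canonical correlation is obtained from the singular values of the product of two orthonormal bases. The number of harmonics, the sampling rate used in the usage comment, and the function names are assumptions for this example; the QR-based computation also assumes that both signals have full rank.

```python
import numpy as np


def reference_signals(freq_hz, n_samples, fs, n_harmonics=3):
    """Sine/cosine templates of shape (2 * n_harmonics, n_samples)."""
    t = np.arange(1, n_samples + 1) / fs
    rows = []
    for h in range(1, n_harmonics + 1):
        rows.append(np.sin(2 * np.pi * h * freq_hz * t))
        rows.append(np.cos(2 * np.pi * h * freq_hz * t))
    return np.vstack(rows)


def max_canonical_corr(x, y):
    """Largest canonical correlation between x and y (rows are signals)."""
    x = x - x.mean(axis=1, keepdims=True)
    y = y - y.mean(axis=1, keepdims=True)
    # Orthonormal bases of the two signal subspaces.
    qx, _ = np.linalg.qr(x.T)
    qy, _ = np.linalg.qr(y.T)
    # Singular values of qx^T qy are the canonical correlations.
    s = np.linalg.svd(qx.T @ qy, compute_uv=False)
    return float(np.clip(s[0], 0.0, 1.0))


def classify_ssvep(eeg, freqs_hz, fs, n_harmonics=3):
    """Return the index of the best-matching frequency and all correlations."""
    corrs = np.array([
        max_canonical_corr(
            eeg, reference_signals(f, eeg.shape[1], fs, n_harmonics)
        )
        for f in freqs_hz
    ])
    return int(np.argmax(corrs)), corrs


# Usage with the four contest frequencies (fs = 250 Hz is an assumed value):
# index, corrs = classify_ssvep(eeg, [15.4, 15.5, 15.6, 15.7], fs=250.0)
```

Because the contest frequencies are only 0.1 Hz apart, the correlations of neighboring targets remain close for short windows, so the data length trades off directly against recognition accuracy.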

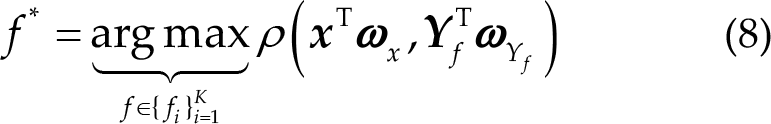

By applying filter banks to CCA, Chen et al. proposed FBCCA [22]. In FBCCA, the raw EEG data are first filtered by bandpass filters with different frequency ranges into M sub-frequency bands. Next, the standard CCA is applied to the data of the M sub-frequency bands, which generates a correlation coefficient $\tau_{k,m}$ for the k-th frequency and the m-th sub-frequency band. The final correlation coefficient is the weighted sum of the correlation coefficients that belong to the k-th frequency:
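The fusion step of FBCCA can be sketched as follows. The sketch assumes the commonly used formulation in which the squared sub-band correlations are combined with weights w(m) = m^(−a) + b, with a = 1.25 and b = 0.25 as typical values; the weights and settings actually used by each team are not specified here, and the function names are hypothetical.

```python
import numpy as np


def subband_weights(n_subbands, a=1.25, b=0.25):
    """Weights w(m) = m**(-a) + b for sub-bands m = 1..M (assumed form)."""
    m = np.arange(1, n_subbands + 1, dtype=float)
    return m ** (-a) + b


def fuse_subband_correlations(corr, a=1.25, b=0.25):
    """Fuse sub-band correlations into one score per target frequency.

    corr: array of shape (K, M) holding the correlation of the k-th
          frequency in the m-th sub-band.
    Returns an array of shape (K,): the weighted sum of squared correlations.
    """
    corr = np.asarray(corr, dtype=float)
    weights = subband_weights(corr.shape[1], a, b)
    return (corr ** 2) @ weights
```

The target frequency is then the one with the largest fused score, in the same way as for standard CCA. Lower sub-bands receive larger weights because the signal-to-noise ratio of SSVEP harmonics decreases as the frequency increases.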

3.4 Decision strategy

In the BCI Turing Test, the algorithm first needs to determine which task the EEG signals belong to because the subject may be performing MI or SSVEP or may be in an idle state. The contest code framework provides a trigger for each trial, indicating which tasks the subject can perform. As presented in Table 1, given a trial with trigger “00101###”, the subject can only be in the idle state or performing Pass-SSVEP. Therefore, the label information hidden in the trigger is crucial because it reduces classification difficulty.

Even when the label information in triggers is used, most triggers cannot provide sufficient information to transform the cross-task classification problem into a single-task classification problem. Therefore, the algorithm should first distinguish the task to which the EEG signals belong and subsequently use the algorithms for MI and SSVEP to classify the signals. Most teams merged idle state classification into the SSVEP task and did the same for the MI task. When the classification methods for both MI and SSVEP determine that a certain EEG signal was generated by a subject in the idle state, the algorithm finally classifies the data as the idle state. Otherwise, the algorithm preferentially outputs the SSVEP classification result if that result is not the idle state.
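The decision strategy described above can be written as a small function. The label names, the IDLE constant, and the optional set of tasks permitted by the trigger are hypothetical conventions introduced for this sketch; the contest itself reports numeric labels, and teams differed in whether and how they used trigger information.

```python
IDLE = "idle"


def decide(ssvep_result, mi_result, allowed=None):
    """Combine the per-paradigm outputs into one decision.

    ssvep_result, mi_result: label predicted by each classifier, or IDLE.
    allowed: optional set of labels permitted by the trial trigger
             (hypothetical representation of the label information).
    """
    def permitted(label):
        return allowed is None or label in allowed

    # The SSVEP result takes priority whenever it is not idle.
    if ssvep_result != IDLE and permitted(ssvep_result):
        return ssvep_result
    # Otherwise fall back to the MI result.
    if mi_result != IDLE and permitted(mi_result):
        return mi_result
    # Both classifiers voted idle (or their outputs were ruled out).
    return IDLE
```

For instance, decide("pass_ssvep", "left_mi") returns "pass_ssvep", whereas decide(IDLE, "left_mi") returns "left_mi". Treating a prediction that the trigger rules out as idle is one possible design choice, not a rule stated by the organizers.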

4 Results

This section reviews the algorithms used by the top five teams in the final and presents the detailed scores of the online preliminary contest and offline finals.

4.1 Overview of each algorithm

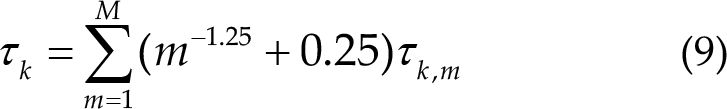

In this BCI Turing Test, the organizers provided a baseline algorithm so that participants could refer to its design ideas and to the code framework of the contest. This section introduces the baseline algorithm and the algorithms of the top five teams and analyzes their models and strategies. Table 4 summarizes the details of all the algorithms.

Table 4 Overview of each team’s algorithm.

First, the baseline algorithm applies a 50-Hz notch filter and a 6–90 Hz bandpass filter to the data and subsequently uses CCA, which is commonly used for SSVEP signal recognition. Next, after a 7–30 Hz bandpass filter is applied to the original data, a pipeline composed of CSP and LDA is used to classify the motor imagery task. When the CCA algorithm determines that the recognition result is the idle state, the result of the motor imagery task is used; otherwise, the SSVEP result is used as the recognition result. The baseline algorithm consumes 3 s of EEG data to obtain a result.

The algorithm of HDU_BMCI also applies a 50-Hz notch filter to preprocess the data and uses both CCA and FBCCA for SSVEP. The correlation coefficients calculated by CCA and FBCCA are fused by simple addition. For the classification of MI, HDU_BMCI used the same preprocessing methods and classification models as the baseline algorithm. After the recognition of SSVEP, HDU_BMCI used the label information in the triggers to reduce the number of candidate categories. The decision strategy of HDU_BMCI gives priority to the SSVEP result and considers the MI result only when the SSVEP result is the idle state. The algorithm of HDU_BMCI uses 1.7 s of EEG data to obtain a result.

The HUST_XS_ZJ algorithm applies the same preprocessing methods before classification. FBCCA is used for SSVEP, and three machine learning models, namely SVM, LR, and LDA, are used for MI by adding up their results. Label information is also used. The HUST_XS_ZJ algorithm uses 1.6 s of EEG data to obtain a result.
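Fusion by addition can be sketched as follows. The text does not state whether the summed quantities are votes, decision values, or class probabilities; this example assumes that each model outputs one score per class and that the class with the largest summed score is selected. The function name and the example numbers are hypothetical.

```python
import numpy as np


def sum_fusion(score_matrices):
    """Add the class scores of several models and pick the best class.

    score_matrices: list of arrays, one per model, each of shape
                    (n_trials, n_classes).
    Returns the predicted class index per trial and the summed scores.
    """
    total = np.sum([np.asarray(s, dtype=float) for s in score_matrices], axis=0)
    return total.argmax(axis=1), total


# Illustrative scores of three models for two trials and two classes.
svm_scores = [[0.6, 0.4], [0.3, 0.7]]
lr_scores = [[0.4, 0.6], [0.2, 0.8]]
lda_scores = [[0.7, 0.3], [0.6, 0.4]]
predictions, summed = sum_fusion([svm_scores, lr_scores, lda_scores])
```

The same additive scheme applies to the fusion of CCA and FBCCA correlation coefficients used by HDU_BMCI, with one score per stimulus frequency instead of one score per MI class.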

The algorithm of Ecust_BCILab contains the same preprocessing methods. The FBCCA and EEGNet are used for SSVEP and MI, respectively. Label information is also used. The algorithm consumes data of various time lengths for different subjects.

The UMBCI algorithm adopts the same preprocessing methods and the same recognition algorithm for SSVEP. EEGNet was selected for the MI classification task. The label information in triggers is not used. A total of 2.6 s of data is used to output a result.

In the XianLiGong algorithm, the preprocessing for SSVEP is the same as in the baseline method, whereas different preprocessing is used for MI: the EEG data are detrended, notch filtered at 50 Hz, and bandpass filtered at 0–38 Hz. FBCCA is used to recognize SSVEP, and multiple ShallowConvNets are trained for each subject. The algorithm does not use the label information in triggers and requires 1.9 s of data to calculate a result.

4.2 Performance of every algorithm

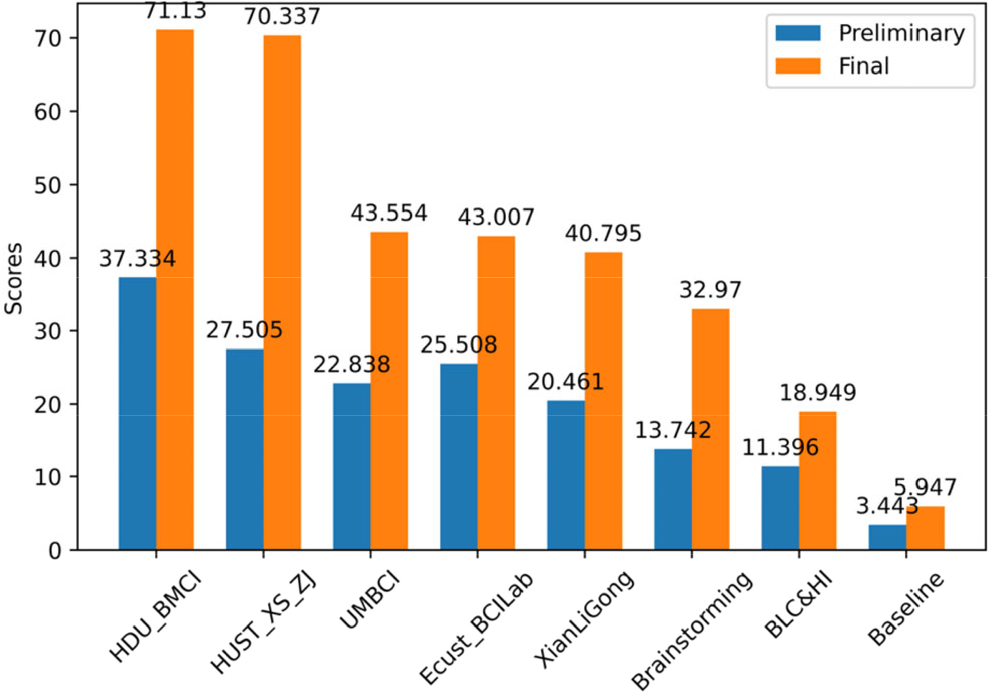

The preliminary round of the 2022 BCI Turing Test was held online from June 1st to August 7th, and the final was held offline in Beijing from 18th to 21st August. Analyzing the scores of each team in the preliminary round and the final reveals that the algorithms using label information generally obtained higher scores than those without label information, and that the top two algorithms consumed the least data per result. Furthermore, most teams used FBCCA for SSVEP, but various algorithms were used for MI, including machine learning and deep learning.

According to the scoring rule, the score of a block is valid only if the accuracy in this block reaches 80%, and the score increases as less data are consumed. Therefore, the best-performing algorithms use only short data segments, which is possible because their classification accuracy is high and the rational use of label information reduces classification difficulty. For SSVEP, FBCCA was widely used because training data were not available for SSVEP recognition. Algorithms that require training, such as eCCA, TRCA, and eTRCA, did not appear in this competition. For MI, some teams used machine learning (ML), whereas other teams used deep learning (DL). Compared with DL, ML has advantages such as high computational efficiency and no need for training on large datasets. However, ML has disadvantages, such as poor generalization ability and poor transfer ability across time periods. Because this competition provided only a small amount of calibration data for each subject, training the DL models adequately may have been difficult. In addition, only a few days elapsed between the collection of calibration data and the final, so the deviation of the data distribution was not large; therefore, the top two teams’ ML algorithms for MI performed well.

Scores of each team in preliminary and final.

5 Conclusion

We reviewed the algorithms of the top five teams in the BCI Turing Test of the BCI Controlled Robot Contest in World Robot Contest 2022. The algorithms used in this competition reflect the trends in the development of algorithms for MI, and some widely accepted algorithms for SSVEP have emerged. The BCI Turing Test was first proposed in the BCI Controlled Robot Contest in the World Robot Contest. The test requires algorithms to determine whether the AI or the participant is operating the BCI system by directly judging the EEG task of a trial. If an algorithm can distinguish whether a subject is using BCIs intensively, it may be useful in evaluating subjects’ ability to use BCIs. In the future, we will investigate algorithms to distinguish whether a segment of EEG signals contains task information. Regarding the setting of the contest, the soccer confrontation could be longer so that more trials are performed in a round; longer action sequences could contain more contextual information and better reflect the differences between AI and humans in dealing with the problem. Teams would thus obtain more information and could devise more diverse methods, which would make the competition more exciting.

Footnotes

Conflict of interests

All contributing authors report no conflict of interests in this work.

Funding

This work was supported by the National Natural Science Foundation of China (Grant No. U20B2074), the Key Research and Development Project of Zhejiang Province (Grant Nos. 2023C03026, 2021C03001, 2021C03003), and the Key Laboratory of Brain Machine Collaborative Intelligence of Zhejiang Province (Grant No. 2020E10010).

Authors’ contribution

Hangjie Yi developed the algorithm used in BCI Turing Test, summarized each team’s algorithms, analyzed the competition results, and wrote the initial draft. Dongjun Liu contributed commentary and revision. Xuanyu Jin discussed the results and revised the manuscript. Hangkui Zhang developed the algorithm and helped to search hyperparameters. All the authors approved the final manuscript.