Abstract

Objectives:

This retrospective case study evaluated the interrater and intrarater reliability of seven common extensor tendon pathologic features on musculoskeletal ultrasonography (MSK-US).

Materials and Methods:

Lateral elbow MSK-US images from 50 patients presenting with atraumatic, nonradicular lateral elbow pain were reviewed. Three experienced and two novice readers rated the images on two separate occasions, and Gwet’s AC1 and Cohen’s kappa coefficients were calculated for each feature.

Results:

The interrater reliability was fair with respect to fascial thickening/scarring (AC1 = 0.26), tearing (AC1 = 0.35), tendon thickening (AC1 = 0.38), and intratendinous calcification (AC1 = 0.33); substantial for enthesophytes (AC1 = 0.80); and almost perfect for hyperemia (AC1 = 0.83) and hypoechogenicity (AC1 = 0.92). Intrarater reliability was moderate for fascial thickening/scarring (κ = 0.48), tearing (κ = 0.41), tendon thickening (κ = 0.47), intratendinous calcification (κ = 0.56), and hypoechogenicity (κ = 0.47); substantial for hyperemia (κ = 0.71); and almost perfect for enthesophytes (κ = 0.86).

Conclusion:

MSK-US may be a reliable tool for identifying soft tissue changes in common extensor tendon pathology.

Common extensor tendinopathy (CET) at the elbow has historically been described in the literature and by health care providers as tennis elbow, lateral epicondylitis, and chronic elbow tendinitis.1,2 However, these terms emphasize an acute inflammatory process without considering a degenerative intratendinous process that may occur concomitantly or exclusively. Common extensor tendinopathy has been shown to affect 1% to 3% of adults per year.1 Many different treatments are used for CET, including physical therapy, counterforce bracing, dry needling, peritendinous cortisone injections, percutaneous tenotomy, intratendinous platelet-rich plasma (PRP) injections, bone marrow concentrate injections, and placenta-derived extracellular injections.1,3–6 The most appropriate treatment relies on an accurate diagnosis and classification of the pathology being treated. Although the pathophysiology of tendinopathy has been described, its natural progression has not been systematically classified.

Musculoskeletal ultrasonography (MSK-US) has become widely available and is frequently used in clinical settings to evaluate various CET findings and to confirm the presence of lateral epicondylitis.1,5–7 Sonographic findings have previously been shown to correlate with histologic results from CET biopsies.8,9 However, the interrater and intrarater reliability of these MSK-US findings has not been well established.2,7,10–12 The primary purpose of this study was to determine the interrater and intrarater reliability of seven CET pathologic features identified on MSK-US (tendon hypoechogenicity, tendon thickening, fascial thickening/scarring, intratendinous calcification, enthesophyte, hyperemia, and tendon tearing) to form the basis of a standardized classification system for CET. A secondary purpose was to determine whether reliability differed between novice and experienced MSK-US readers.

Materials and Methods

Study Sample

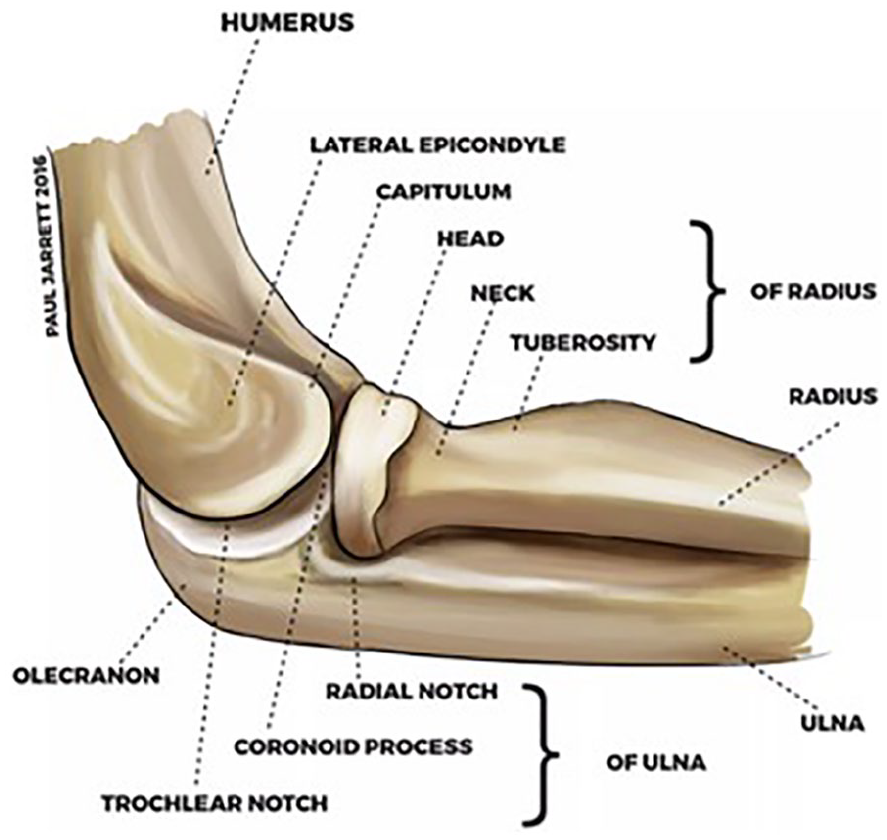

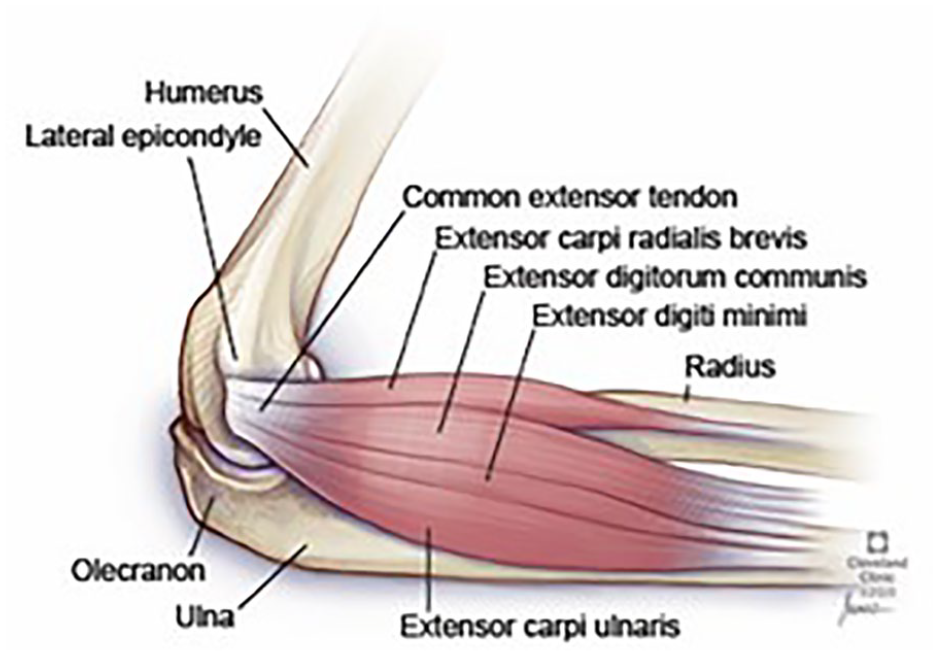

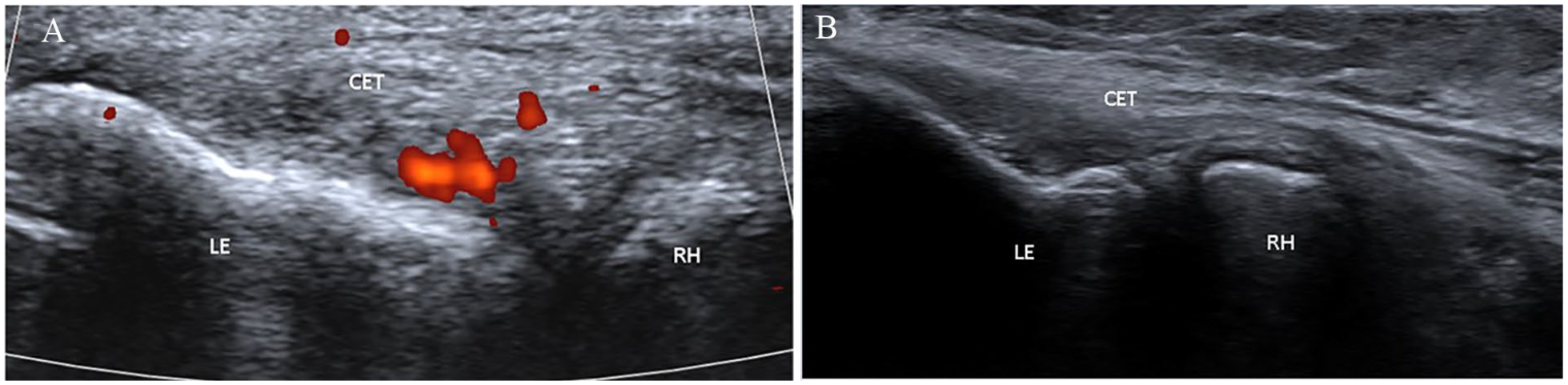

This study was approved by the hospital’s Institutional Review Board and was deemed exempt. Only lateral elbow musculoskeletal sonographic examinations that followed the complete lateral elbow imaging protocol were extracted from the electronic medical record, yielding images from 50 patients at a US tertiary academic hospital. The patients presented with atraumatic, nonradicular lateral elbow pain and had a sonographic diagnosis of common extensor tendinopathy.

The images were obtained by five dedicated, full-time, formally trained musculoskeletal sonographers. Their formal training consisted of the Registered Musculoskeletal Sonographer (RMSK-S) certification from the American Registry for Diagnostic Medical Sonography (ARDMS) and 6 months of internal training within the department of radiology. Internal training comprised on-the-job training with experienced musculoskeletal sonographers and musculoskeletal radiologists, together with twice-monthly hands-on scanning demonstrations led by an experienced musculoskeletal radiologist. Each sonographer also completed a musculoskeletal anatomy workbook and reviewed musculoskeletal anatomy in Netter’s atlas. One year of scanning was required, with progression to independent scanning over the final 3 months.

The lateral elbow imaging protocol followed American College of Radiology guidelines, and images were obtained using a 10- to 14-MHz linear transducer (Siemens S3000, Germany). The protocol evaluated the lateral epicondyle, the common extensor tendon, the collateral ligament complex, the proximal attachments of the extensor carpi radialis longus and brachioradialis, and the radial nerve and its branches (the superficial branch and the posterior interosseous nerve) (Figures 1 and 2).
Before reading the images, the readers agreed on consensus definitions for the seven characteristics: hypoechogenicity (any hypoechogenicity was considered a positive finding); hyperemia (power Doppler images were reviewed, and increased signal was considered a positive finding; Figure 3A); tearing (an anechoic area within the tendon was considered a positive finding); enthesophyte (a bony abnormality at the enthesis was considered a positive finding; Figure 3B); fascial thickening/scarring (comparison images of the opposite elbow were used; the sonographers made the original measurements on the collected images, and the readers then used these measurements); tendon thickening (the tendon was evaluated from superficial to deep and compared with the contralateral tendon); and intratendinous calcification (a calcification located within the tendon only was considered a positive finding).

Lateral elbow osseous anatomy.

Lateral elbow anatomy, demonstrating the origin of common extensor tendon at the lateral epicondyle.

Lateral elbow ultrasound image of the common extensor tendon, demonstrating certain pathologic characteristics. (A) Hyperemia, highlighted in red with power Doppler. (B) Enthesophyte at the lateral epicondyle. CET, common extensor tendon; LE, lateral epicondyle; RH, radial head.

Ultrasonographic Interpretation

Five readers analyzed and scored the lateral elbow MSK-US images of the 50 patients. Two were considered “novice readers”: primary care sports medicine fellows during their fellowship year. The three “experienced readers” were two board-certified sports medicine attendings who had been practicing independently for at least 5 years and one radiology attending with musculoskeletal sonography training. The two sports medicine attendings had received formal sonography training during their sports medicine fellowship, along with experience reviewing MSK-US images in practice.

The readers interpreted the sonographic images and verbally relayed a “positive” (finding present) or “negative” (finding absent) rating for each pathologic characteristic to a blinded recorder. No cutoff values were used except for fascial thickening, which had a cutoff of 1 mm. As noted above, the sonographers made the original fascial thickening measurements on the collected images; however, readers could adjust these measurements during the reading session if they judged them incorrect. All five readers remeasured during the reading sessions, and the experienced readers made more adjustments than the novice readers. The readers reviewed all 50 examinations in each of two reading sessions, held 1 month apart, with no additional formal education provided between sessions. The image order was randomized, with a different randomization for each session. The readers were blinded to each other’s ratings, which were recorded in Microsoft Excel 2010.

Statistical Analysis

Interreader Agreement

Unweighted Cohen’s kappa statistics (appropriate for binary findings) were calculated for each pairwise comparison between readers. Kappa describes the relative magnitude of agreement between readers after adjusting for chance agreement.13 The mean kappa statistic over the pairwise reader comparisons was reported along with a 95% confidence interval (CI). For interrater agreement, Gwet’s AC1 coefficient (95% CI) was also calculated, because kappa’s paradoxical behavior often yields values lower than expected when the proportion of “positive” readings is high, even when the observed percent agreement is high.13,14
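To make the two statistics concrete, the sketch below computes percent agreement, unweighted Cohen’s kappa, and Gwet’s AC1 for two raters’ binary ratings (0 = negative, 1 = positive), plus a mean over all rater pairs. It is a minimal illustration, not the software used in the study; the function names and the 50-patient ratings are hypothetical, and confidence intervals are not computed.

```python
from itertools import combinations
from statistics import mean


def percent_agreement(a, b):
    """Proportion of patients given the same binary rating by both raters."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohen_kappa(a, b):
    """Unweighted Cohen's kappa for two equal-length lists of 0/1 ratings."""
    n = len(a)
    p_o = percent_agreement(a, b)
    p_a, p_b = sum(a) / n, sum(b) / n        # each rater's proportion rated "positive"
    p_e = p_a * p_b + (1 - p_a) * (1 - p_b)  # chance agreement from each rater's own marginals
    if p_e == 1:
        return float("nan")  # both raters gave one identical constant rating: undefined
    return (p_o - p_e) / (1 - p_e)


def gwet_ac1(a, b):
    """Gwet's AC1 for two raters and a binary (0/1) finding."""
    n = len(a)
    p_o = percent_agreement(a, b)
    pi = (sum(a) + sum(b)) / (2 * n)  # mean prevalence of "positive" across both raters
    p_e = 2 * pi * (1 - pi)           # AC1 chance agreement; never exceeds 0.5
    return (p_o - p_e) / (1 - p_e)


def mean_pairwise(ratings, stat):
    """Average a statistic over every pair of raters in a {rater: ratings} dict."""
    values = [stat(ratings[r], ratings[s]) for r, s in combinations(ratings, 2)]
    return mean(v for v in values if v == v)  # v == v is False only for NaN (undefined)


# Hypothetical data (not from the study): 50 patients, each rater marks 48 "positive",
# but the raters disagree on which patients are negative.
rater_a = [0, 0] + [1] * 48
rater_b = [1, 1, 0, 0] + [1] * 46
print(percent_agreement(rater_a, rater_b))      # 0.92
print(round(cohen_kappa(rater_a, rater_b), 3))  # -0.042
print(round(gwet_ac1(rater_a, rater_b), 3))     # 0.913
```

In this made-up example the raters agree on 92% of patients, yet kappa is slightly negative because each rater’s 96% “positive” rate makes chance agreement (0.9232) larger than observed agreement; AC1, whose chance term is 2π(1 − π), stays high.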

Intrareader Agreement

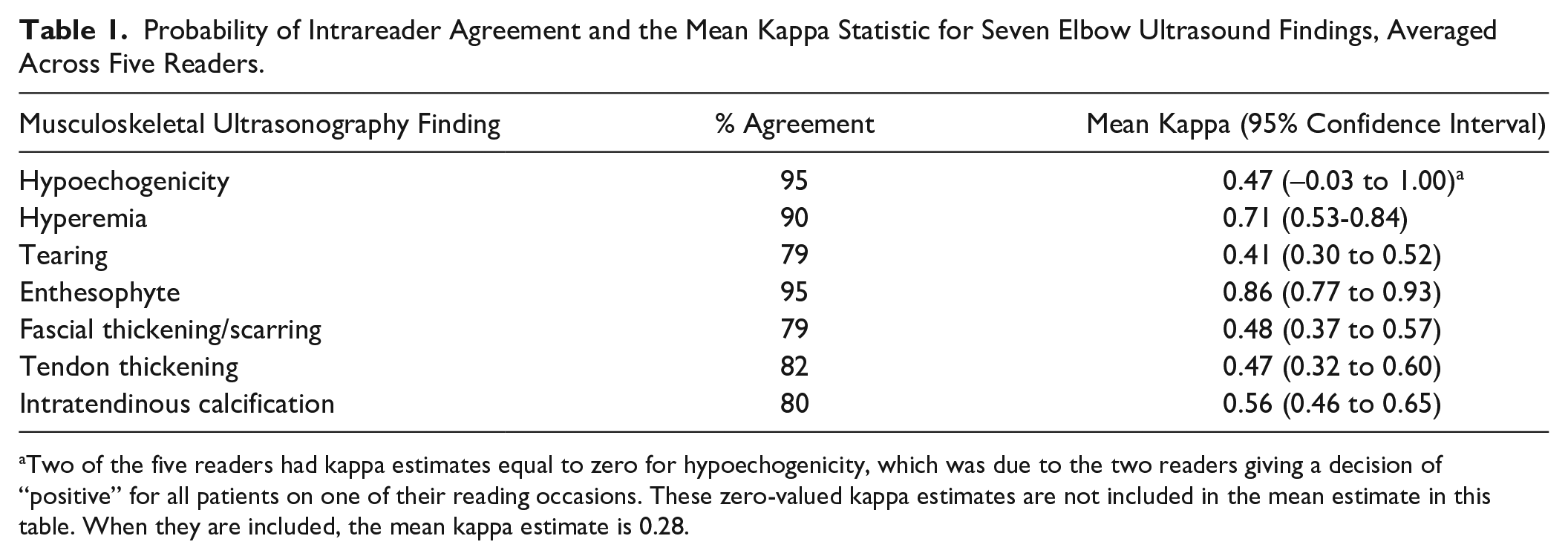

Unweighted kappa statistics were calculated for each reader using the findings from their first and second passes, and the mean kappa statistic over the five readers was reported (95% CI). Twice, a reader gave every patient the same rating on one reading occasion; in such cases, the kappa estimate for that reader was zero. First, reader 2 marked 94% of patients positive for hypoechogenicity on reading occasion 1 and 100% on reading occasion 2; although reader 2’s interpretations agreed for 94% of patients, kappa was zero. Second, reader 4 marked 90% of patients positive for hypoechogenicity on reading occasion 1 and 100% on reading occasion 2; again, although reader 4’s interpretations agreed for 90% of patients, kappa was zero. The mean kappa estimates for hypoechogenicity exclude these zero-valued statistics, although the mean kappa including them is also reported (Tables 1–3). The other MSK-US characteristics were not affected in this way.
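A short worked check of why kappa collapses to zero here, assuming reader 2’s 94% corresponds to 47 of 50 patients: if one occasion is all “positive” (p_B = 1), chance agreement becomes p_e = p_A · 1 + (1 − p_A) · 0 = p_A, and observed agreement is also p_A, so the kappa numerator is exactly zero however high agreement is. The function name and data layout below are illustrative only.

```python
def cohen_kappa(a, b):
    """Unweighted Cohen's kappa for two equal-length lists of 0/1 ratings."""
    n = len(a)
    p_o = sum(x == y for x, y in zip(a, b)) / n  # observed agreement
    p_a, p_b = sum(a) / n, sum(b) / n            # proportion "positive" on each occasion
    p_e = p_a * p_b + (1 - p_a) * (1 - p_b)      # chance agreement
    return (p_o - p_e) / (1 - p_e)


# Hypothetical reconstruction of reader 2: 47 of 50 patients (94%) "positive" on
# occasion 1 and all 50 (100%) "positive" on occasion 2. Which 3 patients were
# negative does not affect the result.
occasion_1 = [1] * 47 + [0] * 3
occasion_2 = [1] * 50

agreement = sum(x == y for x, y in zip(occasion_1, occasion_2)) / 50
print(agreement)                            # 0.94
print(cohen_kappa(occasion_1, occasion_2))  # 0.0
```

The same reasoning applies to reader 4 (90% versus 100%): agreement is 90%, and kappa is again zero.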

Probability of Intrareader Agreement and the Mean Kappa Statistic for Seven Elbow Ultrasound Findings, Averaged Across Five Readers.

Two of the five readers had kappa estimates equal to zero for hypoechogenicity because each rated every patient “positive” on one of their reading occasions. These zero-valued kappa estimates are not included in the mean estimate in this table; when they are included, the mean kappa estimate is 0.28.

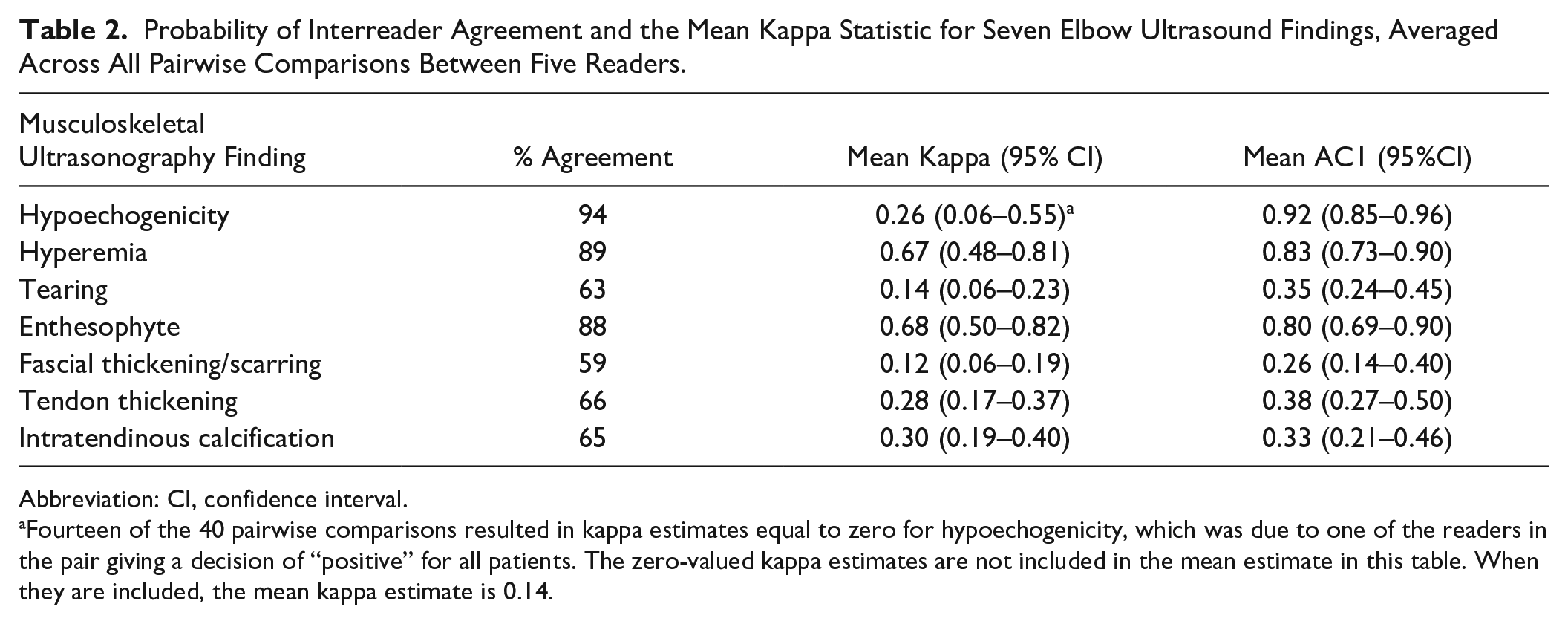

Probability of Interreader Agreement and the Mean Kappa Statistic for Seven Elbow Ultrasound Findings, Averaged Across All Pairwise Comparisons Between Five Readers.

Abbreviation: CI, confidence interval.

Fourteen of the 40 pairwise comparisons resulted in kappa estimates equal to zero for hypoechogenicity because one reader in the pair rated every patient “positive.” The zero-valued kappa estimates are not included in the mean estimate in this table; when they are included, the mean kappa estimate is 0.14.
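The text does not state how the 40 comparisons were formed. One scheme consistent with the reported counts, which is only our assumption, treats each reader’s two reading occasions as separate rating sets and compares every set with every set from a different reader (10 reader pairs × 2 × 2 occasions). Marking the two all-“positive” sets described in the intrareader methods (readers 2 and 4 on occasion 2) then yields 14 zero-valued kappas, plus one comparison in which both sets are all “positive,” where kappa is undefined rather than zero. A hypothetical sketch of that count:

```python
from itertools import combinations

# Assumption: each (reader, occasion) rating set is compared with every rating set
# from a different reader; same-reader pairs belong to the intrareader analysis.
readers = [1, 2, 3, 4, 5]
occasions = [1, 2]
rating_sets = [(reader, occ) for reader in readers for occ in occasions]

# Rating sets in which every patient was marked "positive" for hypoechogenicity
# (reader 2 and reader 4 on occasion 2, per the intrareader methods).
all_positive = {(2, 2), (4, 2)}

pairs = [(s, t) for s, t in combinations(rating_sets, 2) if s[0] != t[0]]

# Exactly one set constant "positive" (the other has some negatives):
# observed and chance agreement coincide, so kappa = 0.
zero_kappa = [(s, t) for s, t in pairs if (s in all_positive) != (t in all_positive)]
# Both sets constant "positive": chance agreement is 1, so kappa is 0/0 (undefined).
undefined = [(s, t) for s, t in pairs if s in all_positive and t in all_positive]

print(len(pairs), len(zero_kappa), len(undefined))  # 40 14 1
```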

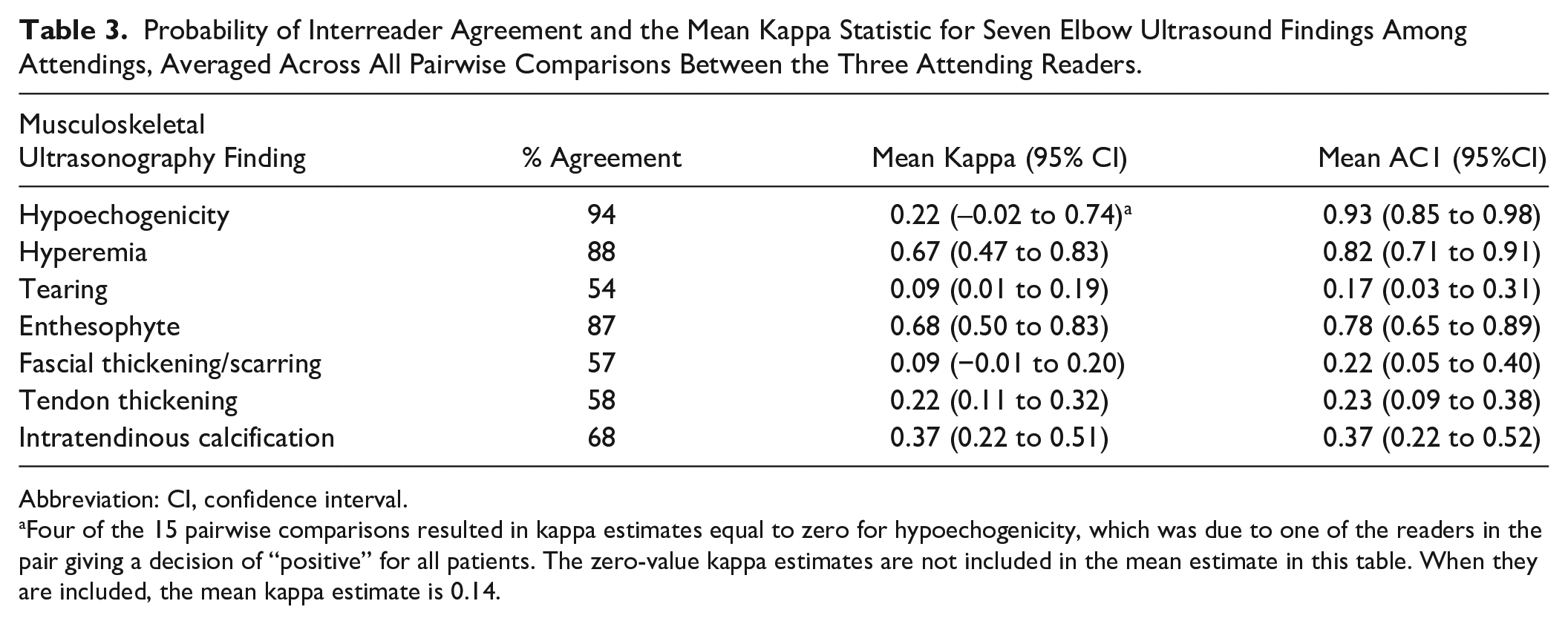

Probability of Interreader Agreement and the Mean Kappa Statistic for Seven Elbow Ultrasound Findings Among Attendings, Averaged Across All Pairwise Comparisons Between the Three Attending Readers.

Abbreviation: CI, confidence interval.

Four of the 15 pairwise comparisons resulted in kappa estimates equal to zero for hypoechogenicity because one reader in the pair rated every patient “positive.” The zero-valued kappa estimates are not included in the mean estimate in this table; when they are included, the mean kappa estimate is 0.14.

Results

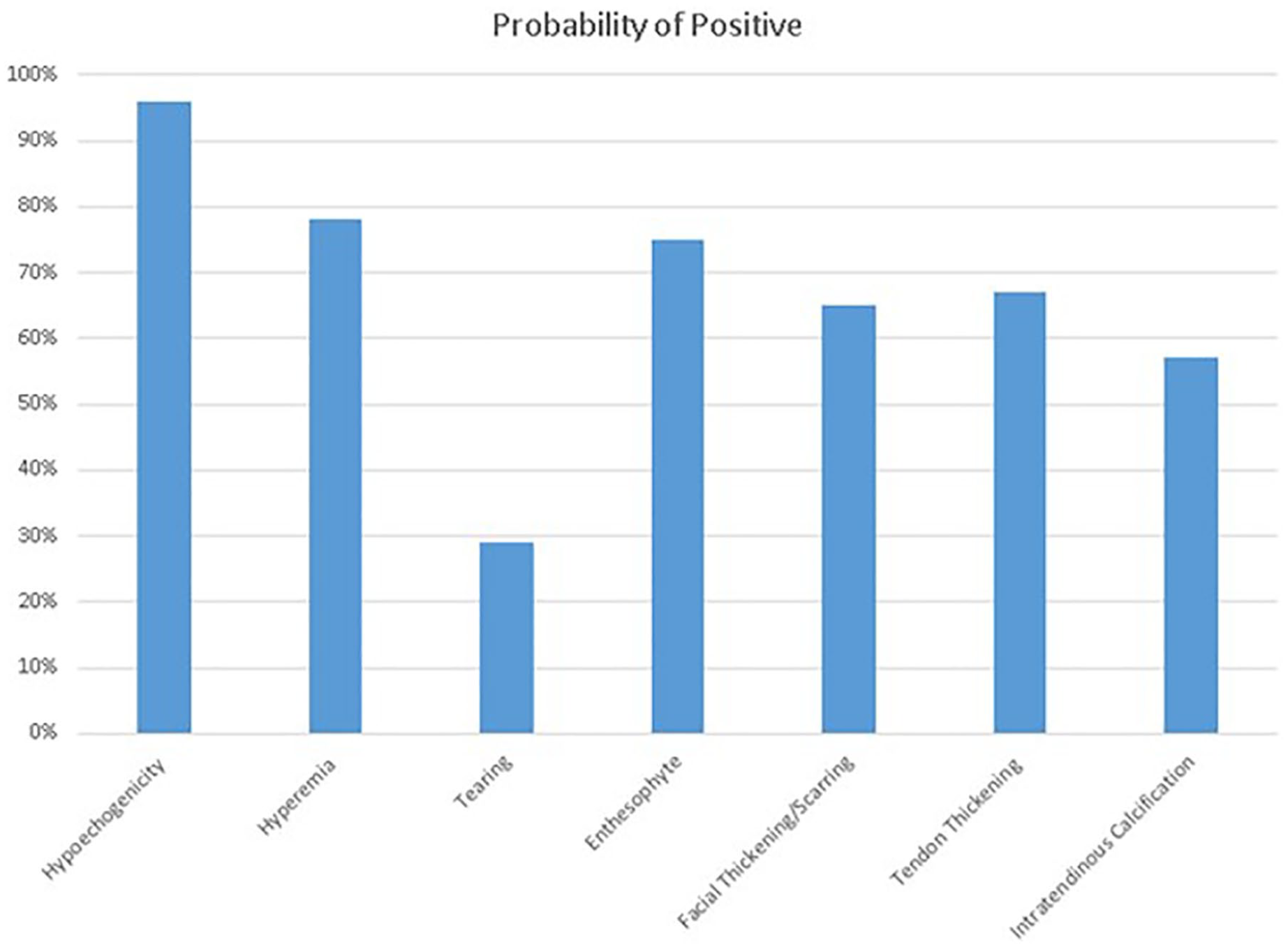

Averaging across all readers and reading occasions, the probability of a patient being marked positive for each of the seven MSK-US characteristics is displayed in Figure 4.

Probability of being marked positive among the seven pathologic characteristics.

Intrareader Agreement

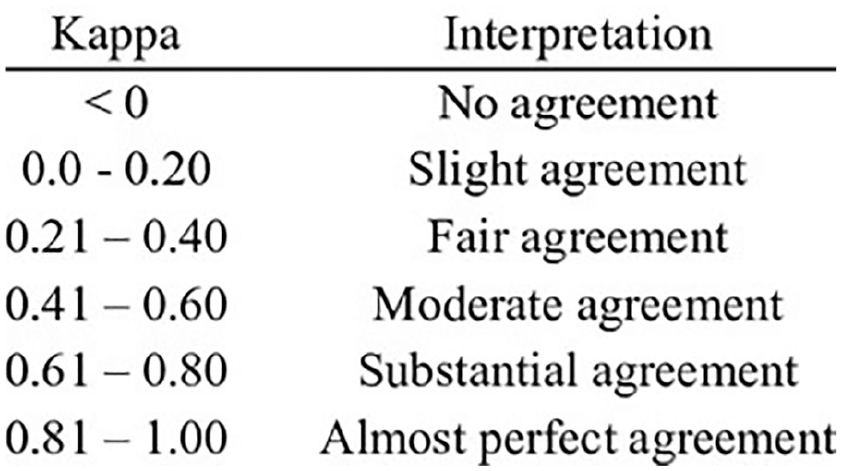

According to Cohen’s kappa coefficient, interpreted using the scale in Figure 5, moderate intrareader agreement was observed for tearing (0.41), fascial thickening/scarring (0.48), tendon thickening (0.47), and intratendinous calcification (0.56) (Table 1). Substantial intrareader agreement was observed for hyperemia (0.71), and almost perfect agreement for enthesophyte (0.86). On average, a reader’s binary interpretation of hypoechogenicity was the same on both reading occasions for 95% of patients. The mean kappa statistic for hypoechogenicity (0.47) suggests only moderate intrareader agreement; however, this reflects the very high probability (96%) of a patient being marked positive for hypoechogenicity.

Scale used in statistics to quantify the level of agreement based on kappa/AC1 values.
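The scale figure is not reproduced in this text. The labels slight, fair, moderate, substantial, and almost perfect used in the Results correspond to the widely used Landis and Koch bands; assuming those cutoffs (below 0 poor, 0.00–0.20 slight, 0.21–0.40 fair, 0.41–0.60 moderate, 0.61–0.80 substantial, 0.81–1.00 almost perfect), a coefficient can be mapped to its label with a small hypothetical helper such as this one.

```python
def agreement_label(value: float) -> str:
    """Map a kappa or AC1 value to a label, assuming Landis and Koch cutoffs."""
    if value < 0:
        return "poor"
    # Upper bound of each band (inclusive), checked from lowest to highest.
    bands = [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial")]
    for upper, label in bands:
        if value <= upper:
            return label
    return "almost perfect"


# Interreader AC1 values reported in the abstract.
for name, ac1 in [("tearing", 0.35), ("enthesophyte", 0.80), ("hypoechogenicity", 0.92)]:
    print(name, agreement_label(ac1))
# tearing fair
# enthesophyte substantial
# hypoechogenicity almost perfect
```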

Interreader Agreement

All five readers

According to Cohen’s kappa coefficient, interreader agreement was slight to fair with respect to tearing (mean kappa: 0.14), fascial thickening/scarring (0.12), tendon thickening (0.28), and intratendinous calcification (0.30) (Table 2). Substantial interreader agreement was observed for hyperemia (0.67) and enthesophyte (0.68). The mean kappa statistic for hypoechogenicity (0.26) suggests only fair interreader agreement; however, this reflects the very high probability (94%) of a patient being marked positive for hypoechogenicity.

According to the AC1 coefficient, interreader agreement was fair with respect to tearing (0.35), fascial thickening/scarring (0.26), tendon thickening (0.38), and intratendinous calcification (0.33). Substantial interreader agreement was observed for hyperemia (0.83) and enthesophyte (0.83). Hypoechogenicity (0.92) had almost perfect agreement.

Three experienced readers

According to Cohen’s kappa coefficient, interreader agreement was slight to fair with respect to tearing (0.09), fascial thickening/scarring (0.09), tendon thickening (0.22), and intratendinous calcification (0.37) (Table 3). Substantial interreader agreement was observed for hyperemia (0.67) and enthesophyte (0.68). The mean kappa statistic for hypoechogenicity (0.22) suggests only fair interreader agreement; however, this reflects the very high probability (94%) of a patient being marked positive for hypoechogenicity.

According to the AC1 coefficient, interreader agreement was slight with respect to tearing (0.17) and fair with respect to fascial thickening/scarring (0.22), tendon thickening (0.23), and intratendinous calcification (0.37). Substantial interreader agreement was observed for enthesophyte (0.68), and almost perfect agreement for hypoechogenicity (0.93) and hyperemia (0.82).

Two novice readers

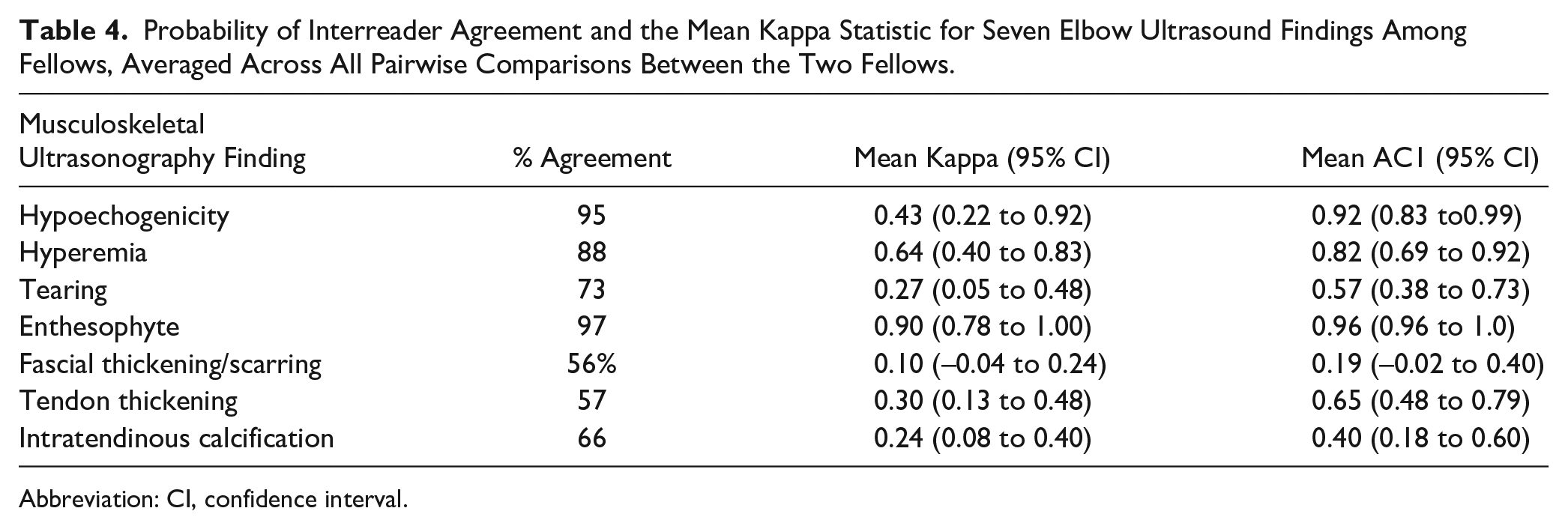

According to Cohen’s kappa coefficient (Table 4), interreader agreement was slight to fair with respect to tearing (0.27), fascial thickening/scarring (0.10), tendon thickening (0.30), and intratendinous calcification (0.24). Substantial interreader agreement was observed for hyperemia (0.64), and almost perfect agreement for enthesophyte (0.90). The mean kappa statistic for hypoechogenicity (0.43) suggests moderate interreader agreement; however, this reflects the very high probability (95%) of a patient being marked positive for hypoechogenicity.

Probability of Interreader Agreement and the Mean Kappa Statistic for Seven Elbow Ultrasound Findings Among Fellows, Averaged Across All Pairwise Comparisons Between the Two Fellows.

Abbreviation: CI, confidence interval.

According to the AC1 coefficient, interreader agreement was slight with respect to fascial thickening/scarring (0.19) and fair with respect to intratendinous calcification (0.40). Agreement was substantial for tendon thickening (0.65) and moderate for tearing (0.57). Almost perfect agreement was observed for enthesophyte (0.96), hypoechogenicity (0.92), and hyperemia (0.82).

Discussion

Based on a limited literature search, this is the first study to report the interrater and intrarater reliability of these seven MSK-US characteristics for CET.

The probability of a sonographic image being marked positive for a given pathologic characteristic was over 50% for six of the seven characteristics studied: all but tearing. This relatively high prevalence could be attributed to several factors. The most obvious is that the patient population investigated in this study was a subset who presented to the clinic with lateral elbow pain; in theory, such patients will have a higher prevalence of pathology on their sonograms. Unfortunately, there appear to be no readily available data on the prevalence of these pathologic sonographic characteristics in an asymptomatic population. Another possibility is the “all or nothing” phenomenon created by the binary scale used by the readers: because a characteristic was rated “positive” when present and “negative” when absent, even a minimally present finding was marked “positive.” Finally, there could have been observer bias, because the readers knew they were evaluating images of symptomatic patients. Although the order of the sonograms was randomized, the readers knew that the images were obtained from a population that presented with lateral elbow pain.

When comparing intrarater and interrater reliability, the intrarater kappa values were higher than the interrater kappa values for every characteristic. This is not unexpected: a reader is more likely to agree with his or her own interpretation of a sonographic image than with those of other readers. Although consensus definitions were agreed upon for each characteristic before the images were read, there is still room for variation in interpretation among readers, which may explain the lower interrater kappa values.

Although kappa statistics are widely accepted in agreement studies, due to the paradoxical behavior of the statistic, Gwet’s AC1 was used to help provide further evidence of agreement that could be lost in the kappa statistic in this study. Gwet’s AC1 statistic distinguishes between rated items that evoke virtually total consensus among raters and items whose categories are essentially guessed randomly with equal category probabilities by at least some raters. The agreements due to such random guesses are excluded from the calculation of the statistic.13–15
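For two raters and a binary finding, the two coefficients differ only in their chance-agreement terms. In the standard two-category forms (notation ours: p_o is observed agreement, p_A and p_B are each rater’s proportion of “positive” ratings, and π is their mean), with a purely hypothetical example in which both raters mark 96% of patients positive and agree on 92%:

```latex
\kappa = \frac{p_o - p_e}{1 - p_e}, \qquad p_e = p_A p_B + (1 - p_A)(1 - p_B)

\mathrm{AC1} = \frac{p_o - p_e^{\gamma}}{1 - p_e^{\gamma}}, \qquad p_e^{\gamma} = 2\pi(1 - \pi), \quad \pi = \frac{p_A + p_B}{2}

% Hypothetical example: p_A = p_B = 0.96, p_o = 0.92
p_e = 0.96^2 + 0.04^2 = 0.9232, \qquad \kappa = \frac{0.92 - 0.9232}{1 - 0.9232} \approx -0.04

p_e^{\gamma} = 2(0.96)(0.04) = 0.0768, \qquad \mathrm{AC1} = \frac{0.92 - 0.0768}{1 - 0.0768} \approx 0.91
```

Because p_e^γ can never exceed 0.5, AC1 is not pulled toward zero when nearly every patient is rated positive, which is the situation observed here for hypoechogenicity.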

Interrater reliability scores varied widely among the seven characteristics. For instance, across all five readers, “enthesophyte” had a kappa/AC1 score no lower than 0.68, whereas “tearing” had a kappa/AC1 score no higher than 0.35. Certain pathologic features may be more readily distinguishable by ultrasound readers than others. Future studies could further investigate the characteristics with consistently higher reliability, such as hyperemia and enthesophyte, to determine whether these results are reproducible with more sonographic images and greater statistical power.

Another aspect of this study was comparing interrater reliability between novice and experienced sonography readers. Percent agreement among the novice readers was higher than or equal to that among the experienced readers for four of the seven ultrasound characteristics; the three characteristics with lower agreement among the novice readers were fascial thickening/scarring, tendon thickening, and intratendinous calcification. By kappa and AC1, the novice readers had the same or higher interrater reliability for five of the seven characteristics. One would expect agreement between experienced readers to be higher because of their greater ability to correctly identify pathology on a sonographic image. One possible explanation is that novice readers are more likely to adhere strictly to the consensus definitions, whereas experienced readers may deviate from them because of previously formed reading habits. Another factor to consider is that only two novice readers were included, compared with three experienced readers. To our knowledge, there are limited data on the effect of reader experience on interrater reliability,8 particularly for the common extensor tendon.

This study has several limitations. First, all of the sonographic images were from patients presenting with lateral elbow pain, introducing selection bias. Although the order of the sonographic images was randomized, the readers’ knowledge of this patient population could have increased the likelihood that a reader would report a pathologic feature as “positive.” Future studies could limit these biases by including control images from patients without elbow pain and blinding readers to the population from which each image was obtained. Second, the study was limited to 50 sonographic examinations; future reliability studies should include more images to increase statistical power. Third, the binary “positive”/“negative” scale does not quantify how much of a pathologic characteristic was present. Future studies could use a numerical scale on which the minimum value represents minimal pathology and the maximum value represents maximal pathology.

The results from this study may have some clinical implications. Because experienced and novice readers had similar interrater and intrarater reliability, the learning curve for interpreting MSK-US images of the common extensor tendon may be short. The authors believe that current research on the effectiveness of various treatments for common extensor tendinopathy, particularly orthobiologics, has had limited reproducibility because patients with similar common extensor pathologies could not be reliably selected.4 This study may help explain the variability between studies that evaluate treatments for common extensor tendinopathies. By demonstrating substantial to almost perfect reliability among sonography readers for several features, this study also helps lay the groundwork for an MSK-US-based classification system for common extensor tendinopathies. Such a classification system could serve as a research standard for common extensor tendinopathy treatments, allowing investigators to match the “demographics” of different common extensor tendinopathies and then accurately investigate the utility of conventional and novel treatments.

Conclusion

MSK-US is an inexpensive, noninvasive, and dynamic tool that, based on the findings of this study, can reliably identify several pathologic features of the common extensor tendon.9 Patients presenting with clinical findings of common extensor tendinopathy should be evaluated with MSK-US to better delineate the specific pathologic features present within the tendon. In the future, MSK-US could be used to establish a classification system for common extensor tendinopathies that may help standardize research and identify the patient populations most likely to respond to specific common extensor tendinopathy treatments and procedures.

Footnotes

Acknowledgements

We gratefully acknowledge Isaac Briskin for his assistance with the statistical analysis and Brittany Stojsavljevic for editorial management.

Ethics Approval

This study was approved by the Cleveland Clinic IRB #17-230.

Informed Consent

Written informed consent was obtained from all subjects before the study.

Animal Welfare

Guidelines for humane animal treatment did not apply to the present study because no animals were used during the study.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr Genin reports other from Skye BioRenew, during the conduct of the study; other from CyMedica Orthopedics, other from Flexion Therapeutics, other from Samumed, and other from Ferring Pharmaceuticals, outside the submitted work. Dr King reports other from DJO Global, other from Arthrex, other from CyMedica Orthopedics, and other from Flexion Therapeutics, outside the submitted work.

Trial Registration

Not applicable.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.