Abstract

Residents of communities increasingly rely on geographically focused groups on online social media platforms to access local information. These local groups have the potential to enhance the quality of life in communities by helping residents learn about their communities, connect with neighbors and local organizations, and identify important local issues. Moderators of online community groups, typically untrained volunteers, are key actors in these spaces. However, they are also put in a tenuous position, having to manage the groups while simultaneously navigating desires of platforms, rapidly evolving user practices, and the increasing politicization of local issues. In this paper, we explicate the visions of local community groups put forward by Facebook, Reddit, and Nextdoor in their corporate discourse and ask: How do these platforms describe local community groups, particularly in reference to ideal communication and community engagement that occurs within them, and how do they position volunteer moderators to help realize these ideals? Through a qualitative thematic analysis of 849 company documents published between 2012 and 2023, we trace how each company rhetorically positions these spaces as what we refer to as “local platformized utopias.” We examine how this discourse positions local volunteer moderators, the volunteer labor force of civic actors that constructs, governs, and grows community groups. We discuss how these three social media companies motivate moderators to do this free, value-building labor through the promise of civic virtue, while simultaneously obscuring unequal burdens of moderation labor and failing to address the inequalities of access to voice and power in online life.

Introduction

Social media groups are an increasingly important source of information and connection in local communities (Adria, 2020). These local groups have the potential to enhance the quality of life in communities by helping residents learn about their communities, connect with neighbors and local organizations, and identify important local issues. Facebook offers hundreds of region-specific community groups, proudly touting these in nationwide commercials and Super Bowl advertisements (Facebook, 2020). Reddit has hundreds of subreddits focused on specific states, cities, and towns. And Nextdoor promotes itself as a space where users can “Get the most out of your neighborhood” through the platform. In these locally oriented digital spaces, users interact, discuss community issues, and share information about what is happening around them. Particularly in communities that have experienced the decline of local newspapers, local community groups can serve as a vital resource during moments of strain or community crisis, such as natural disasters, man-made disasters, or civic unrest. At the same time, the quality of civic discourse in these spaces may be threatened by information pollution, including spam, scams, incivility and “toxic” trolls, off-topic posting, rumor, and/or inaccurate information. The job of overseeing these community groups, and ensuring the health of these spaces, most often falls to volunteer moderators.

The social fabric of local community life is changing as the tech industry has made targeted investments to become part of this fabric. For example, in an open letter beginning with the question “Are we building the world we all want?”, Mark Zuckerberg (2017) heralded Facebook groups for their ability to “strengthen existing physical communities,” noting the important role of these groups’ volunteer moderators as “engaged leaders.” Moderators of online community groups—typically untrained volunteers—are key actors in these spaces. However, they are also put in a tenuous position, having to manage the groups while simultaneously navigating desires of platforms, rapidly evolving user practices, and the increasing politicization of local issues. Indeed, the realization of functional and useful local groups often relies on their successful navigation of these elements, all while providing free leadership and labor. In this paper, we interrogate how these companies position themselves as a platform for local communities and civic life, and how volunteer moderators are expected to build, govern, and grow these spaces. We ask: How do Facebook, Reddit, and Nextdoor describe local community groups, particularly in reference to ideal communication and community engagement that occurs within them, and how do they position volunteer moderators to help realize these ideals?

Gillespie (2010: 1) observes that social media companies are careful in how they position “themselves to users, clients, advertisers, and policymakers, making strategic claims as to what they do and do not do, and how their place in the information landscape should be understood.” Discourses are “structures of knowledge that influence systems of practices” (Chambon, 1999: 57). Technology companies scaffold their products with discourse to guide the diffusion and adoption of their products through society. This discourse helps shape how a given technology becomes “part of our systems of goals, values, and meaning, part of our articulated interests, struggles, and activities” (Bazerman, 1998: 386), contributing to what Jasanoff and Kim (2015) refer to as “sociotechnical imaginaries,” that is, widely shared imaginings of idealized futures. The stories and ideas technology developers use to describe their products play a key role in shaping broader understanding of them through the values and interests embedded in them (Pfaffenberger, 1992). We argue that social media companies’ imaginaries of utopian, technologically mediated local communities entail a degree of performativity, influencing the future development and integration of these communities within broader social infrastructure (Thorson et al., 2020), even if those dreams are never fully realized.

Several research studies have highlighted the importance of the discourse of tech purveyors. Couldry (2015) argues Facebook's discourse helps produce a vision of human collectivity, and Haupt (2021) extends this work by examining how Facebook posits desirable futures in its discursive construction of global community. Hoffmann et al. (2018) highlight how Mark Zuckerberg's discourse mediates the relationship between the platform and users in an ever-changing environment, and Rider and Murakami Wood (2019) focus specifically on Zuckerberg's “Building Global Community” manifesto, highlighting the growing belief in a strong tie between government and social media. Nam (2020) explores how social media companies’ IPO statements position themselves as vehicles for economic growth and a “virtuous venue for self-expression and community building, while at the same time effectively concealing … the wide use of free labor” (p. 420). Highlighting different ideals of how communities can operate in relation to moderation practices, Cowls and Ma (2023) examine how Parler's co-founder and CEO rhetorically positioned that platform as a free-speech oriented town square.

Importantly, Gillespie (2010) argues that platforms position themselves through strategic language use to pursue current and future profits. He notes, “The business of being a cultural intermediary is a complex and fragile one, oriented as it is to at least three constituencies: end users, advertisers, and professional content producers” (Gillespie, 2010: 7). We posit that there is an additional fourth constituency that these companies address: volunteer moderators, as a free-labor source that puts into action these companies’ visions of a technologically mediated local community. We argue the role of volunteer moderator in local community groups is a learned role, and moderators’ beliefs, expectations, and normative behaviors in these spaces may be shaped, in part, by the company's discourse.

We extend Gillespie's logic to trace how the social media industry's discourse positions itself in relation to local communities, the ideals of local community groups, their place in civic life, and the local volunteer moderators within them. We engage in a qualitative thematic analysis of 849 documents created by Facebook, Reddit, and Nextdoor (400 from Facebook, 184 from Reddit, and 265 from Nextdoor) between 2012 and 2023 that broadly relate to communities, volunteer moderation, and the role online groups play in local civic life. We have chosen these three platforms as they are each publicly traded, began in the U.S., are publicly accessible through free sign-up, and have advertised themselves as spaces for community connection as part of U.S. advertising campaigns. We find each company projects a unique vision of what platform-mediated local communities should look like and the roles volunteer moderators play within them. We argue this discourse paints groups as “local platformized utopias,” and simultaneously positions local volunteer moderators as civic leaders who serve as the labor force that perpetually works to realize the utopian features. This promotion of civic virtue helps these for-profit companies recruit, motivate, and sustain an unpaid workforce that performs a critical value-creation function. Findings from this work bridge several disparate literature areas including community studies, work on platform moderation, civic engagement, and sociotechnical imaginaries.

Literature review

Moderation and online communities

Since the late 1970s, individual users have helped to moderate online communities (Jiang et al., 2023; Seering et al., 2022). Moderators represent a diverse group of both paid workers and volunteers who help determine when content or behavior violates established policies (Gillespie, 2018; Roberts, 2019). There are more than a hundred thousand people employed as commercial moderators (Chotiner, 2019) whose (often precarious, see: Newton, 2019; Roberts, 2019) work consists of reviewing social media posts to ensure compliance with platforms’ community guidelines or terms of service (Roberts, 2019; Steiger et al., 2021; Wong and Solon, 2018). While commercial and volunteer moderators may review content for its compliance with established rule sets, there are a number of key differences between the two groups, beyond matters of remuneration.

Volunteer moderation today can encompass a wide variety of tasks. This may include founding groups within a platform (such as making new ‘Groups’ on Facebook or Nextdoor, or subreddits on Reddit); establishing content rulesets for communities that extend beyond platform rulesets; enforcing the removal of violating content and sanctioning of users that run afoul of either platform or local group rules (Seering et al., 2019; Seering et al., 2022); recruiting new moderators to join teams; working with particular software suites to aid in community management (Geiger and Ribes, 2010); and performing different kinds of emotional labor (Wohn, 2019), in addition to numerous other tasks and functions. In his analysis of the role volunteer moderators play on platforms, Matias (2019) argues scholarship on volunteer moderation has typically approached it from three perspectives: that of digital labor, that of civic participation in virtual communities, or that of power dynamics in the digital space. To that end, we briefly review scholarship from these three areas before turning our attention to local community groups and their intersections with techno-utopian ideals.

Volunteer moderators generate value for platforms, in part, by laboring to foster spaces that interest and attract others. For example, Terranova (2000) highlights how volunteer moderation fits into the paradigm of what has been previously described as the “social factory.” The labor of these volunteers in this way is “simultaneously voluntarily given and unwaged, enjoyed and exploited, free labor on the Net … is animated by cultural and technical labor through and through, a continuous production of value” (p. 33–34). Examples of this from the early web include how volunteer moderators of America Online community chat boards managed those spaces, essentially “co-constructing” them through their actions (Postigo, 2009). Ultimately recognizing the value they were producing for the company and the degree of effort that they were expending, these volunteer moderators filed a suit against AOL for their unpaid labor. This suit was eventually settled for $15 million (Postigo, 2009). Volunteer moderation continues to be a driver in the digital economy today. For example, in 2023, Reddit (which has a market capitalization of roughly $20 billion) was estimated to have roughly 2000 full-time employees, but 60,000 volunteer moderators that were active on a daily basis (Statista, 2024). Li et al. (2022) estimate that volunteer moderator labor was worth roughly 3% of Reddit's revenue in 2019.

The labor volunteer moderators do is not uniform. Trade-offs between a multiplicity of moderation styles (e.g., human vs. automated), philosophies (e.g., nurturing vs. punishing), and values (moderator identities vs. community identities vs. competing stakeholders) characterize moderation work (Jiang et al., 2023). The style volunteer moderators adopt in their work varies across platforms according to the platform's affordances (e.g., synchronous vs. asynchronous communication), user bases (e.g., young-skewing users versus older), and norms (e.g., maintaining civility). However, much of this labor is often “behind the scenes” and invisible to users (Jarrett, 2016; Li et al., 2022). Nakamura (2015) highlights that much of this kind of work, “is uncompensated by wages, paid instead by affective currencies such as ‘likes’, followers, and occasionally, acknowledgement or praise from the industry” (p. 103). Indeed, Postigo (2009) highlights how much of this labor has become “pastoralized” or feminized and devalued. As such, volunteer moderators are often motivated to do this labor for something beyond money.

While scholars coming from different traditions of political-economic analysis have focused on the labor function and value creation potential of this work, others have focused on how volunteer moderators function as the social glue that maintains online communities. For example, Butler et al. (2007) argue that while many members of online groups contribute to community building in digital groups, those in leadership positions, such as volunteer moderators, often take on an outsized role. Indeed, volunteer moderators often see themselves as responsible for dynamic and intersecting social processes ranging from nurturing and supporting their communities, to fighting for them, to governing and regulating them (Matias, 2019; Seering et al., 2022). Volunteer moderation, in this regard, is often framed by the moderators as a kind of civic obligation or duty. Matias (2019) argues that volunteer moderators’ labor should be considered a kind of “civic participation.”

There are other kinds of non-economic capital volunteer moderators may realize through their positions. Scholars have noted that volunteer moderators hold important gate-keeping roles and have the power to shape flows of information in these digital spaces. Schneider (2024), for example, posits that platforms give out this power to volunteer moderators as a kind of payment and argues that it constitutes a kind of feudalism. Riedl (2020) provides a cautionary note, stating, “As platforms grow, however, governance in democratic peer production settings may gravitate towards oligarchy. Platform moderation policies and censorship mechanisms in both commercial and volunteer platforms can clash with user interests.” Indeed, as Matias (2019) observes, volunteer moderator decisions are not always in line with the wishes and desires of their userbase, and democratic processes can be important for maintaining transparency and more effectively navigating the moderator-user relationship.

Local community groups

While a body of work has explored volunteer moderation of online communities in general, there has been far less consideration of volunteer moderation for local online communities specifically. We define local community groups online as digital spaces on social media sites whose focus is tied to physical geographic places such as cities, counties, neighborhoods, or other housing communities. Indeed, part of what makes local groups unique is that they often bridge between physical space and virtual space in ways that other forms of online community may not.

Research on the community effects stemming from the interpenetration of the digital and the local is not new (Hampton and Wellman, 2003). However, the increasing “platformization” (Helmond, 2015; Helmond and van der Vlist, 2024; Poell et al., 2019) of local communities is. In our context, platformization refers to the integration of a material technical infrastructure that embeds unique values and logics and provides an ecosystem for socialization that the community itself does not own. The rise of digital platforms alongside the economic decline of local news media has together led to increased reliance on platform-based community groups as sources of information and connection in local communities (Adria, 2020; Domingo et al., 2015; Mathews, 2022; Thorson et al., 2020).

Digital platform companies create opportunities for localized interaction through many different mechanisms. Nextdoor is almost entirely focused on neighborhood-level communities. In contrast, Reddit and Facebook enable groups of all kinds; localized groups are just one subset of their broad portfolio. However, they are also an important type. For example, there are more than a thousand city-focused subreddits globally, hosting a range of topics and civic discussions, including leisure activities, social welfare issues, taxes, crime, weather, homelessness, and missing pets, among dozens of others (Lê et al., 2020). Likewise, Facebook offers a range of local groups, including those for buying and selling used goods, sharing local news and notices, preserving local history and memories, and providing support for parents, among others (Ballatore et al., 2024). Indeed, many groups on these platforms serve as a critical space for citizens to access hyperlocal media and news (De Meulenaere et al., 2020; Turner, 2021).

Local community and techno-utopian ideals

Utopian thinking about digital futures has long been a feature of sociotechnical imaginaries (Bina et al., 2020). The term “utopia” refers to an ideal: “how we would live and what kind of a world we would live in if we could do just that” (Levitas, 2011: 1). Utopias also encapsulate hope, “a kind of reaction to an undesirable present and an aspiration to overcome all difficulties by the imagination of possible alternatives” (Vieira, 2010: 7). In this way, they represent a “speculative discourse” (Vieira, 2010: 7), as they entail dual connotations of perfection and impossibility.

Various figures and movements have long imagined the possibilities the Internet could offer for building new kinds of communities across time and space (Rheingold, 1993; Turner, 2005). With inspiration from the New Right, New Left, and counterculture movements of the 1960s and 1970s, these enthusiasts characterized early forums through the techno-utopian lens (e.g., Rheingold, 1992) of the California Ideology (Barbrook and Cameron, 1996; Turner, 2005). With a strong techno-determinist undercurrent, those working in or around Silicon Valley saw online communities as blank canvases upon which to paint a happier, more convivial, prejudice-free co-existence, and technological progress as “lead[ing] back to the America of the Founding Fathers” (Barbrook and Cameron, 1996: 6). West Coast ideologues imagined libertarian spaces of free expression and equality, while ignoring hardened material realities of social and economic inequalities (Barbrook and Cameron, 1996). Although these early techno-utopian dreams coincide with fears around the weakening of traditional community and concerns that social media afford more sparse, supposedly shallow, connections (Hampton and Wellman, 2020; Putnam, 2000), they remain deeply embedded in the ethos of the U.S. tech industry (Marwick, 2015). During the Web 2.0 boom, the tech industry embraced a vision of social media as strengthening participation, democracy, and community (Marwick, 2013). Bay Area startups often saw online and offline sociality as symbiotically linked, and the computing infrastructure sometimes materialized this mutuality through local area networks (LAN).

While utopian local communities in America have often been defined by pastoralism (Hampton and Wellman, 2020), the “technopole” of Silicon Valley (Castells and Hall, 1994) has offered a new vision of utopian localism, shaped in the region's image. Contemporary social media platforms represent the legacy of earlier cyber-utopian visions of networked communities (Marwick, 2013), and rely on “happy nostalgia for a safer, more naïve time” (Rider and Murakami Wood, 2019: 643). But who creates these images? Mager and Katzenbach (2021) argue, “imaginaries are increasingly dominated by technology companies who […] take over the imaginative power of shaping future society” (p. 223). As such, as social media platforms gain an increasingly important place in local communities, it is important to examine their visions of local communities and how these visions may position the activities within them. To that end, we posit that companies produce idealized, cyber-utopian visions of local network communities that we refer to as “local platformized utopias.” Further, we argue volunteer moderators play a key role in local platformized utopias, as the actors whose labor ultimately creates, maintains, and manages these spaces, as civic actors, and through their work exercising power in the management of their communities.

In what follows, we examine the local platformized utopia imaginaries of community groups that Facebook, Reddit, and Nextdoor project in their discourse, and how their discourse positions moderators to realize their ideals. Although not fully determinative of the nature or role of these local groups in broader local civic infrastructure, platform discourses offer insight into the ideals driving the companies’ development of these spaces and downstream interpretations of them, and how they position and entice “local leaders” to take charge of them.

Methods

To answer our research question, we conducted a qualitative thematic analysis of public statements created by Facebook, Reddit, and Nextdoor about volunteer moderators and local communities. In general, qualitative content analysis is a method, “for the subjective interpretation of the content of text data through the systematic classification process of coding and identifying themes or patterns” (Hsieh and Shannon, 2005: 1278). By exploring the overarching themes that emerge from this language use, we can get a better sense of the local platformized utopias projected by these companies.

Data collection

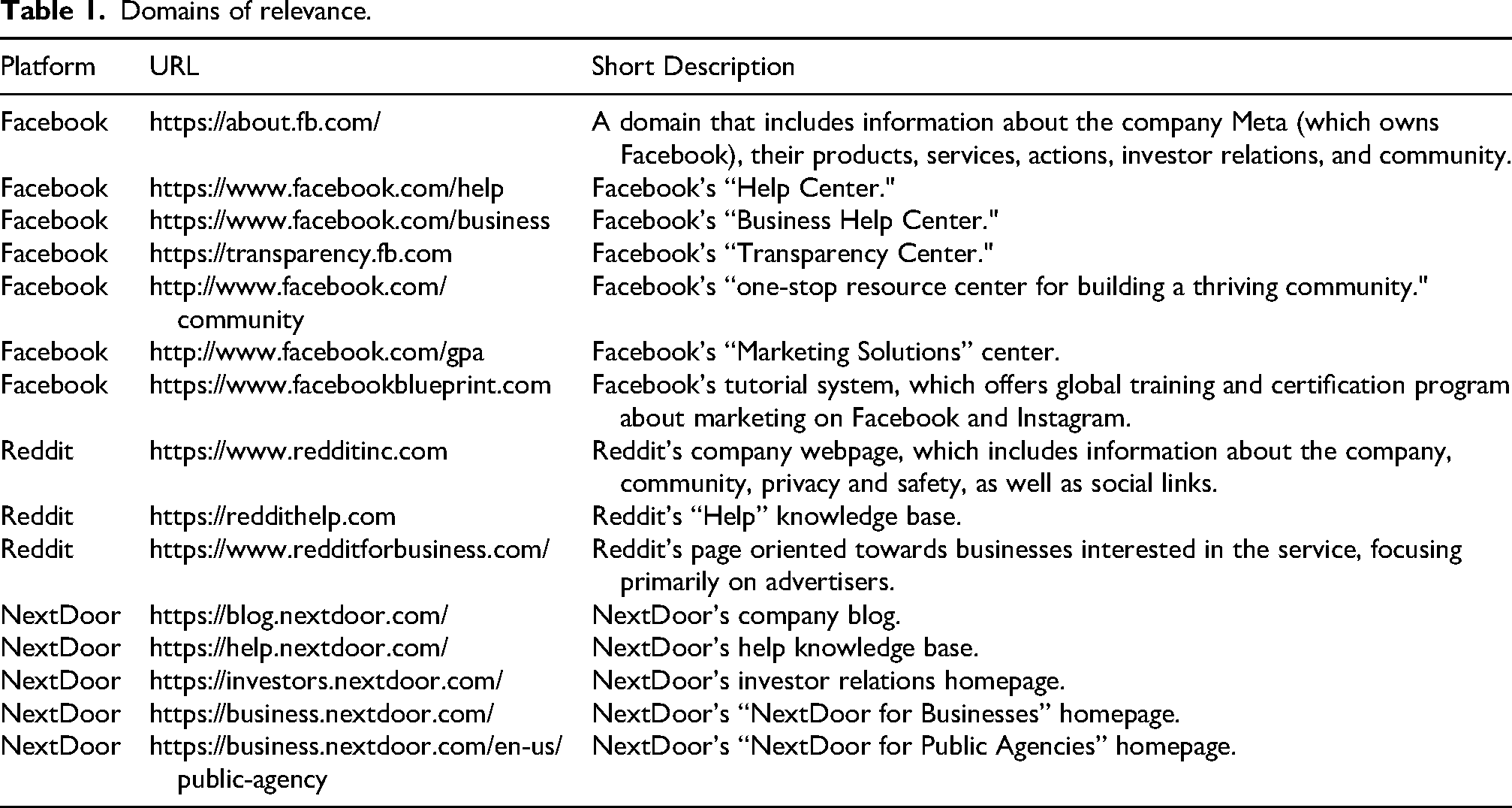

We chose to focus on materials that these platforms make publicly available on their websites. We began by creating a list of potentially relevant high-level URLs for each website by iteratively exploring the top-level domains of each social media platform. In total, we included 7 domains from Facebook, 5 from Nextdoor, and 3 from Reddit. These are illustrated in Table 1.

Domains of relevance.

Each of these websites independently had hundreds of pages of material. In order to determine which materials from these URLs would be most germane to our research question, we conducted targeted searches using Google. In these searches, we searched for a given keyword as it appeared within the site URLs listed in Figure 1 (producing searches of the form “keyword site:https://relevantURL”). We chose to search each domain using the keywords “moderator,” “admin,” and “neighborhood,” in some 45 searches. We used the website <https://thruuu.com/> to transcribe the organic search results on Google and saved the generated lists of URLs in Microsoft Excel. After collecting a full list of searches, for each site that returned a result, we saved a PDF copy of that website.

Domains searched.
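The search procedure described above can be sketched in a few lines of Python. This is only an illustration of how 15 domains and 3 keywords combine into 45 queries of the form “keyword site:URL”; the domain names below are hypothetical placeholders (the actual domains are those listed in the paper's table), and the function name `build_queries` is our own.

```python
from itertools import product

# Keywords named in the text.
KEYWORDS = ["moderator", "admin", "neighborhood"]

# HYPOTHETICAL placeholder domains: only the counts per platform
# (7, 5, and 3) come from the text; the URLs themselves are invented.
DOMAINS = {
    "Facebook": [f"https://facebook-domain-{i}.example" for i in range(1, 8)],
    "Nextdoor": [f"https://nextdoor-domain-{i}.example" for i in range(1, 6)],
    "Reddit": [f"https://reddit-domain-{i}.example" for i in range(1, 4)],
}


def build_queries(keywords, domains_by_platform):
    """Return (platform, query) pairs, one per keyword-domain combination."""
    queries = []
    for platform, domains in domains_by_platform.items():
        for domain, keyword in product(domains, keywords):
            queries.append((platform, f"{keyword} site:{domain}"))
    return queries


queries = build_queries(KEYWORDS, DOMAINS)
print(len(queries))  # 15 domains x 3 keywords = 45
```

Each generated string could then be submitted to a search engine and the resulting URLs recorded, as the authors describe doing with thruuu and Excel.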

We removed duplicate materials, non-English language materials, and materials that were unrelated, excluding a total of 749 documents and leaving 849. All documents in the corpus were collected between January and February 2023, but the post dates of the original web contents ranged from July 2012 to February 2023. The websites we identified ranged in terms of their genre and likely intended audience. For example, help center documents were sometimes written for moderators, sometimes for users, and sometimes for businesses that use the platforms. Blog posts authored by the companies may be directed to users, but also may be oriented at potential advertisers. Documents like transparency reports may have multiple intended audiences, such as everyday users, policymakers, or investors. As such, we did not remove any documents from our corpus on the basis of audience, as documents written for one audience may certainly be discovered by others through search, nor did we make any removals based on genre.

Data reduction

We developed a coding scheme to identify the most relevant documents in the broader corpus. We first constructed a set of themes based on our review of the relevant literature that described key topics related to local community information and moderator support, and wrote a codebook (Schreier, 2012). Table 2 provides a list of codes, their definition in the codebook, and an example passage that would cause the document to be flagged as containing the code.

Table 2. Coding schema.

We have provided original URLs, though please note that some of the content has been removed or has undergone “link rot.” Copies of most URLs are available through archive.org. For those not, please contact the authors.

We used this coding scheme as a data reduction technique. Coding occurred at the document level, and each document could receive multiple codes (for example, a document could contain codes 1, 3, and 6). Three researchers on our team began by coding a sample of 149 documents to establish intercoder reliability for the six thematic categories. Our three coders reached a satisfactory Krippendorff's alpha coefficient of .65.
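For readers unfamiliar with the statistic, the following is a minimal sketch of Krippendorff's alpha for nominal data, computed from a coincidence matrix. It is not the authors' procedure: the text does not say which variant of alpha was used or how the six categories were pooled, and the ratings below are toy data invented for illustration.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal labels.

    `units` is a list of lists; each inner list holds the labels that the
    coders assigned to one unit (use None for a missing rating).
    """
    coincidences = Counter()
    for ratings in units:
        values = [v for v in ratings if v is not None]
        m = len(values)
        if m < 2:
            continue  # units with fewer than two ratings are not pairable
        # Every ordered pair of ratings within a unit, weighted by 1/(m-1).
        for a, b in permutations(values, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    totals = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        return 1.0  # only one label was ever used
    return 1 - observed / expected


# Toy data (hypothetical): two coders marking a code as present (1) or
# absent (0) on ten documents.
coder_a = [0, 1, 0, 0, 0, 0, 0, 0, 1, 0]
coder_b = [1, 1, 1, 0, 0, 1, 0, 0, 0, 0]
alpha = krippendorff_alpha_nominal([list(pair) for pair in zip(coder_a, coder_b)])
print(round(alpha, 3))  # 0.095
```

The same function accepts three or more ratings per unit, so it could be applied to a three-coder design by passing three labels for each document.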

To answer our research question, we narrowed in on a specific subset of the corpus: documents coded as containing 1) Discussion of Local Communities, 3) Discussion of Volunteer Moderation as Practice/Work,

Results

Promises: Visions of local platformized utopias

All three platforms routinely projected an idealized version of local community space within the platform. However, each platform varied in the specifics of its vision. In the following subsections, we illustrate emergent themes. Citations from our corpus have been simplified to “PlatformName#NNNN” for ease of reading with full bibliographic information available in Appendix A.

Local platformized utopias on Facebook

Facebook's ideal local community group is a “place[] to connect” (Facebook#1475), particularly “around shared interests and life experiences” (Facebook#1466). It centers on inclusivity and belonging, with references to “a respectful and inclusive community” (Facebook#580) and “diverse and inclusive viewpoints” (Facebook#878). Facebook positions inclusivity and belonging alongside a goal of “creat[ing] understanding and foster[ing] an ability to see common or shared humanity” (Facebook#547). In working towards this goal, Facebook's ideal community works to “bring[] the world closer together” (Facebook#1470).

In Facebook's ideal community, members’ differences are welcome as they “allow for fresh perspectives and unique opportunities to learn from each other” (Facebook#501). Maintaining this diversity requires that members work hard to find “common ground” and “bridge divides” (Facebook#551). It also means members are experts at maintaining “constructive” and “healthy” conversation and navigating “controversial conversations” (Facebook#551). While disagreements are to be expected and “it is inevitable that a conflict will come up” (Facebook#564), members of Facebook's ideal community do their best to avoid conflicts when possible. Community members share the goal of creating a “safe space” (Facebook#761, Facebook#820, Facebook#878, Facebook#1418) for “support and connection, not hate or harm” (Facebook#1445).

Members of Facebook's ideal community feel a strong sense of connection to each other akin to “family” (Facebook#864) and feel they “belong to a strong community they can relate to and thrive in” (Facebook#759), a place where they “come together to ask questions, support each other, swap anecdotes and tips” (Facebook#820), as guided by values of authenticity, safety, privacy, and dignity (Facebook#564). Sometimes community members’ bonds lead to collaboration on local projects and initiatives, including advocacy and fundraising for causes (Facebook#820). Facebook's ideal community is valuable for credible information about topics such as taking care of pets, announcements from local schools, and real-time weather updates (Facebook#820, Facebook#864, Facebook#875).

Local platformized utopias on Reddit

Reddit's ideal community is a grassroots space where members “find not just news and a few laughs, but also support, new perspectives, and a real sense of belonging” (Reddit#28). Connections amongst members sometimes lead to meaningful local actions like fundraising for a children's hospital or creating tools to alert people when COVID-19 vaccines become available in a local area (Reddit#53).

It is an orderly “democracy” in which “everyone follows a set of rules, has the ability to vote and self-organize” (Reddit#28). It is a “fair and tolerant place for ideas, people, links, and discussion” (Reddit#92), one that is “free of harassment, bullying, and threats of violence” (Reddit#75). Like Facebook, Reddit's ideal community emphasizes inclusivity, striving to “maintain a healthy and welcoming environment” (Reddit#109). Members are “incentivize[d]” (Reddit#28) to abide by the rules and contribute constructively with quantifiable metrics that represent their reputation score (karma) (Reddit#28, Reddit#75). Moreover, anyone exhibiting “transgressive behavior” or “low-quality” contributions (Reddit#28) will be speedily rejected by other members or even expelled (Reddit#94).

Local platformized utopias on Nextdoor

Nextdoor's ideal community is defined by “cultivat[ing] a kinder world where everyone has a neighborhood they can rely on” (Nextdoor#226) and the understanding that “a little kindness goes a long way towards making life easier, neighborhoods stronger, and the world a better place” (Nextdoor#546). It goes so far as to illustrate the importance of kindness among neighbors by citing a study that found “41% of people say they would adopt the child of a neighbor who passed away” (Nextdoor#368).

Nextdoor's ideal community is also one in which “trusted information” prevails as premised on “civilized discourse” (Nextdoor#294) and “respectful discussion” (Nextdoor#253). Nextdoor positions itself as a place “where you connect with people from diverse backgrounds and viewpoints and have conversations that bring people together and spark change without conflict” (Nextdoor#253). Members may readily discuss and share “Links to news, websites, or resources that are directly relevant to local politics or issues” and “Why [they] support a particular local cause or candidate” (Nextdoor#253). However, other political talk like “national partisan politics or international geopolitical issues” (Nextdoor#253) is taboo. At the same time, the Nextdoor ideal community is one in which “everyone is empowered to have their voices heard” (Nextdoor#253), and hate speech and discrimination are not tolerated.

How platforms leverage volunteer moderators to realize these visions

Facebook, Reddit, and Nextdoor rely on volunteer moderation to help build and maintain the promise of these idyllic, local platformized utopias. Facebook acknowledges this directly, stating communities are “built, not bred” (Facebook#878). Across the corpus, we see platforms encourage volunteer moderators to: 1) “proactively” moderate by promoting dialogue, modeling good behavior, and being a rules and values teacher; 2) “reactively” moderate by enforcing rules about content within groups; and, 3) surveil and manage the community by keeping moderation records and growing the volunteer moderation team as a group scales.

Proactive moderation: the neighborhood welcome committee approach

Facebook, Reddit, and Nextdoor each encourage moderators to engage in “proactive moderation,” helping realize the local platformized utopias through the cultivation of a group culture consistent with its ideals. For example, Facebook states, “The best rules are those which encourage the behavior you want to see within the community rather than sanctioning behavior that you find unacceptable

These three platforms routinely suggest ways moderators can promote prosocial community values and norms. Facebook encourages moderators to foster dialogue and relationships (Facebook#547), stating local moderators should “bring diverse voices and experiences together and make inclusion a part of your community DNA” (Facebook#818). Nextdoor suggests volunteer moderators focus on, “Slowing down, actively listening, and leaning into compassionate empathy” to “create an environment where everyone can feel seen and heard, further fostering a sense of belonging and inclusion.” (Nextdoor#498). Alongside these more abstract directives, volunteer moderators are encouraged to educate users about community values and norms. For example, Reddit volunteer moderators are told, “To maintain a healthy and welcoming environment, you’ll use education to help bring folks in line with your community's culture and expectations.” (Reddit#109). Facebook encourages moderators to “share your rules and norms with newcomers, teaching them how to interact” (Facebook#878), and in another document, suggests “Reinforcing rules with periodic reminders… even posting a monthly reminder, so members know their responsibilities” (Facebook#551).

In addition to educating users, moderators can contribute to a culture that fosters a utopian experience by being role models. Facebook remarks that the “moderator team should model the behavior you want to see” (Facebook#878). Nextdoor encourages moderators to promote empathy and personally enact the community guidelines (Nextdoor#501). Reddit encourages moderators to post regularly (Reddit#105).

Reactive moderation: the home-owners association approach

All three platforms note volunteer moderation also occurs through discouraging certain behaviors through rule-setting; punitive enforcement such as the removal of rule-breaking content; sanctioning users (e.g., muting, removal from group, etc.); and dealing quickly with disruption, when it occurs. We refer to this as “reactive moderation.”

We saw two different versions of reactive moderation. Volunteer moderators on Nextdoor are encouraged to enforce the platform's Community Guidelines, with specific guidance on when to remove, vote to remove, or keep content (Nextdoor#253). Meanwhile, Facebook and Reddit both offer flexibility to volunteer moderators in how they are allowed to establish rule-sets and enforce them. For example, Facebook suggests: “Having a solid set [of rules] makes it easy to identify when members are not following them and give moderators the support they need to enforce” (Facebook#815). Reddit emphasizes how rule development helps shape local community culture, noting subreddit rules may be “tailored to the unique needs of each individual community, and are often highly specific.” (Reddit#94).

Facebook encourages volunteer moderators to deal with rule violations through several mechanisms. In one document, Facebook states that volunteer moderators can require moderator approval before posts become visible, remove comments on a group post, and mute members entirely (Facebook#890). In Reddit's case, mods are also left to their own devices to determine how to enforce the subreddit-specific rules: “Volunteer community moderators are empowered to remove any post that does not follow their community's rules, without any involvement or direction from Reddit, Inc.” (Reddit#94). This flexibility is positioned as moderator empowerment.

Volunteer moderators across all three platforms are encouraged to keep an eye out for content that breaks site-wide rules, and to be vigilant and act quickly when they see rule-breaking materials. On Facebook, volunteer moderators are encouraged to look out for hate speech, calls for violence, or false information about voting (Facebook#580). Nextdoor relies heavily on the use of expedient language, such as, “In the event you think someone may be using racial, discriminatory, or hateful language … please

Record-keeping and management in moderation: the city hall approach

In addition to proactive and reactive moderation, volunteer moderators are encouraged to keep and review records of their groups’ activities and members, to use particular technical features and platform tools to help moderate, and to grow the moderation team as their community scales. We refer to this as “the city hall approach.”

All three platforms encourage moderators to use both platform affordances and personal tools to keep activity logs and learn patterns of problems. Facebook offers tools that automatically keep a log of volunteer moderation of pending posts, removal of comments, and the muting of members (Facebook#890). Reddit similarly encourages volunteer moderators to, “Consider attaching a mod note to a member when you've attempted to lead [a user who has violated a rule] down a better path” (Reddit#109). Nextdoor also has a logging system that helps review teams and leads “review reported content from the past … and understand what content has been removed and why” (Nextdoor#441).

Companies encourage volunteer moderators to use different tools within their platforms to help realize particular aspects of the utopian visions they lay out. For example, Nextdoor draws a direct line between moderators' use of its moderation history tool and their ability to “make their neighborhoods a better place” (Nextdoor#441). Many of the tools that the platforms advise on were established to help volunteer moderators deal with the problems of scale, or to pre-empt bad faith actors. For example, Facebook introduced a tool that, “automatically decline[d] incoming posts that have been identified as containing false information” (Facebook#1475). However, rather than just tracking offences and removing content in an automated fashion, volunteer moderators are also centrally encouraged to make certain kinds of information more accessible within the community through platform affordances. For example, Reddit suggests, “Making sure your community rules and guidelines are clear and easily found by new visitors will help to reduce confusion and lessen accidental policy infractions. It's also good practice to have a page in your wiki or details in your sidebar detailing how you handle policy infractions” (Reddit#108).

Growth of the community group is at the forefront of many of the documents. In its Community Management Study Guide, Facebook states, “Community managers are in charge of building,

Moderating a growing community can necessitate a larger volunteer moderation team, and volunteer moderators are themselves encouraged to find ways to help manage the workload. Facebook acknowledges the work-like aspect of this, positioning it as an obligation that one assumes when becoming a volunteer moderator, stating, “Growing your group can be challenging, and dedicating a fair amount of time to serving your community requires commitment.” (Facebook#852). Facebook rationalizes that, “Building and growing your moderation team lets you empower your most valued members, enhances your ability to respond to issues and gives you a support system” (Facebook#871).

Facebook, Reddit, and Nextdoor each acknowledge the time and energy it takes volunteer moderators to continuously foster a local platformized utopia. For example, Nextdoor notes, “Ensuring discussions stay healthy and productive takes a tremendous amount of energy” (Nextdoor#253). Facebook also suggests that volunteer moderator teams check in on each other, stating, “Conflict can happen anywhere - even within your own team…If you feel your teammate is overwhelmed or needs a break, give them opportunity to take time off or let them leave with the best intentions.” (Facebook#871). Indeed, keeping volunteer moderators doing what they do appears to be a key aim of this discourse.

Digital communities and physical geographic place

A Facebook blog post about the success of online communities during the pandemic articulates a core claim that platforms make for their digital spaces: “if it can happen in real life, it can happen in a Facebook community” (Facebook#886). This core claim represents a tension: The three platforms analyzed here not only draw on diverse strands of local platformized utopian discourse to motivate and guide volunteer moderators, they all also visibly wrestle with the connections between digital community and physical place-based communities. In some instances, platform discourse works to keep the non-utopian struggles of place-based community—conflict, politics, un-neighborly behavior—out of the local platformized utopia. In other moments, digital platforms encourage moderators to amplify and celebrate connections between local communities and their online representations. For example, Facebook offers an extensive guide for moderators to help them address hate speech in their (online) communities. The document is framed as a set of tools for preventing what is happening outside Facebook from affecting communities on the platform (and never the other way around). “With more than 3 billion people using Facebook's apps every month, what's happening offline is often mirrored on our services [emphasis added]” (Facebook#580). Moderators are encouraged to prevent pollution of their online spaces with struggles happening in “real life” proactively and reactively. Nextdoor uses a combination of algorithmic tools, professional moderation, and volunteer moderation to prevent conflictual real-world national political discussions from entering Nextdoor communities. Discussions of national politics are not allowed in neighborhood feeds, though local politics are. National political conversations can only occur in specially designated group spaces which must be sought out and proactively opted into (Nextdoor#253). 
Like Facebook, Nextdoor also asks moderators to report hate speech “either overtly, or veiled” and “advocacy or support of violence” (Nextdoor#253).

Celebrating the connections between online and offline community

All three platforms emphasize a net positive impact that the local platformized utopias may have on offline communities and position volunteer moderators as essential for realizing this impact. For example, Reddit and Facebook encourage moderators to build bridges to their offline communities by holding community fundraisers (Facebook#794) or promoting video game marathons to raise money for local hospitals (Reddit#21). Volunteer moderators are also encouraged to help create better offline communities by supporting local economic development efforts. During the pandemic, Facebook moderator training materials encouraged groups to be “a place for business owners to stay connected with their local customers and crowdfunding” as well as to identify “trusted organizations who are helping local businesses in your community to share with members” (Facebook#919).

Nextdoor and Facebook both articulate efforts to use digital tools to promote social good in the “real life” version of the community. One way they do so is by idealizing a high-intensity form of neighborliness. Facebook wrote a profile of one moderator, whose group boasts membership by more than half of all the 20,000 homeowners who live in a planned community. According to the Facebook blog post, “[the moderator] and her group help weave the social fabric in Sienna. Sienna Neighbors gives residents the opportunity to mesh both online, and most importantly, offline. This is partially a testament to her style of moderation, but also to just how influential and pivotal an online community can be” (Facebook#864). Sienna Neighbors is only open to local residents and serves as a “private and secure online environment” for discussion and mobilization of neighborliness—enabling the strength of online interaction to spill over to improve the offline community. “Building respect and trust online makes group members better neighbors in real life,” writes the author of the blog post. The power of the group in the community was particularly visible during a 2017 hurricane: “People were not watching the news, they were watching the group.”

Finally, Nextdoor and Facebook both explicitly value local knowledge as a characteristic of good moderators. Nextdoor suggests to moderators that their contextual understanding of their community will help them tell the difference between good and bad content:

As neighborhood moderators, you all have the local knowledge and expertise that makes your voice critical in knowing if a piece of reported content is truly hurtful or harmful to your neighborhood. Your willingness to contribute to your community in this way sets you up as a local leader (Nextdoor#504).

The admin of the San Miguel Unido y Conectado group has started encouraging members to post videos of themselves “sharing” the activity of drinking maté in their respective homes. This way members can keep the tradition alive while their regular maté rituals are on hold. (Facebook#886)

To get her community engaged, [she] uses a combination of moderator tools and good old fashioned Southern hospitality…. Thanking problematic members for their involvement while dismissing them from the group for not following the rules – [she] refers to this as “bless and release” (Facebook#930)

Discussion

Our analysis illustrates how Facebook, Reddit, and Nextdoor each construct a local platformized utopia, though with slight variations in underlying ethos and ideological approaches to governance in each, and in how volunteer moderators function as a key actant in realizing them. Facebook emphasizes diverse connections, bringing the world closer together (one locality at a time), and communities that are constructive. Nextdoor emphasizes kindness, inclusivity and belonging, and meaningful connections between neighbors. Reddit emphasizes a bottom-up view of community akin to a liberal democracy where free expression pairs with tolerance of multiple points of view. Each of the platforms relies on volunteer moderators to help realize these visions. To properly guide volunteer moderators, these companies construct discourse that encourages “proactive” moderation, “reactive” moderation, careful record keeping, growth, and the avoidance of volunteer moderator burnout.

Platforms’ projection of these local platformized utopias is important to the companies’ success. Certainly, the discourse helps attract users. However, this rhetoric is also key for recruiting the local volunteer moderators themselves. Volunteer moderators are a critical source of (free) labor for these companies, worth millions of dollars (Li et al., 2022). This labor helps maintain local community groups as a valuable product for the companies. Access to local content, civic information, and local sociality gives users a strong incentive to stay on the platforms. But to sustain the voluntary workforce needed to build, govern, and grow these spaces, the companies must motivate volunteers not with money, but with something else. They accomplish this through the promise of virtue and status. This discourse positions individuals to value the work of being a volunteer moderator as a means of altruistically “making a difference” in their community, similar to values-driven, other-oriented offline volunteerism (Wilson and Son, 2018). By characterizing volunteer moderators as the key to realizing local platformized utopias, the companies elevate their status and rationalize the significant time and labor those volunteers expend.

Platforms also appeal to egoistic motivation (Wilson and Son, 2018) by suggesting volunteer moderators are leaders in their communities. In doing so, they encourage volunteer moderators to create content themselves and, in turn, guide other users in creating content within the boundaries of the rules. By generating and sustaining positive engagement on the platforms, volunteer moderators create value for the companies beyond mere rule-setting and enforcement. They cultivate the culture necessary for local platformized utopias to be realized. The privileged status the companies grant to volunteer moderators echoes the Californian Ideology's idea of the “virtual class” or “techno-intelligentsia” who uphold technology and media industries (Barbrook and Cameron, 1996). By placing volunteer moderators in this virtual class, the companies’ demanding expectations for moderators’ time redefine their work as a key path to self-fulfillment. By channeling the free labor of volunteer moderators to build a network that functions as a simulacrum of local community, and by encouraging growth and the recruitment of additional free labor in the form of new volunteer moderators, the companies can derive value from the intrinsic network effects of these spaces.

The platforms’ discourses also orient volunteer moderators towards performing another kind of labor: that of reputation management and accountability buffering for the platforms. For example, in response to criticisms about racism on the platform (Holder and Akinnibi, 2022), Nextdoor began much more proactively emphasizing the removal of hate speech and the promotion of inclusivity in its discourse (Nextdoor#226). Facebook and Reddit both highlighted stories featuring volunteer moderators performing “Good Samaritan” acts that position the platforms as productive of more compassionate, cooperative communities. The realization of these responses relies in part on local volunteer moderators, but ultimately serves the reputation of the companies.

Volunteer moderators are more than the frontlines of realizing these initiatives. When new (or old) social problems emerge, volunteer moderators can be turned towards addressing the problem without substantial new investments in labor on the part of companies. And, if users or other external stakeholders perceive a persisting failure to effectively resolve the problem, volunteer moderators act as buffers, putting distance between the companies and the on-the-ground decision making, as the companies have “empowered” their volunteer workforce to deal with the problem. In this sense, volunteer moderators serve as a convenient fire-shield, as part of a broader networked platform governance (Caplan, 2023). Positioning moderators in this way may also reinforce a vision of volunteer moderators as esteemed leaders, shouldering the great responsibility of upholding a community's ideals. This model also reinforces the idea that the ideological view of what constitutes an ideal community flows from the top down, despite local empowerment narratives. Volunteer local community moderators are a labor force, first and foremost. And, indeed, the narratives of civic virtue may obscure unequal burdens of moderation labor, particularly as volunteer moderators are asked to both create and realize a local platformized utopia while keeping the less commercializable aspects of social life, such as conflict and social strife, out.

While Facebook, Nextdoor, and Reddit occasionally mention the importance of offline local expertise, many volunteer moderators fall into the role through either self-selection or active participation in their online community. The corpus reflects extensive discussion of what moderators should and should not do, suggesting a perceived understanding of volunteer moderators as leaders-in-training rather than already-made leaders. Optimistically, the difference between the ascendancy of leaders offline and within the platforms’ digital spaces could represent an opportunity for true grassroots governance of communities, as volunteer moderators face fewer institutional barriers to attaining their position. Alternately, volunteer moderators might be seen as those receptive to or unquestioning of platforms’ articulated definitions of good leadership, which serve the platforms’ needs and interests first and foremost. Future work should consider how volunteer moderators, as a quasi-class of local leaders, mediate their local communities’ needs and interests as they negotiate those of the platforms.

Conclusion

In this work, we have illustrated the promises social media platforms make about local community groups and how these promises serve as idealized, local platformized utopias. At the same time, these social media companies strategically position moderators to help realize those promises, while simultaneously attracting and guiding a voluntary labor force. The companies do so, in part, by motivating people not with money, but virtue, while reaping the economic benefits of being an intermediary to local communities. By portraying volunteer moderators as essential for realizing local platformized utopias, the companies construct a vision of volunteer moderation as high-status, a responsibility that carries cultural cachet.

While we have traced the discourse of these three companies, there remains much that is unknown. Our data collection method naturally has limitations. While we have chosen three of the most prominent and publicly accessible social media platforms, there are certainly other services which connect neighbors to neighbors. Further, we focus on English language materials, specifically in the context of the United States. We note this because online content laws vary, sometimes significantly, and the kinds of statements companies make about moderation are likely to vary depending on the audience they are addressing. Future work should consider studying volunteer moderators to better understand how they do and do not respond to these discourses, and the degree to which their lived experiences match this idealized rhetoric. There also remains a significant gap in understanding national differences in this discourse. Further, while we have suggested how local volunteer moderators may echo the Californian Ideology's idea of the “virtual class” or “techno-intelligentsia” who uphold technology and media industries (Barbrook and Cameron, 1996), this concept of a class should be further developed, particularly given the description of moderators as “landed gentry” by Steve Huffman, the CEO of Reddit, during the platform blackouts in 2023 (see: Ingram, 2023 for more).

As social media technologies attempt to naturalize their position, the social character of neighborhoods may be changing in tandem. What does it mean to be involved with your local community today? It is becoming more common for people to discover and learn about community goings-on through a platform-mediated local group. The vision for these groups comes from top-down rhetoric that constructs these spaces as a local platformized utopia. Yet, the platforms expect local volunteer moderators to realize that vision. Volunteer moderators are serving as a new kind of local community leader, but one whose labor platforms seek to carefully construct through discourse to serve a corporate value-creation end.

Acknowledgements

We would like to thank Sarah Gilbert for sharing her insights on an early version of this work. We would also like to thank Marialina Antolini, Benji Davis, and Gahana Kadur for their efforts and insights on this project. Finally, we would like to thank the anonymous reviewers on this manuscript for their helpful feedback and suggestions.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Foundation (grant numbers 2207834, 2207835, 2207836).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.