Abstract

This study examines how the visibility of a content moderator and the ambiguity of moderated content influence perceptions of the moderation system in a social media environment. In a two-day, pre-registered experiment conducted in a realistic social media simulation, participants encountered moderated comments that were either unequivocally harsh or ambiguously worded, and the source of moderation was either unidentified or attributed to other users or to an automated system (AI). The results show that when comments were moderated by an AI rather than by other users, participants perceived less accountability in the moderation system and had less trust in the moderation decision, especially for ambiguously worded harassment as opposed to clear harassment cases. However, no differences emerged in perceived moderation fairness, perceived objectivity, or participants’ confidence in their understanding of the moderation process. Overall, our study demonstrates that users tend to question the moderation decision and system more when an AI moderator is visible, which highlights the complexity of effectively managing the visibility of automatic content moderation in the social media environment.

Keywords

Introduction

Moderation on social media platforms has become a prominent topic, especially with the rising wave of cyberbullying and online harassment. Online harassment ranges from offensive name-calling or purposeful embarrassment to stalking, physical threats, or harassment over a sustained period (Vogels, 2021). According to surveys published by Pew Research, as many as 41% of U.S. adults have personally experienced online harassment (Vogels, 2021), and 66% have witnessed potentially harassing behavior directed toward others online (Duggan, 2017). To understand how content moderation works to prevent and remove inappropriate content, activists have pushed for more transparency in moderation decisions (Santa Clara Principles, 2018), especially since each social media platform (e.g. Facebook, Twitter) has its own set of community guidelines and regulations. Yet, most moderation systems and decisions remain opaque (Banchik, 2020; Crawford and Gillespie, 2016; Suzor et al., 2019), which can leave users confused and unsure about how and why content moderation occurs (Crawford and Gillespie, 2016; Jhaver et al., 2019a). While there is abundant critical scholarship on content moderation (e.g. Barocas et al., 2013; Gillespie, 2014, 2020; Kroll et al., 2016), few empirical studies have examined how the visibility of information about moderation decisions affects users. One stream of research has begun to focus on the reactions of moderated users (Jhaver et al., 2019a, 2019b), while other work has begun to explore the effect of information visibility on online community members (e.g. Matias et al., 2020). For instance, when a welcome note to newcomers explained the community's norms and mentioned that harassment was uncommon within the community, newcomers’ participation in online feminism discussions increased (Matias et al., 2020).
Yet, there is limited understanding of how lay users perceive moderation across different types of content and different levels of moderation source visibility.

Previous research has shown that users develop folk theories about why or how content has been taken down, and users often think that other humans are primarily responsible for such actions (Myers West, 2018). Yet, social media companies often use a mix of moderators to regulate content on their platforms, from automated systems to commercial moderators to users themselves, and we do not know whether users react differently to these different sources of moderation. In this study, therefore, our goal is to explore how displaying the source of moderation (AI vs. other users vs. unspecified source) affects users’ perceptions of, agreement with, trust in, and perceived fairness of the moderation decision. We also investigate how the source of moderation affects users’ belief certainty about how moderation works on the social media site, as well as the perceived objectivity and accountability of the moderation system.

This study is important for several reasons. First, it is critical to focus on online community members’ perspectives because, as witnesses of problematic content, they are indirectly affected by harassment and, consequently, by the moderation systems in place. Information visibility, and specifically the visibility of the source of moderation, can have important consequences for how users perceive social media platforms, what they learn from the moderation system in place, and whether they decide to join and participate in an online community (Jhaver et al., 2019b; Matias et al., 2020). Second, existing approaches to algorithm analysis have paid limited attention to the relationships between platform policy and user perspectives (Shin and Park, 2019), so understanding users’ perceptions is needed to fully comprehend the ecosystem of moderation decisions.

Literature review

Sources of moderation

Social media platforms rely on both automated and human moderation systems to govern the content allowed on their sites. Platforms are working toward creating automated tools to identify hate speech, adult content, and extremism more quickly and at a larger scale than human reviewers, ideally removing this type of content before any human sees it. However, many researchers caution against full reliance on automatic moderation. Researchers have pointed out that automated moderation tools are poor at accounting for content subtlety, sarcasm, or cultural meaning (Duarte et al., 2018). They also cannot easily adjust for context, for example, terrorist speech conveyed within a journalistic post (Llansó, 2019). Additionally, automated moderation systems can exacerbate rather than ameliorate the content policy problems that platforms face. Specifically, these systems, even when well designed, increase opacity, make moderation practices more difficult to understand, and can reduce fairness within large-scale platforms (Gorwa et al., 2020). In the same vein, Gollatz and colleagues (2018) point to problems of ambiguity in the automation of content moderation, noting that opaque implementation, vague definitions, and lack of accountability stand in the way of accurate automated content moderation.

The other type of moderation employed by platforms, “human” moderation, can take the form of commercial content moderation or community-based moderation. There has been extensive discussion in the literature of the issues that arise from platforms’ use of commercial content moderators, people whose job is to review potentially harmful content before it is posted on social media platforms. Specifically, commercial moderation has been criticized for its harmful labor practices, including extremely stressful and mentally taxing working conditions and the mental health challenges moderators face, as well as for its overall lack of transparency and accountability (Gillespie, 2018; Roberts, 2016).

On the other hand, sites like Wikipedia, Reddit, and Twitch rely on their own user communities to do most of the moderation. Seering and colleagues (2019) found that this type of moderation system leads to significantly different dynamics compared to when moderation is driven top-down by company policy. User-driven moderation is an intensely social process that is core to community development (see Gillespie, 2020; Llansó, 2020). Even sites that use top-down moderation approaches such as commercial moderators often do so in conjunction with user flagging, which is framed in terms of the self-regulation of social media platforms. Many platforms have specific community guidelines and encourage users to flag content that violates these guidelines in order to distribute the labor of moderation. This also shifts responsibility (or at least perceived responsibility) onto the users rather than the site.

Calls for increased transparency have grown in the literature, as transparency is believed to increase understanding, fairness, and trust (see Santa Clara Principles, 2018). However, many researchers have rightly pointed out that blanket transparency may not truly be desirable, especially because transparency can be manipulated by emphasizing some information and downplaying other information (Berkelaar and Harrison, 2017; ter Hoeven et al., 2021). For example, too much transparency about how decisions are made could increase individuals’ attempts to “game” the system. Some scholars have pointed to ways that moderating algorithms can be “gamed” and moderation can be used to harass and silence certain (often minority) groups online (Diakopoulos, 2015; Munger, 2017; Noble, 2018).

Given the different types of moderation sources and the impact that revealing each of them can have on users, we explore how each moderation source (AI, other users, or unknown) affects users’ perceptions of 1) the moderation decision via trust and fairness, their agreement with the moderation decision, and their likelihood to look at the site content policy; 2) the moderation process via belief certainty; and 3) the moderation system via perceived system accountability and objectivity. In the next section, we explain the reasoning behind each of our research questions, moving from users’ perceptions of moderation decisions, to their general understanding of the moderation process, to the platform's moderation system as a whole.

Fairness and Trust in the Moderation Decision

As the use of algorithmic systems has increased across platforms and technologies, many researchers have started to examine users’ perceptions of algorithms’ fairness, accountability, and transparency (FAT) (Shin and Park, 2019). Broadly speaking, perceived fairness of algorithms reflects a tenet that algorithmic decisions should not be discriminatory or prejudiced and should not lead to unjust consequences (Yang and Stoyanovich, 2017). For example, Facebook states that it strives to make fair moderation decisions, meaning that the same content posted by two users would be equally likely to be pulled down or left up regardless of who posted it (Gorwa, 2018). However, fairness can get quite complicated, because the perception of a decision's fairness is largely contextual and subjective. Gorwa (2018) points out that fairness involves balancing individual rights to expression against potential widespread societal harm, and this balance may vary from user to user or from platform to platform.

Another key facet of user perception of moderation is trust. Shin and Park (2019) found that when users had higher levels of trust in algorithmic systems, they were more likely to see algorithms as fair and accurate. Additionally, trust moderated the relationship between FAT and user satisfaction in their study. Other research found that transparency of an automatic moderation system is a prerequisite for trust in that system (Brunk et al., 2019). Yet, an important question is whether trust and perceived fairness of the moderation decision vary by type of moderation source (AI vs. user-based) and its visibility.

Agreement with the Moderation Decision and Likelihood to Look at the Site Content Policy

In addition to perceptions of the moderation decision, it is important to understand users’ actions in response to it. While social media companies give users tools to help moderate inappropriate content, not knowing the source of moderation can confuse users and lead to inaccurate guesses about moderation decisions (Myers West, 2018; Santa Clara Principles, 2018; Suzor et al., 2019). Similarly, the visibility of the source of moderation might influence users’ decision to check the site content policy when they see a moderated comment. Looking at the site content policy can serve as an indicator of whether users question, or want more information about, the moderation system and its rules.

Belief Certainty in the Moderation Process and Perceived Accountability and Objectivity of the Moderation System

For the perception of the moderation process, we examine belief certainty, which refers to users’ confidence in their understanding of how the moderation process works on the site. Certainty is an important dimension of attitudes and beliefs because it influences the stability and durability of behavior over time (Bargh et al., 1992; Grant et al., 1994; Gross et al., 1995). People's confidence in the moderation process is likely to be linked to visibility or transparency around the identity of the moderators and explanations for why certain content has been taken down (Grimmelmann, 2015). When the moderation process is obscure, users lack a basis for evaluating moderation and interpret moderators’ decisions in a subjective (and often inaccurate) way (Kempton, 1986). For example, when a moderation system was opaque, users whose content was moderated by algorithms often erroneously attributed moderation to other users instead of algorithms (see Eslami et al., 2015; Myers West, 2018). In other words, in the absence of transparency about the moderation process, users resort to their own folk theories (Eslami et al., 2016), which are a combination of …

On the platform level, we examine users’ perceptions of the moderation system's accountability and objectivity. Algorithmic accountability refers to the perceived impacts or consequences of an algorithmic system and the extent to which the system (or its creators) is held accountable for unintended harms that arise from its decisions. Users may be concerned that algorithmic systems are prone to mistakes or can lead to undesired consequences (Lee, 2018). Accountability is also often linked to the visibility of information: being able to make observations from visible information helps create the insights and knowledge required to hold systems accountable. Visibility of information has been argued to create two forms of accountability: a “soft” accountability, where systems and platforms must answer for their actions, and a “hard” accountability, where the insights and knowledge that come from visibility bring about the “power to sanction and demand compensation for harms” (Ananny and Crawford, 2018: 976; see Fox, 2007). Following Rader and colleagues (2018), we define perceived accountability as participants’ beliefs about “how the system might be accountable to them, as individual users” (p. 8), which we measure through their perceived control over the outputs and the system's perceived fairness, and we ask how visibility of the source of moderation might influence perceptions of system accountability.

Finally, we also explore the perceived objectivity of the moderation system. Perceived objectivity has been linked to higher perceived trust and credibility in algorithmic decisions (Sundar and Nass, 2001). People often assume that algorithmic decisions are more credible than human decisions because they see computers as more objective than humans (Sundar and Nass, 2001). However, individuals vary in their experience with algorithms, and when a machine is too “machine-like,” system objectivity can backfire and users can place more trust in human than in machine decisions (Dietvorst et al., 2015; Waddell, 2018). Yet, perceived objectivity in moderation decisions has not yet been explored in relation to transparency about the source of moderation.

Ambiguity in moderation

As discussed previously, there is a great deal of opaqueness surrounding content moderation. Content ambiguity can add another layer of opacity, especially because different social media platforms have different strategies for dealing with problematic content and even different definitions of allowable or appropriate content. Pater and colleagues (2016) conducted a content analysis of the harassment policies of fifteen social media platforms. They found what they call a “striking inconsistency” between platforms in their definitions of harassment. This lack of a common definition of harassment across platforms can create uncertainty about how to respond to different types of offenses. As a result, platforms differ in how they respond to harassment. For example, some platforms merely censor the offensive content, while other policies point to the potential involvement of government and law enforcement, such as police and intelligence agencies (Brown and Pearson, 2018).

Context and intentionality, previous interactions between the harasser and the person being harassed, offline power dynamics, and many other factors can all be important in determining whether a post or comment constitutes harassment (Langos, 2012). Moreover, flagging can sometimes move from a mechanism of reporting and upstanding into something that can be “gamed” and abused (Crawford and Gillespie, 2016). Finally, because harassment comments can be ambiguous, building automated systems to detect harassment is difficult, and such systems may fail to interpret that ambiguity (Nadali et al., 2013).

Therefore, we explore how content ambiguity may moderate the effects of source visibility on users’ perceptions of the moderation decision (trust and fairness), the moderation process (belief certainty), and the moderation system (accountability and objectivity), as well as people's agreement with the moderation decision and their likelihood to look at the site policy. A summary of our predictions is presented in Figure 1.

Figure 1. Summary of predictions.

Methods

This study was part of a larger experiment that resulted in two separate studies, each involving different research questions, hypotheses, and dependent variables (first study: Bhandari et al., 2021). The experiment was pre-registered on the Open Science Framework, with the research questions, measures, and analysis plan available at https://osf.io/bwm8e. It used a custom-made social media platform called “EatSnap.Love,” which focuses on sharing pictures of food and reproduces the basic functionalities of a social network site (DiFranzo et al., 2018; Taylor et al., 2019). This platform was designed as a testbed to study users’ reactions to online harassment in a realistic yet controlled environment. As a cover story, we told participants that they would be beta testing a new social media platform. On this platform, users can create and share their own posts through a newsfeed, and they can comment on, like, and flag posts. Within EatSnap.Love, we used a social media simulation engine called Truman, which mimics other users’ behavior on the site through pre-programmed bots that comment on or like participants’ posts and other posts displayed in the participant's newsfeed, as if these actions came from real users of the site. In this way, the Truman platform allows for a realistic social media experience while preserving experimental control, because every participant assigned to the same experimental treatment sees an identically curated socio-technical environment. The Truman platform is open source and allows for easy replication of this or any other study, as all simulation material (bots, posts, images, etc.) as well as the platform itself are available on GitHub at https://github.com/cornellsml/truman.
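To make the idea of a scripted simulation concrete, the Python sketch below shows one way pre-programmed bot activity could be replayed identically for every participant in a condition: each action carries a time offset relative to the participant's sign-up, and the feed shows only actions whose offset has elapsed. The class and field names (`BotAction`, `offset_min`, `visible_actions`) and the bot names are hypothetical and are not taken from the Truman code base.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BotAction:
    """One scripted action by a simulated user (hypothetical schema)."""

    bot: str         # display name of the simulated user
    kind: str        # e.g. "post", "comment", or "like"
    target: str      # id of the post the action refers to
    offset_min: int  # minutes after the participant's sign-up


def visible_actions(script: List[BotAction], minutes_since_signup: int) -> List[BotAction]:
    """Return the scripted actions that should be visible now, newest first.

    Visibility depends only on time elapsed since this participant signed up,
    so everyone who receives the same script sees the same sequence of
    activity, regardless of the calendar date on which they join.
    """
    due = [a for a in script if a.offset_min <= minutes_since_signup]
    return sorted(due, key=lambda a: a.offset_min, reverse=True)


# Hypothetical script shared by all participants in one condition.
script = [
    BotAction("foodie_anna", "post", "p1", 0),
    BotAction("chef_marco", "comment", "p1", 30),
    BotAction("foodie_anna", "like", "p2", 120),
]

print([a.kind for a in visible_actions(script, 60)])  # ['comment', 'post']
```

Keying actions to elapsed time rather than wall-clock time is what lets participants who join on different days still experience an identical environment.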

Participants

We recruited 582 participants from Amazon MTurk to take part in our study in exchange for $10. A power analysis conducted in G*Power yielded a target sample size of 350 for .80 power to detect a medium effect size of .20 at the standard .05 error probability. To receive full payment, participants were asked to log into EatSnap.Love at least twice a day for two days and to create at least one post each day. When signing up on the platform, participants could see the terms of service as well as the community rules (see supplementary material for details). After removing participants who did not complete at least one day of the two-day study, our final sample size was 397 (54% female). Participants were located in the US, and their ages ranged from 18 to 70.

Experimental design

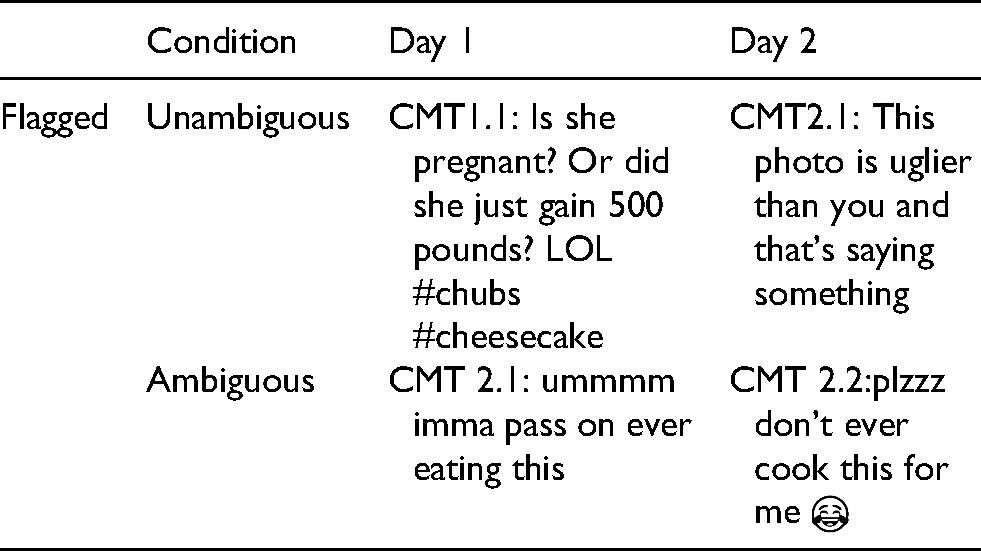

Participants were randomly assigned to one of six conditions, and in all conditions they encountered one moderated comment in their newsfeed per day. We employed a 3 (other users vs. an automated AI system vs. no source identified) × 2 (ambiguous vs. clear harassment comment) between-subjects factorial design, as described in the pre-registration. The visibility of the source of moderation was manipulated by stating that either an automated system or other users on the site had moderated the comment, or by not mentioning the source of moderation at all (see Figure 2 for screenshots of the conditions). Depending on the experimental treatment, the ambiguity of the moderated comment was also manipulated, making the moderated comment either clearly harassing or ambiguously worded (see Table 1).
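One way to implement random assignment to the six cells of this design is block randomization, which keeps cell sizes balanced as participants enroll. The Python sketch below assumes that procedure; the text only says assignment was random, and the function name `assign_conditions` and the seed are illustrative. The example uses the 582 recruited participants; balance at assignment does not guarantee equal cells after exclusions such as those that reduced the final sample to 397.

```python
import itertools
import random
from collections import Counter
from typing import Dict, List, Tuple

# Levels of the two manipulated factors in the 3 x 2 between-subjects design.
SOURCES = ("other users", "AI system", "no source")
COMMENT_TYPES = ("ambiguous", "clear")
CONDITIONS: List[Tuple[str, str]] = list(itertools.product(SOURCES, COMMENT_TYPES))


def assign_conditions(participant_ids: List[str], seed: int = 0) -> Dict[str, Tuple[str, str]]:
    """Assign participants to the six cells using shuffled blocks of six.

    Every block contains each condition exactly once in random order, so cell
    sizes never differ by more than one at any point during enrollment.
    """
    rng = random.Random(seed)
    assignment: Dict[str, Tuple[str, str]] = {}
    block: List[Tuple[str, str]] = []
    for pid in participant_ids:
        if not block:  # start a new block once the previous one is used up
            block = CONDITIONS[:]
            rng.shuffle(block)
        assignment[pid] = block.pop()
    return assignment


ids = [f"p{i:03d}" for i in range(582)]
counts = Counter(assign_conditions(ids).values())
print(sorted(counts.values()))  # [97, 97, 97, 97, 97, 97]
```

Because 582 is divisible by six, every block is completed and each cell receives exactly 97 participants; simple (unblocked) randomization would give unequal cells by chance.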

Figure 2. Screenshot of the moderated social media comment. The screenshot shows the full post for Day 1 in the unambiguous comment and AI moderation source condition. In the other source conditions, the attribution to the automated system was replaced by an attribution to other users or omitted.

Table 1. Comments selected for the experiment across conditions and days.

To determine the appropriate level of ambiguity of the comments, we pilot tested 40 comments, which were evaluated by 145 participants recruited on MTurk. Our research team initially created the comments based on real comments they had seen on social media platforms. The pilot test established the difference between ambiguous and clear harassment content by measuring perceived harassment for each comment (3 questions, e.g. “To what extent do you see this as online harassment?”, rated on a scale anchored at 1 = …).

Procedure

Participants first completed a pre-survey that collected demographic information as well as information on their social media use, web skills, tolerance for ambiguity, and affinity for technology. When registering on the study's social media site, participants could read the community rules (e.g. no bullying, no non-food posts). On each day of the study, all participants were exposed to a harassing comment posted by one EatSnap.Love user (bot) to another, which was moderated by one of the moderation sources, randomly assigned to each participant from the three choices described above. The moderated comment was always displayed near the top of the newsfeed, randomly placed between the first and sixth post. Participants visited EatSnap.Love on average 12.5 times on the first day and 8.5 times on the second day. Participants also engaged with the site by liking 23 posts, commenting on 3.68 posts, and flagging 1.62 comments on average. At the end of the two days, participants completed the post-survey, and their accounts were deactivated.
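The placement rule for the moderated comment, shown at a random position between the first and sixth post, can be sketched as follows. This is a hypothetical helper, not code from EatSnap.Love or Truman; it assumes uniform sampling over the six slots and represents posts as plain strings.

```python
import random
from typing import List, Optional


def place_moderated_comment(feed: List[str], moderated_post: str,
                            rng: Optional[random.Random] = None) -> List[str]:
    """Return a copy of the feed with the moderated post in one of the top six slots.

    Slot 0 is the top of the feed and slot 5 is the sixth post. With fewer than
    five other posts, the available range shrinks to the length of the feed.
    """
    rng = rng or random.Random()
    last_slot = min(5, len(feed))
    position = rng.randint(0, last_slot)  # randint includes both endpoints
    return feed[:position] + [moderated_post] + feed[position:]


feed = [f"post{i}" for i in range(1, 11)]
new_feed = place_moderated_comment(feed, "MODERATED", random.Random(42))
print(new_feed.index("MODERATED") <= 5, len(new_feed))  # True 11
```

Returning a new list rather than mutating the shared feed keeps the underlying scripted content identical across participants.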

Measures

The study used two types of measures: two behavioral measures captured through log data during participants’ interaction with the platform, and post-survey measures. The behavioral measures were 1) agreement with the moderation decision (a “yes”/“no” prompt asking whether they agreed with the moderator's decision; see Figure 2) and 2) the choice to view the site's moderation policy (after participants answered the agreement question, another question appeared asking whether they wished to review the site's community rules; a positive response took them to the community rules page). The post-survey included measures of 1) the moderation decision (trust in the decision and its perceived fairness), 2) the moderation process, capturing participants’ belief certainty about how well they understood the moderation process on the platform, and 3) the perceived accountability and objectivity of the platform's moderation system.

For judgments on the moderation decisions, we evaluated trust in the moderation decision (“How much do you trust that EatSnap.Love make(s) good moderation decisions?”, measured on 1 =

Results

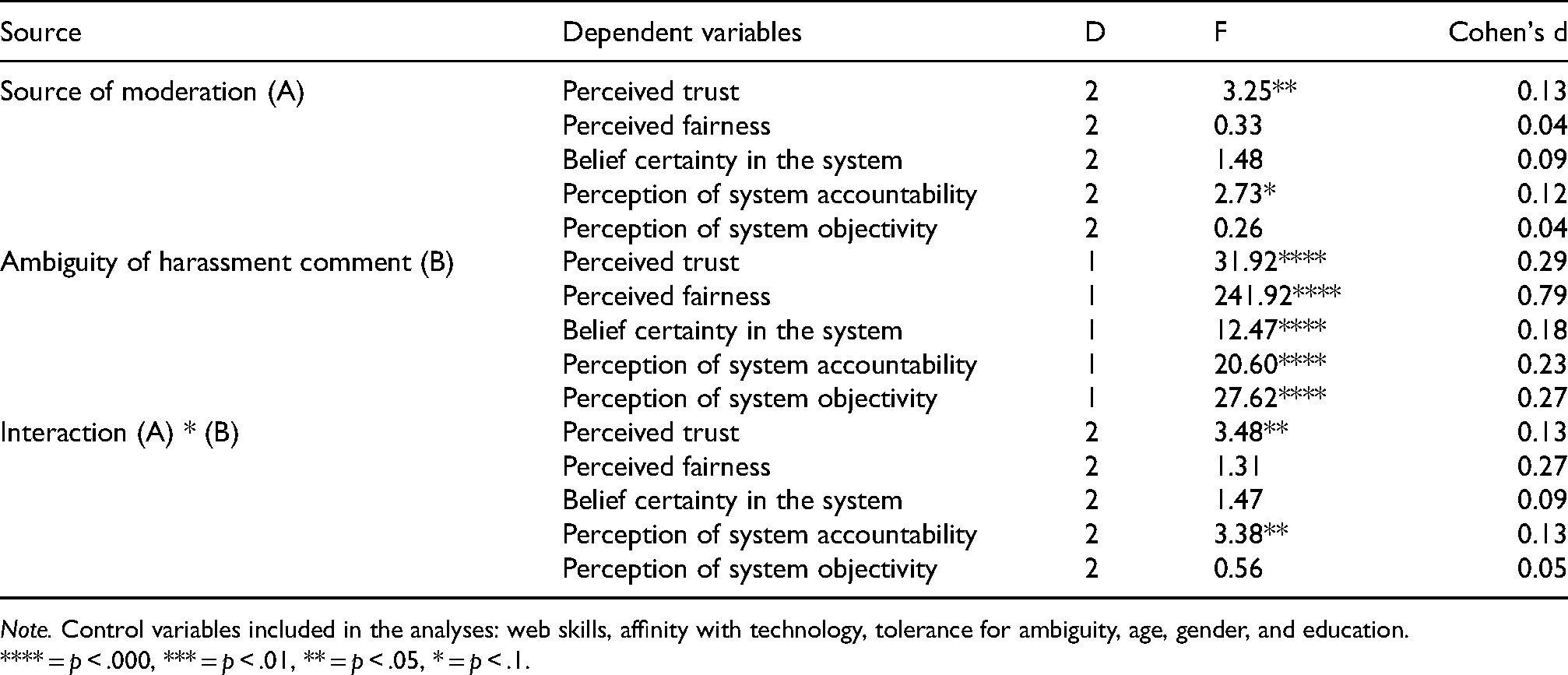

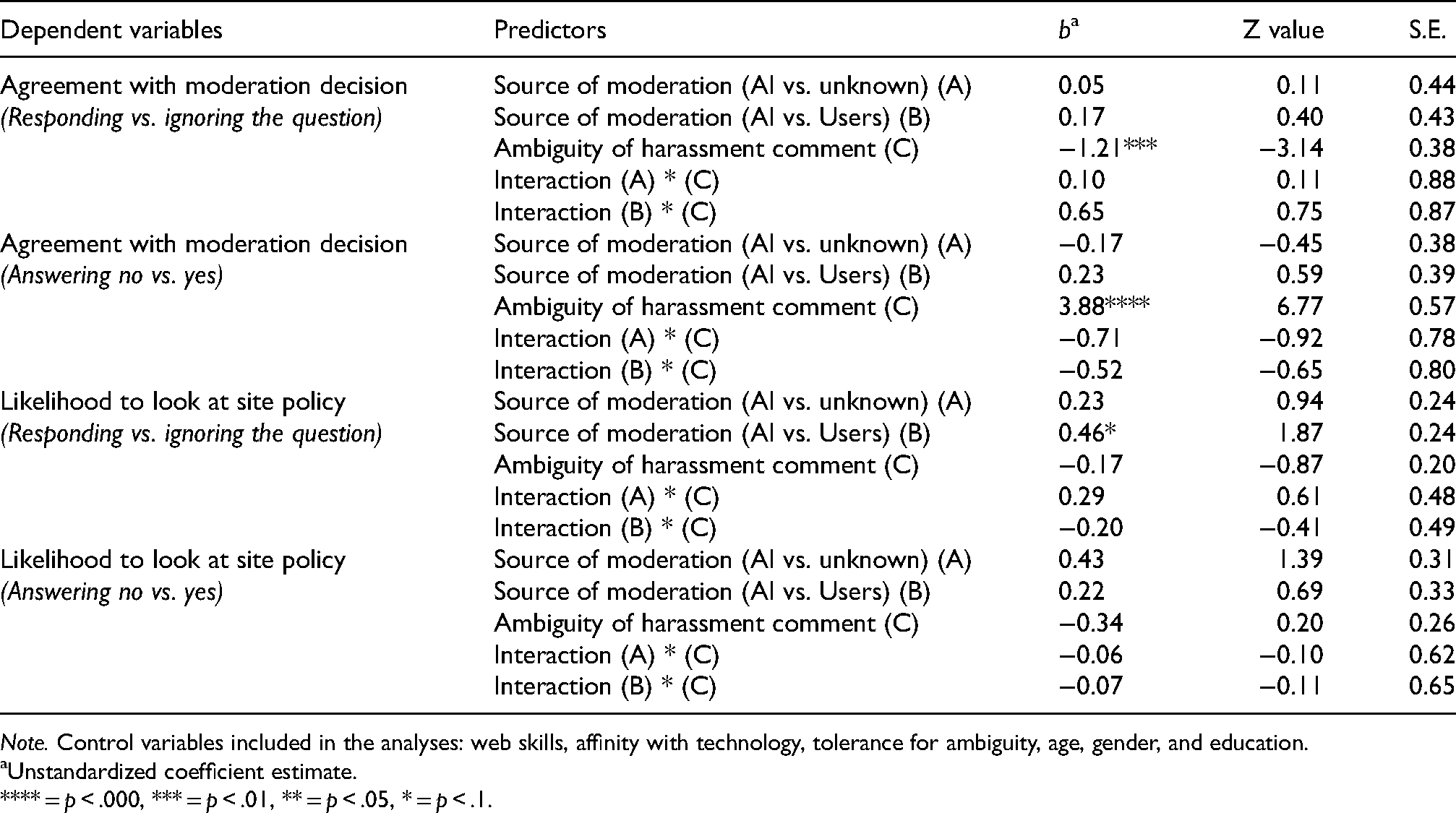

All analyses include the following control variables: web skills, affinity for technology, tolerance for ambiguity, age, gender, and education; they are reported only when they were significant predictors of the dependent variables. We present below our most pertinent results, organized by participants’ perceptions of (1) the moderation decision, (2) the moderation process, and (3) the moderation system, plus an additional category of (4) behavioral variables (agreement with the moderation decision and the choice to review the site's moderation policy). Perception of the moderation decision concerns how participants judged the moderation of the harassment comment; perception of the moderation process concerns their understanding of how the process works; and perception of the moderation system concerns their judgment of the overall moderation system. A complete set of results can be found in Tables 2 and 3. For thoroughness and consistency, we ran the main effects and interactions on all our dependent variables, which means that some results presented below were not included in our pre-registration. For continuous dependent variables, we used the aov() function from the stats package in R to perform ANCOVAs testing differences in means between moderation sources. For categorical dependent variables, we used the glmer() function from the lme4 package (Bates et al., 2015: 4) to perform binary logistic regressions.
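The two model families described above can be written out as follows. This is our reading of the description, not the authors' reported specification: Source is assumed to be dummy-coded against the unspecified-source condition, $\text{Clear}_j = 1$ marks the clear-harassment condition, $z_{jk}$ denotes the control variables, and the main-effects models are the same equations without the $\gamma_s$ interaction terms. Because glmer() fits mixed models, which require at least one random effect, we assume a participant-level random intercept $u_j$ for the daily behavioral outcomes; the text does not state which random effects were used.

$$
y_j = \beta_0 + \sum_{s \in \{\text{AI},\,\text{Users}\}} \alpha_s\,\text{Source}_{js} + \beta_1\,\text{Clear}_j + \sum_{s \in \{\text{AI},\,\text{Users}\}} \gamma_s\,\text{Source}_{js}\,\text{Clear}_j + \sum_{k} \delta_k\, z_{jk} + \varepsilon_j
$$

$$
\operatorname{logit} \Pr(b_{ij} = 1) = \beta_0 + \sum_{s \in \{\text{AI},\,\text{Users}\}} \alpha_s\,\text{Source}_{js} + \beta_1\,\text{Clear}_j + \sum_{s \in \{\text{AI},\,\text{Users}\}} \gamma_s\,\text{Source}_{js}\,\text{Clear}_j + \sum_{k} \delta_k\, z_{jk} + u_j, \qquad u_j \sim \mathcal{N}(0, \sigma_u^2)
$$

Here $y_j$ is a continuous post-survey outcome (e.g. trust) for participant $j$, and $b_{ij}$ is a binary behavioral outcome (e.g. responding to the agreement prompt) for participant $j$ on day $i$. An interaction "qualifying" a main effect corresponds to a nonzero $\gamma_s$, for example a lower trust estimate only in the AI × ambiguous cell.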

Table 2. ANCOVA results.


Table 3. Binary logistic regression results.

Unstandardized coefficient estimate.


Perception of moderation decision: fairness and trust

Perception of the moderation decision was measured via fairness and trust. While the moderation source did not change perceived fairness (see Table 2), it did affect trust in the moderation decision.

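Furthermore, there was a significant interaction between the source of moderation and comment ambiguity on perceived trust: participants trusted the moderation decision less when an AI (vs. other users or an unknown source) moderated an ambiguously worded comment, but not when it moderated a clearly harassing one.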

Ambiguity of the harassment comment affected both perceived fairness of and trust in the moderation decision.

Perception of moderation process: belief certainty

For perception of the moderation process, we captured belief certainty about how the moderation process works. The results demonstrate that the moderation source did not affect how certain participants felt about how the moderation process worked, and there was no interaction between moderation source and comment type (see Table 2). However, comment type significantly affected belief certainty: participants who saw a clearly harassing comment were more certain of their understanding than those who saw an ambiguous one.

Perception of moderation system: accountability and objectivity

Perceptions of the moderation system were captured with participants’ assessments of system objectivity and system accountability, with higher accountability ratings capturing participants’ belief that the moderation system acted in the best interest of its users. Moderation source did not affect perceived system objectivity, and our results showed a marginally significant effect of the source of moderation on system accountability.

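When the interaction term was added to the models, the effects of moderation source and comment type on system accountability were qualified by a significant interaction: participants perceived the moderation system as less accountable when an AI (vs. other users or an unknown source) moderated an ambiguously worded comment.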

Comment type had a significant effect on perceptions of both system accountability and system objectivity.

Likelihood to check content policy and agreement with the moderation decision

For our final analyses, we examined the effect of source visibility and comment ambiguity on behavioral actions. We used logistic regressions to test whether the source of moderation, the comment type, and their interaction affected participants’ decision to agree with the moderation decision and their likelihood to check the content policy. We analyzed agreement with the moderation decision in two ways: first, by comparing participants who responded to the prompt “do you agree with the moderation decision?” with those who ignored it completely, and second, within the group who responded, by comparing participants who agreed with those who disagreed with the moderation decision. The only significant effect in both cases was that of content ambiguity: participants in the clear harassment condition were more likely to respond to the prompt than to ignore it and, among those who responded, more likely to agree than to disagree with the moderation decision.
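The two-step coding of the agreement prompt can be made explicit with a short Python sketch. It assumes a hypothetical log that records, for each participant and day, the answer "yes", "no", or None when the prompt was ignored. The first outcome is defined for all observations, while the second drops ignored prompts, which is why the two analyses have different denominators.

```python
from typing import Dict, Optional, Tuple

Key = Tuple[str, int]  # (participant id, study day), a hypothetical key format
AgreementLog = Dict[Key, Optional[str]]  # "yes", "no", or None if ignored


def code_agreement_outcomes(log: AgreementLog) -> Tuple[Dict[Key, int], Dict[Key, int]]:
    """Derive the two binary outcomes used to analyze the agreement prompt.

    responded: 1 if the participant answered the prompt, 0 if they ignored it;
               defined for every logged observation.
    agreed:    1 for "yes", 0 for "no"; defined only for answered prompts.
    """
    responded: Dict[Key, int] = {}
    agreed: Dict[Key, int] = {}
    for key, answer in log.items():
        if answer not in ("yes", "no", None):
            raise ValueError(f"unexpected answer {answer!r} for {key}")
        responded[key] = 0 if answer is None else 1
        if answer is not None:
            agreed[key] = 1 if answer == "yes" else 0
    return responded, agreed


log: AgreementLog = {("p1", 1): "yes", ("p1", 2): None, ("p2", 1): "no", ("p2", 2): "yes"}
responded, agreed = code_agreement_outcomes(log)
print(list(responded.values()), list(agreed.values()))  # [1, 0, 1, 1] [1, 0, 1]
```

Keeping the outcomes keyed by participant and day, rather than as bare lists, keeps them aligned with the condition and covariate data when the logistic models are fit.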

Discussion

The goal of this study was to understand how making a content moderator visible in a social media environment may influence perceptions of the moderation system. We found that users (1) trusted the moderation decision less and (2) perceived the moderation system as less accountable when moderation was attributed to AI rather than to other users or an unknown source. However, AI moderation was not seen as less trustworthy and accountable across the board: the difference between AI and the other sources of moderation emerged only for moderation of ambiguously worded harassment, not for clear harassment cases. Below we explain the meaning of these results from the point of view of users’ engagement with problematic content moderated by different sources, as well as the implications for making moderation sources visible on social media platforms.

With regard to perceptions of the moderation decision, we found that users were less trusting when exposed to an automated moderator (vs. other users or an unknown source) when the harassment comment was ambiguously worded. Interestingly, neither the source of moderation nor its interaction with comment type affected the perceived fairness of the moderation decision. This is somewhat surprising, as fairness is a critical component of trust in policy making (…).

With regard to perceptions of the moderation process, we found that when the comment was clearly harassing, participants were more certain of their understanding of how moderation worked (i.e. had greater belief certainty) than when the comment was ambiguous. Interestingly, belief certainty did not differ by moderation source, indicating that automatic moderation did not raise more doubts about participants’ understanding of the moderation process. The literature suggests that when AI is understood and analyzed by lay users, AI systems can be better integrated into day-to-day activities (Hagras, 2018).

Perceptions of the moderation system were examined via participants’ assessments of the system's accountability and objectivity. Participants did not judge system objectivity differently based on the moderation source, but perceptions of system accountability differed significantly depending on the moderator (i.e. an automated moderation system vs. other users or an unknown source) when the comment was ambiguously harassing. While system objectivity refers to the general fairness of the moderation system, accountability goes beyond fairness and captures whether the system is perceived to be acting in the best interest of all users (Rader et al., 2018). It is possible that in more ambiguous situations, users are concerned about AI moderation because of the previously mentioned skepticism toward algorithmic systems (Lee, 2018), which ambiguous content brings to the fore. In other words, people may be willing to defer to machine judgments in clear-cut, unequivocal cases, but any contextual ambiguity resurfaces underlying questions about the AI moderation system, both about possible errors and about whose interests and values it reflects. In contrast, when participants see “other users” or even an unidentified source flagging an ambiguous comment, they may be less likely to question these moderators’ actions and intentions because they give other users more benefit of the doubt than they give AI moderation.

Finally, we examined whether the visibility of moderators affected users’ agreement with the moderation decision and their likelihood to look at the site content policy. Moderation source did not affect users’ agreement (vs. disagreement) with the moderation decision, but exposure to different types of comments led participants to take different actions on the site: in the clear (vs. ambiguous) harassment comment condition, participants were more likely to answer the agreement question than to skip it, presumably because they were more confident in judging the comment as harassment, and particularly to answer “yes” (vs. “no”). This kind of prompt may have important implications: the more harassment comments users report, the more likely the platform is to remove the reported comment and the more users engage with the platform (Jiménez Durán, 2022).

Overall, these results highlight how strongly ambiguity in harassing comments affects perceptions of moderation decisions and moderation systems, especially when the moderator is an AI system. The results show that when users are uncertain whether a comment is harassing, they are more likely to question the moderation decision and the moderation system, similar to how they question the certainty of their own understanding of the system (Blackwell et al., 2018). Furthermore, exposure to the same ambiguous content led to more questioning of AI moderation than of the other moderation sources (i.e. other users and unknown sources). This finding adds a more nuanced understanding of folk theories about the role of

Limitations

As with any research, this study has some limitations. First, although we reproduced a simulated social media environment to increase the ecological validity of the experiment, the social media site we used (EatSnap.Love) was new to our participants. Being in a new social media environment may have led participants to act differently than they would on the social media sites they commonly use. Yet, even in this new environment and without knowing the other users of the site, participants still trusted the moderation decision on ambiguous content more when other users flagged the comment than when an automated system did so. Future research could extend the test of moderation source visibility to real-world platforms, for example, by using field experiments similar to the study by Matias (2019). Second, the results do not elucidate participants’ interpretations of their experiences with the moderation system, which could be probed with open-ended questions and qualitative data. Finally, while we examined three different moderation sources, subsequent research should also look at the effect of commercial moderators on perceptions of moderation decisions and systems. Adding this fourth category would give a broader understanding of how people engage with different types of content moderation.

Conclusion

The findings from our study reveal the complexity of how users interact with different types of content moderation on social media platforms. Specifically, users are more likely to question AI moderators, especially how much they can trust the moderation decision and how accountable the moderation system is, but only when the moderated content is inherently ambiguous. In other words, rather than invariably trusting AI moderation less and consistently seeing AI as less accountable than other users, participants differed in their judgments only when the content was ambiguous. These findings are increasingly relevant to the question of whether AI should be the sole moderator of content (Gillespie, 2020), as they highlight differences in how people engage with AI content moderation systems compared with human moderators.

While media companies continue to use artificial intelligence to improve users’ experience (Moss, 2021) and to maintain engagement and profitability (Jiménez Durán, 2022), a promising approach could be to identify ways for AI and humans to work together as “moderating teammates,” in line with the “machines as teammates” paradigm (see Seeber et al., 2020). Even if AI could moderate content effectively, human moderators would remain necessary, because community rules constantly change and cultural contexts differ, so there is never a single value system that everyone agrees on (Gillespie, 2020). At the same time, AI moderators can help make content moderation scalable and reduce the burden on professional moderators who deal daily with harassing comments, violence, or conspiracy theories (Irwin, 2022). As our findings suggest, when a comment clearly harasses another user, using AI as the moderator does not seem to affect users’ perception of the moderation system. However, for an ambiguously harassing comment, platforms could rely on users rather than AI, or look for ways to deploy automated moderation that support a “partnership between human and automated moderation” (Gillespie, 2020: 4). For example, AI tools could provide users with contextual information when they are unsure whether ambiguously worded content constitutes harassment. Similar to how users expand their understanding of the breadth and impact of a harassment problem by labeling negative online experiences as “online harassment” (Blackwell et al., 2018), users may increase their understanding of AI moderation systems through direct experience.

Footnotes

Acknowledgments

We thank the reviewers for their comments. We also thank Anna Spring for her help with developing the simulation, Katherine Miller for her support with participant compensation, and research assistants Annika Pinch, Suzanne Lee and Hyun Seo (Lucy) Lee for their help with testing the simulation and managing data collection.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the USDA NIFA HATCH (grant number 2020-21-276).