Abstract

While interpreter-mediated political discourse has attracted growing scholarly attention, comparative studies scrutinising the interpersonal reconstruction of such discourse by human interpreters and large language models (LLMs) remain sparse. This exploratory study investigates how interpersonal meaning is reconstructed in the Chinese–English renditions of Chinese speakers’ speeches delivered at the Boao Forum for Asia (2012–2024) by human interpreters and GPT-5. Drawing on corpus-assisted critical discourse analysis grounded in Appraisal Theory, the study compares human interpreters’ renditions with GPT-5’s translations to uncover different patterns in the realisation of Attitude and Engagement. The analysis reveals that human interpreters, compared with GPT-5, demonstrate a refined sensitivity to relational cues, for instance by reconfiguring the source discourse through evaluative amplification, dialogic expansion, and deictic pronouns that build solidarity; they not only convey meaning but also perform interpersonal relationships. By contrast, the translations generated by GPT-5 tend to flatten evaluative nuance and interpersonal intent, leaving the discourse relationally diminished. Focusing on interpersonal meaning as the site of human mediation, this study illuminates the enduring added value of human interpreters in enacting interpersonal and communicative attunement beyond algorithmic fluency.

Keywords

1. Introduction

Interpreting, particularly in diplomatic settings, is an intrinsically interpersonal endeavour. Beyond the transmission of propositional meaning, interpreters function as relational mediators who construct affective resonance, negotiate stance, and sustain communicative rapport between speakers and audiences (e.g., Bartłomiejczyk, 2020, 2022; Gao, 2024; Gu & Tipton, 2020; Gu & Wang, 2021, 2023; Wang & Feng, 2018). Their linguistic choices, whether expressed through evaluative tone, modality, or pronoun use, constitute acts of alignment, empathy, and affiliation that transform monologic speech into dialogic engagement (Gao, 2024; Munday, 2012, 2015). As such, interpreting constitutes a human-oriented practice of interpersonal connection. Yet the emergence of artificial intelligence (AI) in this sphere raises a compelling question: when translation is performed by a system that is not there “in person,” can it still deliver the interpersonal function?

Large language models (LLMs), epitomised by ChatGPT, have redefined the boundaries of translation with their capacity to produce linguistically sophisticated and contextually coherent texts at unprecedented speed and fluency (e.g., Chan & Tang, 2024; Hendy et al., 2023; Lee, 2024). Although not originally designed for translation, the integration of advanced neural architectures and massive multilingual datasets has enabled these systems to deliver outputs that, at times, rival or even surpass those of dedicated translation engines (Hendy et al., 2023). This technical achievement, however, foregrounds a deeper epistemic issue: can a model devoid of human affect, consciousness, or embodied presence simulate the interpersonal dimension of language that human interpreters instinctively perform?

In language use, attitudinal meaning is not simply a “personal matter” but “a truly interpersonal matter” as it serves to advance opinions and elicit responses of solidarity from the listeners (Martin & White, 2005, p. 143). This study extends that insight to explore whether a non-human language model, through its linguistic choices, can participate in this process of interpersonal solidarity-making; in other words, whether its translations, however fluent, remain interpersonally human-like. Appraisal Theory (Martin & White, 2005) provides a productive framework for interrogating how interpersonal meaning is realised and negotiated. Through this theoretical lens, the comparison between human interpreters and GPT-5 becomes not a test of accuracy, but rather a question of interpersonal functionality: how each voice occupies, expands, or evades the relational fabric of communication.

While research on interpreter mediation has richly documented the human capacity to adapt relational cues and negotiate interpersonal positioning (e.g., Bartłomiejczyk, 2022; Gao & Munday, 2023; Munday, 2012, 2018; Wang & Feng, 2018), studies on LLM translation have largely emphasised lexical precision and syntactic fluency and cohesion (e.g., Chan & Tang, 2024; Hendy et al., 2023; Lee, 2024). What remains insufficiently explored is the interpersonal frontier: how human renditions and machine translations differ in enacting attitudinal resonance and dialogic alignment. Drawing on parallel corpus data from Chinese speakers’ speeches delivered at the Boao Forum for Asia (2012–2024) (see Section 4 for details), this study investigates whether translations produced by GPT-5 can reproduce the relational depth that human interpreters naturally construct. Three research questions are addressed:

In what follows, Section 2 reviews extant literature on human interpreters’ mediation and the emerging scholarship on LLMs in translation. Section 3 outlines the theoretical framework, drawing on Appraisal Theory and deictic positioning to conceptualise interpersonal meaning as a linguistic and relational phenomenon. Section 4 details the data and analytical procedures and Section 5 presents and discusses the findings. The article concludes in Section 6 by reflecting on what the comparison between human and machine mediation reveals about the evolving nature of interpersonality in translation.

2. Existing studies on human interpreter mediation in conferences and LLM performance in translation

Translation is a constitutive element of political communication, in which linguistic mediation simultaneously mirrors and reconfigures ideological alignments (Schäffner, 2004, 2012; Schäffner & Bassnett, 2010). Such mediation actively reshapes political discourse through the interplay of belief systems, institutional imperatives, and media frameworks (Schäffner, 2004, 2012). These processes manifest as critical junctures where discursive practices forge connections between individual expression and overarching structures of authority, enabling networks of agents, including translators and interpreters, to exert ideological influence via strategic textual interventions at points of heightened decision-making (Chilton & Schäffner, 2002; Munday, 2007). Contemporary advancements in the field bring together linguistics and political discourse analysis, leveraging language functions to delineate the negotiation of evaluative and ideological transformations across cultural and institutional divides (Munday, 2024).

Interpreting in institutional and political contexts, in particular, has been widely recognised as a communicative practice characterised by interpreter agency or mediation rather than neutral transfer. Interpreters’ linguistic choices are often entangled with the institutional, sociocultural, and ideological parameters of the events in which their interpreting practices are embedded. Pöchhacker (2006, p. 191) describes this phenomenon as interpreters’ “within-one-side” positioning, through which they enact the ideological stance of the organisations to which they are affiliated through subtle lexical and syntactic manoeuvres. Gao and Munday (2023) also posit that interpreters actively “edit” the source discourse through selective linguistic reconstruction to align with ideological expectations, a process shaped by their responsiveness to contextual stimuli.

A wealth of empirical research substantiates this argument, revealing how interpreters reshape the interpersonal and ideological fabric of political discourse through a plethora of linguistic resources. It is the reconfigurations of these linguistic categories by interpreters that generate interpersonal and ideological effects: shifts in referential choices, including nouns and pronouns, realign ideological perspective (Gu & Tipton, 2020); variations in modality recalibrate degrees of speaker commitment and epistemic certainty (R. Fu & Tan, 2024; Gao & Wang, 2024, 2025; Li, 2018); adaptations of metaphor reshape rhetorical resonance and cultural appeal (Gao, 2025); and reformulations of evaluative language, core to Appraisal Theory (Martin & White, 2005), serve to articulate institutional stance and relational positioning (Bartłomiejczyk, 2022; Gao, 2024; Munday, 2012, 2018; Wang & Feng, 2018; Xu & Liang, 2023). These findings reinforce the notion of interpreters as co-authors/speakers who both mediate and co-construct political discourse.

Parallel to this body of research, recent scholarship has employed a comparative lens to investigate the efficacy of LLMs and human translators, illuminating persistent disparities in handling nuanced textual elements, particularly in literary and specialised domains. In translating scientific texts from English to Chinese, generative AI models such as ChatGPT 3.5 demonstrate superior lexical accuracy in terminology but struggle with syntactic complexity and contextual depth, resulting in translations with lower word diversity and shorter sentences compared to human renditions, which prioritise source nuances (L. Fu & Liu, 2024). In a similar vein, evaluations of classical Arabic poetry translations reveal that while LLMs like Gemini and ChatGPT achieve thematic clarity and creativity on par with humans in some metrics, they significantly underperform in relation to prosody, as evidenced by lower mean scores from expert assessors, highlighting LLMs’ limitations in capturing rhythmic and emotional resonance (Farghal & Haider, 2024). In literary contexts, human translators excel at infusing “deep meaning” and “humanisation” into outputs, which fosters cultural empathy and ethical considerations absent in AI processes (Cook et al., 2025). This pattern extends to poetry translation methods, where human efforts surpass machine and post-edited outputs in conveying idiomatic subtleties and artistic intent (Lei & van de Weijer, 2025). Echoing these findings, comparative analyses of AI tools like ChatGPT in literary translation affirm human superiority in stylistic preservation and cultural adaptation, despite AI’s advantages in speed and literal fidelity, which suggests that LLMs often produce interpersonally flat renditions devoid of the attitudinal engagement inherent in human mediation (Abdelhalim et al., 2025).

These two research strands delineate a theoretical and empirical asymmetry: while interpreting studies have richly theorised human agency, LLM research has largely focused on lexical accuracy, stylistic adequacy, and creative potential. What remains insufficiently explored is the interpersonal dimension of AI-mediated translation, particularly, how LLMs engage with attitudinal meaning and engagement positioning. No systematic investigation has yet compared how institutional interpreters and advanced LLMs reconstruct interpersonal meaning in political discourse. Against this backdrop, the present study draws on the Appraisal Theory proposed by Martin and White (2005), a framework particularly suited to examining interpersonal meaning in discourse.

3. Theoretical framework

Appraisal Theory affords a useful tool-kit for explicating how speakers/writers negotiate interpersonal meanings (Martin & White, 2005). It provides a fine-grained system for exploring the linguistic encoding of interpersonal functions of language. As a linguistic model of evaluation, it evolves from and extends the interpersonal meta-function of Systemic Functional Linguistics (SFL) developed by Halliday (1978, 1994) and further elaborated by Halliday and Matthiessen (2014). At its core, SFL views language as a social semiotic system, a resource for meaning-making in context rather than a set of abstract grammatical rules. It is fundamentally concerned with language in use, focusing on the communicative and functional dimensions of linguistic choice. Within this paradigm, meaning is construed through the principle of realisation, whereby specific lexical and grammatical selections encode discourse semantics that, in turn, reflect and reproduce broader sociocultural and ideological contexts.

SFL proposes three meta-functions of language that collectively underpin all acts of communication: the ideational, the interpersonal, and the textual (Halliday & Matthiessen, 2014). The ideational meta-function organises experience and logical relations, enabling language users to represent and categorise the world around them. The interpersonal meta-function enacts and negotiates relationships between speaker and listener (or writer and reader), managing evaluation, attitude, and stance. The textual meta-function concerns the organisation of discourse through thematic structuring, information flow, and cohesion. These three dimensions operate concomitantly in every communicative act, together shaping how meaning is constructed and interpreted.

Concerning the interpersonal meta-function, Appraisal Theory (Martin & White, 2005) extends SFL’s concern with social meaning by providing a systematic model for analysing evaluative language and interpersonal engagement. While Halliday’s framework accounts for interpersonal meaning through mood, modality, and person systems (Halliday & Matthiessen, 2014), Appraisal Theory elaborates on the ways in which speakers and writers linguistically encode attitude, engagement, and evaluative degree. It explicates the ways in which feelings, values, and judgements are realised through lexis and grammar to offer a more delicate account of interpersonal positioning. In this respect, Appraisal Theory enriches the functional description of interpersonal meaning in SFL by bridging lexicogrammar with discourse semantics, thereby providing linguistic tools to interpret how speakers/writers align with or distance themselves from other voices, negotiate solidarity, and construct ideological alignment.

Crucially, Appraisal Theory operates at the content plane of lexicogrammar and discourse semantics, linking interpersonal meaning with linguistic realisations across attitudinal and dialogic domains. Within this framework, interpersonal meaning unfolds through three interrelated subsystems: Attitude, Engagement, and Graduation (Martin & White, 2005). This study is informed by the first two subsystems.

3.1 Attitude: evaluative polarity

Attitude constitutes the “most basic and early form of evaluation” (Martin & White, 2005, p. 42), encompassing the affective, ethical, and aesthetic dimensions through which social actors express emotions, judge behaviours, or appreciate phenomena. These evaluative meanings are typically realised lexicogrammatically via attitudinally loaded adjectives and their variants. Attitude divides into three domains: (1) Affect, expressing emotional states (e.g., delighted, concerned), and often signalling alignment or empathy between speaker and audience; (2) Judgement, representing moral or ethical evaluation of people’s behaviour (e.g., competent/incompetent, just/unjust); (3) Appreciation, evaluating the aesthetic or qualitative value of objects, events, or processes (e.g., effective, constructive, significant).

3.2 Engagement: dialogic space and voice management

While Attitude deals with how speakers feel or evaluate, Engagement concerns how they position themselves vis-à-vis other voices and perspectives in discourse. As Martin and White (2005, p. 36) explain, it “position[s] the speaker/writer with respect to the value position being advanced and with respect to potential responses to that value position.” Drawing from Bakhtin’s (1981/2010) notion of dialogism, Engagement delineates how utterances contract or expand dialogic space through modality, evidentiality, and attribution. The Engagement system distinguishes monoglossic expressions, those that present propositions as self-evident or categorical, from heteroglossic expressions, which acknowledge alternative viewpoints. Within the latter, dialogic contraction (e.g., undoubtedly, of course, must) restricts alternative positions, while dialogic expansion (e.g., perhaps, it seems, should) opens interpretive space for negotiation.

Within the sub-category of heteroglossia, dialogic expansion is further subdivided into entertain, which modalises propositions to invite alternative interpretations (e.g., may, could, in my view), and attribute, which ascribes claims to external sources to distance the speaker and broaden voices (e.g., according to X, experts claim). In contrast, dialogic contraction encompasses disclaim, which counters or denies potential alternatives (e.g., but, however, no), and proclaim, which emphatically asserts or concurs to suppress dissent (e.g., obviously, of course, we all know). Martin and White (2005) emphasise that these resources enable speakers/writers to align audiences with their stance, fostering solidarity in persuasive contexts like political discourse, while modulating the risk of misalignment. By integrating with Attitude, Engagement thus facilitates nuanced interpersonal negotiation, allowing texts to either amplify authorial authority or accommodate diverse reader/listener positions for rhetorical effectiveness.

On top of these linguistic categories explicitly mapped out in Appraisal Theory, the first-person plural pronouns, such as we, us, and our, function as salient linguistic resources for negotiating solidarity, collectivity, and proximity in political discourse. Building on Munday’s (2015, 2018) extension of Martin and White’s (2005) Engagement system into translation and interpreting studies, these deictic markers serve as spatial–ideological coordinates that position the speaker at the centre of the discourse space while aligning the audience within a shared referential field. In interpreting practices, deictic pronouns are found to encode inclusivity or exclusivity and operate as vehicles for alignment (Gu & Tipton, 2020).

By juxtaposing Attitude and Engagement across human and machine renditions, the present study examines whether the translations generated by GPT-5 replicate, attenuate, or reconfigure the interpersonal dynamics that animate Chinese political discourse. These two subsystems therefore form the analytical core through which the study explores how human interpreters and GPT-5 differently reconstruct evaluative stance and dialogic alignment in recontextualising speeches delivered at the Boao Forum for Asia. The following section outlines the corpus, data sources, and methodological procedures employed to operationalise this comparative inquiry.

4. Data and methods

The data for this study were drawn from the Boao Forum for Asia (BFA), a high-level multilateral dialogue mechanism established in 2001 and headquartered in Boao, Hainan Province, China. The Forum constitutes a premier venue for deliberations on regional and global governance, economic integration, and sustainable development, bringing together political leaders, policymakers, business executives, and scholars across Asia and beyond (Boao Forum for Asia, n.d.). Rooted in the ethos of “shared development through dialogue,” the BFA projects an agenda of multilateral cooperation and collective prosperity within the broader narrative of the “Asian century” (Boao Forum for Asia, n.d.).

The speeches delivered by Chinese speakers at the opening sessions of BFA Annual Conferences between 2012 and 2024 constitute an invaluable corpus of China’s global communication, which encapsulates recurring discursive motifs such as development, cooperation, and shared future. These source texts (STs) and target texts (TTs) were retrieved from official repositories, including the BFA Secretariat’s website (https://english.boaoforum.org), the State Council of the People’s Republic of China (https://english.www.gov.cn), and Xinhua News Agency’s English archive (https://english.news.cn), which provide transcripts and audiovisual recordings of plenary addresses. We checked the transcripts against the audiovisual recordings for accuracy. The compiled dataset comprises 13 Chinese speeches and their corresponding institutional English renditions, forming a parallel Chinese–English corpus for analysing linguistic mediation in political discourse.

To facilitate a comparative investigation of rendering interpersonal meanings between human interpreters and LLMs, GPT-5 was employed to translate the same 13 source texts under a controlled zero-shot condition. In the zero-shot experiment, the prompt “translate the Chinese speeches into English ones” was used consistently for the 13 speeches. This zero-shot configuration was intentionally adopted to evaluate GPT-5’s innate translational disposition, its capacity to generate interpersonal meaning without human-engineered contextual cues or stylistic conditioning. By avoiding few-shot or instruction-augmented prompts, the design preserves experimental neutrality and ensures that any observed interpersonal effects arise from the model’s internalised linguistic patterns rather than external prompt steering. Collectively, these three sub-corpora, Chinese STs, human interpreters’ TTs, and GPT-5 translations, constitute a dataset for scrutinising attitudinal and engagement realisations between human and machine renditions of Chinese political discourse.

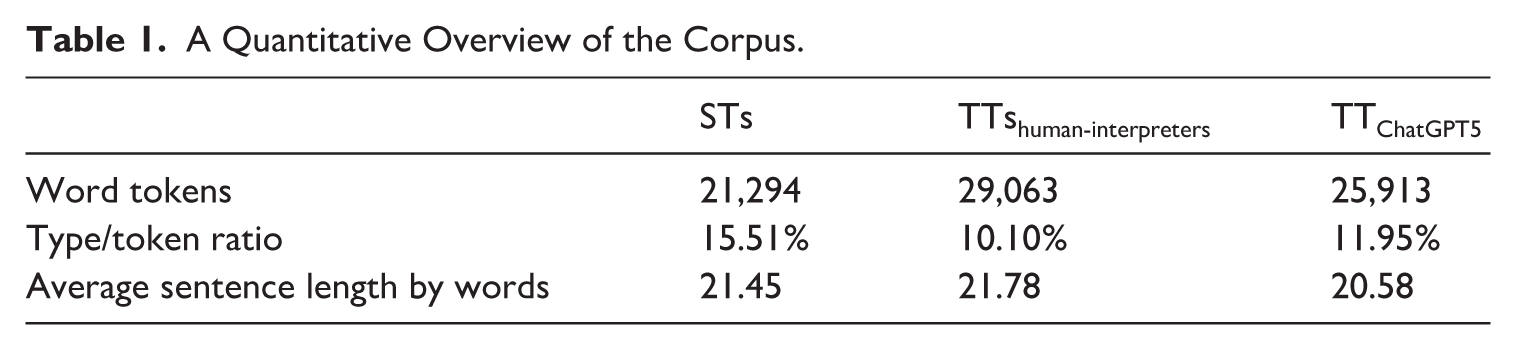

Table 1 presents a quantitative overview of the three sub-corpora under investigation. The ST sub-corpus comprises 21,294 tokens, while the interpreters’ renditions total 29,063 tokens, indicating a considerable expansion of approximately 36%, which may reflect the interpreters’ tendency to explicate implicit meaning or adapt discourse for clarity and audience engagement. GPT-5’s translations, by contrast, contain 25,913 tokens, representing a moderate increase of roughly 22% relative to the STs, and are thus notably more concise than the human interpreters’ output. The type–token ratio (TTR) of the STs stands at 15.51%, the highest lexical diversity among the three, compared with 10.99% for the human interpreters and 11.96% for GPT-5. In terms of average sentence length, the STs average 21.45 words, the human interpreters’ renditions 21.78 words, and GPT-5’s translations 20.58 words, reflecting GPT-5’s slightly shorter syntactic units and potentially more segmental rendering style. In sum, these metrics reveal subtle quantitative divergences that underpin the subsequent qualitative analysis of attitudinal and engagement realisations in the human interpreters’ renditions and GPT-5’s translations.

Table 1. A Quantitative Overview of the Corpus.
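The expansion percentages quoted for Table 1 can be re-derived from the reported token totals. The sketch below does that and also illustrates how a type–token ratio is computed. The function names are illustrative, and the toy sentence and its whitespace tokenisation are assumptions for demonstration only; the study does not specify how tokens and types were segmented or counted.

```python
def expansion_pct(source_tokens: int, target_tokens: int) -> float:
    """Percentage growth of the target text relative to the source text."""
    return (target_tokens - source_tokens) * 100 / source_tokens


def type_token_ratio(tokens: list[str]) -> float:
    """Distinct word forms (types) as a percentage of running words (tokens)."""
    if not tokens:
        raise ValueError("cannot compute TTR for an empty token list")
    return len(set(tokens)) * 100 / len(tokens)


# Token totals as reported in the text for the three sub-corpora.
ST_TOKENS = 21_294
HUMAN_TOKENS = 29_063
GPT5_TOKENS = 25_913

human_expansion = expansion_pct(ST_TOKENS, HUMAN_TOKENS)
gpt5_expansion = expansion_pct(ST_TOKENS, GPT5_TOKENS)

# Hypothetical toy sentence (NOT corpus data), lower-cased and split on whitespace.
toy_tokens = "we share a future and we build a future".lower().split()
toy_ttr = type_token_ratio(toy_tokens)

print(f"human expansion: {human_expansion:.1f}%")
print(f"GPT-5 expansion: {gpt5_expansion:.1f}%")
print(f"toy TTR: {toy_ttr:.2f}%")
```

Rounded to whole percentages, the two expansion values agree with the approximately 36% and 22% stated above. Because TTR falls as texts grow longer, the TTR comparison across sub-corpora of different sizes is best read as indicative.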

To investigate how interpersonal meanings are reconstructed across the three sub-corpora, this study adopts a corpus-assisted critical discourse analytical approach that dovetails well with the Attitude and Engagement systems from the Appraisal framework (Martin & White, 2005). Characterised by textual attentiveness, critical discourse analysis (CDA) provides a lens through which linguistic choices can be related to ideology, social relations, and communicative intent (Wodak & Meyer, 2009). When integrated with corpus linguistics, as Baker et al. (2008) demonstrate, it yields a powerful synergy between empirical rigour and contextual interpretation. This integration enables both a systematic identification of recurrent patterns and a nuanced reading of their interpersonal and ideological significance. Frequency profiling and concordance inspection were used to trace evaluative and engagement tendencies, which were subsequently interpreted qualitatively to identify the attitudinal and dialogic mechanisms underlying human interpreters’ renditions and GPT-5’s translation.

The analysis focuses on how the two subsystems of Appraisal (Attitude and Engagement) are linguistically realised and functionally recontextualised. The two subsystems constitute the most salient dimensions through which interpersonal meaning is negotiated and enacted in political discourse. Preliminary analysis of the Graduation system revealed minimal variation between the STs and TTs, which suggests that shifts in intensity alone were not central to the relational dynamics under investigation. More importantly, Attitude and Engagement directly capture evaluative stance and dialogic positioning, the two axes most critical to examining how human interpreters and GPT-5 differentially reconstruct interpersonal alignment and communicative presence. In the analysis, Attitude is examined in terms of positive and negative polarity, capturing the emotional, ethical, and aesthetic evaluations that indicate the evaluative stance embedded in the texts. Engagement is explored in terms of how the texts negotiate dialogic space, particularly through modal verbs, epistemic adverbs, and stance expressions that indicate openness or assertiveness (Martin & White, 2005). In addition, deixis, following Munday’s (2015) extension of the Appraisal framework to deictic positioning, is examined through first-person plural pronouns such as we, our, and us, which index interpersonal and collective alignment.

The analytical procedure combines quantitative and qualitative phases. In the quantitative phase, AntConc 4.0 was used to generate frequency counts and normalised rates of evaluative and stance-related items, which yielded the overall distribution of attitudinal polarity, dialogic expansion/contraction, and deictic “we”-positioning. The qualitative phase involved a close reading of representative instances to interpret their interpersonal implications, with a focus on how evaluative meanings were intensified, softened, or neutralised and on how engagement and pronoun choices reflected relational positioning. This two-tiered approach allows macro-level quantitative tendencies to be interpreted through micro-level discourse evidence, so that observations about interpersonal meaning are empirically grounded.
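Normalised rates make counts comparable across sub-corpora of different sizes. A minimal sketch of the per-million normalisation follows; the function name and the example figures are hypothetical and are not taken from the corpus or from AntConc’s own output.

```python
def per_million(raw_count: int, corpus_tokens: int, base: int = 1_000_000) -> float:
    """Scale a raw frequency to a rate per `base` tokens."""
    if corpus_tokens <= 0:
        raise ValueError("corpus_tokens must be positive")
    return raw_count * base / corpus_tokens


# Hypothetical figures for illustration only (NOT corpus data):
# 50 annotated items found in a 20,000-token text.
hypothetical_hits = 50
hypothetical_tokens = 20_000

rate = per_million(hypothetical_hits, hypothetical_tokens)
print(f"{rate:.2f} items per million tokens")
```

The same raw count in a text twice as long would give half the rate, which is why normalised rather than raw frequencies are compared across the three sub-corpora.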

Given the study’s focus on interpersonal meanings rather than lexical frequency alone, all relevant appraisal items were manually annotated. The annotation followed Appraisal Theory (Martin & White, 2005) as the linguistic framework. Lexical exemplars were cross-referenced with Martin and White (2005) and Munday (2012), which offer reference points for appraisal shifts in translation and interpreting. Both inscribed and invoked appraisal expressions were annotated, the latter including metaphors, non-core lexis, and ideational tokens that implicitly convey evaluation (Martin & White, 2005; Munday, 2012). To ensure the reliability of manual annotation, inter-coder agreement was assessed following the protocol outlined by Brezina (2018). Given the corpus’s substantial size and internal consistency across text types, a 20% sample was selected for double annotation, a proportion considered sufficient for large datasets where category definitions and coder training have been standardised (Brezina, 2018). This subset was annotated independently by two trained coders (the first author and a colleague), yielding an agreement rate of 90.68%, which exceeds the accepted 80% reliability threshold (Brezina, 2018). While only a portion of the corpus was double-coded, the stability of the coding scheme across comparable discourse segments suggests that this sample adequately represents the overall reliability of the annotation process. The result supports the reliability of the semantic tagging and strengthens the validity of subsequent analyses, lending confidence that differences in evaluative stance, engagement, and deictic alignment reflect the contrasting interpersonal orientations of human interpreters and GPT-5.
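An agreement rate of this kind is the share of items to which both coders assigned the same label. The sketch below shows the calculation on a hypothetical five-item example; the labels and the function name are illustrative assumptions, not the study’s annotation data or tooling.

```python
def percent_agreement(coder_a: list[str], coder_b: list[str]) -> float:
    """Percentage of items on which two coders assigned the same label."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must label the same items")
    if not coder_a:
        raise ValueError("no items to compare")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * matches / len(coder_a)


# Hypothetical labels for five items (NOT the study's annotations).
coder_a = ["positive", "positive", "negative", "positive", "negative"]
coder_b = ["positive", "negative", "negative", "positive", "negative"]

agreement = percent_agreement(coder_a, coder_b)
print(f"agreement: {agreement:.2f}%")
```

Simple percent agreement does not correct for agreement expected by chance, so it is usually read together with the clarity of the category definitions and the coders’ training.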

5. Analysis

5.1 Reconfigured evaluation: human attitudinal positivity versus algorithmic literalism

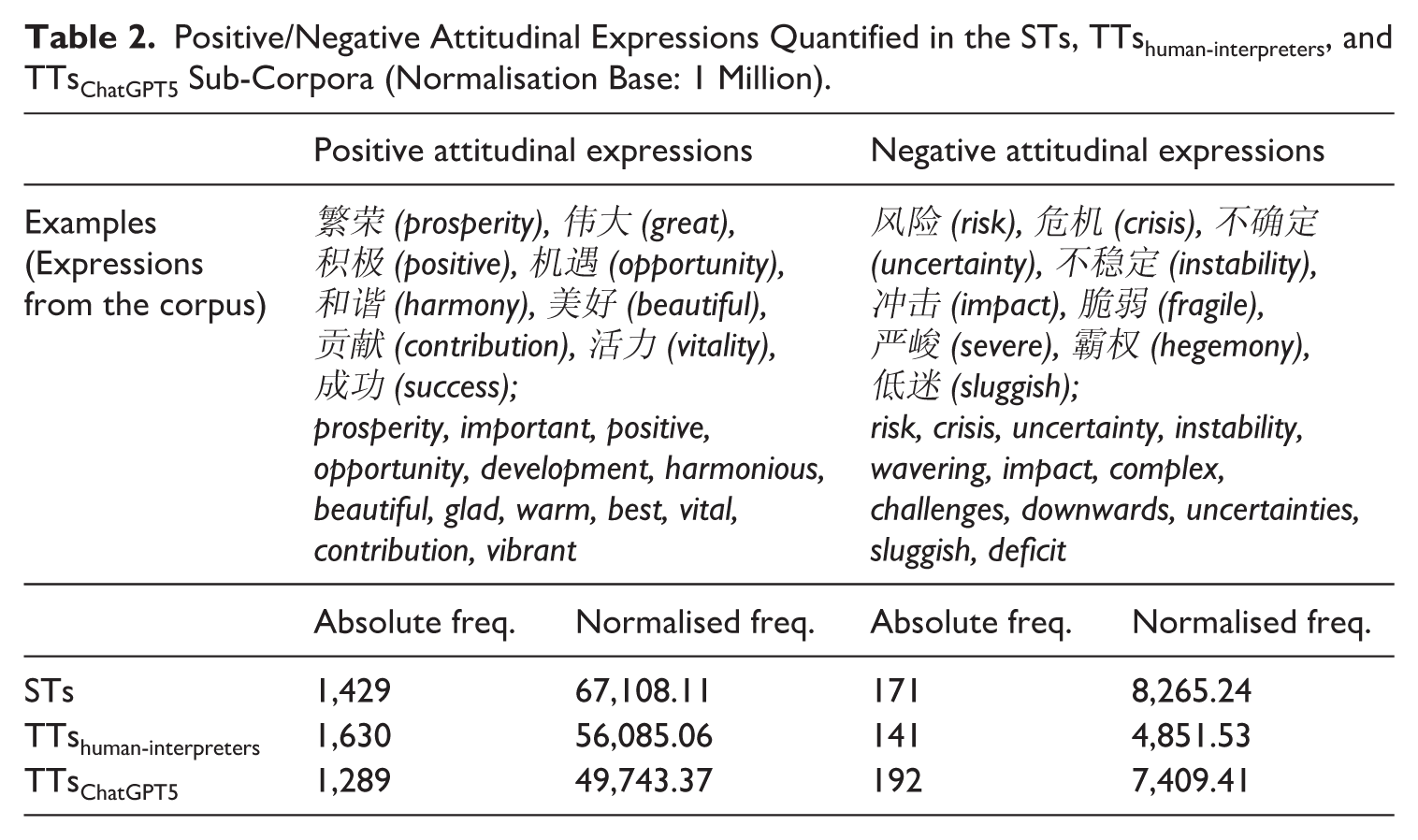

Table 2 delineates the distribution of positive and negative attitudinal expressions across the sub-corpora of the STs, the TTshuman-interpreters, and the TTsChatGPT5, revealing salient divergences in evaluative orientations. The STs register a markedly affirmative profile, with 67,108.11 positive and 8,265.24 negative instances per million words (the normalisation base), epitomising the state-endorsed optimism and developmental ethos characteristic of diplomatic rhetoric. The human interpreters’ renditions display 56,085.06 positive and 4,851.53 negative items per million words, which points to moments of decision-making at which attitudinal orientations are reshaped (cf. Munday, 2012). This attenuation of negativity, coupled with a positive rate that remains higher than GPT-5’s, reflects the interpreters’ agentive tendency towards discursive tempering, strategically aligning their output with diplomatic decorum and communicative harmony. GPT-5’s translations, by contrast, yield 49,743.37 positive and 7,409.41 negative items per million words, suggesting a recalibrated evaluative balance. The model’s moderation of positive polarity and its closer retention of negative lexis signal an algorithmic pursuit of neutrality rather than attitudinal resonance. The analyses below illustrate these differences with examples drawn from the corpus.

Table 2. Positive/Negative Attitudinal Expressions Quantified in the STs, TTshuman-interpreters, and TTsChatGPT5 Sub-Corpora (Normalisation Base: 1 Million).
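One way to read the Table 2 figures side by side is to express negative items as a share of all attitudinal items in each sub-corpus. The sketch below uses only the normalised rates reported above; the "negative share" measure itself is an illustrative derivation and is not a statistic reported by the study.

```python
# Normalised rates per million words, as reported in the text for Table 2.
RATES = {
    "ST": {"positive": 67_108.11, "negative": 8_265.24},
    "human": {"positive": 56_085.06, "negative": 4_851.53},
    "GPT-5": {"positive": 49_743.37, "negative": 7_409.41},
}


def negative_share(positive: float, negative: float) -> float:
    """Negative items as a percentage of all attitudinal items."""
    return 100 * negative / (positive + negative)


# Derived, illustrative measure; not a figure given in the study.
shares = {
    name: negative_share(r["positive"], r["negative"])
    for name, r in RATES.items()
}

for name, share in shares.items():
    print(f"{name}: {share:.2f}% of attitudinal items are negative")
```

On this derived measure the human renditions carry the smallest proportion of negative items and GPT-5’s translations the largest, which is consistent with the attenuation of negativity described for the interpreters.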

Evaluative modulation in this study is interpreted in the qualitative analysis with reference to the diplomatic and institutional context of the Boao Forum for Asia, a high-level platform emphasising harmony, cooperation, and stability. Within such a setting, strongly negative or confrontational expressions are pragmatically dispreferred, as interpreters tend to recalibrate affect and judgement to maintain decorum and align with the forum’s cooperative ethos in such political/diplomatic settings (cf. Munday, 2012; Schäffner, 2004). Hence, shifts that temper negativity or accentuate positivity are assessed not as linguistic anomalies but rather as contextually motivated acts of interpersonal accommodation.

Example 1 exemplifies positive attitudinal amplification through metaphorical construction and lexical alteration. The ST uses the evaluative phrase “美好未来” (a beautiful future), which encodes Appreciation to construe futurity as morally and aesthetically desirable (cf. Martin & White, 2005). The human interpreter renders this as “a bright future,” which creates a luminance metaphor and subtly amplifies the emotional tenor, producing a vivid and affectively resonant image that aligns with the original’s motivational ethos. GPT-5, by contrast, translates it as “a better future,” a plainer and less imagistically charged rendering. This lexical choice carries none of the figurative radiance of the interpreter’s version and reduces the evaluative intensity and aesthetic vividness of the ST expression. Further, the interpreter transforms “属于” (belongs to) into “favours,” a subtle yet ideologically charged shift that personifies success as an active agent rather than a passive possession. This lexical reconfiguration injects dynamism and moral volition into the discourse, reinforcing Judgement of human agency and perseverance as praiseworthy virtues (Martin & White, 2005). GPT-5, by contrast, renders the verb literally as “belongs to.” GPT-5’s translation thus gravitates towards literalism, showing algorithmic restraint rather than the rhetorical engagement that the human rendition delivers:

Example 1 (2018)

ST: 幸福和美好未来不会自己出现,成功属于勇毅而笃行的人。

Gloss: Happiness and a beautiful future do not appear by themselves; success belongs to the one who is both brave in perseverance and steadfast in action.

TThuman-interpreter: Happiness and a bright future will not appear automatically; success only favours those with courage and perseverance.

TTChatGPT5: Happiness and a better future will not appear on their own; success belongs to those with courage and perseverance.

Example 2 illustrates a case of negative attitudinal mitigation regarding the global crisis discourse. The Chinese ST “世界进入动荡变革期” (the world has entered a turbulent period) contains strong judgmental and affective overtones via “动荡” (turbulence), signifying instability and disorder. The human interpreter renders this as “a phase of fluidity,” which reframes the negativity through metaphorical softening: “fluidity” replaces “turbulence” and invokes adaptability rather than chaos. This re-lexicalisation functions to neutralise potential alarmism and project a tone of composure consistent with institutional diplomacy. GPT-5, conversely, preserves the literal negativity with “a period of turbulence,” which sustains the ST’s evaluative sharpness. While lexically accurate, this version discursively reinstates forceful negativity, diminishing the interpretive diplomacy manifested in the human rendition. The divergence underscores the interpreter’s agency through euphemistic toning, whereas GPT-5’s translation remains semantically faithful but pragmatically inert, displaying minimal accommodation to interpersonal tenor:

Example 2 (2021)

ST: 当前,百年变局和世纪疫情交织叠加,世界进入动荡变革期。

Gloss: At present, the centennial transformation and the century-long pandemic are intertwined and superimposed, the world has entered a turbulent period.

TThuman-interpreter: Now, the combined forces of changes and a pandemic both unseen in a century have brought the world into a phase of fluidity.

TTChatGPT5: At present, the once-in-a-century changes and the pandemic of the century are intertwined, pushing the world into a period of turbulence.

Example 3 foregrounds positive attitudinal adaptation via the SHARED-BOAT metaphor, emblematic of China’s globalisation rhetoric. The ST juxtaposes a moral imperative, “必须同舟共济” (must work together in the same boat), with a negatively charged warning, “企图把谁扔下大海都是不可接受的” (it is unacceptable to throw anyone into the sea), balancing solidarity with admonition. The human rendition, however, discursively reconfigures this polarity into a wholly affirmative appeal: “all passengers must pull together.” By substituting “passengers” for “countries” and omitting the punitive clause, the interpreter humanises the imagery and recasts obligation as collective effort, which serves to intensify the positive Judgement and Appreciation embedded in the metaphor (cf. Martin & White, 2005). In contrast, GPT-5 compresses the SHARED-BOAT metaphor into its sense, “we must help each other,” and retains a literal translation of the negative warning: “casting anyone overboard is unacceptable.” While delivering the metaphorical meaning, this version reduces the force of the metaphorical image and literally reinstates the binary of cooperation versus exclusion:

Example 3 (2022)

ST: 世界各国乘坐在一条命运与共的大船上,要穿越惊涛骇浪、驶向光明未来,必须同舟共济,企图把谁扔下大海都是不可接受的。

Gloss: All countries in the world are riding in a big boat that shares the same destiny. To ride through the stormy waves and sail toward a bright future, they must help each other in the same boat. It is unacceptable to try to throw anyone into the sea.

TThuman-interpreter: Countries around the world are like passengers aboard the same ship who share the same destiny. For the ship to navigate the storm and sail toward a bright future, all passengers must pull together.

TTChatGPT5: All nations are aboard the same ship of shared destiny. To navigate through stormy seas toward a brighter future, we must help each other; casting anyone overboard is unacceptable.

These cases exemplify human interpreters’ tendency for evaluative modulations: amplifying positivity, neutralising negativity, and enriching metaphorical vividness in line with the ST diplomatic stance. GPT-5, by contrast, tends towards lexical “equilibrium,” accurate yet affectively restrained, revealing its inclination towards informational rather than interpersonal reconstruction. The contrast illustrates a continuum from humanly empathetic mediation to algorithmic neutrality in attitudinal realisation.

5.2 Negotiated dialogic space: human expansiveness versus algorithmic contractiveness

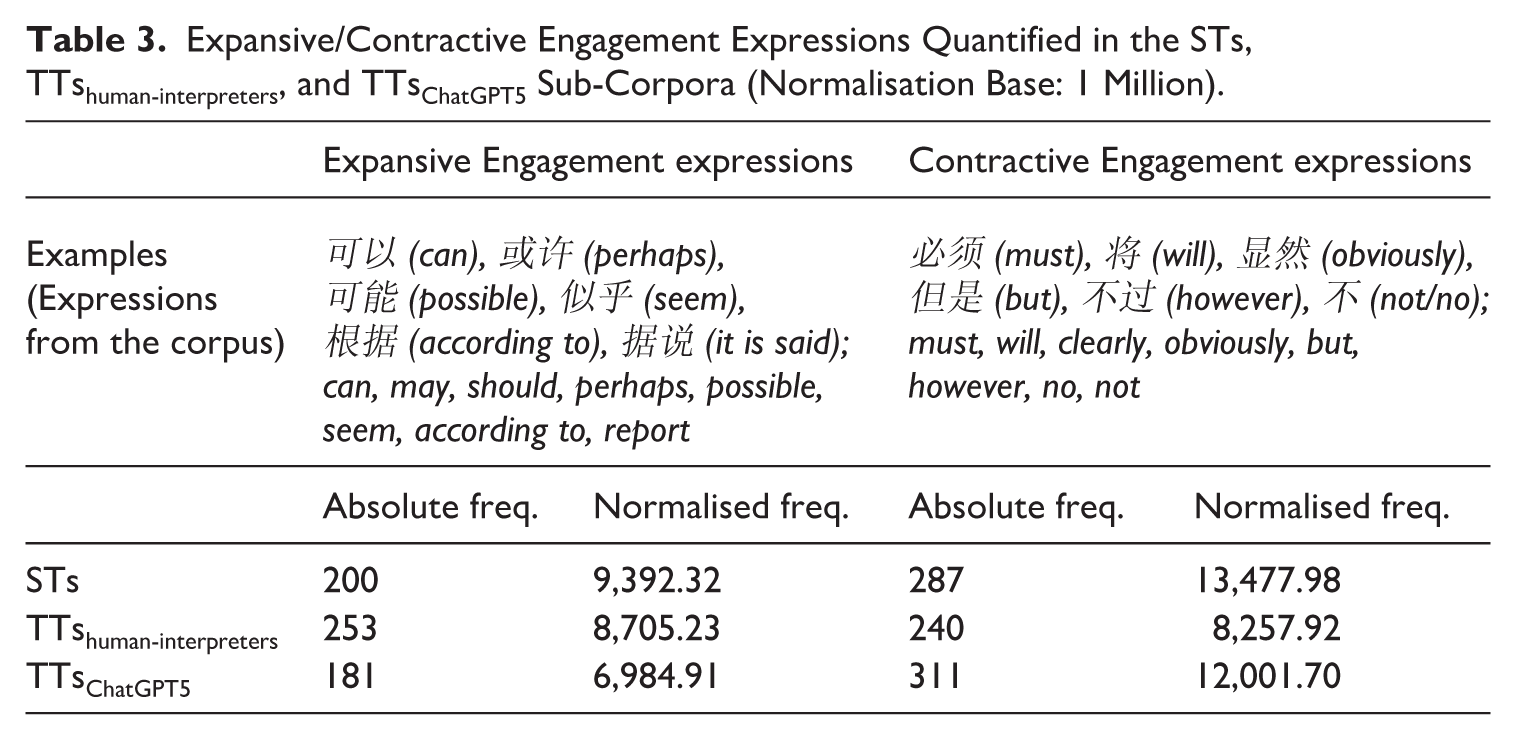

Table 3 outlines the distribution of Expansive and Contractive engagement resources across the three sub-corpora, illuminating distinct dialogic orientations in the recontextualisation of political discourse. The interpreters’ renditions register 8,705.23 expansive and 8,257.92 contractive expressions per million words, revealing a marked reduction in dialogic closure compared with the STs. This moderation suggests a discursive strategy of interpersonal accommodation, in which the interpreters open the dialogic space through modalisation (may, can, perhaps) and attenuate categorical stance markers (must, will). By contrast, GPT-5’s translations contain 6,984.91 expansive and 12,001.70 contractive items per million words, a pattern indicating a retraction of dialogic latitude. GPT-5’s overrepresentation of contractive forms (must, will, however) and underuse of epistemic modals render its discourse epistemically denser and less interactionally negotiable. Such tendencies signal GPT-5’s predilection for propositional closure rather than interpersonal alignment. In effect, human interpreters manifest rhetorical empathy through dialogic openness, whereas GPT-5 tends to augment the surface assertiveness of the STs without the interpersonal tact that human mediation instinctively restores. This trend is illustrated by the examples below.

Table 3. Expansive/Contractive Engagement Expressions Quantified in the STs, TTshuman-interpreters, and TTsChatGPT5 Sub-Corpora (Normalisation Base: 1 Million).
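The balance between expansive and contractive resources can be made concrete with a short calculation. The sketch below is illustrative only and is not the procedure used in this study: it takes the four per-million frequencies quoted in the prose for the two target-text sub-corpora and derives two descriptive indices. The names `REPORTED`, `expansive_share`, and `contractive_to_expansive` are hypothetical, and the ST figures are omitted because they are not quoted in the running text.

```python
# Per-million-word frequencies quoted in the prose for the two target-text
# sub-corpora. ST values are not quoted in the text, so they are left out.
REPORTED = {
    "TT_human": {"expansive": 8705.23, "contractive": 8257.92},
    "TT_gpt5": {"expansive": 6984.91, "contractive": 12001.70},
}


def expansive_share(freqs):
    """Proportion of all engagement items that are expansive (0 to 1)."""
    total = freqs["expansive"] + freqs["contractive"]
    return freqs["expansive"] / total


def contractive_to_expansive(freqs):
    """Number of contractive items for every expansive item."""
    return freqs["contractive"] / freqs["expansive"]


for name, freqs in REPORTED.items():
    print(
        f"{name}: expansive share = {expansive_share(freqs):.1%}, "
        f"contractive/expansive = {contractive_to_expansive(freqs):.2f}"
    )
```

On these figures, the interpreters’ renditions are close to evenly balanced (about 51% expansive), whereas GPT-5’s translations are about 37% expansive, with roughly 1.7 contractive items for every expansive one. These are descriptive ratios only and carry no claim about statistical significance.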

Example 4 exemplifies divergent engagement enactment through agent visibility and modality. The ST employs a balanced directive “亚洲稳定需要共同呵护[. . .]” (Asia’s stability requires/needs joint safeguarding [. . .]) which projects Engagement: entertain through an implicit call for cooperation (cf. Martin & White, 2005). The human interpreter reconfigures this into “[w]e need to make concerted efforts to resolve major difficulties to ensure stability in Asia,” which activates a first-person plural subject and recasts the clause into the active voice. This adjustment discursively performs a dual interpersonal function: first, it realises Attitude: Judgement (tenacity and propriety) by positioning collective agency as morally desirable; second, it broadens dialogic inclusivity through Expansion (cf. Martin & White, 2005), inviting shared responsibility. By contrast, GPT-5’s “Asia’s stability must be safeguarded [. . .]” resorts to a passive construction and the categorical modal must. This re-encodes Contraction via proclaim (Martin & White, 2005), transforming a cooperative exhortation into an obligatory directive. The shift elevates epistemic certainty but suppresses interpersonal interactiveness, revealing GPT-5’s tendency towards reduced expansiveness and its disposition for impersonal authority over participatory engagement:

Example 4 (2013)

ST: 亚洲稳定需要共同呵护、破解难题。

Gloss: Asia’s stability requires/needs joint safeguarding and solving difficult problems.

TThuman-interpreter: We need to make concerted efforts to resolve major difficulties to ensure stability in Asia.

TTChatGPT5: Asia’s stability must be safeguarded through joint efforts and effective problem-solving.

Example 5 further substantiates the divergence in dialogic orientation through subtle yet consequential shifts in modality. In the ST, “需要指出的是” (It needs to be pointed out) carries an impersonal, quasi-obligatory tone and signals a conventionalised metadiscursive alert. The human interpreter’s rendition, “Let me stress,” re-personalises the utterance by introducing an explicit first-person agent, thereby heightening speaker visibility and transforming an impersonal procedural remark into an engaged rhetorical intervention. This shift expands dialogic space by foregrounding subjectivity and inviting audience alignment, rather than presenting the proposition as institutionally pre-given. GPT-5’s “It should be noted,” by contrast, retains the impersonal construction and reinstates a contractive stance through the modal should, which encodes normative expectation while suppressing interpersonal agency. A similar pattern emerges in the subsequent clause: whereas the ST’s “仍不容低估” (should still not be underestimated) conveys guarded caution, the human interpreter’s “may still lie ahead” recasts evaluative warning into epistemic possibility, thereby enacting Engagement: entertain (Martin & White, 2005) and preserving diplomatic elasticity. GPT-5 intensifies contraction through “should by no means be underestimated,” where the emphatic negative reinforcement (“by no means”) amplifies categorical force and narrows dialogic latitude. These micro-shifts demonstrate how human mediation modulates risk discourse towards anticipatory openness and relational tact, while GPT-5 gravitates towards epistemic closure and impersonal assertiveness, reinforcing the broader pattern of algorithmic contractiveness identified in this section.

Example 5 (2019)

ST: 需要指出的是,今年不稳定不确定因素明显增多,外部输入性风险上升,困难和挑战仍不容低估,[……]

Gloss: It needs to be pointed out that this year unstable and uncertain factors have clearly increased, externally transmitted risks have risen, and the difficulties and challenges should still not be underestimated, […]

TThuman-interpreter: Let me stress that given the visible increase of uncertainties and destabilising factors as well as externally-generated risks, difficulties and challenges may still lie ahead, […]

TTChatGPT5: It should be noted that this year has seen a marked increase in destabilising and uncertain factors, rising external spillover risks, and difficulties and challenges that should by no means be underestimated, […]

These examples elucidate a systematic divergence in Engagement realisation. Human interpreters recurrently enact dialogic expansion to foreground agency, hedge assertion, and maintain epistemic flexibility. They thereby discursively preserve the relational tenor and ideological courtesy of political discourse within the diplomatic setting of the Boao Forum. GPT-5, conversely, favours contractive formulations through categorical modality and assertive adverbs, rendering the discourse interactionally monologic. When considered from the perspective of the Appraisal framework (Martin & White, 2005), this contrast epitomises a shift from humanly negotiated dialogism towards algorithmic monoglossia, an interpersonal detachment that, while coherent, lacks the affective and diplomatic calibration characteristic of human mediation. The interpreters’ consistent orientation towards expansion can further be contextualised within China’s broader communicative ethos, which privileges harmony, reciprocity, and inclusivity in international discourse. Expansive engagement that is realised through hedging, epistemic openness, and agentive reframing functions as pragmatic diplomacy, diffusing potential tension and fostering solidarity among global audiences. Such modulation exemplifies the interpreter’s adaptive agency in aligning interpersonal stance with the institutional pursuit of cooperative image-building, which de facto transforms categorical propositions into sites of shared negotiation and attitudinal accommodation.

5.3 Reconstructed deictic solidarity: different pronoun usage of “WE”

The use of first-person plural pronouns, we, us, our, constitutes a critical linguistic resource for negotiating solidarity and proximity in political discourse. Building on Munday’s (2015, 2018) extension of Martin and White’s (2005) Engagement system into translation and interpreting studies, these deictic markers operate as spatial–ideological coordinates that centre the speaker within the discourse space while simultaneously aligning the audience through a co-constructed field of reference and solidarity. In this light, the deictic pronoun WE (we, us, our) projects a centripetal orientation that draws interlocutors inward, collapsing interpersonal distance between speakers/writers and listeners/readers to construct a solidarity discourse. Scrutinising the use of WE in human interpreters’ renditions and GPT-5’s translations, therefore, has the potential to illuminate the way in which each version reconstructs interpersonal proximity in the context of multilingual political discourse.

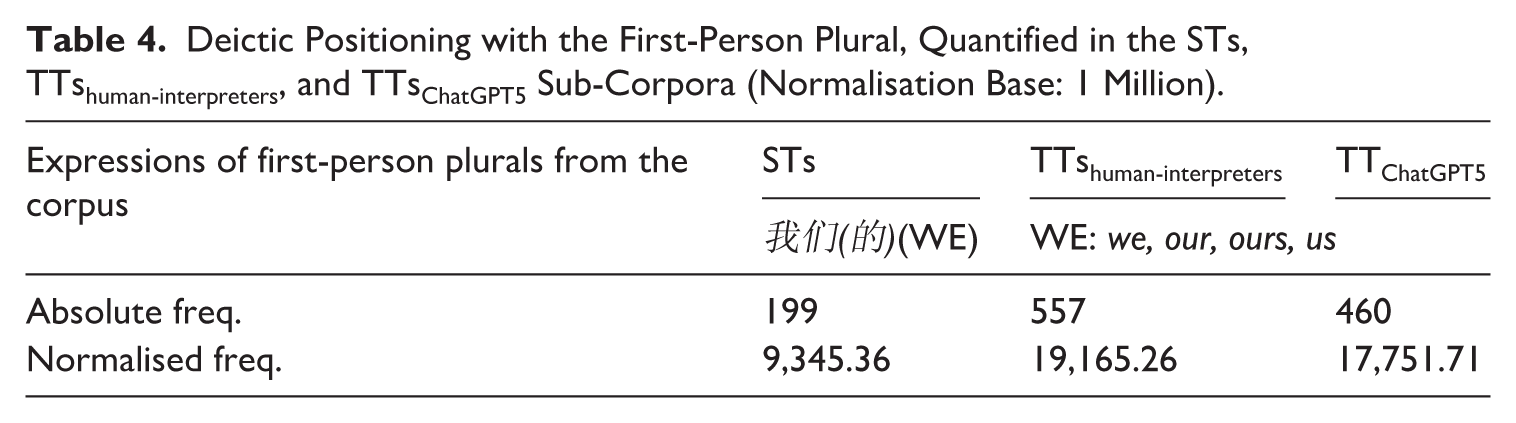

As shown in Table 4, the frequency of first-person plurals rises from the STs to both the human interpreters’ and GPT-5’s versions. The STs contain 9,345.36 instances per million words, whereas the interpreters’ renditions more than double this frequency to 19,165.26, and GPT-5’s translations reach a somewhat lower 17,751.71. The interpreters’ amplification of WE foregrounds a strategic, rhetorical reengineering: by activating collective agency, they transform institutional discourse into a participatory register, expanding Engagement through interpersonal sharedness. GPT-5, while also increasing the frequency of WE, does so, arguably, in a mechanistic rather than relational fashion. Although Chinese is a topic-prominent, pro-drop language in which subjects are optional and context-driven, the marked increase in the interpreters’ renditions points to their mental model of affiliation/alterity.

Table 4. Deictic Positioning with the First-Person Plural, Quantified in the STs, TTshuman-interpreters, and TTsChatGPT5 Sub-Corpora (Normalisation Base: 1 Million).
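The size of the increase in WE can be checked with simple arithmetic on the three normalised frequencies quoted in the text. The sketch below is offered as a reader’s aid under that assumption, not as part of the study’s own procedure; the names `WE_PER_MILLION` and `ratio` are hypothetical.

```python
# First-person plural (we, us, our) frequencies per million words,
# as quoted in the prose for the three sub-corpora.
WE_PER_MILLION = {
    "ST": 9345.36,
    "TT_human": 19165.26,
    "TT_gpt5": 17751.71,
}


def ratio(numerator, denominator):
    """Ratio of one sub-corpus's normalised WE frequency to another's."""
    return WE_PER_MILLION[numerator] / WE_PER_MILLION[denominator]


print(f"human vs ST:    {ratio('TT_human', 'ST'):.2f}x")
print(f"GPT-5 vs ST:    {ratio('TT_gpt5', 'ST'):.2f}x")
print(f"human vs GPT-5: {ratio('TT_human', 'TT_gpt5'):.2f}x")
```

On these figures, the interpreters’ renditions use WE about 2.05 times as often as the STs and GPT-5’s translations about 1.90 times as often, so the human versions exceed GPT-5’s by roughly 8%. The quantitative gap between the two target versions is thus modest relative to their shared rise over the STs, and a frequency ratio alone cannot show whether a given instance is grammatical explicitation or a rhetorical choice.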

This increase in first-person plurals in both human and GPT-5 renditions can be partially attributed to the typological contrast between Chinese and English. As a topic-prominent and pro-drop language, Chinese allows subjects to remain contextually implicit, whereas English syntactically necessitates overt pronominal realisation to maintain cohesion and agency (Gong, 2019). Such cross-linguistic asymmetry legitimises the general rise of WE in both versions. Yet, the human interpreters’ intensified use of WE goes beyond grammatical explicitation: it reflects a rhetorical manoeuvre that repositions the speaker within a shared discursive space for collective participation. Through this strategic deixis, interpreters enact affiliative alignment and interpersonal proximity to infuse the discourse with solidarity. GPT-5, though increasing pronominal frequency, does so in a more mechanistic manner, reproducing structural regularities without the contextual attunement or emotional calibration that characterise human mediation. Examples 6 and 7 below further illustrate how these differing uses of WE manifest in distinct patterns of deictic positioning and relational stance.

Example 6 illustrates how first-person plural deixis used by the human interpreter reconfigures engagement positioning. Where the ST uses the referential noun “各国” (all countries), the human interpreter’s “we must” introduces discursive solidarity, shifting from collective description to shared participation and drawing the speaker and audience towards a common stance-taking centre. GPT-5’s literal translation, “countries,” retains impersonal distance:

Example 6 (2019)

ST: 面向未来,各国应深化战略互信,积极践行共同、综合、合作、可持续的亚洲安全观。

Gloss: Facing the future, all countries should deepen strategic mutual trust, actively practice the common, comprehensive, cooperative, sustainable Asian security concept.

TThuman-interpreter: Looking ahead, we must deepen strategic mutual trust and work toward the vision of common, comprehensive, cooperative and sustainable security for Asia.

TTChatGPT5: Going forward, countries should deepen strategic mutual trust, put into practice a common, comprehensive, cooperative, and sustainable Asian security concept.

In Example 7, deixis again signals divergent interpersonal strategies. The human interpreter’s “[o]ur efforts [. . .] and our endeavour” inserts “our” twice to construct collective agency and shared moral responsibility; it serves to align the speaker and audience in a common national project. GPT-5’s literal translation “Economic development and improvements [. . .]” reproduces descriptive neutrality. In contrast to the human interpreter’s version, the absence of deixis in the GPT-5 translation yields a cooler, reportive tone:

Example 7 (2012)

ST: 发展经济与改善民生相辅相成,可以形成良性互动。

Gloss: Developing the economy and improving people’s livelihoods complement each other and can form a positive interaction.

TThuman-interpreter: Our efforts to promote growth and our endeavour to improve people’s lives reinforce each other.

TTChatGPT5: Economic development and improvements in people’s well-being reinforce each other and can create a virtuous cycle.

In this category of interpersonal function, human interpreters deploy we and our as deictic acts to reposition the speaker and audience in a shared moral and political horizon. This discursive centripetality transforms official address into collective participation for the audience present at the conference. GPT-5, though it also reproduces plural deixis, does so mechanically, typically to supply a grammatical subject for subjectless Chinese sentences, achieving grammatical inclusion without interpersonal resonance. This divergence marks the boundary between humanly dialogic engagement and algorithmic detachment.

6. Concluding remarks

This exploratory study has interrogated whether and to what extent a non-human language model such as GPT-5 can reproduce the interpersonal texture which has long been the preserve of human interpreters in the interpreting of political speeches. Informed by Appraisal Theory (Martin & White, 2005) and deictic positioning as an extension of its Engagement system applied in translation/interpreting research (Munday, 2015), this study compared the Attitudinal and Engagement manifestations in human interpreters’ renditions and GPT-5’s translations of Chinese speakers’ speeches delivered at the Boao Forum for Asia (2012–2024). The findings reveal a conspicuous asymmetry between human renditions and machine translations. While GPT-5 displays apparent linguistic precision, structural coherence, and lexical consistency, its outputs remain predominantly interpersonally “neutral” or “detached.” Human interpreters, by contrast, demonstrate a refined sensitivity to relational cues, for instance by reconfiguring the ST discourse through evaluative amplification, dialogic expansion, and deictic pronouns for solidarity discourse. Situated in the institutional, political, diplomatic, and cross-cultural context of the Boao Forum for Asia, they transform institutional rhetoric into affectively resonant communication, modulating voice and tone to forge alignment with the audience. GPT-5’s translations, though seemingly grammatically impeccable, tend to flatten evaluative nuance and interpersonal intent, thus yielding discourse that is textually accomplished but relationally diminished.

In direct response to the research questions at the beginning of the article, the analysis indicates that GPT-5 only partially simulates the interpersonal patterns of human mediation. Its renditions achieve propositional fidelity but fall short of reproducing the affective tact and empathic adaptability inherent in human presence. Human interpreters evince a consistent proclivity towards evaluative modulation, including amplifying positivity, softening negativity, and enriching metaphorical vividness in alignment with institutional diplomacy, whereas GPT-5 maintains lexical “equilibrium,” accurate yet affectively restrained. In Engagement realisation, interpreters consistently expand dialogic space through various linguistic means, sustaining relational courtesy and epistemic openness; GPT-5, by contrast, favours contractive modality and categorical stance, rendering the discourse monologic and detached. Deictically, interpreters deploy we and our to construct a shared moral and communicative horizon, discursively rendering official address into collective participation; GPT-5 reproduces such forms more mechanically, without nuanced consideration of interpersonal resonance. These differences delineate the current threshold of machine-mediated communication: while LLMs can emulate, to some extent, textual sophistication, they cannot yet inhabit the relational consciousness through which human interpreters enact nuanced intersubjective meaning.

Such findings reaffirm that the interpersonal function constitutes the defining essence of human-centred interpreting practices and the site of what Pym (2016) terms “added value.” In an era where neural architectures (earlier machine translation models) and LLMs rival human translators/interpreters in syntactic fluency, the distinctive merit of human interpreters lies in their capacity for creative mediation (Pym, 2016); that is, the reconstruction of the ST discourse in ways that sustain, inter alia, affective engagement and pragmatic resonance across communicative boundaries. As Pym (2016, p. 231) argues, “the added value of our work must increasingly be found at higher levels of cross-cultural communication,” where translation/interpreting involves purposeful shaping rather than mechanical replication. Human interpreters engage in such “text tailoring” by recalibrating the semiotic fabric of the STs to produce target renditions that not only convey meaning but also perform interpersonal relationships. Their agency resides in this delicate orchestration of empathy, authority, and alignment, qualities that cannot be algorithmically parameterised, for they derive from a lived understanding of human interlocution. The present study therefore suggests that the interpersonal domain, where linguistic choices are saturated with affective, ethical, and affiliative significance, remains the threshold that delineates human relational intelligence from computational approximation.

The present inquiry is bounded by several limitations. The corpus, while diachronically representative, draws exclusively from a single institutional context, the Boao Forum for Asia, and therefore does not exhaust the interpersonal variability observable across different communicative genres, languages, or geopolitical settings. Moreover, GPT-5 represents one iteration within a rapidly evolving ecosystem of LLMs, whose performance is contingent upon architecture, training data, and prompting parameters. Future research could expand the empirical base by incorporating diverse institutional corpora, multilingual configurations, and comparative model analysis to capture how human renditions and machine translations differently negotiate interpersonal meaning. A multi-modal or cognitive dimension might also enrich our understanding of how evaluative stance and affective alignment are processed and perceived.

The findings in this exploratory study invite a broader reflection on the evolving ontology of presence in interpreting. If human interpreters bring empathic intentionality, a consciousness of otherness and shared affect to communicative events, LLMs offer merely a computational semblance of that intentionality. While AI machine translation might be useful to support language diversity and enhance cross-lingual information access, its usage inevitably involves consequential risk of errors and serious ethical issues, particularly when it is used in high-stakes situations such as government public services that matter to the rights, benefits and well-being of the public (Wang, 2025). Crucially, between human translation/interpreting and AI machine translation, as two modes of communication, lies the defining question of the discipline: not whether machines can speak fluently, but whether they can communicate interpersonally. As translation/interpreting increasingly traverses the liminal space between the human and the artificial, the task is not to measure machines by human warmth, but to recognise what human mediation continues uniquely to accomplish—the ethical, affective, and interpersonal “re-writing/re-speaking” of language use in the service of human connections. The interpersonal dimension, therefore, is not a human residue in an automated age; it is the irreducible, irreplaceable core of translation/interpreting itself.

Footnotes

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The first author holds a co-editorial position with this journal; the submission and review of this manuscript were conducted in strict accordance with the journal’s editorial policies to ensure transparency and the absence of any conflict of interest. The peer review process was handled by an independent editor, and the first author was kept blinded to the review and decision-making process.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Social Science Foundation with Grant No. 25BYY046, Chongqing Municipal Education Commissions with Grant No. CYS25432/CYS25433 and under the scheme of “Education-AI-Industry” for language students.