Abstract

The convergence of agent-based modeling (ABM) and large language model (LLM)-driven autonomous agents presents a transformative approach to social simulation, particularly for addressing the complexities of the United Nations sustainable development goals (SDGs). ABM enables the exploration of emergent behaviors in complex systems but is limited by static, rule-based agents that fail to capture the nuances of human decision-making. LLM-based agents, equipped with adaptive reasoning, contextual awareness, and generative capabilities, offer a solution by enhancing realism, diversity, and inclusivity in simulations. This paper explores how integrating these technologies can advance policy experimentation, enabling simulations that reflect diverse cultural contexts and emergent social norms. We discuss the technical and ethical challenges of LLM-based systems, including reasoning limitations, hallucinations, and alignment constraints, and propose strategies for governance that balance innovation with accountability. This paper, based on insights from the UNU Macau AI Conference 2024 session titled “AI Agents in Practice: Harnessing AI for All,” advocates for interdisciplinary research and human-in-the-loop frameworks to ensure responsible AI use in sustainable policymaking.

Introduction

The world's most pressing social, environmental, and economic challenges—embodied in the United Nations (UN) sustainable development goals (SDGs)—demand innovative methodologies that can capture and simulate complex human behaviors. Agent-based modeling (ABM) has been instrumental in exploring how individual actions can collectively drive societal outcomes. However, conventional ABM agents often rely on fixed, simplified rules that cannot capture the nuanced, ever-evolving nature of human decision-making, making it difficult to fully represent phenomena such as cultural differences, emergent norms, and complex policy responses.

The UN SDGs represent a global consensus on the most critical social, environmental, and economic challenges confronting humanity. These goals are inherently complex, characterized by deep interconnections, feedback loops, and non-linear dynamics. Tackling issues like climate change, poverty, and inequality requires policy interventions whose outcomes are often difficult to predict using traditional analytical methods. These methods frequently struggle to capture the emergent, system-level consequences that arise from the diverse decisions and interactions of myriad individual actors within a society. It is this very complexity, where the whole often behaves differently than the sum of its parts, that demands innovative methodologies capable of simulating human behavior and societal dynamics in a more granular and realistic fashion.

ABM is a computational approach that offers a “bottom-up” perspective, constructing simulations not from overarching equations but from the actions and interactions of individual “agents.” These agents serve as computational representations of real-world actors such as individuals, households, businesses, or even government bodies. Each agent can be endowed with specific characteristics (such as age, income, beliefs, or preferences) and decision-making rules that govern its behavior. Agents are placed within a simulated environment and interact with that environment and with each other by exchanging information, competing for resources, and forming networks. ABM thereby lets researchers observe how large-scale patterns and societal outcomes, such as market trends, disease spread, or social norm shifts, emerge directly from micro-level activities. This makes ABM an invaluable tool for exploring how complex social systems function and why certain policies might succeed or fail.
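To make the bottom-up idea concrete, the following is a minimal sketch in plain Python rather than the model used in this paper. The `Household` agent, its adoption rule, the random four-neighbour network, and all numeric thresholds are illustrative assumptions chosen only to show how an aggregate adoption curve can emerge from individual rules and local interactions.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class Household:
    """One agent: an attribute (income in [0, 1]) plus a fixed decision rule."""
    income: float
    adopted: bool = False

    def step(self, neighbours: list[Household], subsidy: float) -> None:
        # Hypothetical rule: adopt once the share of adopting neighbours plus
        # the subsidy exceeds an income-dependent threshold. Adoption is final.
        if self.adopted or not neighbours:
            return
        peer_share = sum(n.adopted for n in neighbours) / len(neighbours)
        threshold = 0.6 - 0.3 * self.income  # assumed: richer households adopt sooner
        if peer_share + subsidy > threshold:
            self.adopted = True


def run(n_agents: int = 100, n_steps: int = 20,
        subsidy: float = 0.1, seed: int = 0) -> list[float]:
    """Return the adoption share after each step (the emergent, macro-level output)."""
    rng = random.Random(seed)
    agents = [Household(income=rng.random()) for _ in range(n_agents)]
    for early in rng.sample(agents, 5):  # seed a few early adopters
        early.adopted = True
    # Fixed random social network: every agent observes four others.
    network = {
        i: rng.sample([j for j in range(n_agents) if j != i], 4)
        for i in range(n_agents)
    }
    history = []
    for _ in range(n_steps):
        order = list(range(n_agents))
        rng.shuffle(order)  # random activation order within each step
        for i in order:
            agents[i].step([agents[j] for j in network[i]], subsidy)
        history.append(sum(a.adopted for a in agents) / n_agents)
    return history
```

Calling `run(subsidy=0.0)` and `run(subsidy=0.2)` with the same seed gives a simple policy experiment: the only difference between the two runs is the subsidy parameter, so any gap between the adoption curves arises from how that parameter propagates through individual decisions.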

Despite its strengths, conventional ABM faces a significant limitation: the agents themselves are often programmed with relatively simple, static, or pre-defined rules. While useful for modeling certain behaviors, these rule-based approaches often fail to capture the full richness and adaptability of human decision-making. Real people learn, adapt, possess cultural biases, experience emotions, and respond to novel situations in ways that hard-coded rules cannot easily replicate. Concurrently, large language models (LLMs) have reshaped AI by excelling in text comprehension and generation, and recent work has extended these capabilities into “agentic” systems that integrate multi-step reasoning, external tool use, and memory-based contexts (Xi et al., 2025). By incorporating a spectrum of perspectives and adapting to new information, such LLM-driven agents present a transformative opportunity to enhance ABM. This means moving beyond simple rules to agents that can (a) reason with context, understanding and reacting to nuanced information such as policy descriptions or news updates in a manner more aligned with human comprehension; (b) exhibit diversity, since LLMs can be prompted or fine-tuned to represent a vast spectrum of human perspectives, cultural backgrounds, and decision-making styles, leading to more inclusive and representative simulations; and (c) adapt and learn, since agentic LLMs can potentially modify their behavior based on simulated experiences and interactions, capturing emergent social learning and norm evolution, although this remains an area of active research.

This paper explores the integration of LLM-based autonomous agents within ABM frameworks as a novel approach to social simulation, especially tailored to address the complexities inherent in SDG-related policy strategies. We argue that this synergy allows for richer, more dynamic simulations that can better inform policy design and evaluation. We will examine the potential benefits, from fostering collective intelligence within simulations to ensuring the inclusion of diverse, even marginalized, perspectives. However, we also acknowledge and address the significant challenges—from the technical limitations of current LLMs, such as their potential for generating inaccurate information (“hallucinations”) and the difficulties in ensuring their alignment with human values, to the pressing ethical and governance considerations. Ultimately, this research aims to delineate a path toward leveraging these advanced AI capabilities responsibly, creating more effective and equitable policy solutions for a sustainable future.

Enhancing ABM with LLM-based Generative Agents

ABM provides a potent framework for understanding complex systems by simulating the interactions of individual actors. In ABM, an “agent” serves as a computational entity, typically characterized by specific attributes (such as age, location, income, or beliefs) and a set of decision rules that dictate its behavior, like movement or interaction strategies (Dorri et al., 2018). But real-world situations, such as navigating a crisis or adopting a new policy, often depend heavily on how individuals interpret evolving information, weigh conflicting goals, and respond based on their unique experiences and cultural backgrounds—dynamics that conventional models struggle to capture fully (Liang et al., 2022).

Given the complexity of SDG targets, AI can contribute substantially to advancing sustainable development (Vinuesa et al., 2020), although earlier AI successes typically demonstrated superhuman capabilities only within highly constrained domains. Modern LLMs, by contrast, exhibit a more generalized capacity for understanding and reasoning, built upon training with vast amounts of textual data. Through innovations like sophisticated prompting techniques that guide their reasoning processes, these models can generate human-like narratives, analyze complex scenarios, and engage in step-by-step problem-solving (Wei et al., 2022), providing a foundation for more flexible, general-purpose intelligence. A particularly relevant development in this space is the concept of “Generative Agents,” which aims to simulate human-like behaviors within virtual social environments by using generated text as each agent's “next-step” instructions (Park et al., 2023). These agents are designed to maintain internal states, including memories, goals, and even simulated emotional responses, allowing them to engage in complex social interactions. They can form relationships, develop trust or conflict, and participate in collective dynamics, leading to the emergence of phenomena like community norms or cultural diffusion. This focus on simulating rich inner lives and social interactions makes generative agents particularly well-suited for integration into ABM frameworks.

This approach opens new avenues for policymakers seeking to navigate the complex interdependencies inherent in the SDGs. By integrating LLM-driven agents, models can be populated with actors representing a wide range of demographic, cultural, and socioeconomic perspectives. Such representation is essential for understanding how policies affect diverse communities, a cornerstone of the SDG agenda. These agents can be given access to up-to-date knowledge through retrieval mechanisms and decision-making processes that go beyond simple rule-based heuristics, allowing for a more realistic simulation of human responses to policy shifts. Consequently, when modeling the potential outcomes of interventions targeting specific SDGs, such as poverty reduction (SDG 1), climate action (SDG 13), or promoting inclusive institutions (SDG 16), it becomes possible to consider their intricate economic, social, and environmental ripple effects with greater fidelity. These enhanced models can therefore become significantly more descriptive and capable of reflecting diverse, context-sensitive human responses (Sanders et al., 2023). This capability allows policymakers to experiment with multifaceted scenarios, exploring how interventions might unfold within varied societal contexts and capturing emergent outcomes that reflect the genuine complexities of human behavior, thereby fostering more robust and equitable strategies for achieving sustainable development.

System Architecture

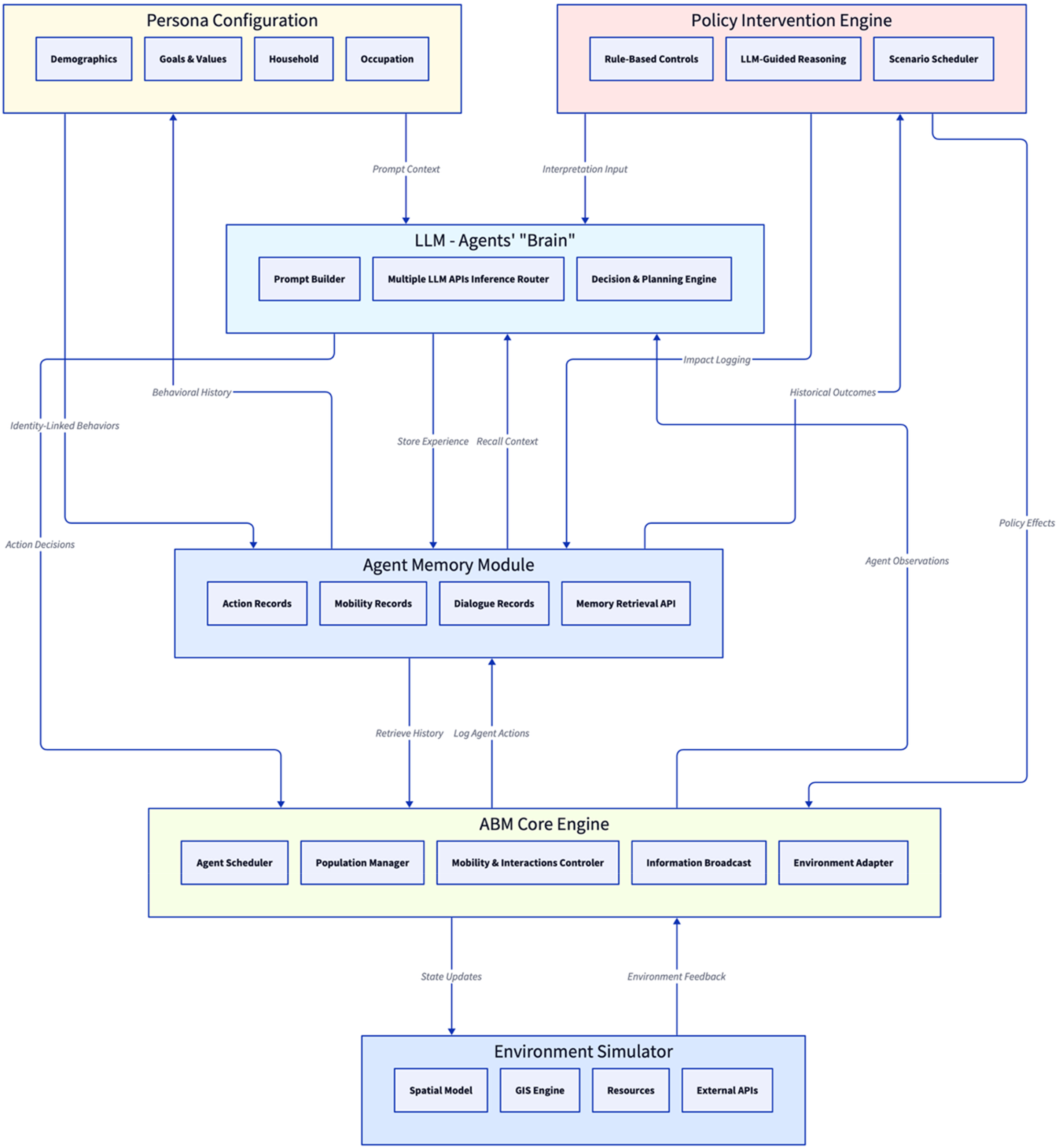

The integration of LLMs into ABM requires a carefully designed system architecture that allows seamless communication and control flow between the ABM simulation environment and the LLM services. This work proposes a modular architecture (Figure 1). The ABM environment manages the overall simulation clock, agent populations, spatial environment, and interaction protocols. LLM capabilities are encapsulated as a distinct module or service that agents can invoke.

Figure 1. Framework Design of LLM-enhanced Agent-based Modeling System.
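The modular split described above, with the ABM environment owning the clock and agent population and the LLM exposed as a separate service that agents invoke, can be sketched as follows. This is an illustration under stated assumptions, not the framework's actual code: `LLMService`, `StubLLM`, `LLMAgent`, and `Simulation` are hypothetical names, the prompt format is invented, and the stub replaces a real model so the example runs offline.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Protocol


class LLMService(Protocol):
    """Hypothetical interface: anything that maps a prompt to a text reply."""

    def complete(self, prompt: str) -> str: ...


class StubLLM:
    """Offline stand-in for a real model (assumption: one-word action replies)."""

    def complete(self, prompt: str) -> str:
        # Only inspect the text after the final 'Observation:' marker, so that
        # remembered past observations do not trigger the canned reply.
        observation = prompt.split("Observation:")[-1].lower()
        return "comply" if "subsidy" in observation else "wait"


@dataclass
class LLMAgent:
    name: str
    persona: str
    llm: LLMService  # injected service, so models can be swapped freely
    memory: list[str] = field(default_factory=list)

    def step(self, tick: int, observation: str) -> str:
        prompt = (
            f"You are {self.persona}. Tick {tick}. "
            f"Recent memory: {self.memory[-3:]}. "
            f"Observation: {observation}. Reply with one action word."
        )
        action = self.llm.complete(prompt).strip().lower()
        self.memory.append(f"t={tick}: saw '{observation}', chose '{action}'")
        return action


@dataclass
class Simulation:
    """ABM side: owns the clock, the agent population, and the event log."""
    agents: list[LLMAgent]
    tick: int = 0
    log: list[tuple[int, str, str]] = field(default_factory=list)

    def step(self, observation: str) -> None:
        for agent in self.agents:
            action = agent.step(self.tick, observation)
            self.log.append((self.tick, agent.name, action))
        self.tick += 1
```

Because agents depend only on the `complete` method, the same simulation loop can run against a stub for testing, a cached model for reproducibility, or a live model for exploratory runs, without changes to the scheduling logic.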

Rather than reimplementing well-tested ABM primitives, as from-scratch engines such as AgentSociety (Piao et al., 2025) do, our proposed framework builds atop an existing Python-based ABM library (largely tested, with good performance metrics in common models), leveraging its flexible scheduler, grid and network representations, and rich community ecosystem. This choice reduces development overhead, ensures robust performance on standard use cases, and allows a focus on agents’ cognitive capabilities rather than on duplicating core scheduling logic. The flexibility for defining and customizing the

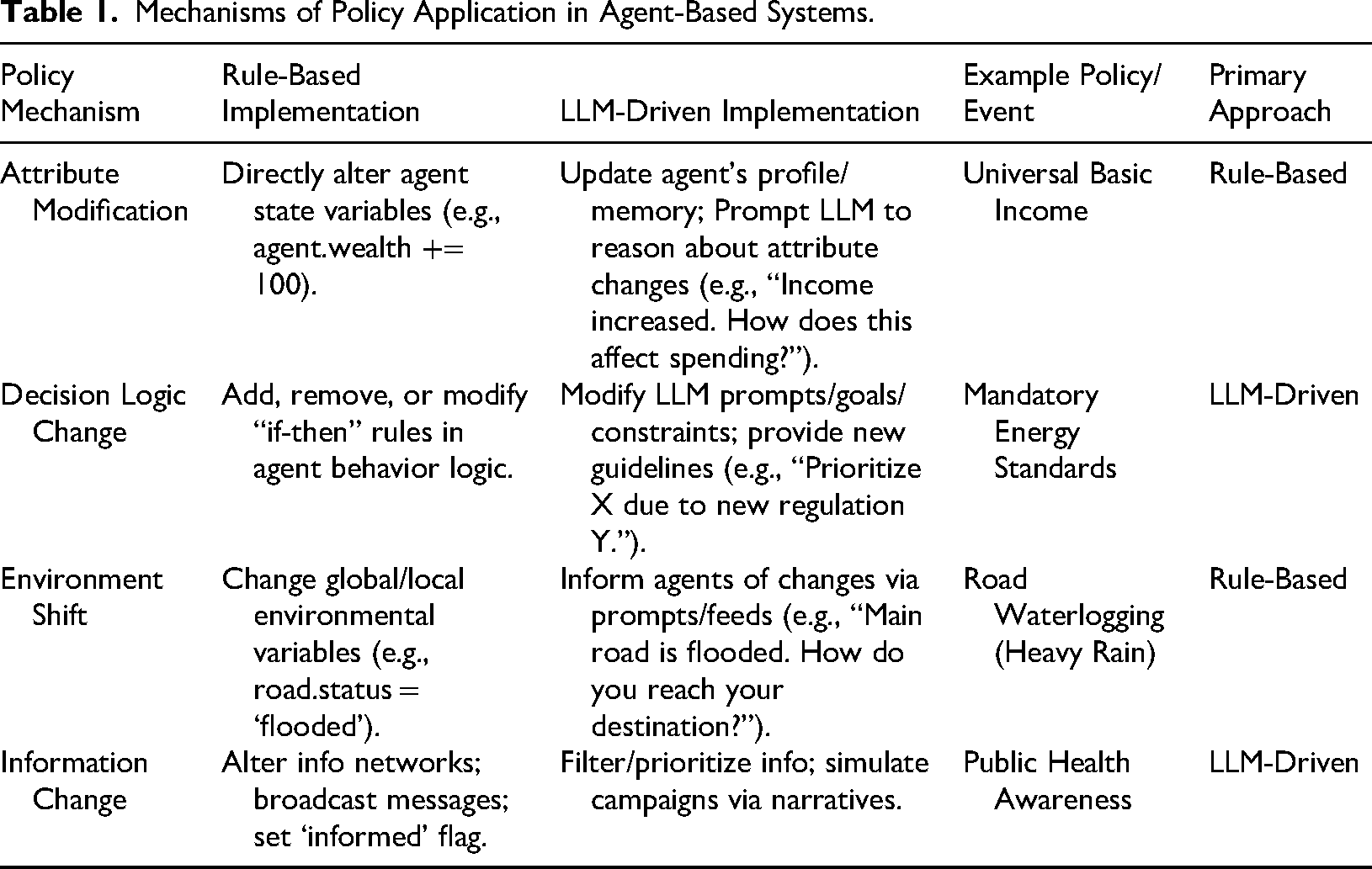

Classic methods in ABM software, such as directly modifying agent attributes or altering fixed decision-making rules, offer advantages in computational efficiency and ease of interpretation. For policies where interpretation, negotiation, belief-updating, or culturally inflected responses are central, an LLM agent can instead be informed of a policy change and asked to reason about its implications based on its persona, memory, and goals. This allows for modeling more nuanced responses, capturing the heterogeneity and complexity inherent in human reactions to policy. Table 1 provides a comparative overview of how these core policy mechanisms can be implemented using both rule-based and LLM-driven approaches, and specifies the primary approach within our framework, along with relevant examples. We propose using rule-based methods for the direct implementation of changes to tangible attributes and environmental parameters, leveraging their efficiency. For changes concerning decision logic, information processing, and interpretation, we designate the LLM-driven approach as primary.

Table 1. Mechanisms of Policy Application in Agent-Based Systems.

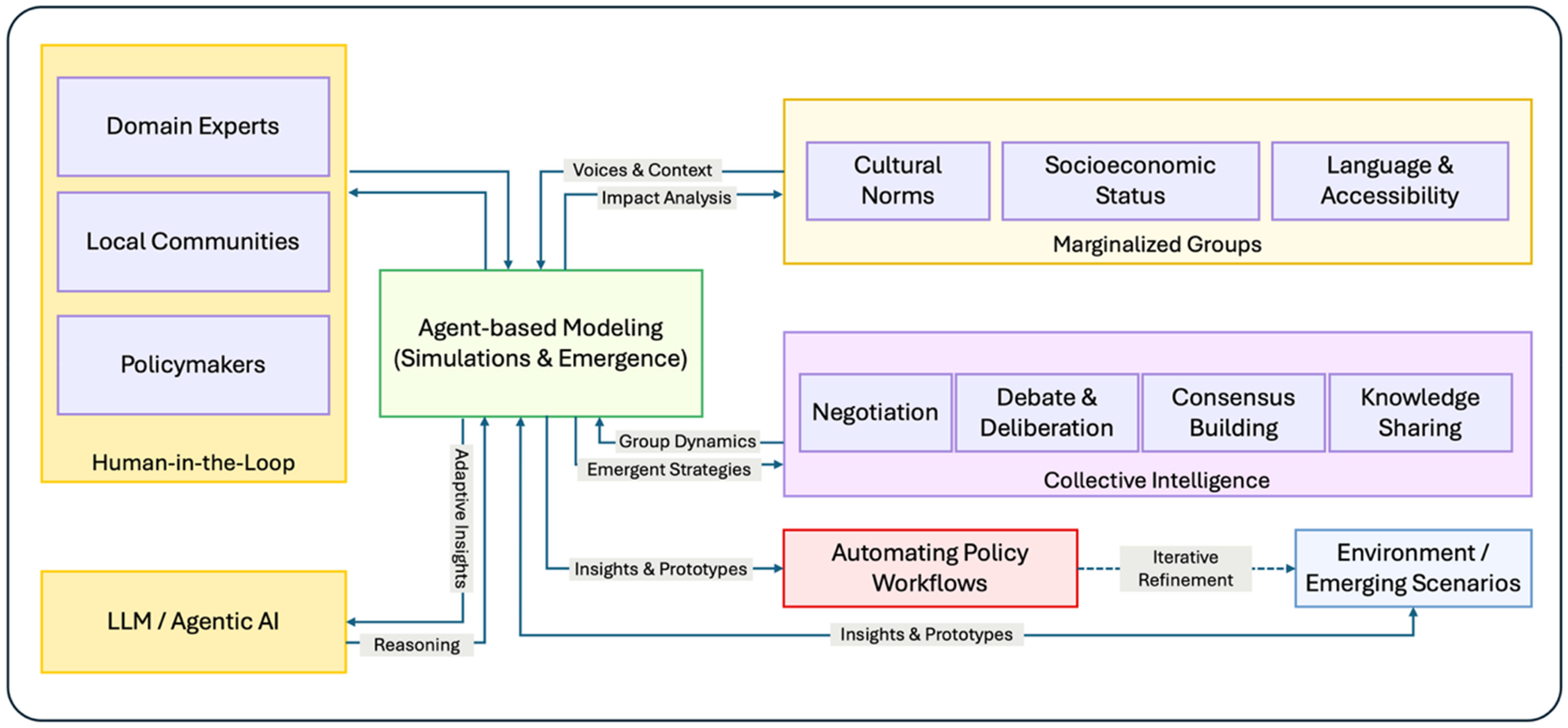
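The division of labor described in this section, with rule-based updates for tangible attributes and LLM-driven reasoning for interpretation, can be illustrated with a small dispatcher. The sketch below is an assumption-laden example rather than the framework's implementation: the `Agent` and `Policy` classes, the two `kind` labels, and `stub_interpret` (a placeholder for a real LLM call) are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    persona: str
    income: float
    beliefs: list[str] = field(default_factory=list)


@dataclass
class Policy:
    description: str
    kind: str  # "attribute" -> rule-based path, "interpretive" -> LLM-driven path
    transfer: float = 0.0  # only used by attribute policies


def apply_policy(agent: Agent, policy: Policy,
                 interpret: Callable[[str], str]) -> None:
    """Route a policy to the rule-based or the LLM-driven mechanism."""
    if policy.kind == "attribute":
        # Tangible change: cheap, deterministic, and easy to audit.
        agent.income += policy.transfer
    elif policy.kind == "interpretive":
        # Meaning-dependent change: ask the model how this persona reads the policy.
        prompt = (
            f"Persona: {agent.persona}\n"
            f"Beliefs so far: {agent.beliefs}\n"
            f"Policy: {policy.description}\n"
            "In one word, how do you respond?"
        )
        agent.beliefs.append(interpret(prompt))
    else:
        raise ValueError(f"unknown policy kind: {policy.kind!r}")


def stub_interpret(prompt: str) -> str:
    """Placeholder for an LLM call; returns a canned reading of the prompt."""
    return "sceptical" if "tax" in prompt.lower() else "supportive"
```

Keeping the routing explicit means that the expensive and less predictable LLM path is taken only when a policy actually depends on interpretation, while attribute changes stay fast and reproducible.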

Leveraging LLM-Enhanced ABM: Scenarios in Policy Co-Creation, Inclusion, and Automation

The integrated LLM-driven ABM has the potential to advance social simulation, particularly in navigating the intricate landscape of SDG policymaking, by offering novel methodologies for understanding, shaping, and even automating aspects of policy development and implementation. Our proposed framework provides a conceptual blueprint for these advanced applications (Figure 2), centering on an LLM-enhanced ABM engine designed to harness collective intelligence, ensure the inclusion of diverse perspectives, especially those from marginalized communities, and streamline policy workflows. These agentic applications are critically supported by human-in-the-loop (HITL) paradigms.

Figure 2. LLM-enhanced Agent-based Modeling Framework for Collective Intelligence, Inclusion, and Policy Automation.

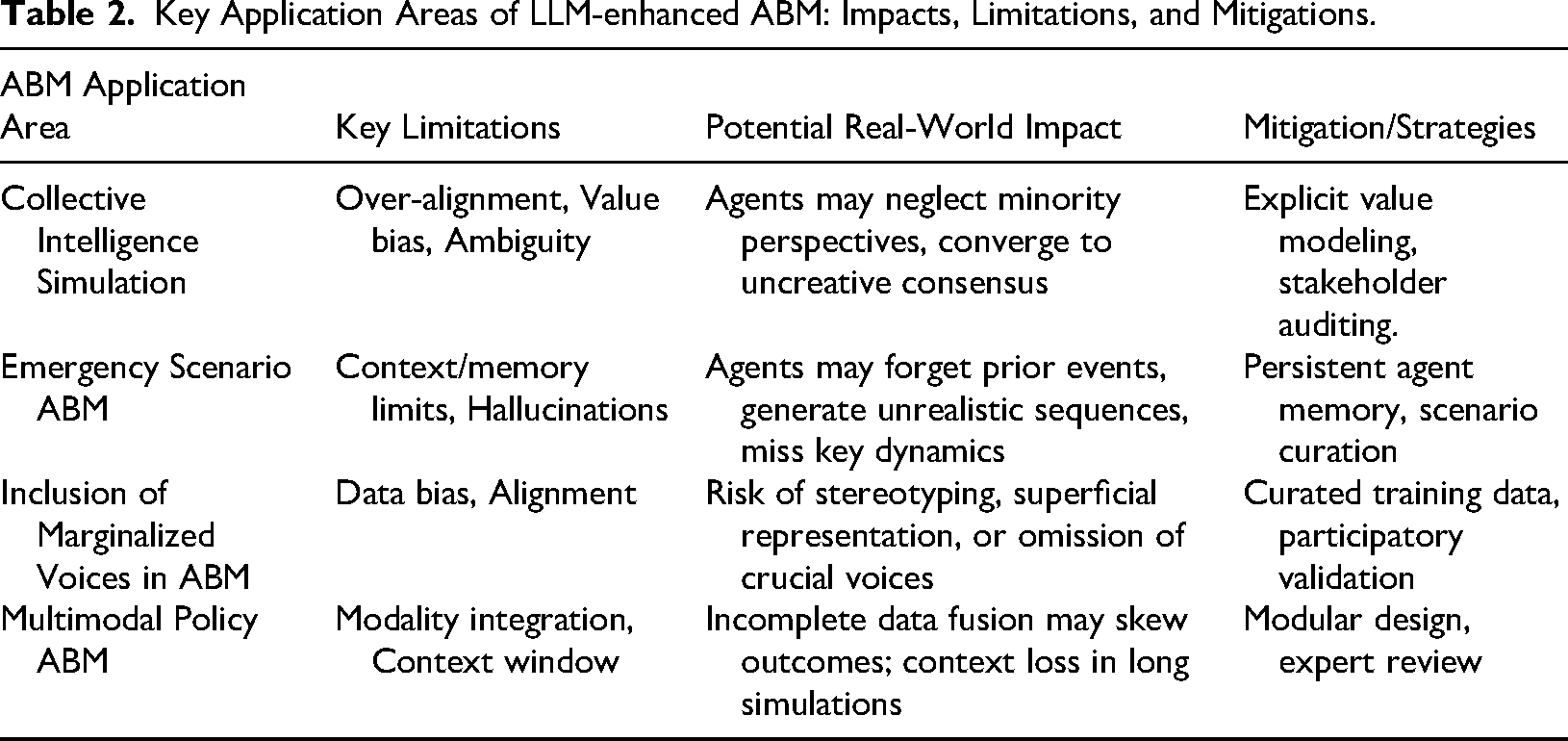

The LLM provides “generative reasoning” through text-based planning, while the ABM engine simulates emergent behaviors and returns “adaptive insights.” This symbiotic relationship is fueled by data representing the environment or emerging scenarios, such as climate models, economic forecasts, or public health data, allowing the simulation to respond dynamically to changing conditions. Table 2 summarizes several important application areas of our proposed ABM framework, together with their impacts, limitations, and potential mitigations.

Table 2. Key Application Areas of LLM-enhanced ABM: Impacts, Limitations, and Mitigations.

Agents mimicking different policy stances can negotiate, debate, and generate shared positions, mirroring real legislative or community dialogues. This process ensures that the simulated outcomes reflect a plurality of perspectives, enhancing the realism and applicability of policy simulations. Instead of top-down modeling, simulations can incorporate bottom-up intelligence, reflecting the input of thousands or millions of virtual stakeholders. This approach allows for more nuanced and representative policy prototyping, capturing the complexity of real-world stakeholder interactions.

However, if models are over-aligned to prevailing norms, the simulation may underrepresent dissenting or minority views, resulting in policies that are less robust or inclusive in the real world. Over-aligning a model to a specific set of norms could unintentionally bias its behavior or exclude alternative value systems (Senthilkumar et al., 2024).
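The negotiation dynamic and the risk of losing minority views can both be illustrated with a deliberately simple numeric stand-in. The sketch below does not use LLM dialogue; it substitutes a bounded-confidence opinion model (in the style of Deffuant and colleagues), in which two agents move toward each other only if their stances are already close. The function names, the stance scale from 0 to 1, and all parameter values are assumptions made for illustration.

```python
import random


def deliberate(stances, rounds=200, openness=0.3, step=0.5, seed=0):
    """Pairwise negotiation: two random agents compromise if their stances
    differ by at most `openness`; otherwise both keep their positions."""
    rng = random.Random(seed)
    s = list(stances)
    for _ in range(rounds):
        i, j = rng.sample(range(len(s)), 2)
        if abs(s[i] - s[j]) <= openness:
            shift = step * (s[j] - s[i])
            s[i] += shift  # i moves toward j
            s[j] -= shift  # j moves toward i by the same amount
    return s


def summarize(stances, tolerance=0.2):
    """Report a shared position together with the views that remain outside it."""
    ordered = sorted(stances)
    shared = ordered[len(ordered) // 2]  # median stance (upper median if even count)
    dissent = [x for x in stances if abs(x - shared) > tolerance]
    return {
        "shared_position": shared,
        "dissent_share": len(dissent) / len(stances),
        "dissenting_positions": dissent,
    }
```

For example, `summarize(deliberate([0.1, 0.15, 0.2, 0.25, 0.9]))` reports a shared position near the majority cluster while still listing the agent at 0.9 as dissenting. Reporting the dissenting positions alongside the shared one is one simple way to keep minority views visible in simulation outputs rather than averaging them away.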

Conclusion and Discussion

The convergence of ABM with LLM-driven autonomous agents marks a pivotal step in social simulation, offering significant potential for navigating the intricate policy landscapes associated with the UN SDGs. This paper has explored how leveraging the adaptive, context-aware, and generative capabilities of LLM-based agents can enrich ABM, enabling simulations that achieve greater realism in human decision-making, foster the inclusion of diverse cultural and socioeconomic perspectives, and allow for more dynamic and nuanced policy prototyping. By simulating emergent behaviors with agents that can reason, remember, and interact in human-like ways, we can move beyond the limitations of static, rule-based models and begin to explore complex societal responses to policy interventions with unprecedented fidelity.

However, the integration of these powerful AI technologies is not without its challenges, demanding careful consideration of both technical and ethical dimensions. LLMs, despite their advancements, are still susceptible to reasoning limitations, the generation of “hallucinations,” and complexities surrounding their alignment with diverse ethical frameworks and human values. These technical hurdles necessitate ongoing research into robust validation techniques, methods for ensuring the verifiability of agent behaviors, and architectures that can manage context and memory effectively within large-scale simulations.

Beyond the technical, the deployment of LLM-enhanced agentic AI for policy raises profound governance questions. The existing landscape of AI regulation, while evolving through initiatives like the EU AI Act (Kop, 2021) and U.S. guidelines, often struggles to keep pace with the rapid advancements in generative AI and its agentic applications. These frameworks, while important for establishing foundational safety and accountability standards and reflecting a desire that AI should not override human decision-making (Hagendorff, 2020), can be outpaced by technological shifts, potentially stifling innovation or failing to address specific risks associated with autonomous policy simulation. This gap highlights the indispensable role of more agile governance mechanisms, often emerging from “soft law” instruments and multi-stakeholder collaborations (Abbott & Snidal, 2000; Tallberg et al., 2023).

Effectively governing this space requires a concerted effort involving diverse actors. Technology enterprises developing the core LLM and ABM platforms bear a responsibility for building in ethical safeguards and transparency, as seen in emerging industry initiatives (Fjeld et al., 2020). Research institutions must pioneer methods for responsible development and validation. Crucially, civil society organizations, particularly those representing marginalized communities, must be integral partners, ensuring that simulations reflect lived realities and promote equitable outcomes, rather than perpetuating existing biases. Their involvement is vital for grounding agent personas and validating simulation results. We must also remain cognizant of broader societal concerns, including potential impacts on labor markets, persistent copyright and liability issues, the risk of exacerbating the digital divide, and political threats arising from misuse.

Addressing these multifaceted challenges necessitates a shift toward multi-stakeholder governance models. Such frameworks, fostering continuous dialogue between governments, industry, academia, and civil society, are best positioned to develop norms that are both ethically sound and practically implementable. A core component of this approach must be the robust implementation of HITL frameworks. As discussed, HITL is not merely a technical feature but a fundamental governance strategy, ensuring that domain experts, policymakers, and community representatives remain central to the design, validation, and interpretation of policy simulations. This ongoing human oversight is critical for mitigating AI risks, building trust, and ensuring that the outputs of these complex models serve human-defined goals.

Looking ahead, future research must prioritize interdisciplinary collaborations. Computer scientists, social scientists, legal scholars, ethicists, and policymakers must work together to refine LLM-enhanced ABM methodologies, develop rigorous validation standards, and co-create governance protocols. Deepening HITL frameworks to make them more interactive and accessible, particularly for non-technical stakeholders and marginalized communities, is paramount. By embracing these collaborative and human-centric approaches, and by aligning AI development with shared human values—anchored in international norms yet sensitive to local contexts—we can ensure that LLM-enhanced ABM evolves as a transformative and trustworthy tool, capable of supporting the complex, inclusive, and sustainable policymaking required to achieve the SDGs.

Footnotes

Ethical Statement

There are no human participants in this article and informed consent is not required.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.