Abstract

Previous efforts to support creative problem-solving have included (a) techniques such as brainstorming and design thinking to stimulate creative ideas, and (b) software tools to record and share these ideas. Now, generative AI technologies can suggest new ideas that might never have occurred to the users, and users can then select from these ideas or use them to stimulate even more ideas. To explore these possibilities, we developed a system called Supermind Ideator that uses a large language model (LLM) and adds prompts, fine-tuning, and a specialized user interface to help users reformulate their problem statements and generate possible solutions. This provides scaffolding to guide users through a set of creative problem-solving techniques, including some techniques specifically intended to help generate innovative ideas about designing groups of people and/or computers (“superminds”). In an experimental study, we found that people using Supermind Ideator generated significantly more innovative ideas than people using ChatGPT or people working alone. Thus, our results suggest that the benefits of using LLMs for creative problem-solving can be substantially enhanced by scaffolding designed specifically for this purpose.

Significance Statement

This research explores how generative AI technologies can enhance creative problem-solving, explicitly evaluating the role of collective intelligence techniques and structured user guidance in the solutions users produce. It presents Supermind Ideator, a system that combines large language models with tailored prompts and a specialized user interface to scaffold creative thinking. In an experimental study, participants using Supermind Ideator generated significantly more innovative ideas than those who used only ChatGPT or no AI assistance. The study underscores that providing scaffolding (that is, structured guidance through predefined techniques) boosts the effectiveness of generative AI in augmenting human creativity. The significance of this research lies in its implications for both basic science and applied settings. It advances our understanding of how human-computer interaction can be improved by incorporating tailored processes rather than relying solely on general AI capabilities. In applied settings, such as organizational innovation and collective decision-making, tools like Supermind Ideator could streamline ideation, reduce cognitive biases such as fixation, and democratize access to creative problem-solving methods. This work contributes to collective intelligence research by demonstrating that combining human judgment with structured AI-generated inputs can lead to superior creative outcomes, highlighting the potential for designing more intelligent and collaborative systems.

Introduction

Creative problem-solving is critical to success in many kinds of human activity, from architecture, engineering, and public policy to art, entrepreneurship, and designing the human groups that perform all these activities (Mednick 1962; Dorst and Cross 2001; Gabora 2002; Sanders and Stappers 2008; Cardoso and Badke-Schaub 2011). It is, therefore, not surprising that many techniques to improve creative problem-solving have been proposed over the years, including brainstorming, design thinking, mind-mapping, crowdsourcing, and many others (Robertson and Radcliffe 2006; Frich et al. 2018; Griebel et al. 2020).

In this paper, we investigate the potential of a new kind of tool, generative AI, for supporting creative problem-solving. In particular, we focus on how large language models (LLMs), such as the GPT (Generative Pre-trained Transformer) family of LLMs (Brown et al. 2020), can take natural language descriptions of a problem as input and produce as output natural language ideas about how to reframe or solve the problem.

Interestingly, even though one widely discussed limitation of today’s LLMs is that they sometimes produce incorrect or irrelevant outputs, this limitation is usually not a problem when the generative AI system is used to augment human creativity rather than replace it. In this case, human users can often easily decide which of the system’s outputs are useful enough to consider further and which are not. Even ideas that at first seem irrelevant can sometimes trigger further useful ideas for human users. In fact, trying to make connections between a problem and seemingly unrelated ideas is one simple technique for triggering creative ideas (Lee et al. 2023).

However, it is still unclear how best to support the use of generative AI technologies for creative problem-solving and whether their usefulness can be substantially improved by scaffolding to help users carry out structured processes. To study this question, we developed the Supermind Ideator, a system that uses an LLM with specialized prompts, fine-tuning, and a user interface to help users reflect upon their problems and generate possible solutions. The system does this using a set of conceptual moves—techniques that humans can use to trigger creative ideas. By sequencing these moves in different orders and combinations, users can explore many different ideas for a given problem.

Most of the techniques we currently use in the Supermind Ideator are based on the “Supermind Design” methodology (Center for Collective Intelligence 2021). Some of these techniques, such as looking at sub-parts or analogies, can be helpful for addressing any problem. Other techniques are specifically intended to help generate innovative ideas about how to design superminds, defined as groups of individuals acting together in ways that seem intelligent (Malone 2018). For instance, one such supermind design technique encourages users to consider how a problem could be solved with different kinds of groups, such as hierarchies, democracies, markets, or communities.

In other words, “superminds” is a short way of saying “collectively intelligent systems.” Thus, the Supermind Ideator illustrates how a collectively intelligent system composed of a person and a computer can design other collectively intelligent systems. The rest of this paper describes the implementation of the Supermind Ideator, how people used it to help them generate creative ideas, and how that process compared with two other common practices: (a) humans working alone and (b) humans using ChatGPT.

Related work

Prior work has extensively discussed creative problem-solving approaches that draw on techniques ranging from design thinking to collective intelligence. These approaches predominantly focus on how groups can use organizational and methodological techniques to address issues such as design fixation, knowledge curation, and creative inspiration.

Facilitated idea generation

From the early days of design being applied in the scientific arena (Cross 1982) to modern-day frameworks for applying design thinking methodologies (Dam and Siang 2020), creative problem-solving techniques have evolved significantly. For example, the mix of deep problem understanding and iterative solution generation, most notably combined in the Double Diamond method (Design Council 2005), enables a rigorous and empathetic approach that integrates the needs of people, the possibilities of technology, and the requirements for business success. In the first of the two diamonds, practitioners (a) diverge by considering different ways to frame the problem and then (b) converge on a useful problem definition. Then, in the second diamond, they (a) diverge by considering different potential solutions to the problem and then (b) converge on a few of the best solutions, as shown in Figure 1.

Figure 1. The Double Diamond method highlights two components of creative problem-solving (understanding the problem and developing a solution) and shows the four steps in which one first discovers and defines the problem before developing and delivering the solution. Source: Wikipedia - Double Diamond (design process model).

Other prior work has studied creative ideation through sociocultural lenses (Dorst and Cross 2001; Lubart 2001; Gabora 2002; Pavie and Carthy 2015; Frich et al. 2018), examining specific topics such as design fixation (Linsey et al. 2010; Cardoso and Badke-Schaub 2011; Smith and Linsey 2011; Youmans and Arciszewski 2014), bias (Mednick 1962), inspiration (Eckert and Stacey 2000; Thrash et al. 2014), and innovation (Howard et al. 2010; Pavie and Carthy 2015). According to Gabora (2002), for instance, ideas emerge, evolve, and manifest as creative products through the combination and reorganization of existing ideas. This process can be modeled as constraint-based iteration that transforms ideas into tangible solutions.

However, idea-generation activities can be long and laborious, and mental tendencies such as functional fixedness often lead to design fixation (Jansson and Smith 1991). Design fixation is a cognitive bias in which the designer’s view of the design space is over-fitted to their pre-existing knowledge and experiences (Cardoso and Badke-Schaub 2011). It hinders wide-reaching ideas and overly constrains idea generation, in effect trapping designers at a local maximum while the wider global maximum goes unexplored. Studies have attempted to develop prevention methods such as concept mapping, remote association, facilitated design thinking, and exposure to new and unrelated content (Howard et al. 2010; Smith and Linsey 2011; Youmans and Arciszewski 2014). More recent work has examined the potential for collective intelligence approaches such as crowdsourced idea generation, crowd-based ratings, and other forms of computer-supported cooperative work to help alleviate design fixation and access diverse types of knowledge (Gregg 2010; Majchrzak and Malhotra 2013; Lee and Jin 2019; Yun et al. 2021; Klein and Convertino 2014; Langham and Paulsen 2020). All of these approaches, however, still largely rely on human effort and on access to knowledge resources that do not easily scale.

Generative AI

The advent of Transformer architectures in language modeling has led to a meteoric rise in the use of LLMs (Vaswani 2017). Systems such as OpenAI’s GPT models have given broad access to models that rapidly produce large amounts of human-quality text from a small input (Brown et al. 2020). These models can be tuned and directed with (a) simple instructions (zero-shot learning), (b) a small number of example input and output pairs (few-shot learning), or (c) large numbers of example inputs and outputs (fine-tuning). With these mechanisms, LLMs are capable of very wide-ranging idea generation (Summers-Stay et al. 2023), and researchers are beginning to understand how to improve the quality of LLM output to better match design space constraints (Zhu and Luo 2023). Prior research suggests that generating more ideas can lead to greater creativity (Paulus et al. 2011), so it seems plausible that using generative AI would help humans generate more ideas and, in turn, lead to greater creativity.

To our knowledge, little systematic work has explicitly explored how to combine the expertise of humans with the capabilities of generative AI technologies through a workshop-like methodology. Some prior work has used LLMs to expand, rewrite, combine, and suggest ideas (Di Fede et al. 2022), to promote reflection (Xu et al. 2024), or to generate analogies to boost creativity (Ding et al. 2023; Bhavya et al. 2023). Other prior work has looked at how to support wider exploration of LLM-generated content (Suh et al. 2024) or how semantic diversity helps crowd ideation (Cox et al. 2021; Siangliulue et al. 2016). Still, this work does not seek to recreate the dynamic of a workshop ideation session, does not empirically assess the effects of treatments on human creativity, and does not focus on generating ideas about how groups could solve problems in innovative ways.

Given that current generative AI systems are often not capable on their own of producing uniformly high-quality ideas, a very promising possibility is to let them rapidly generate ideas for humans to consider, as Suh et al. (2024) suggest with Luminate. Generative technologies are already capable of providing usefully unexpected inputs and stimuli for human designers, which can widen the range of possibilities that humans consider. Thoughtfully designing the way a system guides and facilitates this process can thus improve the probability that designers will find better solutions faster than they would otherwise.

Two core facets underlie this concept: reflection and inspiration.

Reflection

During creative problem-solving, it is often useful to engage in a reflective feedback loop (Wooten and Ulrich 2017; Chen and Terken 2022). While random feedback can be much better than no feedback, an approach that facilitates reflection can prevent early fixation on solutions and help explore design space constraints from both the problem and solution domains (Nakata and Hwang 2020).

A critical concern with generative AI, and LLMs in particular, is that end users need to be able to articulate their goals in a way that leads the model to produce the desired output (Subramonyam et al. 2023). Just as Hutchins, Hollan, and Norman (1985) discuss minimizing the distance between a user’s thoughts and the user interface they are manipulating, it is important that generative systems show end users what the system can do and how to do it.

We extend this perspective by observing that much of the work done by design thinking and innovation facilitators is less about generating and curating solutions and more about prompting insight through introspective questions and reflective actions. With this in mind, we suggest a useful characteristic for any innovation support system: it should promote reflection by humans rather than impose unnecessary constraints on their thinking. For instance, framing system outputs as “ideas” rather than “answers” and positioning the system as a tool that supports human cognition rather than replacing it may be key. It also seems especially important to emphasize to users that they are responsible for deciding what to do with the system’s outputs: they can ignore them, use them without change, or let them stimulate further creative thinking.

Inspiration

The second critical quality for any innovation support system is to promote wide-reaching exploration of the available design space. This is often accomplished through facilitated activities that promote divergent rather than convergent thinking, such as the alternative uses test (Gilhooly et al. 2007). Many divergent thinking activities put the onus of creating new uses on the human engaging in the activity (Bygstad 2010). Fortunately, there is growing evidence that generative technologies like GPT can perform quite well at replicating human idea generation in such divergent thinking tasks (Summers-Stay et al. 2023). This does not replace human ideation. Instead, it presents a chance to augment human idea generation and avoid design fixation by exposing humans to novel and diverse stimuli that spark new connections (Howard et al. 2010; Smith and Linsey 2011; Youmans and Arciszewski 2014).

A complex issue for LLMs today is the safety and accuracy of the content they generate (Bender et al. 2021; Singh et al. 2023). Transformer-based systems are capable of exceptionally human-like language generation, but in their current state they do not always produce factual content, nor can they reliably perform reasoning tasks (Valmeekam et al. 2022). These machine “hallucinations” can sound authoritative and accurate even when they are simply false combinations of words that statistically sound appropriate given the prior text and the LLM’s underlying model (Goodman et al. 2022).

We see this (and much of the other content that LLMs can create) more as an opportunity, a feature rather than a bug. Outlandish and unfeasible ideas are a natural fit for idea-generation practices. By interrogating the extremes of a design space, it is possible to build a better understanding of what is actually possible and appropriate. By framing all content as ideas (both good and bad), we present the designer with a more far-reaching exploration of the design space. Such support for divergent thinking is essential for the success of any creative problem-solving support system.

The supermind design methodology

The Supermind Ideator is based on the Supermind Design methodology, which includes a set of conceptual moves that people can use to spur their creativity, especially about how to design collectively intelligent groups (Center for Collective Intelligence 2021). These moves have been used successfully in multiple settings (Koppineni et al. 2022; Laubacher et al. 2020; Laubacher et al. 2023).

The methodology includes the following basic design moves, which are based on general techniques that can be used for any kind of creative problem-solving (Osborn 2012; De Bono 1970; Koberg and Bagnall 1974; Brown and Katz 2011; Dschool 2018; Malone et al. 2003):
• Zoom In - Parts: What are the parts of this problem?
• Zoom In - Types: What are the types of this problem?
• Zoom Out - Parts: What is this problem a part of?
• Zoom Out - Types: What is this problem a type of?
• Analogize: What are analogies for this problem?

The methodology also includes the following supermind design moves, which are specifically for generating ideas about how to design superminds (i.e., collectively intelligent groups) (Malone 2018; Center for Collective Intelligence 2021):
• Groupify: How can different kinds of groups help solve the problem? Possibilities include:
  – Hierarchy - where group decisions are made by delegating them to individuals in the group
  – Democracy - where group decisions are made by voting
  – Market - where group decisions are the combination of all the pairwise agreements between individual buyers and sellers
  – Community - where group decisions are made by informal consensus based on shared norms and reputations
  – Ecosystem - where group decisions are made by whoever has the most power and by survival of the fittest
• Cognify: How can different cognitive processes help solve the problem? Possibilities include:
  – Create - How can groups create new information collectively?
  – Decide - How can groups make choices?
  – Sense - How can groups collect and interpret information from the environment?
  – Remember - How can groups recall information from the past?
  – Learn - How can groups improve their performance with experience?
• Technify: How can technologies be used to help solve the problem?

Finally, the methodology includes three experimental moves which have not, to our knowledge, been previously used as part of systematic ideation exercises but which appear to take advantage of LLM capabilities and are incorporated in the Supermind Ideator:
• Reflect - What is missing from the current problem statement?
• Reformulate - How could the problem be reformulated?
• Case examples - How does the problem relate to case examples of real groups?
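As a compact reference, the three move families above can be written down as a lookup table. The Python sketch below is only an illustration: the dictionary layout and the `question_for` helper are hypothetical, and only the move names and question wording come from the methodology as listed above.

```python
# Hypothetical catalog of Supermind Design moves. The question wording follows
# the lists above; the data structure itself is an illustrative assumption.
MOVES = {
    "basic": {
        "Zoom In - Parts": "What are the parts of this problem?",
        "Zoom In - Types": "What are the types of this problem?",
        "Zoom Out - Parts": "What is this problem a part of?",
        "Zoom Out - Types": "What is this problem a type of?",
        "Analogize": "What are analogies for this problem?",
    },
    "supermind": {
        "Groupify": "How can different kinds of groups help solve the problem?",
        "Cognify": "How can different cognitive processes help solve the problem?",
        "Technify": "How can technologies be used to help solve the problem?",
    },
    "experimental": {
        "Reflect": "What is missing from the current problem statement?",
        "Reformulate": "How could the problem be reformulated?",
        "Case examples": "How does the problem relate to case examples of real groups?",
    },
}

# Submoves listed for Groupify and Cognify.
SUBMOVES = {
    "Groupify": ["Hierarchy", "Democracy", "Market", "Community", "Ecosystem"],
    "Cognify": ["Create", "Decide", "Sense", "Remember", "Learn"],
}


def question_for(move: str) -> str:
    """Return the guiding question for a move, searching every family."""
    for family in MOVES.values():
        if move in family:
            return family[move]
    raise KeyError(f"unknown move: {move}")
```

Counted this way there are five Groupify and five Cognify submoves, consistent with the “approximately 10 Cognify and Groupify submoves” mentioned in the fine-tuning discussion.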

The Supermind Ideator software

The Supermind Ideator is a web application designed to guide users into a focused state of idea generation and reflection, and it is therefore intentionally minimalistic, as shown in Figure 2. The left side consists of a “Generate Panel” that drives all of the idea creation on the right side. After users type in their problem, they are given three options for idea creation: Explore Problem, Explore Solutions, and More Choices. These options are meant to provide scaffolding for novice users who may not know where to begin, as well as to support more advanced users who already know what they want to do next. The first two options, Explore Problem and Explore Solutions, comprise what we call “move sets,” or groups of moves that focus on a specific aspect of the idea generation and refinement process.

Figure 2. The Supermind Ideator interface. The left side contains the Generate Panel, where users input their problem and select Moves to run. The right side contains ideas generated by the system.

The Explore Problem move set supports the problem definition part of the double diamond approach using the basic design moves and the experimental moves from the Supermind Design methodology. In this way, it helps users reflect on how they can generalize and specialize the various parts and types of their problem, consider relevant analogies to their problem, and identify potentially missing aspects of their problem statement.

The Explore Solutions move set supports the solution generation part of the double diamond approach using the supermind design moves from the Supermind Design methodology. For example, it helps users consider how different kinds of groups (such as markets, communities, and democracies) could help solve their problem. It also helps users think about how innovative ways of performing different cognitive processes (such as creating, deciding, and sensing) or using various kinds of technologies could help solve their problem.

These “move sets” take the user’s input and execute several moves, producing one output (idea) per move. An example input and the outputs from each move set can be seen in the appendix.
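The move-set behavior can be sketched as a loop that runs every move in a set on the same problem and keeps one output per move. In this Python sketch, the `MOVE_SETS` membership follows the descriptions of Explore Problem (basic design moves plus experimental moves) and Explore Solutions (supermind design moves) above, while `run_move_set` and the offline `fake_run_move` stand-in for an LLM call are hypothetical names, not the Ideator’s code.

```python
from typing import Callable

# Membership follows the descriptions above; the exact lists are assumptions.
MOVE_SETS = {
    "Explore Problem": [
        "Zoom In - Parts", "Zoom In - Types", "Zoom Out - Parts",
        "Zoom Out - Types", "Analogize",            # basic design moves
        "Reflect", "Reformulate", "Case examples",  # experimental moves
    ],
    "Explore Solutions": ["Groupify", "Cognify", "Technify"],  # supermind design moves
}


def run_move_set(
    move_set: str, problem: str, run_move: Callable[[str, str], str]
) -> list[tuple[str, str]]:
    """Apply every move in the set to the same problem: one idea per move."""
    return [(move, run_move(move, problem)) for move in MOVE_SETS[move_set]]


def fake_run_move(move: str, problem: str) -> str:
    """Offline stand-in for the LLM call so the sketch runs without an API key."""
    return f"[{move}] idea for: {problem}"
```

Passing the move runner in as a parameter keeps the sketch independent of any particular LLM client.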

More Choices allows a user to select any individual move or moves they want. It also exposes a more advanced parameter that the LLM API calls “temperature,” a measure of the amount of randomness used to generate output. Lower temperature leads to less random (more conventional) outputs, and higher temperature leads to more random (potentially more creative) outputs. To avoid confusion, we label this parameter “Creativity” and provide three choices: Low, Medium, and High, corresponding to temperatures of 0.7, 1.0, and 1.3, respectively.
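The Creativity setting is essentially a lookup from a user-facing label to a sampling temperature. The sketch below pairs that lookup (values from the text) with the standard textbook form of temperature sampling, in which logits are divided by the temperature before a softmax, to show why higher values flatten the output distribution. The helper names, the `Medium` default, and the toy logits are assumptions; the text does not describe how the LLM applies temperature internally.

```python
import math

# Values from the text: Low = 0.7, Medium = 1.0, High = 1.3.
CREATIVITY_TO_TEMPERATURE = {"Low": 0.7, "Medium": 1.0, "High": 1.3}


def temperature_for(creativity: str = "Medium") -> float:
    """Translate the user-facing Creativity label into an API temperature.

    The "Medium" default is an assumption made for this sketch.
    """
    if creativity not in CREATIVITY_TO_TEMPERATURE:
        raise ValueError(f"unknown Creativity level: {creativity!r}")
    return CREATIVITY_TO_TEMPERATURE[creativity]


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Textbook temperature sampling: divide logits by T, then apply softmax.

    T < 1 sharpens the distribution (more conventional choices);
    T > 1 flattens it (more random, potentially more creative choices).
    """
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    toy_logits = [2.0, 1.0, 0.0]  # hypothetical scores for three candidate tokens
    for level in ("Low", "Medium", "High"):
        probs = softmax_with_temperature(toy_logits, temperature_for(level))
        print(level, [round(p, 3) for p in probs])
```

Running the demo shows the most likely candidate losing probability mass as the setting moves from Low to High.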

In each of the above cases, the Ideator system generates one or more ideas using the Supermind Design moves. After ideas are created and appear on the right, users can rate each of these ideas with a Thumbs Up or Thumbs Down button, and they can Bookmark ideas they particularly like to save these ideas in their personal collection.
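The rating and bookmarking interactions amount to a small amount of per-idea state. The Python sketch below models it with a hypothetical `Idea` dataclass; the field names, the choice to let a later thumbs rating overwrite an earlier one, and the `personal_collection` helper are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Idea:
    """One generated idea plus the user's feedback on it (hypothetical model)."""
    move: str
    text: str
    rating: Optional[str] = None  # "up", "down", or None if not yet rated
    bookmarked: bool = False

    def rate(self, thumbs: str) -> None:
        """Record a Thumbs Up/Down; a later click overwrites an earlier one."""
        if thumbs not in ("up", "down"):
            raise ValueError("thumbs must be 'up' or 'down'")
        self.rating = thumbs

    def toggle_bookmark(self) -> None:
        self.bookmarked = not self.bookmarked


def personal_collection(ideas: list[Idea]) -> list[Idea]:
    """Return the bookmarked ideas, i.e. the user's saved collection."""
    return [idea for idea in ideas if idea.bookmarked]
```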

Implementation

The Ideator system includes multiple layers that work together to provide structure and guidance to an otherwise open-ended problem. These layers (shown in Figure 3) include the User Interface, the API, and the LLM.

Figure 3. The layers of the Supermind Ideator system. These consist of the User Interface, the API, and the LLM being used. The interface is on top (left) and is based on a React framework. The API is in the middle and connects the interface to the LLM through the software that implements our Moves. The LLM is at the bottom (right) and includes special prompts and specially fine-tuned versions of the LLM.

To make the system easier to maintain and extend, we built an API (Application Programming Interface) that separates the client-side user interface logic from the server-side processing logic.

We also expect this API-based approach to be valuable in making the Supermind Ideator moves available to others who might want to build upon or extend our methodology beyond the initial web interface we have provided. The API reduces each move to a simple set of text strings, with most of the formatting and interaction with the LLM abstracted away into optional parameters.

Finally, the API allows the logic of each move to be isolated and version-controlled to support experimentation and evolution. As our understanding of prompting techniques improves and as the capabilities of LLMs expand and change, we have infrastructure in place to support upgrading and adapting moves.
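One way to get strings-in/strings-out moves whose logic is isolated and versioned is a small registry keyed by move name and version. The decorator-based registry, the function names, and the two prompt variants below are hypothetical; they only illustrate how each version of a move can be upgraded or pinned independently, with the version exposed as an optional parameter.

```python
from typing import Callable, Dict, Optional, Tuple

PromptBuilder = Callable[[str], str]

# Hypothetical registry: (move name, version) -> function that builds the prompt.
_REGISTRY: Dict[Tuple[str, int], PromptBuilder] = {}


def register(move: str, version: int) -> Callable[[PromptBuilder], PromptBuilder]:
    """Decorator that files one version of a move's prompt logic."""
    def wrap(fn: PromptBuilder) -> PromptBuilder:
        _REGISTRY[(move, version)] = fn
        return fn
    return wrap


def latest_version(move: str) -> int:
    versions = [v for (m, v) in _REGISTRY if m == move]
    if not versions:
        raise KeyError(f"unknown move: {move}")
    return max(versions)


def build_prompt(move: str, problem: str, version: Optional[int] = None) -> str:
    """Strings in, string out; the version is optional and defaults to the newest."""
    chosen = latest_version(move) if version is None else version
    return _REGISTRY[(move, chosen)](problem)


@register("Zoom Out - Types", 1)
def _zoom_out_types_v1(problem: str) -> str:
    return f"What is this problem a type of? Problem: {problem}"


@register("Zoom Out - Types", 2)
def _zoom_out_types_v2(problem: str) -> str:
    # A revised layout; older experiments can still pin version=1.
    return f"What is this problem a type of?\nProblem: {problem}\n"
```

Keeping each version as its own function means an experiment can be re-run against the exact prompt it originally used.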

Prompting

To tell the LLM how to respond to a given problem using a given move, we use two kinds of prompts: “zero-shot” and “few-shot.” In a zero-shot prompt for a move, the API simply gives the LLM English instructions for how to apply the move. For example, the zero-shot prompt for the Groupify - Democracy move is:

Democracies are systems where group decisions are made by voting. How can we use democracies to {problem}? To solve this problem,

The API inserts the user’s problem description in place of “{problem},” and the LLM then generates text that would be likely to follow this prompt.
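Filling a zero-shot template is plain string substitution. The Python sketch below copies the Groupify - Democracy template from the text; the `zero_shot_prompt` helper and the sample problem are hypothetical. Because only the template is passed to `str.format`, any braces inside the user’s problem text are inserted literally rather than interpreted.

```python
# Zero-shot template for the Groupify - Democracy move, as quoted in the text.
DEMOCRACY_ZERO_SHOT = (
    "Democracies are systems where group decisions are made by voting. "
    "How can we use democracies to {problem}? To solve this problem,"
)


def zero_shot_prompt(template: str, problem: str) -> str:
    """Insert the user's problem description in place of {problem}."""
    return template.format(problem=problem)


print(zero_shot_prompt(DEMOCRACY_ZERO_SHOT, "reduce food waste in school cafeterias"))
```

The template reads naturally when the problem is phrased as a verb phrase such as “reduce food waste in school cafeterias”; the text does not say how other phrasings are handled.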

In a few-shot prompt for a move, the API gives the LLM several examples of problems and desirable responses to those problems, followed by the user’s problem. The LLM then generates a response to the user’s problem following the same problem/response pattern illustrated by the examples.

For example, with Zoom In - Parts, we provide multiple examples of how to break down a problem into its parts. One such example is:

What are the parts of my problem?
Problem: sell
- Identify potential customers
- Identify potential customer needs
- Inform potential customers
- Obtain order
- Deliver product or service
- Receive payment
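A few-shot prompt can be assembled by concatenating solved examples and then leaving the user’s problem open, so the model continues the pattern. The sketch reuses the “sell” example above; the blank-line separators, the trailing newline that invites a bulleted continuation, and the `few_shot_prompt` name are assumptions, and a real prompt would carry several examples rather than one.

```python
# One worked example from the text; a real few-shot prompt would include several.
EXAMPLES = [
    ("sell", [
        "Identify potential customers",
        "Identify potential customer needs",
        "Inform potential customers",
        "Obtain order",
        "Deliver product or service",
        "Receive payment",
    ]),
]

INSTRUCTION = "What are the parts of my problem?"


def few_shot_prompt(examples, problem: str) -> str:
    """Concatenate solved examples, then leave the user's problem open so the
    model continues the same problem/response pattern."""
    blocks = []
    for example_problem, parts in examples:
        lines = [INSTRUCTION, f"Problem: {example_problem}"]
        lines += [f"- {part}" for part in parts]
        blocks.append("\n".join(lines))
    # The final block stops right after the problem line, inviting a "- ..." list.
    blocks.append(f"{INSTRUCTION}\nProblem: {problem}\n")
    return "\n\n".join(blocks)
```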

Fine-tuning

While the general knowledge embedded in the base LLM can produce interesting and fitting output with relatively limited guidance (zero-shot and few-shot prompts), it seemed apt to augment this base knowledge with specialized information so that more precise and useful ideas could also be generated. To accomplish this, we created approximately 1,600 brief case studies of real-world organizational practices that solved problems using various innovative approaches. These case studies were primarily summaries of descriptions previously published in the business press and elsewhere. We tagged the case study corpus so that each problem and case study was connected with an associated Cognify or Groupify move.
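A tagged corpus like this can be turned into supervised training pairs that map a problem and a Supermind move to case-study text. The Python sketch below writes such pairs as JSON Lines using a prompt/completion layout of the kind used by older OpenAI fine-tuning endpoints; the field formatting, the `Idea:` separator, the ` END` stop marker, and the example case study are all hypothetical, not the authors’ training format or data.

```python
import json
from dataclasses import dataclass


@dataclass
class CaseStudy:
    problem: str  # the problem the organization faced
    move: str     # tag linking the case to a submove, e.g. "Groupify - Market"
    summary: str  # brief case-study text


def to_training_record(case: CaseStudy) -> dict:
    """Map (move, problem) to the case-study text as one prompt/completion pair."""
    prompt = f"Move: {case.move}\nProblem: {case.problem}\nIdea:"
    # A leading space and a fixed stop marker follow conventions of older
    # prompt/completion fine-tuning data; both are assumptions here.
    completion = f" {case.summary} END"
    return {"prompt": prompt, "completion": completion}


def to_jsonl(cases: list[CaseStudy]) -> str:
    """One JSON object per line, the usual layout for fine-tuning files."""
    return "\n".join(json.dumps(to_training_record(c)) for c in cases)


# Invented example for illustration only; not drawn from the authors' corpus.
example = CaseStudy(
    problem="decide which products to stock",
    move="Groupify - Democracy",
    summary="A hypothetical retailer lets customers vote on which new products it carries.",
)
```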

This tagging enabled us to fine-tune a version of the LLM to map between input language, Supermind move, and output content. The resulting fine-tuned model is potentially more capable than our zero-shot and few-shot moves because it distills the 1,600 examples into the approximately 10 Cognify and Groupify submoves. While our zero-shot and few-shot variants produce content based on the base LLM and our prompting (with or without an example or two), the fine-tuned approach reconfigures the LLM so that its output more closely reflects the training data. Because our training data was a corpus of case studies, the fine-tuned model produces output resembling those case studies. For example, when prompted with the problem “I want to improve the way companies retrain employees whose jobs have been replaced by automation,” the model produces examples like the following:
• Groupify - Market: “Swiss logistics company Travida uses a collective intelligence method to help with employee retraining in the face of automation replacement. Its platform, called Morpheus, allows for crowdsourcing of training needs, as well as for matching of people with training openings”
• Cognify - Remember: “Microsoft has been experimenting with virtual retraining environments that mix real and virtual reality to allow workers to experience new scenarios. The idea is that learning should be less dependent on physical location, and could be reimagined as a continuous, fluid process that doesn’t end with graduation.”

Even though both of these examples sound factual, they are actually “hallucinations,” fictional combinations of information from different case studies in our corpus, the base LLM, and other predictions made by the transformer’s neural network.

If our goal were to help users retrieve factual content, this would be a problem. But as noted above, since our goal is to help users come up with creative ideas, we think these fictitious hallucinations are more likely to be a feature than a bug. Creativity researchers have found, for example, that exposure to other creative ideas can be a useful trigger, stimulating people to have creative ideas they would never have had otherwise (Fink et al. 2012). We intentionally draw attention to the system’s potential to hallucinate, and seek to avoid misconceptions on the part of our users, by labeling these outputs as “wild (possibly fictitious) ideas,” as can be seen in Figure 2.

Experimental study

To evaluate the system, we conducted an experimental study of how Ideator can help people with creative problem-solving tasks. At the time of the study, Ideator was configured to use OpenAI’s GPT-3.5 Turbo LLM.

Methods

Procedure

To examine how Ideator supports people in creative problem-solving tasks, we employed a two-phase (Idea Generation and Idea Evaluation) study. In the Idea Generation phase, we used a 2 (problem statement) × 3 (group configuration) mixed-factorial design, with problem statement varied within participants and group configuration varied between participants. Participants completed two rounds of a creative problem-solving task in which they were asked to generate as many ideas as they could for each of two counterbalanced problem statements: “How can I discern fake news from real news” and “How can I achieve better work-life balance.” There was no time limit; participants were free to spend as much or as little time as they needed to complete the task.
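To make the mixed design concrete, the Python sketch below shows one way to counterbalance problem order within participants while assigning condition between participants. The blocked scheme (each run of six shuffled participants covers every order-by-condition combination), the `assign` helper, and the seed are assumptions; the text says only that assignment was random and problem order was counterbalanced, not which scheme was used. In this sketch the round-1 problem is the one tackled in the Baseline round described below, where everyone works alone.

```python
import random

PROBLEMS = (
    "How can I discern fake news from real news",
    "How can I achieve better work-life balance",
)
CONDITIONS = ("Human + Ideator", "Human + ChatGPT", "Human Only")


def assign(participant_ids, seed: int = 0) -> dict:
    """Map each participant to (round-1 problem, round-2 problem, condition).

    Blocks of six shuffled participants cover every combination of
    problem order (within-person factor) and condition (between-person factor).
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    plan = {}
    for i, pid in enumerate(ids):
        first, second = PROBLEMS if i % 2 == 0 else PROBLEMS[::-1]
        condition = CONDITIONS[(i // 2) % len(CONDITIONS)]
        plan[pid] = (first, second, condition)
    return plan
```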

Study phases

The study involved three phases: Baseline, Idea Generation, and Idea Evaluation.

Baseline

In the first round of this task, all participants were asked to generate ideas without any help from external sources (i.e., without using Google, ChatGPT, etc.). This round served as a baseline measure of performance on the creative problem-solving task (N = 611 ideas).

Idea Generation

Participants were then randomly assigned to one of three group-configuration conditions and asked to complete the creative problem-solving task again with the other problem statement. Specifically, participants were assigned to one of three conditions:
a) Human + Ideator condition, in which they were asked to generate as many ideas as possible with the help of Ideator (N = 51 participants; 200 ideas).
b) Human + ChatGPT condition, in which they were asked to generate as many ideas as possible with the help of ChatGPT (N = 52 participants; 231 ideas). Users accessed ChatGPT through a user interface (UI) we wrote that imitated the appearance of ChatGPT and called the actual ChatGPT system in the background.
c) Human Only condition, in which they were asked to generate as many ideas as possible without any help from external sources (N = 49 participants; 172 ideas).

Idea Evaluation

Finally, the submitted ideas were evaluated by a separate group of participants, who rated the innovativeness (i.e., creativity and usefulness) of each idea on a scale from 1 (not at all innovative) to 5 (extremely innovative). Each evaluator viewed a random subset of 100 ideas, and each idea was rated by at least 17 evaluators. To assess agreement among evaluators who rated the same ideas, we computed the intraclass correlation coefficient (ICC) with R’s ICC function, using a one-way random-effects model and absolute agreement. Inter-rater reliability was good (ICC = 0.81, p < .0001).
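For readers unfamiliar with the one-way ICC, the sketch below computes it from the between-idea and within-idea mean squares of a one-way ANOVA. It is a minimal illustration that assumes a balanced table (every idea rated by the same number of evaluators); the study's design was unbalanced (each evaluator saw a random subset of 100 ideas), so this is not what R's ICC function computed on the study data, and the function name `icc_oneway` is hypothetical. It returns both the single-rater and the average-rater coefficients, since the text does not say which form the reported value refers to.

```python
from statistics import mean


def icc_oneway(ratings):
    """One-way random-effects ICC for a balanced ratings table.

    ratings: one row per rated idea (target), each row holding k ratings.
    Returns (single-rater ICC(1), average-of-k-raters ICC(1,k)).
    """
    n = len(ratings)     # number of ideas
    k = len(ratings[0])  # ratings per idea
    if any(len(row) != k for row in ratings):
        raise ValueError("this sketch assumes every idea has the same number of ratings")
    grand = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]
    # One-way ANOVA: variation between ideas vs. variation among raters of the same idea
    ss_between = k * sum((m - grand) ** 2 for m in row_means)
    ss_within = sum((x - m) ** 2 for row, m in zip(ratings, row_means) for x in row)
    ms_between = ss_between / (n - 1)
    ms_within = ss_within / (n * (k - 1))
    icc_single = (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)
    icc_average = (ms_between - ms_within) / ms_between
    return icc_single, icc_average


# Made-up example: three ideas, two raters each
print(icc_oneway([[1, 2], [3, 4], [5, 6]]))
```

For this made-up table the single-rater ICC is about 0.88 and the average-rater ICC is 0.9375; identical ratings within each row would give 1.0 for both.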

Participants

Participants were recruited from the online crowdsourcing site Prolific. All participants were located in the United States and were fluent in English. We recruited a total of 152 idea generators (M Age = 37.19 years, SD Age = 12.93 years, 53.3% Females, 45.4% Males, 1.3% Other Sex, 9.9% Asian, 10.5% Black, 63.8% White, 10.5% Mixed, and 5.3% Other Ethnicity) and 505 idea evaluators (M Age = 40.83 years, SD Age = 13.86 years, 62.8% Females, 35.4% Males, 1.8% Other Sex, 7.3% Asian, 12.5% Black, 65.5% White, 7.7% Mixed, and 7.0% Other Ethnicity).

Results

Does innovativeness vary by condition?

To examine whether innovativeness ratings varied by condition, we planned a one-way analysis of variance. Because our data violated the homogeneity-of-variances assumption, we instead conducted a Welch’s one-way ANOVA followed by Games-Howell post-hoc tests.

We found a significant difference in innovativeness ratings among the Human + Ideator, Human + ChatGPT, and Human Only conditions (F (2, 8058.15) = 106.48, p < .0001). Specifically, the Human + Ideator ideas were rated as the most innovative (M = 3.21, SD = 1.26), followed by Human + ChatGPT (M = 2.96, SD = 1.24) and Human Only (M = 2.81, SD = 1.27). All pairwise comparisons were significant (all ps < .0001; see Figure 4). Furthermore, participant age, sex, and ethnicity did not significantly moderate the relationship between experimental condition and innovativeness ratings (all ps > .13).

Figure 4. Comparison between the experimental conditions in terms of innovativeness ratings. ***p < .0001.
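The non-integer denominator degrees of freedom in the F statistic above are characteristic of Welch's ANOVA, which weights each group mean by the inverse of its squared standard error instead of pooling variances. The following is a minimal pure-Python sketch of the textbook Welch formula; the function name is ours, it does not include the Games-Howell post-hoc step, and the example data are made up. With two groups, its F equals the square of Welch's t statistic.

```python
from statistics import mean, variance


def welch_anova(*groups):
    """Welch's one-way ANOVA for k >= 2 independent groups (unequal variances allowed).

    Returns (F, df1, df2); df2 is usually non-integer.
    """
    k = len(groups)
    n = [len(g) for g in groups]
    m = [mean(g) for g in groups]
    # Precision weights n_i / s_i^2: the inverse squared standard error of each group mean
    w = [ni / variance(g) for ni, g in zip(n, groups)]
    total_w = sum(w)
    weighted_mean = sum(wi * mi for wi, mi in zip(w, m)) / total_w
    between = sum(wi * (mi - weighted_mean) ** 2 for wi, mi in zip(w, m)) / (k - 1)
    lam = sum((1 - wi / total_w) ** 2 / (ni - 1) for wi, ni in zip(w, n))
    correction = 1 + 2 * (k - 2) / (k ** 2 - 1) * lam
    f_stat = between / correction
    df2 = (k ** 2 - 1) / (3 * lam)
    return f_stat, k - 1, df2


# Illustrative (made-up) ratings for three conditions
f_stat, df1, df2 = welch_anova([3, 4, 5, 4, 3], [2, 3, 3, 4, 2, 3], [1, 2, 5, 1, 4])
print(f"F({df1}, {df2:.2f}) = {f_stat:.2f}")
```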

Does baseline performance moderate the relationship between condition and innovativeness?

To examine whether baseline performance on the idea generation task moderated the relationship between condition and innovativeness, we added baseline performance as a covariate in the ANOVA described above. We also conducted a linear regression in which innovativeness was regressed on both condition and baseline performance.

We found that performance on the baseline creative problem-solving task significantly predicted performance on the experimental task in the Human Only condition (b = 0.63, z = 3.86, p = .0002). However, baseline performance did not significantly predict experimental performance for those in the Human + Ideator (b = 0.20, z = 1.43, p = .16) or Human + ChatGPT (b = 0.31, z = 1.81, p = .07) conditions.
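The condition-specific slopes above can be illustrated with a simplified sketch that fits a separate least-squares line of experimental score on baseline score within each condition, like the best-fit lines in Figure 5. This is an assumption-laden simplification: the data layout (one row per participant), the function names, and the example scores are hypothetical, and separate per-condition fits match the slope estimates of a fully interacted condition × baseline regression but not its standard errors, so the sketch will not reproduce the reported z statistics.

```python
from statistics import mean


def ols_slope(x, y):
    """Least-squares slope of y regressed on a single predictor x."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


def slopes_by_condition(rows):
    """rows: (condition, baseline_score, experimental_score) tuples, one per participant.

    Returns {condition: slope of experimental score on baseline score}.
    """
    grouped = {}
    for condition, baseline, experimental in rows:
        xs, ys = grouped.setdefault(condition, ([], []))
        xs.append(baseline)
        ys.append(experimental)
    return {c: ols_slope(xs, ys) for c, (xs, ys) in grouped.items()}


# Made-up scores: a positive slope in one condition, a flat line in the other
rows = [
    ("Human Only", 1.0, 2.0), ("Human Only", 2.0, 2.5), ("Human Only", 3.0, 3.0),
    ("Human + Ideator", 1.0, 3.0), ("Human + Ideator", 2.0, 3.0), ("Human + Ideator", 3.0, 3.0),
]
print(slopes_by_condition(rows))
```

A flat line, as in the made-up Human + Ideator rows, is the pattern the text describes: experimental scores that do not depend much on baseline skill.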

Interestingly, if we assume that a person’s performance on the baseline task is a measure of their skill at this kind of work, these results suggest that using Ideator or ChatGPT allows lower-skilled people to perform about as well as higher-skilled ones (see Figure 5).

Figure 5. Association between the innovativeness of a subject’s performance on the Baseline task and on the Experimental task. Each point represents one subject, and the colored lines are best-fit lines for each of the three conditions.

When we analyze the data using difference scores, we see a similar effect, but this time only for the Human + Ideator condition. That is, when comparing the difference between baseline and experimental performance across the three conditions, we find that people in the Human + Ideator condition improved significantly (M Diff = −0.23, p = .01), whereas those in the other two conditions did not (Human + ChatGPT: M Diff = −0.10, p = .68; Human Only: M Diff = 0.04, p = .99). Given the previous result, this is presumably because the lower-skilled subjects in the Human + Ideator condition improved enough to make the overall improvement in that condition significant, even though this was not true for the other two conditions.

To examine the moderating effects of age, sex, and ethnicity, we added these variables as additional covariates in the linear regression model. Participant age and sex did not significantly moderate the relationship between performance on the baseline task and innovativeness ratings for any of the conditions (all ps > .19). In general, participant ethnicity did not play a significant role either. However, for those in the Human + Ideator condition, those identifying as white had a significant positive association between their performance on the baseline task and their performance on the experimental task (b = 1.64, z = 2.31, p = .02).

Timing

To examine whether people in the Human Only, Human + Ideator, and Human + ChatGPT conditions spent different amounts of time on the idea generation task, we planned a one-way ANOVA. Because the data were not normally distributed, we instead conducted a Kruskal–Wallis non-parametric test to evaluate the differences among the three experimental conditions.

We found a significant difference among the three experimental conditions in the amount of time spent on the idea generation task (H = 16.07, p = .0003). Specifically, those in the Human Only condition spent significantly less time on the task (M = 3.70, SD = 2.73) than those in the Human + Ideator (M = 6.75, SD = 5.19) and Human + ChatGPT (M = 7.52, SD = 6.89) conditions. However, the difference in time spent between the Human + Ideator and Human + ChatGPT conditions was not significant (see Figure 6).

Figure 6. Comparison between the Human + Ideator, Human + ChatGPT, and Human Only conditions in terms of the amount of time spent on the idea generation task.
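As a sketch of the test used above, the function below computes the tie-corrected Kruskal–Wallis H statistic for three groups. With three groups, H is compared against a chi-square distribution with 2 degrees of freedom, whose survival function is simply exp(-H/2); plugging in the reported H = 16.07 gives p ≈ .0003, and the H = 3.59 reported later for the number of submitted ideas gives p ≈ .17, consistent with the text. The function names and example data are illustrative; a real analysis would normally use a statistics package.

```python
import math
from collections import Counter


def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of sorted positions i..j, converted to 1-based
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg
        i = j + 1
    return ranks


def kruskal_wallis(*groups):
    """Tie-corrected Kruskal-Wallis H for exactly three groups, with its p-value."""
    if len(groups) != 3:
        raise ValueError("the closed-form p-value below assumes df = 2 (three groups)")
    pooled = [x for g in groups for x in g]
    N = len(pooled)
    ranks = average_ranks(pooled)
    h, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        h += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (N * (N + 1)) * h - 3 * (N + 1)
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    h /= 1 - ties / (N ** 3 - N)  # correction for tied values
    return h, math.exp(-h / 2)  # chi-square(2) survival function


print(kruskal_wallis([1, 2, 3], [4, 5, 6], [7, 8, 9]))
```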

Furthermore, age played a significant role in the relationship between experimental condition and time spent on the experimental task, but only for those in the Human + ChatGPT condition. That is, older participants who were assigned to the Human + ChatGPT condition tended to spend longer on the idea generation task (b = 0.13, z = 2.58, p = .01), although age did not significantly moderate the relationship between experimental condition and time spent on the idea generation task for those in the Human Only (b = 0.06, z = 1.00, p = .32) or Human + Ideator (b = 0.10, z = 1.42, p = .16) conditions. Neither participant sex nor ethnicity significantly moderated the relationship between experimental condition and time spent on the idea generation task (all ps > .13).

Furthermore, to examine whether individuals who spent more time on the idea generation task tended to submit ideas with higher innovativeness ratings, we conducted a linear regression with duration predicting innovativeness. Overall, we found a significant positive relationship between time spent on the idea generation task and innovativeness ratings (b = 0.01, z = 2.29, p = .02). That is, those who spent more time on the task tended to submit ideas that were seen as slightly more innovative. This association remained significant after controlling for participant age, sex, and ethnicity.

When we look more closely at this relationship, we find that individuals in the Human Only condition who spent more time on the task tended to receive significantly higher innovativeness ratings (b = 0.05, z = 2.55, p = .01), but this association was not significant for those in the Human + Ideator (b = −0.00, z = −0.24, p = .81) or Human + ChatGPT (b = 0.01, z = 1.23, p = .22) conditions (see Figure 7).

Figure 7. Association between the amount of time taken on the idea generation task and the innovativeness of ideas, by condition.

In other words, just as the previous section found that subjects’ skill did not have much effect on innovativeness when they used Ideator or ChatGPT, here we find that spending more time did not have much effect either when they used these tools.

Finally, the relationship between time spent on the idea generation task and the number of ideas submitted was not significant (b = 0.05, z = 1.54, p = .13). In other words, those who spent more time on the task submitted a similar number of ideas to those who spent less time (see Figure 8).

Figure 8. Association between the amount of time taken on the idea generation task and the number of ideas submitted.

Number of conversational turns

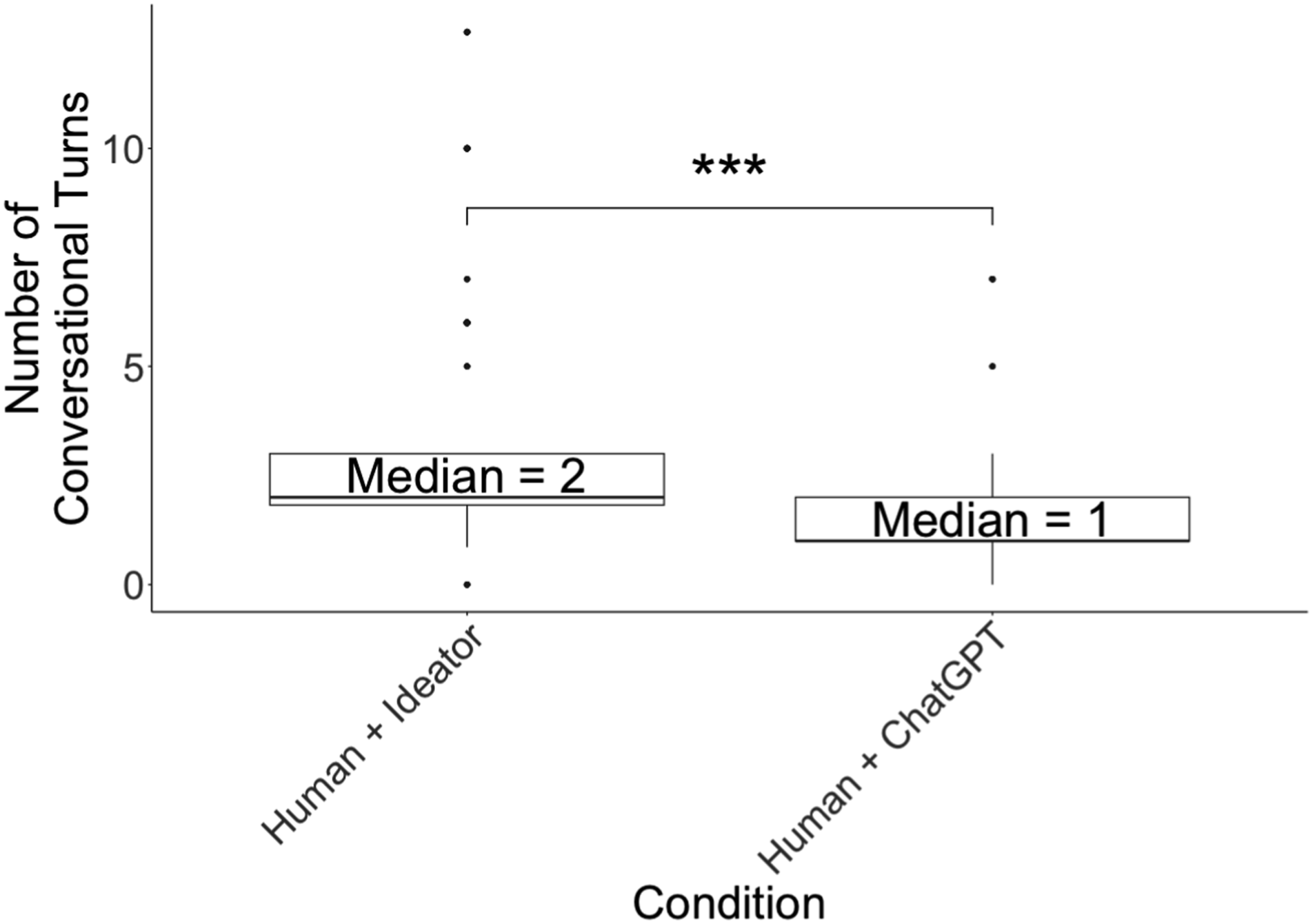

By tracking individual usage of the two treatment systems (Ideator and ChatGPT), we were able to compare how many times the users interacted with the system, which we call “conversational turns” with the machine. In the Human + ChatGPT condition, we count one turn for each time a user sent a message and received a response. In the Human + Ideator condition, we count one turn for each time a user submitted a problem statement (to Explore Problem, Explore Solution, or other individual moves) and received back one or more ideas.
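The turn-counting rule just described can be expressed compactly. In this hypothetical sketch, each logged request is a (participant, number returned) pair; the log format and the function name are assumptions, not the study's actual instrumentation. A request counts as a turn only if the system returned at least one response (ChatGPT) or idea (Ideator).

```python
from collections import Counter


def count_turns(requests):
    """Count conversational turns per participant.

    requests: iterable of (participant_id, n_returned) pairs, one per request sent to
    the system (a ChatGPT message, or an Ideator problem statement submitted to a move).
    n_returned is how many responses (ChatGPT) or ideas (Ideator) came back.
    """
    # A request counts as a turn only if the system returned something.
    return Counter(pid for pid, n_returned in requests if n_returned >= 1)


log = [("p1", 1), ("p1", 1), ("p2", 5), ("p2", 0), ("p2", 3)]  # made-up example log
print(count_turns(log))
```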

A Wilcoxon rank-sum test indicated that participants in the Human + Ideator condition (M = 3.11, Mdn = 2) went through significantly more conversational turns than those in the Human + ChatGPT condition (M = 1.54, Mdn = 1; W = 12,278.50, p < .0001, effect size r = 0.42). In other words, those using Ideator took approximately 3 conversational turns on average before finishing the creative problem-solving task, whereas those using ChatGPT took fewer than 2 (see Figure 9).

Figure 9. Comparison between the Human + ChatGPT and Human + Ideator conditions in terms of the number of conversational turns taken by idea generators.
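The sketch below shows one common way to compute a rank-sum statistic and the effect size r = |z| / √N reported above, using a normal approximation with average ranks for ties. It is an illustration under stated assumptions: W follows R's `wilcox.test` convention (rank sum of the first group minus n₁(n₁ + 1)/2), no continuity correction is applied (R applies one by default), the function name is ours, and the text does not state exactly how the authors obtained r.

```python
import math
from collections import Counter


def rank_sum_test(x, y):
    """Wilcoxon rank-sum W, normal-approximation z, and effect size r = |z| / sqrt(N)."""
    pooled = list(x) + list(y)
    n1, n2, N = len(x), len(y), len(x) + len(y)
    # First 1-based position of each distinct value in sorted order
    first = {}
    for pos, v in enumerate(sorted(pooled), start=1):
        first.setdefault(v, pos)
    counts = Counter(pooled)
    # Tied values share the average of the positions they occupy
    avg_rank = {v: first[v] + (counts[v] - 1) / 2 for v in counts}
    w = sum(avg_rank[v] for v in x) - n1 * (n1 + 1) / 2
    ties = sum(t ** 3 - t for t in counts.values())
    var = n1 * n2 / 12 * ((N + 1) - ties / (N * (N - 1)))  # tie-corrected variance of W
    z = (w - n1 * n2 / 2) / math.sqrt(var)
    return w, z, abs(z) / math.sqrt(N)


# Made-up turn counts for two small groups
print(rank_sum_test([4, 5, 6], [1, 2, 3]))
```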

Because a problem statement submitted to Ideator generated more ideas than a problem statement submitted to ChatGPT, Ideator users saw significantly more software-generated ideas than ChatGPT users (M Ideator = 17.00, Mdn Ideator = 13; M Chat = 1.54, Mdn Chat = 1; W = 1329.00, p < .0001, effect size r = 0.83; see Figure 10).

Figure 10. Comparison between the Human + ChatGPT and Human + Ideator conditions in terms of the number of ideas seen by each idea generator.

Although users in the Human + Ideator condition took more conversational turns with the system and saw significantly more ideas than users in the Human + ChatGPT condition, they did not submit more unique textual inputs (e.g., problem statements) than users in the ChatGPT condition (M Ideator = 1.45, M Chat = 1.33; t (96.09) = 0.49, p = .63).

Overall, most users in the Human + Ideator and Human + ChatGPT conditions (65.05%) submitted a single textual input and did not change it as they engaged with their respective system. A smaller set of users submitted 2 or 3 unique inputs (21.36%), and an even smaller set submitted more than 3 unique inputs (3.88%).¹

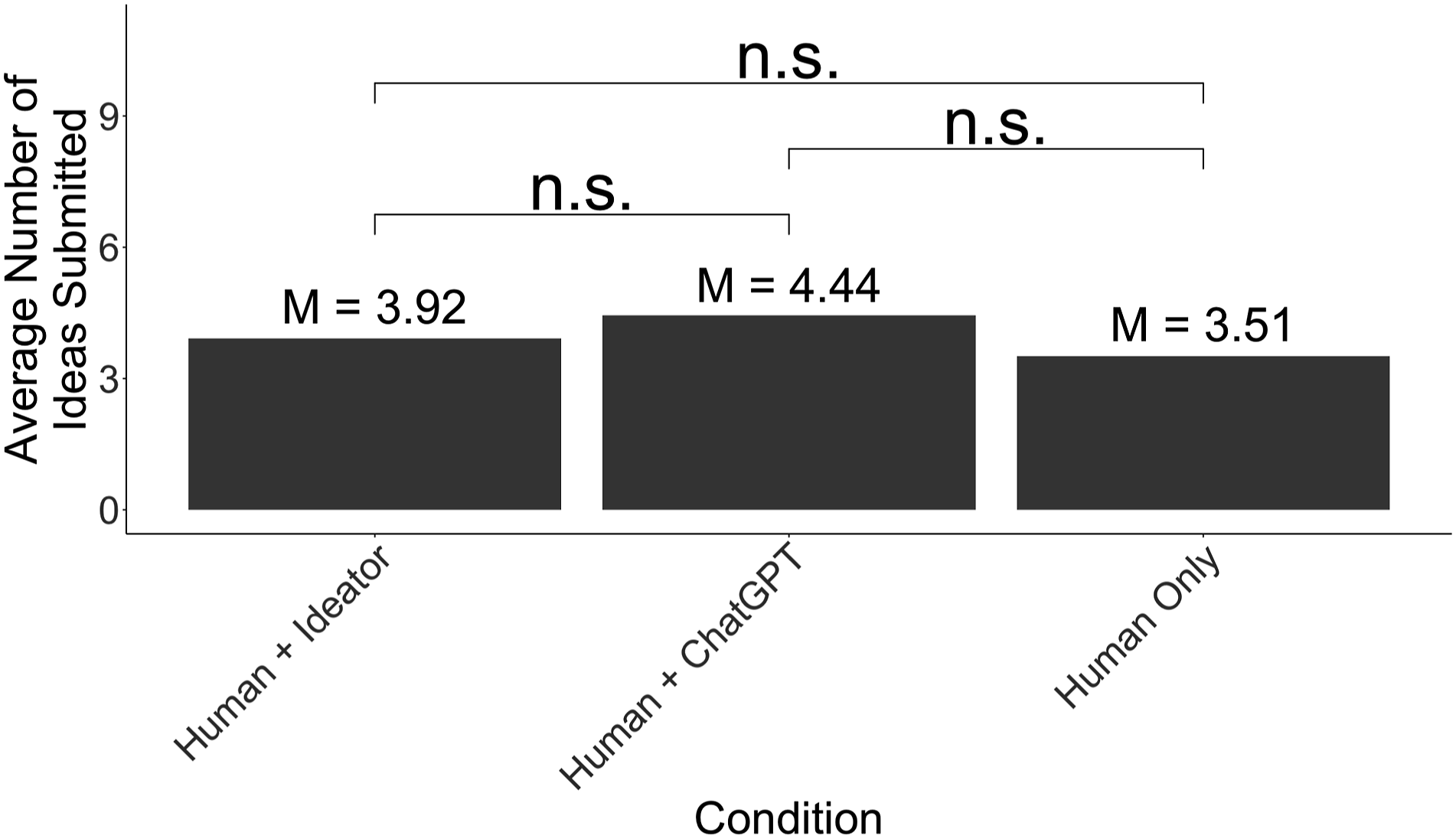

Additionally, we examined whether the number of final ideas submitted differed among the Human + Ideator, Human + ChatGPT, and Human Only conditions. A Kruskal–Wallis test indicated no significant difference in the number of ideas submitted by those in the Human + Ideator (M = 3.92, SD = 2.64), Human + ChatGPT (M = 4.44, SD = 2.39), and Human Only (M = 3.51, SD = 1.49) conditions (H = 3.59, p = .17; see Figure 11).

Figure 11. Comparison between the experimental conditions in terms of the average number of ideas submitted by idea generators.

Overall, since users in all three conditions submitted approximately the same number of ideas, but users in the Human + Ideator condition saw more ideas and therefore had more to choose from, this may help explain why the ideas from Ideator users were rated as more innovative.

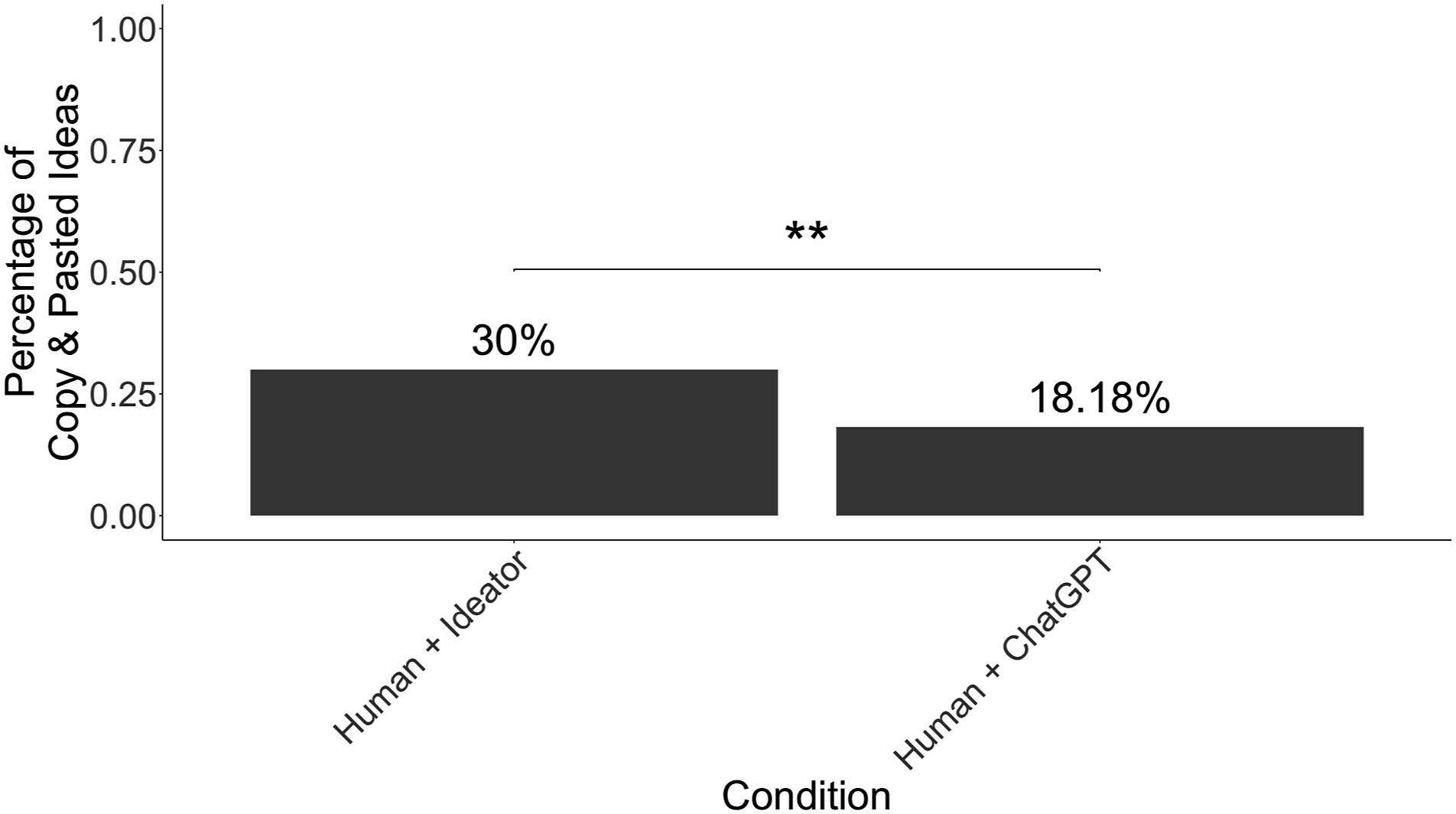

Copy and paste

To examine whether the extent to which participants copied and pasted responses directly from the system differed between the two treatment conditions (Human + Ideator and Human + ChatGPT), we conducted a chi-square test of independence. Participants in the Human + Ideator condition copied and pasted their answers from the system significantly more often than those in the Human + ChatGPT condition: 30% of ideas submitted in the Human + Ideator condition were copy-pasted directly from the system, compared with 18.18% of ideas submitted in the Human + ChatGPT condition (χ²(1, N = 431) = 7.65, p = .006; see Figure 12). This suggests that users found ideas generated by Ideator more directly usable than those generated by ChatGPT.

Figure 12. Comparison between the Human + ChatGPT and Human + Ideator conditions in terms of the percentage of ideas copied and pasted directly from the system.
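The reported statistic can be reproduced from the percentages. Assuming the counts were 60 of 200 copy-pasted ideas for Human + Ideator (30%) and 42 of 231 for Human + ChatGPT (18.18%), a 2 × 2 chi-square test with Yates' continuity correction (the default for 2 × 2 tables in R's `chisq.test`) gives χ² ≈ 7.65 and p ≈ .006; without the correction the statistic would be about 8.29. The reconstructed counts and the use of the correction are inferences from the numbers, not details stated in the text, and the function name is ours.

```python
import math


def chi2_2x2_yates(table):
    """Chi-square test of independence for a 2x2 table with Yates' continuity correction.

    Returns (chi2, p) with 1 degree of freedom.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals, col_totals = (a + b, c + d), (a + c, b + d)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            # Yates: shrink each |O - E| by 0.5 (but not below zero) before squaring
            chi2 += max(abs(observed - expected) - 0.5, 0.0) ** 2 / expected
    # Chi-square(1) survival function: P(X > x) = erfc(sqrt(x / 2))
    return chi2, math.erfc(math.sqrt(chi2 / 2))


# Counts reconstructed from the reported percentages (assumption)
table = [[60, 200 - 60],   # Human + Ideator: copied, not copied
         [42, 231 - 42]]   # Human + ChatGPT: copied, not copied
chi2, p = chi2_2x2_yates(table)
print(f"chi2(1, N = 431) = {chi2:.2f}, p = {p:.3f}")
```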

Furthermore, we conducted independent-samples t tests to examine whether innovativeness ratings differed between ideas that were copy-pasted and ideas that were not. Copy-pasted ideas tended to be rated as more innovative, although this effect was stronger in the Human + Ideator condition (t = 10.94, p < .0001) than in the Human + ChatGPT condition (t = 2.73, p = .006). This suggests that humans may be better at recognizing innovative ideas than at generating them.

Moves

Of the 30 moves that Ideator can present to help users process their problem statements, the results of only 13 were copy-pasted directly from the system and submitted as ideas. Interestingly, which of these moves produced the most and least innovative ideas differed depending on the problem statement participants were exploring. Specifically, for the work-life balance problem statement, ideas generated by Cognify—Create (Zero-shot) were rated as the most innovative (M = 3.80, Mdn = 4), whereas ideas generated by Zoom Out—Parts were rated as the least innovative (M = 2.63, Mdn = 3). In contrast, for the fake news problem statement, ideas generated by Technify (Zero-shot) were rated as the most innovative (M = 3.89, Mdn = 4), whereas ideas generated by Analogize were rated as the least innovative (M = 2.86, Mdn = 3).

Looking more closely, we found that the innovativeness of submitted ideas generated by the different move implementations (few-shot, fine-tune, and zero-shot) also depended on which problem statement participants were exploring. For the work-life balance problem statement, there was no significant difference in the innovativeness ratings of ideas from few-shot (M = 3.56, Mdn = 4), fine-tune (M = 3.16, Mdn = 3), and zero-shot moves (M = 3.54, Mdn = 4; F (2, 104.67) = 2.56, p = .08). However, for the fake news problem statement, ideas from zero-shot moves were rated as significantly more innovative than ideas from few-shot moves (M zero-shot = 3.89, M few-shot = 3.57, t = 3.23, p = .001). No ideas from fine-tuned moves were submitted by participants who saw the fake news problem statement.

Together, these results suggest that the most helpful moves might differ depending on the type of problem users are exploring when using Ideator. We discuss this in greater detail in the discussion section.

In addition, how often generated ideas were submitted as final ideas differed by the type of move implementation (χ²(2, N = 51) = 35.41, p < .0001; see Figure 13). The most common was few-shot (N = 36), followed by zero-shot (N = 13) and fine-tune (N = 2). This suggests that users did not highly value the results of fine-tune moves. And since creating the training data for fine-tune moves requires much more effort than creating zero-shot or few-shot moves, this suggests that, at least for the fine-tune examples we used, the additional effort may not have been worth the cost.

Figure 13. Frequency of move implementation in submitted ideas.
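The χ² value above is consistent with a goodness-of-fit test against equal expected frequencies across the three implementation types (51 / 3 = 17 ideas each): (36 − 17)²/17 + (13 − 17)²/17 + (2 − 17)²/17 = 602/17 ≈ 35.41. The sketch below makes that inferred calculation explicit; the equal-frequency null hypothesis is our assumption, not something stated in the text, and the function name is ours.

```python
import math


def chi2_goodness_of_fit(observed):
    """Chi-square goodness-of-fit test against equal expected frequencies.

    Returns (chi2, df, p). exp(-x/2) is the chi-square survival function only for
    df = 2, so this sketch requires exactly three categories.
    """
    k = len(observed)
    if k != 3:
        raise ValueError("the closed-form p-value below assumes df = 2")
    expected = sum(observed) / k
    chi2 = sum((o - expected) ** 2 / expected for o in observed)
    return chi2, k - 1, math.exp(-chi2 / 2)


# Submitted ideas by move implementation: few-shot, zero-shot, fine-tune
chi2, df, p = chi2_goodness_of_fit([36, 13, 2])
print(f"chi2({df}, N = 51) = {chi2:.2f}, p = {p:.1e}")
```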

Discussion

As generative AI systems (and LLMs in particular) continue to be developed and deployed in real world applications, our work suggests several implications for researchers who study these technologies and practitioners who apply them. In particular, we show how specialized front ends that support systematic processes for specific problem types can scaffold human-AI interactions in useful ways.

Specialized front ends

While chat-based LLMs are usually very easy to use and can be used for a wide range of applications, this work demonstrates that there are also situations where specialized front ends can be valuable. As our results show, human users of Ideator were better able to generate innovative solutions to complex problems than human users of ChatGPT.

We believe this is partly because most users are not expert prompt engineers, and they may not be very good at using a chat-based interface to carry out a structured process for a specific kind of task. But as the Supermind Ideator illustrates for the task of creative problem-solving, a specialized front end can include (a) carefully selected prompts that are known to achieve good results for this task and (b) customized UIs that greatly simplify using these prompts in a structured process with inputs specific to a user’s problem.

Systematic processes

For tasks with codified methodologies that humans use, there is value in guiding users through a process. Unlike a chat-based interface that tries to gratify the user at each step of the chat, a specialized front-end can act more like a human facilitator who guides the user through the activities specified in the methodology. For example, in the creative problem-solving domain, human facilitators often take participants through a long process to break them out of their own fixations and find new ways of understanding the problem before exploring possible solutions. The Supermind Ideator supports this process without human facilitators by guiding users to explore the problem space first followed by the solution space. Indeed, we found that users of Ideator tended to engage with the system more than users of ChatGPT.

Importantly, however, approximately 30% of the ideas submitted by Ideator users were taken verbatim from Ideator's results, rather than used as a jumping-off point for further revision. We believe this role for humans as “curators” of AI-generated ideas is an important one, and our results showed that copy-pasted ideas received higher innovativeness ratings.

In fact, this may be one reason why the less-skilled users appeared to benefit more from using Ideator (see Figure 5). Even if these users did not have many innovative ideas themselves, they could still recognize and submit innovative ideas generated by Ideator. This effect of generative AI benefiting lower-skilled workers more than higher-skilled ones has been found by a number of other researchers (Noy and Zhang, 2023; Brynjolfsson et al., 2023; Campero et al., 2022).

In the future, however, we also hope to encourage Ideator users to reflect and iterate upon the Ideator results which, ultimately, may contribute to even better ideas being formed.

Problem types

There is a near-infinite range of complex problems in the world, from personal to socio-political. And different types of problems can often benefit from specialized knowledge or approaches. For example, the Ideator includes the three supermind design moves, which are especially relevant for designing collectively intelligent systems. And as noted below, it may also be useful to add new moves for other types of problems.

In addition, even within a given domain, some moves may be more useful than others for specific problems. For example, we demonstrated here that the most innovative move provided by Ideator tended to differ depending on which problem statement was being examined. Although Ideator provides a wide array of moves, it does not currently tailor the moves to specific types of problems. As such, we expect one focus of our future work to involve suggesting specific moves or sequences of moves for specific problem types and situations.

Recombination of ideas

An important part of creative problem-solving is often selecting fragments of ideas that can be combined with others. Our UI supports this process in part by letting users bookmark key results, reducing the risk that good ideas get lost in the avalanche of text that LLMs can produce. We believe future interfaces should also explicitly support idea synthesis and recombination, for example, by letting users rate subparts of an idea (at the word or sentence level) rather than only the idea as a whole.

Community-based extension

Although we have not yet demonstrated this capability, the structure of Ideator suggests how a specialized front end and a flexible back end could form a platform for community creation and evolution. For example, an open-source community could collectively improve or add individual “moves,” or run combinations of moves within custom UIs. We hope that our contribution scaffolds the future design and development of tools that augment the collective abilities of many others.

Future work

It appears that systems like the Supermind Ideator have the potential to provide substantial assistance to humans doing creative problem-solving. But much work remains to be done for this potential to be fully realized, and we see at least three short-term paths forward to improve Ideator:

Adding and improving moves

One of our short-term goals is to refine the prompts and fine-tuning of our moves to improve the quality of the ideas the moves generate. In addition, it seems very promising (and feasible) to add support for new types of moves. Some of these moves might be relevant for creative problem-solving in any domain. For example, moves might be developed for techniques such as lateral thinking (De Bono, 1970) or “5 W’s and 1 H” (Brown and Katz, 2011).

It would also be possible to include moves that support other methodologies for thinking about business questions, such as Porter’s five forces (Porter, 1998) or Blue Ocean Strategy (Kim and Mauborgne, 2017). Moves could also be added to support specific topic domains such as mechanical design or building architecture.

We hope that by having an extensible and open-source API for the Supermind Ideator, communities of professionals, researchers, consultants, and others could contribute to a growing collective of knowledge about how to guide LLMs to help with specific types of ideation and creative problem solving.

Evaluating ideas

Currently, the moves we have implemented only cover the divergent aspects of idea generation: generating possible ways of re-framing the problem and generating possible solutions to the problem. As the double diamond process suggests, however, evaluating and selecting among these possibilities is also necessary to be able to actually use the results.

As described above, the thumbs-up, thumbs-down, and bookmark buttons currently provide simple tools for evaluating ideas. With these features, users can quickly skim through their previous ideas and find the ones they liked, disliked, or saved.

Looking forward, we feel that idea evaluation can be greatly expanded upon in a number of ways such as sharing ratings in a group and using previous ratings to recommend items in a new situation.

Adding exploration modes

The Supermind Ideator currently produces output in a single thread of ideas: each round of idea generation follows the previous one in a linear manner. Re-running moves allows output to be nested, but that is as far as we currently go. From our user evaluation, we found that people do not always think in linear patterns and occasionally wanted to combine outputs from different moves or otherwise reorganize the output so that they could see clusters of thought and reflect on them in a non-threaded, non-linear way.

Taking into consideration the power of affinity diagrams when brainstorming, we believe a more flexible and dynamic interface could be designed to enable users to connect their input, output, and associated ideas in less restrictive ways. For example, by giving “sticky note” like flexibility to the interface there might be increased capacity for synthesis of output.

Furthermore, if the idea generation process is thought of as traversing a graph, then an interface that treats each idea as a node could let users see which areas they have already explored deeply and which they have not yet tapped into, helping them either broaden their exploration or narrow their focus.
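To make the graph framing concrete, here is a hypothetical data-structure sketch (not part of Ideator) in which each idea is a node linked to the idea it was generated from. Counting descendants indicates how deeply a region has been explored, and listing nodes with no children shows where exploration could be broadened. The class name, methods, and example ideas are all illustrative.

```python
from collections import defaultdict


class IdeaGraph:
    """Hypothetical sketch: ideas as nodes, 'generated from' links as directed edges."""

    def __init__(self):
        self.nodes = set()
        self.children = defaultdict(list)

    def add(self, idea, parent=None):
        """Record an idea, optionally noting the idea it was generated from."""
        self.nodes.add(idea)
        if parent is not None:
            self.nodes.add(parent)
            self.children[parent].append(idea)

    def descendants(self, idea):
        """Number of ideas generated, directly or indirectly, from this idea."""
        stack, seen = list(self.children.get(idea, [])), set()
        while stack:
            node = stack.pop()
            if node not in seen:
                seen.add(node)
                stack.extend(self.children.get(node, []))
        return len(seen)

    def frontier(self):
        """Ideas that have not been expanded yet (no ideas generated from them)."""
        return sorted(n for n in self.nodes if not self.children.get(n))


g = IdeaGraph()
g.add("work-life balance")
g.add("set boundaries", parent="work-life balance")
g.add("no email after 6pm", parent="set boundaries")
g.add("delegate chores", parent="work-life balance")
print(g.descendants("work-life balance"), g.frontier())
```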

Conclusion

In this paper, we have shown how the use of generative AI technologies, like LLMs, can benefit substantially from scaffolding designed to help users perform specific kinds of tasks. In particular, we have shown how these systems can be customized to help people do creative problem-solving in a broad range of areas and then further customized to help with creative problem-solving in the specific area of designing groups of people and computers. We believe that these approaches can also be extended to include many other creativity techniques and many other techniques for other topic areas.

In the long run, we hope that tools like this will help meet one of the most important practical challenges facing the field of collective intelligence: How can we design more collectively intelligent systems of people and computers to deal with our most important problems in business, government, science, and many other areas of society?

Footnotes

Acknowledgements

We want to thank Hendrik Maier and Stephen Dwyer for their help with the early Ideator concept and Lilly Kammerling for her help with the Ideator API.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported in part by the Toyota Research Institute, the Collective Intelligence Mission of the MIT Quest for Intelligence, and the National Research Foundation, Prime Minister’s Office, Singapore under its Campus for Research Excellence and Technological Enterprise (CREATE) programme.