Abstract

Collective intelligence (CI) is an interdisciplinary field that draws on a wide range of academic disciplines but has struggled to capitalise on cross-pollination between fields, particularly ones which do not self-identify with the collective intelligence label. Past studies have largely undertaken a qualitative and manual approach to classifying different trends in the CI literature. This method risks missing a significant proportion of publications in the field. To this end, we present the first attempt to reflect the field to itself through an automated and quantitative descriptive approach using Microsoft Academic Graph (MAG) to collect and analyse 39,334 CI papers. We further focus our investigation on a subset of the CI literature, at the intersection of artificial intelligence (AI) and CI to understand how these two fields are interacting. We show that while the annual number of CI-only publications has remained steady since 2015, AI+CI research has continued to increase. Publications in the crossover of AI+CI are growing at a faster rate than CI-only papers but show less topical and disciplinary breadth. This may be having a spillover effect on the topical focus of non-AI collective intelligence research. We hope this analysis sheds more light on the dynamics of the CI ecosystem.

Keywords

Introduction

Brief history of the field and its evolution

Collective intelligence (CI) is an emerging interdisciplinary research field that draws on a wide range of academic disciplines, including sociology, economics and biology, as well as software engineering and computer science. The collective intelligence research community has embraced this thematic diversity since the field’s inception but has not yet fully capitalised on cross-pollination between related fields, particularly ones which do not self-identify with the collective intelligence label. As the field continues to mature, disciplinary breadth may pose a challenge to knowledge discovery and collaboration. There is also a risk of increased fragmentation as certain disciplines and research topics may dominate more than others and pull the field towards a narrower focus.

Several reviews of the field thus far have focussed on qualitative descriptions of frameworks and typologies rather than quantitative analyses of how the field has changed over time, often with a thematic focus (e.g. Landemore, 2012; Levy, 2010; Luo et al., 2009; Malone and Bernstein, 2015; Malone et al., 2009). For example, Yu et al. (2018) presented a literature review of historical trends in the science of CI, with a focus on its more recent iteration as crowd science. Salminen (2012) summarised studies on CI in human society at three levels of abstraction, namely micro-, macro- and emergence-level studies, while Nguyen et al. (2019) reviewed studies on CI that used crowdsourcing. In a more comprehensive systematic review, Suran et al. (2020) created a framework for organising multiple dimensions of collective intelligence research.

These analyses have typically limited their search to Web of Science (WoS) and used a largely manual approach to classify the different trends in the CI literature into an ontology. This method risks missing a significant proportion of publications in the field, particularly preprints, and says nothing of the scale or rate at which the trends are materialising. Past studies have also limited the analysis to the metadata available through the original database search. The absence of a descriptive overview of the field therefore makes it challenging to tie the efforts of different disciplines together in a coherent way. A better understanding of the span of the discipline and how it has changed in the last 20 years could make it easier for researchers to discover relevant research across scales of analysis and to collaborate.

To this end, we present the first attempt to reflect the field to itself. We used Microsoft Academic Graph (MAG) to collect and analyse 39,334 collective intelligence (CI) papers and 4354 artificial intelligence (AI)+CI papers published since 2000, which significantly increases both the size and the disciplinary breadth of the literature examined compared to previous studies. We further enriched this dataset with information about author affiliations and publication type. Our analysis maps the CI papers that have been published over a 20-year period, broader trends in the thematic focus of research, the contribution of private sector research to the literature and indicators of patterns in publication venues (journals and conferences).

We further focus our investigation on a subset of the CI literature, at the intersection of artificial intelligence (AI) and CI. We refer to this subcategory in the literature as AI+CI for the remainder of this paper. In recent years, AI has started to influence research across most academic disciplines. The field of collective intelligence has also seen the increased integration of AI into both research and practice (Glikson et al., 2019; Malone, 2018), with excitement about the potential to amplify human intelligence with AI or to overcome limitations of AI with the help of collective human intelligence (Verhulst, 2018). Realising these benefits is likely to require a deliberate and explicit research agenda (Peeters et al., 2021). Our previous research has shown that most examples of AI being applied within collective intelligence initiatives rely on crowdsourcing to collect and aggregate data, which is then used to train supervised machine learning algorithms for the classification of text or images (Berditchevskaia and Baeck, 2020). In order to better understand whether the underlying research pipeline can explain the narrow current applications of AI and collective intelligence, we undertook a mapping and analysis of the academic literature in CI and the intersection between AI+CI.

We show that while the annual number of CI-only publications has remained steady since 2015, AI+CI research has continued to increase. Publications in the crossover of AI+CI are growing at a faster rate than CI-only papers. We also find that research at the crossover between the fields covers fewer topics and shows less disciplinary breadth. This may be having a spillover effect on the topical focus of non-AI collective intelligence research. Researchers with industry affiliations increasingly author publications in AI+CI, which may be influencing the narrowing of the field. Finally, we show little overlap between the journals and conferences publishing CI-only and AI+CI research. These findings reinforce other work about the trajectory of research in AI, where mapping efforts have shown that the field of AI is narrowing to focus on a smaller range of methods, namely deep learning, and that these trends are being driven by the increasing contribution of industry to research (Frank et al., 2019; Klinger et al., 2020).

We hope this analysis sheds more light on the dynamics of the CI ecosystem and identifies trends and opportunities that help researchers, practitioners and funders make better decisions to advance the field. Additionally, we hope that it serves as a catalyst for additional quantitative studies in the field, building on our codebase.

Methodology

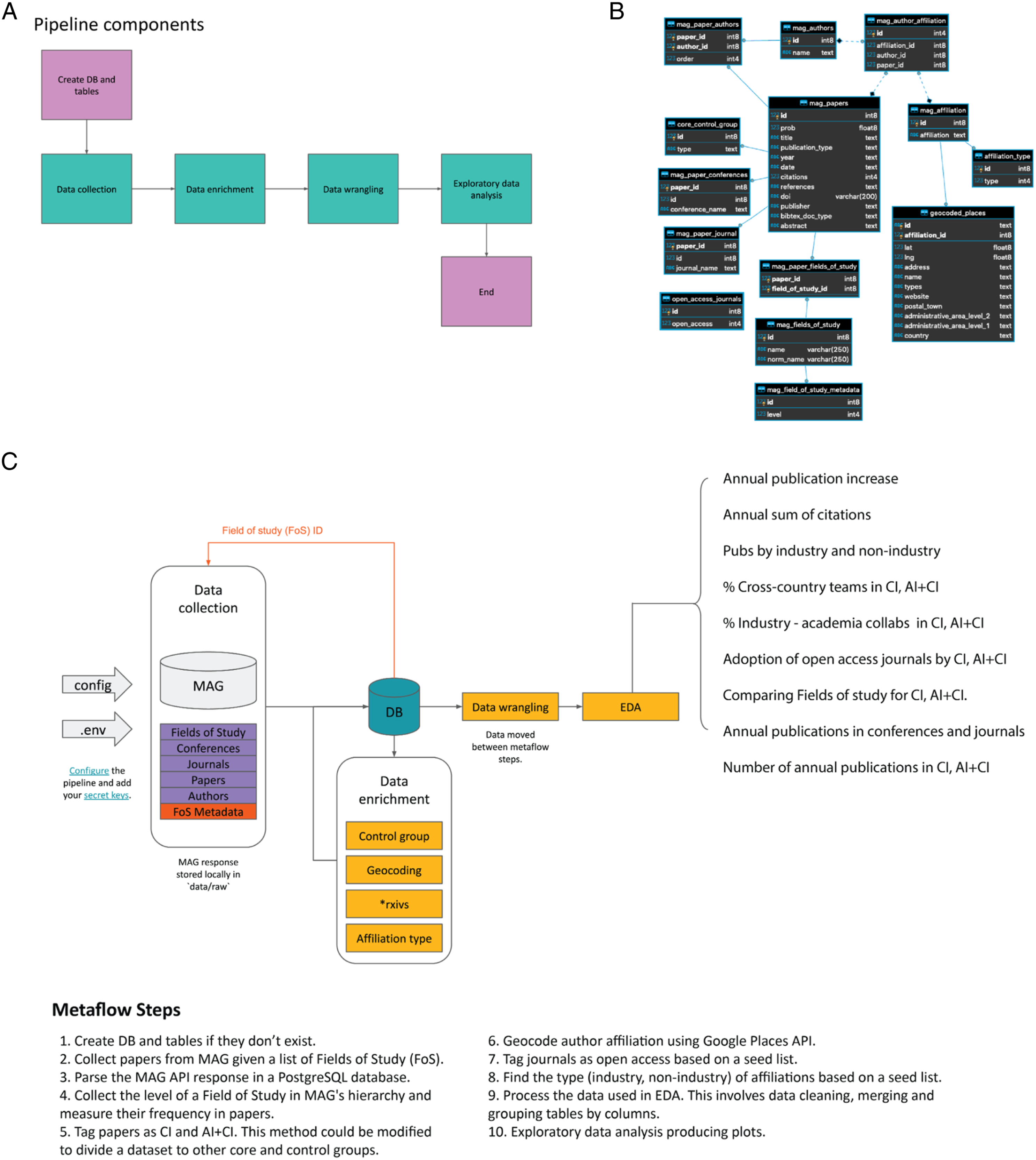

To ensure that this study can be reproduced, all code used to query the different publishers’ APIs and to produce the descriptive analysis is available online at https://github.com/kstathou/ci_mapping, with full documentation. To rerun the analysis, it is necessary to install PostgreSQL and Python and to register with MAG. Figure 1 shows the full data collection, preprocessing and analysis pipeline.

Figure 1. The data collection, enrichment and analysis pipeline. Full documentation is available at https://github.com/kstathou/ci_mapping

Dataset description

We collected data from Microsoft Academic Graph (MAG), a scientific database with more than 236M documents. MAG has been described as the largest openly available academic dataset (Herrmannova and Knoth, 2016). We queried MAG using its open application programming interface (API) with fields of study related to collective intelligence. We used two keyword lists to create our dataset: 1. 2.

Using these keywords, we tagged each publication as belonging to one of two categories: Collective Intelligence publications without any reference to AI were designated CI-only, and papers containing references to both AI and CI were tagged as AI+CI. In total, we collected 39,334 CI and 4354 AI+CI research papers alongside their metadata such as citation count, publication year and source, title and abstract, fields of study, author names and affiliations. These papers include peer-reviewed academic journal publications, conference proceedings and preprint collections such as arXiv. The latest data collection was performed on 14 July 2021.
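The tagging rule described above can be sketched roughly as follows. This is a minimal illustration and not the code from the study's repository: the two keyword sets are short placeholders (the study's actual lists are not reproduced here), `tag_paper` is a hypothetical helper name, and each paper is assumed to arrive as a list of field-of-study strings.

```python
from typing import Iterable, Optional

# Placeholder keyword sets: illustrative only, NOT the lists used in the study.
CI_KEYWORDS = {"collective intelligence", "crowdsourcing", "citizen science"}
AI_KEYWORDS = {"artificial intelligence", "machine learning"}


def tag_paper(fields_of_study: Iterable[str]) -> Optional[str]:
    """Return 'AI+CI', 'CI-only', or None for a paper's fields of study."""
    fos = {f.strip().lower() for f in fields_of_study}
    has_ci = bool(fos & CI_KEYWORDS)
    has_ai = bool(fos & AI_KEYWORDS)
    if has_ci and has_ai:
        return "AI+CI"
    if has_ci:
        return "CI-only"
    return None  # no CI keyword: the paper is outside the CI corpus


# Toy records (hypothetical paper ids and fields of study).
papers = {
    "p1": ["Crowdsourcing", "Machine learning"],
    "p2": ["Citizen science", "Ecology"],
    "p3": ["Machine learning"],
}
tags = {pid: tag_paper(fos) for pid, fos in papers.items()}
```

In this sketch a paper with AI keywords but no CI keyword is left untagged, which mirrors the idea that AI+CI is treated as a subset of the CI literature.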

Data pre-processing

We removed duplicate papers and enriched this dataset by geocoding author affiliations, tagging affiliations as industry and non-industry institutions, as well as identifying publications from open access journals. Scientific articles are indexed by keywords that span different hierarchies of fields of study (FoS). Databases that list publications typically assign keywords through a combination of manual selection by authors and automated methods. The MAG database uses topic modelling to assign FoS which are then organised into a hierarchy of four different levels, between 0 and 3. We collected the FoS for all of the publications in our dataset to explore how the popularity of different topics changed between 2000 and 2020. We used the MAG FoS level 0, the highest, to analyse the disciplinary breadth.

We also generated a preselected keyword list to understand the popularity of certain topics in the literature: ‘Social psychology’, ‘Human–computer interaction’, ‘Artificial intelligence’, ‘Data science’, ‘Environmental planning’, ‘Environmental resource management’, ‘Data mining’, ‘Distributed computing’, ‘Cognitive science’, ‘Natural language processing’, ‘Knowledge management’, ‘Ecology’, ‘Machine learning’, ‘Computer vision’, ‘Collective wisdom’, ‘Social media’, ‘Incentive’, ‘Social learning’, ‘Government’, ‘Open innovation’, ‘Crowds’, ‘Annotation’, ‘Data collection’, ‘Citizen science’, ‘Crowdsourcing’, ‘Wisdom of crowds’, ‘Collective intelligence’, ‘Training set’, ‘Swarm robotics’, ‘Social computing’, ‘Supervised learning’, ‘Smart city’, ‘Web 2.0’, ‘Open data’, ‘Social network analysis’, ‘Democracy’, ‘Ant colony’, ‘Human computation’, ‘Collaborative learning’, ‘User-generated content’, ‘Online participation’, ‘Computational intelligence’ and ‘Swarm intelligence’. These keywords were chosen inductively based on analysis of the dominant sub-topics across FoS levels 1–3 in the dataset. We analysed the changes in the relative use of the top 20 sub-topics from this list.
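One plausible way to compute the relative use of shortlisted topics per year is sketched below. It assumes that "relative use" means the share of a year's papers carrying a given topic; the function names `topic_shares` and `top_topics` and the toy records are hypothetical, and the shortlist shown is only a fragment of the list above.

```python
from collections import Counter, defaultdict


def topic_shares(papers, shortlist):
    """Share of each year's papers that carry each shortlisted topic.

    `papers` is an iterable of (year, fields_of_study) pairs.
    """
    totals = Counter()            # papers per year
    hits = defaultdict(Counter)   # year -> topic -> papers with that topic
    for year, fos in papers:
        totals[year] += 1
        for topic in set(fos) & shortlist:
            hits[year][topic] += 1
    return {
        year: {t: hits[year][t] / totals[year] for t in hits[year]}
        for year in totals
    }


def top_topics(shares, year, n=20):
    """The n most frequent shortlisted topics in a year (ties broken by name)."""
    by_year = shares[year]
    return sorted(by_year, key=lambda t: (-by_year[t], t))[:n]


# Toy records, not taken from the dataset.
shortlist = {"Collective intelligence", "Social computing",
             "Crowdsourcing", "Machine learning", "Citizen science"}
papers = [
    (2008, ["Collective intelligence", "Social computing"]),
    (2008, ["Collective intelligence"]),
    (2020, ["Crowdsourcing", "Machine learning"]),
    (2020, ["Crowdsourcing"]),
    (2020, ["Citizen science", "Ecology"]),
]
shares = topic_shares(papers, shortlist)
```

Because a paper can carry several topics, the shares for a year need not sum to one.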

Exploratory data analysis and visualisations

Our data processing pipeline automatically produces 10 HTML visualisations, mapping the trends in the CI literature. The full list can be found in Figure 1. We exported static versions of the visualisations for this article. Figures 3 and 4 are also available as interactive charts that show the annual changes in FoS at https://github.com/kstathou/ci_mapping

Several limitations of our approach are worth mentioning as they influence what we were able to capture and the subsequent framing of the results. First, our choice of search terms to capture the collective intelligence literature includes both a selection of classic terms associated with the field (wisdom of crowds, collective intelligence) and terms such as social computing, citizen science and crowdsourcing, which are more likely to be associated with applied methods being used by practitioners. These choices carry the risk of overrepresenting certain sub-topics while excluding others. Second, although our use of MAG allowed us to expand the search beyond the long-form publications in WoS, it has some missing metadata. For example, information about the source of publications (journals and conferences) was only available for 22% and 35% of the AI+CI and CI papers, respectively. The subsample for which we have publication source information may be skewed in some way, so we present these findings cautiously.

Results

The number of CI articles published increased yearly between 2000 and 2015, with the highest rate of increase between 2010 and 2015. Since 2015, the number of articles has stabilised to between 3750 and 3850. The peak of CI-only publications occurred in 2017 with 3985 articles. In contrast, publications in the crossover of AI+CI have continued to increase annually since 2000, reaching an absolute peak in 2020 of just under 600 articles (see Figure 2).

Figure 2. Changes in publications about collective intelligence and AI+CI.

Collective intelligence research shows more disciplinary and topic breadth than AI+CI

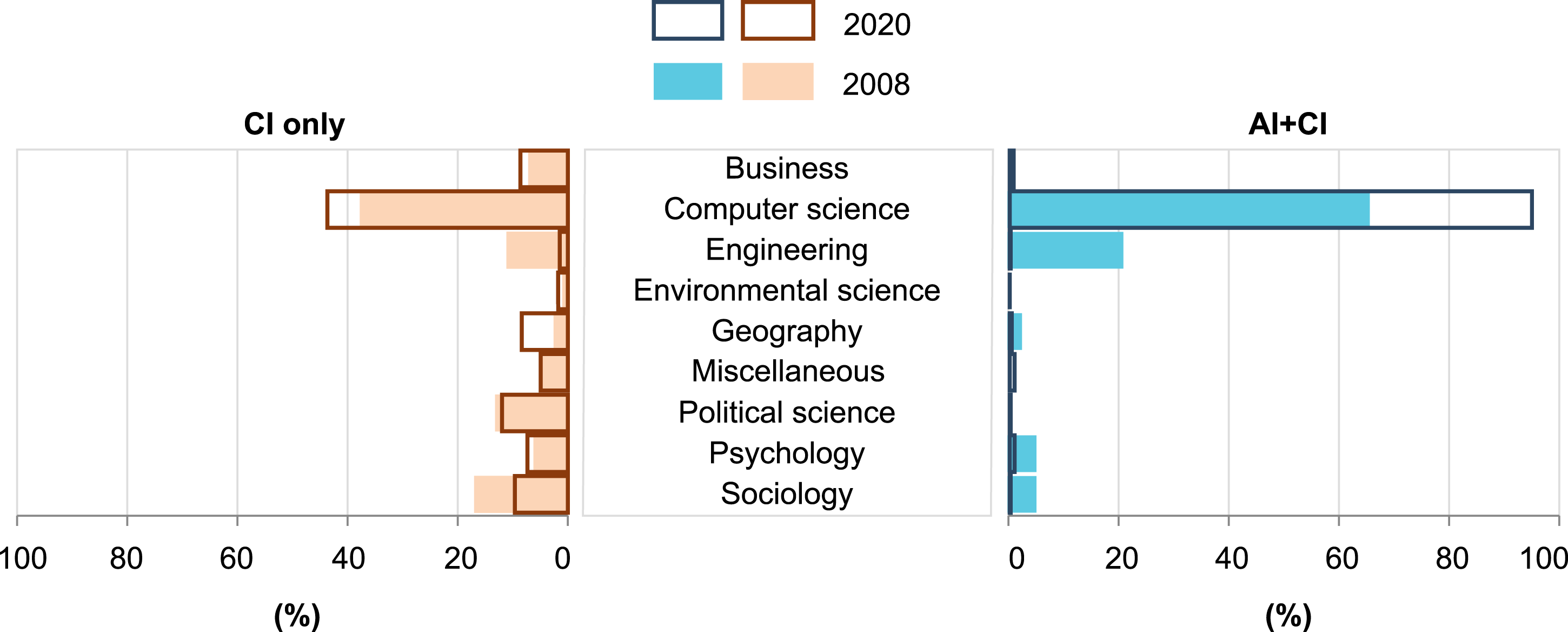

We used the FoS assigned by MAG within their level 0 hierarchy to analyse the disciplines that were producing CI articles. Figure 3 shows the changes in the disciplinary contributions to the literature between 2008 and 2020. We chose 2008 as the base year because it was the first year that the field saw more than 500 CI articles published. Over the last 20 years, CI research has maintained high disciplinary breadth, with publications spanning Political Science, Engineering, Sociology, Computer Science and more.

Figure 3. Disciplinary diversity of collective intelligence publications in 2008 and 2020.

In contrast, more than 80% of AI+CI publications in 2008 were classified as Computer Science or Engineering, with a small percentage in Sociology, Psychology and Geography. Since then, Computer Science has come to dominate AI+CI publications entirely. CI-only research is also increasingly associated with Computer Science (more than 35% of publications since 2008), while publications from Engineering and Sociology have decreased by 5%. The proportion of CI-only publications in Geography has also risen, to 8%. In 2020, researchers from Business, Sociology, Psychology and Political Science all contributed ∼10% of CI-only articles.

AI-focused collective intelligence research is dominated by supervised machine learning

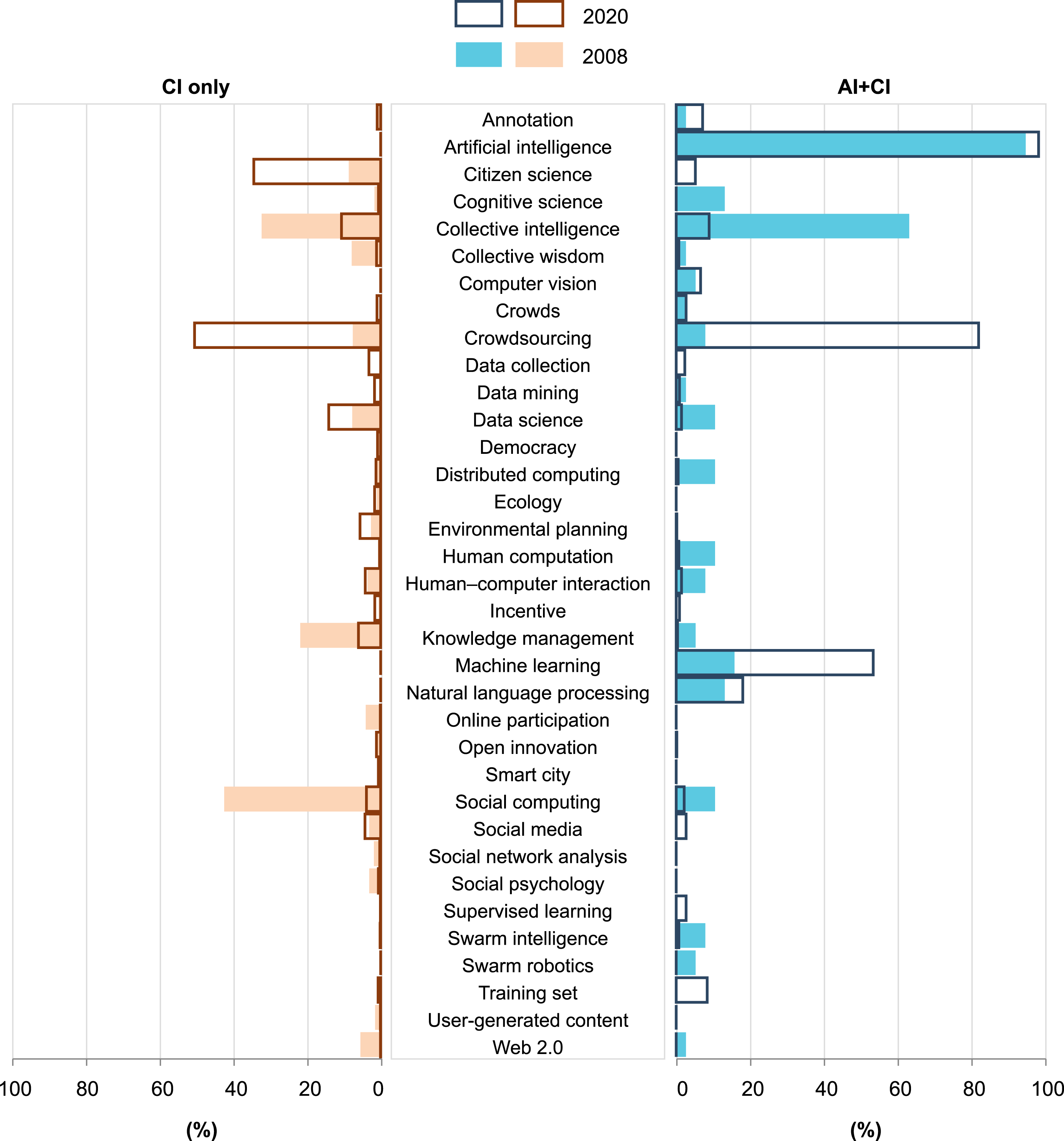

The relative frequency of the topics in the CI literature has shifted considerably in the last 20 years. We used a preselected shortlist to analyse the frequency of topics within the dataset, comparing CI-only publications with the AI+CI subset. Figure 4 shows the direct comparison between frequently occurring topics in 2008 and 2020. As a topic, the relative frequency of ‘collective intelligence’ has decreased significantly in both subsets of the data, from 33% to 10% for CI-only and from 63% to 9% in AI+CI publications. For CI-only articles, there has been a corresponding increase in the relative popularity of citizen science and crowdsourcing, which occur in one third (34%) and half (50%) of all CI-only articles, respectively. At the same time, ‘social computing’ and ‘knowledge management’ have both decreased in popularity, from 42% to 4% and from 22% to 6%, respectively.

Figure 4. Topics in collective intelligence publications for 2008 and 2020.

Since 2008, there has been a significant increase in the dominance of two topics in AI+CI research, namely ‘crowdsourcing’ (82% of papers) and ‘machine learning’ (54% of papers). This trend, together with the appearance of four new topics (‘citizen science’, ‘supervised learning’, ‘training set’ and ‘annotation’), suggests that most articles published in the crossover of the collective intelligence and artificial intelligence literature focus on the use of collective intelligence for annotation. Overall, AI+CI may be shifting away from a more integrated human-machine interaction (as evidenced by the decreased frequency of the terms ‘social computing’ and ‘human computation’, from 10% in 2008 to ≤2% in 2020). There is also evidence of a narrowing of AI+CI research to focus on machine learning and associated techniques such as computer vision and natural language processing, with the disappearance of topics such as swarm robotics, swarm intelligence and distributed computing (6, 8 and 10% of AI+CI publications in 2008, respectively).

Industry is the key driver for AI+CI research

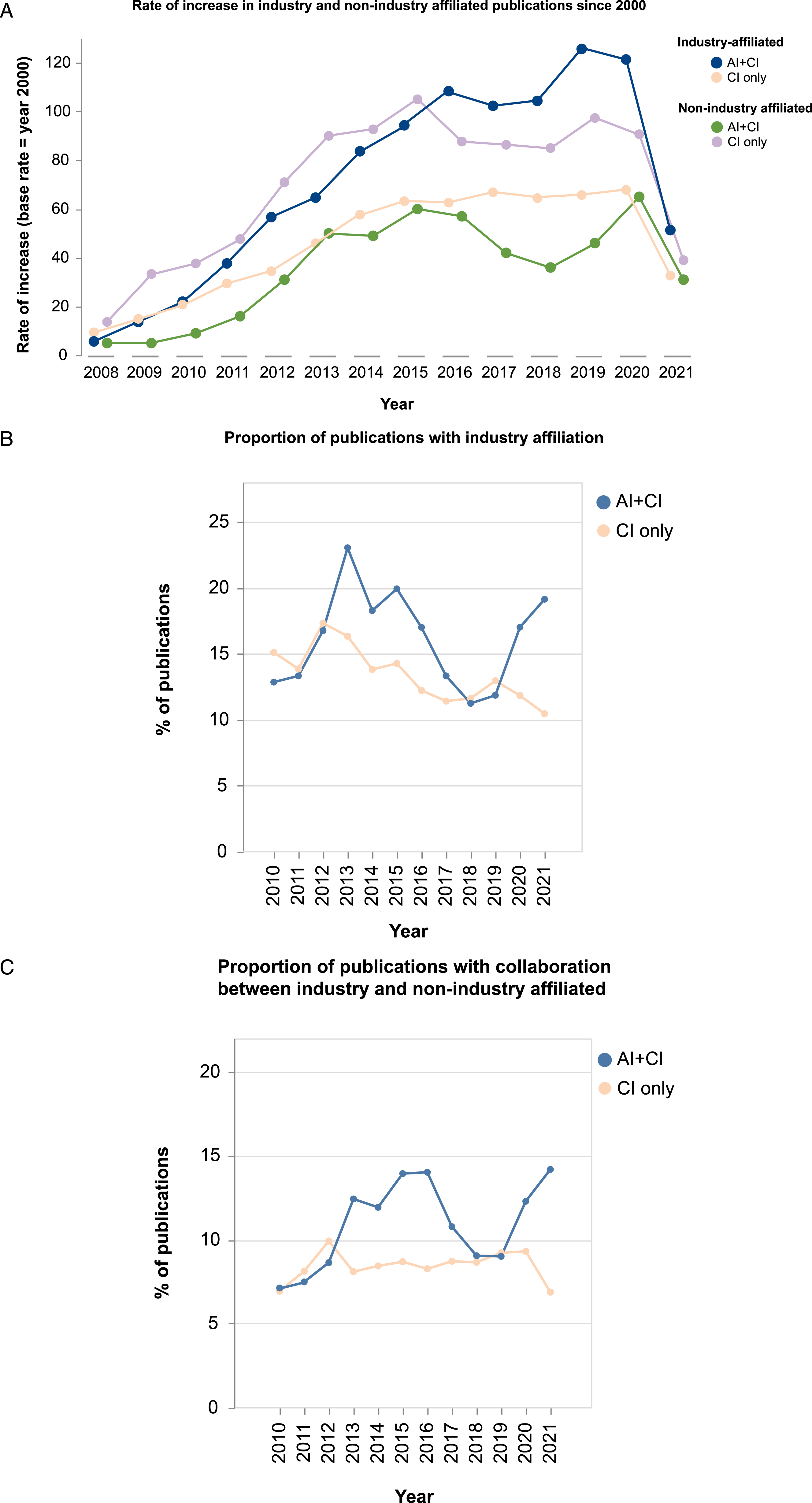

We collected metadata about authors’ affiliations for each of the papers in our dataset. We then assigned industry or non-industry labels based on the type of institution authors were affiliated with to understand the relative importance of industry-led research to publications in the field. Since 2010, AI+CI publications from industry have been increasing faster than CI-only industry publications, measured taking the year 2000 as the base rate (Figure 5(a)). Microsoft is responsible for the highest share of AI+CI publications amongst big tech companies. Non-industry AI+CI publications have also shown an increase on the base year but at half the rate of industry publications. For CI-only publications, we observed a reversal of this trend. Both industry and non-industry affiliated publications showed an increase on the base rate, but non-industry affiliated papers grew at a faster rate than industry-affiliated publications. We also analysed industry-affiliated publications as a proportion of total AI+CI and CI-only publications each year (Figure 5(b) and (c)). In general, AI+CI research has had a higher proportion of cross industry-academia collaboration (between 10 and 15% of all published papers since 2013) than CI alone, apart from 2018 and 2019, when both subsets of our data showed a similar proportion of collaborations between industry and non-industry (Figure 5(b)).

Figure 5. The role of industry in CI-only and AI+CI publications over time.
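The two measures used in this section, growth relative to a base year and industry papers as a share of each year's total, can be illustrated with a short sketch. The exact formula behind Figure 5 is not stated in the text, so this assumes the index is simply each year's count divided by the base-year count; the function names and all counts below are made up for illustration.

```python
def index_to_base(counts, base_year):
    """Express yearly counts as multiples of the base-year count."""
    base = counts[base_year]
    if base == 0:
        raise ValueError("base-year count must be non-zero")
    return {year: n / base for year, n in sorted(counts.items())}


def industry_share(industry_counts, total_counts):
    """Industry-affiliated papers as a fraction of all papers, per year."""
    return {
        year: industry_counts.get(year, 0) / total
        for year, total in sorted(total_counts.items())
        if total  # skip years with no papers
    }


# Made-up counts, NOT results from the study.
industry_aici = {2000: 2, 2010: 6, 2020: 40}
all_aici = {2000: 20, 2010: 60, 2020: 400}

growth = index_to_base(industry_aici, base_year=2000)
share = industry_share(industry_aici, all_aici)
```

An index of this kind makes series of very different sizes comparable on one chart, but it is sensitive to a small base-year count, which is worth keeping in mind when the base year has few papers.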

Journals, conferences and preprints

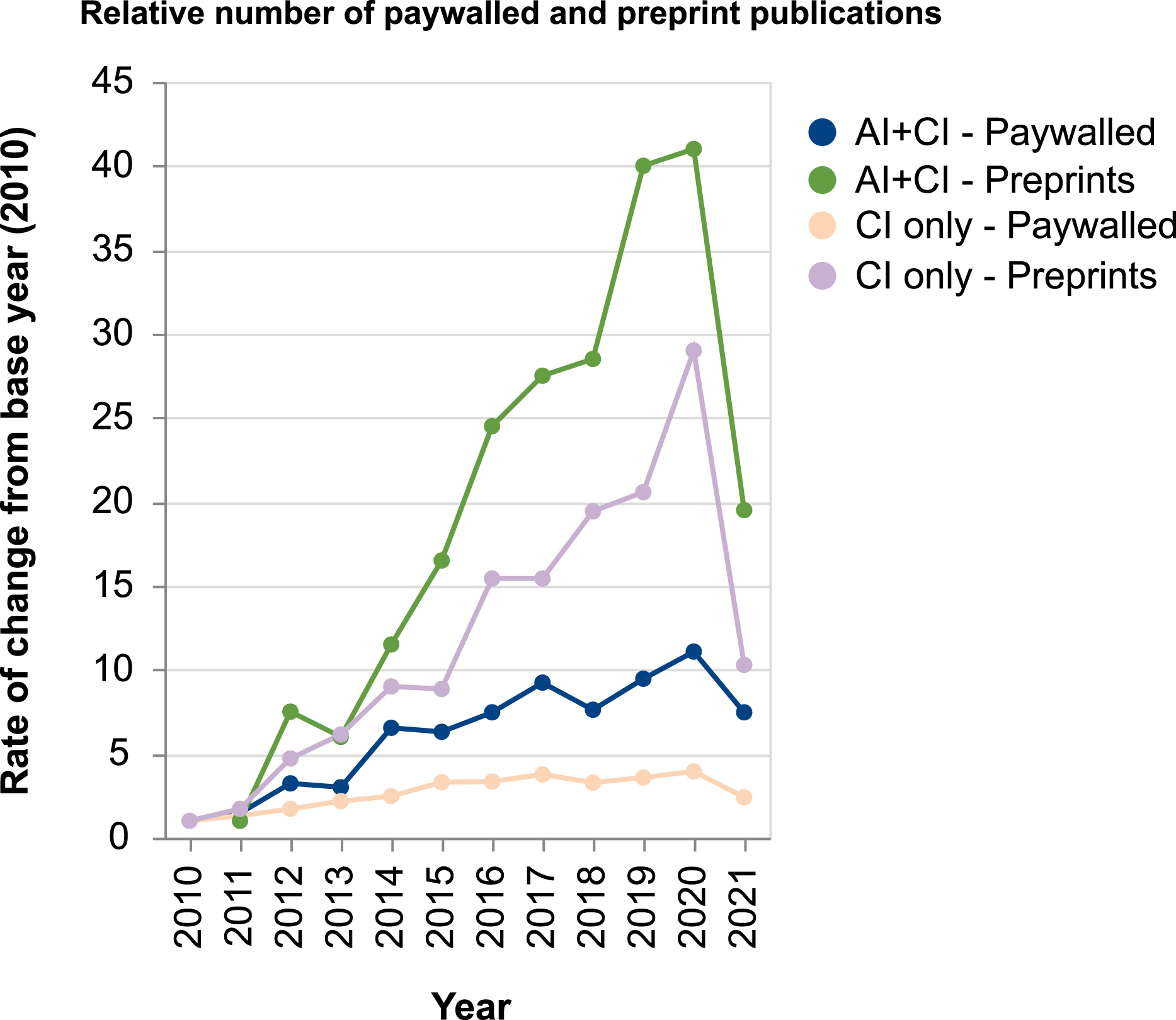

We were able to obtain the source of publications (journal, preprint repositories and conferences) for 22% and 35% of the AI+CI and CI papers, respectively. For the subset of publications that had journal information available, we looked at the number of articles being published as preprints versus paywalled publications (Figure 6). We show this as the year-to-year increase in publications relative to the base year 2010. Since 2012, the number of both CI and AI+CI articles being published as preprints has shown an annual increase. Paywalled publications of AI+CI research have also followed this trend, while CI-only paywalled papers have shown the smallest increase relative to 2010.

Figure 6. Relative change in paywalled and preprint publications between 2010 and 2021.

Similar to the trend observed across most research domains and sub-disciplines (Xie et al., 2021), our results show that preprint repositories have become an increasingly important publication venue for CI-only research. In 2010, only one of the top publication venues for CI-only research was a preprint repository (Social Science Research Network, SSRN) and this increased to three (SSRN, bioRxiv, arXiv Computers and Society) in 2020. The top five journals and preprint repositories publishing AI+CI and CI-only papers in 2020 (Figure 7(b)) were non-overlapping. This shows a change from 2010, when three publication venues co-occurred for both AI+CI and CI-only research. Our previous research has shown that AI+CI research has a higher degree of overlap in both journals and conferences with pure AI research than with CI.

This is unsurprising given the focus on technical topics and narrow disciplinary range revealed by our analysis above (see Figures 3 and 4). AI+CI publications are thus more likely to be influenced by trends in AI research than by trends in CI. Although all of our categories had publications in the preprint repository arXiv in their top 20, AI and AI+CI publications were more likely to share subject labels than CI and AI+CI (e.g. arXiv Computation and Language and arXiv Learning, the two with the highest number of publications). This may influence co-discoverability of papers on the platform and through peer review processes, which may affect the likelihood of researchers becoming aware of each other’s work.

Figure 7. The journals and preprint repositories with the most CI-only and AI+CI publications in 2010 and 2020.

Figure 8. The conferences with the most CI-only and AI+CI publications in 2010 and 2020.

There was a shift in the top five conferences between 2010 and 2020 for both CI-only publications and the AI+CI subset (Figure 8). Since 2010, computer science, design and human-computer interaction conferences have become increasingly important venues for CI research. This may explain the increased dominance of computer science in CI literature in recent years (Figure 3). Two conferences feature in the top five for both CI-only and AI+CI literature (LREC and AAAI). These conferences may be important touchpoints for researchers publishing CI-only and AI+CI research. In 2020, we found that three conferences in the top five for AI+CI research were focused on computational linguistics, which is unsurprising given the increased frequency of natural language processing as a topic in the AI+CI literature (Figure 4).
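The venue comparison in this section amounts to ranking venues by publication count within each subset and intersecting the top five. A minimal sketch follows, assuming one venue string per paper and `None` where MAG has no source metadata; the helper names and the single-letter venue labels are placeholders, not real venues or counts.

```python
from collections import Counter


def top_venues(venues, n=5):
    """The n venues with the most papers, ignoring papers with no venue."""
    counts = Counter(v for v in venues if v)
    return [venue for venue, _ in counts.most_common(n)]


def venue_overlap(venues_a, venues_b, n=5):
    """Venues that appear in the top n of both subsets."""
    return set(top_venues(venues_a, n)) & set(top_venues(venues_b, n))


# Placeholder venue labels, one entry per paper; None marks missing metadata.
ci_only = ["A", "A", "A", "B", "B", "C", None]
ai_ci = ["B", "B", "B", "D", "D", "A", None, None]

shared = venue_overlap(ci_only, ai_ci, n=2)
```

Since source metadata was available for only a minority of papers, any overlap computed this way describes the subsample with known venues rather than the full corpus.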

Discussion

Our analysis has shown that the number of CI publications experienced a period of steady growth between 2008 and 2015 but the annual number of CI-only publications has remained steady since 2015. In contrast, AI+CI research has continued to increase over the 20-year period we explored, despite accounting for a smaller overall share of the CI literature. Publications in the crossover of AI+CI are growing at a faster rate than CI-only papers. If AI+CI literature continues to gain momentum and non-AI collective intelligence literature remains the same, the trajectory of research in the field may be increasingly influenced by AI-focused research.

There are early signs that this is already happening. Comparing the changes in the FoS between CI-only and AI+CI literature over the last 12 years, we found that AI+CI research has decreased in topic diversity and disciplinary breadth. AI+CI research has fewer contributions from social science, psychology or engineering and is mostly carried out by researchers in Computer Science. The focus of collective intelligence in this literature seems to be on annotation, crowdsourcing and supervised learning. This may be having a spillover effect on the topical focus of non-AI collective intelligence research, which has seen an increase in the proportion of articles from computer science while the prominence of sociology has decreased.

CI-only research has also shown a significant increase in the number of publications focussing on crowdsourcing and citizen science while the frequency of topics in the categories of collective intelligence and collective wisdom has declined. Although CI publications have maintained a degree of topic diversity over the years, the increased focus on crowdsourcing and the growing prominence of AI+CI publications may pose a risk to this diversity in the future. If the most we can imagine for AI+CI research is supporting the improvement of supervised learning algorithms, we may miss out on the potential technological diversity in AI R&D in the future (Klinger et al., 2020) and the more human-centric and societally beneficial focus that broader integration of CI topics into AI+CI could enable (Peeters et al., 2021; Verhulst, 2018).

We enriched our dataset with information about the affiliation of researchers, to identify papers that had been authored by individuals based in the private sector. We found that the number of publications with industry affiliations has been growing steadily since 2010. This may be influencing the narrowing of the field. The constraints and incentives that determine the trajectory of research in industry are different to those in academia or the public sector. The dominance of industry-driven research for AI+CI may cause the overrepresentation of certain methods such as deep learning, which are problematic in public-sector contexts where interpretability is important. This trend may also be limiting the kinds of questions we address with the combination of human and machine intelligence. These findings reinforce other work about the trajectory of research in AI, where mapping efforts have shown that the field of AI is narrowing to focus on a smaller range of methods, namely deep learning, and that these trends are being driven by the increasing contribution of industry to research (Frank et al., 2019; Klinger et al., 2020).

Finally, for a smaller subset of publications (22% in AI+CI and 35% in CI-only) we were able to analyse the source publication to understand the venues (journals and conferences) where CI research was being shared. We show little overlap between the top five journals publishing non-AI CI research and those publishing AI+CI research. At least two conferences are found in the top five of both subsets of our data, showing that there are some venues where researchers working on AI+CI may overlap with the broader CI community. Our previous research has shown that AI+CI journals and conferences have more crossover with the AI community than the broader CI community. Conferences provide an important platform for the research community to find collaborators and share their work. Likewise, journals and (increasingly) preprint repositories are leveraged by scientists to share ideas, results and data. If these important channels for cross-fertilisation are less established between researchers working in AI and CI and the rest of the CI field, it is likely that both sides will miss out on the potential added value of collaboration (Peeters et al., 2021). For example, the AI+CI community can learn a lot from researchers in other parts of collective intelligence about improving participation through incentives and motivation, while the broader CI community can learn new methods to scale up their analyses of qualitative data from researchers specialising in AI+CI techniques like natural language processing. It is possible that the fragmentation we see between AI+CI and the broader CI field would also be replicated for other subsets of the CI literature.

Conclusion

This paper presented a new data and analysis pipeline for understanding how collective intelligence literature has evolved in the last 20 years. While previous attempts have focused on summarising the field by providing qualitative frameworks, we instead used a quantitative approach to track the year-on-year changes in the field. We looked at both the field as a whole and a subset of the literature where CI intersects with artificial intelligence research. Our findings suggest that this subset of CI is continuing to grow even as the number of articles being produced in the wider field has plateaued. AI+CI research shows distinct trends, in that more of the research is carried out by the private sector and there has been a narrowing of the topical diversity in the last 12 years. This pattern is similar to the trends observed by other groups who have mapped artificial intelligence research. It suggests that AI+CI is more closely aligned to the broader AI community than to researchers from other disciplinary backgrounds in the CI community. Our analysis of the minimal overlap between journals and conferences for CI-only and AI+CI research offers early evidence to support this.

We hope this exploratory mapping of the field helps to demonstrate the potential value of quantifying the changes to the way that research is practised by the CI community and will inspire others to build on our methods. Our choice of search terms was constrained by our definition of collective intelligence as well as the aim of covering the AI+CI literature. Other subsets of the CI field may warrant similarly focused studies, for example, collective decision making or prediction markets. Although we did not retrieve references or citations as part of our data pipeline, it is possible to collect this metadata for MAG entities. This metadata could be used to perform network analyses of the dataset to understand co-citation networks, which would give further insight into the interconnections between different disciplines within the field. Future work could look towards using a collective intelligence approach to analyse the field, including using new hybrid human-machine approaches and exploiting new measures of diversity or different indicators of changes to the field (Shi et al., 2019).

Footnotes

Acknowledgements

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by funding from the Centre for Collective Intelligence Design, Nesta.