Abstract

Longitudinal studies with time-varying treatments or exposures make it hard to figure out “what effect” is being estimated. Drawing on causal inference, we clarify this by distinguishing between total, direct, and—centrally—joint effects, defined within the potential-outcomes framework and illustrated with directed acyclic graphs. Joint effects extend average treatment effects to repeated interventions, providing a practical measure of combined intervention effects over time. Using a worked example on smartphone use and sleep quality, we demonstrate how different estimands answer different questions, why single total effects can sometimes mislead in longitudinal settings, and how joint effects capture strategy-level consequences across time. A key practical takeaway is that joint effects can be estimated in both experimental and observational studies. In the latter, it typically suffices to adjust only for variables that govern treatment decisions at each time point rather than modeling the entire causal system. Building on this, we propose covariate-driven treatment assignment (information-restriction designs in which decisions depend only on observed covariates) as a practical route to causal inference in nonexperimental psychology, and we connect these designs to estimation via g-methods from epidemiology. We provide open materials, including R code, to support adoption.

Psychologists are increasingly interested in longitudinal study designs, driven by the desire to gain a deeper understanding of how psychological processes unfold over time (Baumert et al., 2017; Ebner-Priemer et al., 2009). This growing interest has been fueled, in part, by the advent of novel data-collection techniques, including experience-sampling and mobile-sensing methods (Harari et al., 2016; Schoedel & Mehl, 2024; Wrzus & Neubauer, 2023). Alongside this development, psychological methodology has increasingly incorporated causal-estimation approaches from other disciplines, such as epidemiology and econometrics (Chatton & Rohrer, 2024; Grosz et al., 2024; Rohrer, 2018). In this context, methodological researchers have called for clearer communication of the causal estimand (i.e., the mathematical quantity that answers a causal research question; Auspurg & Brüderl, 2021; Lundberg et al., 2021) and greater precision in specifying the assumptions required for causal inference (Bailey et al., 2024). However, this body of research has focused predominantly on nonlongitudinal outcomes. As a result, there remains a gap in the integration of causal-inference methods for longitudinal data in psychological research (Bailey et al., 2024; Rohrer & Murayama, 2023).

The distinguishing feature of longitudinal designs in the context of causal inference is that treatment-outcome relationships are evaluated repeatedly over time, resulting in many possible estimands that researchers might be interested in. Rohrer and Murayama (2023) called for greater clarity in longitudinal settings because vague verbal theories lead to multiple possible estimands (for an example from cross-sectional research, see Auspurg & Brüderl, 2021). Articulating temporally precise theories and connecting them to statistical methods is essential in longitudinal designs (Hopwood et al., 2022). But this is achievable only if psychological researchers (a) are aware of the different estimands and (b) clearly specify their target estimand.

For this purpose, in the present article, we provide a conceptual introduction to the different causal estimands that can arise in longitudinal settings. In particular, we describe a class of estimands known as “joint effects.” These joint effects quantify how well an intervention or a treatment works when it is administered multiple times over a period of time. The effectiveness of such repeated treatments is evaluated on later outcomes, which allows researchers to compare treatment sequences that differ in timing. Conceptually, joint effects allow researchers to address questions such as the following: Should a patient attend all therapy sessions (i.e., receive every treatment)? Is it sufficient to begin therapy later (i.e., receive only later treatments)? Or is it preferable to forgo therapy entirely (i.e., receive no treatment)?

Joint effects were first conceptualized in epidemiology, in which they remain the predominant estimand in longitudinal designs (Hernán & Robins, 2020; Robins, 1986). They have recently gained traction in psychology through a series of rather technical tutorial articles: Researchers have demonstrated how joint effects can be formulated in linear structural equation models (SEMs; Mulder et al., 2024, 2025) and how SEM software can be used to implement different estimation strategies for joint effects (Loh et al., 2024; Loh & Ren, 2023). However, this literature devotes little attention to the underlying concept of the estimand itself or to the scenarios in which it can be meaningfully estimated. Instead, the focus is on what to do once joint effects have been selected as the target estimand and their identification is ensured.

Although psychology has a long tradition of modeling longitudinal data using SEMs (Usami et al., 2019), joint effects have rarely been treated as compelling estimands. We believe that more researchers would be interested in joint effects if they understood their meaning and usefulness more clearly. In this article, we bridge that gap by offering a concise, nontechnical introduction to what joint effects are and why they matter for psychologists. To that end, we develop a hypothetical longitudinal study to show how to identify and interpret joint effects, and we provide R code for simulation and estimation in the online supplement (https://osf.io/eczky/). We then discuss the practical value of joint effects, emphasizing that they can often be estimated under weaker confounding assumptions than methods commonly used in observational psychological research. Joint effects typically require adjustment only for variables that govern treatment decisions, and researchers do not need to know all variables in the causal system. We introduce a class of research designs that leverage this property. First, however, we present a conceptual overview of causal inference for longitudinal data using potential outcomes and causal graphs.

Introduction to Causal-Inference Tools

Quantitative causal reasoning usually follows a road map (see Dang et al., 2023; Lawes et al., 2025) that can be grouped into three broader stages. The first stage involves defining a quantifiable causal estimand: the quantity one wants to estimate (Lundberg et al., 2021). Once this target estimand is specified, the next stage is to articulate the assumptions necessary to identify the estimand and connect it to the available data. Finally, the third stage is to develop a statistical model that estimates the causal estimand.

In this article, we primarily focus on clarifying the first and second stages in the context of psychological-research scenarios. Our focus is not on the third stage, although we present one approach for estimating longitudinal effects in the online supplement.

Stage 1: defining the causal estimand of interest

Specifying the estimand is the foundational step in causal inference (Lundberg et al., 2021). It is essential to first state which causal effect one intends to investigate before describing the techniques used for its estimation (Rubin, 2005). In causal inference, these target quantities that answer the research question of interest are called “causal estimands.” For example, if a researcher is interested in how effective a drug is in relieving headaches, a corresponding causal estimand is the quantity that addresses the following question: What is the quantitative difference in headache severity if a person takes the drug versus if the person does not?

Causal estimands become more difficult to conceptualize in longitudinal settings. In these contexts, numerous causal estimands can arise simply because there are many time points that can be compared. For instance, researchers might be interested in the effect of taking the drug only at a specific time point

A causal estimand represents the effect of actions or interventions and is therefore not merely a description of statistical models or coefficients. Thus, causal estimands require a distinct language. One predominant framework for formulating causal estimands is the potential-outcomes framework (POF; Neyman, 1923/1990; Rubin, 1974).

The potential-outcomes framework is a general framework for causal inference that is widely used in both epidemiology and economics (Hernán & Robins, 2020; Imbens, 2020). Psychologists may find this perspective on causality intuitive because it is based directly on an experimental point of view (Eronen, 2020; Rohrer & Lucas, 2020). The basic idea is that before a treatment (

As an example, consider the question of whether smartphone usage affects sleep quality. Let

Because only one potential outcome can be observed for each person, we cannot know individual causal effects (Holland, 1986). But we can still reason about the average treatment effect (ATE): the mean difference between sleep quality if everyone used their smartphone and if no one did, defined as

In longitudinal study designs with time-varying treatments, a treatment

From a temporal perspective, potential outcomes depend on when the intervention occurs and when the outcome is assessed. We can consider distinct interventions at each time point.

This sequence of treatments is called a treatment “regime” or “strategy,” denoted by

For simplicity, in this article, we focus on static treatment strategies in which treatment assignment is fixed in advance for all

The causal effect of a treatment strategy on the outcome—the difference in the outcome under two longitudinal treatment strategies—is called the “joint effect” of a treatment strategy (Elwert, 2013). The term “joint” highlights that multiple treatments are administered, and their combined effect on the outcome

Therefore, one way to think about joint effects is to view them as the result of an RCT in which two complete strategies are compared. Alternatively, joint effects can be understood as the combination of individual treatment effects at each time point. This perspective is crucial for interpreting joint effects and also makes it possible to learn them from observational data rather than only from experiments (Daniel et al., 2013). We further elaborate on these points and provide guidance on their identification in a later section of this article.
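The comparison of two complete strategies can be written compactly in potential-outcomes notation. The symbols in the following sketch are assumptions made for illustration and may differ from the notation used elsewhere in this article: $\bar{a} = (a_1, \dots, a_T)$ denotes a static treatment strategy over $T$ time points, $\bar{a}^{*}$ a comparison strategy, and $Y^{\bar{a}}$ the outcome that would be observed if strategy $\bar{a}$ were followed.

```latex
% Joint effect of strategy \bar{a} relative to strategy \bar{a}^{*} (assumed notation)
\mathrm{E}\!\left[ Y^{\bar{a}} \right] - \mathrm{E}\!\left[ Y^{\bar{a}^{*}} \right]

% Special case with two time points: "always treat" versus "never treat"
\mathrm{E}\!\left[ Y^{a_1 = 1,\, a_2 = 1} \right] - \mathrm{E}\!\left[ Y^{a_1 = 0,\, a_2 = 0} \right]
```

Read this way, a joint effect is an average treatment effect in which the "treatment" is an entire sequence rather than a single assignment.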

Stage 2: identification of the estimand

After defining the causal estimand of interest, the second stage of causal inference is identification. Identification asks which causal assumptions are necessary to link the hypothetical estimand to the observed data (consistency, exchangeability, and positivity; see Hernán & Robins, 2020). In this article, we focus only on the assumption of exchangeability (Hernán & Robins, 2020), also called “unconfoundedness” (Imbens & Rubin, 2015), which concerns the structure of confounding variables. We need to assume that differences in sleep quality are due only to differences in smartphone usage and not to a common cause of the two. For example, younger people may use their smartphones more often and also have different sleep patterns than older adults. In experimental settings, researchers assume that confounding variables are controlled through randomization. In nonexperimental settings, researchers assume that confounding can be mitigated by measuring relevant variables and adjusting for them in a statistical model.
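The role of exchangeability can be made concrete with a small numerical sketch. All numbers below are hypothetical and chosen only for illustration (they are not taken from this article or its supplement): a binary age group acts as a common cause of smartphone use and sleep quality, and the true ATE is set to -1. The sketch compares the unadjusted group difference with the age-standardized contrast, computed exactly rather than by sampling.

```python
# Hypothetical population (all numbers are illustrative assumptions).
P_AGE = {"young": 0.5, "old": 0.5}      # P(age group)
P_USE = {"young": 0.8, "old": 0.2}      # P(smartphone use | age group)
TRUE_ATE = -1.0                         # assumed effect of use on sleep quality


def mean_sleep(use: int, age: str) -> float:
    """Expected sleep quality given use (0/1) and age group."""
    return 6.0 + TRUE_ATE * use + (2.0 if age == "young" else 0.0)


def observed_mean(use: int) -> float:
    """E[Y | A = use]: averages over the age mix found among those users."""
    # P(age, A = use) for each age group
    joint = {
        age: P_AGE[age] * (P_USE[age] if use == 1 else 1.0 - P_USE[age])
        for age in P_AGE
    }
    total = sum(joint.values())
    return sum(joint[age] / total * mean_sleep(use, age) for age in P_AGE)


def standardized_mean(use: int) -> float:
    """Sum over age groups of E[Y | A = use, age] * P(age)."""
    return sum(P_AGE[age] * mean_sleep(use, age) for age in P_AGE)


naive = observed_mean(1) - observed_mean(0)
adjusted = standardized_mean(1) - standardized_mean(0)
print(f"naive difference:    {naive:+.2f}")
print(f"adjusted difference: {adjusted:+.2f}")
```

Under these assumed numbers, the naive difference is +0.20 and thus has the wrong sign, whereas standardizing over age recovers the assumed effect of -1. The adjustment works here only because age is the sole common cause in this toy population.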

We stress this assumption about unconfoundedness in this article because of the key advantage that joint effects do not require every confounder to be measured but, rather, only a specific subset of them (Richardson & Robins, 2013). Generally, the step of identification becomes challenging in longitudinal studies because there are multiple variables and interactions between them. Using one’s phone at time point

DAGs are a valuable tool in causal inference because they visually represent a researcher’s beliefs about the causal process. Their benefits are well established (Pearl, 2009), and intuitive introductions for psychologists are available (Rohrer, 2018).

In a DAG, relevant variables (denoted here as

DAGs help researchers to determine which variables need to be controlled for to identify a causal effect of interest (Cinelli et al., 2024; Poppe et al., 2025). Confounders must be adjusted for, and adjusting for colliders introduces bias. Whether it is appropriate to adjust for mediators depends on the estimand. Ultimately, the identification of causal effects relies on independence assumptions between variables. These independence assumptions come in two forms, which can be depicted in a DAG (Pearl, 2009): First, assume that conditional on observed confounders, there is no unmeasured common cause

DAGs are particularly useful in longitudinal designs involving many time-varying variables because some of these variables may act as both confounders and colliders at the same time (Hernán & Robins, 2020). Moreover, prior treatments typically serve as confounders for subsequent treatment effects because they influence both the likelihood of receiving later treatments and the outcome. In the next section, we illustrate this concept alongside the other concepts discussed here using a running example. For a brief review of the terminology, see Box 1.

Box 1. Short Glossary of Causal-Inference Methods

Causal Inference in Longitudinal Studies: An Illustrative Example

As noted in the previous section, causal inference in longitudinal study designs poses particular challenges. To explain these challenges in more detail and to illustrate the definition (Stage 1) and identification (Stage 2) of causal estimands in longitudinal settings, we use a running example based on a hypothetical RCT. This example is motivated by the question of whether smartphone usage affects sleep quality and, in particular, whether usage that occurs well before bedtime already influences sleep.

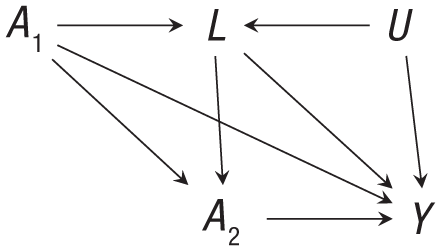

In this RCT, participants are randomly assigned to use a specific social media app from 8 p.m. to 10 p.m. (

Fig. 1. Directed acyclic graph that represents the running example.

In our online supplementary material, we use this example to demonstrate how to specify a data-generating process that aligns with the DAG presented in Figure 1 and how to determine the true expected potential outcomes and corresponding causal effects by simulating data from this process. The supplementary materials, including the R code, are available in our OSF repository (https://osf.io/eczky/). For a more convenient reading experience, we also present our supplementary materials as a website (https://florianpargent.github.io/joint_effects_ampps/).

Stage 1: defining the causal estimand of interest in longitudinal study designs

The overarching research question of whether using a social media app affects sleep quality leads to multiple estimands of interest in a longitudinal setting. Depending on the specific research question, one can distinguish among total effects, joint effects, and direct effects (Daniel et al., 2013).

Total effects

It is well known that RCTs offer a way to estimate certain causal effects by leveraging randomization: Because

In longitudinal settings, the strength and direction of total effects can strongly depend on the time point of the intervention and the time point when the outcome is measured.

In our hypothetical study, the researchers uncover this unexpected result (see Supplement 2 online, https://florianpargent.github.io/joint_effects_ampps/supplement_2.html): The total effect of social media usage at

Joint effects

The first joint effect that researchers may wish to investigate is the effectiveness of the strategies “always treat” versus “never treat”:

For example, the researchers may want to assess whether early social media usage at

Direct effects

Direct effects evaluate the causal impact of an exposure or treatment while controlling for intermediate variables (Robins et al., 1992). They are appealing because they allow researchers to reason about causal mechanisms (Pearl, 2014). In our example, a direct effect corresponds to the effect of social media usage at

A popular approach is to equate direct effects to linear path coefficients in regression models or SEMs (for an overview, see Rohrer et al., 2022). This path-coefficient approach relies on a simplified, interaction-free view of the causal process (VanderWeele, 2015). Beyond this, methodologists have often emphasized that popular approaches to estimate direct effects are biased in most applied research settings (Bullock et al., 2010; Mayer et al., 2014; Rohrer et al., 2022). The main reason for this bias lies in issues of identification: In many mediation analyses of direct effects, confounding is not properly addressed. In this article, we therefore do not focus on direct effects; they will be discussed only to improve the understanding of joint effects and their usefulness in psychological research.

Stage 2: identification in longitudinal study designs

Any method of estimating causal effects must deal with confounding in some way. In our example—as in most RCTs—the treatment was randomly assigned at time point

Assumptions about the structure of confounding can be conveniently expressed in a DAG (Fig. 1). Graphically, if no unmeasured confounding

If researchers do not discuss confounding, they implicitly assume there is none. This assumption of “no unmeasured confounding” is highly problematic because it is valid only under very specific conditions in psychological research (Brandt, 2024; Bullock et al., 2010). Nonetheless, this assumption is commonly invoked in mediation analyses (Brandt, 2024; Rohrer et al., 2022) or in panel research using SEMs (Mulder et al., 2024; VanderWeele, 2012). Similar to

The DAG in Figure 1 allows for the identification of joint effects because there is no unmeasured confounding of the form

Stage 3: interpreting causal effects in longitudinal study designs

After specifying the target estimand and evaluating its identification, the next step is to estimate it. Because estimation is not the focus of this article, we restrict ourselves here to interpreting the results. In Supplement 1 online (https://florianpargent.github.io/joint_effects_ampps/supplement_1.html), the causal effects are estimated by Bayesian simulation of the g-formula (based on regression models estimated with the brms package in R; Bürkner, 2017). Table 1 shows the true potential outcomes and corresponding estimates based on a simulated data set produced by the true data-generating process.
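To convey the logic of the g-formula without the Bayesian machinery, the following minimal sketch applies a plug-in version to a hypothetical, fully binary process. It is not the supplement's code and does not reproduce the data-generating process behind Table 1: the variable names, the probabilities, and in particular the unmeasured trait `U` are assumptions added for illustration. For two time points, the quantity computed by `g_formula` is the sum over rumination levels l of E[Y | A1 = a1, L = l, A2 = a2] × P(L = l | A1 = a1).

```python
from itertools import product

# Hypothetical data-generating process (binary variables, illustrative numbers):
#   U  unmeasured trait, affects rumination L and sleep quality Y
#   A1 randomized social media use in the early evening
#   L  rumination, affected by A1 and U
#   A2 later use, chosen based on A1 and L only (never on U)
NAMES = ("u", "a1", "l", "a2")

def p_l(a1, u):            # P(L = 1 | A1, U)
    return 0.2 + 0.3 * a1 + 0.4 * u

def p_a2(a1, l):           # P(A2 = 1 | A1, L)
    return 0.2 + 0.2 * a1 + 0.5 * l

def mean_y(a1, l, a2, u):  # E[Y | A1, L, A2, U]
    return 7.0 - 0.5 * a1 - 1.0 * l - 1.0 * a2 - 1.5 * u

def bern(p, x):            # P(X = x) for a Bernoulli(p) variable
    return p if x == 1 else 1.0 - p

# Exact joint distribution of the observational process, keyed by (u, a1, l, a2).
joint = {
    (u, a1, l, a2): 0.5 * 0.5 * bern(p_l(a1, u), l) * bern(p_a2(a1, l), a2)
    for u, a1, l, a2 in product((0, 1), repeat=4)
}

def cells(**fixed):
    """(key, probability) pairs of the joint distribution matching the fixed values."""
    return [(key, p) for key, p in joint.items()
            if all(key[NAMES.index(k)] == v for k, v in fixed.items())]

def prob(**fixed):
    """Probability of the event described by the keyword arguments."""
    return sum(p for _, p in cells(**fixed))

def obs_mean_y(**fixed):
    """E[Y | fixed values], averaging over everything else (including U)."""
    return sum(p * mean_y(a1, l, a2, u)
               for (u, a1, l, a2), p in cells(**fixed)) / prob(**fixed)

def g_formula(a1, a2):
    """Plug-in g-formula that uses only the observed variables A1, L, A2, Y."""
    return sum(obs_mean_y(a1=a1, l=l, a2=a2) * prob(a1=a1, l=l) / prob(a1=a1)
               for l in (0, 1))

def truth(a1, a2):
    """E[Y^{a1, a2}] obtained by intervening directly in the process."""
    return sum(0.5 * bern(p_l(a1, u), l) * mean_y(a1, l, a2, u)
               for u, l in product((0, 1), repeat=2))

# Columns: a1, a2, true mean, g-formula, plain conditional mean E[Y | a1, a2]
for a1, a2 in product((0, 1), repeat=2):
    print(a1, a2, round(truth(a1, a2), 3), round(g_formula(a1, a2), 3),
          round(obs_mean_y(a1=a1, a2=a2), 3))

joint_effect = g_formula(1, 1) - g_formula(0, 0)   # "always use" vs. "never use"
```

Under these assumed numbers, the g-formula reproduces the interventional means for all four strategies even though `U` is never used. The plain conditional mean E[Y | A1 = 0, A2 = 0] is 6.175, whereas the corresponding potential-outcome mean is 5.85, because later use depends on rumination, which shares the unmeasured cause `U` with sleep quality.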

Table 1. True and Estimated Potential Outcomes of Sleep Quality

Note: Any contrast of these potential outcomes, for example,

Comparing the different potential outcomes of interventions at both time points reveals that the “never use” condition (

Recall that the average total effect of social media usage at

Generalizing joint effects to more complex longitudinal study designs

We can now generalize the concept of joint effects from our simplified example to more complex settings. Many applications in psychology involve more than two time points. For example, the average experience-sampling study includes more than six assessments per day (Wrzus & Neubauer, 2023). In such settings, the relevant confounders

We now look at the structure of joint effects in these settings in more detail. Because we are primarily interested in the effect of social media usage

Fig. 2. Conceptual graph with time-varying treatment

Figure 2 illustrates that joint effects are not simply the sum of individual total effects because total effects include pathways through future treatments. Instead, joint effects represent a combination of direct effects of

This structure of joint effects might not immediately be obvious from the corresponding verbal research question: “What if everyone in a population had followed the treatment strategy

To conclude this section, in Box 2, we provide an overview of relevant terminology in longitudinal causal inference. As a final remark, the previous considerations also extend to settings in which the outcome

Box 2. Short Glossary of Longitudinal Causal Inference

Joint Effects: Why They Matter for Psychologists

Joint effects are rarely discussed as target estimands in the psychological literature (Mulder et al., 2025). We address this gap in the following sections by explaining why joint effects are relevant for psychological research. In a nutshell, their identification relies on weaker assumptions about the causal confounding structure than the methods and estimands that are currently popular in longitudinal psychological research.

To illustrate why psychologists should devote more attention to joint effects, we first outline how causal inference is typically approached in longitudinal settings and discuss the associated challenges. We then draw on recent developments in causal inference (Gelman, 2011; Hernán & Robins, 2020; Pearl, 2009) to propose a class of research designs that allows for the estimation of causal effects under reasonable assumptions in nonexperimental settings.

Challenges in longitudinal psychological research

In the introduction, we divided causal inference into three stages: specifying the estimand, outlining the assumptions required for its identification, and statistically estimating it. This structured approach is uncommon in psychological research. In nonexperimental studies in particular, researchers typically proceed directly to estimating a statistical model and then attempt to interpret the resulting coefficients. In doing so, they effectively bypass Stages 1 and 2. As a result, it often remains unclear which causal estimand is being targeted. Moreover, these coefficients generally correspond to a form of direct effect, whose causal interpretation depends on very strong—and unrealistic—identification assumptions.

Challenge 1: selecting the appropriate target estimand

Many applied researchers have difficulty defining their target estimands, and this problem is exacerbated in longitudinal settings because there are many variables and interactions between them. If we acknowledge that previous social media usage can affect both the strength of the causal effect and the likelihood of future social media usage, we realize that there is no single effect of social media usage but many total, direct, and joint effects.

To circumvent these complexities that can arise in longitudinal studies, psychologists often read causal meaning into single path coefficients, such as

In research that aims to predict the effectiveness of a longitudinal intervention strategy, this focus on clear causal estimands makes joint effects the natural estimand of interest. However, psychologists are often also interested in other causal questions. Psychologists who focus on less applied research traditionally seek to understand through which psychological mechanisms (e.g., rumination) a behavioral change (e.g., reduced social media usage) affects relevant outcomes (e.g., sleep quality; Eronen & Bringmann, 2021). Such process-oriented questions are linked to the causal concepts of mediation and the analysis of direct effects (Pearl, 2014). In our example, one might be interested in the direct effect of the social media usage strategy “always treat” while also controlling for rumination, that is, the effect of repeated social media usage on sleep quality that cannot be attributed to changes in rumination. This corresponds to a “natural” direct effect (Pearl, 2014; Robins et al., 1992) of a hypothetical intervention in which participants are randomly assigned to a “never-treat” condition and an “always-treat” condition, but rumination in the “always-treat” condition is held at the level it would have taken in the “never-treat” condition. Although these effects are appealing, they require strong and unrealistic assumptions regarding their identification.

Challenge 2: identification issues

In the section on identification in longitudinal study designs, we outlined that if not all relevant variables are manipulated in an experiment, the identification of causal effects relies on strong assumptions about the structure of the causal process. Here, longitudinal study designs can offer an advantage over cross-sectional studies because they can address some unmeasured confounding by focusing on within-person changes (Lawes et al., 2025). If the outcome is measured repeatedly, one can account for additive and noninteracting confounders that remain constant over time (e.g., stable personality traits; Imai & Kim, 2019).
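How a within-person focus removes an additive, time-constant confounder can be sketched with a small simulation. The setup is hypothetical (sample sizes, effect sizes, and the person-mean centering used here are assumptions for illustration, not the procedure of the cited authors): a stable unmeasured trait raises both the probability of social media use and sleep quality, while the assumed within-person effect of use is -1.

```python
import random

random.seed(1)

TRUE_EFFECT = -1.0      # assumed within-person effect of use on sleep quality
N_PERSONS, N_DAYS = 2000, 5

rows = []               # (person id, treatment, outcome)
for person in range(N_PERSONS):
    trait = random.gauss(0.0, 1.0)            # stable, unmeasured trait
    p_use = 0.8 if trait > 0 else 0.2         # the trait drives usage ...
    for _ in range(N_DAYS):
        a = 1 if random.random() < p_use else 0
        # ... and, additively, sleep quality
        y = TRUE_EFFECT * a + 2.0 * trait + random.gauss(0.0, 1.0)
        rows.append((person, a, y))


def slope(xs, ys):
    """Ordinary least-squares slope of ys on xs (single predictor)."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def demean_within(data):
    """Subtract each person's own mean from treatment and outcome."""
    by_person = {}
    for person, a, y in data:
        by_person.setdefault(person, []).append((a, y))
    a_dm, y_dm = [], []
    for obs in by_person.values():
        mean_a = sum(a for a, _ in obs) / len(obs)
        mean_y = sum(y for _, y in obs) / len(obs)
        a_dm += [a - mean_a for a, _ in obs]
        y_dm += [y - mean_y for _, y in obs]
    return a_dm, y_dm


pooled = slope([a for _, a, _ in rows], [y for _, _, y in rows])
within = slope(*demean_within(rows))
print(f"pooled slope: {pooled:+.2f}   within-person slope: {within:+.2f}")
```

In this setup, the pooled slope is positive because it mixes the effect of use with trait differences between people, whereas the within-person slope lies close to the assumed effect. The correction relies on the trait being constant over time and entering additively; a confounder that varies from day to day would not be removed by centering.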

We stress that beyond these time-constant confounders, all time-varying confounders (e.g., fluctuating personality states, mood, or daily behavior) need to be adjusted for (Lawes et al., 2025; Rohrer & Murayama, 2023). In social or behavioral sciences, it is generally not possible to observe or control for all confounders directly without carefully constructed research designs (Gelman, 2011). It is therefore advisable not to estimate the whole causal system and interpret every path coefficient but, rather, to focus on specific paths for which a causal effect is identified (Rohrer & Murayama, 2023; VanderWeele & Hernán, 2012).

To see this, consider the DAG of Figure 3, which represents an observational study. This DAG has unobserved confounding variables

Fig. 3. Extended directed acyclic graph that corresponds to an observational study in which joint effects are identified. The crucial assumption is that treatment assignment of

In the DAG of Figure 3, a direct effect of a treatment strategy that also controls for rumination would not be identified because of the unmeasured confounders

Why psychologists should care about joint effects

Although these considerations might be frustrating from a theoretical perspective, we argue that without stronger research designs, psychologists often have no choice but to focus their efforts on total effects, which can be identified in RCTs with a single intervention. In longitudinal settings with time-varying treatments, this naturally leads to evaluating joint effects of treatment strategies because RCTs can identify the ATE of a whole strategy

Even in nonexperimental settings, choosing joint effects as target estimands can be worthwhile. Joint effects simplify the complex structure of longitudinal causal effects by providing a useful summary measure of the combined treatment impact. They correspond to the effect of an intervention in which treatment is administered repeatedly, which makes them easily interpretable as if an intervention strategy had actually been implemented in the real world. The joint effect of the treatment strategies “never treat” versus “always treat” simulates an RCT that compares an intervention group with a control group, which is highly relevant in many practical scenarios. Although psychology has a strong history of experimental research, this interventionist perspective is rare when thinking about causal inference in nonexperimental settings.

We argue that psychology could learn from other research fields, such as epidemiology or economics, which use the potential-outcomes framework to closely tie their causal estimands to hypothetical interventions (Hernán et al., 2022; Holland, 1986). These fields strongly focus their research on applied questions that could theoretically be answered by RCTs (Hernán, 2016). By putting their estimands first, epidemiologists developed methods that can more reliably estimate them even in nonexperimental settings (see Box 3).

Box 3. A Guide to g-methods With Longitudinal Data

Although RCTs remain the “gold standard” for causal inference, it is also possible to draw causal conclusions in nonexperimental settings. The widely held belief that longitudinal designs automatically enable causal claims that are not possible in a cross-sectional setting is wrong (see Rohrer & Murayama, 2023) and hinders progress toward stronger research designs for causal inference in psychology. Researchers need to identify scenarios in which a structure such as the one depicted in Figure 3 is reasonable.

To provide a potential solution to some of the identification problems discussed here, our next section proposes a concept for study designs beyond RCTs in which joint-effect estimation with observational data might be possible under more plausible assumptions. These research designs take advantage of the key insight that joint-effect estimation does not require measuring the entire causal system but only a specific subset of causal pathways (Richardson & Robins, 2013).
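The "specific subset" can be stated formally. A common formulation in the g-methods literature (see Hernán & Robins, 2020) is sequential exchangeability. The notation in the following sketch is an assumption made for illustration: $A_t$ is the treatment at time point $t$, $\bar{A}_{t-1}$ the treatment history before $t$, $\bar{L}_t$ the history of measured covariates up to $t$, and $Y^{\bar{a}}$ the potential outcome under the static strategy $\bar{a}$.

```latex
% Sequential exchangeability (assumed notation), required at every time point t = 1, \dots, T
Y^{\bar{a}} \;\perp\!\!\!\perp\; A_t \;\Big|\; \bar{A}_{t-1} = \bar{a}_{t-1},\; \bar{L}_t
```

The condition constrains only what drives each treatment decision. Unmeasured variables that affect covariates and the outcome do not violate it as long as they influence treatment solely through the measured history.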

A call for stronger study designs

In our social media example, we sampled from a causal structure similar to that of Figure 1. Because this example was used for illustration purposes, we did not consider whether this structure is plausible in real-world scenarios. Social media usage was randomized only at

A DAG should reflect the researcher’s belief about the causal system and not, as in our simplified example, contain only covariates that are conveniently measured and therefore available for statistical analysis (Poppe et al., 2025). We argue that there are only a few scenarios in psychological research in which longitudinal causal effects can be identified without complex study designs, such as micro-randomized trials in social media usage studies (see Balaskas et al., 2021). The psychological literature has long ignored the need to explore study designs beyond RCTs that allow causal inferences under more plausible assumptions.

This observation has also been made by other psychological researchers, who have started to advocate for more nonexperimental designs that can be adopted from other disciplines. For example, Grosz et al. (2024) outlined how psychology could make use of natural experiments that allow for causal inference based on instrumental variables, which are a central pillar of causal research in economics (Imbens, 2020). Natural experiments leverage naturally occurring randomization that is not introduced by controlled scientific studies but has similar consequences. This could be a random shutdown of mobile networks that prevents a local group of people from using their social media apps. In such scenarios, it can be assumed that social media usage is not confounded with the outcome, which justifies removing any

In the social sciences, it has been argued that the only settings in which researchers can reasonably be sure that variables are independent (such that causal arrows between them can be deleted in a DAG) are randomization or information restriction (Gelman, 2011). The economic literature showcases many designs in which randomization occurs naturally (Angrist & Pischke, 2009). The epidemiological literature provides an example that leverages information restriction.

How information restriction can address unmeasured confounding

This example can be found in medical research, which shows many instances in which a DAG similar to Figure 3 is plausible and, therefore, that causal identification is possible with observational data (Hernán & Robins, 2020). In this scenario, a doctor assesses whether a treatment strategy should be continued based on an observed biomarker (

The key feature of this causal process is that all unobserved confounders

Our social media usage example and many other applied settings in psychology deviate from the medical example because treatment is self-selected at time point

Covariate-driven treatment assignment

In the medical example (also depicted by Fig. 3), treatment is administered by doctors who are restricted in their information, which allows crucial arrows (

We propose that the concept of information restriction of actors who administer treatments could inspire psychological researchers to develop new study designs. Such designs are useful in settings in which causal inference is desired but experiments are difficult to implement. Covariate-driven treatment assignment does not occur naturally in most psychological applications because, in contrast to medicine, many psychological treatments are self-selected rather than prescribed by a third party such as a doctor. However, the most intuitive setting for integrating covariate-driven treatment assignment is therapeutic intervention in psychotherapy, which closely mirrors the medical example described above.

Continuing with the social media example, one could construct an intervention in which certain aspects of social media usage are controlled by an external agent, such as the participant’s therapist (e.g., by letting the therapist remotely adjust the settings of a screen-detox app installed on the participant’s phone). Whether a specific app can be used on a particular day could be determined based on covariates. In this case, a digital standardized symptom questionnaire could be used (or in our simplified example, only a single measure of rumination) that is updated daily in the therapist’s dashboard. The therapist does not know the true rumination (

In addition to clinical psychology, educational psychology is another area in which similar settings might be plausible. For example, a teacher might assign students to additional support or enrichment classes (

A brief outlook on estimation

In this article, we have focused on specifying longitudinal estimands and proposed study designs for causal identification. The next step in the practical application of causal inference is statistical estimation. Because joint effects are a combination of treatment effects, they generally cannot be estimated without bias using single-step regressions (e.g., a single longitudinal random-effects model; see Hernán & Robins, 2020, Chapter 20). In theory, joint effects can be estimated using SEMs as a combination of path coefficients, a method that is popular in psychology and often employed in cross-lagged panel modeling (Mulder et al., 2025). However, this path-coefficient approach relies on the assumption that the complete system (including

Building on this, epidemiologists have developed specialized estimation techniques called “g-methods” (Hernán & Robins, 2020), which can also be adopted to estimate causal effects of treatment strategies in longitudinal settings in psychological research. Rather than attempting to estimate the entire causal system, g-methods focus only on the components strictly necessary for estimating causal effects. As a result, they rely on only the minimal assumptions required for causal inference (VanderWeele, 2012).
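To make the logic of g-methods concrete, the following is a minimal sketch of the nonparametric g-formula for a joint effect with two treatment time points. It is an illustration only and is not taken from the article's R materials. All variable names (`u`, `l1`, `a1`, `l2`, `a2`, `y`), the function names, and every numeric value in the data-generating process are assumptions chosen for this example: `u` is an unobserved confounder, `l1` and `l2` are observed binary covariates, and each treatment decision depends only on observed information, as in covariate-driven treatment assignment.

```python
import random
from collections import defaultdict
from statistics import fmean


def simulate(n, seed, strategy=None):
    """Simulate a hypothetical two-wave process.

    If strategy is None, treatments follow covariate-driven assignment
    (they depend only on the observed covariates l1 and l2).
    If strategy is a pair (a1, a2), both treatments are set by intervention.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        u = rng.random() < 0.5                      # unobserved confounder
        l1 = rng.random() < (0.8 if u else 0.2)     # observed covariate, wave 1
        if strategy is None:
            a1 = rng.random() < (0.7 if l1 else 0.3)
        else:
            a1 = bool(strategy[0])
        # wave-2 covariate depends on u and on the earlier treatment
        l2 = rng.random() < (0.2 + 0.5 * u - 0.15 * a1)
        if strategy is None:
            a2 = rng.random() < (0.8 if l2 else 0.2)
        else:
            a2 = bool(strategy[1])
        y = 1.0 * a1 + 1.0 * a2 - 1.0 * l2 - 2.0 * u + rng.gauss(0.0, 1.0)
        rows.append((int(l1), int(a1), int(l2), int(a2), y))
    return rows


def g_formula_mean(rows, a1, a2):
    """Estimate E[Y^(a1, a2)] as
    sum over (l1, l2) of E[Y | l1, a1, l2, a2] * P(l2 | l1, a1) * P(l1)."""
    n = len(rows)
    n_l1 = defaultdict(int)        # counts of l1 in the full sample
    n_l1_a1 = defaultdict(int)     # counts of l1 among rows with A1 = a1
    n_l1_l2 = defaultdict(int)     # counts of (l1, l2) among rows with A1 = a1
    y_cells = defaultdict(list)    # outcomes with A1 = a1 and A2 = a2, by (l1, l2)
    for l1, r_a1, l2, r_a2, y in rows:
        n_l1[l1] += 1
        if r_a1 == a1:
            n_l1_a1[l1] += 1
            n_l1_l2[(l1, l2)] += 1
            if r_a2 == a2:
                y_cells[(l1, l2)].append(y)
    total = 0.0
    for l1 in (0, 1):
        for l2 in (0, 1):
            p_l1 = n_l1[l1] / n
            p_l2 = n_l1_l2[(l1, l2)] / n_l1_a1[l1]
            total += fmean(y_cells[(l1, l2)]) * p_l2 * p_l1
    return total


observed = simulate(100_000, seed=1)

# g-formula estimate of the joint effect "always treat" vs. "never treat"
g_effect = g_formula_mean(observed, 1, 1) - g_formula_mean(observed, 0, 0)

# naive contrast of observed group means, ignoring all covariates
naive = (fmean(y for _, a1, _, a2, y in observed if a1 == 1 and a2 == 1)
         - fmean(y for _, a1, _, a2, y in observed if a1 == 0 and a2 == 0))

# reference value obtained by simulating both interventions directly
truth = (fmean(r[4] for r in simulate(100_000, seed=2, strategy=(1, 1)))
         - fmean(r[4] for r in simulate(100_000, seed=3, strategy=(0, 0))))

print(f"g-formula: {g_effect:.2f}  naive: {naive:.2f}  truth: {truth:.2f}")
```

Under these assumed parameters, the joint effect implied by the data-generating process is 1.0 + 1.0 + 0.15 = 2.15, and the g-formula estimate should land close to it even though `u` is never used in the estimation. The naive contrast should be substantially smaller because treated observations are more likely to have `u = 1`. With continuous or high-dimensional covariates, the stratified means would have to be replaced by models, which is where the additional statistical assumptions enter.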

Like all causal-estimation strategies, g-methods require statistical assumptions in addition to the causal identification assumption that the assumed DAG holds. In Box 3, we briefly mention the most important methods and direct interested readers to the relevant literature and tutorials aimed at psychologists. The estimation methods and their respective statistical and functional assumptions differ in nuanced ways, and we strongly advise against applying an estimation strategy without carefully considering the specific prerequisites of the method.

In general, joint-effect estimation is most appropriate when there is only a moderate number of treatment time points. Experience-sampling studies therefore offer promising use cases for these methods (with a median of six measurements per day or daily measurements over 12 days; see Wrzus & Neubauer, 2023). In contrast, when researchers work with data in which exposure and outcome are measured very frequently (“intensive longitudinal data”) and aim to model continuous temporal relationships, alternative methods, such as continuous-time SEMs, are more suitable (Driver, 2025; Driver et al., 2017).

Conclusion

In this article, we introduced longitudinal estimands from the causal-inference literature and discussed their relevance to psychological research. In longitudinal settings with time-varying treatments, the potential-outcomes framework leads to several important estimands that can be grouped into total, direct, and joint effects. Joint effects summarize the effectiveness of a treatment strategy consisting of repeated interventions over time. However, because joint effects aim to predict intervention effects, they are currently not the primary focus of psychologists, who are more often concerned with identifying causal processes (Eronen & Bringmann, 2021).

Joint effects of treatment strategies are estimands that are relatively easy to interpret and can be identified under less restrictive assumptions than other estimands commonly used in psychological analyses. In nonexperimental studies, identification requires measuring most of the relevant variables, which is rarely possible in psychological research. One notable design in which joint effects can nevertheless be estimated is covariate-driven treatment assignment, in which an actor intervenes in a process based on observable covariates. We encourage researchers to use designs, similar to natural experiments (Grosz et al., 2024), in which the restricted information available to actors can be leveraged to infer causal effects from observational data.

Footnotes

Acknowledgements

We thank Charles Driver, Jeroen Mulder, and an anonymous reviewer for their helpful comments and suggestions, which were vital in improving the clarity and focus of our article.

Transparency

Action Editor: Rogier Kievit

Editor: David A. Sbarra

Author Contributions