Abstract

The network theory of psychopathology inspired clinicians and researchers to use idiographic networks to study how symptoms of an individual interact over time, hoping to find the target symptom(s) for intervention to most effectively break this self-sustaining network. These networks are often based on the vector-autoregressive (VAR) model and rely on intensive longitudinal data collected in patients’ daily lives. Nowadays, one major challenge these networks are faced with is that they are used without sufficient quality assessments. Because VAR-based temporal networks are complex and highly parameterized, they can easily face problems of low statistical power and overfitting, especially when the time series available is short. In this study, we review existing idiographic-network studies with a focus on the number of variables and time points used in the analysis and show that the “big network, short time series” problem is prevalent. As potential solutions, we propose two simulation-based methods that aim to find the optimal number of time points to be collected: power analysis and predictive-accuracy analysis. Two applications of both methods are demonstrated: (a) “a priori”—informing the sample-size planning of future network studies and (b) “retrospective”—evaluating whether the sample size of existing network studies was large enough to avoid problems of low statistical power and overfitting. Results confirmed the observation that the sample sizes in past network studies are often insufficient, suggesting that findings of existing network studies should be critically assessed. Future idiographic-network studies are thus strongly advised to make more guided decisions on sample size using the proposed methods.

The network theory of psychopathology considers the development of mental disorders as the result of interactions among symptoms over time (Borsboom, 2017; Borsboom & Cramer, 2013; Cramer et al., 2010). Rather than driven by a neurobiological common cause, symptoms sustain each other and eventually form a chronic disordered equilibrium (Borsboom et al., 2019). Since the introduction of the network theory, more and more researchers and clinicians have focused on studying mental-health disorders on the within-persons level through collecting intensive longitudinal data (ILD). ILD allow the exploration of the intraindividual variation of momentary experiences (Hamaker, 2012; Molenaar, 2004) and how such experiences interact over time (Wichers, 2014).

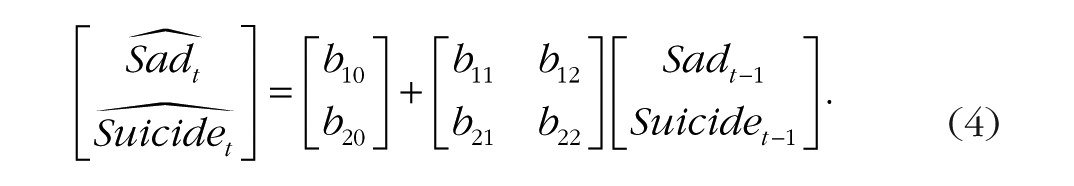

To capture such temporal dynamics in a patient’s daily life, ILD can be collected using methods such as experience-sampling methods (ESMs; Larson & Csikszentmihalyi, 2014) and ecological-momentary assessments (EMAs; Smyth & Stone, 2003). The methodological tool of idiographic (or person-specific) temporal networks has been developed for exploring the symptom interactions in ILD and has been increasingly used in clinical research and practice in recent years (e.g., Bringmann, 2021; Schemer et al., 2023; von Klipstein et al., 2020). In an idiographic temporal network, nodes represent the analyzed variables (e.g., emotions and symptoms) and are connected with directed edges (arrows). Take the hypothetical network in Bringmann (2021) as an example (Fig. 1): The arrow pointing from sad mood to sleep problems suggests that the sleep problems of this person can be predicted by the person’s preceding sad mood. Such a network can be a helpful exploratory tool for clinicians to establish potential causal links among one specific patient’s emotion, cognition, and physical problems. This can eventually benefit the process of case conceptualization (e.g., de Vos et al., 2017; Frumkin et al., 2021; Hall et al., 2025) and the design of personalized treatment plans (e.g., Levinson et al., 2021; Piccirillo & Rodebaugh, 2022). To summarize, the idiographic analysis aims to describe the within-persons psychological processes of one individual. This differs from the traditional nomothetic approach (i.e., multiple participants being measured once) used in psychological research that concerns only between-persons differences (Hamaker & Wichers, 2017; Molenaar, 2004).

Fig. 1. A hypothetical network in Bringmann (2021).

To generate an idiographic temporal network, the lag-1 vector-autoregressive model (VAR[1]; Brandt & Williams, 2007; Lütkepohl, 2005) needs to be fitted to the ILD gathered from an individual. The VAR(1) model predicts the value of each variable at a given time point using the values of all variables in the system at the previous time point (i.e., the lag-1 value). Two types of effects are of primary interest in a VAR(1) model and become visualized in the network: the autoregressive and cross-regressive effects. The autoregressive effect refers to how the value of one variable is related to the lagged value of the same variable (e.g., the arrow pointing from sad mood to itself in Fig. 1), whereas the cross-regressive effect shows how well one variable can be predicted by the lagged value of another variable. Both types of effects represent the unique predictive value of each lagged variable.
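As a compact reference, the verbal description above can be written in matrix form. The symbols used here ($\mathbf{y}_t$, $\boldsymbol{\delta}$, $\boldsymbol{\Phi}$, $\boldsymbol{\varepsilon}_t$, $\boldsymbol{\Sigma}$) are generic textbook notation assumed for illustration and may differ from the notation used in the numbered equations of this article.

```latex
\mathbf{y}_t = \boldsymbol{\delta} + \boldsymbol{\Phi}\,\mathbf{y}_{t-1} + \boldsymbol{\varepsilon}_t,
\qquad
\boldsymbol{\varepsilon}_t \sim \mathcal{N}(\mathbf{0}, \boldsymbol{\Sigma})
```

Under this assumed notation, the diagonal elements of $\boldsymbol{\Phi}$ are the autoregressive effects and the off-diagonal elements are the cross-regressive effects: element $\phi_{ij}$ is the unique effect of variable $j$ at time $t-1$ on variable $i$ at time $t$.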

To make meaningful interpretations of an idiographic temporal network, satisfactory quality of the VAR(1) model is necessary. However, this is often taken for granted and not examined thoroughly (Vogelsmeier et al., 2024). Therefore, our main goal in this article is to present methods that can be useful for quality assessment of the VAR(1)-based network and discuss practical methodological considerations for ensuring model quality.

Statistical models have the dual functionality of explanation (e.g., testing the hypothesized association among variables) and prediction (e.g., predicting the outcome variable’s value based on predictors’ values for an unseen observation; Shmueli, 2010). Therefore, their quality assessments should also involve both aspects. From the explanatory-modeling perspective, a key factor of model quality is the statistical power of the conducted significance tests, that is, the probability of yielding statistically significant results when the effect of interest truly exists (Cohen, 1992). Significance tests with low statistical power cannot reliably detect the meaningful relationships among variables across samples and will thus hurt the model’s explanatory capacity. Because power analysis is usually conducted for the significance test of one effect at a time, it is of limited value when assessing the quality of a multivariate model (Mulder, 2022; Wang & Rhemtulla, 2021). In contrast, for predictive modeling, the generalizability of the model is crucial: Can a model estimated with a time series of an individual also make accurate predictions of data observed in other similar time periods for the same individual? One common reason why this predictability is not realized is overfitting: The model mistakenly treats sample-specific noise as generalizable signal and eventually yields unreplicable estimates that provide researchers with an overly optimistic impression of the model’s performance (Kuhn & Johnson, 2013). Based on this definition, an overfitted model is unlikely to uncover and explain the true data-generating process well. This suggests that the two functionalities, explanation and prediction, should not be seen as completely disconnected from each other (Hofman et al., 2021; Rocca & Yarkoni, 2021).

Complex models with a large number of parameters (e.g., networks) are known to have a strong tendency to overfit, especially when fitted to a small sample (i.e., a short time series; Bulteel et al., 2018; Yarkoni & Westfall, 2017). Clinicians would hope that the idiographic networks they build for their patients are generalizable, implying that the network can accurately describe the patients’ temporal dynamics during both the data collection and a similar time period. This way, the resulting personalized treatment plans are not guided by random noise. Careless usage of such networks without sufficient quality assessment could lead to false conclusions about how symptoms interact and can be misleading for the patients. However, overfitting is not a problem that can be instantly spotted. Overfitting will become visible only through certain validation procedures in which the estimated network is applied to another sample and evaluated on its predictive accuracy: whether it can make accurate predictions with minimal errors for data in this other sample.

Predictive accuracy, therefore, should be considered an important quality index of a statistical model that indicates a model’s risk of overfitting. Fortunately, this quality index is gradually attracting more attention from psychological researchers (Rocca & Yarkoni, 2021; Verhagen, 2022), especially in the field of time-series analysis (Lafit et al., 2022; Loossens, Dejonckheere, et al., 2021; Loossens, Tuerlinckx, & Verdonck, 2021; Revol et al., 2024). Two studies provided the first indications that the generalizability and predictive accuracy of VAR(1) networks are not satisfactory (Bulteel et al., 2018; Mansueto et al., 2023). Bulteel et al. (2018) showed that when fitted to short time series generated under a VAR(1) process, the correctly specified VAR(1) model still had a lower predictive accuracy than simpler models because of overfitting. Such findings clearly show the importance of carefully planning the number of time points to be collected from one individual to ensure sufficient predictive accuracy of the VAR(1) networks. It is thus crucial to apply methodologies that can calculate the number of time points required for a temporal network to be accurately retrieved with minimal risk of overfitting. Ideally, such methods are used before data collection for sample-size planning. Yet for existing network studies, the methods could also be very useful for evaluating the risk of overfitting for estimated networks and helping researchers interpret the networks with a critical perspective.

In the remainder of this article, we start by providing a detailed description of the idiographic VAR(1) model for the readers to become more familiar with the foundation of a temporal network. Then, we discuss two simulation-based sample-size planning methods for VAR(1) networks recently developed by Revol et al. (2024): (a) power analysis of individual edges and (b) predictive-accuracy analysis of the entire network. Using a set of hypothetical network parameters, we further demonstrate how to use both methods for a priori sample-size planning. Then, we show how both methods can be used retrospectively to assess the quality of idiographic networks estimated in existing studies. For this section, we begin by presenting a review of existing idiographic-network studies, focusing on the number of nodes and time points used and providing an overview of the current “big network, short time series” problem in the field. We then apply both methods to networks estimated in Bak et al. (2016) and Epskamp, van Borkulo, et al. (2018) to show whether the number of time points used in both studies was large enough to ensure sufficient power for the significance test of individual edges and network predictive accuracy. Finally, we provide a summary of the findings and discuss the future directions of improving the sample-size optimization methods and the usage of idiographic networks.

Method

The VAR(1) model and temporal network

Model specification and assumptions

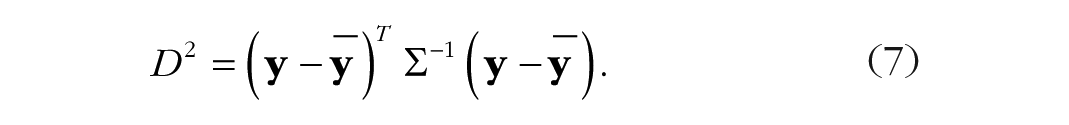

A typical ESM study aiming to build an idiographic temporal network for a single patient assesses

where

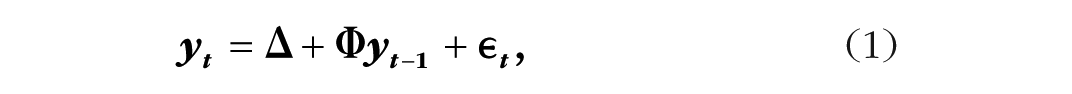

For example, to study the temporal relationships between sad mood and suicidal thoughts for a patient diagnosed with major depressive disorder, the following VAR(1) model can be employed:

where

In this example, the autoregressive coefficient

A simulated bivariate time series of variables

Just like any parametric statistical model, the VAR(1) model has certain assumptions that need to be met to ensure valid inferences. Besides the common assumptions of normality, linearity, and homoscedasticity for linear-regression models (Poole & O’Farrell, 1971), another particular assumption of the VAR(1) model is covariance stationarity: All parameters in the VAR(1) model (i.e.,

Visualizing the VAR(1) model

The VAR(1) model in the example can be visualized as an idiographic temporal network (see Fig. 3), which presents the temporal relationships (i.e., the autoregressive/cross-regressive coefficients

Fig. 3. A hypothetical temporal network.

Simulation-Based Power Analysis

When researchers collect a sample of ILD from a patient and estimate an idiographic network, they aim for the estimated intercepts and slopes to closely resemble the true parameters in the VAR(1) model: Ideally, nonzero effects (i.e., the autoregressive and cross-regressive effects in the previous example) can be detected, and zero effects (i.e., the intercept,

Stepwise procedure

Suppose that the temporal dynamics of sadness and suicidal thoughts of a patient were estimated through Equations 2 and 3. Now, researcher Jesse intends to replicate such findings in a similar patient by fitting the VAR(1) model to the ILD that will be collected from this other patient. To decide how many time points are needed to ensure sufficient power of all the significance tests to be conducted (i.e., for the individual elements in

The procedures of simulation-based power analysis for the lag-1 vector-autoregressive model.

Step 1: determine model and testing parameters

First, Jesse needs to specify the hypothesized effect sizes of the VAR(1) model. This requires input on all parameters of the VAR(1) model:

Other necessary parameters to specify for this analysis include the significance level for the test of each effect,

Step 2: data simulation

With the parameter input, samples of ILD following the specified VAR(1) process can be simulated, representing the time series one can collect from the patient. For detailed procedures on data simulation, see Appendix 2 in the Supplemental Material.
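The simulation step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure documented in the Supplemental Material: the function name `simulate_var1`, the burn-in length, and the example parameter values are all hypothetical, and the innovations are assumed to be multivariate normal.

```python
import numpy as np

def simulate_var1(intercepts, phi, sigma, n_time, burn_in=500, seed=None):
    """Simulate one time series of length n_time from a stationary VAR(1) process."""
    rng = np.random.default_rng(seed)
    intercepts = np.asarray(intercepts, dtype=float)
    phi = np.asarray(phi, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    # Covariance stationarity requires every eigenvalue of phi to lie
    # strictly inside the unit circle.
    if np.max(np.abs(np.linalg.eigvals(phi))) >= 1:
        raise ValueError("phi does not define a stationary VAR(1) process")
    k = len(intercepts)
    # Start at the stationary mean, (I - phi)^{-1} * intercepts.
    y = np.linalg.solve(np.eye(k) - phi, intercepts)
    innovations = rng.multivariate_normal(np.zeros(k), sigma, size=burn_in + n_time)
    series = np.empty((burn_in + n_time, k))
    for t in range(burn_in + n_time):
        y = intercepts + phi @ y + innovations[t]
        series[t] = y
    # Discard the burn-in so the starting value does not influence the sample.
    return series[burn_in:]

# Hypothetical bivariate parameters (illustrative only, not taken from the article).
phi_example = np.array([[0.4, 0.0],
                        [0.2, 0.3]])
data = simulate_var1([0.0, 0.0], phi_example, np.eye(2), n_time=100, seed=1)
print(data.shape)  # (100, 2)
```

Repeating the call with different seeds would yield the set of simulated samples on which the VAR(1) model is estimated in the next step.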

Step 3: estimate VAR(1) models from simulated samples

For each of the

In Equation 4, Latin letters are used to represent the estimates (e.g.,

Step 4: extract p values and calculate power

The statistical power of each

Results of Power Analysis for the Hypothetical Network

Note: Values in bold denote results that exceed the target performance of .8.

As expected, in general, the power of the significance tests for larger effects is higher. As the sample size becomes larger, the power of the significance tests for all nonzero effects (e.g., all slopes and the intercept

Reflection on power analysis

Simulation-based power analysis focuses on one effect at a time and can offer recommendations on the required sample size for the significance test of each effect to be sufficiently powered. Yet as shown in the example, such recommendations usually vary for different effects in the same VAR(1) model and thus cannot always offer clear suggestions on an appropriate sample size for a whole model to be of good quality. A sample size that ensures sufficient power (i.e., higher than .8) for the significance tests of small effects (e.g., the cross-regressive effect in the previous example,

Simulation-Based Predictive-Accuracy Analysis

To assess the predictive accuracy of a statistical model, the common workflow usually starts by collecting a sample on variables of interest. This sample is then divided into two parts: the training set and the test set. Researchers estimate (“train”) the model with the training set and use the model estimates to make predictions for the outcome variables in the separate test set. Then, they can compare the predicted values for the test set and the observed values in the test set to evaluate the model’s predictive accuracy as a way to assess the generalizability of the model estimates to unseen samples. For the assessment process to be unbiased, the two parts of the sample should be comparable, more specifically, generated with the same underlying processes in which the true relationships among variables are the same (Hastie et al., 2009).

In empirical research, the method of cross-validation is often applied to estimate a model’s predictive accuracy such that the aforementioned division into training and test sets is carried out multiple times (e.g., Bulteel et al., 2018). Because the goal is to conduct such generalizability analysis before data collection as a way to avoid overfitting, such training and test sets can be simulated (e.g., Ernst et al., 2021; Lafit et al., 2022). In the following section, we describe step by step the procedure of simulation-based predictive-accuracy analysis proposed in Revol et al. (2024) while leaving out technical details to make it easily accessible for applied researchers. For details, see Revol et al. and Appendix 2 in the Supplemental Material. We also demonstrate how this method can be applied in a relevant research setting and compare this method with power analysis using the earlier example.

Stepwise procedure

Step 1: determine model and testing parameters

Again, we provide an overview of the procedures in Figure 5. The first three steps of predictive-accuracy analysis are highly similar to those of power analysis. As a preparation for simulating the training sets (i.e., the samples on which the VAR[1] model is estimated), Jesse needs to specify (a) the hypothesized network parameters (

Fig. 5. The procedures of simulation-based predictive-accuracy analysis for the lag-1 vector-autoregressive model.

Steps 2 and 3: data simulation; estimate VAR(1) models from training sets

The same simulated samples in power analysis can be used as training sets in predictive-accuracy analysis for a meaningful comparison between the two methods. Naturally, the estimates of the VAR(1) model acquired earlier can also be used here.

The process of simulating the test set is identical to the process described earlier. Because the test set is only for assessing the risk of overfitting for the earlier estimates, Jesse will not fit the VAR(1) model to it. This highlights a core difference between power analysis and predictive-accuracy analysis: Power analysis follows a purely in-sample approach in which no separate test set is used, whereas predictive-accuracy analysis investigates the out-of-sample performance of estimated models.
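The contrast between in-sample and out-of-sample performance can be illustrated with a small Python sketch. It is a simplification under stated assumptions: the helper names, the parameter values, and the sample sizes are hypothetical, and the mean squared one-step prediction error is used here as a stand-in, not the Mahalanobis-distance-based index described later in the article.

```python
import numpy as np

def simulate_var1(phi, n_time, rng, burn_in=200):
    """Zero-intercept VAR(1) with identity innovation covariance (illustrative)."""
    k = phi.shape[0]
    y = np.zeros(k)
    out = np.empty((burn_in + n_time, k))
    for t in range(burn_in + n_time):
        y = phi @ y + rng.standard_normal(k)
        out[t] = y
    return out[burn_in:]

def fit_var1_ols(series):
    """Equation-by-equation least squares; returns (intercepts, phi_hat)."""
    X = np.column_stack([np.ones(len(series) - 1), series[:-1]])
    coef, *_ = np.linalg.lstsq(X, series[1:], rcond=None)
    # coef[0] holds the intercepts; coef[1:] is indexed [lagged predictor, outcome].
    return coef[0], coef[1:].T

def one_step_mse(series, intercepts, phi_hat):
    """Mean squared error of lag-1 predictions over all variables."""
    pred = intercepts + series[:-1] @ phi_hat.T
    return float(np.mean((series[1:] - pred) ** 2))

rng = np.random.default_rng(2024)
phi_true = np.array([[0.4, 0.0],
                     [0.2, 0.3]])       # hypothetical network
train = simulate_var1(phi_true, 50, rng)
test = simulate_var1(phi_true, 50, rng)  # same process, independent sample

c_hat, phi_hat = fit_var1_ols(train)     # the model is fitted to the training set only
mse_in = one_step_mse(train, c_hat, phi_hat)
mse_out = one_step_mse(test, c_hat, phi_hat)
print(mse_in, mse_out)
```

Because least squares minimizes the error on the training set, the in-sample error of the estimates can never exceed the in-sample error of the true parameters; only the error on the separate test set reveals how much of that apparent fit is sample-specific.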

Step 4: apply model estimates to the test set and calculate prediction errors

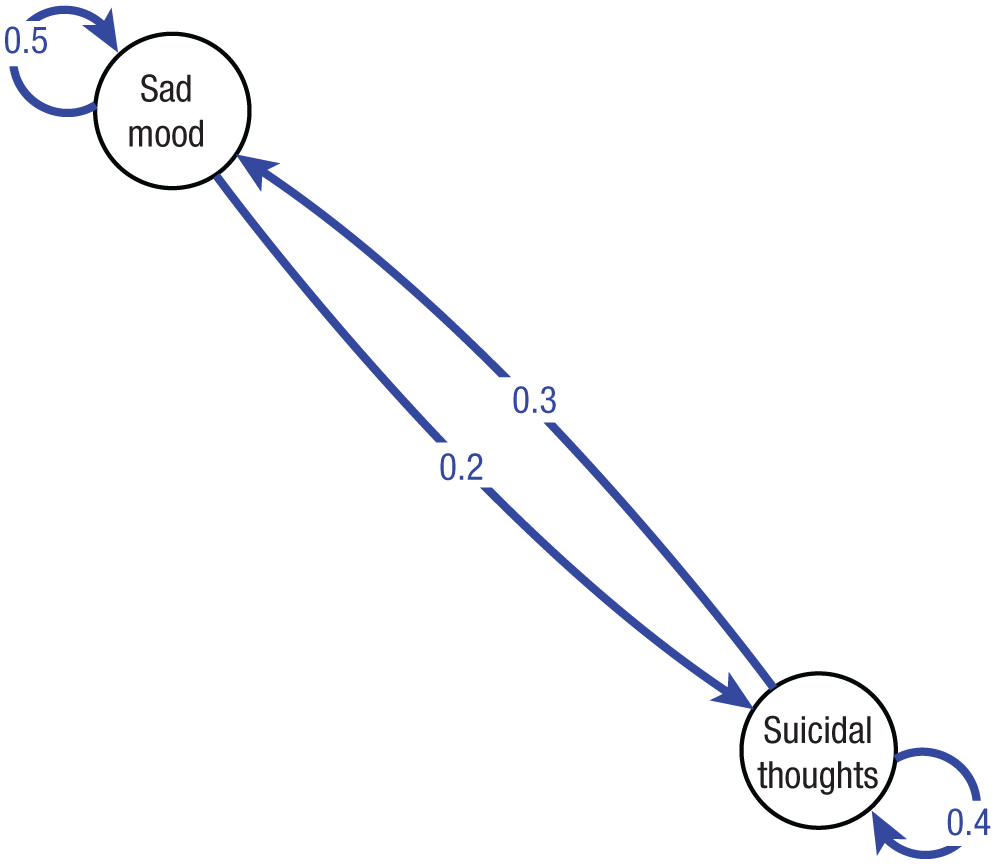

Jesse then uses the VAR(1) estimates acquired from each training set to calculate the predicted values (e.g.,

To assess the predictive accuracy of a set of VAR(1) estimates, we examine whether the multivariate prediction errors resemble the simulated errors for each time point. Because every observation includes some random noise (

The prediction-error vector for a given time point

This vector of prediction errors,

The Mahalanobis distance is a standardized distance measure between one observation and the center of a multivariate normal distribution in which different weights are applied to different variables based on their (co)variances. Therefore, we can standardize the prediction-error vectors by calculating the squared Mahalanobis distance between each error vector and the center of the innovation distribution, which is considered to have the mean of

This calculation achieves an important dimension reduction: The vectors of both prediction errors and simulated innovation (i.e.,

The similarity can then be quantified as the squared Pearson’s correlation (

Step 5: calculate the percentage of networks with satisfactory predictive accuracy

As the final step, we calculate the percentage of networks estimated from the 5,000 training sets that can be deemed not overfitting using the thresholds

The results of predictive-accuracy analysis for the example are presented in Table 2. If the threshold of SPAP is set as .8, the sample-size recommendations are 100 using the threshold of

Table 2. Sufficient Predictive Accuracy Probability of the Hypothetical Network

Note: Values in bold denote results that exceed the target performance of .8.

Reflection on predictive-accuracy analysis

In this section, we demonstrate the predictive-accuracy analysis using one set of VAR(1) model parameters. Such analysis can be performed rapidly: For the

Application: Retrospective Quality Assessment of Existing Network Studies

In the previous section, we showed how a priori power analysis and predictive-accuracy analysis can be applied to support sample-size planning before the data collection of single-case network studies. Here, we demonstrate the retrospective usage of both methods. With the same methods, we can assess whether the sample size adopted in an existing network study was large enough to ensure sufficient quality of the estimated networks. This application is based on the assumption that the true network parameters are identical to the estimates. Given this assumption and the number of time points collected in an existing study, if the study is to be replicated, one can calculate through simulation (a) the achieved statistical power of the test for each edge in the network and (b) SPAP—the probability of the estimated network having sufficient predictive accuracy and not overfitting the sample.
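The retrospective power calculation described above can be sketched as follows. This is a rough illustration under several assumptions: the parameter matrix `phi_assumed` is hypothetical and stands in for reported estimates, intercepts are set to zero, the innovation covariance is the identity matrix, the number of simulated samples is kept small for speed, and p values use a normal approximation to the t distribution to avoid extra dependencies.

```python
import math
import numpy as np

def simulate_var1(phi, n_time, rng, burn_in=200):
    """Zero-intercept VAR(1) with identity innovation covariance (illustrative)."""
    k = phi.shape[0]
    y = np.zeros(k)
    out = np.empty((burn_in + n_time, k))
    for t in range(burn_in + n_time):
        y = phi @ y + rng.standard_normal(k)
        out[t] = y
    return out[burn_in:]

def lagged_p_values(series):
    """Two-sided p values for all lagged effects from equation-by-equation OLS.

    Entry [i, j] tests the effect of variable j at t - 1 on variable i at t.
    A normal approximation to the t distribution is assumed.
    """
    Y = series[1:]
    X = np.column_stack([np.ones(len(Y)), series[:-1]])
    n, p = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    coef = xtx_inv @ X.T @ Y                       # shape (p, k)
    resid = Y - X @ coef
    s2 = (resid ** 2).sum(axis=0) / (n - p)        # residual variance per equation
    se = np.sqrt(np.outer(np.diag(xtx_inv), s2))   # standard errors, shape (p, k)
    z = np.abs(coef / se)
    p_vals = np.vectorize(math.erfc)(z / math.sqrt(2))
    return p_vals[1:].T                            # drop the intercept row

def simulated_power(phi, n_time, n_sims=500, alpha=0.05, seed=0):
    """Proportion of simulated samples in which each lagged effect is significant."""
    rng = np.random.default_rng(seed)
    hits = np.zeros(phi.shape)
    for _ in range(n_sims):
        hits += lagged_p_values(simulate_var1(phi, n_time, rng)) < alpha
    return hits / n_sims

# Treat (hypothetical) estimates as the true parameters and plug in the
# number of predictable time points of the study under evaluation.
phi_assumed = np.array([[0.4, 0.0],
                        [0.2, 0.3]])
power = simulated_power(phi_assumed, n_time=100, seed=1)
print(np.round(power, 2))
```

For an edge whose assumed value is zero, the reported proportion is the empirical false-positive rate, not power, and should sit near the significance level.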

To demonstrate this usage of both methods, we first conducted a systematic review of previous studies in the field of clinical psychology that estimated idiographic networks. For the detailed search protocol, see Appendix 2 in the Supplemental Material; for an overview of such studies, see Table 3 below. A first impression of the results is that on average, the number of variables/nodes used in these studies was large (range = 5–21, Mdn = 9), yet the number of time points available for analysis tended to be small. This suggests a high risk of overfitting for the estimated networks (Bulteel et al., 2018). Here, we apply the two methods to two studies (i.e., Bak et al., 2016; Epskamp, van Borkulo, et al., 2018) for a closer inspection of the quality of the estimated networks. These two studies were chosen as representative examples of the field: The number of variables and predictable time points in both studies are on an average level across all studies in Table 3 below. In addition, both studies reported the research design and data-analysis procedures thoroughly and made the materials necessary for reproducing the results easily accessible.

Table 3. An Overview of Studies Using Person-Specific Temporal Networks

Note: HCs = healthy control subjects.

Before we start, an important message we wish to convey is that this retrospective approach is recommended only for assessing the quality of existing studies. When planning for a new study, we strongly recommend that researchers justify their plans of sample size before data collection instead of afterward. The reason lies in the uncertainty of the effect size estimated from samples, especially from small samples (Leon et al., 2011). Without proper consideration of the uncertainty, using such estimates as parameters in retrospective power analysis can further lead to biased power estimates (Albers & Lakens, 2018) and naturally biased SPAP. To account for such uncertainty in this retrospective analysis, we incorporated the standard errors of all autoregressive/cross-regressive coefficients when deciding the value of

Example 1: Bak et al. (2016)

Bak et al. (2016) collected ESM data from a patient (“Miss A”) who was receiving pharmacological treatment for psychosis experiences. The patient was followed for a year in this study and asked to provide ratings for multiple symptoms 10 times per day during 4 days of each week. Such symptoms included hearing (i.e., hearing voices), down, relaxed, paranoia, and control (i.e., loss of control), and they were all measured on 7-point Likert scales. Throughout the year, Miss A was in a “stable state” most of the time, during which she was prescribed 350 mg of clozapine per day. Yet there were a few episodes of impending relapses and full relapses in which Miss A experienced heightened severity of her symptoms. The dosage of clozapine was increased to 400 and 450 mg/day, respectively, during the two states. Bak et al. fitted a temporal network to the data in each of the three states (i.e., stable state, impending relapse, and full relapse) and were interested in exploring whether the temporal relationships among Miss A’s symptoms differed among the three states.

In this section, we demonstrate the stepwise procedure of applying both retrospective power analysis and predictive-accuracy analysis to this study. Such applications will provide ways of evaluating the quality of the estimated networks and the validity of conclusions drawn by Bak et al. (2016) and from other similar network studies.

Step 1: record the actual sample size

When fitting a network to a time series, the actual number of predictable time points in the analysis is smaller than the total number of measurement prompts sent to a participant because of the exclusion of certain observations. Therefore, we cannot directly use the latter in simulation-based analyses. Here, we briefly discuss the two most common reasons for such exclusions.

First, an observation can be analyzed in the VAR(1) model only if its lagged values are not missing. Given that imputation of the missing data is not common practice in such time-series analysis yet, observations that are either missing or have missing lagged values cannot be predicted.

Second, the VAR(1) model assumes equal intervals between two consecutive observations. This is a reasonable assumption because the strength of a temporal relation depends on the intervals between the two measurements. For example, if Miss A feels down at this moment, we can be more confident in predicting that she will still feel down in 3 hr than in 3 days. This assumption of the VAR(1) model usually holds for same-day observations: Miss A received a prompt approximately every 90 min between 7:30 a.m. and 10:30 p.m. in this study. However, the overnight lag between the last observation of a day and the first observation of the next day is much longer than 90 min, yet these two observations are still considered consecutive in the time series. Therefore, the first observation of each day is not accompanied by a valid lagged value and thus is not being predicted by the previous observation. For a more nuanced discussion of this issue, see Berkhout et al. (2025).

After such data-preprocessing procedures, the number of predictable time points to be used in later data simulation is naturally smaller than the total number of prompts sent to the patient, even smaller than the number of prompts that the patient responded to. For the three states of Miss A (stable state, impending relapse, and full relapse), although the number of complete observations was 662, 158, and 119, respectively, the actual number of observations used in the network analysis was only 353, 86, and 63.
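The two exclusion rules can be made concrete with a short Python sketch that counts predictable time points. It is a minimal illustration, assuming the data contain one row per prompt sent (including unanswered prompts), sorted by day and by prompt number within each day; the function name and the toy data are hypothetical.

```python
import numpy as np

def count_predictable(day, beep, observed):
    """Count observations that have a valid lag-1 predecessor.

    A time point is predictable when it was answered and the immediately
    preceding prompt on the same day was answered as well. Overnight lags
    and pairs involving a missed prompt are therefore excluded.
    """
    day = np.asarray(day)
    beep = np.asarray(beep)
    observed = np.asarray(observed, dtype=bool)
    same_day = day[1:] == day[:-1]
    consecutive = (beep[1:] - beep[:-1]) == 1
    both_observed = observed[1:] & observed[:-1]
    return int(np.sum(same_day & consecutive & both_observed))

# Toy example: 2 days with 5 prompts each; the third prompt of Day 2 was missed.
day = [1, 1, 1, 1, 1, 2, 2, 2, 2, 2]
beep = [1, 2, 3, 4, 5, 1, 2, 3, 4, 5]
observed = [1, 1, 1, 1, 1, 1, 1, 0, 1, 1]

print(sum(observed))                           # 9 completed observations
print(count_predictable(day, beep, observed))  # 6 predictable time points
```

In the toy data, one missed prompt removes two predictable time points (the missed observation itself and the one that follows it), and each day contributes one fewer predictable time point than it has prompts because the first observation of the day has no valid lag.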

Step 2: acquire network estimates

Before starting the data simulation, we also need to specify the network parameters, for which we will use the network estimates in an existing study. In general, if researchers reported the estimates of the intercepts, slopes, and innovation (co)variances thoroughly, we can use this information as the simulation parameters. Otherwise, we should reanalyze the data by fitting the VAR(1) model to the data following the identical data-preprocessing procedures used in the original study. For the current example, we contacted one of the coauthors of Bak et al. (2016) who had access to the data of Miss A and successfully reproduced the analysis with R.

The estimated network of Miss A’s stable state is shown in Figure 6a with

and

Fig. 6. The estimated networks of Miss A’s stable state with uncertainty: (a) point estimates, (b) 1 SE larger, and (c) 1 SE smaller.

Likewise, the estimated networks of Miss A during the states of impending relapse and full relapse are shown in Figure 7. In the original study, nonsignificant edges were also visualized in all three networks with the corresponding point estimate (Bak et al., 2016). From visual inspections of the networks, the authors concluded that connections among symptoms became stronger during the relapse states.

In addition, the authors also calculated network centrality indices for each symptom and compared them among the three networks. The centrality indices of a node are calculated by aggregating the edges pointing toward it and from it. This is considered a way of quantifying the importance of a node (Johal & Rhemtulla, 2024).

For the symptom

Fig. 7. The estimated networks of Miss A’s impending-relapse and full-relapse states.

These two conclusions were both based on the point estimates of edges in the networks and did not consider the degree of uncertainty of these estimates—the standard error of the estimate of each edge. The uncertainty of the estimates of all lagged effects was indeed small for the stable state (all SEs were smaller than .08) given that the analyzed sample contained a large number of time points (

Taken all together, three sets of values are used for data simulation. First, the network point estimates of each of the three states are used as the true network parameters for data simulation in later steps. To account for the uncertainty of the estimates, we used the standard error of each estimated lagged coefficient (Liu & Wang, 2019; Perugini et al., 2014) to create two additional networks for data simulation. One network has a larger effect size than the point estimates, with all lagged effects enlarged by 1 SE. The other network has a smaller effect size from all lagged effects being shrunk by 1 SE.

These two networks represent an upper bound and a lower bound, respectively, of the true network parameters (for the network visualizations of these two matrices for Miss A’s stable state, see Figs. 6b and 6c). By running power and predictive-accuracy analyses with these two lower-bound and upper-bound matrices as the true

Step 3a: power analysis

With input acquired in Steps 1 and 2, we can further set the significance level of the test (e.g.,

It is important to recall that power analysis and predictive-accuracy analysis share the same training sets and can thus be conducted in parallel to each other. For Miss A’s stable state, power analysis was run with a set of values for

Results of Power Analysis for Edges Directed to

Note: Values in bold denote results that exceed the target performance of .8.

For the two networks in Miss A’s impending- and full-relapse states, power analysis was first conducted for each state with

Results of Power Analysis for the Network of Miss A’s Impending-Relapse State

Note: Values in bold denote results that exceed the target performance of .8.

Results of Power Analysis for the Network of Miss A’s Full-Relapse State

Note: Values in bold denote results that exceed the target performance of .8.

Step 3b: predictive-accuracy analysis

As mentioned in the previous section, the search for the optimal number of time points is primarily dependent on the results of the predictive-accuracy analysis. The search process is as follows. We set the starting value of

Results of the predictive-accuracy analysis for Miss A’s stable state are shown in Table 7. All networks estimated with simulated training sets with 353 time points showed sufficient predictive accuracy on the test set (

Table 7. Sufficient Predictive Accuracy Probability of the Network of Miss A’s Stable State

Note: The result presented in the first line of each cell represents the sufficient predictive accuracy probability when using the point estimates as the simulation parameter,

Results based on the lower-bound and upper-bound

Tables 8 and 9 show the results of predictive-accuracy analysis for the networks during the impending- and full-relapse states. Given the actual number of analyzable time points during the two states (

Table 8. Sufficient Predictive Accuracy Probability of the Network of Miss A’s Impending-Relapse State

Note: Values in bold denote results that exceed the target performance of .8.

Table 9. Sufficient Predictive Accuracy Probability of the Network of Miss A’s Full-Relapse State

Note: Values in bold denote results that exceed the target performance of .8.
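The general idea of the predictive-accuracy analysis in Step 3b can be sketched as follows. This is an illustration under stated assumptions, not the authors' procedure. The accuracy metric (out-of-sample R² averaged over nodes), the accuracy threshold, the test-set size, and all function names are hypothetical choices made for this example. Only the target probability of .8 comes from the table notes.

```python
import numpy as np

def simulate_var1(phi, n_obs, rng, burn_in=100):
    """Simulate y_t = phi @ y_{t-1} + e_t with standard-normal innovations (assumption)."""
    k = phi.shape[0]
    y = np.zeros((n_obs + burn_in, k))
    for t in range(1, n_obs + burn_in):
        y[t] = phi @ y[t - 1] + rng.standard_normal(k)
    return y[burn_in:]

def fit_var1(y):
    """OLS estimates of the intercepts and lagged effects, shape (k + 1, k)."""
    x = np.column_stack([np.ones(len(y) - 1), y[:-1]])
    beta, *_ = np.linalg.lstsq(x, y[1:], rcond=None)
    return beta

def out_of_sample_r2(beta, y_new):
    """Mean R^2 across nodes when predicting unseen data with fixed estimates."""
    x = np.column_stack([np.ones(len(y_new) - 1), y_new[:-1]])
    target = y_new[1:]
    sse = ((target - x @ beta) ** 2).sum(axis=0)
    sst = ((target - target.mean(axis=0)) ** 2).sum(axis=0)
    return float(np.mean(1 - sse / sst))

def sufficient_accuracy_probability(phi, n_train, threshold,
                                    n_test=1000, n_sim=200, seed=1):
    """Share of simulated training sets whose fitted network reaches the threshold."""
    rng = np.random.default_rng(seed)
    y_test = simulate_var1(phi, n_test, rng)   # one large test set shared by all runs
    scores = [out_of_sample_r2(fit_var1(simulate_var1(phi, n_train, rng)), y_test)
              for _ in range(n_sim)]
    return float(np.mean(np.array(scores) >= threshold))

# Hypothetical two-node network and a hypothetical accuracy threshold of 0.10.
phi_example = np.array([[0.4, 0.0],
                        [0.3, 0.4]])
prob_short = sufficient_accuracy_probability(phi_example, n_train=20, threshold=0.10)
prob_long = sufficient_accuracy_probability(phi_example, n_train=500, threshold=0.10)
meets_target = prob_long >= 0.8   # compare with the .8 target performance
```

A search for the required number of time points would repeat the last call over a grid of `n_train` values and keep the smallest one that meets the target.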

Example 2: Epskamp, van Borkulo, et al. (2018)

Hoping to limit spurious edges in networks and to avoid overfitting, many network researchers have started to use a series of methods implementing regularization techniques (Bar-Kalifa & Sened, 2020; Epskamp, van Borkulo, et al., 2018). The most commonly used is the least absolute shrinkage and selection operator (LASSO; Tibshirani, 1996). LASSO applies a downward bias to the model such that strong edges in the network get reduced (“shrinkage”) and rather weak edges are set to 0 (“selection”), resulting in a sparser network with fewer edges. Regularized networks have shown better predictive accuracy than standard networks (Bulteel et al., 2018). However, this relative advantage over standard networks does not mean that regularized networks are guaranteed to have satisfactory quality (Zhou et al., 2024). For example, LASSO regression models suffer from a higher false-positive rate, especially when the available number of time points for the analysis is small relative to the number of predictors (Lafit et al., 2019). Moreover, LASSO regression with a strong penalty can yield an overly sparse model and show unsatisfactory predictive performance (Musoro et al., 2014).
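The shrinkage and selection behavior described above can be seen in a textbook special case: when the predictors are orthonormal, the LASSO estimate equals the soft-thresholded least-squares estimate. The sketch below shows only that special case. It is not the estimation routine used in the cited studies, and the edge weights and penalty value are made up for illustration.

```python
import numpy as np

def soft_threshold(coefs, penalty):
    """Move every coefficient toward 0 by `penalty`; values within `penalty` of 0 become 0."""
    coefs = np.asarray(coefs, dtype=float)
    return np.sign(coefs) * np.maximum(np.abs(coefs) - penalty, 0.0)

# Hypothetical unregularized edge weights: two strong edges and two weak ones.
ols_edges = np.array([0.50, -0.30, 0.08, -0.05])
lasso_edges = soft_threshold(ols_edges, penalty=0.10)

n_edges_before = int(np.count_nonzero(ols_edges))    # all four edges present
n_edges_after = int(np.count_nonzero(lasso_edges))   # only the strong edges survive
```

With a penalty of 0.10, the two strong edges move 0.10 closer to zero ("shrinkage") and the two weak edges are set to zero ("selection"). A larger penalty would remove more edges, which is how an overly strong penalty can produce an overly sparse network.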

Here, we apply the current method of predictive-accuracy analysis to a regularized network estimated by Epskamp, van Borkulo, et al. (2018) for a retrospective evaluation of the risk of having low predictive accuracy for this network. Power analysis is not conducted for this example because of the difficulty of making unbiased statistical inferences about any edge (i.e., calculating the standard-error and

Data used for the network analysis were collected from a female patient who suffered from major depressive disorder. The patient received five prompts per day at an average interval of 3 hr for 2 weeks, resulting in a total number of 70 prompts. Seven variables were used in the network analysis, including sadness, tiredness, rumination, bodily discomfort, nervousness, relaxation, and the ability to concentrate. After excluding missing data and overnight lags, 47 time points were available for the analysis.
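The drop from 70 prompts to 47 analyzable time points follows from how a lag-1 model uses the data: an observation is usable only if it was answered, its predecessor was answered, and the two fall on the same day. The sketch below counts usable observations under those rules. The function name and the missingness patterns are hypothetical, and the patient's actual response pattern is not reproduced here.

```python
def count_usable_time_points(answered, prompts_per_day):
    """Count observations that are present and have a present same-day predecessor.

    answered: list of booleans in chronological order, one entry per prompt.
    """
    usable = 0
    for i, is_answered in enumerate(answered):
        first_of_day = i % prompts_per_day == 0   # its lag would span the night
        if is_answered and not first_of_day and answered[i - 1]:
            usable += 1
    return usable

# 14 days with 5 prompts each, as in the study design.
all_answered = [True] * 70
full_compliance = count_usable_time_points(all_answered, prompts_per_day=5)

# Hypothetical pattern: a single missed prompt in the middle of the first day.
one_missed = all_answered.copy()
one_missed[2] = False
after_one_miss = count_usable_time_points(one_missed, prompts_per_day=5)
```

Even with full compliance, only 56 of the 70 prompts yield usable time points, because the first prompt of each day has no same-day lag. A single missed mid-day prompt removes two more: the missed observation itself and the one that follows it.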

The estimated regularized network is shown in Figure 8. Because statistical inference is difficult with regularized models, the decision about which edges to visualize can no longer be based on significance tests, as it was for the standard networks discussed previously. Thus, all nonzero edges are visualized in the network. Accordingly, only the point estimates of the edges are used as the parameter values during data simulation; no plausible range of

Figure 8. The estimated regularized temporal network in Epskamp, van Borkulo, et al. (2018).

We conducted simulation-based predictive-accuracy analysis on this network after minor changes in the network-estimation process: All the zero edges were constrained to be 0 during the estimation. This adapted estimation process has been used in related algorithms, such as relaxed LASSO (Meinshausen, 2007). All other procedures remain the same as previously described.
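The constrained estimation step can be sketched as follows: for each node, only the lagged predictors with a nonzero edge in the original regularized network enter an ordinary least-squares fit, and all other edges stay fixed at 0. This is a minimal illustration in the spirit of the relaxed LASSO, not the exact routine used for the analysis. The function name `refit_on_support` and the example data are hypothetical.

```python
import numpy as np

def refit_on_support(y, support):
    """Re-estimate a VAR(1) network with some edges constrained to 0.

    y: array of shape (n_obs, k) holding the time series.
    support: boolean array of shape (k, k); support[i, j] is True when the
             edge from node j (at t - 1) to node i (at t) may be nonzero.
    """
    x_lag, target = y[:-1], y[1:]
    k = y.shape[1]
    intercepts = np.zeros(k)
    phi = np.zeros((k, k))                     # excluded edges keep the value 0
    for i in range(k):
        cols = np.flatnonzero(support[i])      # predictors allowed for node i
        design = np.column_stack([np.ones(len(x_lag)), x_lag[:, cols]])
        beta, *_ = np.linalg.lstsq(design, target[:, i], rcond=None)
        intercepts[i] = beta[0]
        phi[i, cols] = beta[1:]
    return intercepts, phi

# Hypothetical sparse two-node network and a simulated series to refit.
rng = np.random.default_rng(0)
true_phi = np.array([[0.4, 0.0],
                     [0.3, 0.0]])
series = np.zeros((2000, 2))
for t in range(1, len(series)):
    series[t] = true_phi @ series[t - 1] + rng.standard_normal(2)

intercepts_hat, phi_hat = refit_on_support(series, support=true_phi != 0)
```

In a simulation-based analysis, this refit would be applied to every simulated training set, with `support` taken from the nonzero pattern of the original regularized network.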

Results of the analysis are shown in Table 10. With samples of 47 time points, only 42% of the networks estimated with the simulated time series demonstrate sufficient predictive accuracy with the threshold of

Table 10. Sufficient Predictive Accuracy Probability of the Regularized Network in Epskamp, van Borkulo, et al. (2018)

Note: Values in bold denote results that exceed the target performance of .8.

To summarize, results of the retrospective predictive-accuracy analysis show that the estimated networks in both Bak et al. (2016) and Epskamp, van Borkulo, et al. (2018) are at considerable risk of overfitting, except for the network of Miss A’s stable state. These results further suggest that the temporal networks estimated in other studies (see Table 3) are also likely to overfit, given that in most cases more nodes and fewer time points were used in the network analysis (except for Bos et al., 2018; Wichers et al., 2016).

Discussion

As a promising tool for clinical research and practice, idiographic temporal networks have been receiving increasing interest from researchers and clinicians. However, reasonable concerns about the quality of such networks, such as the replicability of each individual edge and the risk of overfitting for the whole network, have been raised but not sufficiently addressed in the literature (Bringmann, 2021). In this article, we showed how simulation-based power analysis and predictive-accuracy analysis can be used to tackle these concerns. Applying both methods before starting a new study (a priori) can help researchers determine a sufficient number of time points to collect from an individual, which helps ensure satisfactory edge replicability and network predictive accuracy. Both methods can also be applied retrospectively to quantify the risk of both quality problems for a previously estimated network.

Our key findings are as follows. First, for both power analysis and predictive-accuracy analysis, we showed that the number of time points in a sample (

The simulation-based methods described in this article can efficiently provide sample-size suggestions in an “idealistic” setting with no assumption violation of the VAR(1) model. Thus, feasibility and practical considerations should still have a role in the sample-size-planning procedures for acquiring a valid suggestion. When power analysis and predictive-accuracy analysis are used, the suggested number of time points refers only to the number of observations that are accompanied by valid lagged values and are not missing themselves. However, the more practical question researchers and clinicians need to answer with sample-size planning is how many measurement prompts they need to send the participants. Thus, additional steps need to be taken beyond the simulation-based analysis to acquire a more practical sample-size suggestion. Imagine an example in which the power analysis and predictive-accuracy analysis suggest that a researcher should collect 100 time points so that the network to be estimated can reach the aforementioned thresholds. If the researcher decides to send five measurement prompts to the participant each day, one out of five prompts will not have valid lagged values because of overnight lags. Moreover, if the researcher expects a compliance rate of 80% from the participant, then a maximum of 40% of the observations will be either missing themselves or have missing lagged values. Given these considerations, a safe suggestion for the number of time points to collect can be calculated as:

which is much larger than the suggestion made by the idealistic simulation-based analysis. Likewise, when conducting the retrospective analysis for a study, the number of predictable observations is needed but often not reported explicitly (see Table 3; Lafit et al., 2025). Therefore, we urge future ESM researchers to report such information explicitly.
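The arithmetic of this example can be made concrete with a short sketch. It assumes that the two sources of loss combine multiplicatively: a share of 1/5 of the prompts is lost to overnight lags, and up to twice the noncompliance rate is lost to missingness, because each missed prompt can remove itself and the lag of the next observation. This is one plausible reading of the example rather than a formula taken from the article, and the function name is hypothetical.

```python
import math

def prompts_needed(usable_target, prompts_per_day, compliance):
    """Conservative number of prompts to send to end up with `usable_target` time points."""
    overnight_keep = 1 - 1 / prompts_per_day   # prompts that have a same-day lag
    max_missing_loss = 2 * (1 - compliance)    # each missed prompt can cost two observations
    if max_missing_loss >= 1:
        raise ValueError("compliance too low for a worst-case calculation")
    return math.ceil(usable_target / (overnight_keep * (1 - max_missing_loss)))

n_prompts = prompts_needed(usable_target=100, prompts_per_day=5, compliance=0.8)
n_days = math.ceil(n_prompts / 5)
print(n_prompts, n_days)   # prints "209 42"
```

Under these assumptions, 100 usable time points require about 209 prompts, or roughly 42 days at five prompts per day.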

A general impression readers get from such findings might be that the more time points in the sample, the better. This is indeed true in an ideal setting in which no model assumption is violated. However, it is unrealistic to expect that the assumption of stationarity always holds, especially when the sampling period becomes longer. For example, the change of treatment plans can potentially influence the temporal dynamics of a patient’s symptoms (Wichers et al., 2016). Specifically, a more effective treatment will ideally result in a lower mean level of the symptoms and looser connections among different symptoms (Cramer et al., 2016; van Borkulo et al., 2015). On the other hand, a heightened mean level of symptoms and stronger connections among symptoms are often considered as a sign of an impending episode of mental-health crisis in the literature of early warning signals (e.g., Helmich et al., 2021; Schreuder et al., 2020; Smit et al., 2022). Thus, the number of time points in a sample and the risk of assumption violation for the VAR(1) model can become two competing factors of network quality. This delicate yet crucial balance between these two factors requires more careful consideration from researchers.

A starting point for moving forward might be to rethink the general guideline of “the more nodes, the better” and not to embrace a complexity that the statistical model is not yet ready for. Because our findings suggested that networks estimated with few nodes usually require a rather small number of time points to be of good quality (consistent with findings of Mansueto et al., 2023), we see two directions for improving the practice of temporal network analysis. First, more research should be done to assess the quality of regularized networks because using such regularization is an effective way to create sparser and, thus, smaller networks. Such research can include (a) power analysis of regularized networks with the help of proper inference methods (Dezeure et al., 2015; Waldorp & Haslbeck, 2024) and (b) extending the predictive-accuracy analysis to regularized networks. Second, researchers could consider shifting the purpose of using such networks from exploratory analysis to confirmatory analysis and from delineating a complex system with dozens of symptoms to describing the simple dynamics of a few symptoms. If clearer hypotheses and theories can be generated regarding the temporal dynamics in dyads or triads of symptoms (Bringmann, 2024; Bringmann et al., 2024; Eronen & Bringmann, 2021), the networks can be powerful tools to confirm or to falsify such hypotheses because they will require only a reasonable number of time points to be of good quality. With a shorter sampling period, patients will also be less burdened by the data collection, which can, in turn, ease the feasibility concerns many researchers have about long sampling durations in the first place. Besides “downsizing” the network, we also encourage researchers to think beyond the VAR(1) model and question whether the specification of this model reflects the dynamics of the symptoms accurately. In line with this, we consider it important to develop new statistical models that are more congruent with the network theory (e.g., incorporating context into analysis; Bringmann et al., 2024) and to evaluate these models by performing formal model comparisons with the VAR(1) model (Borsboom, 2022).

Reflecting on the data simulations performed in the current study, we acknowledge that these procedures tend to have rather strong assumptions. First, we assume that the parameter we specify in the a priori analysis and the parameter estimates we use in the retrospective analysis are accurate. We recommend that researchers conduct both a priori and retrospective analyses based on network estimates acquired in existing studies. Moreover, we encourage researchers to test the robustness of such network estimates via resampling approaches, such as bootstrapping, when they have access to the raw time-series data (Bringmann et al., 2013; Epskamp, Borsboom, & Fried, 2018). Second, the simulated innovation terms in the VAR(1) model are assumed to follow a multivariate normal distribution. For one of the studies we investigated in this article (Bak et al., 2016), we tested whether the empirical innovation terms followed such a multivariate normal distribution by calculating the multivariate kurtosis and skewness statistics (Cain et al., 2017). Our results suggested that even for the stable state, the state with the largest number of time points (

With this article, we hope to emphasize the importance of careful sample-size planning when using idiographic temporal networks. For such single-case network studies, sample-size justification is as important as, if not more important than, it is for cross-sectional studies, considering the tendency of complex networks to overfit. An insufficient sample size can result not only in low statistical power (certain important lagged effects could be deemed statistically nonsignificant) but also in overfitting (the network estimates could be largely driven by noise and thus differ from the true temporal dynamics). Such inaccurate networks can be highly misleading for patients if used for personalized feedback because personalized feedback based on time-series analysis has been shown to have a considerable impact on the receiver’s self-perception regardless of whether the feedback is accurate (Leertouwer et al., 2022). Therefore, we argue that sample-size justification from the perspective of network quality should become standard practice in studies using temporal networks. For this purpose, researchers should ideally conduct the a priori analysis during the planning phase of a study. If this is not possible, researchers should conduct the retrospective power and predictive-accuracy analyses to show whether an estimated network can reach the quality thresholds. By defining a bare minimum of quality and checking whether it can be met, such methods will advance the research practice of idiographic networks.

Supplemental Material

Supplemental material, sj-docx-1-amp-10.1177_25152459251372116 for Meeting the Bare Minimum: Quality Assessment of Idiographic Temporal Networks Using Power Analysis and Predictive-Accuracy Analysis by Yong Zhang, Jordan Revol, Ginette Lafit, Anja F. Ernst, Josip Razum, Eva Ceulemans and Laura F. Bringmann in Advances in Methods and Practices in Psychological Science

Footnotes

Acknowledgements

We thank Peter de Jonge for providing valuable input to this study. We thank Marjan Drukker for kindly providing us with the data necessary for the analysis and Sacha Epskamp for making the data and analysis code openly available. We thank Fridtjof Petersen for helping us design the flow diagrams used in this article. We are grateful to the editors, reviewers, Ena Vojvodic, Theodor Nowicki, and Ilse P. Peringa for providing helpful comments on the article.

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra
