Abstract

Despite its potential to accelerate academic progress in psychological science, public data sharing remains relatively uncommon. To identify the perceived barriers to public data sharing and possible ways of lowering them, we conducted a survey that elicited responses from 600 authors of articles in psychology. The results confirmed that data are shared only infrequently. Perceived barriers included respondents’ belief that sharing is not a common practice in their fields, their preference to share data only upon request, their perception that sharing requires extra work, and their lack of training in sharing data. Our survey suggests that strong encouragement from institutions, journals, and funders will be particularly effective in overcoming these barriers, especially in combination with educational materials that demonstrate where and how data can be shared effectively.


In every empirical discipline, scientific progress rests upon the availability of research data, and the public sharing of such data holds great potential for accelerating that progress (Ceci & Walker, 1983; Fecher, Friesike, & Hebing, 2015). Nevertheless, in many disciplines, including psychology, data sharing is still relatively rare (e.g., Ceci, 1988; Reidpath & Allotey, 2001; Savage & Vickers, 2009; Vanpaemel, Vermorgen, Deriemaecker, & Storms, 2015; Wicherts, Borsboom, Kats, & Molenaar, 2006; Wolins, 1962). In this article, we report the outcome of a survey designed to reveal why researchers are reluctant to share data and what can be done to make data sharing more attractive. We define public data sharing as making primary research data available in an online repository upon publication of the article associated with the data.

To put our survey into context, we first outline recent initiatives that journals, researchers, and funders have developed to encourage public sharing of primary research data. These initiatives show that public data sharing is increasingly recognized as an important component of the scientific process. Nevertheless, it remains unclear how successful these initiatives will be, how their effectiveness can be increased, and how well they resonate with researchers.

Recent Initiatives to Encourage Data Sharing

Initiatives from journals

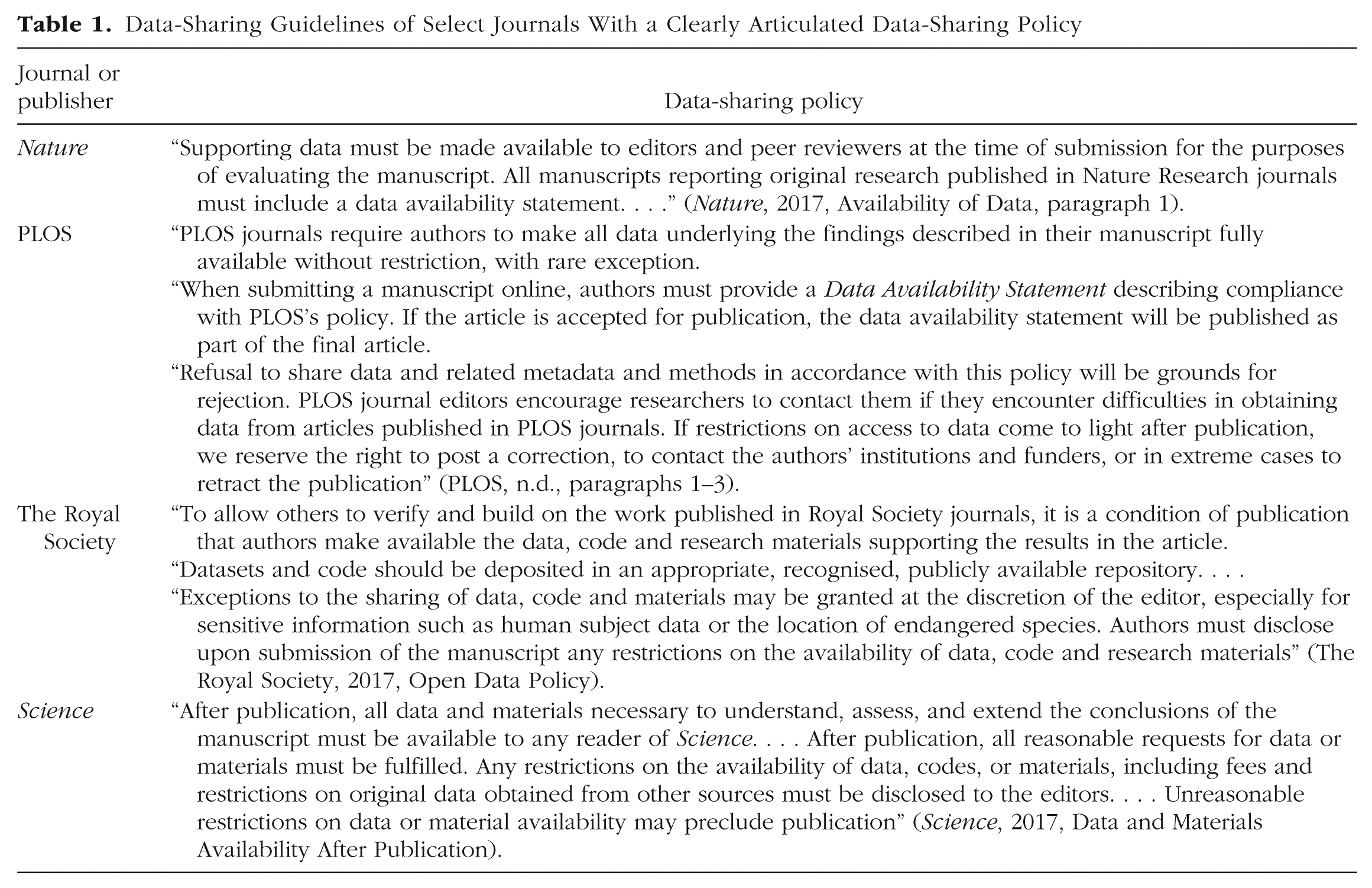

Academic journals may promote data sharing either by mandating it (i.e., stipulating sharing as a condition for publication) or by encouraging it (e.g., referring authors to appropriate sharing tools; Alsheikh-Ali, Qureshi, Al-Mallah, & Ioannidis, 2011).

A prominent example of a stringent sharing policy is the one applied across the journals of The Royal Society: To publish in a Royal Society journal, authors must make their data, code, and research materials publicly available in appropriate and recognized repositories (The Royal Society, 2017). PLOS applies a similar data-sharing policy to all of its journals (PLOS, n.d.).

Stringent journal policies appear to be effective. Unsurprisingly, mandatory archiving policies substantially increase the probability that the data can actually be found in an online archive (Vines et al., 2013). In addition, authors are more likely to share their data if their article was published in a high-impact journal (Piwowar & Chapman, 2010), possibly because high-impact journals often have more stringent sharing policies than other journals do (Sturges et al., 2015).

Several initiatives have emerged to further encourage data sharing across a wide range of scientific journals. A prominent example is the badges project. Initiated by the Center for Open Science (COS), the badges project enables journals to incentivize open-science practices by explicitly acknowledging articles that follow them (Open Science Framework, 2016). Badges can be awarded for different kinds of open practices (Open Science Framework, 2016). For example, the Open Data badge is earned when researchers make publicly available the data necessary to reproduce the results reported in the publication. Kidwell et al. (2016) found that after the premier journal

COS also developed another initiative to promote an open research culture: the Transparency and Openness Promotion guidelines (TOP; Nosek et al., 2015). The TOP guidelines provide concrete journal policies about eight transparency standards, one of which is data sharing. Not every standard applies equally to all journals and disciplines, and therefore the TOP guidelines come in three levels of strictness. The first level is the most lenient, and the third is the most strict. The first level for data sharing makes it optional for authors and cost free for journals (i.e., the article indicates “whether data are available and, if so, where to access them”). The second level makes data sharing mandatory for authors but virtually cost free for journals (i.e., “Data must be posted to a trusted repository,” and “Exceptions must be identified at article submission”). The third level of implementation requires that authors share their data and may entail costs in time and effort for journals (i.e., “Data must be posted to a trusted repository, and reported analyses will be reproduced independently before publication”; all quotes taken from Nosek et al., 2015, p. 1424).

The badges project and TOP guidelines are being adopted by an increasing number of journals (Center for Open Science, n.d.-b). Moreover, a growing number of journals have outlined their own policies regarding data sharing. An overview of some of these policies is provided in Table 1.

Table 1. Data-Sharing Guidelines of Select Journals With a Clearly Articulated Data-Sharing Policy

Initiatives from researchers

Data sharing has also been promoted through collaborative efforts of individual researchers. A prominent example is the Peer Reviewers’ Openness initiative (Morey et al., 2016). Researchers who sign on to this initiative refuse to offer comprehensive reviews of manuscripts unless five requirements are met. One of these requirements is that the data are publicly available in a trusted repository. Authors who do not wish to comply with the requirements should provide a justification.

The community-led Registered Reports (RR) initiative also includes an emphasis on data sharing (COS, n.d.-a). In contrast to conventional articles, Registered Reports are peer reviewed prior to data collection (COS, n.d.-a). Manuscripts that survive prestudy review based purely on theoretical and methodological quality are provisionally accepted for publication regardless of the results that are subsequently obtained, provided that various prespecified quality checks are met. Although public data archiving is not a necessary feature of the RR format, many journals that have adopted RRs incorporate it into their policies to facilitate sharing and further enhance transparency. To date, of 88 journals that have adopted RRs, 32 require data deposition (Registered Reports, n.d.); these journals include, among others,

Another community-led initiative has resulted in the FAIR data principles, a set of guidelines that require data to be findable, accessible, interoperable, and reusable.

Initiatives from funders

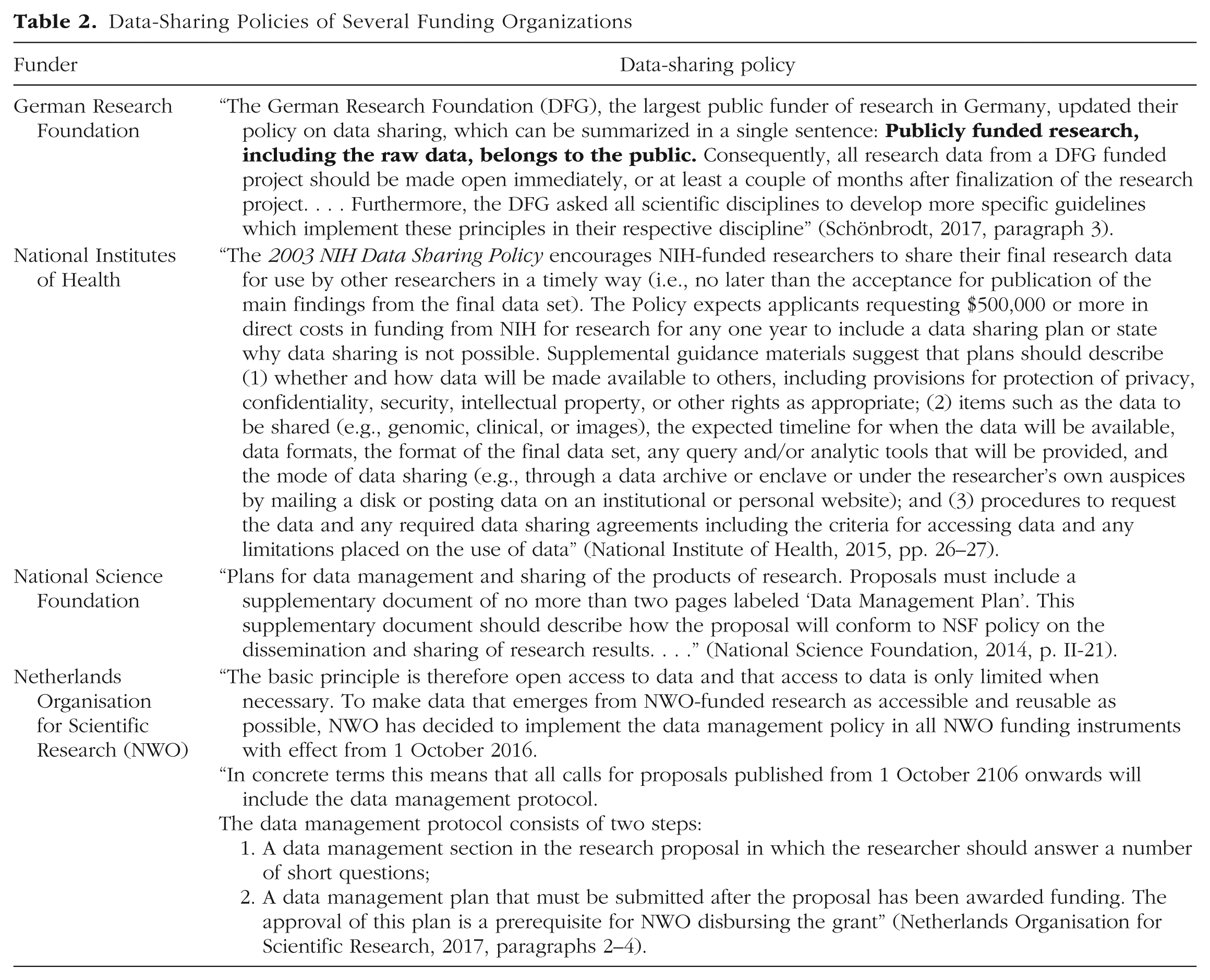

Research funders worldwide actively promote data sharing. Such funders include the National Science Foundation (2014), the National Institutes of Health (2015), and the Netherlands Organisation for Scientific Research (2017), all three of which require that applicants provide a detailed plan for data sharing (see Table 2).

Table 2. Data-Sharing Policies of Several Funding Organizations

In addition, the German Research Foundation (DFG), the largest public funder in Germany, recently adopted a policy on data sharing that can be summarized as follows: “Publicly funded research, including the raw data, belongs to the public” (Schönbrodt, 2017, paragraph; see Table 2 for additional details). To apply this policy effectively across scientific disciplines, the DFG asked the various scientific communities to develop discipline-specific implementation guidelines (Deutsche Forschungsgemeinschaft, 2015). In response, the German Psychological Society wrote a guideline that distinguishes two levels of data sharing: “Type 1” data sharing refers to sharing the data needed to reproduce the results reported in the article, which means that only a subset of the collected data may be publicly released, and “Type 2” data sharing refers to sharing the entire data set.

Finally, several funders in the United Kingdom, such as Wellcome and Research Councils UK, are signatories to the Concordat on Open Research Data 2016 (Johnson, 2016). This concordat was developed by the United Kingdom research community and aims to

help to ensure research data gathered and generated by members of the [United Kingdom] research community is, wherever possible, made openly available for use by others in a manner consistent with relevant legal, ethical and regulatory frameworks and disciplinary norms, and with due regard to the costs involved. (Johnson, 2016, p. 4)

Summary

The recent initiatives from journals, researchers, and funders highlight the change in awareness concerning the importance of data sharing. Nevertheless, in order to accomplish corresponding behavioral change, it will be helpful to know why psychologists are reluctant to share their data. As Morey et al. (2016) noted, “knowing why authors choose not to be open would be tremendously useful to the scientific community at large” (p. 4). If the perceived barriers to sharing are not targeted by current initiatives, alternative initiatives can be designed. Similarly, knowing what researchers consider to be important preconditions for data sharing will be beneficial for developing programs that effectively increase sharing. We briefly review the small literature on barriers to and conditions that facilitate data sharing before turning to our own study.

Barriers to and Preconditions for Data Sharing

Two empirical studies have identified factors that deter researchers from sharing their data. Tenopir et al. (2011) found that the most common barriers reported by researchers were insufficient time and a lack of funding. Other reasons for not sharing included not having the rights to make the data public, not having a place to put the data, a lack of standards, not being required by the sponsor to share the data, and the belief that the data should not be electronically available to other researchers. Using a different questionnaire, Schmidt, Gemeinholzer, and Treloar (2016) identified several other barriers to data sharing: the desire to publish results before releasing the data, legal constraints, and concerns about loss of credit or recognition.

Other studies with a smaller scope have also contributed to the understanding of barriers to data sharing. For example, Wicherts, Bakker, and Molenaar (2011) found that willingness to share research data was related to the internal consistency of statistical results and strength of evidence. In a qualitative study, Cheah et al. (2015) identified three main factors that contributed to researchers’ reluctance to share their primary research data: concerns relating to the anonymity of participants, worries that their contribution will not be acknowledged and fear of not publishing findings before secondary researchers do, and concerns related to the demands on resources that are required to share data effectively (e.g., time needed to prepare a data set for sharing and adequately curating shared data). Additional barriers may include ethical and legal concerns. These latter barriers are not our focus here but are discussed elsewhere in this special section (Meyer, 2018, this issue).

Studies of barriers to data sharing are complemented by studies of the conditions under which researchers would be more willing to share their data. Tenopir et al. (2011) found that researchers would be more likely to share data if they could place conditions on access to their files and if secondary users of their data would properly cite them. Using the same data set as Tenopir et al. (2011), Sayogo and Pardo (2013) concluded that having data-management skills and receiving institutional support (i.e., funding, training, technical support, data storage, and data management) positively influence the likelihood that researchers share their data. They also concluded that another important determinant is acknowledgment of the primary researcher, in the form of coauthorship on publications, formal acknowledgment of the source of the data, and opportunity to collaborate.

In a qualitative study, Wallis, Rolando, and Borgman (2013) found that the five most important conditions for a researcher being willing to share data were retaining first rights to publish results, having assurances of being properly acknowledged as the data source, being familiar with the data recipient, receiving funding from an agency that expects data to be shared, and being able to share the data without expending a large amount of effort. Cheah et al. (2015) found that practices encouraging data sharing fell into three categories: ensuring that shared data sets are of high quality and are both well managed and curated, maintaining high standards of consent that ease worries regarding adequate protection of the interests of research participants and communities, and maintaining high standards of data governance.

The current study

Despite promising initiatives and technological developments, public sharing of research data remains uncommon. A handful of studies have investigated barriers to data sharing and remedial measures, but none has specifically focused on data sharing in psychology and the distinctive issues that may arise with data about human participants. In the current study, we asked why researchers in psychology are reluctant to share their research data and what measures provide effective incentives to share. To answer these questions, we used data from a questionnaire distributed to a sample of 14,184 unique e-mail addresses belonging to researchers in the field of psychology.

Disclosures

Preregistration

Both the preliminary study and the primary study (see the Method section) were preregistered through AsPredicted.org (http://aspredicted.org/ik7wt.pdf and https://aspredicted.org/qq7wj.pdf, respectively).

Data, materials, and online resources

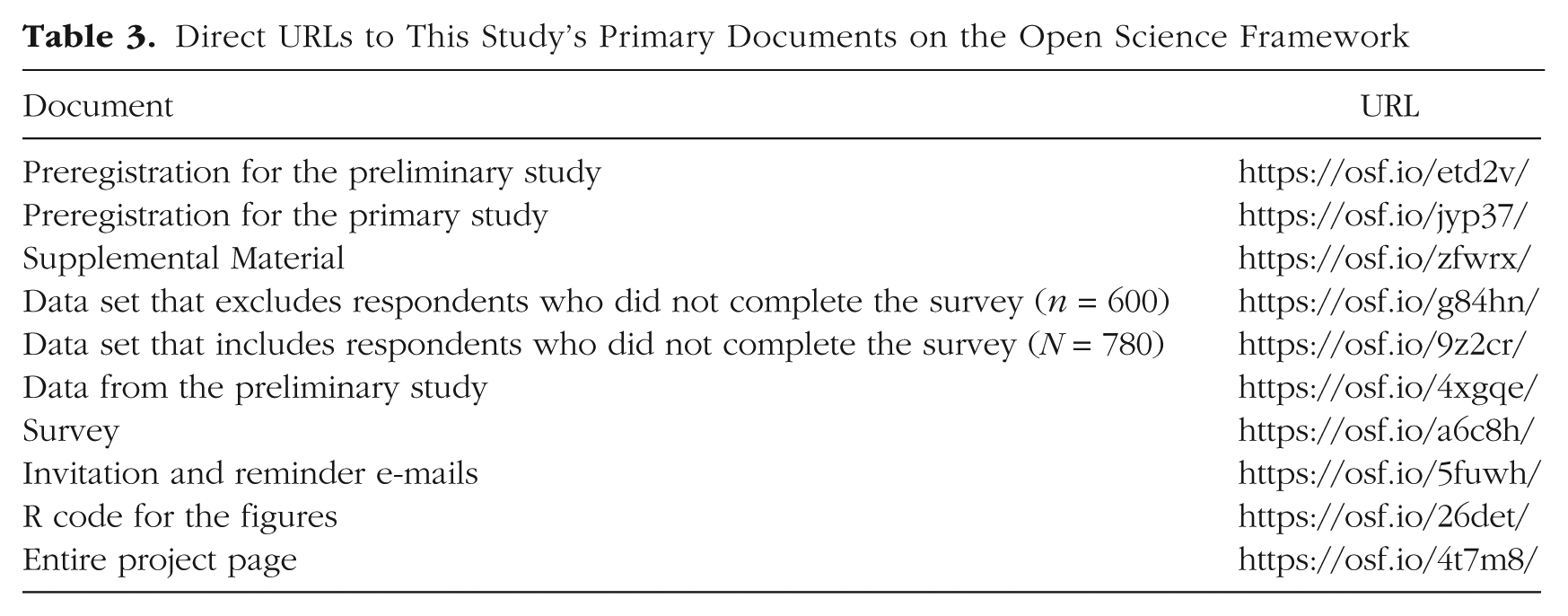

The full data from the preliminary and primary studies, the full survey, the text of the survey invitation and reminder e-mails, and R code for the figures in this article are available on the Open Science Framework (OSF; see Table 3).

Table 3. Direct URLs to This Study’s Primary Documents on the Open Science Framework

Measures

We report how we determined our sample size, all data exclusions, all manipulations, and all measures in the study.

Ethical approval

In this work, we polled the opinions of colleagues without providing any remuneration. Participation in the poll was entirely voluntary, and we indicated that the data would be analyzed anonymously. In accordance with Dutch ethics-review procedures, we did not seek approval from an institutional review board for this nonmedical study. This study was conducted in accordance with the Declaration of Helsinki.

Method

Sample

To collect e-mail addresses of researchers active in psychology, we retrieved metadata for a set of articles from Web of Science, a database of research articles in a range of disciplines. We searched for English-language articles that were published from 2005 through 2015 and had been indexed with psychology as both the topic and the specific research area. This search resulted in a list of 21,116 research articles (collected on July 6, 2016). We then used an automated Python script to extract the metadata of these articles, including the authors’ e-mail addresses, which yielded a total of 16,284 unique e-mail addresses.
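The authors’ script is not reproduced here, so the following is only a hypothetical sketch of the deduplication step. It assumes the records were exported in Web of Science’s tagged plain-text format, in which each field starts with a two-letter tag, continuation lines are indented, and the `EM` field lists e-mail addresses separated by semicolons. The function name and the case-insensitive comparison of addresses are assumptions made for illustration.

```python
def extract_unique_emails(export_text):
    """Return unique e-mail addresses in order of first appearance.

    Assumes a tagged plain-text export: each field starts with a two-letter
    tag followed by a space (the 'EM' tag holds e-mail addresses), and
    continuation lines start with whitespace.
    """
    seen = set()
    unique = []
    current_tag = None
    for line in export_text.splitlines():
        if not line.strip():
            continue  # skip blank lines between records
        is_new_field = not line[0].isspace() and (len(line) == 2 or line[2:3] == " ")
        if is_new_field:
            current_tag, value = line[:2], line[3:]
        else:
            value = line.strip()  # continuation of the previous field
        if current_tag != "EM":
            continue
        for address in value.split(";"):
            # Assumption: addresses are compared case-insensitively.
            address = address.strip().lower()
            if address and address not in seen:
                seen.add(address)
                unique.append(address)
    return unique
```

Deduplicating across all records (rather than within each article) is what turns a list of article-level metadata into a list of unique potential respondents, so an author of several indexed articles is invited only once.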

Note that this method of obtaining a sample of participants is subject to self-selection: We could not force researchers to complete the survey, and researchers who already had a strong opinion about open-science practices may have been more likely than others to participate. Therefore, our sample may not adequately represent the population of researchers in psychology. We revisit this inevitable limitation in the Discussion section.

The 16,284 e-mail addresses were used for three phases: a pilot study that assessed the quality of the questionnaire (6 responses from researchers at 100 e-mail addresses), a preliminary study used to formulate a proper analysis plan (132 responses from researchers at 2,000 e-mail addresses), and the primary study (780 responses from researchers at the remaining 14,184 e-mail addresses).
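The three phases use disjoint sets of addresses whose sizes add up to the full list (100 + 2,000 + 14,184 = 16,284). The article does not state how addresses were assigned to phases, so the sketch below assumes a random partition; the function name and the seed are hypothetical.

```python
import random

def split_into_phases(addresses, pilot_n=100, preliminary_n=2000, seed=None):
    """Randomly assign addresses to pilot, preliminary, and primary phases.

    Every address lands in exactly one phase; the primary phase receives
    all addresses left over after the pilot and preliminary draws.
    """
    if pilot_n + preliminary_n > len(addresses):
        raise ValueError("not enough addresses for the pilot and preliminary phases")
    shuffled = list(addresses)
    random.Random(seed).shuffle(shuffled)
    pilot = shuffled[:pilot_n]
    preliminary = shuffled[pilot_n:pilot_n + preliminary_n]
    primary = shuffled[pilot_n + preliminary_n:]
    return pilot, preliminary, primary

# Hypothetical stand-in for the 16,284 collected addresses.
addresses = [f"author{i}@example.org" for i in range(16284)]
pilot, preliminary, primary = split_into_phases(addresses, seed=1)
print(len(pilot), len(preliminary), len(primary))  # 100 2000 14184
```

Keeping the phases disjoint ensures that no researcher is invited twice and that the primary study is not contaminated by people who already gave feedback on earlier versions of the questionnaire.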

Materials

Our questionnaire items were based on earlier surveys (Cheah et al., 2015; Sayogo & Pardo, 2013; Schmidt et al., 2016; Tenopir et al., 2011; Wallis et al., 2013) and a discussion at a meeting of experts on reproducibility, held in Oxford in 2015. The adequacy of the items, including the response options, was tested in the pilot study and the preliminary study, in which researchers were invited to provide feedback on the items.

The survey included questions in four categories: generic information, perceived barriers to data sharing, preconditions for data sharing, and desirability and profitability of data sharing (see https://osf.io/nts79/ for the full version of the questionnaire).

Generic information

Seven items asked respondents to report their age, gender, and current academic position; the number of years they had been active in academia; the percentage of their published articles for which they had shared their data; how likely they were to share their research data for their next publication; and how likely they were to share their research data if the current academic system remained unchanged. The questionnaire used for the preliminary study (and the initial phase of the primary study) also asked respondents to report the university at which they were employed, but in order to guarantee anonymity, we decided to remove this question from the questionnaire.

Desirability and profitability of data sharing

Five items assessed respondents’ general opinion about data sharing. Four of these items related to the desirability and profitability of data sharing in respondents’ field and for their current research project (e.g., “To what extent do you think your current research project would profit from data sharing?”). The desirability items were answered using a 5-point Likert scale from 1 (very undesirable) to 5 (very desirable), and the profitability items using a 5-point scale from 1 (none at all) to 5 (a great deal).

Barriers to data sharing

Twenty-nine items addressed barriers to data sharing. One item asked respondents about the extent to which they were allowed to share data. If respondents indicated that they were not allowed to share, this question was followed up with an item that asked what legal constraints they experienced. Fifteen of the remaining 27 questions were not expected to be subject to dishonest responding. They asked respondents to indicate their agreement with statements relating to 15 non-fear-related barriers to sharing their own data (e.g., “It is unfair for other researchers to profit from my hard work; they should collect their own data” and “I am afraid that other researchers will perform alternative analyses on my data and argue that my conclusions are invalid”). These items were answered on a 7-point Likert scale from 1 (strongly disagree) to 7 (strongly agree).

We expected that items regarding six fear-related barriers were particularly likely to be subject to dishonest responding. We addressed this problem by creating two items for each of these barriers: In one formulation, the respondent evaluated the barrier from his or her own perspective, and in the second formulation, the respondent evaluated the barrier from other researchers’ perspective (e.g., John, Loewenstein, & Prelec, 2012; see also Anderson, Martinson, & De Vries, 2007). For example, we assessed whether loss of control over intellectual property was a perceived barrier using the following two statements: “I feel that by sharing my data I lose control over my intellectual property” and “I think that other researchers are afraid that by sharing their data, they lose control over their intellectual property.” These items were answered on a 7-point Likert scale from 1 (strongly disagree) to 7 (strongly agree).

Preconditions for data sharing

Twenty-two items addressed the conditions under which respondents would be willing to share their primary research data. Each item started with “How likely are you to share your research data if . . .” and was completed with a different possible precondition, such as “minimal time is required” and “you had the first rights to publish the results from the data.” Respondents answered these items using a 7-point Likert scale from 1 (very unlikely) to 7 (very likely).

Procedure

For the primary study, the questionnaire was sent to 14,184 unique e-mail addresses using the Qualtrics Mailer service. If no response was recorded within 2 weeks, we sent a single reminder.

The questionnaire contained two instructions. The first instruction provided the definition of data sharing that respondents were to use when answering the questions: “Data sharing is the activity of making primary research data available in an online repository upon publication of the article associated with the data.” The second instruction concerned legal constraints relating to data sharing. Participants were told that if legal constraints in their field prohibited data sharing, they should disregard this issue when filling out the remainder of the questionnaire.

The median amount of time respondents took to fill out the questionnaire was 8 min 56 s, and the interquartile range was 5 min 21 s.

Analysis plan based on the preliminary data

As mentioned earlier, we collected preliminary data in order to develop a plan for analyzing the survey data appropriately. At this exploratory stage, we initially considered factor analysis and network analysis. However, bar plots of the preliminary data appeared to yield the most valuable information. Consequently, we use bar plots to summarize the data from the primary study.

The preliminary data analysis yielded two results that we hoped to confirm in the analysis of the primary data set by means of an explicit test. Specifically, in the preliminary study, (a) respondents rated levels of fear differently when they evaluated their own fears than when they evaluated other researchers’ fears, and (b) they rated the desirability of sharing differently when they responded about their research fields than when they responded about their current research projects. To evaluate the evidence for these patterns in the primary study, we executed one-tailed Bayesian paired-samples t tests.
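A one-tailed Bayesian paired-samples t test compares the hypothesis that the mean paired difference is positive (H+) with the hypothesis that it is zero (H0). The sketch below is not the authors’ analysis code. It computes the paired t statistic from two rating vectors (for example, ratings of other researchers’ fears minus ratings of one’s own fears) and then approximates the one-sided Bayes factor BF+0. Two assumptions are stated plainly: the sampling distribution of t is approximated by a normal distribution with mean δ√n, which is reasonable only for large samples such as n = 600 (the exact default test uses the noncentral t distribution), and the standardized effect δ under H+ receives a half-Cauchy prior with scale r = √2/2. The function names are hypothetical.

```python
import math
import statistics

def paired_t(x, y):
    """Paired-samples t statistic for the differences x[i] - y[i]."""
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)
    return statistics.fmean(diffs) / se, n

def log_bf_plus0(t, n, r=math.sqrt(2) / 2, steps=20000):
    """Approximate log Bayes factor for H+ (delta > 0) against H0 (delta = 0).

    Large-sample approximation: t given delta is treated as Normal(delta*sqrt(n), 1).
    Under H+, delta has a half-Cauchy(0, r) prior. Simpson's rule integrates
    likelihood ratio times prior over delta; steps must be even.
    """
    root_n = math.sqrt(n)
    upper = (max(t, 0.0) + 12.0) / root_n  # likelihood ratio is negligible beyond this
    h = upper / steps
    log_terms = []
    for i in range(steps + 1):
        delta = i * h
        # log of Normal(t; delta*sqrt(n), 1) / Normal(t; 0, 1)
        log_ratio = t * delta * root_n - n * delta ** 2 / 2
        # log of the half-Cauchy prior density at delta >= 0
        log_prior = math.log(2 / (math.pi * r)) - math.log1p((delta / r) ** 2)
        weight = 1 if i in (0, steps) else (4 if i % 2 else 2)
        log_terms.append(math.log(weight) + log_ratio + log_prior)
    top = max(log_terms)  # log-sum-exp keeps the sum numerically stable
    return math.log(h / 3) + top + math.log(sum(math.exp(v - top) for v in log_terms))
```

Working with the likelihood ratio in log space avoids dividing by a vanishingly small null density when t is large. A value of `math.exp(log_bf_plus0(t, n))` above 1 favors H+ over H0; the direction of the test is fixed by which rating vector is passed first to `paired_t`.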

Results

In the primary study, 2,155 of the 14,184 e-mail invitations bounced (i.e., there was an automatic failure to deliver the e-mail, for instance, because an address was no longer active). Thus, the total number of requests was 12,029. The questionnaire was filled out by 826 researchers, but not every respondent completed the survey. Removing incomplete surveys left a total sample of 600 respondents (i.e., a response rate of 4.99%).
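The response rate above is computed against delivered invitations, not against all invitations sent, and only completed surveys count in the numerator (the 826 − 600 = 226 incomplete responses are excluded). A minimal sketch of that arithmetic, using the counts reported in this paragraph; the function name is hypothetical:

```python
def response_rate(invited, bounced, completed):
    """Return delivered invitations and the completion-based response rate (%)."""
    delivered = invited - bounced
    return delivered, 100 * completed / delivered

delivered, rate = response_rate(invited=14184, bounced=2155, completed=600)
print(delivered, round(rate, 2))  # 12029 4.99
```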

Generic information

Of these 600 respondents, 411 reported that they were male, and 189 reported that they were female. The average age of the respondents was 45.9 years (

The majority of respondents indicated that they had shared the primary data in an online repository for less than 10% of their projects (see Fig. S2 in the Supplemental Material available online). Among researchers younger than 45, 25.23% indicated that they had shared data in more than 10% of their research projects; among researchers 45 and older, 8.87% did so. One possible explanation for this difference is that suitable repositories were less readily available in the past.

Regarding future data sharing, many researchers indicated that they would be somewhat or extremely likely to share the research data of their next publication in an online repository (see Fig. S3 in the Supplemental Material). Among researchers younger than 45, 48.33% indicated that they would be somewhat likely or extremely likely to share the data for their next publication, whereas the corresponding percentage was 37.64% among researchers ages 45 and older.

Additionally, we assessed how likely respondents would be to share their data if the current academic system did not change. Responses were fairly equally distributed across the response options (see Fig. S4 in the Supplemental Material).

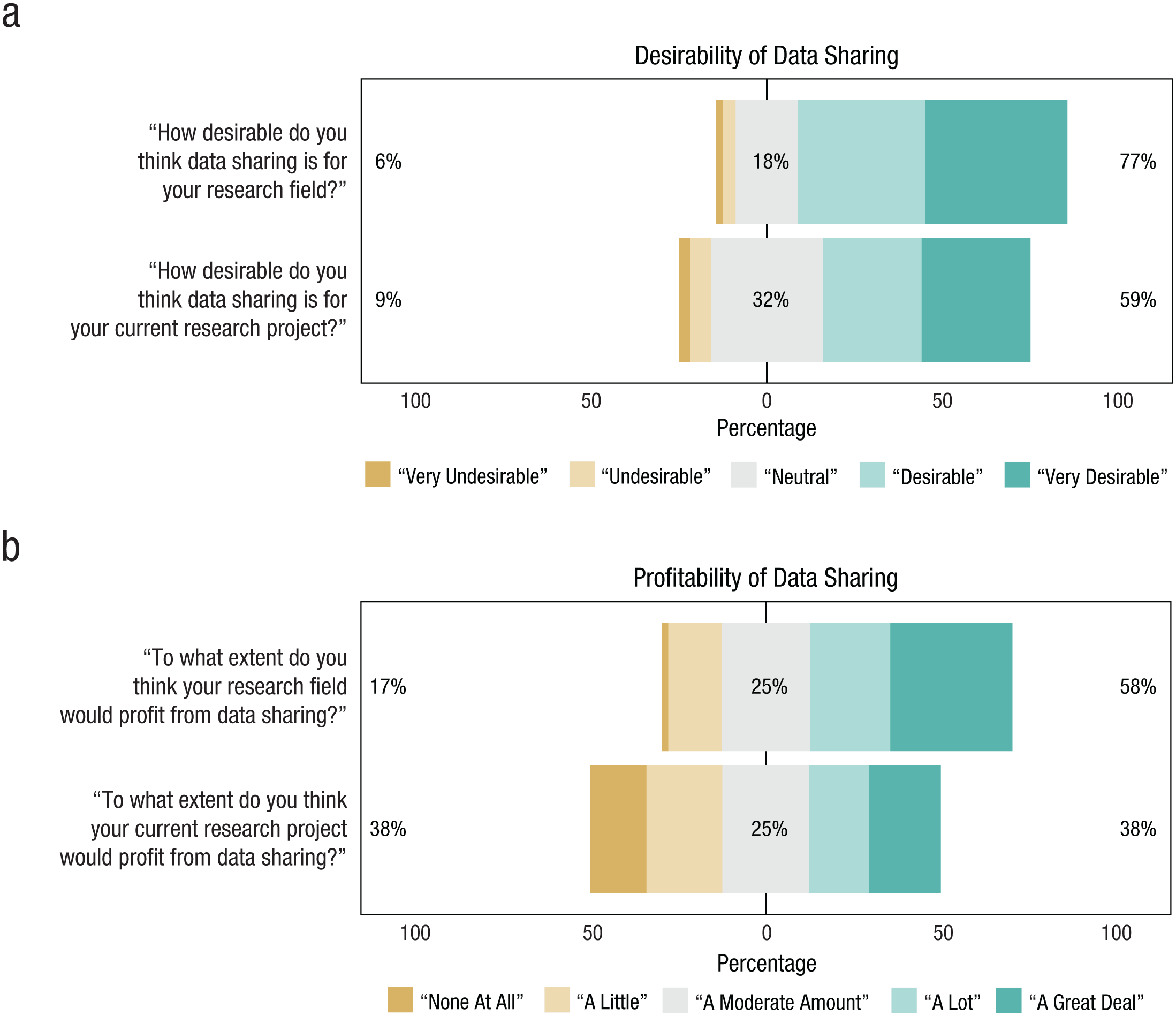

Desirability and profitability of data sharing

The vast majority of respondents considered data sharing desirable and profitable for their own research field; the percentages were slightly lower for the perceived desirability and profitability of data sharing for researchers’ current research projects (Fig. 1).

Fig. 1. Responses to the survey questions on the (a) desirability and (b) profitability of data sharing for the researchers’ own fields and their own current projects. For each statement in (a), the number to the left of the data bar indicates the percentage of researchers who responded with “very undesirable” or “undesirable,” the number in the center of the data bar indicates the percentage who responded with “neutral,” and the number to the right of the data bar indicates the percentage who responded with “desirable” or “very desirable.” For each statement in (b), the number to the left of the data bar indicates the percentage of researchers who responded with “none at all” or “a little,” the number in the center of the data bar indicates the percentage who responded with “a moderate amount,” and the number to the right of the data bar indicates the percentage who responded with “a lot” or “a great deal.” This figure was created using the

To confirm statistically that respondents considered data sharing more desirable and profitable in their research fields than in their current research projects, we conducted one-tailed Bayesian paired-samples t tests.

We also asked respondents which of four benefits (from Borgman, 2012) they considered to be the greatest benefit of data sharing. Two hundred seven respondents (34.5%) chose the ability to reproduce or verify research, 44 (7.33%) chose making results of publicly funded research available to the public, 231 (38.5%) chose enabling other researchers to ask new questions of extant data, and 118 (19.67%) chose advancing the state of research and innovation.

Barriers to data sharing

Of the 600 respondents, 169 (28.17%) indicated that they strongly agreed, somewhat agreed, or neither agreed nor disagreed with the statement that they were not allowed to share their research data (see Fig. S5 in the Supplemental Material). These respondents were presented with a follow-up question about the type of legal constraint they experienced. Eight (4.73%) reported that their institutional review board prohibited them from sharing their data, 47 (27.81%) said they were constrained by a consent form stating that the data would not be shared, 34 (20.12%) indicated that anonymity could not be guaranteed if the data were shared, 27 (15.98%) said they were prohibited from sharing data because of the interests of stakeholders (e.g., companies, institutions), and 14 (8.28%) indicated that their funder, advisor, or boss did not allow them to share data. The remaining 39 respondents (23.08%) experienced other legal constraints.
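The percentages in this paragraph use two different denominators: the full sample of 600 for the share of respondents who received the follow-up question, and the 169 follow-up respondents for the breakdown by type of constraint. The sketch below reproduces that arithmetic from the reported counts; the dictionary keys are shorthand labels introduced here, and the helper function is hypothetical.

```python
def percentages(counts, denominator):
    """Express each count as a percentage of the given denominator, to two decimals."""
    return {label: round(100 * count / denominator, 2) for label, count in counts.items()}

# Counts reported for the follow-up question on legal constraints.
legal_constraints = {
    "institutional review board": 8,
    "consent form": 47,
    "anonymity": 34,
    "stakeholder interests": 27,
    "funder, advisor, or boss": 14,
    "other": 39,
}
followed_up = sum(legal_constraints.values())      # 169 respondents
print(round(100 * followed_up / 600, 2))             # 28.17 (share of all 600)
print(percentages(legal_constraints, followed_up))   # shares of the 169
```

Because the six counts sum to 169, the breakdown percentages add up to 100% of the follow-up group rather than of the full sample.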

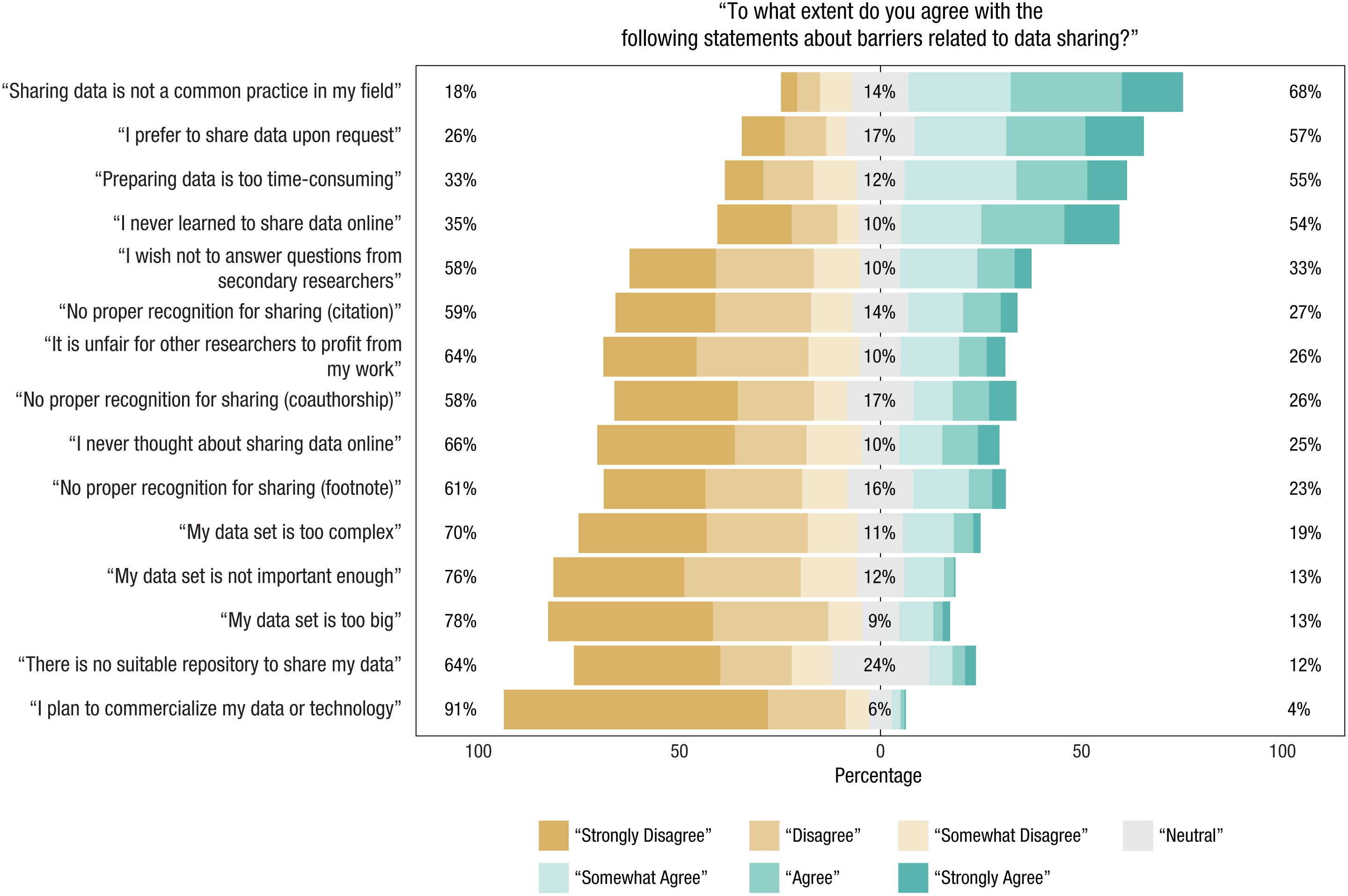

As described in the Method section, the survey included 15 questions on specific non-fear-related barriers and 12 questions on specific fear-related barriers (i.e., 6 questions that respondents evaluated from their own perspective and 6 questions that they evaluated from other researchers’ perspective). Figure 2 shows the ordered results for respondents’ agreement with the statements referring to non-fear-related barriers that might have been keeping them from sharing their data. Of all 15 barriers, the 4 that researchers considered most relevant were the following: (a) Online sharing of research data was not a common practice in their fields, (b) they preferred to share their data sets upon request (which suggests that they wanted to control what they distributed and to whom), (c) they considered preparing data for sharing too time-consuming, and (d) they had never learned how to share their data online. These findings closely match those of our preliminary study.

Fig. 2. Responses to the survey questions asking respondents to indicate the extent to which the 15 non-fear-related barriers kept them from sharing their research data. For each statement, the number to the left of the data bar indicates the percentage of researchers who responded with “strongly disagree,” “disagree,” or “somewhat disagree”; the number in the center of the data bar indicates the percentage of researchers who responded with “neutral”; and the number to the right of the data bar indicates the percentage who responded with “somewhat agree,” “agree,” or “strongly agree.” The statements are ordered according to the percentage of agreement (greatest agreement at the top). This figure was created using the
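The figures summarize each 7-point item by collapsing the response categories into three bins (1 to 3 as disagreement, 4 as neutral, 5 to 7 as agreement) and then ordering the statements by percentage of agreement. The authors produced their figures in R (the code is on the OSF); the following is only a Python sketch of the binning and ordering described in the caption, with hypothetical statements and ratings. Rounding to whole percentages is an assumption, so the three numbers for an item need not sum exactly to 100.

```python
from collections import Counter

def summarize_item(responses):
    """Collapse 7-point ratings into rounded (% disagree, % neutral, % agree).

    Ratings 1-3 count as disagreement, 4 as neutral, and 5-7 as agreement.
    """
    counts = Counter(responses)
    n = len(responses)
    disagree = counts[1] + counts[2] + counts[3]
    neutral = counts[4]
    agree = counts[5] + counts[6] + counts[7]
    return tuple(round(100 * k / n) for k in (disagree, neutral, agree))

def order_by_agreement(items):
    """Summarize every item and sort by % agreement, highest first."""
    summaries = {label: summarize_item(ratings) for label, ratings in items.items()}
    return sorted(summaries.items(), key=lambda pair: pair[1][2], reverse=True)

# Hypothetical statements and ratings, for illustration only.
items = {
    "statement B": [1, 1, 2, 3, 4, 5, 5, 6, 2, 4],
    "statement A": [1, 2, 4, 5, 6, 7, 7, 4, 6, 5],
}
for label, (left, center, right) in order_by_agreement(items):
    print(f"{label}: {left}% | {center}% | {right}%")
```

The same summary applies to the 5-point desirability items and to the likelihood items, with the bin boundaries adjusted to the scale.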

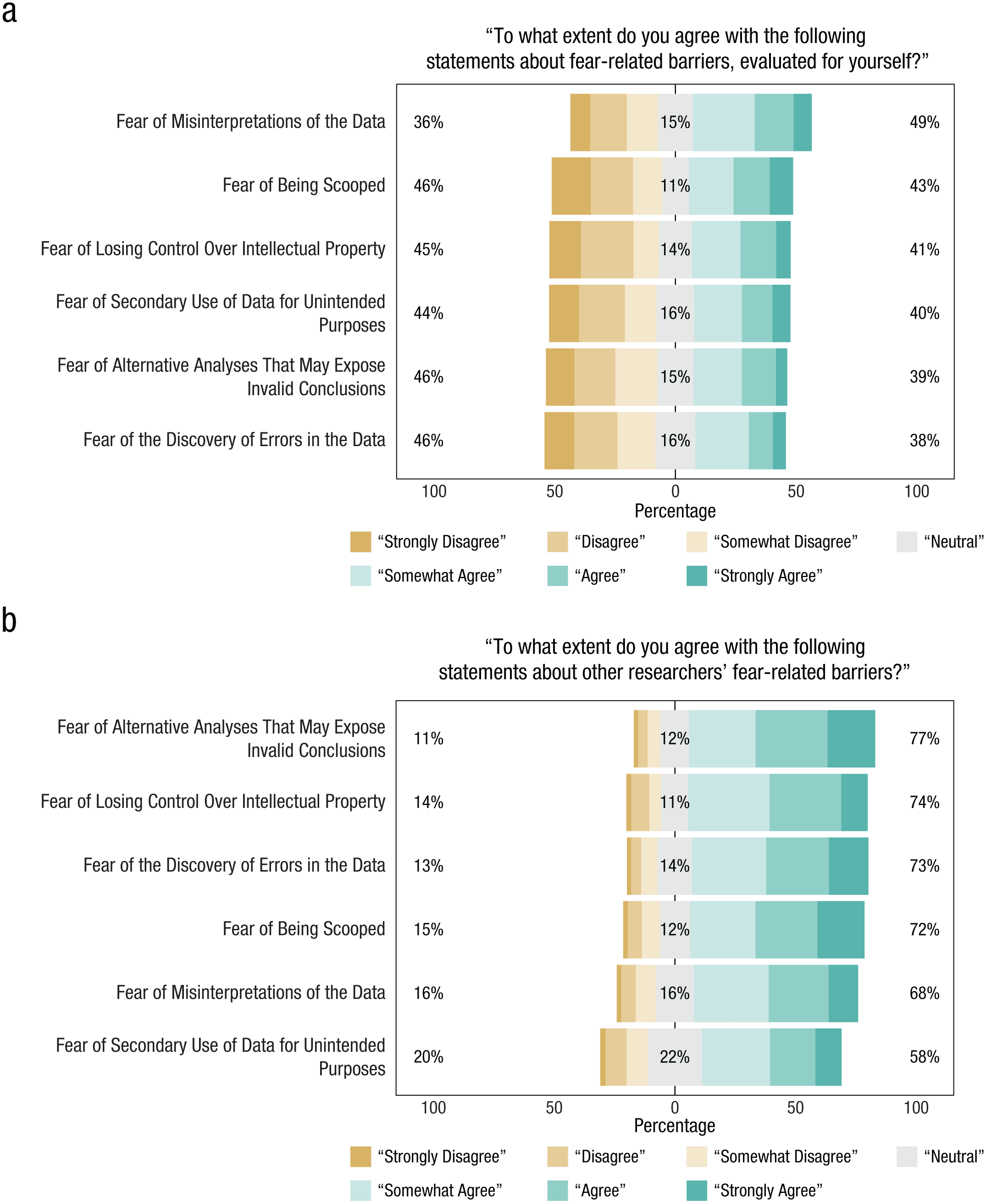

Respondents also indicated the extent of their agreement with statements referring to six possible negative outcomes of sharing data (i.e., the fear-related barriers): (a) primary researchers being scooped (i.e., other researchers publishing results from the data before the primary researchers can), (b) rejection of conclusions as a result of alternative analyses of the shared data, (c) loss of control over intellectual property, (d) detection of errors in the data, (e) misinterpretation of the data by secondary users, and (f) use of shared data for unintended purposes. We asked researchers to evaluate both their own fear that these negative outcomes might occur (Fig. 3a) and their perception of other researchers’ fear of these outcomes (Fig. 3b) because differences between these two sets of evaluations might expose the presence of socially desirable responding.

Fig. 3. Responses to the survey questions asking researchers to indicate the extent to which the six fear-related barriers kept (a) themselves or (b) other researchers from sharing their data. For each statement, the number to the left of the data bar indicates the percentage of researchers who responded with “strongly disagree,” “disagree,” or “somewhat disagree”; the number in the center of the data bar indicates the percentage who responded with “neutral”; and the number to the right of the data bar indicates the percentage who responded with “somewhat agree,” “agree,” or “strongly agree.” In each panel, the statements are ordered according to the percentage of agreement (greatest agreement at the top). This figure was created using the

The results showed that respondents often agreed that the six fears keep other researchers from sharing their data (Fig. 3b). Fear that alternative analyses might expose invalid conclusions and fear of loss of control were reported as the most prominent barriers for other researchers. When respondents evaluated these fear-related barriers for themselves (Fig. 3a), they indicated that the most relevant barriers were fear of misinterpretation of the data and fear of being scooped. Thus, there was a remarkable difference between how respondents evaluated these barriers for themselves and how they evaluated these barriers for other researchers.

Another noteworthy difference was that respondents indicated that other researchers were more strongly affected by these barriers to data sharing than they themselves were. To test this difference statistically, we conducted a one-tailed Bayesian paired-samples t test.

Preconditions for data sharing

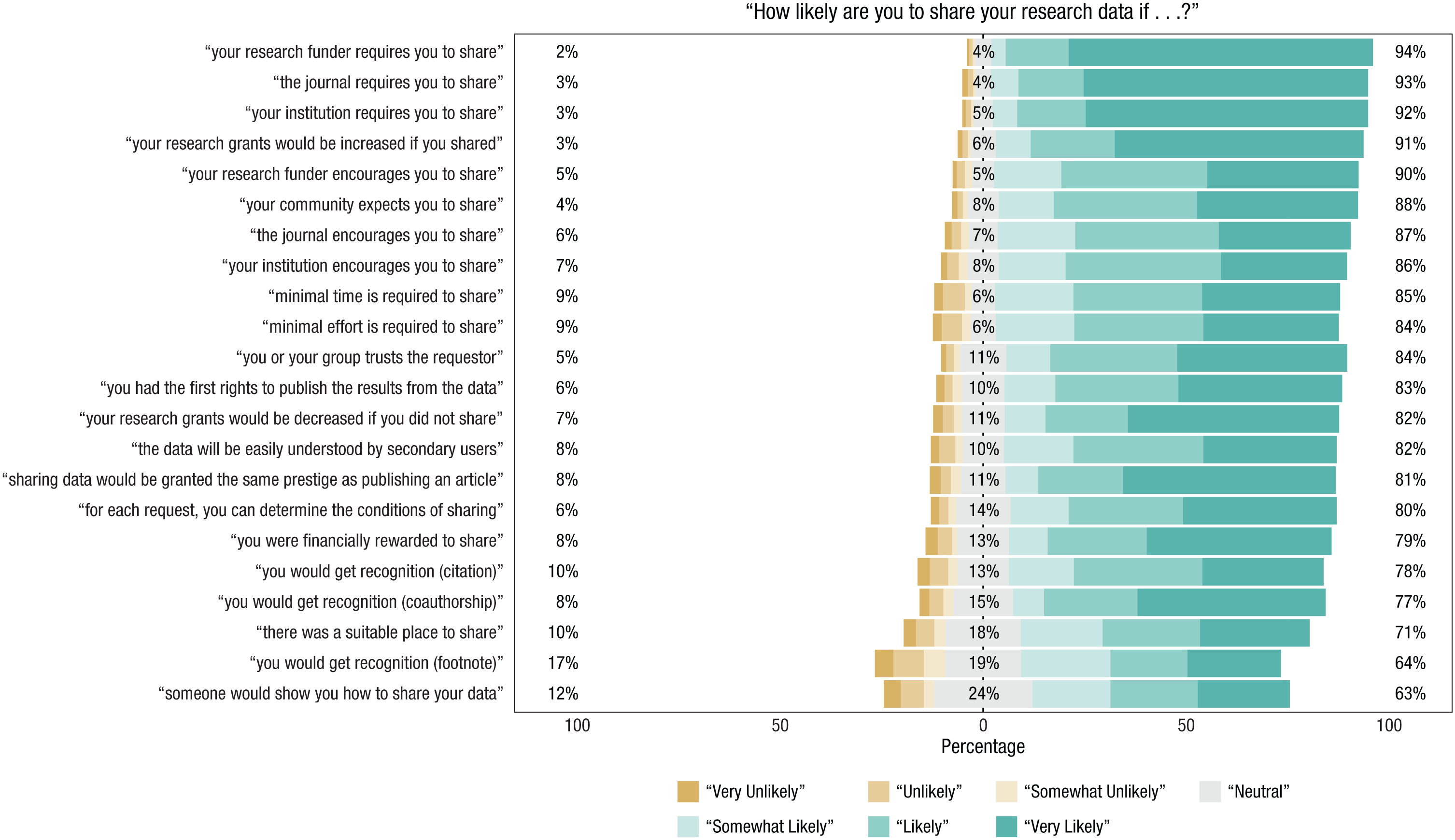

Figure 4 summarizes the results for the questions asking researchers how likely they would be to share their research data under 22 different conditions. The responses to these questions suggest that fulfilling any of the conditions would lead to substantially increased willingness to share research data.

Responses to the survey questions asking researchers to indicate how likely they would be to share their data under several conditions. For each statement, the number to the left of the data bar indicates the percentage of researchers who responded with “very unlikely,” “unlikely,” or “somewhat unlikely”; the number in the center of the data bar indicates the percentage who responded with “neutral”; and the number to the right of the data bar indicates the percentage who responded with “somewhat likely,” “likely,” or “very likely.” The statements are ordered according to the percentage of agreement (greatest agreement at the top). This figure was created using the

Of the five conditions that respondents indicated would be most effective, the top three concerned mandatory data sharing. Specifically, respondents indicated that they would comply if research funders, journals, or institutions were to mandate data sharing. This suggests that mandatory data sharing would not lead researchers to seek out alternative funders or publishers that lack this requirement. The condition that respondents rated as next most likely to increase their willingness to share data was financial encouragement: They reported that they would be more likely to share their data if their research grants were increased as an incentive. The fifth most effective condition was encouragement of data sharing by funders.

Conclusions

We conducted this study to gain insight into the perceived barriers that prevent psychologists from sharing their primary research data. In addition, we sought to determine the specific conditions under which psychologists would be more willing to share their data. The main findings of our study are as follows:

Public data sharing is not a common practice among research psychologists.

Respondents considered data sharing to be both desirable and profitable for their particular research fields, but somewhat less desirable and profitable in the case of their own current research projects.

The non-fear-related barriers to data sharing most frequently reported by respondents were that (a) sharing is not a common practice in their field, (b) they prefer to share their data only upon request, (c) they consider preparing data for sharing to be excessively time-consuming, and (d) they have never learned to share data online.

Respondents believed that the largest fear-related obstacles preventing other researchers from sharing their data are the fear that alternative analyses might expose invalid conclusions and the fear of loss of control.

Respondents reported that their greatest fears about sharing their own data are that the data might be misinterpreted and that they might be scooped.

In general, respondents felt that fear-related barriers affected other researchers more strongly than themselves.

Mandatory data sharing (enforced by institutions, journals, or funders) and financial encouragement (i.e., increased grant amounts) appear to be highly effective measures for increasing researchers' willingness to share their data.

Limitations

The main limitation of this study is that the sample was self-selected. Specifically, the response rate was about 5%, which, given the daily pressure on researchers' time, we do not consider surprisingly low. Past surveys of researchers on the topic of data sharing obtained similarly modest response rates. Schmidt et al. (2016) reported a response rate of 4.32% (i.e., 1,253 complete responses out of 29,000 invitations). Tenopir et al. (2011) received responses from 1,329 people out of an estimated sample of 15,000, a response rate of 8.86%; this rate would likely have been substantially lower had respondents who did not complete the entire questionnaire been excluded. In sum, our response rate was modest but not unusually low.
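As a quick arithmetic check, the Python snippet below recomputes the two cited response rates from the reported counts as responses divided by invitations. It is only an illustration. Our own invitation count is not restated in this section, so the snippet leaves it out.

```python
def response_rate(responses, invitations):
    """Response rate as a percentage of invitations sent."""
    return 100 * responses / invitations


# (responses, invitations) as reported in the text for two earlier surveys.
earlier_surveys = {
    "Schmidt et al. (2016)": (1253, 29000),
    "Tenopir et al. (2011)": (1329, 15000),  # 15,000 is an estimated sample size
}

for study, (n_responses, n_invited) in earlier_surveys.items():
    print(f"{study}: {response_rate(n_responses, n_invited):.2f}%")
```

This prints 4.32% for Schmidt et al. (2016) and 8.86% for Tenopir et al. (2011), matching the figures above.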

Our response rate of about 5% nevertheless translated into a sizable number of respondents (i.e., 600). The key concern, however, is that the researchers who accepted our invitation may already have had strong opinions about data sharing. Self-selection is difficult to overcome in survey research generally. Yet even if we had been able to enforce a 100% response rate, there would have been no certainty that participants answered the items truthfully. Moreover, even if all participants had answered truthfully, there would be no certainty that their later actions would be consistent with their answers; the drawbacks of data sharing may loom larger in real life than when filling out an online survey.

Several demographic factors may have led to self-selection and a modest response rate. For example, people who were still active in academia may have been more interested in the survey than people who had moved into teaching positions, and people in the early stages of their careers may have had more time or been more comfortable with an online survey than people in the later stages of their careers. Some of these demographic factors may correlate with attitudes toward data sharing. For these reasons, the present results, although suggestive, cannot simply be generalized to the wider population of researchers in psychology.

A final limitation is that both the authors and the coauthors of articles were approached for participation. Consequently, the responses to our questionnaire were not completely independent. Authors and their coauthors often work with each other extensively and for long periods of time; they may have adopted each other’s opinions on open science or started working together because of common interests related to open science in the first place.

Recommendations

This study suggests several recommendations concerning future research on data sharing, the development of educational materials, and policy. With respect to future research, one of the respondents suggested adopting a different perspective and studying what motivates the researchers who regularly share their data. Another suggestion was to focus specifically on the sharing of qualitative data, which are often more complex than quantitative data and may also be more difficult to anonymize. Finally, future research could devote more attention to ethical, moral, and legal issues related to data sharing (see Meyer, 2018, this issue).

Currently, data sharing receives little attention in the curricula for psychological researchers (Wicherts, 2016). To increase the frequency of data sharing, we recommend that institutions develop and provide educational materials on this topic. Such materials can indicate where data can be shared (e.g., on the OSF) and outline how they can be shared (e.g., as a .csv file accompanied by a text document that describes its contents using a standardized coding scheme). Developing such materials will be worthwhile even if they reach only a subset of researchers. In addition, funders that make data sharing mandatory would do well to point grant recipients to materials that explain where and how to share their data effectively.
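The format suggested above, a .csv data file plus a document describing its contents, can be sketched with Python's standard `csv` module. The variable names, values, and codebook columns below are hypothetical illustrations. A codebook stored as a second .csv file is just one possible form of the describing document, not a format prescribed by the OSF.

```python
import csv
import io

# Hypothetical example data: one row per participant (not from the survey).
rows = [
    {"participant_id": "P01", "condition": "control", "rt_ms": 512},
    {"participant_id": "P02", "condition": "treatment", "rt_ms": 478},
]

# Hypothetical codebook: one entry describing each variable in the data file.
codebook = [
    {"variable": "participant_id", "description": "Anonymized participant code",
     "type": "string", "units": ""},
    {"variable": "condition", "description": "Experimental condition (control/treatment)",
     "type": "string", "units": ""},
    {"variable": "rt_ms", "description": "Mean response time",
     "type": "integer", "units": "milliseconds"},
]


def to_csv(records, fieldnames):
    """Serialize a list of dicts to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


data_csv = to_csv(rows, ["participant_id", "condition", "rt_ms"])
codebook_csv = to_csv(codebook, ["variable", "description", "type", "units"])

# In practice these strings would be saved as, e.g., data.csv and codebook.csv
# and uploaded together to a repository such as the OSF.
print(data_csv)
print(codebook_csv)
```

Keeping the codebook in the same plain-text format as the data means that both files remain readable without specialized software.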

An increased focus on educating students and researchers about data-sharing practices may also ease their concern that data sharing is excessively time-consuming. Knowing exactly where and how to share data will make the process more efficient. For example, we recommend that researchers describe the variables in their data set while the research project is still ongoing and the pertinent information is still fresh in their minds. A related recommendation is to develop software that helps automate the process of data sharing.
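To illustrate the kind of automation meant here, the hypothetical Python check below could be run whenever the data file changes during a project. It flags columns that lack a description and descriptions that no longer match any column. The file contents and function names are illustrative assumptions, not an existing tool.

```python
import csv
import io


def undocumented_columns(data_csv_text, codebook):
    """Return columns in the data file's header that lack a codebook entry."""
    header = next(csv.reader(io.StringIO(data_csv_text)))
    return [column for column in header if column not in codebook]


def stale_entries(data_csv_text, codebook):
    """Return codebook entries that no longer match any column in the data."""
    header = set(next(csv.reader(io.StringIO(data_csv_text))))
    return sorted(name for name in codebook if name not in header)


# Hypothetical data file and codebook (illustration only).
data_text = "participant_id,condition,rt_ms,age\nP01,control,512,23\n"
codebook = {
    "participant_id": "Anonymized participant code",
    "condition": "Experimental condition",
    "rt_ms": "Mean response time in milliseconds",
    "accuracy": "Proportion correct",
}

print(undocumented_columns(data_text, codebook))  # ['age']
print(stale_entries(data_text, codebook))  # ['accuracy']
```

Running such a check routinely keeps the documentation in step with the data, so little remains to be done when the project ends and the data are uploaded.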

With respect to policies that promote data sharing, our results showed that researchers consider mandatory data-sharing policies to be the most effective. Enforced by funders, journals, or institutions, such policies will almost certainly increase researchers' propensity to share. Journals may refuse to publish any article for which the data have not been uploaded to a freely accessible online repository, funders may refuse to support studies if the data will not be shared, and institutions may refuse to hire researchers who have proven unwilling to share their research data. Such policies should safeguard the anonymity of participants and respect the ethical issues involved in data sharing. Even in challenging cases, however, steps can be taken to keep the research process and the resulting data as open as possible. For example, the Medical Research Council (2017) has developed guidelines to ensure patients' privacy by having researchers sign data-sharing agreements that contain specific restrictions: "Data-sharing agreements must prohibit any attempt to (a) identify study participants from the released data or otherwise breach confidentiality, (b) make unapproved contact with study participants" (p. 4). Although this particular policy was developed in a medical context, it could perhaps benefit clinical psychologists as well.

Our survey suggests that, in addition to mandating data sharing, positive reinforcement, such as financial encouragement by funders, may be effective in increasing data sharing. From the funder's perspective, such encouragement could be seen as covering the cost of sharing and curating data, whereas from the researcher's perspective, it may be perceived as an incentive to share.

To conclude, our findings suggest that although researchers perceive barriers to data sharing, at least some important barriers can be overcome relatively easily. Specifically, we recommend that mandatory data sharing be combined with the development of educational materials that demonstrate where and how data can be shared effectively and systematically. We hope that this study will contribute both to accelerating scientific progress and to the wider adoption of transparent research practices.


Acknowledgements

We are grateful to C. H. J. Hartgerink from Tilburg University for collecting the set of e-mail addresses used for our survey.

Action Editor

Daniel J. Simons served as action editor for this article.

Author Contributions

E.-J. Wagenmakers, C. Chambers, D. V. M. Bishop, M. Macleod, and T. E. Nichols generated the idea for the study. B. L. Houtkoop conducted the literature study. B. L. Houtkoop, E.-J. Wagenmakers, C. Chambers, D. V. M. Bishop, M. Macleod, and T. E. Nichols created the survey. B. L. Houtkoop gathered and analyzed the data for the preliminary and primary studies. B. L. Houtkoop wrote the first draft of the manuscript. E.-J. Wagenmakers, C. Chambers, D. V. M. Bishop, M. Macleod, and T. E. Nichols provided feedback. All the authors approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared that there were no conflicts of interest with respect to the authorship or the publication of this article.

Open Practices

All data and materials, including the R code for the figures, have been made publicly available via the Open Science Framework and can be accessed at https://osf.io/xm8dh/. The design and analysis plans for the preliminary study and the primary study were preregistered through AsPredicted.org (http://aspredicted.org/ik7wt.pdf and https://aspredicted.org/qq7wj.pdf, respectively) and are also available at the Open Science Framework (https://osf.io/etd2v/ and https://osf.io/jyp37/, respectively). The complete Open Practices Disclosure for this article can be found at http://journals.sagepub.com/doi/suppl/10.1177/2515245917751886. This article has received badges for Open Data, Open Materials, and Preregistration. More information about the Open Practices badges can be found at ![]() .



References
