Abstract

The prevalence of effect-size (ES) reporting has risen significantly, yet studies comparing two groups tend to rely exclusively on the Cohen’s d family of ESs. In this article, we aim to broaden the readers’ horizon of known ESs by introducing various ES families for two-group comparisons, including indices of standardized differences in central tendency, overlap, dominance, and differences in variability and distributional tails. We describe parametric and nonparametric estimators in each ES family and present an interactive web application (R Shiny) for computing these ESs and facilitating their application. This one-stop calculator allows for the computation of 95 applications of 67 unique ESs and their confidence intervals and various plotting options and provides detailed descriptions for each ES, making it a valuable resource for both self-guided exploration and instructor-led teaching. With this comprehensive guide and its companion app, we aim to improve the clarity and accuracy of ES reporting in research design that involves two-group comparisons.

More than one third of quantitative research conducted in psychological science uses research designs that compare two or more groups with parametric tests, such as t tests and analyses of variance (Blanca et al., 2018). Over the past decades, there have been repeated calls for supplementing or even replacing null hypothesis significance testing with the reporting of effect sizes (ESs) and confidence intervals (CIs; e.g., Cumming, 2014; Thompson, 2002; Wilkinson & Task Force on Statistical Inference, American Psychological Association, Science Directorate, 1999). For such a shift to occur, it is vital that researchers know which ESs are relevant to their study design and how to interpret them. In the present article, we aim to aid this process by (a) describing a wide variety of ESs that provide meaningful quantification of group differences and (b) presenting a web application for computing, teaching, and exploring them.

The American Psychological Association (APA) first recommended the reporting of ESs in the fourth edition of its Publication Manual (APA, 1994). Two years later, the APA Task Force on Statistical Inference reaffirmed this recommendation by stating that ESs and their CIs should be provided routinely (Task Force on Statistical Inference, American Psychological Association, Science Directorate, 1996). Another 3 years later, the task force expanded its recommendations with a call to interpret ESs in both practical and theoretical terms (Wilkinson & Task Force on Statistical Inference, American Psychological Association, Science Directorate, 1999). All these suggestions are now part of the reporting standards for quantitative research in the seventh edition of the APA Publication Manual (APA, 2020) and the Journal Article Reporting Standards for quantitative research designs (JARS–Quant), as issued by the APA (2024).

Initiators and proponents of these new guidelines have argued that these practices are vital for two main reasons. First, “the effect size is the best single answer to our research question that our data can provide” (Cumming & Calin-Jageman, 2024, p. 119). The seventh edition of the APA Publication Manual (APA, 2020) similarly stated that ESs allow “readers to appreciate the magnitude or importance of a study’s findings” (p. 89). Second, its CI provides information about the precision of the point estimate and consequently, the quality of information obtained. In addition, CIs can be used to infer whether an effect differs significantly from a hypothesized value (APA, 2020; Cumming, 2011; Cumming & Calin-Jageman, 2024). Thus, ESs and their CIs provide the best foundation for understanding the results of quantitative analyses (Cumming, 2011). This holds especially true when psychological science embraces quantitative “‘How much?’ or ‘To what extent?’ estimation questions” (Cumming et al., 2012, p. 139).

Studies assessing the prevalence of ES reporting have noted steady progress in the right direction, in line with the development of the APA recommendations on ES reporting since 1994, albeit with substantial differences between individual journals and fields of study. A. Fritz et al. (2013) identified a mean prevalence of ES reporting of 38.4% (range = 1%–81%) in selected volumes of several psychological journals published between 1990 and 2007. Peng et al. (2013) found a median prevalence of 29.4% (range = 0%–77%) for selected volumes of psychological- and educational-science journals published before 1999 and a median prevalence of 58% (range = 8.3%–100%) for volumes published between 1999 and 2010. Barry et al. (2016) reported a prevalence of 47.9% (range = 30.7%–67.1%) for six well-known health-education and behavioral-science journals in the years 2010 to 2013. Woods et al. (2023) found a prevalence of 93.6% of ESs for six prominent neuropsychological-science journals in 2020. For the field of social and personality psychology, Farmus et al. (2023) found that 97% of the analyzed articles reported an ES for primary analyses and that 87% reported an ES for follow-up analyses. Both Peng et al. (2013) and C. O. Fritz et al. (2012) documented increases in ES-reporting prevalence over the years, with a notable jump from the period before 1999 to the period after 1999 across all but one of the analyzed journals (Peng et al., 2013) and a growth rate in ES-reporting prevalence of about 2% per year for the period between 1990 and 2007 (C. O. Fritz et al., 2012).

For research designs that compare groups, (partial) η2 is the most frequently reported ES, followed by Cohen’s d (e.g., Alhija & Levy, 2009; Farmus et al., 2023; C. O. Fritz et al., 2012). Multiple degrees-of-freedom ESs, such as (partial) η2 and ω2, are meaningful and important estimators to report and interpret for research designs comparing multiple groups (e.g., Grissom & Kim, 2012; Kirk, 2005). However, one degree-of-freedom ESs, such as Cohen’s d for planned contrasts or post hoc comparisons, are often more meaningful and more readily interpretable and address research questions more concretely (Cumming et al., 2012). For this reason, the APA Publication Manual has recommended decomposing multiple degrees-of-freedom effects into multiple one degree-of-freedom effects since its fifth edition (APA, 2001). Thus, ES measures for comparing two groups lend themselves as appropriate units of analysis for designs with two or more groups.

The prominence of (partial) η2 and Cohen’s d is unsurprising for two reasons. First, these two estimators are those ESs primarily discussed in books on applied statistics (e.g., Agresti, 2018; Cumming & Calin-Jageman, 2024; Field, 2024) and can be easily computed by hand. Second, they are emphasized in the statistical packages most commonly used in psychology, such as IBM SPSS Statistics (Blanca et al., 2018). Even though there exist several R packages and functions for the calculation of ESs (see Votruba & Finch, 2024), here, we focus on point-and-click software with a graphical user interface (GUI). Thus, it seems that primarily those ESs are commonly reported that either are widely known because of their inclusion in the relevant literature, have been implemented in commonly used statistical software, or can easily be computed by hand. A plethora of ESs for comparing two groups have been discussed over the years and have been the topic of extensive reviews (e.g., Goulet-Pelletier & Cousineau, 2018; Keselman et al., 2008; Peng & Chen, 2014) and book chapters (e.g., Ellis, 2010; Grissom & Kim, 2012). Yet most of these ESs are not mentioned in applied-statistics textbooks, and only a handful have been implemented in statistical software widely adopted by psychologists. Table 1 shows a list of ESs offered in software with a GUI, such as SPSS, JASP (https://jasp-stats.org/), NCSS (https://www.ncss.com/), and SAS software (https://www.sas.com/), or in the Desktop Calculator for Effect Sizes (Zhang, 2023). It is important to supplement educational texts about ESs with user-friendly software for computing them to ensure their adoption by the scientific community (e.g., Lakens, 2013). In the present article, we offer precisely such a point-and-click one-stop solution for computing ESs for comparing two groups, akin to the primer by Tran et al. (2021) for measures of distributional inequality and statistical concentration.

Table 1. Effect Sizes Implemented in Widely Used Statistical Software/Comparable Applications With a Graphical User Interface

Note: See main text for detailed information on the various effect-size estimators.

Although there is a growing trend of reporting ESs in research articles, change has been much slower in the reporting of the corresponding CIs. C. O. Fritz et al. (2012) found that CIs are rarely reported, and there was no evidence of improvement over the time span covered by their study. In the field of social and personality psychology in particular, which has a near 100% rate of ES reporting, CIs are reported only 54% of the time (Farmus et al., 2023). Thus, the precision of estimates, or the set of likely values of the estimated population effects, cannot be evaluated for many reported ESs because of missing CIs. This is especially concerning because publication bias often leads to inflated point estimates of population effects that find their way into the published literature (C. O. Fritz et al., 2012). Without information on the precision of an estimate, the reader cannot ascertain the accuracy and thus the trustworthiness of published, potentially inflated estimates (C. O. Fritz et al., 2012).

Considering APA’s recommendation that findings should be interpreted based on both point and interval estimates (APA, 2020), the lack of CIs clearly hinders the interpretation of a study’s results. The highly popular software package IBM SPSS Statistics did not offer an option to compute CIs for ESs via its GUI before its 27th version, which was released in 2020 (probably in response to JASP, which had already been providing CIs for ESs for some time). In all fairness, SPSS scripts for the computation of CIs for certain ESs have been available since at least 2001 (see Smithson, n.d.), and there is a Microsoft Excel module (ESCI) that has been offering useful tools since 2011 (Cumming, 2011). However, the use of SPSS scripts demands at least some familiarity with the SPSS programming language, and ESCI depends on Microsoft Excel. Similar issues of usability may also apply to the many available R packages and functions that allow the computation of CIs. The lack of easy-to-use software implementations might have contributed to the stagnation of CI reporting in the past. Thus, it is crucial that any new software for computing ESs provides CIs alongside point estimates.

Aims of the Current Article

The aims of the current article are twofold. First, we provide a comprehensive overview and explanation of ESs for research designs in which two groups are compared. In addition to the Cohen’s d family of ESs, we discuss a wide variety of lesser known ESs that are not commonly used in the field of psychology. This overview may thus acquaint readers with ESs that may then prove useful in their own research. Second, we present an easy-to-use, one-stop solution web application that calculates all covered ESs and their corresponding CIs. This web application requires no prior knowledge of programming or the mathematical details of the ESs it implements, allowing psychologists to draw on a broader menu of ESs when reporting empirical findings of their studies.

The current article is informed by best-practice models, such as a recent tutorial and shiny app for measures of distributional inequality and statistical concentration (Tran et al., 2021), and follows up on a highly cited resource by Lakens (2013) on multigroup comparisons. However, in the current article, we go beyond Lakens in four important aspects: (a) the range of designs for group comparison, (b) the number of ES estimators per design, (c) the variety of estimators, and (d) the ease of use and versatility of the companion tool. First, Lakens covered only ESs for comparing two independent or dependent groups on a single outcome variable. In the current article, we also present ESs for pretest–posttest control (PPC) and multivariate designs. Moreover, Lakens (2013) described four to five ES estimators for each research design, all from the Cohen’s d family, in addition to the common language ES. Here, 10+ estimators are presented for each research design, with as many as 34 estimators for the independent-groups design with a single dependent variable. Besides the Cohen’s d family of ES, in the current article, we include four additional groups of ESs with nonparametric and parametric estimators. Finally, Lakens (2013) provided a Microsoft Excel spreadsheet for calculating ES estimators and their CIs based on summary statistics. The shiny web app presented in the current article is a more sophisticated and simultaneously more user-friendly tool that allows the computation of ESs and their CIs based on both summary statistics and user-uploaded raw data. It also provides visualizations that facilitate exploration and deeper understanding of the ESs and that can be used for teaching statistical methods in psychology and other fields.

Structure of the Article

In the following section, we give an overview of the ESs offered by the companion Shiny application. Broad categorizations of the ES estimators are briefly presented, which are followed up by descriptions of the various parametric and nonparametric estimators for four common research designs. Because many subheadings in this section would be otherwise identical in wording, we distinguish them by inserting “independent groups,” “dependent groups,” “multivariate,” “parametric,” or “nonparametric” in parentheses. Next, we present the core functionalities of the companion R Shiny app. We guide the reader through the home page, the sidebar menu, and the panels for inputting data, computing ESs, and obtaining visualizations. We then describe in some detail the plotting capabilities of the app and follow with a brief description of its documentation. In the closing section, we discuss possible future extensions of the application.

ESs and ES Families

In what follows, we present 95 applications of 67 unique ESs for four common designs: the independent-groups, dependent-groups, PPC, and multivariate designs (described in detail below). This overview structurally follows Chapters 3 and 5 of Grissom and Kim’s (2005, 2012) monographs on ESs and the reviews by Peng and Chen (2014) and Del Giudice (2022).

The ESs we discuss can be grouped into five families: (a) standardized differences between measures of central tendencies, (b) measures of the degree of (non)overlap between groups’ distributions, (c) measures of the dominance of one group over the other, (d) measures of differences in group variability, and (e) ratios of frequencies in the distributional tail regions of the groups. Parametric estimators (discussed first, followed by nonparametric estimators) are further grouped based on their underlying distributional assumptions and their robustness to violations of these assumptions.

Some ESs can be applied to both the independent-groups and dependent-groups designs, which is why we count 95 applications of 67 unique ESs. However, the calculation of the CIs for the identical estimator and the exact definition of the estimated population effect often differ, depending on design. Information regarding which design(s) each of the 67 unique ESs applies to and their formulas and verbal descriptions are compiled in Table 2. Detailed documentation on the assumptions of each ES is further provided in the web application. Numbers in square brackets in the text correspond to the respective ESs in Table 2.

Table 2. Overview of the Effect Sizes Described in This Article and Implemented in the Companion Shiny App

Although 95 applications may sound like a daunting number, the list is still not exhaustive given that research on ESs is an active field of study with ongoing innovations (e.g., the S index proposed by Del Giudice, 2023b). To compile this collection, we started with relevant chapters of the seminal monographs on ESs by Grissom and Kim (2005, 2012). All the ESs in those chapters were implemented in the companion app with the exception of relative distributions (see Handcock & Janssen, 2002; Handcock & Morris, 1998) and measures comparing quantiles of the groups’ distributions based on the shift function (see Wilcox, 2017, pp. 146–162). We also consulted a number of influential reviews to include other ESs that we regarded as meaningful additions to the collection (e.g., Del Giudice, 2022; Feingold, 2009; Goulet-Pelletier & Cousineau, 2018; Keselman et al., 2008; Lakens, 2013; Morris, 2008; Peng & Chen, 2014). ESs from these sources were excluded if they did not significantly add to the already large list of ESs (e.g., Rom’s measure of overlap, Rom & Hwang, 1996, which corresponds to the parametric overlapping coefficient, Bradley, 2006, in the case of equal population variances and differs from it in the case of unequal population variances—a scenario in which we recommend computing the nonparametric measure of distributional overlap) or did not fit in with the families covered in this article (e.g., different versions of η2). Omitted ESs can, however, be incorporated in future releases of the companion app.

Effect sizes for two independent groups

The independent-groups design—also often referred to as the between-subjects design or the between-groups design—is characterized by different groups being exposed to different levels of an independent variable (e.g., different experimental conditions). Each test person can be a member of only one group. No individual’s score in one group may be related to or predicted from any individual’s score in another group.

Parametric estimators of effect sizes for two independent groups

Central tendency: standardized mean differences (independent groups, parametric)

Under the assumption that the populations being compared have equal variances (we refer to this assumption as “equality of variances”), the most widely used standardized mean difference (SMD) is Cohen’s d [1] (Cohen, 1988). It estimates the difference in population means standardized (that is, divided) by the common standard deviation of the two populations. The d estimator [1] has a positive/upward bias, meaning that its expected value overestimates the magnitude of the true population effect (Hedges, 1981). Hedges’s g estimator [2] (Hedges, 1981) corrects for this bias and can be recommended over Cohen’s d [1], particularly in small samples. Because the population effect defined above uses the common standard deviation as a standardizer, both estimators assume equality of variances. Consequently, both estimators pool sample standard deviations to estimate the common-population standard deviation. An additional assumption of normality can be leveraged for constructing exact noncentral t CIs for Cohen’s d [1]/Hedges’s g [2] and in the calculation of the ESs discussed next.
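As an illustration of the pooling and the small-sample correction described above, the following minimal Python sketch computes Cohen’s d and Hedges’s g from raw scores. The function names and example data are hypothetical, and the gamma-function form of the correction factor is one common formulation (assumed here); the companion app may implement these estimators differently.

```python
import math
from statistics import mean, variance

def cohens_d(x, y):
    """Mean difference divided by the pooled sample standard deviation."""
    n1, n2 = len(x), len(y)
    pooled_var = ((n1 - 1) * variance(x) + (n2 - 1) * variance(y)) / (n1 + n2 - 2)
    return (mean(x) - mean(y)) / math.sqrt(pooled_var)

def hedges_correction(df):
    """Correction factor J(df) = Gamma(df/2) / (sqrt(df/2) * Gamma((df-1)/2)).
    A widely used approximation is 1 - 3 / (4*df - 1)."""
    log_ratio = math.lgamma(df / 2) - math.lgamma((df - 1) / 2)
    return math.exp(log_ratio) / math.sqrt(df / 2)

def hedges_g(x, y):
    """Cohen's d multiplied by the small-sample correction factor."""
    df = len(x) + len(y) - 2
    return hedges_correction(df) * cohens_d(x, y)

# Hypothetical example data (two independent groups)
treatment = [5, 6, 7, 8, 9]
control = [3, 4, 5, 6, 7]
print(round(cohens_d(treatment, control), 3))
print(round(hedges_g(treatment, control), 3))
```

Because the correction factor is always below 1, g is closer to zero than d, and the two converge as the sample sizes grow.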

(Non)Overlap (independent groups, parametric)

Measures of (non)overlap can be estimated by functions of Cohen’s d [1] under the assumptions of normality and equality of variances (Cohen, 1988). Cohen (1988) proposed three indices of nonoverlap (U1, U2, and U3), which he thought to be intuitively meaningful (for further discussion, see Huberty & Lowman, 2000; Pastore & Calcagnì, 2019). U1 [23] is the proportion of nonoverlap relative to the joint distribution of two populations (i.e., the amount of combined area not shared by the two populations). U2 [24] is the proportion of one population that exceeds the same proportion of the other population. For example, a value of 0.7 would indicate that the top 70% of one population exceeds the bottom 70% of the other population. U3 [25] is the proportion of the population with the lower mean that is exceeded by the top 50% of the population with the higher mean. U3 [25] may be particularly helpful in improving the transparency of research findings because it is intuitively meaningful and does not require a great deal of statistical knowledge (Hanel & Mehler, 2019). Complementary to nonoverlap measures are measures of overlap (Del Giudice, 2022). The overlapping coefficient (OVL [17]) is defined as the common area under two probability densities and is thus the proportion of one distribution that overlaps with the other (Bradley, 2006). The overlapping coefficient 2 (OVL2 [18]) is the proportion of overlap relative to the joint distribution of the two populations (Del Giudice, 2022). Overlap measures emphasize similarities (vs. differences) between groups, which may yield more accurate lay perceptions and potentially foster more positive attitudes toward members of an outgroup (Hanel et al., 2019).
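The indices in this section can be written as functions of d and the standard normal distribution function. The sketch below uses the conversions commonly given for two normal populations with equal variances (U3 = Φ(d), U2 = Φ(d/2), U1 = (2·U2 − 1)/U2, OVL = 2Φ(−|d|/2), OVL2 = OVL/(2 − OVL)); these formulas and the function name are assumptions for illustration, not a description of the companion app’s code.

```python
from statistics import NormalDist

PHI = NormalDist().cdf  # standard normal distribution function

def overlap_indices(d):
    """(Non)overlap indices implied by d under normality and equal variances."""
    d = abs(d)
    u3 = PHI(d)                # share of the lower group below the higher group's median
    u2 = PHI(d / 2)            # top share of one group exceeding the same bottom share of the other
    u1 = (2 * u2 - 1) / u2     # nonoverlap relative to the joint distribution
    ovl = 2 * PHI(-d / 2)      # common area under the two densities
    ovl2 = ovl / (2 - ovl)     # overlap relative to the joint distribution
    return {"U1": u1, "U2": u2, "U3": u3, "OVL": ovl, "OVL2": ovl2}

for name, value in overlap_indices(0.8).items():
    print(name, round(value, 3))
```

Under these formulas U1 and OVL2 sum to 1, which mirrors their verbal definitions as the nonoverlapping and overlapping shares of the joint distribution.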

Dominance (independent groups, parametric)

Another family of ESs comprises probabilistic measures of group dominance, which can also be estimated as functions of Cohen’s d [1] assuming normality and equality of variances. One such ES is the common-language ES (CLES [20]), which is defined as the probability that a score chosen at random from one group will be higher than a score chosen at random from the other group (McGraw & Wong, 1992). The CLES [20] may provide an intuitive way to understand statistical results and can therefore aid practitioners in understanding research findings and in making informed decisions (Mastrich & Hernandez, 2021). Another probabilistic measure of effect is the probability of correct classification (PCC [19]), or classification accuracy, which is the probability of correctly determining the group membership of a randomly picked individual based on the value of the dependent variable (Del Giudice, 2022).
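Both dominance indices can likewise be sketched as functions of d. The code below assumes normality and equal variances and, for the PCC, additionally assumes equal group base rates with a classification cutoff midway between the two means; the function names are hypothetical.

```python
import math
from statistics import NormalDist

PHI = NormalDist().cdf  # standard normal distribution function

def cles_from_d(d):
    """Probability that a random score from group 1 exceeds one from group 2."""
    return PHI(d / math.sqrt(2))

def pcc_from_d(d):
    """Probability of correct classification (equal base rates assumed)."""
    return PHI(abs(d) / 2)

print(round(cles_from_d(0.8), 3))
print(round(pcc_from_d(0.8), 3))
```

With d = 0 both indices equal 0.5, the chance level for a random pairing or a random classification.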

Differences in variability and tail ratios (independent groups, parametric)

Besides differences in group means, differences in variability around group means can be of interest as well, if only to assess whether equality of variances is a sensible assumption. Differences in variability can be quantified with the variance ratio (VR [26]) between two groups, which can be estimated by the ratio of the respective sample variances. Combined with difference in means, difference in variances—indicated by a VR [26] less or greater than 1—can lead to a pronounced difference in the proportion of individuals of the contrasted groups with particularly high/low values of the dependent variable. Whenever such high/low values of the dependent variable predict adverse or beneficial outcomes, group differences in the tail regions of the distributions are often of greater concern than differences around the means of the same distributions (Voracek et al., 2013). In these scenarios, an informative ES is represented by the tail ratio (TR [27]), which is the ratio of the proportion of observations in each group falling below versus above a cutoff value of interest (Voracek et al., 2013; for further background, see Hill & Arden, 2023; Hill & Fox, 2022). Under a normality assumption, this quantity can be estimated based on sample summary statistics (but note that the resulting TR values can be very sensitive to small deviations from normality; see Del Giudice, 2022).
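A minimal sketch of both quantities follows. The variance ratio uses the sample variances directly; the tail ratio plugs group means and standard deviations into normal distributions and compares the proportions beyond a cutoff. The function names, the choice of group 1 as the numerator, and the `upper` switch are illustrative assumptions.

```python
from statistics import NormalDist, variance

def variance_ratio(x, y):
    """Ratio of the sample variance of x to the sample variance of y."""
    return variance(x) / variance(y)

def tail_ratio_normal(m1, s1, m2, s2, cutoff, upper=True):
    """Ratio of normal-theory tail proportions (group 1 over group 2).

    upper=True compares proportions above the cutoff, upper=False below it.
    """
    g1, g2 = NormalDist(m1, s1), NormalDist(m2, s2)
    if upper:
        p1, p2 = 1 - g1.cdf(cutoff), 1 - g2.cdf(cutoff)
    else:
        p1, p2 = g1.cdf(cutoff), g2.cdf(cutoff)
    return p1 / p2

# A half-SD mean difference, evaluated two SDs above the lower group's mean
print(round(tail_ratio_normal(0.5, 1.0, 0.0, 1.0, cutoff=2.0), 2))
```

In this example a mean difference of only half a standard deviation yields nearly three times as many group 1 members beyond the cutoff, which illustrates why tail regions can matter more than the centers of the distributions.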

Violations of assumptions (independent groups, parametric)

Under certain circumstances, assuming equality of variances may be unreasonable. For example, interventions can increase the variance in the dependent variable of interest because of differential responsiveness of subjects to the treatment (Grissom & Kim, 2012, pp. 17–20). In such cases, the pooled variance used in Cohen’s d [1] and Hedges’s g [2] does not estimate a single population variance as intended but becomes a weighted average of two different variances, which may distort the interpretation of these ESs and other indices (e.g., of overlap) calculated as functions of the same ESs. Glass’s dG [5]/Hedges’s gG [6], Cohen’s d′ [7]/Hedges’s g′ [8], and Kulinskaya-Staudte’s d2KS [10] estimate population SMDs without assuming equal variances. Glass’s dG [5] (Glass, 1976) is a biased estimator of the population mean difference standardized by the standard deviation of the baseline population, and Hedges’s gG [6] (Hedges, 1981) is the corresponding unbiased estimator. When variances are unequal, these ESs estimate different population quantities depending on which population is chosen as the baseline. Cohen’s d′ [7] is a biased estimator of the population mean difference standardized by the square root of the mean of the two population variances (e.g., Bonett, 2008), and Hedges’s g′ [8] is the unbiased estimator. However, the meaningfulness of these ESs may be questionable when variances differ substantially (Bonett, 2008). Kulinskaya and Staudte (2006) favored estimating the squared difference in population means standardized by a sample-size weighted average of population variances (d2KS [10]); however, this could be viewed as a flawed ES because of its dependence on sample sizes (Keselman et al., 2008). When the contrasted populations have equal variances, Glass’s dG [5]/Hedges’s gG [6], Cohen’s d′ [7]/Hedges’s g′ [8], and the square root of Kulinskaya-Staudte’s d2KS [10] all estimate the same population effect as Cohen’s d [1].

The original definition of the CLES [21] relies only on the normality assumption (McGraw & Wong, 1992) and does not require equal variances (unless it is calculated as a function of Cohen’s d [1]). For other measures of (non)overlap and probabilistic measures of effect, nonparametric estimators may also be used (see below) instead of the parametric estimators described above.
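To make the difference between the standardizers concrete, the sketch below computes the uncorrected Glass’s dG and Cohen’s d′ from raw scores. The function names and data are hypothetical, and the small-sample bias corrections that turn these into their Hedges counterparts are deliberately omitted.

```python
import math
from statistics import mean, stdev, variance

def glass_d(x, baseline):
    """Mean difference standardized by the baseline group's standard deviation."""
    return (mean(x) - mean(baseline)) / stdev(baseline)

def cohens_d_prime(x, y):
    """Mean difference standardized by the square root of the mean of the two variances."""
    return (mean(x) - mean(y)) / math.sqrt((variance(x) + variance(y)) / 2)

# Hypothetical groups with clearly unequal variances
group_a = [2, 4, 6, 8, 10]   # variance 10
group_b = [3, 4, 5, 6, 7]    # variance 2.5

print(round(glass_d(group_a, baseline=group_b), 3))
print(round(glass_d(group_b, baseline=group_a), 3))
print(round(cohens_d_prime(group_a, group_b), 3))
```

Swapping the baseline changes the magnitude of Glass’s dG and not only its sign, which illustrates the point that the estimated population quantity depends on the chosen baseline when variances differ.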

The mean and variance are nonrobust measures of location and scale (Staudte & Sheather, 1990), meaning that small changes in the population distributions can greatly affect the value of these parameters. Thus, the SMDs that are functions of these parameters are themselves nonrobust (see Algina et al., 2005a). Therefore, researchers have devised robust equivalents of Cohen’s d [13], Glass’s dG [14], and Cohen’s d′ [15] (Algina et al., 2005a, 2006; Keselman et al., 2008) that replace means with 20% trimmed means and variances/standard deviations with 20% winsorized variances/standard deviations in the respective ES definitions. These robust ESs can be scaled so that when the populations being compared follow normal distributions, they become equivalent to their nonrobust counterparts. Outlier-resistant SMDs are also provided by estimators of SMDs that standardize the sample median difference by a robust measure of scale, that is, the median absolute deviation (dMAD [40]), a scaled interquartile range (dRIQ [41]), or the biweight standard deviation (dbw [42]).
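The trimming and winsorizing steps can be sketched as follows. The helper names are hypothetical, and the scaling constant 0.642 (the value usually cited for 20% trimming and winsorizing under normality) is stated here as an assumption, not as the companion app’s implementation.

```python
import math

def trimmed_mean(x, prop=0.2):
    """Mean after dropping the lowest and highest prop share of the scores."""
    xs = sorted(x)
    g = math.floor(prop * len(xs))
    kept = xs[g:len(xs) - g]
    return sum(kept) / len(kept)

def winsorized_variance(x, prop=0.2):
    """Sample variance after pulling the extreme scores in to the nearest kept score."""
    xs = sorted(x)
    n = len(xs)
    g = math.floor(prop * n)
    wins = [xs[g]] * g + xs[g:n - g] + [xs[n - g - 1]] * g
    m = sum(wins) / n
    return sum((v - m) ** 2 for v in wins) / (n - 1)

def robust_d(x, y, prop=0.2, scale=0.642):
    """Scaled trimmed-mean difference over the pooled winsorized standard deviation."""
    n1, n2 = len(x), len(y)
    pooled = ((n1 - 1) * winsorized_variance(x, prop)
              + (n2 - 1) * winsorized_variance(y, prop)) / (n1 + n2 - 2)
    return scale * (trimmed_mean(x, prop) - trimmed_mean(y, prop)) / math.sqrt(pooled)

with_outlier = [1, 2, 3, 4, 5, 6, 7, 8, 9, 100]
without_outlier = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
print(trimmed_mean(with_outlier), trimmed_mean(without_outlier))
```

Both example samples give the same trimmed mean and winsorized variance, because the extreme score falls in the trimmed region.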

Nonparametric estimators of ESs for two independent groups

When normality and equality of variances are not satisfied, most of the effects described above remain perfectly sensible and interpretable. However, estimators that assume normality and equality of variances will yield distorted results, especially in the face of severe violations. In such cases, nonparametric estimators can be recommended because they do not rely on the aforementioned assumptions.

Central tendency: SMDs (independent groups, nonparametric)

Hedges and Olkin (1984) proposed the nonparametric estimator

(Non)Overlap (independent groups, nonparametric)

For the overlap measures OVL [17] and OVL2 [18] and for Cohen’s U1 [23], nonparametric estimators ([35]–[37]) can be obtained by modeling the population probability density functions with appropriate kernel density estimators and calculating the proportion of (non)overlap with an appropriate quadrature formula (e.g., Schmid & Schmidt, 2006). The proportion of scores in one sample that exceeds (approximately) the same proportion of scores in the other sample can also be determined and used as a nonparametric estimator of Cohen’s U2 [38]. Likewise, evaluating the empirical distribution function of the sample with the lower mean at the 0.5 empirical quantile of the sample with the higher mean (that is, at the sample median of the higher mean sample) yields a nonparametric estimator of Cohen’s U3 [39].
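The nonparametric U3 estimator is simple enough to sketch directly. The function name is hypothetical, and the empirical distribution function is taken here as the proportion of scores less than or equal to the evaluation point, which is an assumed convention.

```python
from statistics import mean, median

def nonparametric_u3(x, y):
    """Empirical distribution function of the lower-mean sample,
    evaluated at the median of the higher-mean sample."""
    low, high = (x, y) if mean(x) <= mean(y) else (y, x)
    cut = median(high)
    return sum(v <= cut for v in low) / len(low)

print(nonparametric_u3([1, 2, 3, 4, 5], [2, 3, 4, 5, 6]))
```

The kernel-density-based estimators of overlap require a bandwidth choice and numerical integration and are therefore not sketched here.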

Dominance (independent groups, nonparametric)

A nonparametric counterpart of the CLES [20] is the probability of superiority (PS [28]) estimator, which estimates the same population effect (Peng & Chen, 2014). Because the PS [28], unlike the CLES [20], is not derived under the assumptions of normality and equality of variances, it can be applied more broadly (Grissom & Kim, 2005). The PS [28] can be computed using the Mann-Whitney U statistic, which indicates how often a randomly selected observation from one sample has a larger value than a randomly selected observation from the other sample. Much like the CLES [20], the PS [28] ignores tied values, which are not included in the computation of the estimator. However, there is a related measure introduced by Vargha and Delaney (2000), called the A measure of stochastic superiority. This index estimates the probability that a randomly sampled score from one group is greater than an independently drawn score from the other group plus half the probability that the two scores are equal. The estimator A [30] is computed using a version of the U statistic that accounts for ties by giving them a weight of 0.5. When ties are not possible in practice (for example, when the dependent variable is continuous and precisely measured), PS [28] and A [30] and the population effects they estimate are identical.
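The two estimators can be illustrated by counting wins and ties over all cross-group pairs. This brute-force sketch uses hypothetical function names and assumes that the PS divides the number of wins by the total number of pairs; it is meant to show the logic and is not an efficient or authoritative implementation.

```python
def pairwise_counts(x, y):
    """Count pairs (xi, yj) with xi > yj (wins) and xi == yj (ties)."""
    wins = sum(xi > yj for xi in x for yj in y)
    ties = sum(xi == yj for xi in x for yj in y)
    return wins, ties, len(x) * len(y)

def probability_of_superiority(x, y):
    """Share of all pairs in which the score from x is strictly larger."""
    wins, _, total = pairwise_counts(x, y)
    return wins / total

def vargha_delaney_a(x, y):
    """Like the PS, but each tied pair counts as half a win."""
    wins, ties, total = pairwise_counts(x, y)
    return (wins + 0.5 * ties) / total

x = [3, 4, 5]
y = [1, 3, 6]
print(round(probability_of_superiority(x, y), 3))
print(round(vargha_delaney_a(x, y), 3))
```

The example contains one tied pair, so A exceeds the PS by half of one ninth; without ties the two values coincide.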

Another probabilistic measure of effect is the dominance measure (DM [32]), which is the probability that a score drawn at random from one group will be higher than a score drawn at random from the other group minus the probability that a score drawn at random from the former group will be lower than a score drawn at random from the latter group (Cliff, 1993). This index measures the dominance of one group over another (Cliff, 1993), or the stochastic difference between the groups (Vargha & Delaney, 2000). The nonparametric estimator of this effect is a function of the PS [28]. Specifically, it is the difference between the PS [28] of one group over the other and the PS [28] of the other group over the first one.

Another ES that can be calculated as a function of the PS [28] is Agresti’s generalized odds ratio (ORg [34]). This index estimates the odds that a randomly sampled observation from one group will be superior to a randomly drawn observation from the other group (Grissom & Kim, 2012). The estimator is the ratio of the PS [28] of one group over the other and the PS [28] of the other group over the first one. Grissom and Kim (2012) recommended using these estimators when a research question can be adequately addressed by quantifying the extent to which the values of the dependent variable of one group are probabilistically superior to those in the other group. They further emphasized that A [30] can be considered a unifying ES because it is applicable to ordinal dependent variables and contingency tables. Finally, Mastrich and Hernandez’s (2021) argument that the CLES [20] can make research findings more readily interpretable by laypeople and practitioners applies just as well to its nonparametric counterparts.
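Both the dominance measure and the generalized odds ratio follow from the two directional PS values, as the sketch below shows. The function names are hypothetical, and returning infinity when the second group never wins is an illustrative choice.

```python
import math

def prob_superiority(x, y):
    """Share of all cross-group pairs in which the score from x is strictly larger."""
    return sum(xi > yj for xi in x for yj in y) / (len(x) * len(y))

def dominance_measure(x, y):
    """PS of x over y minus PS of y over x."""
    return prob_superiority(x, y) - prob_superiority(y, x)

def generalized_odds_ratio(x, y):
    """PS of x over y divided by PS of y over x."""
    ps_yx = prob_superiority(y, x)
    if ps_yx == 0:
        return math.inf  # y never exceeds x in the sample
    return prob_superiority(x, y) / ps_yx

x = [3, 4, 5]
y = [1, 3, 6]
print(round(dominance_measure(x, y), 3))
print(round(generalized_odds_ratio(x, y), 3))
```

The dominance measure ranges from −1 to 1 with 0 indicating no dominance, whereas the generalized odds ratio ranges from 0 to infinity with 1 indicating no dominance.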

TRs (independent groups, nonparametric)

A nonparametric estimator of the TR [45] is given by the ratio of the proportions of values falling below versus above a cutoff value in each sample. Thus, the nonparametric estimator of the TR [45] corresponds to the prevalence ratio (the analogue of the better known risk ratio in cross-sectional studies) if the contrasted groups are regarded as “exposed” and “unexposed” and values of the dependent variable below versus above the cutoff are treated as occurrences of the outcome.
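A minimal sketch of the nonparametric tail ratio counts the observations beyond the cutoff in each sample. The function name, the `upper` switch, and the use of strict inequalities are assumptions for illustration.

```python
def tail_ratio(x, y, cutoff, upper=True):
    """Ratio of the sample proportions beyond a cutoff (x over y)."""
    if upper:
        p_x = sum(v > cutoff for v in x) / len(x)
        p_y = sum(v > cutoff for v in y) / len(y)
    else:
        p_x = sum(v < cutoff for v in x) / len(x)
        p_y = sum(v < cutoff for v in y) / len(y)
    if p_y == 0:
        raise ValueError("no observations of the second sample fall in the tail")
    return p_x / p_y

exposed = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
unexposed = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
print(tail_ratio(exposed, unexposed, cutoff=8))
```

With small samples or extreme cutoffs the tail of one group can be empty, so the estimate is undefined or unstable; the sketch raises an error in that case.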

Effect sizes for two dependent groups

The dependent-groups design—also known as repeated-measures design, within-subjects design, or within-groups design—is characterized by taking multiple measurements of a dependent variable on the same or matched individuals/observations under different conditions or across multiple points in time (Kraska, 2022).

Parametric estimators of effect sizes for two dependent groups

Central tendency: SMDs (dependent groups, parametric)

Under the assumptions of normality and equality of variances, Cohen’s d [1] and Hedges’s g [2] can also be applied to designs with dependent groups. They estimate the same population effect as in the independent-samples design, that is, the difference between two population means standardized by the common-population standard deviation. Alternate estimators of this population effect are dRM [3] (Morris & DeShon, 2002) and its small-N bias-corrected counterpart gRM [4] (Borenstein et al., 2021, p. 29). The dRM [3] estimator transforms the dz [11] estimator (see below) into the scale of Cohen’s d [1] based on the assumption of equality of variances and the relation between the standard deviations of individual measurements and that of change/difference scores (defined as the difference between the second and the first measurement or between related/matched units of observation). However, the values of d [1] and dRM [3] will differ in any given sample because the common-population standard deviation is estimated differently. Although the use of dRM [3] instead of d [1] as an estimator had a raison d’être before the derivation of the approximate distribution of d [1] in the dependent-groups design (Cousineau, 2020), as of this writing, we recommend reporting Cohen’s d [1] and its CI instead.

However, when the research question concerns a change in the level of the outcome measure within individuals—for example, as a result of some intervention—the proper kind of effect size is an SMD based on the standard deviation of difference scores themselves (Feingold, 2009). One such ES is the mean of difference scores—which is equivalent to the difference between the means of the dependent groups—standardized by the standard deviation of difference scores. Cohen’s dz [11] (Cohen, 1988) and Hedges’s gz [12] (Gibbons et al., 1993) provide a biased and unbiased estimator of this effect, respectively.
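The difference between the standardizers discussed above can be made concrete with a short Python sketch. It computes Cohen’s d [1] (raw-score standardizer), dz [11] (difference-score standardizer), and dRM [3], assuming the conversion dRM = dz × sqrt(2(1 − r)) with r being the sample correlation between the two measurements. The function name `paired_smds` and the example data are hypothetical, and the small-sample bias corrections are omitted.

```python
from math import sqrt
from statistics import mean, stdev


def paired_smds(first, second):
    """Point estimates of d, d_z, and d_RM for paired data (illustrative)."""
    diffs = [b - a for a, b in zip(first, second)]   # second minus first
    m1, m2 = mean(first), mean(second)
    s1, s2 = stdev(first), stdev(second)
    n = len(diffs)
    cov = sum((a - m1) * (b - m2) for a, b in zip(first, second)) / (n - 1)
    r = cov / (s1 * s2)                               # Pearson correlation
    d = (m2 - m1) / sqrt((s1 ** 2 + s2 ** 2) / 2)     # pooled raw-score SD
    d_z = mean(diffs) / stdev(diffs)                  # SD of difference scores
    d_rm = d_z * sqrt(2 * (1 - r))                    # d_z rescaled to the d metric
    return {"d": d, "d_z": d_z, "d_RM": d_rm, "r": r}


pre = [10, 12, 14, 16, 18]
post = [12, 13, 17, 18, 20]
print(paired_smds(pre, post))
```

Because the two measurements in this example are highly correlated, the difference scores vary little and dz is several times larger than d, which illustrates why the two kinds of SMD answer different research questions and should not be compared directly.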

(Non)Overlap (dependent groups, parametric)

All measures of group (non)overlap described as ESs for the independent-groups design can be applied to dependent groups as well. Their interpretations change only in that they quantify the (non)overlap of dependent instead of independent population distributions. Under the assumptions of normality and equality of variances, these quantities can be estimated as functions of Cohen’s d [1], as in the case of independent groups.

Dominance (dependent groups, parametric)

For the dependent-groups design, the definition of the population effect estimated by the CLES [20] changes somewhat: It becomes the probability that within a randomly sampled pair of dependent observations, the observation obtained under one measurement is greater than the observation obtained under the other (Grissom & Kim, 2012, p. 172). This quantity is equivalent to the probability that a randomly sampled difference score is greater than zero. For example, if positive difference scores indicate an improvement in the outcome variable for the same person, the effect is the probability that a randomly drawn person will improve between two time points or conditions. If positive difference scores indicate deterioration in the outcome, the effect is the probability that a randomly sampled individual will worsen. Consequently, this ES is well suited for addressing research questions focusing on change within individuals. The parametric estimator of this effect [22] assumes a normal distribution of difference scores and is a function of Cohen’s dz [11].
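If difference scores are normally distributed, the probability that a randomly sampled difference score exceeds zero equals the standard normal cumulative distribution function evaluated at the standardized mean difference score. The following Python sketch applies this relation to sample data; the function name `cles_dependent` and the data are assumptions for illustration.

```python
from statistics import NormalDist, mean, stdev


def cles_dependent(first, second):
    """Parametric estimate of P(difference score > 0) for paired data."""
    diffs = [b - a for a, b in zip(first, second)]   # second minus first
    d_z = mean(diffs) / stdev(diffs)
    return NormalDist().cdf(d_z)                     # Phi(d_z)


pre = [20, 22, 19, 24, 21, 23]
post = [22, 21, 23, 25, 24, 23]
print(cles_dependent(pre, post))
```

A value of .50 indicates that improvement and deterioration are equally likely; values above .50 indicate that positive difference scores are more likely than negative ones.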

Differences in variability and TRs (dependent groups, parametric)

Both the VR [26] and the TR [27] can be estimated in the dependent-groups design. Both the computation of CIs for the VR [26] and that of parametric point and interval estimators for the TR [27] assume bivariate normality in the dependent measurements.

Violations of assumptions (dependent groups, parametric)

When the assumption of equal variances is not satisfied, Glass’s dG [5]/Hedges’s gG [6] and Cohen’s d ′ [7] can be used to quantify the difference between means of two dependent groups. For dependent groups, the contrasted samples have equal sample size, and thus, the value of Cohen’s d ′ [7] coincides with the value of Cohen’s d [1]. However, because of differing underlying assumptions, the two estimators continue to estimate distinct population effects as described in the section on parametric ES estimators in the independent-groups design. Bonett (2015) described a bias-corrected version of d ′ [7] that we label d ′corr [9] in this article.

The outlier-resistant estimators of SMDs ([40]–[42]) discussed by Grissom and Kim (2001) can also be used when groups are dependent. The same goes for the robust versions of Cohen’s d [13], Glass’s dG [14], and Cohen’s d ′ [15] described for the independent-groups design. Keselman et al. (2008) and Algina et al. (2005b) argued in favor of dR,j [14] (the robust version of Glass’s dG [5]). However, when the research focus is on the average change within individuals, the appropriate ES is the robust version of Cohen’s dz (Wilcox, 2017, p. 213), labeled dRz [16] in this article. Like the other robust ESs, dRz [16] replaces the mean and the variance with robust statistics—that is, the 20% trimmed mean and the rescaled 20% winsorized variance.
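The following Python sketch illustrates how a robust dz-type estimator of this kind can be computed from difference scores. It assumes 20% trimming on each side and the rescaling constant 0.642 (the winsorized standard deviation of a standard normal variable under 20% winsorizing), which is the value commonly used in the robust-ES literature; the function name `robust_dz` is hypothetical, and the exact formula implemented in the companion app may differ.

```python
from math import floor, sqrt
from statistics import mean, stdev, variance


def robust_dz(first, second, trim=0.2, rescale=0.642):
    """Trimmed-mean difference score over rescaled winsorized SD (sketch)."""
    diffs = sorted(b - a for a, b in zip(first, second))
    n = len(diffs)
    g = floor(trim * n)                      # number of scores cut per tail
    trimmed = diffs[g:n - g]                 # drop g lowest and g highest
    # Winsorizing: replace the cut scores by the nearest retained value
    winsorized = [trimmed[0]] * g + trimmed + [trimmed[-1]] * g
    return rescale * mean(trimmed) / sqrt(variance(winsorized))


pre = [0] * 10
post = [1, 2, 2, 3, 3, 3, 4, 4, 5, 50]       # one extreme difference score
diffs = [b - a for a, b in zip(pre, post)]
print("classical d_z:", mean(diffs) / stdev(diffs))
print("robust d_Rz:  ", robust_dz(pre, post))
```

In this example, a single extreme difference score inflates the standard deviation and pulls the classical dz down, whereas the robust version is driven by the bulk of the difference scores.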

Nonparametric estimators of effect sizes for two dependent groups

Central tendency: standardized median differences (dependent groups, nonparametric)

The nonparametric estimator

(Non)Overlap and dominance (dependent groups, nonparametric)

The measures of (non)overlap ([17], [18], [23]–[25]), the A measure of stochastic superiority [30], the PS [28], the generalized OR [34], and the DM [32] are all meaningful ESs for dependent groups. As mentioned above, the population effect estimated by the CLES [20]—and consequently by the corresponding nonparametric estimators—changes when the contrasted groups are dependent. The PS [29] estimates the probability that within a randomly sampled pair of dependent observations, the observation obtained under one measurement is greater than the observation obtained under the other (Grissom & Kim, 2012, p. 172). Likewise, A [31] estimates the probability that within a randomly sampled pair of dependent observations, the observation obtained under one measurement is greater than or equal to the observation obtained under the other. As in the context of independent groups, the population effect estimated by A [31] explicitly accounts for the possibility of tied observations. When ties are impossible, the PS [29] and A [31] become identical. Grissom and Kim (2012) recommended “that researchers provide both results, so that their results can be compared, or meta-analyzed” (p. 173). Along with PS [29] and A [31], the definition of the ORg [34] changes as well. It no longer estimates the odds that a randomly sampled observation from one group is superior to a randomly drawn observation from the other group but rather, that within a randomly sampled pair of dependent scores, the score observed under one condition is superior to the score observed under the other one (Grissom & Kim, 2012). Finally, in the dependent-groups design, the DM [32] can be defined as the sum of the within-subjects and between-subjects dominance [33]. The within-subjects dominance is the probability that individuals change in a given direction, which corresponds to the PS [29] and the A [31] measure. The between-subjects dominance is the probability that a randomly sampled score obtained by an individual on one measurement is higher than a randomly sampled score obtained by another unrelated individual on the other measurement (Cliff, 1993). Thus, this ES merges two distinct aspects of dominance of one measurement over the other.
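The distinction between the within-subjects and the between-subjects comparisons can be illustrated with a short Python sketch. It estimates both probabilities as simple proportions, counting ties as “not greater”; the function name `paired_dominance` is hypothetical, and the sketch does not reproduce the weighting or the inferential procedures of Cliff (1993).

```python
def paired_dominance(first, second):
    """Within- and between-subjects proportions for paired data (sketch)."""
    n = len(first)
    # Within subjects: each individual's second score vs. their own first score
    within = sum(b > a for a, b in zip(first, second)) / n
    # Between subjects: second score of one individual vs. first score of another
    cross = [(first[i], second[j]) for i in range(n) for j in range(n) if i != j]
    between = sum(b > a for a, b in cross) / len(cross)
    return within, between


within, between = paired_dominance([1, 2, 3], [2, 3, 4])
print(within, between)   # 1.0 0.5
```

In this toy example, every individual improves (within-subjects proportion of 1.0), yet only half of the comparisons between different individuals favor the second measurement, which shows that the two components capture distinct aspects of dominance.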

TRs (dependent groups, nonparametric)

For any cutoff value on the dependent variable, each pair of dependent observations can be viewed as a realization of a pair of Bernoulli events in which the possible outcomes fall above or below the cutoff value. This allows for a definition of a nonparametric estimator of the TR [45], again as a prevalence ratio. In the case of dependent samples, the prevalence ratio is the ratio of paired binomial proportions, or the ratio of the proportion of hits in one measurement to the proportion of hits in the other measurement (with hits being defined as values of the dependent variable above vs. below a given cutoff value).

ESs for PPC designs

The PPC design—also known as the pretest–posttest control-group design or the independent-groups pretest–posttest design—entails the random or quasirandom assignment of research participants to one of two conditions (e.g., a treatment and a control condition or a novel treatment and a “gold-standard” treatment condition) and the measurement of an outcome variable at two points in time (i.e., before and after treatment; Morris, 2008). The major advantage of this design lies in its ability to estimate the effect of an intervention adjusting for potential maturation bias, which would confound a pretest–posttest design without a control group (Morris & DeShon, 2002).

Parametric estimators of ESs for PPC designs

All the ESs described in this section are calculated as differences between (a) the standardized difference between the posttest and pretest means of one group and (b) the standardized difference between posttest and pretest means of another group. In other words, they measure the difference between two independent SMDs of two dependent measurements. The main distinction between the various ESs lies in the choice of the standard deviation used to standardize each difference between the posttest and the pretest means.

As discussed in the section on parametric ES estimators for the dependent-groups design, the choice of a standardizer should reflect the focus of the research question that the ES is intended to address. Thus, if researchers wish to investigate the presence of a group difference in the level of the outcome variable, the standard deviation of the raw scores should be used to standardize each posttest–pretest mean difference (Feingold, 2009; Morris & DeShon, 2002). Under the assumptions that the pretest and posttest scores follow bivariate normal distributions with equal variances and pretest–posttest correlations, Morris (2008) proposed the difference between two groups’ posttest–pretest mean differences standardized by the common standard deviation as a suitable ES. This population effect can be estimated by dPPC,pooled-pre-post [52] and gPPC,pooled-pre-post [53] (e.g., Morris, 2008). Both estimators are constructed as the difference of the sample posttest–pretest mean differences divided by the pooled standard deviation of both groups’ pretest and posttest measurements; the gPPC,pooled-pre-post [53] is a bias-corrected version of this quotient. However, especially in research on intervention efficacy—a natural field of application for the PPC design—the assumption of equal variances is easily violated because of an increased postintervention variance resulting from differential effects of the intervention across test subjects (Bryk & Raudenbush, 1988; Carlson & Schmidt, 1999). As long as the pretest variances are assumed to be equal across the compared groups, the population effect can be recast as the difference between the groups’ posttest–pretest mean differences standardized by the two groups’ common pretest standard deviation. The dPPC,pooled-pre [50] (Carlson & Schmidt, 1999) estimator of this population effect is the difference of the sample posttest–pretest mean differences divided by the pooled standard deviation of both groups’ pretest measurements. The gPPC,pooled-pre [51] (Morris, 2008) statistic corrects for the upward bias in dPPC,pooled-pre [50]. Two additional estimators are given by the difference between the two groups’ posttest–pretest Glass’s dG [5] and Hedges’s gG [6] values; these are called dPPC,pre [48] and gPPC,pre [49], respectively (Becker, 1988).
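The construction of dPPC,pooled-pre [50] and gPPC,pooled-pre [51] from summary statistics can be sketched as follows. The function name `d_ppc_pooled_pre`, its argument names, and the example values are hypothetical; the bias-correction factor 1 − 3/(4(nT + nC − 2) − 1) is the approximation commonly attributed to Morris (2008) and is stated here as an assumption.

```python
from math import sqrt


def d_ppc_pooled_pre(m_pre_t, m_post_t, sd_pre_t, n_t,
                     m_pre_c, m_post_c, sd_pre_c, n_c):
    """Difference in mean change, standardized by the pooled pretest SD."""
    df = n_t + n_c - 2
    # Pooled standard deviation of the two groups' pretest scores
    sd_pre_pooled = sqrt(((n_t - 1) * sd_pre_t ** 2
                          + (n_c - 1) * sd_pre_c ** 2) / df)
    change_t = m_post_t - m_pre_t            # mean change, treatment group
    change_c = m_post_c - m_pre_c            # mean change, control group
    d = (change_t - change_c) / sd_pre_pooled
    g = (1 - 3 / (4 * df - 1)) * d           # approximate small-sample correction
    return d, g


d, g = d_ppc_pooled_pre(m_pre_t=50, m_post_t=58, sd_pre_t=10, n_t=20,
                        m_pre_c=50, m_post_c=52, sd_pre_c=10, n_c=20)
print(d, g)
```

With these values, the treatment group gains 8 points and the control group 2 points against a pooled pretest standard deviation of 10, so the uncorrected estimate is 0.6 and the corrected estimate is slightly smaller.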

When researchers aim to examine whether the level of an outcome measure shows a larger average change within individuals in one group—for example, a treatment group—than within individuals of another group—for example, a control group—the proper ESs are based on the standard deviation of change/difference scores (Morris & DeShon, 2002). Estimating the difference of within-groups posttest and pretest mean differences standardized by the respective group’s standard deviation of change scores yields the change-focused ES estimator dPPC-change [46] (Feingold, 2009; Morris & DeShon, 2002). This estimator is the difference between dz [11] computed for one group—for example, the treatment group—and dz [11] computed for the other group—for example, the control group. Because each dz [11] has an upward bias, the dPPC-change [46] estimator is upward biased as well. Computing the difference between the bias-corrected SMDs—that is, gz [12]—results in the bias-corrected estimator gPPC-change [47].
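Because the change-focused estimator is described as the difference between the two groups’ dz [11] values, it can be sketched in a few lines of Python. The function names `d_z` and `d_ppc_change` and the data are assumptions for illustration, and the bias-corrected variant is not shown.

```python
from statistics import mean, stdev


def d_z(pre, post):
    """Mean change divided by the SD of change scores within one group."""
    changes = [b - a for a, b in zip(pre, post)]
    return mean(changes) / stdev(changes)


def d_ppc_change(pre_t, post_t, pre_c, post_c):
    """Difference between the treatment and control groups' d_z values."""
    return d_z(pre_t, post_t) - d_z(pre_c, post_c)


treatment_pre, treatment_post = [10, 12, 14, 16], [13, 14, 18, 19]
control_pre, control_post = [10, 12, 14, 16], [11, 12, 15, 17]
print(d_ppc_change(treatment_pre, treatment_post, control_pre, control_post))
```

Note that each group’s change is standardized by its own standard deviation of change scores, so the result is expressed in units of within-person change rather than in raw-score units.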

Nonparametric estimators of ESs for PPC designs

As described in the previous sections on nonparametric ES estimators, both Glass’s dG [5] and Cohen’s dz [11] have nonparametric counterparts that estimate the same respective population effects under normality. Because both dPPC,pre [48] and dPPC-change [46] can be decomposed into the difference between the dG [5] or dz [11] values of the two groups, the corresponding nonparametric estimators can be constructed as the difference between their nonparametric counterparts. Thus, the difference between the two groups’

Cliff (1993) argued that the research question addressed by the PPC design can be framed as whether posttest scores are more likely to be higher than pretest scores in one group (e.g., a treatment group) than in another group (e.g., a control group). A suitable ES to answer this question is the difference between each group’s probability of a posttest measurement being higher than a pretest measurement—that is, the difference between the two groups’ DMs [33]. Computing the difference of the respective group DM [33] estimates yields the dsPPC [57] estimator of the population effect (Cliff, 1993).

ESs for multivariate group comparisons

In this section, we discuss how two groups can be simultaneously compared on a set of multiple, possibly interrelated dependent variables. As stressed, for example, by Thompson (1994), whenever the question one is investigating has a multivariate nature, it should be matched by a proper multivariate data-analytic model. Thus, hypotheses about group differences in multidimensional psychological constructs (e.g., “profiles” of personality traits) are best addressed by computing multivariate ESs. Because they take into account the patterns of correlations among variables, multivariate ESs (e.g., multivariate group differences) often yield different results than a series of univariate analyses (e.g., univariate differences on each of the dependent variables; see Del Giudice, 2009; Del Giudice et al., 2012; Kaiser et al., 2020).

Central tendency: SMDs (multivariate)

Under the assumptions that the dependent variables of interest follow a multivariate normal distribution within each population, with equal covariance matrices, one can derive the multivariate counterparts of several univariate ESs discussed earlier. To begin with, the Mahalanobis distance D [58] (Mahalanobis, 2019) generalizes the concept of a standardized difference between means from the previously discussed one-dimensional case to higher dimensions (Olejnik & Algina, 2000). Just as Cohen’s d [1] estimates the distance between two group means in terms of the common standard deviation of the two groups, D [58] estimates the distance between the mean vectors (centroids) of the two groups in terms of the common multivariate standard deviation of the two groups in the direction of the line that connects the centroids (Del Giudice, 2009). In fact, D [58] is a function of the Cohen’s ds [1] of the dependent variables of interest (e.g., Olejnik & Algina, 2000).

Because it takes correlations into account, D [58] is not a simple sum or average of the univariate d [1] values for the dependent variables. D [58] equals or exceeds the largest of the univariate ds [1], and—depending on the pattern of correlations among variables—it can be substantially larger, such that a smorgasbord of small differences on multiple dimensions of a construct may well result in a sizable overall pattern of average dissimilarity between two groups (Del Giudice, 2009, 2013, 2023a). However, a given value of D [58] does not say whether the overall ES is the result of equal contributions of differences on the individual dimensions or the result of large differences on only one or a few dimensions (Del Giudice, 2017). In addition, note that being a distance, D [58] is always positive and thus can serve as only a global summary of similarity/dissimilarity between two groups (Del Giudice, 2009; Del Giudice et al., 2012; Kaiser et al., 2020), with no information about the direction of specific univariate differences. To address the issue of unequal contributions, Del Giudice (2017, 2018) proposed two indices, H2 and EPV2, to capture the heterogeneity in the contributions of the individual variables to the overall ES. These statistics are informative but admittedly crude, and future developments might bring about improved ways of measuring heterogeneity in multivariate ESs [58]. Scrutinizing the univariate SMDs and the correlation structure of the individual variables provides additional information about patterns of directional differences, highlighting the fact that univariate and multivariate ESs are complementary rather than alternative tools (Del Giudice, 2009).
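The dependence of D [58] on the correlation structure can be illustrated with a small Python sketch that uses the standard relation D² = d′R⁻¹d, where d is the vector of univariate Cohen’s d values and R is the pooled within-group correlation matrix. The helper names `solve` and `mahalanobis_d` and the example values are hypothetical, and no bias correction is applied.

```python
from math import sqrt


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gauss-Jordan elimination with pivoting."""
    n = len(rhs)
    aug = [list(row) + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * p for a, p in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


def mahalanobis_d(d_values, corr):
    """D = sqrt(d' R^-1 d) from univariate ds and a correlation matrix."""
    weights = solve(corr, d_values)          # R^-1 d without explicit inversion
    return sqrt(sum(d * w for d, w in zip(d_values, weights)))


ds = [0.3, 0.3]
for r in (-0.5, 0.0, 0.5):
    print(r, mahalanobis_d(ds, [[1.0, r], [r, 1.0]]))
```

With two univariate differences of 0.3, D is about 0.42 when the variables are uncorrelated, rises to 0.6 when they correlate at −.5, and falls to about 0.35 when they correlate at .5; in every case, D is at least as large as the largest univariate d.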

Multivariate indices, such as D [58], must be used with care to avoid potential pitfalls (e.g., Del Giudice, 2013; Hyde, 2014; Stewart-Williams & Thomas, 2013). In this regard, we highlight two important points. First, one should include only conceptually related variables in the computation of D [58], such as those measuring distinct dimensions of a psychological construct, to avoid artificially inflating the size of D [58] by adding a large number of superfluous or irrelevant variables (Del Giudice, 2013). Second, D [58] has an upward bias that can be quite large when the collected sample size is low relative to the number of dependent variables and/or the population value of D [58] is small. Therefore, a bias correction should be applied to D [58], much like to Cohen’s d [1] in the univariate case, yielding the bias-corrected estimator

Other estimators (multivariate)

Just as Cohen’s d [1] can be converted into different ES estimators under the assumption of normal, homoscedastic population distributions, the Mahalanobis D [58] can be used to calculate the same estimators under the assumption of multivariate normality and equality of population covariance matrices. This holds true for the measures of (non)overlap described in the section on parametric ES estimators in the independent-groups design (e.g., Del Giudice, 2009, 2022; Reiser, 2001). In multidimensional space, the OVL [60] is the common area under the multivariate probability densities of the compared groups and can still be interpreted as a measure of agreement between groups (Reiser, 2001). Likewise, the multivariate OVL2 [61] is the proportion of the area under the combined multivariate density shared by two groups (Del Giudice, 2022), and U1 [62] is the proportion of the area under the combined multivariate density not shared by the groups (Del Giudice, 2009). See Table 2 for how the parametric estimators of these effects are related to D [58] and to each other.

Although the definitions of the (non)overlap measures discussed so far are essentially identical to the univariate case, some care is required to correctly interpret the multivariate versions of U3 [25], the CLES [20], the VR [26], and the TR [27]. Del Giudice (2022) described U3 [63] in multidimensional space as the proportion of one group with combinations of values of the dependent variables that are more typical of that group than the multivariate median of the other group. Likewise, the effect estimated by the multivariate CLES [64] is the probability that the combination of values of the dependent variables of a randomly selected member of one group is more typical of that group than the combination of values of a randomly sampled member of the other group (Del Giudice, 2022). In both cases, the group typicality of a data point is measured by its distance from the classification boundary between the two groups (for a detailed explanation, see Del Giudice, 2024). The PCC [65] in the multivariate case is the probability of correctly determining the group membership of a randomly sampled individual based on the individual’s scores on multiple dependent variables instead of being based on a single score in the univariate case. The parametric estimators of these ESs are also functions of Mahalanobis D [58] under the assumptions of multivariate normal populations with equal covariance matrices (Del Giudice, 2022).
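The following Python sketch shows how such indices can be obtained from a given value of D [58]. It assumes the usual normal-theory conversions, with Φ denoting the standard normal cumulative distribution function: OVL = 2Φ(−D/2), OVL2 = OVL/(2 − OVL), U1 = 1 − OVL2, U3 = Φ(D), CLES = Φ(D/√2), and PCC = Φ(D/2), the last of which additionally presumes equal group sizes. The function name `overlap_measures_from_d` is hypothetical, and the formulas should be checked against the article’s Table 2 before use.

```python
from math import sqrt
from statistics import NormalDist


def overlap_measures_from_d(D):
    """Normal-theory conversions of a (multivariate) distance D (sketch)."""
    phi = NormalDist().cdf                   # standard normal CDF
    ovl = 2 * phi(-D / 2)                    # overlap of the two densities
    ovl2 = ovl / (2 - ovl)                   # overlap relative to joint area
    return {
        "OVL": ovl,
        "OVL2": ovl2,
        "U1": 1 - ovl2,                      # nonoverlap
        "U3": phi(D),
        "CLES": phi(D / sqrt(2)),
        "PCC": phi(D / 2),                   # assumes equal group sizes
    }


for D in (0.0, 0.5, 1.0, 2.0):
    print(D, overlap_measures_from_d(D))
```

At D = 0, the two distributions coincide (OVL = 1, U1 = 0) and classification is at chance level (PCC = .50); as D increases, overlap shrinks and classification accuracy rises.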

With multidimensional data, the VR [26] of two groups can be defined as the ratio of their generalized variances (GV [67]) (Del Giudice, 2022), with the GV [67] being a one-dimensional measure of multidimensional scatter (see Sen Gupta, 2004). Finally, Del Giudice (2022) offered a definition of the TR [27] in multidimensional space as the proportion of members of one group relative to members of the other group in the region delimited by a hyperplane that is parallel to the classification boundary and z standard deviations away from one group’s centroid in the direction of the other group’s centroid. Under the assumptions of multivariate normality and equality of covariance matrices, the multivariate TR [66] becomes a function of Mahalanobis D [58], like the other ESs discussed above.

Shiny App

The web application was developed with the programming language R (R Core Team, 2021) and the Shiny package (Chang et al., 2018). It allows users to calculate 95 applications of 67 unique ES estimators along with their CIs based on both raw data and commonly reported summary statistics. In addition, it offers 14 different plotting options to visually explore the ESs and their interrelations. The application can be accessed through https://marton-l-gy.shinyapps.io/StatCompare-Whiz/. The complete source code and all the packages employed are available on GitHub: https://github.com/farambis/StatCompare-Whiz. The application runs in the most recent versions of the browsers Google Chrome, Mozilla Firefox, Safari, and Microsoft Edge. Inspired by the best-practice example of a Shiny app for ES calculation presented by Tran et al. (2021), the app was designed to facilitate the computation of ESs and to help users explore and gain a deeper understanding of ESs through documentation and visualizations, which can also be used effectively in the teaching of statistics.

Home-page menu option

The home-page menu option is the starting point of the application and contains important information on the application’s background and functioning (Fig. 1). Its interactive structure allows users to expand the information they are particularly interested in. The menu option is divided into “About this app,” “File uploads,” and the “Design-specific data requirements & example data sets” sections (Fig. 1).

Application home page. The home page of the application, containing a section introducing the app, file uploads, and design-specific requirements.

The section “About this app” contains important information about the source code of the web application, a short summary of the motivation behind the app, and a condensed user guide for the app. The sections “File upload” and “Design-specific data requirements and example data sets” familiarize users with the expected file format and data structure for the different designs.

Navigation sidebar and dashboard body

The app is organized into a navigation sidebar and a dashboard body. Depending on which menu item the user has selected in the navigation sidebar, different contents appear in the dashboard body. These contents generally consist of a data-input panel on the left and top bar panels on the right, where outputs—such as computed ESs and visualizations—are rendered based on the user’s input. When the user first accesses the application, the home page and the sidebar navigation menu are displayed (Fig. 1).

The sidebar menu options after the home page follow the same conceptual structure used in the present article. Specifically, the top-level menu options correspond to the main design options—independent groups, dependent groups, PPC, and multivariate. Clicking most of these options displays two suboptions—parametric versus nonparametric—which correspond to the two kinds of estimators that can be computed. Finally, when the parametric option is clicked, a final layer of suboptions appears, allowing users to choose between the input of raw data and aggregate data (i.e., summary statistics). In the raw-data mode, users can upload their own data file and choose which variables to analyze and visualize. In the aggregate-data mode, users input the values of summary sample statistics that will be used for ES computation and plotting. This distinction between raw-data and aggregate-data mode is not made for nonparametric estimators because those ESs cannot be calculated based on summary statistics and always require the raw data. For multivariate analyses, the app does not offer a nonparametric suboption because no nonparametric estimators are implemented at this time. In addition, the aggregate-data mode for the multivariate design requires the user to upload two data files containing the group means and pooled covariance matrix.

Data-input panel and data visualization

There are two different ways for users to input the data used for calculations and visualizations, depending on whether the raw-data or aggregate-data mode is selected in a given design.

If the raw-data panel of a given design is selected, users must upload a CSV file containing the data they wish to analyze. The first row of the file should contain the variable names. Missing values should be coded as “NA.” Depending on the selected ES, users are required to specify the variables used for calculations and visualizations (Fig. 2).

Effect-size computation panel for (a) the dR [13] and (b) the tail ratio TR [27]. (Top) The dR [13] requires the user to choose only the α level of the confidence interval. (Bottom) The tail ratio TR [27] requires the user to select a cutoff value, a reference group, and the tail of interest.

After the variables have been selected, rows containing “NA” entries or empty fields in at least one of the selected variables are removed for the calculation (listwise deletion). Users are notified of this procedure and of the number of rows removed. Users are also notified if there is a problem with the selected variables. For example, selecting a grouping variable with more than two values will result in an error message informing the user that the group variable has to contain exactly two different values (i.e., denoting the two groups of the study design).
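The data-preparation behavior described above (listwise deletion followed by a check of the grouping variable) can be sketched as follows. This is an illustrative Python analogue, not the app’s R code; the function names `listwise_delete` and `check_grouping`, the example file contents, and the error message are all hypothetical.

```python
import csv
import io


def listwise_delete(csv_text, selected):
    """Keep rows with no 'NA' or empty entry in any selected variable."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))   # first row = names
    complete = [
        row for row in rows
        if all((row[var] or "").strip() not in ("", "NA") for var in selected)
    ]
    return complete, len(rows) - len(complete)            # data, rows removed


def check_grouping(rows, group_var):
    """Raise an error unless the grouping variable has exactly two values."""
    levels = sorted({row[group_var] for row in rows})
    if len(levels) != 2:
        raise ValueError(
            f"The group variable must contain exactly two different values, "
            f"found {len(levels)}."
        )
    return levels


example = "group,score,age\na,1.5,20\nb,NA,31\na,,25\nb,2.0,NA\n"
rows, n_removed = listwise_delete(example, ["group", "score"])
print(n_removed, check_grouping(rows, "group"))   # 2 ['a', 'b']
```

Only the selected variables are inspected, so the last row is retained even though its unselected `age` entry is missing.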

The uploaded data are also displayed as a table within the application, and summary statistics relevant to the selected design are calculated and displayed after the user has selected all required variables (Fig. 3, bottom).

Data panel. The data panel with the corresponding summary statistics of the uploaded data.

In the aggregate-data mode, the user can input the values of the summary statistics relevant to the selected design. For example, for the independent-groups design, the user can specify the sample means and standard deviations along with group sizes. The aggregate-data mode is particularly useful for exploring the effects of different input values on the ESs and their CIs both numerically and visually. The aggregate-data mode is available only for parametric data analysis within each design because the nonparametric ESs can be computed only from raw data.

ESs and test-statistics panel

Depending on the selected design, users can choose from a design-specific set of ESs to be calculated. Some of the ESs require additional inputs—such as a TR [27], for which a cutoff value, a reference group, and the tail region of interest have to be specified. When this is the case, previously hidden input fields are revealed, and the user is asked to provide the relevant values. Furthermore, users can specify the α level of the CIs for the selected ESs. Once the user has made all necessary specifications, the chosen ESs along with their CIs are computed and displayed in a table. In cases for which no closed-form formula for the computation of a CI could be identified in the literature, the CI bounds of the respective ESs are set to NA. In the raw-data mode, percentile bootstrap CIs based on 200 bootstrap samples are computed and displayed alongside the CIs based on closed-form formulas. Users can easily download the table as a CSV file by clicking a download button. The rendered table gets updated reactively as inputs change—either the data- or the ES-related specifications. This allows users, particularly in the aggregate-data mode, to observe the impact of varying input values on the ESs and their CIs in real time. A side effect of these recalculations is that bootstrapped CIs change every time inputs are altered because bootstrap resampling is random and thus yields somewhat different values each time. A notification informs the user whenever the bootstrap procedure is rerun to avoid possible confusion. By automatically calculating and displaying the CIs along with the point estimates, this app should help normalize the default reporting of CIs for effect sizes.
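To illustrate why bootstrapped CIs vary between runs, the following Python sketch computes a percentile bootstrap CI for Cohen’s d [1] from 200 resamples. It is a generic illustration rather than the app’s implementation; the function names, the simple index rule for the percentiles, and the optional `seed` argument (which makes a run repeatable) are assumptions.

```python
import random
from math import sqrt
from statistics import mean, variance


def cohens_d(x, y):
    """Mean difference divided by the pooled standard deviation."""
    n_x, n_y = len(x), len(y)
    pooled_var = ((n_x - 1) * variance(x) + (n_y - 1) * variance(y)) / (n_x + n_y - 2)
    return (mean(x) - mean(y)) / sqrt(pooled_var)


def percentile_bootstrap_ci(x, y, stat, n_boot=200, alpha=0.05, seed=None):
    """Percentile CI from resampling each group with replacement."""
    rng = random.Random(seed)                # seed=None gives a new result each run
    estimates = sorted(
        stat(rng.choices(x, k=len(x)), rng.choices(y, k=len(y)))
        for _ in range(n_boot)
    )
    lower = estimates[round(alpha / 2 * n_boot)]
    upper = estimates[round((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


group_1 = [12, 15, 11, 18, 14, 16, 13, 17, 19, 15]
group_2 = [10, 12, 9, 14, 11, 13, 10, 12, 15, 11]
print(cohens_d(group_1, group_2))
print(percentile_bootstrap_ci(group_1, group_2, cohens_d))          # varies per run
print(percentile_bootstrap_ci(group_1, group_2, cohens_d, seed=1))  # repeatable
```

With only 200 resamples, the bounds fluctuate noticeably from run to run unless the random-number generator is seeded, which mirrors the behavior that the notification in the app is meant to explain.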

In addition to ESs, users can also select a number of informative test statistics (e.g., Welch’s t, Yuen’s t, Mann-Whitney U), which are also displayed in a downloadable output table. We note that test statistics are provided only for the independent-groups and the dependent-groups designs. The reason for this decision is that although there are clear “gold-standard” parametric and nonparametric inferential procedures for the independent-groups and dependent-groups designs, such as the t test or the U test and the Wilcoxon signed-rank test, data in the PPC and multivariate designs can be analyzed in a plethora of ways (see Morris, 2008).

Plots

The web application provides the option to visualize selected ESs (Fig. 4) for all the designs except the multivariate one. The plots contain selected summary statistics and ES values in the legend and can be downloaded as PDF files.

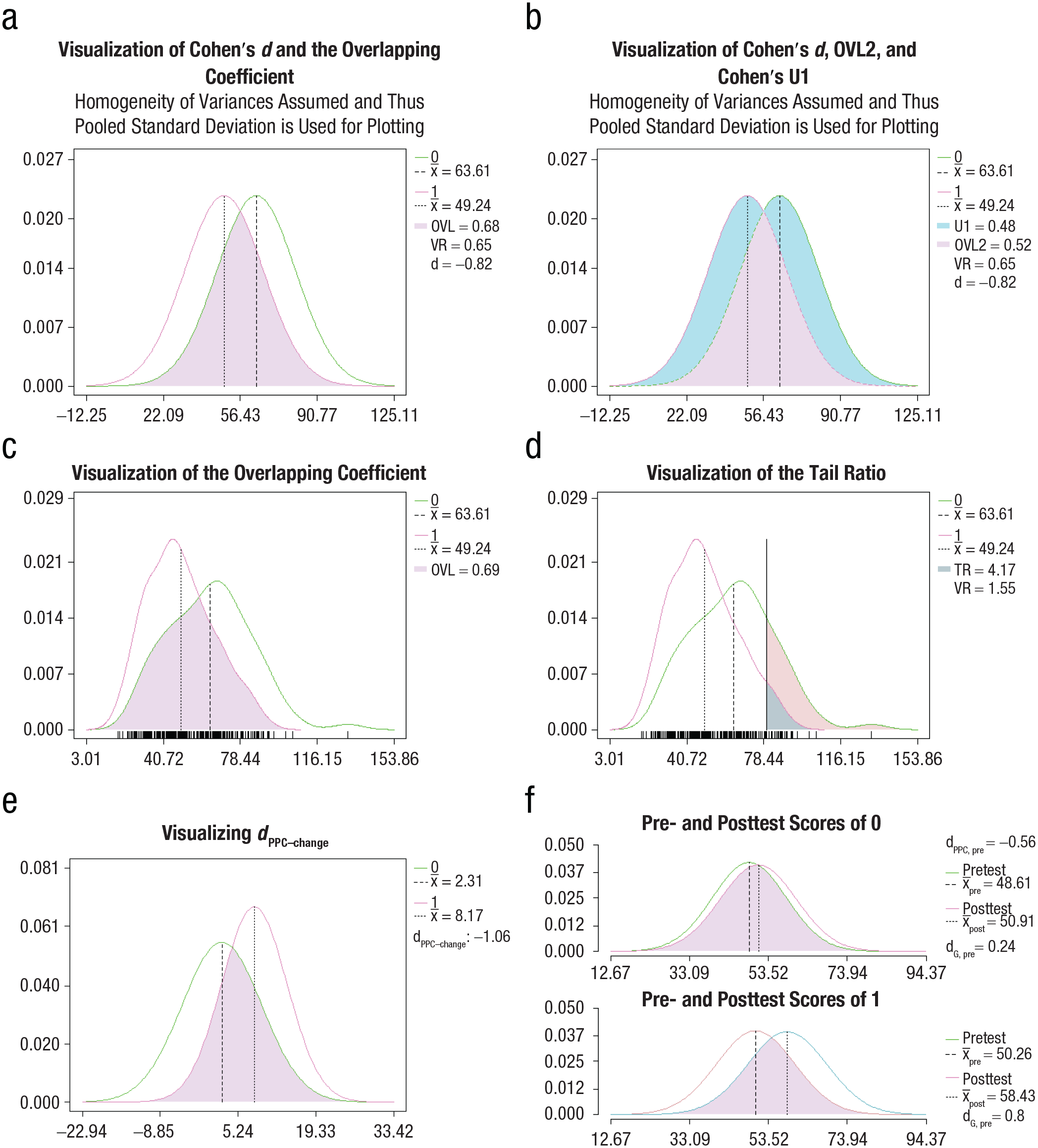

Example visualizations from the interactive web application: (a) Cohen’s d [1] alongside OVL [17] and VR [26]; (b) Cohen’s d [1] alongside OVL2 [18] and Cohen’s U1 [23]; (c) the nonparametric OVL [35]; (d) the VR [26], and the nonparametric TR [45]; (e) dPPC-change [46]; (f) dPPC,pre [48] alongside dG [5] in each group.

Presently, there are 14 different chart options available in our application, providing visualizations of (a) Cohen’s d [1] and OVL [17]; (b) Cohen’s d [1], OVL2 [18], and Cohen’s U1 [23]; (c) Cohen’s d [1] and Cohen’s U3 [25]; (d) the TR [27] and Glass’s dG [5]; (e) a zoomed-in visualization of the TR [27]; (f) the nonparametric TR [45]; (g) a zoomed-in visualization of the nonparametric TR [45]; (h) the nonparametric OVL [35]; (i) the nonparametric OVL2 [36] and Cohen’s U1 [37]; (j) the nonparametric Cohen’s U3 [39]; (k) a boxplot of all pairwise difference scores; (l) an interaction plot for the PPC design; (m) pretest and posttest scores of groups in the PPC design; and (n) the dPPC-change [46].

The nonparametric plots highlight how the data are actually distributed in the sample compared with the plots created based on the parametric assumptions. To our knowledge, the plots for the PPC design are not featured in any other point-and-click software and are available only in this app.

The visualizations highlight the mathematical and conceptual connections between different ESs. An example is the link between Cohen’s d [1], a measure focused on mean group differences, and the OVL [17], a measure focused on group similarities (Fig. 4, top left). The plots also highlight how the parametric analysis can differ from the nonparametric analysis because of the underlying assumptions.
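The link between Cohen’s d and the overlap measures can be made concrete with a short numerical sketch. It relies on the standard textbook relations for two normal populations with equal variances, OVL = 2Φ(−|d|/2) and U3 = Φ(d), where Φ is the standard normal distribution function. Whether these expressions coincide exactly with the estimators indexed as [17] and [25] in the app is an assumption, and the function names are illustrative only.

```python
from statistics import NormalDist

# Standard normal cumulative distribution function
PHI = NormalDist().cdf


def ovl_from_d(d):
    """Overlapping coefficient of two equal-variance normal distributions."""
    return 2 * PHI(-abs(d) / 2)


def u3_from_d(d):
    """Cohen's U3: proportion of one group falling below the other group's mean."""
    return PHI(d)


# Tabulate how overlap shrinks as the standardized mean difference grows
for d in (0.0, 0.2, 0.5, 0.8, 1.5):
    print(f"d = {d:.1f}   OVL = {ovl_from_d(d):.3f}   U3 = {u3_from_d(d):.3f}")
```

Under these assumptions, a d of 0.5 still leaves roughly 80% of the two distributions overlapping, which illustrates how a measure of difference and a measure of similarity describe the same separation.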

The plots are updated reactively as inputs change. In the aggregate-data mode, this allows users to gain a real-time visual understanding of the different ESs and how they change with different input values. Thus, the aggregate-data mode offers an effective learning environment in which users can explore or demonstrate what different ES values mean in terms of the separation and overlap of the contrasted distributions. As shown in a recent study, psychologists tend to overestimate the amount of difference in standard-deviation units between the means of normal distributions by 0.5 on average (Schuetze & Yan, 2023). These data suggest that many researchers lack an accurate understanding of SMDs in terms of distributional separation. According to Schuetze and Yan (2023), a possible culprit is the long-standing but nonsensical practice of categorizing ESs into small, medium, or large categories based on arbitrary rules of thumb (see, e.g., Funder & Ozer, 2019; Hill et al., 2008; Vacha-Haase & Thompson, 2004). This tendency to overestimate ESs can be alleviated by gaining familiarity with visualizations of the distributional separation implied by different values of Cohen’s d [1], which is a key functionality offered by our companion app.

Documentation page

For every study design, the application provides a documentation page containing background information on the offered ESs and on the formulas on which the calculations are based. Thus, the information pages promote transparency, verifiability, and reproducibility of the conducted calculations. They inform users about the variety of existing ESs for a given research design and describe the circumstances under which a certain ES should be considered. Note that we deliberately abstained from providing or advocating the use of fixed benchmarks for evaluating the size of the ESs provided by the app. What constitutes a “small” or “large” effect depends critically on the context and goals of a study; even different guidelines provide different criteria for classifying ESs into categories (Funder & Ozer, 2019). We actively encourage users to interpret ES values depending on the specific research domain and research question at hand and to reach their own conclusions about the practical significance of a given effect (see Del Giudice, 2022; Funder & Ozer, 2019; Hill et al., 2008; Vacha-Haase & Thompson, 2004). This statement is also provided in the last paragraph of the “About this app” section.

Conclusion

The reporting and interpretation of ESs and their CIs have been deemed vital for psychological science because they provide crucial information about the magnitude or importance of a result, the precision of the estimate, the range of plausible values for the population effect, and the statistical significance of the effect (APA, 2020; Cumming, 2014; Thompson, 2002; Wilkinson & Task Force on Statistical Inference, American Psychological Association, Science Directorate, 1999). Although the prevalence of ES reporting has been on a steady rise since the 1990s, only a handful of estimators, mainly the Cohen’s d family of ESs, have been used for comparisons in two-group designs (e.g., Farmus et al., 2023). However, since the early days of SMDs (Cohen, 1962; Glass, 1976; Hedges, 1981), many novel types of SMDs have been introduced (e.g., Keselman et al., 2008). In addition, many alternate ES measures that have existed for a long time remain underused (e.g., the OVL; Cohen’s measures of nonoverlap; the nonparametric estimators proposed by Kraemer & Andrews, 1982, and Hedges & Olkin, 1984; or probabilistic ESs, such as the CLES or the DM). Many of these alternate ESs have been found to be informative by scientists and practitioners; hence, their adoption could aid both science and science communication at the same time (Hanel & Mehler, 2019; Mastrich & Hernandez, 2021). Finally, the multivariate counterparts of univariate ESs have yet to be widely adopted in psychological science even though many psychological constructs are multidimensional in nature, and group comparisons of these constructs would therefore benefit from multivariate quantification (e.g., Del Giudice, 2022).

Although extensive conceptual, theoretical, and statistical-mathematical reviews of these ESs exist (e.g., Del Giudice, 2022; Goulet-Pelletier & Cousineau, 2018; Keselman et al., 2008; Lakens, 2013; Peng & Chen, 2014), most of them have remained unavailable in user-friendly statistical software. As Lakens (2013) argued, reviews of ESs should be accompanied by easy-to-use applications to allow researchers to calculate the ESs described in the articles. We fully embrace this view and thus provide an easy-to-use, one-stop solution in the form of an online application. Crucially, the companion Shiny app computes an exact or approximate CI for every ES for which a CI procedure could be identified or a percentile bootstrap CI if raw data are provided. With this combined toolbox, our aim is to increase the still-low prevalence of CI reporting for ESs.

The aggregate-data mode allows meta-analysts to compute most of the parametric ES estimators described here based on summary statistics that are commonly reported in primary studies. In addition, it offers an easy-to-use environment for exploring ESs that can be used by both course instructors and students. Whereas the output tables allow users to observe the effects that sample means, variances, sample sizes, confidence levels, and other variables have on the size of the ES estimates and the width of the corresponding CIs, the various plots can aid in gaining an intuitive understanding of the computed quantities by highlighting the parts of the distributions on which the ESs are based.
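The kind of exploration that the aggregate-data mode supports can be sketched in a few lines of Python. The example computes Cohen’s d from summary statistics and a large-sample normal-approximation CI, using the common variance approximation (n1 + n2)/(n1·n2) + d²/(2(n1 + n2)). This approximation is chosen here for brevity and is not necessarily the CI procedure implemented in the app; the function names and input values are hypothetical.

```python
from statistics import NormalDist


def d_from_summary(m1, s1, n1, m2, s2, n2):
    """Cohen's d from group means, SDs, and sample sizes (pooled SD)."""
    pooled_sd = (((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2)) ** 0.5
    return (m1 - m2) / pooled_sd


def approx_ci(d, n1, n2, conf=0.95):
    """Large-sample normal-approximation CI for d (an approximation only)."""
    se = ((n1 + n2) / (n1 * n2) + d**2 / (2 * (n1 + n2))) ** 0.5
    z = NormalDist().inv_cdf(1 - (1 - conf) / 2)
    return d - z * se, d + z * se


# Same means and SDs, increasing sample sizes: d is unchanged, the CI narrows
for n in (20, 50, 200):
    d = d_from_summary(10.0, 4.0, n, 8.0, 4.0, n)
    lower, upper = approx_ci(d, n, n)
    print(f"n per group = {n:3d}   d = {d:.2f}   95% CI = [{lower:.2f}, {upper:.2f}]")
```

Varying one input at a time in this way mirrors what users see in the reactive output table: the point estimate depends only on the means and SDs, whereas the width of the CI is driven by the sample sizes and the chosen confidence level.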

The documentation pages not only list the mathematical formulas underlying the computation of every ES and its CI but also provide clear verbal descriptions of the ESs and suggest possible areas of application. With these details, we aim to help users choose the appropriate ES(s) for their particular research question and interpret the chosen ES(s) correctly.

The plethora of ES estimators and their applications described in the current article still does not represent an exhaustive list of estimators for the research designs we considered. In a similar vein, the suite of visualizations offered by the app does not cover all conceivable approaches to plotting group differences and similarities. Alternate approaches include, for example, the visualization of the groups’ quantiles based on the shift function and the relative distribution plots (see Handcock & Janssen, 2002; Wilcox, 2006). Such visualizations could be easily added to future versions of the online application; Khan and McLean (2024) discussed still other options, including Gardner-Altman plots combined with features from box plots, box-violin plots, Cumming plots, density plots, jitter plots, spaghetti plots, or violin plots. Future updates of the app will also include additional ESs and visualizations and expanded functionalities to further improve the user experience (e.g., different file types for uploading data, different file types for downloading tables and charts, cross-references to the corresponding ES documentation from the ES selection menu). We hope that these tools will contribute to improving the statistical sophistication of psychological research and help integrate ES reporting into the everyday practice of our discipline.

Footnotes

Transparency

Action Editor: Pamela Davis-Kean

Editor: David A. Sbarra

Author Contributions

M. L. Gyimesi and V. Webersberger contributed equally to this article. The order in which they are listed as authors is based on the alphabetical order of their names.