Abstract

Plotting the data of an experiment allows researchers to illustrate the main results of a study, show effect sizes, compare conditions, and guide interpretations. To achieve all this, it is necessary to show point estimates of the results and their precision using error bars. Often, and potentially unbeknownst to them, researchers use a type of error bar, the confidence interval, that conveys limited information. For instance, confidence intervals do not allow comparing results (a) between groups, (b) between repeated measures, (c) when participants are sampled in clusters, and (d) when the population size is finite. The use of such stand-alone error bars can lead to discrepancies between the plot’s display and the conclusions derived from statistical tests. To overcome this problem, we propose to generalize the precision of the results (the confidence intervals) by adjusting them so that they take into account the experimental design and the sampling methodology. Unfortunately, most software dedicated to statistical analyses does not offer options to adjust error bars. As a solution, we developed an open-access, open-source library for R, superb, that allows users to create summary plots with easily adjusted error bars.

Keywords

After gathering the necessary data from an experiment, researchers are likely to plot their results before interpreting them. Although plots can be used to display raw data (e.g., scatterplots), in this article, we focus on plots that display summary statistics (e.g., mean plots). To be informative, summary plots must convey some indications of the results’ uncertainty. To that end, all summary statistics should be accompanied by error bars indicating the precision with which such results were obtained (Cumming, 2014; Loftus, 1993; Wilkinson & The Task Force on Statistical Inference, 1999).

Sometimes, error bars represent the standard deviation of the sample. However, many authors have recommended using bars depicting a range of values relevant to assess the true population parameter, either the standard error or the confidence interval (Cumming & Fidler, 2009; Cumming & Finch, 2001, 2005). In the long run, the former has about a 68% chance of containing the population mean (if the population is normally distributed), whereas for the latter, the coverage is up to the researcher, and 95% is common. All descriptive statistics have an associated measure of standard error or confidence interval (for reviews, see Goulet-Pelletier & Cousineau, 2018; Harding et al., 2014, 2015). For concision’s sake, we concentrate herein on the mean and its confidence interval, but everything that follows is equally applicable to other descriptive statistics, such as the median or skewness. We return to this in the Discussion.

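The standard error of the mean (SEM) is estimated with

$$\mathrm{SE}_M = \frac{S}{\sqrt{n}}, \tag{1}$$

in which S is the sample standard deviation and n is the sample size. Transforming a standard error of the mean into a confidence interval of the mean (CIM) requires increasing the coverage of this interval to a desired level. For the mean, the t distribution is used to get a coverage factor,

$$\mathrm{CI}_M = M \pm t_c \times \mathrm{SE}_M, \tag{2}$$

in which M is the sample mean and $t_c$ is the critical value of the t distribution with n − 1 degrees of freedom for the chosen confidence level.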
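The standard error and the stand-alone confidence interval of the mean described above can be sketched in a few lines. This is only an illustration in Python: the function names are hypothetical, the scores are made up, and the critical t value is supplied by hand (2.064 is the tabled two-sided 95% value for 24 degrees of freedom) because the standard library has no t quantile function.

```python
import math
import statistics

def standard_error_of_mean(scores):
    """Sample standard deviation divided by the square root of n."""
    return statistics.stdev(scores) / math.sqrt(len(scores))

def stand_alone_ci(scores, t_crit):
    """Return (lower, upper) limits: mean +/- t_crit * SEM."""
    m = statistics.mean(scores)
    half_width = t_crit * standard_error_of_mean(scores)
    return m - half_width, m + half_width

# Illustrative data only (not taken from the article): 25 scores.
scores = [96, 104, 99, 110, 91, 102, 107, 95, 100, 98, 105, 93, 101,
          108, 97, 103, 94, 106, 99, 100, 111, 92, 102, 98, 104]
T_CRIT_95_DF24 = 2.064  # tabled two-sided 95% critical t for n - 1 = 24 df

low, high = stand_alone_ci(scores, T_CRIT_95_DF24)
sem = standard_error_of_mean(scores)
print(f"SEM = {sem:.2f}, 95% CI = [{low:.2f}, {high:.2f}]")
```

The interval produced this way is the stand-alone interval: it is suited to comparing the mean with a fixed value only.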

Equations 1 and 2 are well known. What is less known is that the resulting error bars offer limited information when comparing two groups and when comparing repeated measures, among others. These error bars should be understood as stand-alone bars: They apply to a single mean examined in isolation (the equivalent of a one-sample t test). Unfortunately, it is very uncommon to perform an experiment with a single group measured in a single condition. Hence, most of the time, the stand-alone error bars do not match the intentions of the researchers using them.

Through the years, a number of adjustments to the stand-alone measures of precision have been proposed, starting with the seminal work of Loftus and Masson (1994) for comparing means in within-subjects designs and Goldstein and Healy (1995) for comparing means in between-subjects designs (also see Baguley, 2012; Cousineau, 2019; Franz & Loftus, 2012; Nathoo et al., 2018; Tryon, 2001). Later, other adjustments were found to compare means from data that were not sampled randomly (Cousineau & Laurencelle, 2016) or coming from finite-size populations (Thompson, 2012).

When performing statistical tests of means, the procedure is adapted to the experimental design. For example, a researcher would use a two-group t test when comparing two groups or a paired-sample t test when comparing two repeated measures. These tests are tailored to the experimental design and to the purpose of comparing means. In that case, why would the stand-alone confidence intervals be used in all these diverse situations? Using these unadjusted confidence intervals is the equivalent of using a single-group t test. Would it be adequate to use that test in all situations? The answer is certainly not. Yet in making plots, this is exactly what is being done.

The purpose of this article is to propose and provide a coherent and unified approach to the computation of error bars for a wide array of situations. We call this the superb (summary plots with adjusted error bars) framework. In what follows, we first review the limitations of stand-alone confidence intervals. We then present the four classes of adjustments that compose superb. To demonstrate the viability of this framework, it was programmed into an R library that provides all the necessary adjustments by selecting options, as described in Appendix A. Before concluding, we examine the relation of these adjustments with well-known statistical tests of means. However, in the Discussion, we open the framework to a much wider array of utilization.

What Really Is a Stand-Alone Confidence Interval?

The stand-alone confidence interval of a parameter (e.g., the population mean) is an estimator of an interval that, over many samples, has a certain probability of containing the true population parameter. The probability, chosen by the researcher, is called the confidence level (often noted with γ). Technically, it estimates the quantiles of the predictive distribution of the population parameter (assumed to be a constant) given an observed sample (Rouanet & Lecoutre, 1983). It is an equal-tail interval in the sense that both extremities have an equal, weak chance of containing the population parameter in the long run. Confidence intervals are related to statistical tests: When the hypothesized population parameter is not included in the interval, the test will reject that hypothesis with a p value below 1 − γ.

The stand-alone confidence interval of the mean of Equation 2 is based on assumptions. Most notably, it assumes the normality of the population. This assumption may be problematic (and we consider a workaround in the Discussion). However, it also makes four additional assumptions. First, it assumes that the interval is used to compare a result with a fixed value (e.g., a value posed in a hypothesis). Yet almost always, researchers want to compare conditions with other conditions whose values are not preestablished. When comparing two samples, their mean separation is a different parameter, and its precision, being based on two imprecise means, is weaker (Cousineau, 2020). Thus, in this situation, the stand-alone confidence intervals of the mean are too short. Second, even when confidence intervals are adapted to relative assessments, they also assume that groups are measured independently. Yet repeated measures (e.g., pre-post designs) are frequently used because they are generally statistically more powerful than independent-sample designs. Increasing statistical power translates into improved precision. Consequently, the stand-alone confidence intervals of the mean are likely too long in such designs. Third, the data sampling method is assumed to be simple randomized sampling. This is not the only possible method, and other sampling techniques are often found in the literature that may increase or decrease precision (e.g., stratified sampling in survey studies or cluster randomized sampling in education; more on this later). When cluster randomized sampling is used, the stand-alone confidence intervals of the mean are probably too short. Finally, it assumes that the population size is very large or infinite. Again, for studies examining specific populations, the population size might be finite. Sampling a sizable part of a finite population improves precision. Thus, when the population size is small, the stand-alone confidence intervals of the mean are too long.

Past authors have published heuristics to handle some of these methodological considerations (beginning with Cumming & Finch, 2005). However, the onus was on the reader to make these adjustments mentally, with all the approximations and biases that they entail. Yet the impact of such methodological considerations on precision is known exactly. Therefore, error bars can be adjusted to exactly reflect the experimental situation. The purpose of the superb framework is to provide these adjustments so that confidence intervals adequately reflect the research’s design and objectives.

As will be illustrated with the examples that follow, the risk with stand-alone confidence intervals is that researchers provide, possibly unbeknownst to them, visual information that contradicts the inferences made with statistical tests (Estes, 1997; Goldstein & Healy, 1995; Loftus & Masson, 1994). It would be unfortunate that such contradictory information plants the seeds of suspicion in a reader’s mind. The risk is larger when the reader is unaware of the existence of heuristics to interpret stand-alone confidence intervals and could have the detrimental effect that the readers no longer consider error bars. The present framework does not require heuristics from the readers, and all confidence intervals are to be interpreted in the exact same manner, which encourages their use.

The superb Framework

The superb framework implements the principle that adequate error bars must support (and consequently can complement) results obtained from other techniques. In the present case, the confidence intervals are like inverted statistical inference because they contain all results for which p > α. Following Tryon (2001), we may call the adjusted confidence intervals inferential intervals. To that end, the stand-alone confidence intervals undergo a series of adjustments to incorporate additional information with regard to the experimental design and the sampling methodology, all of which are known to alter precision. Each adjustment is applied to correct the length of the error bars by using a multiplicative correction factor; all adjustments can be combined by multiplying the correction factors. Thus, adjusting error bars is simple to implement and easy to understand. For example, a correction factor smaller than 1 implies shorter confidence intervals and, consequently, a data set with improved precision in comparison with the stand-alone confidence intervals that would have initially, and erroneously, been used.

Four classes of adjustments exist to account for these various methodological situations. The first adjustment accounts for the purpose of the experiment, the second accounts for the experimental design; the last two are adjustments for the sampling methodology and the population size. Each adjustment is described and illustrated with a brief example. The data and the script used to construct the figures are available at https://dcousin3.github.io/superb/articles/TheMakingOf.html. All the data are fictitious.

Adjustment for the purpose of the research

When the purpose of the experiment is to compare a single result with a prespecified value (i.e., is the average IQ of a certain group different from 100?), the stand-alone confidence interval of the mean is correct because it is designed precisely for that purpose. However, in most studies, means are to be compared with other means to assess their difference. When this is the case, an adjustment must be made to account for the additional uncertainty regarding the position of the two means relative to one another.

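The simplest difference adjustment is a multiplier of $\sqrt{2} \approx 1.41$ applied to the length of the confidence interval. It is appropriate when the variances are homogeneous across groups.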

When the variances are heterogeneous, an alternative is the Tryon adjustment (Tryon, 2001). Given the variances and the sample sizes in each group, it returns an adjustment that is 1.41 or larger (although it is rarely larger than 1.75). We return to this adjustment later.

Example 1

Consider a study examining the attitude of primary school students toward engaging in class activities. The participants are divided into two groups, one performing collaborative games when arriving to school and the other engaging in unstructured activities.

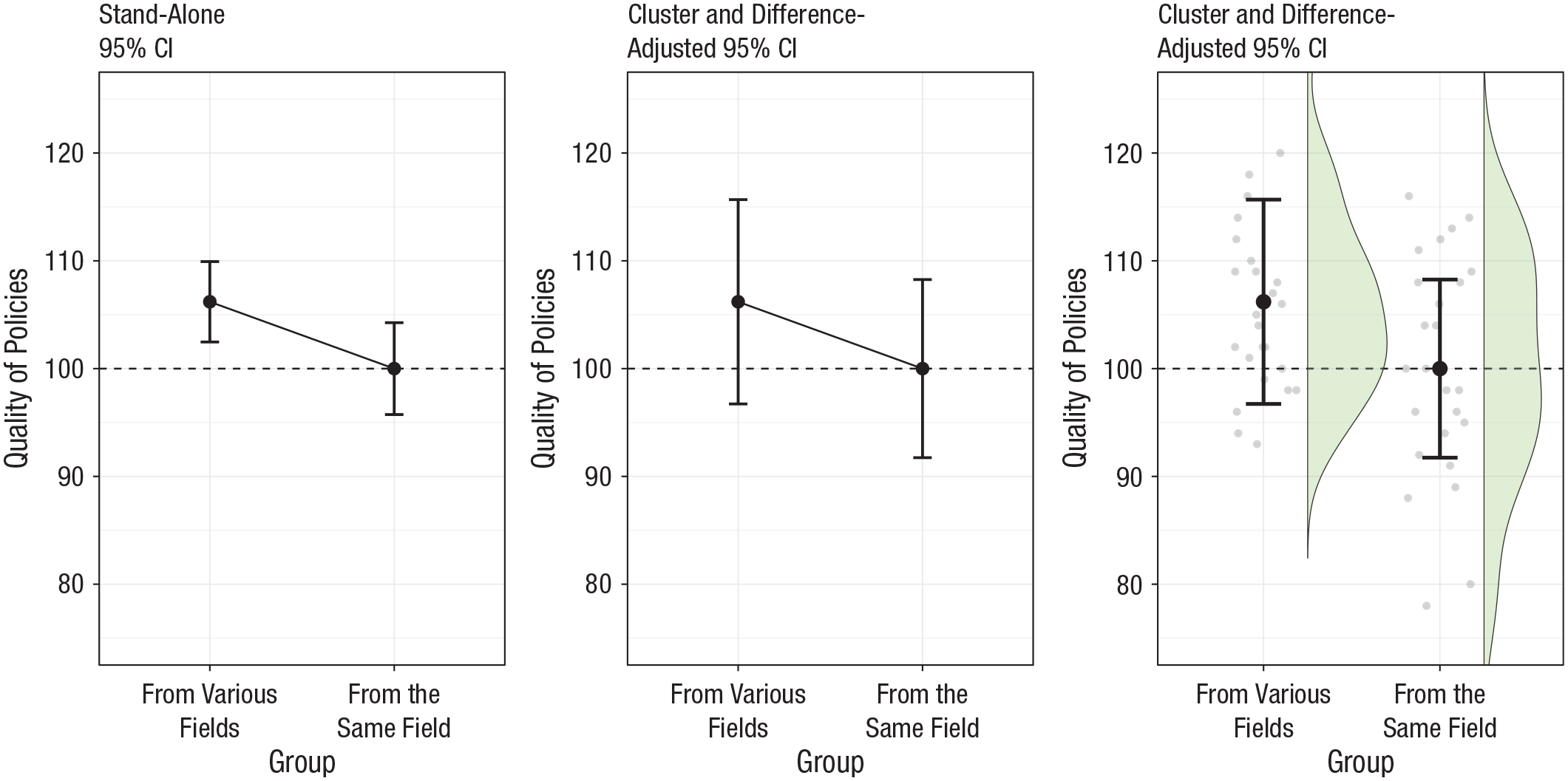

When comparing the two groups’ data with a t test, we find that the difference is not statistically significant, t(48) = 1.76, p = .085. Yet the stand-alone 95% confidence intervals of the mean seen in Figure 1 (left) suggest otherwise: The mean of the second group is not included in the first group’s interval (and vice versa). Applying a correction for the purpose of comparing the two groups, we get the plot in the center of Figure 1, which shows that the difference-adjusted 95% confidence intervals of the mean include the mean of the other condition, which suggests, rightly so, a lack of statistical difference at the .05 (1 − .95) level. The 41% increase in confidence interval lengths is enough to correct a situation that, if left unadjusted, would have returned conflicting information regarding the statistical relationship between both groups (statistical tests vs. plot).

Fig. 1. Mean scores of fictional data examining attitude toward school activity. The dashed line corresponds to the mean of the control group. The left plot shows stand-alone 95% confidence intervals of the mean. Here, the mean of the control group is not included within the error bar of the experimental group mean, which suggests a significant difference between the two groups. In the central panel are the difference-adjusted 95% confidence intervals of the mean. Here, the mean of the control group is included within the error bar of the experimental group, which indicates rightly a nonsignificant difference between the two groups. The right panel shows a raincloud plot in addition to the difference-adjusted 95% confidence intervals of the mean.

If instead of using the difference-adjusted confidence intervals, the plot had been based on the Tryon-adjusted confidence intervals, the change would have been unnoticeable; the two correction factors are actually identical to three decimals because the two groups’ standard deviations are nearly identical (10.1 vs. 9.9).
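The arithmetic behind Example 1 can be retraced from the summary statistics reported in the text. The sketch below rests on stated assumptions rather than on the actual data: two groups of 25 participants (inferred from the 48 degrees of freedom), the standard deviations of 10.1 and 9.9, a lag between means back-computed from the reported t value, and a tabled critical value of 2.064 for 24 degrees of freedom. The variable names are ours.

```python
import math

n = 25                 # assumed per-group sample size, inferred from t(48)
sd1, sd2 = 10.1, 9.9   # standard deviations reported in the text
t_obs = 1.76           # reported t statistic
t_crit = 2.064         # tabled two-sided 95% critical t for 24 df

# Back-compute the lag between the two means from the reported t value.
se_diff = math.sqrt(sd1**2 / n + sd2**2 / n)
mean_diff = t_obs * se_diff

# Length (mean to tip) of the stand-alone error bar of the first group,
# and of its difference-adjusted counterpart (multiplied by sqrt(2)).
stand_alone = t_crit * sd1 / math.sqrt(n)
difference_adjusted = math.sqrt(2) * stand_alone

print(f"lag between means:   {mean_diff:.2f}")
print(f"stand-alone length:  {stand_alone:.2f}")
print(f"difference-adjusted: {difference_adjusted:.2f}")
print("other mean inside stand-alone CI:", mean_diff <= stand_alone)
print("other mean inside adjusted CI:   ", mean_diff <= difference_adjusted)
```

Under these assumptions, the lag (about 5.0) exceeds the stand-alone length (about 4.2) but not the adjusted length (about 5.9), which matches the pattern described for the left and central panels of Figure 1.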

In the third panel of Figure 1, a raincloud layout is used that shows the raw data with jittered dots and their distribution with a half-violin plot (Allen et al., 2019; Lane, 2019; Marmolejo-Ramos & Matsunaga, 2009; Rousselet et al., 2017; Weissgerber et al., 2015; Yang et al., 2021).

Adjustment for the experimental design

When comparisons are made between repeated measures, the confidence intervals may be adjusted further to account for the correlation between the data. For example, the correlation may arise because high-scoring participants tend to produce high scores on all measurements and vice versa. A simple adjustment, the correlation adjustment (CA), consists of multiplying the length of the error bars by the square root of 1 minus the average correlation between the measurements (Cousineau, 2019). Such confidence intervals are to be appraised relative to the lag between means and apply only to means of scores that possess a correlation similar to the observed correlation. They therefore are meaningless in different research contexts that have different correlations and in different experimental designs (e.g., with independent groups). In other words, they are tailored specifically for the present experiment. This is analogous to the fact that we used statistical tests adapted to the present experiment.

This CA is based on the assumption that the data are compound symmetric, a stronger assumption than sphericity. Because the correlation is a pairwise statistic, it necessarily implies that the measures are compared. Therefore, the difference adjustment must also be applied in conjunction with the correlation adjustment.

When compound symmetry is not met, there is no multiplicative adjustment. However, some authors have suggested decorrelating the raw data using transformations before computing precision (Baguley, 2012; Bakeman & McArthur, 1996; Cousineau, 2005, 2017; Cousineau & O’Brien, 2014; Loftus & Masson, 1994; Morey, 2008). See https://dcousin3.github.io/superb/articles/Vignette8.html for definitions of these transformations. There exist two main techniques to obtain decorrelated data, one that gives all data points the same pooled standard deviation (referred to hereafter as LM, which stands for Loftus & Masson, 1994) and one that preserves the different standard deviations for each measurement (referred to hereafter as CM, which stands for Cousineau-Morey, following Baguley, 2012).

Example 2

Consider the pretreatment and posttreatment scores of 25 college students invited to perform visualization exercises to see whether it would increase statistics understanding. The design is a within-subjects design with two measurements.

Performing a paired-sample t test, we get a statistically significant difference, t(24) = −2.91, p = .008. The difference is 5.00 points, with a standard error of the difference of 1.72; the 95% confidence interval of the difference is [1.45, 8.55].

Despite this significant result, note that the stand-alone confidence intervals (Fig. 2, left) suggest a lack of significant difference. The adjusted confidence intervals seen on the center of Figure 2 rightly indicate the statistically significant difference.

Fig. 2. Effect of the visualization exercises on statistics understanding. The dashed line corresponds to the mean of the pretest measurement. The leftmost plot shows stand-alone 95% confidence intervals of the mean. Here, the mean of the posttreatment measurement is included within the error bars of the pretreatment mean, which suggests a nonsignificant difference. The second plot shows correlation- and difference-adjusted 95% confidence intervals of the mean. Here, the mean of the posttreatment measurement is not included within the error bars of the pretreatment mean, which suggests rightly a significant difference between the two measurements. In the third panel, each participant’s data are connected with a line, which shows a benefit of the treatment for a strong majority of the participants. In the last panel, only the difference scores are shown.

In the central panel of Figure 2, a correction for correlation was used (the CA method; but the CM or LM methods would do equally well). It reduced the length of the confidence intervals. In the present data, the correlation is .84 (which may be high for human data; see Goulet & Cousineau, 2019). Consequently, the confidence interval lengths, which were first increased by a factor of 1.41 for the difference adjustment, are subsequently shortened by a factor of $\sqrt{1 - .84} = 0.40$.

The third panel of Figure 2 further shows each participant using a line. This makes it possible to see whether there is a trend (upward here) at the level of each participant. This trend is absent or reversed only for five participants out of 25. The fourth and last panel shows only the difference scores. In this last panel, there is no adjustment because the mean difference is to be compared with zero, a fixed value.
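The correlation adjustment used in the central panel of Figure 2 can be retraced from the statistics reported for Example 2. The following sketch is an approximation under one assumption that the text does not state: both measurements share the same standard deviation, which is then back-computed from the reported standard error of the difference. The critical value of 2.064 is the tabled two-sided 95% value for 24 degrees of freedom, and the variable names are ours.

```python
import math

n = 25            # number of participants (reported)
r = 0.84          # correlation between the two measurements (reported)
mean_diff = 5.00  # reported difference between the two means
se_diff = 1.72    # reported standard error of the difference
t_crit = 2.064    # tabled two-sided 95% critical t for 24 df

difference_adj = math.sqrt(2)        # purpose: comparing two means
correlation_adj = math.sqrt(1 - r)   # design: repeated measures (CA)
combined = difference_adj * correlation_adj

# Assumption: equal standard deviations S at both measurements, so that
# se_diff = S * sqrt(2 * (1 - r) / n). Solve for S.
s = se_diff * math.sqrt(n) / math.sqrt(2 * (1 - r))

stand_alone = t_crit * s / math.sqrt(n)  # mean-to-tip length, unadjusted
adjusted = combined * stand_alone        # correlation- and difference-adjusted

print(f"combined correction factor: {combined:.2f}")
print(f"stand-alone length: {stand_alone:.2f}")
print(f"adjusted length:    {adjusted:.2f}")
```

Under this assumption, the lag of 5.00 is shorter than the stand-alone length (about 6.3) but longer than the adjusted length (about 3.55), and the adjusted length coincides with half the width of the reported interval of the difference, [1.45, 8.55].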

Adjustment for the sampling design

The standard for sampling participants from a population is called simple randomized sampling (SRS). It requires each member of the population to have an equal chance of being part of the study. SRS is the reference and requires no adjustments to the confidence interval of the mean.

However, it is not always possible to implement SRS. An alternative sampling method is cluster randomized sampling (CRS). In such methodology, clusters of participants are selected randomly, but all the members of the cluster take part in the study. CRS is often used in education, in which the clusters are classes.

In a situation in which the participants are recruited in clusters, there is a possibility that the cluster members are more homogeneous (compared with participants recruited randomly) because of a common history/environment. When this is the case, a small number of clusters reduces the chance of seeing the entire population’s variance, and consequently, statistical procedures may be too liberal for an astute interpretation of significance. An adjustment for CRS uses the number of clusters and the number of participants per cluster in addition to an intraclass measure of correlation (Shrout & Fleiss, 1979). The resulting error bars are most commonly increased under CRS. The adjustment is given by a correction factor called λ (Cousineau & Laurencelle, 2016). Again, the cluster-adjusted confidence intervals are meaningful in this experimental context only and should not be used to make inference relative to means obtained in different experimental situations.

Another sampling alternative is the stratified sampling method, by which the participants are selected to preserve some characteristics of the population, for example, the prevalence of each age category. There is no known solution to adjust the confidence interval of the mean (it is not even known whether confidence intervals are too short or too long and which parameters are relevant to assess this; Kish, 1965; Thompson, 2012).

Example 3

The study examined the policymaking of teams composed of risk managers from various governmental agencies under a catastrophe scenario. The teams could either include members that have similar higher education and the same field of expertise (homogeneous teams) or include members that have various levels of education and different fields of expertise (heterogeneous teams). Although the teams were randomly selected, all their members participated in the study, which therefore resulted in a cluster-randomized sample. In this example, all the teams contain five members, but there can be any number of members per cluster.

Figure 3 (left) shows the stand-alone 95% confidence intervals (unadjusted for the presence of clusters of participants in the data). They suggest a significant difference between the two groups. However, a hierarchical linear model analysis indicates the lack of a statistically significant difference, t(4) = 1.42, p = .11 (Bryk & Raudenbush, 1992). When adjusted for the presence of clusters (Fig. 3, center), we get the correct picture. The λ parameters in each group equal 1.80 for Group 1 and 1.36 for Group 2. Along with the difference adjustments (1.41), the confidence intervals are expanded by factors of 2.5 and 1.9, respectively. Figure 3 (right) shows a raincloud layout (however, because the cluster members are not visible, it is not clear how useful that additional information is).
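Because every adjustment is a multiplicative correction factor, combining them amounts to taking a product. The short sketch below illustrates this with the cluster factors reported for Example 3; the helper name `combine_adjustments` is hypothetical and is not part of any library.

```python
import math

def combine_adjustments(*factors):
    """Multiply individual correction factors into a single one."""
    return math.prod(factors)

difference = math.sqrt(2)                  # difference adjustment (about 1.41)
lambda_group1, lambda_group2 = 1.80, 1.36  # cluster factors reported in the text

total_group1 = combine_adjustments(difference, lambda_group1)
total_group2 = combine_adjustments(difference, lambda_group2)
print(f"Group 1: {total_group1:.1f}, Group 2: {total_group2:.1f}")
```

Rounded to one decimal, the two products are the expansion factors of 2.5 and 1.9 quoted for this example.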

Fig. 3. Mean scores of homogeneous and heterogeneous teams on the quality of risk management policies. The dashed line corresponds to the mean of the second group. The leftmost plot shows stand-alone 95% confidence intervals of the mean. Here, the mean of the control group is not included within the error bars of the experimental group mean, which suggests a significant difference between the two groups. The center plot shows cluster- and difference-adjusted 95% confidence intervals of the mean. Here, the mean of the control group is included within the error bars of the experimental mean, which suggests rightly a nonsignificant difference between the two groups. The third panel adds a raincloud plot to the second.

Adjustment for the population size

A final adjustment can be made if the size of the population is finite and known and if the sample is large enough that it represents a sizable proportion of said population (generally more than 5% of the population). When the population is limited in size, any additional participant makes the sample closer to the whole population, which allows for more precise inferences. If the sample contains more than 5% of the population, researchers are encouraged to use the finite-population adjustment (Cochran, 1953; Kish, 1965; Thompson, 2012). This adjustment is given by the square root of the proportion of the population left unsampled.
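As a minimal sketch of the finite-population adjustment just described (the square root of the proportion of the population left unsampled), consider the following. The function names are hypothetical, and the 5% threshold is the rule of thumb given in the text.

```python
import math

def finite_population_adjustment(n, population_size):
    """Square root of the proportion of the population left unsampled."""
    if not 0 < n <= population_size:
        raise ValueError("need 0 < n <= population_size")
    return math.sqrt(1 - n / population_size)

def worth_adjusting(n, population_size, threshold=0.05):
    """Rule of thumb: adjust when the sample exceeds 5% of the population."""
    return n / population_size > threshold

# A sample of 25 drawn from a population of 50 versus one of 10,000.
for population_size in (50, 10_000):
    factor = finite_population_adjustment(25, population_size)
    flag = worth_adjusting(25, population_size)
    print(f"N = {population_size}: factor = {factor:.3f}, adjust = {flag}")
```

When half the population is sampled, the error bars shrink to about 71% of their stand-alone length; when the sample is a tiny fraction of the population, the factor is close to 1 and the adjustment is immaterial.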

Example 4

Consider a sample in which 25 patients suffering from Hutchinson-Gilford progeria syndrome are examined. At this time, only 50 living patients are known to have this illness. Hence, the current sample is actually very large, containing 50% of the whole population.

In Figure 4 (left), the stand-alone confidence interval suggests no improvement following a treatment relative to the baseline metabolic value of 100. Yet once the test is informed that the whole population is composed of 50 individuals, the correct t test (Kish, 1965) returns a statistically significant difference from 100, t(24) = 2.64, p = .007. The population-size-adjusted confidence interval plotted in Figure 4 (center) is congruent with this result. The adjustment is $\sqrt{1 - 25/50} \approx 0.71$.

Fig. 4. Mean scores of patients treated. The dashed line corresponds to the baseline score. The leftmost plot shows a stand-alone 95% confidence interval of the mean. Here, the baseline is included within the error bar, which suggests a nonsignificant difference. The center plot shows a population-size-adjusted 95% confidence interval of the mean, which suggests a significant improvement. In the right panel, a violin plot is added to the adjusted confidence interval picturing the distribution of the sample, shown with jittered dots.

Naming the adjustments

To be interpretable, it is necessary that error bars be identified clearly and indicate which adjustments were made. There is no official nomenclature as of now, but some terms have been proposed to unify the practice (Baguley, 2012). Here, we suggest labels to unambiguously identify all adjustments described so far. These labels are listed in Table 1. With these labels, the expression difference-adjusted 95% confidence intervals would denote intervals proper for comparisons, whereas pooled correlation- and difference-adjusted 95% confidence intervals represents Loftus and Masson’s (1994) proposal in which all the error bars of the within-subjects factors are of the same length (pooled) and based on decorrelated data (correlation-adjusted). To our knowledge, no author has ever proposed to pool error bars across groups, and so this feature is absent from superb. Finally, the stand-alone confidence intervals—those obtained from most computer software—are plainly termed 95% confidence intervals. The adjustments need be indicated only in the figure caption to avoid overloading the main text.

Table 1. Suggested Names Given to the Confidence Interval Adjustments

Note: The equation numbers refer to Appendix B.

To conclude this section, in Appendix A, we provide a quick overview of an R library that implements the superb framework, the superb library.

Relation to Statistical Tests of Mean and the Golden Rule of Adjusted Confidence Intervals

In the superb framework, the confidence intervals of the means are adjusted to account for the purpose of the study (comparison or examination in isolation), for the experimental design (independent groups or repeated measures), for the sampling methodology used, and for situations in which the population is of finite size.

Herein, we briefly show that all the adjustments are exact, updating the standard errors from which the confidence intervals are obtained. To that end, we use the t test as a reference point. Keep in mind that the difference-adjusted confidence intervals are to be appraised with respect to the lag between means in a specific experimental design. They therefore cannot be interpreted in isolation or with means obtained using different experimental designs.

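In a graphical representation, inference is made by examining whether one mean is included in the other mean’s confidence interval or, equivalently, whether the lag between the two means, $|M_1 - M_2|$, exceeds the length of the difference-adjusted confidence interval. With equal sample sizes n and a common standard deviation S, the two means are deemed different when

$$|M_1 - M_2| > \sqrt{2} \times t_c \times \frac{S}{\sqrt{n}},$$

which is equivalent in mathematical form to

$$\frac{|M_1 - M_2|}{S\sqrt{2/n}} > t_c.$$

This is exactly a t test in which S is estimated with the pooled standard deviation. The only difference is that the coverage $t_c$ in the plot is based on separate degrees of freedom ($n - 1$ for each group), whereas the t test pools them ($2(n - 1)$).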

When the standard deviations, the sample sizes, or both are heterogeneous, the bars will likely be of differing lengths. In that case, the average confidence interval length is the reference. There are two ways to average confidence intervals.

Average in the square sense

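Technically, standard deviations cannot be added and, consequently, cannot be averaged. Their squares, the error variances, can, and so can the squared lengths of confidence intervals. Thus, confidence intervals must first be squared, then averaged, and the square root of the result taken (bear with us; the second solution, the simple average, is much easier). Computing the mean in the square sense, noted Mean* for short, we get

$$\mathrm{Mean}^{*}(\mathrm{CI}_1, \mathrm{CI}_2) = \sqrt{\frac{\mathrm{CI}_1^2 + \mathrm{CI}_2^2}{2}} = t_c \sqrt{\frac{S_1^2}{n_1} + \frac{S_2^2}{n_2}},$$

in which $\mathrm{CI}_i = \sqrt{2}\, t_c\, S_i / \sqrt{n_i}$ is the difference-adjusted confidence interval length of group i. The lag between the two means exceeds this average length when

$$\frac{|M_1 - M_2|}{\sqrt{S_1^2/n_1 + S_2^2/n_2}} > t_c,$$

which is exactly a Welch test (Derrick et al., 2016; Welch, 1938). In other words, a Welch test is obtained any time separate confidence intervals are averaged in the square sense. This test is more general than the two-group t test, which it includes as a special case, and should be preferred at all times (Delacre et al., 2018).

Here again, the graphical confidence intervals have separate degrees of freedom ($n_1 - 1$ and $n_2 - 1$), whereas the Welch test relies on a single, combined number of degrees of freedom.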

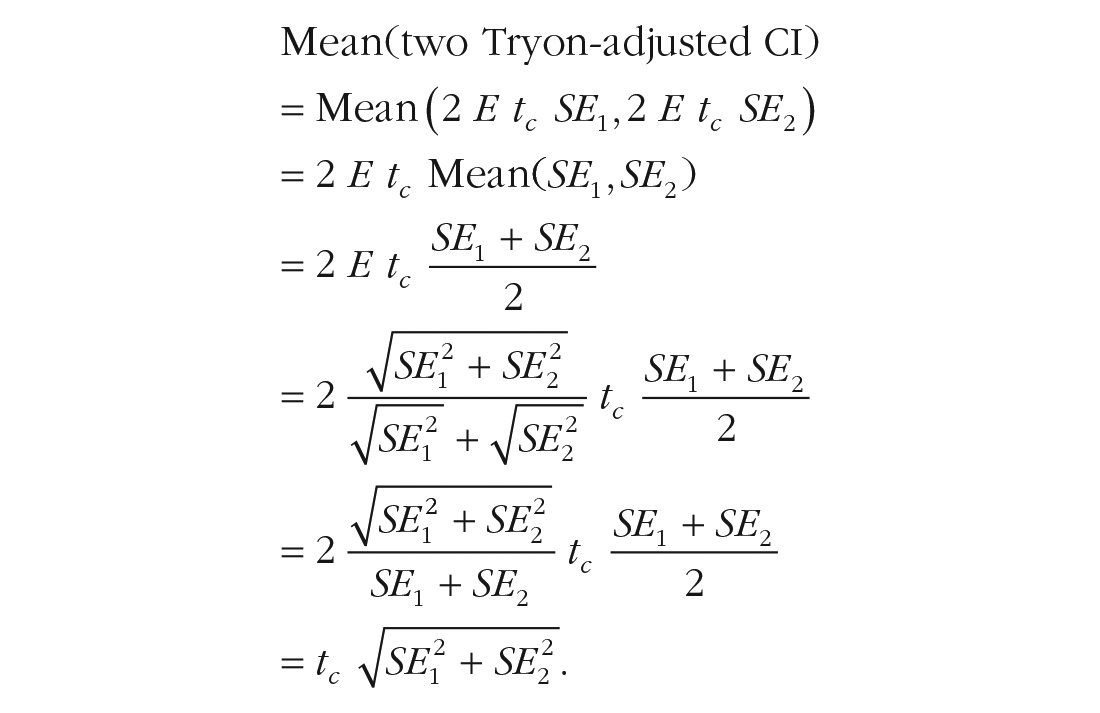

Simple average

The mean in the square sense returns a length similar to the mean in the usual sense. It is a little bit more influenced by long lengths than by short lengths so that it tends to be slightly longer than the regular mean. If this bias is to be avoided, an alternative is to use the Tryon adjustment (Tryon, 2001). This adjustment replaces the standard errors (which cannot be averaged in the usual sense) by slightly longer standard errors. By doing so, the usual mean on these altered lengths results in the correct length required for comparisons. Tryon’s adjustment, 2 × E, is based on the quantity E defined in Tryon (2001; see Appendix B). In the special case in which both sample sizes and variances are homogeneous, it equals $\sqrt{2} \approx 1.41$, that is, the difference adjustment.

Hence, with Tryon-adjusted confidence intervals, a Welch test is performed visually with a regular mean of the error bar lengths.
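The two ways of averaging can be checked numerically. The sketch below assumes that Tryon's quantity is E = sqrt(SE1² + SE2²) / (SE1 + SE2), which is our reading of Tryon (2001) and is not quoted from Appendix B. It also assumes a single critical value for both groups, and the standard errors are made up for illustration.

```python
import math

def mean_square_sense(a, b):
    """Square the lengths, average them, then take the square root."""
    return math.sqrt((a**2 + b**2) / 2)

def tryon_factor(se1, se2):
    """Tryon adjustment 2 * E, with E as assumed in the lead-in."""
    e = math.sqrt(se1**2 + se2**2) / (se1 + se2)
    return 2 * e

t_crit = 2.0          # illustrative critical value shared by both groups
se1, se2 = 1.2, 2.5   # illustrative, heterogeneous standard errors

# Length required by a Welch test: t_crit times the SE of the difference.
welch_length = t_crit * math.sqrt(se1**2 + se2**2)

# Difference-adjusted lengths, averaged in the square sense.
diff_adj = [math.sqrt(2) * t_crit * se for se in (se1, se2)]
avg_square = mean_square_sense(*diff_adj)

# Tryon-adjusted lengths, averaged in the usual sense.
tryon_adj = [tryon_factor(se1, se2) * t_crit * se for se in (se1, se2)]
avg_simple = sum(tryon_adj) / 2

print(f"Welch length:                 {welch_length:.4f}")
print(f"square-sense mean (sqrt 2):   {avg_square:.4f}")
print(f"simple mean (Tryon-adjusted): {avg_simple:.4f}")
```

Under these assumptions, the three quantities agree, and with equal standard errors `tryon_factor` reduces to the square root of 2.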

As we show, all the ingredients of a Welch test are visually accessible in a plot with separate precision estimates. Furthermore, Tryon adjustment makes it easier to average the two error bar lengths. Finally, it shows that with unequal error bars, inference must be based on an average of the bars.

Similar formal derivations can be done for the CA (Cousineau, 2019; Cousineau & Goulet-Pelletier, 2021), for the cluster adjustment (Cousineau & Laurencelle, 2016), and for the population-size adjustment (Thompson, 2012). All are based on whether the lag between means is shorter than the adjusted confidence interval length (or their average).

Thus, any plot showing the adequately adjusted confidence intervals can be interpreted using a unique rule, the golden rule of adjusted confidence intervals: If a mean is not included within the confidence interval of another mean, it can be interpreted as being statistically different from that mean at the 1 − γ level. When adjusted confidence intervals are of unequal lengths, difference-adjusted ones must be averaged in the square sense, whereas Tryon-adjusted ones must be averaged in the usual sense.
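The golden rule can be written as a small decision function. This is a hedged sketch only: the function name and its arguments are hypothetical, the lengths are taken to be the mean-to-tip lengths of already-adjusted error bars, and the numbers in the usage lines are invented.

```python
import math

def golden_rule(mean1, mean2, length1, length2, adjustment="difference"):
    """Return True when the two means differ according to the inclusion rule.

    length1 and length2 are the mean-to-tip lengths of the adjusted bars.
    Difference-adjusted lengths are averaged in the square sense,
    Tryon-adjusted lengths in the usual sense.
    """
    if adjustment == "difference":
        reference = math.sqrt((length1**2 + length2**2) / 2)
    elif adjustment == "tryon":
        reference = (length1 + length2) / 2
    else:
        raise ValueError("adjustment must be 'difference' or 'tryon'")
    # A mean lying beyond the reference length is not included in the interval.
    return abs(mean1 - mean2) > reference

# Invented numbers for illustration.
print(golden_rule(50.0, 55.0, 5.9, 5.8))            # lag shorter than the bars
print(golden_rule(50.0, 58.0, 5.9, 5.8))            # lag longer than the bars
print(golden_rule(50.0, 55.0, 4.0, 5.0, "tryon"))   # simple average of lengths
```

When the two bars have equal lengths, both averages return that common length, and the rule reduces to checking whether one mean falls inside the other mean's error bar.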

The key in this rule is the notion of inclusion. There have been heuristics published in the past that focused on overlap; however, this metric is inappropriate for adjusted confidence intervals.

The golden rule is mathematically equivalent to an unpooled Welch test of means. However, its application in plots is approximate in two ways. First, the exact limits of the confidence intervals are difficult to position visually precisely because the markers indicating the end of the confidence intervals have a certain thickness and it is not clear whether that thickness is located outside the interval, entirely within the interval, or partially inside and outside of the interval. Second, by default, the confidence intervals are based on separate degrees of freedom, whereas the statistical tests pool all the degrees of freedom together (in the R package, custom degrees of freedom can be provided to override the ones generated by default).

We can estimate the impact of these sources of approximation. Let us assume that we examine two groups with 20 observations per group. The 95% confidence interval would be based on a critical t value of 2.02. If the limits are visually assessed with ±2% error and based on unpooled degrees of freedom, the resulting confidence interval could be inferred, when perceived too long, to be based on a critical t value of 2.13 and, when perceived too short, to be based on a critical t value of 1.93. This is, in both cases, a 5% misestimation of the bar’s length; they correspond to a 96.0% and a 94.6% confidence interval. Hence, for small samples, misestimation results in a confidence level within ±1% of the desired 95% confidence level; for large samples, the confidence level is within ±0.5% of the desired level. Hence, if Rosnow and Rosenthal (1989, p. 1277) are right to say, “Surely, God loves .06 nearly as much as the .05,” then we can conclude that God loves plots with confidence intervals as well.
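The critical values involved in this estimate can be obtained with base R. The sketch below assumes two groups of 20 observations and a 95% level, as in the text; the helper `implied_level()` is a hypothetical name introduced here to convert an apparent critical t value back into a confidence level.

```r
n     <- 20                        # observations per group
gamma <- 0.95                      # desired confidence level
p     <- 1 - (1 - gamma) / 2       # upper-tail cumulative probability

t_pooled   <- qt(p, df = 2 * (n - 1))   # degrees of freedom pooled: 38
t_unpooled <- qt(p, df = n - 1)         # separate degrees of freedom: 19

round(c(pooled = t_pooled, unpooled = t_unpooled), 2)
# 2.02 (pooled) and 2.09 (unpooled)

# Hypothetical helper: confidence level implied by an apparent critical t
implied_level <- function(tval, df) 1 - 2 * pt(-abs(tval), df)

implied_level(t_pooled, df = 2 * (n - 1))   # recovers gamma
```

The pooled value reproduces the 2.02 quoted above, and the unpooled value shows by how much a bar based on separate degrees of freedom is longer before any visual error is added.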

In sum, adjusted confidence intervals computed using the superb framework are tailored to the testing situation. Consequently, they match a Welch test very closely in any situation for which an adjustment is known and applied. Thus, the golden rule is constant irrespective of the methodological details. It is therefore a universal rule of adjusted confidence intervals. This unique rule is in our opinion more useful than assessing proportions of overlap, as suggested by the numerous rules of thumb that many apply (and misapply).

We offer one final word. Goldstein and Healy (1995), Baguley (2012), and Franz and Loftus (2012) suggested dividing the interval width by 2. Tryon (2001) framed this as a human-factor necessity. The golden rule of half-width confidence intervals would be “If the extremity of one statistic’s confidence interval does not overlap the extremity of another statistic’s confidence interval, the two statistics should be interpreted as being statistically different” (also see Tryon, 2001, p. 375). However, first, the two rules are quite different and potentially confusing (one being based on inclusion, the other on overlap). Confusion can be avoided only by using a single type of interval in a consistent manner so that the reader can develop the correct automatisms (Cousineau & Larochelle, 2004). Second, half-width intervals are meaningful only when comparing a mean with other means. As we demonstrated in the examples, this excludes results that are compared with fixed values. Fixed values do not have confidence intervals, which precludes one touching the other. For these two reasons, we do not recommend half-width intervals.

Discussion

In this article, we focused solely on means and confidence intervals of the means. However, any function can be provided. First, any function that, given a list of scores, returns a summary statistic can be used for the point estimates. That includes the median (and other measures of central tendency), but it could also be measures of spread (standard deviation, variance, or interquartile range), measures of skew (Fisher skew, Pearson skew), and so on. See the documentation for the full list of functions currently implemented. Custom-made functions can also be used. As an example, see https://dcousin3.github.io/superb/articles/Vignette4.html for how the Cohen’s d1 statistic can be defined in R and this function’s name given to the plotting function to generate point estimates. This summary statistic computes the standardized mean difference to a reference value (Goulet-Pelletier & Cousineau, 2018). Second, any measure of precision can also be used. It can be the standard error or the confidence interval (already available in the package) but could also be any custom-made function returning a length or an interval boundary. The above Web page shows how to define a confidence interval for d1 (Goulet-Pelletier & Cousineau, 2018) and use it in plots. Note that the difference between two Cohen’s d1s is in fact the usual Cohen’s dp. It is therefore a convenient plot to illustrate standardized effect sizes.
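A custom summary function of this kind can be very short. The sketch below is an illustration in base R only: the reference value of 100 and the simulated scores are hypothetical, and the vignette cited above remains the authoritative source for how such a function is named and handed to the plotting function.

```r
# Cohen's d1: standardized mean difference to a reference value.
# The default reference (100) is a hypothetical choice for this sketch.
d1 <- function(x, ref = 100) {
  (mean(x) - ref) / sd(x)
}

# Simulated scores, for illustration only
set.seed(3)
scores <- rnorm(30, mean = 106, sd = 15)
d1(scores)
```

Because the function takes a vector of scores and returns a single number, it has the same shape as `mean` or `median` and can serve as a point estimate in the same way.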

When superb is used to show confidence intervals, the complementary framework is null hypothesis significance testing. This specific use of superb could therefore be labeled a frequentist superb framework. However, the superb framework is also compatible with other inference methods. The superb framework is indifferent to which inferential platform you choose. If you want to perform inference following null hypothesis significance testing, then confidence intervals are perfect. If you wish to remain neutral, you could choose to display standard errors. Both confidence intervals and standard errors are prepackaged in superb for more than a dozen descriptive statistics (including the mean, median, variance, skew, etc.). If you want to assess sampling error, opt for precision intervals (Cousineau & Goulet-Pelletier, 2021). Finally, for Bayesian examination, highest density intervals could be used, although they are not implemented at this time. Any custom-made function can be fed to superb.

Note that most estimators of precision included in the package at this time are parametric: They make assumptions regarding the distribution of the population. Alternatively, one could use nonparametric methods. One such method is the bootstrap method (Efron & Tibshirani, 1993). In a nutshell, this technique iteratively resamples data from the initial sample (with replacement) a large number of times (e.g., 10,000 times) to estimate the lower and upper boundaries containing the statistic of interest in 95% of the subsamples (Rousselet et al., 2019). Bootstrap intervals of the mean are nonparametric and require no assumptions other than the fact that the researcher has gathered an adequate sample. As we are reminded by Rousselet et al. (2019), bootstrap estimators are estimating the sampling distribution of the statistic, not the predictive distribution of the parameter. Hence, they are not confidence intervals but precision intervals (for the distinction, see Cousineau & Goulet-Pelletier, 2021).
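The resampling procedure just described can be sketched in a few lines of base R. This is a minimal percentile bootstrap on simulated data, not the implementation used in the package; the sample, the seed, and the object names are assumptions made for the illustration.

```r
set.seed(1)
x <- rnorm(25, mean = 50, sd = 10)     # hypothetical sample of 25 scores

n_boot <- 10000                        # number of resamples, as in the text

# Resample with replacement and keep the mean of each subsample
boot_means <- replicate(n_boot, mean(sample(x, replace = TRUE)))

# Boundaries containing the statistic in 95% of the subsamples
interval <- quantile(boot_means, probs = c(0.025, 0.975))
interval
```

Replacing `mean` with another function of the resampled scores yields the corresponding interval for that statistic, with no change to the rest of the procedure.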

In the package

Keep in mind that summary statistics along with adequate error bars is the bare minimum. Informative plots should be supplemented with information illustrating the whole distribution whenever possible, as was done with jittered dots (Lane, 2019), rainclouds (Allen et al., 2019), and violins (Marmolejo-Ramos & Matsunaga, 2009). These layouts are packaged in

Strengths and limitations of superb

The purpose of the

In addition, like any statistical method, precision estimation is based on assumptions. The confidence interval of the mean is based on a few assumptions. These assumptions could be tested, although some authors have warned against this practice (e.g., Delacre et al., 2018; Rochon et al., 2012, among others). The normality assumption can be assessed using a test of normality. When conditions from a within-subjects design are compared, the measurements must respect the sphericity assumption (methods CM and LM) or the compound symmetry assumption (method CA). These assumptions can be assessed with Mauchly’s test of sphericity (Mauchly, 1940) or Winer’s test of compound symmetry (Winer et al., 1991). The violation of these assumptions does not forbid the use of confidence intervals in graphs because they still provide a meaningful estimation of the variability around the displayed statistics. However, these confidence intervals should be interpreted with care.
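Two of these checks are available in base R. The sketch below assumes simulated repeated-measures data (30 hypothetical participants in three conditions) and uses `shapiro.test()` as one possible test of normality; Winer’s test of compound symmetry is not part of base R and is not shown.

```r
set.seed(7)
# Hypothetical data: 30 participants (rows) by 3 within-subject conditions
Y <- matrix(rnorm(90, mean = 50, sd = 10), nrow = 30, ncol = 3)

# Normality of the scores in the first condition (Shapiro-Wilk test)
normality <- shapiro.test(Y[, 1])

# Mauchly's test of sphericity on the within-subject contrasts:
# fit an intercept-only multivariate model, then test it
fit        <- lm(Y ~ 1)
sphericity <- mauchly.test(fit, X = ~ 1)

c(normality = normality$p.value, sphericity = sphericity$p.value)
```

A small p value signals a departure from the assumption, which, as noted above, calls for a more careful interpretation of the intervals rather than their removal from the plot.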

As a last limitation, the superb framework is restricted to univariate (including repeated measures) variables. Multivariate studies in which interrelations between variables are important are not suited for superb. For example, there is no obvious way to plot a variance-covariance matrix with the present approach. Furthermore, it assumes that precision is also computed for a unique parameter. It is not obvious how the framework could represent parameter estimation or parameter precision from a hierarchical model with hyperparameters. A defining feature of the superb framework is that superb performs separate estimations. They can be pooled to make a Welch test, but they cannot interact.

The R package

Statistical tests can assess significance, but too often, they place the focus on whether the p threshold has been reached, which sometimes leads to using controversial manipulation, referred to as “p-hacking” (Simmons et al., 2011). Using visualization tools such as plots, researchers can better interpret the results, relying not only on statistical significance but also on the magnitude of the effect (Lakens, 2013; Loftus, 1993). The interpretation of results should then be more balanced. A contrario, without measures of precision, interpretation is less nuanced (Fricker et al., 2019). However, researchers must always keep in mind that confidence intervals, like significance testing, may bring focus on null hypotheses, which rarely reflect the hypothesized effect (Beaulieu-Prévost, 2006). To be thorough, one must also consider alternative hypotheses, contextualizing the observed results under different models, and generate new predictions, a cornerstone of scientific discovery (Jamieson & Pexman, 2020).

By providing an easy-to-apply framework to adjust the error bars, we wish to ease the transition toward a better visualization and interpretation of summary statistics. Although the techniques presented here are accurate and based on formal mathematical demonstrations, very few statistical software packages offer anything but the stand-alone (and potentially contradictory) error bars. Science is difficult; there is no reason to make it more difficult by using stand-alone measures of precision whose interpretation is inconsistent with the methodology used. Despite its limitations, we believe that the superb framework is a major improvement over the use of stand-alone measures of precision supplemented with approximate heuristics. We elaborate powerful experimental methodologies to improve statistical power; it does not make sense that the measures of precision shown in plots hide these gains.

Footnotes

Appendix A: A Very Short Introduction to the Superb Library

To implement the superb framework, we devised an R library, summary plots with adjusted error bars, or in short,

To upload it, issue the command

Once installed, only the following is necessary for subsequent sessions:

The library provides a main function,

Appendix B: Four Steps to Obtain superb-Compliant Adjustments

Algorithm B.1 provides an overview of the steps involved in obtaining a superb plot. These steps are described in the next sections.

Acknowledgements

We thank Rick Hoekstra, Daniel Lakens, and Guillaume Rousselet for their comments and Félix Chiasson for advice on ggplots.

Transparency

Action Editor: Brent Donnellan

Editor: Daniel J. Simons

Author Contributions

D. Cousineau contributed the code and the first draft of the manuscript. M-A. Goulet and B. Harding tested the library and contributed to the text. All of the authors approved the final manuscript for submission.