Abstract

Most published meta-analyses address only artifactual variance due to sampling error and ignore the role of other statistical and psychometric artifacts, such as measurement error variance (due to factors including unreliability of measurements, group misclassification, and variable treatment strength) and selection effects (including range restriction or enhancement and collider biases). These artifacts can have severe biasing effects on the results of individual studies and meta-analyses. Failing to account for these artifacts can lead to inaccurate conclusions about the mean effect size and between-studies effect-size heterogeneity, and can influence the results of meta-regression, publication-bias, and sensitivity analyses. In this article, we provide a brief introduction to the biasing effects of measurement error variance and selection effects and their relevance to a variety of research designs. We describe how to estimate the effects of these artifacts in different research designs and correct for their impacts in primary studies and meta-analyses. We consider meta-analyses of correlations, observational group differences, and experimental effects. We provide R code to implement the corrections described.

Meta-analysis is a critical tool for increasing the rigor of research syntheses by increasing confidence that apparent differences in findings across samples are not merely attributable to statistical artifacts (Schmidt, 2010). However, most published meta-analyses are concerned only with artifactual variance due to sampling error and ignore the role of other statistical and psychometric artifacts, such as measurement error variance (stemming from factors including unreliability of measurements, group misclassification, and variable treatment strength) and selection effects (including range restriction or enhancement and collider biases). In this article, we provide a brief introduction to the biasing effects of measurement error variance and selection effects and their relevance to a variety of research designs. We describe how to estimate the effects of these artifacts in different research designs and how to correct for their impacts in meta-analyses. We consider meta-analyses of correlations, observational group differences, and experimental effects.

As we just noted, most published meta-analyses are concerned only with sampling error variance and do not correct for other statistical artifacts. For example, we reviewed the 71 meta-analyses published in Psychological Bulletin from 2016 through 2018 and found that only 6 made corrections for measurement error variance and only 1 corrected for selection biases (an earlier review by Schmidt, 2010, found comparably low rates of corrections for statistical artifacts). Corrections for measurement error variance are commonly applied in industrial-organizational (I-O) psychology meta-analyses but rarely in meta-analyses in other psychology subfields or other disciplines (Schmidt & Hunter, 2015; Schmidt, Le, & Oh, 2009). Corrections for selection effects are even rarer; they are typically performed only in meta-analyses of personnel-selection and educational-admissions research (Dahlke & Wiernik, 2019a; Sackett & Yang, 2000). Yet measurement error variance and selection artifacts can have severe biasing effects on the results of individual studies and meta-analyses. Failing to account for these artifacts can lead to inaccurate estimates and conclusions about the mean effect size and between-studies effect-size heterogeneity, and it can also distort the results of meta-regression, publication-bias analyses, and sensitivity analyses. When measurement error variance and selection effects are considered in meta-analyses outside of I-O psychology, they are often treated simply as indicators of general “study quality” and used as exclusion criteria or as moderators (Schmidt & Hunter, 2015, p. 485). Both approaches are suboptimal because measurement error variance and selection effects have predictable, formulaic impacts on observed study results. Therefore, the best way to handle these artifacts is to apply statistical corrections that account for their known impacts.
In this article, we describe the impacts of measurement error variance and selection effects on primary studies and meta-analyses, as well as methods to correct for these artifacts.

Disclosures

R code to reproduce the simulation results and corresponding figures presented in this article is available at https://osf.io/cp6rt/. R code to implement the correction and meta-analytic methods described in this article is available in the psychmeta package (Dahlke & Wiernik, 2019b).

Measurement Error Variance

Measurement error variance is an artifact that causes observed (i.e., measured) values to deviate from the “true” values of underlying latent variables (Schmidt & Hunter, 1996).¹ For example, consider a political psychologist assessing political orientation using a 10-item measure with items rated on a 7-point scale. Some respondents might obtain a mean score of 5 (somewhat conservative) or 3 (somewhat liberal) across the 10 items, when their true score is in fact 4 (moderate, centrist). Measurement error variance is also called unreliability (Rogers, Schmitt, & Mullins, 2002), observational error (Walter, 1983), and information bias (L. K. Alexander, Lopes, Ricchetti-Masterson, & Yeatts, 2014a). In the case of continuous variables, measurement error variance is also called low precision (Stallings & Gillmore, 1971); in the case of dichotomous or grouping variables, it is also called misclassification (L. K. Alexander et al., 2014a).

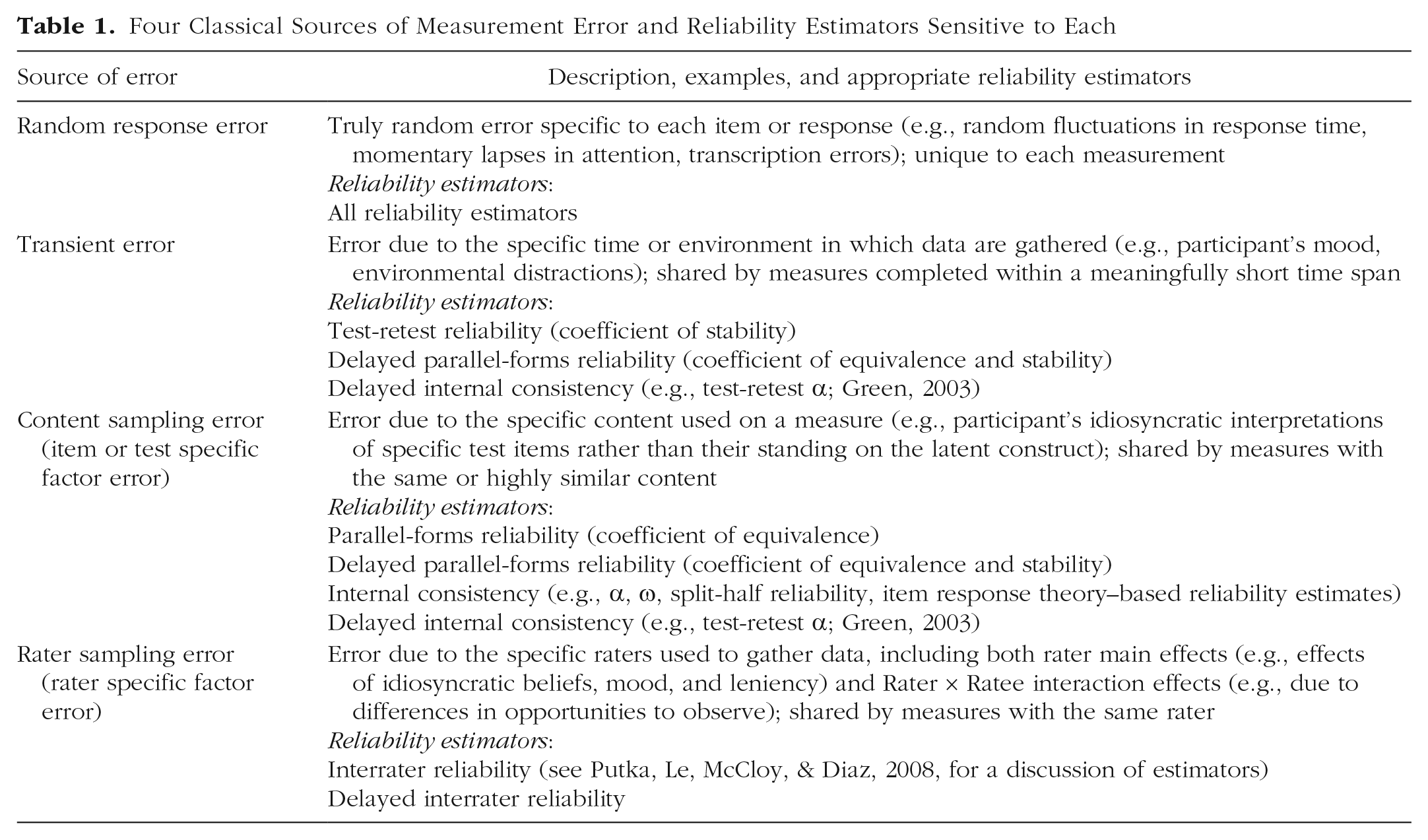

Measurement error variance can come from a variety of sources, including truly random response errors (e.g., momentary distractions, arbitrary choices between adjacent scale points), transcription errors, transient or temporal effects (e.g., poor performance on a cognitive assessment due to fatigue on a particular day), environmental effects, content sampling effects (i.e., the specific items or content in a measure not functioning the same way as possible alternative items or content), rater effects (e.g., raters’ differences in knowledge, motivation, or beliefs), and low sensitivity or specificity of measurement instruments, among others. These diverse sources of error can be grouped into four “classical” categories (Cronbach, 1947; Schmidt, Le, & Ilies, 2003; see Table 1 for descriptions).

Table 1. Four Classical Sources of Measurement Error and Reliability Estimators Sensitive to Each

It is important to note that measurement error variance concerns more than just whether participants’ responses were correctly recorded; rather, measurement error variance is more about the process that generated the responses and whether the responses would be the same if the process were repeated at different times, by different raters, or with a different instrument or item set (Schmidt & Hunter, 1996). For psychological measures, random response error and transient error are typically the largest sources of measurement error (Ones, Wiernik, Wilmot, & Kostal, 2016).

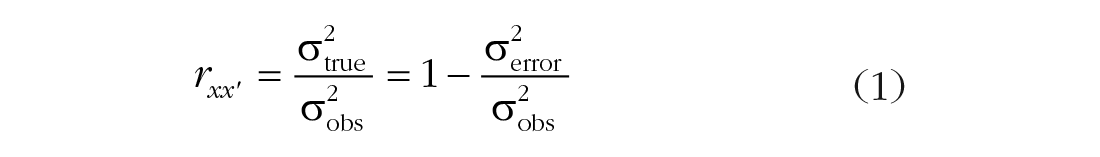

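The amount of measurement error variance in a sample of scores is quantified using a reliability coefficient, rxx′, which is the proportion of observed-score variance that reflects true-score variance rather than error variance:

$$ r_{xx'} = \frac{\mathrm{Var}_T}{\mathrm{Var}_X} = 1 - \frac{\mathrm{Var}_E}{\mathrm{Var}_X} $$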

Conceptually, the reliability coefficient is the correlation between two parallel measures of a construct. The square root of the reliability coefficient (qx = √rxx′) is the correlation between the observed measure and the underlying latent variable.

Figure 1. Structural model illustrating the relationship between two parallel measures and their underlying latent variable. The correlation between the measures (rxx′) is their reliability coefficient, and the correlation between each measure and the underlying latent variable is the square root of the reliability coefficient (qx = √rxx′).

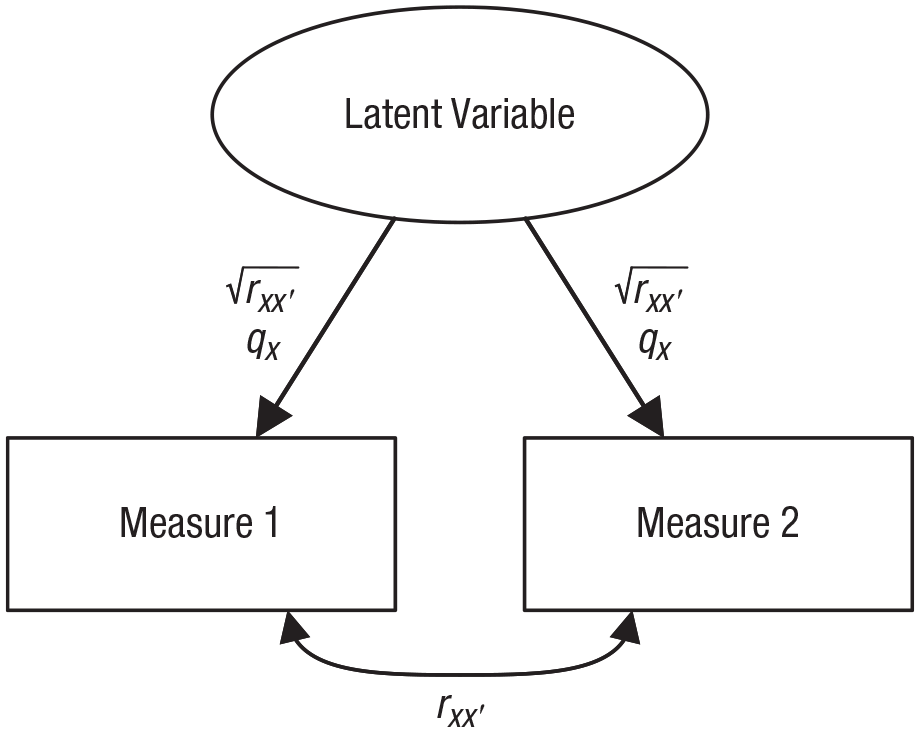
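A short simulation can make these definitions concrete. The Python sketch below is our illustration, not the authors' R code, and its function names are hypothetical. It generates classical-test-theory data: a latent score T with variance 1 and two parallel measures X1 = T + E1 and X2 = T + E2, with the error variance chosen so that each measure's reliability is .80. In a large sample, the correlation between the two parallel measures should be close to rxx′ = .80, and each measure's correlation with T should be close to qx = √.80 ≈ .89.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def simulate_parallel_measures(reliability, n=100_000, seed=2020):
    """Simulate latent scores T and two parallel measures X1 = T + E1, X2 = T + E2.

    Var(T) is fixed at 1, so an error variance of (1 - reliability) / reliability
    gives Var(T) / Var(X) = reliability for each measure.
    """
    rng = random.Random(seed)
    error_sd = math.sqrt((1 - reliability) / reliability)
    t = [rng.gauss(0.0, 1.0) for _ in range(n)]
    x1 = [ti + rng.gauss(0.0, error_sd) for ti in t]
    x2 = [ti + rng.gauss(0.0, error_sd) for ti in t]
    return t, x1, x2


t, x1, x2 = simulate_parallel_measures(reliability=0.80)
print(f"r(X1, X2) = {pearson(x1, x2):.3f}")  # close to r_xx' = .80
print(f"r(X1, T)  = {pearson(x1, t):.3f}")   # close to q_x = sqrt(.80), about .894
```

In real data T is never observed; the simulation only shows why the parallel-forms correlation estimates rxx′ and why qx, not rxx′, describes how strongly a measure tracks its construct.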

At the level of individual scores, random measurement error cannot be corrected, as its effect on a specific score is unknown; the best that can be done is to place a confidence interval around a score by using the reliability coefficient and the standard deviation of observed scores to estimate the standard error of measurement (Dudek, 1979; Revelle, 2009). However, when scores are aggregated in data sets and used to compute correlations between two variables or to compare scores across groups, measurement error variance has a known, predictable impact: it biases the effect size toward the null (i.e., toward zero). For example, consider the case shown in Figure 2. The scatterplots show hypothetical data for a researcher studying the relationship between intentions to recycle and actual recycling behavior, both measured using self-reports. The true correlation between these latent constructs, ρ, is .50, but the reliability of each measure, rxx′, is .80. Consequently, the expected observed correlation, rxy, is only .50 × √.80 × √.80 = .40. In other words, the expected observed correlation underestimates the true correlation between the latent constructs by .10, or 20%.³

Figure 2. Illustration of measurement error variance’s impact on correlation coefficients. Both the predictor (x) and the criterion (y) measures have reliabilities of .80 (qx = qy = .89). Consequently, although the true correlation between the constructs, ρxy, is .50 (left panel), the expected observed correlation, rxy, is only .40 (right panel).
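The arithmetic behind this example can be checked in a few lines. This Python sketch (function name hypothetical) multiplies the true correlation by the square roots of the two reliabilities, which is the expected attenuation when both measures contain random error.

```python
import math


def expected_observed_r(rho, rxx, ryy):
    """Expected observed correlation when both measures contain random error.

    Each measure correlates sqrt(reliability) with its latent variable, so the
    true correlation rho is multiplied by q_x * q_y.
    """
    q_x, q_y = math.sqrt(rxx), math.sqrt(ryy)
    return rho * q_x * q_y


rho = 0.50
r_obs = expected_observed_r(rho, rxx=0.80, ryy=0.80)
print(f"expected observed r = {r_obs:.2f}")                # 0.40
print(f"relative underestimate = {1 - r_obs / rho:.0%}")   # 20%
```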

Impacts of measurement error variance in meta-analysis

Measurement error variance will impact the results of meta-analyses in three ways: by (a) biasing the mean effect size toward zero, (b) inflating effect-size heterogeneity and confounding moderator effects, and (c) confounding publication-bias and sensitivity analyses.

Mean effect size

When constructs are measured with random error, the mean effect size in a meta-analysis of relations between measures of these constructs will be biased toward the null (i.e., toward zero for r or d values). The amount of this null bias in the mean effect size is a function of mean reliability across studies. For example, if the true mean correlation between neuroticism and life satisfaction across 30 studies,

Effect-size heterogeneity and moderator effects

Measurement error variance can also bias estimates of the between-studies heterogeneity random-effects variance component (i.e., τ² in Hedges-Vevea notation;

Conversely, measurement error variance can also obscure true moderator effects that do exist. Measurement error variance will increase the standard errors of mean effect sizes in subgroup moderator analyses and moderator regression coefficients in meta-regression. These larger standard errors might lead meta-analysts to retain the null hypothesis of no moderator effects.

Publication-bias and sensitivity analyses

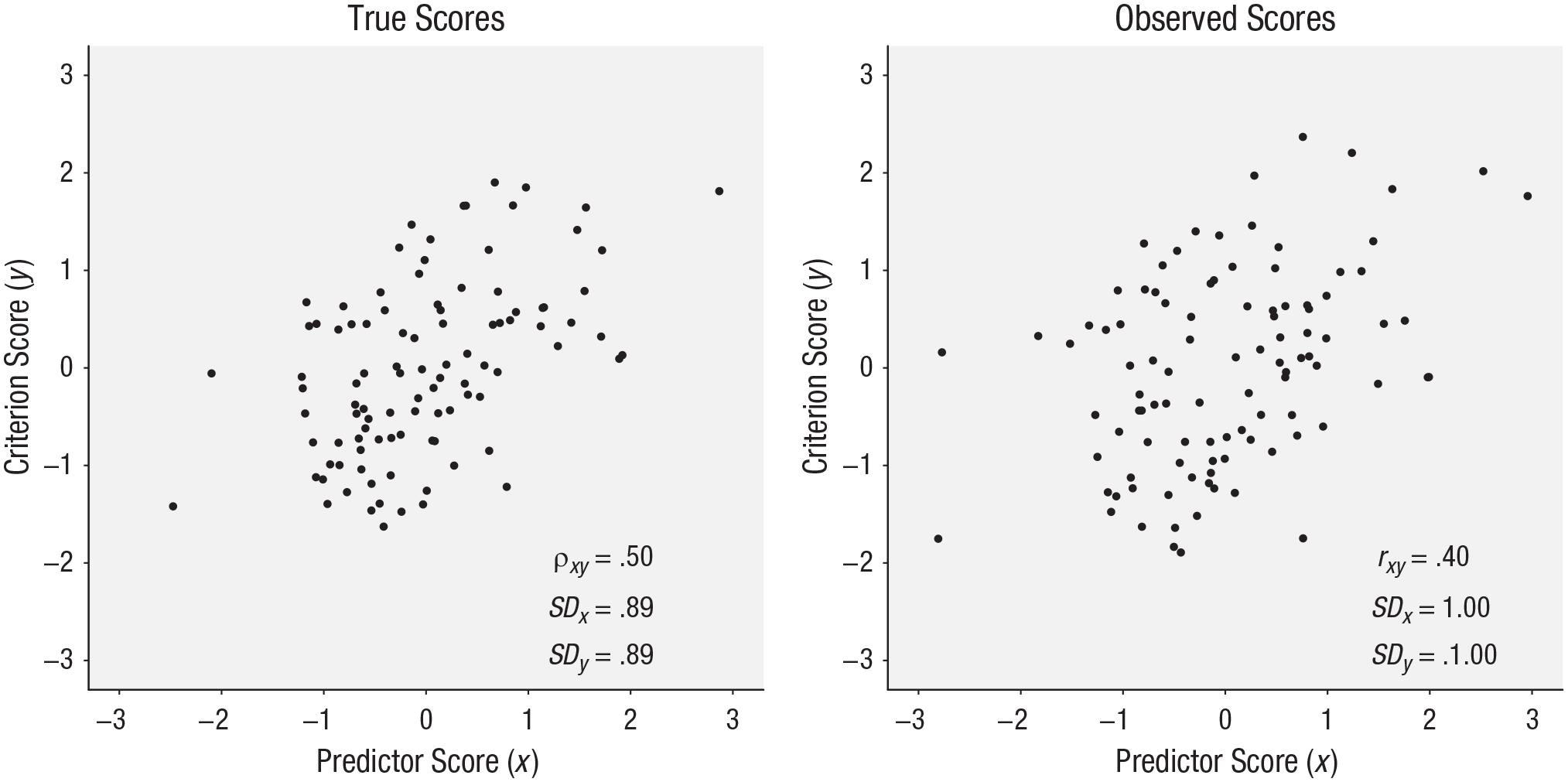

Measurement error variance can also cause publication-bias analyses to suggest the presence of bias when none exists. For example, consider a case in which meta-analysts compare published with unpublished studies and find that published studies have larger effect sizes (e.g.,

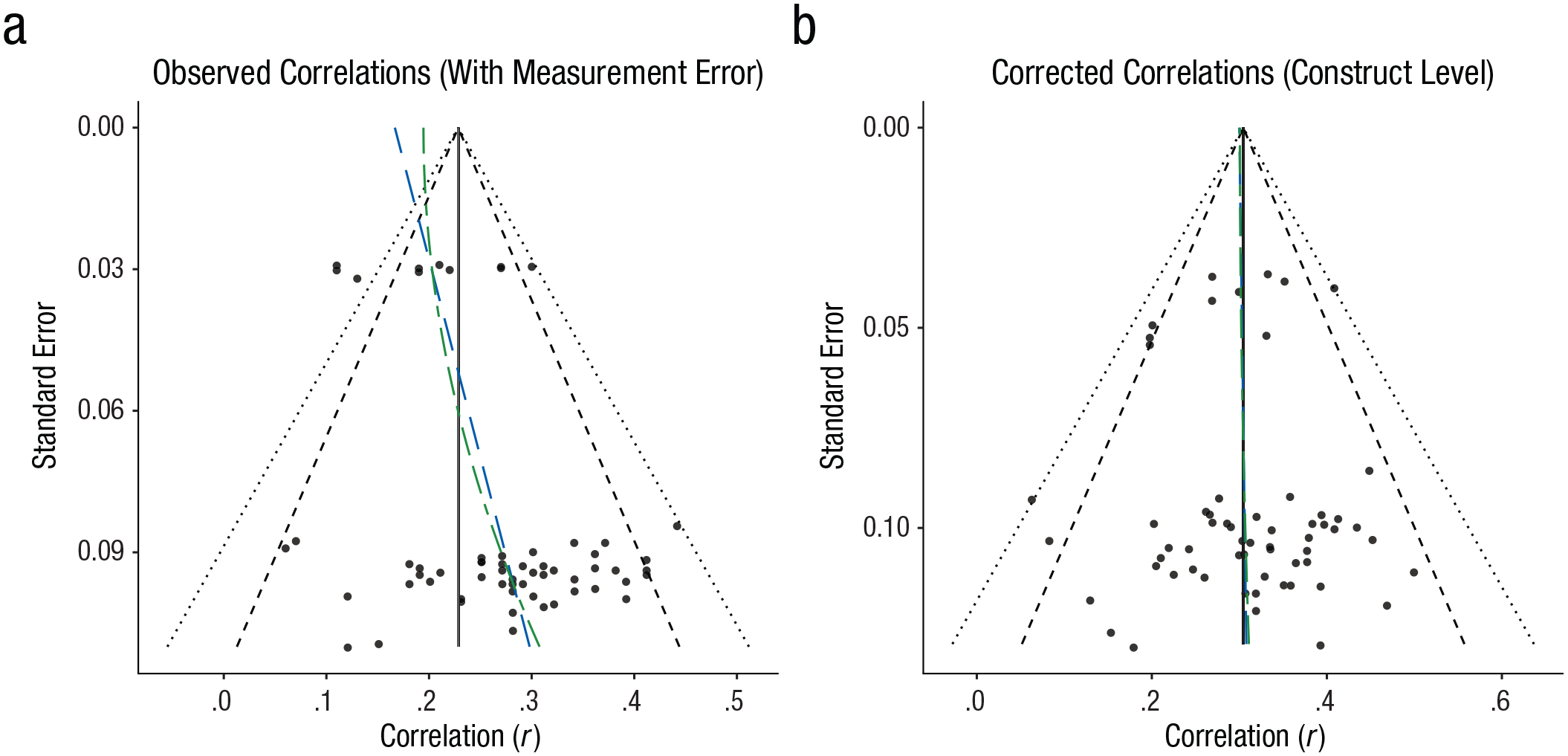

More seriously, cumulative meta-analysis, the precision-effect test and precision-effect-estimate-with-standard-errors estimator (PET-PEESE), selection models, and other procedures for correcting for publication bias will provide accurate results only if the included studies have equal reliability or are corrected for measurement error variance (Carter, Schönbrodt, Gervais, & Hilgard, 2017; Schmidt & Hunter, 2015). If measure reliability is correlated with sample size, then small studies may have larger effect sizes for reasons other than publication bias. For example, a meta-analysis might find that smaller-N studies show a larger relationship between personality traits and mental-health outcomes. However, if these smaller studies used longer, more reliable personality scales and the larger studies used less reliable, ultrashort scales (cf. Credé, Harms, Niehorster, & Gaye-Valentine, 2012), then this small-sample effect reflects differences in measurement quality, not publication bias. This effect is illustrated in Figure 3. The funnel plot for observed correlations (Fig. 3a) is asymmetric. Fitting PET and PEESE regression models (see Carter et al., 2017, for details on computation and interpretation) shows a substantial relation of effect size with either the standard error (PET: bSEi = 1.19, 95% confidence interval [CI] = [0.53, 1.86]) or the sampling error variance (PEESE: bVi = 9.35, 95% CI = [4.04, 14.66]). These results would typically be interpreted as indicating at least some effect-size inflation due to publication bias or questionable research practices. In contrast, the funnel plot for corrected correlations (Fig. 3b) is more symmetric, and the PET and PEESE models show negligible relations of effect size with either the standard error (PET: bSEi = 0.06, 95% CI = [−0.60, 0.73]) or the sampling variance (PEESE: bVi = 0.62, 95% CI = [−3.78, 5.01]).

Figure 3. Illustration of biasing effects of differential reliability on publication-bias analyses: (a) funnel plot of the relation between observed correlation effect sizes and standard error in published reports and (b) funnel plot of the same data after the correlations have been corrected for measurement error variance. Black vertical lines indicate mean effect sizes; black dashed lines indicate 95% confidence regions, and black dotted lines indicate 99% confidence regions. Blue long-dashed lines are precision-effect-test (PET) regression lines. Green dashed lines are precision-effect-estimate-with-standard-errors (PEESE) regression lines. The true correlations between the constructs have a mean,

In short, differential reliability can distort inferences about publication bias by creating spurious relations between effect sizes and their standard errors (or other precision metrics), or by weakening or distorting real ones.
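The mechanism can be reproduced with a minimal Python sketch. It fits the PET (effect size on standard error) and PEESE (effect size on sampling variance) regressions by inverse-variance-weighted least squares and reports point estimates only, without the confidence intervals a full analysis would include; dedicated meta-analysis software would be used in practice. The studies are hypothetical expected values (not the data behind Figure 3): every study has the same true correlation of .30, there is no publication bias, and smaller studies used more reliable scales. The sampling variance of r uses the common large-sample approximation (1 − r²)²/(n − 1), and corrected variances are divided by the squared attenuation factor. All function names are hypothetical.

```python
import math


def wls_line(x, y, w):
    """Weighted least-squares intercept and slope for y = b0 + b1 * x."""
    sw = sum(w)
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    b1 = sxy / sxx
    return ybar - b1 * xbar, b1


def pet_peese(effects, variances):
    """(intercept, slope) for PET (on SE) and PEESE (on variance), weights 1 / variance."""
    se = [math.sqrt(v) for v in variances]
    w = [1 / v for v in variances]
    return {"PET": wls_line(se, effects, w), "PEESE": wls_line(variances, effects, w)}


def r_sampling_variance(r, n):
    """Large-sample approximation to the sampling variance of a correlation."""
    return (1 - r ** 2) ** 2 / (n - 1)


rho = 0.30
ns = [40, 60, 80, 150, 300, 600]
attenuation = [0.90, 0.88, 0.85, 0.75, 0.65, 0.60]  # sqrt(rxx * ryy); small studies more reliable
r_obs = [rho * a for a in attenuation]
v_obs = [r_sampling_variance(r, n) for r, n in zip(r_obs, ns)]
r_c = [r / a for r, a in zip(r_obs, attenuation)]
v_c = [v / a ** 2 for v, a in zip(v_obs, attenuation)]

obs, corr = pet_peese(r_obs, v_obs), pet_peese(r_c, v_c)
print("observed  slopes:", obs["PET"][1], obs["PEESE"][1])    # positive: looks like small-study bias
print("corrected slopes:", corr["PET"][1], corr["PEESE"][1])  # essentially zero
```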

Correcting for measurement error variance

As noted earlier, measurement error poses a serious threat to the accuracy of conclusions drawn from primary studies and meta-analyses. Researchers are well advised to reduce these biases by using more reliable scales. However, measurement error variance can also be statistically corrected post hoc to estimate the true relation between latent constructs, without measurement error variance. In this section, we discuss impacts of measurement error variance on correlations and group comparisons, and present methods for correcting for measurement error variance for different research designs.

Applying corrections for measurement error variance will generally yield less biased estimates of relationships between constructs, particularly in meta-analyses, but corrections for measurement error do not come without a cost. Applying statistical corrections increases the sampling error in the corrected effect sizes, leading to larger standard errors and wider confidence intervals. It is always better to reduce measurement error by using more reliable measurement procedures during data collection, rather than to rely on statistical corrections after the fact (Oswald, Ercan, McAbee, Ock, & Shaw, 2015).

Correcting for measurement error variance in correlations

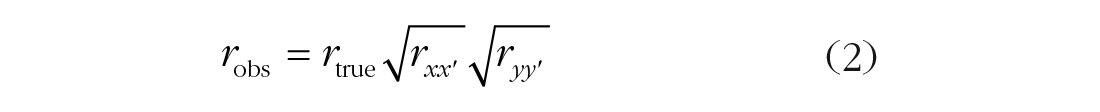

When a meta-analysis cumulates correlation coefficients, each correlation will be attenuated by measurement error variance in both variables. This is the case for both psychological scales (e.g., personality measures) and other variables (e.g., course grades, self-reported eating behavior, objectively measured exercise behavior, clinician-rated symptoms). Even demographic variables are subject to measurement error variance, arising from, for example, incorrectly rounded ages, mismarked responses, or responses that change depending on whether respondents are allowed to endorse multiple races or ethnicities or to endorse a nonbinary gender. The amount of attenuation in a correlation is a multiplicative function of the square roots of the reliabilities of the two measures:

$$ r_{xy} = \rho_{xy} \sqrt{r_{xx'}} \sqrt{r_{yy'}} = \rho_{xy}\, q_x q_y $$

To correct for this attenuation due to measurement error variance, divide the observed correlation by the product of the square roots of the reliabilities (Spearman, 1904):⁴

$$ r_c = \frac{r_{xy}}{\sqrt{r_{xx'}} \sqrt{r_{yy'}}} \tag{3} $$

This formula is analogous to estimating the correlation between latent variables in a structural equation model when the specified model has simple structure (Westfall & Yarkoni, 2016).
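A minimal Python sketch of this disattenuation formula follows (function name hypothetical). It also illustrates the cost noted earlier: if the reliabilities are treated as fixed, known constants, the correction divides the sampling error by the same attenuation factor, A = √rxx′ √ryy′, so the corrected estimate has a larger standard error. The observed standard error of .05 in the usage line is a hypothetical value.

```python
import math


def correct_r_for_unreliability(r_obs, rxx, ryy, se_obs=None):
    """Spearman (1904) correction: divide r_obs by sqrt(rxx) * sqrt(ryy).

    If se_obs is given, also return the standard error of the corrected value,
    treating the reliabilities as known constants (se_obs / attenuation factor).
    """
    if not (0 < rxx <= 1 and 0 < ryy <= 1):
        raise ValueError("reliabilities must be in (0, 1]")
    attenuation = math.sqrt(rxx) * math.sqrt(ryy)
    r_c = r_obs / attenuation
    if se_obs is None:
        return r_c
    return r_c, se_obs / attenuation


# Undo the attenuation in the recycling example (observed r = .40, both reliabilities = .80);
# se_obs = .05 is a hypothetical observed standard error.
r_c, se_c = correct_r_for_unreliability(0.40, 0.80, 0.80, se_obs=0.05)
print(f"corrected r = {r_c:.2f}, SE = {se_c:.4f}")  # 0.50, 0.0625
```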

Correcting for measurement error variance in group-comparison research

Measurement error in the dependent variable

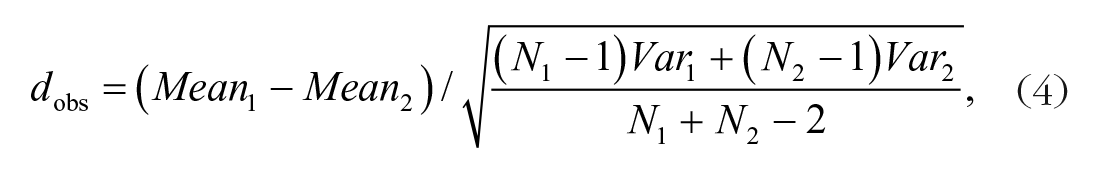

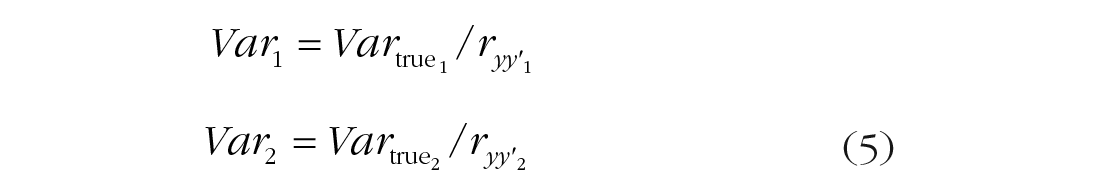

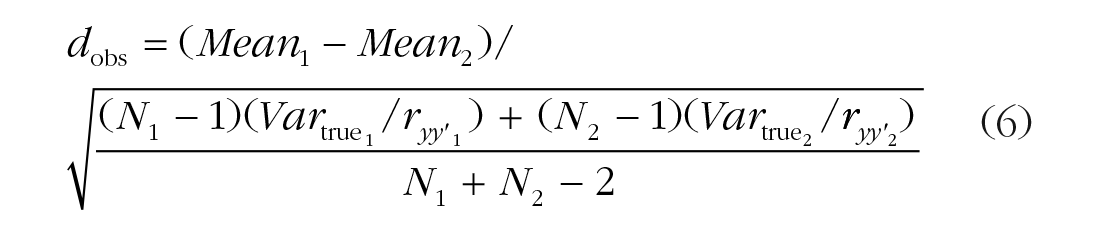

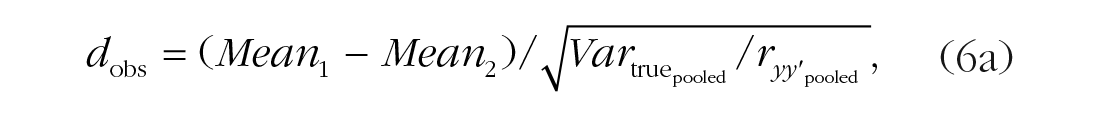

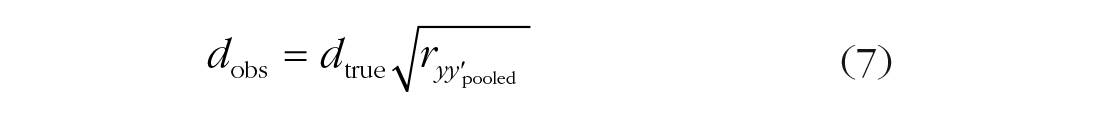

Measurement error variance is also an issue in group-comparison research (e.g., studies of gender differences or studies comparing people with and without a psychological diagnosis). In these studies, measurement error variance in the dependent variable has an effect on the standardized mean difference (Cohen’s d) that is similar to the effect of measurement error variance on correlations. For groups of sizes n1 and n2, Cohen’s d is calculated as

$$ d = \frac{\bar{Y}_1 - \bar{Y}_2}{\sqrt{\dfrac{(n_1 - 1)\,\mathrm{Var}_1 + (n_2 - 1)\,\mathrm{Var}_2}{n_1 + n_2 - 2}}} \tag{4} $$

where Var1 and Var2 are the variances for Groups 1 and 2, respectively. The value under the square-root operator in Equation 4 is the weighted average within-group variance (Varpooled), so the equation can be written more simply as

$$ d = \frac{\bar{Y}_1 - \bar{Y}_2}{\sqrt{\mathrm{Var}_{pooled}}} $$

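Random measurement error in the dependent variable does not affect the difference between group means, but it does increase the magnitude of the within-group variances:

$$ \mathrm{Var}_{pooled,obs} = \frac{\mathrm{Var}_{pooled,true}}{r_{yy'}} $$

where ryy′ is the within-group reliability of the dependent variable.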

Note that dividing by

where

Measurement error variance in the dependent variable can be corrected using a correction similar to that for correlations:⁵

$$ d_c = \frac{d_{obs}}{\sqrt{r_{yy'}}} $$
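The following Python sketch computes Cohen's d from summary statistics with the pooled within-group variance and then divides it by the square root of a within-group reliability, dc = dobs / √ryy′. The group statistics and the reliability of .64 are hypothetical, as are the function names.

```python
import math


def cohens_d(mean1, mean2, var1, var2, n1, n2):
    """Cohen's d: mean difference divided by the pooled within-group SD."""
    var_pooled = ((n1 - 1) * var1 + (n2 - 1) * var2) / (n1 + n2 - 2)
    return (mean1 - mean2) / math.sqrt(var_pooled)


def correct_d_for_dv_unreliability(d_obs, ryy_within):
    """Disattenuate d for random error in the dependent variable.

    ryy_within must be a within-group reliability: random error inflates the
    within-group variance by 1 / ryy_within, which shrinks d by sqrt(ryy_within).
    """
    return d_obs / math.sqrt(ryy_within)


# Hypothetical summary statistics for two groups of 50
d_obs = cohens_d(10.5, 10.0, 1.0, 1.0, 50, 50)
d_c = correct_d_for_dv_unreliability(d_obs, ryy_within=0.64)
print(f"observed d = {d_obs:.3f}, corrected d = {d_c:.3f}")  # 0.500, 0.625
```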

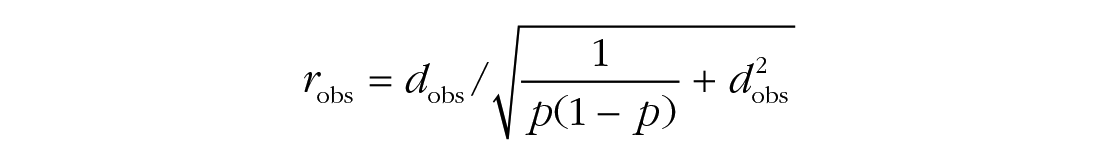

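If estimates of within-group reliability are not available but an estimate of total-sample reliability (ryy′ computed with both groups combined) is, use a three-step approach. First, convert dobs to the point-biserial correlation robs:

$$ r_{obs} = \frac{d_{obs}}{\sqrt{d_{obs}^2 + \dfrac{1}{p(1-p)}}} \tag{9} $$

where p is the proportion of the sample in one group. Second, correct robs using Equation 3, treating group membership as measured without error:

$$ r_c = \frac{r_{obs}}{\sqrt{r_{yy'}}} $$

Third, convert rc back to dc:

$$ d_c = \frac{r_c}{\sqrt{p(1-p)(1-r_c^2)}} \tag{10} $$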

This three-step approach is also used to correct d values for other statistical artifacts, as we discuss later in this article.
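The three steps (convert d to a point-biserial r, disattenuate r, convert back to d) can be sketched in Python as follows. The sketch assumes the reliability being corrected for is a total-sample (combined-group) estimate and that group membership is measured without error; the function names and example values are hypothetical.

```python
import math


def d_to_r(d, p):
    """Convert d to a point-biserial r; p is the proportion of the sample in one group."""
    return d / math.sqrt(d ** 2 + 1 / (p * (1 - p)))


def r_to_d(r, p):
    """Convert a point-biserial r back to d (inverse of d_to_r for the same p)."""
    return r / math.sqrt(p * (1 - p) * (1 - r ** 2))


def correct_d_total_sample_reliability(d_obs, ryy_total, p):
    """Three-step correction using a total-sample reliability estimate."""
    r_obs = d_to_r(d_obs, p)              # Step 1: d -> r
    r_c = r_obs / math.sqrt(ryy_total)    # Step 2: disattenuate r for DV unreliability only
    return r_to_d(r_c, p)                 # Step 3: r -> d


d_c = correct_d_total_sample_reliability(d_obs=0.50, ryy_total=0.80, p=0.5)
print(f"corrected d = {d_c:.3f}")  # 0.563
```

Because d and r are converted with the same p in both directions, the conversions are exact inverses; only Step 2 changes the effect size.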

Group misclassification

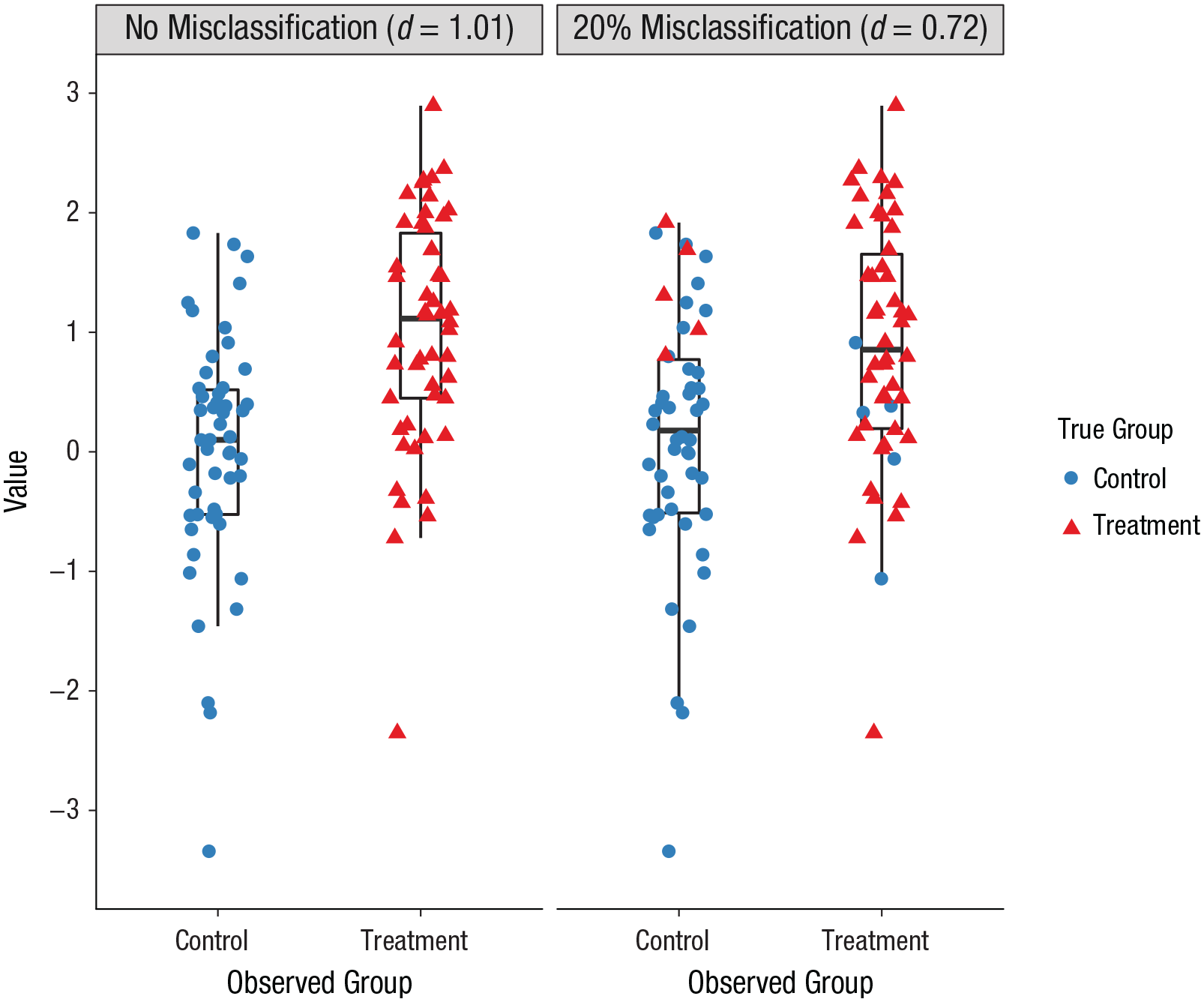

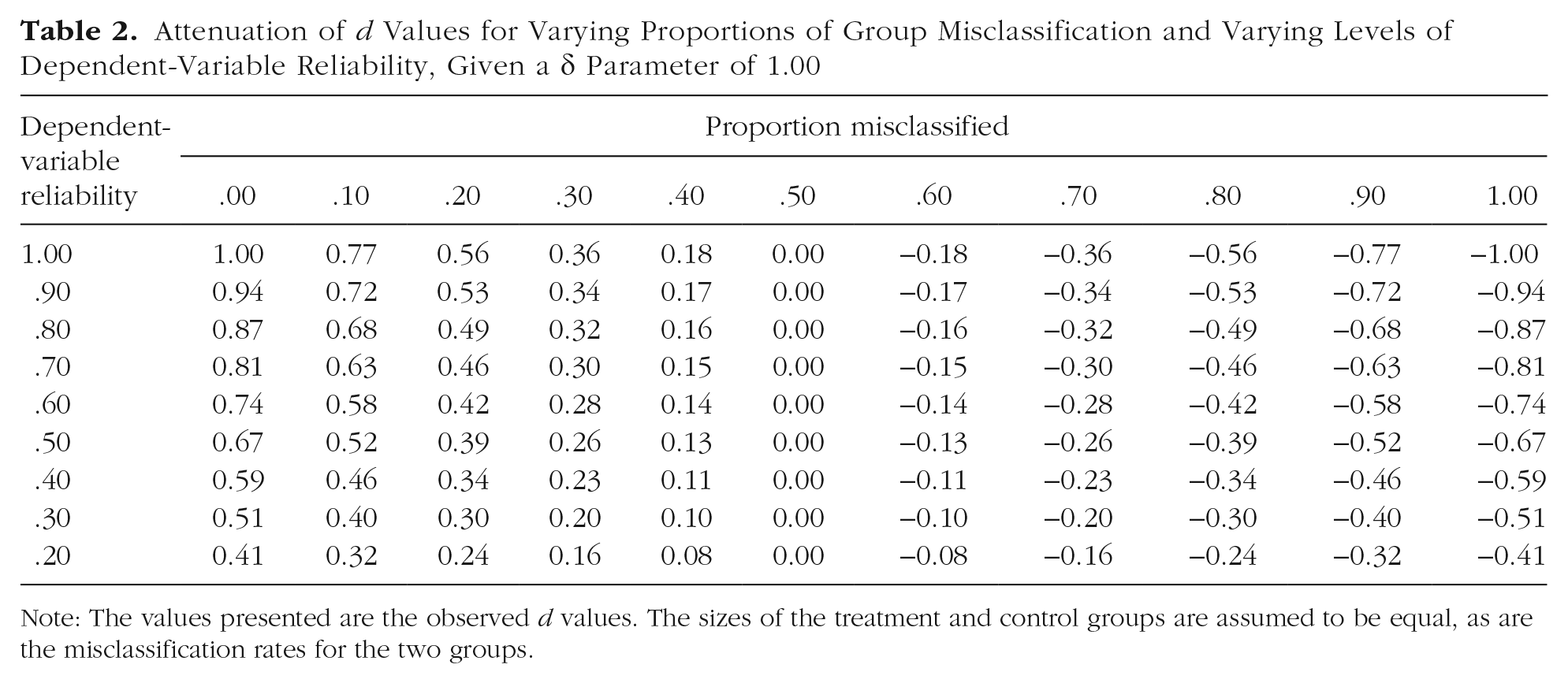

Measurement error variance can also be present in the independent variable used in group comparisons. This occurs when individuals are misclassified as being a member of one group when they are actually members of another group (Wacholder, Hartge, Lubin, & Dosemeci, 1995). For example, in a study comparing coping behaviors of people with and without a specific disorder, some participants in the no-disorder group may actually have the disorder but be undiagnosed. Similarly, in a study comparing substance users with nonusers, some participants may be unwilling to respond honestly when asked about their substance use. The impact of misclassification on observed d values is illustrated in the simulated data in Figure 4. In this case, a misclassification rate of 20% reduced the magnitude of the group difference from a true d value of 1.01 to an observed d value of only 0.72, a reduction by .29, or 28.7%. Expected magnitudes of attenuation in d values caused by differing degrees of misclassification and varying levels of dependent-variable reliability are shown in Table 2.

Figure 4. Illustration of the impact of misclassification on d values. Even with no measurement error variance in the dependent variable, a misclassification rate of 20% (10% of participants in each group misclassified) leads to a nearly 30% reduction in the observed d value.

Table 2. Attenuation of d Values for Varying Proportions of Group Misclassification and Varying Levels of Dependent-Variable Reliability, Given a δ Parameter of 1.00

Note: The values presented are the observed d values. The sizes of the treatment and control groups are assumed to be equal, as are the misclassification rates for the two groups.

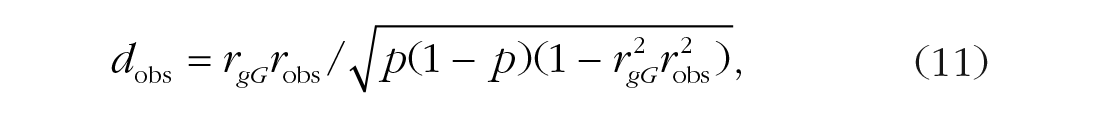

The impact of group misclassification on d values is a function of rgG, the correlation between observed group membership (g) and actual group membership (G):⁶

$$ r_{obs} = r_{gG}\, \rho $$

where robs is the point-biserial correlation calculated using Equation 9 and ρ is the correlation between actual group membership and the dependent variable.

To correct a d value for group misclassification, use the three-step procedure described earlier (cf. Schmidt & Hunter, p. 262). First, convert dobs to robs using Equation 9. Second, correct robs using the same disattenuation logic described in Equation 3:

$$ r_c = \frac{r_{obs}}{r_{gG}} \tag{12} $$

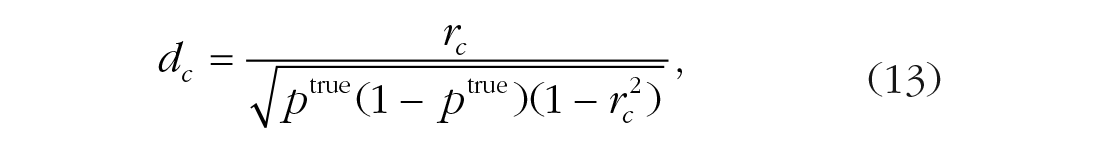

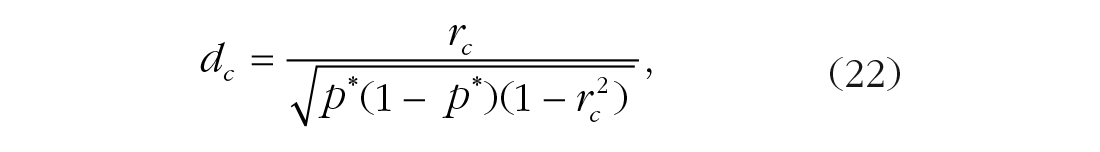

Note that robs is divided by rgG itself, not by the square root of rgG, because rgG is analogous to the square root of the reliability (i.e., the correlation between the measured variable and the latent construct). Third, convert rc back to dc using a variant of Equation 10:⁷

$$ d_c = \frac{r_c}{\sqrt{p_{true}(1-p_{true})(1-r_c^2)}} $$

where ptrue is an estimate of the true group proportion (without misclassification). If ptrue is unknown, the observed group proportion, p, can be used with the assumption that misclassification is equal across groups.
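A Python sketch of this misclassification correction follows (function names hypothetical). The usage check attenuates a true d of 1.00 with rgG = .80, the value implied when 10% of each of two equal-sized groups is misclassified, and then corrects it back. Because it uses expected values rather than simulated data, the observed d differs somewhat from the sample value in Figure 4.

```python
import math


def d_to_r(d, p):
    """Convert d to a point-biserial r (p = proportion of the sample in one group)."""
    return d / math.sqrt(d ** 2 + 1 / (p * (1 - p)))


def r_to_d(r, p):
    """Convert a point-biserial r back to d."""
    return r / math.sqrt(p * (1 - p) * (1 - r ** 2))


def correct_d_for_misclassification(d_obs, r_gG, p_obs, p_true=None):
    """Three-step correction of d for group misclassification.

    r_obs is divided by r_gG itself (not its square root). If p_true is unknown,
    p_obs is used, which assumes misclassification is equal across groups.
    """
    if p_true is None:
        p_true = p_obs
    r_obs = d_to_r(d_obs, p_obs)
    r_c = r_obs / r_gG
    return r_to_d(r_c, p_true)


d_true, r_gG, p = 1.00, 0.80, 0.5
d_obs = r_to_d(d_to_r(d_true, p) * r_gG, p)  # expected attenuated d
print(f"observed d = {d_obs:.2f}")  # about 0.77
print(f"corrected d = {correct_d_for_misclassification(d_obs, r_gG, p):.2f}")  # 1.00
```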

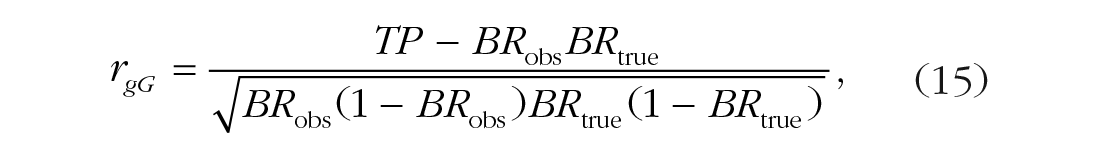

You can estimate rgG by conducting a study to quantify the accuracy of observed group classifications. For example, in the case of diagnosis for a disorder, epidemiological data could be consulted to estimate rates of overdiagnosis and underdiagnosis of the disorder. For the example of substance users, self-report accuracy could be assessed using chemical drug tests in a subset of the sample (or in a similar external sample). With this information, rgG is computed as the phi coefficient between observed and actual group membership:

$$ r_{gG} = \frac{TP - BR_{obs}\, BR_{true}}{\sqrt{BR_{obs}(1 - BR_{obs})\, BR_{true}(1 - BR_{true})}} $$

where TP is the proportion of true positives (individuals correctly assigned to one of the two groups) in the total sample, BRobs is the base-rate proportion of the sample observed to be part of that group, and BRtrue is the base-rate proportion of the sample actually part of that group.

If the group sizes and misclassification rates are similar for the two groups, this correlation can be approximated from the proportion of correctly classified individuals in the full sample, pcorrect:

$$ r_{gG} \approx 2\,p_{correct} - 1 $$

When the group sizes or misclassification rates for the two groups are uneven, this approximation will overestimate rgG and thus result in undercorrection of the d value for misclassification. This undercorrection can be severe when the group sizes differ greatly (Chicco, 2017). In these cases, computing rgG directly as the phi coefficient will be more accurate.
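The difference between the exact phi coefficient and the accuracy-based approximation (2 × proportion correctly classified − 1) can be checked with the Python sketch below. The function names are hypothetical, and the second scenario (a condition with a base rate of .10, half of true cases missed, no false positives) is an illustrative assumption.

```python
import math


def r_gG_phi(tp, br_obs, br_true):
    """Phi correlation between observed (g) and actual (G) group membership.

    tp: proportion of the total sample observed in the focal group AND actually in it.
    br_obs, br_true: observed and actual base rates of the focal group.
    """
    num = tp - br_obs * br_true
    den = math.sqrt(br_obs * (1 - br_obs) * br_true * (1 - br_true))
    return num / den


def r_gG_approx(tp, br_obs, br_true):
    """Approximation from overall accuracy: 2 * (proportion correctly classified) - 1."""
    true_negatives = 1 - br_obs - br_true + tp
    accuracy = tp + true_negatives
    return 2 * accuracy - 1


# Equal groups, 10% of each group misclassified: both give about .80
print(r_gG_phi(0.45, 0.50, 0.50), r_gG_approx(0.45, 0.50, 0.50))
# Rare condition: the approximation (0.9) overestimates phi (0.688)
print(round(r_gG_phi(0.05, 0.05, 0.10), 3), round(r_gG_approx(0.05, 0.05, 0.10), 3))
```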

Correcting for measurement error variance in experimental research

The same principles described for observational research apply to group differences calculated in experimental and treatment research. Measurement error variance in both the manipulation or intervention and the dependent variable will attenuate observed effect sizes.

Measurement error variance in the dependent variable

In experimental research, dependent-variable constructs can be measured in a variety of ways, including responses to scales or questionnaires, observers’ ratings of participants’ behaviors, or performance on trials of behavioral tasks. Each of these methods can produce multiple types of random measurement error that can be accounted for by specific types of reliability coefficients.

Measurement error variance in the dependent variable: scales or questionnaires

If the dependent variable is participants’ responses to a scale or questionnaire, reliability can be estimated using internal consistency (α, ω, test-information-based reliability from item response theory, etc.), parallel-forms reliability, or test-retest reliability for the scale, depending on which sources of measurement error variance are most important (see Choosing an Appropriate Reliability Estimate for Corrections). To correct for unreliability, calculate the reliability coefficient within each group, then compute the sample-size-weighted average within-group reliability, $\bar{r}_{yy'} = \sum_j n_j r_{yy'_j} / \sum_j n_j$, and use it to correct the d value.
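The averaging step is simple but easy to get wrong when group sizes differ. This Python sketch (function name and all values hypothetical) weights each group's reliability by its sample size and then divides an observed d by the square root of the result.

```python
import math


def weighted_mean_reliability(reliabilities, sample_sizes):
    """Sample-size-weighted average of within-group reliability coefficients."""
    total_n = sum(sample_sizes)
    return sum(r * n for r, n in zip(reliabilities, sample_sizes)) / total_n


# Hypothetical coefficient alpha values computed separately in each group
ryy_bar = weighted_mean_reliability([0.78, 0.84], [40, 60])
d_c = 0.45 / math.sqrt(ryy_bar)  # disattenuate a hypothetical observed d of 0.45
print(f"mean within-group reliability = {ryy_bar:.3f}, corrected d = {d_c:.3f}")
```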

Measurement error variance in the dependent variable: multiple trials

In experiments in which participants complete multiple trials of a task (e.g., several trials in a Stroop task) and group comparisons are made using participants’ average performance across trials, the key source of measurement error is random response error (Cooper, Gonthier, Barch, & Braver, 2017). For example, a participant may randomly have a somewhat longer reaction time on one trial than on the next. For these designs, reliability can be estimated using coefficient α (treating each trial as an item) or the Spearman-Brown-corrected split-half reliability, rSBSH:

$$ r_{SBSH} = \frac{2\, r_{h1h2}}{1 + r_{h1h2}} \tag{17} $$

where rh1h2 is the correlation, across participants, between participants’ mean performance on one half of the trials and their mean performance on the other half. The Spearman-Brown-corrected split-half reliability is useful because it can be calculated when participants complete varying numbers of trials (e.g., if only trials with correct responses are retained). It is also appropriate when different participants complete the trials in different orders and order or fatigue effects are a concern. Values of rSBSH can vary depending on how trials are split (e.g., odd vs. even, first half vs. last half), so rSBSH should be calculated by creating a large number of random splits (e.g., 5,000), calculating rh1h2 for each split, and then using the average rh1h2 across splits to compute rSBSH. The splithalf package in R (Parsons, Kruijt, & Fox, 2019) provides functions to facilitate calculating rSBSH for multitrial data.
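The random-split procedure can be sketched in Python as below; in R, the splithalf package is the practical option. This sketch is hypothetical (not that package's API): it shuffles each participant's trials, splits them in half, correlates half means across participants, averages that correlation over splits, and then applies the Spearman-Brown step-up, in that order, as described above. The usage data are simulated under assumed values (person SD 1, trial noise SD 2, 20 trials), for which the reliability of a participant's mean is 1 / (1 + 4/20) ≈ .83.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    return cov / math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))


def split_half_reliability(trials_by_person, n_splits=5000, seed=1):
    """Spearman-Brown-corrected split-half reliability averaged over random splits.

    trials_by_person: one list of trial scores per participant; lengths may differ.
    """
    if any(len(trials) < 2 for trials in trials_by_person):
        raise ValueError("each participant needs at least two trials")
    rng = random.Random(seed)
    rs = []
    for _ in range(n_splits):
        h1, h2 = [], []
        for trials in trials_by_person:
            shuffled = rng.sample(trials, len(trials))  # random split of this person's trials
            mid = len(shuffled) // 2
            h1.append(sum(shuffled[:mid]) / mid)
            h2.append(sum(shuffled[mid:]) / (len(shuffled) - mid))
        rs.append(pearson(h1, h2))
    r_h1h2 = sum(rs) / len(rs)            # average half-test correlation
    return 2 * r_h1h2 / (1 + r_h1h2)      # Spearman-Brown step-up


sim = random.Random(42)
people = []
for _ in range(200):
    true_score = sim.gauss(0, 1)
    people.append([true_score + sim.gauss(0, 2) for _ in range(20)])
rel = split_half_reliability(people, n_splits=500)
print(f"split-half reliability = {rel:.3f}")  # should be near .83
```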

If the dependent variable is the difference between participants’ average performance in two conditions (e.g., congruent vs. incongruent trials), then reliability must be estimated for these difference scores, not for the individual condition averages. Difference scores are generally much less reliable than the single-condition averages from which they are computed (Williams & Zimmerman, 1977). To calculate rSBSH for difference scores, divide the trials for each condition in half and calculate difference scores using these half-sets of trials (i.e., Dh1 = Ah1 − Bh1, where Ah1 is a participant’s mean performance on half the trials in Condition A, Bh1 is the participant’s mean performance on half the trials in Condition B, and Dh1 is the participant’s difference score for that half of the trials). Calculate rh1h2 as the correlation between the difference scores for the two halves of the trials (Dh1 and Dh2) and enter this value in Equation 17.
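A Python sketch of the difference-score version follows (function names hypothetical). Each condition's trials are split in half separately, half-difference scores are correlated across participants, and the averaged correlation is stepped up with the Spearman-Brown formula. The simulated data are an illustrative assumption: a large shared person level plus a small person-specific A − B effect. Passing all-zero Condition B trials reuses the same function to estimate the reliability of the Condition A means, which shows how much lower the difference-score reliability is.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    return cov / math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))


def difference_score_split_half(cond_a, cond_b, n_splits=5000, seed=1):
    """Random-split Spearman-Brown reliability of A - B difference scores.

    cond_a[i] and cond_b[i] hold participant i's trial scores in Conditions A and B.
    """
    rng = random.Random(seed)

    def halves(trials):
        shuffled = rng.sample(trials, len(trials))
        mid = len(shuffled) // 2
        return sum(shuffled[:mid]) / mid, sum(shuffled[mid:]) / (len(shuffled) - mid)

    rs = []
    for _ in range(n_splits):
        d1, d2 = [], []
        for a_trials, b_trials in zip(cond_a, cond_b):
            a1, a2 = halves(a_trials)
            b1, b2 = halves(b_trials)
            d1.append(a1 - b1)  # D_h1 = A_h1 - B_h1
            d2.append(a2 - b2)  # D_h2 = A_h2 - B_h2
        rs.append(pearson(d1, d2))
    r_h1h2 = sum(rs) / len(rs)
    return 2 * r_h1h2 / (1 + r_h1h2)


sim = random.Random(7)
n_people, n_trials = 150, 40
cond_a, cond_b = [], []
for _ in range(n_people):
    baseline = sim.gauss(0, 1)   # shared person level; cancels in the difference
    effect = sim.gauss(0, 0.3)   # person-specific A - B effect
    cond_a.append([baseline + effect + sim.gauss(0, 1) for _ in range(n_trials)])
    cond_b.append([baseline + sim.gauss(0, 1) for _ in range(n_trials)])

zeros = [[0.0] * n_trials for _ in range(n_people)]
rel_a = difference_score_split_half(cond_a, zeros, n_splits=200)      # Condition A means
rel_diff = difference_score_split_half(cond_a, cond_b, n_splits=200)  # A - B differences
print(f"Condition A mean: {rel_a:.2f}; A - B difference: {rel_diff:.2f}")
```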

To correct for measurement error variance in the experimental d value, calculate the reliability coefficient (α or rSBSH) within each experimental group, then compute the sample-size-weighted average within-group reliability, $\bar{r}_{yy'}$, and use it to correct d.

Measurement error variance in the dependent variable: single outcomes

If the dependent variable in an experiment is performance on a single-trial behavioral task (e.g., the length of time a participant persists in attempting to solve unsolvable anagrams, whether a participant donates to a charity, the amount of material recycled by an office during a month), a key question is how consistent performance on this task would be even if the treatment condition did not change. If performance would be inconsistent (i.e., there is a high degree of random response and transient error), the effect of the experimental manipulation on the construct underlying task performance will be underestimated. In these cases, researchers can estimate test-retest reliability by having members of the control group complete the measure again at a later time and using this within-group reliability value (ryy′) to correct the d value.

Measurement error variance in the dependent variable: observer ratings

If the dependent variable is observers’ ratings of participants’ behaviors (e.g., ratings of participants’ aggressive behaviors), the key source of measurement error variance is rater-specific error. In these cases, interrater reliability is the most appropriate method to correct for measurement error. Estimate interrater reliability using an appropriate intraclass correlation, ICC(1), based on the number of raters and the measurement design (see Putka, Le, McCloy, & Diaz, 2008, for a discussion of considerations for estimating interrater reliability, including the use of reliability estimates based on generalizability theory to account for ill-structured measurement designs). Calculate the reliability coefficient within each group and the sample-size-weighted average within-group reliability, $\bar{r}_{yy'}$, and use it to correct the d value.
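For the simplest case, a balanced design in which every participant is rated by the same number of raters (not necessarily the same people), the one-way random-effects intraclass correlations can be computed from between- and within-participant mean squares. The Python sketch below is an illustrative assumption, not a substitute for the design-specific estimators discussed by Putka et al. (2008); the function name and ratings are hypothetical. It returns ICC(1), the reliability of a single rater, and ICC(k), the reliability of the mean of k raters (equivalent to stepping ICC(1) up with the Spearman-Brown formula), which is the relevant value when the dependent variable is the averaged rating.

```python
def icc_oneway(ratings):
    """One-way random-effects ICCs for a balanced design.

    ratings: one list per participant, each with the same number k of ratings.
    Returns (ICC(1), ICC(k)).
    """
    n = len(ratings)
    k = len(ratings[0])
    if k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need the same number (>= 2) of ratings per participant")
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    ms_between = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, row_means) for x in row
    ) / (n * (k - 1))
    icc1 = (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)
    icck = (ms_between - ms_within) / ms_between
    return icc1, icck


# Hypothetical ratings: 4 participants, 3 raters each
icc1, icck = icc_oneway([[4, 5, 6], [2, 3, 4], [7, 8, 9], [1, 2, 3]])
print(f"ICC(1) = {icc1:.3f}, ICC(k) = {icck:.3f}")  # 0.870, 0.952
```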

Measurement error in experimental manipulations

Measurement error in an experimental manipulation can occur if participants differ in their attentiveness or responsiveness to the manipulation. For example, in a study using vignettes to study attributions of responsibility for accidents, some participants may not read the vignettes carefully and thus not be affected by differences in situational features across conditions. Similarly, in a study on responses to a confederate’s aggressive behaviors (as compared with responses to a confederate’s nonaggressive behaviors in a control condition), participants may differ in how they evaluate the behaviors. In such cases, members of the experimental group might effectively function as though they were actually members of the control group, or vice versa. In intervention or treatment studies, there may be differences in treatment strength across participants due to treatment noncompliance or logistical challenges. For example, in a study evaluating a mindfulness intervention in which members of the treatment group are supposed to complete a daily mindfulness exercise for 14 days, they may complete the exercise only on some of the intended days, so that received dosage varies within the treatment group. Treatment measurement error variance might also occur if members of the control group are inadvertently exposed to the treatment. For example, in the mindfulness study, some control-group members might engage in their own mindfulness practice outside of the experiment.

Experimental researchers are often interested in the relationship of the dependent variable with the construct targeted by a manipulation or intervention (e.g., perceived aggression or mindfulness), rather than merely the impact of assigning participants to a treatment intended to influence this target construct. This distinction is analogous to the distinction in clinical research between treatment “as assigned” and treatment “as received” (Ten Have et al., 2008). If there is substantial within-group heterogeneity in attentiveness to, interpretation of, or compliance with a manipulation, this can strongly bias the experimental d value as an index of the relationship between the target construct and the dependent variable. If a valid manipulation check or compliance check is available, this bias can be estimated or corrected using several methods for experimental causal mediation analysis (Imai, Keele, & Tingley, 2010). For example, in the aggression study, participants could be asked to rate how aggressive they perceived the confederate’s behavior to be. In the mindfulness study, participants could be asked to report how often they engaged in any sort of mindfulness or meditation practice during the study period.

When a manipulation or compliance check is available, the most straightforward correction method is the instrumental-variable estimator (Angrist, Imbens, & Rubin, 1996). The reliability of treatment is estimated as rgM, the correlation between assigned group membership (g) and the measured manipulation or compliance check (M). To correct an experimental d value for measurement error variance due to differential attentiveness to or compliance with treatment, use the three-step procedure described earlier. First, convert dobs to robs using Equation 9. Second, correct robs using the same type of disattenuation formula described in Equation 12:

$$ r_c = \frac{r_{obs}}{r_{gM}} $$

Note that robs is divided by rgM itself, not its square root. Third, convert rc back to dc using Equation 10.
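The same three-step logic applies here, with rgM in place of rgG. In the Python sketch below (function names and data hypothetical), rgM is computed as the point-biserial correlation between assigned condition (coded 0/1) and manipulation-check scores, and a separate call corrects a hypothetical observed d of 0.30 using an assumed rgM of .60. The guard rejects corrections that push r outside (−1, 1), which signals an implausibly small rgM or a sampling-error problem.

```python
import math


def pearson(a, b):
    """Pearson correlation; with a 0/1 variable this is the point-biserial r."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    return cov / math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))


def d_to_r(d, p):
    return d / math.sqrt(d ** 2 + 1 / (p * (1 - p)))


def r_to_d(r, p):
    return r / math.sqrt(p * (1 - p) * (1 - r ** 2))


def correct_d_for_treatment_reliability(d_obs, r_gM, p):
    """Instrumental-variable-style correction: divide r_obs by r_gM itself."""
    r_c = d_to_r(d_obs, p) / r_gM
    if abs(r_c) >= 1:
        raise ValueError("corrected r is out of range; check r_gM")
    return r_to_d(r_c, p)


assigned = [0, 0, 0, 0, 1, 1, 1, 1]   # hypothetical assigned conditions
check = [2, 3, 3, 4, 4, 5, 6, 6]      # hypothetical manipulation-check scores
r_gM = pearson(assigned, check)
print(f"r_gM from data = {r_gM:.3f}")
print(f"corrected d = {correct_d_for_treatment_reliability(0.30, 0.60, 0.5):.3f}")  # 0.510
```

The correction is only as credible as the exclusion-restriction, monotonicity, and noninteraction assumptions listed next.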

The instrumental-variable estimator relies on three key assumptions (Imai et al., 2010; cf. McNamee, 2009; Mealli & Rubin, 2002):

The manipulation or compliance check fully accounts for the effect of the treatment on the dependent variable (i.e., the treatment effect is fully mediated through the manipulation check; this is the exclusion restriction assumption);

The treatment affects the manipulation or compliance check in the same direction for all participants (e.g., no one perceives the aggressive confederate as behaving less aggressively than the nonaggressive confederate; no participant assigned to the mindfulness intervention practices less mindfulness than they would have otherwise; this is the monotonicity assumption);

There is no interaction between the treatment and the manipulation check (e.g., the manipulation-check procedure does not enhance the treatment effect by clueing participants in on a concealed purpose of the study; Hauser, Ellsworth, & Gonzalez, 2018; this is the noninteraction assumption).

If these assumptions are unreasonable, other estimators with different assumptions can be used (Imai et al., 2010), but these are less readily applied in meta-analysis. 8

Choosing an appropriate reliability estimate for corrections

What are the major sources of measurement error variance?

As shown in Table 1, there are a variety of different types of reliability coefficients, sensitive to different sources of measurement error variance. When choosing a reliability coefficient to correct for measurement error, it is important to carefully consider which sources of error are likely to have had major impacts on the measures and to select a method that is sensitive to these sources (for overviews, see Revelle, 2009; Schmidt & Hunter, 1996; Schmidt et al., 2003). For example, in a study using a personality scale to predict supervisor-rated job performance, a major source of error will be the supervisors’ idiosyncratic beliefs and ability to observe; interrater reliability is thus an appropriate method to capture this source of error (Connelly & Ones, 2010; Viswesvaran, Ones, & Schmidt, 1996). In a study predicting life satisfaction, transient effects, such as participants’ mood or temporary circumstances, will be a major source of error; test-retest reliability is an appropriate method to capture this source of error (Green, 2003; Le, Schmidt, & Putka, 2009; Schmidt et al., 2003). Although internal-consistency statistics such as coefficient α are the most commonly reported reliability estimates, in many cases these will not capture critical sources of measurement error; when correlations are corrected for internal consistency alone, the true correlations between constructs can be substantially misestimated.
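To make the choice of coefficient concrete, the sketch below applies Spearman's disattenuation formula, r_c = r_obs / sqrt(rxx * ryy), to a single hypothetical validity coefficient, once using coefficient α and once using interrater reliability for the supervisor ratings. All numbers are invented for illustration; real values would come from the study or an appropriate external source.

```python
from math import sqrt

def disattenuate(r_obs, rxx, ryy):
    """Spearman's correction for measurement error in both variables."""
    return r_obs / sqrt(rxx * ryy)

r_obs = 0.20          # hypothetical observed validity of a personality scale
rxx_alpha = 0.80      # hypothetical coefficient alpha for the personality scale
# Two hypothetical reliability estimates for the supervisor performance ratings
ryy_by_method = {"coefficient alpha": 0.90, "interrater": 0.52}

for method, ryy in ryy_by_method.items():
    print(f"{method:>17}: r_c = {disattenuate(r_obs, rxx_alpha, ryy):.2f}")
```

Because interrater reliability captures rater idiosyncrasies that α ignores, the correction based on it is larger (about .31 versus .24 here).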

Internal-consistency methods can also be inappropriate even if content sampling error is a major concern. As an estimate of reliability, internal consistency assumes item homogeneity—that all items are indicators of the same underlying construct and that the reliability of items as indicators of this construct is reflected solely by the covariance among items, not the items’ unique variance. Some measures are heterogeneous composites of items with nonredundant content. For example, a biodata inventory designed to predict borderline personality disorder (BPD) might assess a diverse set of life experiences that are each related to BPD but not highly correlated with one another. Such a measure would have low internal consistency but could still be reliable as a measure of overall BPD risk due to life experiences. For these types of measures, parallel-forms reliability and test-retest reliability are more appropriate reliability estimators, provided the lag between measurements is long enough to account for transient effects yet short enough that participants do not change systematically (e.g., BPD symptoms could be mitigated by therapy between temporally distant testing occasions, which would undermine a reliability estimate). Short-form measures of personality traits and other constructs are related examples. Many short-form measures of constructs include items chosen to tap a construct broadly and with limited redundancy (Rammstedt & John, 2007; Yarkoni, 2010). By removing redundancy, these measures will show low internal consistency, but they can still show high convergent correlations with longer measures. For short-form measures, parallel-forms and test-retest reliability are also more appropriate methods to estimate reliability. 9
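The sketch below illustrates why a heterogeneous composite can have low internal consistency yet useful validity. It assumes standardized items with a common inter-item correlation and a common item-criterion correlation (both hypothetical), and computes standardized coefficient α and the validity of the unit-weighted sum of items.

```python
from math import sqrt

def standardized_alpha(k, mean_r):
    """Standardized coefficient alpha for k items with mean inter-item correlation mean_r."""
    return k * mean_r / (1 + (k - 1) * mean_r)

def composite_validity(k, mean_r, item_validity):
    """Correlation of a unit-weighted sum of k standardized items with a criterion,
    assuming every item correlates item_validity with that criterion."""
    composite_sd = sqrt(k + k * (k - 1) * mean_r)
    return k * item_validity / composite_sd

for label, mean_r in [("homogeneous scale", 0.40), ("heterogeneous composite", 0.05)]:
    a = standardized_alpha(10, mean_r)
    v = composite_validity(10, mean_r, item_validity=0.20)
    print(f"{label}: alpha = {a:.2f}, validity = {v:.2f}")
```

The heterogeneous composite has the lower α (about .34 versus .87) but the higher validity (about .53 versus .29). Dividing its validity by the square root of α would push the estimate to roughly .89, an overcorrection that arises from treating nonredundant content as error.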

What if reliability estimates are not available for a sample?

Researchers conducting a meta-analysis are likely to find that for many included studies, authors do not report reliability estimates for measures or experimental manipulations or do not report the most appropriate form of reliability (e.g., the authors report coefficient α rather than test-retest or interrater reliability; Flake, Pek, & Hehman, 2017; Fried & Flake, 2018). Even in primary studies, it is often not possible to estimate a relevant form of reliability with a specific sample. To correct for measurement error variance in these cases, it is necessary to draw an estimate from some other source. One approach is to draw reliability estimates from external sources, such as test manuals, previous meta-analyses, or previous studies using the same measure or manipulation. This approach is reasonable only if it can be assumed that the sample (or samples) in the present study and the external source (or sources) were drawn from the same underlying population (or at least comparable populations). Another approach is to conduct one or more new studies specifically for the purpose of estimating relevant reliability coefficients. For example, Beatty, Walmsley, Sackett, Kuncel, and Koch (2015) conducted a large-scale study to estimate reliability coefficients for university grade point averages that are specifically based on the number of courses taken. In meta-analyses, if reliability information is available for some studies but not others, artifact-distribution and reliability-generalization methods can also be used to address missing reliability data in individual studies (see Correcting for Artifacts in Meta-Analysis).

When should you correct for measurement error variance?

Whether and how to correct for measurement error variance is the subject of ongoing debate in many areas of psychology and other fields (Muchinsky, 1996; Schmidt & Hunter, 1996; cf. Schennach, 2016, for more favorable attitudes in econometrics). The appropriateness of correcting for measurement error variance depends on the nature of the research question. Are you interested in understanding measures or their underlying constructs? For example, if the goal of a study is to evaluate the diagnostic accuracy of a tool for practical use, correcting for measurement error variance in the criterion variable would be appropriate, but correcting for measurement error variance in the diagnostic tool would overestimate its practical utility because practitioners have access only to patients’ observed scores, not their standing on the underlying construct (Binning & Barrett, 1989). However, if the scientific goal of a study is to identify the nature of underlying constructs, relationships, and principles, then not correcting for measurement error variance can lead to highly inaccurate theoretical conclusions (Westfall & Yarkoni, 2016). Advancing scientific knowledge requires estimating measurement error variance and correcting for its impacts on research findings, especially in meta-analysis. For meta-analyses, even if the research question concerns observed measures, controlling for differences in reliability across studies is important to ensure accuracy of moderator and sensitivity analyses and to avoid erroneously interpreting differences across studies as substantive in origin (see Fig. 3).

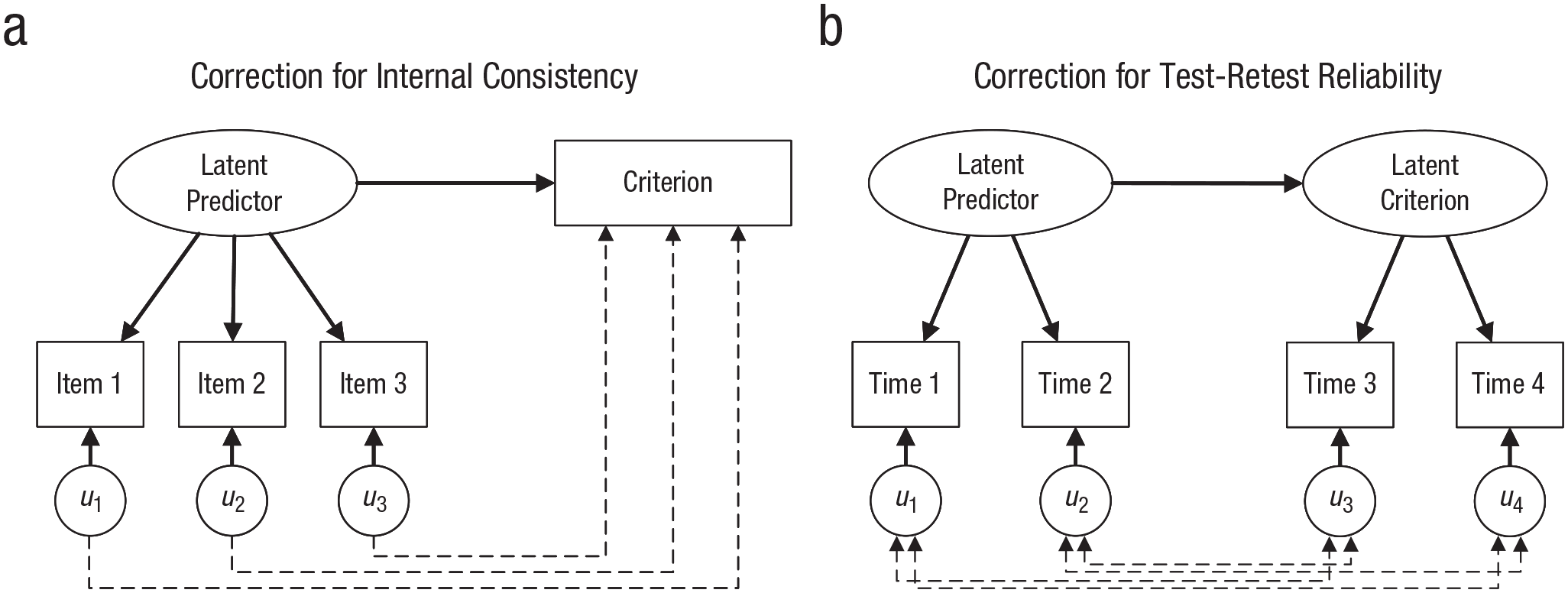

The accuracy of corrections for measurement error variance depends on whether the assumptions of the model for measurement error variance used in the corrections are reasonably met. One important assumption of the correction procedures we have described is that error scores for the independent variable are unrelated to the true scores for the dependent variable (and vice versa). A structural model illustrating this assumption for internal-consistency corrections is shown in Figure 5a. Correcting for internal consistency assumes that the dependent variable is predicted only by the interitem covariance of the independent variable (i.e., the variance the items have in common). If individual items in the independent variable have unique predictive power for the dependent variable, then the corrected correlation will either overestimate or underestimate the true correlation (Rhemtulla, van Bork, & Borsboom, 2019; cf. Putka, Hoffman, & Carter, 2014, for similar issues regarding interrater reliability). In these cases, correcting for test-retest reliability instead may yield a more accurate estimate of the true correlation between latent constructs, as the transient errors in observed measures are less likely to have unique predictive power.

Structural models illustrating the assumptions of Spearman’s disattenuation formula for two types of reliability coefficients: (a) internal-consistency coefficients (e.g., coefficient α, coefficient ω) and (b) the coefficient of stability (e.g., test-retest reliability). Variables labeled u are the unique variances associated with each item (internal consistency) or each occasion (test-retest reliability). Dashed lines indicate key assumed-zero paths.

This assumption can also be a particular concern for corrections for group misclassification. For example, in a study comparing impulsivity in substance-use patients who report resuming use with impulsivity among patients who report remaining sober, it may be that more impulsive patients are more likely to lie about resuming use. This will result in an underestimate of the correlation between relapse and impulsivity. In this case, the correction in Equation 12 will undercorrect, and the corrected correlation will still be a negatively biased estimate of the true relationship between these constructs.

A second important assumption of the procedures we have described is that error scores for the two variables are unrelated. If errors for the two variables are correlated, the corrections will overestimate the true relationship between the two constructs. Figure 5b illustrates this assumption for test-retest reliability corrections. If the predictor variable (e.g., a personality trait) and the criterion variable (e.g., subsequent life satisfaction) are measured at different times, then the two measures will not share transient errors, and correcting for test-retest reliability is appropriate. If the two variables are measured at roughly the same time, however, then the measures might share transient errors. In this case, the observed correlation between the two measures will reflect not only covariance between the latent constructs, but also covariance between the shared transient errors. For example, stressful life events might cause an employee to be more likely to endorse items measuring both anxiety and job burnout. If anxiety and job burnout are measured at the same time, such that responses to both sets of items are affected by the same transient events, this would inflate the observed correlation between these two variables. As a result, correcting using test-retest reliability will overestimate the true relationship between constructs. In this case, parallel-forms reliability or internal consistency would be a more appropriate reliability method to use in correcting for measurement error variance. 10

When deciding whether to correct for measurement error variance and which reliability estimate to use, meta-analysts and primary researchers must carefully consider whether these assumptions are reasonable for the study design and the chosen reliability method. Alternative methods for estimating true-score correlations without these assumptions are also possible depending on the specific research design (see, e.g., Charles, 2005; Schennach, 2016; Zimmerman, 2007).

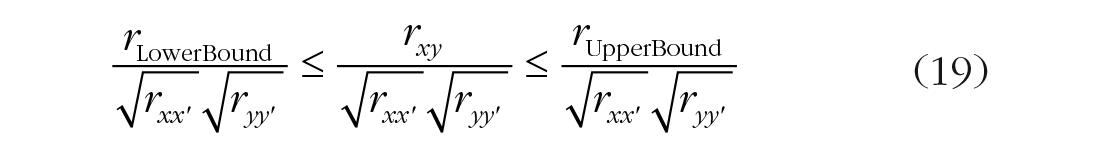

Finally, as noted previously, although corrections for measurement error variance can reduce bias in estimates of relationships between constructs, these corrections come with the cost of increased sampling error in the corrected effect sizes. The increased uncertainty in corrected effect sizes can be indexed by adjusting standard errors as shown in Table 3 or by applying the same correction to the endpoints of the effect-size confidence interval as is applied to the point estimate, for example,

$$\mathrm{CI}_c = \left[\frac{\mathrm{LL}_{obs}}{\sqrt{r_{xx}\,r_{yy}}},\ \frac{\mathrm{UL}_{obs}}{\sqrt{r_{xx}\,r_{yy}}}\right]$$

where LL and UL are the lower and upper limits of the observed confidence interval.

Formulas for Corrected Effect Sizes and Sampling Error Variances for Different Artifact-Correction Models

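Note: All reliability values in the formulas presented in this table are assumed to be estimated in the restricted sample (subject to selection effects). If the reliability values are estimated in samples from the unrestricted reference population, they should be adjusted using the following formula:

$$r_{xx_i} = 1 - \frac{1 - r_{xx_a}}{u_x^2}$$

where $r_{xx_a}$ is the reliability in the reference population, $r_{xx_i}$ is the corresponding reliability in the restricted sample, and $u_x$ is the u ratio for X.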

When an effect size is corrected for measurement error variance, corresponding corrections must always be applied to the standard error and confidence interval for the effect size.
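As a rough illustration of correcting confidence-interval endpoints, the sketch below computes a Fisher-z confidence interval for a hypothetical observed correlation and then divides the point estimate and both endpoints by the same attenuation factor, sqrt(rxx * ryy). The Fisher-z interval is our assumption about how the observed interval is obtained; the inputs are hypothetical.

```python
from math import atanh, sqrt, tanh

def fisher_ci(r, n, z_crit=1.959964):
    """Approximate 95% CI for a correlation via Fisher's z transformation."""
    z, se = atanh(r), 1 / sqrt(n - 3)
    return tanh(z - z_crit * se), tanh(z + z_crit * se)

r_obs, n = 0.30, 150            # hypothetical observed correlation and sample size
rxx, ryy = 0.80, 0.70           # hypothetical reliabilities
a = sqrt(rxx * ryy)             # attenuation factor applied to the point estimate

lo, hi = fisher_ci(r_obs, n)
r_c = r_obs / a
ci_c = (lo / a, hi / a)         # the same correction applied to each endpoint
print(f"observed r = {r_obs:.2f}, 95% CI [{lo:.3f}, {hi:.3f}]")
print(f"corrected r = {r_c:.3f}, 95% CI [{ci_c[0]:.3f}, {ci_c[1]:.3f}]")
```

The corrected interval is wider than the observed one, reflecting the added uncertainty that comes with the correction.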

Selection Effects

Most psychologists are familiar with the concept of measurement error variance, even if corrections for measurement error are uncommon in primary studies and meta-analyses. Awareness of the diverse types of selection effects, their impacts on study results, and the methods available to correct for them is much less widespread. 11 A selection effect is present when the relationship observed between two variables in a sample is not representative of the relationship in the target or reference population as a result of selection or conditioning on one or more variables (Sackett & Yang, 2000). In psychology, selection effects are most frequently discussed in terms of range restriction, that is, reduced variances of the variables in the sample relative to the target population. Range restriction biases effect sizes toward zero. However, this is only one of many possible effects of selection. Depending on the exact selection mechanism, selection effects can attenuate sample effect sizes, inflate them, or even reverse their direction (R. A. Alexander, 1990; Ree, Carretta, Earles, & Albert, 1994; Sackett, Lievens, Berry, & Landers, 2007). These diverse types of selection effects are variously called range restriction, range enhancement, range variation (Schmidt & Hunter, 2015), selection bias (L. K. Alexander, Lopes, Ricchetti-Masterson, & Yeatts, 2014b), and collider bias (Greenland, 2003; Rohrer, 2018).

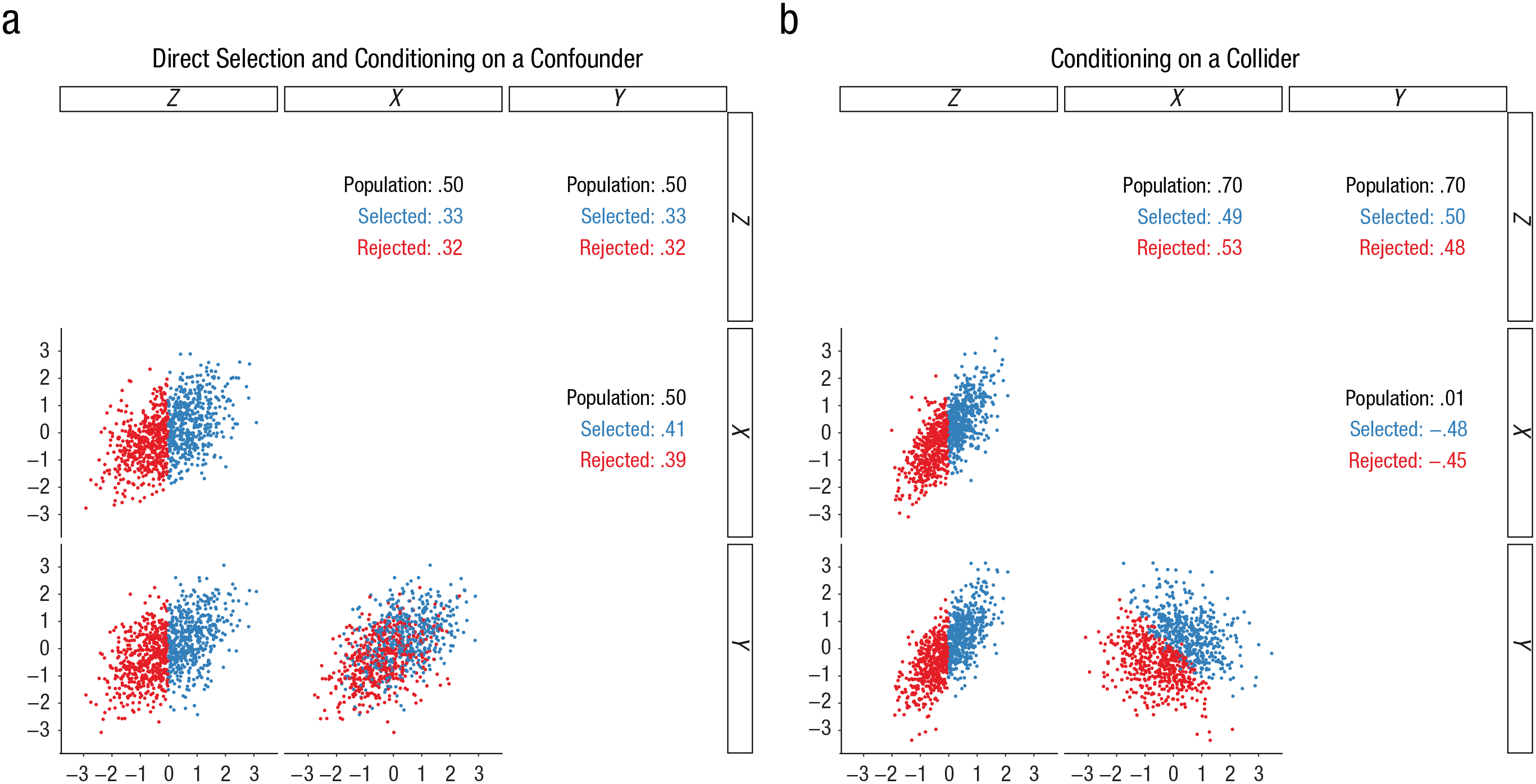

The impacts of selection effects on correlations are illustrated in Figure 6. Selection effects can be direct (i.e., when one or both of the variables in a relationship are directly selected on or truncated). For example, only severely ill patients might be referred to an in-patient treatment center. In such a setting, any relations between potential antecedents and symptoms will likely be reduced because of limited symptom variability. This scenario is illustrated in Figure 6a. The correlation, r, between Z and X is .50 in the population, but is reduced to only .33 if Z is directly restricted to only the top 50% of scores. Direct selection can also increase observed correlations through range enhancement. For example, if a researcher uses an extreme-groups design and compares outcomes for the top 25% and bottom 25% of scorers on an attitudes measure, the variance on attitudes is artificially inflated, and the relationship between attitudes and outcomes will be overestimated relative to the relationship in the full population (Preacher, Rucker, MacCallum, & Nicewander, 2005; Wherry, 2014, p. 50).

Impact of direct and indirect range restriction on correlations. In (a), X, Y, and Z are three distinct variables, and all have intercorrelations of .50. In (b), X and Y are uncorrelated, and Z is a composite of X and Y plus a small amount of error variance. In each panel, black correlations reflect the total population, blue correlations and data points reflect the selected group (the top 50% of scorers on Z), and red correlations and data points reflect the rejected group. Selected rXZ and rYZ are directly range restricted; selected rXY is indirectly range restricted.
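The Figure 6a values can be reproduced analytically under the assumption that X, Y, and Z are multivariate normal with linear, homoscedastic relations. The sketch computes the u ratio for top-50% truncation on Z, applies the Pearson-Lawley selection formulas to obtain the restricted correlations, and then reverses them with Thorndike's Case II (direct) and Case III (selection on a third variable) corrections. The function names are ours, not those of any package.

```python
from math import sqrt
from statistics import NormalDist

def truncation_u(selection_ratio):
    """u ratio for a standard normal variable truncated to its top `selection_ratio`."""
    nd = NormalDist()
    c = nd.inv_cdf(1 - selection_ratio)     # cut score on the z scale
    lam = nd.pdf(c) / selection_ratio       # mean z score of the selected group
    return sqrt(1 + c * lam - lam ** 2)     # SD of the truncated normal

def restrict_direct(rho, u):
    """Correlation after direct selection on one of the two variables."""
    return rho * u / sqrt(1 - rho ** 2 * (1 - u ** 2))

def restrict_indirect(rho_xy, rho_xz, rho_yz, u_z):
    """Correlation of X and Y after direct selection on a third variable Z."""
    s = 1 - u_z ** 2
    cov = rho_xy - rho_xz * rho_yz * s
    return cov / sqrt((1 - rho_xz ** 2 * s) * (1 - rho_yz ** 2 * s))

def correct_case_ii(r, u):
    """Thorndike Case II (UVDRR) correction of a directly restricted r."""
    return r / sqrt(u ** 2 + r ** 2 * (1 - u ** 2))

def correct_case_iii(r_xy, r_xz, r_yz, u_z):
    """Thorndike Case III correction of r_xy for direct selection on Z,
    using correlations observed in the selected sample."""
    k = 1 / u_z ** 2 - 1
    return (r_xy + r_xz * r_yz * k) / sqrt((1 + r_xz ** 2 * k) * (1 + r_yz ** 2 * k))

u_z = truncation_u(0.50)                          # keep the top 50% on Z
r_zx = restrict_direct(0.50, u_z)                 # directly restricted r (also equals r_ZY)
r_xy = restrict_indirect(0.50, 0.50, 0.50, u_z)   # indirectly restricted r
print(f"u_z = {u_z:.2f}, r_ZX = {r_zx:.2f}, r_XY = {r_xy:.2f}")
# r_XZ and r_YZ are equal in this symmetric example, so r_zx is passed for both
print(f"corrected: {correct_case_ii(r_zx, u_z):.2f}, {correct_case_iii(r_xy, r_zx, r_zx, u_z):.2f}")
```

This prints a u ratio of about .60, restricted correlations of .33 and .41, and corrected values of .50, matching the population correlations in Figure 6a.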

Selection effects can also be indirect, or incidental (i.e., when there is selection on a third variable related to one or both of the two focal variables). This process is sometimes called conditioning on a confounder (Rohrer, 2018). For example, the relationship between overall health and life satisfaction may be reduced in a sample of professionals, as socioeconomic status is related to both health and life satisfaction (Roberts, Kuncel, Shiner, Caspi, & Goldberg, 2007). In Figure 6a, indirect selection on Z reduces the correlation, r, between X and Y from .50 to only .41. Indirect selection can even reverse the sign of a correlation if the two focal variables are strongly related to the selection mechanism (Ree et al., 1994). For example, imagine that success in a class is equally determined by ability and effort, and that these two predictors are uncorrelated (see Fig. 6b). If a sample of highly successful students is selected, ability and effort will become artifactually strongly negatively correlated because of indirect range restriction, or what is sometimes referred to as conditioning on a collider (Rohrer, 2018). This effect occurs because high effort can compensate for low ability (or vice versa) as a path to success.
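The collider effect in Figure 6b can also be simulated. The sketch below, which uses only Python's standard library, draws independent ability and effort scores, defines success as their sum plus a small amount of noise (the noise SD of 0.2 is our assumption), and keeps the top 50% on success.

```python
import random
from math import sqrt

def pearson(a, b):
    """Pearson correlation of two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    sab = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    saa = sum((x - ma) ** 2 for x in a)
    sbb = sum((y - mb) ** 2 for y in b)
    return sab / sqrt(saa * sbb)

def sd(xs):
    """Sample standard deviation."""
    m = sum(xs) / len(xs)
    return sqrt(sum((x - m) ** 2 for x in xs) / (len(xs) - 1))

rng = random.Random(2019)
n = 50_000
ability = [rng.gauss(0, 1) for _ in range(n)]
effort = [rng.gauss(0, 1) for _ in range(n)]          # independent of ability
# Success is equally determined by ability and effort, plus a little noise
success = [a + e + rng.gauss(0, 0.2) for a, e in zip(ability, effort)]

cut = sorted(success)[n // 2]                         # median: keep the top 50%
selected = [i for i in range(n) if success[i] >= cut]
sel_ability = [ability[i] for i in selected]
sel_effort = [effort[i] for i in selected]

r_full = pearson(ability, effort)
r_selected = pearson(sel_ability, sel_effort)
u_ability = sd(sel_ability) / sd(ability)
print(f"full r = {r_full:.2f}; selected r = {r_selected:.2f}; u = {u_ability:.2f}")
```

With these settings the full-sample correlation is near zero, whereas the selected-sample correlation is strongly negative (around −.45) and the u ratio for ability is close to .83; the exact values depend on the noise level and the random seed.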

Impacts of selection effects in meta-analysis

Selection effects influence meta-analysis results in the same three ways as measurement error variance does. The mean effect size in the observed samples will be biased relative to the effect size in the desired reference population; the magnitude of this bias is based on the average selection process across studies. Similarly, if selection processes or the size of selection effects differ across studies (e.g., if some studies were sampled from a general population, but others were restricted to university students; cf. Murray, Johnson, McGue, & Iacono, 2014), this will inflate the random-effects variance component and bias the results of moderator and publication-bias analyses.

Quantifying and correcting for selection effects

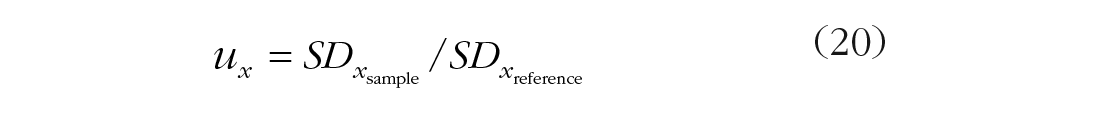

The impact of selection effects is quantified using a u ratio—a ratio of the standard deviation of a variable in the sample to the standard deviation of that variable in the reference population:

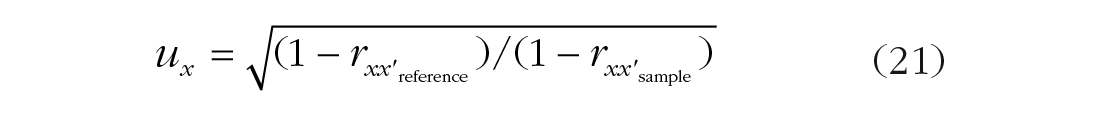

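If a reference standard deviation is unavailable, the u ratio for a continuous variable can be estimated from the sample and reference reliability coefficients:

$$u_x = \sqrt{\frac{1 - r_{xx_a}}{1 - r_{xx_i}}}$$

where $r_{xx_i}$ and $r_{xx_a}$ are the reliabilities in the sample and the reference population, respectively. This estimate assumes that the error variance of the measure is the same in the sample and the reference population.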

If the exact selection mechanism is known, very accurate estimates of the population relationship can be calculated using a multivariate selection correction (Held & Foley, 1994; derived by Aitken, 1935; Lawley, 1944; based on Pearson, 1903). 12 Such complete information is rarely available in psychology research. However, there are several accurate approximations that rely on information more commonly available to primary researchers and meta-analysts; these are listed in Table 3. An appropriate correction model can be selected according to whether (a) direct selection occurs on one variable (univariate direct range restriction, UVDRR), (b) direct selection occurs on both variables (bivariate direct range restriction, BVDRR), or (c) indirect selection occurs and u ratios are available for one variable (univariate indirect range restriction, UVIRR) or both variables (bivariate indirect range restriction, BVIRR). For example, for the indirect selection in Figure 6b, the u ratio is .82 for X and Y. Using these values and the observed correlation, robs, of −.48 in the formula for the BVIRR correction, one obtains an estimated population correlation, rc, of .005, a value that reverses sign and differs in magnitude by .475, or 99%.

An important nuance to consider is that selection never affects only one variable. When one variable is restricted, other variables with which it is correlated are also restricted. For example, grade point average will be less variable in a sample of university students selected on high school grade point average than in an unselected group. The correction formulas in Table 3 correct for these indirect selection effects on the basis of assumptions about the selection process (see Dahlke & Wiernik, 2019a, and Hunter, Schmidt, & Le, 2006, for discussions).
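The u ratio and its reliability-based estimate can be computed as in the sketch below. The reliability-based version assumes that the measure's error variance is the same in the sample and the reference population; the function names and input values are hypothetical.

```python
from math import sqrt

def u_from_sd(sd_sample, sd_reference):
    """u ratio: sample SD divided by reference-population SD."""
    return sd_sample / sd_reference

def u_from_reliability(rxx_sample, rxx_reference):
    """u ratio implied by sample and reference reliabilities,
    assuming equal error variance in both populations."""
    return sqrt((1 - rxx_reference) / (1 - rxx_sample))

def reliability_in_sample(rxx_reference, u):
    """Translate a reference-population reliability into the restricted sample."""
    return 1 - (1 - rxx_reference) / u ** 2

u1 = u_from_sd(8.0, 10.0)                  # hypothetical SDs
u2 = u_from_reliability(0.75, 0.84)        # hypothetical reliabilities
rxx_i = reliability_in_sample(0.84, u2)    # back-translate the reference reliability
print(round(u1, 2), round(u2, 2), round(rxx_i, 2))
```

With these inputs, the SD-based and reliability-based estimates agree (both .80), and translating the reference reliability of .84 into the restricted sample returns .75.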

Selection effects in correlations

Selection effects are most commonly discussed in terms of correlations, and methods for correcting for selection effects have been developed directly for this metric. To correct for selection effects in correlations, identify an appropriate reference sample and the most likely selection mechanism. Then, apply the appropriate correction formula from Table 3.
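As one example of a Table 3 model, the sketch below implements a UVIRR correction in the form we understand from Hunter, Schmidt, and Le (2006, Case IV): reliabilities from the restricted sample are first used to disattenuate r, the observed-score u ratio is converted to a true-score u ratio, and a Case II-type correction is then applied to the true-score correlation. Table 3's notation may differ, and the inputs are hypothetical.

```python
from math import sqrt

def correct_uvirr(r_obs, u_x, rxx_i, ryy_i):
    """Sketch of a UVIRR (Case IV) correction.

    r_obs        : observed correlation in the restricted sample
    u_x          : observed-score u ratio for X (the variable related to selection)
    rxx_i, ryy_i : reliabilities estimated in the restricted sample
    Returns the estimated true-score correlation in the reference population.
    """
    rxx_a = 1 - u_x ** 2 * (1 - rxx_i)              # reliability of X in the reference population
    u_t = sqrt((u_x ** 2 - (1 - rxx_a)) / rxx_a)    # true-score u ratio
    rho_i = r_obs / sqrt(rxx_i * ryy_i)             # true-score correlation in the sample
    return rho_i / sqrt(u_t ** 2 + rho_i ** 2 * (1 - u_t ** 2))

rho = correct_uvirr(0.25, u_x=0.70, rxx_i=0.70, ryy_i=0.80)
print(round(rho, 2))
```

For r_obs = .25, u_x = .70, rxx = .70, and ryy = .80, this returns an estimated true-score correlation of about .49 in the reference population. With no selection (u_x = 1) and perfectly reliable measures, it returns r_obs unchanged.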

Selection effects in group comparison and experimental research

Selection effects impact group comparisons in the same way as they do correlations (Bobko, Roth, & Bobko, 2001; Li, 2015). For example, a study of gender differences in political orientation may show attenuated mean differences in a sample of urban university students as a result of their reduced variability in political orientation compared with the general population (e.g., because these students tend to be more uniformly liberal). In experimental research, d values for a manipulation or treatment may differ across studies simply because of differences in response variability across the studies.

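To correct a d value for these selection and range-variation effects, use the three-step procedure described earlier. First, convert dobs to robs using Equation 9:

$$r_{obs} = \frac{d_{obs}}{\sqrt{d_{obs}^2 + \frac{1}{p(1-p)}}}$$

where p is the proportion of the sample in one of the two groups.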

Second, correct robs using the appropriate formula from Table 3. For these corrections, uy and

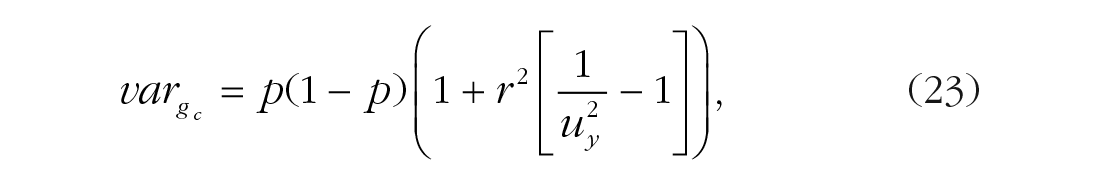

where p* is an adjusted group proportion. As discussed earlier, direct selection on one variable also indirectly induces selection on other correlated variables. When applied to correlations, the equations in Table 3 implicitly correct for this indirect selection. However, this adjustment must be manually applied when converting back from rc to the dc metric. If the observed group proportions were used to convert back from rc to dc, this would imply no change in the grouping variable’s variance after the correction, which is generally possible only if the d value equals 0 (i.e., if there is no correlation between group membership and score on the dependent variable).

The effect of a correction for selection effects on the variance of the dichotomous grouping variable can be estimated as

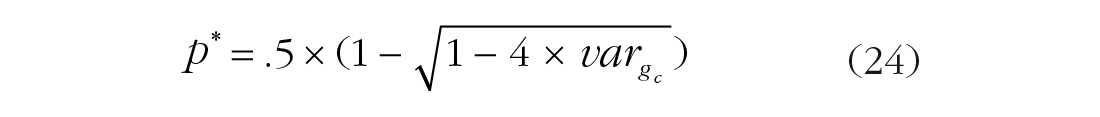

which can be converted back to a proportion: 13

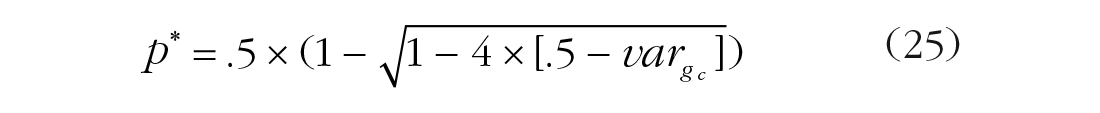

However, if

Choosing a reference sample

Appropriate reference samples can be chosen in several ways. If there is a clear population about which meta-analysts wish to make inferences (e.g., if the goal is to estimate the relationship between attitudes and grades among the university’s entire student body, rather than only scholarship recipients; DesJardins, McCall, Ott, & Kim, 2010) and local standard deviation or reliability estimates for this population are available, these can be used directly. Reference-group population values can also be drawn from external sources (e.g., standard deviations from test manuals’ norms or national statistical databases). Critically, researchers must ensure that these external reference samples actually represent the population of interest (e.g., a standard deviation from test norms for the general population of American adults may not accurately estimate the standard deviation for a target population of South African job applicants).

In a meta-analysis, even if there is not a clear reference population to which the meta-analyst wishes to generalize results, differences in an independent or dependent variable’s variability across samples will produce artifactual between-studies heterogeneity. In such cases, this range variation should be accounted for by adjusting each effect size to reflect a common pooled or total-sample standard deviation across the included samples that have the same measure (see Dahlke & Wiernik, 2019a, for details). Corrections based on this reference standard deviation remove artifactual effect-size heterogeneity without changing the mean effect size.
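When no external reference population is available, a common reference SD can be computed from the included samples. The sketch below shows two candidates, the pooled within-sample SD and the total-sample SD that also reflects mean differences between samples, and then expresses each sample's SD as a u ratio relative to the pooled value. The data are hypothetical, and Dahlke and Wiernik (2019a) should be consulted for the exact choices their procedure makes.

```python
from math import sqrt

def pooled_sd(ns, sds):
    """Pooled within-sample SD across samples that used the same measure."""
    num = sum((n - 1) * s ** 2 for n, s in zip(ns, sds))
    return sqrt(num / (sum(ns) - len(ns)))

def total_sd(ns, means, sds):
    """SD of all samples combined, including between-sample mean differences."""
    N = sum(ns)
    grand = sum(n * m for n, m in zip(ns, means)) / N
    within = sum((n - 1) * s ** 2 for n, s in zip(ns, sds))
    between = sum(n * (m - grand) ** 2 for n, m in zip(ns, means))
    return sqrt((within + between) / (N - 1))

# Hypothetical samples that used the same measure
ns = [120, 85, 200]
means = [3.4, 3.9, 3.6]
sds = [0.60, 0.45, 0.80]

ref = pooled_sd(ns, sds)
u_ratios = [s / ref for s in sds]   # one u ratio per sample, relative to the common reference SD
print(round(ref, 3), round(total_sd(ns, means, sds), 3), [round(u, 2) for u in u_ratios])
```

Each sample's effect size can then be corrected with its own u ratio, so that all samples are expressed relative to the same reference SD.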

When should you correct for selection effects?

Selection effects are pervasive in psychological research (Rohrer, 2018). Studies in all subfields can be affected by nonrepresentative sampling, attrition, nonresponse, and other selection processes. Failure to consider and correct for selection effects can lead to highly inaccurate substantive conclusions. For example, many studies have shown a negative correlation between cognitive ability and conscientiousness, suggesting support for an intelligence-compensation hypothesis (i.e., that conscientiousness develops in part as a mechanism to overcome limitations of ability). Murray et al. (2014) showed that these findings were the result of selection effects in studies conducted among university students; students are admitted to universities primarily on the basis of high-school grade point average. A high grade point average, and the knowledge it reflects, can be attained through some combination of effective learning (high ability) and hard work (conscientiousness). By selecting on an outcome of these two variables, the university admissions process induces an artifactual negative correlation between ability and conscientiousness (this is the classic conditioning-on-a-collider effect; Rohrer, 2018). Similarly, studies relating admissions tests to success among graduate students underestimate the predictive validity of these tests because applicants with very low test scores are rarely admitted to graduate programs (Kuncel, Wee, Serafin, & Hezlett, 2010). These examples illustrate that it is critical to consider potential selection effects and apply appropriate corrections in order to draw accurate theoretical inferences and make sound data-based decisions.

The formulas used to correct for selection effects assume that (a) variables are linearly related and (b) conditional residual variances are equal in the selected sample and the target population (Sackett & Yang, 2000). 14 The linearity assumption is absolutely essential, as correlations quantify linear relations, and corrections for selection effects therefore cannot extrapolate information about nonlinear associations. The equal-residual-variances assumption is satisfied when one’s chosen correction formula reflects the direct or indirect nature of the selection effects and includes all of the selection variables that gave rise to the selection effects (or, in the case of the UVIRR correction, when the formula includes a correction variable that fully mediates the effect of the actual selection variable; see Hunter et al., 2006). Incomplete satisfaction of this assumption (e.g., using only a subset of the selection variables to make a correction) is suboptimal, but as long as the right correction formula is used, the corrected statistic will still provide a better estimate of the effect size in the target population than will the observed effect size (e.g., Beatty, Barratt, Berry, & Sackett, 2014; cf. Rohrer, 2018).

In both primary studies and meta-analyses, it is valuable to consider potential selection processes that may be at play and to apply a correction model to account for the most likely or most reasonable processes (e.g., Mendoza, Bard, Mumford, & Ang, 2004). Doing so can yield less-biased estimates of the true relationships between constructs and can also be regarded as a sensitivity analysis gauging the potential impact of selection effects on study results. It is even possible to correct for multiple potential selection processes separately and compare the results to consider potential impacts of different selection mechanisms.

As is the case with corrections for measurement error variance, statistical adjustments for selection effects come with the cost of increased sampling error in the corrected effect sizes. Confidence intervals and standard errors must be adjusted to account for this increased uncertainty. It is always better to improve the sampling design before conducting a study than to rely on statistical adjustments during data analysis.

Correcting for Artifacts in Meta-Analysis

Both measurement error variance and selection effects should be considered and corrected for in meta-analyses. Artifact corrections can be approached in two ways. First, each effect size can be individually corrected for artifacts before the meta-analysis is conducted. Second, uncorrected effect sizes can be meta-analyzed, simply weighted by effective sample size or inverse sampling error variance, and the results of this “barebones” meta-analysis (Schmidt & Hunter, 2015) can be corrected post hoc using the mean and variance of the distribution for each artifact. In this section, we describe these approaches, as well as their advantages and disadvantages.

Individual-corrections meta-analysis

In the individual-corrections approach, each effect size is corrected using artifact values based on the same sample that provided the effect size. For each effect size, the artifacts of concern should be identified, and then the relevant correction formulas should be applied. Table 3 presents a variety of correction formulas for correlations, d values, and their standard errors that can be used to correct for measurement error and/or various types of selection effects. For example, if you want to correct an experimental study’s d value for measurement error variance in the dependent variable, measurement error variance in the treatment, and indirect range restriction for the dependent variable, apply the d-value procedure for UVIRR in Table 3 using the dobs value and the sample values for

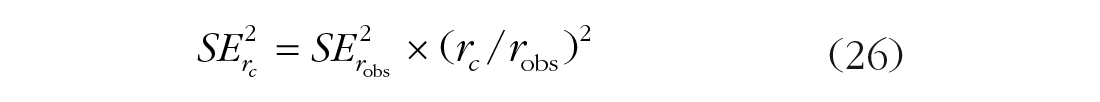

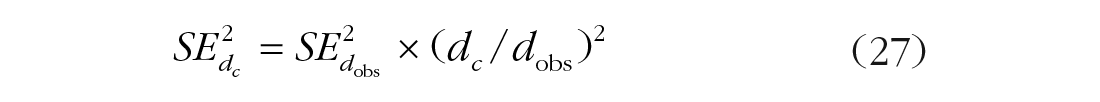

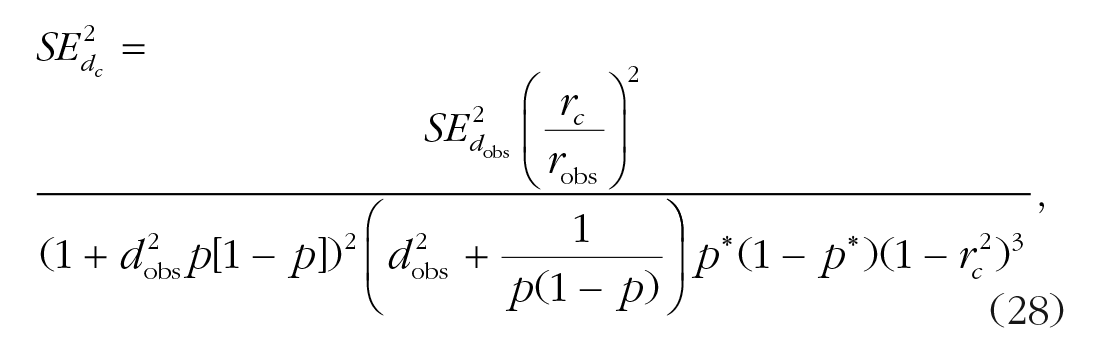

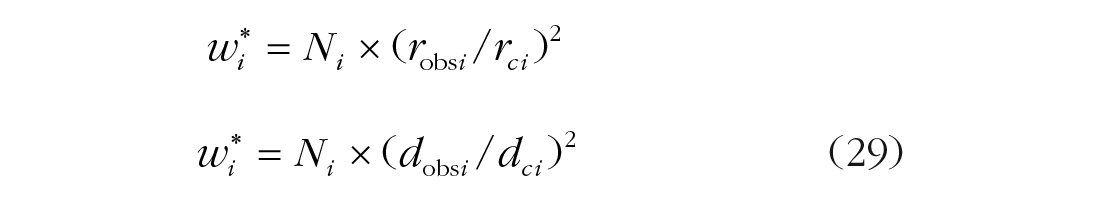

Each effect size’s sampling error must also be adjusted to account for increased uncertainty stemming from the corrections. For correlations, the sampling-error adjustment for most corrections takes the following form:

$$\mathrm{var}_{e_c} = \mathrm{var}_{e_{obs}} \left(\frac{r_c}{r_{obs}}\right)^2$$

That is, the sampling error variance is adjusted by the square of the adjustment applied to the effect size. For bivariate indirect selection, the adjustment to sampling error variance is more complex, to account for the unique additive term in this correction (see Dahlke & Wiernik, 2019a, for details).
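A minimal sketch of this adjustment for a measurement-error correction: the sampling error variance of r is estimated with the common large-sample formula (1 − r²)² / (n − 1) (our choice here) and then divided by the squared attenuation factor, which is equivalent to multiplying by (rc / robs)². The inputs are hypothetical.

```python
from math import sqrt

def var_e_r(r, n):
    """Large-sample sampling error variance of a correlation."""
    return (1 - r ** 2) ** 2 / (n - 1)

def correct_with_variance(r_obs, n, rxx, ryy):
    """Disattenuate r for measurement error in both variables and adjust
    its sampling error variance by the square of the same adjustment."""
    a = sqrt(rxx * ryy)                 # attenuation factor, a = r_obs / r_c
    r_c = r_obs / a
    ve_c = var_e_r(r_obs, n) / a ** 2   # same as var_e_obs * (r_c / r_obs) ** 2
    return r_c, ve_c

r_c, ve_c = correct_with_variance(0.30, 100, 0.80, 0.70)   # hypothetical study
se_obs, se_c = sqrt(var_e_r(0.30, 100)), sqrt(ve_c)
print(f"r_c = {r_c:.3f}, SE_obs = {se_obs:.3f}, SE_c = {se_c:.3f}")
```

The corrected standard error is larger than the observed one by exactly the factor 1 / sqrt(rxx * ryy).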

When d values are corrected only for measurement error in the dependent variable and

For corrections involving converting d to r and back, the sampling error is adjusted by converting

where

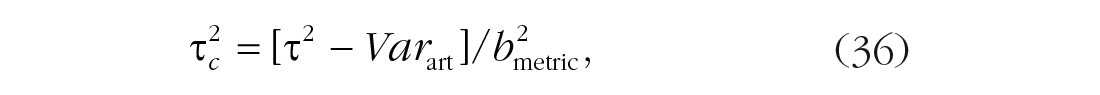

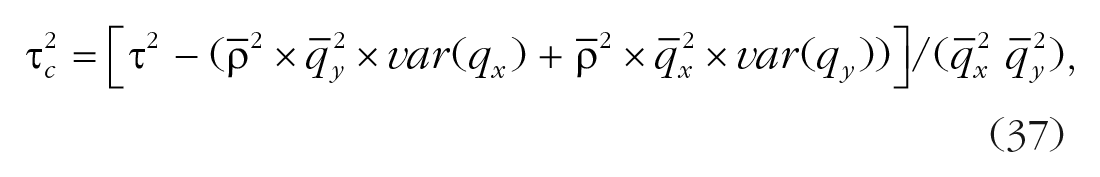

If meta-analyses are conducted using inverse-sampling-error-variance weights (or weights based on these, such as Hedges-Vevea random-effects weights; Hedges & Vevea, 1998), the adjusted sampling error variances for the corrected effect sizes are used in the weights in place of the original sampling error variances. The meta-analytic computations for the mean effect size, random-effects variance component (in Hedges-Vevea notation,

A potential problem with weighting effect sizes by inverse sampling error variance estimated in each sample is that the sampling-error-variance formulas for correlations and d values include the population effect sizes. As a result, weighting effect sizes by inverse sampling error variance introduces a dependency between study weights and study effect sizes, which can bias meta-analysis results (Hedges & Olkin, 1985, p. 110; Kulinskaya & Bakbergenuly, 2018; Schmidt & Hunter, 2015; Shuster, 2010). An alternative method that avoids this bias is to weight by sample size (or effective sample size in the case of d values with unequal group sizes; Kulinskaya & Bakbergenuly, 2018). If sample-size weights are used, then adjusted weights are computed as follows: 18

$$w_i = n_i \left(\frac{r_{obs_i}}{r_{c_i}}\right)^2$$

In the Hunter-Schmidt meta-analytic procedure, these adjusted weights are then used to compute the mean effect size, the observed and expected effect-size variances, and the random-effects variance component (in the Hunter-Schmidt notation,

Meta-analyses of corrected d values can also be computed using the rc value for each sample. The final meta-analytic results are converted back to the δ metric using one of these formulas:

Corrected effect sizes, sampling error variances, and weights are also used in other meta-analytic procedures, such as meta-regression and subgroup moderator analyses, publication-bias analyses, and sensitivity analyses. Using corrected effect sizes for these analyses is critical to prevent heterogeneity in artifacts across studies from biasing results.

It is often the case that the necessary statistics to correct for measurement error variance or selection effects (i.e., reliability coefficients, standard deviations, and reference-population standard deviations) are not reported for all the studies in a meta-analysis. Several options are available to address missing artifact data. A simple option is to impute missing artifacts by bootstrapping (i.e., sampling with replacement) artifact values from the studies for which this information is reported. This approach works well and generally yields accurate estimates of the mean effect size, its standard error, and the random-effects variance component (Schmidt & Hunter, 2015). A more robust approach is to apply reliability or selection generalization (Vacha-Haase, 1998), wherein meta-regression is used to predict missing reliability values or selection u ratios on the basis of characteristics of the sample and study design, the measure used, scale properties, and other moderators. This approach can yield somewhat more accurate results than the naïve bootstrapping approach if these factors have strong impacts on observed artifact values. A third approach is to sidestep the issue of missing data by not correcting effect sizes individually, but instead correcting the overall results of the meta-analysis on the basis of the artifact means and standard deviations. This approach is called the artifact-distribution method (Schmidt & Hunter, 2015) and is described next. 19
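The bootstrap option takes only a few lines. The sketch below codes each missing artifact value as `None` and fills it by drawing, with replacement, from the values reported in other studies. The function name, the `None` coding, and the example reliabilities are assumptions for illustration.

```python
import random


def bootstrap_impute(values, seed=None):
    """Impute missing artifact values (None) by sampling with replacement
    from the artifact values that were reported."""
    rng = random.Random(seed)
    reported = [v for v in values if v is not None]
    if not reported:
        raise ValueError("no reported artifact values to sample from")
    return [v if v is not None else rng.choice(reported) for v in values]


# Hypothetical reliability coefficients; None marks studies that did not report one
rxx = [0.82, None, 0.76, 0.88, None]
rxx_imputed = bootstrap_impute(rxx, seed=1)
```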

Artifact-distribution meta-analysis

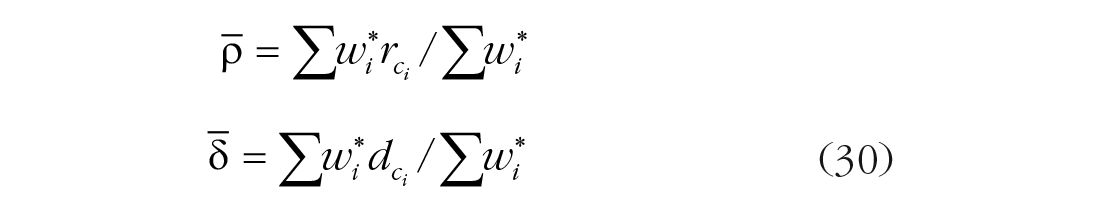

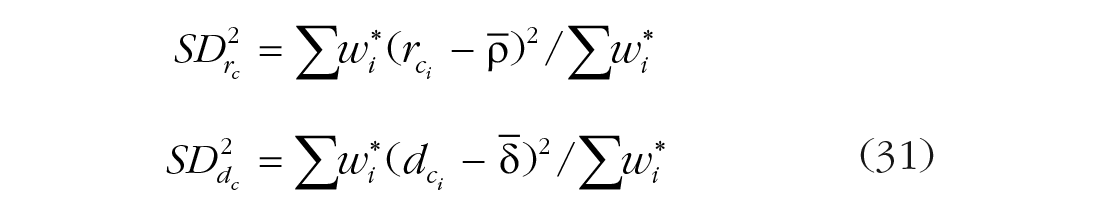

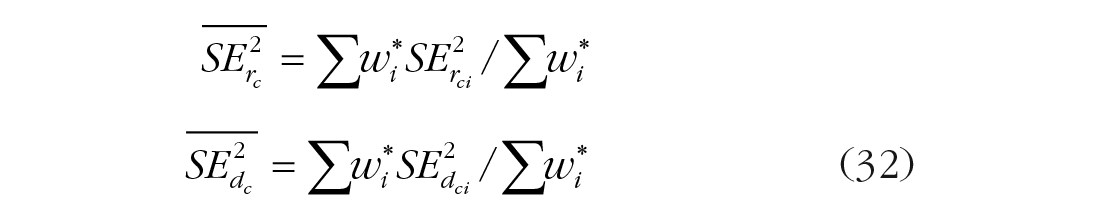

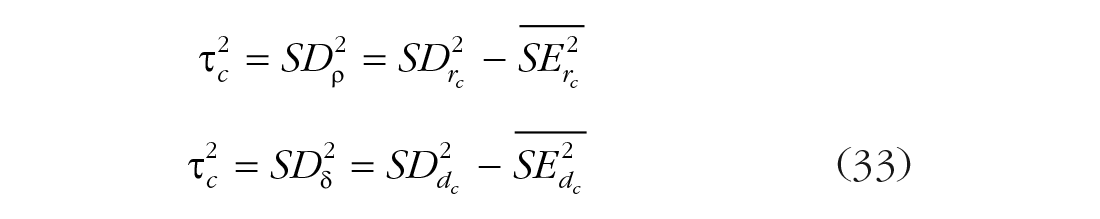

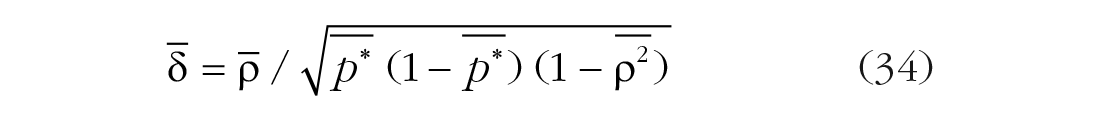

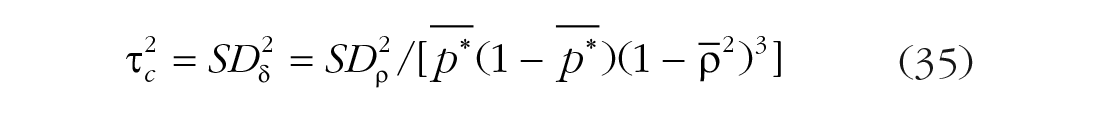

In the artifact-distribution method, studies are initially meta-analyzed using observed effect sizes, without correcting for measurement error variance or selection effects. Then, the initial meta-analysis results are corrected using the means and variances of the distributions of artifacts that are observed. The mean effect size is corrected using the means of the square-root reliabilities (

where

where

Taylor series artifact-distribution methods are derived from the principle of maximum likelihood (Raju & Drasgow, 2003), and the accuracy of their results is comparable to that of individual-correction approaches to meta-analysis (Hunter et al., 2006; Raju et al., 1991; Schmidt & Hunter, 2015). It should be noted that these artifact-distribution methods assume that the population artifact values (u ratios and reference-population, or unselected, square-root reliabilities) are uncorrelated; this assumption is often reasonable (Raju & Drasgow, 2003; see Yuan, Morgeson, & LeBreton, 2018, 2019, and Köhler, Cortina, Kurtessis, & Gölz, 2015, for discussions of statistical and situational factors that may create correlations among artifacts).
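The sketch below illustrates a first-order Taylor series correction under these assumptions. It handles measurement error only (no selection effects), treats the artifacts as uncorrelated, and uses the means and variances of the square-root reliabilities. It does not reproduce the full set of formulas omitted from this section. The function name and the use of population (rather than sample) variances for the artifact distributions are our assumptions.

```python
import math
from statistics import fmean, pvariance


def ad_correct_meas(r_bar, var_r, var_e, rxx, ryy):
    """First-order Taylor series artifact-distribution correction for
    measurement error only (sketch; assumes uncorrelated artifacts).

    r_bar, var_r, var_e : mean observed r, observed variance of r, and expected
                          sampling error variance from the initial meta-analysis
    rxx, ryy            : distributions of observed reliability coefficients
    Returns (mean corrected correlation, variance of corrected correlations).
    """
    qx = [math.sqrt(v) for v in rxx]              # square-root reliabilities of x
    qy = [math.sqrt(v) for v in ryy]              # square-root reliabilities of y
    qx_bar, qy_bar = fmean(qx), fmean(qy)
    var_qx, var_qy = pvariance(qx), pvariance(qy)

    a_bar = qx_bar * qy_bar                       # mean compound attenuation factor
    rho_bar = r_bar / a_bar                       # corrected mean correlation

    # Linearizing r = rho * qx * qy around the means gives
    # var(r) ~ (qy_bar*rho_bar)^2 var(qx) + (qx_bar*rho_bar)^2 var(qy) + a_bar^2 var(rho) + var_e
    var_art = rho_bar ** 2 * (qy_bar ** 2 * var_qx + qx_bar ** 2 * var_qy)
    var_rho = max(0.0, var_r - var_e - var_art) / a_bar ** 2
    return rho_bar, var_rho


# Hypothetical bare-bones results and reliability distributions
rho_bar, var_rho = ad_correct_meas(0.30, 0.012, 0.007, [0.70, 0.80, 0.90], [0.60, 0.65])
```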

Artifact-distribution methods have several potential advantages over individual-correction approaches to meta-analysis. First, artifact-distribution methods are easier to apply because the meta-analysis results can be corrected after computation, so that each effect size does not need to be individually corrected beforehand. The difference in the ease of application is particularly large when some studies have missing artifact data, as no imputation is required. Second, artifact-distribution methods allow meta-analyses to be corrected using artifact distributions reported in previously published meta-analyses. For example, the reports of many studies of job performance do not include appropriate interrater-reliability estimates that can be used to correct for measurement error; the artifact distributions reported by Viswesvaran et al. (1996) have been widely used in subsequent meta-analyses to correct for unreliability in this variable. Third, in some cases, artifact-distribution methods can be more accurate than individual-correction methods (Dahlke & Wiernik, 2019a).

However, the artifact-distribution method also has several disadvantages. Unlike the individual-correction approach, it requires that the same artifact-correction model be applied to all effect sizes (e.g., it is not possible to correct for direct range restriction in one sample and indirect range restriction in another). 20 Moreover, because the artifact-distribution method does not correct effect sizes individually, it cannot correct for the impact of differential reliability or selection across studies on the results of meta-regression or publication-bias analyses. Instead, these analyses are conducted with the uncorrected effect sizes. For example, if smaller-sample studies with null results were more likely to be unpublished because of low reliability or restricted sampling, rather than publication bias, PET-PEESE analyses and cumulative meta-analyses (Leimu & Koricheva, 2004; McDaniel, 2009) would not detect this.

Conclusion

Measurement error variance and selection effects are pervasive in psychological research and research in other fields, and the detrimental impacts of these artifacts on the validity of research conclusions have been widely documented (Schmidt & Hunter, 2015). Because measurement error variance and selection effects are so rarely considered and corrected in meta-analyses in many literatures, the results of these meta-analyses are at risk of substantial bias. By applying the corrections described in this article, researchers can draw more accurate meta-analytic inferences and make more useful recommendations for future psychological research and practice.

Supplemental Material

Wiernik_AMPPSOpenPracticesDisclosure-18-0148.R3 – Supplemental material for Obtaining Unbiased Results in Meta-Analysis: The Importance of Correcting for Statistical Artifacts

Supplemental material, Wiernik_AMPPSOpenPracticesDisclosure-18-0148.R3 for Obtaining Unbiased Results in Meta-Analysis: The Importance of Correcting for Statistical Artifacts by Brenton M. Wiernik and Jeffrey A. Dahlke in Advances in Methods and Practices in Psychological Science

Footnotes

Appendix A: Using psychmeta to Correct Artifacts and Conduct Psychometric Meta-analyses in R

The psychmeta package (Dahlke & Wiernik, 2019b) in R can be used to correct correlations and d values (and adjust confidence intervals and standard errors) for the artifact models described in this article. It can also be used to conduct individual-correction and artifact-distribution meta-analyses.

To correct correlations, use the

The

To correct d values, use the

The

To conduct individual-correction meta-analyses, use the

The

These functions will correct each effect size for measurement error variance, selection effects, or both, and then conduct Hunter-Schmidt meta-analyses for each set of construct variables. Moderators can also be specified, and a variety of follow-up analyses (sensitivity analyses, meta-regression, etc.) can be conducted. It is also possible to use the

In this code,

To conduct artifact-distribution meta-analyses, use the

The arguments are the same as previously specified.

Additional details about these and other functions are provided in the psychmeta documentation and vignettes (Dahlke & Wiernik, 2019c).

Appendix B: Accuracy of Corrections for d Values When Groups Have Been Misclassified

This appendix illustrates the effects of group misclassification on observed d values in group-comparison research. We simulated d values, varying the total sample size (N = 50, 100, 200, 500, or 1,000) and the misclassification rate (10%, 20%, or 30%). For each combination of sample size and misclassification rate, we simulated 1,000 samples of scores on a dependent variable y for two groups with equal sample sizes (i.e., n1 = n2 = N/2). The population distribution for Group A was specified to have a mean, ȳ, of .00 and a standard deviation of 1.0. The population distribution for Group B was specified to have a mean, ȳ, of .40 and a standard deviation of 1.0. Thus, the population for simulated samples was specified to be homogeneous, with δ = .40. For each simulated sample, we calculated the true d value using the standard formula: d = (Mean_B − Mean_A)/SD_pooled. The distribution of true d values for each condition is shown in Figure B1. These distributions reflect only variability introduced by sampling error variance.

To illustrate the impact of group misclassification, in each simulated sample, we created an observed-group variable by randomly reassigning a proportion of the sample equal to the misclassification rate to the opposite group, thereby ensuring equal misclassification for the two groups. We then calculated the d value for the difference between these observed groups on the dependent variable, y. The distributions of observed d values after misclassification are shown in Figure B2. These distributions reflect both variability introduced by sampling error variance and the bias and variability introduced by group misclassification.
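The simulation design can be sketched in Python as follows. This is not the code used to produce Figures B1 through B3. The function names, the seed, and the number of replications are hypothetical. We interpret "reassigning a proportion of the sample equal to the misclassification rate" as swapping the labels of the same number of cases in each group, which keeps the observed groups equal in size.

```python
import random
import statistics


def cohens_d(y_a, y_b):
    """d = (mean_B - mean_A) / pooled within-group SD."""
    n_a, n_b = len(y_a), len(y_b)
    pooled_var = ((n_a - 1) * statistics.variance(y_a)
                  + (n_b - 1) * statistics.variance(y_b)) / (n_a + n_b - 2)
    return (statistics.fmean(y_b) - statistics.fmean(y_a)) / pooled_var ** 0.5


def simulate_sample(n_total, miss_rate, delta=0.40, rng=random):
    """Simulate one sample; return (true d, observed d, true groups, observed groups)."""
    n_half = n_total // 2
    true_group = [0] * n_half + [1] * n_half              # 0 = Group A, 1 = Group B
    y = [rng.gauss(delta * g, 1.0) for g in true_group]   # A: mean 0, B: mean delta; SD 1
    # Swap labels for miss_rate * n_half cases in each group, so that
    # miss_rate * n_total cases are misclassified in total.
    n_flip = round(miss_rate * n_half)
    flipped = rng.sample(range(n_half), n_flip) + rng.sample(range(n_half, 2 * n_half), n_flip)
    obs_group = true_group[:]
    for i in flipped:
        obs_group[i] = 1 - obs_group[i]

    def split(groups):
        a = [yi for yi, g in zip(y, groups) if g == 0]
        b = [yi for yi, g in zip(y, groups) if g == 1]
        return a, b

    return cohens_d(*split(true_group)), cohens_d(*split(obs_group)), true_group, obs_group


rng = random.Random(2020)
draws = [simulate_sample(200, 0.30, rng=rng) for _ in range(200)]
mean_true_d = statistics.fmean([d_true for d_true, _, _, _ in draws])
mean_obs_d = statistics.fmean([d_obs for _, d_obs, _, _ in draws])
```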

In Figure B2, it is apparent that the mean observed d value is biased toward 0 relative to the true population value (i.e., δ = .40). Moreover, as the total sample size and misclassification rate increase, the distributions of observed d values become increasingly negatively skewed and bimodal. This is because in a minority of samples, group members with the most extreme values of y are misclassified, causing the sign of the observed d value to flip relative to the true d value.

We then corrected for group misclassification using Equations 12 and 13. We calculated rgG using the correlation between the true and observed group membership for each simulated case. The distributions of the corrected d (i.e., dc) values are shown in Figure B3.
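Equations 12 and 13 are not reproduced in this section. The sketch below assumes that the correction converts the observed d to a point-biserial r, divides that r by rgG (the correlation between true and observed group membership), and converts the result back to d. That reading of the equations, the function names, and the default p = .5 are our assumptions.

```python
import math


def d_to_r(d, p=0.5):
    """Point-biserial r from d; p is the proportion of cases in one group."""
    return d / math.sqrt(d ** 2 + 1.0 / (p * (1.0 - p)))


def r_to_d(r, p=0.5):
    """Inverse of d_to_r."""
    return r / math.sqrt(p * (1.0 - p) * (1.0 - r ** 2))


def pearson_r(x, y):
    """Pearson correlation; with 0/1 group codes this is the phi coefficient rgG."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


def correct_d_misclassification(d_obs, r_gG, p=0.5):
    """Correct an observed d for group misclassification (assumed r-metric correction)."""
    r_c = d_to_r(d_obs, p) / r_gG
    if abs(r_c) >= 1.0:
        raise ValueError("corrected correlation is out of bounds")
    return r_to_d(r_c, p)


# Hypothetical example: 10 cases per group, 3 cases in each group misclassified
true_g = [0] * 10 + [1] * 10
obs_g = [1] * 3 + [0] * 7 + [0] * 3 + [1] * 7
r_gG = pearson_r(true_g, obs_g)
```

The correction rescales the magnitude of d but keeps its sign, so it cannot undo the sign flips described above.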

In Figure B3, the larger mode in the bimodal distributions is centered on the mean true d value, an indication that the correction model was accurate. However, the dc values retain the negative skew and bimodal distribution of the observed d values because the correction cannot adjust for potential sign flipping in observed d values.

Summary statistics for the distributions of true d values, observed d values, and corrected d values (dc values) are shown in Table B1. Because of the negative skew and bimodal distribution of the observed d values when groups are misclassified, the mean dc value is negatively biased as an estimator of the mean true d value. Similarly, the standard deviation of the dc values is positively biased as an estimator of the standard deviation of the true d values. These biases can be corrected by instead calculating the median dc value to estimate the mean true d value and by calculating the median absolute deviation from the median dc value (MAD) to estimate the standard deviation of the true d values. These estimators are much more robust to the presence of observed d values that are outliers because of sign flipping (cf. Lin et al., 2017). When group misclassification is a potential concern (even if corrections using rgG are not made), we recommend conducting meta-analyses using the median and MAD, rather than mean and standard deviation, of observed or corrected d values.
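The recommended robust estimators are simple to compute. In the sketch below, the MAD is multiplied by 1.4826, the conventional constant that makes it consistent with the standard deviation of a normal distribution. The text above does not specify a scaling, so this default is our assumption; set `scale=1` for the raw MAD. The function name and the d values are hypothetical.

```python
import statistics


def robust_center_spread(d_values, scale=1.4826):
    """Median and (scaled) median absolute deviation of a set of d values."""
    med = statistics.median(d_values)
    mad = statistics.median([abs(d - med) for d in d_values])
    return med, scale * mad


# Hypothetical corrected d values, one of which has flipped sign
dc = [0.38, 0.41, 0.45, 0.36, -0.52]
center, spread = robust_center_spread(dc)
```

Here the single sign-flipped value pulls the mean of `dc` well below the bulk of the distribution, whereas the median stays at the center of the larger mode.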

Transparency

Action Editor: Frederick L. Oswald

Editor: Daniel J. Simons

Author Contributions

B. M. Wiernik outlined the manuscript, wrote the first draft, and prepared Figures 1, 3, and 5. J. A. Dahlke critically revised and edited the manuscript and prepared Figures 2, 4, and 6 and the Appendix B figures.

Notes

References
