Abstract

It has recently been demonstrated that metrics of structural validity are severely underreported in social and personality psychology. We comprehensively assessed structural validity in a uniquely large and varied data set (N = 144,496 experimental sessions) to investigate the psychometric properties of some of the most widely used self-report measures (k = 15 questionnaires, 26 scales) in social and personality psychology. When the scales were assessed using the modal practice of considering only internal consistency, 88% of them appeared to possess good validity. Yet when validity was assessed comprehensively (via internal consistency, immediate and delayed test-retest reliability, factor structure, and measurement invariance for age and gender groups), only 4% demonstrated good validity. Furthermore, the less commonly a test was reported in the literature, the more likely the scales were to fail that test (e.g., scales failed measurement invariance much more often than internal consistency). This suggests that the pattern of underreporting in the field may represent widespread hidden invalidity of the measures used and may therefore pose a threat to many research findings. We highlight the degrees of freedom afforded to researchers in the assessment and reporting of structural validity and introduce the concept of validity hacking (v-hacking), similar to the better-known concept of p-hacking. We argue that the practice of v-hacking should be acknowledged and addressed.

Confidence in the replicability and reproducibility of research findings is a foundational pillar upon which theory, application, and progress reside. However, this pillar has recently been shaken. Large-scale efforts to document the replicability of research in psychological science have led many of its core findings to be called into question (Open Science Collaboration, 2015). These discipline-wide efforts have unleashed a tidal wave of new discussion and reflection on those modal practices that have contributed to the so-called replication crisis (LeBel & Peters, 2011; Simmons, Nelson, & Simonsohn, 2011). Numerous research and analytic practices, such as overreliance on and misuse of null-hypothesis significance testing, have been questioned, and the need for increased transparency, data sharing, preregistration, and direct replication has been highlighted and encouraged (Asendorpf et al., 2013; Munafò et al., 2017). Despite these laudable developments, Flake, Pek, and Hehman (2017) noted that the topic of measurement has received far less attention. This is surprising given that measurement plays a key role in replicability and ultimately calibrates the confidence researchers can have in their findings: If a measure is invalid, then theoretical conclusions derived from it are questionable.

Many, if not most, measures in social and personality psychology are designed to assess latent constructs that are unobservable in nature. For instance, a self-report scale may be created to assess belief in a just world or right-wing authoritarianism, or to quantify personality traits. Designing valid measures of latent constructs requires that the measures themselves be subject to an ongoing process known as construct validation (Loevinger, 1957). Although psychological measures most commonly take the form of self-report scales, they can also take a variety of other forms, as in the case of reaction time–based implicit measures (for discussion of the assessment of the validity of implicit measures specifically, see De Schryver, Hughes, De Houwer, & Rosseel, 2018; for further information on construct validation, see Borsboom, Mellenbergh, & van Heerden, 2004; Cronbach & Meehl, 1955). As Flake et al. (2017) explained, construct validation “is the process of integrating evidence to support the meaning of a number which is assumed to represent a psychological construct” (p. 2; see Cronbach & Meehl, 1955) and consists of three sequential phases (for a more detailed treatment, see Loevinger, 1957). The first, the substantive phase, involves identifying and defining a construct (via literature review and conceptualization of the construct), determining how it will be assessed (via item development and selection), and ensuring that the resulting scale content is both relevant and representative. In the second phase, the structural phase, a theory about the construct’s structure is developed. Quantitative analyses (e.g., item and factor analyses; assessments of consistency, stability, and measurement invariance) are used to determine the psychometric properties of the measure.
The third phase, the external phase, involves examining if the measure appropriately represents the construct via checks for convergent and discriminant validity with other measures, predictive or criterion checks using known outcomes, or comparisons of known groups (for a more detailed overview, see American Educational Research Association, American Psychological Association, & National Council on Measurement in Education, 2014; Cronbach & Meehl, 1955; Loevinger, 1957).

Much of the theoretical work in social and personality psychology centers on the identification and definition of constructs (first phase), and empirical work tends to assess whether these constructs predict, discriminate between, or converge with other measures (third phase). Ascertaining the structure and psychometric properties of the measures used to assess these constructs (second phase) often receives far less attention. For instance, Flake et al. (2017) examined a representative sample of articles from a flagship journal in the field (Journal of Personality and Social Psychology) and found that many constructs studied in social and personality research lack appropriate validation. Specifically, they found an overreliance on Cronbach’s α as the sole source of evidence for structural validity, and argued that rigorous methodologies for measurement are rarely reported. Indeed, Flake et al. found that the problem with validation was actually more severe than it initially appeared. Specifically, they found that research with well-known measures overrelied on Cronbach’s α as the sole test of structural validity. In addition, nearly half of the measures sampled were ad hoc and lacked evidence of validity testing at any of the three phases of validation.

Such a situation poses several threats: It (a) increases the potential for questionable theoretical conclusions and (b) decreases the chance that subsequent research will replicate results, given that (c) the three phases of validation are intertwined. Put simply, conclusions about the construct stemming from the external phase may not hold if issues exist at the substantive phase (e.g., the construct lacks a strong theoretical basis) or the structural phase (e.g., the measure lacks acceptable psychometric properties). Thus, substantive and structural validity need to be assessed if researchers wish to engage in theory testing (external validation) or replication. Fortunately, a set of best practices is already available. They involve moving beyond the simple modal practice of assessing internal consistency (Cronbach’s α) to investigating the stability of scores across time (test-retest reliability), examining the factor structure of the latent construct (or constructs; confirmatory factor analysis, or CFA), and testing for the equivalence of measurement properties across populations, time points, and contexts (measurement invariance; Putnick & Bornstein, 2016; Vandenberg & Lance, 2000). Although tests such as Cronbach’s α and test-retest reliability are widely known and frequently reported, other tests of structural validity, such as measurement invariance, are poorly understood and infrequently conducted, despite their equal importance for theorizing (Flake et al., 2017). Indeed, if evidence for measurement invariance is not obtained—which is typically the case—then it is difficult to determine if a given measure reflects the same construct across samples, contexts, and conditions (see the Results section for a more detailed treatment of different types of structural-validity assessment).

Purpose of the Present Study

In short, measurement validity is central to theory and research in social and personality psychology. Yet rigorous tests of validity are rarely conducted or reported. This widespread tendency to underreport tests of validity leaves the field in a sticky situation: It is currently impossible to know whether the field is facing a mere problem of underreporting (as Flake et al., 2017, highlighted) or the potentially deeper issue of hidden invalidity. It may be that many of the measures used appear to be perfectly adequate on the surface and yet fall apart when subjected to more rigorous tests of validity beyond Cronbach’s α.

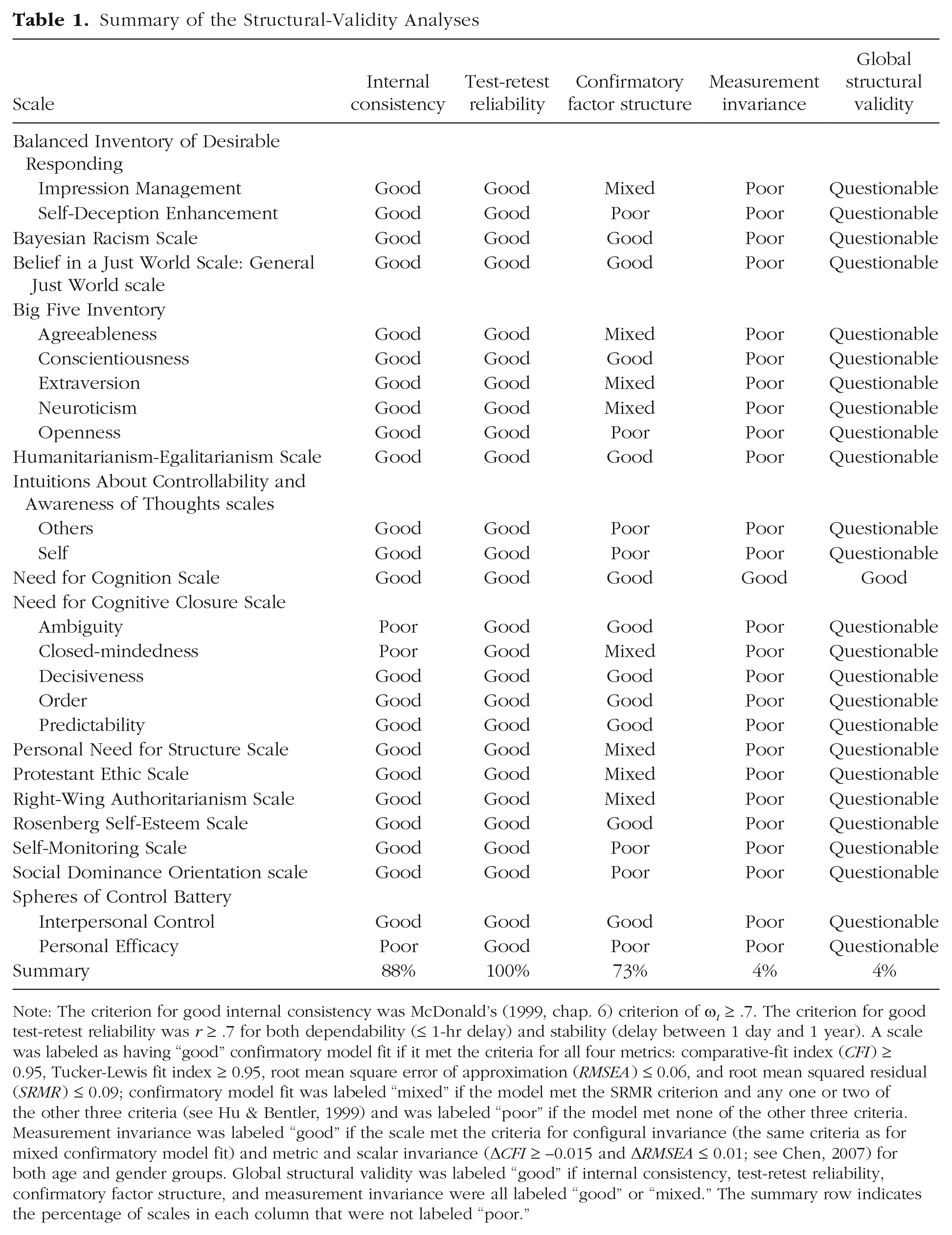

With this in mind, we used several best practices to examine the structural validity of 15 well-known self-report measures that are often used in social and personality psychology (see Table 1). This unique case study demonstrates what can be achieved when best practices are followed in applying a wide range of validity metrics to a large number of measures, each tested in a large sample, and reporting the results. For our investigation, we used data from the Attitudes, Identities, and Individual Differences (AIID) study, a large-scale, multivariate, planned-missing-data study that was conducted via the Project Implicit website (implicit.harvard.edu) between 2004 and 2007 (Hussey et al., 2019).

Table 1. Summary of the Structural-Validity Analyses

Note: The criterion for good internal consistency was McDonald’s (1999, chap. 6) criterion of ωt ≥ .7. The criterion for good test-retest reliability was r ≥ .7 for both dependability (≤ 1-hr delay) and stability (delay between 1 day and 1 year). A scale was labeled as having “good” confirmatory model fit if it met the criteria for all four metrics: comparative-fit index (CFI) ≥ 0.95, Tucker-Lewis fit index ≥ 0.95, root mean square error of approximation (RMSEA) ≤ 0.06, and standardized root mean squared residual (SRMR) ≤ 0.09; confirmatory model fit was labeled “mixed” if the model met the SRMR criterion and any one or two of the other three criteria (see Hu & Bentler, 1999) and was labeled “poor” if the model met none of the other three criteria. Measurement invariance was labeled “good” if the scale met the criteria for configural invariance (the same criteria as for mixed confirmatory model fit) and metric and scalar invariance (ΔCFI ≥ –0.015 and ΔRMSEA ≤ 0.01; see Chen, 2007) for both age and gender groups. Global structural validity was labeled “good” if internal consistency, test-retest reliability, confirmatory factor structure, and measurement invariance were all labeled “good” or “mixed.” The summary row indicates the percentage of scales in each column that were not labeled “poor.”

Utilizing this data set provided several advantages and unique opportunities. First, the sheer size of the sample involved (N = 81,986 individuals, N = 144,496 experimental sessions) allowed us to assess the psychometric properties of the 15 measures with numbers that were far greater than those used in many earlier validation studies. Second, the data set’s structure allowed us to apply a large range of structural-validity metrics to the same measure in the same study, and to include tests of stability (test-retest reliability) based on multiple delay ranges (immediate vs. up to 1 year). Third, we were able to adopt a comprehensive approach to structural-validity testing that extended beyond the strategies of previous studies in both nuance and scope. Following best practices, we obtained metrics of consistency (Cronbach’s α; McDonald’s, 1999, ωt and ωh), test-retest reliability (both dependability and stability; Revelle & Condon, 2018), factor structure (CFA), and measurement invariance. Although some of these tests have been applied to some of the scales we examined, this was often done separately, study by study and sample by sample, never comprehensively within and across a range of measures, as in the present study. Fourth, the recent explosion in Internet-based research and renewed reliance on self-report scales within social and personality psychology (Bohannon, 2016; Gosling & Mason, 2015; Sassenberg & Ditrich, 2019) have led to a situation in which many self-report scales are being used in contexts, and with samples, that differ from those in which they were originally validated. If researchers wish to use these measures in online settings, it is imperative that their structural validity be examined in that context to ensure that their psychometric properties are adequate and do not diverge from those observed in traditional (laboratory) settings.

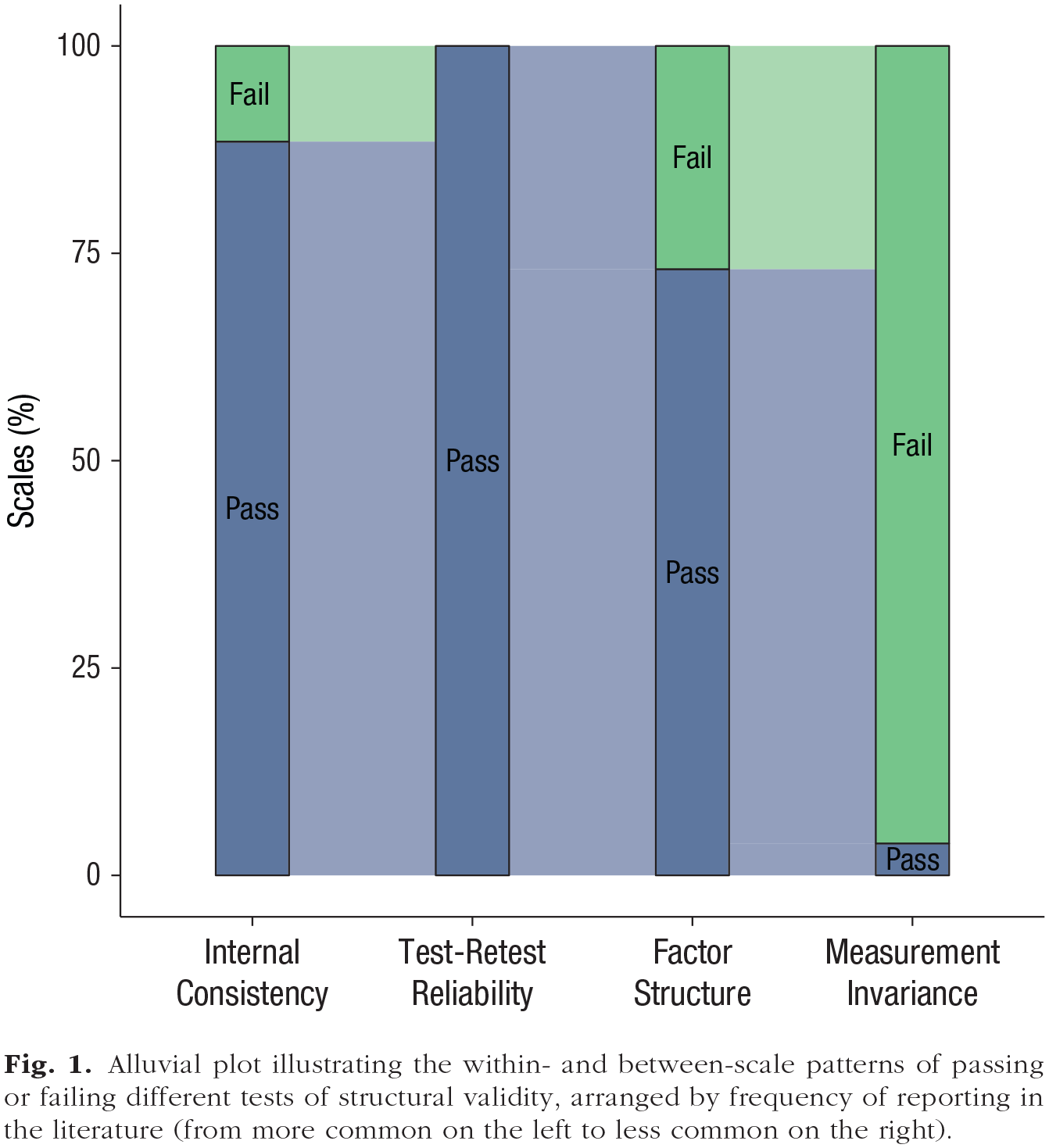

We conducted the tests and report their results in order of the frequency with which the tests are reported in the literature (see Flake et al., 2017). We adopted this strategy in order to demonstrate the inverse relationship between rate of reporting and hidden invalidity. Note that we are not suggesting that other researchers should sequence their analyses or reporting in a similar way. Indeed, as argued elsewhere (Flake et al., 2017), the most common test (α) relies on numerous assumptions that can be assessed only by less commonly applied analyses (e.g., within a CFA context).

Before we continue, we want to be clear: Our goal was not to make a final or absolute determination on the validity of any of the scales we assessed, to make a binary determination of their validity or invalidity, or even to present our analytic strategy as a prescriptive set of standards for future work. This is not to say that our results cannot provide input into the ongoing process of validating these scales. Rather, our primary goal was to investigate the issue highlighted by Flake et al. (2017), namely, whether the widespread underreporting of structural-validity information reflects hidden validity or, more worryingly, hidden invalidity.

Disclosures

Preregistration

Our analyses were not preregistered.

Data, materials, and online resources

All code and data to reproduce our analyses are available at the Open Science Framework, at osf.io/23rzk. Additional information on questionnaire items, results not reported here, simplified R scripts for educational purposes, and a change log documenting differences between manuscript versions are also available in supplementary materials at the Open Science Framework, at osf.io/2zx64.

Reporting

We report how we determined our sample size, all data exclusions, all manipulations, and all measures in the study.

Ethical approval

Ethical approval for the underlying AIID study and data set was granted by the University of Virginia’s Institutional Review Board for the Social and Behavioral Sciences (Protocol 2003-0173-00). As these data were collected between 2004 and 2007, this study is technically not in accordance with the most recent version (2013) of the Declaration of Helsinki, which requires preregistration prior to data collection. Ethical approval was not required for our analysis of the existing data.

Method

Participants

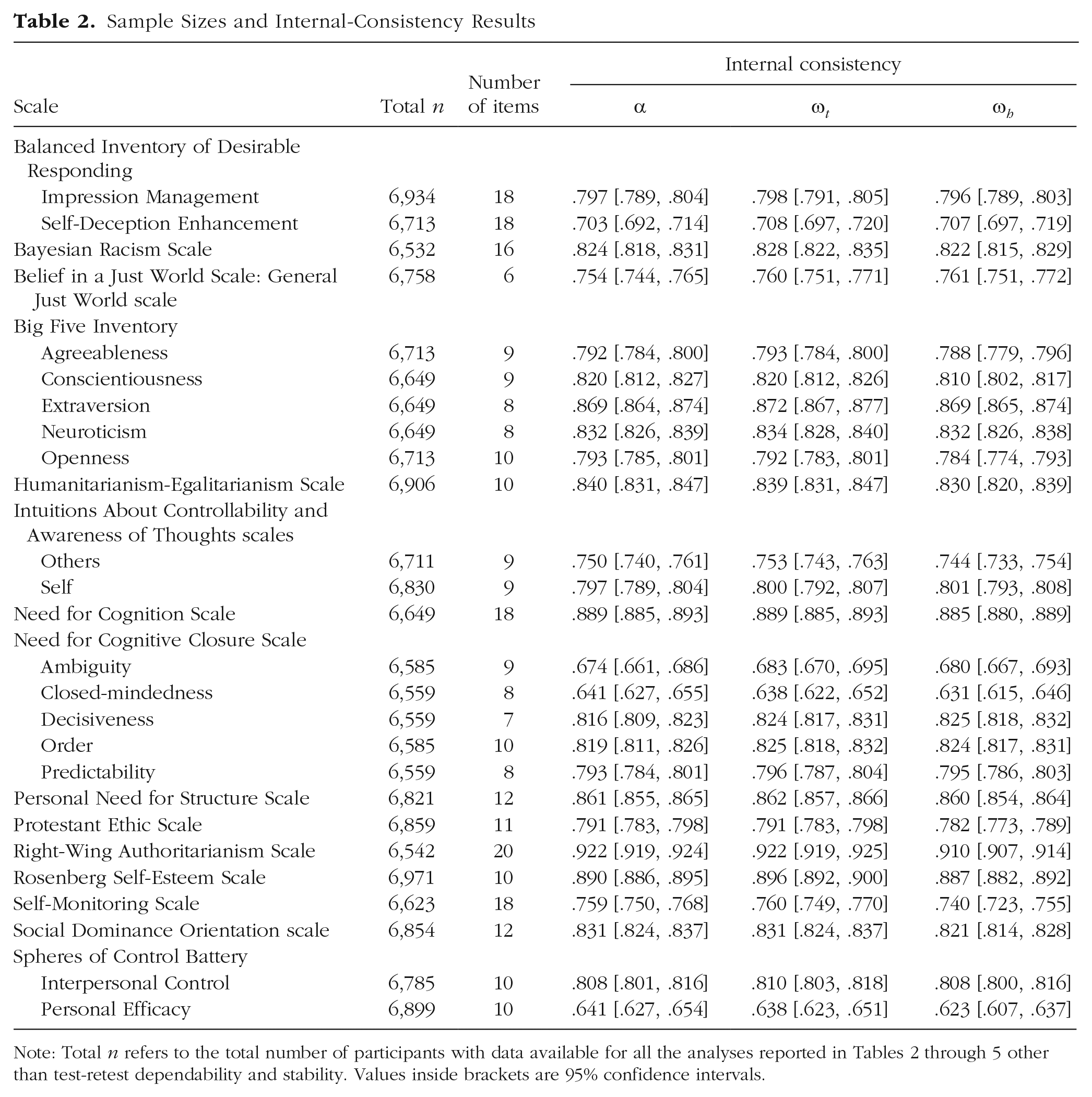

The data of 144,496 experimental sessions involving 81,986 unique participants (50,141 women and 31,845 men; mean age = 30.84, SD = 11.40) were selected for inclusion from the AIID data set on the basis that the participants met our predefined study criteria (i.e., age 18–65, self-reported fluency in English, and complete data on the individual-differences measures and demographics items). Table 2 lists the sample size for each measure. Repeat participation in the study was possible and allowed for the assessment of test-retest reliability. The modal number of participations was 1 (M = 1.76, SD = 2.22).

Table 2. Sample Sizes and Internal-Consistency Results

Measures

Fifteen individual-differences questionnaires were selected for inclusion in this study on the basis of their availability in the AIID data set. Five of these questionnaires had a particularly large number of items and were subdivided into two parts that were delivered between participants because of time constraints on the Project Implicit site. This resulted in participants being assigned to 1 of 20 different versions of the study materials. The 15 questionnaires and their subdivisions for purposes of the AIID study were as follows: the Balanced Inventory of Desirable Responding (Version 6; Paulhus, 1988; cited in Robinson, Shaver, & Wrightsman, 1991; Impression Management scale vs. Self-Deception Enhancement scale), Bayesian Racism Scale (Uhlmann, Brescoll, & Machery, 2010), Belief in a Just World Scale (General Just World scale only; Dalbert, Lipkus, Sallay, & Goch, 2001), Big Five Inventory (John & Srivastava, 1999; Extraversion, Conscientiousness, and Neuroticism scales vs. Agreeableness and Openness scales), Humanitarianism-Egalitarianism Scale (Katz & Hass, 1988), Intuitions About Controllability and Awareness of Thoughts scales (Nosek, 2012; Self scale vs. Others scale), Need for Cognition Scale (Cacioppo, Petty, & Kao, 1984), Need for Cognitive Closure Scale (Webster & Kruglanski, 1994; Order and Ambiguity scales vs. Predictability, Decisiveness, and Closed-mindedness scales), Personal Need for Structure Scale (Neuberg & Newsom, 1993), Protestant Ethic Scale (Katz & Hass, 1988), Right-Wing Authoritarianism Scale (Altemeyer, 1981), Rosenberg Self-Esteem Scale (Rosenberg, 1965), Self-Monitoring Scale (Snyder, 1987), Social Dominance Orientation scale (Scale 4; Pratto, Sidanius, Stallworth, & Malle, 1994), and Spheres of Control Battery (Paulhus, 1983; Interpersonal Control scale vs. Personal Efficacy scale).

Fourteen of these questionnaires had previously been employed in a study reported in a published article or book chapter, whereas one (the Intuitions about Controllability and Awareness of Thoughts scales) had not. The psychometric properties of all measures that had been used in previous publications had been examined to at least some extent, with one exception (i.e., the Bayesian Racism Scale, which has been used to make theoretical conclusions without a published validation study). As implemented in the AIID study, the questionnaires employed between 6 and 44 items (M = 19.5, SD = 11.8) and between 1 and 5 scales (M = 1.7, SD = 1.4). All scales used the same response format, a Likert scale ranging from 1 (strongly disagree) to 6 (strongly agree). In some cases, the response format differed from the measure’s original format, and when significant modifications were made (i.e., change from a dichotomous to a Likert response format), they were carried out in accordance with recommendations in the literature (Dalbert et al., 2001; Stöber, Dette, & Musch, 2002). The wording of a minority of items in several measures was adjusted to make them more appropriate for a general rather than student sample (see the supplementary materials at https://osf.io/2zx64/).

Procedure

In this section, we provide a brief overview of the AIID study (for a more detailed description, see Hussey et al., 2019). Prior to the study, participants voluntarily navigated to the Project Implicit research website, created a unique log-in name and password, and provided demographic information. Those assigned to the AIID study then provided informed consent and completed one Implicit Association Test (Greenwald, McGhee, & Schwartz, 1998) and a subset of self-report measures from an attitudes battery. The IAT and the self-report measures centered on the same attitude domain, selected from a set of 95. Each domain consisted of two concept categories that were related to social groups, political ideologies, preferences, or popular concepts from the wider culture (e.g., African Americans vs. European Americans, Democrats vs. Republicans, coffee vs. tea, or Lord of the Rings vs. Harry Potter). Following the IAT and self-report ratings, participants were randomly assigned to complete 1 of the 20 versions of the individual-differences self-report measures.

In the current study, we made use of data only from the demographics questionnaire (age, gender, and English fluency) and individual-differences measures. Given that people completed only a small subset of the total available measures in any one session, repeat participation in the AIID study was allowed. No restrictions were placed on the time between experimental sessions (i.e., individuals could complete one session immediately after another or up to several years later). In order to maintain a consistent analytic strategy, we analyzed each questionnaire’s scales separately. This is consistent with past use of these questionnaires in almost all cases.

Results

Data preparation

Analyses for a given questionnaire were conducted on data obtained from the first experimental session in which participants completed that questionnaire, with the exception that test-retest reliability analyses were conducted on data obtained from the first two sessions in which participants completed the questionnaire. Reverse scoring of items was conducted according to the recommendations of each scale’s original publication.

Analytic strategy

For each scale, we calculated both distributional information and multiple metrics of structural validity (see Tables 2–5), following the recommendations of Flake et al. (2017) and Revelle and Condon (2018). Distributional information (mean, standard deviation, skewness, and kurtosis) was calculated from each scale’s sum scores. All analyses were implemented using the R packages lavaan (Rosseel, 2012) and semTools (Jorgensen et al., 2019). Confidence intervals were bootstrapped via the case-removal and quantile method using 1,000 resamples, and were implemented using the R packages rsample (Kuhn, Chow, & Wickham, 2019) and purrr (Henry & Wickham, 2019).
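The bootstrapped confidence intervals described above can be illustrated with a minimal sketch. This is not the authors' code (the original analyses were run in R with rsample and purrr); it is a plain-Python illustration that assumes cases are resampled with replacement and that the interval is read off the quantiles of the resampled estimates. The function names `bootstrap_ci` and `percentile` are hypothetical.

```python
import random


def mean(values):
    return sum(values) / len(values)


def percentile(sorted_values, q):
    """Linear-interpolation quantile (q in [0, 1]) of a pre-sorted list."""
    position = q * (len(sorted_values) - 1)
    lower = int(position)
    upper = min(lower + 1, len(sorted_values) - 1)
    fraction = position - lower
    return sorted_values[lower] * (1 - fraction) + sorted_values[upper] * fraction


def bootstrap_ci(cases, statistic, n_resamples=1000, level=0.95, seed=0):
    """Quantile (percentile) bootstrap interval for any statistic of the cases.

    Assumption: whole cases are resampled with replacement; 1,000 resamples
    mirrors the number reported in the text.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_resamples):
        resample = [cases[rng.randrange(len(cases))] for _ in cases]
        estimates.append(statistic(resample))
    estimates.sort()
    tail = (1 - level) / 2
    return percentile(estimates, tail), percentile(estimates, 1 - tail)


# Illustrative data only: 300 made-up sum scores on a 1-6 scale.
scores = [1, 2, 3, 4, 5, 6] * 50
lower_bound, upper_bound = bootstrap_ci(scores, mean)
```

Any statistic that takes a list of cases (e.g., a correlation over score pairs) could be passed in place of `mean`.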

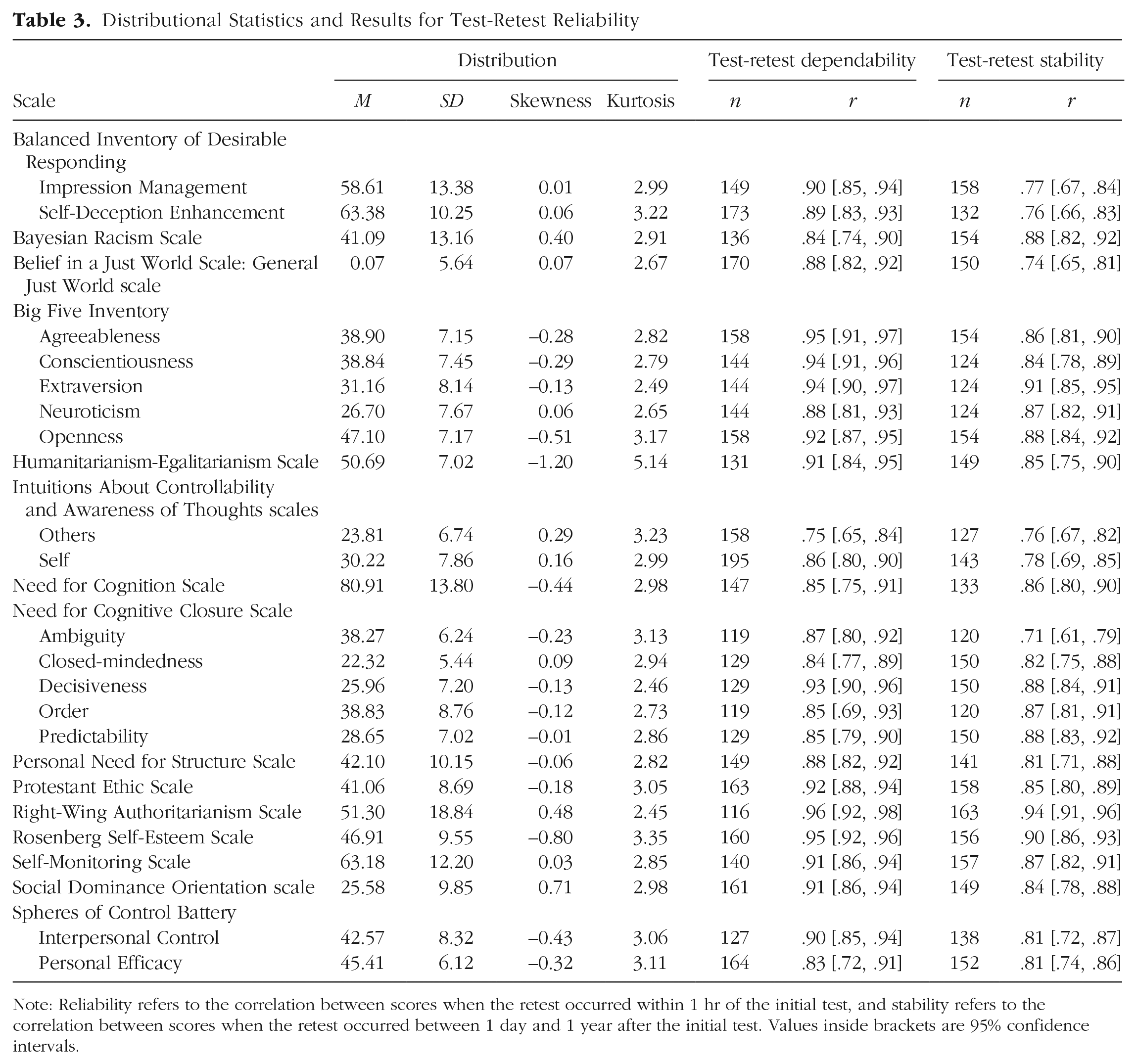

Table 3. Distributional Statistics and Results for Test-Retest Reliability

Note: Dependability refers to the correlation between scores when the retest occurred within 1 hr of the initial test, and stability refers to the correlation between scores when the retest occurred between 1 day and 1 year after the initial test. Values inside brackets are 95% confidence intervals.

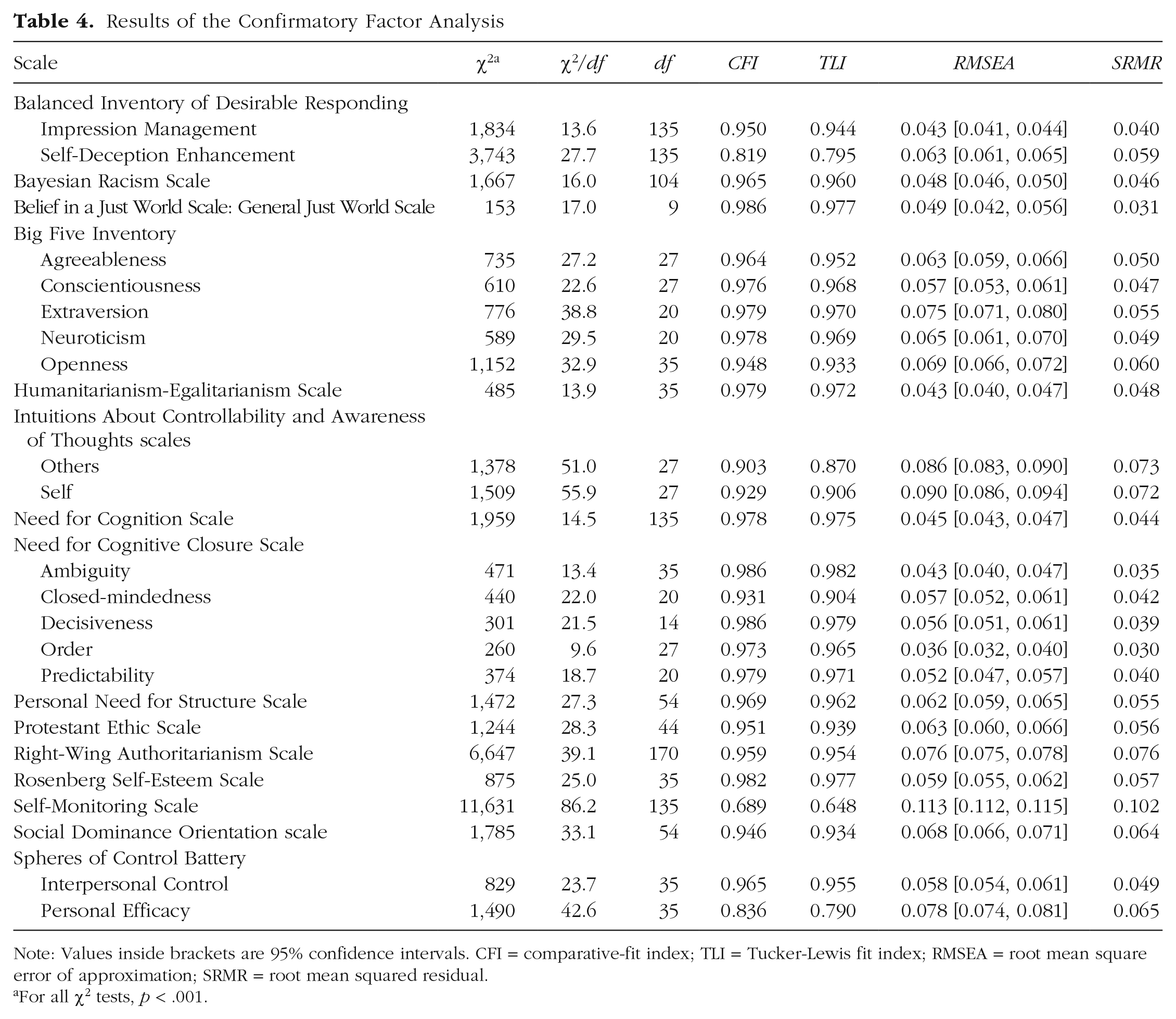

Table 4. Results of the Confirmatory Factor Analysis

Note: Values inside brackets are 95% confidence intervals. CFI = comparative-fit index; TLI = Tucker-Lewis fit index; RMSEA = root mean square error of approximation; SRMR = standardized root mean squared residual.

For all χ² tests, p < .001.

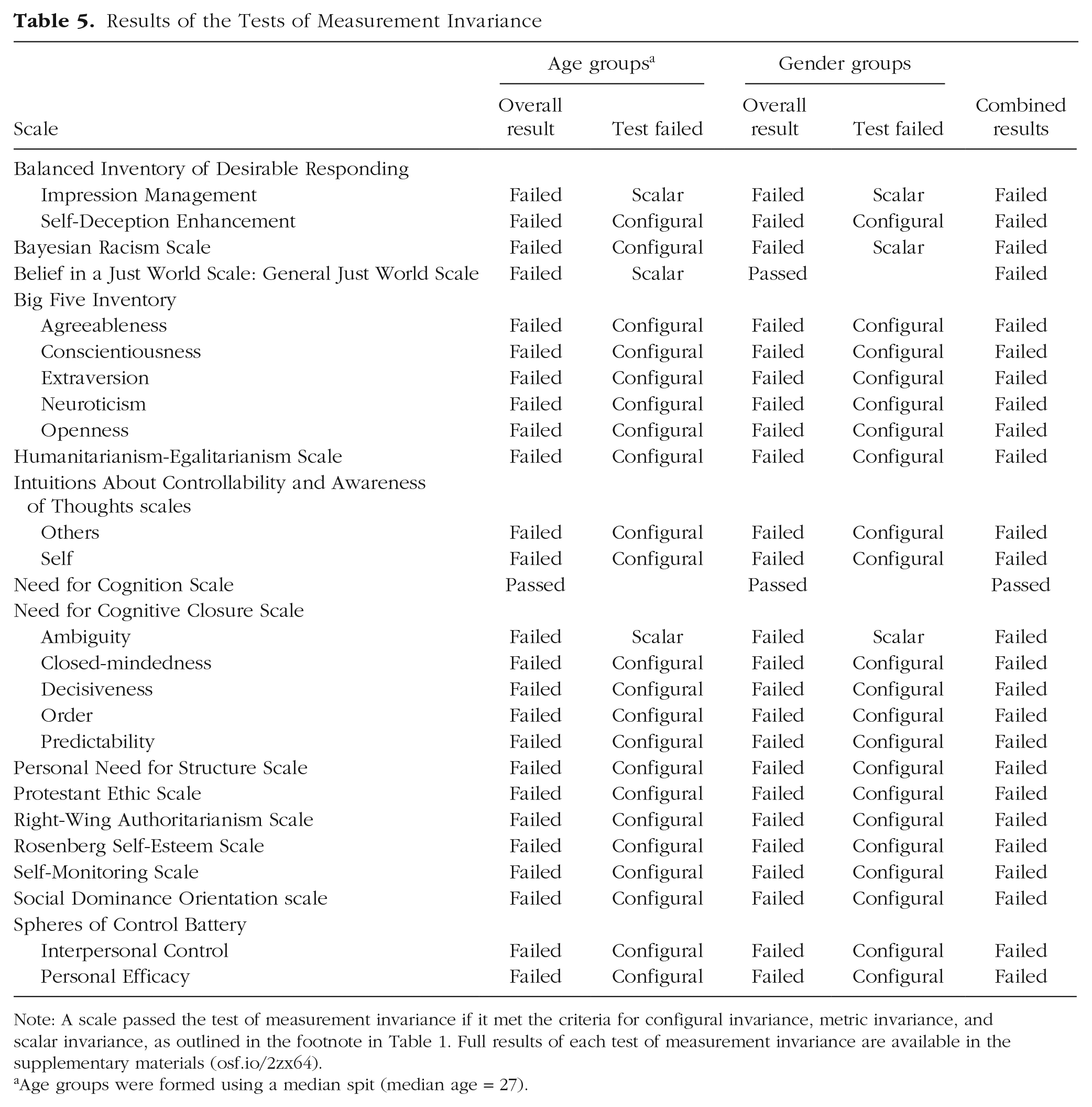

Table 5. Results of the Tests of Measurement Invariance

Note: A scale passed the test of measurement invariance if it met the criteria for configural invariance, metric invariance, and scalar invariance, as outlined in the footnote in Table 1. Full results of each test of measurement invariance are available in the supplementary materials (osf.io/2zx64).

Age groups were formed using a median split (median age = 27).

For all scales, we employed simple measurement models that did not involve method factors (e.g., negatively worded items) or item cross-loadings. We did so for three reasons. First, this uniform analytic strategy allowed us to compare rates of validity across scales, to address our primary research question. Second, with few exceptions (e.g., the Big Five Inventory), the “true” measurement model for most scales either is a matter of long debate (e.g., the Rosenberg Self-Esteem scale; see Mullen, Gothe, & McAuley, 2013; Salerno, Ingoglia, & Lo Coco, 2017; Supple, Su, Plunkett, Peterson, & Bush, 2013; Tomas & Oliver, 1999) or has as yet received no scrutiny (e.g., the Bayesian Racism Scale). Therefore, choices to employ alternative models would be exploratory or weakly informed, and comparisons among these models would detract from answering our primary research question. Third, most researchers who use these scales simply calculate sum scores and rely on these in their subsequent analyses. In doing so, they are tacitly endorsing simple measurement models with no cross-loadings or method factors (Rose, Wagner, Mayer, & Nagengast, 2019). Adopting similar assumptions meant that our findings would reflect how these scales are commonly used and interpreted.

The use of cutoff values for decision making has both potential benefits and potential costs, and results thus obtained should be interpreted with caution (Hu & Bentler, 1999). Following the recommendations of Vandenberg and Lance (2000), we report full results for all tests in order to allow researchers to apply their own decision-making methods if they so wish. Nonetheless, the decision whether or not to employ a scale in a future study is arguably a dichotomous decision, and therefore binary recommendations are useful in many cases. This is particularly true for researchers who do not have a background in psychometrics and want to rely on others’ expertise to judge whether a scale is sufficiently valid for use. We therefore apply common and recommended cutoff values to all our test metrics in order to summarize and compare the relative validity of different scales across different aspects of structural validity.

Consistency

Given that researchers have argued that Cronbach’s α is frequently misused and of limited utility (Flake et al., 2017; Schmitt, 1996; Sijtsma, 2009), we also used two less frequently reported but arguably superior metrics of internal consistency: McDonald’s ωt (omega total) and ωh (omega hierarchical; McDonald, 1999, chap. 6); ωt provides a metric of total measure reliability, or the proportion of variance that is attributable to sources other than measurement error, whereas ωh provides a metric of factor saturation, or the proportion of variance that is attributable to a measure’s primary factor (rather than additional factors or method factors; see Revelle & Condon, 2018). We employed ωt ≥ .7 as the cutoff value for good internal consistency because this cutoff is typically used for α and the two metrics employ the same scale (Nunnally & Bernstein, 1994).
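To make the consistency metrics concrete, the sketch below computes Cronbach's α from raw item responses and a simplified ω from factor loadings. It is illustrative only and is not the authors' procedure. The ω function assumes a single-factor model with standardized loadings and uncorrelated residuals, a special case in which total and hierarchical omega coincide; estimating ωt and ωh for real scales requires a fitted factor model. The function names and example values are hypothetical.

```python
def variance(values):
    """Sample variance (n - 1 denominator)."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)


def cronbach_alpha(rows):
    """rows: one list of item responses per participant."""
    k = len(rows[0])
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def omega_single_factor(loadings):
    """Omega for a one-factor model with standardized loadings.

    Assumption: residual variances equal 1 - loading**2 and are uncorrelated.
    """
    common = sum(loadings) ** 2
    residual = sum(1 - l ** 2 for l in loadings)
    return common / (common + residual)


# Illustrative values only, not data from the study.
responses = [[1, 1, 1], [2, 2, 2], [3, 3, 3], [4, 4, 4]]
alpha = cronbach_alpha(responses)
omega = omega_single_factor([0.7, 0.7, 0.7, 0.7])
meets_cutoff = omega >= 0.7  # the .7 cutoff described in the text
```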

Dependability and stability

Test-retest reliability was estimated for the subset of participants with available data (n = 7,542) using Pearson’s r correlations. We calculated two forms of test-retest reliability, according to the recommendations of Revelle and Condon (2018). First, test-retest dependability was calculated using the data from those participants who completed a scale twice within 1 hr. Second, test-retest stability was calculated using the data from those participants who completed a scale twice with a delay between 1 day and 1 year. Our cutoff value for both good test-retest dependability and good test-retest stability was r ≥ .7, as is commonly recommended in the literature (Nunnally & Bernstein, 1994).
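The two forms of test-retest reliability can be sketched as follows. This is a hedged illustration, not the authors' implementation: the data layout (a tuple of first score, second score, and delay in hours), the inclusive treatment of the interval boundaries, and the function names are all assumptions made for the example.

```python
from math import sqrt

HOUR = 1.0
DAY = 24.0
YEAR = 365 * DAY


def pearson_r(pairs):
    """Pearson correlation for a list of (x, y) pairs; None if undefined."""
    n = len(pairs)
    if n < 3:
        return None
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    if sxx == 0 or syy == 0:
        return None
    return sxy / sqrt(sxx * syy)


def retest_reliability(sessions):
    """sessions: list of (score_time1, score_time2, delay_in_hours)."""
    # Dependability: retest within 1 hr. Stability: retest after 1 day to 1 year.
    dependability = [(a, b) for a, b, delay in sessions if delay <= HOUR]
    stability = [(a, b) for a, b, delay in sessions if DAY <= delay <= YEAR]
    return {
        "dependability": pearson_r(dependability),
        "stability": pearson_r(stability),
    }


# Illustrative values only; the 5-hr retest falls in neither window.
example = [
    (10, 20, 0.5), (12, 24, 0.2), (15, 30, 0.9),
    (10, 18, 48.0), (12, 16, 72.0), (14, 14, 2000.0),
    (11, 11, 5.0),
]
result = retest_reliability(example)
```

Each resulting correlation would then be compared with the r ≥ .7 cutoff.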

Factor structure

Because of the large number of scales, we specified and assessed the fit of measurement models using a standardized approach based on recommended best practices (Hu & Bentler, 1999; Rose et al., 2019). First, a confirmatory factor-structure model for each scale was defined using the items specified in the scales’ original publication. No method factors (e.g., for negatively worded items) or item cross-loadings were included.

Given that an ordinal Likert response format was used for all scales, and that the amount of skew differed among the scales, we employed the diagonally weighted least squares estimator along with robust standard errors of parameter estimates (i.e., the WLSMV estimator option within lavaan). Simulation studies have shown that this estimator function is superior to the more common maximum likelihood method (Li, 2016). Performance of all the scales was poorer when the models were refitted using the maximum likelihood method, with or without robust standard errors.

Previous work has repeatedly suggested that multiple indices of a model’s goodness of fit should be calculated and reported even if only a subset of these are used for decision-making purposes (Vandenberg & Lance, 2000). We therefore calculated the following indices: Our metrics of absolute fit were chi-square tests (although, given our sample sizes, the p values for these were universally significant and therefore uninformative; nonetheless, chi-square values should be reported), chi-square normalized by number of items, and the standardized root mean squared residual (SRMR). Our measure of relative fit was the Tucker-Lewis fit index (TLI). Our noncentrality indices were the comparative-fit index (CFI) and root mean square error of approximation (RMSEA; its 95% confidence intervals were also calculated). For decision making regarding model fit, we employed the cutoffs suggested by Hu and Bentler (1999; i.e., SRMR ≤ 0.09, TLI ≥ 0.95, CFI ≥ 0.95, RMSEA ≤ 0.06). Hu and Bentler argued that basing model-fit decisions on two fit indices, rather than one, lowers the combined rate of Type I and Type II errors. Specifically, they recommended that model-fit determinations be based on SRMR combined with one of the following: CFI, TLI, or RMSEA. However, having no strong prior preferences among these multiple fit indices, we labeled individual scales as demonstrating good or poor fit according to the fit observed with all three combinations. That is, if a scale demonstrated good fit with all three metric permutations (i.e., SRMR + CFI, SRMR + TLI, and SRMR + RMSEA), we labeled the fit as “good”; if the fit was good using one or two but not all three permutations, it was labeled as “mixed”; and if it was not good for any of the three permutations, it was labeled as “poor.”
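The labeling rule just described can be written as a short decision function. The sketch below is an illustration of that rule rather than the authors' code; the function name `label_fit` and the example fit values are hypothetical, and the fit indices themselves are assumed to come from an already-fitted confirmatory model.

```python
SRMR_MAX = 0.09
CFI_MIN = 0.95
TLI_MIN = 0.95
RMSEA_MAX = 0.06


def label_fit(cfi, tli, rmsea, srmr):
    """Label model fit from the three two-index combinations.

    Each combination pairs SRMR with one of CFI, TLI, or RMSEA, and a
    combination counts as good only when both of its indices meet their cutoff.
    """
    srmr_ok = srmr <= SRMR_MAX
    companions = [cfi >= CFI_MIN, tli >= TLI_MIN, rmsea <= RMSEA_MAX]
    n_good = sum(1 for ok in companions if srmr_ok and ok)
    if n_good == 3:
        return "good"
    if n_good >= 1:
        return "mixed"
    return "poor"


# Illustrative values only, not results from the study.
all_pass = label_fit(cfi=0.97, tli=0.96, rmsea=0.05, srmr=0.04)
one_pass = label_fit(cfi=0.96, tli=0.93, rmsea=0.08, srmr=0.05)
srmr_fail = label_fit(cfi=0.99, tli=0.99, rmsea=0.01, srmr=0.12)
```

Because every combination includes SRMR, a model that misses the SRMR cutoff is labeled poor regardless of its other indices.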

Measurement invariance

Assessing a scale’s capacity to measure the same construct comparably in different populations or contexts typically involves three component tests: tests of (a) configural invariance (i.e., equivalence of model form: whether the unconstrained model provides adequate fit in each of the groups), (b) metric invariance (or weak factorial invariance; i.e., equivalence of factor loadings), and (c) scalar invariance (or strong factorial invariance; i.e., equivalence of item intercepts, or thresholds; Putnick & Bornstein, 2016). These types of invariance are typically assessed with nested models; the initial measurement model is first fit to each group’s data, a second fit constrains factor loadings to be equivalent, and a third fit constrains item intercepts (or thresholds) to be equivalent. Change in fit metrics between these nested models is then typically used to determine whether each test is passed in sequence. When a scale passes all three tests, one can conclude that correlations between scores on the scale and other external variables have equivalent interpretations across the groups. That is, individuals’ observed scores on the scale are likely to measure the same latent variable and in a comparable way, regardless of the groups to which the individuals belong. Loosely speaking, one accessible interpretation of meeting measurement invariance is that individuals in the different groups interpret the items in an equivalent manner. Not meeting measurement invariance has important implications for the researcher: It is not possible to meaningfully interpret comparisons between groups or associations between scores on the scale and external variables.

Although tests of measurement invariance are typically performed between groups that the researcher wants to compare directly, one should also assess measurement invariance between groups that one tacitly assumes should be invariant. For example, for many studies, researchers recruit adults (e.g., ages 18–65) and both men and women, but do not seek to make comparisons based on either age or gender, or to account for the influence of age or gender within their statistical models. In such cases, the researchers implicitly assume that the scales measure the same construct (or constructs) in different age groups and in both men and women. It is therefore useful to test these two assumptions, specifically, that the employed scales are invariant across gender and, for example, across individuals above versus below the median age in the sample. Similarly, if researchers explicitly wish to make comparisons between such categories (e.g., between men and women), measurement invariance is a requirement for these comparisons to be meaningful. For example, personality differences between men and women are theoretically meaningful only if they represent differences in latent means rather than factor loadings or intercepts. In all cases, measurement invariance is therefore a necessary prerequisite for subsequent substantive analyses. Therefore, we tested measurement invariance for individuals above versus below the median age in our sample (median age = 27) and for men versus women.

Historically, the most common method used to test measurement invariance was to assess the statistical significance of changes in absolute model fit (Putnick & Bornstein, 2016; Vandenberg & Lance, 2000). This was not suitable in the present study because of the sensitivity of chi-square tests to our large sample sizes. In addition, relying exclusively on the significance of chi-square tests, in place of alternative fit indices such as RMSEA, has fallen out of favor over time (Putnick & Bornstein, 2016). Numerous simulation studies have been conducted to explore which indices and cutoffs (if any) should be used. Recommended cutoff values have been described as ranging from liberal (e.g., Cheung & Rensvold, 2002) to conservative (e.g., Meade, Johnson, & Braddy, 2008), and the real-world applicability of these cutoffs is a matter of ongoing debate (Little, 2013). For tests of configural invariance, we elected to employ the same criteria as for mixed CFA fit (Hu & Bentler, 1999), and for tests of metric and scalar invariance, we chose to use Chen’s (2007) moderate criteria of both ΔCFI ≥ –0.015 and ΔRMSEA ≤ 0.01. This two-metric strategy is broadly compatible with the criteria used for CFA and configural invariance fits, as well as being the modal reporting practice according to a recent review (Putnick & Bornstein, 2016). The same estimator was used as in the CFA fits.
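As an illustration only, the sequential decision rule described above can be sketched in Python as follows. The class and function names are hypothetical and are not taken from the supplementary R code. The fit indices are assumed to have been obtained already from the three nested multigroup models, and the configural criterion is assumed to have been evaluated separately and is passed in as a pass/fail flag.

```python
from dataclasses import dataclass


@dataclass
class Fit:
    """Fit indices for one multigroup model (supplied by the user)."""
    cfi: float
    rmsea: float


def passes_step(less_constrained: Fit, more_constrained: Fit,
                min_delta_cfi: float = -0.015,
                max_delta_rmsea: float = 0.01) -> bool:
    """Apply the two-metric rule to one pair of nested models.

    Both conditions must hold: CFI may drop by no more than 0.015 and
    RMSEA may rise by no more than 0.01 when constraints are added.
    """
    delta_cfi = more_constrained.cfi - less_constrained.cfi
    delta_rmsea = more_constrained.rmsea - less_constrained.rmsea
    return delta_cfi >= min_delta_cfi and delta_rmsea <= max_delta_rmsea


def invariance_level(configural_ok: bool, configural: Fit,
                     metric: Fit, scalar: Fit) -> str:
    """Return the highest level of invariance supported, testing in sequence."""
    if not configural_ok:
        return "none"
    if not passes_step(configural, metric):
        return "configural"
    if not passes_step(metric, scalar):
        return "metric"
    return "scalar"


# Hypothetical fit values, for illustration only.
level = invariance_level(True, Fit(0.960, 0.050), Fit(0.955, 0.052), Fit(0.930, 0.065))
print(level)
```

In this hypothetical case the loadings constraint barely changes fit, but the intercepts constraint lowers CFI by 0.025, so the sketch stops at metric invariance. Because the tests are sequential, a failure at one step means that later steps are not evaluated.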

Results synthesis

A summary of the results for these metrics of structural validity using recommended cutoff values is presented in Table 1. This table provides a concise summary of the structural-validity evidence for each individual scale, as well as of the evidence across scales. Tables 2 through 5 provide the results for all statistical metrics and the aspects of structural validity to which they speak (i.e., internal consistency, test-retest reliability, factor structure, and measurement invariance for age and gender groups), along with details regarding each scale (number of participants, number of items), and distributional information (mean, standard deviation, skewness, kurtosis). When combined, Tables 1 through 5 provide a wide range of psychometric properties for 15 commonly used self-report individual-differences scales that could inform their future use. Full results of the tests of measurement invariance (i.e., results for each fit index for each test) are available in the supplementary materials. Additionally, recent research has quantified the impact of failure to meet measurement invariance as a continuous variable (e.g., Nye & Drasgow, 2011). Although this is beyond the scope of this article, the supplementary materials provide continuous estimates of the impact of measurement invariance on the magnitude of between-groups comparisons: For each between-groups comparison (i.e., participants above vs. below the median age, male vs. female participants), the between-groups effect size (Cohen’s d) was calculated separately for the observed sum scores and the latent scores, and then the difference between these two estimates was computed.
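The continuous estimate described above (the difference between Cohen’s d for observed sum scores and Cohen’s d for latent scores) can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the code used for the supplementary materials: the function names are hypothetical, the pooled-standard-deviation form of d is assumed, and the latent scores are assumed to have been estimated beforehand from a fitted measurement model.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d between two groups, using the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


def d_discrepancy(observed_a, observed_b, latent_a, latent_b):
    """Difference between the observed-score d and the latent-score d.

    Values far from zero suggest that the observed group difference is
    partly a product of the measurement model rather than of the latent
    variable itself.
    """
    return cohens_d(observed_a, observed_b) - cohens_d(latent_a, latent_b)
```

A discrepancy near zero would indicate that sum scores and latent scores tell the same story about the group difference, whatever the categorical outcome of the invariance tests.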

The summary labels in Table 1 serve to condense multifaceted metrics of validity into categorical conclusions in order to enable decision making with regard to our core research question (i.e., whether the underreporting in the literature represents hidden validity or invalidity). This trade-off between nuance and heuristic value is analogous to the use of p values, which are natively continuous, but which are often reduced to a significant-versus-nonsignificant dichotomy to facilitate conclusions regarding hypotheses. These categorical labels should not be taken as claims about literal truth for any research question other than our own (e.g., for assessing the adequacy of a scale for future use). Instead, such questions should be informed by the continuous and multifaceted results reported in Tables 2 through 5, which offer a more nuanced perspective on structural validity.

Discussion

The reproducibility and replicability of research findings, as well as confidence in theory and application, require valid measures. Yet as Flake et al. (2017) pointed out, structural validity is rarely reported in the literature, and even when it is, the reported tests are usually restricted to a single and flawed index (Cronbach’s α). This raises the question: Is the underreporting of tests of structural validity a mere nuisance, insofar as these measures are in fact valid, or, more troublingly, is there an abundance of invalid measures hiding in plain sight (i.e., hidden invalidity)? To examine this question, we submitted 15 self-report measures from social and personality psychology to a comprehensive battery of structural-validity tests (i.e., we examined their distribution, consistency, test-retest reliability, factor structure, and measurement invariance for gender groups and age groups defined by a median split). Doing so seems timely and necessary given the broader reevaluation of modal practices taking place in psychological science (Munafò et al., 2017) and a growing reliance on self-report data collected from online samples (Sassenberg & Ditrich, 2019).

Before unpacking our findings, it seems useful to distinguish between two concepts: the weight of evidence (e.g., presence and quality of evidence, ranging from weak to strong) and the nature of conclusions (e.g., given that evidence, what should one conclude about a measure’s validity, on a continuum ranging from “good” to “questionable” to “poor”?). We argue that our results have strong evidential weight insofar as they were derived from a large and diverse sample (n > 6,500 per scale), were obtained across follow-up periods, and were obtained using a wider-than-usual variety of structural-validity metrics applied to many different scales. Indeed, to the best of our knowledge, this is the first study to consider the full range of metrics of structural validity, including multiple metrics of internal consistency, test-retest reliability, confirmatory factor structure, and measurement invariance, and the first to simultaneously apply them to so many scales. We also acknowledge our study’s potential evidential weaknesses, in that recruitment was from a single population (i.e., an online sample) and that we considered only the structural phase of validity assessment but not the external phase.

To develop a conclusion, we employed a dichotomization strategy to synthesize the results across the scales. Most of the scales passed certain tests of structural validity: Specifically, 88% demonstrated good internal consistency, and 100% demonstrated good test-retest reliability. Yet many failed other tests of structural validity: Only 73% demonstrated good fit with the expected factor structure, and a surprisingly tiny fraction (4%) demonstrated measurement invariance for both age and gender groups. Only a single scale (Need for Cognition) passed all four metrics and can be said to have good global structural validity. Our results therefore appear to suggest that the widespread underreporting of structural validity highlighted by Flake et al. (2017) may reflect hidden invalidity. Why would this be the case given that most of these scales are widely used throughout psychological science?
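The dichotomization strategy can be illustrated with a short Python sketch. The scale names and pass/fail values below are hypothetical and are not the results reported in Table 1; the function names are likewise illustrative.

```python
TESTS = ["internal_consistency", "test_retest",
         "factor_structure", "measurement_invariance"]


def pass_rates(results):
    """Proportion of scales passing each test."""
    n_scales = len(results)
    return {test: sum(scale[test] for scale in results.values()) / n_scales
            for test in TESTS}


def globally_valid(results):
    """Names of the scales that pass every test."""
    return [name for name, scale in results.items()
            if all(scale[test] for test in TESTS)]


# Hypothetical pass/fail outcomes for four made-up scales.
example = {
    "Scale A": dict(zip(TESTS, [True, True, True, True])),
    "Scale B": dict(zip(TESTS, [True, True, True, False])),
    "Scale C": dict(zip(TESTS, [True, True, False, False])),
    "Scale D": dict(zip(TESTS, [False, True, False, False])),
}
print(pass_rates(example))
print(globally_valid(example))
```

The sketch makes the asymmetry explicit: per-test pass rates can be high even when very few scales pass every test, because a global verdict requires all tests to be passed jointly.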

One possibility is that invalidity may simply have been hidden until now: The full range of metrics of structural validity has been reported for very few studies. Our findings support this idea, as the metrics the scales tended to pass or fail were not random. The scales were more likely to fail those validity metrics that have been less often reported in the literature (factor structure and measurement invariance). Conversely, the scales were more likely to pass those metrics that have been reported more often in the literature (Cronbach’s α and test-retest r). Figure 1 illustrates this hierarchical, or Guttman, structure among the validity metrics. The correlation between failure rate and reporting rate highlights the potential for a general pattern of hidden invalidity throughout the discipline.
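A Guttman structure implies that, once tests are ordered from most to least commonly reported, a scale that fails one test should also fail every test after it. A minimal Python check of that property is sketched below. The function name is hypothetical, and the ordering of tests (internal consistency, test-retest reliability, factor structure, measurement invariance) is an assumption taken from the order in which they are listed in the text.

```python
def is_guttman_consistent(pattern):
    """Check one scale's pass/fail pattern for Guttman consistency.

    `pattern` is a sequence of booleans ordered from the most to the least
    commonly reported test. The pattern is consistent if no pass occurs
    after a fail.
    """
    seen_fail = False
    for passed in pattern:
        if not passed:
            seen_fail = True
        elif seen_fail:
            return False
    return True


# Passing the two commonly reported tests and failing the two rarer ones
# fits the structure; passing a rarer test after failing a common one does not.
print(is_guttman_consistent([True, True, False, False]))
print(is_guttman_consistent([True, False, True, False]))
```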

Figure 1. Alluvial plot illustrating the within- and between-scale patterns of passing or failing different tests of structural validity, arranged by frequency of reporting in the literature (from more common on the left to less common on the right).

The question then becomes, why were the structural fit and measurement invariance of these scales mixed or poor when their internal consistency and test-retest reliability were generally so good? One possibility is that tests of confirmatory factor structure and measurement invariance are inherently stricter. A second is that the measures used in the field of psychological science have been overoptimized to demonstrate good consistency, to the detriment of other psychometric properties.

To understand this idea more clearly, imagine that a researcher sets out to develop a new scale assessing negative automatic thoughts among people with depression. After constructing her scale, she attempts to determine how reliable it is, calculates Cronbach’s α, and obtains a value of .60. As things currently stand, reviewers and users of the scale might comment that this value is problematically low. The researcher might then spend her limited time and resources attempting to improve α so that it tips over the commonly used and sought-after (yet arbitrary) .70 cutoff, for example, by excluding or rewording items and testing a new version of the scale. As a consequence, she would be less likely to spend her finite resources assessing and attempting to improve other aspects of the scale’s structural validity, such as measurement invariance between groups. Yet doing so might have a larger payoff than chasing α: If the scale does not meet criteria of measurement invariance, subsequent longitudinal research using the scale with, for example, depressed individuals before and after therapeutic intervention might lead researchers to incorrectly infer that those individuals changed in terms of the latent variable (e.g., automatic thoughts in depression), when in fact they might simply have interpreted the items differently across the two measurement time points. For example, the therapeutic intervention might not serve to decrease the frequency of automatic thoughts (i.e., produce changes in the underlying latent variable), but instead might increase participants’ introspective abilities to more accurately report on the frequency of those thoughts (i.e., only the measurement properties of the scale might have changed). In other words, researchers might incorrectly infer that the intervention is effective in decreasing negative automatic thoughts in depression when in fact it is not.

In short, we are not arguing that internal consistency should be neglected, but rather saying only that it (via Cronbach’s α) should not be the sole focus in assessing structural validity, especially given its various flaws (Flake et al., 2017). Instead, researchers should adopt a more considered perspective by probing structural validity from multiple angles, especially those relevant to the context in which the scale in question is likely to be used (e.g., measurement invariance for known groups, test-retest reliability for longitudinal research). Failing to do so risks overoptimizing the measure on a flawed metric and without regard to other important but often overlooked properties.

Of course, the two possible explanations of the tests’ differential failure rates (i.e., relative strictness of the tests vs. overoptimization on internal consistency to the neglect of other forms of validity) are not necessarily mutually exclusive. Regardless of the explanation, more rigorous reporting of these metrics is required.

We have also considered a number of other factors—none of which are incompatible with hidden invalidity—that could have contributed to our results. One possibility is that the scales themselves are less than optimal measures of the construct (or constructs) of interest. This could be for several reasons. For example, the items may be more poorly worded than previously appreciated, or the structure among the items may not be as originally assumed. It may also be the case that responding in this sample was influenced by factors that are theoretically relevant; for example, the scales may have unintentionally measured closely related but previously unappreciated constructs. Or responses may have been influenced by theoretically irrelevant factors (e.g., low-quality responding, demand effects, additional latent factors, or item cross-loading among these factors). Indeed, articles considering the confirmatory factor structure of established measures frequently reject the expected model and suggest alternative models with different latent-variable structures or item cross-loadings (e.g., the Rosenberg Self-Esteem Scale: Mullen et al., 2013; Salerno et al., 2017; Supple et al., 2013; Tomas & Oliver, 1999). In many cases, despite subsequent work suggesting that the factor structure is not what the scale’s creators originally conceived or evidence that certain items should be dropped or modified, scales are most commonly used with the originally posited items and interpreted according to the originally posited factor structures, so that there is something of a primacy bias in the use of many scales. Indeed, the resistance to incorporating emerging structural-validity evidence into scales’ use (e.g., when researchers decide whether to use a given scale, how to score it, how to interpret its scores, and what variations in items or response options to use) is an ongoing issue for the field.

A second possibility is that there was something problematic about the current sample or that participants differed from those used during the original validation processes for these scales. We believe that this is unlikely given that the sample was, if not representative of the general population, far more representative than the samples typically used in laboratory-based research.

Finally, it is possible that scales demonstrated poor structural validity because the constructs they were intended to measure were poorly conceived in the first place (i.e., in the substantive phase of validation; Flake et al., 2017) or poorly captured by the scale items. Although this may seem unlikely given how well-known many of the scales we tested are, allowing for such a possibility protects against the reification of a construct merely because a scale has been created to assess it. Scales for which such issues do exist could be improved (or even avoided) by following Tay and Jebb’s (2018) recent suggestions for continuum specification. For instance, researchers could address issues of polarity ambiguity within their scales. Do low scores on a scale (e.g., a perfectionism scale) represent the absence of the construct of interest (e.g., low or absent perfectionism) or the presence of its opposite (e.g., high carelessness)? Researchers could also address issues of gradation, that is, the quality, or dimension, separating low from high scores. Take, once again, the example of depression: Multiple scales are available to assess depression, but they differ in their dimension of gradation; one measures the frequency of depressive thoughts, but another measures the degree of belief in the literality of those thoughts, and yet another measures the experienced emotional intensity of those thoughts. The take-home message here is that well-developed frameworks for measurement development already exist for researchers looking to construct or refine their scales. We encourage researchers to make better use of them, including by attending to all three interrelated phases of validation (substantive, structural, external; Flake et al., 2017). Although we have focused on the second phase, all phases of this process must be attended to when making a holistic evaluation of a measure’s validity. One phase is neither sufficient nor singularly important relative to the other two, nor should one phase be maximized at the expense of the others.

Implications and future directions

Our findings have implications for individual researchers in particular and for the field more generally. To understand why, imagine that a research team sets out to test a specific hypothesis using one of these scales (e.g., whether belief in a just world predicts some behavior of interest). They run their study and then assess if the scale they used provides a reliable index of the construct of interest. Behaving as most researchers do, they answer this question by examining the consistency of their data, and possibly the data’s test-retest reliability. These tests tell the team that the scale demonstrates adequate validity. This necessity taken care of, they then proceed to what is, for them, the real meat of the issue—interpreting their findings relative to their original hypothesis. Yet our findings suggest that if the researchers were to adopt a more comprehensive assessment following best practices, they would discover that the underlying factor structure of their construct and its invariance across samples are problematic, and thus might exert more caution before interpreting their data. In other words, issues at the second phase of validation (structural) moderate researchers’ ability to make claims at the third phase (external validation), such as claims about differences between known groups, interrelationships between latent constructs, and the prediction of behavior. Therefore, although questions concerning the structural validity of their measures may not be inherently appealing to all researchers, assessing structural validity is a requirement for making conclusions at other levels.

Another take-home message, one that we have not seen explicated elsewhere, is that a finding can be extremely replicable and yet give rise to invalid conclusions. For example, even if two groups (e.g., depressive and nondepressive individuals) were shown across multiple studies to differ in their observed mean scores on a given scale (e.g., the Rosenberg Self-Esteem Scale), this replicable finding would typically be interesting and useful only if it also reflects differences in a latent variable (e.g., self-esteem), rather than mere differences in how the two groups interpret the items in the questionnaire. In short, replicability does not equal validity. The potential for hidden structural invalidity therefore has implications for the conclusions made using a given scale.

What applies to an individual also applies to the field as a whole. Our findings highlight the possibility that hidden invalidity may be a common feature of many scales in the literature. The overwhelming majority of the scales we examined were found to be structurally invalid in some regard (at least in a categorical sense). As a thought experiment, imagine that the scales examined here are a representative subset of those used in social and personality psychology. If so, there are likely many other instances of hidden invalidity in other scales used in the field. Indeed, even if the true rate of hidden invalidity is only a fraction of that observed here, this would still bring the conclusions of a large number of studies using invalid scales into question. It is currently difficult to assess the true prevalence of hidden invalidity given that researchers often report, and reviewers and editors request, only a single metric of structural validity (Cronbach’s α). Therefore, at worst, the literature may be unwittingly advancing a simplistic and overly positive view of how valid many of the most commonly used measures actually are, and reporting invalid conclusions based on these scales. At best, the hidden invalidity we observed may simply reflect underreporting of scales that will ultimately be shown to be valid. Yet until comprehensive reporting of tests of validity is common practice, one cannot know. We therefore encourage a more rigorous, multimetric approach to structural validity across all areas of psychology, an approach in which researchers identify and report, and reviewers and editors request, multiple sources of validity evidence. Note that although we endorse more widespread assessment of structural validity, we are not prescribing how it should be done, or presenting the methods or any cutoff values we have used here as prescriptive recommendations. For pragmatic advice on improving measurement practices, we encourage readers to consult Flake and Fried (2019). That said, and for educational purposes, we have included in our supplementary materials simplified and commented R code to illustrate how we implemented our validity assessments.

Finally, two barriers limit the field’s ability to reach the goals of increasing the frequency with which metrics of structural validity are used and reported and conditioning substantive claims on the basis of evidence: (a) the staggering degrees of freedom available to researchers when they assess the structural validity of their measures and (b) the fact that researchers are heavily motivated to conclude that their measures are valid in order to test their core hypotheses. Imagine, for instance, that a researcher accepts the importance of assessing structural validity and sets out to test the internal consistency, test-retest reliability, factor structure, and measurement invariance of a study’s measures. In order to do so, the researcher would have to choose a specific metric for each validity dimension from the many available options, select a cutoff for each metric from among many recommended values, choose an implementation of each test from among multiple options that frequently differ in their results, and make choices among numerous less visible experimenter degrees of freedom. And this is not to mention all the potential interactions between these steps. In the absence of firm guidelines, one’s decision-making pathway when choosing how to report structural validity is massively unconstrained, a garden of forking paths (Gelman & Loken, 2013).
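To give a rough sense of scale, the arithmetic behind this garden of forking paths can be sketched in a few lines of Python. The option counts are entirely hypothetical (two candidate metrics, two cutoffs, and two implementations for each aspect of validity) and are chosen only to show how quickly the number of pathways multiplies; real counts are likely to differ.

```python
from math import prod

# Hypothetical option counts, for illustration only.
OPTIONS = {
    "internal consistency":   {"metrics": 2, "cutoffs": 2, "implementations": 2},
    "test-retest":            {"metrics": 2, "cutoffs": 2, "implementations": 2},
    "factor structure":       {"metrics": 2, "cutoffs": 2, "implementations": 2},
    "measurement invariance": {"metrics": 2, "cutoffs": 2, "implementations": 2},
}


def count_pathways(options):
    """Number of distinct analysis pathways if every choice combines freely."""
    return prod(prod(choice_counts.values())
                for choice_counts in options.values())


# 8 combinations per aspect of validity, 8 ** 4 pathways overall.
print(count_pathways(OPTIONS))
```

Even with only two options per decision, the sketch yields 4,096 distinct pathways, which illustrates why unconstrained reporting leaves so much room for selective choices.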

This lack of constraint may lead to two practices that are equally detrimental to the reproducibility, replicability, and validity of research findings. Making an analogy with p-hacking (Simmons et al., 2011), we refer to the first practice as v-hacking: selectively choosing and reporting a combination of metrics, including their implementations and cutoffs, and taking advantage of other degrees of experimenter freedom so as to improve the apparent validity of measures. For example, in 2004, Watson noted that test-retest reliability studies were rarely conducted, but that when they were, authors “almost invariably concluded that their stability correlations were ‘adequate’ or ‘satisfactory’ regardless of the size of the coefficient or the length of the retest interval” (p. 326). Researchers may be driven to such conclusions given the current incentive structures for both research and applied work: In research, for example, reporting that a measure demonstrates adequate validity allows one to test one’s core hypotheses using that measure, therefore increasing one’s chances of being published (theories may be supported or questioned only on the basis of valid measures). Also, a valid measure is more likely to be adopted in applied settings; there are both financial and academic incentives for developing proprietary scales that are deemed valid.

The second practice we refer to as v-ignorance: relying on and reporting those metrics that other researchers have used, without considering the issues underlying their use. Indeed, a 2008 review of graduate training in psychology revealed that measurement theory and practice is often ignored in doctoral programs and that only a minority of students know how to apply the methods of reliability correctly (Aiken, West, & Millsap, 2008). Of course, v-ignorance can sometimes reflect motivated ignorance. For example, current modal practices do not involve the assessment of measurement invariance. Choosing to test for invariance can greatly decrease one’s chances of publication (e.g., measurement issues can undermine theoretical conclusions), and therefore there is little incentive to do so. Both v-hacking and v-ignorance can lead to an overinflation of the true structural validity of a measure and thus undermine the validity of research findings.

There are several ways to address and immunize research against these practices. One is for journals, editors, and reviewers to require the psychometric evaluation of all measures used, much as effect sizes, confidence intervals, and precise p values are now commonly required (Parsons, Kruijt, & Fox, 2019). A second is for psychological scientists to come together and discuss issues such as choice of metrics, implementations, and cutoffs, as well as other experimenter degrees of freedom. Let us be clear here: We are not advocating for the introduction of some set of universally applied metrics or cutoff values for those metrics. Such an approach may lead researchers to mindlessly employ such standards and would raise a host of well-known issues (e.g., those associated with using null-hypothesis significance testing and treating p < .05 or a Bayes factor ≥ 3 as a sacrosanct threshold; for related arguments see Simmons, Nelson, & Simonsohn, 2018). Rather, we hope that readers will recognize that massive heterogeneity in the choice of cutoffs and metrics serves to inflate research degrees of freedom, and therefore threatens confidence in measurement. If the ongoing debate around p values is any indication (e.g., Benjamin et al., 2018; Lakens et al., 2018), the effort required to address this issue is unlikely to be trivial, and change may take some time.

However, there is no reason to be pessimistic. Researcher degrees of freedom could be greatly constrained by expanding the practice of preregistration to also include choices concerning the assessment of structural validity (e.g., metrics, cutoffs, measurement models, and decision-making strategies). Preregistration of design and analytic strategy prior to data collection greatly increases confidence in the conclusions of hypothesis-testing research (Nosek, Ebersole, DeHaven, & Mellor, 2018). We expect that preregistration of measurement choices would yield comparable benefits. Finally, providing open access to data also allows future researchers to examine the structural validity of a measure using metrics not originally reported, and enables data to be pooled across studies for reuse and meta-analytic validation. Although ethical considerations are sometimes cited as a barrier to data sharing, innovations such as synthetic data sets (e.g., using the synthpop R package; Nowok, Raab, Snoke, & Dibben, 2019) allow researchers to create and share data sets with statistical properties (e.g., covariance matrices, means, and distributions) highly similar to those of original data sets without including any of the original data (see Quintana, 2019, for an accessible primer).

Conclusion

This article provides a psychometrically rich assessment of the structural validity of 15 commonly used questionnaires. These analyses are useful for readers who (a) are interested in a large-scale examination of the structural validity of measures used in social and personality psychology, (b) wish to know more about normative distributions and psychometric properties of several well-known self-report questionnaires (e.g., for deciding whether to employ a measure in a future study or for comparing results with those found in large samples elsewhere), (c) want confidence that measures developed offline have good structural validity when used online, or (d) plan to use the AIID data set for other purposes and need information about the structural validity of the scales therein. Perhaps the most important contribution of our findings is that they suggest that the documented underreporting of structural-validity metrics in social and personality psychology presents an even more worrying issue of hidden invalidity among commonly used measures. We have offered recommendations on how this issue might be addressed (e.g., with preregistration of the plan for assessing structural validity and of how validity assessments could impact substantive conclusions). Researchers are currently afforded a large number of degrees of freedom, and validity-related decisions can be hidden or made post hoc. This can lead to situations in which there are few, if any, constraints that prevent researchers from cherry-picking those validity metrics that provide the most favorable impression of their measures (v-hacking), to the potential detriment of the validity of their conclusions.

Supplemental Material

Hussey_Rev_Open_Practices_Disclosure – Supplemental material for Hidden Invalidity Among 15 Commonly Used Measures in Social and Personality Psychology

Supplemental material, Hussey_Rev_Open_Practices_Disclosure for Hidden Invalidity Among 15 Commonly Used Measures in Social and Personality Psychology by Ian Hussey and Sean Hughes in Advances in Methods and Practices in Psychological Science
