Abstract

Eskine, Kacinik, and Prinz’s (2011) influential experiment demonstrated that gustatory disgust triggers a heightened sense of moral wrongness. We report a large-scale multisite direct replication of this study conducted by labs in the Collaborative Replications and Education Project. Subjects in each sample were randomly assigned to one of three beverage conditions: bitter (disgusting), control (neutral), or sweet. Then, subjects made a series of judgments about the moral wrongness of the behavior depicted in six vignettes. In the original study (

This article presents results from a multilab replication of an experiment suggesting that gustatory disgust (via taste perception) can make moral judgments harsher (Eskine, Kacinik, & Prinz, 2011). Previous studies had revealed a similar link between judgments of moral wrongness and sensory disgust induced by other means, such as olfactory stimulation (Schnall, Haidt, Clore, & Jordan, 2008). Moreover, since Eskine and colleagues’ (2011) study, empirical and conceptual work has grown around the idea that inducing perceptions of gustatory disgust is an especially effective way of increasing the severity with which people make moral judgments (Hellmann, Thoben, & Echterhoff, 2013; Schnall, Haidt, Clore, & Jordan, 2015). However, a recent meta-analysis found little support for an association between disgust and moral judgment (Landy & Goodwin, 2015a). In addition, to our knowledge, there have been no attempts to replicate the relation between physical disgust via taste perception and moral wrongness. The purpose of this project was therefore to precisely estimate the effect of gustatory disgust on moral judgment by replicating the methods used by Eskine et al.

In the 1990s, the body of research that would eventually coalesce under the banner of embodied cognition began taking shape (Wilson, 2002). This research is based on the proposal that real-world thinking and problem solving are deeply situated within sensoriperceptual processes and are not merely cognitive processes (Anderson, 2003; Ionescu & Vasc, 2014). For example, it has been theorized that although disgust likely evolved to steer organisms away from pathogens, it also evolved to guide humans in their decision making in the domains of mate selection and morality (Tybur, Lieberman, Kurzban, & DeScioli, 2013). Other researchers agree that the cognitive computation systems involved in pathogenic (

The idea that similar parts of the brain are activated by physical and moral disgust has prompted other researchers to examine how inducing a sensoriperceptual experience of disgust can affect moral judgment more specifically (Cameron, Payne, & Doris, 2013; Case, Oaten, & Stevenson, 2012; Gill & Nichols, 2008; Greene, Sommerville, Nystrom, Darley, & Cohen, 2001; Landy & Goodwin, 2015a). Several studies have suggested that olfactory (e.g., Inbar, Pizarro, & Bloom, 2012) and gustatory (e.g., Chapman, 2018; Hellmann et al., 2013) input, for example, can affect moral judgment. In particular, these studies found that perceptions of sensory disgust, induced by several means, led to harsher moral judgment (Cameron et al., 2013; Eskine et al., 2011; Schnall, Haidt, et al., 2008; Wheatley & Haidt, 2005). However, the estimated effect of disgust on moral judgment was negligible in Landy and Goodwin’s (2015a) meta-analytic review (

The Original Study

Eskine and colleagues (2011) found that subjects who drank a bitter beverage before reading six moral vignettes judged the characters’ actions more harshly than did subjects who drank a sweet beverage or water. Eskine et al. selected a bitter drink to induce disgust because they hypothesized that a strong bitter taste would be reliably experienced as disgusting. A manipulation check confirmed that their sample experienced the bitter drink (Swedish Bitters) as disgusting. In addition to the main effect of gustatory disgust on moral judgment, the results indicated that politically conservative subjects, compared with liberal subjects, were more sensitive to the effect, which suggested that political conservatives are more likely to be influenced by incidental sensoriperceptual cues (e.g., gustatory disgust) when making moral judgments.

The original research (

The Current Study

In this article, we report a multilab effort to replicate the methods used by Eskine et al. (2011). We tested (a) whether a bitter beverage indeed prompts harsher moral judgments than both a sweet beverage and water and (b) whether these effects are stronger in politically conservative populations than in politically liberal ones. Our overarching goal was to provide high-powered estimates of the effect observed by Eskine et al. using a crowdsourcing approach (see Hagger et al., 2016; Moshontz et al., 2018). That is, instead of evaluating the “replication success” of the original study solely on the basis of the results of a single replication, we meta-analyzed many distinct replication attempts using frequentist and Bayesian statistical approaches.

In sum, our goal was both to estimate the main effect of beverage type on moral judgments and to test political orientation as a moderator of this effect. We examined two contrasts for the effect of beverage type: bitter versus control and bitter versus sweet. With respect to moderation by political orientation, we focused primarily on the comparison between conservative and liberal subjects.

Disclosures

Preregistration

Before data collection, each lab preregistered their materials, protocol, and analysis plans on the Open Science Framework (OSF). In addition, we registered our analysis plan for this manuscript on OSF prior to conducting the analyses reported here (see our project at https://osf.io/5ygsp). Our preregistration is a partial preregistration in that each author knew the results of at least one particular replication before the meta-analysis plan was preregistered.

Data, materials, and online resources

After labs completed their studies, they uploaded their raw data, their analyses (including syntax), and a clear explanation of their results to their own pages at OSF. The data and all other relevant documentation from each of the labs included in this meta-analysis can be found at https://osf.io/kuyn8/wiki/home/. We uploaded the data and analysis code for this report to our project page at https://osf.io/5ygsp. An appendix with additional information is also available at OSF, at https://osf.io/a4cs3/.

Reporting

This article is based on analysis of existing data rather than new data collection. We report how we determined the sample size for our analyses and all data exclusions, manipulations, and measures.

Ethical approval

Each individual site secured approval from an institutional review board or similar committee prior to data collection and carried out the study in accordance with the provisions of the World Medical Association Declaration of Helsinki.

Method

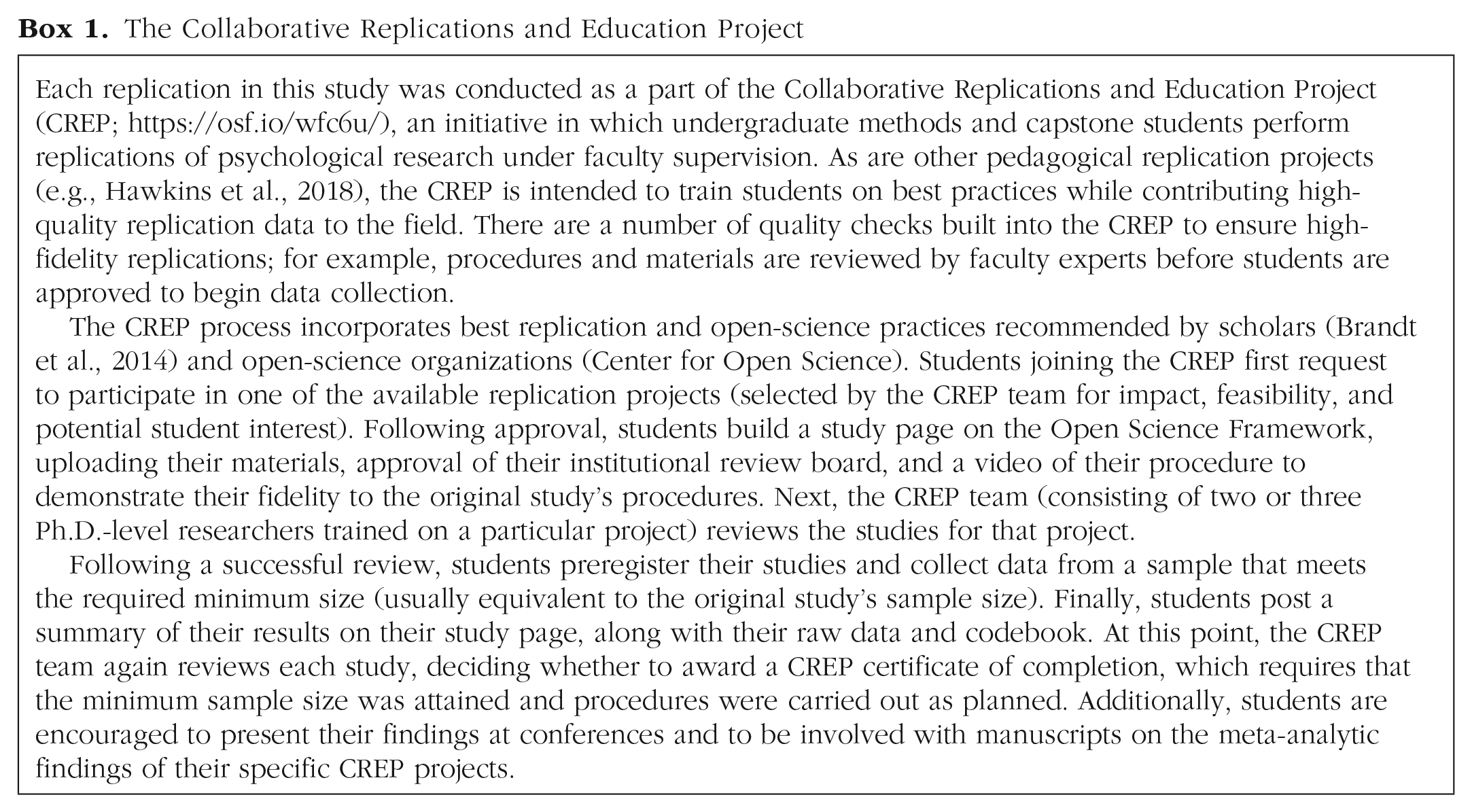

The replication studies we report were conducted as part of the Collaborative Replications and Education Project (CREP), an initiative in which students perform replications of psychological research under faculty supervision (see Box 1 for further information). All subjects provided informed consent prior to participating. The 11 replication studies took place at five universities in the United States and three universities in Europe (United Kingdom, Germany, and The Netherlands). Table A1 in the appendix at OSF lists the site and mentor for each study.

Box 1. The Collaborative Replications and Education Project

Design

The design was a 3

Target sample size

The minimum target for the overall sample for meta-analysis was 2.5 times the original sample of 57, or 143 subjects, which would be sufficient for a “small telescopes” analysis (Simonsohn, 2015). The individual labs were permitted to continue to submit qualified replication samples from January 2015 until December 2017. The CREP recommended that each lab collect data from at least 57 subjects (to match the original sample), but not all labs hit this target. We included labs that did not hit the target sample size in our analysis because we were primarily concerned with having adequate power to accurately estimate the overall effect size in the weighted meta-analysis across all samples (and we were not concerned about the power of an individual sample).
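The arithmetic behind the minimum target can be checked in a few lines. This is only a sketch: the function name is ours, and rounding up to a whole subject is our reading of how 2.5 × 57 = 142.5 becomes 143.

```python
import math


def small_telescopes_target(original_n: int, multiplier: float = 2.5) -> int:
    """Minimum replication sample: multiplier times the original n, rounded up."""
    return math.ceil(original_n * multiplier)


# Original sample of 57 subjects, as stated in the text.
print(small_telescopes_target(57))
```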

Deviations from the original study

The CREP team contacted the original authors, who provided the original moral-dilemma vignettes, manipulation check, and informed-consent forms. We could not obtain materials for the original imageability distractor task or cover story, so we created our own on the basis of the description in Eskine et al.’s (2011) report. Our distractor task is available at OSF (https://osf.io/ju7nq/). Our cover story consisted of the following paragraph:

“In this study you will be asked to read several vignettes and make judgments about the characters in them. Your job will be to judge the actions of the characters. During this task, you will be asked to drink a beverage. The purpose of this study is to determine whether motor movements involved with drinking influence your judgments while reading about others. In order to successfully attain this, please drink each dose in a single swift motion, as if you were drinking a shot.”

Two problems arose with using an international sample to replicate the original study: First, the terms

Second, the drinks differed slightly from country to country. For example, Minute Maid Berry Punch and Swedish Bitters, the drinks used in the original study, are not widely available in some locations outside the United States. Where these beverages were not available in the original form, researchers were told to substitute a sweet drink with similar sugar content and a bitter but inert drink, respectively. Individual labs had discretion to substitute any brand of Swedish Bitters given that the brand was not specified in the original report. We used the manipulation check to ensure that subjects found the sweet drink sweet but not neutral or disgusting and the bitter drink disgusting but not sweet or neutral.

A third deviation from the original study was that in three of the studies (7, 9, and 10), the moral vignettes were presented via Qualtrics surveys rather than on paper. Subjects in these three studies indicated their moral judgments by using a slider scale that yielded a number rather than by making a mark on a line on paper.

A fourth (potential) deviation from the original study was that we computed a moral-wrongness composite score for all subjects who rated at least three of the six vignettes.³ We preregistered this approach to maximize statistical power and minimize selection biases. There were very few exclusions based on this preregistered criterion; only 3 of the subjects tested rated fewer than three vignettes. We provide further details in the Results section (see our summary of preliminary analyses of the moral-wrongness composite).
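The inclusion rule for the composite can be sketched as follows. The function and constant names are hypothetical, and the representation of an unrated vignette as `None` is an assumption made for illustration; the composite itself is taken to be the mean of the rated vignettes, consistent with the description of the composite as an average across vignettes.

```python
from statistics import mean
from typing import Optional, Sequence

MIN_RATED = 3  # preregistered minimum number of rated vignettes (out of six)


def moral_wrongness_composite(
    ratings: Sequence[Optional[float]],
) -> Optional[float]:
    """Average the rated vignettes; return None if too few were rated.

    An unrated vignette is represented as None (an assumption of this sketch).
    """
    rated = [r for r in ratings if r is not None]
    if len(rated) < MIN_RATED:
        return None  # subject is excluded from the composite analyses
    return mean(rated)


# A subject who rated three of six vignettes is retained.
print(moral_wrongness_composite([80, None, 60, 70, None, None]))
# A subject who rated only two vignettes is excluded.
print(moral_wrongness_composite([80, None, None, None, None, 50]))
```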

Protocol fidelity

Labs qualified to participate in this CREP project by submitting an application, preparing an OSF page with all materials and procedures, and submitting a video of a mock experimental procedure. Volunteer psychology professors working with the CREP reviewed this material for fidelity to the CREP protocol. The protocol required the use of exact stimuli, such as the materials for the moral-judgment task, though use of approximate stimuli was allowed where necessary (e.g., another drink could be substituted for Minute Maid Berry Punch where it was not easily available). The protocol also required appropriate data reporting. The CREP instructions and materials are available at OSF (https://osf.io/4hkjv/). Labs were required to revise their procedures in accordance with CREP reviewers’ feedback on both their written procedures and their video to increase precise compliance. Each lab included at least one student researcher and one supervising faculty mentor. Faculty mentors supervised students’ work and, in some cases, helped run subjects and analyze data. Labs that successfully complied with the CREP protocol, passed review, and submitted data for at least the minimum sample size (57) were eligible to receive a CREP certificate and a monetary reward (monetary rewards ended in July 2017, as funding ended).

Subjects

We analyzed data from a total of 1,137 subjects in 11 studies. There were 142 conservative subjects and 635 liberal subjects. The per-study

In our preregistration, we stated that if linear mixed-effects analyses indicated that the effect of beverage condition or the interaction of beverage condition with political orientation varied with subjects’ knowledge of the hypothesis, we would exclude subjects who demonstrated knowledge of the hypothesis. However, in part because we could not obtain the open-ended responses necessary to code this variable for 3 of the 11 studies, we retained all available subjects in our analyses.

With regard to gender, 671 (59%) subjects identified as female, 392 (34%) as male, and 6 (1%) as nonbinary; there was no gender information available for 68 (6%) subjects.

Materials

All materials, including two versions of the moral-vignette packet (identical but with different orders), were provided on the OSF website.

As in the original study, the labs used six vignettes developed by Wheatley and Haidt (2005). The six vignettes focus on the following main characters and situations: Bob, who has a sexual relationship with his second cousin; Frank, who cooks and eats his dead dog; George, a lawyer who seeks clients at the hospital emergency room; Arnold, a politician who condemns corruption but accepts bribes himself; Robert, who shoplifts clothing; and Tim, who takes books and other items out of the library without checking them out.

Moral judgments were obtained by asking subjects to read each vignette and then answer the question “How morally wrong is this?” by making a mark on a line or selecting a number on a scale; in both cases,

The imageability distractor task and cover story were intended to disguise the purpose of the study. The distractor task was based on the description in the original report (i.e., “[subjects] . . . rated sentences for their imageability”; Eskine et al., 2011, p. 296).

Subjects rated how much they enjoyed their beverage, and how sweet, bitter, neutral, or disgusting they found it, using a 7-point scale ranging from 1 (

To run the experiment, labs needed drinking water; fruit punch; a brand of Swedish Bitters; cups; a private space where subjects could fill out the experimental forms; and a booklet containing the consent forms, the moral vignettes, the survey used for the manipulation check, the demographic (including political-orientation) questions, the distractor task, and the procedures. The specific questions used to collect demographic information differed somewhat among the labs. For example, some labs used an open-response box to collect political-orientation data, whereas others gave subjects specific options to choose from.

Procedure

Prior to the arrival of each subject, experimenters consulted a preprinted list of random numbers or an online tool used for random assignment to determine the appropriate drink preparation. Subjects were run individually in physically separate spaces.
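A preprinted assignment list of the kind described above could be generated along these lines. This is a minimal sketch under our own assumptions: the protocol does not say whether labs used simple or blocked randomization, so simple randomization is shown, and the function name and seed are hypothetical.

```python
import random
from typing import List

CONDITIONS = ("bitter", "control", "sweet")


def assignment_list(n_subjects: int, seed: int) -> List[str]:
    """Draw one beverage condition per subject, independently and uniformly.

    Simple (unblocked) randomization is an assumption of this sketch, so
    cell sizes are not guaranteed to be equal.
    """
    rng = random.Random(seed)  # a fixed seed makes the printed list reproducible
    return [rng.choice(CONDITIONS) for _ in range(n_subjects)]


# Prepare a list for 57 subjects before data collection begins.
for subject_number, condition in enumerate(assignment_list(57, seed=2015), start=1):
    print(subject_number, condition)
```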

After subjects provided informed consent, experimenters explained that the study was an examination of the influence of motor interference on cognitive processing. The ingredients for the appropriate beverage condition were listed on the informed-consent form so that subjects would not be exposed to allergens unwittingly. The experimenters called attention to the ingredient list in case subjects had not read the consent form closely. (Subjects at the Tufts University site verbally confirmed that they were not allergic to any of the ingredients during the consent process.) The experimenters provided the beverage and told subjects to drink it in a swift motion, “as if drinking a shot.” Subjects then completed the first half of the moral-judgment task. The experimenters administered a second serving of the beverage and then instructed subjects to complete the second half of the moral-judgment task.

Subjects then completed the distractor task, beverage ratings, and demographic survey. Finally, they responded to the prompt “What do you think this study is about? Please provide a few details to explain your answer.” Upon completion of this item, subjects were debriefed verbally and in writing. The complete protocol, including a script for researchers, is available on the OSF page for this study.

Two of the authors (E. Ghelfi and M. A. Fischer), who were blind to subjects’ assignment to condition, coded political-orientation responses using three categories: “conservative,” “liberal,” and “other.” The “other” category included subjects who declined to provide information. The two coders exhibited excellent agreement, Cohen’s κ = 1.00, 95% confidence interval (CI) = [.99, 1] (weighted κ = .99). We used the first coder’s responses in subsequent analyses. In total, 648 subjects (57%) were coded as liberal, 162 (14%) as conservative, and 327 (29%) as other.

The same two authors coded subjects’ explanations of what they thought the study was about using three categories capturing levels of knowledge about the hypothesis. The three categories were “naive” (i.e., no insight into the hypothesis; e.g., “how the mechanical act of drinking would affect an individual’s moral judgment”), “partially suspicious” (i.e., insight into part of the hypothesis; e.g., “This study may be about how much we liked or disliked the beverage and whether or not that influenced our answers”), and “fully suspicious” (i.e., clear and accurate description of the hypothesis; e.g., “This study is about how arm movement/taste can determine how you feel/think about something. I had a bad taste in my mouth so I saw everyone as bad in the short stories.”). The two coders exhibited very good agreement, Cohen’s κ = .76, 95% CI = [.71, .80] (weighted κ = .83). When the two coders disagreed, we used the code representing greater knowledge of the hypothesis. We could not obtain subjects’ responses to this question for Studies 1, 2, and 3 and thus could not code level of knowledge of the hypothesis for any of the subjects in these three studies.
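For readers unfamiliar with the agreement statistic, unweighted Cohen’s κ and the disagreement rule described above can be sketched as follows. The function names and the toy codes are ours; the weighted κ and the confidence intervals reported in the text are not reproduced here.

```python
from collections import Counter
from typing import Sequence

# Ordering used to resolve disagreements in favor of greater knowledge.
KNOWLEDGE_ORDER = {"naive": 0, "partially suspicious": 1, "fully suspicious": 2}


def cohens_kappa(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Unweighted Cohen's kappa for two coders rating the same responses."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must rate the same nonempty set of items.")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of the coders' marginal proportions per category.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n**2
    if expected == 1:
        raise ValueError("Kappa is undefined when only one category is used.")
    return (observed - expected) / (1 - expected)


def resolve_knowledge(code_a: str, code_b: str) -> str:
    """When coders disagree, keep the code reflecting greater knowledge."""
    return max(code_a, code_b, key=KNOWLEDGE_ORDER.__getitem__)


a = ["naive", "naive", "partially suspicious", "fully suspicious"]
b = ["naive", "partially suspicious", "partially suspicious", "fully suspicious"]
print(cohens_kappa(a, b))
print([resolve_knowledge(x, y) for x, y in zip(a, b)])
```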

Note that because the knowledge question was presented after the beverage-rating manipulation check, it is possible that thinking about the beverages during the manipulation check triggered subjects’ subsequent guesses about the purpose of the study. As a result, some subjects classified as partially or fully suspicious may have actually been naive when they made their moral judgments but “clued in” to the hypothesis by the time they were asked to guess the purpose of the study. We can be fairly certain, however, that subjects who were classified as naive even with the benefit of exposure to the beverage-rating task were in fact naive when they made their moral judgments.

Data analysis and inference criteria

We present the rationale for and details of all analyses where applicable in the Results section. Broadly speaking, in preliminary analyses, we computed descriptive statistics for moral-wrongness and beverage ratings, level of knowledge, and internal-consistency reliability for the moral-wrongness composite. In confirmatory analyses, we conducted random-effects meta-analyses for two effects of interest: bitter versus control and bitter versus sweet. We also conducted one-sided tests to determine whether observed effects were either smaller than the original study could have detected with 33% power or equivalent to zero. To complement these approaches, we conducted linear mixed-effects regression (LMER) modeling of individual subjects’ judgments of moral wrongness. Finally, for each replication study, we conducted a series of four Bayes factor (BF) tests described by Verhagen and Wagenmakers (2014).

For null-hypothesis significance testing, we set an alpha criterion of .05 (two-tailed). For BF tests, we considered BFs greater than 3 to provide nonanecdotal evidence for the replication hypothesis and BFs less than 1/3 to be nonanecdotal evidence against the replication hypothesis.
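The BF decision rule can be written out explicitly. In this sketch the function name and the label for the middle band are our own; only the two thresholds (3 and 1/3) come from the criteria stated above.

```python
def classify_bayes_factor(bf: float) -> str:
    """Map a Bayes factor for the replication hypothesis onto the stated criteria."""
    if bf <= 0:
        raise ValueError("A Bayes factor must be positive.")
    if bf > 3:
        return "nonanecdotal evidence for the replication hypothesis"
    if bf < 1 / 3:
        return "nonanecdotal evidence against the replication hypothesis"
    # Values between 1/3 and 3 do not meet either criterion (our label).
    return "anecdotal evidence only"


for bf in (5.2, 1.0, 0.1):
    print(bf, "->", classify_bayes_factor(bf))
```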

We wrote this manuscript as an R Markdown document in RStudio 1.2.1335 (RStudio Team, 2015) and analyzed our data using R (Version 3.5.2; R Core Team, 2017) and the following R packages:

When analyzing the data, we discovered that some studies contributed no or very few observations to one or more cells of the study design. We made the post hoc decision to include in random-effects meta-analyses only those studies for which there were at least 2 subjects in each cell, so that we could compute both a mean and a standard deviation. This left five studies for comparisons between the beverage conditions among conservative subjects and nine studies for comparisons among liberal subjects.⁴ We included all available observations in our LMER models.
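The post hoc inclusion rule amounts to a simple cell-count filter. The sketch below uses a hypothetical record layout of (study, political orientation, beverage condition) and toy data; it is not the analysis code posted at OSF.

```python
from collections import Counter
from typing import List, Sequence, Tuple

CONDITIONS = ("bitter", "control", "sweet")
MIN_PER_CELL = 2  # at least 2 subjects are needed to compute a mean and an SD

Record = Tuple[int, str, str]  # (study, orientation, condition); hypothetical layout


def eligible_studies(records: Sequence[Record], orientation: str) -> List[int]:
    """Return studies with at least MIN_PER_CELL subjects in every beverage cell."""
    counts = Counter(
        (study, condition)
        for study, subject_orientation, condition in records
        if subject_orientation == orientation
    )
    studies = {study for study, _, _ in records}
    return sorted(
        study
        for study in studies
        if all(counts[(study, c)] >= MIN_PER_CELL for c in CONDITIONS)
    )


# Toy data: Study 1 has 2 liberal subjects per cell; Study 2 has only 1 in "sweet".
toy = (
    [(1, "liberal", c) for c in CONDITIONS for _ in range(2)]
    + [(2, "liberal", "bitter")] * 2
    + [(2, "liberal", "control")] * 2
    + [(2, "liberal", "sweet")]
)
print(eligible_studies(toy, "liberal"))
```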

Results

Preliminary analyses

Moral-wrongness composite

Because we excluded the few subjects who rated fewer than three of the six vignettes, and because no subjects rated exactly three vignettes, all subjects included in analyses provided judgments for at least four vignettes; 99.12% had a value for five (7.04%) or six (92.08%) vignettes.

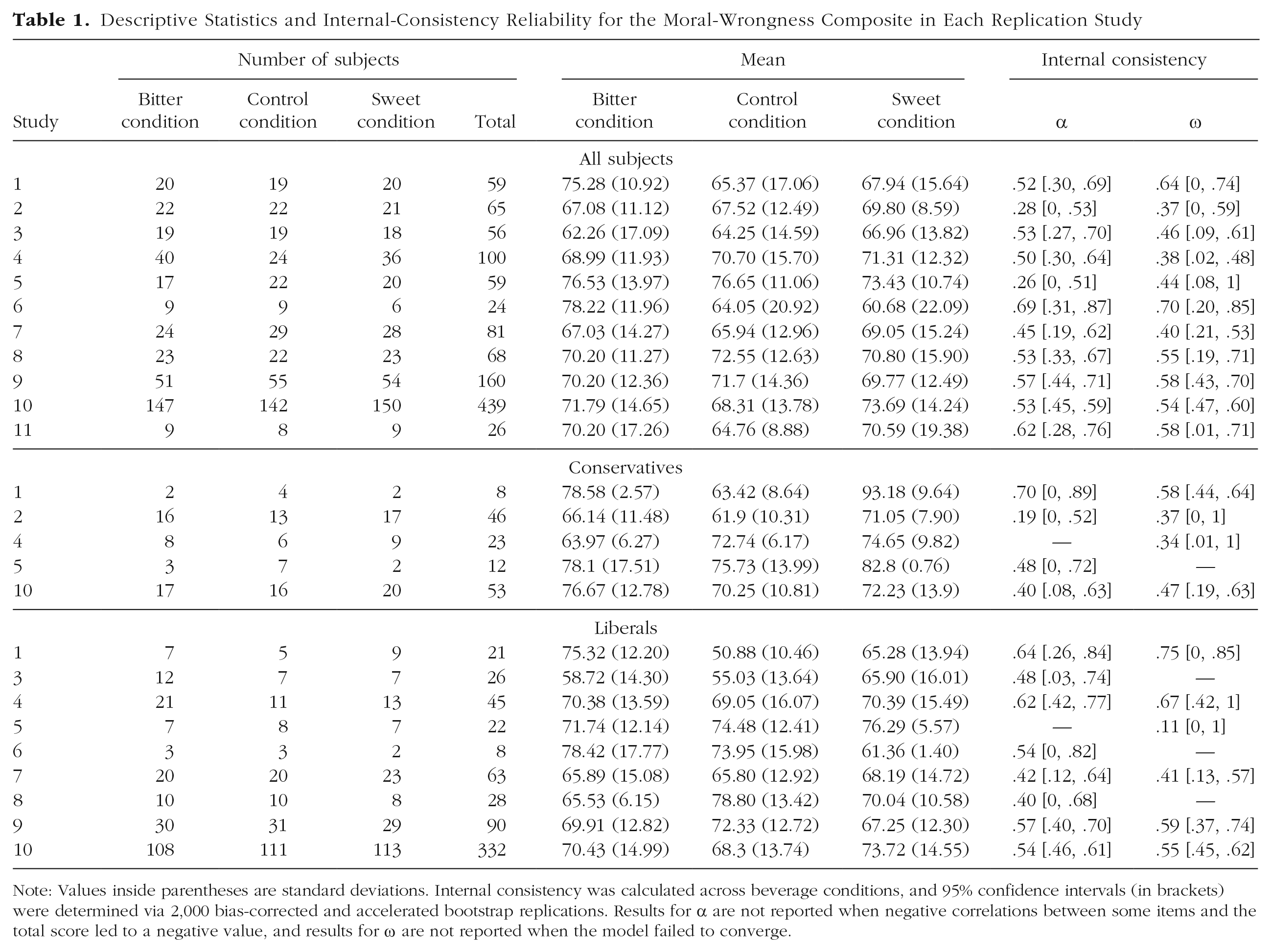

Table 1 shows descriptive statistics for the moral-wrongness composite in each beverage condition for each replication study. Results for all subjects and the conservative and liberal subgroups are presented separately. We provide descriptive statistics across studies in the Confirmatory Analyses subsection of the Results section.⁵

Table 1. Descriptive Statistics and Internal-Consistency Reliability for the Moral-Wrongness Composite in Each Replication Study

Note: Values inside parentheses are standard deviations. Internal consistency was calculated across beverage conditions, and 95% confidence intervals (in brackets) were determined via 2,000 bias-corrected and accelerated bootstrap replications. Results for α are not reported when negative correlations between some items and the total score led to a negative value, and results for ω are not reported when the model failed to converge.

Table 1 also shows the internal-consistency reliability of the moral-wrongness composite across beverage conditions in each replication study, again separately for all subjects and the conservative and liberal subgroups. Cronbach’s alpha calculated across all political orientations within each study ranged from .26 to .69 (median = .53), and omega ranged from .37 to .70 (median = .54). Internal consistency therefore ranged from poor to acceptable, in most cases falling below .70. Across subjects in all 11 studies, Cronbach’s alpha was .50, 95% CI = [.45, .54], and omega was .49, 95% CI = [.43, .54].
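As a reminder of what the alpha values summarize, Cronbach’s alpha can be computed from item variances and the variance of the total score. The sketch below assumes complete data (every subject rated every vignette), which is a simplification; it does not cover omega or the bootstrapped confidence intervals, and the function name and toy ratings are ours.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a subjects-by-items matrix with no missing values."""
    k = len(rows[0])  # number of items (vignettes)
    if k < 2 or any(len(row) != k for row in rows):
        raise ValueError("Need at least two items and equal-length rows.")
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Toy ratings for four subjects on three items.
ratings = [
    [70, 80, 60],
    [50, 65, 55],
    [90, 85, 70],
    [40, 60, 65],
]
print(round(cronbach_alpha(ratings), 2))
```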

Beverage ratings

As a manipulation check, we assessed the extent to which the three beverages had the intended effect on subjective ratings (bitter, disgusting, neutral, and sweet). For each study, we computed descriptive statistics and linear regression models that compared ratings in the bitter condition with ratings in each of the other two conditions across all subjects and within the conservative and liberal subgroups (see Table A3 in the appendix). In addition, to summarize across studies, we assessed the fixed effects of beverage type and political orientation on each rating in four LMER models with a random intercept for studies (see Table A4 in the appendix). The beverage contrasts were consistently significant in the expected directions for all three political orientations.

The estimated marginal means from the LMER models indicated that subjects in the bitter condition perceived their beverage to be quite bitter,

Level of knowledge of the hypothesis

Of the 933 subjects for whom we were able to code knowledge of the hypothesis, 543 (58.20%) were naive, 336 (36.01%) were partially suspicious, and 54 (5.79%) were fully suspicious. The original authors did not report how many subjects were partially suspicious, but indicated that 3 out of 57 (5.26%) “correctly guessed our hypothesis” (Eskine et al., 2011, p. 296).

Predicted probabilities were obtained in a generalized linear mixed-effects logistic regression assessing the fixed effects of beverage type and political orientation on level of knowledge (0 = naive, 1 = partially or fully suspicious). We included a random intercept for studies in the model. More subjects in the bitter (60.65%) and sweet (52.37%) conditions than in the neutral control condition (38.85%) were partially or fully suspicious. The difference between the bitter and control conditions was statistically significant,

Confirmatory analyses

Random-effects meta-analyses and one-sided tests

In our random-effects meta-analyses, we used two contrasts to estimate the standardized effects of drinking the bitter beverage on moral-wrongness judgments within and across political orientations and within and across replication studies. These meta-analyses enabled us to estimate, in standardized units, the extent to which drinking a disgusting, bitter beverage harshens moral judgments relative to drinking water (control) or juice (sweet). We would conclude that there was support for the hypothesis if the magnitudes of the two overall effects (bitter vs. control and bitter vs. sweet) were significantly greater than zero in the positive direction, perhaps only among conservative subjects as in the original study. We used a random-effects approach to determine the extent to which effect sizes varied from one study to the next. We excluded the original study from these analyses, as is typical for Registered Replication Reports (e.g., Wagenmakers et al., 2016), so that the estimates would be based only on unpublished studies that were registered in advance and, thus, were unbiased.
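The logic of a random-effects meta-analysis of standardized mean differences can be sketched as follows. This is an illustration under stated assumptions, not the analysis code posted at OSF: the between-study variance is estimated here with the DerSimonian-Laird method, which may differ from the estimator actually used, and the function names and summary statistics are hypothetical. Effects are coded as bitter minus comparison, so positive values indicate harsher judgments in the bitter condition.

```python
import math
from typing import Sequence, Tuple


def hedges_g(m1: float, s1: float, n1: int,
             m2: float, s2: float, n2: int) -> Tuple[float, float]:
    """Bias-corrected standardized mean difference (group 1 minus group 2)."""
    df = n1 + n2 - 2
    pooled_sd = math.sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / df)
    d = (m1 - m2) / pooled_sd
    j = 1 - 3 / (4 * df - 1)  # small-sample correction factor
    var_d = (n1 + n2) / (n1 * n2) + d**2 / (2 * (n1 + n2))
    return j * d, j**2 * var_d


def random_effects(effects: Sequence[float],
                   variances: Sequence[float]) -> Tuple[float, float, float]:
    """DerSimonian-Laird pooled estimate, its standard error, and tau^2."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("Need at least two studies with matching variances.")
    w = [1 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)  # between-study variance, floored at zero
    w_star = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    return pooled, math.sqrt(1 / sum(w_star)), tau2


# Hypothetical per-study summaries: (mean, SD, n) for bitter, then for control.
studies = [
    (72.0, 12.0, 30, 70.0, 11.0, 31),
    (68.0, 14.0, 25, 69.5, 13.0, 24),
    (71.0, 10.0, 40, 70.0, 12.0, 38),
]
gs, vs = zip(*(hedges_g(*s) for s in studies))
pooled, se, tau2 = random_effects(gs, vs)
print(f"g = {pooled:.3f}, 95% CI = [{pooled - 1.96 * se:.3f}, {pooled + 1.96 * se:.3f}]")
```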

In addition to random-effects meta-analyses, we conducted one-sided tests examining whether the standardized meta-analytic effect sizes for the two contrasts of interest were significantly smaller than the effect size the original authors had 33% power to detect (

Finally, we also conducted one-sided tests examining whether the replication effect was effectively equivalent to zero. We preregistered two sets of standardized effect-size equivalence bounds around 0, specifically 0 ±

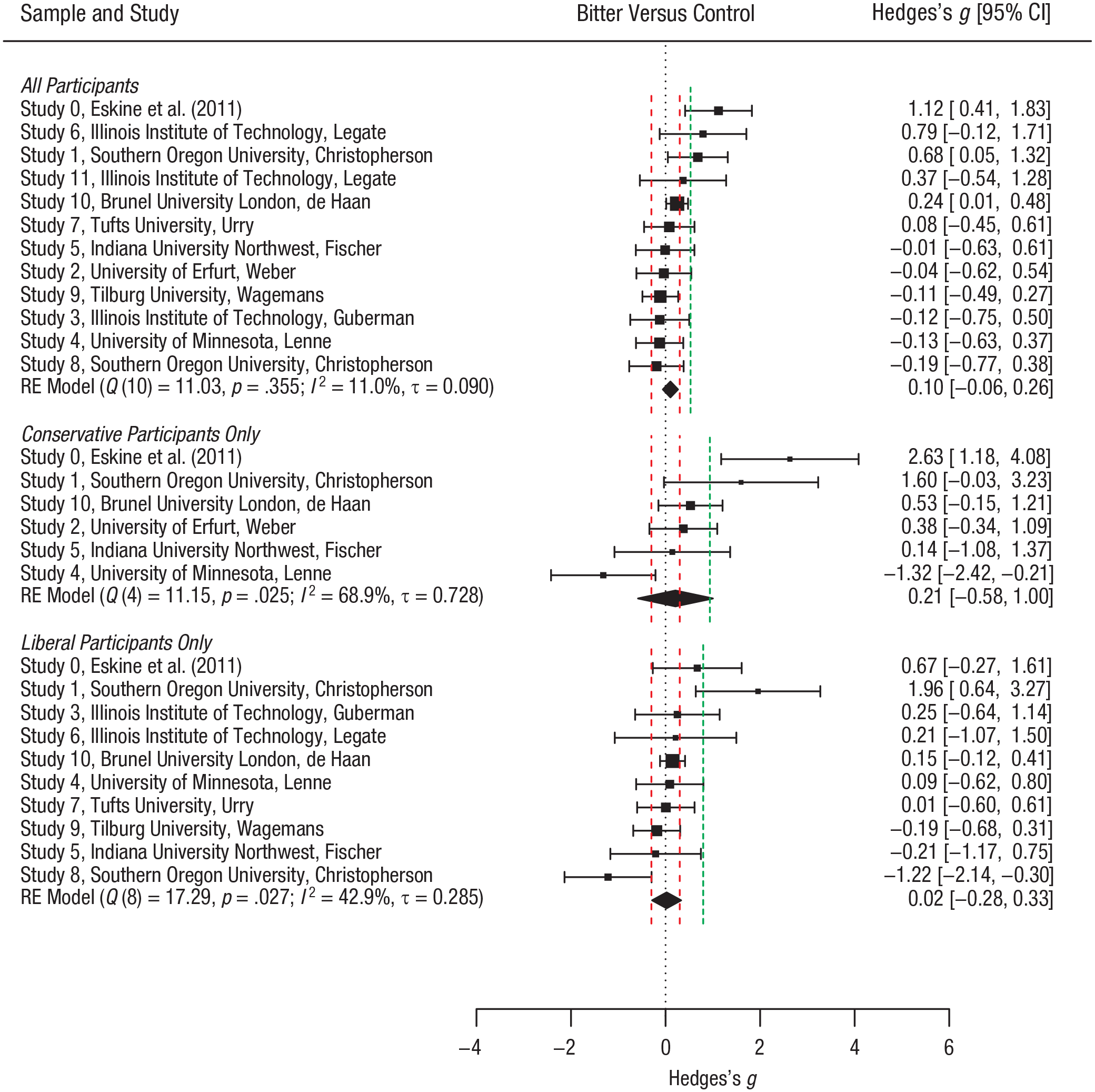

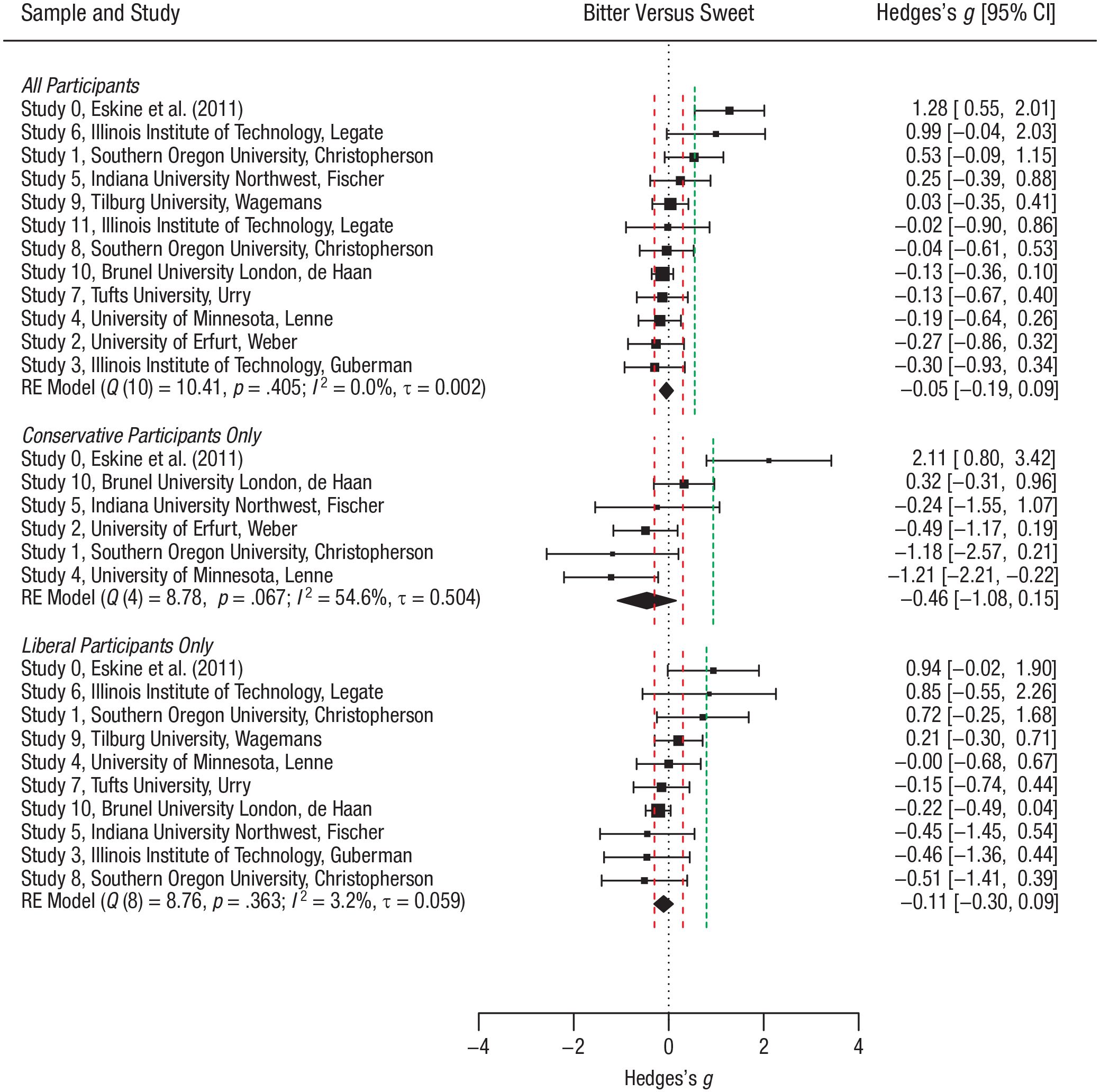

Figures 1 and 2 display the standardized effect sizes and 95% confidence intervals for the two contrasts, within and across studies across all subjects and for the conservative and liberal subgroups. Figures A2 and A3 in the appendix display the parallel information for the raw mean differences instead of the standardized mean differences; the pattern is quite similar. In what follows, we summarize results for the two contrasts of interest.

Fig. 1. Forest plot of the effect sizes for the bitter-versus-control contrast in Eskine, Kacinik, and Prinz’s (2011) original study and the replications in the current project. Results are shown for contrasts across all subjects, within the conservative subgroup, and within the liberal subgroup. Within each group, replication studies are presented in descending order of the Hedges’s

Fig. 2. Forest plot of the effect sizes for the bitter-versus-sweet contrast in Eskine, Kacinik, and Prinz’s (2011) original study and the replications in the current project. Results are shown for contrasts across all subjects, within the conservative subgroup, and within the liberal subgroup. Within each group, replication studies are presented in descending order of the Hedges’s

All subjects

The mean moral-wrongness judgment across the 11 studies, weighted by the number of subjects in each group for each study, was 70.65 (

The overall effect for the bitter-versus-control contrast was negligible and in the predicted direction. This effect was significantly smaller than

The overall effect for the bitter-versus-sweet contrast was negligible but in the opposite of the predicted direction. This effect was significantly smaller than

Conservative subjects

Among conservative subjects in 5 studies, the mean moral-wrongness judgment across studies, weighted by the number of subjects in each group for each study, was 70.98 (

The overall effect for the bitter-versus-control contrast was small and in the predicted direction. This effect was significantly smaller than

The overall effect for the bitter-versus-sweet contrast was medium and in the opposite of the predicted direction. This effect was significantly smaller than

Liberal subjects

Among liberal subjects in 9 studies, the mean moral-wrongness judgment across studies, weighted by the number of subjects in each group for each study, was 69.38 (

The overall effect for the bitter-versus-control contrast was near zero. This effect was significantly smaller than

The overall effect for the bitter-versus-sweet contrast was negligible and in the opposite of the predicted direction. This effect was significantly smaller than

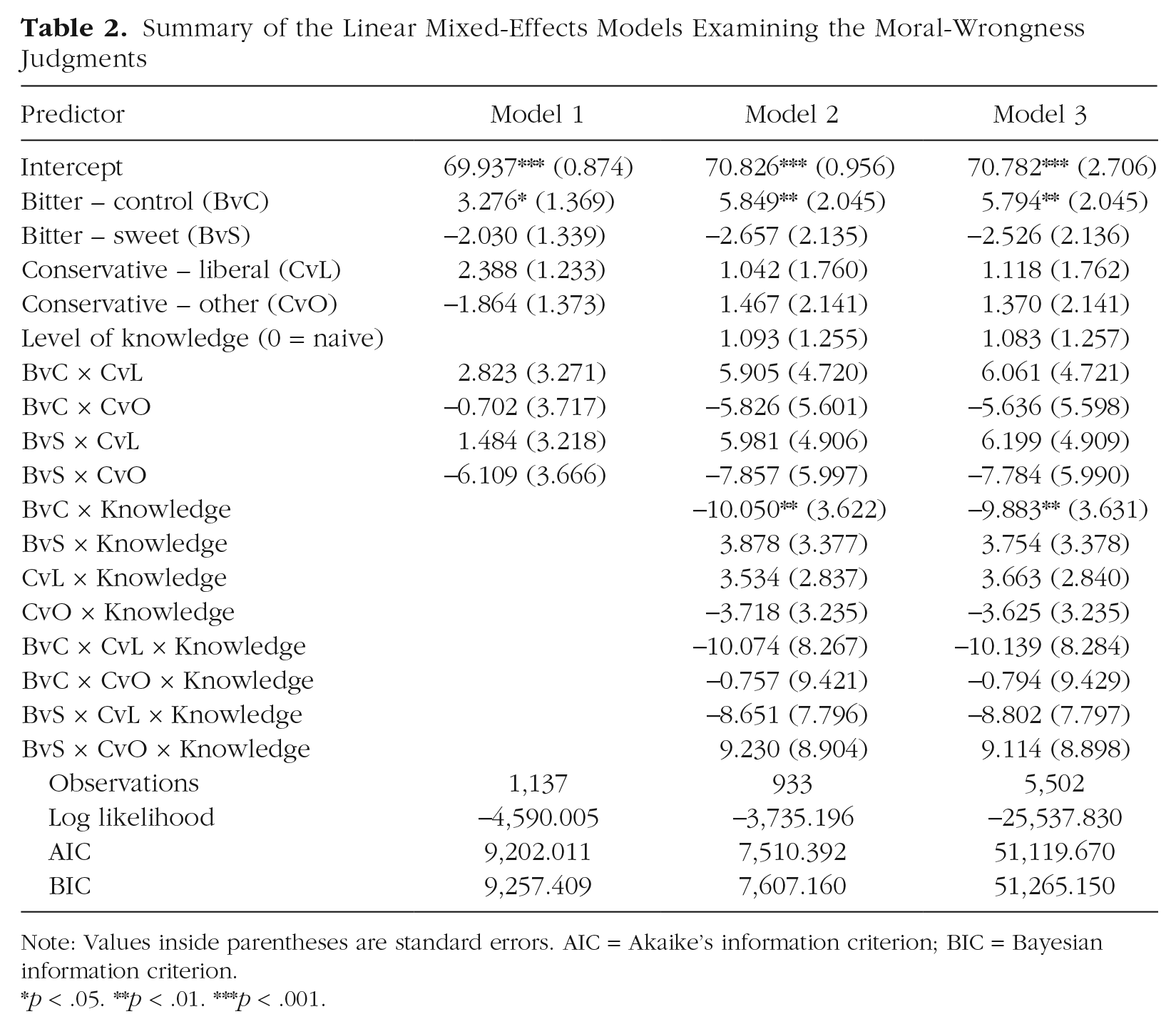

Linear mixed-effects regression models

The random-effects meta-analyses and one-sided tests enabled us to examine the standardized effect of the beverage manipulation on moral-wrongness judgments within and across political-orientation groups. These analyses also addressed the extent to which beverage effects varied across the replication studies. They did not, however, reveal whether political orientation or knowledge of the hypothesis formally moderated the effects of beverage condition on moral-wrongness judgments. They also did not account for potential random variation in moral-wrongness judgments across subjects and vignettes. Thus, we fit LMER models to the individual subjects’ data to address these gaps. Apportioning all relevant sources of variation simultaneously in LMER may increase sensitivity to detect predicted effects. For the same reasons as expressed for the random-effects meta-analyses, we excluded the original study from these analyses.

LMER model specification

We report three LMER models.⁷

All models included fixed effects reflecting beverage type with two contrasts: For the bitter-versus-control (

In Model 1, we analyzed moral-wrongness ratings as a composite averaged across vignettes, with a random intercept to allow variation in the average rating across studies. Because we used effect coding, the intercept reflects the mean moral-wrongness rating across all subjects. There were 1,137 observations (subjects) for this analysis.

In Model 2, we added level of knowledge of the hypothesis as a fixed effect that could interact with beverage type, political orientation, or both.⁸ We recoded the knowledge variable as 0 (naive) or 1 (partially or fully suspicious)⁹; the intercept therefore reflects the mean moral-wrongness rating among naive subjects. For three studies, we could not retrieve the open-ended responses to the question about what the study was about. Therefore, we could not code level of knowledge of the hypothesis for any of the subjects in those studies. With these study-level exclusions, there were 933 observations (subjects) for this analysis.

In Model 3, we analyzed moral-wrongness ratings for each vignette with the fixed effects from Model 2 and with random intercepts that allowed variation in the mean rating across studies, subjects, and vignettes. The intercept reflects the mean moral-wrongness rating among naive subjects. There were 5,502 observations for 933 subjects, each with moral-wrongness ratings for up to six vignettes. A total of 96 ratings from three studies (1.71%) were missing. We did not impute missing values.
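The observation count for Model 3 follows directly from the figures above, as this short check shows. The variable names are ours; the numbers are taken from the text.

```python
n_subjects = 933   # subjects with a coded level of knowledge
n_vignettes = 6    # vignettes per subject
n_missing = 96     # missing vignette ratings

possible = n_subjects * n_vignettes          # ratings if nothing were missing
observed = possible - n_missing              # ratings available for Model 3
pct_missing = round(100 * n_missing / possible, 2)

print(possible, observed, pct_missing)
```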

LMER model results

Table 2 summarizes the results of the three confirmatory LMER models. The appendix at OSF includes figures depicting the moral-wrongness ratings in all design cells after accounting for fixed and random sources of variation in Model 1 (Fig. A4) and Model 2 (Fig. A5), respectively.

Summary of the Linear Mixed-Effects Models Examining the Moral-Wrongness Judgments

Note: Values inside parentheses are standard errors. AIC = Akaike’s information criterion; BIC = Bayesian information criterion.

Results were consistent with the hypothesis that physical disgust induces greater feelings of moral wrongness, in that subjects who drank a bitter beverage made significantly harsher moral judgments than did those who drank water (see the BvC terms in Table 2). However, contrary to the hypothesis, subjects who drank a bitter beverage made numerically milder moral judgments than did those who drank the sweet juice; this difference was not statistically significant (see BvS terms in Table 2).

The bitter-versus-control effect did not vary significantly between conservatives and liberals (see the BvC

The bitter-versus-control effect did, however, vary significantly by level of knowledge of the hypothesis (see the BvC

The bitter-versus-sweet effect did not vary significantly by level of knowledge of the hypothesis (see the BvS

Intraclass correlation coefficients (ICCs) based on Models 1 and 2 indicated that the proportion of variance in moral-wrongness judgments due to studies was .03 and .01, respectively. The proportion of variance due to studies was lower in Model 3,

The preceding confirmatory LMER analyses as a whole did not reveal support for the hypothesis that drinking a bitter, disgusting beverage promotes a heightened sense of moral wrongness relative to drinking water and relative to drinking sweet juice, either in all subjects or among conservative subjects. Therefore, we conducted some exploratory LMER analyses.

First, in our confirmatory analyses, we included all subjects who provided ratings of at least three of the six vignettes, but it was possible that hypothesized effects would emerge only among subjects who rated all six. Therefore, we conducted an exploratory analysis in which we excluded subjects who rated fewer than six vignettes (see Table A7 in the appendix for results). Estimates in these models were similar in magnitude and direction to those from the models that included subjects who rated three or more vignettes; none of the estimates in these exploratory models revealed the hypothesized elevation of moral-wrongness judgments in the bitter condition relative to the control and sweet conditions, either across all subjects or as a function of political orientation.

Second, it was also possible that the hypothesized effects would emerge in a subset of the vignettes. For example, researchers have argued that moral judgments of purity violations might be particularly susceptible to manipulations of physical disgust (Horberg, Oveis, Keltner, & Cohen, 2009). Therefore, we conducted exploratory analyses using six linear mixed-effects models structured the same as Model 1 but with the moral-wrongness rating for an individual vignette as the criterion variable in each. The results are presented in Table A8 in the appendix. We also conducted analyses using two linear mixed-effects models structured the same as Models 1 and 2 but with moral-wrongness judgment across the two purity-violation vignettes as the criterion variable. Those results are presented in Table A9 in the appendix. None of these models revealed elevations of moral-wrongness judgments in the bitter condition relative to the control and sweet conditions across all subjects or as a function of political orientation. These results fail to support a causal effect of physical disgust on moral-wrongness judgments.

Third, Eskine et al. (2011) reported a positive association between disgust ratings and moral-wrongness judgments across conditions, β = 0.53,

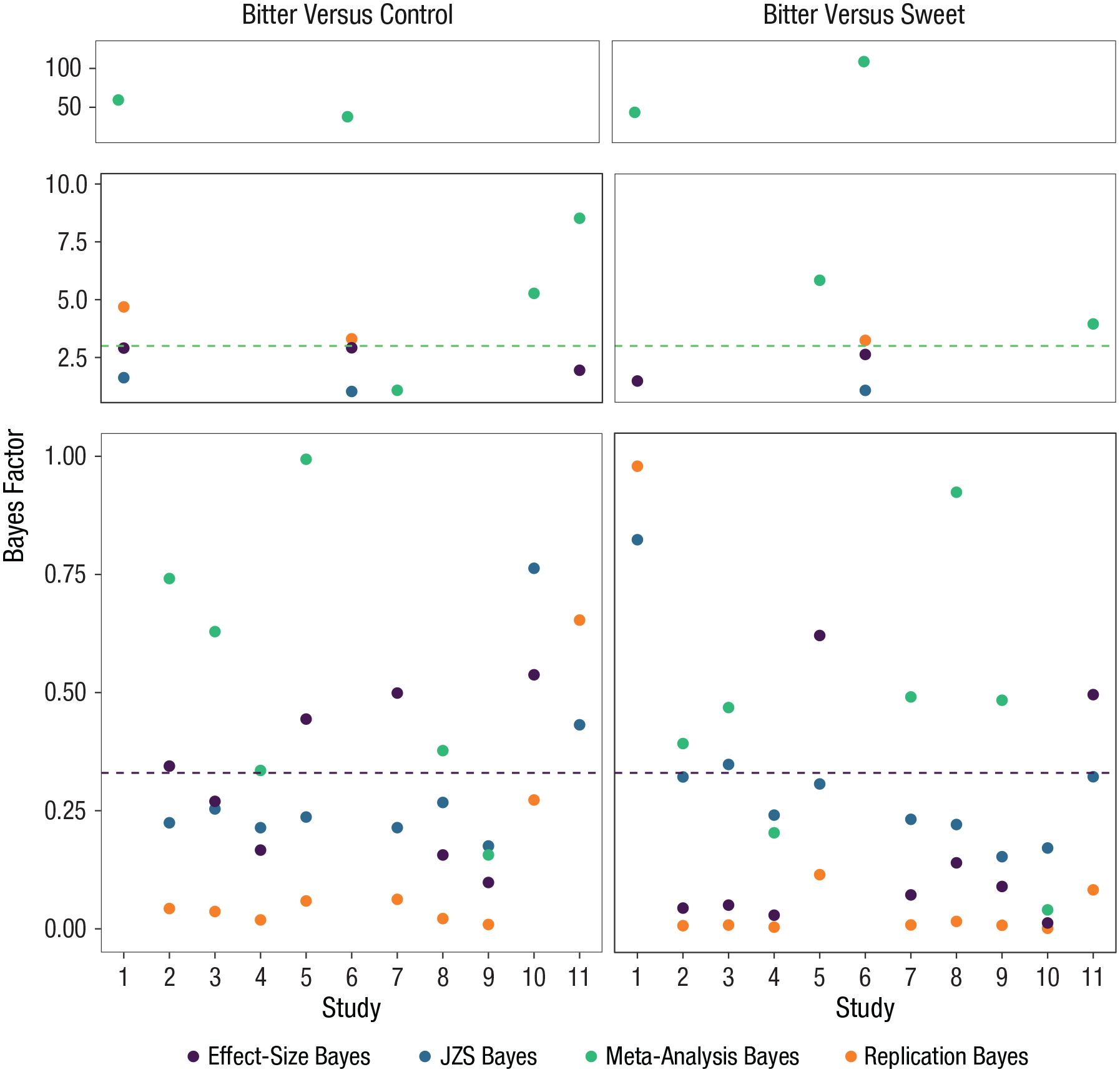

Bayes factor tests

Finally, the random-effects meta-analyses and the LMER analyses all relied on a frequentist statistical perspective, involving standardized effect sizes, confidence intervals, and

Bayes factor model specification

Following the logic and code given by Verhagen and Wagenmakers (2014), we report four complementary BF tests for each replication study. In each case, the BF represents a comparison between two models; it captures the extent of evidence for one model relative to the other. The first test used the Jeffreys-Zellner-Siow (JZS) BF, which is independent of the original finding. This test determined the relative evidence for the effect being present versus absent in the replication by setting a standard two-sided Cauchy(0, 1) distribution as the prior, ignoring the original study. The second test was the replication BF test, in which the prior was based on the posterior distribution from the original study. It determined the relative evidence for the original effect versus a null effect. The third test was the equality-of-effect-size BF test, which determined whether the effect size in the replication study was equal to the effect size in the original study by determining relative evidence for the variance τ² of the effect sizes being zero versus nonzero. The fourth test was the fixed-effect meta-analysis BF test, which pooled the original and replication studies’ data and used the two-sided Cauchy(0, 1) distribution as the prior (as in the JZS BF test).
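As an illustration of the first of these tests, the sketch below approximates a two-sided JZS BF for an independent-samples t value under a Cauchy(0, 1) prior on the standardized effect size. It is a minimal stand-alone approximation written for this illustration, not the code of Verhagen and Wagenmakers (2014); the function name, the integration limits, and the number of integration steps are assumptions.

```python
import math


def jzs_bf10(t, n1, n2, lower=-10.0, upper=30.0, steps=8000):
    """Two-sided JZS Bayes factor (H1 over H0) for an independent-samples t value.

    The Cauchy(0, 1) prior on effect size is written as a normal prior whose
    variance g follows an inverse-gamma(1/2, 1/2) density. The integral over g
    is approximated with the trapezoidal rule on u = ln(g).
    """
    nu = n1 + n2 - 2             # degrees of freedom
    n_eff = n1 * n2 / (n1 + n2)  # effective sample size for two groups

    def integrand(u):
        g = math.exp(u)
        prior = (2 * math.pi) ** -0.5 * g ** -1.5 * math.exp(-1 / (2 * g))
        scale = 1 + n_eff * g
        like = scale ** -0.5 * (1 + t * t / (scale * nu)) ** (-(nu + 1) / 2)
        return like * prior * g  # the extra g is the Jacobian of g = exp(u)

    h = (upper - lower) / steps
    total = 0.5 * (integrand(lower) + integrand(upper))
    total += sum(integrand(lower + i * h) for i in range(1, steps))
    marginal_h1 = total * h
    marginal_h0 = (1 + t * t / nu) ** (-(nu + 1) / 2)
    return marginal_h1 / marginal_h0


# Made-up inputs: a null-looking result and a strong result, 50 subjects per group.
print(jzs_bf10(0.0, 50, 50))
print(jzs_bf10(5.0, 50, 50))
```

Values above 1 indicate relative evidence for the presence of an effect, and values below 1 indicate relative evidence for its absence. The other three tests require the original study's posterior or pooled data and are not covered by this sketch.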

Bayes factor results

Figure 3 summarizes the BF results across all subjects in all studies. (See Table A10 and accompanying text in the appendix for BF test results within the conservative and liberal subgroups.)

Results of the four Bayes factor (BF) tests across all subjects in each replication study. Results for the bitter-versus-control contrast are shown on the left, and results for the bitter-versus-sweet contrast are shown on the right. The dashed horizontal lines mark

The equality-of-effect-size, JZS, and replication BF tests yielded relatively consistent evidence against the replication hypothesis (bitter > control and bitter > sweet) across most of the studies. By contrast, about one third of the studies provided evidence for the replication hypothesis according to the fixed-effect meta-analysis BF test. That said, because the latter test pools the original and replication effects, the very large original effect size likely had a strong influence on the outcome. Note that of the four replication studies for which this test provided more than anecdotal support for the replication hypothesis in the case of the bitter-versus-control contrast, only one had a large enough sample size to inspire confidence in the precision of the estimation (Study 10:

Discussion

Several studies have shown that experimental manipulations of disgust can harshen moral judgments (e.g., Harlé & Sanfey, 2010; Moretti & di Pellegrino, 2010; Schnall, Benton, & Harvey, 2008; Schnall, Haidt, et al., 2008; Van Dillen, van der Wal, & van den Bos, 2012; Wheatley & Haidt, 2005). Although more recent research has cast some doubt on the existence of this relationship, some researchers have proposed that the effect might be more robust for a specific type of manipulation: induction of gustatory and olfactory disgust (see Landy & Goodwin, 2015a, 2015b). As this notion was largely based on one low-powered study with a particularly large effect size (i.e., Eskine et al., 2011), we were interested in obtaining an accurate estimate of the effect size by conducting a high-powered meta-analysis of preregistered direct-replication studies. Overall, in 11 studies, we found little to no support for the conclusion that gustatory disgust harshens moral judgments.

We adopted a multifaceted analysis strategy in an effort to fairly examine the research question (i.e., does gustatory disgust induced via a bitter drink amplify moral-wrongness judgments?) from slightly different angles. In part, we employed a frequentist approach, running random-effects meta-analyses, one-sided tests, and LMER models to make inferences across all the replication studies. We observed a very small overall random-effects meta-analytic effect in which the bitter drink induced harsher moral judgments than water across all subjects. The parallel effect was small and statistically significant in the LMER models. However, in the random-effects meta-analyses comparing the bitter drink with water, the confidence intervals for the effect sizes encompassed zero in all but two replications, and the confidence interval for the overall effect included zero (see Fig. 1). In addition, standardized effects from these meta-analyses comparing the bitter drink with water were significantly smaller than the smallest effect the original authors could have found, and equivalence tests indicated that the effects were unlikely to be of greater magnitude than ±0.30. Moreover, none of the frequentist tests indicated that the bitter drink resulted in harsher moral judgments than the sweet one did; if anything, the bitter drink led to more lenient moral judgments than the sweet drink. This latter finding is important in that it undermines the notion that disgust is responsible for any effects of the bitter drink on moral judgments; instead, any such effects might be explained by the act of drinking something with flavor. Finally, we found no evidence that political conservatives were especially harsh in their judgments after consuming a bitter drink. It should be noted, though, that this latter finding is based on a relatively small sample of conservative subjects (a limitation discussed in more detail later in this section).

We also used a Bayesian approach, which quantified the relative strength of the evidence in favor of versus against the replication hypothesis. We computed four different BF tests, of which three (i.e., JZS, replication, and equality-of-effect-size BF tests) showed evidence against the replication hypothesis in most of the studies. The fixed-effect meta-analytic BF test showed more support for the idea that disgust amplifies the harshness of moral judgments. However, this is not surprising, as this test pooled each of the replication studies with the original study, which had a particularly large effect. Even in this case, though, only 4 of the 11 replication studies (3 of which had small sample sizes) favored support for the replication hypothesis.

Given the results from these various tests, we conclude that there is little to no support for the notion that gustatory disgust can increase ratings of moral wrongness. Our study adds to the growing number of studies that have failed to find support for a relationship between incidental manipulations of disgust and moral judgments (e.g., Johnson, Cheung, & Donnellan, 2014; Johnson et al., 2016; Landy & Goodwin, 2015a). Our results not only cast doubt on Eskine and colleagues’ (2011) conclusion that a disgusting taste affects moral judgments, but also fail to support Schnall and colleagues’ (2015) proposal that gustatory inductions of disgust have a special potency to influence moral condemnation.

It is possible that the effect of incidental disgust on moral judgments depends heavily on moderator variables, such as individual difference measures. For example, it has been suggested that the relationship is stronger for individuals who are generally more sensitive to bodily sensations (i.e., who score high on measures of private body consciousness; Schnall et al., 2008; also see Schnall et al., 2015). We did not measure this variable, or any other individual difference measure, consistently across our studies, so we cannot directly test this hypothesis. However, an earlier replication study by Johnson and colleagues (2016) tested the importance of private body consciousness to the link between disgust and moral judgment, but did not find evidence indicating that it has a moderating effect (also see Johnson et al., 2014).

One moderator variable that we identified is knowledge of the hypothesis. A total of 6% of our subjects demonstrated full knowledge of the hypothesis that drinking a bitter beverage would harshen moral judgments; an additional 36% partially guessed that hypothesis. We could not separate out effects of partial versus full suspicion because of the absence of observations in one cell of the factorial design. However, when we combined the fully and partially suspicious groups in our LMER models, we found that the bitter-versus-control effect was larger in the predicted direction among naive subjects than among fully or partially suspicious subjects. This result is in line with the idea that induced disgust has a stronger effect on individuals who are unaware of a link between the taste of the drink and the moral judgments than on individuals who are aware of such a link (Schnall et al., 2015). Contrary to this idea, however, knowledge of the hypothesis did not moderate the bitter-versus-sweet effect.

It is unclear how closely the coding scheme we used to assess knowledge of the hypothesis maps onto the scheme used by the original authors. They reported that 3 of 57 subjects (5%), who were excluded from analyses, correctly guessed the hypothesis. Given that 6% of our subjects demonstrated full knowledge of the hypothesis, the 5% of subjects with knowledge of the hypothesis in the original study may have had full knowledge, not just partial. Regardless, we can conclude that knowledge of the hypothesis moderated the effect of beverage type on moral judgments to some extent. Future studies would benefit from refinement of deception procedures to minimize such knowledge. On this point, it is noteworthy that in the replication studies, more subjects in the bitter and sweet conditions than in the control condition were partially or fully suspicious; deception procedures would ideally yield similar rates of suspicion in all conditions so that level of knowledge of the hypothesis is orthogonal to the beverage manipulation.

Some limitations of the current investigation may have affected our findings. One potential limitation is the low reliability of the moral-wrongness composite in most of our replication samples. This may have influenced our ability to detect effects, especially if our average reliability was substantially lower than what was observed in the original study. Unfortunately, information about reliability was not reported by Eskine et al. (2011), or by Wheatley and Haidt (2005), the developers of these vignettes. However, linear mixed-effects analyses of the individual vignettes revealed that the bitter drink, compared with the sweet drink or water, did not induce harsher moral judgments in response to any of the vignettes. It therefore seems unlikely that the observed low reliability of the moral-wrongness composite explains our mostly null findings.

Another limitation is that we had few samples with enough conservative subjects for us to test the moderating effect of political ideology. Only 5 of the 11 replications had at least 2 conservative subjects in each of the three beverage conditions. Among those studies, only 3 had more than 6 conservative subjects in each of the three beverage conditions (i.e., the estimated number of conservatives per condition in the original study). This likely decreased our ability to detect potential moderation by political ideology. It should be noted, though, that we had substantially more conservative subjects across the replication samples (

Conclusion

This work joins the growing number of crowdsourced replication projects combining multiple laboratories’ efforts to replicate a single study (e.g., Hagger et al., 2016; Moshontz et al., 2018; Schweinsberg et al., 2016). Multilab replications have an advantage over single-lab replications in that they can more accurately estimate effect sizes given the possibility of much larger sample sizes. We carried out this research as part of the CREP. Thus, this project also joins the growing movement of conducting replications in the classroom (see also Leighton, Legate, LePine, Anderson, & Grahe, 2018; Wagge et al., 2019). Involving students in replication projects is more than a valuable pedagogical tool; it has been shown to be a promising means of carrying out high-quality replications (Frank & Saxe, 2012; Hawkins et al., 2018). Here we have demonstrated that pedagogical replications can provide valuable evidence about the robustness of an effect that has not yet been submitted to independent replication. We believe that pedagogical replications could be implemented widely to advance psychological science.

Supplemental Material


Supplemental material, AMPPSOpenPracticesDisclosure-v1-0_Ghelfi for Reexamining the Effect of Gustatory Disgust on Moral Judgment: A Multilab Direct Replication of Eskine, Kacinik, and Prinz (2011) by Eric Ghelfi, Cody D. Christopherson, Heather L. Urry, Richie L. Lenne, Nicole Legate, Mary Ann Fischer, Fieke M. A. Wagemans, Brady Wiggins, Tamara Barrett, Michelle Bornstein, Bianca de Haan, Joshua Guberman, Nada Issa, Joan Kim, Elim Na, Justin O’Brien, Aidan Paulk, Tayler Peck, Marissa Sashihara, Karen Sheelar, Justin Song, Hannah Steinberg and Dasan Sullivan in Advances in Methods and Practices in Psychological Science

Footnotes

Acknowledgements

Transparency

We have collectively identified major and minor contributors to this work. The first 8 authors are major contributors, and the other 15 (listed in alphabetical order) are minor contributors. According to the Contributor Roles Taxonomy (CRediT), the specific contributions were as follows: All the authors contributed to the conceptualization of this project, provided necessary resources, and developed the methodology. E. Ghelfi and C. D. Christopherson were responsible for project administration, and all the major contributors supervised the project. E. Ghelfi, F. M. A. Wagemans, and M. A. Fischer were responsible for data curation, and H. L. Urry and R. L. Lenne performed the formal analysis. H. L. Urry acquired the funds for the study at Tufts University. All the minor contributors conducted the investigation. H. L. Urry and R. L. Lenne were responsible for validation of the research outputs and prepared the visualization of results. All the major contributors wrote the original draft of the manuscript, and all the authors reviewed and edited it. At the University of Erfurt, Helene Weber, Liv Hochhäuser Conde, Mirjam Düben, Frank Renkewitz, Cornelia Betsch, and Lisa Felgendreff organized and conducted the study. Helene Weber, Liv Hochhäuser Conde, and Mirjam Düben helped provide their data to the meta-analysis team and helped frame German answers to the political-orientation question. No contributors from the University of Erfurt were involved in writing or reviewing the manuscript, and none elected to be authors.


