Abstract

A robotic cloud laboratory driven by a state-of-the-art unified laboratory operating system integrates automated hardware, humans, and sensors. This lab-of-the-future system enables researchers to transparently and collaboratively create, optimize, and organize biological experiments in order to achieve more reproducible results, run experiments around the clock, and navigate the vast parameter space of biology more efficiently.

Reproducing results of published experiments remains a great challenge for the life sciences. Fewer than half of all preclinical findings can be replicated, according to a 2015 meta-analysis of studies from 2011 to 2014. 1 In 2016, biotechnology company Amgen said its scientists had failed to reproduce the findings of research recently published in three high-profile journals. 2 Many variables contribute to this poor rate of reproducibility, including mishandled reagents and reference materials, poorly defined laboratory protocols, and the inability to re-create and compare experiments.

The consequences are significant. In drug discovery, failure to reproduce experimental outcomes stands in the way of translating discoveries into life-saving therapeutics and revenue-generating products. In economic terms, an estimated $28 billion is spent annually in the United States alone on preclinical research that is not reproducible. 1 Even a small improvement in the rate of reproducibility could substantially speed drug development and reduce its costs.

Most recommendations to address the reproducibility crisis have focused on developing and adopting reporting guidelines, standards, and best practices for biological research. 3–6 Those approaches aim to reduce human error by requiring more skilled human involvement in a field already short on human resources. More standards and regulations might improve reproducibility, but they would come at the cost of slower and more expensive research. An alternative approach would be to establish a system in which protocols are encoded and shared as open-source software that scientific peers could modify collaboratively and run on automated laboratory platforms. Such a system would minimize sources of irreproducibility, allow protocols to be compared, and create an auditable chain linking each protocol to the data it collects.

The Lab of the Future Is Automated, Encoded, and IoT Enabled

Cloud computing combined with the Internet of Things (IoT) offers an opportunity to leapfrog the standards-setting debate and create more precise and reproducible research without adding human capital or increasing process time. An automated, programmatic laboratory eliminates much of the risk of error by relying on hands-free experiments that follow coded research protocols. Because every step, measurement, and detail is tracked by an array of sensors and pushed to the cloud, it is automatically collected and stored for easy access from anywhere through a web interface. With a connected digital backbone underpinning it, biological experimentation comes to look more like information technology, driven by data, computation, and high-throughput robotics, ultimately leading to advances in drug discovery and synthetic biology. As experiments become more complex, datasets larger, and phenotypes more nuanced, transformative technologies such as a programmatic robotic cloud lab will be necessary to ensure that these high-value experiments are reproducible, that their results can be trusted, and that the protocols producing them can be compared. This advance builds on a foundation of IoT-enabled innovations that have already contributed to “a smarter, more efficient, and safer world.” 7

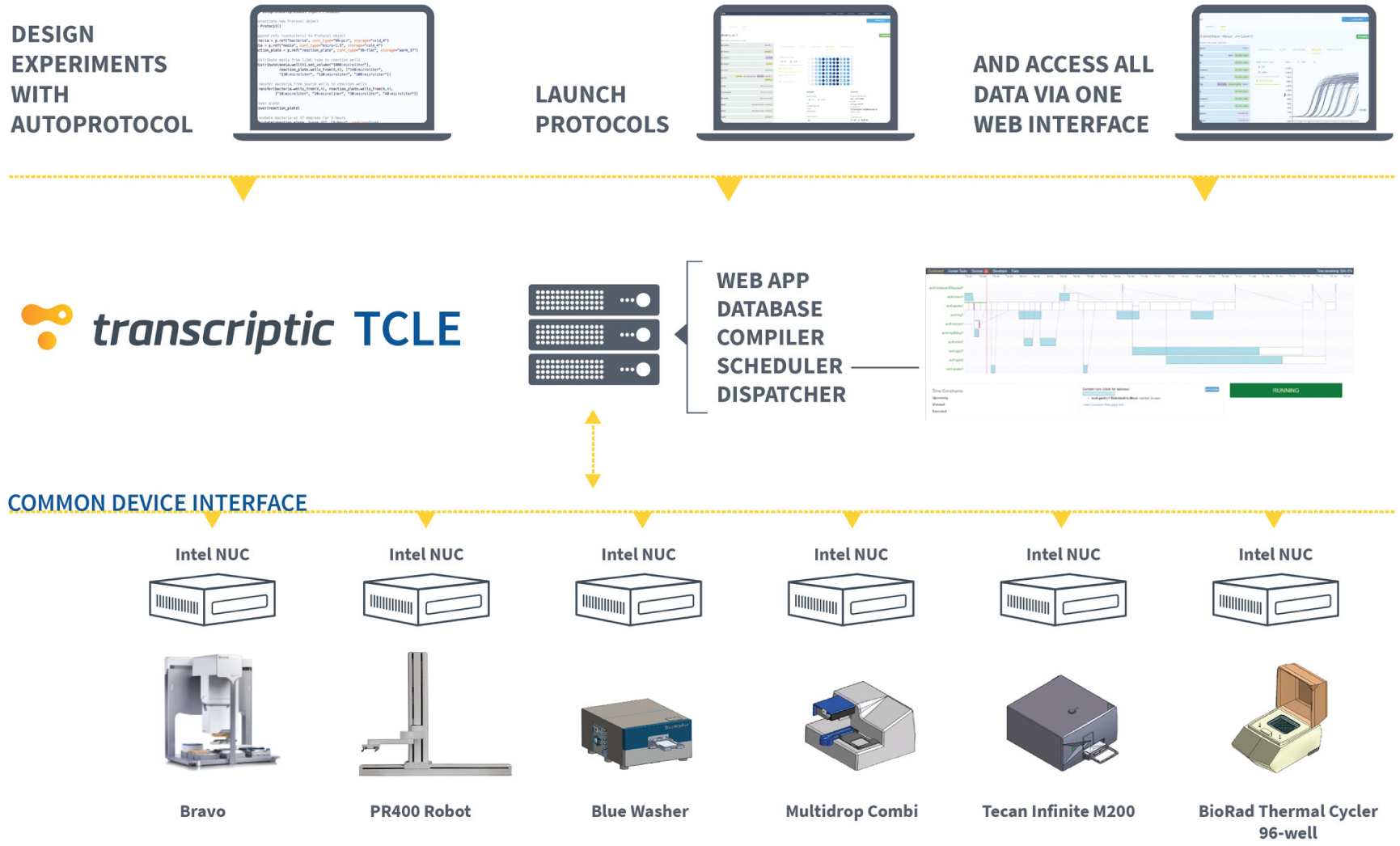

At Transcriptic, we have developed the Transcriptic Common Lab Environment (TCLE; Fig. 1) to address the current limitations of large-scale experimentation and to unlock a future in which software and technology innovation drive high-throughput scientific research. This laboratory of the future is available today to users anywhere on the planet via the Internet. The unified software stack underlying TCLE is also available for on-premises deployment in pharma and biotech companies that wish to bring lab-of-the-future technologies in-house. TCLE uses the entire integrated stack of automated hardware and sensors driven by a state-of-the-art lab operating system that can incorporate existing equipment and rapidly onboard new equipment.

Figure 1. The flow and transfer of data from assay execution through TCLE to the common device interface.

As part of developing the TCLE, we have curated and released an open standard for experimental design and specification called Autoprotocol, which programmatically defines an experimental plan. It is a precise, unambiguous data standard (understandable by humans and computers) that codifies experimental protocols into structured data objects with a consistent schema, versioned and shareable as units of software. These protocol scripts can be easily made accessible to collaborators or shared with others who wish to run the same workflow, and they provide a direct and traceable link between protocol execution and data capture. 8
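To make the idea of a protocol as structured, versionable data more concrete, here is a minimal Python sketch that assembles an Autoprotocol-style document with only the standard library. It assumes the top-level `refs`/`instructions` layout of Autoprotocol JSON; the container type, storage condition, and instruction fields are illustrative placeholders and should be checked against the published Autoprotocol specification. The helper `protocol_version` is hypothetical: it derives a version identifier by hashing a canonical serialization, so any edit to the protocol yields a new identifier that data records could cite.

```python
import hashlib
import json


def build_protocol() -> dict:
    """Assemble an illustrative Autoprotocol-style document.

    Field values are placeholders chosen for illustration; consult the
    Autoprotocol specification for the authoritative schema.
    """
    return {
        # Named containers the run will use, and where to store them afterwards.
        "refs": {
            "assay_plate": {"new": "96-flat", "store": {"where": "cold_4"}},
        },
        # Ordered, machine-readable steps; each names its operation in "op".
        "instructions": [
            {
                "op": "incubate",
                "object": "assay_plate",
                "where": "warm_37",
                "duration": "30:minute",
                "shaking": False,
            },
        ],
    }


def protocol_version(protocol: dict) -> str:
    """Hypothetical helper: hash a canonical JSON form to get a stable version ID."""
    canonical = json.dumps(protocol, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


if __name__ == "__main__":
    protocol = build_protocol()
    print(json.dumps(protocol, indent=2))
    print("version:", protocol_version(protocol))
```

Because keys are sorted before hashing, two copies of the same protocol get the same identifier regardless of key order, while changing even the incubation time produces a different one.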

After transferring assays to the robotic cloud lab infrastructure, researchers may execute and monitor experiments in real time via a web interface that gives them high-fidelity control over lab instruments. Following every manipulation of a sample across workflows and observing sensor-tracked data on a cloud-based dashboard, a team of scientists can collaborate to facilitate rapid assay development, optimization, and execution with scalable production. Whether working together locally or from their own laptops anywhere around the globe, collaborators in a cloud-based ecosystem can traceably fine-tune, debug, and optimize iterative biological experiments to achieve reproducible results.

A robotic cloud lab relies on scripted, coded protocols, not ambiguously worded notebook entries. Automated protocols are compiled into commands for the IoT environment, where a robotic scheduler controls when and on which device each command is executed. The data those devices generate are recorded in a web application for viewing by authorized users. Programmed protocols allow complete transparency, streamlined replication, and online collaboration. Every detail, from pipette pressure at every millisecond to the temperature of each thermocycler cycle, is recorded, and each change to a protocol is versioned so that it can be easily identified.
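The scheduling step can be illustrated with a deliberately simplified Python sketch. This is not Transcriptic's scheduler: the `Command` and `Device` types, device names, and durations are hypothetical, and the sketch assumes that each device handles one command at a time and that a run's commands execute strictly in order. It applies a greedy list-scheduling rule: at each step, place whichever run's next command can start earliest on a capable device.

```python
from dataclasses import dataclass


@dataclass
class Command:
    op: str       # operation name, e.g. "dispense" (hypothetical)
    minutes: int  # estimated duration of the command


@dataclass
class Device:
    name: str
    ops: frozenset    # operations this device can perform
    free_at: int = 0  # minute at which the device is next idle


def schedule(runs, devices):
    """Greedy list scheduler (illustrative only).

    runs: dict mapping run name -> ordered list of Commands; each run's
          commands execute strictly in order.
    devices: list of Devices; each runs one command at a time.
    Mutates Device.free_at and returns (run, op, device, start, end) tuples
    in the order the commands were placed.
    """
    next_index = {run: 0 for run in runs}  # next unscheduled command per run
    run_ready = {run: 0 for run in runs}   # minute the run's last command ends
    plan = []
    while any(next_index[run] < len(cmds) for run, cmds in runs.items()):
        best = None
        for run, cmds in runs.items():
            if next_index[run] == len(cmds):
                continue  # this run is fully scheduled
            cmd = cmds[next_index[run]]
            capable = [d for d in devices if cmd.op in d.ops]
            if not capable:
                raise ValueError(f"no device can perform {cmd.op!r}")
            # The capable device that frees up first gives the earliest start.
            device = min(capable, key=lambda d: d.free_at)
            start = max(run_ready[run], device.free_at)
            if best is None or start < best[0]:
                best = (start, run, cmd, device)
        start, run, cmd, device = best
        end = start + cmd.minutes
        device.free_at = end
        run_ready[run] = end
        next_index[run] += 1
        plan.append((run, cmd.op, device.name, start, end))
    return plan
```

Ties go to the run listed first. A production scheduler would also have to model incubators that hold many plates at once, plate transport between devices, and device failures, all of which this sketch omits.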

Pharmaceutical and biotech companies are implementing cloud-connected software systems within their own laboratories to bring the benefits of a robotic cloud lab to both existing and new laboratory infrastructure. For example, the synthetic biology company Ginkgo Bioworks recently began incorporating an automation and data cloud solution into its microorganism foundry. The initiative aims to automate Ginkgo’s experimental design process in order to double foundry output, deliver products faster and more efficiently, and scale to meet the industry’s expanding demands. 9

To be sure, robots and automation are already employed in many steps of biological experimentation, and equipping a robotic laboratory with the IoT will not make it error-proof. Machines can be miscalibrated, robots can break, and power can fail. Even pipette tips can cause problems. 10 The difference in a unified robotic cloud lab is that every device is connected to a central software infrastructure and programmed unambiguously with shareable scripts that are not tied to any one device. Instruments are controlled directly through the unified platform, so assay development can be executed in a single controlled environment, all instrument metadata are recorded, and instrument control is reproducible from one run to the next. Human error, which manifests as reagent mix-ups, inaccurate volumes, and missed experimental steps, is largely removed from execution, making workflows more precise and reproducible. Experiments that require constant real-time feedback are currently challenging to automate; we expect these eventually to be addressed with more data and with machine learning algorithms tuned to make decisions more accurately and more quickly than human intervention would allow.

In this paradigm, a user interacts with a group of devices by defining an experiment as a set of parameters and instructions. Once submitted to the cloud platform, those instructions dynamically provision enough robotic capacity to carry out the experiment, store the samples, and return results to the user for analysis without the need for external monitoring or intervention. With the consistency and accuracy of a robotic cloud lab, scientists can generate more reliable and reproducible data.

Additional Advantages of a Cloud-Available Robotic Environment

Organizations that pursue an integrated, automated platform for biological research are likely to reap additional benefits. The advantages of moving research from error-prone, human-controlled environments to a self-contained, cloud-accessed robotic environment include the following:
· Remote, real-time access from anywhere
· Data and protocol transparency and traceability
· Data and protocol sharing
· Precise and unambiguous instructions
· Increased productivity
· Reduced costs
· Expanded staffing options

Each of these enhancements ultimately increases the likelihood of producing reliable, verifiable, and cost-effective research results. This is why we believe an IoT-enabled robotic cloud lab is the research environment of the future.

Accessibility, Transparency, Data Sharing, and Precision

More life sciences groups are following the lead of IT experts by moving to the cloud, especially as long-distance collaborations become more common within or between companies. Eli Lilly, for instance, recently announced plans to develop an automated studio for organic synthesis in San Diego that will be accessible via the cloud to its researchers worldwide. 11

Programmatic cloud-connected labs will enable workflows in which decision-making about subsequent steps can be automated based on the results of previous steps. As a simple example, the yield from a sample prep in step A would programmatically and computationally determine whether samples would proceed directly to step B or if normalization would be required, all without the need for human intervention. Ultimately, we envision closed-loop biological research conducted in a robotic cloud lab infrastructure that is enhanced by machine learning tools to automatically optimize experimental design, speed up research and discovery, and allow experiments to progress rapidly. The addition of machine learning to a robotic cloud lab would augment automated protocols and allow for instantaneous response to experimental data through the computational design of novel experiments capable of furthering research independent of outside guidance. An IoT-enabled robotic cloud lab collects data from instruments, samples, and storage environments in real time for analysis. Users and their machine learning algorithms have instant access to all experimental data and output while a run is being executed and can act immediately on that information to troubleshoot, optimize experiments, analyze results, and design additional experiments. Carefully formalized protocols allow for better identification of badly controlled experiments.
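The step A to step B decision described above can be written as a small, testable rule. The following Python sketch is hypothetical: the article does not specify the decision logic, and the concentration units, target, tolerance, and final volume are illustrative assumptions. Samples within tolerance proceed, concentrated samples are normalized by dilution using C1V1 = C2V2, and dilute samples are flagged, since dilution cannot raise a concentration.

```python
def plan_next_step(sample_id, measured_ng_per_ul, target_ng_per_ul=10.0,
                   final_ul=50.0, tolerance=0.10):
    """Decide what happens after step A for one sample (hypothetical rule).

    - within +/- tolerance of the target: proceed directly to step B
    - above the target: normalize by dilution, using C1 * V1 = C2 * V2
    - below the target: dilution cannot help, so flag for review
    """
    if measured_ng_per_ul <= 0:
        raise ValueError("measured concentration must be positive")
    low = target_ng_per_ul * (1 - tolerance)
    high = target_ng_per_ul * (1 + tolerance)
    if low <= measured_ng_per_ul <= high:
        return {"sample": sample_id, "action": "step_B"}
    if measured_ng_per_ul > high:
        # V1 = C2 * V2 / C1: volume of sample needed to hit the target.
        sample_ul = target_ng_per_ul * final_ul / measured_ng_per_ul
        return {"sample": sample_id, "action": "normalize",
                "sample_ul": round(sample_ul, 2),
                "diluent_ul": round(final_ul - sample_ul, 2)}
    return {"sample": sample_id, "action": "flag_low_yield"}


if __name__ == "__main__":
    for sid, conc in [("s1", 10.5), ("s2", 25.0), ("s3", 4.0)]:
        print(plan_next_step(sid, conc))
```

With these defaults, a sample measured at 25 ng/µL would be normalized with 20 µL of sample and 30 µL of diluent, while one at 10.5 ng/µL would go straight to step B.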

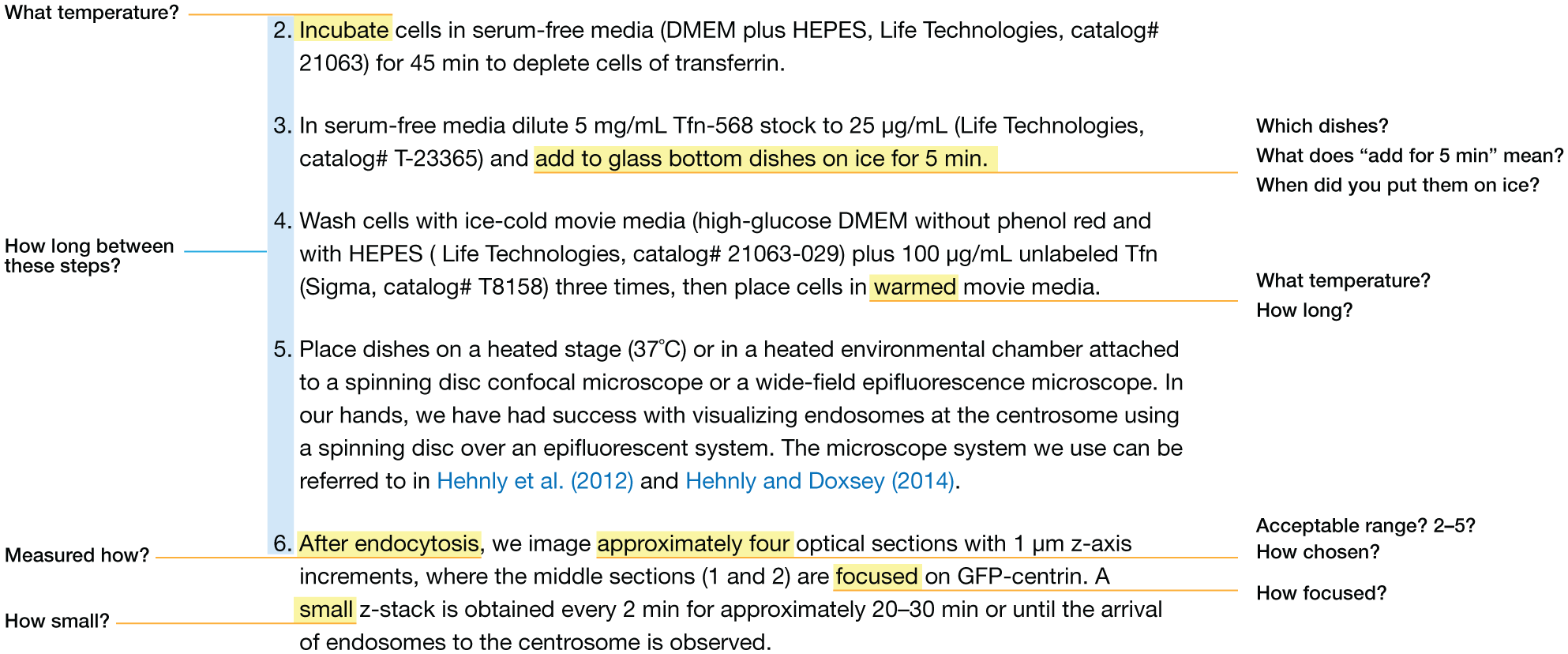

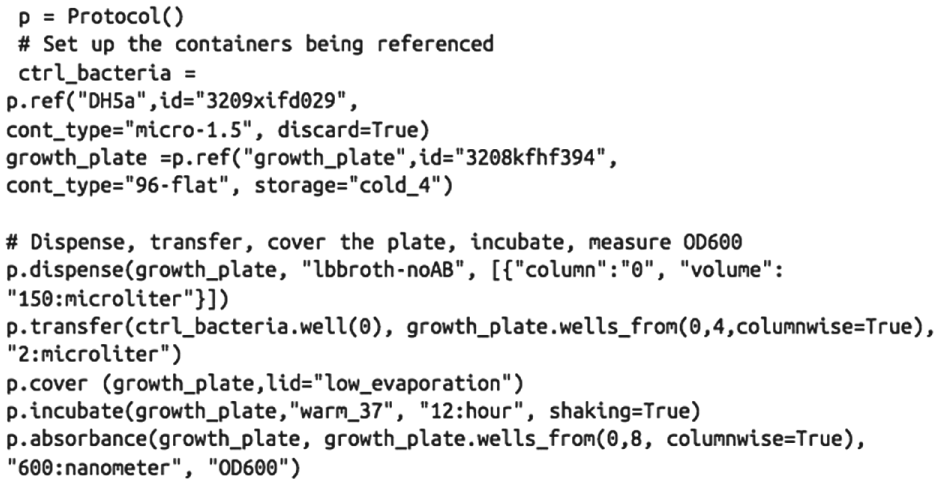

Knowledge of precise protocol details and the ability to compare differences among protocols are key to reproducing experimental results. When scientists try to verify previous findings by repeating a poorly detailed experimental process, it is little wonder they are unsuccessful. Figure 2 illustrates the kinds of questions a less-than-detailed protocol raises for those who hope to replicate an experiment. Figure 3 shows a similar experiment codified with Autoprotocol to be more precise and less ambiguous, thereby increasing reproducibility.

Figure 2. The ambiguity of a typical protocol makes interpretation challenging. Text adapted from ref 13. Copyright 2018 Elsevier.

Figure 3. A Python script written to generate Autoprotocol JSON.
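Because an encoded protocol is structured data, finding exactly where two protocols diverge becomes a mechanical operation rather than a close reading of prose. The Python sketch below is a generic recursive comparison of two JSON-like protocol documents; it is not an Autoprotocol tool, and the example field values are illustrative.

```python
ABSENT = "<absent>"  # marker for a key or list item present on only one side


def diff(a, b, path=""):
    """Yield (path, old, new) for every leaf value that differs between a and b."""
    if isinstance(a, dict) and isinstance(b, dict):
        for key in sorted(set(a) | set(b)):
            sub = f"{path}.{key}" if path else key
            if key not in a:
                yield (sub, ABSENT, b[key])
            elif key not in b:
                yield (sub, a[key], ABSENT)
            else:
                yield from diff(a[key], b[key], sub)
    elif isinstance(a, list) and isinstance(b, list):
        for i in range(max(len(a), len(b))):
            sub = f"{path}[{i}]"
            if i >= len(a):
                yield (sub, ABSENT, b[i])
            elif i >= len(b):
                yield (sub, a[i], ABSENT)
            else:
                yield from diff(a[i], b[i], sub)
    elif a != b:
        # Leaf values (or mismatched types) that are not equal.
        yield (path, a, b)


if __name__ == "__main__":
    lab_a = {"instructions": [{"op": "incubate", "where": "warm_37", "duration": "30:minute"}]}
    lab_b = {"instructions": [{"op": "incubate", "where": "warm_35", "duration": "30:minute"}]}
    for change in diff(lab_a, lab_b):
        print(change)
```

In this example, the only reported difference is the incubation location at `instructions[0].where`, which is exactly the kind of detail a prose protocol might leave unstated.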

Biologists who come to rely on programmatic robotic cloud labs will not need to be code-fluent to repeat an experiment. Insulating the workflow from interference by individual members of a research team eradicates a “stir-fry” approach to experimentation that does not track spontaneously added steps. Entrusting experiments to a robotic cloud lab also answers the concerns many researchers have about outsourcing experiments to a contract research organization (CRO): researchers remain in control of their experiments by agreeing on a precisely detailed experimental plan beforehand. They can follow progress remotely and get immediate access to any data that have been generated.

Productivity, Cost, and Staffing

It stands to reason that running experiments in such a closed, controlled system would be more efficient and less expensive. In our own robotic work cells, we have shown that using a cloud laboratory to automate high-throughput screening assays is fast, inexpensive, and effective. As the following experiment types performed on the Transcriptic robotic cloud platform with Autoprotocol show, the system allows a diverse set of biologically relevant experiments to be performed flexibly and robustly. Autoprotocol makes it possible to quickly codify an experiment, execute it, and return well-controlled, reproducible results.

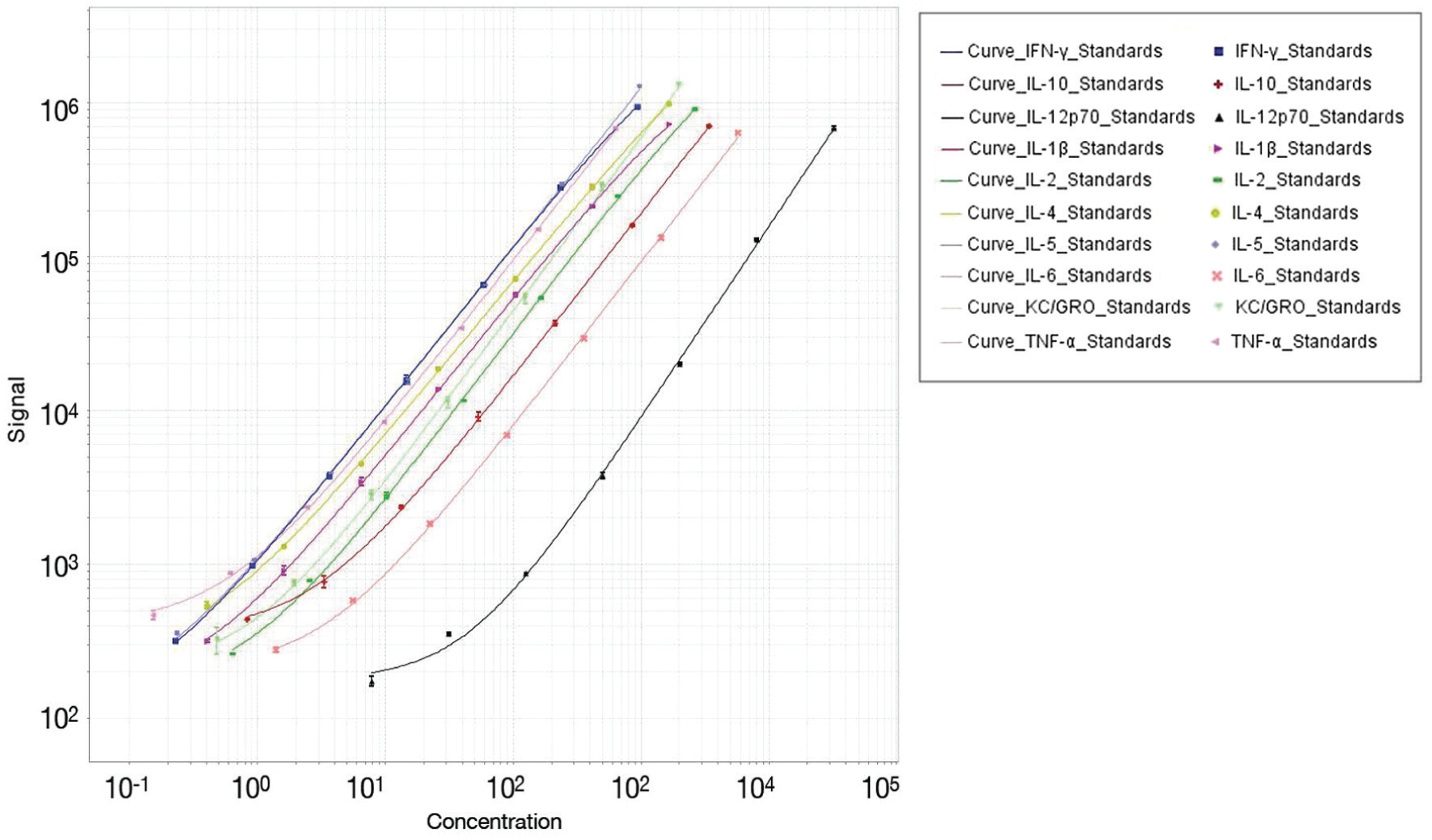

Biomarker quantification: Electrochemiluminescent detection assays can quantify proteins across a broad range of concentrations, often in a multiplexed format. Figure 4 shows 10 different proinflammatory cytokines quantified simultaneously from the same sample of mouse liver biopsy extract. Complex analytical devices, such as the one used to produce these electrochemiluminescent data, can be integrated into a robotic cloud lab to automate studies reproducibly while minimizing the operator hours required. Modern machine learning techniques need large numbers of samples and many measurement dimensions to build models of complex biological systems. Generating such large, reliable datasets at scale requires the reproducible, scalable methods that automation and auditable, software-defined protocols provide.
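Immunoassay readers report signal, not concentration. A common way to convert one to the other over a broad dynamic range is to fit a four-parameter logistic (4PL) standard curve to calibrators and invert it for each unknown. The article does not state which curve model was used for Fig. 4, so treat the following Python sketch as a generic, assumed approach: the parameter values in the usage note are invented, and fitting the parameters (for example, with a least-squares optimizer) is omitted.

```python
def four_pl(x, a, b, c, d):
    """Four-parameter logistic: expected signal at concentration x.

    a: signal at zero concentration, d: signal at infinite concentration,
    c: inflection point (a concentration), b: slope factor.
    """
    return d + (a - d) / (1 + (x / c) ** b)


def inverse_four_pl(y, a, b, c, d):
    """Back-calculate concentration from a measured signal y.

    Only valid for y strictly between a and d; outside that band the sample
    lies beyond the quantifiable range of the curve.
    """
    lo, hi = min(a, d), max(a, d)
    if not lo < y < hi:
        raise ValueError("signal outside the curve's quantifiable range")
    return c * ((a - d) / (y - d) - 1) ** (1 / b)
```

For example, with invented parameters `a=100.0, b=1.2, c=50.0, d=1.0e6`, passing `four_pl(25.0, ...)` back through `inverse_four_pl` recovers a concentration of 25 (to floating-point precision), and a signal below `a` raises an error instead of silently extrapolating.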

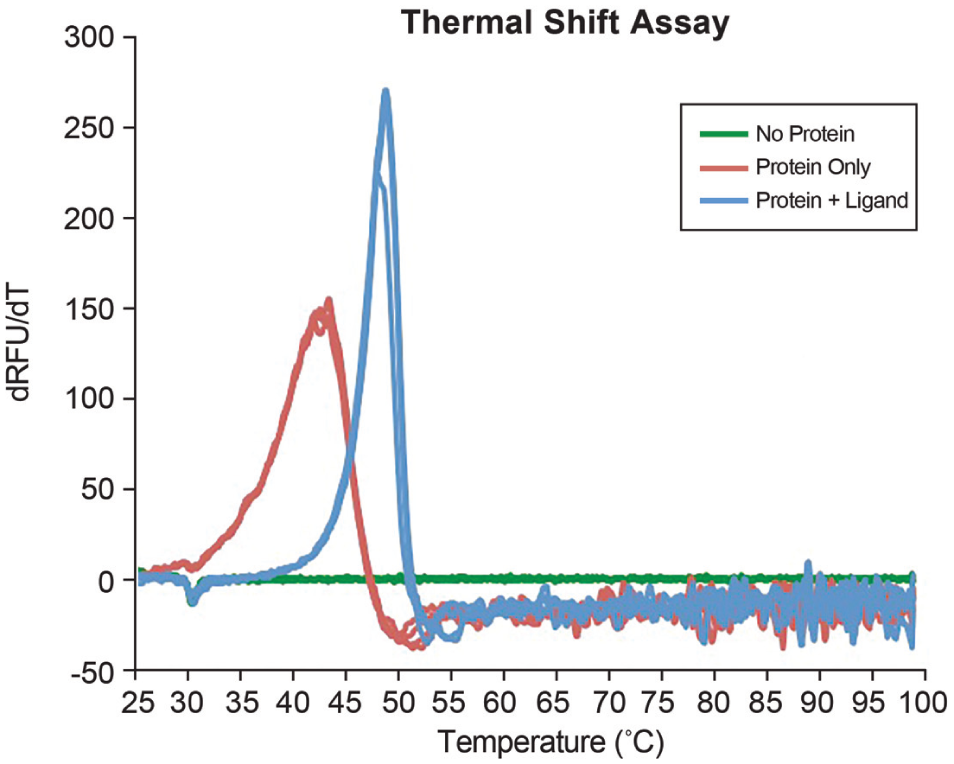

Biochemical assays: We screened for small molecules that affect protein thermal stability and, using a fluorimetric assay to measure protein melting temperatures, identified one that stabilized the protein of interest. The robotic cloud platform provides a degree of reproducibility between replicates that can be difficult for a human operator to achieve consistently (Fig. 5). Abstracting the automation behind a software-defined interface greatly reduces the cognitive and technical overhead of generating large, reproducible datasets.
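A common way to read a melting temperature (Tm) off a fluorimetric melt curve is to take the temperature at which fluorescence rises fastest, that is, the peak of dF/dT, and then compare Tm with and without ligand across replicates. The Python sketch below assumes increasing, evenly sampled temperatures and returns a Tm limited to the sampling grid. `synthetic_curve` generates idealized sigmoidal data purely for demonstration and does not represent the data in Fig. 5.

```python
import math
from statistics import mean, stdev


def melting_temperature(temps, fluorescence):
    """Estimate Tm as the temperature where dF/dT (central difference) peaks."""
    if len(temps) != len(fluorescence) or len(temps) < 3:
        raise ValueError("need matching series of at least 3 points")
    best_t, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t


def summarize_tm(replicates):
    """Mean and sample standard deviation of Tm over >= 2 (temps, F) replicates."""
    tms = [melting_temperature(t, f) for t, f in replicates]
    return mean(tms), stdev(tms)


def synthetic_curve(temps, tm, width=2.0):
    """Idealized sigmoidal melt curve centered on tm, for demonstration only."""
    return [1.0 / (1.0 + math.exp(-(t - tm) / width)) for t in temps]


if __name__ == "__main__":
    temps = [30.0 + 0.5 * i for i in range(101)]  # 30.0 to 80.0 degrees C
    apo = [(temps, synthetic_curve(temps, 52.0))] * 4   # no ligand
    holo = [(temps, synthetic_curve(temps, 55.5))] * 4  # with ligand
    tm_apo, _ = summarize_tm(apo)
    tm_holo, _ = summarize_tm(holo)
    print(f"delta Tm = {tm_holo - tm_apo:.1f} C")
```

A positive shift in Tm with ligand, with a small standard deviation across replicates, is the pattern the text describes. Real curves usually also need baseline handling and smoothing before differentiation.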

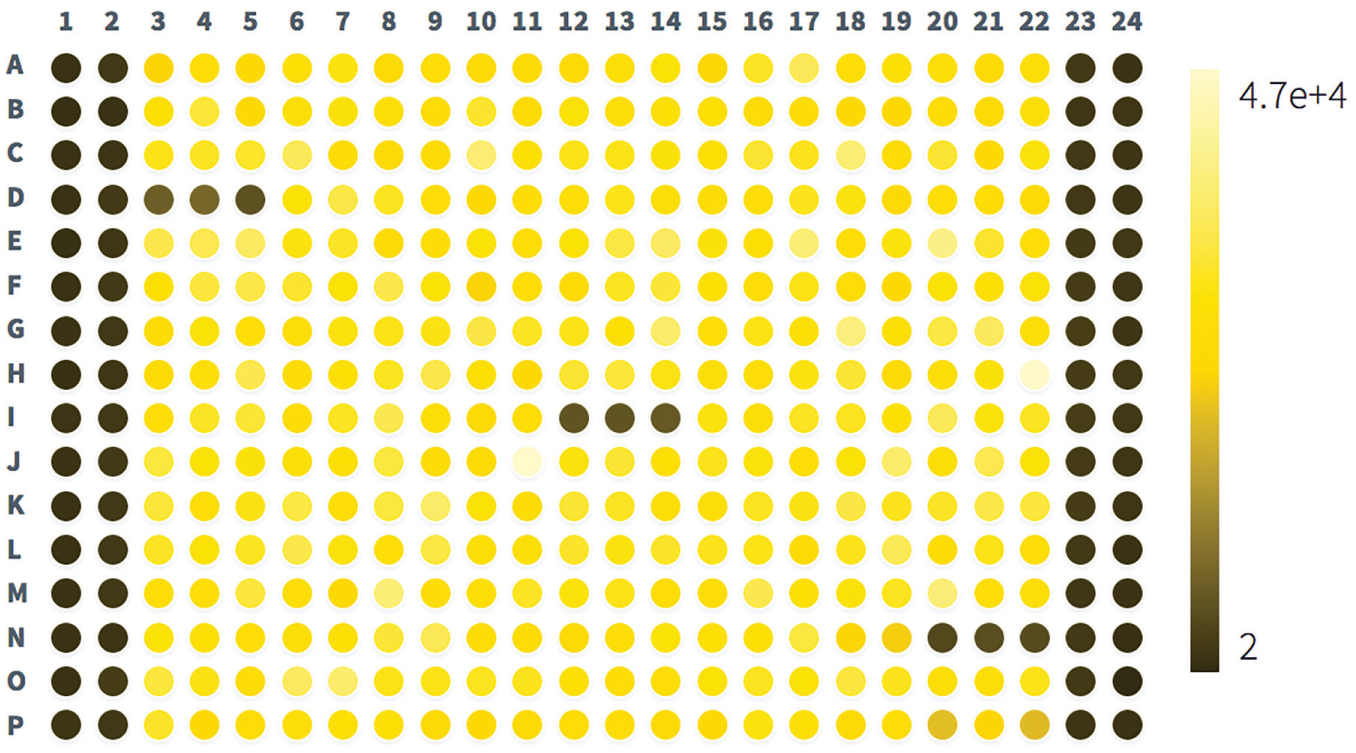

Cell-based assays: Scientists at the California Pacific Medical Center Research Institute (CPMCRI) used high-throughput screening of nanoliter-scale drug volumes to identify drug combinations that effectively target patient-derived tumor cells, flagging those that showed a cytotoxic effect for further evaluation. This is a stable, production-quality assay that has been run consistently on the Transcriptic platform. Working at nanoliter scale conserves precious compounds to a degree that would not be possible with a completely manual workflow. Furthermore, the complete integration between protocol and data generation provided by a robotic cloud lab enables scalability, provenance, and the ability to quickly identify the compounds and phenotypes of interest (Fig. 6). Without software-defined experimentation in a single Autoprotocol environment, an automated system would be far harder to use, creating a user-experience barrier for researchers adopting a high-performance technique such as this. Autoprotocol and software-defined experimentation abstract away the complexity of protocols, letting researchers rapidly answer their biological questions while keeping the option of diving deeper into that complexity when necessary.
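The Fig. 6 caption notes that columns 1, 2, 23, and 24 of the plate are blank and that low luminescence indicates low viability. This suggests one simple way to post-process such a plate: subtract the mean blank signal as background, express each well relative to a reference signal, and flag low-viability wells. The Python sketch below is hypothetical and not CPMCRI's analysis. The reference value, the 50% threshold, and the single-letter well naming (rows A to P of a 384-well plate) are assumptions.

```python
from statistics import mean

BLANK_COLUMNS = {1, 2, 23, 24}  # blank columns, as in the Fig. 6 layout


def flag_cytotoxic(signals, reference, threshold_pct=50.0):
    """Return {well: % viability} for wells below threshold_pct.

    signals: dict mapping well names such as "C5" to raw luminescence.
    reference: background-corrected signal treated as 100% viability
               (a hypothetical untreated-control value supplied by the user).
    """
    blanks = [v for w, v in signals.items() if int(w[1:]) in BLANK_COLUMNS]
    if not blanks:
        raise ValueError("no blank wells found for background subtraction")
    background = mean(blanks)
    hits = {}
    for well, value in signals.items():
        if int(well[1:]) in BLANK_COLUMNS:
            continue  # blanks only define the background
        viability = 100.0 * (value - background) / reference
        if viability < threshold_pct:
            hits[well] = round(viability, 1)
    return hits
```

For instance, with blanks averaging 110 counts and a reference of 50,000, a well reading 10,110 would be reported at 20% viability and flagged, while one reading 30,110 (60%) would pass at the default threshold.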

Figure 4. Multiplexed protein concentrations were quantified across a broad dynamic range using an electrochemiluminescent detection assay. Sample preparation and assay were defined in Autoprotocol as an unambiguous and automation-interpretable guide to drive reproducibility between experiments, scientists, and even separate sites.

Figure 5. Protein melt curves were generated for the protein of interest with or without ligand, and for buffer only (four replicates each). An increase in melting temperature indicates binding between the ligand and the protein of interest; this kind of thermal shift measurement is often used as a preliminary, label-free screening assay for identifying drug candidates.

Figure 6. A luminescent viability kit was used to measure patient-derived tumor cell viability following incubation with multiple drug combinations. Low luminescence indicates low viability (columns 1, 2, 23, and 24 are blank). Courtesy of CPMCRI.

To speed discovery and improve outcomes for cancer patients, the CPMCRI in San Francisco uses a cloud-based automated laboratory to execute high-throughput drug screening with patient-derived tumor samples. The method uses xenograft models and cancer tissue biopsies to generate patient-derived cell lines and then screens multiple-dose regimens or combinations of chemotherapies against each patient’s specific tumor. CPMCRI researchers use a web-based interface to create and submit dosing screens tailored to individual patients, which lets them quickly and iteratively screen a variety of chemotherapy compounds, doses, and combinations. Controlling these high-throughput assessments remotely via a web browser reduces human-introduced bias and error and yields robust, reproducible data in real time.
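A dosing screen like the one described is, at its core, a mapping from treatments to wells. The Python sketch below generates every pairwise compound combination at every dose pair and assigns each to a well of a 384-well plate, leaving columns 1, 2, 23, and 24 free as blanks (as in the Fig. 6 layout). The compound names, micromolar doses, and row-by-row fill order are hypothetical. A real screen would also add replicates and single-agent and vehicle controls, which are omitted here.

```python
from itertools import combinations, product
from string import ascii_uppercase

ROWS = ascii_uppercase[:16]  # rows A..P on a 384-well plate
COLUMNS = range(1, 25)       # columns 1..24
RESERVED = {1, 2, 23, 24}    # assumed blank columns


def usable_wells():
    """Yield well names row by row, skipping reserved columns."""
    for row in ROWS:
        for col in COLUMNS:
            if col not in RESERVED:
                yield f"{row}{col}"


def combination_layout(compounds, doses_um):
    """Map every pairwise compound combination at every dose pair to a well.

    Returns a dict of well -> ((drug1, dose1), (drug2, dose2)).
    """
    treatments = [
        ((drug1, dose1), (drug2, dose2))
        for drug1, drug2 in combinations(compounds, 2)
        for dose1, dose2 in product(doses_um, repeat=2)
    ]
    wells = list(usable_wells())
    if len(treatments) > len(wells):
        raise ValueError(f"{len(treatments)} treatments exceed {len(wells)} wells")
    return dict(zip(wells, treatments))


if __name__ == "__main__":
    layout = combination_layout(["drug_a", "drug_b", "drug_c"], [0.1, 1.0, 10.0])
    for well, treatment in list(layout.items())[:3]:
        print(well, treatment)
```

Three compounds at three doses give 3 pairs × 9 dose pairs = 27 treatments, well within the 320 usable wells. Because the layout is generated rather than typed, the same screen can be re-created exactly for the next patient sample.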

Clinicians at the research institute have realized several cost- and time-saving advantages from using a cloud-based robotic laboratory, including the following:
· Eliminating the need for an expensive in-house high-throughput screening platform, saving capital expenditures and the cost of the full-time equivalents needed to support it
· Access to miniaturized liquid handling assays, which use minimal amounts of expensive reagents and drug compounds
· Reduced data cycle times and increased scalability of the workflow
· Real-time access to data and cross-collaboration with different teams and different sites
· Getting new employees up and running more quickly

According to CPMCRI scientists, the robotic cloud lab offers improved flexibility and control when it comes to protocol development and implementation. It also makes it possible to start or manage experiments anytime directly through a web browser, and quality control measures allow for troubleshooting any experimental inconsistencies. Most important of all, patients benefit enormously from practitioners’ ability to rapidly assess the efficacy of therapies and combinations of therapies in xenograft models. The approach allows for customized treatment plans for each patient and drives better outcomes.

In another example, AbbVie is leveraging a robotic cloud lab to take a more rapid and flexible approach to testing humanized mouse livers for a new therapeutic area. The company’s head of future therapeutics and technologies, Steve England, said this has enabled quicker poststudy analysis and data turnaround that has accelerated the company’s drug discovery programs. 12

In addition to offering new opportunities to accelerate drug discovery, IoT-enabled laboratories open new possibilities for the scientific workforce. Traditionally, it has taken a group of experts to run an automation platform. Experiments supported by the IoT and the cloud can be distributed to researchers who can tweak automated protocols, monitor workflows, and examine results from their laptops while sitting in coffee shops. As a result, drug discovery and synthetic biology companies can draw on and retain a broader and more diverse pool of talent. It is another way in which biology will come to look more like information technology. A robotic cloud lab makes the most of researchers’ effort by focusing them on the important tasks of experimental planning and analysis, while eliminating the need for them to carry out the routine and time-consuming task of experimental execution.

When scientists can access and analyze data in the cloud and see the unambiguous protocols that generated those data, life sciences organizations gain the flexibility to engage experts to collaborate on investigations without being in the same physical space. If everyone ran experiments remotely in the cloud, global collaboration would be possible, with staff moving freely around the planet. And as research questions become more complex and draw on more interdisciplinary inputs, IoT-equipped labs will also enable teams of experts from various disciplines to ask more complicated research questions while organizing data quickly and systematically, eliminating time wasted on repeated experiments, and accelerating the transfer of preclinical results to clinical research. A new economic model for science staffing would mean that more research, and more complex research, could be performed with the same resources, while expenses for equipment, human capital, and training could be reduced. Ultimately, when scientists are all able to run experiments on industry-leading technologies using the same well-maintained systems, the community will benefit from better comparison and normalization of results.

Security Considerations

While cloud computing has revolutionized the software industry, there is still a common perception that storing data externally makes it more susceptible to intrusion. However, cloud computing service providers such as Amazon or Google have more experience and expertise with operational security than the average lab. These massive organizations have scale and resources unavailable to smaller companies, and they provide backups and redundancy to protect against accidental loss. That said, any individual or institution seeking to store data in the cloud or create its own connected, automated lab can take steps to increase security, such as encrypting all data both at rest and in transit, carefully managing firewall rules with monitored virtual private network (VPN) access, automating anomaly detection, and minimizing the exposed network surface by limiting the open ports that can reach the data.
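Of the measures listed, automated anomaly detection is the most algorithmic. As a rough illustration only, the Python sketch below flags intervals whose event count (for instance, hypothetical hourly counts of failed VPN logins) departs from a trailing baseline by more than a z-score threshold. It is a baseline heuristic rather than a recommendation of any particular detection method, and the 24-interval window and threshold of 3 are arbitrary assumptions.

```python
from statistics import mean, stdev


def flag_anomalies(counts, window=24, z_threshold=3.0):
    """Return indices whose count deviates strongly from the trailing window.

    counts: event counts per interval (e.g. failed logins per hour).
    A point is anomalous if it lies more than z_threshold sample standard
    deviations from the mean of the preceding `window` points.
    """
    anomalies = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change at all as anomalous.
            if counts[i] != mu:
                anomalies.append(i)
        elif abs(counts[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    hourly = [2, 3, 2, 4, 3, 2, 3, 2] * 3 + [3, 40, 2]
    print(flag_anomalies(hourly))  # the spike of 40 is the only flagged hour
```

Note that a large spike inflates the baseline for the following window, which makes the detector less sensitive immediately after an incident. More robust statistics, such as the median absolute deviation, are one way to reduce that effect.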

Experimental Standards of the Future

If an IoT-enabled robotic cloud lab is proven to eradicate errors, boost transparency, improve reproducibility, reduce costs, speed discovery, and solve more complex and interdisciplinary research challenges in biological experimentation, could this state-of-the-art approach become the new standard? We think so. A programmatic robotic cloud lab is the next step in the evolution of biological experimentation. It is the enabling technology for a modern preclinical laboratory that integrates automated hardware and sensors driven by a state-of-the-art operating system running automated protocol-directed experiments. It is the key to gaining greater clarity about crucial details of each step in our experimental processes and a deeper understanding of life sciences.

We imagine a future in which all preclinical research is clearly shown to have relied on reproducible methods and open-source protocols, perhaps through electronic links to experimental data and processes stored in the cloud. It is a future in which drug discovery happens faster, researchers are dedicated to generating hypotheses and analyzing data instead of sitting at a bench running experiments, and budgets are directed to generating robust and verifiable scientific results.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.