Abstract

Background

Residency programs have the advantage of comparing applicants through a single platform that combines factors that program directors value. Conversely, applicants have innumerable resources with fragmented information, and there are limited data on what they value when considering residency programs. This study sought to identify what students value from existing resources, how resources could be improved, and if a centralized resource would help meet the needs of residency applicants.

Methods

An online survey was sent to medical students at a single academic institution during the 2022 to 2023 residency application cycle. Students were asked to rank resources, identify their favorite and least favorite resources, and rate the utility of specific features and of a centralized platform. Statistical analysis was performed using an ordinal regression model. Statistical significance was defined by P < .05.

Results

Sixty-four out of 188 fourth-year medical students completed the survey. Students ranked program websites and FREIDA (Fellowship & Residency Electronic Interactive Database Access) the highest (P < .01). Program websites were the most favored for reliability, followed by FREIDA for its comparison tools. Student Doctor Network and Reddit were the least favored due to limited reliability but were considered helpful for candid reviews. Respondents indicated that fellowship placement ratings would be most helpful, while unvetted anonymous commentary on resident wellness would also be helpful. Surgical specialty applicants preferred resources like Google Sheets that offered detailed fellowship information, while nonsurgical applicants did not prioritize this. Ninety-one percent of respondents said a centralized platform integrating standardized information would be helpful.

Conclusions

Medical students desire a unified resource that combines reliable information with candid perspectives. A centralized platform with standardized data across programs, tailored to the differing needs of surgical and nonsurgical applicants, could fulfill this need and improve the residency application process.

Introduction

The National Resident Matching Program (NRMP), or “the Match,” was created in the 1940s to balance the intense competition among hospitals to fill their residency positions as early as possible and to minimize the pressure placed on applicants to make decisions in a very short time frame. 1 In 1952, the Match was officially launched with the aim of reducing the chaos of the residency application process and integrating both the students’ and programs’ preferences within the ranking process. 1

After 70 years and the advent of the Electronic Residency Application Service (ERAS), an online system where students submit their residency applications, there has been an upward trend in the number of applications that each residency program receives. According to the Association of American Medical Colleges (AAMC), on average, there were 78.2 applications submitted per person in 2022, a 30% increase from 2018. 2 One major reason for this trend was the perceived competitiveness for residency positions and the lack of reliable data to compare residency programs prior to interviews. 3 As a result, students felt that increasing the number of programs to which they apply would lead to a corresponding increase in the number of interview invitations and improve their chances for a successful Match.3,4 However, studies have shown that submitting more applications did not improve Match rates and may contribute to unnecessary costs and Match inefficiency. 5 This may occur because high applicant volume can overwhelm residency programs, leading them to rely more on screening processes that may filter out qualified applicants and less on holistic reviews that are time-consuming.

Residency programs have the advantage of comparing applicants through ERAS, a single platform that combines the factors residency program directors find important for considering interview invitations.4,6 Applicants, however, are presented with innumerable resources that present fragmented or potentially biased information about residency programs. For example, the Fellowship & Residency Electronic Interactive Database Access (FREIDA) developed by the American Medical Association is a commonly used resource that provides limited information, including predicted monthly costs, provided by the residency programs themselves. 7 Doximity, a database that rates programs based on current resident and alumni satisfaction data and overall reputation, has been publicly criticized for its algorithm favoring larger programs. 8 The NRMP, Texas Star, and individual program websites provide different information on residency programs with variable reliability and utility.9,10 Applicants may also choose to rely on resources curated by applicants themselves, such as Reddit, Student Doctor Network (SDN), and crowdsourced documents such as the Specialty-specific Google spreadsheet (Google Sheets).

The lack of clear and reliable data for comparing programs prior to interviews highlights the need for improvements in existing resources or creation of a new resource. There are limited data, however, as to which factors applicants find important when considering residency programs. This study aimed to identify features of existing resources that students found useful during the residency application process, to determine how residency information sources might better meet the needs of medical students applying to all specialties, and to determine if the creation of a resource that aimed to centralize information would meet those needs.

Materials and Methods

The study institution's Committee on Human Research Institutional Review Board (IRB, FWA00005945) determined that this cross-sectional survey study qualified for exemption due to its minimal risk to participants (IRB# NCR224203). The reporting of this study conforms to the Consensus-Based Checklist for Reporting of Survey Studies (CROSS) 11 (Appendix 2). An online 27-question survey was created via the Research Electronic Data Capture application and distributed to all fourth-year medical students at a single academic institution who applied to residency programs during the 2022 to 2023 application cycle (Appendix 1). The survey was open from September, when the majority of students submit their applications, to the end of the academic year in May. To prevent multiple submissions, each student was provided with a unique survey link that could only be completed once. All surveys were anonymous and confidential.

The survey collected demographic information, including gender, race, and intended specialty. The first part of the survey asked students to evaluate current resources available for residency applications. Participants ranked up to 9 popular application resources in order of preference. Independent of the rankings, they also identified their favorite and least favorite resources with rationale for their choices. The resources in this questionnaire included the following: AAMC, Doximity, FREIDA, NRMP, Reddit, program website, Google Sheets, SDN, and “Other.”

The second part of the survey asked students to give their opinion on a 5-point scale (1 star = not helpful to 5 stars = very helpful) as to the utility of a resource that allowed users to post ratings of the overall residency program and program components (eg, educational sessions) as well as an opportunity to provide comments.

Statistical analysis for the resource rankings was performed using ordinal logistic regression to model the log odds of rank as a linear function of resource. The dependent variable was the rank that participants assigned to a resource, and the independent variable was the resource. This gave an estimation of the odds of each resource being ranked higher than a baseline resource. The highest-ranked resource was assigned as the baseline. Statistical significance was defined by P < .05. For Likert-scale questions, the responses were reported in tables and visualized in figures. Analyses and visualizations were completed in R via the RStudio integrated development environment. Data, code, and a comprehensive list of software versions, package versions, and dependency versions for this analysis can be found in the code repository. Users may automatically install all versions used in this study and reproduce the analyses described here by cloning the repository and opening the project in RStudio.
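One common way to write an ordinal logistic regression of this kind is the proportional-odds (cumulative logit) form sketched below. The parameterization is an assumption, since the text does not state it, and the symbols are introduced here for illustration only: $Y_{ij}$ is the rank that respondent $i$ assigned to resource $j$ (assuming 1 = most preferred), $\theta_k$ are the cutpoints between adjacent ranks, $K$ is the number of ranks, and $\beta_j$ is the coefficient for resource $j$.

```latex
\[
\operatorname{logit}\,\Pr(Y_{ij} \le k)
  = \log \frac{\Pr(Y_{ij} \le k)}{\Pr(Y_{ij} > k)}
  = \theta_k - \beta_j,
  \qquad k = 1, \dots, K - 1,
  \qquad \beta_{\text{baseline}} = 0 .
\]
```

Under this convention, $\exp(-\beta_j)$ is the odds ratio, relative to the baseline resource, of resource $j$ receiving rank $k$ or better, and it is the same for every cutpoint $k$ (the proportional-odds assumption). Statistical software may use the opposite sign for $\beta_j$, so the direction should be checked against the fitted output.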

Survey responses were then categorized based on applicants’ specialties, grouping them into surgical and nonsurgical categories. We then calculated the predicted probabilities of each resource being selected as the preferred choice using a multinomial model with specialty category (ie, surgical vs nonsurgical) as the predictor variable. The specific specialties within each category are listed in Table 1. Students who identified their specialty as “Other” were excluded from this analysis.
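When a multinomial logit model has a single categorical predictor, the model is saturated, so its maximum-likelihood predicted probabilities equal the observed proportions within each group. The Python sketch below illustrates that idea only; it is not the study's R code, the function name `predicted_probabilities` is hypothetical, and the toy records are invented for illustration rather than taken from the survey.

```python
from collections import Counter


def predicted_probabilities(records):
    """Return {group: {resource: probability}} from (group, favorite) pairs.

    With one categorical predictor, the fitted probabilities of a multinomial
    logit model equal these within-group proportions.
    """
    counts = {}
    for group, favorite in records:
        counts.setdefault(group, Counter())[favorite] += 1
    return {
        group: {res: n / sum(c.values()) for res, n in c.items()}
        for group, c in counts.items()
    }


# Hypothetical toy data, NOT the study's responses.
toy = (
    [("surgical", "Google Sheets")] * 2
    + [("surgical", "FREIDA")] * 1
    + [("surgical", "Program website")] * 1
    + [("nonsurgical", "Program website")] * 4
    + [("nonsurgical", "FREIDA")] * 2
    + [("nonsurgical", "Google Sheets")] * 2
)

probs = predicted_probabilities(toy)
print(probs["surgical"]["Google Sheets"])       # 0.5
print(probs["nonsurgical"]["Program website"])  # 0.5
```

A fitted model becomes necessary once additional predictors are added or uncertainty estimates are wanted; the proportions above reproduce only the point predictions.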

Table 1. Classification of Specialties as Surgical or Nonsurgical.

Results

Demographics

Sixty-four out of 188 fourth-year medical students completed the survey for a 34.0% response rate. Of the included participants, 59% identified as female and 41% identified as male, with a racial distribution of 70.3% Caucasian, 23.4% Asian, 3.1% Hispanic or Latino, 1.6% African American, and 9.4% not listed (see Figure 1A). Intended specialties included 28.1% surgical specialties, 18.8% Internal Medicine, 17.2% Pediatrics, 12.5% Emergency Medicine, 18.8% other nonsurgical specialties, and 4.7% other specialties not listed (see Figure 1B).
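The arithmetic behind the response rate, and the conversion of a reported percentage back to an approximate respondent count, can be sketched as follows. The helper names `percent` and `implied_count` are hypothetical; the inputs (64 respondents, 188 invited students, 18.8% Internal Medicine) are the figures reported above.

```python
def percent(count, total, digits=1):
    """Percentage of total, rounded to the given number of decimals."""
    return round(100 * count / total, digits)


def implied_count(pct, total):
    """Nearest whole number of respondents consistent with a reported percentage."""
    return round(pct / 100 * total)


respondents, invited = 64, 188  # figures reported in the text

print(percent(respondents, invited))     # 34.0
print(implied_count(18.8, respondents))  # 12
```

Because the reported percentages are rounded, counts recovered this way are approximate.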

Figure 1. Demographic Distribution by (A) Race/Ethnicity and (B) Intended Specialty.

Specialty Advising

The majority of respondents (93.8%) utilized specialty faculty mentoring for advising during the recruitment process. Peer mentoring (73.4%) and the Dean's Office (54.7%) were the second and third most used advising resources, respectively.

Resource Ranking

Respondents ranked program websites and FREIDA as the first and second resources, respectively (Figure 2 and Table 2). There was not a statistically significant difference between the ranking of these resources (P = .163). The remaining resources were ranked as follows from third through last: AAMC, Google Sheets, Reddit, Doximity, NRMP, and SDN—all of which were significantly less likely to be ranked higher than the program websites (P < .001 for all listed resources) (Table 2).
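Table 2 reports the model on the linear predictor scale, that is, as log odds ratios. Exponentiating a coefficient and its confidence limits converts them to odds ratios, as in the minimal sketch below. The function name `to_odds_ratio` and the numeric values are hypothetical and are not taken from Table 2.

```python
import math


def to_odds_ratio(log_or, ci_low, ci_high):
    """Exponentiate a log odds ratio and its confidence limits."""
    return math.exp(log_or), math.exp(ci_low), math.exp(ci_high)


# Hypothetical coefficient and 95% CI on the log-odds scale, NOT from Table 2.
odds_ratio, low, high = to_odds_ratio(-1.0, -1.5, -0.5)
print(f"OR = {odds_ratio:.2f} (95% CI {low:.2f} to {high:.2f})")
# OR = 0.37 (95% CI 0.22 to 0.61)
```

A log odds ratio below 0 corresponds to an odds ratio below 1, and a confidence interval that excludes 0 on the log scale excludes 1 on the odds ratio scale.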

Figure 2. Proportions of Respondents Ranking Each Resource From First to Ninth in Order of Preference in the First Part of the Survey.

Table 2. Ordinal Logistic Regression Model Summary on Linear Predictor Scale With Log Odds Ratio (OR) and 95% Confidence Intervals (CIs).^a

Abbreviations: AAMC, Association of American Medical Colleges; NRMP, National Resident Matching Program.

^a The baseline resource is Residency Program Websites, with statistical significance indicating that the named resource is significantly less likely to be ranked higher than residency program websites.

Favorite Resource

Respondents chose the program websites as their favorite resource (23.4%), and FREIDA was the second most favored (18.8%). The odds of either resource ranking better than all others were significant (P < .01). Google Sheets and AAMC tied for third (14.1% each). Resources that were not included in the ranking questionnaire but were submitted in the “other” section as the respondent's “favorite resource” included Emergency Medicine Residents’ Association (EMRA), Texas Star, away rotation experiences, Otomatch, Orthopaedic Residency Information Network, Medmap.io, and Discord. The NRMP and SDN were the only resources included in the ranking questionnaire that were not selected as a favorite resource by any respondent.

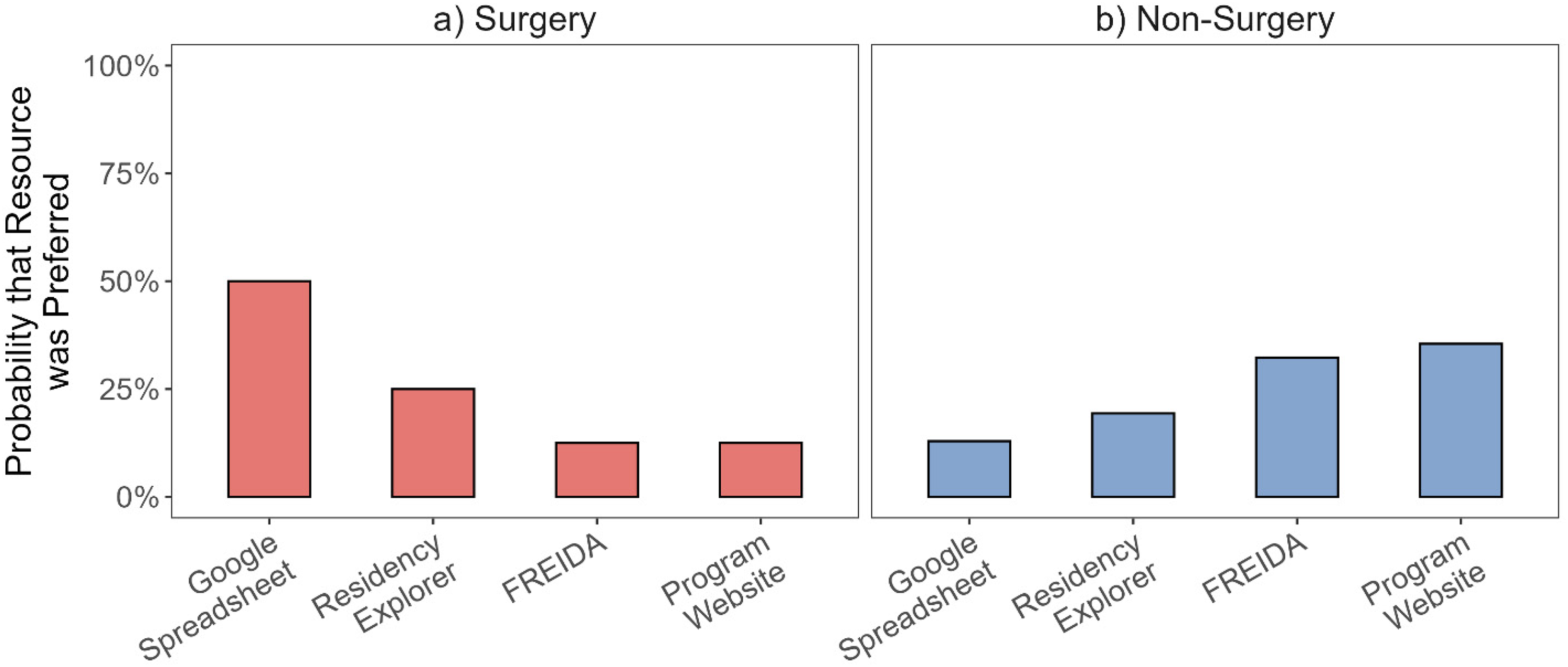

When the responses were grouped by intended specialty, the preferences of those applying to surgical specialties (N = 17) were the opposite of those applying to nonsurgical specialties (N = 44) (Figure 3, Table 1). Respondents applying to surgical specialties preferred Google Sheets the most, followed by Residency Explorer, FREIDA, and program websites. Respondents applying to nonsurgical specialties preferred program websites the most, followed by FREIDA, Residency Explorer, and Google Sheets.

Figure 3. Predicted Probabilities of Each Resource Being Selected as the Preferred Resource From a Multinomial Model Fitted as a Function of Specialty Category (ie, Surgery [N = 17] and Nonsurgery [N = 44]).

When asked to comment on their favorite resource, participants described program websites as the most accurate and comprehensive, offering details such as rotation sites, sample schedules, and salary/benefit information. Comments included, “It [program website] has the most information about the program,” “Had all the information I was looking for,” and “Getting information straight from the source.” FREIDA was valued for its user-friendly interface, with participants commenting on the “Easily accessible data points about the program,” “Lots of filters to organize and distill down searches,” and ability to “save favorites in a list and compare them.” These features allowed for efficient searching, comparison, and saving of program details, though some found the information outdated. Google Sheets was noted for its up-to-date content and “honest” reviews, but concerns about the reliability and potential bias of anonymous feedback were raised. The AAMC was appreciated for its objective data on program competitiveness but was noted to lack information available on other resources.

Least Favorite Resource

Respondents chose SDN (28.1%) and Reddit (25.0%) as their least favored resources, followed by AAMC (12.5%) and Google Sheets (10.9%). Resources chosen by fewer than 10% of respondents as their least favorite included Doximity, NRMP, FREIDA, and program websites.

When asked to comment on their least favorite resource, respondents who chose SDN or Reddit gave similar comments, describing these platforms as “very biased” and “unreliable” even though they provided candid evaluations of programs. The AAMC was described as having limited and vague information on specific programs. Respondents who chose Google Sheets as either their least favorite or their favorite resource gave the same reasoning, stating that while the resource was up-to-date, its trustworthiness was questionable. Comments on Google Sheets included, “too much information,” “it was pretty overwhelming as it wasn’t regulated with many different opinions going into one document,” and “it caused a lot of anxiety because you are comparing yourself directly with other people.”

Resource Star Ratings

Respondents rated their favorite and least favorite resources on a 1-to-5 star scale (Table 3). The star ratings aligned with the resource ranking data as program websites received the most 5-star ratings, and FREIDA received the most 4-star ratings. The average ratings for program websites and FREIDA were 4 stars, while AAMC and Google Sheets had average ratings of 3 stars. Reddit, Doximity, and NRMP received an average of 2 stars, and SDN averaged 1 star.

Table 3. Respondents’ Resource Preferences From 5 Stars (Most Preferred) to 1 Star (Least Preferred).

Ratings and Anonymous Commenting Features

In the second part of the survey, respondents were asked to evaluate the potential utility of additional features for existing resources or new resource development. A majority (95.2%) felt that it would be somewhat or very helpful if a resource contained ratings for “fellowship placements” of training programs. Other features deemed useful included ratings for “resident wellness” (91.9%) and “board preparation” (88.7%) (Figure 4). Respondents also expressed strong support (90.3%) for a feature that would allow anonymous comments on “resident wellness.” Other areas where anonymous feedback was deemed valuable included the “interview process” (88.7%) and “diversity, equity, and inclusion” (83.6%) (Figure 5).
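Percentages such as "somewhat or very helpful" combine the two most favorable categories of the 5-point scale. A minimal sketch of that calculation follows. The function name `share_helpful` is hypothetical, the responses are invented, and the handling of skipped items (dropped from the denominator) is an assumption, since the text does not describe how missing answers were treated.

```python
HELPFUL = {"Somewhat Helpful", "Very Helpful"}


def share_helpful(responses):
    """Percentage of answered items in the two most favorable categories.

    Assumption: skipped items (None) are excluded from the denominator.
    """
    answered = [r for r in responses if r is not None]
    helpful = sum(r in HELPFUL for r in answered)
    return round(100 * helpful / len(answered), 1)


# Hypothetical responses to one feature, NOT the study's data.
toy = (
    ["Very Helpful"] * 5
    + ["Somewhat Helpful"] * 3
    + ["Unsure", "Not Helpful", None]
)

print(share_helpful(toy))  # 80.0
```

Under this assumption the denominator can differ between features when some respondents skip an item.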

Figure 4. Proportions of Respondents Indicating How Helpful a Rating Scale Would Be for Each Residency Feature on a 5-Point Likert Scale (Not Helpful, Somewhat Unhelpful, Unsure, Somewhat Helpful, Very Helpful).

Figure 5. Proportions of Respondents Indicating How Helpful Anonymous Commenting Would Be for Each Residency Feature on a 5-Point Likert Scale (Not Helpful, Somewhat Unhelpful, Unsure, Somewhat Helpful, Very Helpful).

New Resource

The majority of respondents (90.6%) said that a single platform that integrates subjective and objective data with other resources would be helpful. In the free comments section, many respondents wrote about the need for “a single resource” with “consolidated” and “standardized” information, regular updates of information at the beginning of each recruitment cycle, and greater accessibility of comments directly from peers.

Discussion

The residency application process requires students to navigate a wide array of resources to guide program selection, interview preparation, and rank list decisions. While previous studies have evaluated the utility of various resources in the residency selection process (eg, social media, 12 digital recruitment videos13,14), to our knowledge, this study is the first to analyze a wider range of online resources across multiple specialties. To provide a clearer picture of applicant preferences, we examined each resource, highlighting notable findings and contrasts between surgical and nonsurgical applicants.

Program websites were the most favored resource in our study cohort. Consistent with prior studies, participants valued websites for comprehensive information, including schedules, rotation sites, salary, benefits, curriculum, research opportunities, and resident/faculty information.12,14–18 While viewed as comprehensive, program websites were also criticized by our participants for inconsistently providing up-to-date and accurate information. This sentiment is supported in the literature, with program websites often lacking up-to-date information that applicants deem important to the decision-making process. 9 These findings emphasize the importance of maintaining current and robust program websites given their influence on applicants’ decision-making.12,16

Interestingly, surgical specialty applicants were more likely to rank program websites as their least preferred resource. This may reflect the unique information needs of surgical applicants, who prioritize detailed fellowship data. Lambdin et al found that many program websites omit this content, despite it being the most important information for students applying to surgical residencies. 9 The absence of fellowship information may explain why surgical applicants in our cohort turned to other resources, whereas nonsurgical applicants, with less need for this information, rated websites more favorably.

FREIDA was the second most favored resource in our cohort, consistent with Smith et al, who identified it as the most frequently used website after program websites. 17 Respondents appreciated its reliability, accuracy, and easy-to-use dashboard and comparison tools. Similarly, Smith et al and our study found AAMC to be valuable for reliable and accurate program information. 17

In contrast to existing literature, our cohort did not choose the NRMP website as a favorite resource. Blissett et al found that an analogous site for the Canadian match (CaRMS) was the most frequently used website in their study and was considered the most influential in application preparation.16,19 While both NRMP and CaRMS provide similar information on the overall match process, timelines, applicant demographics, and outcomes, CaRMS has more robust information about the individual programs and universities. Though providing robust information may be more feasible in Canada, given the smaller number of programs compared to hundreds of US programs, this may represent an opportunity for improvement for the US-based NRMP website.

Our data revealed mixed opinions about Doximity, which ranked fifth out of 7 with an average rating of 2 stars. Students appreciated the student perspective but questioned the reliability of the information and viewed the rankings as “subjective” or “arbitrary.” Smith et al found that 62% of respondents used Doximity during the residency match, with rankings influencing application, interview, and rank list decisions. 17 Similar to our cohort, 56% of respondents doubted the platform's accuracy. 17 Rolston et al similarly reported an accuracy score of 56.7/100. 20 Criticisms largely stem from the methodology, including subjective polling, sampling bias (only Doximity members are polled), and potential for recruitment of physicians within the department to join Doximity to vote and thereby impact rankings.20,21 Overall, Doximity provides useful student perspectives but is limited by the subjective nature of its data.

Perhaps as expected, resources from less official channels such as SDN and Reddit were less favored and ranked lower due to their low reliability and limited oversight of who can contribute and what can be posted. However, these resources were also viewed as having the most candor due to the ability to post anonymously. These channels also have specific information on individual programs and convenient sections for specific specialties, regions, and interests. Overall, we found SDN and Reddit to be unreliable options that are limited by the nature of their anonymous posting and limited regulation.

To our knowledge, our study is the first to survey medical students on their opinions of Google Sheets as a residency application resource across multiple specialties. Interestingly, Google Sheets ranked both among the top 3 and the bottom 3 resources in our study. Surgical applicants were more likely to prefer Google Sheets, which often contain candid, detailed information on program competitiveness, research opportunities, and fellowship matches. Ganguli et al found these to be important data points for surgical applicants. 22 Nonsurgical applicants ranked Google Sheets lower, likely reflecting less emphasis on such detailed data. Despite some applicant preferences, the crowdsourced nature of Google Sheets raises concerns about data reliability, as the information may be skewed and may not be representative of the entire applicant pool.

Prior studies comparing Google Sheets data with NRMP information have confirmed these reliability concerns. Rothfusz et al found statistically significant differences in USMLE scores, research productivity, and AOA membership in orthopedic surgery spreadsheets, 23 while dermatology spreadsheets overestimated Part 2 USMLE scores and underestimated publications. 24 Despite these limitations, Google Sheets has expanded to cover nearly all specialties, with at least 23 specialty-specific spreadsheets in use between 2017 and 2019. 25

One potential strategy to balance the candor-reliability trade-off found in platforms like Google Sheets is to create a reliable resource that incorporates both vetted and anonymous content. To explore interest in this, the second part of our survey asked about the utility of quantitative ratings by those familiar with the program (eg, students, residents) and the ability of users to write anonymous comments. Our results suggested that some information, such as fellowship placements and board preparation, may be best conveyed through quantitative rating scales, while topics such as the interview process and diversity, equity, and inclusion efforts may be more useful as anonymous content. Information on resident wellness was perceived as valuable when presented in either format (rating scale or anonymous commentary).

This study has several limitations. First, it was conducted with a small sample from a single fourth-year class at an urban U.S. medical school, with a response rate of 34%. As such, the findings may not be generalizable to other medical schools of different sizes or settings, or to international medical students. The small sample size may introduce selection bias, as the students who chose to respond may have different opinions or experiences compared to those who did not participate. Additionally, due to sample size, we could not adjust for potential nonresponse bias. Most importantly, our findings reflect the perspectives and online resource usage of U.S. medical students, limiting applicability to international contexts.

Second, survey distribution occurred throughout the Match process without stipulating the timing of completion relative to Match week. As a result, some students responded before Match results were released, while others responded afterward, potentially introducing recall bias. Post-Match reflections may have been influenced by individual outcomes (eg, unmatched, matched to top choices), which could affect perceived utility of resources. This study did not examine longitudinal changes in resource utilization or preferences over the Match timeline. McHugh et al similarly noted potential bias when distributing surveys after Match results. 12 Lastly, this study did not analyze how specialty choice affected the student's selection of favorable or unfavorable resources.

Despite these limitations, our study adds to the existing literature by providing insights into medical student preferences for residency application resources across multiple specialties. While previous studies have focused on specific resources or individual specialties, our study further examines a broader range of online resources and their perceived value to applicants. Additionally, we contribute to the understanding of how surgical and nonsurgical applicants prioritize different types of information when selecting residency programs, further informing the development of tailored resources to meet the distinct needs of these groups.

Further research is needed with larger sample sizes across diverse medical schools, considering factors such as size, geography, and urban versus rural settings. These varying demographics may reveal different preferences for online resources. Future studies should also evaluate the information provided by specialty organizations, away rotation experiences, and faculty mentoring. Additionally, given the increasing number of resources available, attention should be paid to emerging platforms. For example, resources such as Texas Star and the Medical Student Advising Resource List from EMRA 26 have gained popularity and may reflect regional preferences. Notably, both Texas Star and EMRA were listed in the “other” section by students in our cohort, even though they were not included as primary options. Lastly, most of the existing studies on medical student usage of residency application resources, including our own study, were conducted at American or Canadian medical schools. The needs of international medical graduates are an area requiring further study. These data may create a more complete picture of the existing resources and may inform the creation of more comprehensive resources with reliable, accurate, and credible information.

Conclusions

This study provides new insights into how US medical students use and evaluate residency application resources, revealing differences between surgical and nonsurgical applicants that warrant further study. Student preferences were shaped by the perceived reliability, level of detail, and candor of each resource, as well as by the format in which information is presented (ie, ratings vs anonymous commentary). There is a desire for a single, centralized platform integrating standardized information while accommodating specialty-specific needs. Optimizing existing resources and developing such a platform could streamline the application process, reduce costs, and improve the Match experience for both applicants and residency programs.

Footnotes

Acknowledgments

There were no contributors or collaborators who did not qualify for authorship.

Ethical Considerations

The study institution's Committee on Human Research Institutional Review Board (IRB, FWA00005945) exemption was obtained for this cross-sectional survey study as participation in the study was determined to have minimal risk to participants (IRB# NCR224203).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability

Data, code, and a comprehensive list of software versions, package versions, and dependency versions for this analysis can be found in the code repository. Users may automatically install all versions used in this study and reproduce the analyses described here by cloning the repository and opening the project in RStudio.

Appendix 1. Online Survey Created and Distributed Through REDCap. CAP is the Clinical Apprenticeship Program for First-Year Medical Students. POM is the Practice of Medicine Course for First- and Second-Year Medical Students Taught in Small Groups by the Same Clinical Faculty Member Throughout.

Appendix 2. The CROSS (Consensus-Based Checklist for Reporting of Survey Studies) Checklist, the EQUATOR (Enhancing the Quality and Transparency Of Health Research) Guideline for This Study. 11

| Section/Topic | Item | Item Description | Reported on Page # | |

|---|---|---|---|---|

| Title and abstract | ||||

| Title and abstract | 1a | State the word “survey” along with a commonly used term in title or abstract to introduce the study's design. | 1 | |

| 1b | Provide an informative summary in the abstract, covering background, objectives, methods, findings/results, interpretation/discussion, and conclusions. | 2-3 | ||

| Introduction | ||||

| Background | 2 | Provide a background about the rationale of study, what has been previously done, and why this survey is needed. | 4-5 | |

| Purpose/aim | 3 | Identify specific purposes, aims, goals, or objectives of the study. | 5-6 | |

| Methods | ||||

| Study design | 4 | Specify the study design in the methods section with a commonly used term (eg, cross-sectional or longitudinal). | 6 | |

| 5a | Describe the questionnaire (eg, number of sections, number of questions, number and names of instruments used). | 6 | ||

| Data collection methods | 5b | Describe all questionnaire instruments that were used in the survey to measure particular concepts. Report target population, reported validity and reliability information, scoring/classification procedure, and reference links (if any). | 6 | |

| 5c | Provide information on pretesting of the questionnaire, if performed (in the article or in an online supplement). Report the method of pretesting, number of times questionnaire was pretested, number and demographics of participants used for pretesting, and the level of similarity of demographics between pretesting participants and sample population. | NA | ||

| 5d | Questionnaire if possible, should be fully provided (in the article, or as appendices or as an online supplement). | 23-26 | ||

| Sample characteristics | 6a | Describe the study population (ie, background, locations, eligibility criteria for participant inclusion in survey, exclusion criteria). | 6 | |

| 6b | Describe the sampling techniques used (eg, single stage or multistage sampling, simple random sampling, stratified sampling, cluster sampling, convenience sampling). Specify the locations of sample participants whenever clustered sampling was applied. | NA | ||

| 6c | Provide information on sample size, along with details of sample size calculation. | NA | ||

| 6d | Describe how representative the sample is of the study population (or target population if possible), particularly for population-based surveys. | NA | ||

| Survey administration | 7a | Provide information on modes of questionnaire administration, including the type and number of contacts, the location where the survey was conducted (eg, outpatient room or by use of online tools, such as SurveyMonkey). | 6 | |

| 7b | Provide information on the survey's time frame, such as periods of recruitment, exposure, and follow-up days. | 6 | ||

| 7c | Provide information on the entry process: for nonweb-based surveys, provide approaches to minimize human error in data entry. | NA | ||

| Study preparation | 8 | Describe any preparation process before conducting the survey (eg, interviewers’ training process, advertising the survey). | NA | |

| Ethical considerations | 9a | Provide information on ethical approval for the survey if obtained, including informed consent, institutional review board [IRB] approval, Helsinki declaration, and good clinical practice [GCP] declaration (as appropriate). | 6 | |

| 9b | Provide information about survey anonymity and confidentiality and describe what mechanisms were used to protect against unauthorized access. | 6 | ||

| Statistical analysis | 10a | Describe statistical methods and analytical approach. Report the statistical software that was used for data analysis. | 7 | |

| 10b | Report any modification of variables used in the analysis, along with reference (if available). | NA | ||

| 10c | Report details about how missing data was handled. Include rate of missing items, missing data mechanism (ie, missing completely at random [MCAR], missing at random [MAR] or missing not at random [MNAR]) and methods used to deal with missing data (eg, multiple imputation). | NA | ||

| 10d | State how nonresponse error was addressed. | 16 | ||

| 10e | For longitudinal surveys, state how loss to follow-up was addressed. | NA | ||

| 10f | Indicate whether any methods such as weighting of items or propensity scores have been used to adjust for nonrepresentativeness of the sample. | NA | ||

| 10g | Describe any sensitivity analysis conducted. | NA | ||

| Results | ||||

| Respondent characteristics | 11a | Report numbers of individuals at each stage of the study. Consider using a flow diagram, if possible. | 8 | |

| 11b | Provide reasons for nonparticipation at each stage, if possible. | NA | ||

| 11c | Report response rate, present the definition of response rate or the formula used to calculate response rate. | 8 | ||

| 11d | Provide information to define how unique visitors are determined. Report number of unique visitors along with relevant proportions (eg, view proportion, participation proportion, completion proportion). | NA | ||

| Descriptive results | 12 | Provide characteristics of study participants, as well as information on potential confounders and assessed outcomes. | 8 | |

| Main findings | 13a | Give unadjusted estimates and, if applicable, confounder-adjusted estimates along with 95% confidence intervals and P-values. | 8-11 | |

| 13b | For multivariable analysis, provide information on the model building process, model fit statistics, and model assumptions (as appropriate). | NA | ||

| 13c | Provide details about any sensitivity analysis performed. If there is a considerable amount of missing data, report sensitivity analyses comparing the results of complete cases with those of the imputed dataset (if possible). | NA | ||

| Discussion | ||||

| Limitations | 14 | Discuss the limitations of the study, considering sources of potential biases and imprecisions, such as nonrepresentativeness of sample, study design, important uncontrolled confounders. | 16-17 | |

| Interpretations | 15 | Give a cautious overall interpretation of results, based on potential biases and imprecisions and suggest areas for future research. | 18 | |

| Generalizability | 16 | Discuss the external validity of the results. | 16 | |

| Other sections | ||||

| Role of funding source | 17 | State whether any funding organization has had any roles in the survey's design, implementation, and analysis. | 19 | |

| Conflict of interest | 18 | Declare any potential conflict of interest. | NA | |

| Acknowledgements | 19 | Provide names of organizations/persons that are acknowledged along with their contribution to the research. | 19 | |