Abstract

From our initial screening of applications, we assess that the 10% to 15% of applicants whom we will interview are all academically qualified to complete our residency training program. This initial screening to select applicants to interview includes a personality assessment provided by the personal statement, Dean’s letter, and letters of recommendation that, taken together, begin our evaluation of the applicant’s cultural fit for our program. While the numerical scoring ranks applicants preinterview, the final ranking into best fit categories is determined solely on the interview day at a consensus conference by faculty and residents. We analyzed data of 819 applicants from 2005 to 2017. Most candidates were US medical graduates (62.5%) with 23.7% international medical graduates, 11.7% Doctors of Osteopathic Medicine (DO), and 2.1% Caribbean medical graduates. Given that personality assessment began with application review, there was excellent correlation between the preinterview composite score and the final categorical ranking in all 4 categories. For most comparisons, higher scores and categorical rankings were associated with applicants subsequently working in academia versus private practice. We found no problem in using our 3-step process employing virtual interviews during the COVID pandemic.

Keywords

Introduction

For decades, residency training programs have relied on numerical rankings to evaluate applicants for academic study and clinical training. 1 -10 Certainly, some aspects of emotional intelligence are considered in examining personal statements, letters of recommendation ([LoR] including the recommender), and Dean’s letters; usually, these considerations result in a tempering of a numerical ranking. During the past 2 to 3 decades, our pathology residency training program has utilized a process to override numerical ranking. This was likely driven by experience; that is, selecting a high scoring disruptive applicant and, alternatively, working with a lower scoring empathic applicant who became a top of class performer. We needed to define ourselves and our training program culture, as each program needs to do. By knowing ourselves, our faculty and resident trainees could put applicants into categories based on goodness of fit for our program. Thus, the final ranking would be a categorical ranking, and the numerical ranking would be used within each category.

How did we achieve our goal to identify highly qualified candidates who will fit our culture 11 -15 (eg, geography, ethos, practice norms, social mores…) and benefit most from our unique training program? 11 -16 We did not know if a particular metric or characteristic would identify candidates who would perform optimally in our program. 16 Thus, we undertook a retrospective review of 5 years (2005-2009) to tabulate personality assessments and metrics (metadata) of those selected for an interview. We then reflected upon our process for residency candidate selection as generated by our algorithms and metrics that included academic records, LoRs, the applicant’s narrative, interests, hobbies, publications, volunteer and research experiences. Ultimately the candidate’s multifocal, multidimensional interviews with us, with the subsequent collaborative discussion, yielded our final impression and ranking. The composite features of our optimal applicant included sufficient intelligence, pertinent performance, optimal emotional intelligence, adequate pathology experience with a strong commitment to pathology, yielding the best-fit candidate for our culture and training program. This evaluation was then used prospectively. We now report on the outcomes of this recruitment process, up through the applicants evaluated in 2017 who have now completed their categorical training.

We used established evaluation methods for constructing our algorithms and metrics. We used personality assessment inventories (PAI, with the

Worrisome indicators of potential future suboptimal performance included evidence of deficient communication skills. Some doctors have suboptimal communication skills and fail to communicate adequately or appropriately with peers, mentors, patients, and families. The Australian New South Wales Health Care Complaints Commission (which follows these matters closely) noted that the number of complaints against doctors has been increasing annually, with medical practitioners attracting more complaints than dentists, nurses, midwives, pharmacists, psychologists, and other health practitioners. 14,17 A significant percentage of doctors attract complaints, and the UK General Medical Council (GMC) has reported its highest ever number of complaints against doctors. This is a significant area of concern, and the ability to communicate with patients and peers is critical for success, under the rubric of “Can you talk to me?” 26,27

Medical training can give a doctor the basic knowledge required and foster their skills, including updating that knowledge to ensure continued academic competence. It can also teach or nurture some of the other skills and attitudes in the competency list. However, it is unrealistic to expect that medical education can do it all, particularly if the student is attitudinally unsuited or otherwise ill-equipped in their psychological makeup to meet the expectations of the profession and the community outlined above. Acceptance of this line of thought must lead us to acknowledge that we should take particular care in selecting medical practitioners, basing our choice on a range of criteria that reflect the picture of the excellent generic doctor. Dr Powis describes techniques and methods used to measure some nonacademic and noncognitive qualities and provides empirical data on their reliability, construct validity, and, most importantly, their predictive validity that support their adoption for selecting suitable health professionals. 16

Ultimately, the PAI advocates for a checklist 28 and dashboard of the character traits that one would like to see in a training program candidate, along with those that may be disruptive to the individual and, likely, to the group at large.

The attributes of the “better doctor” professional that can work within the present socially complex health care system include 29 : academic ability and cognitive skills; ability to communicate appropriately; good interpersonal skills; and demonstrated teamwork and empathy. Attributes that could detract from more optimal performance include: psychological vulnerability (inability to handle stress appropriately, low resilience); high levels of neuroticism; low levels of conscientiousness; extreme detachment or extreme emotional involvement; and high levels of impulsiveness and permissiveness. The PAI seeks to concretize and communalize these concepts by creating a common touchstone that evaluators could visibly see and discuss, to yield our final categorical ranking of the applicants who are likely to do best and gain most from our training program.

Materials and Methods

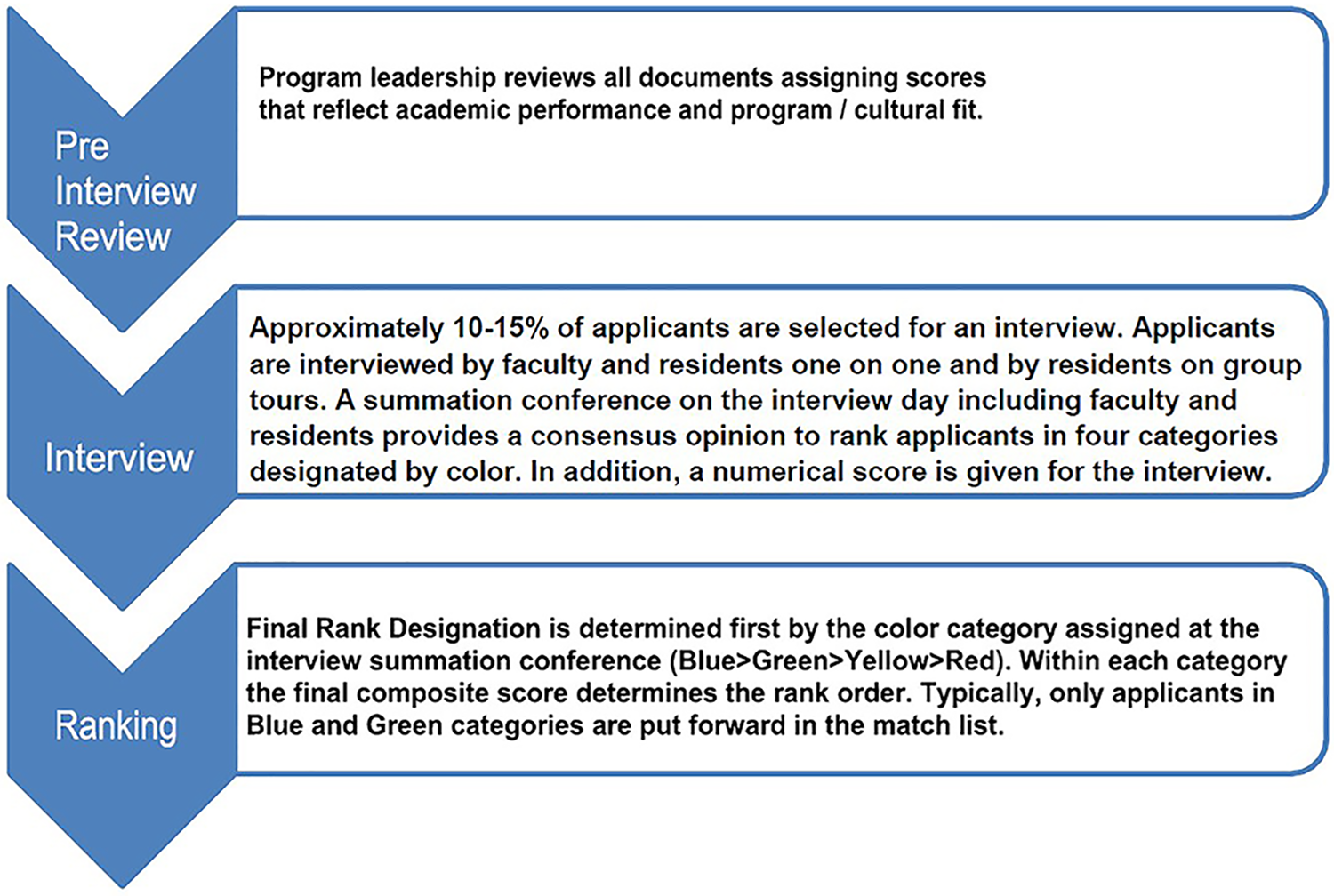

We used the following 3 types of metrics in the review process: numerical scores, a semi-quantitative score assigned to text documents (eg, LoR), and a rank priority rating based on interactions during the interview day (Figure 1). Our process has evolved during the past 2 decades, primarily in how we weight nonnumerical data; the specific measures used today are presented below.

Three-step application review. Applications are screened by the program director and associate directors. Approximately 10% to 15% of applicants are invited for interviews based on academic performance and potential programmatic fit. Programmatic fit is assessed during the interview. A consensus conference is held immediately after interviews to rank order applicants.

Initial Screen by Program Director

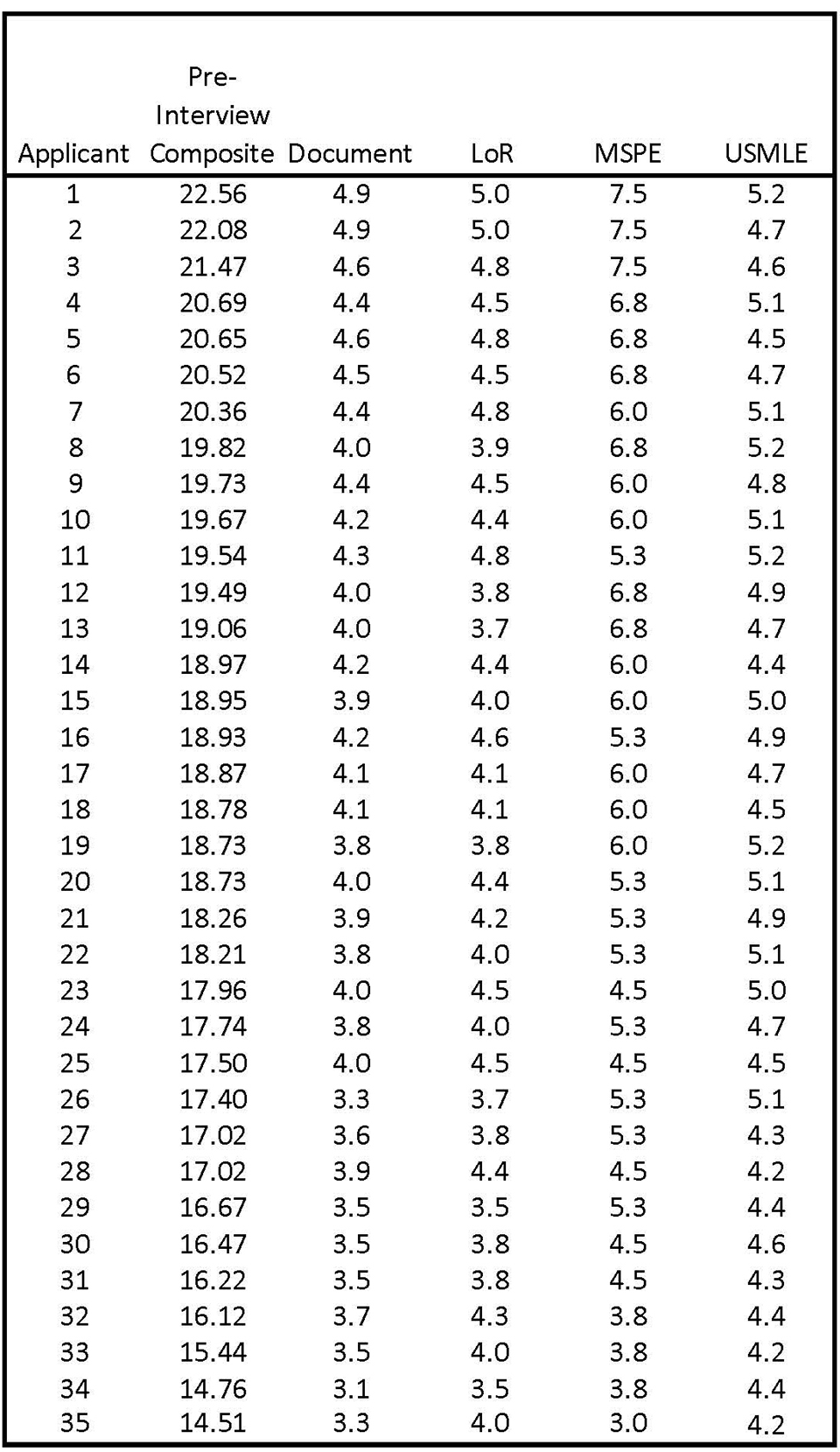

We have generally received 400 to 600 applications per year over the past 3 decades. The program director screens these applications with input from faculty and selects 60 to 70 candidates to interview for 5 positions. Elements in the ERAS application including Medical Student Performance Evaluation (MSPE), 30 LoR, Personal Statement, and Medical School (MS) transcript are given 1 to 5 points and multiplied by a weighting factor provided by ERAS. USMLE 31 -37 scores are divided by 100. A Preliminary Composite Score is derived from the sum of the 5 previous elements; a hypothetical preinterview ranking sheet is shown in Figure 2.
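The arithmetic of the Preliminary Composite Score can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the weighting factors below are placeholders (the actual values are not listed in the text), and the function and field names are hypothetical.

```python
# Placeholder weights: the actual weighting factors are not given in the text.
ASSUMED_WEIGHTS = {
    "mspe": 1.0,
    "lor": 1.0,
    "personal_statement": 1.0,
    "transcript": 1.0,
}


def preliminary_composite(ratings, usmle_score, weights=ASSUMED_WEIGHTS):
    """Sum the weighted 1-to-5 ratings and the USMLE score divided by 100."""
    for name, points in ratings.items():
        if not 1 <= points <= 5:
            raise ValueError(f"{name} rating must be between 1 and 5")
    weighted = sum(weights[name] * points for name, points in ratings.items())
    return weighted + usmle_score / 100


# Invented applicant, for illustration only.
example = preliminary_composite(
    {"mspe": 4, "lor": 5, "personal_statement": 3, "transcript": 4},
    usmle_score=236,
)
```

With the placeholder weights all set to 1.0, the four ratings contribute 16 points and the USMLE score contributes 2.36, so the example applicant's score is about 18.36. Dividing the USMLE score by 100 keeps it on roughly the same scale as a single 1-to-5 element.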

Representative preinterview rank order of applicants. Academic performance and academic/life experience as determined by information in the ERAS application yield a preinterview composite score that determines which applicants will be invited for interviews.

Criteria used for exclusion from consideration include the following: partial completion of residency training in another specialty, looking to transfer into pathology; dismissed from pathology residency training program elsewhere; >10 years from clinical exposure; MSPE or LoR with flags for professionalism; no pathology LoRs; no pathology clinical experience or observerships; unexplained leave of absence or gaps in curriculum vitae; and inarticulate writing in the personal statement.

Additional points for outstanding performance

An additional score of up to 1 point could be added for exceptional achievements in the early days of developing our ranking algorithm, for example,

Social media profile 41 -45

A review of social media content for propriety is now unavoidable. We begin with a general Google search, an examination of images from the Google search, a Twitter review, and a Facebook review, if applicable.

Interview preparation 46,47

Because we rank order the candidates into discrete categories, we in-service the Faculty, House staff, and Associates for interviewing goals, methods, and management to obtain consistency and optimal results. We meet to review the interview process enabling us to discover more about each candidate, to assess the candidate’s ability to communicate interests, and to learn and deliver the following: the candidate’s passion for pathology and intellectual and social qualities; a positive impression of us and the ethos of Montefiore and Einstein; a combination of self-motivation and successful teamwork; our training culture: a kind (empathy 19,48 -54 ), nurturing, visionary residency; our goal to grow and develop the pathologist for the 21st century; and a forward-looking view for defining the competencies/milestones/outcomes.

We outline and underscore our guidelines 55 -57 for significant interview events. These guidelines (barring the handshakes and refreshments) are applicable in the virtual interview environment. We utilized a guideline and checklist of what to do and what not to do. Ultimately, we would underscore the program’s and hospital’s collegiality, residency quality-of-life, teaching, research, national organizational membership, citizenship and leadership, teamwork 58 -60 and communal involvement and activities, and success in fellowships and beyond.

Interview process

The interview process includes face-to-face interactions in multiple settings, including individual interviews (Dyadic Mini Interviews; 3 per applicant), panels, and groups in multiple social settings. Each of the 3 face-to-face interviews is scored 0 to 3; the average is multiplied by 2 and added to the preinterview composite score to yield a final composite score. The personal interactions of the formal interviews and informal interactions with current residents (on tours, at lunch) enable us to identify a candidate’s weaknesses and to address concerns in the factual record, for example, great interviews versus poor USMLE scores. At the end of each interview day, all faculty and residents who participated in the interview process confer and assess each applicant with respect to their ability to adapt to our culture and take advantage of our program. Ultimately, this results in a categorical rank of applicants, usually focused primarily on emotional interactions and subjective assessment of how the candidate will fit in our program. Color coding includes 4 categories as follows from best to worst: Blue (4) > Green (3) > Yellow (2) > Red (1). Each candidate is presented by the 3 interviewers, and the group (approximately 20 reviewers) assigns a color rank to the candidate. The most crucial decision at the summation conference is to delete candidates who do not fit in our program (Red).
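The interview arithmetic described above can be expressed as a short Python sketch. The function names are hypothetical and the input checks are an assumption added for illustration; only the formula itself (average of 3 scores, multiplied by 2, added to the preinterview composite score) comes from the text.

```python
from statistics import mean


def interview_component(interview_scores):
    """Average the three 0-to-3 interview scores and multiply by 2."""
    if len(interview_scores) != 3:
        raise ValueError("expected exactly 3 interview scores")
    if any(not 0 <= score <= 3 for score in interview_scores):
        raise ValueError("each interview score must be between 0 and 3")
    return 2 * mean(interview_scores)


def final_composite(preinterview_composite, interview_scores):
    """Add the weighted interview component to the preinterview score."""
    return preinterview_composite + interview_component(interview_scores)
```

Under this scheme the interview contributes between 0 and 6 points, so a candidate with a preinterview composite score of 16.5 and three interview scores of 3 would have a final composite score of 22.5.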

In the program, we further understand that we attract and recruit young trainee candidates who will enter a particular culture. We would like to know that they value equality and that they can deal with uncertainty rather than avoid it; when presented with the complexities of the uncertain and unknown, they should not be too overwhelmed to pause, ask for help, reflect, and be comfortable in the inevitable hierarchies of medicine. It is best if the candidate exhibits some sense of agency, executive function, planning, and organization; can demonstrate significant restraint; and is resourceful and reliably resilient. These attributes will give them the best chance of success and survival under the excessive stresses that hammer them during residency training. The interactions that we observe and try to verbalize and semi-quantify can only become more and more complex. Still, to avoid an over-obsessiveness or sophistication that could impair our committee’s ability to decide reproducibly, to the point of nonutility, we try to adhere to our basics and algorithms. This is nicely presented in the “PPIK” theory of process, personality, interests, and knowledge, which informs our selection process and hones our skills in finding common ground to choose the better candidate (hopefully!).

Some Questions that help us unravel a working-PAI: Any arts you enjoy? Examples? Memorable volunteer experience? Example? Extracurricular activity? Example? Memorable medical case example? Memorable pathology (clinical) case example? Group/team activity example? Why Montefiore? How do you unwind? Any measures of stress?

Telemedicine, telehealth, telepathology—interviews from afar 61 -63

The present COVID-19 pandemic has brought a sea-change in how we practice medicine. Technology is ubiquitous in our candidates’ lives and our own, as well as in our clinical work, through the increasing use of technologically mediated, psychologically and medically informed health care and screening interviews. We therefore needed to develop a heightened awareness of both the gains and the losses that come with using available technologies. We in-serviced both our faculty and residents to optimize their Telemedicine ability for both patient care and the interview process as a human resources strategy. We are also attentive to how heavy use of the internet, social media, texting, and so on may be affecting our candidates with respect to cognition, development, memory, attention span, future vulnerability, character tendencies, reality-testing, capacity for identity formation, and other consequences. We also reviewed the ethical and legal aspects of providing technologically mediated screening, interviews, and evaluations.

Final rank order

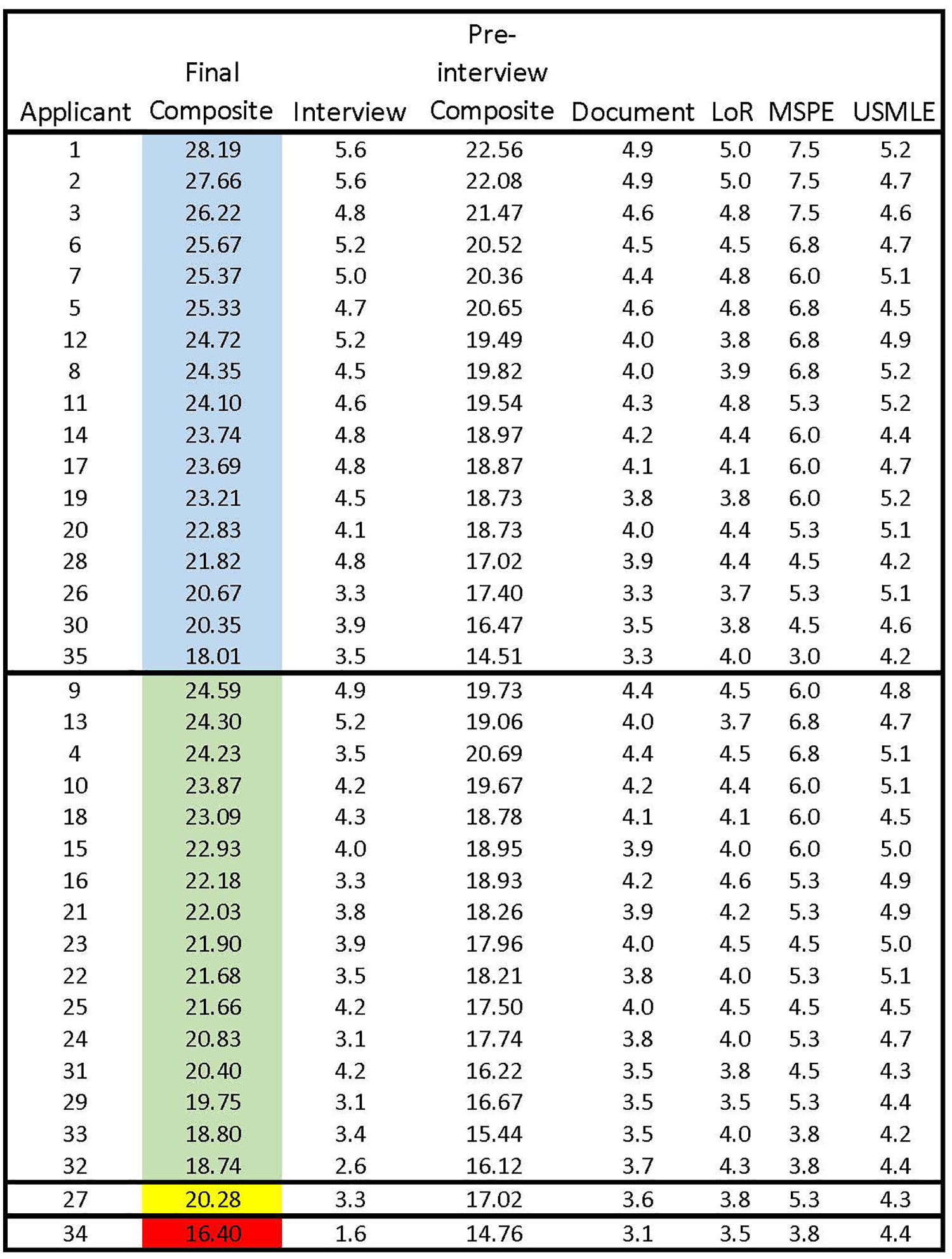

The final rank order is set initially by the color category. All candidates in the blue category are ranked higher than candidates in the green category regardless of the composite score, as shown in a representative final ranking sheet (Figure 3). Candidates within a color category are ranked by the final combined score, which includes the PAI from interview day. Finally, the bottom of the rank list is trimmed by answering the following question: would we want this candidate rather than selecting 64 a candidate from the Supplemental Offer and Acceptance Program (SOAP) lists? All candidates in the red category are deleted, and most in the yellow category are deleted. Because the initial screening successfully selects candidates with acceptable academic achievement who are likely to fit in our program, we always have sufficient candidates in the blue and green categories to produce a match list with about 10 times the applicants required to fill our 5 slots.
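The ordering rule described above (color category first, composite score second, Red always removed) can be sketched in Python as follows. The class, function, and parameter names are hypothetical, the applicants are invented, and the `trimmed` set simply stands in for the committee's judgment about which remaining candidates to drop; it is not a rule taken from the text.

```python
from dataclasses import dataclass

# Category values as given in the text: Blue (4) > Green (3) > Yellow (2) > Red (1).
COLOR_RANK = {"Blue": 4, "Green": 3, "Yellow": 2, "Red": 1}


@dataclass
class Candidate:
    name: str
    color: str
    final_composite: float


def final_rank_order(candidates, trimmed=frozenset()):
    """Order by color category first, then by final composite score.

    Red candidates are always removed; `trimmed` holds the names of any
    other candidates the committee chose to drop (a judgment call).
    """
    kept = [c for c in candidates if c.color != "Red" and c.name not in trimmed]
    return sorted(kept, key=lambda c: (-COLOR_RANK[c.color], -c.final_composite))


# Invented applicants, for illustration only.
applicants = [
    Candidate("A", "Green", 24.0),
    Candidate("B", "Blue", 18.5),
    Candidate("C", "Red", 25.0),
    Candidate("D", "Blue", 21.0),
    Candidate("E", "Yellow", 19.0),
]
ranked = [c.name for c in final_rank_order(applicants, trimmed={"E"})]
```

In this example the list comes out as D, B, A: both Blue candidates precede the Green candidate even though the Green candidate has the highest composite score of the three, and the Red candidate is removed despite having the highest score overall.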

Representative final rank order of applicants. Goodness of fit defines the color category of each applicant, and academic scoring defines the rank order within each color category. The final rank order, which usually contains only the blue and green categories, becomes the match list, yielding approximately a 10:1 ratio of applicants to positions available. To demonstrate the impact of the interview within each color category, the preinterview ranking is given in the applicant column. Blue represents our highest priority applicants, with Green as the next priority level. Yellow applicants are seldom included in the match, and Red applicants are excluded from the match list.

Statistics

Differences in gender and medical school training among 4 color groups and between faculty position and private practice were compared using χ2 tests or Fisher exact tests. Differences in composite scores, interview scores, recommendation letter scores, Dean’s letter scores, and USMLE#1 scores among 4 color groups were compared using Kruskal-Wallis tests, and between faculty position and private practice were compared using Wilcoxon rank-sum tests. The Bonferroni correction was used to adjust

Results

The outcome data presented below show that essentially all candidates interviewed and accepted into our program have had successful outcomes in both their residency training and their ensuing careers.

Screening and Ranking

Our preinterview screen selected for academic performance and cultural fit for our program; thus, there was an intentional screening bias up front. The interview process enabled us either to confirm our initial bias or adjust our ranking based on personal interactions that probed for programmatic fit. In reviewing the hypothetical data in Figure 3, we present 2 possible scenarios for applicant 35 and applicant 4.

We present these 2 examples to demonstrate how dependent our final selection is on the values that we consider highly and the personal interactions during the interview process. We believe that all of the candidates whom we interview are academically acceptable and will be successful in their careers. We focus our selection on the candidates who will fit our culture and training program.

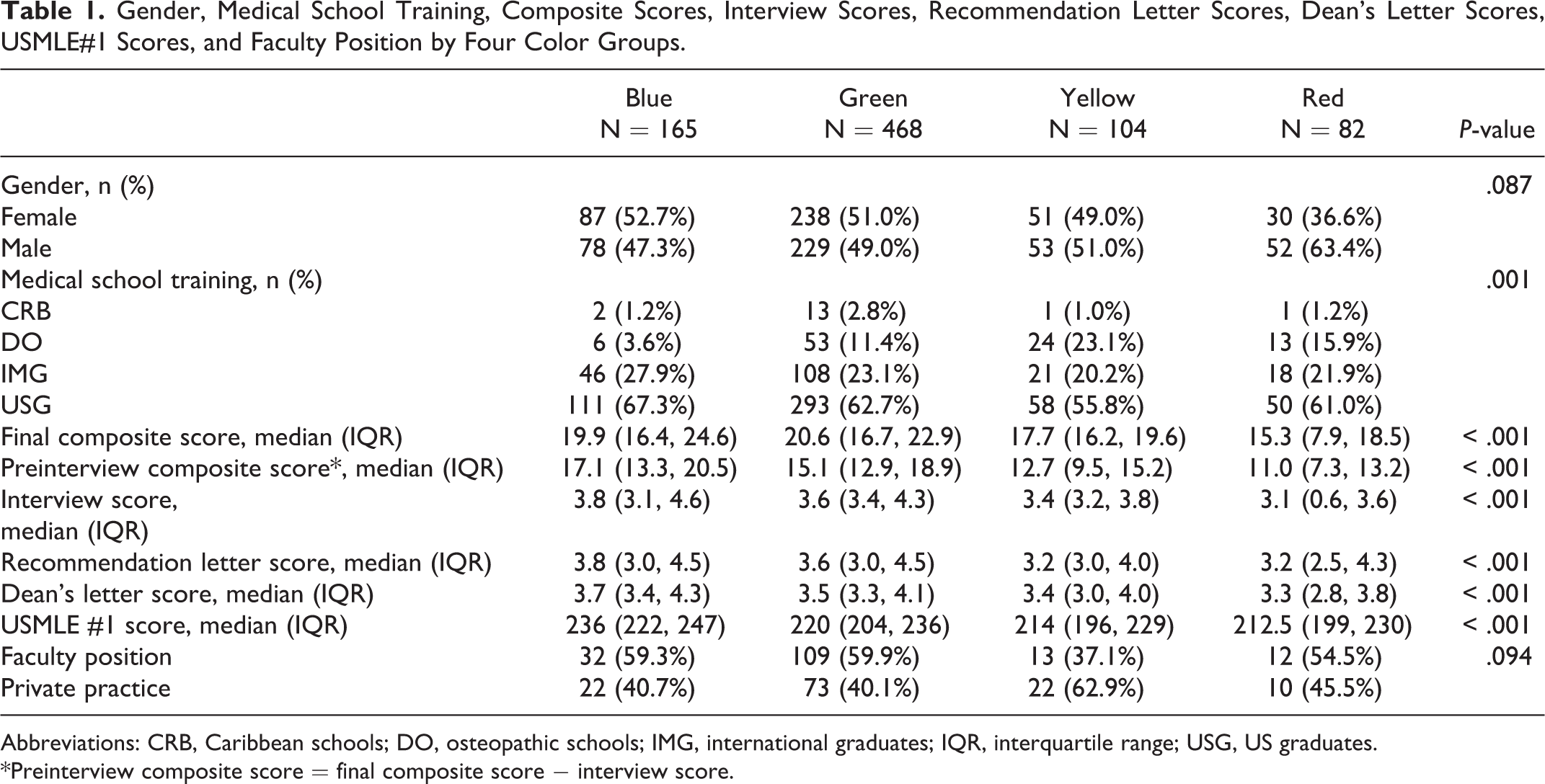

Applicant Data

We analyzed data from 819 applicants from 2005 to 2017. Most candidates were US medical graduates (USG, 62.5%) with 23.7% international medical graduates (IMG), 11.7% DO, and 2.1% Caribbean medical graduates (CRB). When we compared our final ranking using the color categories (Table 1), there was predominantly gender equality in all color categories except for Red, where there were nearly twice as many males; the red category accounted for only 10% of the total candidate pool (

Gender, Medical School Training, Composite Scores, Interview Scores, Recommendation Letter Scores, Dean’s Letter Scores, USMLE#1 Scores, and Faculty Position by Four Color Groups.

Abbreviations: CRB, Caribbean schools; DO, osteopathic schools; IMG, international graduates; IQR, interquartile range; USG, US graduates.

*Preinterview composite score = final composite score − interview score.

It is important to note that the color category is determined solely by the group discussion after the interviews. All parameters are considered, but the interview goodness of fit in our program determines the color. The composite (numerical) score determines the rank within a color category (Figure 3).

Candidate gender—males and females

There was essentially parity between the males and females chosen, over a historical era that ranged from more male candidates in the past to the present decade, in which female candidates predominate (Table 1).

Composites (metric algorithm)

The final composite score includes numerical ratings for academic performance, step exams, Dean’s letter, LoR, and a weighted score for on-site interviews (Figure 3, Table 1). The median (interquartile range [IQR]) final composite scores were 19.9 (16.4, 24.6) in Blue, 20.6 (16.7, 22.9) in Green, 17.7 (16.2, 19.6) in Yellow, and 15.3 (7.9, 18.5) in Red (

Interview score, including the numerous face-to-face encounters

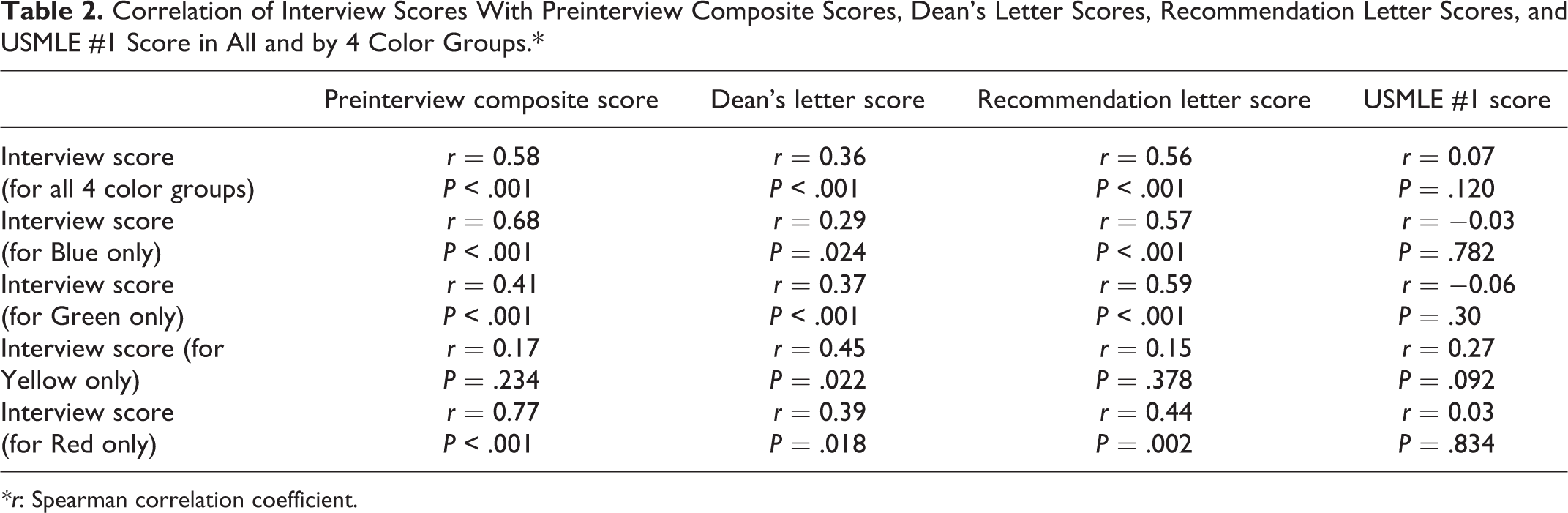

Median quantitative interview score again mirrored the color category with ample separation between Blue (3.8 [3.1, 4.6]) and Red (3.1 [0.6, 3.6]), but much closer assessments in Green and Yellow of 3.6 (3.4, 4.3) versus 3.4 (3.2, 3.8)—indicating a more difficult decision to make (Table 2).

Correlation of Interview Scores With Preinterview Composite Scores, Dean’s Letter Scores, Recommendation Letter Scores, and USMLE #1 Score in All and by 4 Color Groups.*

Recommendation letters

Scores were significantly different among 4 color groups (

USMLE#1

Blue had the highest median score of 236 (222, 247), followed by Green 220 (204, 236), Yellow 214 (196, 229), and Red 212.5 (199, 230;

Correlation of interview scores with preinterview composite scores (including deans’ scores, recommendation scores, and USMLE#1)

For all 4 groups, the interview score was moderately to strongly correlated with the preinterview composite score (

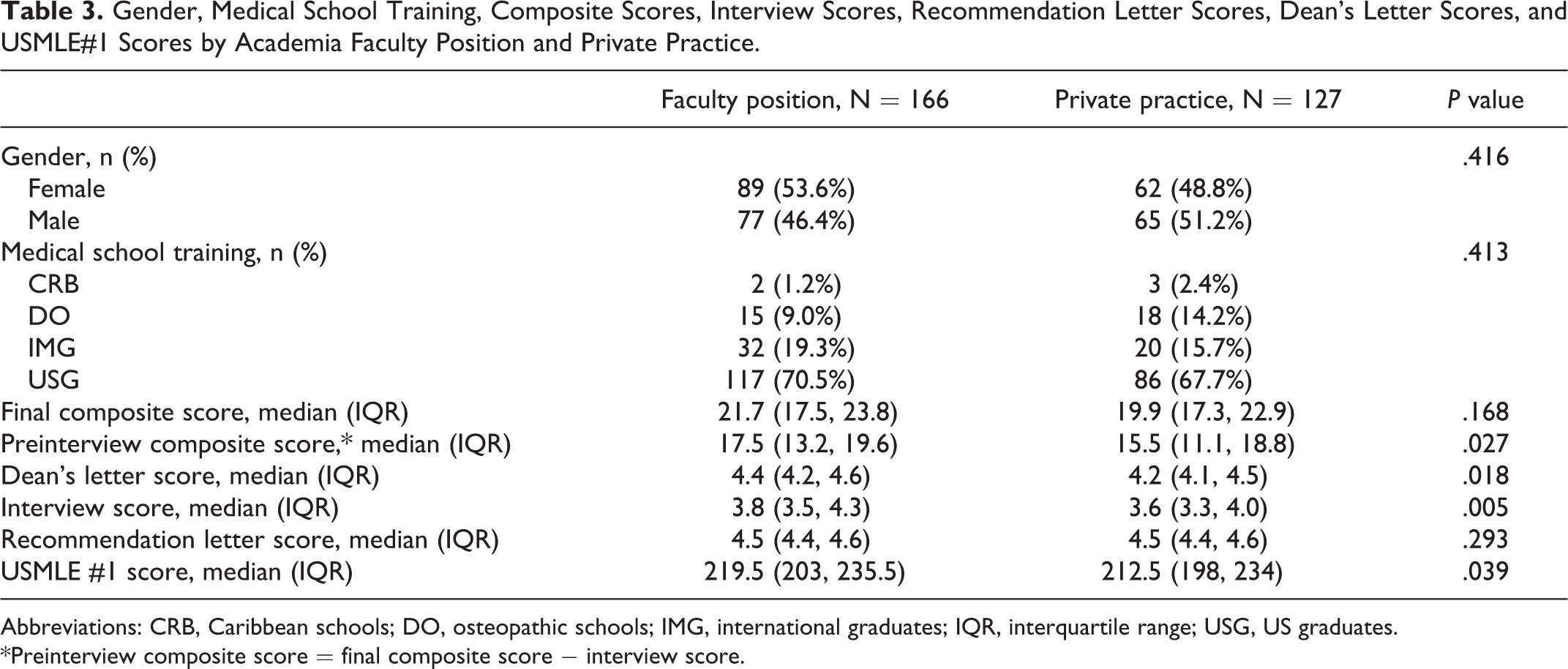

Careers in academia faculty positions versus private practice

At the time of this report, for those graduates who had completed their fellowship training and were in practice, almost 60% of Blue and Green candidates were discovered in academic faculty practices (Table 1). This was only 5% greater than the Red category. The highest percentage of private practitioners occurred in the Yellow category. Higher preinterview composite scores (

Gender, Medical School Training, Composite Scores, Interview Scores, Recommendation Letter Scores, Dean’s Letter Scores, and USMLE#1 Scores by Academia Faculty Position and Private Practice.

Abbreviations: CRB, Caribbean schools; DO, osteopathic schools; IMG, international graduates; IQR, interquartile range; USG, US graduates.

*Preinterview composite score = final composite score − interview score.

Candidate questionnaires’ responses of interview day experience

Though not quantified, our brief, anonymous questionnaire has generated very positive responses (∼4.5 positive on a scale of 5.0), with few comments about not being able to engage long enough, or at all, with select faculty (data not shown). For those applicants seeking more information, we would attempt to arrange a call or, rarely, a second visit (usually for an applicant interested in working in a particular research laboratory).

Overview of our ranked candidates’ outcome

The top of our lists consisted predominantly of outstanding candidates who achieved impressive residencies and are now in positions, typically as faculty, at leading academic and medical research centers. A smaller group entered private practice in large multistate practice corporations, for example, dermatopathology. Surprisingly, the candidates who were less of a “right fit” for us, at the bottom of our lists, also did reasonably well and better than we would have expected. A higher percentage of candidates in the Yellow and Red groups went into smaller community private practice, and surprisingly some of them are harder to find on the internet. To seem to disappear is most unusual, but this seems to be occurring with a small cohort, particularly from the bottom of the list.

Adapting to COVID-19: Virtual Interviews

As a result of restrictions on travel and physical distancing, we needed to implement virtual tours and interviews for resident candidates. 65 Our process shown in Figure 1 remained unchanged. We screened 711 applications and invited 60 candidates to interview, splitting them among 4 half-day interview sessions. In preparing for the virtual environment, we reviewed materials from the AAMC, ACGME, and various specialties (many surgical specialties had already been using this technology to some degree in their recruitment strategies) to determine best practices. Faculty, residents, and coordinators all underwent a thorough in-service on these best practices and potential pitfalls in the virtual environment. We also included practice sessions to facilitate comfort with the technology. The schedules were meticulously planned and practiced, minimizing the stress on both the interviewers and the candidates. Interviewing with virtual technology introduces additional opportunities for potential influence by unconscious bias. To our typical training on unconscious bias, we added emphasis on the need to recognize that candidates may have variable access to technology, variable access to a pleasant interview environment, and even variable comfort with the virtual environment. 65 Interviewers were explicitly instructed not to allow these factors, largely out of the control of the interviewee, to negatively impact their evaluation of the candidate. 65 On the interview day, each candidate was interviewed by 2 faculty members and 1 resident. Candidates also had small breakout groups with only residents present, where they could have questions answered without any faculty present. The interviews took place in the morning of the interview day. We followed the same protocol for same-day preliminary discussion and ranking of candidates, albeit now in a virtual Zoom room.

A key component of attracting candidates of “good fit” 22,65 was our ability to give the candidates a sense of what it would be like to train as a resident in the Bronx as part of our Montefiore team. Within the virtual setting, providing a virtual tour was pivotal. We worked closely with the hospital’s public relations department, developing a Montefiore general and Pathology-specific tour and overview of our program. The public relations team photographed all our laboratories and key educational staff. Our residents provided quotes and vignettes and recorded videos expressing what they felt makes Montefiore a special place to train. We invited all candidates, regardless of expressed affinity group, to attend the institution-wide House Staff Diversity Event. Over one-third of our interviewed candidates attended this event. Finally, our chief residents organized an informal, optional Zoom Q&A chat with only the residents and candidates. We do not typically offer a “second look” visit, but this year it felt necessary to offer candidates more opportunities to engage with our residents given the more impersonal, virtual setting that was required due to the pandemic.

In the end, we did not substantially change the number of ranked candidates over previous years. This year’s outcome resembled previous years, with 5 slots comfortably filled.

Discussion

How do we find the best method to determine future performance of an applicant in an individual program? It is common to rely on numerical scores including test scores, grades, and the soft scores that we assign to letters of evaluation and dean’s letters. If we allow these numbers to be our final ranking even with weighting, have we really evaluated applicants on the characteristics that we value most? For Montefiore/Einstein, our program focuses on training individuals to practice pathology as an integral physician member of a health care team. This requires empathy, teamwork, and communication skills. Our struggle over the past few decades was to find a way to use both the numerical score and a personality score to rank academically qualified applicants with the best personality fit for our residency training.

Our initial screening process yielded a small fraction of the academically qualified candidates whom we considered had the potential for a good cultural fit. The interpersonal interactions observed during the interview day constituted an essential factor for determining ranking on our match list (color category) and, of most importance, if the candidate was to be eliminated from the match list.

With this level of importance placed on the interview, we prioritize “the culture” at Montefiore/Einstein, in support of the institutional mission to

More detailed comment is in order regarding the dean’s letter. There has long been difficulty and confusion over the various formats of the MSPE as it evolved over the last 2 decades. 30,87 -92 The MSPE was originally an evaluative assessment that highlighted the young doctor’s strengths and weaknesses in training and allowed programs to visualize how that young trainee would fit into their programs’ culture and context. The literature strongly supports a realistic view of what the Dean’s letter has become. In 2010, the Journal of

In 2017, a group of surgeons in Philadelphia 30,35 also looked at the revised MSPE at over 100 institutions; they noted that some 30+ percent of schools do not report summative comparative performance data. This lack of standardization among the institutions remains an ongoing issue. Indeed, even the highest assessment, honors, appears worrisome as a serious example of grade inflation, with some 40+ percent of medical students receiving honors. The MSPE has evolved with routine, severe omissions by the Deans’ offices in assessing the 6 ACGME competencies.

Some have found 94 difficulty with the MSPE, the Dean’s letter, and the evaluative process; there is a marked difference between the assessments of male and female medical students. Moreover, the assessments differed significantly depending on both the gender of the student and the gender of the author. This insightful analysis was carried out by Dr Carol Isaac 94 and her team at the University of Wisconsin. In addition, there is emerging literature confirming the presence of racial bias in conferring Alpha Omega Alpha Honor Medical Society (AOA) honors, further muddying the waters in the assessment of medical student performance. 95 -101

To improve this process, a group headed by Dean Linda Hedrick 102 demonstrated a continuous process of review and rereview of prior years’ Dean’s letters, carried out jointly by all the Dean’s letter writers. This process, which was structured in line with the Institute for Healthcare Improvement (IHI) requirement for improvement of health care, achieves better success, uniformity, and equivalence in student assessment, and in the repetition of this assessment every year.

We are in an ongoing evolution of the MSPE, the Dean’s letter, and how we describe, assess, evaluate, and recommend our medical students. How this information can be made more uniform and meaningful is a challenge. We are indeed entering a new era of the Flexnerian revolution. We hope that we can achieve a modicum of success for the deans’ committees’ evaluations but, more importantly, improve our evaluation process with professionals in psychology and education for our graduating and trainee physicians. 30,73,87,90 -92,94,95,102 -106

Conclusion

We came to the present interview format based on the desire of faculty and associates to concentrate the more usual, long-drawn-out interview season into an efficient, compressed process. We also wanted to maximize involvement of our faculty and residents in the evaluation process, so that the most “eyes” could interact with candidates. 87,107 Our postinterview summation promoted collaborative interactions with broad exchange of opinions, impressions, and ideas. Factual information about a candidate revealed through the interview process could be discussed in the broadest forum possible, with the attending faculty who would ultimately work with the candidate if accepted into the program.

We believe that our evaluation process has worked superbly for our program goals and mission. The strategy is highly rewarding to our faculty and, upon arrival in the program, to our trainees. This evaluation process establishes the foundation of community, promotes the ethos that we all carry together and with each other, and underscores our communal social capital. We also consider that this report constitutes an opportunity to examine the anthropological process of evaluating, training, and graduating individuals who will carry this concept of “Bronx-Care” forward as they proceed in their careers.

Pragmatically, we believe the process, methodically presented in this article, has defined the “best-fit” candidate to work with us and succeed in a bustling medical center with highly challenging health care issues and the extraordinary diversity that comprises the citizens, patients, and neighbors we at Montefiore and Einstein care for, including city schools, social centers, nursing homes, and all aspects of acute and chronic care. The Montefiore enterprise has won the famous Foreman Award for community and population health care, named after a recent CEO and President of Montefiore, Dr. Spencer (“Spike”) Foreman, who outlined his vision of future health care in 1995 in Academic Medicine, that still defines many of the challenges we face a generation later.

Our “PAI” 1,71,108,109 is the core guidance, our pragmatic and aspirational “compass” of how we choose the next generation of medical practitioners. We are all engaged in this better physician adventure. This paper outlines what we are doing, as flawed as it is, to select and create a better practitioner, a better trainee and colleague who will practice competently and with joy, and avoid and diminish burnout 110 -117 and medical error. 58,118 -125 We need ongoing refinement and comments to improve and be better doctors.

Footnotes

Acknowledgments

To the fantastic faculties and house staff at Montefiore and Einstein, who have sustained ever-ongoing change in the pursuit of better patient-centered care and excellence. No better group exemplifies humanism and empathy. And, as always, to our supportive families. Immense gratitude to our administrators and coordinators over many decades: Annie D’Errico, Betty Edwards, Zudith Lopez, Jacqueline Vazquez, Arlene Sepulveda, and Vera Solomon.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest concerning the research, authorship, and publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.