Abstract

ChatGPT is an artificial intelligence (AI) chatbot application. In this study, we explore the creation and use of a customized version of ChatGPT designed specifically for patient education, called “Lab Explainer.” Lab Explainer aims to simplify and clarify the results of complex laboratory tests for patients, using the sophisticated capabilities of AI in natural language processing; it analyses various laboratory test data and provides clear explanations and contextual information. The approach involved adapting OpenAI's ChatGPT model specifically to analyze laboratory test data. The results suggest that Lab Explainer has the potential to improve understanding by providing an interpretation of laboratory tests to the patient. In conclusion, the Lab Explainer can assist patient education by providing intelligible interpretations of laboratory tests.

The use of tools powered by artificial intelligence (AI) in the medical field has increased notably because of the consistent and dependable outcomes they offer. ChatGPT (Chat Generative Pre-Trained Transformer), developed by OpenAI, has demonstrated considerable potential to provide precise responses to medical queries, including questions that resemble those found on the US Medical Licensing Examination.1 ChatGPT achieves high clinical decision-making accuracy, which improves as more clinical information becomes available to it, and it performs better in final diagnosis tasks than in initial diagnosis; furthermore, it has demonstrated its capacity to aid healthcare professionals in medical report writing, allowing them to focus on relevant points of patient care.2,3 These capabilities have notable social consequences, since they have already been used in numerous scientific and medical contexts.4 In the field of medical education, the capacity of ChatGPT as a cutting-edge instrument has been emphasized, with proposals that could substantially accelerate patient care and medical research.5

There are novel possibilities for integrating ChatGPT into routine activities that require the analysis of information by both patients and healthcare professionals. This same potential allows users to customize ChatGPT and generate personalized versions (“Create a GPT”) on any topic. To develop a GPT with OpenAI's GPT Builder, the user explains the desired creation and its intended purpose to the conversational interface (Figure 1).

Figure 1. GPT development using OpenAI's GPT Builder.

The purpose of this study was to use the GPT Builder to create a customized GPT, called “Lab Explainer,” that provides clear and complete explanations of laboratory test results (https://chat.openai.com/g/g-S8Ui6i2iI-lab-explainer).

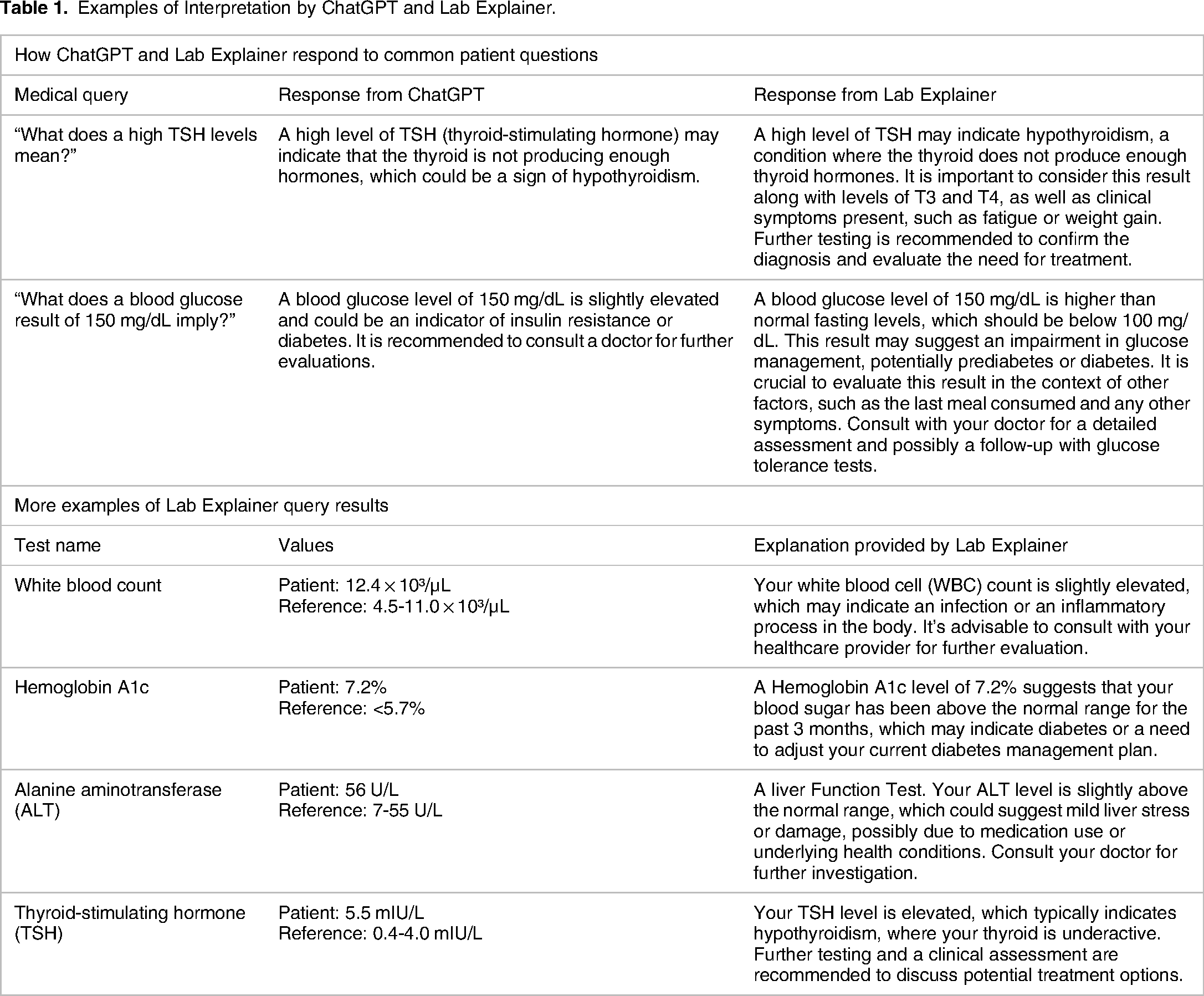

The sources of information included common patient questions and clinical situations that frequently arise in clinical pathology laboratories when laboratory results are interpreted, as well as information from books on clinical pathology and laboratory medicine. The GPT Builder database uses supervised machine learning and transfer learning. Two hundred queries were generated and processed according to the user's needs and the complexity of the information requested. The queries used natural, everyday language; specific medical terminology was used for technical or detailed queries; and images or photographs of laboratory tests were uploaded. The model has been tested since November 2023. It does not learn autonomously or in real time; instead, patterns and types of frequently asked questions are used to refine and improve its answers. Unlike ChatGPT, Lab Explainer is specifically tailored to provide answers relevant to the interpretation of laboratory results. Developing a GPT with OpenAI's GPT Builder goes through the following stages: data collection and analysis, rapid design, model training and tuning, testing and validation, and implementation and continuous feedback. Table 1 shows how ChatGPT and Lab Explainer respond differently.

Table 1. Examples of Interpretation by ChatGPT and Lab Explainer.

As with any advanced AI tool, the accuracy of the results provided by Lab Explainer may vary depending on several factors. Lab Explainer focuses on the interpretation of typical laboratory results, such as blood tests, providing contextual information, normal ranges, and interpretation of clinical results (Table 1). It can be an appropriate source of information for end users on medical issues and laboratory tests. However, Lab Explainer has limitations: it can be inaccurate when multidisciplinary knowledge is required, it does not immediately incorporate new evidence or newer guidelines, it relies on accurate data for learning, and access requires a subscription to https://chat.openai.com.

We present 2 examples of Lab Explainer use, one successful and one unsuccessful:

Case 1: Explaining Hemoglobin A1c Levels

- Scenario: A patient inquires about her hemoglobin A1c level, which is slightly above the normal range.
- Response: The Lab Explainer provided a detailed explanation of what A1c levels indicate about blood glucose control over the past 3 months, possible reasons for an increase, and steps to take for management.
- Outcome: The patient appreciated the context provided and understood the implications for diabetes management and the need for potential adjustments to her treatment plan.

Case 2: Misinterpretation of Kidney Function Tests

- Scenario: A user received an explanation of their renal function tests where Lab Explainer failed to accurately assess severity based on creatinine clearance rate.
- Response: The tool provided general information about kidney function, but did not adequately emphasize the critical nature of the values presented and failed to recommend urgent medical consultation.
- Outcome: This oversight may have delayed necessary medical intervention. The issue was later identified during a routine review of system responses, resulting in an update to the way Lab Explainer handles similar queries.
- Improvement: Enhanced training with more specific examples of renal function abnormalities and inclusion of more explicit warnings for critical values.

Lab Explainer can inform end users about medical issues and laboratory tests, as shown in the case of explaining hemoglobin A1c levels, in which the patient received readable information (Table 1). However, it can be inaccurate when multidisciplinary knowledge is required, it does not immediately incorporate recent evidence or newer guidelines, and it relies on accurate data for learning.

The results of a Lab Explainer query include a table with the name of the test, its intended purpose, the patient's values (with any abnormalities emphasized), reference values, and a simplified explanation of the results. Lab Explainer takes a purely informative position: it strongly recommends that people consult a healthcare professional about their abnormal results, and the GPT platform does not provide direct medical advice.
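The table structure described above can be illustrated with a minimal Python sketch. This is not the implementation behind Lab Explainer, which is configured through the GPT Builder conversational interface; the names `LabResult`, `reference_text`, and `format_row` are hypothetical, and the numeric values in the usage example are placeholders, since reference ranges vary between laboratories.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LabResult:
    """One row of the results table (hypothetical structure)."""
    test_name: str
    purpose: str
    patient_value: float
    unit: str
    ref_low: Optional[float]   # None means no lower reference limit
    ref_high: Optional[float]  # None means no upper reference limit

    def flag(self) -> str:
        """Return 'low', 'high', or 'normal' relative to the reference range."""
        if self.ref_low is not None and self.patient_value < self.ref_low:
            return "low"
        if self.ref_high is not None and self.patient_value > self.ref_high:
            return "high"
        return "normal"


def reference_text(result: LabResult) -> str:
    """Render the reference range as plain text."""
    low, high, unit = result.ref_low, result.ref_high, result.unit
    if low is None and high is None:
        return "not specified"
    if low is None:
        return f"<= {high} {unit}"
    if high is None:
        return f">= {low} {unit}"
    return f"{low}-{high} {unit}"


def format_row(result: LabResult, explanation: str) -> str:
    """Build one table row; abnormal values are emphasized with asterisks."""
    value = f"{result.patient_value} {result.unit}"
    if result.flag() != "normal":
        value = f"**{value}** ({result.flag()})"
    columns = [result.test_name, result.purpose, value,
               reference_text(result), explanation]
    return " | ".join(columns)


if __name__ == "__main__":
    # Placeholder values for illustration only, not clinical guidance.
    a1c = LabResult("Hemoglobin A1c",
                    "Average blood glucose over about 3 months",
                    6.1, "%", None, 5.6)
    print(format_row(a1c, "Slightly above the reference range; "
                          "please discuss with a healthcare professional."))
```

Keeping the out-of-range check separate from the row formatting means that the emphasis rule can be changed without touching the comparison logic, for example to add a stronger warning for critical values.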

Conversation starters in Lab Explainer include topics such as: (1) Interpreting complex data (“Lab Explainer, I have my liver function test results with several values out of range. Can you explain to me what these numbers mean in a general health context?”); (2) Trend query (“I have had blood tests done regularly for the last 5 years. Lab Explainer, explain to me how my cholesterol levels have changed over this time and what implications this could have.”); (3) Comparison to population norms (“Lab Explainer, my latest blood glucose results are slightly different from my previous ones. How do these values compare to normal ranges for my age group and gender?”); (4) Advice on follow-up testing (“I received a high result on my PSA test. Lab Explainer, what other tests or exams should I consider to get a clearer idea of my health?”); and (5) Plain language explanations (“I have a lab report that says that my TSH levels are elevated. Lab Explainer, could you explain to me in simple terms what this means and what I should do about it?”).

Lab Explainer is easy to use and can provide clear and understandable explanations; therefore, it has the potential to help patients understand their current health status. In addition, this tool could be used for medical research purposes to analyze large volumes of laboratory tests and identify possible patterns and trends.

The accuracy of the response is determined as the user interacts with the Lab Explainer.

Although ChatGPT and related models have demonstrated the ability to understand natural language and generate responses, the extremely complex and varied nature of laboratory test results requires a level of precision and medical expertise that AI systems do not currently possess. Therefore, these tools cannot replace the physician, as the work of Stevenson et al demonstrates. However, the incorporation of AI tools represents an advance in knowledge.6

Conclusion

In conclusion, the Lab Explainer program provides accurate and patient-relevant explanations of the interpretation of laboratory tests.

Footnotes

Acknowledgments

The authors would like to thank the National Council of Humanities, Sciences and Technologies (CONAHCyT) for the scholarship No.791084 awarded to V.H.O.M., and the support given by the “Eduardo Perez Ortega” Clinical Pathology Laboratory.

Authors' Contributions

VHOM and EPC conceived and designed the work; LPCM and MTHH drafted the manuscript, with critical comments from CAMC, EPCM, and ECP. All authors contributed to the article and approved the version submitted.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.