Abstract

In today’s workplaces, the promise of AI recommendations must be balanced against possible risks. We conducted an experiment to better understand when and how ethical concerns could arise. In total, 379 managers made either one or multiple organizational decisions with input from a human or AI source. We found that, when making multiple, simultaneous decisions, managers who received AI recommendations were more likely to exhibit lowered moral awareness, meaning reduced recognition of a situation’s moral or ethical implications, compared with those receiving human guidance. This tendency did not occur when making a single decision. In supplemental experiments, we found that receiving AI recommendations on multiple decisions increased the likelihood of making a less ethical choice. These findings highlight the importance of developing organizational policies that mitigate ethical risks posed by using AI in decision-making. Such policies could, for example, nudge employees toward recalling ethical guidelines or reduce the volume of decisions that are made simultaneously.

AI, which is designed to simulate human intelligence and handle tasks traditionally performed by humans, is becoming increasingly integrated into organizational functions. 1 According to a 2023 McKinsey survey, 55% of organizations have adopted AI, including in areas with profound social, legal, and ethical implications such as human resources (HR) management. 2 At the same time, AI’s influence on ethical decision-making has emerged as a critical concern. 3 Although AI can make decision-making more efficient, it may also reduce a user’s moral awareness, or recognition that a situation involves ethical aspects and thus should be assessed from a moral perspective.4,5 Failure to recognize moral concerns at the outset of the decision-making process can hinder employees’ ability to make ethically sound judgments and choices.6,7

Several high-profile scandals illustrate this danger. For instance, between 2013 and 2019, Dutch tax authorities used AI-driven software to identify households likely to commit tax fraud.8,9 At that time, people were not as inclined to question the ethical implications of AI recommendations and perceived the recommendations as free from ethical concerns. However, these recommendations disproportionately targeted disadvantaged socioeconomic groups. As a result, the tax authorities wrongfully accused over 20,000 households of fraud, which pushed many families into poverty and placed thousands of children into foster care.

Despite the significance of such real-world evidence, many questions about AI’s ethical implications remain. For example, in one pioneering study, scholars observed that employees were more likely to comply with unethical instructions from human supervisors than from AI systems. 10 However, that investigation involved making a single decision, whereas in the real world, people often make multiple decisions simultaneously. Therefore, it remains unclear when and how using AI recommendations can increase the risk of people making unethical decisions.

We conducted a study that addressed this important issue in two ways. We compared how decision-makers respond to unethical recommendations issued by AI as opposed to humans, and we examined how making just one or multiple decisions simultaneously moderated this response. We found that, when making multiple decisions, people showed less moral awareness after reviewing advice from AI than they did when reviewing such advice from humans and were thus more likely to accept it. By contrast, when making a single decision, people subjected AI and human recommendations to almost equal ethical scrutiny. Taken together, these insights can help organizational leaders better weigh the ethical risks of AI against this technology’s potential economic benefits.

The Fuller Rationale for This Study

Our experiment built on broader research into decision-making and psychological responses involving AI systems. Human decision-making is often constrained by cognitive biases and reliance on mental shortcuts, which can lead to systematic errors.11,12 In contrast, well-designed AI systems can process vast amounts of data more consistently and efficiently, mitigating these human limitations. 13 The possibility that AI could therefore improve decision quality and reduce errors in human judgment has led to the widespread adoption of AI recommendation systems across sectors such as consulting and health care.14 –17 Consequently, there is a growing need to understand how humans think about and use AI decision-making tools.

Existing studies identify two primary but opposing psychological reactions to AI recommendations: algorithm aversion and algorithm appreciation. Algorithm aversion refers to a reluctance to rely on AI. For instance, some studies have found that employees who view AI as reductionist and unable to fully capture their performance tend to perceive HR decisions driven by AI as unfair. 18 People often hesitate to use algorithms once they see them make mistakes, even if the algorithm is statistically more accurate than human judgment.19,20 On the other hand, algorithm appreciation occurs when individuals recognize AI’s strengths, such as its efficiency and ability to process large data sets quickly and accurately. When AI systems are perceived as outperforming human capabilities in specific tasks, people may develop a strong preference for relying on AI recommendations. 21

Researchers have recently started to extend this line of work into the ethical space. In this context, algorithm appreciation, in particular, raises certain concerns. Management scholars suggest that when people observe AI making consistent, accurate decisions, these observations can reinforce that appreciation, leading to a distorted trust in or overreliance on these systems. 22 Ethical blind spots may then develop, such as a problematic acceptance of bias in AI systems. 23

Despite offering a level of consistency that exceeds human capabilities, AI is not immune to biases, which often originate from the data used to train AI systems; these data can reflect historical inequalities and societal prejudices.24,25 The AI Incident Database tracks “algorithmic harms,” events in which AI had negative consequences for people, property, or the environment. In the case of health care, AI has been accused of wrongfully denying elderly patients extended care. 26 Similarly, AI systems in hiring practices often replicate gender and racial biases from historical data, disadvantaging certain groups. 27

But perhaps unsurprisingly, given the dual responses of algorithm aversion and appreciation, investigations into how AI influences ethical decision-making have yielded contradictory findings. Several studies have found that people view AI systems as objective, neutral, and free from the emotional and cognitive biases that affect human judgment.29,30 These beliefs reduce moral scrutiny and efforts to hold AI systems accountable. Knowing that AI recommendations contribute to a decision may therefore lead to fewer feelings of guilt, reduced intentions to behave morally, greater moral disengagement, and indifference toward unfair outcomes.31 –33 The use of AI algorithms in managerial decision-making has also been linked to reductions in employees’ desire to help others. 34 Such findings suggest that people may be more likely to accept potentially immoral recommendations from AI than from humans.

In contrast, some studies suggest that employees are less likely to adhere to unethical instructions from AI than from human supervisors and may exhibit greater vigilance against AI-generated advice.10,25 This resistance may be driven by perceptions that AI lacks the human judgment and contextual sensitivity required for fairness in tasks like hiring or evaluation. 35 In these contexts, people may become more morally aware of potential flaws in AI recommendations and attempt to enhance AI systems with better moral capacities.24,36

Given this mix of contradictory findings, some researchers have argued that investigators must reframe the study of AI’s influence on moral decision-making to consider the context involved,37 –40 contending that researchers should consider when, as well as how, AI recommendations come into play to understand an individual’s ethical responses to AI advice. In our research, we took this context-sensitive approach and brought it to the area of AI in HR management.27,41 Decisions in this domain include determining an employee’s compensation and selecting appropriate job candidates. In the workplace, these choices are often structured as a set of simultaneous decisions and have long posed challenges for managers, given the difficulty of objectively evaluating employee performance or applicant fit.42,43 Today, numerous AI technologies support these decisions. 44 Although prior studies typically focused on single decisions, such as adjusting the salary of one employee,10,29 we attempted to better simulate workplace reality by examining how ethical responses to AI or human recommendations vary across different decision contexts. Specifically, we asked: When faced with multiple decisions at once, are organizational decision-makers less likely to recognize ethical issues and therefore more likely to accept morally questionable advice?

We theorized that decision-makers receiving AI recommendations would exhibit lower moral awareness than would decision-makers receiving recommendations from humans and that, because moral awareness is a critical precursor to upright decision-making, the reduced moral awareness would increase the likelihood of making an unethical choice. More specifically, we hypothesized that reduced moral awareness of AI recommendations would be more pronounced or likely to occur in contexts in which people have to make multiple simultaneous decisions rather than a single decision.

We reasoned that the need to make multiple decisions at once is cognitively taxing, leaving people with mental overload. As a result, in this case, decision-makers may rely on intuition by default 45 and unintentionally lean more heavily on AI recommendations to streamline the decision process without fully considering their moral implications. We thus proposed that when engaging with AI recommendations, biases about AI would be more likely to creep in, such as the general belief that AI lacks the moral motivation to discriminate or harm anyone. 46 Studies show that people are less inclined to morally condemn AI than they are to condemn humans for making illegal errors.46,47 If people assume AI is exempt from biases or harmful intentions, for example, potentially dubious recommendations generated by AI systems may not appear unfair or unethical. In contrast, a heightened awareness of ethical considerations in human-provided recommendations might prompt greater scrutiny, rendering unethical decision-making less probable when multiple simultaneous decisions must be made.

If our hypothesis were correct, it could help explain some of the inconsistent findings in the literature on AI-informed decision-making relating to unethical behavior, weaker responses to injustice, and moral disengagement.31–33 In particular, our results would help to explain why studies that relied on single-decision tasks, which demand fewer cognitive resources, have not found a diminution in moral awareness linked to the use of AI. When faced with a single decision, decision-makers presumably have more mental bandwidth to identify or evaluate ethical concerns raised by AI recommendations. 10

Method

The Primary Study

To examine our hypotheses, we conducted a preregistered experiment (https://aspredicted.org/x4qg-bc3j.pdf). Preregistration enhances transparency and scientific credibility by publicly declaring one’s research hypotheses, methods, and analyses before conducting a study. For our primary experiment, we initially recruited 384 people as participants, but five failed an attention check question. Our 379 participants were real managers, recruited through Connect (https://www.cloudresearch.com/products/connect-for-participants/), a specialized online survey platform. The average age of our sample was 42.67 years, and 37.6% were women. On average, these managers had 10.27 years of managerial experience and held managerial positions across different levels, including executive roles (such as chief executive officer, vice president, dean), senior management positions (such as director, general manager, senior manager), and midlevel management roles (such as team lead, middle manager, HR manager).

In the primary experiment, people had to make a decision related to adjusting pay for a hypothetical employee. In this task, participants assumed the role of a manager tasked with modifying salaries for their subordinates based on their real-life managerial experience at work. To heighten experimental realism, participants first provided names or nicknames of five subordinates from their current workplace, which were subsequently integrated into standardized employee profiles. These profiles included job tenure, performance metrics, and salary adjustment recommendations.

We created four different experimental conditions by manipulating two factors: the recommendation source and the number of decisions. More specifically, we provided participants with either AI or human recommendations and presented them with either a single decision or multiple decisions. We then randomly assigned our participants to one of four conditions in which they received the following: (a) multiple AI-generated recommendations for five employee profiles, (b) multiple human-generated recommendations for five profiles, (c) a single AI-generated recommendation for one profile, or (d) a single human-generated recommendation for one profile. All participants received a small cash payment for participation. (We informed our participants that we would evaluate their choices and provide greater payment for better decision-making, although in practice, all participants received the same payment. This step was simply intended to enhance participant attention and encourage them to take the scenario seriously.)
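The 2 × 2 assignment described above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used on the survey platform: the function and variable names are hypothetical, and the shuffle-then-rotate scheme (which keeps the four cells nearly equal in size) is an assumption, because the design specifies only that assignment was random.

```python
import random
from collections import Counter
from itertools import product

# The two manipulated factors described in the text.
SOURCES = ("AI", "human")
DECISION_COUNTS = ("single", "multiple")
CONDITIONS = list(product(SOURCES, DECISION_COUNTS))  # four cells


def assign_conditions(participant_ids, seed=None):
    """Shuffle participants, then rotate through the four cells.

    Assumption: cells are kept as equal in size as possible.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    return {pid: CONDITIONS[i % len(CONDITIONS)] for i, pid in enumerate(ids)}


def n_profiles(condition):
    """Five employee profiles in the multiple-decision cells, one otherwise."""
    _source, decision_count = condition
    return 5 if decision_count == "multiple" else 1


# Illustrative run with 379 placeholder participant IDs.
assignment = assign_conditions(range(379), seed=0)
cell_sizes = Counter(assignment.values())
```

The resulting cell sizes are a property of this sketch only and should not be read as the cell sizes in the experiment.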

A critical experimental feature involved embedding one unethical recommendation across all four conditions: a 2% salary reduction for an employee on paternity leave, despite the absence of any performance-based justification. Participants in multiple-decision conditions reviewed five profiles, with one employee recommendation containing this ethical violation. This recommendation contrasted with the recommendation of no salary change or a raise for other employees, including those with documented performance deficiencies (see the Supplemental Material for simulation setups). Participants in single-decision conditions received only the unethical suggestion for the corresponding employee.

After reviewing the profiles, participants filled out a brief survey. We included three items to specifically assess their moral awareness 4 and two unrelated “filler” questions to disguise our study purpose. We instructed participants to think about the recommendations and decisions to be made and then rate, on a scale of 1 to 7, the extent to which they agreed with statements such as “There are very important ethical aspects in these decision-makings.” A score of 7 indicated the strongest agreement, and a score of 1 indicated the weakest.

Participants then reported whether they accepted the recommendation, rejected it, or were unsure about their decision. We coded these responses as 1, –1, and 0, respectively. Participants asked to make multiple decisions weighed in on each profile only after reading all of the materials and recommendations back to back, meaning that the decisions for the five profiles had to be made together. Additionally, we asked participants in this primary study to answer several questions that could help us gauge whether their behavior reflected potentially confounding factors, such as their familiarity with AI tools and closeness to the people whose names they provided for the purposes of the study.
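The scoring and coding steps described above can be illustrated with a short Python sketch. The response coding (accept = 1, unsure = 0, reject = –1) follows the text. Averaging the three moral awareness items into a single score is an assumption made for illustration, and the function names are hypothetical.

```python
from statistics import mean

# Coding of the decision on the unethical recommendation, as described in the text.
DECISION_CODES = {"accept": 1, "unsure": 0, "reject": -1}


def moral_awareness_score(item_ratings):
    """Combine the three 1-to-7 agreement ratings into one score.

    Assumption: the items are averaged; the text specifies only that
    three items were used.
    """
    if len(item_ratings) != 3:
        raise ValueError("expected exactly three item ratings")
    if any(not 1 <= rating <= 7 for rating in item_ratings):
        raise ValueError("ratings must lie between 1 and 7")
    return mean(item_ratings)


def code_decision(response):
    """Map a participant's response label to its numeric code."""
    return DECISION_CODES[response.strip().lower()]
```

For example, `moral_awareness_score([7, 5, 6])` yields 6 and `code_decision("Reject")` yields -1.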

We based the study design on prior decision-making research, 10 with the notable modification of requiring some participants to make several decisions at once, a demand that, as we noted above, mirrors real workplace situations. We also tested our experimental design in advance through a separate pilot study with 174 participants to assess whether participants considered it a realistic representation of these kinds of organizational scenarios.

Supplemental Studies

In addition to our primary study, we ran two supplemental preregistered studies in which participants (recruited through Prolific, another online platform) made decisions in one of four scenarios: HR decisions about salaries, selecting job candidates for interviews, choosing employees to receive skill-enhancing training, and determining who would be laid off to save money (see the Supplemental Material for more information). We selected these scenarios in light of the growing use of AI recommendations in handling these HR-related processes at varied organizations.48,49 Our comparisons of different decision-making situations were meant to help generalize our findings to diverse workplaces in the real world.

The study design largely mirrored the primary experiment with one significant difference: These experiments did not include a comparison condition in which participants made only one decision. Instead, all participants contemplated recommendations from either an AI or human source for multiple simultaneous decisions, resulting in two different experimental conditions rather than four in total. As in the primary experiment, we incorporated one unethical recommendation across our two conditions, such as rejecting a job applicant with a history of medical leave. To further investigate various psychological mechanisms at play, we also asked participants about the degree to which they felt the recommendations they had received were fair as opposed to being biased or prejudiced in some way.

Results

Primary Findings

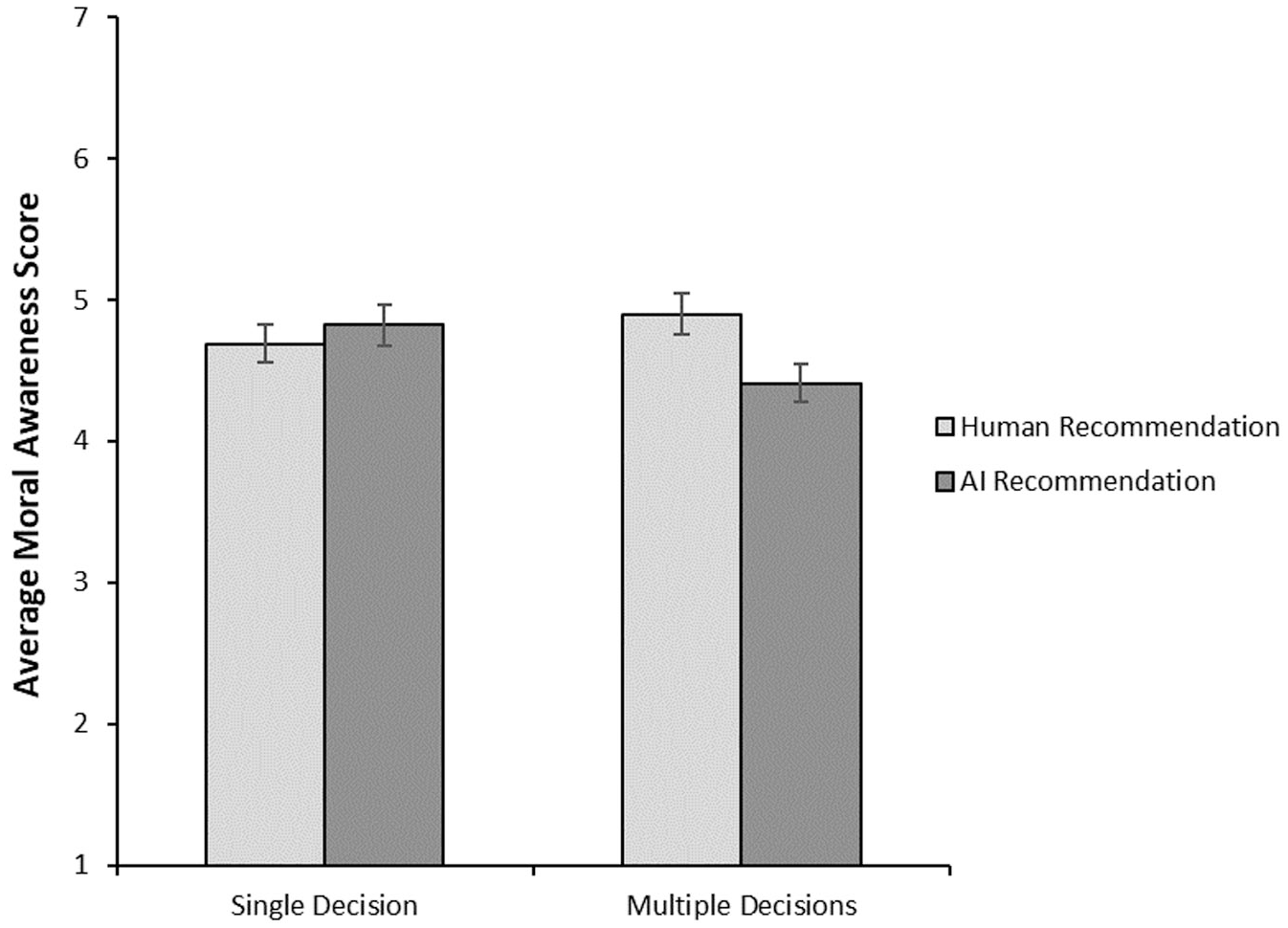

When we examined whether participants’ scores on moral awareness varied depending on whether they received AI or human advice (without looking at the number of decisions being made), one analytic approach we applied, a two-way ANOVA, found no significant difference (p = .20). (See Note A for a discussion of the statistics mentioned in this article.) Similarly, when we did not take the source of the recommendations into account, moral awareness scores did not differ between participants who made multiple decisions and those who made just a single decision (p = .47). However, as we predicted, the interaction, or combination, of recommendation source (human or AI) and number of decisions (single or multiple) did affect moral awareness (p = .02). As Figure 1 shows, when making only a single decision, participants in the AI recommendation condition did not show a different level of moral awareness than did those in the human recommendation condition (directional p = .24). In contrast, when making multiple decisions, participants displayed lower average moral awareness if the recommendations came from AI than if they came from a human colleague (directional p = .006). This finding suggests that when multiple decisions are being made, decision-makers perceive recommendations from AI as containing less ethically relevant content than recommendations provided by humans.
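A generic way to write the 2 × 2 analysis is as a linear model with an interaction term. The symbols below are illustrative labels rather than notation taken from the study materials, and covariates are omitted.

```latex
% Y_{ijk}: moral awareness score of participant k
% i: recommendation source (AI or human)
% j: number of decisions (single or multiple)
\[
Y_{ijk} = \mu + \alpha_i + \beta_j + (\alpha\beta)_{ij} + \varepsilon_{ijk}
\]
```

In this notation, the terms for source and for number of decisions are the two main effects (the tests with p = .20 and p = .47), and the product term is the interaction (p = .02). The directional comparisons then contrast AI with human advice separately within the single-decision and multiple-decision conditions.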

Figure 1. Moral awareness levels

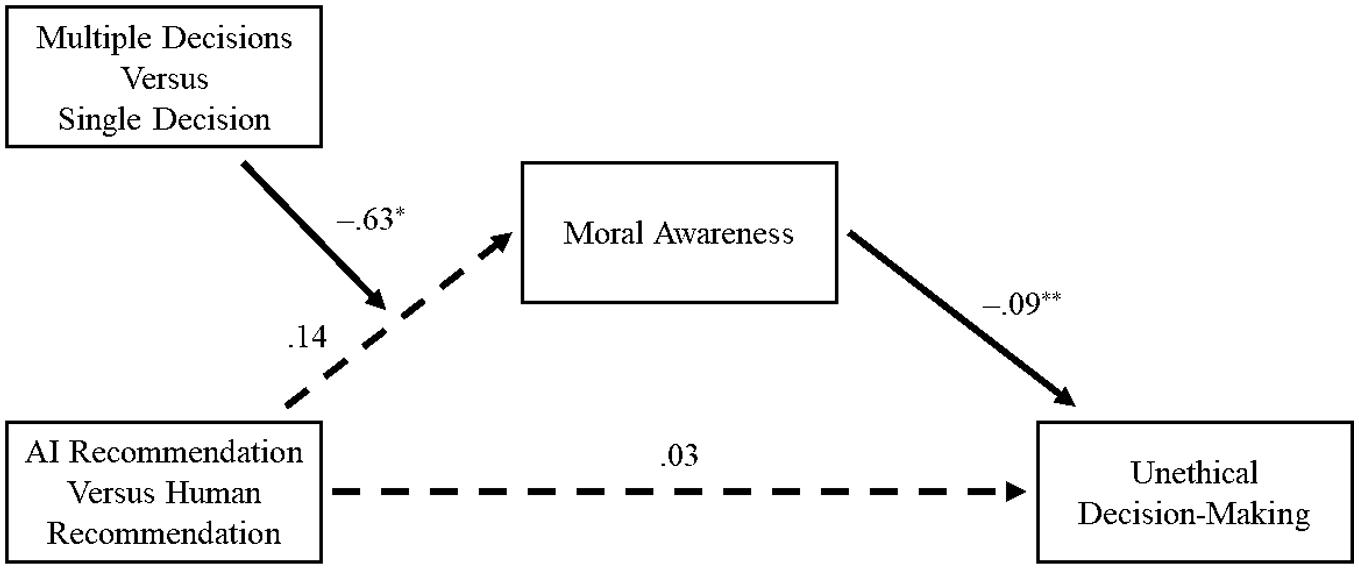

Regarding ethical decision-making, the two-way ANOVA results did not indicate a higher percentage of unethical choices in the AI rather than human recommendation situation (p = .51). Nor were unethical decisions linked to participants making five decisions as opposed to just one (p = .94). And surprisingly, the interaction of both factors did not reveal a significant tie with unethical decision-making (p = .33). (See Note B for more detail on the net tendency to accept as opposed to reject unethical recommendations in each experimental condition.) However, when we used a more precise, fine-grained statistical approach called moderated mediation analysis, 50 we found that, in the context of making multiple decisions, receiving AI recommendations reduced moral awareness (p = .01), which in turn made participants more likely to approve unethical decision-making (p < .001). This form of analysis offers insights into explanatory factors: In other words, lowered moral awareness could help us understand why people in this group might approve the unethical AI recommendation. Furthermore, we did not observe this mediation effect in the case of a single decision. The full statistics are shown in Figure 2.
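The logic of a moderated mediation analysis can be sketched with two regression equations. The form below is the generic first-stage version, assumed here purely for illustration. The coding (X = 1 for AI and 0 for human advice, W = 1 for multiple decisions and 0 for a single decision) and all coefficient symbols are labels introduced for this sketch, and covariates are omitted.

```latex
% M: moral awareness; Y: acceptance of the unethical recommendation
\begin{align*}
M &= i_M + a_1 X + a_2 W + a_3 XW + e_M \\
Y &= i_Y + c' X + b M + e_Y \\
% Indirect effect of X on Y through M at a given value of W
\omega(W) &= (a_1 + a_3 W)\, b
\end{align*}
```

Under this coding, the indirect effect is a_1 b for a single decision and (a_1 + a_3) b for multiple decisions. The pattern reported above corresponds to a negative first-stage effect in the multiple-decision condition (AI advice lowering moral awareness) combined with a negative b (lower moral awareness accompanying greater acceptance), which makes their product positive.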

Figure 2. Multipart model

To ensure our findings were robust, we controlled for variables such as familiarity with AI, trust in AI, and participants’ personal closeness to, familiarity with, and length of relationship with the (recalled) employee in question. (See the Supplemental Material for additional information.)

Findings from the Supplemental Experiments

Statistical analyses, specifically chi-square and t tests, of the data from our supplemental experiments showed that participants making multiple decisions were more likely to show low moral awareness and accept an unethical proposal if recommendations came from AI as opposed to humans. (See Table S1 in the Supplemental Material for a breakdown of unethical decision-making by experimental condition.) For this analysis, we pooled data from across different scenarios. It is therefore possible that differences in the decision-making scenario could help explain why these supplemental studies found evidence that AI advice, in the context of making many decisions, was directly tied to taking unethical counsel whereas our primary study did not show that direct link. Advanced analyses (mediation analysis) also showed how moral awareness serves as a psychological mechanism in these unethical decisions: People who received the AI recommendations showed lower moral awareness and, in turn, people with lower moral awareness were more likely to accept an unethical recommendation. (See Figures S1 and S3 in the Supplemental Material for more details on the mediation analysis findings.)

One of our supplemental studies also allowed us to assess an additional psychological process: fairness perception, meaning the extent to which people believed recommendations were fair and unbiased. Past psychological research suggests that fairness perception could be an alternative to moral awareness in explaining unethical decision-making because perceiving something as fair or biased can lead people to form judgments about the morality of a particular choice. 51 We conducted two additional mediation analyses. The first indicated that, as in the primary study, moral awareness was a critical mediating factor even when accounting for fairness perception as the parallel psychological mechanism. (In other words, even after taking participants’ fairness perception into account, moral awareness still helped explain their tendency toward unethical decisions.) The second analysis suggested that fairness perception could be a second-stage mediator in a sequential process: After receiving AI recommendations, participants with lower moral awareness could subsequently fail to perceive possible bias or prejudice and were then more likely to engage in unethical decision-making. (See Figure S2 in the Supplemental Material for more detailed results of this analysis.)

Our supplemental experiment findings hint at other future research avenues. For example, we found some evidence that the degree to which participants identified as a moral or ethical person could affect their decision-making, but our results in this area are preliminary. (See the Supplemental Material for more details.)

Discussion

With AI adoption in management functions increasing across organizations, we investigated the ethical costs of providing algorithmic recommendations to human decision-makers. Moderated mediation analysis showed that, when multiple decisions had to be made simultaneously, using AI (as opposed to human) recommendations lowered moral awareness, which in turn led managers to be more likely to accept unethical advice. Our supplemental studies extended our primary experiment, finding a direct link between receiving AI recommendations, but not human counsel, and making more unethical decisions.

Adding to Past Insights on AI Ethics & Human Decision-Making

Our primary and supplemental experiments together contribute to a better understanding of how AI recommendations might sway managerial decision-making toward unethical outcomes. First, ours is one of the few studies to consider the ethical consequences of relying on algorithmic advice. Recognizing the well-documented risk of biases in AI outputs, we drew on the ethical decision-making literature to highlight the moral risks of accepting AI recommendations. This effort aligns with warnings from AI ethics scholars that unquestioning faith in algorithms can create serious ethical blind spots, with severe consequences for individuals and organizations. 52 Our study introduces moral awareness as a novel mechanism in explaining why people may accept potentially unethical AI recommendations.

Second, given that management scholars have called for more investigation of AI’s ethical impact on employees and managers in the workplace,53,54 we answer these important calls by providing some insights into possible ethical costs of AI-assisted decision-making in organizational scenarios. Past work, in contrast, focused on unethical actions in the context of a person’s purchasing decisions. For example, in one study, researchers found that customers were more likely to engage in unethical behaviors—such as providing a false reason for a return—when interacting with AI as opposed to human customer service representatives. That work found that when interacting with AI, as opposed to humans, customers experienced less anticipatory guilt, a negative emotion elicited by thinking about a possible future transgression. 55

Third, by designing an experiment that closely simulated workplace reality, with managers making simultaneous decisions assisted by AI or human recommendations, we obtained evidence that better reflects actual managerial practice than previous studies focused on making single decisions. 10

Finally, our subtle manipulation of unethical content (for instance, minor salary reductions for employees on parental leave) allowed for a rigorous test of the moral awareness mechanism. Participants needed to detect an ethical issue embedded in a realistic yet ambiguous recommendation, reflecting the way ethical risks often manifest in practice.

Implications for Managers, Organizational Leaders, & Policymakers

To mitigate unethical outcomes associated with AI recommendations, scholars and policymakers have typically emphasized improved data sourcing used in AI training, which could reduce the risk of introducing unwanted biases into AI. Another frequent recommendation is establishing more rigorous and regular audits of algorithmic tools, a practice that can improve the understanding of their performance and limitations.56,57

Recognizing that AI rarely bears moral responsibility, another proposed solution involves maintaining human oversight in algorithmic processes. 58 For instance, the European Union AI Act requires that AI tools used in employment and worker management have functionality that supports users should they decide to not use, disregard, override, or reverse the output these tools provide. 59 However, our findings suggest that managers, who typically oversee these decisions, may themselves be vulnerable to the problem of reduced moral awareness when using AI, particularly when they face recommendations for making several important decisions at once. We instead encourage organizations and policymakers to invest in the potential interventions documented below to strengthen decision-makers’ ethical awareness and judgments.

Design Nudges to Improve Moral Awareness

Using nudges is one promising solution informed by behavioral science. Richard Thaler and Cass Sunstein, who coined the term, defined nudges as brief interventions that alter “people’s behavior in a predictable way without forbidding any options or significantly changing economic incentives.” 60 These interventions could be low cost and effective in curbing unethical behavior. For example, past research shows that interventions can reduce unethical behavior by providing cues that prompt decision-makers to think about their moral values.61 –64 Accordingly, organizations and policymakers can develop behavioral interventions that act as moral reminders for decision-makers.

We suggest that nudges such as subtle prompts embedded in decision-making software or interfaces could encourage managers to more carefully evaluate AI-generated recommendations.65 –67 The messages might include reminders about ethical guidelines, explicit references to organizational values, or checklists prompting ethical reflection. Organizations could regularly display warnings or pop-ups in decision systems, especially when a large set of high-stakes decisions must be made, reminding decision-makers that AI recommendations can be biased. Slogans that emphasize potential moral concerns could also be shown to sway decision-makers. These interventions help shift people’s attention in ways that encourage them to keep ethics top of mind during AI-assisted decision-making.
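As one hypothetical illustration of such a prompt, the Python sketch below shows a reminder only when AI recommendations cover several decisions at once. Nothing here describes an existing system: the threshold, the message wording, and the function name are all assumptions, and any real deployment would need to choose and test them.

```python
from typing import Optional

# Hypothetical threshold: how many simultaneous decisions count as a "set".
BATCH_THRESHOLD = 2

# Hypothetical wording; organizations would substitute their own guidelines.
REMINDER = (
    "Reminder: AI recommendations can be biased. "
    "Please check each recommendation against the organization's ethical guidelines."
)


def ethics_reminder(
    source: str, n_decisions: int, threshold: int = BATCH_THRESHOLD
) -> Optional[str]:
    """Return a reminder when AI advice covers several decisions at once, else None."""
    if source.lower() == "ai" and n_decisions >= threshold:
        return REMINDER
    return None
```

With these settings, `ethics_reminder("AI", 5)` returns the reminder text, whereas `ethics_reminder("AI", 1)` and `ethics_reminder("human", 5)` return `None`. Tying the prompt to batch size reflects the finding that reduced moral awareness appeared when multiple decisions were made simultaneously.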

Separate Evaluations & Single Decisions

A second practical strategy from the scholarly literature that could enhance moral awareness and prevent unethical decision-making involves reducing the challenges associated with making multiple decisions simultaneously. For example, organizational leaders can separate evaluations to ensure that decisions are made one at a time rather than burdening a manager with the task of reviewing multiple employees all at once. (See Note C for a discussion of how the multiple and single decision-making scenarios compare with related paradigms involving joint and separate evaluations.) Behavioral science research indicates that decision-makers exhibit significant differences in their judgments when evaluating options individually rather than simultaneously, a phenomenon known as preference reversal that resembles our findings in the primary experiment. 68 Separate evaluations often heighten sensitivity to morally significant details that might be overlooked when several decisions are considered simultaneously. 69 Implementing separate evaluations may ease cognitive load, encourage deeper ethical reflection, and consequently strengthen decision-makers’ ability to recognize and reject potentially unethical AI advice.

Limitations

Several limitations should be borne in mind when interpreting our findings. First, although we sought to examine various workplace situations across our studies, we cannot be certain that our results generalize to other conditions. Future research could replicate our findings beyond HR management functions, for example. In addition, several mechanisms may come into play when explaining how people’s moral awareness declines when turning to AI recommendations and making multiple decisions. Our supplemental studies tested only one such mechanism, fairness perception, but others warrant further consideration. For instance, the adoption of AI-based decision support systems might make humans feel less responsible for their decisions, 70 thereby increasing the likelihood of people behaving unethically.

Finally, the decision-making scenarios used in our study involve relatively subtle instances of unethicality, with only one of multiple recommendations being unethical. Although this approach aligns with many real-world cases involving AI recommendations, in day-to-day life, people may also encounter more overt or egregious examples of unethical recommendations. We encourage future studies to investigate whether the effect on moral awareness could be replicated with more obvious unethical recommendations provided by AI as opposed to human advisors.

Conclusion

In conclusion, our research represents a crucial first step to understanding when and how decision-makers accept the unacceptable in the AI era. We open new avenues for future studies and offer insights into possible policies that organizations or societies could broadly employ to prevent AI recommendations from eroding moral awareness in workplace decision-making contexts.

Supplemental Material

Supplemental material, sj-docx-1-bsx-10.1177_23794607251384574 for Accepting the unacceptable in the AI era: When & how AI recommendations drive unethical decisions in organizations by Gabrielle Voiseux, Ryan Tao Zhou and Hsuan-Che (Brad) Huang in Behavioral Science & Policy

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.
