Abstract

The application of artificial intelligence (AI) in medicine offers unprecedented potential but also challenges traditional notions of human identity and medical ethics. Through a systematic literature review and thematic analysis of the interdisciplinary conference “Minds and Machines”, we examine the transformative impact of AI on medical practice, consciousness, and human enhancement in its clinical, ethical, and regulatory contexts. Successful integration of AI requires balancing innovation against the need to safeguard the human dimension of healthcare, through robust ethical frameworks, enhanced medical education, and person-centered implementation.

Keywords

Introduction

The convergence of artificial intelligence (AI) and medicine represents a significant transformation in healthcare, radically reshaping medical practice and challenging our understanding of medical cognition and professional identity. As AI systems demonstrate increasingly advanced capabilities in analyzing medical data, making clinical decisions, and executing autonomous tasks, healthcare faces unprecedented opportunities and challenges in care delivery and medical ethics.1,2 These changes are occurring amid new pressures, including ageing societies, a growing burden of disease, and limited resources to meet them. The evolution from mythical creations, such as the Golem, to contemporary transhumanist perspectives, which advocate enhancing the human condition, attests to humanity's perennial desire to create intelligent beings. 3

Recent data reveal both AI's singular potential and its limitations, and the evidence presents stark contrasts. For example, Google's AI system achieved an absolute reduction of 9.4% in false-negative breast cancer screening results in a US dataset, 4 and IDx-DR, the first autonomous AI diagnostic system authorized by the FDA, has a reported sensitivity of 87.2% for diabetic retinopathy. 5 In contrast, a widely used healthcare algorithm undertreats African American patients because it uses healthcare costs as a proxy for health needs, 6 and IBM Watson's oncology project failed after an investment of $62 million, owing to difficulties in processing unstructured data. 7 Furthermore, the Epic Deterioration Index, embedded in Epic's electronic health record system, showed 14% lower performance for Native American/Alaska Native patients, raising concerns about algorithmic bias. 8
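The Epic example turns on a performance gap between patient groups. A subgroup audit of this kind can be sketched as follows, assuming binary outcome labels and binary alerts and using sensitivity (true-positive rate) as the metric. The cited evaluation may have used a different metric, such as the area under the ROC curve, and "14% lower" could denote a relative or an absolute difference; this sketch reports the relative shortfall. The groups and counts are invented for illustration.

```python
from collections import defaultdict

def sensitivity_by_group(records):
    """Sensitivity (true-positive rate) per subgroup.

    records: iterable of (group, y_true, y_pred) with binary 0/1 labels.
    Groups with no positive cases are omitted, since sensitivity is undefined for them.
    """
    tp = defaultdict(int)
    fn = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    return {g: tp[g] / (tp[g] + fn[g]) for g in set(tp) | set(fn)}

def relative_gap_to_best(metric_by_group):
    """Relative shortfall of each group versus the best-performing group (0.0 = no gap)."""
    best = max(metric_by_group.values())
    if best == 0:
        return {g: 0.0 for g in metric_by_group}
    return {g: (best - m) / best for g, m in metric_by_group.items()}

# Toy example with made-up groups "A" and "B" (not real patient data)
records = ([("A", 1, 1)] * 9 + [("A", 1, 0)] * 1 + [("A", 0, 0)] * 20
           + [("B", 1, 1)] * 3 + [("B", 1, 0)] * 2 + [("B", 0, 0)] * 10)
sens = sensitivity_by_group(records)
gaps = relative_gap_to_best(sens)
print(sens, gaps)
```

In practice such an audit would also report confidence intervals, because small subgroups make per-group estimates noisy.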

These contrasting results (technological triumphs alongside algorithmic injustices) underscore how the potential of AI confronts fundamental questions of human identity and patient equity. Drawing on the interdisciplinary “Minds and Machines” conference at the University of Milan, where researchers from neurology, AI science, bioethics, and law examined these issues (Box 1), this article explores how AI is redrawing our conceptual map of human thought, medical decision-making, and the doctor–patient relationship, and analyzes integration strategies that enhance, rather than supplant, human potential at the bedside.

Box 1. Key insights from the “Minds and Machines” symposium.

- AI development: from Golem mythology to transhumanism (Barbieri)
- Consciousness research reveals phenomenal experience as uniquely biological (Massimini)
- “Byte versus Bio” debate on machine consciousness (Folgieri)
- Technical substitution threatens human faculties (Crippa)
- AI challenges legal frameworks (Tanga)
- Ethics as a barrier against technocracy (Magnoni)
- Brain–machine interfaces: restorative (Angotzi) versus enhancement (Stigliani)
- Digital medicine in neuroscience and psychiatry (Brambilla)
- Robotics/VR/AR revolutionize the operating room (De Momi)
- Big data enables personalized medicine (Marceglia)
- AI transforms healthcare management (Buijs, Carrafiello)

AI: artificial intelligence; VR: virtual reality; AR: augmented reality.

Consciousness metrics

Understanding the nature of human consciousness provides essential insights for the integration of AI into medicine (Table 1). Consciousness can be defined as the result of the brain achieving a dynamic equilibrium between functional integration (i.e. the binding of widely distributed neural activity into a unified experience) and differentiation (the rapid transition between many distinct neural states).9,10 This balance, quantifiable as neural complexity, is maintained at high levels during wakefulness and rapid eye movement sleep but breaks down during deep sleep, anesthesia, and epileptic seizures, indicating that consciousness depends not on the overall level of brain activity but on the simultaneous presence of integration and differentiation. The perturbational complexity index (PCI) quantifies this balance by measuring the complexity of the cortical response to transcranial magnetic stimulation, yielding a single value that indexes the level of consciousness. 11 In a case series of 43 patients in a vegetative state, the PCI identified high-complexity responses, suggestive of possible residual consciousness, in nine (21%) of these behaviorally unresponsive patients, and these high-complexity patients indeed had better clinical outcomes at the six-month follow-up. 12 These advances in understanding and measuring states of consciousness have implications for AI-based diagnostic platforms and brain–computer interfaces (BCIs) and could enable more accurate assessment of a patient's state of consciousness.13,14
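In simplified terms, the PCI is the Lempel-Ziv complexity of a binary channel-by-time matrix of significant TMS-evoked cortical activation, normalized by the matrix's source entropy so that responses with different amounts of activation can be compared. The sketch below shows only that compression-and-normalization step and is not a validated PCI implementation. Assumptions: a fixed amplitude threshold in `binarize` (a hypothetical helper) stands in for the source modeling and bootstrap-based significance testing of the published method, the matrix is flattened time point by time point, and the toy signals are invented.

```python
import math
import random

def lz76_complexity(s: str) -> int:
    """Number of phrases in the Lempel-Ziv (1976) parsing of a binary string
    (Kaspar-Schuster algorithm)."""
    n = len(s)
    if n <= 1:
        return n
    i, k, l = 0, 1, 1   # i: candidate copy start, k: match length, l: start of current phrase
    c, k_max = 1, 1     # the first symbol is always a phrase
    while True:
        if s[i + k - 1] == s[l + k - 1]:
            k += 1
            if l + k > n:          # current phrase runs to the end of the string
                c += 1
                break
        else:
            k_max = max(k_max, k)
            i += 1
            if i == l:             # no earlier copy extends further: close the phrase
                c += 1
                l += k_max
                if l + 1 > n:
                    break
                i, k, k_max = 0, 1, 1
            else:
                k = 1
    return c

def binarize(matrix, threshold):
    """Hypothetical stand-in for significance testing: '1' where |activation| > threshold.
    matrix: list of channels, each a list of samples; flattened time point by time point."""
    n_samples = len(matrix[0])
    return "".join("1" if abs(ch[t]) > threshold else "0"
                   for t in range(n_samples) for ch in matrix)

def normalized_lz(bits: str) -> float:
    """Lempel-Ziv complexity normalized by L * H / log2(L), where H is the source entropy."""
    L = len(bits)
    p1 = bits.count("1") / L
    if p1 in (0.0, 1.0):
        return 0.0                 # no activation or saturated: zero source entropy
    h = -p1 * math.log2(p1) - (1 - p1) * math.log2(1 - p1)
    return lz76_complexity(bits) * math.log2(L) / (L * h)

# Toy comparison (invented data): a varied, channel-specific pattern versus a
# stereotyped burst in which all channels activate together and then fall silent.
rng = random.Random(0)
n_channels, n_samples = 8, 300
varied = [[rng.gauss(0.0, 1.0) for _ in range(n_samples)] for _ in range(n_channels)]
stereotyped = [[3.0 if t < 50 else 0.0 for t in range(n_samples)] for _ in range(n_channels)]
print(round(normalized_lz(binarize(varied, 1.0)), 2))
print(round(normalized_lz(binarize(stereotyped, 1.0)), 2))
```

Because a purely random pattern also compresses poorly, the toy "varied" signal scores high here; in the published method, statistical comparison with pre-stimulus activity is intended to restrict the matrix to stimulus-evoked responses.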

Brain–machine interfaces and neural enhancement

Recent advances in neuroelectronics are enabling next-generation brain–machine interfaces (BMIs) with profound medical implications. For example, simultaneous neural recording active pixel sensor (SiNAPS) probes built on complementary metal-oxide-semiconductor (CMOS) technology can record simultaneously from 512–1024 electrodes and maintain stable recordings for days in preclinical in vivo models.15,16 Neuropixels 2.0 offers miniaturized probes with 5120 recording sites that weigh only 1.1 g as a pair and can track the same neurons for months. 17 Commercial BCIs are also advancing rapidly: Neuralink's first human participant can play video games by thought alone, while companies such as Synchron, Paradromics, and Precision Neuroscience compete in an expanding market.18,19 These technologies could help paralyzed patients communicate and support the development of tools for seizure prediction and adaptive deep-brain stimulation.

In addition, BMIs are increasingly blurring the boundary between treatment and enhancement, challenging frameworks that focus solely on restoring function. A key point that emerged from the symposium discussions was the concept of the “enhancement cascade”: once cognitive enhancement becomes available, social and professional competition could make it seem less like a choice and more like a necessity. Yet therapeutic BCIs currently cost on the order of hundreds of thousands of dollars, making them accessible only to individuals with generous insurance coverage or substantial financial means. 18 If these systems transitioned from medical tools to enhancement devices, such high costs could create a new form of inequality, in which only the wealthy can afford cognitive upgrades.

These technologies also collect in-depth personal data about our thoughts and mental identity, placing neural privacy at the forefront and raising important questions about data sovereignty (Who owns our brain data?), mental privacy (Could our thoughts be disclosed?), and cognitive freedom (Do we have the right to control our mental processes?).

Neuroimaging and AI diagnostics

The convergence of AI with neuroimaging is transforming our understanding of brain function and neuropsychiatric disorders. 20 Breakthrough applications include the use of multimodal magnetic resonance imaging-positron emission tomography (MRI-PET) to detect Alzheimer's disease 6–10 years before symptom onset with >95% diagnostic accuracy. 20 In addition, neuroimaging biomarkers predict conversion to psychosis in high-risk youth with 74% accuracy, 21 and the PRONIA consortium achieved 82.7% accuracy in predicting functional outcomes in high-risk individuals and identified transdiagnostic predictors, including gray matter volume patterns.22,23 Furthermore, our research group has used support vector machines and other machine learning methods to identify patient subgroups across neuropsychiatric conditions. 24 Classification of first-episode psychosis reached >80% accuracy using cortical thickness in 127 patients, while electroencephalography-based models predicted treatment response in depression with >80% accuracy. 25 These multimodal approaches have consistently outperformed clinical prognostication, enabling treatment to be personalized to individual patient characteristics.
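The studies above rely on supervised classifiers such as support vector machines (SVMs). As a generic illustration of that technique, and not of the group's actual pipeline, features, or data, the sketch below trains a linear SVM with the Pegasos stochastic subgradient method on a synthetic two-group cohort. The five features are hypothetical stand-ins for standardized regional cortical thickness values, the bias is handled by appending a constant feature (so it is regularized too), and a single train/test split replaces the cross-validation a real study would need.

```python
import random

def make_synthetic_cohort(n_per_group, n_features, shift, rng):
    """Hypothetical data: two groups whose feature means differ by `shift` (standardized units)."""
    X, y = [], []
    for label, mean in ((+1, shift / 2), (-1, -shift / 2)):
        for _ in range(n_per_group):
            features = [rng.gauss(mean, 1.0) for _ in range(n_features)]
            X.append(features + [1.0])      # trailing constant feature acts as the bias term
            y.append(label)
    return X, y

def train_linear_svm(X, y, lam=0.01, epochs=20, seed=0):
    """Pegasos: stochastic subgradient descent on the L2-regularized hinge loss."""
    rng = random.Random(seed)
    w = [0.0] * len(X[0])
    order = list(range(len(X)))
    t = 0
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            t += 1
            eta = 1.0 / (lam * t)           # decreasing step size
            margin = y[i] * sum(wj * xj for wj, xj in zip(w, X[i]))
            w = [(1.0 - eta * lam) * wj for wj in w]    # gradient step on the L2 penalty
            if margin < 1.0:                # hinge loss active: move toward the correct side
                w = [wj + eta * y[i] * xj for wj, xj in zip(w, X[i])]
    return w

def predict(w, x):
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) >= 0 else -1

def accuracy(w, X, y):
    return sum(predict(w, x) == label for x, label in zip(X, y)) / len(y)

rng = random.Random(42)
X_train, y_train = make_synthetic_cohort(100, 5, shift=2.0, rng=rng)
X_test, y_test = make_synthetic_cohort(50, 5, shift=2.0, rng=rng)
w = train_linear_svm(X_train, y_train)
print(f"held-out accuracy: {accuracy(w, X_test, y_test):.2f}")
```

A linear kernel keeps the learned weights interpretable per feature, which is one reason linear SVMs are popular for neuroimaging classification; the accuracy on invented data says nothing about clinical performance.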

Surgical robotics and augmented reality

Surgical practice is being fundamentally transformed by AI-powered robotics and augmented reality. The da Vinci Surgical System, for example, has been used in more than 3.3 million procedures, with an overall malfunction rate of only 1%. Most problems stem from instrument failures (0.4%) rather than from the system itself (0.1%), leading to conversion to open surgery in 0.09% of cases and to patient injury in 0.01%.26,27
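To put these percentages on an absolute scale, a rough back-of-the-envelope conversion follows. It assumes, for illustration only, that every rate applies to the full 3.3 million procedures, which the cited sources may not do:

$$
\begin{aligned}
3.3\times10^{6} \times 1\% &\approx 33{,}000 \text{ malfunctions}\\
3.3\times10^{6} \times 0.09\% &\approx 2{,}970 \text{ conversions to open surgery}\\
3.3\times10^{6} \times 0.01\% &\approx 330 \text{ patient injuries}
\end{aligned}
$$

Under that assumption, even very low rates correspond to hundreds of affected patients at this scale of use.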

Today's robotic systems substantially enhance what surgeons can see, offering high-definition 3D images and augmented reality overlays that project real-time navigation data directly into the surgeon's field of view. However, the lack of comprehensive haptic feedback remains a key limitation: surgeons cannot fully perceive tissue consistency or tension as they would during traditional surgery, and must therefore rely more on visual information and experience than on touch.

Administrative AI

Healthcare efficiency

Administrative AI offers a much-needed response to pressures such as rising healthcare costs, which exceed 10% of gross domestic product in affluent nations. Chong et al. 28 used an XGBoost machine learning model (area under the receiver operating characteristic curve, 0.746) to guide targeted phone reminders across 32,957 MRI appointments, reducing the no-show rate by 17.2% (from 19.3% to 15.9%). A systematic review by Buijs et al. 29 found that AI can improve access to healthcare through appointment scheduling, worklist prioritization, and automated billing, freeing staff time for patient care, although quantitative evidence remains limited. The concept of “intelligent time,” that is, using the time AI frees up for meaningful patient interaction rather than for further administrative efficiency, brings the focus back to human-centered care.30,31
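The study's two ingredients, a risk score summarized by its AUC and a policy for whom to call, can be illustrated in a few lines. This is a hypothetical sketch, not Chong et al.'s pipeline: the risk scores could come from any classifier (the study used XGBoost), and the fixed call capacity is an assumed policy parameter. An AUC of 0.746 can be read as a 74.6% probability that a randomly chosen no-show is ranked above a randomly chosen attended appointment.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve via the Mann-Whitney statistic.

    Equals the probability that a randomly chosen positive (no-show) receives a
    higher risk score than a randomly chosen negative (attended); ties count half.
    O(n_pos * n_neg): fine for illustration, too slow for large datasets.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def select_for_reminder(risk_by_appointment, call_capacity):
    """Pick the `call_capacity` appointments with the highest predicted no-show risk."""
    ranked = sorted(risk_by_appointment, key=risk_by_appointment.get, reverse=True)
    return ranked[:call_capacity]

# Toy data (invented): predicted risks and observed outcomes (1 = no-show)
risks = {"appt-1": 0.91, "appt-2": 0.12, "appt-3": 0.47, "appt-4": 0.30}
no_show = {"appt-1": 1, "appt-2": 0, "appt-3": 0, "appt-4": 1}
ids = list(risks)
print(roc_auc([risks[i] for i in ids], [no_show[i] for i in ids]))
print(select_for_reminder(risks, call_capacity=2))
```

Ranking by risk and calling only the top slice is what lets a modest AUC translate into a measurable drop in no-shows when calling capacity is limited.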

Big data analysis

AI and big data analysis are revolutionizing clinical research and evidence-based medicine. Since the completion of the Human Genome Project, omics sciences have grown exponentially, paving the way for machine learning applications in personalized medicine. Today, an unprecedented volume of health data feeds advanced algorithms capable of analyzing complex clinical information, for example by identifying subtle connections between genetic variations and drug responses that traditional methods might overlook.

Patient autonomy

However, alongside these advances, real concerns are emerging regarding patient autonomy. For example, general practitioners are nine times more likely to modify a treatment plan when AI offers a conflicting recommendation, with 66.7% deferring to the algorithm, often at the expense of collaboration with the patient. 32 Furthermore, CE-marked (Conformité Européenne) medical AI tools scored only about 60% on transparency, often failing to disclose crucial details such as training data, ethical safeguards, or known limitations. 33 Moreover, although 90% of patients are aware of AI in healthcare, only 24% understand how it is used. 34

Equity and global health disparities

The “AI deployment paradox” refers to a troubling trend: AI tools built to support health equity in low- and middle-income countries (LMICs) can deepen inequalities because of poor infrastructure and a lack of local data, a shortfall often called “data poverty.” 35 For example, Africa accounts for 13% of global cardiovascular deaths, yet its healthcare systems struggle with electricity shortages and understaffing, making effective use of AI nearly impossible. 36

Furthermore, many medical AI systems are trained on data from wealthier countries and therefore often fail to reflect the realities and needs of populations in LMICs, which can lead to biased outcomes and continued reliance on foreign technology. On the other hand, offline AI tools that run on smartphones have shown excellent accuracy even when used by minimally trained operators. 37 Open-source models such as Llama 3.1 now rival GPT-4 in diagnostic accuracy and offer clear advantages in accessibility and data privacy for resource-limited settings. 38

Approaches such as transfer learning and locally adapted AI models are therefore promising. Grassroots efforts focused on “frugal AI,” built on basic smartphones and community-led strategies, could help close the gap in access to global health technology. 39

Legal and regulatory evolution

The European Union AI Act (2024) and the FDA framework classify medical AI as high risk, requiring continuous oversight, although the distinction between “locked” and adaptive algorithms creates regulatory tension between safety and innovation. 40 A recent analysis highlighted specific challenges in implementing large language models under this framework, particularly regarding transparency requirements, data governance, and the need for continuous monitoring in healthcare applications. 41

Legal experts at the symposium highlighted how liability models are evolving from traditional malpractice toward more complex frameworks, including shared responsibility, vicarious liability, and “liability overlaps” in which multiple actors bear partial accountability, particularly as AI approaches autonomous decision-making. 42 Courts are beginning to address these challenges. In Estate of Lokken v. UnitedHealth, AI-driven denials of care, reportedly reversed on appeal in 90% of cases, raised questions about algorithmic accountability and about whether physician oversight had become mere “rubber-stamping.”43,44 We propose a framework for AI error accountability in Box 2. Additional information on preserving human capabilities, medical reform, and the implementation framework, including a clinical override protocol, is presented in Supplemental File S1.

Box 2. Proposed framework for AI error accountability.

Liability by error source:
- Technical failure → developer liability
- Data bias → shared liability (institution + data provider)
- Implementation error → healthcare institution liability
- Human override → individual practitioner liability

Liability by review quality:
- Meaningful review (>2 min, documented) → shared responsibility
- Rubber-stamping (<30 s) → institutional liability for inadequate protocols
- No review of a high-risk decision → negligence

Documentation:
- AI confidence score
- Time spent reviewing
- Clinical reasoning if disagreement
- Outcome tracking

Practitioners meeting these standards receive liability protection:

- Maintains a 20% manual procedure quota
- Documents all AI overrides with rationale
- Completes annual AI competency certification
- Reports systematic AI errors within 48 h

AI: artificial intelligence.
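Because much of Box 2 reads as a set of decision rules, its review-quality rules and practitioner protection criteria can be expressed as small functions. The sketch below is a hypothetical illustration, not an implementation proposed by the framework: the record type, field names, and the reading of "documented" as "all four documentation items present" are assumptions, the error-source rules are not modeled, and cases that Box 2 does not address (for example, reviews lasting between 30 s and 2 min) are returned as "unspecified" rather than filled in.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class AIDecisionRecord:
    """Hypothetical audit record for one AI-assisted decision (fields mirror Box 2's documentation items)."""
    high_risk: bool
    review_seconds: Optional[float]        # time spent reviewing; None means no review took place
    ai_confidence: Optional[float] = None  # AI confidence score shown to the clinician
    clinician_disagreed: bool = False
    clinical_reasoning: str = ""           # required only if the clinician disagreed with the AI
    outcome_tracked: bool = False

def documentation_complete(rec: AIDecisionRecord) -> bool:
    """True if all four Box 2 documentation items are present (assumed reading of 'documented')."""
    reasoning_ok = (not rec.clinician_disagreed) or bool(rec.clinical_reasoning.strip())
    return (rec.ai_confidence is not None and rec.review_seconds is not None
            and reasoning_ok and rec.outcome_tracked)

def review_responsibility(rec: AIDecisionRecord) -> str:
    """Map a record onto the Box 2 review-quality rules; uncovered cases return 'unspecified'."""
    if rec.review_seconds is None:
        # Box 2 classifies a missing review only for high-risk decisions
        return "negligence" if rec.high_risk else "unspecified"
    if rec.review_seconds < 30:
        return "institutional liability (inadequate protocols)"
    if rec.review_seconds > 120 and documentation_complete(rec):
        return "shared responsibility"
    # 30 s to 2 min, or long but incompletely documented reviews, are not covered by Box 2
    return "unspecified"

def eligible_for_protection(manual_fraction: float, all_overrides_documented: bool,
                            certified_this_year: bool, max_hours_to_report_errors: float) -> bool:
    """Box 2 practitioner criteria: 20% manual quota, documented overrides,
    annual AI competency certification, systematic AI errors reported within 48 h."""
    return (manual_fraction >= 0.20 and all_overrides_documented
            and certified_this_year and max_hours_to_report_errors <= 48)

record = AIDecisionRecord(high_risk=True, review_seconds=150, ai_confidence=0.82, outcome_tracked=True)
print(review_responsibility(record))  # shared responsibility
```

Returning "unspecified" for uncovered cases makes the framework's gaps visible in an audit log instead of silently assigning them to one party.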

AI limitations in clinical applications

Recent advances have potential clinical applications, but we must distinguish functional consciousness (information processing) from phenomenal consciousness (subjective experience).45,46 Although AI excels at function-based work, the problem of subjective experience (Chalmers' so-called “hard problem”) highlights intrinsic limitations in the field. 47 Clinical judgment relies on “tacit knowledge,” the know-how derived from direct patient experience that does not appear to be algorithmically encodable.48,49 For example, experienced clinicians often perceive patient deterioration before measurable parameters change, 50 an ability that AI does not currently possess. This ability goes beyond pattern recognition: it arises from subtle observations and contextual cues that are processed unconsciously and integrated over years of physical presence with patients.

Conclusions

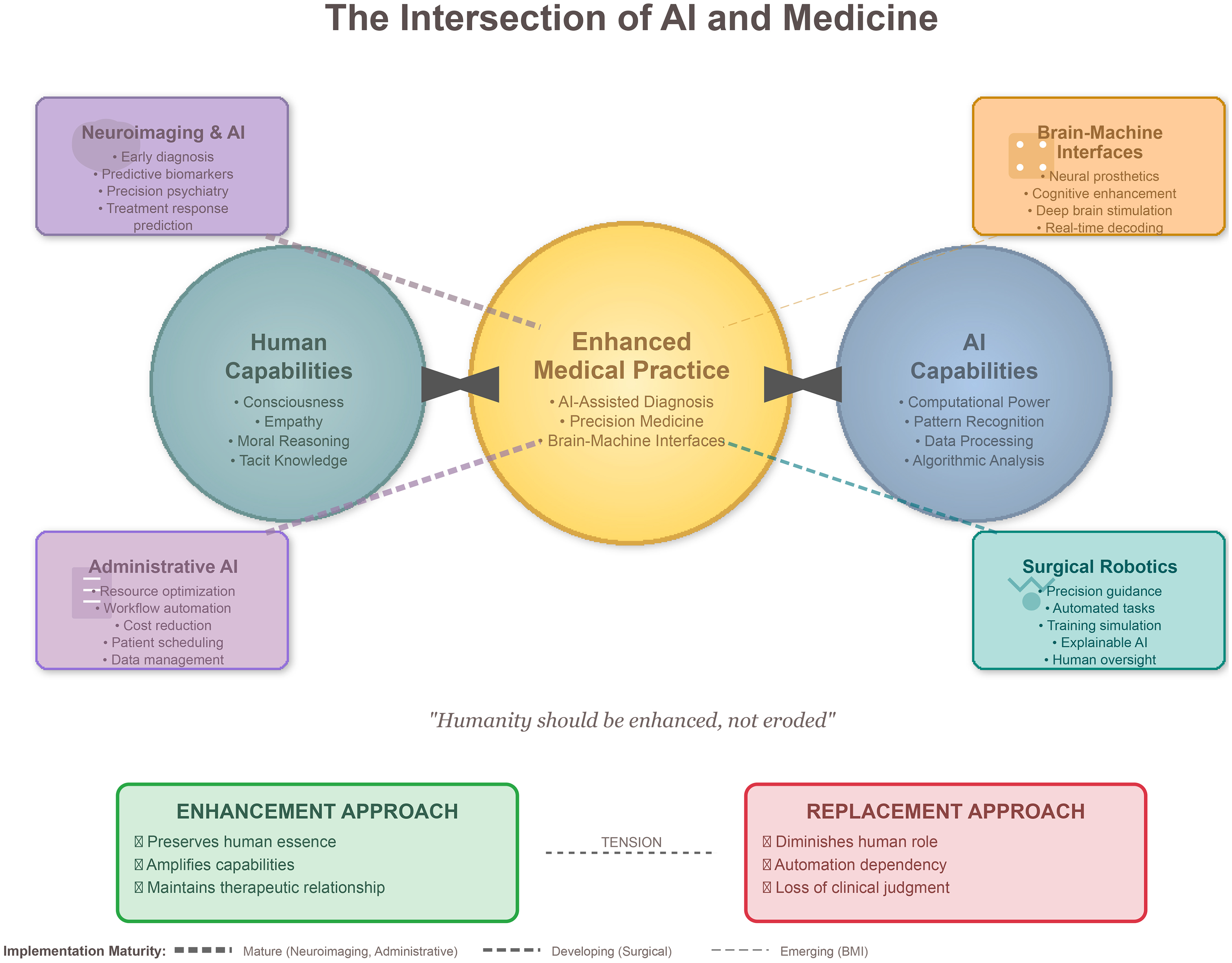

At the interface of medicine and AI, rigorous implementation is essential to ensure that technological advances align with patient-centered values (Figure 1). The future is not a choice between human and artificial intelligence but a harmonious blend of the two. The key to success is preserving the humanity of medicine while embracing technical advances. As participants at our symposium noted: “The question isn't whether AI will change medicine, but whether medicine will change AI to respond to humanity's fundamental needs.” The key aspects of human experience (consciousness, emotion, ethical thinking, and the capacity for profound relational interaction) must be complemented, not supplanted, by technology. Only when tempered by these qualities will technology contribute to the healing art of medicine.

Figure 1. Diagram depicting the prospective synergistic integration of human and artificial intelligence (AI)-specific capabilities within the field of medicine. Directional arrows represent the bidirectional enhancement of human expertise (green) and AI competencies (blue), converging toward optimized medical practice (yellow center). Four principal domains of application are delineated and color-coded: neuroimaging and AI (purple), brain–machine interfaces (orange), surgical robotics (teal), and administrative AI (lavender). The thickness of the connecting lines denotes the relative maturity of implementation across these domains. The lower section contrasts the enhancement paradigm (green box) with the replacement paradigm (red box), underscoring that our conceptual framework promotes human augmentation rather than human substitution.

Implementation strategies for AI integration in medicine. AI: artificial intelligence; XAI: explainable AI; RCT: randomized controlled trial. The reference list is provided in the Supplemental File.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076251390473 for Minds and machines: AI's transformative role in human identity and medicine by Stefania Corti, Roberta Ferrucci, Gian Nicola Angotzi, Andrea Arighi, Paolo Brambilla, Elvira Buijs, Gianpaolo Carrafiello, Mauro Crippa, Elena De Momi, Paolo Del Debbio, Raffaella Folgieri, Marco Giachetti, Aldo Bruno Giannì, Walter Magnoni, Sara Marceglia, Marcello Massimini, Domenico Stigliani, Matteo Stocco, Antonio Tanga, Linda Ottoboni and Sergio Barbieri in DIGITAL HEALTH

Footnotes

Acknowledgments

We thank all participants of the “Minds and Machines” conference for their valuable contributions.

Author contributions

SC and RF contributed equally as co-first authors to conceptualization, methodology, investigation, and writing; SB served as the senior author, providing supervision and final review; GNA contributed brain–machine interface expertise; AA and PB provided neuroimaging and clinical applications; EB and GC contributed healthcare management and radiology perspectives; MC provided media expertise; EDM contributed surgical robotics analysis; PDD and RF (University of Milan) provided philosophical perspectives on consciousness and AI; MG contributed clinical methodology; ABG provided surgical expertise; WM contributed ethical analysis; SM provided health sciences expertise; MM contributed consciousness measurement expertise; DS provided technology industry perspectives; MS contributed philosophical analysis on human enhancement; AT provided legal framework analysis; LO contributed neuroscience methodology. All authors participated in the “Minds and Machines” symposium, contributed to critical revision, and approved the final manuscript.

Funding

SC and PB were partially supported by grants from the Italian Ministry of Education and Research (MUR) and the Italian Ministry of Health. SC was supported by Ricerca Corrente 2025, Italian Ministry of Health. The Department of Pathophysiology and Transplantation, University of Milan, is funded by the Italian Ministry of Education and Research (MUR): Dipartimenti di Eccellenza Program 2023 to 2027. PNC “Hub Life Science - Diagnostica Avanzata (HLS-DA), PNC-E3-2022-23683266-CUP: C43C22001630001,” funded by the Italian Ministry of Health under the Piano Nazionale Complementare Ecosistema Innovativo della Salute (National Complementary Plan, Innovative Health Ecosystem).

Conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.