Abstract

Officer-worn body cameras are ubiquitous in modern law enforcement as a tool for accountability. The cameras’ mere presence may deter bad behavior, and recordings of misconduct can serve as evidence in the courtroom. We suggest an additional use for body camera footage: as data for identifying patterns in and ways of improving policing practices, which are often challenging to observe by other means. This use requires scaling up the analysis of body camera footage to the hundreds of recordings police departments collect each day. Natural language processing, a set of computational methods to process and analyze human language, is an important tool for this purpose. This tool typically uses artificial intelligence to extract key social components of speech—such as politeness, sentiment, or emotion—captured by body camera audio. We offer suggestions for using these tools to analyze body camera footage, and we identify issues relevant to the analysis, application, and governance of this footage. In the era of artificial intelligence, body camera footage is a promising source of data; the advice in this article is designed to maximize the chances that the conclusions drawn from that data are valid and in the public interest.

Since they were first introduced in the early 2000s, body cameras have become a fixture of modern policing. By 2016, almost half of all law enforcement agencies in the United States—and 80% of large police departments—had adopted them. 1 Their rapid proliferation has largely been motivated by a desire for accountability. By their mere presence, the devices may positively affect the behavior of officers and citizens who believe they will be held accountable for any bad behavior 2 —a cameras-as-intervention approach. In addition, the videos may be used as evidence after the fact when the conduct of those involved in an encounter is questioned.3–5 But most recordings simply sit in storage, unviewed, costing taxpayers money while providing little or no benefit. 6

We propose a third use for recordings: treating the footage as data, so that recordings are used not just to adjudicate individual incidents but to inform the broader practice of policing—for instance, by revealing recurrent conduct problems, showing where there is a need for training, or providing evidence for the success or failure of new policies. Whereas traditional sources of data, such as police reports, typically contain basic information about what happened during, say, a traffic stop, body camera recordings document police interactions in depth and are uniquely suited to revealing how officers communicate with the public. Officers’ words carry legal, social, and even physical consequences but are invisible in traditional police records. Body cameras capture features as subtle as officers’ tone of voice 7 and can reveal what happens in real time, so observers can understand, for instance, how an initially peaceful encounter escalated and led to the use of force. 8 These observers—who could include researchers, police department staff, or members of civilian oversight bodies, among others—can review body camera footage of police activities (essentially engaging in virtual ride-alongs) and code them manually for features of interest, but their research and conclusions are limited by the number of these videos they can realistically watch.

Automating the coding process would allow researchers and others to dramatically scale up their analyses. Natural language processing is a subfield of computer science and linguistics that often tasks artificial intelligence with gleaning meaning from human language. We believe it holds promise for analyzing body camera footage at scale. In this article, we describe how natural language processing works and provide advice for police departments, developers of platforms that store and organize footage, and data analysts on how to make the best use of this tool to inform policing practices. We also discuss several ways the data may be misused and how to avoid those traps.

Blue Language: How Natural Language Processing of Video Footage Works

Applying natural language processing to video footage, such as that from body cameras, involves several steps. After police officers upload footage from their body cameras to an evidence management system, they may link that footage to any police reports, spreadsheets, or other police department records documenting the recorded encounter. Next, the footage audio needs to be transcribed, annotated to indicate who is speaking at a given time, and preprocessed—converted into a format that a computer can interpret. This transcription and preprocessing can be done manually or automated (at some cost in accuracy). A programmer can then use natural language processing software to help draw out important inferences from the preprocessed data in a variety of ways: analyzing the frequency of particular words or phrases in the data set; assessing the degree to which language is abstract or concrete; pulling out particular phrasings, such as those indicative of a command or a question; or ferreting out subtler linguistic patterns, such as whether an officer implicitly states the reason for a stop or whether the officer’s words would likely be perceived as respectful.
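To make the preprocessing and simplest analyses concrete, here is a minimal Python sketch. It assumes a transcript has already been produced and diarized into (speaker, utterance) pairs; the transcript, the tokenizing rule, and the list of command-opening verbs are hypothetical simplifications, far cruder than what a production system would use.

```python
import re
from collections import Counter

# Hypothetical diarized transcript: (speaker, utterance) pairs in spoken order.
transcript = [
    ("officer", "Good evening. Can I see your license and registration?"),
    ("driver", "Sure, here you go."),
    ("officer", "Turn off the engine."),
    ("officer", "I stopped you because your taillight is out."),
]

def tokenize(text):
    """Lowercase and keep runs of letters and apostrophes (a crude preprocessing step)."""
    return re.findall(r"[a-z']+", text.lower())

def word_frequencies(transcript, speaker):
    """Count the words one speaker uses across a transcript."""
    counts = Counter()
    for who, text in transcript:
        if who == speaker:
            counts.update(tokenize(text))
    return counts

# Hypothetical verbs that open imperative sentences in this toy example.
COMMAND_OPENERS = {"turn", "step", "put", "get", "keep", "show", "hand", "roll"}

def utterance_type(text):
    """Label an utterance as a question, a command, or other, using surface cues only."""
    if text.strip().endswith("?"):
        return "question"
    tokens = tokenize(text)
    if tokens and tokens[0] in COMMAND_OPENERS:
        return "command"
    return "other"

officer_words = word_frequencies(transcript, "officer")
types = [utterance_type(text) for who, text in transcript if who == "officer"]
print(officer_words["your"])  # 2
print(types)  # ['question', 'command', 'other']
```

Word counts and question or command cues sit at the transparent end of the toolkit; subtler judgments, such as whether words would be perceived as respectful, call for trained models.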

Before being applied to a given data set, the natural language processing software is first exposed to a training data set consisting of examples of the type of data from which inferences will be drawn. In a process called supervised learning, humans label, categorize, or rate text for attributes of interest, such as respect, politeness, or aggressiveness. Then the software identifies associations between features of language and the corresponding labels. In unsupervised learning, there are no human raters, and in semisupervised learning, humans label only a small portion of the data. Instead, a computer is programmed to identify associations in the data; to cluster similar words, phrases, or statements; or to predict the next word in a sequence from the prior or surrounding words.
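The supervised case can be sketched in a few lines of standard-library Python. This is an illustration under stated assumptions, not the authors' model: it fits a logistic regression over individual word counts to a handful of invented utterances that a hypothetical rater labeled respectful (1) or not (0).

```python
import math
import re
from collections import Counter

def featurize(text):
    """Bag-of-words counts for one utterance."""
    return Counter(re.findall(r"[a-z']+", text.lower()))

def sigmoid(z):
    z = max(-30.0, min(30.0, z))  # clip to avoid overflow in math.exp
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(examples, epochs=200, lr=0.5):
    """Fit one weight per word with stochastic gradient descent on the log loss.

    examples: list of (text, label), where label 1 = rated respectful, 0 = not.
    """
    weights = Counter()  # unseen words read as weight 0
    bias = 0.0
    for _ in range(epochs):
        for text, label in examples:
            feats = featurize(text)
            p = sigmoid(bias + sum(weights[w] * c for w, c in feats.items()))
            error = label - p  # gradient of the log-likelihood with respect to the logit
            bias += lr * error
            for w, c in feats.items():
                weights[w] += lr * error * c
    return weights, bias

def predict_proba(weights, bias, text):
    """Estimated probability that a rater would call `text` respectful."""
    return sigmoid(bias + sum(weights[w] * c for w, c in featurize(text).items()))

# Invented training examples with hypothetical human labels.
examples = [
    ("thank you sir drive safe", 1),
    ("sorry to stop you ma'am", 1),
    ("thanks for your patience", 1),
    ("hands on the wheel bro", 0),
    ("do not move dude", 0),
    ("just give me the license", 0),
]
weights, bias = train_logistic(examples)
print(round(predict_proba(weights, bias, "thank you so much"), 2))
```

With real data, the labeled set would be far larger, and analysts would hold part of it out to check how well the learned weights generalize.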

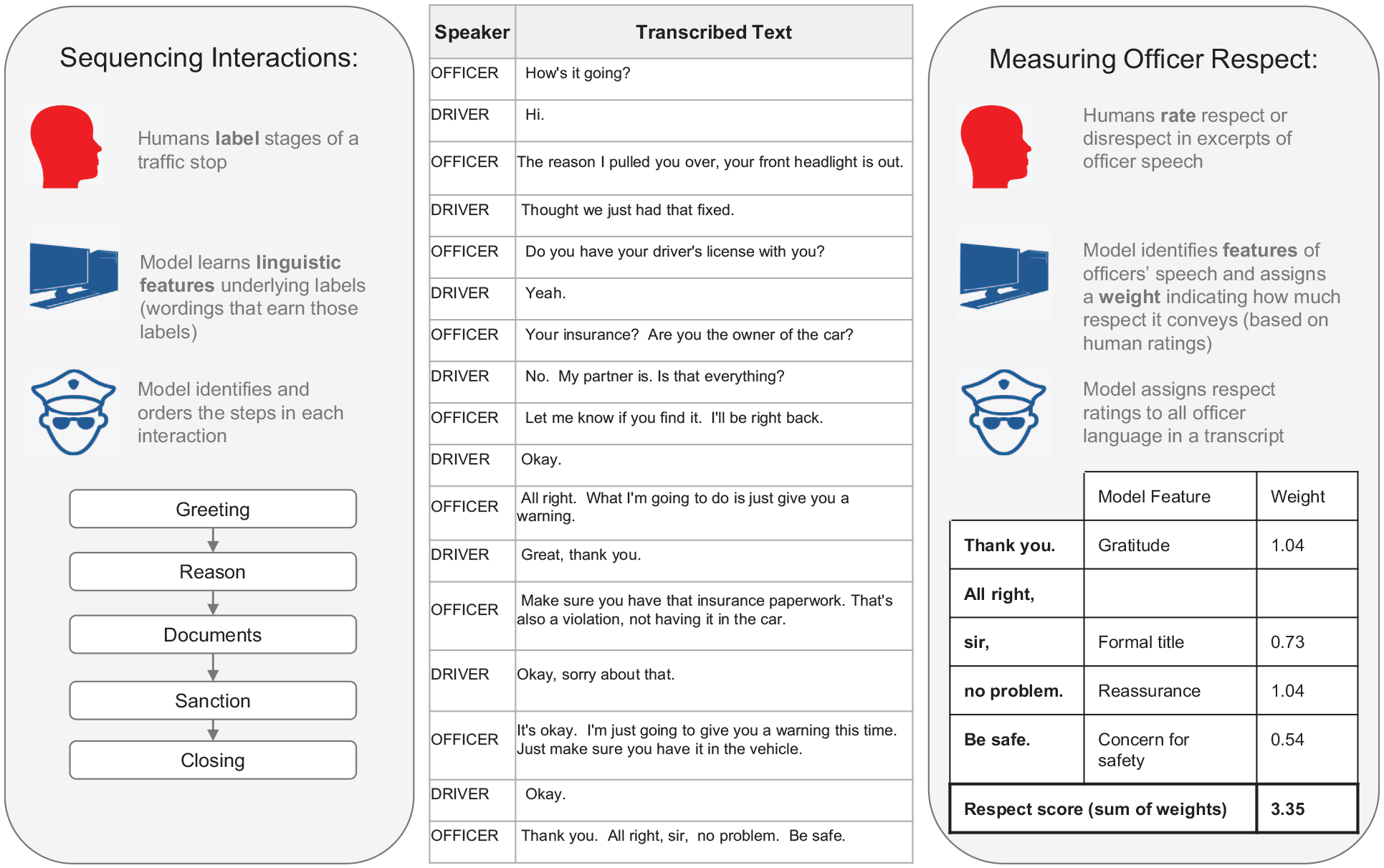

The result of this training process is a language model: an algorithm that can produce outputs automatically when new text is fed in. Although natural language processing has not yet been applied widely to collections of body camera recordings, we next share two examples of how it has been used in the context of policing. In one example, the analysis revealed the tenor of officers’ communication with citizens they stopped, measuring signs of respect and disrespect. In the other example, the technology outlined the structure of traffic stops, picking up harbingers of escalation (see Figure 1).

Figure 1. Examples of natural language processing of a transcript of body camera footage

Measuring Officer Respect

Decades of research attest to the importance of respect in policing.9–11 On the one hand, when citizens are treated with respect by police, they are more likely to respect and trust officers and judge the officers’ behavior as being fair. Increased trust also makes cooperation more likely. On the other hand, if police are rude or disrespectful, citizens are less likely to respect, trust, and cooperate with them.

Assessing officers’ expressed respect for the citizens they encounter in the course of their job may not be possible from police reports or other departmental records of these encounters. Supervisors and others could ride along with officers and observe them directly, but doing so is costly and impractical at a large scale, and the presence of observers may unduly influence officers’ behavior. Body camera footage can provide a more efficient and less biased window into how officers communicate with the public.

In the first application of natural language processing to body camera footage, the two of us and our colleagues examined racial disparities in the respectfulness of officer language during routine traffic stops in Oakland, California. 12 First, human raters judged the respectfulness evident in brief excerpts from transcripts of the stops. Our language model then correlated these respect ratings with different language features—from apologizing and expressing concern for the driver (correlated with high respect ratings) to using informal titles and negative words (correlated with low respect ratings)—and gave the features numerical values, or weights, to indicate how much respect they conveyed.
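The weighting step can be sketched as a linear regression, assuming three hypothetical feature lexicons and toy ratings: each utterance becomes a vector of feature counts, and ordinary least squares finds the weight for each feature. The toy ratings were constructed so the fit recovers round numbers; a real analysis would use many more features and noisy ratings from several raters.

```python
import re

# Hypothetical lexicons; each feature counts how many tokens fall in its word set.
LEXICONS = {
    "apology": {"sorry", "apologize"},
    "concern": {"safe", "careful"},
    "informal_title": {"bro", "dude", "buddy"},
}

def features(text):
    """Count lexicon hits per feature, in LEXICONS order."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return [float(sum(t in words for t in tokens)) for words in LEXICONS.values()]

def solve(a, b):
    """Solve the small linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def fit_weights(utterances, ratings):
    """Ordinary least squares via the normal equations; returns [intercept, w1, w2, ...]."""
    rows = [[1.0] + features(u) for u in utterances]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, ratings)) for i in range(k)]
    return solve(xtx, xty)

utterances = [
    "Sorry about the wait.",
    "Drive safe tonight.",
    "Hey buddy, hold on.",
    "License, please.",
    "Sorry, buddy. Drive safe.",
]
ratings = [4.0, 3.5, 2.0, 3.0, 3.5]  # toy averages of hypothetical rater scores
intercept, *weights = fit_weights(utterances, ratings)
print(round(intercept, 3), dict(zip(LEXICONS, (round(w, 3) for w in weights))))
```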

Equipped with this set of linguistic features and their weights, we used the natural language processing model to assign an estimated respect score to all officer language from a month of traffic stops—amounting to 36,738 utterances. We then conducted a statistical analysis to associate these scores with variables such as the race of the person stopped, the race of the officer, and the outcome of the interaction (warning, citation, or arrest).
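Scoring at scale then means applying the weights to every utterance and summarizing by the variables of interest. The sketch below assumes hypothetical word-level weights and invented records with neutral placeholder group labels. Comparing unadjusted means within outcome categories is only a crude stand-in for a statistical model that controls for officer race, outcome, and other factors at once.

```python
import re
from collections import defaultdict
from statistics import mean

# Hypothetical fitted values: an intercept plus word-level weights.
INTERCEPT = 3.0
WEIGHTS = {"sorry": 1.0, "safe": 0.5, "buddy": -1.0}

def respect_score(text):
    """Estimated respect for one utterance: intercept plus the weights of its words."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return INTERCEPT + sum(WEIGHTS.get(t, 0.0) for t in tokens)

def mean_score_by(records, key):
    """Average utterance scores within groups defined by key(record)."""
    groups = defaultdict(list)
    for rec in records:
        groups[key(rec)].append(respect_score(rec["text"]))
    return {k: mean(v) for k, v in groups.items()}

# Invented officer utterances with placeholder group labels.
records = [
    {"driver_group": "A", "outcome": "warning", "text": "Sorry for the wait, drive safe."},
    {"driver_group": "A", "outcome": "citation", "text": "Here is your citation."},
    {"driver_group": "B", "outcome": "warning", "text": "Hold on, buddy."},
    {"driver_group": "B", "outcome": "citation", "text": "Sign here."},
]
by_group = mean_score_by(records, key=lambda r: r["driver_group"])
by_group_and_outcome = mean_score_by(records, key=lambda r: (r["driver_group"], r["outcome"]))
print(by_group)  # {'A': 3.75, 'B': 2.5}
```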

By scoring respect at scale, we were able to “zoom out” to account for the influence of these variables. We found that police officers were significantly less respectful when speaking to a Black driver than to a White one, regardless of the race of the officer or the severity of the infraction. And because every line in a transcript could be assigned a score, we were also able to “zoom in” to observe levels of respect across the time course of stop interactions. For example, racial disparities in respect showed up right away—usually in the first 5% of an interaction, before the driver had a chance to say much—suggesting that the disparate treatment may start with the officer and is not solely a reaction to the citizen’s response to being stopped.
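The “zoom in” view depends on placing each scored utterance on a common time axis. One simple way, sketched here under the assumption that each utterance carries a timestamp and an already computed score, is to express its time as a fraction of the stop's duration and pool scores by bin; with 20 bins, bin 0 covers the first 5% of each stop. Computing these curves separately for each group of drivers would show whether a gap appears in the opening bin.

```python
from collections import defaultdict
from statistics import mean

def position_bins(stop, n_bins=20):
    """Assign each scored utterance to a bin by its relative time within the stop.

    stop: dict with "start" and "end" (seconds, end > start) and
    "utterances": list of (time, score) with start <= time <= end.
    """
    duration = stop["end"] - stop["start"]
    bins = defaultdict(list)
    for t, score in stop["utterances"]:
        frac = (t - stop["start"]) / duration
        b = min(int(frac * n_bins), n_bins - 1)  # the final instant goes in the last bin
        bins[b].append(score)
    return bins

def mean_by_bin(stops, n_bins=20):
    """Pool scores across stops and average them within each relative-time bin."""
    pooled = defaultdict(list)
    for stop in stops:
        for b, scores in position_bins(stop, n_bins).items():
            pooled[b].extend(scores)
    return {b: mean(s) for b, s in sorted(pooled.items())}

# Invented stops: utterance times in seconds with hypothetical respect scores.
stops = [
    {"start": 0, "end": 200, "utterances": [(5, 2.0), (150, 4.0)]},
    {"start": 100, "end": 300, "utterances": [(105, 3.0), (299, 4.0)]},
]
print(mean_by_bin(stops))  # {0: 2.5, 15: 4.0, 19: 4.0}
```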

On the basis of our findings, the Oakland Police Department offered concrete suggestions to its officers, instructing them, for instance, to offer apologies for the stop, thank the driver for being cooperative, and show concern for a driver’s safety—expressions that were given high respect ratings in our model. We then used the same natural language processing tools to detect whether officers followed these recommendations in the field. We found that they did. Officers used more of the suggested techniques in stops after the training than they had before it. 13 Body camera footage could be used to evaluate the success of many other programs designed to improve police–community interactions.
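Checking whether officers adopted such recommendations can reuse simple detectors. The sketch below assumes hypothetical regular expressions for three of the recommended expressions and invented stop dates, and it compares the share of stops using each expression before and after a training date. A real evaluation would also need to account for changes in the mix of stops over the same period.

```python
import re
from datetime import date

# Hypothetical detectors for three recommended expressions.
TECHNIQUES = {
    "apology": re.compile(r"\b(sorry|apologi[sz]e)\b", re.IGNORECASE),
    "thanks": re.compile(r"\bthank(s| you)\b", re.IGNORECASE),
    "safety": re.compile(r"\b(drive|get home|stay) safe(ly)?\b", re.IGNORECASE),
}

def techniques_used(officer_text):
    """Names of the techniques whose pattern appears in the officer's speech."""
    return {name for name, pattern in TECHNIQUES.items() if pattern.search(officer_text)}

def adoption_rates(stops, training_date):
    """Share of stops using each technique before vs. on or after training_date.

    stops: list of (stop_date, all officer speech in the stop as one string).
    """
    counts = {period: {t: 0 for t in TECHNIQUES} for period in ("before", "after")}
    totals = {"before": 0, "after": 0}
    for stop_date, officer_text in stops:
        period = "before" if stop_date < training_date else "after"
        totals[period] += 1
        for t in techniques_used(officer_text):
            counts[period][t] += 1
    return {
        period: {t: (n / totals[period] if totals[period] else 0.0) for t, n in by_t.items()}
        for period, by_t in counts.items()
    }

# Invented stops and training date.
stops = [
    (date(2019, 1, 5), "License and registration."),
    (date(2019, 1, 9), "Thanks for waiting. Drive safe."),
    (date(2019, 6, 2), "Sorry for the stop. Thank you for your patience."),
    (date(2019, 6, 3), "Sorry about that, drive safely."),
]
rates = adoption_rates(stops, training_date=date(2019, 3, 1))
print(rates["before"]["apology"], rates["after"]["apology"])  # 0.0 1.0
```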

Sequencing Traffic Stops

The application of natural language processing to body camera footage can also shed light on the sequence and timing of events that occur during police interactions because the technology can reconstruct the chain of events from officers’ language. For example, the software can find specific phrases that characterize and mark the function of each stage of a traffic stop, such as “Can I see your license?” or “I’m going to give you a warning.” In this way, the software can accurately reconstruct the sequence of each stop, akin to the storyboard of a film.14,15
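A minimal storyboarding sketch, assuming hypothetical regular expressions for five stages of a traffic stop: each officer utterance is labeled with the first stage whose pattern it matches, and consecutive repeats collapse into a single step. The patterns are illustrations only; real systems need far more robust stage detection than a handful of phrases.

```python
import re

# Hypothetical stage patterns, checked in this order for each utterance.
STAGE_PATTERNS = [
    ("greeting", re.compile(r"\b(hi|hello|good (morning|afternoon|evening))\b", re.IGNORECASE)),
    ("reason", re.compile(r"\b(reason|pulled you over|stopped you)\b", re.IGNORECASE)),
    ("documents", re.compile(r"\b(license|registration|insurance)\b", re.IGNORECASE)),
    ("outcome", re.compile(r"\b(warning|citation|ticket)\b", re.IGNORECASE)),
    ("farewell", re.compile(r"\b(have a (good|nice)|drive safe)\b", re.IGNORECASE)),
]

def label_stage(utterance):
    """Return the first matching stage name, or None if no pattern matches."""
    for stage, pattern in STAGE_PATTERNS:
        if pattern.search(utterance):
            return stage
    return None

def storyboard(officer_utterances):
    """Ordered stages of a stop, with consecutive repeats collapsed."""
    board = []
    for u in officer_utterances:
        stage = label_stage(u)
        if stage and (not board or board[-1] != stage):
            board.append(stage)
    return board

stop = [
    "Good evening.",
    "I stopped you because your taillight is out.",
    "Can I see your license?",
    "Thanks.",
    "Registration too, please.",
    "I'm going to give you a warning.",
    "Have a good night.",
]
print(storyboard(stop))  # ['greeting', 'reason', 'documents', 'outcome', 'farewell']
```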

A play-by-play analysis of storyboarded footage can show, for example, whether an officer resorted to the use of force and, if so, how quickly and how much force was used.8,16 One manual play-by-play analysis of such footage found that the use of force occurred relatively early in most interactions and more quickly against Black individuals and males. 8 Storyboarding can also reveal signs of potential escalation. In a recent study, researchers used natural language processing to analyze body camera footage of 577 stops of Black drivers. They found two police behaviors—issuing commands to the driver or failing to disclose the reason for the stop—that, when they occurred in the first moments of traffic stops of Black men, were more likely to lead to an escalated outcome such as search, handcuffing, or arrest. 17
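The cited study's exact definitions are not reproduced here, so the following is only a hypothetical approximation of the idea: it treats the first three officer utterances as the opening of a stop, flags a command or a stated reason with simple patterns, and tabulates how often stops with and without each flag ended in an escalated outcome.

```python
import re

# Hypothetical cues; not the cited study's definitions.
COMMAND = re.compile(r"^(turn|step|put|get|keep|show|hand|roll|don't|do not)\b", re.IGNORECASE)
REASON = re.compile(r"\b(reason|pulled you over|stopped you)\b", re.IGNORECASE)

def early_flags(officer_utterances, window=3):
    """Flag a command or a stated reason within the first `window` officer utterances."""
    opening = [u.strip() for u in officer_utterances[:window]]
    return {
        "command_early": any(COMMAND.search(u) for u in opening),
        "reason_early": any(REASON.search(u) for u in opening),
    }

def escalation_rate_by_flag(stops, flag):
    """stops: list of (officer_utterances, escalated). Escalation rate with and without the flag."""
    tally = {True: [0, 0], False: [0, 0]}  # flag value -> [escalated stops, all stops]
    for utterances, escalated in stops:
        value = early_flags(utterances)[flag]
        tally[value][0] += int(escalated)
        tally[value][1] += 1
    return {value: (esc / n if n else None) for value, (esc, n) in tally.items()}

# Invented stops; escalated = search, handcuffing, or arrest.
stops = [
    (["Put your hands on the wheel.", "License."], True),
    (["Step out of the car."], False),
    (["Hi, I stopped you for speeding.", "License, please."], False),
]
print(escalation_rate_by_flag(stops, "command_early"))  # {True: 0.5, False: 0.0}
```

Such raw rates show association only; the published analysis would need controls before any claim about escalation could be made.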

A record of how events unfold can also indicate whether officers’ conversations with members of the community begin with trust or suspicion. 18 Consider again a traffic stop. One officer might routinely conduct traffic stops by first telling drivers why they were stopped, asking for their license, and then issuing a ticket, whereas another officer might disclose the reason for the stop only when a ticket is issued. These conversational paths lead to the same outcome, but the latter is less transparent and more likely to elicit concern among community members.17,19 There would be no way to distinguish between these paths in typical traffic stop data records, which report only the legal outcome of the stop.
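Given an ordered list of stage labels for each stop, produced by a storyboarding step like the one described earlier, the two paths can be told apart mechanically. In this sketch the stage names, officer IDs, and stops are hypothetical; note also that if the reason and the ticket are mentioned in the same utterance, a one-label-per-utterance scheme would need extra care.

```python
from collections import Counter, defaultdict

def disclosure_path(stages):
    """Classify when the reason for a stop was given, from an ordered list of stage labels."""
    if "reason" not in stages:
        return "never"
    if "outcome" not in stages:
        return "reason_without_outcome"
    if stages.index("reason") < stages.index("outcome"):
        return "before_outcome"
    return "at_or_after_outcome"

def paths_by_officer(stops):
    """Tally disclosure paths per officer; stops is a list of (officer_id, stages)."""
    tally = defaultdict(Counter)
    for officer_id, stages in stops:
        tally[officer_id][disclosure_path(stages)] += 1
    return tally

stops = [
    ("officer_1", ["reason", "documents", "outcome"]),
    ("officer_2", ["documents", "outcome", "reason"]),
    ("officer_2", ["documents", "outcome"]),
]
print(dict(paths_by_officer(stops)))
```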

Observers could sequence police interactions 20 and identify expressions of respect 21 by personally reviewing body camera footage.22,23 However, the scale at which natural language processing can storyboard stops offers the possibility that departments could continually monitor, for example, whether and when officers share the reason for a traffic stop with drivers. Such monitoring could offer data-driven ideas for addressing important questions of equity and efficacy in policing, for avoiding unnecessary escalation in police–community interactions, and for increasing citizens’ trust in and respect for police in general.

Advice for Collecting & Analyzing Body Camera Data

For these reasons, we recommend that police departments use natural language processing to analyze body camera footage at scale. More than 30 police departments, including New York City’s, have begun to do so, partnering with companies such as TRULEO that include natural language processing in their footage analysis software. 24 TRULEO software scans footage and tags recorded utterances with labels like “high professionalism,” “de-escalation attempts,” and “high composure.” 25 Two police departments using this software have seen modest improvements in the professionalism of officers, according to preliminary data. 25 Another company, Polis Solutions, offers TrustStat, a product that analyzes police interactions “following the same patterns that human expert[s] use.” 26 These software programs represent the first commercial forays into natural language processing of body camera footage and are aimed at making the process practical for use in a wide range of police departments.

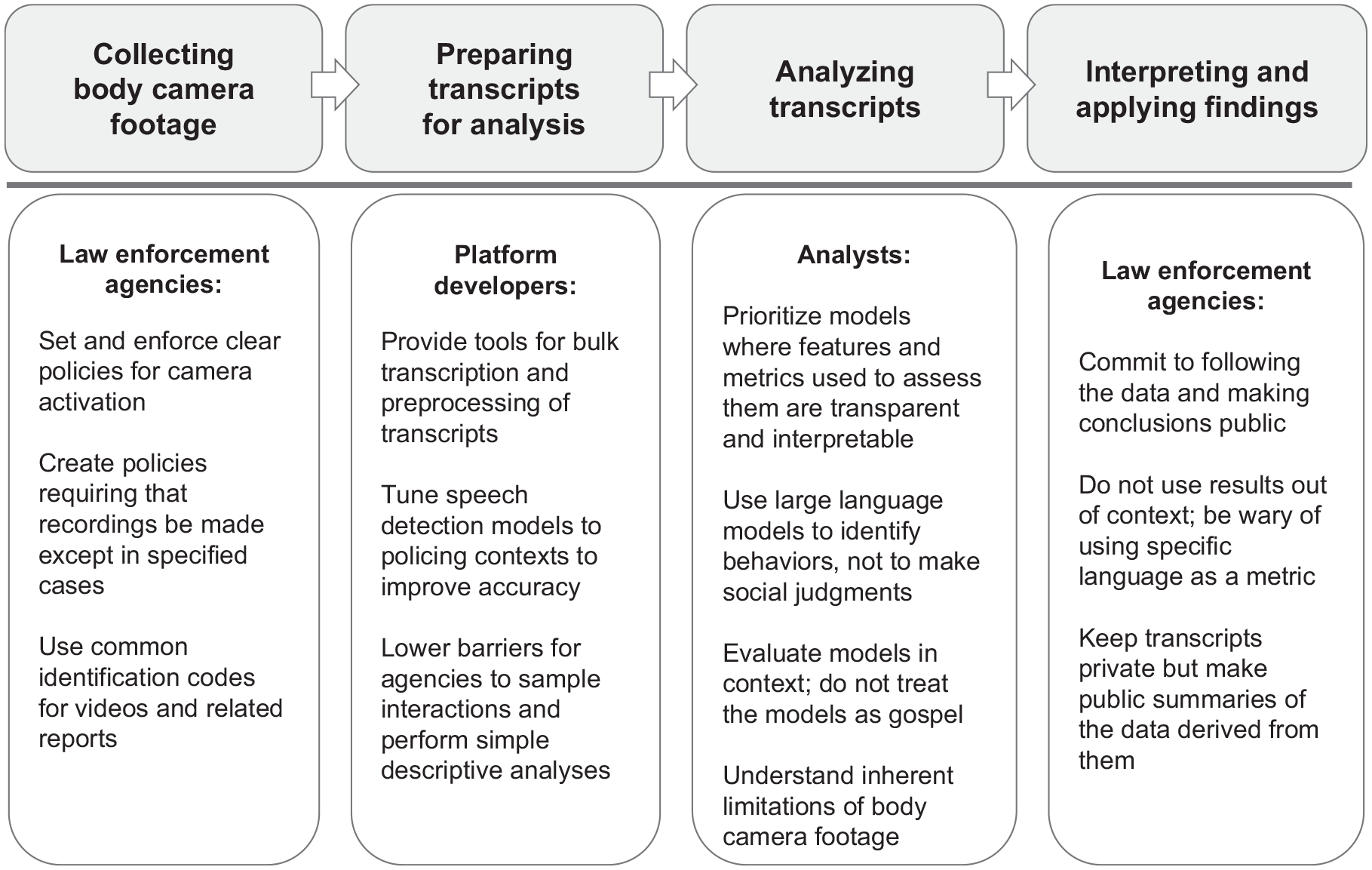

The growing business interest in body camera analytics recalls initial enthusiasm for body cameras as deterrents to bad police behavior and recordings as courtroom evidence. As it turns out, the civilizing benefits of the devices21,27 and the evidentiary value of their videos28–30 depend a great deal on body camera implementation. For instance, if officers get to decide whether to turn on their body cameras, they are unlikely to do so in the very situations in which a camera would be most useful for both purposes. What is more, even videos can introduce bias, depending on the camera angle and courtroom instructions. A footage-as-data approach comes with its own practical, analytic, and social considerations at each step of what we call the data pipeline, which encompasses the collection of footage, its transcription, analysis of those transcripts, and use of the results in guiding policy (see Figure 2).

Figure 2. Recommendations for law enforcement agencies, developers of video storage platforms, & data analysts across the data pipeline

For each step of the pipeline, we provide recommendations that collectively should enable policymakers, law enforcement agencies, researchers, and communities to capitalize on body camera data. Some of our suggestions apply to police departments, which determine how body cameras are deployed, how recordings are managed, and how data are used within the organization and shared outside of it. Others pertain to the developers of the software that is used to store, organize, and transcribe footage. We provide additional advice for data analysts—who may work for police departments or as outside criminologists—charged with choosing or writing natural language processing programs and using them to draw conclusions and make recommendations to law enforcement.

Police Departments: Use Cameras Consistently

Police department policies concerning body cameras and their data greatly influence the value that can be derived from body camera footage. The influence begins with the rules about when and whether body cameras must be turned on. One Bureau of Justice Statistics survey found that more than one quarter of law enforcement agencies that used body cameras lacked a policy specifying which events officers were required to record. 1 In the agencies that have policies, rules may be loose (say, deferring to officers’ judgment on when they should activate their cameras) or may specify only a narrow set of conditions in which recording is required. 21

Any of these scenarios can limit the usefulness of body camera footage as data by creating a biased sample from which to draw conclusions. Bias can arise because interactions that are not recorded cannot be analyzed and because the circumstances in which officers decide to activate their cameras may differ from those in which they choose not to. For these and other reasons, the U.S. Department of Justice recommends that police departments make recording mandatory during law enforcement activities, except in circumscribed cases—for example, sensitive interviews with victims or informants—and even then, officers should be required to document why they turned off the camera. 31

How recordings are stored in management systems can also affect the usefulness of body camera footage. When uploading body camera footage, officers typically log into the system using their ID, and the recordings are all time-stamped. However, identifying footage with just a time and an officer ID is often insufficient for later matching videos to incident reports or for comparing similar kinds of police interactions. Adding a common identification code that links footage to related data and other footage could save hours otherwise spent sorting through videos trying to figure out which video goes with which incident. For instance, the officer uploading footage might be required to enter in the footage management system the same incident number that appears on the police report for that incident, or to apply a label to the recording that enables it to be compared with similar kinds of encounters. Adding these labels also makes it easier for police departments to ensure compliance with their own policies regarding body camera activation, allowing them to identify incident reports that lack an associated recording.
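With a shared incident number in place, checking activation compliance becomes a simple join. The sketch assumes hypothetical record layouts (dictionaries with `incident_id` and `type` fields) and invented records. Comparing recording rates across incident types is also one way to spot the biased sampling described above: if some kinds of incidents are recorded much less often than others, conclusions drawn from the footage will not generalize.

```python
from collections import defaultdict

def recorded_ids(footage):
    """Incident numbers attached to uploaded footage (untagged uploads are ignored)."""
    return {f["incident_id"] for f in footage if f.get("incident_id")}

def unrecorded_reports(reports, footage):
    """Incident reports with no footage carrying the same incident number."""
    recorded = recorded_ids(footage)
    return [r for r in reports if r["incident_id"] not in recorded]

def recording_rate_by_type(reports, footage):
    """Share of incident reports of each type that have linked footage."""
    recorded = recorded_ids(footage)
    tally = defaultdict(lambda: [0, 0])  # incident type -> [reports with footage, all reports]
    for r in reports:
        tally[r["type"]][0] += r["incident_id"] in recorded
        tally[r["type"]][1] += 1
    return {t: with_footage / total for t, (with_footage, total) in tally.items()}

reports = [
    {"incident_id": "24-001", "type": "traffic stop"},
    {"incident_id": "24-002", "type": "traffic stop"},
    {"incident_id": "24-003", "type": "use of force"},
]
footage = [
    {"incident_id": "24-001", "officer": "1234"},
    {"incident_id": None, "officer": "5678"},  # uploaded without an incident number
]
print([r["incident_id"] for r in unrecorded_reports(reports, footage)])  # ['24-002', '24-003']
print(recording_rate_by_type(reports, footage))  # {'traffic stop': 0.5, 'use of force': 0.0}
```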

Developers of Video Storage Platforms: Lower Barriers to Using Recordings as Data

Systems or platforms designed to store body camera recordings for evidence purposes could be adapted to also handle footage as data. Automatic transcription of the audio would be one valuable feature. The most widely used platform—Axon Enterprise’s evidence management system, Evidence.com—offers a transcription feature, but it is most practical when applied to individual videos, not to large-scale transcription. And, in general, technical challenges remain. Automated transcription is prone to inaccuracies. It is particularly bad at identifying who is speaking. Other errors may stem from poor recording quality, given the noisy environments police often operate in. What is more, the microphone is mounted on the officer’s chest, so recording (and transcription) will be more accurate for officers than for the people they are interacting with. Machine transcription may also be less accurate for certain dialects or accents, which could introduce additional errors when interactions involve community members who use speech conventions and pronunciations that differ from “standard” classroom English, such as African American Vernacular English. In a recent comparison of popular automated speech recognition systems, average word error rates were almost twice as high for Black speakers as for White speakers. 32
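Word error rate, the measure behind comparisons like the one just cited, is the word-level edit distance between a human reference transcript and the machine output, divided by the number of reference words. A minimal version follows; it assumes both transcripts are already normalized (lowercase, no punctuation), and the group labels in the usage lines are placeholders.

```python
from collections import defaultdict
from statistics import mean

def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j]: edit distance between the reference words processed so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(prev[j] + 1,         # reference word deleted
                          curr[j - 1] + 1,     # extra word inserted
                          prev[j - 1] + cost)  # substitution, or a match when cost is 0
        prev = curr
    return prev[-1] / len(ref)

def mean_wer_by_group(samples):
    """samples: (group, human_reference, machine_transcript) triples."""
    by_group = defaultdict(list)
    for group, ref, hyp in samples:
        by_group[group].append(word_error_rate(ref, hyp))
    return {g: mean(v) for g, v in by_group.items()}

print(word_error_rate("can i see your license", "can i see you license"))  # 0.2
```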

Developers of transcription software could tune their products to police work to improve their accuracy, optimizing them for, say, phrases that police use frequently and providing contextual clues for interpreting sounds that may occur during an interaction, such as laughter, screaming, and other nonspeech sounds. With these and other technical advances, the transcription of speech recorded by body cameras might eventually become fully automated, eliminating the need for manual transcription to prepare videos for language analysis. 15

A larger challenge is adding tools to video storage platforms that enable people without sophisticated programming skills, whether they work in a police department or outside it (in, say, an oversight capacity), to extract large numbers of whole or partial transcripts for analysis. Because the current platforms were built for the purpose of using recordings as evidence, not data, a user can request a transcript for a specific recording, but the platforms generally do not allow users to easily sample interactions and retrieve transcripts at scale. Doing so requires users to navigate the backend of the video management system, an intimidating prospect for a nonexpert. Enhancing platforms by adding tools that enable the easy sampling and transcription of text and even some rudimentary analysis of it could significantly lower the barriers to using this footage for research, civilian oversight, or police department decision-making. For example, a user wanting to analyze how officers are interacting with people at traffic stops or the circumstances in which officers use force could select recordings with a common tag—say, “traffic stop” or “use of force”—within the platform, easily transcribe the footage in bulk, and then compute simple analyses of expressed sentiments or flag key phrases.
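The tag-based workflow might look like the following sketch, assuming a platform that exposes recordings as records with a set of tags and a transcript. The record layout, the lexicons, and the net-sentiment measure are hypothetical illustrations, not features of any existing platform. Fixing the random seed makes the sample reproducible, which matters if others are to check the analysis.

```python
import random
import re

# Hypothetical sentiment lexicons; real work would use validated resources.
POSITIVE = {"thanks", "thank", "appreciate", "safe", "great"}
NEGATIVE = {"stupid", "shut", "liar", "idiot"}

def sample_by_tag(recordings, tag, k, seed=0):
    """Reproducible random sample of up to k recordings that carry `tag`."""
    tagged = [r for r in recordings if tag in r["tags"]]
    return random.Random(seed).sample(tagged, min(k, len(tagged)))

def net_sentiment(transcript):
    """Positive minus negative lexicon hits: a deliberately crude measure."""
    tokens = re.findall(r"[a-z']+", transcript.lower())
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)

recordings = [
    {"id": 1, "tags": {"traffic stop"}, "transcript": "Thanks, drive safe."},
    {"id": 2, "tags": {"use of force"}, "transcript": "Stop resisting."},
    {"id": 3, "tags": {"traffic stop"}, "transcript": "Shut up and sign it."},
]
for rec in sample_by_tag(recordings, "traffic stop", k=10):
    print(rec["id"], net_sentiment(rec["transcript"]))
```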

Data Analysts: Put Transparency First

Data analysts are needed to perform bulk analyses of video data sets. These experts have a large and expanding toolbox of natural language processing techniques at their disposal for this task. When analyzing body camera footage, they may be tempted to deploy the most cutting-edge techniques, but exactly how these techniques derive their conclusions can be obscure. Transparency should trump predictive power in the choice of analytic models. Given the importance and sensitivity of this type of data, it is critical to understand the models’ limitations.

The natural language processing technologies available to data analysts vary in their transparency. At one end are simple pattern-matching methods, which involve searching text for certain words (from a list) or sequences of words. They are very transparent in how they work but have the potential to miss occurrences of searched-for events because of the variability in people’s everyday speech. For instance, an officer might communicate that a driver is being stopped for speeding by using any of countless phrases, such as “I caught you going 50 in a 40 zone” or “Whoa, where’s the fire, buddy?” or “You are being stopped for excessive speed.” It is hard to come up with an exhaustive set of words to reflect all ways of explaining the same offense.
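The speeding example can be made concrete with a hypothetical regular expression. It catches the explicit phrasings but not the idiom, and adding a rule for every idiom quickly becomes unmanageable.

```python
import re

# A hypothetical pattern for explanations of a speeding stop.
SPEEDING = re.compile(r"\b(speed(ing)?|\d+ in a \d+)\b", re.IGNORECASE)

utterances = [
    "I caught you going 50 in a 40 zone",
    "Whoa, where's the fire, buddy?",
    "You are being stopped for excessive speed.",
]
for u in utterances:
    print(bool(SPEEDING.search(u)), u)
# True, False, True: the idiom in the middle escapes the pattern
```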

At the other extreme are large language models, artificial intelligence programs that learn to recognize and interpret human language after being fed large amounts of text that serve as examples. These models are better at capturing the underlying meaning across different statements because they pick up on co-occurrences of words in huge amounts of text. They also interpret a broader set of relationships between words than one could hard code into a dictionary, enabling them to achieve moderate accuracy at even complex tasks involving the social nuances of language. 33 However, the models’ architecture makes it challenging to discern how they arrive at their predictions and can mask biases in the models.

For instance, large language models often reproduce social biases present in the text they are trained on. In one case, a model expressed biased assumptions about a person’s occupation that were based on the person’s gender. 34 In another, it showed a preference for White over Hispanic applicants in hiring decisions. 35 A number of these large language models harbor persistent stereotypes involving associations between race and criminality, race and weapons, gender and scientific aptitude, and advanced age and negativity. 36

To mitigate such issues, analysts who use traditional machine learning should be able to show how they derived each feature specified in the model and should provide their model weights; at a minimum, other analysts should be able to replicate the process of building the model, even if the model itself is opaque. 37 Using open-source (freely available) code instead of commercial language models may also be necessary because open-source code can be examined, whereas the internal architecture of commercial models is often undisclosed. (A lack of privacy may preclude the use of commercial large language models in any case, because any data put into one may become the property of the company that built the model, which may use the data in unanticipated ways.)
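For a lexicon-based model, one concrete way to meet this standard is to publish the feature definitions and weights in a plain, machine-readable format that anyone can use to recompute scores. The JSON layout below is a hypothetical example, not an established standard, and the lexicons and weights are invented.

```python
import json
import re

def model_spec_json(lexicons, weights, intercept):
    """Serialize feature definitions and weights so others can re-run the scoring."""
    spec = {
        "intercept": intercept,
        "features": {
            name: {"words": sorted(words), "weight": weights[name]}
            for name, words in lexicons.items()
        },
    }
    return json.dumps(spec, indent=2, sort_keys=True)

def score_with_spec(spec_json, text):
    """Recompute a score from a published spec, without access to the original code."""
    spec = json.loads(spec_json)
    tokens = re.findall(r"[a-z']+", text.lower())
    score = spec["intercept"]
    for feature in spec["features"].values():
        count = sum(t in feature["words"] for t in tokens)
        score += feature["weight"] * count
    return score

spec = model_spec_json(
    {"apology": {"sorry"}, "informal_title": {"bro", "buddy"}},
    {"apology": 1.0, "informal_title": -1.0},
    intercept=3.0,
)
print(spec)
print(score_with_spec(spec, "Sorry, buddy."))  # 3.0
```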

One middle path is illustrated by the examples we have shared of supervised learning to label respect and discern sequences of events during stops. In this approach, humans add labels to a training data set by reading transcripts and judging how various linguistic features, such as reassurance or concern, match up with their intuitive perceptions of officers’ language in specific instances and tagging the text accordingly. After training on this labeled data set, the algorithm searches for those features in unlabeled transcripts, generating data to answer the question at hand: in this case, whether officers communicate more respectfully with White drivers than with Black drivers. This method can capture more abstract aspects of interactions, such as whether officers provided implicit reasons for their actions, than can be identified from word lists or pattern matching alone. Ultimately, this approach helps ensure that model predictions can be readily understood and discussed with the police officers and community members affected by recommendations resulting from an analysis.

This transparency is all the more important when models aim to capture qualities such as “respect” or “fairness” that may not mean the same thing to everyone. Variations in how individuals interpret language could even be incorporated into the model itself.38,39 Tasks requiring social knowledge are a significant challenge for large language models. 33 We believe these models are better suited to identifying specific behaviors or utterances, such as the phrases we mentioned in the Sequencing Traffic Stops section. Regardless of which modeling approach analysts use, they should critically evaluate their model’s results instead of taking them as gospel. Evaluation in the specific context of use is especially important. A model trained on traffic stops may not be useful for analyzing 911 responses; one trained in Oakland may not translate to Baltimore. Analysts must conduct a rigorous empirical evaluation of any new model—or any newly applied model originally trained for a different context—to determine the degree to which it actually captures the desired output in its context.
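Context-specific evaluation can be as simple as having people hand-label a fresh sample from the new setting and scoring the model's output against those labels. The sketch computes precision, recall, and F1 for a binary detector (for instance, "did the officer state the reason for the stop?"); the two contexts and their labels are invented for illustration.

```python
def evaluate(predictions, gold):
    """Precision, recall, and F1 of binary predictions against human labels."""
    if len(predictions) != len(gold):
        raise ValueError("predictions and labels must be the same length")
    tp = sum(p and g for p, g in zip(predictions, gold))
    fp = sum(p and not g for p, g in zip(predictions, gold))
    fn = sum(g and not p for p, g in zip(predictions, gold))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}

# Invented (model output, human label) pairs from two settings.
contexts = {
    "training context": ([True, True, False, False, True], [True, True, False, False, True]),
    "new context": ([True, True, False, False], [True, False, True, False]),
}
for name, (pred, gold) in contexts.items():
    print(name, evaluate(pred, gold))
```

A detector that looks flawless where it was built can perform much worse elsewhere, which only a fresh labeled sample will reveal.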

Other limitations in the accuracy of any data analysis do not involve the model but rather what is put into it. Body camera footage varies in quality, and transcripts reflect only what is said, leaving out potentially critical visual information needed to interpret that language, such as whether a citizen is, in fact, resisting arrest or complying with a lawful order. Analysts need to be aware of these imperfections so that they understand how any inferences from them may be limited and can convey these caveats to others.

When people watch body camera recordings, they may feel as though they are getting the full picture of police encounters, a neutral view of what really happened. The reality is that recordings are the products of decisions individuals, organizations, and platforms make, and no all-purpose method for analyzing them exists.

Avoiding Traps: Politics, Perverse Incentives, & Privacy Violations

Putting body camera analysis to use for improving policing presents another set of potential problems and concerns. These issues include police departments’ reluctance to uncover information that may reflect badly on their officers, misuse of data, and threats to privacy.40–42

Body camera footage, like all data, does not speak for itself; the insights it yields depend on what is asked of it. The questions police departments would ask likely differ from those civilian agencies or elected officials might pose. As we have shown in our examples, police departments can use body camera footage to surface hard truths, such as systematically different treatment of minority groups, and address them. But not all departments and police officers want to examine their weaknesses. As a result of such reluctance, at least two police departments stopped doing body camera analytics when the generated results portrayed them in an unflattering light. 43 If law enforcement agencies demand favorable results, industry may pivot to supply them. For example, TRULEO’s Virtual Public Information Officer selects positive interactions for agencies to share with the public. 44

If law enforcement agencies truly intend to use footage as data, they should commit to following the data wherever they lead, even when—indeed, because—they may reveal unpleasant truths. In addition, making policing data available benefits a broad range of stakeholders, including law enforcement itself. For example, after Kalven v. City of Chicago made public all allegations of police misconduct in the Chicago Police Department, those records were used as data in a study of the benefits of training police in procedural justice strategies, which build ways of ensuring fairness into procedures. The study showed that training in these strategies—such as treating civilians respectfully, explaining police actions, and giving civilians a chance to give their side of the story—reduced civilian complaints of misconduct. 45 Body camera data could similarly provide evidence for or against the efficacy of new training or other programs.

Although such transparency can benefit science and the public interest, accruing those benefits also requires that the data are properly used and interpreted. History provides no shortage of examples in which metrics (think college rankings in education or body mass index in health) have been decoupled from the qualities they were meant to capture and taken on a life of their own. 46 An overemphasis on any one metric may create a perverse incentive to focus on only the outcomes measured—and rewarded—by that metric. In policing, for example, tying career advancement to the number of arrests made and tickets issued as measures of enforcement activity incentivized more aggressive policing at the expense of other important behaviors not captured by this metric, such as outreach efforts to build rapport and cooperation between communities and the officers serving them. 47 Any one measure captures some aspects of what is to be assessed—whether that be job performance, education quality, or general health—but not all of them.

By recording the relational aspects of policing, body camera footage can widen the scope of behaviors departments use in evaluating officers’ performance. However, a narrow focus on a linguistic metric may nonetheless lead officers to conform to that specific metric without simultaneously adopting the qualities the metric is meant to capture. That is, rewarding officers for saying “sir” or “please” as indicators of respect could encourage them to change the specific words they utter without changing the underlying interactions. At the extreme, stressing the metric may lead to data manipulation by officers. 48 We advise law enforcement supervisors and policymakers applying results from body camera footage to move their focus beyond metrics to the behaviors they represent. If they do, the results could lead them to detect both departmental issues and effective ways of addressing them. 49

As for privacy, making data from recorded police encounters public poses risks, given the many ways that people could be identified from raw footage or transcripts.50,51 Deidentifying a single recording is time-consuming; complete anonymization of footage at scale is likely impossible, although we applaud any technological solutions, such as blurring faces, that are developed to reduce the burden of releasing footage in the long term. How can transparency and privacy be balanced? A straightforward approach would be for agencies to keep body camera videos and transcripts private while publicly releasing the linguistic features—such as expressions of apology, reassurance, and concern—derived from each recording, as we did in our study of racial disparities in officers’ respect for drivers at traffic stops. 12 However, this approach would preclude analysts outside of police departments from identifying additional features from the footage. As one regulatory solution, states could consolidate their law enforcement agencies’ body camera footage into a central clearinghouse. 52 This consolidation could shift some of the cost of storage and management from local law enforcement to the state as well as create a controlled access point for analysts.
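A sketch of this release-the-features approach, assuming hypothetical lexicons and recording identifiers: each recording is reduced to a row of counts, and the transcript text is discarded. Even such rows may need review before release, since identifiers, timestamps, or unusual combinations of values could still point back to specific encounters.

```python
import re

# Hypothetical lexicons for the features to be released.
LEXICONS = {
    "apology": {"sorry", "apologize"},
    "reassurance": {"okay", "fine", "alright"},
    "concern": {"safe", "careful"},
}

def public_feature_row(recording_id, officer_utterances):
    """Per-recording feature counts suitable for release; no transcript text is kept."""
    tokens = [t for u in officer_utterances for t in re.findall(r"[a-z']+", u.lower())]
    row = {"recording_id": recording_id, "n_utterances": len(officer_utterances)}
    for name, words in LEXICONS.items():
        row[name] = sum(t in words for t in tokens)
    return row

row = public_feature_row("r-17", ["Sorry for the wait.", "You're fine, drive safe."])
print(row)
```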

Being Candid on Cameras

Body camera analytics represent the cutting edge of data use in policing. Analyses of large sets of body camera footage can reveal aspects of police interactions that would be unobservable otherwise. Natural language processing can facilitate these observations at scale, taking advantage of the large amounts of body camera footage departments create. Body cameras alone cannot fix flaws in policing, nor can recordings solve the problem of police misconduct. Nevertheless, this novel and powerful source of data has the potential to contribute to more effective and equitable policing if law enforcement leaders heed important lessons for generating, analyzing, and making use of the data.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.