Abstract

Introduction

Nurses use clinical judgment (CJ), or the process of “thinking like a nurse” by recognizing and interpreting a patient's concerns, to guide care decisions. However, nursing students graduate without sufficient CJ for safe nursing practice. A prior investigation found observing simulations leads to significant, but inconsistent CJ gains, depending on learner ability. Observers watched diverse simulation designs with increasing complexity, highlighting the need to understand which conditions best support CJ development.

Objective

This secondary analysis of a prior investigation aims to describe how simulation designs (i.e., scenario topic and situation urgency) and complexity level impact CJ. A secondary aim is to investigate how simulation designs and complexity level impact CJ based on learner ability.

Methods

Sixty-one junior-level baccalaureate students participated in the study over a semester. Participants observed eight expert-modeled simulations, each focused on medical-surgical or mental health scenarios in either urgent or routine situations. After each simulation, participants responded to 11 CJ prompts, which were scored using the Lasater CJ Rubric (LCJR). The scenarios were rated for complexity (simple, moderate, difficult, complex) based on author-identified sources. Participants were grouped into low-, medium-, or high-ability categories based on their cumulative LCJR scores. Two separate two-way repeated measures ANOVAs examined how simulation topics and situation urgency influenced CJ, with analyses repeated for each ability group.

Results

Observers’ mean CJ is influenced by both simulation design and complexity level. CJ decreases in complex medical-surgical and mental health scenarios as complexity exceeds a “difficult” rating, regardless of ability. However, CJ improves in simple and moderately complex routine situations. Observing “difficult” and “complex” simulations hindered significant CJ gains, regardless of ability.

Conclusions

Nurse educators should consider learner ability and complexity level when designing simulation observations to prevent overwhelming observers’ CJ, especially during urgent and complex medical-surgical or mental health scenarios.

Problem

Nurses use clinical judgment (CJ) in nearly every nursing task to make safe patient care decisions (Dickison et al., 2019). CJ, a complex cognitive skill, involves thinking through patient care situations to notice salient cues, interpret meaning, respond appropriately, and reflect on clinical decisions (Klenke-Borgmann et al., 2020; Tanner, 2006). However, novice nurses demonstrate inadequate CJ (Kavanagh & Sharpnack, 2021). Consequently, newly graduated nurses have trouble applying theoretical knowledge, which negatively impacts their clinical judgment and leads to medication errors (Murray et al., 2020) as well as unsafe hospital conditions (Chaboyer et al., 2021; Jessee, 2021; Treacy & Caroline Stayt, 2019). Nurse educators must find ways to improve learners’ CJ to meet essential nursing education requirements (American Association of Colleges of Nursing, 2021).

Review of Literature

Simulation-based learning experiences (SBLE) expose learners to realistic situations replicating real-world practice complexities (Lioce et al., 2025). Active SBLE participation develops CJ (Lee & Oh, 2015; Nielsen et al., 2023) more efficiently than traditional hospital-based clinical learning experiences because learners spend more time-on-task (Sullivan et al., 2019). Therefore, many American nursing boards allow replacing half of hospital-based clinical hours with simulation (Bradley et al., 2019). However, learners spend a majority of simulation time observing in non-active roles to accommodate multiple learners and increase simulation capacity (Hayden et al., 2014; O’Regan et al., 2021; Smiley, 2019). While assigning observer roles improves simulation scalability, learners’ random rotation through simulation roles could lead to inequitable simulation exposure.

Nurse educators assign asynchronous expert-modeled simulation (EMS) observations to increase simulation exposure, create equitable experience, and facilitate simulation CJ development (Dodson, 2023; Huun, 2018; Rogers & Franklin, 2022, 2023). During asynchronous EMS observations, learners independently watch expert providers delivering simulated nursing care (Baldwin et al., 2014). Educators vary simulation observations by altering scenario topics and situation urgency with increasing complexity, assuming exposure to different experiences builds CJ. The original study found 63 junior-level nursing students’ CJ significantly increased after observing eight EMS videos with varying scenario topics, situation urgency, and complexity levels. However, gains were variable and peaked at the fifth video before declining and stalling after the sixth. When learners were stratified into groups by ranking total scores, high- and medium-groups showed more linear improvement trends up to the fifth video, while lower-performing groups exhibited less predictable gains (Rogers & Franklin, 2022). These findings suggest scenario topics, urgency, and complexity could influence CJ.

Nurse educators vary scenario topics by using different medical diagnoses to promote clinical judgment (CJ) development across diverse clinical contexts. Hambach et al. (2023) reported mean CJ improvements when learners engage with varying diagnoses during active simulation participation. In contrast, Fogg et al. (2023) found CJ performance decreased when learners cared for patients with different diagnoses in repeated virtual simulations, raising questions about the transferability of CJ across scenario topics. Notably, no studies have examined how exposure to different scenario topics during observation influences CJ development (Rogers et al., 2020). Understanding the relationship between scenario topics and observers’ CJ is essential to optimizing simulation design.

Novice nurses are most often criticized for having insufficient CJ when caring for deteriorating patients in urgent situations (Kavanagh & Sharpnack, 2021; Lasater et al., 2015; Treacy & Caroline Stayt, 2019). In a seminal study investigating simulation observers’ CJ during urgent situations, Zulkosky et al. (2016) found observers outperformed active participants in unfamiliar, urgent situations due to better cue recognition and interpretation. However, in familiar scenarios observers’ CJ was less accurate, suggesting unfamiliarity during urgent situations impacts observers’ cue processing. Notably, the researchers measured CJ within one simulation during reflective pauses, which are known to impact learners’ thinking (Lee et al., 2024). Examining observer CJ across multiple scenarios during varying levels of situation urgency without relying on reflective pauses could clarify how situation urgency impacts observers’ CJ.

Nurse educators expose learners to diverse scenario topics and highly urgent situations to prepare learners for rising patient acuity and complexity levels. However, better preparing nurses for real-world complexities requires understanding what contributes to complex patient care. Recent work to define nursing patient care complexity confirms the multifaceted nature of complex patient care (Huber et al., 2021). Using a hybrid concept development model, Huber et al. (2021) redefined patient-related complexity as a “relational and dynamic phenomenon with multiple, interconnected processes” shaped by the interplay of several elements such as illness severity, therapy challenges, psychosocial factors, and available resources.

This definition aligns patient care complexity with Cognitive Load Theory (CLT), which explains complexity as the sum of interacting elements within a task or environment (Choi et al., 2014; Sweller et al., 2011). These interacting elements can either enhance or hinder learning by influencing cognitive resources for learning. As the number and interactivity of elements increase, learners must process more information, which can overwhelm their working memory, particularly for novices (Sweller et al., 2011). Previous studies have shown that increasing the number of elements in simulation designs negatively impacts simulation performance (Smith et al., 2022; Tremblay et al., 2019, 2023). Other research consistently demonstrates novices are better equipped to manage simpler tasks, while highly complex tasks exceed their cognitive capacity, hindering performance (Cabrera-Mino et al., 2019; Haji et al., 2016; Shinnick, 2022; Shinnick & Cabrera-Mino, 2021; Smith et al., 2022; Tremblay et al., 2019, 2023). However, there is a gap in the literature comparing how different complexity levels impact observers’ CJ outcomes. This gap limits designing simulation observations that appropriately challenge learners based on their expertise level.

Learners’ expertise, or ability, is shaped by prior knowledge and backgrounds, which impacts CJ development (Klenke-Borgmann et al., 2023; Lasater et al., 2019; Lavoie et al., 2022; Lesā et al., 2021; Roskos et al., 2021; Shinnick, 2022; Shinnick & Cabrera-Mino, 2021; Young et al., 2016). While many studies demonstrate the impact of prior knowledge on CJ development during active simulations, few have explored this relationship in the context of simulation observations (Lasater et al., 2019; Rogers & Franklin, 2022). The original study revealed that while observers across stratified ability levels showed meaningful gains in CJ, these gains were not uniform. Learners in different ability groups exhibited distinct CJ trajectories and responded differently to increasing simulation complexity. Qualitative findings further illustrated learners’ approaches, knowledge application, and thought processes varied by ability level (Rogers & Franklin, 2022). This secondary analysis aims to stratify learners by ability to better understand how prior knowledge influences CJ during simulation observations. By exploring how learners of varying ability levels respond to simulation complexity, nurse educators can gain critical insights into tailoring simulation designs and determining appropriate complexity levels according to learner ability.

The Healthcare Simulation Standards of Best Practice (HSSOBP) emphasize the importance of scaffolding in simulation design, but they do not clarify when or how learners are ready for more complex simulation experiences (Miller et al., 2021). As a result, decisions about what students are ready for are often left to expert discretion. Understanding how simulation design conditions influence CJ enables educators to create more efficient simulation experiences that align with learners’ developmental stages and cognitive capacities (Eppich & Reedy, 2022). Therefore, the purpose of this study is to describe how simulation designs (i.e., scenario topic and situation urgency) and complexity level impact CJ. A secondary purpose is to investigate how simulation designs and complexity level impact CJ based on learner ability. This study will answer the following research questions:

How do simulation design elements (scenario topics or situation urgency) impact clinical judgment (CJ) outcomes across different complexity levels?

How does learner ability influence clinical judgment (CJ) outcomes in response to simulation designs (scenario topics and situation urgency) and varying complexity levels?

Theoretical Framework

The NLN Jeffries Simulation Theory (Jeffries, 2021) posits simulation design and participant characteristics impact participant outcomes. Simulation design in this study includes investigating simulation scenario topics, situation urgency, and complexity level. Participant characteristics include learner ability. The outcome of interest is CJ.

Methods

Study Design

This secondary data analysis is a descriptive, one-group quasi-experimental design. The study used a within-subject factorial design with simulation design (i.e., scenario topic and situation urgency) and complexity level as within-subjects factors.

Sample and Setting

The sample included 61 out of the 63 junior-level traditional baccalaureate learners from the original study conducted in a university in the southern United States who voluntarily consented to researchers maintaining data in a repository.

Ethical Considerations

The university institutional review board concluded this study was exempt from board oversight (IRB #2023-429). Participants provided voluntary consent to participate in the original study and to researchers maintaining data in a repository for future use. Deidentified data were stored in a password-protected, double identification cloud system managed by the university IT department according to the IRB protocol. Participation was voluntary and there were no incentives or extra credit offered to participate because all study activities were regularly scheduled coursework.

Data Collection Procedure

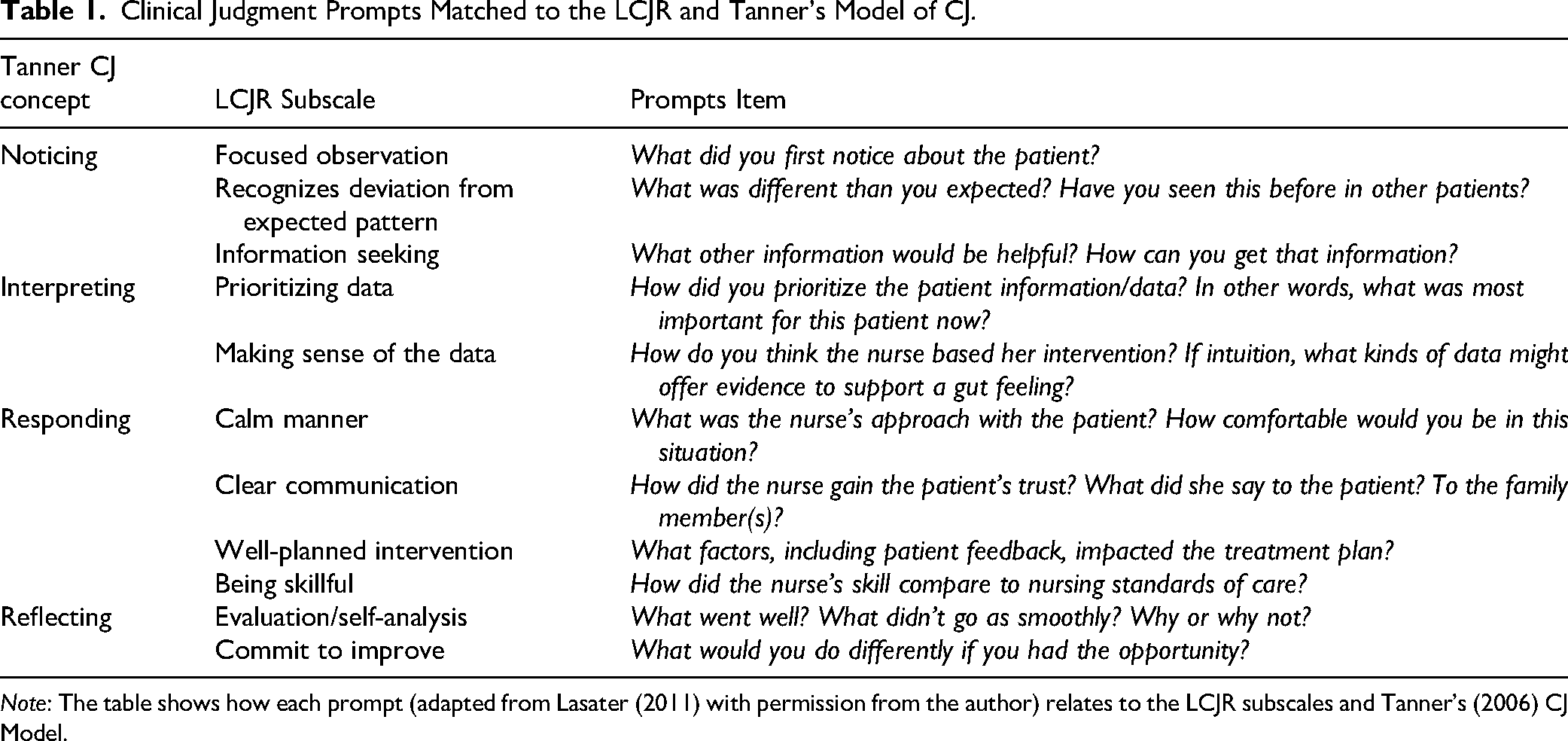

Participants observed two asynchronous EMS videos prior to each in-person simulation day throughout the semester, totaling eight observations. Using QualtricsXM, participants provided written responses to 11 CJ prompts aligned with the LCJR, describing what they noticed, how they interpreted it, how they would respond, and what they reflected on from each EMS observation prior to debriefing (Table 1). Written responses were scored using the Lasater Clinical Judgment Rubric (LCJR; Lasater, 2007) after obtaining the author's consent. For this secondary analysis, EMS videos were categorized by scenario topic and situation urgency (Table 2). EMS videos increased in complexity over time.

Clinical Judgment Prompts Matched to the LCJR and Tanner's Model of CJ.

Note: The table shows how each prompt (adapted from Lasater (2011) with permission from the author) relates to the LCJR subscales and Tanner's (2006) CJ Model.

Expert Modeling Video Scenario Design by Scenario Topic and Situation Urgency.

Study Factors

Simulation Design

Simulation observation scenarios were designed according to the Healthcare Simulation Standards of Best Practice (INACSL Standards Committee, 2021). Scenarios are reviewed each semester by expert simulation faculty. Practice content experts review scenarios at the initial design and each semester when faculty have questions or make changes to ensure scenarios align with current practice.

Scenario Topic

Medical-Surgical

Medical-surgical scenarios depict caring for clients with acute or chronic medical conditions from wide-ranging pathophysiological disorders or surgical procedures. In this study, medical-surgical scenarios included clients awaiting surgery, recovering from an appendectomy, experiencing heart failure, and receiving antibiotics for pyelonephritis.

Mental Health

Mental health scenarios depict nursing care for clients experiencing mood alterations, behavioral challenges, emotional instability, or psychiatric disorders. Mental health scenarios depicted clients who attempted suicide, experienced mania, expressed suicidal ideation, and experienced gender dysphoria.

Situation Urgency

Urgent

Urgent situations in this study refer to any situation requiring immediate nursing action to prevent loss of life, limb, or hemodynamic stability, or to maintain the safety of the client or healthcare provider. In this study, urgent situations included observing nursing care for a client experiencing acute mania, suicidal ideation, respiratory distress from pulmonary edema, and anaphylactic reaction.

Routine

Conversely, routine situations involve standard procedures, assessments, or skills without an unexpected client condition change. Routine scenarios included observing nursing care for clients awaiting a femoral-popliteal bypass graft, experiencing nausea, recovering from a recent appendectomy, and being discharged with pneumonia.

Complexity Level

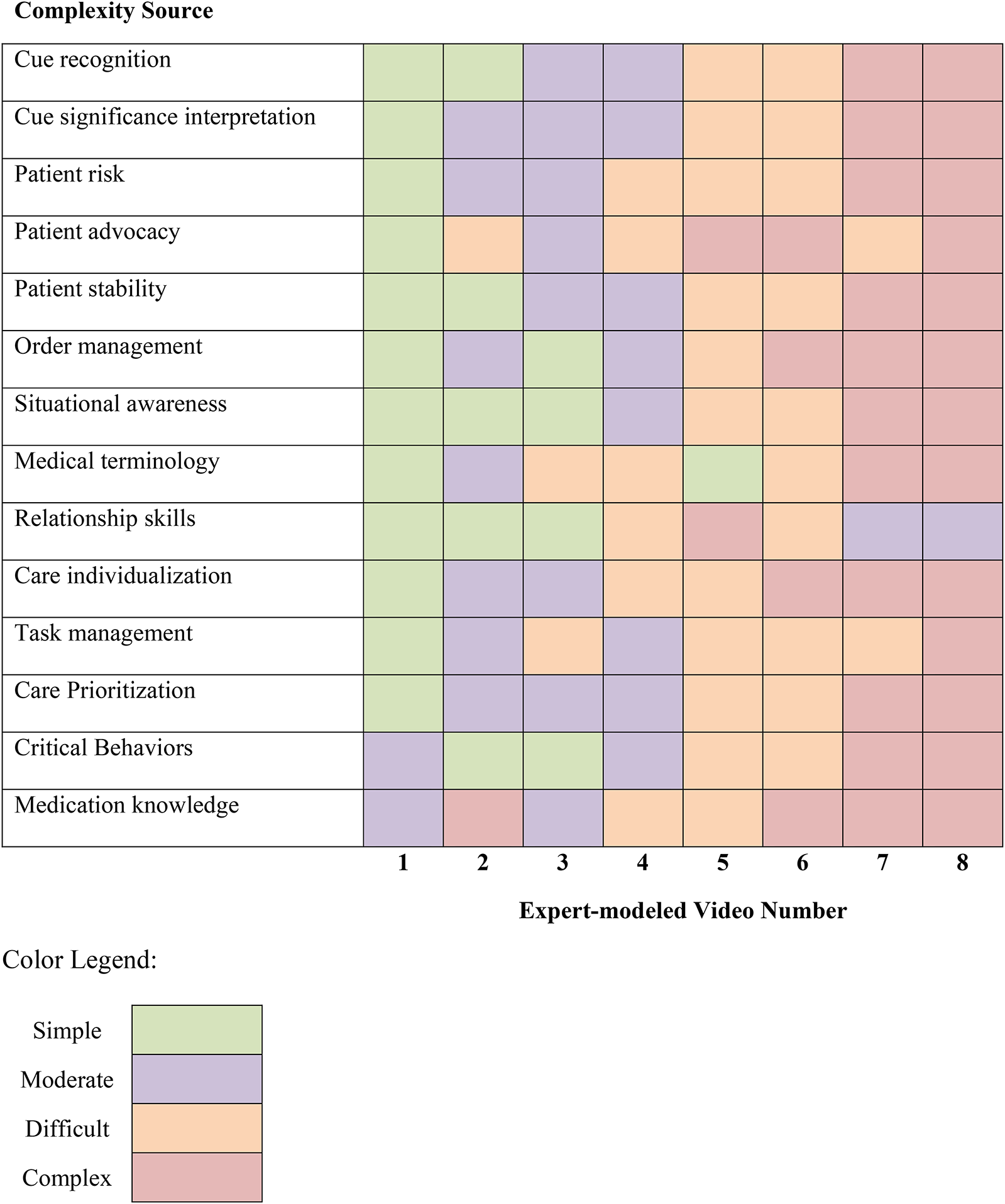

Complexity level was determined post hoc by rating researcher-identified complexity sources on a scale from simple, moderate, and difficult to complex. Table 3 provides EMS simulation design examples determining complexity ratings for each complexity source. Table 4 rates each EMS's complexity based on each complexity source. Generally, the first and second simulations were simple, and complexity then increased by one level every two simulations.
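The general scaffolding pattern described above can be sketched as a simple lookup. This is only an illustration: the function name is hypothetical, and because the source says complexity "generally" rose every two simulations, the ratings in Table 4 remain the authority for any individual video.

```python
# Hypothetical mapping of the general pattern: two observations per level.
# Individual videos may deviate; Table 4 holds the actual ratings.
COMPLEXITY_LEVELS = ("simple", "moderate", "difficult", "complex")


def complexity_for_observation(observation_number: int) -> str:
    """Return the general complexity rating for EMS observation 1 through 8."""
    if not 1 <= observation_number <= 8:
        raise ValueError("observation_number must be between 1 and 8")
    # Observations 1-2 -> index 0, 3-4 -> index 1, 5-6 -> index 2, 7-8 -> index 3.
    return COMPLEXITY_LEVELS[(observation_number - 1) // 2]
```

For example, `complexity_for_observation(5)` returns `"difficult"` under this assumed pattern.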

Example Video Components for Determining Complexity Ratings by Complexity Source.

Expert Modeling Video Complexity Ratings by Source.

Learner Ability

Participants were classified as having low, medium, or high CJ ability based on ranking cumulative total LCJR scores from all eight EMS observations. Low-ability learners’ cumulative LCJR scores ranged from 114 to 138, whereas medium- and high-ability learners’ scores ranged from 140 to 148 and 149 to 172, respectively. Higher cumulative scores indicated higher learner ability.
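A minimal sketch of this classification, assuming the score bands reported above are applied directly, might look like the following. The names `ABILITY_BANDS` and `ability_group` are hypothetical, and the bands are sample-specific cut points that resulted from ranking this cohort, not validated thresholds.

```python
from typing import Optional

# Score bands as reported for this sample (cumulative LCJR over eight observations).
# Assumption: scores outside every band (e.g., 139) are left unclassified.
ABILITY_BANDS = {"low": (114, 138), "medium": (140, 148), "high": (149, 172)}


def ability_group(cumulative_lcjr: int) -> Optional[str]:
    """Return 'low', 'medium', or 'high', or None if the score is in no band."""
    for group, (lowest, highest) in ABILITY_BANDS.items():
        if lowest <= cumulative_lcjr <= highest:
            return group
    return None
```

Applying the same procedure to a new cohort would require re-ranking that cohort's scores rather than reusing these bands.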

Measures

Clinical Judgment

The LCJR, based on Tanner's CJ model (Tanner, 2006), measures CJ over four phases (i.e., noticing, interpreting, responding, and reflecting). These phases are subdivided into 11 domain items that describe required elements for developing effective noticing, interpreting, responding, and reflecting. For example, effective noticing requires focused observation, recognizing deviations from expected patterns, and information seeking. Each domain item is assessed using a four-point Likert scale, with descriptors corresponding to four performance levels: beginning (1), developing (2), accomplished (3), and exemplary (4). Total scores range from 11 to 44, with higher scores indicating more advanced clinical judgment. The LCJR has good internal consistency, with Cronbach's alpha ranging between .89 and .974 when measuring CJ in undergraduate nursing students (Adamson & Kardong-Edgren, 2012; Cantrell et al., 2021). The LCJR is also valid (Victor-Chmil & Larew, 2013) and sensitive (Shinnick & Woo, 2020) for measuring novice learners’ CJ. When measuring CJ in observers’ written reflections, the LCJR has a Cronbach's alpha of .67 (Rogers & Franklin, 2023). Although this value represents marginally acceptable reliability, there are no other current methods in the literature for objectively measuring observers’ clinical judgment.
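The scoring arithmetic and the internal consistency statistic described above can be sketched as follows. This is an illustration only: the function names are hypothetical, and the alpha formula is the standard Cronbach formula computed with sample variances, which is assumed rather than confirmed to match the cited studies' software.

```python
from statistics import variance


def lcjr_total(item_scores):
    """Sum 11 LCJR item scores (each 1-4) into a total between 11 and 44."""
    if len(item_scores) != 11:
        raise ValueError("the LCJR has 11 domain items")
    if any(score not in (1, 2, 3, 4) for score in item_scores):
        raise ValueError("each item is scored 1 (beginning) to 4 (exemplary)")
    return sum(item_scores)


def cronbach_alpha(score_matrix):
    """Cronbach's alpha; rows are respondents, columns are items."""
    k = len(score_matrix[0])
    item_variances = [variance([row[i] for row in score_matrix]) for i in range(k)]
    total_variance = variance([sum(row) for row in score_matrix])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)
```

A response scored "beginning" on every item totals 11, and one scored "exemplary" on every item totals 44, matching the range stated above.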

Demographics

Participants reported age, race, gender, ethnicity, learning style preference, previous degrees, and previous healthcare experience via QualtricsXM using a researcher-developed tool.

Statistical Analysis

Data were analyzed using commercially available statistical software (IBM SPSS v.26 and Stata MP v.15). A statistician provided oversight for developing analysis plans and interpreting results. Power calculations using G*Power indicated 36 participants were needed to detect a medium effect, based on 95% power and an α of .05 (Faul et al., 2007). Inter-rater reliability (IRR) was assessed on ten percent of LCJR scores using Gwet's AC statistic (Gwet, 2008) and percent agreement. Gwet's AC serves as an alternative to Cohen's kappa for assessing IRR. Unlike kappa, which can be influenced by biases stemming from observer disagreements or imbalanced marginal distributions, Gwet's AC is designed to mitigate these issues and provide a more robust measure of agreement. IRR in this study represented substantial agreement, with a cumulative AC of 0.74 (± 0.045 CD), and acceptable agreement, with 78.15% (± 3.39% SD) agreement.
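To illustrate the two agreement statistics named above, the following sketch computes percent agreement and the unweighted two-rater form of Gwet's coefficient (AC1). The source does not state whether the unweighted AC1 or a weighted variant for ordinal ratings was used, so treating it as AC1 is an assumption, as are the function names and the default category set of 1 to 4.

```python
def percent_agreement(rater_a, rater_b):
    """Percentage of items on which two raters gave the same score."""
    matches = sum(x == y for x, y in zip(rater_a, rater_b))
    return 100 * matches / len(rater_a)


def gwet_ac1(rater_a, rater_b, categories=(1, 2, 3, 4)):
    """Unweighted Gwet's AC1 for two raters over a fixed set of categories."""
    n = len(rater_a)
    q = len(categories)
    observed = sum(x == y for x, y in zip(rater_a, rater_b)) / n
    # Average proportion of ratings that fall in each category across both raters.
    pi = [(list(rater_a).count(c) + list(rater_b).count(c)) / (2 * n) for c in categories]
    # Chance agreement under Gwet's model.
    chance = sum(p * (1 - p) for p in pi) / (q - 1)
    return (observed - chance) / (1 - chance)
```

Because chance agreement is built from the average category proportions rather than the product of each rater's marginals, AC1 is less sensitive than kappa to skewed score distributions.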

Participant Demographics

Participants’ demographics were compared between high-, medium-, and low-ability groups using Fisher's exact test, chi-square test, Kruskal-Wallis test, and one-way analysis of variance (ANOVA) for dichotomous, multinomial, ordinal, and continuous variables, respectively. Kruskal-Wallis analysis was used when ANOVA assumptions were violated.

Simulation Design Impact on CJ

After checking test assumptions, the effects of scenario topic and complexity level on CJ were analyzed using a two-way repeated measure analysis of variance (RM ANOVA). This analysis was repeated to examine the effect of situation urgency and complexity level on CJ.
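For readers unfamiliar with the procedure, the sums-of-squares partition behind a two-way RM ANOVA for balanced data can be sketched in plain Python. This is not the SPSS or Stata procedure used in the study; the function name and the demonstration scores are hypothetical, and the sketch omits p values, sphericity checks, and post hoc comparisons.

```python
from itertools import product


def _mean(values):
    values = list(values)
    return sum(values) / len(values)


def two_way_rm_anova(y):
    """Two-way repeated measures ANOVA for balanced data.

    y[s][i][j] is the score of subject s at level i of factor A
    (e.g., scenario topic) and level j of factor B (e.g., complexity level).
    Returns (effects, ss): F tests per effect and all sums of squares.
    """
    n, a, b = len(y), len(y[0]), len(y[0][0])
    S, A, B = range(n), range(a), range(b)
    cells = list(product(S, A, B))

    grand = _mean(y[s][i][j] for s, i, j in cells)
    m_a = [_mean(y[s][i][j] for s in S for j in B) for i in A]
    m_b = [_mean(y[s][i][j] for s in S for i in A) for j in B]
    m_s = [_mean(y[s][i][j] for i in A for j in B) for s in S]
    m_ab = [[_mean(y[s][i][j] for s in S) for j in B] for i in A]
    m_sa = [[_mean(y[s][i][j] for j in B) for i in A] for s in S]
    m_sb = [[_mean(y[s][i][j] for i in A) for j in B] for s in S]

    ss = {
        "A": n * b * sum((m_a[i] - grand) ** 2 for i in A),
        "B": n * a * sum((m_b[j] - grand) ** 2 for j in B),
        "AxB": n * sum((m_ab[i][j] - m_a[i] - m_b[j] + grand) ** 2
                       for i in A for j in B),
        "S": a * b * sum((m_s[s] - grand) ** 2 for s in S),
        "AxS": b * sum((m_sa[s][i] - m_a[i] - m_s[s] + grand) ** 2
                       for s in S for i in A),
        "BxS": a * sum((m_sb[s][j] - m_b[j] - m_s[s] + grand) ** 2
                       for s in S for j in B),
        "AxBxS": sum((y[s][i][j] - m_sa[s][i] - m_sb[s][j] - m_ab[i][j]
                      + m_s[s] + m_a[i] + m_b[j] - grand) ** 2
                     for s, i, j in cells),
        "total": sum((y[s][i][j] - grand) ** 2 for s, i, j in cells),
    }

    # Each effect is tested against its own interaction with subjects.
    tests = {"A": ("AxS", a - 1), "B": ("BxS", b - 1),
             "AxB": ("AxBxS", (a - 1) * (b - 1))}
    effects = {}
    for name, (error, df1) in tests.items():
        df2 = df1 * (n - 1)
        f_value = (ss[name] / df1) / (ss[error] / df2)
        effects[name] = {"F": f_value, "df1": df1, "df2": df2}
    return effects, ss


# Hypothetical LCJR totals: 3 observers x 2 topics x 4 complexity levels.
demo_scores = [
    [[14, 16, 17, 15], [13, 18, 16, 14]],
    [[17, 19, 21, 20], [16, 22, 20, 18]],
    [[20, 21, 24, 22], [19, 25, 23, 20]],
]
effects, ss = two_way_rm_anova(demo_scores)
```

With 61 observers, two topics, and four complexity levels, the same partition yields interaction degrees of freedom of 3 and 180, which matches the form of the F statistics reported in the Results.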

Simulation Design Impact on CJ by Learner Ability

Serial two-way RM ANOVAs were performed, after checking test assumptions, to analyze the effect of scenario topic and complexity level on high-, medium-, and low-ability learners’ CJ. Serial analyses were repeated to examine the effect of situation urgency and complexity level on CJ for each learner ability group.

Results

Sample Characteristics

The study population was predominantly white and female. Participants had similar demographic characteristics based on CJ ability (Table 5).

Demographic Characteristics by Ability Groups.

The data met two-way RM ANOVA test assumptions, except CJ was not normally distributed, as assessed by Shapiro-Wilk's test on the studentized residuals (p < .05). There were also a few studentized residual outliers, with values ranging from 3.08 to 3.43. After consulting with a statistician, non-normally distributed CJ data were not transformed, and outliers were not removed because neither significantly impacted results. Degrees of freedom were corrected using the Greenhouse-Geisser method for any test that violated Mauchly's Test of Sphericity (p < .05). Table 6 provides mean LCJR scores by simulation design and learner ability.
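As a brief illustration of the Greenhouse-Geisser correction mentioned above, both degrees of freedom are multiplied by the sphericity estimate ε before the F statistic is evaluated. The function name is hypothetical, and the example reuses the rounded ε = .826 reported for mental health scenarios, so the products differ slightly from the reported F(2.479, 148.753).

```python
def greenhouse_geisser_df(df_effect, df_error, epsilon):
    """Scale both degrees of freedom by the Greenhouse-Geisser epsilon."""
    if not 0 < epsilon <= 1:
        raise ValueError("epsilon must be in the interval (0, 1]")
    return df_effect * epsilon, df_error * epsilon


# Uncorrected df of (3, 180) with the rounded epsilon reported in the Results.
corrected_df1, corrected_df2 = greenhouse_geisser_df(3, 180, 0.826)
```

An ε of 1 leaves the degrees of freedom unchanged, which corresponds to no sphericity violation.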

LCJR Means by Simulation Design for Overall Group and by Learner Ability.

Simulation Design Impact on CJ

Scenario Topic and Complexity Level

There was a statistically significant interaction between scenario topic and complexity level on CJ, F(3, 180) = 4.773, p = .003, ηp2 = .074. There was a significant effect of complexity level on CJ for medical-surgical and mental health scenario topics, respectively, F(3, 180) = 15.877, p < .001; F(2.479, 148.753) = 36.077, p < .001, ε = .826 (Figure 1). Post hoc planned comparisons between complexity ratings revealed that, in medical-surgical scenarios, CJ did not significantly change after the second observation, when complexity was rated “difficult” (M = −0.262, SE = .314, p = 1). As complexity increased, CJ significantly increased in mental health scenarios between “simple” and “moderate” complexity ratings (M = 3.836, SE = .408, p < .001), but significantly decreased when rated “difficult” (M = −1.885, SE = .313, p < .001).
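The partial eta squared effect sizes reported with these F tests can be recovered from the F statistic and its degrees of freedom, which offers a quick consistency check. The sketch below uses the standard identity ηp2 = (F × df effect) / (F × df effect + df error); the function name is hypothetical.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its df."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Topic x complexity interaction reported above: F(3, 180) = 4.773.
interaction_effect = partial_eta_squared(4.773, 3, 180)
```

Rounded to three decimals, this reproduces the ηp2 of .074 reported for the interaction between scenario topic and complexity level.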

Clinical judgment means by scenario topic and scaffolded complexity.

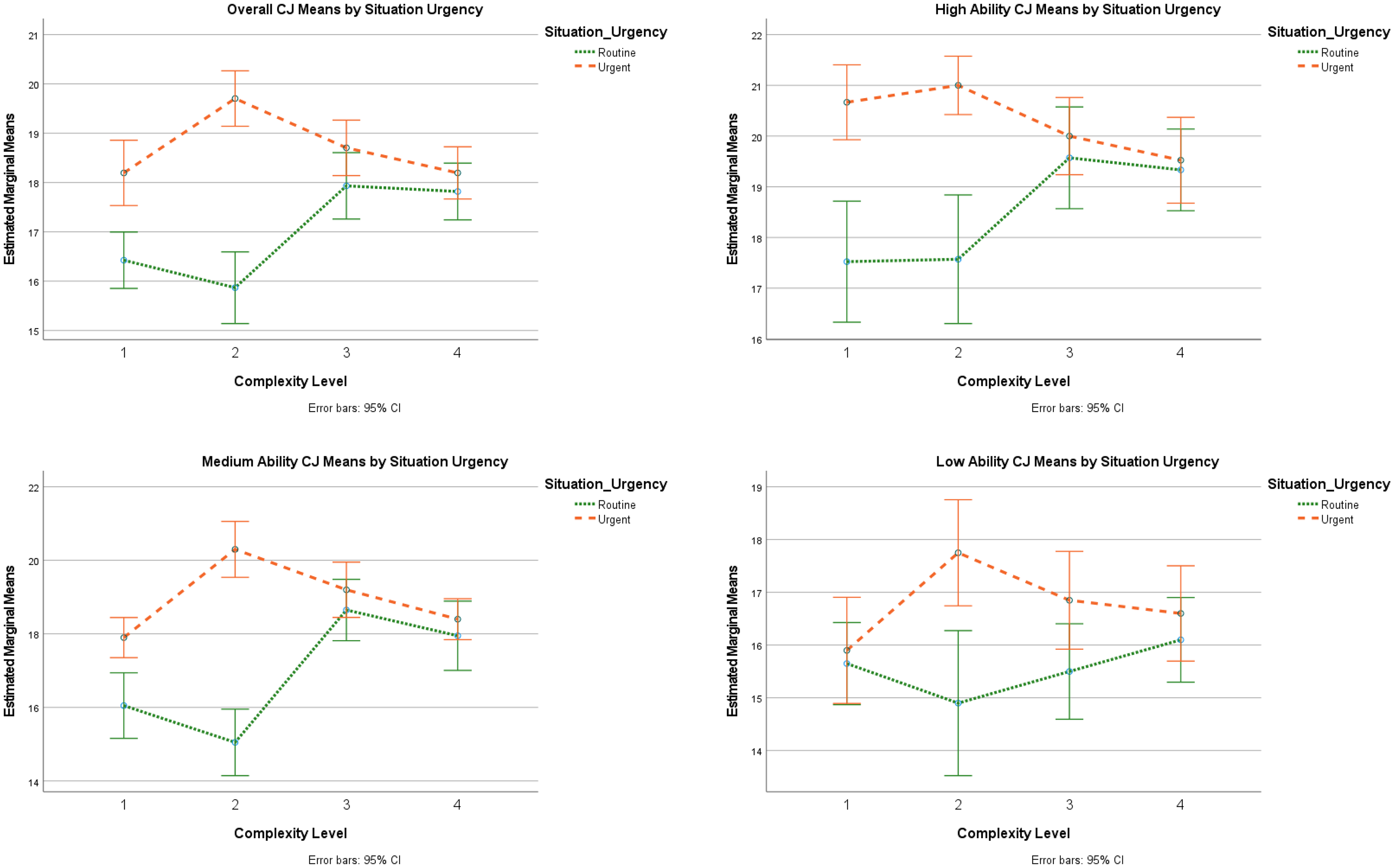

Situation Urgency and Complexity Level

There was a statistically significant interaction between situation urgency and complexity level on CJ, F(3, 180) = 20.181, p < .001, ηp2 = .252. There was a significant effect of complexity level on CJ for routine and urgent scenarios, respectively, F(3, 180) = 13.475, p < .001, ηp2 = .183; F(3, 180) = 10.741, p < .001, ηp2 = .152 (Figure 2). Post hoc planned comparisons between complexity ratings revealed that, as complexity increased in routine scenarios, observers’ CJ made significant gains at the “moderate” complexity level (M = 1.508, SE = .409, p < .001), but then showed a nonsignificant decrease when advancing to the “difficult” complexity level (M = −.114, SE = .351, p = 1). More notably, during urgent situations, observers made significant gains over the first two “moderate” complexity observations (M = 1.508, SE = .301, p < .001), but CJ significantly declined at the “difficult” (M = −1.000, SE = .283, p = .005) and “complex” levels (M = −1.508, SE = .283, p < .001).

Clinical judgment means by situation urgency and scaffolded complexity.

Simulation Design Impact on CJ by Learner Ability

Scenario Topic and Complexity Level

There was not a statistically significant interaction between scenario topic and complexity level on CJ in high-, medium-, or low-ability learners, respectively, F(3, 60) = 1.324, p = .275, ηp2 = .062; F(3, 57) = 2.561, p = .064, ηp2 = .119; F(3, 57) = 2.135, p = .106, ηp2 = .101 (Figure 1). The main effect of scenario topic on CJ was not significant in high-, medium-, or low-ability learners, respectively, F(1, 20) = 3.027, p = .097, ηp2 = .131; F(1, 19) = 1.174, p = .292, ηp2 = .058; F(1, 19) = .001, p = .972, ηp2 = .000. However, complexity level did significantly impact CJ in high-, medium-, and low-ability learners, respectively, F(3, 60) = 14.412, p < .001, ηp2 = .419; F(3, 57) = 42.617, p < .001, ηp2 = .692; F(3, 57) = 5.991, p = .001, ηp2 = .240. Post hoc planned comparisons between complexity ratings revealed the following CJ impacts by learner level.

High Ability

Observers made significant gains between the first two complexity levels (M = 2.571, SE = .578, p = .001), but then stalled at the “difficult” level and significantly declined at the “complex” level (M = −1.071, SE = .330, p = .024).

Medium Ability

As complexity increased, observers made significant gains over the first three complexity levels (M = 4.2, SE = .413, p < .001), but then significantly decreased at the “complex” level (M = −1.575, SE = .293, p < .001).

Low Ability

Low-ability observers did not demonstrate significant gains over the first two complexity levels. CJ then significantly increased at the “difficult” level (M = 1.6, SE = .392, p = .001), but most of these gains were lost at the “complex” level, although the decline was not significant (M = −.950, SE = .451, p = .379).

Situation Urgency and Complexity Level

There was a statistically significant interaction between situation urgency and complexity level on CJ in high-, medium-, and low-ability observers, respectively, F(3, 60) = 31.244, p < .001, ηp2 = .306; F(3, 57) = 13.741, p < .001, ηp2 = .420; F(3, 57) = 4.815, p = .005, ηp2 = .202 (Figure 2). There was a significant effect of complexity level on high- and medium-ability, but not low-ability, observers’ CJ for routine situations, respectively, F(3, 60) = 4.549, p = .006, ηp2 = .185; F(3, 57) = 14.524, p < .001, ηp2 = .433; F(1.91, 36.294) = 1.186, p = .315, ηp2 = .059, ε = .637. Complexity level significantly impacted high-, medium-, and low-ability observers’ CJ in urgent scenarios, respectively, F(3, 60) = 3.402, p = .023, ηp2 = .145; F(3, 57) = 10.107, p < .001, ηp2 = .347; F(3, 57) = 3.425, p = .023, ηp2 = .153. Post hoc planned comparisons between complexity ratings revealed the following CJ impacts by learner level.

High Ability

During routine situations, high-ability learners made their largest, though nonsignificant, CJ gains at the “moderate” complexity level (M = 2, SE = .778, p = .109), and increasing complexity prevented significant gains from the first to the last scenario (M = 1.8, SE = .758, p = .162). During urgent situations, CJ significantly declined as complexity increased from the “difficult” to the “complex” level (M = −1.476, SE = .450, p = .023).

Medium Ability

During routine situations, CJ increased significantly at the “moderate” complexity level after observing two simple procedures (M = 3.6, SE = .520, p < .001), but then there was no significant increase beyond the “difficult” level (M = 0.7, SE = .711, p = 1). As complexity increased in urgent EMS observations, CJ significantly increased at the “difficult” level after one practice observation (M = 2.4, SE = .419, p < .001), but then significantly declined as complexity rose beyond “difficult” (M = −1.9, SE = .429, p = .002).

Low Ability

Increasing complexity prevented low-ability learners from gaining significant CJ from the first to the last routine EMS observation (M = .45, SE = .484, p = 1). As complexity increased in urgent situations, CJ significantly increased between “moderate” observations (M = 1.85, SE = .563, p = .023), but then declined nonsignificantly as complexity rose beyond “difficult” (M = −.7, SE = .539, p = 1).

Discussion

Nurse educators need to understand conditions impacting CJ, an essential novice nurse competency. Assigning simulation observations improves CJ, but gains are not consistent over time (Rogers & Franklin, 2022). This secondary data analysis is the first to explain how simulation designs (i.e., scenario topics and situation urgency) and complexity level impact observers’ CJ. Findings from 61 junior-level prelicensure participants indicate observers’ CJ outcomes depend on scenario topic, situation urgency, and complexity level. As complexity increases beyond “difficult” levels, CJ is negatively impacted in urgent and complex medical-surgical or mental health scenarios, regardless of ability. Overall, observers’ CJ significantly improves in routine situations but is easily overwhelmed in urgent situations. However, high- and low-ability learners are vulnerable to premature complexity level increases even in routine situations. Therefore, nurse educators should consider learner ability and simulation designs when increasing complexity.

This study found complexity level impacted junior-level observers’ CJ in all simulation designs, despite learner ability. Specifically, CJ gains were significantly impacted when increasing to “difficult” levels. These findings are consistent with multiple previous studies that found placing healthcare professions learners in increased complexity negatively impacts learning outcomes (Haji et al., 2015; Smith et al., 2022; Tremblay et al., 2019, 2023). Educators need to understand what complexity level is appropriate for learners at all levels to inform simulation design best practice (Miller et al., 2021) and ensure instructional efficiency. This study suggests junior-level learners should have more time in “simple” and “moderate” simulations prior to increasing to higher levels. It is important to note that complexity in this study was determined by grading EMS qualities post hoc based on researcher-identified complexity sources. Learners could identify scenario complexity differently. Future studies investigating simulation complexity from the learners’ perspective could inform simulation design best practice.

Next, this study found observers’ overall CJ depends on the scenario topic and complexity level. Furthermore, overall CJ is negatively impacted in urgent and complex medical-surgical or mental health scenarios. In contrast, a recent study found junior-level nurses’ learning outcomes from complex medical-surgical simulations were significantly improved by repeating the same simulation with deliberate observer role practice after debriefing (Zulkosky et al., 2021). Debriefing and simulation sequencing may explain these differences. Observers in this study received debriefing after CJ outcomes were measured and were then placed in different simulation scenarios instead of repeating the same scenario. This required observers to transfer learning from previous simulation observations instead of applying knowledge gained from debriefing in the same simulation scenario. This study design mimics current practice because most simulation programs randomly assign observers to simulation scenarios, thereby increasing simulation capacity but limiting opportunities for deliberate practice following debriefing feedback. It is possible junior-level observers need more time repeatedly observing complex urgent, medical-surgical, and mental health scenarios after debriefing rather than moving to a new, more complex scenario. Future studies measuring CJ outcomes prior to debriefing following deliberate role practice could help clarify simulation observation best practice.

This study found observers’ CJ significantly declines in urgent situations as complexity increases, regardless of learner ability. This is consistent with other studies finding novice nurses are ill-prepared to manage deteriorating patients (Kavanagh & Sharpnack, 2021). Klenke-Borgmann et al. (2023) suggest simulation is a method for developing novice nurses’ cognitive resources to effectively notice and respond to patients’ deterioration. Accrediting bodies require nurse educators to evaluate learners’ CJ competency prior to graduation (American Association of Colleges of Nursing, 2021). A recent study demonstrates nurse educators can reliably measure and track observers’ CJ outcomes (Rogers & Franklin, 2023). However, this study adds important implications for measuring CJ competency. Since CJ declined with increasing complexity, nurse educators should not rely on a single scenario to determine CJ competency. Instead, CJ should be measured across multiple situations to ensure learners can display it in each. Furthermore, junior-level observers’ CJ was overwhelmed by observing complex situations, indicating this may not be a reasonable learner expectation. Future research should quantify what CJ educators can reasonably expect learners to demonstrate at each level prior to graduation in complex situations to define CJ competency criteria.

Interestingly, even though observers’ overall CJ depends on the scenario topic and complexity level, findings differed when analyzed by learner ability. High-, medium-, and low-ability observers’ CJ did not depend on the scenario topic, only on complexity level. Since simulation observations are an important educational tool for developing learners’ competency, educators need to understand more about conditions impacting observer outcomes. Exploring human factors associated with simulation role assignments could be an important next step. For example, simulation designs impact learners’ cognitive load (Rogers & Franklin, 2021). It is postulated observers have less cognitive load and more situational awareness than active participants because there is less performance stress (El Hussein & Ha, 2023; O’Regan et al., 2021). However, there is a dearth of evidence measuring cognitive load and situational awareness in observers. Measuring both constructs in future studies could help explain this study's findings.

This study compared observers’ CJ learning outcomes prior to debriefing over time and discovered simulation designs, most notably complexity level, impact learning outcomes. Collectively, this study demonstrates nurse educators need to know more about leveling simulation complexity appropriately. Prematurely increasing simulation complexity either stalled progress or significantly negatively impacted observers’ CJ outcomes. This study used faculty opinions to determine appropriate complexity levels, and the researcher unintentionally placed learners in overly complex scenarios too soon. There is a conversation equating intentionally placing learners in highly complex situations with learner abuse because this practice causes significant training scars (Kardong-Edgren et al., 2024). This study confirms that prematurely increasing complexity negatively impacts CJ in complex and urgent, medical-surgical, and mental health scenarios. Therefore, it is imperative that future studies explore and quantify what learners perceive as complex and what situations are appropriate based on learner level and ability.

Nurse educators need to rethink how simulation complexity is scaffolded to ensure junior-level nursing students retain and effectively apply CJ skills. Instead of varying scenario topics and situation urgency, scaffolding complexity within a single topic and complexity source may be more effective for developing and retaining CJ. This approach could allow learners to build upon foundational skills and apply them to more complex situations, fostering deeper understanding and skill transfer. Research shows gradually increasing complexity levels enhances noticing skills (Poledna et al., 2022) and that CJ improves when novices perform similar simulations with only slight changes to the patient scenario (Williams, 2024). Conversely, abrupt increases in complexity have been shown to negatively impact learning outcomes (Smith et al., 2022; Tremblay et al., 2019, 2023). By adopting a more deliberate and progressive approach to scaffolding complexity within similar scenarios, nurse educators can better support students in developing robust CJ skills that are both retained and readily applicable in practice.

In this study, observers were provided simulation preparation, but not preparation specific to observer roles. The HSSOBP for Pre-briefing recently defined role orientation as a critical step for learner simulation preparation (McDermott et al., 2021). Simulation observation thought leaders suggest observers need different preparation for simulation observation experiences (Johnson & Fey, 2023). It is possible that providing individualized observer preparation prior to urgent and complex scenarios could have improved results by focusing observers’ attention on salient cues, thereby improving situational awareness. Future studies investigating observer simulation preparation and pre-briefing could inform HSSOBP for pre-briefing simulation observers.

As nurse educators move to use simulation for evaluating competency, it is important to ensure learners have adequate practice before high-stakes simulation testing occurs. Specifically, the HSSOBP for learner evaluation requires learners to have adequate practice in similar experiences before high-stakes simulation testing (McMahon et al., 2021). Many programs use simulation observations as preparation for active simulation, assuming this prepares learners for active simulation experiences. This study indicates most observers made CJ gains during less complex practice sessions, but more practice is needed before increasing complexity in complex, urgent, medical-surgical, and mental health scenarios. It is important to note that low and high performers were especially vulnerable to prematurely increasing complexity because they were unable to make CJ gains in routine situations along with complex urgent, medical-surgical, and mental health scenarios. This suggests observers need more practice in less complex scenarios to allow more CJ development, and that learner ability may influence practice needs. This is consistent with other research indicating that starting simulation earlier in the nursing curriculum improves CJ outcomes (Hambach et al., 2023). Specifically, low performers need more time observing simulation, and learners may need simulation observation experiences tailored to their ability. Nursing simulationists need to understand more about simulation observation dosing prior to using simulation for high-stakes competency testing to ensure learners have adequate practice.

This study found that when observers are placed in overly complex environments, CJ may be easily overwhelmed. Observing expert nurses perform nursing care during hospital-based clinical learning experience rotations is very similar to observing EMS. However, there is a paucity of evidence for learning outcomes from clinical experiences (Leighton et al., 2021, 2022). This study could have implications for observing complex and urgent, medical-surgical, and mental health hospital-based clinical learning experience rotations. Nurse educators often ask charge nurses for input on appropriate patient selection, and charge nurses subsequently suggest patients with “interesting,” unique, or complex health problems. It is possible students need more repetition in routine hospital-based clinical learning experiences before caring for more complex patients. Similarly, when learners observe a complex urgent, medical-surgical, or mental health scenario, important details may be missed, resulting in inadequate CJ. In both cases, nurse educators and nurse preceptors must ensure learners have adequate debriefing. Using guided reflective questions from this study could help evaluate learners’ CJ outcomes from hospital-based clinical learning experiences (Billings & Kowalski, 2019; Lasater & Nielsen, 2024; Lee & Wessol, 2022; Schooley et al., 2024; Walsh & Sethares, 2022; Williams & Hence, 2024). Future studies investigating the reliability of the LCJR for measuring CJ in clinical reflections could fill an important void in the nursing education literature for measuring clinical experience learning outcomes.

Implications for Nursing Education

This study has many important implications for simulation design, competency assessment, and learner simulation experiences. First, nurse educators should consider learner ability and complexity level when designing simulation observations. This study substantiates following the HSSOBP Outcomes & Objectives (Miller et al., 2021) for scaffolding simulation objective complexity and helps nurse educators identify potential complexity sources. Next, increasing complexity beyond learner ability consistently negatively impacts learning outcomes (Smith et al., 2022; Tremblay et al., 2019, 2023), just as it did in this study. Nurse educators can use the complexity descriptions in this study to ensure junior-level learners have more practice with “simple” and “moderate” complexity levels. Nurse educators should consider using these descriptions for building complexity more gradually to improve skill performance (Haji et al., 2016; Poledna et al., 2022). Additionally, this study found simulation design decisions significantly impact CJ learning outcomes, which is consistent with findings from Fogg et al. (2023). Therefore, as many nursing programs are moving towards using simulation to evaluate learners’ competency, nurse educators need to ensure simulation designs match learner ability. Furthermore, since CJ depends on scenario topic, situation urgency, and complexity level, nurse educators should evaluate learners using multiple different situations before determining CJ competency. Nurse educators should ask learners to respond to CJ prompts after clinical and simulation experiences to measure CJ and track progress over time (Billings & Kowalski, 2019; Lasater & Nielsen, 2024; Lee & Wessol, 2022; Schooley et al., 2024; Walsh & Sethares, 2022; Williams & Hence, 2024). Finally, this study found novice nurses could make significant CJ gains in simpler simulation observations. This suggests junior-level learners need more time in simple scenarios before complexity is increased to prevent overwhelming their CJ. It is possible that starting simulations earlier in the nursing program could help learners build skills more efficiently (Hambach et al., 2023).

Strengths and Limitations

A strength of this study is its within-subjects design, which allows participants to serve as their own control. This helps prevent individual differences in knowledge, ability, or motivation from impacting results. This secondary analysis is limited by its reliance on previously collected data, so scenario design conditions were not precisely controlled. Furthermore, video design components were not precisely controlled according to LCJR expertise criteria. Even with this limitation, we were able to demonstrate consistency between the overall and ability-level results, thereby improving the findings’ trustworthiness. Future studies could more precisely control simulation design according to LCJR expertise criteria to quantify how learners respond to simulation designs. Next, this study is limited by defining complexity post hoc according to expert opinion rather than precisely controlling complexity in the simulation design. Despite this limitation, increasing complexity significantly impacted learners’ CJ no matter how the results were analyzed. Investigating complexity using a priori controlled simulation designs would inform simulation design complexity best practice.

Conclusion

Simulation designs impact observers’ CJ outcomes. Namely, prematurely increasing complexity levels negatively impacts observers’ CJ no matter the situation urgency, scenario topic, or learner ability. Nurse educators should use caution when increasing simulation complexity to prevent overwhelming observers’ CJ. Nurse educators need to ensure observers have adequate deliberate practice in simple, routine scenarios before building complexity with complex and urgent, mental health, and medical-surgical scenarios. This article is an important first step toward understanding conditions impacting simulation observer learning outcomes. This article also informs the HSSOBP for scaffolding learner SBLE objective complexity. We need more evidence comparing CJ outcomes under different conditions using all learner levels and role assignments to inform simulation design best practice.

Footnotes

Ethical Approval and Informed Consent Statements

The Texas Christian University Institutional Review Board determined this study was exempt (TCU#2023-429). Participants provided informed consent electronically and provided additional volunteer consent for data use for secondary analysis.

Author Contributions

Beth A. Rogers was responsible for conceptualization, data curation, formal analysis, methodology, funding acquisition, investigation, writing the original draft, editing revisions, and visualization of this manuscript.

Data Availability Statement

Inquiries to access data and other research-related materials may be submitted for consideration to the corresponding author.

Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported in part by internal grants from the TCU Research and Creative Activities Fund and TCU Invests in Scholarship Fund. Publication charges for this article were supported by the TCU Library Open Access Fund.