Abstract

Increased interest in the opportunities provided by artificial intelligence and machine learning has spawned a new field of health-care research. The new tools under development are targeting many aspects of medical practice, including changes to the practice of pathology and laboratory medicine. Optimal design of these powerful tools requires cross-disciplinary literacy, including basic knowledge and understanding of critical concepts that have traditionally been unfamiliar to pathologists and laboratorians. This review provides definitions and basic knowledge of machine learning categories (supervised, unsupervised, and reinforcement learning), introduces the underlying concept of the bias-variance trade-off as an important foundation in supervised machine learning, and discusses approaches to the supervised machine learning study design along with an overview and description of common supervised machine learning algorithms (linear regression, logistic regression, Naive Bayes,

Introduction

Medical data are reported to be growing by as much as 48% each year. 1 This explosion of data and the associated challenges of its optimal use to improve patient care are driving development of a myriad of new tools that utilize artificial intelligence (AI) and machine learning (ML). Artificial intelligence is the capability of machines to imitate intelligent human behavior, while ML is an application of AI that allows computer systems to automatically learn from experience without explicit programming. Paraphrasing Arthur Samuel and others, ML models are constructed from a set of data points and trained through mathematical and statistical approaches that ultimately enable predictions on new, previously unseen data without being explicitly programmed to do so. 2,3 Once residing only in the realm of science fiction, AI and ML are now at the center of a technological revolution, prompted by advancements in computing power and accessibility, that is already impacting many domains of our everyday lives, including credit decisions, travel, personalized suggestions for movies, books, and other products, as well as temperature control in our own homes. These tools are being increasingly incorporated into a broad range of clinical practice in many different medical disciplines and have become an area of intense investigation. Reflecting the growing role of AI/ML in medicine, the Food and Drug Administration recently issued a white paper 4 to safely guide AI development, which underscores the promise that AI and ML are believed to hold for improving medical practice and patient care.

The field of pathology and laboratory medicine is important to the development and ongoing improvement in many medical AI/ML tools and will likely play an even larger and more pivotal role as AI and ML applications expand across health-care settings. Perhaps as many as 70% of all medical decisions are based on laboratory tests. 5 Additionally, the bulk of data in the electronic medical record is from the clinical laboratory. Test results from pathology and the clinical laboratory frequently serve as the gold standard for clinical outcomes studies, clinical trials, and quality improvement. This massive amount of data requires enormous capacity for storage and sophisticated methods for handling and retrieval of information, necessitating the application of certain data science disciplines such as AI/ML.

Pathologists and laboratorians are therefore excited about the promise that AI/ML can bring to their ability to impact health care; however, even those interested in pursuing AI/ML as an area of clinical investigation or quality improvement are largely unfamiliar with the field and the processes involved with utilizing the tools it has to offer. The variable quality of medical and laboratory data available for use as well as the sheer diversity and complexity of ML algorithms creates a cornucopia of choices as well as challenges for investigators seeking to develop the best AI/ML predictive model. Once a quality data set has been established, an optimal ML model needs to be identified which means fully vetting the algorithms by building and testing multiple models for their appropriateness to the task at hand.

The most successful AI/ML models arise from multidisciplinary teams with expertise in ML, clinical medicine, pathology and laboratory medicine, biostatistics, and other relevant skillsets. Such a multidisciplinary team will be best equipped to address the following queries that are fundamental to successful project design: Does the project address a need? Is there sufficient data and is it the “right” kind of data that is both readily available and vetted by clinical experts in the field? Which ML approach to use? Are the optimized ML models applicable and generalizable when applied to a novel data set?

The purpose of this article is to facilitate cross-disciplinary literacy among pathologists, laboratorians, biomedical scientists, and individuals from other medical disciplines seeking to work in multidisciplinary teams to develop or facilitate the early adoption of AI/ML tools in health care. We define cross-disciplinary literacy as having sufficient content knowledge (including strengths and limitations of available tools and the concepts behind them) as well as a working understanding of the field’s unique vocabulary, such that interested individuals from other disciplines can understand written and spoken communications, think critically, and use this knowledge and skill in a meaningful way for their own discipline. To accomplish this goal, we describe the current landscape for AI/ML in pathology and laboratory medicine by defining the elements and numerous available options necessary to address the 4 queries essential to the design of AI/ML tools in health care outlined above: defining the purpose, data curation and quality, choosing the most appropriate ML algorithm, and testing/validation. Table 1 is a glossary of commonly encountered ML terminology within the scope of this article, with definitions and examples for each term.

Common Machine Learning Terms.

Abbreviations: AKI, acute kidney injury; CV, cross-validation;

Current Landscape and Approach to Developing Machine Learning Tools

There is clearly a need to apply rational and systems-based data science principles for handling the ever-growing body of both qualitative and quantitative aspects of medical laboratory information and classification. Given the limits of human capacity for rapid, accurate, and precise retrieval of data in real time, the heuristics provided and amplified by ML offer an attractive approach to substantially improve the delivery of health care. Current health problems that are deemed suitable to ML include, but are not limited to, integrating multiple variables to mimic human clinical decision-making skills (eg, multiparameter disease diagnosis), automation of testing and treatment algorithms (eg, reflex testing) and workflows, pattern recognition using imaging data (eg, radiology, histology slides, and vital sign waveforms), and analysis of test utilization trends. However, AI/ML tools are not necessary for every situation, since simple statistical approaches may sometimes suffice.

The familiar concept of “garbage-in/garbage-out” highlights the critical importance of having high-quality data for AI/ML applications, since incomplete and/or erroneous values may train an algorithm in the wrong direction. Likewise, highly controlled data may not represent real-world conditions. “Quality data” for AI/ML training applications must include accurate, precise, complete, and generalizable information. 6 Laboratory data are often assumed to be sufficiently accurate and precise by both health-care providers and researchers. Unfortunately, it is a truism that not all laboratory tests are created equal, and analytical bias and imprecision degrade the performance of AI/ML algorithms. Additionally, both providers and researchers are often not aware that test methods may lack standardization. For instance, a cardiac troponin I assay from one manufacturer may not give results comparable to those from another manufacturer's assay due to differences in epitopes for antibody-based capture and detection. 7 The concept of imprecision, reported as the coefficient of variation, is also poorly understood by most bedside providers, with many assuming that any change in numerical values reflects a true biological change without taking into account sources of variability.

Data completeness and generalizability are other important considerations when developing and training AI/ML algorithms. 8 Unfortunately, despite the convenience of collecting real-world information from electronic health records, the retrieved medical data are often incomplete. This is attributed to inconsistencies in test ordering and resulting. For example, ordered laboratory tests may be cancelled because patients do not show up for a visit or because samples are found to be unacceptable upon receipt by the laboratory. Incomplete data create significant challenges for AI/ML developers, since the predictive power of algorithms may be severely diminished. The limitation of real-world evidence has thus prompted investigators to gravitate toward more complete and rigorous data derived from clinical trials. However, caution is advised when using data that are “too complete” or “too controlled,” since they may not represent the real-world population and may contribute to overfitting, discussed later in this article. 9

Ultimately, the best and most balanced approach is to pilot AI/ML algorithms using more controlled data during the initial stages and later refine these algorithms using real-world data to confirm generalizability.

Choosing the right ML approach for a given task requires a basic understanding of the general categories of ML algorithms as well as a basic understanding of these algorithms’ inner workings, strengths, and limitations. These are outlined below.

Machine Learning General Categories: The Big Picture

Within the various ML platforms, there are a multitude of algorithms to choose from. 10,11 The choice of an algorithm depends on a variety of factors that include, but are not limited to, data type/learning approach (supervised or unsupervised learning), the need for

Overview diagram of machine learning algorithms. Machine learning is a subset of artificial intelligence. This figure illustrates the hierarchy of different machine learning algorithms including supervised versus unsupervised versus reinforcement learning techniques. The 2 major categories of supervised learning are classification and regression which lead to discrete/qualitative and continuous/quantitative targets, respectively.

A supervised ML algorithm makes use of the training data to learn a function (f) by mapping certain input variables/features (X) from the training data into some output/target (Y). In general, supervised ML platforms employ “labeled” training data sets to yield a qualitative or quantitative output. The labeled nature of the data evaluated in the training phase is a key feature of this method, since it allows the ML model to ultimately emulate the expert’s input data. As a result, the ML model can distinguish an unknown input based on its prior training parameters. In the “classification” approach of supervised learning, the labeled data/variables (which can be numbers, text, or unstructured data such as images) yield a discrete (qualitative) “class” output. An example of a classification approach is the breast cancer histology image identification model in which a supervised ML platform is used to yield a qualitative answer/identification based on labeled histologic image training data sets that are then used to predict future unknown histologic images. In contrast, the “regression” approach of supervised learning involves the cumulative acquisition of data variables to yield a continuous (quantitative) numerical output (Figure 1). Notably, most reproducible supervised studies follow the Cross Industry Standard Process for Data Mining or some modification thereof. 13,14

Unsupervised ML methods involve agnostic aggregation of unlabeled data sets yielding groups or clusters of entities with shared similarities that may be unknown to the user prior to the analysis step. These are also sometimes referred to as clustering algorithms. Some of the most common methods employed in this approach include

Reinforcement learning platforms may share features of both a supervised and an unsupervised process and usually function through a policy-based platform. Examples of reinforcement learning include International Business Machines (IBM)’s Deep Blue (Armonk, New York) and Google’s AlphaGo (Alphabet, Mountain View, California), which were able to beat champion chess 18 and Go players, 19 respectively. However, reinforcement learning approaches are currently rarely employed in pathology, although this may change in the future.

“Supervised” Machine Learning Algorithms (General Overview)

As noted earlier, in medicine and in pathology in particular, ML models employed are chiefly based on supervised approaches. Based on the amount of data and data type (eg, image vs numerical values vs text), the type of algorithm employed could drastically alter the ML model’s predictive capabilities. In the sections below, the various supervised algorithms within ML are discussed with an emphasis on the classification approach within the supervised learning category. The advantages and limitations of each are also provided since these provide insight into the approach to such studies.

The type of the input data can alter the approach to the analysis step and the type of algorithm that needs to be employed. Although similar algorithms may be applied to different data types, commonly used data types in the health sciences include image and text which have made use of visual recognition platforms and natural language processing frameworks, respectively. In both of these settings, deep learning neural network algorithms are now commonly employed. Deep neural networks have become the gold standard for image classification. However, neural networks are not the only algorithms within ML and may not always be the most suitable method when using nonimage data (eg, numerical laboratory data).

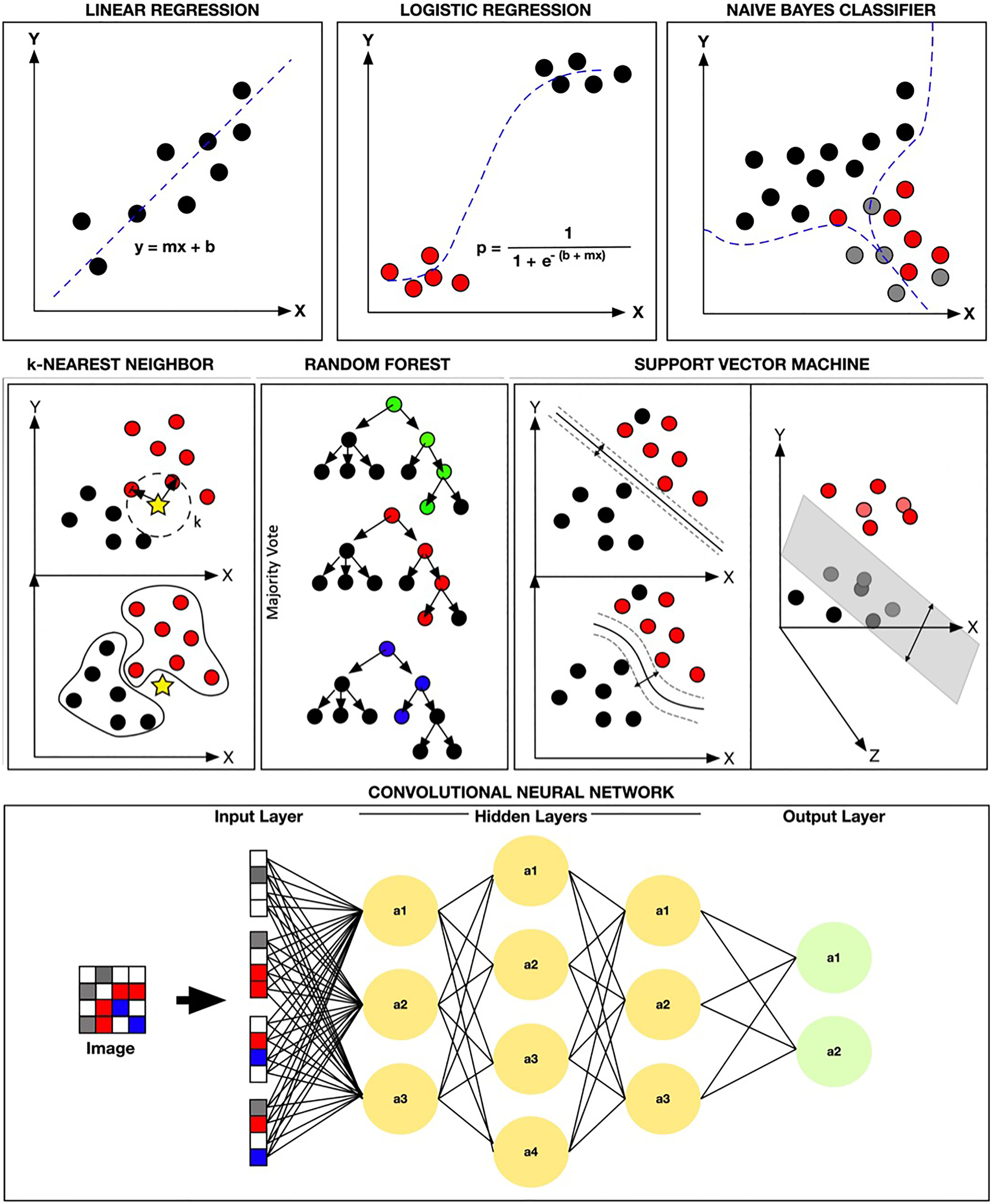

Commonly used supervised learning algorithms encompass both convolutional neural networks (CNNs; eg, deep learning) and various non-neural network algorithms (Table 2). Some of the most common non-neural network algorithms employed include linear regression, logistic regression, naive Bayes, decision tree,

Comparison of Most Common Supervised Learning Algorithms.

*Support Vector Regression (SVR), not discussed here, is the counterpart to SVM and used for regression studies.

In supervised classification platforms, if accuracy is not the ultimate goal, algorithms such as logistic regression or naive Bayes may suffice. However, if accuracy is the primary objective in these classification tasks, then the algorithms of choice currently include kernel SVM,

On the other hand, in supervised regression (nonclassification) platforms, if accuracy is not the ultimate goal, algorithms such as linear regression and decision trees may suffice. In contrast, if accuracy is the primary objective, then the algorithms of choice currently include RF and CNNs (Table 2).

Bias-Variance Trade-Off in “Supervised” Machine Learning: A Fundamental Concept

The concept of bias and variance and their relationship with each other is fundamental to the true performance of supervised ML models. To identify the most optimized supervised ML model, the trade-off between bias and variance must be addressed. Briefly, bias gives the algorithm its rigidity while variance gives it its flexibility. 21-23 A high bias causes underfitting; simply stated, this means missing real relationships between the features of the data set and the target. In contrast, a high variance causes overfitting, which may be thought of as introducing false relationships between the data set features and the target due to noise. 24 Thus, an overfitted model appears to be a good predictor on the training data while underperforming on new, previously unseen data (ie, it is not generalizable). In the end, the ultimate goal of any ML algorithm is to find the right balance between bias and variance (bias-variance trade-off). This balance is key in finding the most generalizable model (Figure 2). Within many supervised ML approaches, with the appropriate test sets, this balance can be intrinsically automated and sometimes incorporated into the platform to ultimately identify the most suitable model. Being aware of such limitations and knowing how to appropriately approach these platforms for building the most suitable model is key to good ML practice.
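
The trade-off can be made concrete with a small sketch. The data below are synthetic and purely illustrative (an assumed true relationship y = 2x with alternating +1/-1 noise on the training labels), and the three model names are hypothetical: one that ignores the feature (high bias), one that memorizes the training set (high variance), and a least squares line in between.

```python
def mse(model, xs, ys):
    """Mean squared error of a model (a function mapping x to a prediction)."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Synthetic data (assumption): true relationship y = 2x; training labels carry +/-1 noise.
train_x = [float(i) for i in range(10)]
train_y = [2 * x + (1 if i % 2 == 0 else -1) for i, x in enumerate(train_x)]
test_x = [i + 0.25 for i in range(9)]   # points the models have never seen
test_y = [2 * x for x in test_x]

mean_x = sum(train_x) / len(train_x)
mean_y = sum(train_y) / len(train_y)

def underfit(x):
    """High bias: ignores x and always predicts the training mean."""
    return mean_y

def overfit(x):
    """High variance: returns the label of the nearest memorized training point."""
    nearest = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[nearest]

# Balanced: least squares straight line fitted to the training data.
slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(train_x, train_y))
         / sum((x - mean_x) ** 2 for x in train_x))
intercept = mean_y - slope * mean_x

def balanced(x):
    return intercept + slope * x

for name, model in [("underfit", underfit), ("overfit", overfit), ("balanced", balanced)]:
    print(f"{name:9s} train MSE {mse(model, train_x, train_y):6.2f}"
          f"   test MSE {mse(model, test_x, test_y):6.2f}")
```

In this toy setting the memorizing model has zero training error yet a larger test error than the straight line, while the mean-only model is poor on both sets; this is the pattern the overfitting and underfitting descriptions above refer to.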

Bias-variance trade-off in machine learning. This figure illustrates the trade-off between bias and variance. Training data (green line) often do not completely represent results from the testing phase. Underfitting data are less variable but exhibit a high error rate and high bias (blue box). In contrast, overfitting data result in low bias and high variance (yellow box). The ideal zone lies between over- versus underfitting of data and may not be optimal until several attempts at testing have been made (red line).

Supervised Machine Learning Algorithms: (Common Algorithms and Their Inner Workings)

In addition to the abovementioned categorizations, the algorithms can be further divided into either parametric or nonparametric groups. 25 The set of parameters in a parametric algorithm is fixed which confines the function to a known form. In nonparametric methods, the algorithm does not make any assumptions about the function to which it will map its variables. In general, the assumption within parametric algorithms is that the function is linear or assumes a normal distribution, while nonparametric methods do not make such assumptions. The most commonly encountered parametric algorithms include linear regression, logistic regression, and naive Bayes, while some of the most common nonparametric algorithms include

Comparison of popular supervised learning methodologies. This figure illustrates a variety of popular supervised machine learning (ML) methodologies. In the top row, linear regression, logistic regression, and Naïve Bayes Classifier (via TensorFlow) are shown. In the second row,

Linear Regression Algorithm

One of the oldest and simplest parametric statistical approaches is least squares linear regression. This technique has been regularly used for various correlational studies. 26 Linear regression models allow us to predict the target variable (usually a numerical value) by finding the best-fitted straight line, also known as the “least squares regression line” (the line with the lowest sum of squared errors), between the independent variables (the cause or features) and the dependent variable (the effect or target). The ultimate goal of this technique is to fit a straight line to the data set in question (Figure 3). The advantage of such an approach is its simplicity and transparency for finding linear relationships, and it can be very efficient (rapid). However, its major limitation is that it is not generally useful when the relationships between the independent variables (the cause or features) and the dependent variable (the effect or target) are nonlinear.
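
As a minimal sketch of the least squares idea, the following fits a straight line to one feature using the closed-form slope and intercept. The function names `fit_line` and `sum_squared_error` and both toy data sets are assumptions made for illustration; the second data set is deliberately nonlinear to show the limitation described above.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least squares line through the points."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return slope, mean_y - slope * mean_x

def sum_squared_error(xs, ys, slope, intercept):
    """Sum of squared differences between the line and the observed targets."""
    return sum((intercept + slope * x - y) ** 2 for x, y in zip(xs, ys))

xs = [0.0, 1.0, 2.0, 3.0, 4.0]

# Linear target (y = 3 + 2x): the fitted line recovers the relationship.
linear_ys = [3.0 + 2.0 * x for x in xs]
slope, intercept = fit_line(xs, linear_ys)
print(slope, intercept, sum_squared_error(xs, linear_ys, slope, intercept))

# Nonlinear target (y = x squared): the best straight line still leaves error.
curved_ys = [x * x for x in xs]
c_slope, c_intercept = fit_line(xs, curved_ys)
print(c_slope, c_intercept, sum_squared_error(xs, curved_ys, c_slope, c_intercept))
```

The nonzero residual error on the curved data is what "not generally useful for nonlinear relationships" looks like in practice.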

Logistic Regression Algorithm

The term regression is somewhat of a misnomer since in general this is a classification method that uses a logistic function for predicting a dichotomous dependent variable (target). A variation of this method (multinomial logistic regression) can also be used to classify more than 2 targets. 27,28 In the binary approach, the function yields a probability between 0 and 1 that is then assigned to the negative (0) or the positive (1) case (Figure 3). This is accomplished by estimating, from the relationships between the independent input variables (features) and the dependent variable (target), the odds and hence the probability that a case is positive (1) rather than negative (0). This algorithm is relatively popular and has been regularly used in both industry 29 and medicine. 30 The use of a logistic regression method may become limiting if there are a large number of features/variables present or if the variables are highly correlated. Additionally, this approach assumes that the relationship between the independent variables (features) and the dependent variable (target) is uniform, which may limit the model’s performance. 31,32
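
A minimal sketch of binary logistic regression with one feature is shown below. The data are hypothetical standardized (z-scored) laboratory values, the fitting method is plain gradient descent chosen for brevity, and the learning rate, iteration count, and 0.5 decision threshold are illustrative assumptions rather than recommendations.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, learning_rate=0.1, n_iter=2000):
    """Fit weight w and bias b by gradient descent on the mean log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(n_iter):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        w -= learning_rate * sum(e * x for e, x in zip(errors, xs)) / n
        b -= learning_rate * sum(errors) / n
    return w, b

# Hypothetical standardized lab values with negative (0) and positive (1) labels.
xs = [-2.0, -1.5, -1.0, 1.0, 1.5, 2.0]
ys = [0, 0, 0, 1, 1, 1]
w, b = fit_logistic(xs, ys)

def predict_proba(x):
    """Estimated probability that x belongs to the positive class."""
    return sigmoid(w * x + b)

def predict(x, threshold=0.5):
    """Assign the positive class when the probability reaches the threshold."""
    return 1 if predict_proba(x) >= threshold else 0

for x in xs:
    print(x, round(predict_proba(x), 3), predict(x))
```

The model outputs a probability first; the 0 or 1 assignment comes only from thresholding it, and that threshold can be moved when sensitivity matters more than specificity or vice versa.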

Naive Bayes

Naive Bayes classifiers use a probabilistic approach that is based on Bayes’ theorem. This approach is a subset of Bayesian logic that makes the naive assumption that the features being evaluated are independent of each other. 33-35 Although this basic assumption may seem to be a disadvantage of this method, in reality, naive Bayes classifiers can sometimes yield reasonable results, 34 especially for simple tasks. However, their performance has been shown to be inferior to some of the other well-established algorithms such as boosted trees and RF. 10
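
The independence assumption can be sketched as follows: the score for each class is its prior probability multiplied by the per-feature likelihoods, each estimated separately from counts. The records, feature meanings (fever, elevated white cell count), and function names are hypothetical, and smoothing of zero counts is omitted to keep the sketch short.

```python
from collections import Counter, defaultdict

def train_naive_bayes(records):
    """Count classes and, per class and feature position, the observed values."""
    class_counts = Counter(label for _, label in records)
    feature_counts = defaultdict(Counter)  # (label, feature index) -> value counts
    for features, label in records:
        for j, value in enumerate(features):
            feature_counts[(label, j)][value] += 1
    return class_counts, feature_counts

def posterior(model, features):
    """Class probabilities for one case, treating features as independent."""
    class_counts, feature_counts = model
    total = sum(class_counts.values())
    scores = {}
    for label, n in class_counts.items():
        score = n / total                                    # prior P(class)
        for j, value in enumerate(features):
            score *= feature_counts[(label, j)][value] / n   # P(feature | class)
        scores[label] = score
    norm = sum(scores.values())
    return {label: s / norm for label, s in scores.items()}

# Hypothetical records: (fever, elevated_wbc) flags and a diagnosis label.
records = [
    ((1, 1), "infection"), ((1, 1), "infection"),
    ((1, 0), "infection"), ((0, 1), "infection"),
    ((0, 0), "none"), ((0, 0), "none"),
    ((1, 0), "none"), ((0, 1), "none"),
]
model = train_naive_bayes(records)
print(posterior(model, (1, 1)))
```

Because each likelihood is estimated on its own, training reduces to counting, which is why the method is fast; the cost is that correlated features are effectively counted twice.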

Decision Tree and Boosted Tree (Gradient Boosting Machine)

A decision tree uses a flowchart structure that typically contains a root, internal nodes, branches, and leaves. The internal node is where the attribute in question (eg, creatinine >1 or creatinine <1) is tested, while the branch is where the outcome of this test is delegated. The leaves are where the final class label is assigned which, in short, represents the final decision after it has incorporated the results of all the attributes. 36-39 The end result of the decision tree is a set of rules that governs the path from the root to the leaves. Simple decision trees are not commonly used in ML. However, variations such as the gradient boosting machine are used for both classification and regression tasks. 40,41 The gradient boosting machine is an ensemble method that uses weak predictors (eg, decision trees) that can ultimately be boosted and lead to a better performing model (ie, the boosted tree). This method can sometimes yield very reasonable models, especially with unbalanced data sets. However, its limited number of tuning parameters may make it more prone to overfitting compared to RF, which contains a larger number of parameters for tuning and finding the optimized model.
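
The root, node, branch, and leaf vocabulary maps directly onto a small data structure. The sketch below hard-codes a hypothetical two-level tree (the features, thresholds, and labels are illustrative only and carry no clinical meaning) and returns both the leaf label and the rule path from root to leaf; a real tree would learn its splits from training data.

```python
# Internal nodes are dicts that test one attribute; leaves are plain label strings.
tree = {
    "feature": "creatinine", "threshold": 1.0,
    "low": "no AKI",
    "high": {
        "feature": "urine_output", "threshold": 0.5,
        "low": "AKI",
        "high": "no AKI",
    },
}

def classify(node, sample, path=()):
    """Walk from the root to a leaf; return (label, list of rules followed)."""
    if isinstance(node, str):              # reached a leaf: final class label
        return node, list(path)
    value = sample[node["feature"]]        # test the attribute at this node
    branch = "high" if value > node["threshold"] else "low"
    op = ">" if branch == "high" else "<="
    rule = f"{node['feature']} {op} {node['threshold']}"
    return classify(node[branch], sample, path + (rule,))

label, rules = classify(tree, {"creatinine": 1.8, "urine_output": 0.3})
print(label, rules)
```

The returned rule list is the "set of rules that governs the path from the root to the leaves", which is what makes a single tree easy to audit.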

k-Nearest Neighbor

k-nearest neighbor (

Support Vector Machine

Support vector machine classifies data by defining a hyperplane that best differentiates 2 groups. This differentiation is maximized by increasing the margin (the distance) on either side of this hyperplane. In the end, the hyperplane-bounded region with the largest possible margin is used for analysis. 47 One of the key highlights of the SVM method is its ability to find nonlinear relationships through the use of a kernel function (kernel trick). In short, the kernel trick allows the data to be transformed into another dimension, which ultimately enhances the dividing margin between the classes of interest 48 (Figure 3). A limitation of this method is its tendency to overfit.
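
The intuition behind transforming data into another dimension can be sketched with a one-feature toy problem. This is not a full SVM: it applies an explicit feature map (squaring the value) rather than an implicit kernel, and the helper `max_margin_threshold` and the data are assumptions for illustration. The positive class sits on both sides of the negative class, so no single cut separates them until the feature is transformed.

```python
def max_margin_threshold(values, labels):
    """Return (threshold, margin) if one cut separates the classes, else None.

    The threshold is placed midway between the closest points of the two
    classes, which maximizes the distance (margin) to both.
    """
    neg = [v for v, y in zip(values, labels) if y == 0]
    pos = [v for v, y in zip(values, labels) if y == 1]
    if max(neg) < min(pos):
        lo, hi = max(neg), min(pos)
    elif max(pos) < min(neg):
        lo, hi = max(pos), min(neg)
    else:
        return None                        # classes overlap along this axis
    return (lo + hi) / 2, (hi - lo) / 2

# Toy data: class 1 lies on both sides of class 0 on the original axis.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
labels = [1, 1, 0, 0, 0, 1, 1]

print(max_margin_threshold(xs, labels))                  # not separable as-is
print(max_margin_threshold([x * x for x in xs], labels)) # separable after mapping
```

A kernel SVM achieves the same effect without ever computing the transformed coordinates, which is what makes the approach practical in many dimensions.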

Random Forest

Random forest uses a network of decision trees for ensemble learning. A bootstrap technique is commonly employed in this method to generate the random data sets that are then used to train the ensemble of decision trees. 49 Ultimately, each decision tree will determine an outcome, and a majority “vote” approach is used to classify the data (Figure 3). Appropriately, this is called RF, since a large number of randomly generated decision trees are used to construct the final model. 50-54 This random sampling generally enhances the generalizability of this ML process by minimizing the overfitting phenomena. The number of trees and various other internal parameters within this process may hinder its performance. Additionally, the number of variables evaluated may be more time-consuming using this approach compared with the other nonparametric (eg, SVM and
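
The bootstrap-and-vote mechanism described for RF can be sketched in a few lines. This is a deliberately reduced illustration under stated assumptions: each "tree" is a one-split stump on a single feature, samples that happen to miss a class are redrawn, and the random feature selection used by real random forests is omitted. The data and all function names are hypothetical.

```python
import random
from collections import Counter

def bootstrap(data, rng):
    """Draw len(data) records with replacement; redraw if a class is missing."""
    while True:
        sample = [rng.choice(data) for _ in data]
        if len({y for _, y in sample}) == 2:
            return sample

def fit_stump(sample):
    """Weak learner: one threshold midway between the two class means."""
    xs0 = [x for x, y in sample if y == 0]
    xs1 = [x for x, y in sample if y == 1]
    threshold = (sum(xs0) / len(xs0) + sum(xs1) / len(xs1)) / 2
    return lambda x: 1 if x > threshold else 0   # assumes class 1 has higher values

def fit_forest(data, n_trees=25, seed=0):
    """Train each stump on its own bootstrap sample of the data."""
    rng = random.Random(seed)
    return [fit_stump(bootstrap(data, rng)) for _ in range(n_trees)]

def predict(forest, x):
    """Majority vote across all trees."""
    votes = Counter(tree(x) for tree in forest)
    return votes.most_common(1)[0][0]

data = [(1.0, 0), (2.0, 0), (3.0, 0), (7.0, 1), (8.0, 1), (9.0, 1)]
forest = fit_forest(data)
print(predict(forest, 2.5), predict(forest, 7.5))
```

Each stump sees a slightly different data set and therefore places its split slightly differently; averaging those differences through the vote is the source of the improved generalizability.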

Convolutional Neural Network

Neural networks attempt to emulate the neuron and for that matter the human brain. The artificial neuron within neural networks uses certain input features/variables to find and assign appropriate mathematical weights that are ultimately able to predict some output target (Figure 3). A deep neural network usually refers to a neural network with a large number of nodal connections within its hidden layer, and the CNNs are typically the deep neural networks that are most suitable for more complex data analyses such as imagery. As noted, in most CNN studies, a transfer learning approach is employed which allows the training data of interest to be incorporated into a retrained preestablished CNN. 20,55 The CNNs with the transfer learning approach are the method of choice for most image analysis studies. However, they are also prone to overfitting similar to the aforementioned algorithms.
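
The artificial neuron itself is a small computation: a weighted sum of its inputs plus a bias, passed through an activation function. The sketch below is a plain two-layer network, not a CNN, and its weights are set by hand for illustration (in practice they are learned from training data). It shows why a hidden layer matters: the output pattern here (exclusive OR) cannot be produced by a single neuron of this kind.

```python
def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, followed by a step activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def tiny_network(x1, x2):
    """Two hidden neurons feeding one output neuron (hand-set weights)."""
    h_or = neuron([x1, x2], [1.0, 1.0], -0.5)    # fires if either input is 1
    h_and = neuron([x1, x2], [1.0, 1.0], -1.5)   # fires only if both inputs are 1
    return neuron([h_or, h_and], [1.0, -1.0], -0.5)  # "either, but not both"

for a in (0, 1):
    for b in (0, 1):
        print(a, b, tiny_network(a, b))
```

Deep networks stack many such layers and replace the hand-set numbers with weights adjusted during training; transfer learning starts that adjustment from weights already trained on another data set.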

Selecting the appropriate algorithm is essential in finding the most suitable model for a given task. Hence, to enhance the algorithm’s predictive capability (most importantly its ability to generalize), an optimal study design along with an iterative validation process is required.

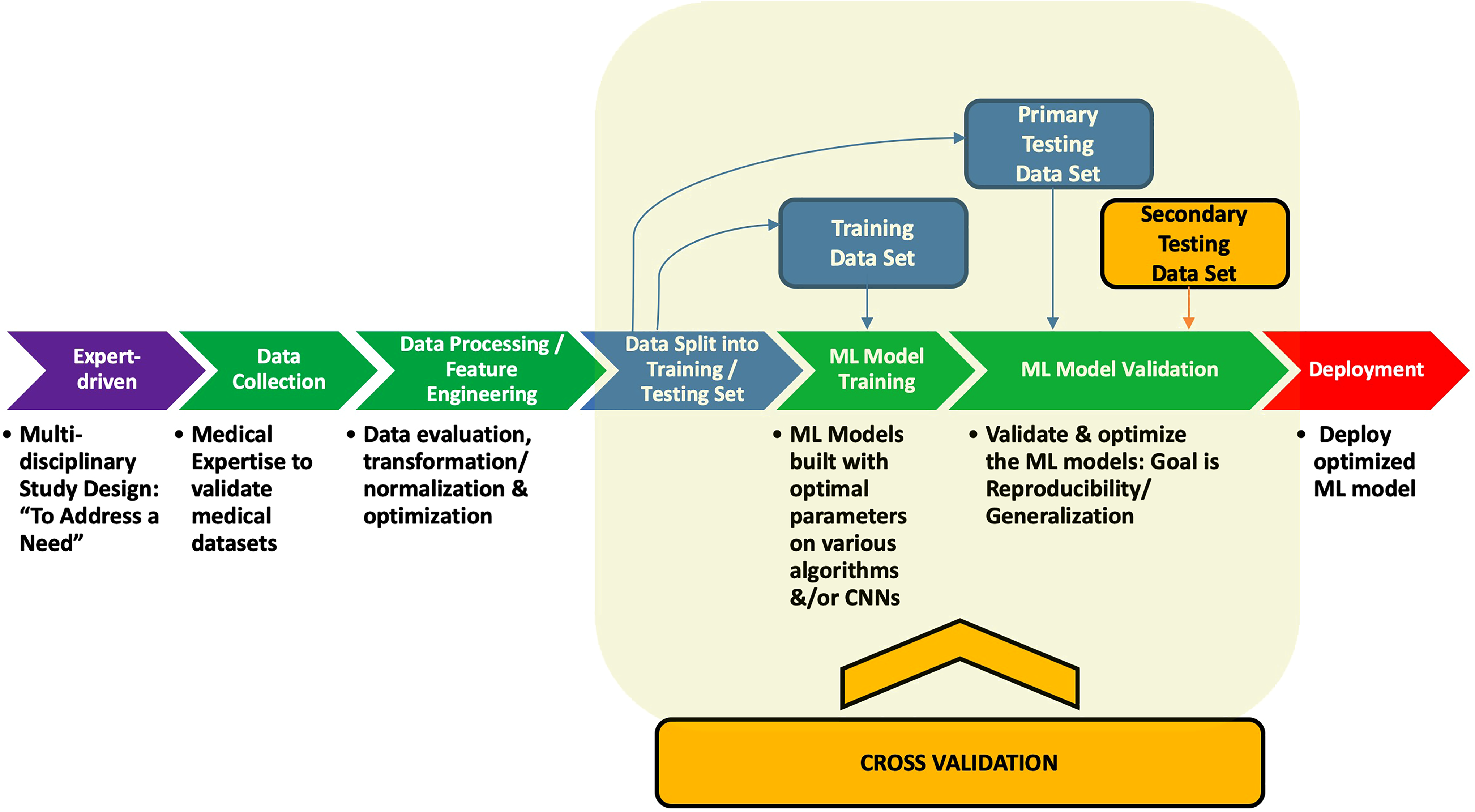

Supervised Machine Learning Study Design and Validation

After data are collected, cleaned, and preprocessed, and the correct ML approach has been chosen, the next step is model building and validation, which ultimately yield the deployed model (Figure 4). The supervised ML model building phase usually includes splitting the data into initial training and testing sets, which allows training of the model followed by testing for its initial validation. To minimize overfitting, model adjustments and the incorporation of cross-validation (CV) allow the empirical building of a large number of models whose performance can subsequently be assessed with the goal of finding the most generalizable model. It is well known that assessment based on the initial validation test set does not always yield a generalizable model, as we have shown in our recent studies. 20 Hence, it is essential to include secondary and sometimes tertiary external test sets (previously unseen by the model) to assess its true generalizability.
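
The data-splitting steps can be sketched with record indices alone. The 20% test fraction, the choice of 5 folds, the fixed seed, and the function names are illustrative assumptions; k-fold is used here only as one widely used CV scheme, and an external test set would be held entirely outside this procedure.

```python
import random

def train_test_split(n_samples, test_fraction=0.2, seed=0):
    """Shuffle record indices and hold out a fraction for initial testing."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = int(round(n_samples * test_fraction))
    return indices[n_test:], indices[:n_test]

def k_fold(indices, k=5):
    """Yield (training, validation) index lists; each fold validates once."""
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        validation = folds[i]
        training = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield training, validation

train_idx, test_idx = train_test_split(100)
print(len(train_idx), len(test_idx))

# Cross-validation runs inside the training portion only; the held-out
# test indices are never used while models are being compared.
for training, validation in k_fold(train_idx, k=5):
    print(len(training), len(validation))
```

Keeping the test indices untouched until model selection is finished is the point of the design: any record that influences the choice of model can no longer give an unbiased estimate of generalizability.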

Supervised (labeled) machine learning model study design overview. Steps for the deployment of a supervised machine learning model. From left to right, the figure shows the initial team of multidisciplinary experts defining a study design to address a need. Data are then collected and processed, and the model is trained, tested, validated, and ultimately deployed.

In brief, the model is initially trained and preliminarily validated on its train-test split data set. For example, an “80-20 train-test” split initially trains the model using 80% of the data, and the remaining 20% is used for testing and initial validation (Figure 4). However, this approach alone for building a single ML model is prone to overfitting. Hence, to minimize the overfitting phenomena as noted earlier, good practice demands that one build a large number of models with variable parameters through one or more CV platforms. Some of the most commonly employed CV studies include the “

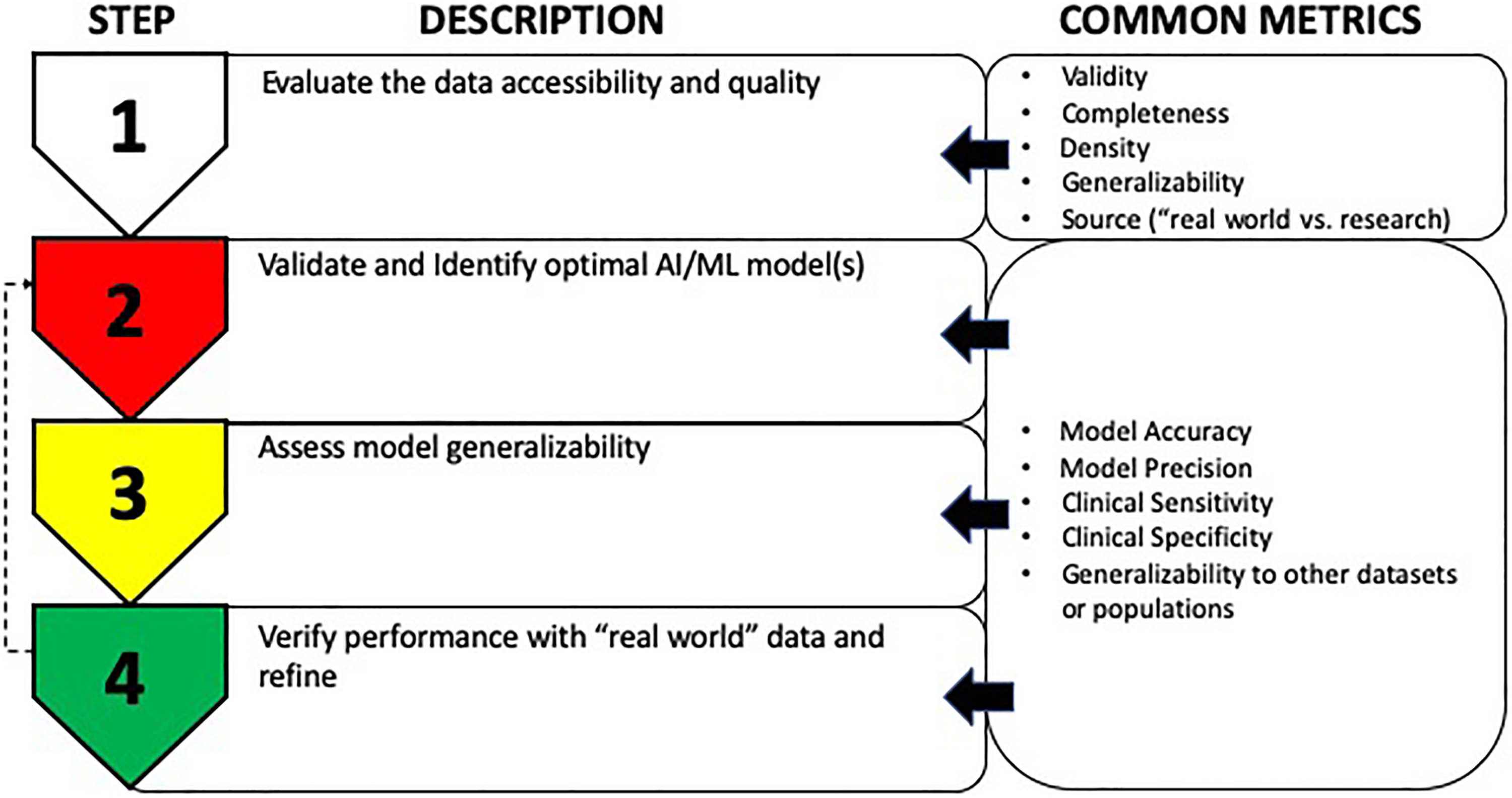

Summary

Artificial intelligence and ML have the potential to transform health care in the coming years. To ensure that pathologists and laboratorians are equipped to play important roles in the multidisciplinary teams, we have provided definitions, descriptions, and an outline of 4 of the essential steps for developing AI/ML applications. The need for high-quality data (Figure 5) illustrates the role of pathologists and laboratorians in appropriately curating, interpreting, and providing results for AI/ML applications. We encourage a balanced approach utilizing clinical trial data, when available, combined with real-world data to optimize AI/ML training. The approach and technique chosen should be tailored to the data available and the problem to be solved. Since many AI/ML techniques are available and not all are the same, pathologists and laboratorians must be sufficiently familiar and literate with these options so that they can communicate effectively and make meaningful contributions within the AI development team. Determining the overall generalizability of AI/ML models for real-world populations is critical to most successful development and implementation strategies. Researchers in this area are encouraged to be aware of their data limitations and develop cross-disciplinary literacy in AI/ML methods to effectively harness their optimal implementation plan, thus maximizing its impact.

Stepwise considerations for development and validation of the machine learning (ML) model. The figure describes a very general stepwise approach for development and validation of an ML model. Common metrics used in each step are shown on the right. Step 1 involves assessing the quality and accessibility of the data, followed by step 2 that requires method validation to identify optimal ML model(s). Once optimal ML models have been identified, step 3 involves determining their ability to work with other data sets to assess generalizability. Finally, step 4 involves evaluating the data in more “real-world” conditions to further assess performance and generalizability along with further refinement (go back to step 2) to improve the performance and desirable outcomes.

Footnotes

Acknowledgments

Special thanks to all our machine learning collaborators who keep us energized in this exciting new arena.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.