Abstract

This study examines the early adoption of ChatGPT in late 2022 and early 2023 by identifying variation in student awareness, academic use, and perceived instructional support for the technology at a diverse U.S. public research university. Specifically, we investigate (a) how individual awareness and academic use of ChatGPT vary by student characteristics and field of study, (b) how instructor encouragement or discouragement varies across courses and student demographics, and (c) how academic use patterns differ between science, technology, engineering, and mathematics (STEM) and non-STEM fields. Data from 938 undergraduates, merged with administrative records, revealed disparities in ChatGPT awareness and use, with underrepresented minority, first-generation, and international students less likely to know about or use the tool academically. Course-level analysis highlighted that instructor encouragement is more prevalent in STEM fields and upper-division courses but decreases in classes with higher underrepresented minority representation. Open-ended responses showed distinct patterns of ChatGPT use, with STEM students favoring conceptual assistance and coding and non-STEM students engaging in writing and instructor-assigned activities. These findings underscore early inequities in access and use of emerging educational technologies and call for institutional strategies to promote equitable awareness and skill development aligned with academic and professional goals.

Introduction

The introduction and rapid dissemination of ChatGPT in late 2022 were experienced by much of global society, including the higher-education sector, as potentially transformative or existential in character. Using large language models to generate individualized outputs or responses to user-specified prompts (OpenAI, 2023, as cited in Strzelecki, 2023), ChatGPT has been recognized for its potential applications as an adaptive student-centered learning tool (Rasul et al., 2023). Scholars have highlighted ChatGPT’s potential utility in providing students with research and writing support (Dempere et al., 2023), personalized and immediate feedback on course assignments (Sok & Heng, 2024), and resources to overcome language barriers (Fütterer et al., 2023). In addition, researchers have documented university faculty adoption of ChatGPT for administrative tasks and have reported improvements in faculty productivity, energy, and workplace happiness (Cambra-Fierro et al., 2025; Tiwari et al., 2024). These developments increasingly position ChatGPT as a valuable tool for improving both student and faculty work quality and productivity (Fauzi et al., 2023; Firat, 2023).

However, a growing body of research also has highlighted concerns about students’ ChatGPT adoption or use. Scholars have noted the potential of this tool to encourage technological overreliance and discourage critical thinking among students (Chan & Hu, 2023; Farrokhnia et al., 2024). Additional concerns stem from evidence documenting ChatGPT’s tendency to produce socially biased responses (Zheng, 2024) and inaccurate citation outputs (Day, 2023) while simultaneously facilitating plagiarism that is harder to detect (Su et al., 2023). Therefore, although ChatGPT holds significant promise for improving student learning and productivity, these drawbacks warrant careful consideration to ensure students’ ethical use and adoption of ChatGPT in higher-education contexts.

Our research takes advantage of the rapid dissemination of ChatGPT in late 2022 and the first quarter of 2023 to examine student knowledge, use, and perceived instructional support for early adoption of the technology at a large U.S. public research university serving 50% first-generation college students and 85% students of color. We focus specifically on three research questions examining student early awareness, academic use, and exposure to coursework with faculty encouragement or discouragement of use of the technology. First (Research Question 1 [RQ1]), how do individual awareness and use of ChatGPT vary by individual characteristics and field of study? Second (RQ2), how does classroom-level instructor encouragement/discouragement of student use vary by field of study, upper-/lower-level course, and student demographic characteristics (e.g., percentage female, underrepresented minority [URM], and foreign students)? And finally (RQ3), how does the type of individual student-level use in courses vary across science, technology, engineering, and mathematics (STEM) and non-STEM fields? In examining these three research questions, we focus particular attention on equity issues, documenting differences by URM status, gender, first-generation college-going, and international students.

Conceptual Framework

Technology Adoption: Learning Management Systems

Although the novelty of ChatGPT limits our empirical understanding of its adoption in higher education, scholars have long studied the adoption of related technologies, providing us with a robust literature from which to draw (Venkatesh et al., 2003). Since the 1990s and tracking with the advancement of information technologies, higher-education institutions globally have increasingly adopted e-learning tools or learning management systems (Coates et al., 2005; McGill & Klobas, 2009), such as Blackboard, Moodle, and Canvas. These online information technology–supported platforms aid in the management and organization of educational material (Junglas et al., 2008) while also providing students with collaborative platforms to facilitate peer feedback on course work (Zanjani et al., 2016). Researchers studying learning management system adoption have often used variations of the technological adoption model of Venkatesh et al. (2003) or the unified theory of acceptance and use of technology 2 (UTAUT2; Venkatesh et al., 2012) to identify individual and organizational factors predicting adoption (El-Masri et al., 2017; Raza et al., 2022; Yu et al., 2021). The predictive power of UTAUT2 comes from integrating “the dominant constructs of eight prior prevailing models” with three additional constructs from UTAUT, helping to explain 74% of the variation in behavioral intention and 52% of actual technology adoption (Chang, 2012). These constructs include hedonic motivation (i.e., perceived enjoyment), price value, habit (i.e., perceived behavior), performance expectancy (i.e., perceived usefulness), effort expectancy (i.e., perceived ease of use), social influence (i.e., perceived importance or acceptance among social norms), and organizational facilitating conditions (i.e., infrastructure to support technology use; Venkatesh et al., 2012). These factors provide a comprehensive understanding of the various cross-contextual elements influencing individual and collective adoption of learning management systems and related technologies such as ChatGPT in educational settings.

Despite its widespread use, UTAUT2 arguably overlooks issues of equity and how differences in adoption contexts contribute to disparities in student opportunities to “geek out” with technologies and access technologically rich learning experiences both in and out of school (Ito, 2010; Neuman & Celano, 2012; Putnam, 1995). That is, although the model hypothesizes that sociodemographic factors such as age, gender, and experience moderate the relationship between the aforementioned constructs and technology adoption, it does not consider how structures of inequality outlined by critical technologists shape technology adoption patterns in U.S. contexts by race, ethnicity, class, gender, immigration status, or levels of educational attainment (Delcore & Neufeld, 2017; Montenegro-Rueda et al., 2023). This unequal access to technology is compounded by K–12 tracking, in which students from minoritized groups are often placed in tracks that are less academically oriented and provided with fewer instructional resources (Oakes, 2005). Together these factors contribute to the digital vs digital literacy divide, characterized by ongoing disparities in the acquisition of technological competencies (Hargittai & Hinnant, 2008; Jenkins, 2008; Wartella et al., 2000; Yardi, 2009), STEM achievement (Carter, 2009), and STEM occupational attainment (Metcalf, 2010). Therefore, it is crucial to examine how individual sociodemographic factors as well as program-level factors such as instructor encouragement (Gorski, 2005) affect student awareness and use of ChatGPT within U.S. higher education (Abrahams, 2010; Moser, 2007).

ChatGPT Adoption: Student Perspectives

The empirical research examining ChatGPT adoption in higher-education settings is just beginning to emerge. While there are some studies exploring the factors shaping faculty-level adoption of ChatGPT (Al-Mughairi & Bhaskar, 2024; Cambra-Fierro et al., 2025; Tiwari et al., 2024), the limited research skews toward examining student perspectives to understand adoption patterns and is largely situated outside U.S. contexts (see, e.g., Firat, 2023; Maheshwari et al., 2023; Shahzad et al., 2024; Shoufan, 2023; Strzelecki, 2023). For instance, Strzelecki (2023) found that Polish students’ use was largely driven by student habit, perceived performance expectancy, and hedonic motivation (i.e., perceived enjoyment). Shahzad et al. (2024) additionally found that in China, university students’ perceptions of ChatGPT’s “intelligence” relative to other large language models significantly mediated the relationship between ChatGPT awareness and adoption. Similar trends were observed in the Vietnamese context (Maheshwari et al., 2023) and in additional studies conducted in the Netherlands (Polyportis & Pahos, 2024), Arab countries (i.e., Iraq, Kuwait, Egypt, Lebanon, and Jordan; Abdaljaleel et al., 2024), and Bangladesh (Mahmud et al., 2024). Studies also have reported student tendencies to anthropomorphize ChatGPT (Polyportis & Pahos, 2024) and to trust naively in its outputs (Abdaljaleel et al., 2024). Although many of these studies revealed similarities in factors driving student adoption patterns (i.e., ChatGPT is useful, easy to use, and enjoyable), there are still context-specific factors, unique to the United States, that remain underexplored. Collectively, these studies demonstrated a growing global interest in the role of ChatGPT in higher education and reaffirmed the need to understand how individual and contextual factors are shaping ChatGPT adoption in U.S. higher education.

Data and Methods

Data for this study were collected at the University of California, Irvine (UCI) as part of the Measuring Undergraduate Success Trajectories Project, a large longitudinal measurement project aimed at improving understanding of undergraduate experience, trajectories, and outcomes while supporting campus efforts to improve institutional performance and enhance educational equity (Arum et al., 2021). The project focused on student educational experience at a selective large, research-oriented public university on the quarter system with half its students first generation and 85% Latino/a, Asian, Black, Pacific Islander, or Native American. Since the fall of 2019, the project has annually tracked new cohorts of freshmen and juniors with longitudinal surveys administered at the end of every academic quarter. Data from the winter of 2023 end-of-term assessment, administered in the first week of April, were pooled for four longitudinal study cohorts for this study (i.e., fall 2019 to fall 2022 cohorts). There was an overall response rate of 42.5% for the winter 2023 end-of-term assessment. This allowed us to consider student responses from freshmen through senior years enrolled in courses throughout the university.

Students completed questionnaire items about their knowledge and use of ChatGPT in and out of the classroom during the winter 2023 academic term. In total, 1,129 students completed the questionnaire, which asked questions about knowledge of ChatGPT (“Do you know what ChatGPT is?”), general use (“Have you used ChatGPT before?”), and instructor attitude (“What was the attitude of your instructor for [a specific course students enrolled in] regarding the use of ChatGPT?”). Of those 1,129 students, 191 had missing data for at least one variable of interest and were subsequently dropped from analysis, resulting in a final sample of 938 students.

In addition, for this study, we merged our survey data with administrative data from campus that encompassed details on student background, including gender, race, first-generation college going, and international student status. Campus administrative data also provided course-level characteristics, including whether a particular class was a lower- or upper-division course as well as the academic unit on campus offering the course. In addition, we used administrative data on all students enrolled at the university to generate classroom composition measures for every individual course taken by students in our sample—specifically the proportion of underrepresented minority students in the class, the proportion of international students in the class, and the proportion of female students in the class.
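The classroom composition measures described above amount to per-course proportions computed over full enrollment records. The following is a minimal sketch of that calculation, not the authors' code: the record layout, the course identifiers, and the function name `composition_by_course` are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical enrollment records (not study data):
# (course_id, is_urm, is_international, is_female)
enrollments = [
    ("COURSE_A", True, False, True),
    ("COURSE_A", False, False, False),
    ("COURSE_A", False, True, True),
    ("COURSE_A", True, False, True),
    ("COURSE_B", False, True, False),
    ("COURSE_B", False, False, True),
]

def composition_by_course(records):
    """Return per-course enrollment counts and demographic proportions."""
    counts = defaultdict(lambda: {"n": 0, "urm": 0, "intl": 0, "female": 0})
    for course_id, is_urm, is_intl, is_female in records:
        c = counts[course_id]
        c["n"] += 1
        c["urm"] += int(is_urm)
        c["intl"] += int(is_intl)
        c["female"] += int(is_female)
    return {
        course_id: {
            "n": c["n"],
            "prop_urm": c["urm"] / c["n"],
            "prop_intl": c["intl"] / c["n"],
            "prop_female": c["female"] / c["n"],
        }
        for course_id, c in counts.items()
    }

composition = composition_by_course(enrollments)
for course_id, stats in sorted(composition.items()):
    print(course_id, stats)
```

Because the proportions are computed from all enrolled students rather than from survey respondents only, the measures do not depend on which students in a class answered the survey.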

For our student-level analysis (RQ1), we used binary logistic regressions to examine the association between individual characteristics and (a) individual awareness and (b) individual academic use of ChatGPT employing the student-level data of 938 students. Individual characteristics included gender, underrepresented minority student status, international student status, first-generation college-going student status, student standing (i.e., lower or upper classman), cumulative grade point average, and field of study. Field of study was based on student major assigned to the broad categories of physical sciences (e.g., physical sciences, engineering, and information and computer science), health sciences (e.g., pharmacy, biological sciences, public health, and nursing), humanities, social sciences (e.g., business, education, and social sciences), the arts, or undeclared. We defined awareness of ChatGPT as an affirmative response to the question, “Do you know what ChatGPT is?” Regarding ChatGPT use, we focused on academic use, which was defined as an affirmative response of either “Yes, for academic use” or “Yes, for academic and personal use” to the question, “Have you used ChatGPT before?”

For our course-level analysis (RQ2), we constructed a measure—course-level instructor encouragement for ChatGPT use—based on student responses to the end-of-term survey conducted at completion of the winter 2023 term. In the survey, students were asked to indicate the extent to which their instructors encouraged them to use ChatGPT in each of their enrolled courses. The response options were (a) “Very much discouraged,” (b) “Somewhat discouraged,” (c) “Neither discouraged nor encouraged,” (d) “Somewhat encouraged,” and (e) “Very much encouraged.” Our study generated data on 57% of all standard course sections offered in the winter of 2023, excluding those without a classroom component, such as independent studies or study abroad. For course sections mentioned multiple times by students in the sample, we aggregated student responses at the course level (i.e., courses in our analysis on average had 10.4 students reporting on instructor encouragement or discouragement of ChatGPT use). As a result, our analysis encompassed 1,047 distinct course sections reported by 915 students, with an average encouragement score of 2.7 (out of 5). For the course-level analysis, we conducted simple bivariate regressions to examine the relationships between average student-reported instructor encouragement in specific courses and the proportion of underrepresented minority students, as well as the proportion of international students, in the class. Scatterplots were created to visualize these relationships, with fitted regression lines included to highlight general trends. Both the scatterplot markers and the regression models were weighted by classroom size to ensure that larger classes are more influential than smaller classes in our course-level statistical analyses.
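The two steps in the course-level procedure, averaging student reports within each course and then fitting a bivariate regression weighted by class size, can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: the ratings, URM proportions, and class sizes are invented, and `course_means` and `weighted_ols` are hypothetical helper names.

```python
from collections import defaultdict

# Hypothetical student reports (not study data):
# (course_id, rating on the 1-5 encouragement scale)
reports = [
    ("COURSE_A", 3), ("COURSE_A", 3),
    ("COURSE_B", 2), ("COURSE_B", 3),
    ("COURSE_C", 2), ("COURSE_C", 2), ("COURSE_C", 2),
]

# Hypothetical course attributes: (proportion URM, class size)
course_info = {
    "COURSE_A": (0.2, 40),
    "COURSE_B": (0.4, 120),
    "COURSE_C": (0.6, 200),
}

def course_means(reports):
    """Aggregate student ratings to a mean encouragement score per course."""
    ratings = defaultdict(list)
    for course_id, rating in reports:
        ratings[course_id].append(rating)
    return {c: sum(r) / len(r) for c, r in ratings.items()}

def weighted_ols(x, y, w):
    """Weighted least squares for y = intercept + slope * x."""
    sw = sum(w)
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    slope = sxy / sxx
    intercept = ybar - slope * xbar
    return intercept, slope

means = course_means(reports)
courses = sorted(means)
x = [course_info[c][0] for c in courses]  # proportion URM
y = [means[c] for c in courses]           # mean encouragement
w = [course_info[c][1] for c in courses]  # class size as weight
intercept, slope = weighted_ols(x, y, w)
print(f"intercept={intercept:.2f}, slope={slope:.2f}")
```

Weighting by class size means a large lecture pulls the fitted line more strongly than a small seminar does, which matches the stated goal of letting larger classes carry more influence.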

Our third set of analyses makes use of student open-ended descriptive accounts of academic use of ChatGPT in specific courses. Students who reported using ChatGPT in their coursework were asked to provide open-ended descriptions of how they engaged with the tool. In total, 214 such responses were collected. These descriptions were systematically coded into five broad, inductively generated categories. Through an iterative qualitative coding process, patterns and themes emerged directly from the data rather than being predetermined. This approach aligns with grounded-theory methodologies. The categories applied to student descriptions of ChatGPT course use were (a) study and conceptual assistance (used to help understand, clarify, and delve deeper into complex concepts, definitions, and subjects taught in class), (b) writing and content generation (used to assist in creating, revising, or organizing content, whether it be essays, papers, summaries, or even brainstorming ideas), (c) research and information retrieval (used as a tool for researching specific topics, looking up information, summarizing articles, or finding sources and references), (d) coding and technical assistance (used for help related to programming, coding, software libraries, and technical details), and (e) ChatGPT assignment specific (used when students were explicitly instructed by professors to use the technology for a particular assignment or activity). We had two researchers code the data with an intercoder reliability of 77%, with coder disagreements adjudicated by a third coder. Fourteen student course ChatGPT use responses provided inadequate data to code and were excluded from the analysis. We identified how reported use varied for students in STEM (n = 114) courses compared with non-STEM courses (n = 86), with Z tests used to identify statistical differences. STEM courses were defined as those being offered by the schools of biological science, engineering, informatics and computer science, nursing, pharmaceutical science, and physical science; non-STEM courses were those offered by the schools of arts, business, education, humanities, social ecology, and social science.
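The Z tests mentioned above compare the share of STEM and non-STEM course reports falling into a given use category. The sketch below shows one common version, the pooled two-proportion z test with a two-sided p value. The text does not say which variant was used, so the pooled form is an assumption, as is the function name `two_proportion_z`. The group sizes (114 and 86) come from the text, but the category counts are hypothetical placeholders.

```python
import math

def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-proportion z test; returns (z, two-sided p value)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # Two-sided p value from the standard normal: 2 * (1 - Phi(|z|))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Group sizes from the text; category counts are hypothetical placeholders.
stem_n, non_stem_n = 114, 86
stem_count, non_stem_count = 46, 20

z, p = two_proportion_z(stem_count, stem_n, non_stem_count, non_stem_n)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")
```

With roughly 100 reports per group, only fairly large differences in proportions reach conventional significance thresholds, which is worth keeping in mind when reading the category comparisons.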

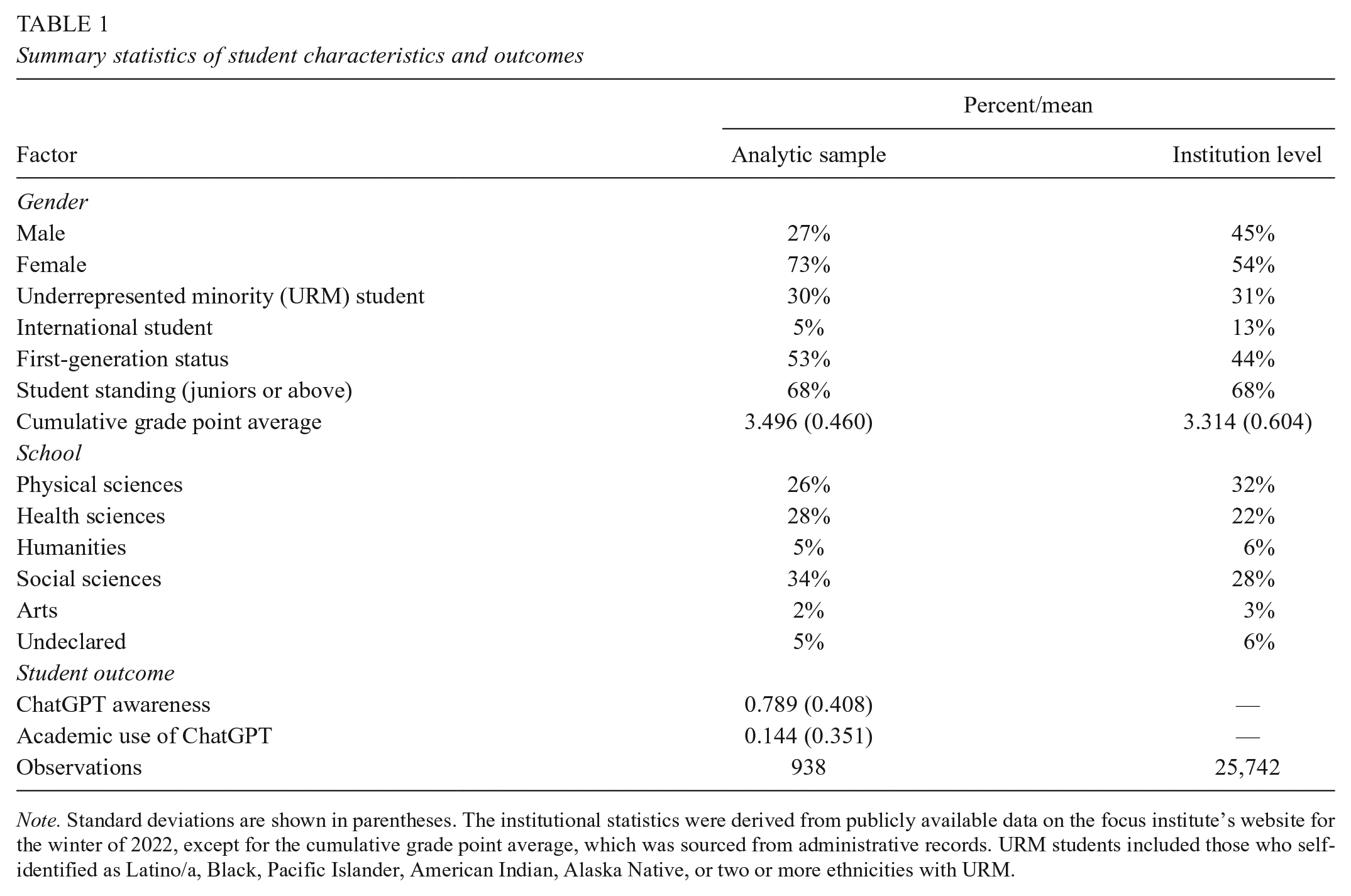

Table 1 displays descriptive statistics for student characteristics for our individual-level analytic sample as well as for the population of students at the institution. Although students participating in our project were largely representative of the population, international students were significantly underrepresented in our sample, and female students were overrepresented. International students were likely less inclined to participate in our study because of language barriers or privacy concerns; gender disparities in sampling are a prevalent feature of higher-education research. Two thirds of our sample were upperclassmen because we pooled data from four longitudinal cohorts that were initially composed of both freshmen and juniors.

Summary statistics of student characteristics and outcomes

Note. Standard deviations are shown in parentheses. The institutional statistics were derived from publicly available data on the focus institute’s website for the winter of 2022, except for the cumulative grade point average, which was sourced from administrative records. URM students included those who self-identified as Latino/a, Black, Pacific Islander, American Indian, Alaska Native, or two or more ethnicities with URM.

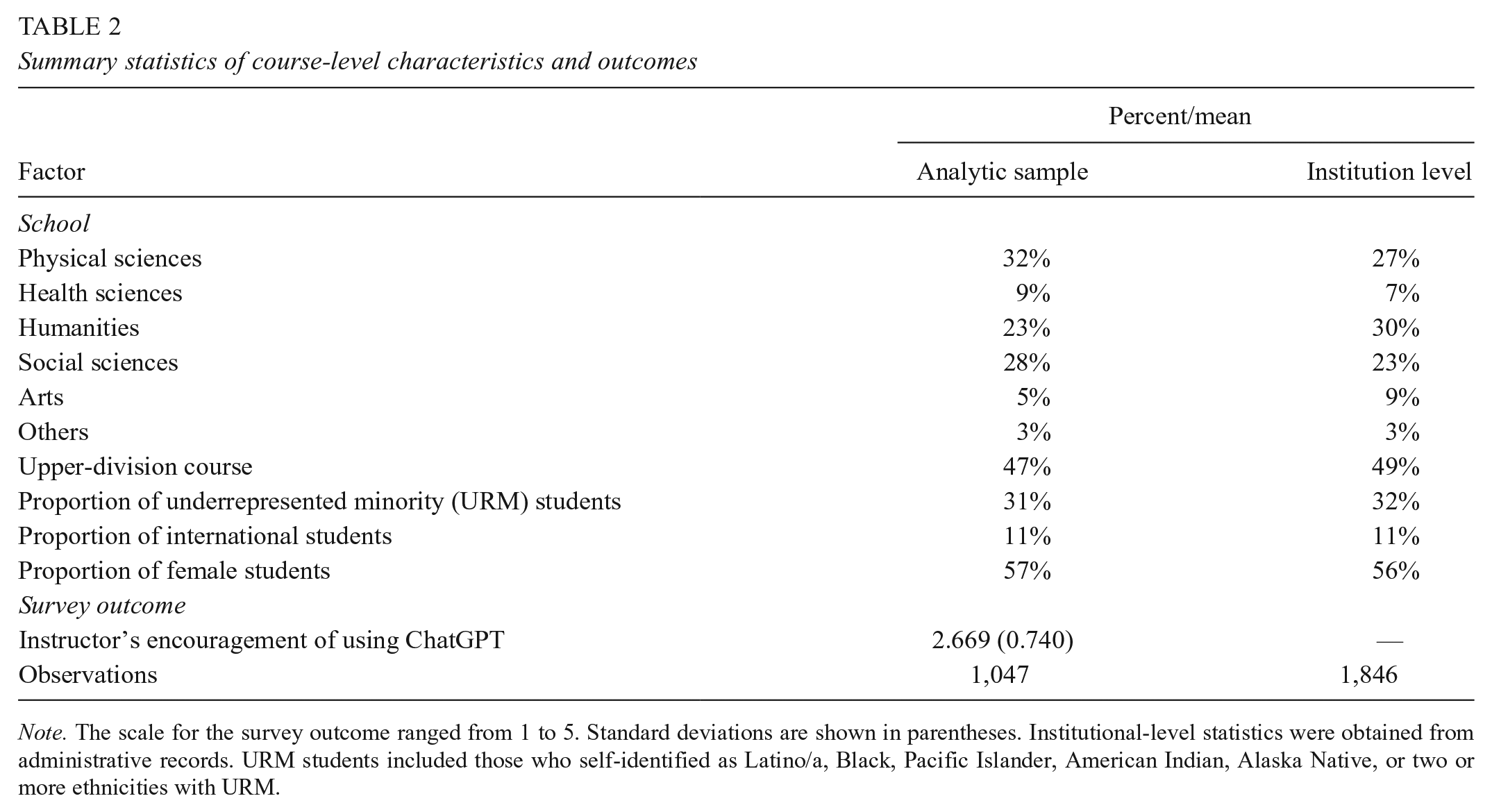

Table 2 presents summary statistics for course-level characteristics and measures in our analytic sample and for the population of courses at the institution level. Our analytic sample included 57% of the courses offered during the winter of 2023 academic term on campus and represented a relatively similar proportion of courses across different schools, with the exception that it included significantly more courses from social sciences and fewer courses from humanities, potentially because these units vary in the proportion of large classes offered (e.g., social science has high enrollments with large class sizes prevalent, whereas humanities has low enrollments and relatively smaller class sizes).

Summary statistics of course-level characteristics and outcomes

Note. The scale for the survey outcome ranged from 1 to 5. Standard deviations are shown in parentheses. Institutional-level statistics were obtained from administrative records. URM students included those who self-identified as Latino/a, Black, Pacific Islander, American Indian, Alaska Native, or two or more ethnicities with URM.

Analysis and Discussion

Variation in ChatGPT Knowledge and Academic Use

We focused our initial analysis on how individual awareness and use of ChatGPT varied by individual characteristics and field of study. Seventy-nine percent of students reported that they knew what ChatGPT was when asked in the first week of April 2023, and 14% reported having used it for academic purposes in the winter (January–March) 2023 term. Although the dissemination and early adoption of this new technological tool were exceptional, it is worth noting that even with widespread social and mass media reporting on ChatGPT in early 2023, 21% of students still had no knowledge of it, and only a relatively small proportion of students reported using it for academic purposes. Students could conceivably be underreporting academic use of the tool, but we believe that any bias in that direction is relatively limited given that students were part of a longitudinal project where they had developed trust in data confidentiality, student reports of using ChatGPT solely for personal but not academic use also were very low (18%), and students were willing to report that they used it in classes where the instructor actively discouraged it. Rather than underreporting, we interpret the lack of universal knowledge of the new tool and its modest level of academic use as a feature of our observation window occurring early in the technology-adoption cycle.

In Table 3 we present the results of the first binary logistic regression, which examined how awareness of ChatGPT varied by student characteristics and field of study. There were no statistically significant differences in the likelihood of ChatGPT awareness based on gender or student standing. However, a statistically significant difference was found by URM status. Specifically, the odds of URM students knowing about ChatGPT were 0.355 times the odds for non-URM students, holding all other factors constant. In practical terms, this meant that a male lowerclassman in the physical sciences with an average grade point average who was not a first-generation college-going student, a member of an underrepresented minority, or an international student (defined for this study as a reference student) would have a 93.7% chance of knowing about ChatGPT. In contrast, a URM student with identical characteristics would have an 83.8% chance of knowing about ChatGPT.

Logistic regression of student awareness and student academic use of ChatGPT

Note. n = 938 students for both models; reference categories were male, non-URM, non-international student, non-first-generation student, lowerclassman standing, and physical sciences.

*p < .05; **p < .01; ***p < .001.

We also observed statistically significant differences based on international student status, with international students having 0.451 times the odds of noninternational students of knowing about ChatGPT, holding all else constant. For a reference student, the likelihood of knowing about ChatGPT would be 93.7%, whereas for an international student with the same characteristics, it would be 86.9%. Additionally, the odds ratio for first-generation college-going students was 0.553, meaning that first-generation college-going students had 0.553 times the odds of non-first-generation students of being aware of ChatGPT. Controlling for other factors, first-generation college-going students had an 89.1% chance of knowing about ChatGPT vs 93.7% for non-first-generation students.

We also found significant differences in ChatGPT awareness by field of study. Health science students had 0.458 times the odds of physical science students of knowing about ChatGPT, holding all other factors constant. This corresponds to an 87.1% likelihood of health science students being aware of ChatGPT vs 93.7% for physical science students, controlling for other factors. The relationship between field of study and awareness may reflect varying levels of interest in technology across disciplines. Lastly, for each one-unit increase in cumulative grade point average, the odds of knowing about ChatGPT increased by a factor of 2.251, all else equal.
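The predicted probabilities quoted for the awareness model follow from applying each odds ratio to the reference student's odds and converting back to a probability. The sketch below shows that arithmetic using the rounded figures from the text (reference probability 93.7% and the four reported odds ratios). The function name `apply_odds_ratio` is ours. Because the inputs are rounded, the results match the reported percentages only to within a few tenths of a percentage point.

```python
def apply_odds_ratio(p_ref, odds_ratio):
    """Probability implied by multiplying the reference odds by an odds ratio."""
    odds_ref = p_ref / (1 - p_ref)   # probability -> odds
    odds = odds_ref * odds_ratio     # shift odds by the odds ratio
    return odds / (1 + odds)         # odds -> probability

P_REF = 0.937  # reference student's probability of awareness (from the text)

# Odds ratios as reported in the text for the awareness model.
reported_odds_ratios = {
    "URM": 0.355,
    "International": 0.451,
    "First-generation": 0.553,
    "Health sciences": 0.458,
}

for group, odds_ratio in reported_odds_ratios.items():
    p = apply_odds_ratio(P_REF, odds_ratio)
    print(f"{group}: {100 * p:.1f}%")
```

The same conversion applies to the academic-use model, where the reference probability is much lower. There, an odds ratio near 0.44 roughly halves the probability, whereas near a 94% baseline a smaller odds ratio moves the probability by only about ten percentage points.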

These results provide an important context regarding how awareness of ChatGPT varied by student characteristics, highlighting groups that were more likely aware of ChatGPT early in the technological adoption cycle. From an equity perspective, students who are unaware of ChatGPT may face a disadvantage compared with their peers who are familiar with the tool. Regardless of opinions on whether ChatGPT should be used by students, disparities in awareness contribute to inequitable access to educational resources and opportunities for skill development.

Additionally, we ran a second binary logistic regression (see Table 3, model 2) to examine whether academic use of ChatGPT varied by individual characteristics and field of study. There were no significant differences in academic use by gender, international student status, first-generation college-going status, student standing, or cumulative grade point average. However, there were statistically significant differences based on URM status and field of study. The odds of URM students using ChatGPT for academic purposes were 0.443 times the odds of non-URM students, holding all else constant. In practical terms, a reference student would have an 18.1% chance of using ChatGPT for academic purposes, whereas a URM student would have a 9.0% chance.

Students in health sciences had 0.462 times the odds of using ChatGPT for academic purposes vs physical science students, with a 9.2% likelihood of using ChatGPT for academic purposes in health sciences vs 18.1% in the physical sciences. Similarly, social science students had 0.599 times the odds of using ChatGPT for academic purposes vs physical science students, with an 11.8% likelihood of using ChatGPT for academic purposes. These differences could be influenced by course content differences across disciplines and students’ general attitudes toward ChatGPT, which may vary by major.

In supplementary analyses (available online), we restricted the sample to those who were aware of ChatGPT to assess whether statistically significant differences in academic use remained. Even with this restriction, we still found significant differences in academic use of ChatGPT by URM status and field of study in health sciences but not in social sciences. In other supplementary analyses (also available online), we assessed whether differences in ChatGPT knowledge and academic use by student characteristics (i.e., gender, URM status, first-generation status, and international student status) were conditional on STEM status (i.e., whether there were student characteristic × STEM interactions). Overall, students’ knowledge and use of ChatGPT varied by student characteristics and field of study, with differential awareness of the technology appearing to be a primary driver of variations in academic use.

Social Influence: Variation in Instructor Encouragement

The association between student-level awareness and use of ChatGPT may be influenced by students’ exposure to varying levels of ChatGPT encouragement in classrooms, that is, what would be defined by the technological adoption model of Venkatesh et al. (2003) as a social influence or organizational-level facilitating condition for adoption and use. We shifted our focus from individual-level student reports of their knowledge and use of ChatGPT to student-reported course-level instructor support for ChatGPT adoption and use, examining the extent of instructor encouragement across different course types. Our findings highlighted substantial course-level variation in instructors’ encouragement of ChatGPT use.

Initially, we explored the variability in instructor encouragement across different schools and course levels within the focal university. In Figures 1 and 2, the y-axis represents the level of instructor encouragement, with higher scores indicating greater encouragement. Figure 1 reveals that average instructor encouragement ranged from 2.37 in courses offered in humanities to 2.88 in courses offered in arts. It is worth noting that even in schools with the most favorable attitudes toward ChatGPT, the average ratings leaned toward discouragement (i.e., lower than the neutral attitude of 3). In supplemental analyses (available online), we also looked at reports of instructor encouragement for the 20 largest departments. The departments with the highest levels of instructor encouragement were mathematics, computer science, and physics, with scores ranging from 2.95 to 3.09. These scores were close to the midpoint of the scale, which was labeled “Neither discouraged nor encouraged.” Conversely, the departments with the lowest levels of encouragement were political science, general humanities, and criminology, with scores lower than 2.23, aligning more closely with “Somewhat discouraged.”

Instructor encouragement of ChatGPT use by school.

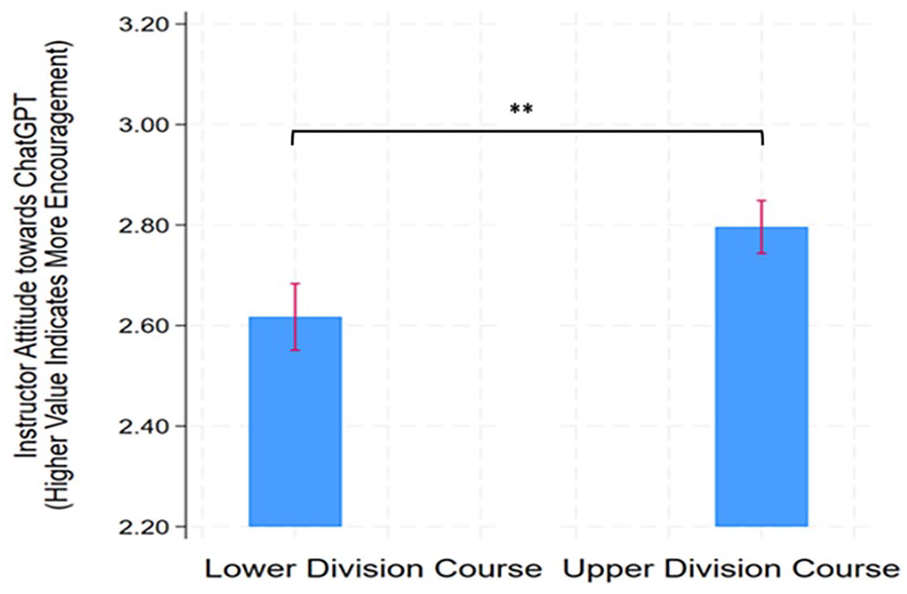

Instructor encouragement of ChatGPT use by course level.

Figure 2 further examines instructor encouragement in lower-division courses versus upper-division courses. There was a notable difference in instructors’ attitudes, with upper-division courses displaying greater encouragement than their lower-division counterparts. Upper-level courses tended to have smaller class sizes with more advanced students further along in their progress in a particular field of study.

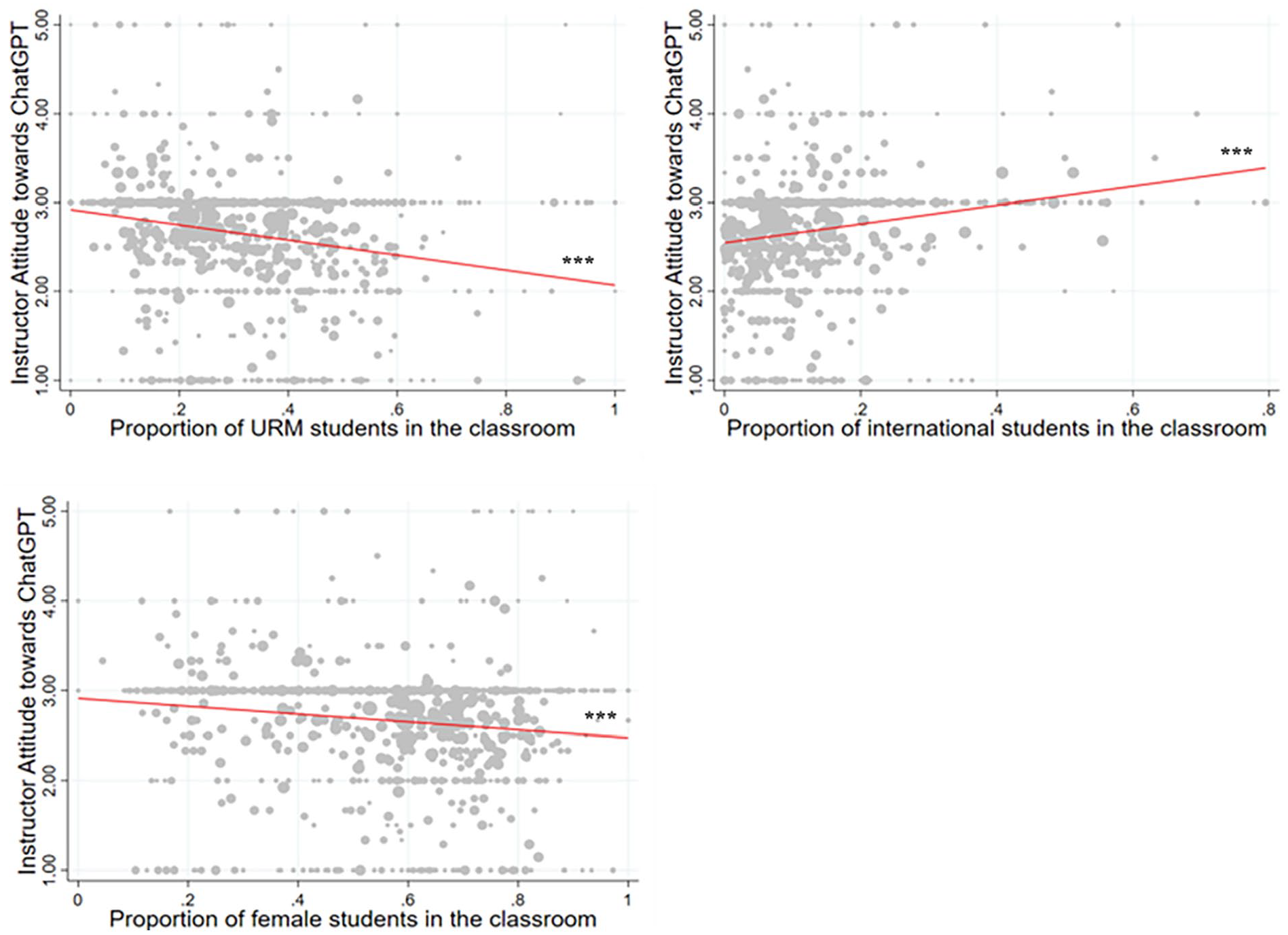

Figure 3 delves into instructor encouragement in courses with varied student compositions. The x-axis spans from 0 to 1, showcasing the proportion of specific student demographics, whereas the y-axis indicates the level of instructor encouragement. The first graph within Figure 3 uncovers a trend wherein instructors’ encouragement declined as the URM student proportion rose. Conversely, the second graph illustrates that a higher international student presence corresponded with increased instructor encouragement of ChatGPT. When instructor encouragement was shown in relationship to female student proportion in courses in the third graph within Figure 3, a higher proportion of female students in a classroom was associated with a slight decrease in instructor encouragement of ChatGPT. These findings underscored that instructors’ attitudes toward the emergent ChatGPT technology differed significantly based on course attributes and student demographics. This implies that students from diverse backgrounds encounter varying levels of exposure and encouragement to use this technology.

Scatterplot of instructor encouragement of ChatGPT use and classroom composition with fitted line.

Variation in Types of STEM and Non-STEM Course Use

Student descriptions of ChatGPT academic use in courses were coded into five broad inductively generated categories, four of which varied in prevalence in STEM compared with non-STEM courses (Table 4). STEM and non-STEM course reports were similar in the prevalence of ChatGPT use for research and information retrieval (22%). For example, a student in an informatics and computer science course who reported an instructor who somewhat encouraged the technology noted using it for “new documentation and information I had to understand,” and a student in a humanities course who noted an instructor who very much encouraged the technology’s use reported using it “for sources and summaries of articles/ sources.”

Student descriptive reports of ChatGPT use in science, technology, engineering, and mathematics (STEM) and non-STEM courses

Note. n = 86 student descriptive reports in non-STEM courses; n = 114 student descriptive reports in STEM courses; six additional non-STEM courses and eight STEM courses were excluded from analysis due to incomplete descriptions; intercoder reliability was 76.5%.

p ≤ .01 (STEM/non-STEM differences).
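The table note reports intercoder reliability as a single percentage but does not say how it was computed. Assuming it refers to simple percent agreement (the share of reports to which two coders assigned the same category), the calculation can be sketched as follows. The function name and the example labels are hypothetical and are not drawn from the study data.

```python
def percent_agreement(coder_a, coder_b):
    """Percentage of items to which two coders assigned the same category.

    Assumption: reliability is simple percent agreement; the article
    does not state which reliability statistic was used.
    """
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must rate the same number of items.")
    if not coder_a:
        raise ValueError("At least one coded item is required.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * matches / len(coder_a)


# Hypothetical labels for four reports (illustrative only, not study data).
coder_1 = ["study", "coding", "writing", "research"]
coder_2 = ["study", "coding", "assigned", "research"]
agreement = percent_agreement(coder_1, coder_2)  # coders agree on 3 of 4 items
```

Percent agreement does not correct for agreement expected by chance; chance-corrected statistics such as Cohen's kappa are a common alternative.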

Not surprisingly, STEM course reports were more likely to entail coding and technical assistance than non-STEM course reports (18% and 4%, respectively). For example, a student in a physical science course who reported an instructor who very much encouraged the technology’s use noted, “I asked it to help me write some code.” In an informatics and computer science course, a student who reported an instructor who neither discouraged nor encouraged the use of the technology commented, “I use the tool to ask several coding questions such as what libraries I could use and implement, how to maximize efficiency of code, and how certain blocks of code function.”

Non-STEM course reports were more likely than STEM course reports to involve writing and content generation (25% and 15%, respectively, statistically different at the p < .10 level). For example, a student in a social science course who reported an instructor who neither discouraged nor encouraged the technology’s use noted ChatGPT was used “to strengthen sentences that I felt sounded off in my paper.” A student in a humanities course who reported an instructor who neither discouraged nor encouraged its use commented, “I used it to generate sample arguments for a paper as well as to provide me with philosophy writing tips.”

More interestingly, STEM and non-STEM course reports varied significantly in the use of ChatGPT for study and conceptual assistance. Forty-one percent of STEM course reports entailed ChatGPT use for study and conceptual assistance compared with only 24% of non-STEM course reports. A student in a course in biological science who reported a professor who neither discouraged nor encouraged ChatGPT use reflected critically that the tool was used “to summarize complex concepts that weren’t well described in lecture, it wasn’t always accurate with its answers, though.” A student in a humanities course who reported an instructor who somewhat discouraged ChatGPT use defensively noted that the tool was used “for studying terms but nothing else.” A student taking a course in pharmaceutical science who reported an instructor who neither discouraged nor encouraged ChatGPT use noted, “I used it to study and find simpler explanations for terms.”

Our analysis also identified that non-STEM course reports were more likely than STEM course reports to entail instructors proactively assigning students to use the technology. Twenty-six percent of non-STEM course reports compared with 4% of STEM course reports involved ChatGPT assignment-specific use. For example, a student in a social science course who reported a professor who very much encouraged ChatGPT use noted, “We had to ask it to write a literature review on the same research question that we were writing our own literature reviews on. The purpose was to demonstrate that ChatGPT is often inaccurate.” A student in an arts course who reported an instructor who somewhat discouraged use noted, “One of the question assignments was on the topic of AI [artificial intelligence]. The professor said to try using ChatGPT for the question to see what comes up.”
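The STEM/non-STEM contrasts reported above are comparisons of two proportions. The article does not state which test produced its significance levels; a pooled two-proportion z-test is one common choice and is used here purely as an illustration. The counts below are reconstructed from the rounded percentages and the reported numbers of course reports, so they are an assumption and the result may not match the published table.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Return (z, two-sided p) for H0: both groups share one proportion."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled proportion under the null hypothesis of no group difference.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Reported numbers of descriptive course reports.
stem_n, non_stem_n = 114, 86

# Assumption: counts rebuilt from rounded percentages (41% and 24%)
# for the study and conceptual assistance category.
stem_study = round(0.41 * stem_n)
non_stem_study = round(0.24 * non_stem_n)

z, p = two_proportion_z_test(stem_study, stem_n, non_stem_study, non_stem_n)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")
```

With cell counts this small, an exact test (for example, Fisher's exact test) would be a reasonable alternative to the normal approximation.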

Conclusion

This study provided useful descriptive information on student awareness, academic use, and exposure to faculty encouragement/discouragement of ChatGPT use early in the technological adoption process. Our study, however, has several limitations worth highlighting. First, our research relied on student reports of instructors’ behaviors. Although student perceptions of instructor attitudes are relevant to identifying instructional environments that potentially shape student behaviors, our understanding of the phenomenon would be enhanced by collecting instructor reports of attitudes and pedagogic intentions related to encouraging or discouraging student use of ChatGPT technology. In addition, particularly for courses with smaller class sizes, we had a limited number of students per class observing instructor behaviors. Finally, our reliance on student self-reported academic use of ChatGPT technology potentially led to an underreporting of actual uses, particularly in courses where instructors were actively discouraging adoption.

Given these limitations, future research is needed to independently observe instructors’ behaviors and to directly collect survey and interview data from them. Data also were collected early in the technological adoption cycle. It would be worthwhile to conduct additional research in the future to observe the extent to which the individual student-level and instructor course-level variation observed here persists or changes over time as the technological adoption cycle progresses. Research is also needed to identify whether the observed variation in ChatGPT knowledge, use, and instructor encouragement is related to undergraduate student course performance, achievement, and development of workplace competencies.

Our study found that early in the technological adoption cycle, URM students were less likely either to be aware of the new technology or to use it for academic purposes. In addition, we found that in courses where URM students were concentrated, instructors were more discouraging of ChatGPT use. In reports of non-STEM courses, however, instructors were more likely than in STEM courses to explicitly give students ChatGPT-specific assignments. Although these ChatGPT-specific assignments were still relatively rare (only 26% of non-STEM course reports), they suggest a potential mechanism for instructors to promote ChatGPT awareness, use, and technological skill development.

We are careful, however, not to advance normative claims as to whether encouragement or discouragement of ChatGPT use in general is desirable because we recognize that instructional practices should be aligned to promote learning outcomes that are developed for specific courses and fields. For different courses and fields of study, the desirability of student academic use of ChatGPT may vary in terms of both its applicability and its nature of use. While we are reluctant to encourage or discourage ChatGPT use in particular courses or fields of study, institutional commitment to educational equity would be enhanced by ensuring that all students are made aware of ChatGPT and have had opportunities to use the tool in coursework in a manner aligned with cognitive growth and the development of 21st century civic and workplace competencies.

Footnotes

Declaration of Conflicting Interests

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Authors

RICHARD ARUM is professor of sociology and education at the University of California, Irvine. His research focuses on examining undergraduate student experiences, trajectories, and outcomes to improve institutional performance and advance knowledge of educational processes.

MARIA CALDERON LEON is a doctoral student in developmental psychology at the University of California, Davis. Her research takes a lifespan approach from childhood to emerging adulthood to elucidate how individuals shape and are shaped by their social environments and relationships, including student experiences in higher education.

XUNFEI LI is a postdoctoral scholar in education at the University of California, Irvine. Her research explores how curriculum policies and classroom experiences influence students’ academic and social outcomes in postsecondary education.

JOMAR LOPES is a doctoral student in education at the University of California, Irvine. His research explores the implementation and institutionalization processes of equity-oriented K–12 policy reform.