Abstract

Formative assessment is a key element of effective education. Recently, a growing body of evidence has highlighted the potential of learning analytics to support formative assessment. However, this evidence is sparse, and concerns have been raised about the connection between learning analytics results and formative assessment models. When learning analytics results do not align well with formative assessment models, teachers may be hesitant to trust, interpret, and act upon the insights provided by learning analytics for formative assessment. In this study, we address this concern by adopting a well-established formative assessment model and conducting a systematic review to explore how learning analytics can support formative assessment. A total of 93 relevant articles, published between 2011 and 2023 and retrieved from Web of Science (WOS), Scopus, IEEE Xplore, and ACM, were analyzed using a coding scheme grounded in a formative assessment model. Drawing on this model, we report how learning analytics can support formative assessment by connecting the three key actors (teachers, students as peers, and students as self-assessors) with the three critical stages of the process: (a) where the learner is going, (b) where the learner is now, and (c) how the learner is getting there. This study extends our understanding of the alignment between learning analytics results and theoretical concepts and further contributes to the advancement of the application of learning analytics for formative assessment.


Knowing where students stand in their learning is key to effective teaching and learning. Formative assessment offers this insight to both teachers and students, helping them track and support progress (Bennett, 2011; Evans, 2013; Harris et al., 2022). Although multiple definitions of formative assessment exist and there is no unified consensus (Bennett, 2011; Dunn & Mulvenon, 2019), it is commonly understood as a process in which teachers and learners provide and seek feedback on learners’ performance in order to modify ongoing teaching and learning and improve learners’ achievement (McManus, 2008). This definition implies that effective formative assessment encompasses feedback on the learner’s current performance (where the learner is now), identification of problems and issues within that performance (how the learner is doing), and suggestions and constructive feedback that help the learner improve and achieve the desired goals (where the learner should be) (Black & Wiliam, 2009).

Evidence supports the positive role of formative assessment in improving teaching and learning processes, learner motivation, engagement, and learning outcomes (e.g., Evans, 2013; Faber et al., 2017; Rushton, 2005). Evidence also shows that formative assessment enhances learners’ higher-order skills, such as self-regulation and metacognitive skills (e.g., Meusen-Beekman et al., 2016). For example, Clark (2012) argued that formative assessment fosters the development of self-regulation strategies because it requires learners to analyze a task or their own or peers’ work; plan their behavior; monitor and control their behavior, emotions, and motivation; and reflect on the feedback they receive or provide feedback on their peers’ work.

Although various studies report benefits of formative assessment, Dunn and Mulvenon (2019) critically review the literature and emphasize the need for more rigorous research, arguing that the current empirical evidence does not robustly support its effectiveness in improving educational outcomes. In addition, effective implementation of formative assessment is challenging (Falchikov & Boud, 1989). This is partly due to systemic misalignments between formative assessment and broader instructional practices, which require coherent integration with classroom tasks, learning objectives, and teaching strategies (Banihashem et al., 2025a).

Since feedback plays a central role in formative assessment, it must be timely and of sufficient quality to be effective (Evans, 2013; Shute, 2008). However, this is difficult for teachers, whose heavy workloads and large classes limit their ability to design and deliver individualized, high-quality feedback at scale (Banihashem et al., 2025a; Er et al., 2021; Pardo et al., 2019). In the case of peer feedback, learners tend to have limited feedback literacy (Carless & Winstone, 2023), which can hinder their grasp of the principles of high-quality feedback (Patchan et al., 2016; Topping, 1998). In addition, learners may distrust their peers’ feedback competence, meaning that they do not consider peer feedback good enough to take seriously or act upon (Kerman et al., 2022). There is also a domain-dependency issue: students often lack sufficient domain-specific knowledge, which can impede their ability to identify problems in peers’ work and provide constructive feedback (Bennett, 2011).

In addition, for formative assessment to be adequately valid, feedback should be well-designed, timely, ongoing, formatively useful, and easy to understand (Divjak et al., 2023; Falchikov & Goldfinch, 2000; Gikandi et al., 2011), yet in practice feedback often lacks these qualities. Another issue stems from overly simplistic framing and unexamined assumptions in much formative assessment research. Hickey (2015) argues that many studies merely show that students do better with feedback than without it, a comparison he dismisses as exposing “educational malpractice.” Of course students learn more when they receive feedback; what these studies fail to consider is whether that time might have been better spent on even more productive learning activities.

Effective implementation of technology-integrated assessment requires a careful balance between what digital tools can offer and the deeper learning objectives teachers aim to support. Bearman et al. (2023) highlight this issue, noting that although tools such as automated quizzes provide efficiency and instant feedback, they often lack the nuance needed to assess complex thinking, potentially undermining educational intentions.

Last but not least, Torrance (2012) argues that much of what is labeled as formative assessment is no longer truly formative—let alone transformative. Instead, it has become “conformative” and even “deformative,” shaped by institutional pressures rather than student-centered learning goals. Torrance’s analysis highlights the complexities and potential pitfalls of formative assessment, emphasizing the need for careful consideration in its application to truly enhance student learning.

One of the promising disciplines that can contribute to effective formative assessment is learning analytics (Banihashem et al., 2025a; Joksimović et al., 2018; Moon et al., 2024; Raković et al., 2023). Learning analytics is defined as the measurement, collection, analysis, and reporting of data about learners and their contexts for purposes of understanding and optimizing learning and the environments in which it occurs (Lang et al., 2022). In recent years, a large number of studies have investigated the potential of learning analytics to support formative assessment (e.g., Banihashem et al., 2024; Darvishi et al., 2022; Moon et al., 2024; Stanja et al., 2023). Despite the substantial body of research in this domain and the promising outcomes it may yield, a concern has arisen: there appears to be a disconnect between the practical implementation of learning analytics and the theoretical underpinnings advanced in these studies (Gašević et al., 2015; Kitto et al., 2023; Noroozi et al., 2019). This concern calls for a comprehensive literature review that synthesizes learning analytics findings on formative assessment through the lens of a well-established formative assessment model. By anchoring these insights in an established model, we can better understand how learning analytics can be strategically leveraged to facilitate formative assessment.

We identified two literature reviews that explore the intersection of learning analytics and formative assessment. In the first, Banihashem et al. (2022) synthesized how learning analytics supports feedback practices in higher education. While acknowledging the significance of this work, we believe it does not fully address the intersection of learning analytics and formative assessment, for two main reasons. First, the authors adopted a learning analytics model to report on the data and algorithms used to support feedback, not formative assessment. Therefore, although this approach clarifies the technical aspects of applying learning analytics to feedback practices, it lacks a theoretical and operational conceptualization of formative assessment, which may undermine practitioners’ trust in learning analytics results for supporting feedback. Second, the review provides no insights into the agents of formative assessment (teacher assessment, peer assessment, and self-assessment), nor does it cover the key processes of formative assessment (where the learner is now, where the learner is going, and how to get there) as outlined by Black and Wiliam (2009), resulting in an incomplete picture of how learning analytics supports different assessment agents.

Another relevant review, by Misiejuk and Wasson (2023), focuses on the role of learning analytics in peer assessment. Like Banihashem et al. (2022), this review does not provide a comprehensive picture of the role of learning analytics in formative assessment, as it addresses only one actor (peer assessment) and overlooks the other two (teacher assessment and self-assessment). Additionally, while it reports the objectives and challenges of using learning analytics for peer assessment, it does not map these objectives to the key processes of formative assessment (where the learner is now, where the learner is going, and how to get there). This omission leaves unclear how learning analytics supports formative assessment at different stages.

In addition to the two reviews discussed above on learning analytics, feedback, and peer assessment, several other studies need to be acknowledged (Altinay et al., 2024; Madland et al., 2024; Morris et al., 2021; Ortiz-Lopez et al., 2024; Zeng et al., 2018). It is therefore important to highlight how our review differs from these studies and adds value to the existing literature.

The review by Altinay et al. (2024) offers a bibliometric analysis of research on online assessment in higher education. While it successfully outlines publication trends, prolific authors, and key sources, its scope remains limited to mapping the research landscape. It does not engage with the pedagogical implications or examine the impact of online assessments, particularly formative assessments. Zeng et al. (2018) center their review on the conceptual foundations of learning-oriented assessment (LOA), examining the state of the art, history, nature, and evolution of LOA. In addition, their search period spans 1971–2016, meaning the review predates many recent developments in learning analytics. The difference therefore lies not only in focus but also in timeliness. Morris et al. (2021), on the other hand, concentrate on feedback and assessment in digital environments, with a particular emphasis on their influence on learning outcomes. Although their work touches upon feedback and learning, it does not specifically interrogate the role of technology-mediated assessment within the model of formative assessment, nor does it investigate how learning analytics can systematically support different agents (teachers, peers, and learners) across formative assessment processes.

Regarding the review by Madland et al. (2024), while the study focuses on technology-integrated assessment, its scope is limited to teacher-led assessment, overlooking the roles of peer and self-assessment in technology-enhanced contexts. Furthermore, the concept of “technology” is not clearly defined or operationalized. The keywords used in the review, such as MOOC, remote, digital, online, and distance education, suggest a narrow and somewhat ambiguous interpretation, leaving it unclear which specific technologies are considered or how they are integrated into assessment practices. Like the other reviews, the work by Ortiz-Lopez et al. (2024), while focused on e-assessment, differs from ours in two key ways. First, their search string was limited to terms such as “e-assessment,” “computer-based assessment,” and “mobile-based assessment.” This narrow scope misses much of the relevant literature on learning analytics and formative assessment, excluding studies central to our review. Second, the purpose and focus of their review diverge from ours: they do not provide a clear categorization or analysis of teacher-led, peer, and self e-assessment practices, which are central to our systematic examination of how learning analytics supports different actors in formative assessment.

Compared with previous reviews, our review makes several distinct contributions to the literature. First, it addresses a critical gap in understanding the intersection of learning analytics and formative assessment. Despite the growing body of research in both areas, there remains a lack of cohesive insight into how learning analytics can meaningfully support formative assessment. This gap contributes to uncertainty among teachers, learners, and policymakers, who may hesitate to adopt learning analytics tools without a clear, theoretically and conceptually grounded understanding of their pedagogical value and practical implications. Second, by providing a comprehensive overview, it helps identify the specific areas where learning analytics can be most effectively applied to support formative assessment; without such an overview, opportunities to optimize teaching and learning experiences may be missed.

To address this critical gap, the present study adopts the formative assessment model proposed by Black and Wiliam (2009) as its underlying conceptual model. This model provides a coherent, structured perspective that facilitates the synthesis of existing research and enables a deeper understanding of how learning analytics can support formative assessment practices. There are two key reasons for selecting this model. First, it is one of the most widely cited and influential models in the formative assessment literature, and its sustained use in both research and practice attests to its credibility as a guiding structure for examining formative assessment. Second, and more importantly, the model’s structure aligns closely with key concepts of learning analytics. The three primary agents of formative assessment identified by Black and Wiliam (teachers, peers, and learners themselves) map directly onto the dual emphasis in learning analytics on teacher-centered and student-centered applications. Furthermore, the model’s three key assessment stages (where the learner is now, where the learner is going, and how to get there) correspond well with the four levels of learning analytics: descriptive, diagnostic, predictive, and prescriptive. This alignment enabled us to conduct a systematic review that not only reflects established formative assessment practices but also integrates them meaningfully with developments in learning analytics.

Learning Analytics for Formative Assessment

Learning analytics has traditionally been considered an assessment tool for monitoring and improving learning (Knight et al., 2014). The main purpose of learning analytics is to understand and optimize learning and the environment in which it occurs (Lang et al., 2022). This closely aligns with the purpose of formative assessment, which is to improve learning processes (Black & Wiliam, 1998; Rushton, 2005).

The literature highlights that learning analytics can support learning at four levels (Eriksson et al., 2020; Maoz, 2013). The first level, descriptive analytics, involves generating dashboards or reports that summarize learners’ current and past performance (e.g., tracking engagement and interaction patterns) to answer “What has happened?” (Eriksson et al., 2020). The second level, diagnostic analytics, focuses on identifying patterns, anomalies, and exceptions (e.g., detecting disengaged students or collaboration issues using social network analysis) to answer “Why did it happen?” (Banihashem et al., 2022).

The third level, predictive analytics, uses historical data to forecast learner behavior and performance, such as providing early warnings for at-risk students (Arnold & Pistilli, 2012). Finally, the fourth level, prescriptive analytics, offers actionable recommendations to address “What should be done?” (Banihashem et al., 2022; Maoz, 2013), such as suggesting task-specific strategies through learning analytics dashboards.

These levels of analytics appear well aligned with formative assessment practices, as they provide insights into the three main stages of formative assessment (Black & Wiliam, 2009): where the learner is going, which predictive analytics could serve; where the learner is now, for which descriptive and diagnostic analytics tend to be appropriate; and how to get there, which prescriptive analytics could serve (Maoz, 2013).
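The stage-to-level alignment described above can be written down as a small lookup table, which also makes it easy to check that every analytics level is assigned to at least one stage. This is our own illustrative encoding of the mapping in the text; the names `STAGE_TO_LEVELS`, `levels_for_stage`, and `stages_for_level` are hypothetical and do not come from any tool in the reviewed studies.

```python
from __future__ import annotations

# Hypothetical encoding of the alignment between formative assessment stages
# (Black & Wiliam, 2009) and learning analytics levels (after Maoz, 2013).
STAGE_TO_LEVELS: dict[str, tuple[str, ...]] = {
    "where the learner is going": ("predictive",),
    "where the learner is now": ("descriptive", "diagnostic"),
    "how to get there": ("prescriptive",),
}


def levels_for_stage(stage: str) -> tuple[str, ...]:
    """Return the analytics levels suggested for a formative assessment stage."""
    try:
        return STAGE_TO_LEVELS[stage]
    except KeyError:
        raise ValueError(f"unknown stage: {stage!r}") from None


def stages_for_level(level: str) -> list[str]:
    """Inverse lookup: the stages that a given analytics level can inform."""
    return [stage for stage, levels in STAGE_TO_LEVELS.items() if level in levels]


print(levels_for_stage("where the learner is now"))  # ('descriptive', 'diagnostic')
print(stages_for_level("prescriptive"))  # ['how to get there']
```

Keeping the mapping as data rather than branching logic means a revised alignment (for instance, letting diagnostic analytics also inform "how to get there") changes only the table.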

In addition, in both learning analytics and formative assessment, the learning environment plays a pivotal role, as both occur within a broader educational context and are closely intertwined with other educational activities (Bennett, 2011; Gašević et al., 2022). For example, effective formative assessment must align with learning goals and students’ motivation for learning (Black & Wiliam, 2009; Leenknecht et al., 2021). In this regard, learning analytics can capture and report data about other learning activities, such as learners’ motivation, engagement, and interaction activities (e.g., discussions, reading materials) or performance activities (e.g., feedback, tasks, assignments) (B. Chen et al., 2018; Gašević et al., 2015). By capturing different types of data from a learning context and providing fine-grained information about different learning activities, learning analytics could provide a holistic picture of the learning process for formative assessment. This creates an opportunity to make formative assessment more coherent with other learning activities (Raković et al., 2023).

Timely and personalized feedback is at the heart of effective formative assessment (Boston, 2002; Rushton, 2005). This is also where learning analytics can significantly contribute to formative assessment by offering scaled, real-time, and personalized feedback (Banihashem et al., 2025a). Several studies have shown that learning analytics can enhance feedback practices by making feedback manageable for busy and overworked teachers and by monitoring learning activities, providing real-time and personalized reflections and feedback to both teachers and learners (e.g., Knight et al., 2020; Pardo et al., 2019; D. Wang & Han, 2021; Zheng et al., 2022).

Learning analytics also has the potential to address key validity concerns associated with formative assessment. As Gikandi et al. (2011) argue, assessments are not simply valid or invalid; validity is a matter of degree. The validity of formative assessment is compromised if it fails to measure what it claims to measure (Shaw & Crisp, 2011). In other words, valid formative assessment must provide sufficient evidence that the inferences drawn about student learning are accurate and aligned with intended learning outcomes. Learning analytics can supply such evidence, enabling evidence-based interventions and thereby improving the validity of formative assessment (Banihashem et al., 2025a).

According to Gikandi et al. (2011), four characteristics underpin the validity of formative assessment: (1) authenticity of assessment activities, (2) effective formative feedback, (3) inclusion of multidimensional perspectives, and (4) robust learner support. Similarly, Divjak et al. (2023) conceptualize validity as the alignment between assessment practices and intended learning outcomes (LOs). Their model uses learning analytics to support constructive alignment by comparing ideal LO priorities, actual assessment weights, and student achievement data. Techniques such as clustering and trace data analysis further enhance the understanding of student learning and assessment performance. This approach offers actionable insights for improving learning design, assessment strategies, and support for self-regulated learning.
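To make the first of these comparisons concrete, the sketch below contrasts an intended weighting of learning outcomes with the share of assessment weight each outcome actually receives. This is our own illustration, not the procedure of Divjak et al. (2023): the function names, the example weights, and the use of total variation distance as a single misalignment score are assumptions, and student achievement data are left out.

```python
from __future__ import annotations


def normalize(weights: dict[str, float]) -> dict[str, float]:
    """Rescale non-negative weights so that they sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {lo: w / total for lo, w in weights.items()}


def alignment_gaps(intended: dict[str, float], assessed: dict[str, float]) -> dict[str, float]:
    """Per learning outcome (LO): assessed share minus intended share.

    Positive values mean an LO is over-assessed relative to its intended
    priority; negative values mean it is under-assessed."""
    p, q = normalize(intended), normalize(assessed)
    outcomes = sorted(set(p) | set(q))
    return {lo: q.get(lo, 0.0) - p.get(lo, 0.0) for lo in outcomes}


def misalignment(intended: dict[str, float], assessed: dict[str, float]) -> float:
    """Total variation distance between the two weightings (0 = perfectly aligned)."""
    return 0.5 * sum(abs(g) for g in alignment_gaps(intended, assessed).values())


# Hypothetical course: intended LO priorities vs. points allocated in assessments.
intended = {"LO1": 40, "LO2": 40, "LO3": 20}
assessed = {"LO1": 60, "LO2": 30, "LO3": 10}
print(alignment_gaps(intended, assessed))
print(round(misalignment(intended, assessed), 3))  # 0.2
```

In this toy case LO1 is over-assessed by 20 percentage points while LO2 and LO3 are each under-assessed by 10, which is the kind of signal a teacher could use to rebalance an assessment plan.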

In addition, Bennett (2011) emphasizes the importance of a validity argument, which asks whether the evidence gathered from assessment genuinely reflects what students know and can do. Learning analytics can contribute significantly to this argument by capturing, analyzing, and reporting rich, multimodal evidence of student learning progress (Gašević et al., 2022; Lahza et al., 2023; Raković et al., 2023). In doing so, learning analytics strengthens the foundation for valid formative assessment practices.

In conclusion, while a growing body of research indicates that learning analytics enhances formative assessment (e.g., Darvishi et al., 2022), the literature remains sparse, and a comprehensive understanding of the areas in which learning analytics can effectively address the challenges of formative assessment is lacking. The absence of a cohesive overview hampers the identification of the research gaps that must be addressed to strengthen the integration of learning analytics into formative assessment (Gašević et al., 2022). A systematic literature review is therefore needed to bridge this gap.

Theoretical Model and Research Questions

This review study is based on Black and Wiliam’s (2009) model of formative assessment. In the view of Black and Wiliam (2009), formative assessment refers to “all those activities undertaken by teachers and by their students in assessing themselves that provide information to be used as feedback to modify teaching and learning activities. Such assessment becomes formative assessment when the evidence is actually used to adapt the teaching to meet student needs” (p. 140). Based on this definition, which emphasizes the formative function of assessment and the pivotal role of feedback in shaping the teaching and learning process, Black and Wiliam regard formative assessment as synonymous with “assessment for learning.”

According to Black and Wiliam, formative assessment comprises five key strategies: (1) articulating and disseminating learning goals and success criteria, (2) orchestrating productive classroom discussions and activities to uncover student understanding, (3) providing feedback that facilitates student progression, (4) encouraging peers to act as instructional resources, and (5) fostering student autonomy in the learning process. These strategies are embedded within three key processes: (a) where learners are in their learning, (b) where they are going, and (c) what needs to be done to get them there. In the first phase (where the learner is going), learning intentions and criteria for success need to be clearly defined, so that all three agents know what to focus on to achieve the intended goals and what counts as success. In the second phase (where the learner is now), evidence of the learner’s current understanding and performance is collected. In the third phase (how to get there), feedback with relevant points for improvement is provided to move learners from their current state to the desired one.

While it is mainly the role of teachers to design these three phases, learners are expected to play active roles in phases two and three by being instructional resources for each other and taking a role in their own and others’ learning. In addition, this model not only acknowledges teachers as central figures in formative assessment but also recognizes the essential roles of learners themselves and their peers as key contributors alongside teachers. This means that teachers, peers, and learners are considered the three main agents of formative assessment who play a role in the three key processes of formative assessment (see Appendix I for Black and Wiliam’s model of formative assessment). Drawing upon this model, in this study, we conceptualized formative assessment as “assessment for learning,” and we formulated the following research questions to guide this study:

RQ1. How does learning analytics support teachers in formative assessment practices within higher education contexts?

RQ2. How does learning analytics support students in peer formative assessment practices within higher education contexts?

RQ3. How does learning analytics support students in self-formative assessment practices within higher education contexts?

Method

We followed the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines for systematic reviews (Moher et al., 2009) to define the search strategy and inclusion criteria and to screen the identified studies. Next, we refined the remaining studies using a critical appraisal strategy (Theelen et al., 2019), which ensured that only studies with a sound methodology were included. Finally, we developed a coding scheme based on Black and Wiliam’s formative assessment model to analyze the final set of studies.

Search Strategy

Four main databases, namely Web of Science (WOS), Scopus, IEEE Xplore, and ACM, were systematically searched using a comparable search strategy. These databases were chosen because they are primary sources for research in learning analytics, education, psychology, and the social sciences (Berkowitz et al., 2017). Searches were conducted using the following terms, matched to the databases’ subject headings and applied as keywords in titles and abstracts. The search terms addressed different conceptualizations of (a) formative assessment and (b) learning analytics. The formative assessment terms included “formative assessment,” “formative evaluation,” “formative feedback,” “teacher assessment,” “teacher feedback,” “peer assessment,” “peer feedback,” “self-assessment,” and “self-feedback.” The learning analytics term was “learning analytics.” The terms were combined with Boolean operators into the following queries: “learning analytics” AND “formative assessment,” OR “learning analytics” AND “formative evaluation,” OR “learning analytics” AND “formative feedback,” OR “learning analytics” AND “teacher assessment,” OR “learning analytics” AND “teacher feedback,” OR “learning analytics” AND “peer assessment,” OR “learning analytics” AND “peer feedback,” OR “learning analytics” AND “self-assessment,” OR “learning analytics” AND “self-feedback.”
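Because each query pairs “learning analytics” with one formative assessment term, the whole set is logically equivalent to a single grouped query, “learning analytics” AND (term1 OR term2 OR ...), by the distributive law. The sketch below builds that grouped form from the term lists above. It is an illustration under stated assumptions: the helper names are hypothetical, and database-specific field codes (for example, title/abstract restrictions) are omitted. Writing the parentheses explicitly is a deliberate choice, since databases do not necessarily apply the same precedence to AND and OR in an unparenthesized query.

```python
from __future__ import annotations

# Term lists taken from the search strategy; field codes are deliberately omitted.
LA_TERM = "learning analytics"
FA_TERMS = [
    "formative assessment", "formative evaluation", "formative feedback",
    "teacher assessment", "teacher feedback", "peer assessment",
    "peer feedback", "self-assessment", "self-feedback",
]


def quote(term: str) -> str:
    """Wrap a term in double quotes so it is searched as an exact phrase."""
    return f'"{term}"'


def build_query(anchor: str, alternatives: list[str]) -> str:
    """Hypothetical helper producing: "anchor" AND ("alt1" OR "alt2" OR ...).

    Explicit parentheses avoid relying on each database's operator precedence."""
    group = " OR ".join(quote(t) for t in alternatives)
    return f"{quote(anchor)} AND ({group})"


query = build_query(LA_TERM, FA_TERMS)
print(query)
```

The grouped form retrieves the same records as the nine pairwise queries combined with OR, but it is shorter and less error-prone to paste into each database’s advanced search.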

Selection Criteria

In this study, two sets of selection criteria were applied in two phases. In the first phase, the retrieved articles were filtered on the following criteria: (a) publication in English, (b) publication between 2011 and 2023, (c) peer-reviewed status, and (d) availability of an online abstract. We therefore excluded studies published in languages other than English; studies published before 2011, since learning analytics formally emerged as a field in 2011; and studies that had not been peer reviewed, given their potential for bias and the lack of quality control.
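The first-phase criteria amount to a conjunction of four checks on each record. The following sketch expresses them as a filter; the `Record` fields and the toy records are hypothetical and serve only to show how criteria (a) to (d) combine, not to reproduce the actual screening data.

```python
from dataclasses import dataclass


@dataclass
class Record:
    """Minimal bibliographic record; field names are illustrative."""
    title: str
    language: str
    year: int
    peer_reviewed: bool
    has_abstract: bool


def passes_phase_one(r: Record, start: int = 2011, end: int = 2023) -> bool:
    """Criteria (a)-(d): English, published 2011-2023, peer reviewed, abstract online."""
    return (
        r.language.lower() == "english"
        and start <= r.year <= end
        and r.peer_reviewed
        and r.has_abstract
    )


# Toy records, not taken from the review.
records = [
    Record("A", "English", 2015, True, True),
    Record("B", "English", 2009, True, True),   # published before 2011
    Record("C", "Spanish", 2018, True, True),   # not in English
    Record("D", "English", 2020, False, True),  # not peer reviewed
]
kept = [r.title for r in records if passes_phase_one(r)]
print(kept)  # ['A']
```

The second-phase criteria (empirical research, higher education context) would be a separate filter applied afterwards, mirroring the two-phase procedure.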

In the second phase, articles were restricted based on the following criteria: (a) articles had to present empirical research, and (b) they had to pertain to higher education contexts. Therefore, in the second round, we excluded peer-reviewed studies that were not empirical, such as conceptual, theoretical, or review papers. The rationale behind this decision was to prioritize the inclusion of studies offering direct and credible evidence regarding the impacts of learning analytics on formative assessment. Additionally, we excluded studies conducted in non–higher education contexts, such as those in K–12 education settings.

There were two main reasons for restricting the review to higher education contexts. First, from a theoretical standpoint, higher education students differ from K–12 students in their learning autonomy, experiences and expectations, prior and domain knowledge, attitudes, and levels of self-regulation (Banihashem et al., 2022). These factors significantly influence the approaches to learning and assessment used in K–12 and higher education settings. Second, from a learning analytics perspective, applications in higher education differ from those in primary education. In higher education, learning analytics predominantly aims to support both teachers and learners, whereas in primary education it appears to focus primarily on aiding teachers (Kovanović et al., 2021). This discrepancy arises partly from differing levels of learner independence and self-regulation in the two settings: learners in higher education typically demonstrate greater independence and self-regulation than their primary education counterparts, who rely more heavily on teacher guidance and support (Jossberger et al., 2010).

Moreover, the objectives and methods of learning analytics can vary between higher education and primary education, owing to differences in learners’ age and educational level. In higher education, analytics may encompass predictive modeling, clustering analysis, and social network analysis, in line with the emphasis on fostering higher-order thinking skills (Banihashem et al., 2022). In primary education, by contrast, analytics may center on descriptive, classification, and clustering analyses (Ebner & Schön, 2013). These distinctions reflect the diverse needs, learning environments, and developmental stages of learners in higher education and primary education. Given these differences, separate reviews are needed for higher education and K–12 education.

Screening

We identified a total of 2727 papers that satisfied our initial inclusion criteria, distributed across the databases as follows: Web of Science (n = 142), Scopus (n = 2333), IEEE Xplore (n = 31), and ACM (n = 221). EndNote X9 identified 920 duplicates, leaving 1807 unique papers. A first screening showed that most of these papers (n = 1332) were not relevant to our research questions, for several reasons. First, some studies were excluded because they focused mainly on learning analytics but not on formative assessment (e.g., Cukurova et al., 2022) and therefore did not report on the contribution of learning analytics to formative assessment. Second, and conversely, we excluded studies that focused on formative assessment but not on learning analytics (e.g., Spector et al., 2016). Third, certain studies were excluded because they were not conducted within a formal education context (e.g., Yilmaz et al., 2022). After off-topic papers were removed, 475 papers remained for the second round of screening.

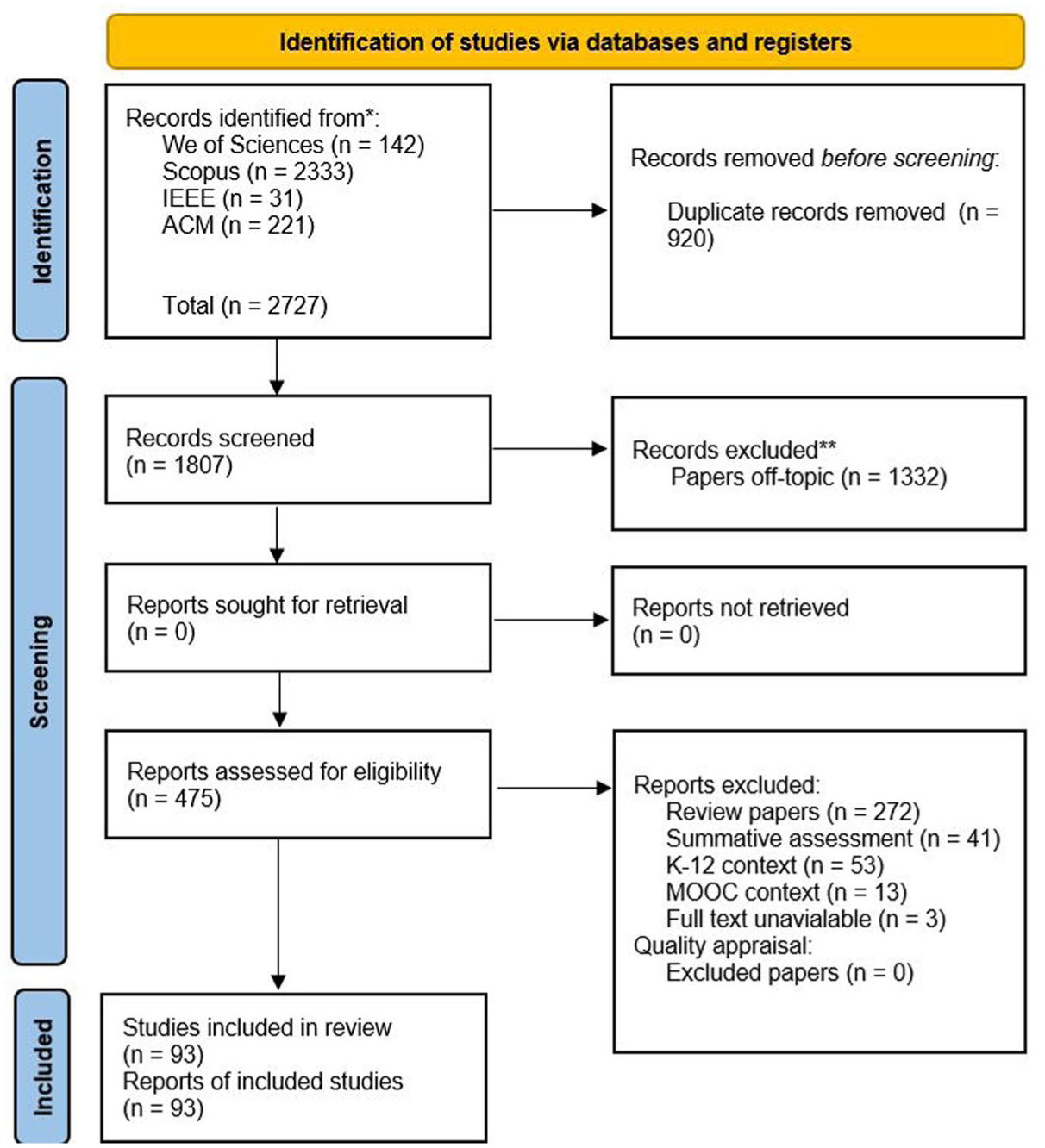

In the second round of screening, we found that 272 of the remaining studies were not empirical (e.g., Barana et al., 2019). A further 41 studies focused primarily on learning analytics for summative rather than formative assessment, and 66 studies were conducted outside higher education contexts: 53 in K–12 education (e.g., Hu et al., 2017) and 13 in MOOCs. We were also unable to obtain the full text of three studies (e.g., Sierra et al., 2018). After these exclusions, 93 studies remained for quality appraisal, as depicted in Figure 1.

Flowchart of the selection process based on PRISMA (Page et al., 2021).
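The counts in the two screening rounds can be checked by simply re-adding them. The short Python sketch below uses only the numbers reported in this section; the variable and function names are illustrative and are not part of any tool used in the review.

```python
# Record counts reported in the Screening section (PRISMA flow).
retrieved = {"Web of Science": 142, "Scopus": 2333, "IEEE Xplore": 31, "ACM": 221}
duplicates = 920
excluded_round1 = 1332  # off-topic papers removed in the first screening
excluded_round2 = {
    "not empirical": 272,
    "summative rather than formative": 41,
    "outside higher education (K-12 and MOOCs)": 53 + 13,
    "full text unavailable": 3,
}


def prisma_flow():
    """Return the number of records remaining after each stage."""
    total = sum(retrieved.values())
    unique = total - duplicates
    after_round1 = unique - excluded_round1
    included = after_round1 - sum(excluded_round2.values())
    return total, unique, after_round1, included


if __name__ == "__main__":
    print(prisma_flow())  # (2727, 1807, 475, 93)
```

Each stage matches the figures in the text, ending with the 93 studies carried into quality appraisal.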

Quality Appraisal

Including empirical studies with valid and reliable methodologies is crucial for a credible systematic review: rigorous methods minimize bias in study design, data collection, and analysis, so including such studies reduces the risk of biased review results (Haddaway et al., 2015).

To evaluate the methodological rigor of the remaining publications, we used the quality appraisal criteria outlined by Theelen et al. (2019). These criteria have two sections: a critical appraisal of qualitative studies, comprising nine items (e.g., whether the study methodology is clear, or whether participants were involved in data interpretation), and an appraisal of quantitative studies, comprising seven items (e.g., whether the source population or source area is well described, or whether outcome measures were reliable). Each criterion was scored from no mention (0) to extensive mention (3), as detailed in Appendix II. As recommended by Theelen et al., studies with an average score below 2 were to be excluded. The 93 remaining studies were divided between two authors acting as reviewers (reviewer 1: N = 47; reviewer 2: N = 46), who used the criteria as a checklist to assess methodological quality. The reviewers judged all studies to be methodologically sound, so all 93 studies were included in the final analysis.
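The exclusion rule can be expressed as a small function. This is a hypothetical sketch: the item wording is in Appendix II and is not reproduced, the names `appraise` and `THRESHOLD` are ours, and this section does not say how mixed-methods studies were scored (for example, whether both checklists were applied), so the sketch covers only single-design studies.

```python
QUALITATIVE_ITEMS = 9    # items in the qualitative checklist (Theelen et al., 2019)
QUANTITATIVE_ITEMS = 7   # items in the quantitative checklist
THRESHOLD = 2.0          # studies averaging below this are excluded


def appraise(scores, design):
    """Return (average score, included) for one study.

    scores: one score per checklist item, from 0 (no mention) to 3 (extensive mention).
    design: "qualitative" or "quantitative"; selects the expected number of items.
    """
    expected = {"qualitative": QUALITATIVE_ITEMS, "quantitative": QUANTITATIVE_ITEMS}[design]
    if len(scores) != expected:
        raise ValueError(f"expected {expected} item scores for a {design} study")
    if any(s not in (0, 1, 2, 3) for s in scores):
        raise ValueError("item scores must be whole numbers from 0 to 3")
    average = sum(scores) / len(scores)
    return average, average >= THRESHOLD
```

For example, a quantitative study scored `[3, 2, 2, 3, 2, 2, 1]` averages about 2.14 and would be retained, while a study averaging 1.0 would be excluded.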

Included Publications

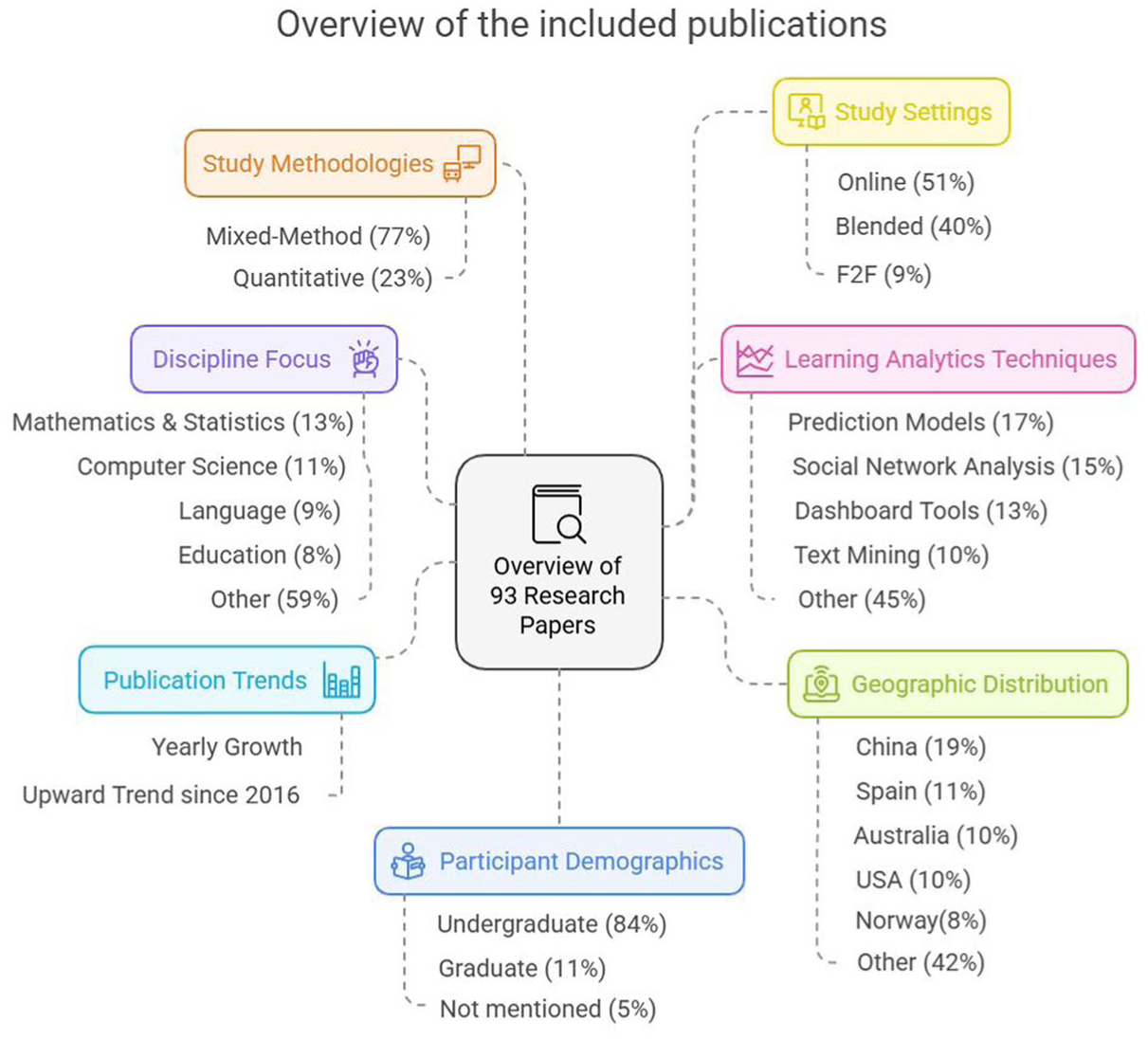

After screening, 93 papers remained for analysis (see Appendix III). Figure 2 summarizes key characteristics of the included papers.

An overview of the included publications.

- Study methodologies: The majority of studies (77%) employed a mixed-methods approach, while 22% used quantitative methods and approximately 1% relied on qualitative methods.

- Study settings: The majority were conducted in online (51%) and blended (40%) environments, with fewer in face-to-face settings (9%).

- Learning analytics techniques: Common methods included prediction models (17%), social network analysis (15%), dashboard tools (13%), and text mining (10%).

- Geographic distribution: Studies were primarily from China (19%), Spain (11%), Australia (10%), the USA (10%), and Norway (8%).

- Participant demographics: Most studies focused on undergraduate students (84%), followed by graduate students (11%); 5% did not specify.

- Publication trends: There has been a steady growth in publications, especially since 2016.

- Discipline focus: The most common disciplines were mathematics and statistics (13%), computer science (11%), language (9%), and education (8%); the remaining 59% spanned other fields.

The diversity of international journals, subjects, and geographical regions represented among the included papers reflects citational justice and broad coverage in the current review. This diversity, along with the varied methodologies of the included papers, aligns with RER's vision for comprehensive systematic reviews (Boveda et al., 2023).

Analysis Strategy

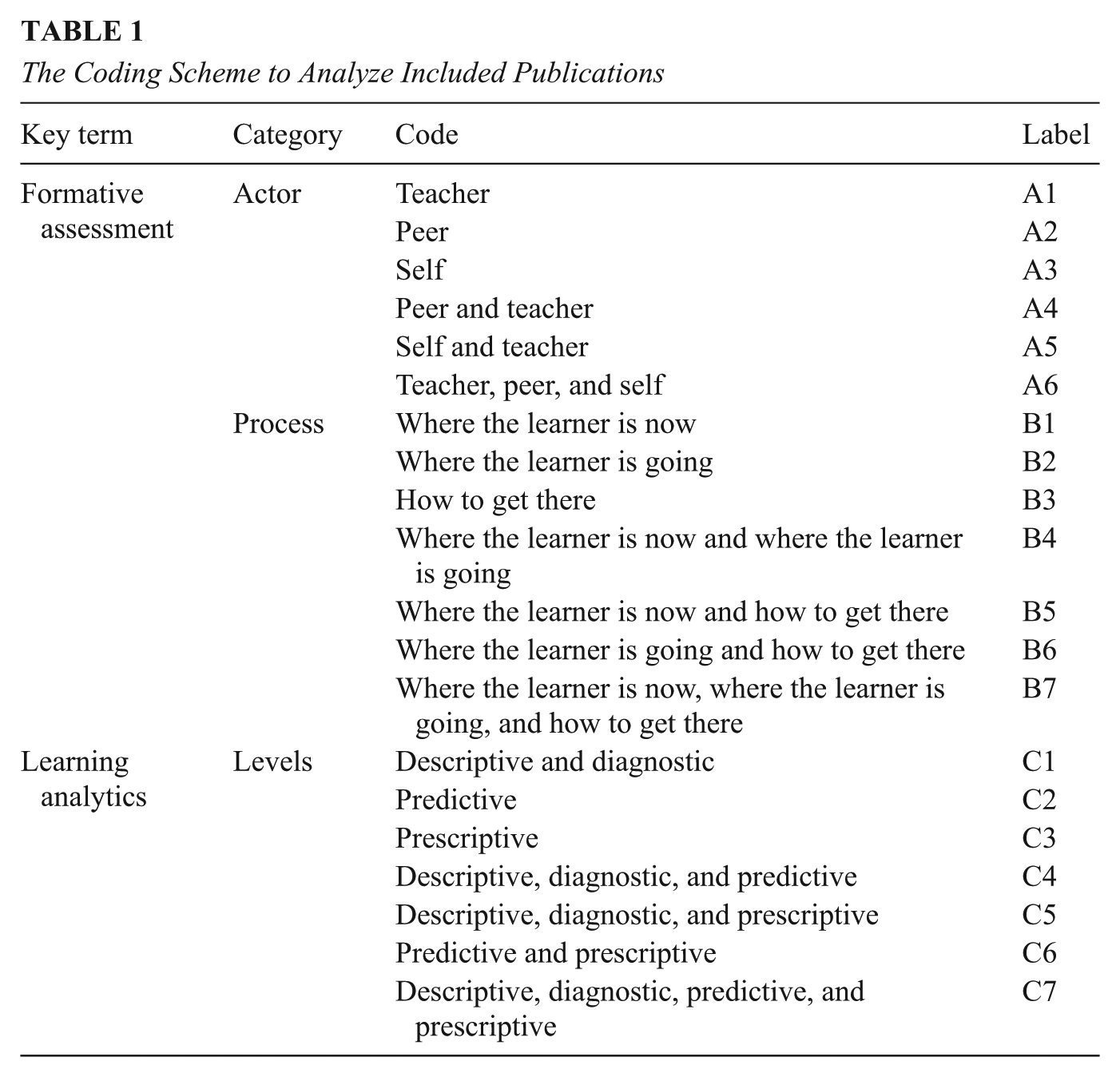

We developed a coding scheme based on the principles of the formative assessment model (Black & Wiliam, 2009) and the levels of learning analytics (Banihashem et al., 2022) to analyze the selected studies, as presented in Table 1. For formative assessment, the scheme comprised two categories: actors and processes. The actor category included three actors: teachers, peers, and learners. The process category included three stages derived from Black and Wiliam's model: the learner's intended destination ("where to go"), the learner's current position ("where is s/he now"), and the means to reach the desired outcome ("how to get there"). For learning analytics, we coded the core levels of learning analytics (descriptive and diagnostic, predictive, and prescriptive) as well as their combinations.

The Coding Scheme to Analyze Included Publications

We added combined categories because, while reviewing studies linking learning analytics to formative assessment, we observed that several studies used learning analytics in multiple ways and at multiple levels; that is, learning analytics is not always used in a single mode. While some studies focused on one level of analytics to support a particular aspect of formative assessment, others applied learning analytics at several levels to support formative assessment at different stages. We therefore included joint categories to distinguish these studies and to highlight the multidimensional contribution of learning analytics to formative assessment.
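One way to make the joint categories concrete is to record, for each study, the set of codes applied on each dimension and to derive the category label from that set. The sketch below is hypothetical: the names `Actor`, `Stage`, `Level`, `category_label`, and `CodedStudy` are ours and are not part of ATLAS.ti or of the published coding scheme.

```python
from dataclasses import dataclass
from enum import Enum


class Actor(Enum):
    TEACHER = "teacher"
    PEER = "peer"
    SELF = "student as self-assessor"


class Stage(Enum):
    GOING = "where the learner is going"
    NOW = "where the learner is now"
    HOW = "how to get there"


class Level(Enum):
    DESCRIPTIVE_DIAGNOSTIC = "descriptive and diagnostic"
    PREDICTIVE = "predictive"
    PRESCRIPTIVE = "prescriptive"


def category_label(codes):
    """Collapse the codes one study received on one dimension into a category.

    One code gives a single category; several codes give a joint category,
    listed in the order the codes are declared in their Enum.
    """
    if not codes:
        raise ValueError("each study needs at least one code per dimension")
    enum_type = type(next(iter(codes)))
    if not all(isinstance(code, enum_type) for code in codes):
        raise TypeError("codes must all come from the same dimension")
    members = list(enum_type)
    return " + ".join(code.value for code in sorted(codes, key=members.index))


@dataclass(frozen=True)
class CodedStudy:
    """Hypothetical record for one included study."""
    study_id: str
    actors: frozenset
    stages: frozenset
    levels: frozenset

    def categories(self):
        return {
            "actors": category_label(self.actors),
            "stages": category_label(self.stages),
            "levels": category_label(self.levels),
        }
```

A study coded for both "where the learner is now" and "where the learner is going" thus falls into the joint category "where the learner is going + where the learner is now" rather than being counted under either stage alone.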

To analyze the included studies with the coding scheme, we imported the documents into ATLAS.ti, a tool for managing and analyzing large amounts of qualitative data (Friese, 2019).

Coding Reliability

To improve the reliability of our analysis, we assessed inter-rater reliability, that is, the consistency of the codes assigned by raters who independently assess the same data. Inter-rater reliability is a key indicator of the dependability of judgments made by different individuals (Armstrong et al., 1997). A commonly used statistic for this purpose is the kappa statistic, also referred to as Cohen's kappa (Fleiss, 1971), which corrects observed agreement for the agreement expected by chance. Two authors independently coded 30 randomly selected papers, and the kappa statistic indicated a high level of agreement between them (κ = .80, p < .001).
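Cohen's kappa compares the observed agreement p_o with the agreement expected by chance p_e, computed from each rater's marginal code frequencies: κ = (p_o − p_e) / (1 − p_e). The minimal sketch below implements this formula for two raters using only the standard library; the codes and ratings in the usage example are toy data, not the review's coding. (scikit-learn's `cohen_kappa_score` computes the same quantity.)

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who assigned nominal codes to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same, non-empty list of items")
    n = len(rater_a)
    # Observed agreement: proportion of items given identical codes.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected chance agreement: for each code, the product of the two raters'
    # marginal proportions, summed over all codes either rater used.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if p_e == 1:
        raise ValueError("kappa is undefined when expected agreement is 1")
    return (p_o - p_e) / (1 - p_e)
```

With toy codings `["now", "now", "now", "going", "going", "how"]` and `["now", "now", "going", "going", "going", "how"]`, the raters agree on 5 of 6 items (p_o ≈ .83) but p_e = 13/36, so κ = 17/23 ≈ .74: lower than raw agreement because some agreement is expected by chance.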

Results

Before turning to our research questions, we provide an overview of the current state of learning analytics–supported formative assessment in higher education. To this end, we examined which key actors and key processes in Black and Wiliam's (2009) formative assessment model were supported by learning analytics.

Among the selected studies, teachers were the most frequent main actors in learning analytics–supported formative assessment, being the sole main actor in 33% of the studies. This suggests that learning analytics has most often been used to support teachers' formative assessment. Self-assessment followed closely as the sole focus in 25% of the studies, and in 23% of the studies teachers and students (as self-assessors) were both main actors. Only 8% of the studies involved both students as self-assessors and peers, and peers and teachers shared the main actor role in only 6% of the studies, suggesting that collaborative assessment involving peers was not prevalent. Peer assessment on its own received the least attention, featuring in only 5% of the studies. Counting every study in which an actor appeared, whether alone or alongside others, teachers featured in 59 studies, self-assessment in 51, and peer assessment in 18.
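Because a study can feature more than one actor, the per-actor totals (59 + 51 + 18 = 128) exceed the 93 included studies. Assuming every study was coded with at least one actor, the excess equals the number of "extra" actor codes; the sketch below (function name ours) computes it from the totals reported above.

```python
def extra_actor_codes(n_studies, actor_totals):
    """Return how far the per-actor totals exceed the number of studies.

    Assumes every study was coded with at least one actor. Summing the
    per-actor totals counts each study once per actor it involves, so the
    excess equals the sum over studies of (actors in the study - 1). If no
    study involves all three actors, this is the number of two-actor studies.
    """
    total = sum(actor_totals.values())
    if total < n_studies:
        raise ValueError("totals cannot be smaller than the number of studies")
    return total - n_studies


# Totals reported in this section and the 93 included studies.
reported = {"teacher": 59, "self": 51, "peer": 18}
excess = extra_actor_codes(93, reported)
share = 100 * excess / 93
```

Here `excess` is 35, about 37.6% of 93, which is within rounding of the 37% obtained by adding the joint categories (23% + 8% + 6%).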

Regarding how learning analytics contributes to the key stages of formative assessment, 51% of the studies used learning analytics only to provide insights into "where the learner is now," shedding light on students' past and current performance and progress. Only 6% of the studies addressed "where the learner is going" alone, exploring how learning analytics could predict students' future learning trajectories, and only 2% addressed "how to get there" alone. In 35% of the studies, learning analytics supported both "where the learner is now" and "where the learner is going," while 4% used it to support "where the learner is now" and "how to get there." Only 2% of the studies used learning analytics to address all three key stages of formative assessment.

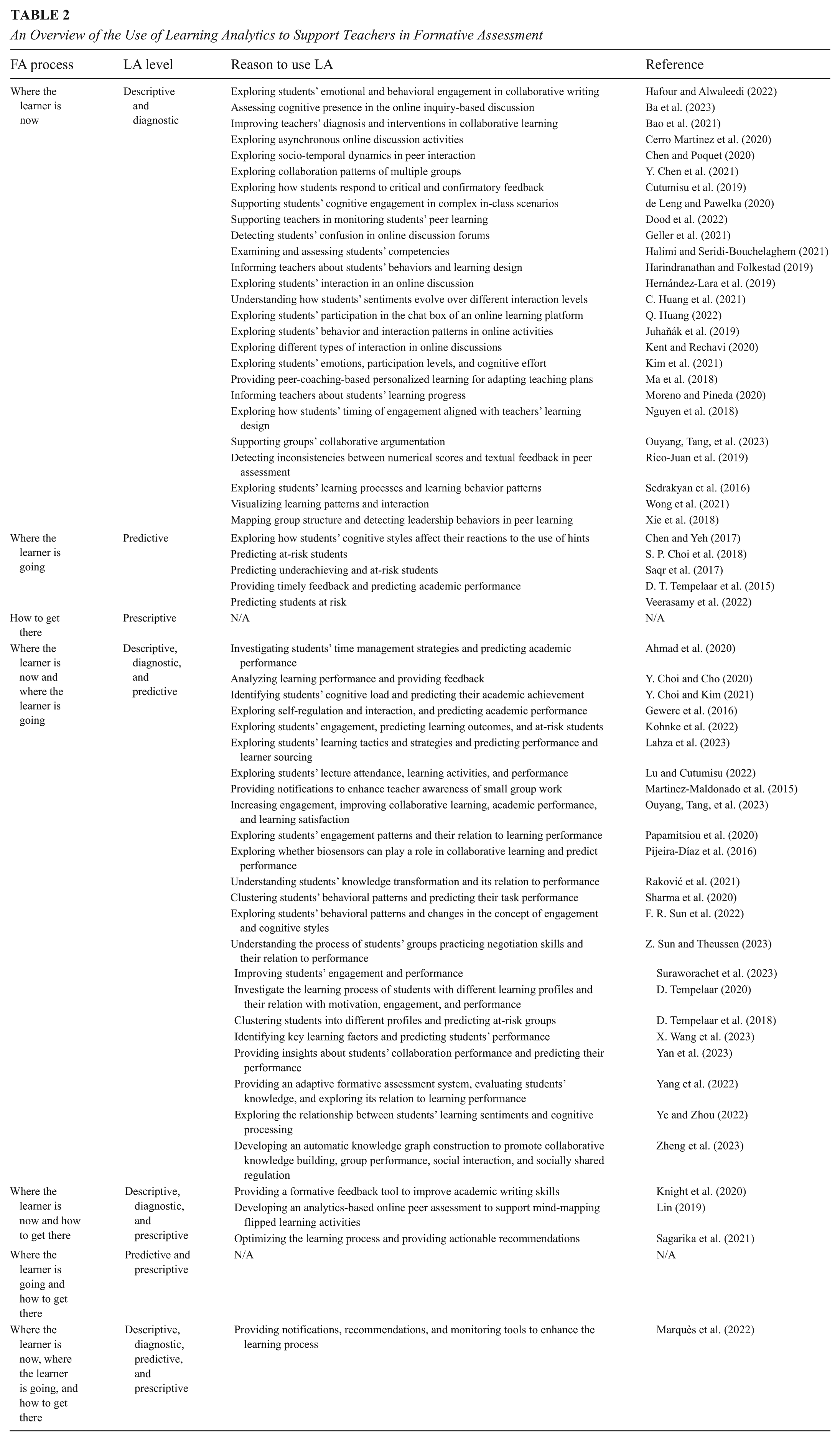

Results for RQ1

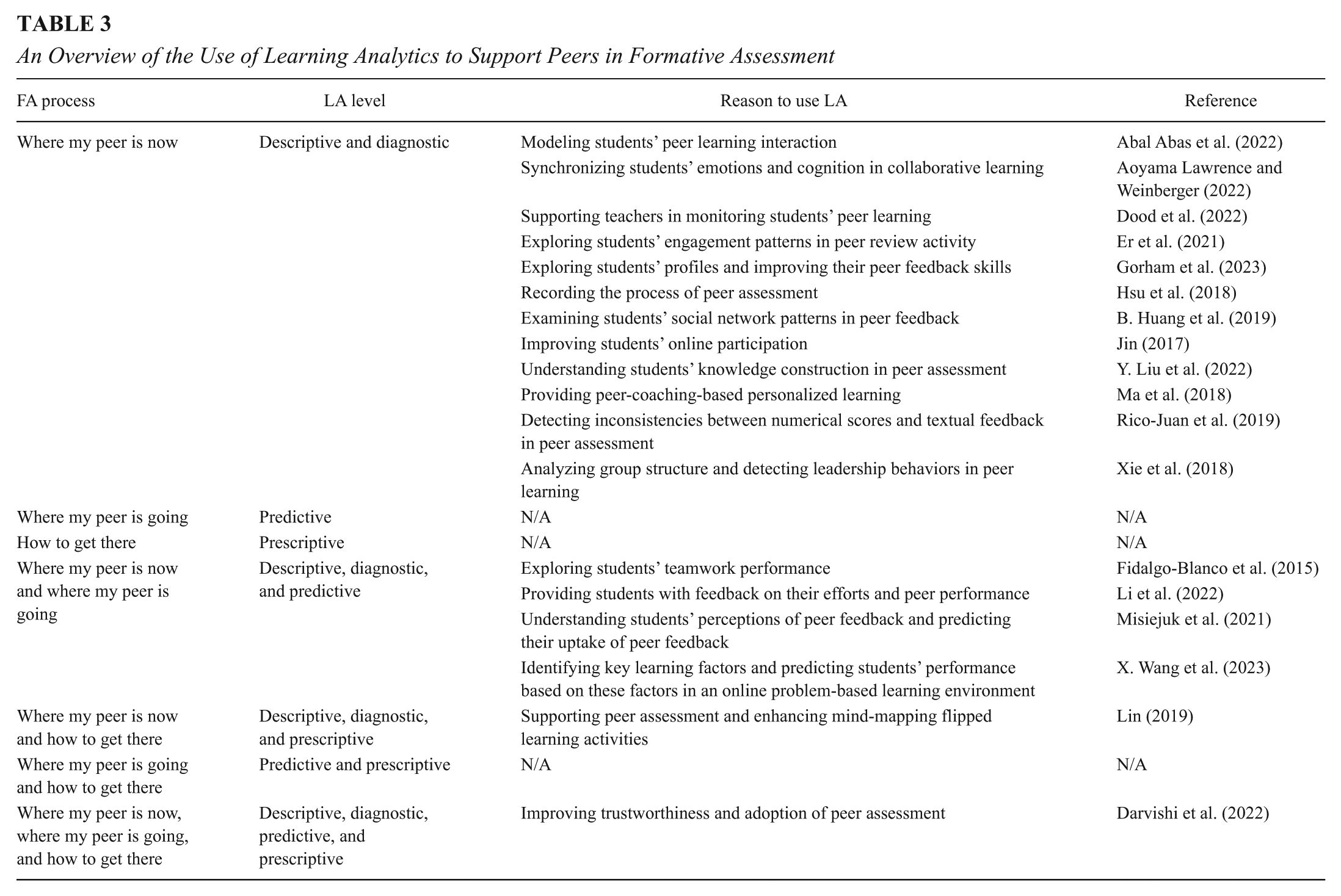

To examine how learning analytics supports formative teacher assessment in the 59 relevant studies, we drew on the three processes of Black and Wiliam's (2009) model and examined at which level and for what purpose learning analytics played a supporting role. Nearly half of these studies concentrated on the initial stage of formative assessment, "where the learner is now" (45%, N = 27), followed by "where the learner is now" and "where the learner is going" (40%, N = 23). Only a few studies focused solely on "where the learner is going" (9%, N = 5), and fewer still (5%, N = 3) addressed both "where the learner is going" and "how to get there" (see Table 2).

An Overview of the Use of Learning Analytics to Support Teachers in Formative Assessment

Based on our findings from the reviewed papers, we explain how learning analytics supports teachers in the three essential formative assessment stages:

In collaborative learning, learning analytics showed potential to enhance teachers' awareness. Martinez-Maldonado et al. (2015) found that using learning analytics to generate real-time automatic notifications about group collaborative tasks can help teachers focus their attention efficiently, which in turn enables them to provide more relevant feedback to students in small-group learning activities. Chen et al. (2021) used learning analytics to create visual representations of group collaboration patterns in a problem-based learning (PBL) context; presenting these visualizations to teachers helped them better facilitate and support students' collaborative efforts. F. R. Sun et al. (2022) drew on Bloom's cognitive taxonomy and used sequential analysis to explore changes in students' engagement. The results showed that "evaluate" (31.52%) and "analyze" (27.77%) were the two most frequent behaviors among the levels of Bloom's taxonomy.
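Sequential analysis of coded behaviors typically asks which codes tend to follow one another more often than chance. The sketch below shows one common variant, lag-1 sequential analysis with adjusted residuals; we do not claim it is the exact procedure used by F. R. Sun et al. (2022), and the function name and example sequence are hypothetical.

```python
from collections import Counter
from math import sqrt


def lag1_adjusted_residuals(codes):
    """Lag-1 sequential analysis of one coded behavior sequence.

    Returns {(given, target): z}, the adjusted residual for `given` being
    immediately followed by `target`. z > 1.96 is conventionally read as a
    transition occurring more often than chance at about the .05 level.
    """
    transitions = Counter(zip(codes, codes[1:]))
    total = sum(transitions.values())
    row, col = Counter(), Counter()  # how often each code starts / ends a transition
    for (given, target), count in transitions.items():
        row[given] += count
        col[target] += count
    residuals = {}
    for given in row:
        for target in col:
            expected = row[given] * col[target] / total
            variance = expected * (1 - row[given] / total) * (1 - col[target] / total)
            if variance > 0:  # undefined when one code starts or ends every transition
                residuals[(given, target)] = (transitions[(given, target)] - expected) / sqrt(variance)
    return residuals
```

In a toy sequence such as remember, understand, apply, analyze, evaluate, analyze, evaluate, create, evaluate, analyze, evaluate, the analyze-to-evaluate transition has a residual of about 2.5, flagging it as a recurring pattern.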

Learning analytics can also help promote effective group argumentation (Ouyang, Tang, et al., 2023), group learning (Z. Sun & Theussen, 2023), knowledge construction (Zheng et al., 2023), and knowledge transformation (Raković et al., 2021) in collaborative settings. By offering insights into student participation and contributions within groups, learning analytics helps teachers foster more productive and engaging collaborative learning. Visualizing students' interaction patterns also lets teachers identify differences in students' levels of interactivity during collaborative learning (Juhaňák et al., 2019), so that they can pinpoint students who may need additional support and encouragement to participate actively and make the learning environment more inclusive. Moreover, learning analytics provides an in-depth understanding of students' behavioral and cognitive engagement during learning (e.g., de Leng & Pawelka, 2020; Sharma et al., 2020), which can help teachers gauge the effectiveness of their instructional methods and identify challenges students may be facing. In sum, the reviewed papers indicate that learning analytics can inform and support teachers in various ways regarding students' learning progress and performance.
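Visualizations of interaction patterns are often built on social network analysis. As a minimal, hypothetical sketch (not the method of Juhaňák et al., 2019), the code below treats replies as undirected ties, computes each student's degree (the number of distinct peers they interacted with), and flags students below a chosen threshold. The names, the reply data format, and the threshold are illustrative assumptions.

```python
from collections import defaultdict


def interaction_degrees(replies, students):
    """Number of distinct peers each student interacted with.

    replies: (author, replied_to) pairs, e.g. taken from forum logs.
    students: full class list, so students with no interactions get 0.
    """
    partners = defaultdict(set)
    for author, target in replies:
        if author != target:  # ignore self-replies
            partners[author].add(target)
            partners[target].add(author)
    return {student: len(partners[student]) for student in students}


def flag_low_interaction(degrees, min_partners=1):
    """Students connected to fewer than `min_partners` peers, sorted by name."""
    return sorted(s for s, d in degrees.items() if d < min_partners)
```

With replies among ana, ben, and chen only, `flag_low_interaction` returns `["dara"]`, the student a teacher might approach first. Real dashboards would typically add direction, weights, and time windows.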

Results for RQ2

Of the 18 studies examined, 12 concentrated primarily on the initial stage of formative assessment, "where the learner is now." In these learning analytics–supported peer assessment studies, learning analytics was used only at the descriptive and diagnostic level, to offer students insights into their peers' current progress on specific tasks. Four studies explored the potential of learning analytics to support two stages, "where the learner is now" and "where the learner is going." These studies used predictive analytics alongside descriptive and diagnostic analytics, not only to provide insights into current performance but also to anticipate future learning behavior. Only one study used learning analytics to address both "where the learner is now" and "how to get there"; here, learning analytics operated at a prescriptive level, offering actionable insights and strategies to guide peers in providing effective feedback. Finally, just one study addressed all three stages of formative assessment (see Table 3).

An Overview of the Use of Learning Analytics to Support Peers in Formative Assessment

Based on our findings from the reviewed papers, we explain how learning analytics supports peers in the three essential formative assessment stages:

Beyond feedback, learning analytics also supported group projects and teamwork. Fidalgo-Blanco et al. (2015) showed how learning analytics can inform students about their peers' effort and performance in group work; when data are extracted and analyzed in a timely manner, students can detect potential issues and take corrective action to improve the group learning experience. Learning analytics can also provide insights into how students construct knowledge during peer assessment activities (Y. Liu et al., 2022). Analyzing the data these activities generate can reveal how students engage with the material and where misconceptions arise, so that instruction can be tailored accordingly. Finally, Xie et al. (2018) showed that learning analytics can help identify students who exhibit peer leadership behavior, a critical factor in effective group composition in collaborative learning. Recognizing students who naturally take on leadership roles can support balanced and productive teamwork in peer learning. In sum, the literature indicates that learning analytics can support peers in formative assessment, particularly regarding "where the peers are now," in multiple ways.

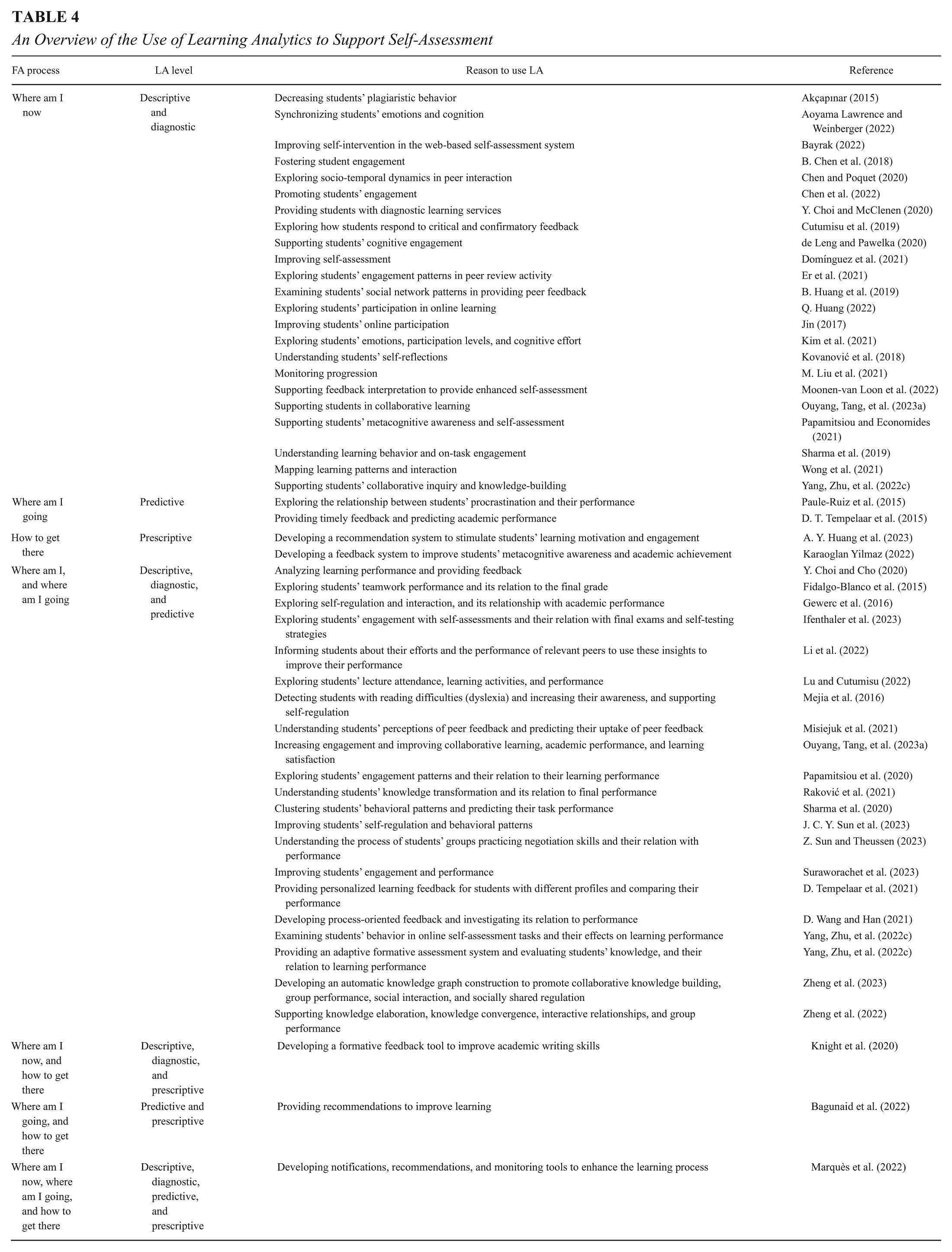

Results for RQ3

Of the 51 self-assessment studies, most (44%, N = 23) used learning analytics at the descriptive and diagnostic level, shedding light on "where the student as self is now" in their learning journey. Relatively few studies used learning analytics at the predictive level (4%, N = 2) or the prescriptive level (4%, N = 2). A substantial proportion of studies (42%, N = 21) used learning analytics at multiple levels, combining descriptive, diagnostic, and predictive analytics (see Table 4).

An Overview of the Use of Learning Analytics to Support Self-Assessment

Based on our findings from the reviewed papers, we explain how learning analytics supports self-assessment in the three essential formative assessment stages:

Learning analytics can also promote students' metacognitive awareness in self-assessment. Papamitsiou and Economides (2021) found that visualizing students' on-task engagement and effortful behavior during task performance can act as a metacognitive awareness tool, helping students better understand their learning strategies and cognitive processes, identify areas for improvement, and adjust their approach to learning. Learning analytics has also been applied to academic integrity in self-assessment. For instance, Akçapınar (2015) showed how learning analytics can provide insights into students' plagiaristic behavior in online assignments; once aware of their tendencies toward plagiarism, students can take proactive steps to avoid it, such as learning proper citation practices and the importance of originality. Learning analytics has further been used to examine students' emotions during learning. Aoyama Lawrence and Weinberger (2022) explored how learning analytics can mine emotional states and showed how emotions can influence various aspects of students' learning. Such knowledge can help students recognize their emotional triggers and responses, develop emotional intelligence, and manage their emotions effectively. In sum, the literature suggests that learning analytics can support students' self-assessment in several ways by providing insights into their learning progress and where they stand in the learning process.
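Text-similarity signals are one way such integrity feedback can be generated. We do not reproduce the pipeline of Akçapınar (2015); the hypothetical sketch below shows one simple text-mining measure of this kind, the Jaccard overlap of word trigrams between a submission and a source, which a feedback tool could report back to a student. The function names and the choice of trigrams are our assumptions.

```python
import re


def word_ngrams(text, n=3):
    """Set of lowercase word n-grams in `text`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def ngram_overlap(submission, source, n=3):
    """Jaccard similarity of the two texts' word n-gram sets (0 = none shared, 1 = same set)."""
    a, b = word_ngrams(submission, n), word_ngrams(source, n)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)
```

For example, "Formative assessment supports learning" and "formative assessment supports teaching" share one of three distinct trigrams, giving an overlap of 1/3. High overlap only indicates shared wording; deciding whether it constitutes plagiarism still needs human judgment.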

Discussion

The overall picture of learning analytics–supported formative assessment indicates that teachers were the main stakeholders and the group most strongly supported by it. Self-assessment supported by learning analytics also appeared in a considerable number of studies. In contrast, peer involvement in formative assessment, and the use of learning analytics to support it, appeared limited. This points to a noteworthy avenue for further investigation and integration of learning analytics, as peers play a pivotal role in facilitating peer learning, nurturing collaborative and supportive learning environments, and offering diverse insights into students' progress (Topping, 1998).

The overall findings also revealed that learning analytics predominantly serves to offer insights into the initial stage of the formative assessment process, "where the learner is now," followed by "where the learner is going," which involves predicting students' upcoming learning paths. Limited attention has been devoted to using learning analytics for the "how to get there" stage, even though this stage is integral to the improvement process within formative assessment (Black & Wiliam, 2009; Shute, 2008). In other words, most studies operated at the descriptive and diagnostic levels, the lower levels of learning analytics, while the use of learning analytics at the predictive and, more importantly, prescriptive levels was limited.

Findings on formative teacher assessment highlight the strong utility of learning analytics in supporting teachers, particularly in understanding "where the learner is now" (e.g., visualizing learning behavior and progression), "where the learner is going" (e.g., identifying students at risk of failure), and "how to get there" (e.g., offering personalized feedback on students' writing). These findings favor adopting learning analytics in teachers' instructional practices. By leveraging data and insights from learning analytics, teachers can make well-informed decisions, enhance students' learning experiences, and foster improved academic performance.

In the context of learning analytics for formative peer assessment, the findings revealed multifaceted benefits such as improving the effectiveness of peer feedback, facilitating peers’ teamwork, and providing recommendations to support peer assessment (e.g., Misiejuk et al., 2021). These findings indicate that integrating learning analytics into peer assessment can lead to more effective and informed learning experiences for all students involved in peer learning.

Regarding the key stages of formative peer assessment, the findings revealed a clear emphasis on "where my peer is now," with most studies providing students with insights into their peers' present learning status. This aligns with the notion that peer learning can help students become aware of their own progress and that of their peers (Yang, Zhu, et al., 2022c). Comparatively little attention was given to "where my peer is going" and "how to get there," which could be attributed to several factors. From an educational perspective, insights into peers' possible future performance may offer limited value and might inadvertently create unnecessary competition among students, diverting their attention from genuine learning and collaboration (C. H. Chen, 2019).

Furthermore, ethical considerations surrounding the sharing of information in learning analytics deserve attention. While some studies have focused on addressing ethics and privacy concerns when the stakeholders are teachers or the students themselves (e.g., Drachsler & Greller, 2016), there remains a gap in understanding and dealing with ethical implications related to sharing learning data with peers. Sharing learning data with peers raises unique ethical challenges that require careful examination. For example, one primary concern is the issue of consent. Students should have the right to decide whether they want their learning data to be shared with their peers. Another ethical aspect to consider is the potential impact on students’ psychological well-being (e.g., Hanson et al., 2016). Providing predictive insights into their peers’ future performance might lead to feelings of competition, pressure, or comparison. Such emotional consequences can be detrimental to students’ learning experiences and may even create an unhealthy learning environment (e.g., Buchanan & Bowen, 2008). Therefore, there is a need to develop guidelines and best practices for sharing learning analytics insights with peers. In addition, to strike a balance, learning analytics in peer assessment should primarily focus on understanding the current status of peers’ learning. This information can be used constructively by students to support each other and foster a positive learning environment.

The findings for formative self-assessment suggest that learning analytics is predominantly used at the descriptive and diagnostic levels to support self-assessment (e.g., Y. Choi & McClenen, 2020; Kovanović et al., 2018). In other words, learning analytics supports students' self-assessment by giving insights into their learning progress and enhancing their metacognitive awareness. While this approach provides useful feedback and self-awareness, the predictive and prescriptive levels of learning analytics remain underexplored. Integrating predictive analytics to forecast future performance and prescriptive analytics to offer personalized recommendations could further enhance self-assessment (Y. Choi & Cho, 2020). Using learning analytics at multiple levels can give students a holistic view of their learning progress and contribute to more effective self-assessment practices (Banihashem et al., 2022). From a practical perspective, educational institutions should continue integrating learning analytics into their classrooms, as this integration has the potential to strengthen students' self-awareness and self-reflection and, ultimately, to foster self-regulated learning.
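To make the distinction between the predictive and prescriptive levels concrete, here is a minimal, hypothetical sketch of how they might be chained in a student-facing self-assessment tool. A logistic regression, fitted by plain batch gradient descent, estimates a student's probability of passing from two illustrative features, and a simple rule then turns shortfalls against teacher-set targets into suggestions. The features, targets, toy data, and function names are our assumptions, not drawn from the reviewed studies; a real deployment would need validated models and pedagogically grounded recommendations.

```python
from math import exp


def sigmoid(z):
    return 1 / (1 + exp(-z))


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Predictive level: fit logistic-regression weights by batch gradient descent."""
    w = [0.0] * (len(X[0]) + 1)  # w[0] is the intercept
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = predict(w, xi) - yi  # gradient of the log-loss w.r.t. the linear score
            grad[0] += err
            for j, xj in enumerate(xi, start=1):
                grad[j] += err * xj
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad)]
    return w


def predict(w, x):
    """Estimated probability of passing for feature vector x."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))


def recommend(x, feature_names, targets):
    """Prescriptive level (hypothetical rule): suggest features below their target."""
    return [f"Increase {name} (currently {v}, target {t})"
            for name, v, t in zip(feature_names, x, targets) if v < t]


# Invented toy data: [quiz average, activity level], both scaled 0-1; 1 = passed.
X = [[0.9, 0.8], [0.8, 0.9], [0.7, 0.6], [0.3, 0.2], [0.2, 0.4], [0.4, 0.1]]
y = [1, 1, 1, 0, 0, 0]
weights = fit_logistic(X, y)
```

A student with features `[0.4, 0.7]` and targets `[0.6, 0.5]` would see a predicted probability from `predict(weights, ...)` plus the single suggestion to raise their quiz average. Keeping the two steps separate lets the prescriptive rules be reviewed by teachers independently of the model.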

From a theoretical perspective, the understanding of assessment has evolved considerably, shifting from "assessment of learning" toward "assessment for learning" and "assessment as learning" (Dann, 2014). Whereas "assessment of learning" focuses primarily on evaluating outcomes and assigning grades, "assessment for learning" centers on supporting ongoing learning by providing timely, actionable insights (Stiggins, 2005; Wood, 2018). In this context, feedback plays a central role, acting as a compass that guides both learners and teachers in adjusting their strategies to improve learning (Shute, 2008). Building on this, "assessment as learning" emphasizes integrating assessment into the learning process itself, with students actively engaged in assessing their own progress (Clark, 2010; Dann, 2002).

This conceptual progression aligns closely with the goals of learning analytics–supported formative practices, which aim not only to provide feedback but also to cultivate students' capacity to reflect, self-regulate, and take ownership of their learning, and our findings reflect this broader theoretical shift. The learning analytics tools in the reviewed studies, particularly feedback analytics, support both "assessment for learning" and "assessment as learning" principles by offering students opportunities to review, interpret, and revise their work against specific criteria. However, the limited uptake of feedback among some students points to the need for greater emphasis on developing feedback literacy and reflective capacity, which are essential components of the "assessment as learning" paradigm.

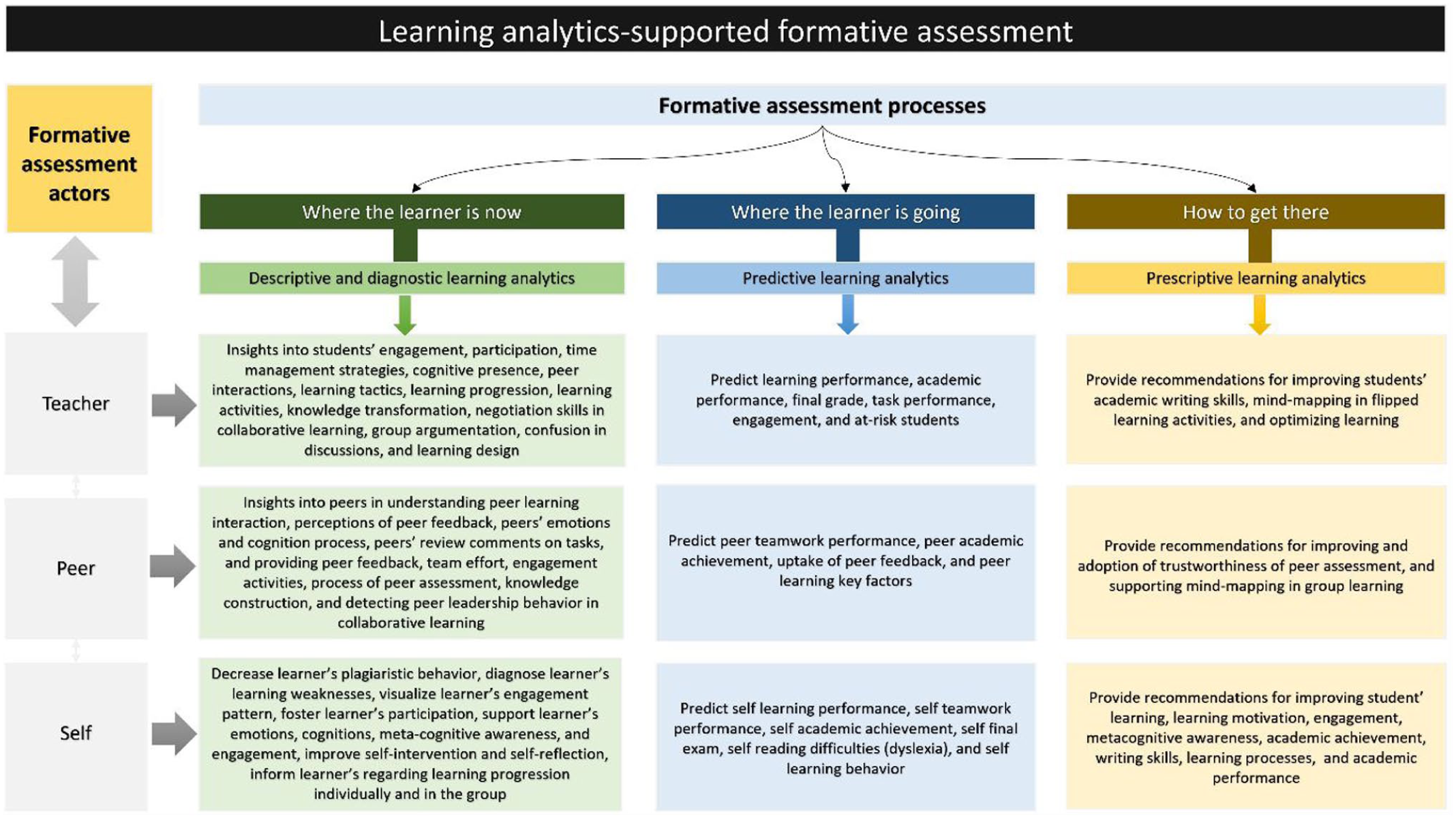

Having outlined the role of learning analytics in supporting formative assessment, we now propose an overview of learning analytics–supported formative assessment (see Figure 3). The figure summarizes the current status of this field in higher education, grounded in a well-established model, and visually represents the alignment between learning analytics outcomes and the elements of formative assessment. Making this alignment explicit could help teachers, peers, and students trust, interpret, and act upon the insights that learning analytics provides for formative assessment. The overview could also help fill gaps in the literature by showing which research areas are missing and where learning analytics can be most effective in supporting formative assessment.

An overview of learning analytics–supported formative assessment based on the Black and Wiliam model.

Limitations and Suggestions for Future Research and Practice

We acknowledge several limitations of this review. Firstly, focusing solely on empirical studies (because of their rigorous methodologies and reliable findings) excluded other valuable sources of information; by excluding reviews and theoretical papers, we may have missed insights and perspectives that could have enriched our results. Secondly, although we searched prominent databases (Web of Science, Scopus, IEEE Xplore, and ACM), relevant studies may exist outside these indexed sources and therefore may not be included in our review. Thirdly, our study focused specifically on learning analytics–supported formative assessment in higher education, so the generalizability of our findings to other educational settings, such as primary, secondary, or vocational education, may be limited. The dynamics and context of these settings can differ substantially, and so can the implications of learning analytics for formative assessment. Future research could explore these contexts to understand potential variations in the use and impact of learning analytics.

Fourthly, a key theoretical limitation of this review is its exclusive reliance on Black and Wiliam's (2009) formative assessment model. Although influential, this model has been critiqued for its simplicity, its lack of conceptual clarity and empirical rigor, and its datedness (Bennett, 2011; Torrance, 2012). More recent models, such as those of Bearman et al. (2023) and Madland et al. (2024), offer greater contextual sensitivity and may be better suited to digital learning environments. Future research should engage with contemporary models and critically examine how learning analytics aligns with or challenges them. It should also assess whether these tools support meaningful learning or merely reinforce procedural compliance, paying closer attention to the quality of learning outcomes. Fifthly, this study used a systematic literature review to provide a comprehensive overview of how learning analytics supports formative assessment. While this approach offers valuable insights, it does not include a statistical synthesis of effect sizes across the included studies. Unlike a meta-analysis, which quantitatively aggregates effect sizes to determine the strength of evidence, our review focused on a qualitative synthesis aligned with Black and Wiliam's (2009) formative assessment model. We acknowledge this limitation and encourage future meta-analyses that estimate the overall effect of learning analytics–supported formative assessment on learning. Lastly, this review did not include generative AI as a search keyword. Future research should examine how generative AI can be responsibly integrated into feedback and assessment, particularly through hybrid human–AI approaches as outlined by Banihashem et al. (2025b).

Drawing from our research findings, we propose several recommendations for future research in the domain of learning analytics and formative assessment. These suggestions aim to foster deeper exploration into the capabilities of learning analytics in providing support for formative assessment within higher education settings.

First, our findings showed that limited attention was paid to the use of learning analytics for peer-based formative assessment. Future research should aim to delve into how learning analytics can support peer assessment further. Second, we found that there is a lower emphasis on using learning analytics at a prescriptive level. Future research and educational efforts should focus on exploring and implementing the potential benefits of prescriptive learning analytics in formative assessment. In line with this, to maximize the potential benefits of learning analytics in formative assessment, there is a need for more research and implementation efforts that explore the integration of learning analytics in addressing all three key processes of formative assessment comprehensively. This could lead to a more holistic and effective use of learning analytics to support student learning and instructional practices.

Third, while our review revealed the positive role of learning analytics in supporting teachers, peers, and students in formative assessment, some critical factors, such as students’ epistemic beliefs and cultural background, have not been thoroughly explored in the existing literature. For instance, some studies have indicated that students’ thought processes and perceptions of knowledge significantly affect their engagement in learning and their ability to provide constructive feedback to peers (e.g., Noroozi & Hatami, 2018). Future studies should therefore investigate the role of epistemic beliefs and cultural background in learning analytics–supported formative assessment to gain a more comprehensive understanding. Fourth, given the importance of ethical considerations in learning analytics–supported peer assessment highlighted in this review, future studies should place significant focus on this aspect. Understanding the potential ethical implications of using learning analytics in peer assessment is essential to ensure that these technologies are implemented responsibly and ethically in educational settings.

Building on our findings, we suggest several recommendations for educational practice.

Enhancing collaborative learning with learning analytics: Teachers should explore integrating learning analytics insights into peer-based formative assessment to foster effective collaborative learning. Learning analytics tools can provide peers with valuable feedback and support, which can improve engagement and participation in collaborative learning activities.

Utilizing prescriptive learning analytics: Educational practitioners should emphasize the use of learning analytics as a prescriptive tool to offer personalized recommendations and guidance for individual student progress. Implementing prescriptive learning analytics in formative assessment can optimize learning outcomes by tailoring instructional strategies to meet the unique needs of each student.

Empowering teachers with predictive and prescriptive analytics: Educational institutions should invest in developing predictive and prescriptive analytics tools that help teachers anticipate students’ learning trajectories and provide actionable guidance tailored to each student’s progress.

Comprehensive integration of learning analytics: To fully capture the potential benefits of learning analytics, teachers should integrate these tools across all three key processes of formative assessment (where the learner is going, where the learner is now, and how the learner is getting there) and at every level of analytics, from descriptive and diagnostic to predictive and prescriptive. This holistic approach allows for a better-informed understanding of student progress, leading to targeted and effective instructional practices.

Addressing ethical considerations in learning analytics–based peer assessment: Educational institutions should prioritize addressing ethical concerns related to the use of learning analytics in peer assessment. Developing clear guidelines and best practices for handling sensitive student data and ensuring the ethical sharing of learning analytics insights among peers is essential to maintaining student privacy and psychological well-being.

The multifaceted use of learning analytics to support formative assessment: The reviewed studies on how learning analytics supports formative assessment in higher education (e.g., exploring time management strategies, visualizing learning patterns, identifying at-risk students, providing personalized recommendations, and supporting formative assessment processes for teachers, peers, and self-assessment) serve as valuable examples for educational practitioners. By incorporating learning analytics tools and insights in similar ways, practitioners can enhance formative assessment practices and more effectively support student learning.

Conclusion

This study makes several contributions to the existing literature. Firstly, it strengthened the theoretical bridge between learning analytics and formative assessment by adopting a well-established model to conduct the systematic review. This alignment reinforces the understanding of the interconnections between the two domains and supports more informed decision-making in the design and implementation of learning analytics for formative assessment. In addition, by grounding the review in a well-known model of formative assessment, we were able to provide an overall picture of the extent to which learning analytics results for formative assessment align with that model. Secondly, by offering a comprehensive overview of the current state of learning analytics–supported formative assessment, this research identified crucial research gaps, directing future studies toward areas that require further exploration and development. Thirdly, the study’s findings outlined specific ways in which learning analytics can be leveraged to support teachers, peers, and students in formative assessment practice. These findings can guide educational stakeholders in integrating and optimizing learning analytics to enhance the effectiveness of formative assessment practices.

Included papers

The included papers can be found in the online supplementary data for this review.

Footnotes

Author’s Note

Seyyed Kazem Banihashem is now affiliated with the School of Health Professions Education, Maastricht University.

Authors

SEYYED KAZEM BANIHASHEM is an assistant professor at Open Universiteit, Heerlen, and a lecturer at Maastricht University, Maastricht, the Netherlands; email:

DRAGAN GAŠEVIĆ is a distinguished professor of learning analytics and the director of the Centre for Learning Analytics (CoLAM) at Monash University, Melbourne, Australia; email:

OMID NOROOZI is an associate professor of educational technology at Wageningen University and Research, Wageningen, the Netherlands; email:

HALSZKA JARODZKA is a professor of instructional design and online learning at Open Universiteit, Heerlen, the Netherlands; email:

DESIREE JOOSTEN-TEN BRINKE is a professor of assessment at Maastricht University, Maastricht, the Netherlands; email:

HENDRIK DRACHSLER is a professor of educational technologies and learning analytics at DIPF Leibniz Institute for Research and Information in Education, Frankfurt am Main, Germany, and Goethe University, Frankfurt am Main, Germany; email:

References

Supplementary Material
