Abstract

To accelerate literacy learning for upper-elementary multilingual children designated as English learners (ML-ELs), teachers need instructional tools that create sustained opportunities for reading and discussing informational texts, examining the language encountered in those texts, and building new content knowledge. To address this need, we developed a multicomponent small-group intervention to build ML-ELs’ reading comprehension by emphasizing knowledge, language, and structured inquiry (K.L.I.). We describe our iterative design process and report findings related to usability, which we argue is an essential but often unreported step in examining the internal logic of new interventions. Using design-based research, we developed K.L.I. through repeated design-implement-observe-revise cycles in collaboration with 15 teachers over 2 years. We analyzed teacher interviews and ratings of lessons implemented during afterschool supplemental instruction. Findings suggest pressure points and enhancing factors affecting the usability of K.L.I. These findings can inform future efforts to design and implement similar multicomponent reading comprehension interventions.

It is vital for educators to foster literacy proficiency among multilingual children identified as English learners (ML-ELs¹), who now constitute 10.4% of the school-age population in the United States (National Center for Education Statistics [NCES], 2022). When learning to read English in school, ML-ELs typically develop word reading skills (e.g., phonological awareness, decoding) at levels similar to their native English-speaking counterparts (Mancilla-Martinez & Lesaux, 2011). However, ML-ELs can experience challenges in higher-level language and reading comprehension skills in English, particularly in complex, informational texts. This challenge becomes more pronounced as they concurrently build their academic language proficiency in English and gain domain and content knowledge in upper-elementary grades (Goldenberg, 2020; Snow & Matthews, 2016).

The challenges associated with reading comprehension development may be attributed to the multifaceted nature of the construct. Reading comprehension depends on multiple language skills, including orthography, phonology, morphosyntax, and vocabulary. Children who experience difficulties with reading comprehension often have underlying difficulties with these language components (e.g., Cain, 2007; Murphy et al., 2016; Pearson et al., 2020). Moreover, reading comprehension depends on an array of cognitive skills, such as the ability of readers to connect the information presented in the text with their existing knowledge (Alexander et al., 1994; Kintsch, 1998) and to monitor ongoing understanding during reading (Oakhill et al., 2019). To support ML-ELs in the development of reading comprehension, teachers need resources that address these multiple facets.

There has been increasing recognition of the importance of a multicomponent instructional approach to enhance reading comprehension among upper-elementary students (Wanzek et al., 2010). While there are relatively few studies in the existing literature focused specifically on reading comprehension interventions for upper elementary ML-ELs, emerging research emphasizes the critical importance of cultivating diverse facets of the English language (e.g., vocabulary, morphology, and morphosyntax) through structured and sustained instructional routines with collaborative text-based discussions (e.g., Capin et al., 2021; Proctor et al., 2020; Vaughn et al., 2017, 2022).

To add to the resources available to teachers, we developed a small-group intervention intended to bolster English reading comprehension among ML-ELs in Grades 3 to 5, with an emphasis on the development of content knowledge, language competency, and opportunities for structured inquiry (K.L.I.). In this study, we describe the iterative design process for developing the intervention and report findings related to usability, defined as “the extent to which the intended user understands or can learn how to use the intervention effectively and efficiently, is physically able to use the intervention, and is willing to use the intervention” (Institute of Education Sciences, 2017, p. 53). We posit that usability testing can provide valuable insights for enhancing the design and implementation of complex interventions.

There is a long history of educational research that acknowledges that new tools and pedagogies will have limited impact in schools if the intended users are unable or unwilling to deploy them (e.g., Carnine, 1997; Klingner et al., 2013). Despite the acknowledged importance of usability of educational interventions, usability is rarely the explicit goal of iterative design processes in published intervention research. Aside from a few studies in which usability testing is nested within a broader focus on feasibility (e.g., Philippakos et al., 2024; Poch et al., 2020), we are unaware of recently published studies that detail the data-driven decision-making involved in successively improving the usability of a new literacy intervention through iterative design cycles. This study can help fill this gap.

The K.L.I. Intervention Framework

K.L.I. is intended for the subset of upper-elementary ML-ELs who need supplemental reading comprehension support. To ground our understanding of the components involved in reading comprehension, we draw on the Simple View of Reading (SVR), which posits that the broad domains of decoding and language comprehension explain variations in text comprehension among children (Hoover & Gough, 1990; Hoover & Tunmer, 2022). We focus our work primarily on the language comprehension domain, which is made up of multiple component skills and competencies, including inferential reasoning, conceptual knowledge, and awareness of language structure (Scarborough, 2001). Text comprehension requires the fluid orchestration of these components in the active process of meaning making. Thus, our understanding of reading comprehension is also informed by theory that explains how this process unfolds, namely the Construction-Integration Model (Kintsch, 2004). This model holds that readers build meaning in layers using their linguistic and world knowledge to fill in gaps left by the author to move from a mostly literal textbase representation to a coherent situation model (Kintsch, 1988). This process is driven by inferences, defined in text processing theories as unstated ideas inserted in the reader’s mental model, developed by relating two or more pieces of information together across different parts of the text or across the text and the reader’s prior knowledge (Oakhill et al., 2015).

In line with this theoretical grounding, K.L.I. possesses three core characteristics signaled in its name. First, K.L.I. is knowledge-focused, grounded in an understanding of reading comprehension as a process that begins with background knowledge and ends with the construction of new enduring knowledge (Davis et al., 2022; Duke et al., 2011). As proposed in the Construction-Integration Model (Kintsch, 2004), readers must use and revise their topic knowledge to construct a coherent mental model of a text. A coherent representation meets the constraints of both the text and the reader’s knowledge (Kucan & Palincsar, 2013; van den Broek, 2010) and corresponds with the demands of the activity and context of the reading (RAND Reading Study Group, 2002). Content knowledge is crucial for reading comprehension, especially for ML-ELs, because it equips students with a scaffold upon which new information and vocabulary can be anchored (Davis et al., 2017; Taboada et al., 2012; Vaughn et al., 2017). For ML-ELs, who may be navigating both a new language and unfamiliar cultural references, a strong foundation in content knowledge can reduce the cognitive load associated with language processing (Cummins, 2021; Goldenberg & Cárdenas-Hagan, 2023).

Second, K.L.I. is language-focused, targeting the components of written language proficiency essential for successful text comprehension. Language and knowledge work together in the construction of meaning (Kintsch, 1988; McNamara & Kintsch, 1996). Readers apply their linguistic knowledge to construct a textbase representation, which forms the basis for the top-down process of activating inferences and integrating text and knowledge to achieve a coherent mental model (Pearson & Cervetti, 2015). Models of reading that delineate the competencies in the language comprehension domain have highlighted the importance of supporting children in the development of multiple layers of language spanning from word-level (e.g., semantic and morphological awareness) to sentence-level structures (e.g., syntax), and the organizational patterns of extended text (Duke & Cartwright, 2021; Hoover & Tunmer, 2022; Knecht et al., 2023; Language and Reading Research Consortium [LARCC], 2015; Oakhill et al., 2015). By virtue of being multilingual, ML-ELs have unique language assets that enable them to notice and reflect on these English language structures in complex and interesting ways. They can link new words they are learning to similar words in their home languages and explain their learning to their peers in their own words, which, for many, might involve home language use (Proctor et al., 2020). Teachers—even those who may not speak students’ home languages—can encourage students to leverage their existing language assets (Goodwin & Jiménez, 2016).

Finally, K.L.I. incorporates opportunities for structured, text-based inquiry (Coiro et al., 2016; Sekeres et al., 2014). Students are guided to collect new ideas related to a set of overarching questions as they engage in language and reading activities. They synthesize their new knowledge at the conclusion of each module in informal presentations to peers and teachers. Although K.L.I. focuses more explicitly on knowledge and language outcomes than on the development of inquiry skills, the structured inquiry approach establishes a shared learning purpose for the group and empowers ML-ELs to develop confidence as experts who can create and share new knowledge with others.

The K.L.I. Intervention Design Logic

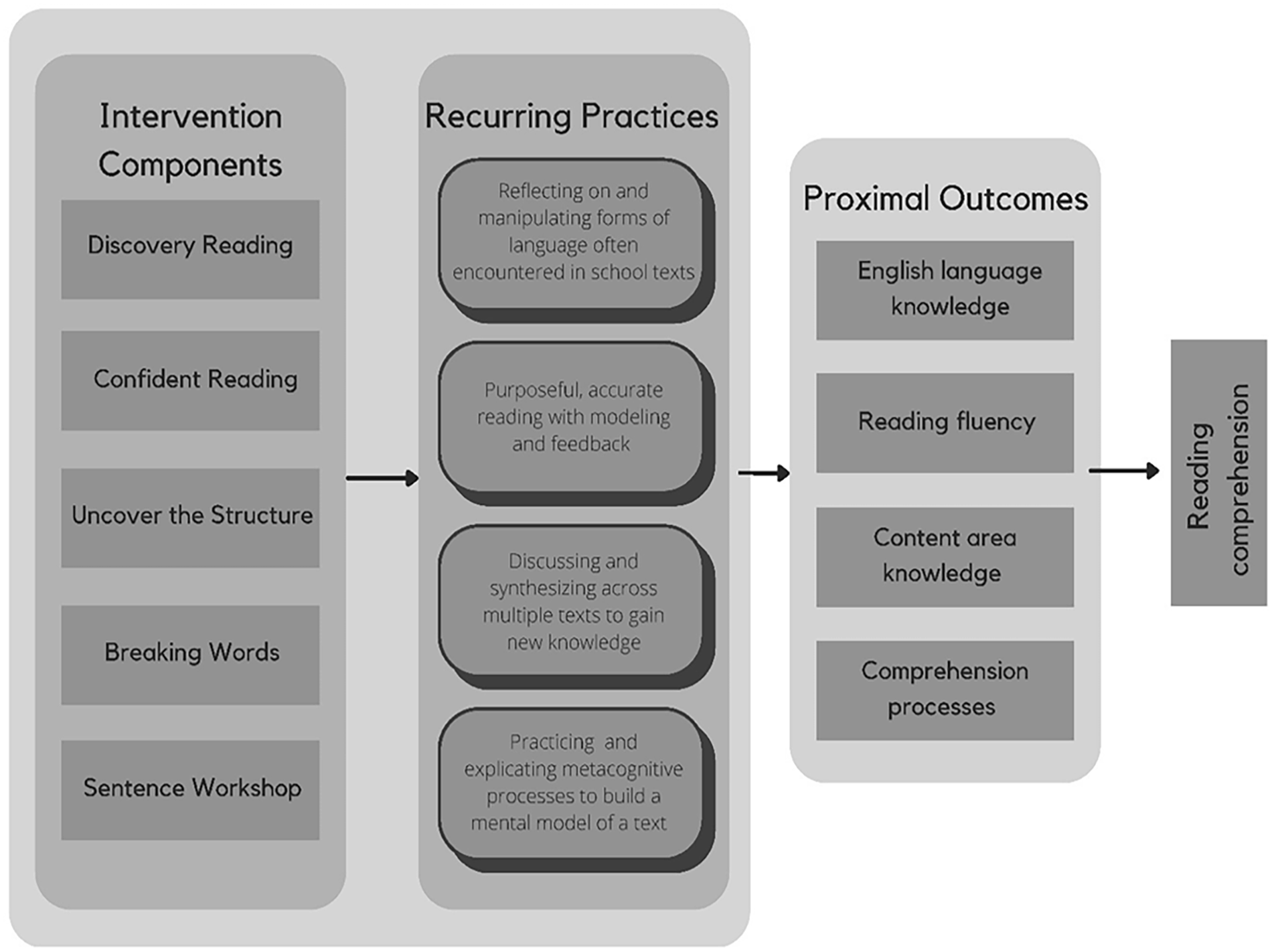

Figure 1 offers a visual representation of the logic model outlining the anticipated sequence of effects, from the implementation of the K.L.I. components to the attainment of proximal (immediate) and distal (long-term) learning goals. The theory and resources that constitute K.L.I. were developed by working backwards from the distal goal of accelerating reading comprehension development of upper-elementary ML-ELs (far right-hand side of the figure).

Figure 1. The Knowledge, Language, and Structured Inquiry (K.L.I.) Intervention Logic Model

Proximal Outcomes

Research on upper elementary ML-ELs in the United States has identified characteristics of effective reading comprehension instruction. Much of this research emphasizes instructional opportunities for developing English language skills concurrently with content learning (Proctor et al., 2020; Silverman et al., 2020; Vaughn et al., 2022) and multi-strategy instruction emphasizing discussion-based meaning making processes (Klingner & Vaughn, 2000; Klingner et al., 1998, 2012). With all readers, but perhaps especially for those who are learning to read in a new language, comprehension processes hinge on the availability of teacher support and modeling of accurate, fluent reading of complex texts (Crosson & Lesaux, 2010; Geva & Farnia, 2012; Mokhtari & Thompson, 2006). Drawing on this research, we identified a set of proximal outcomes known to contribute to English reading comprehension skills: English language proficiency (i.e., vocabulary, morphology, morphosyntax, and text structure found in school texts), topic-specific conceptual knowledge, reading fluency, and text comprehension processes used to construct meaning. These outcomes primarily contribute to reading comprehension via language comprehension (Oakhill et al., 2015). The specific comprehension processes emphasized in K.L.I. are inference generation, comprehension monitoring, paraphrasing (explaining new ideas in one’s own words), and selectively attending to important (non-trivial) ideas to inform the reader’s mental model.

Recurring Practices

After we identified the language comprehension outcomes that would be targeted in the intervention, we established a set of core practices that could be built into small-group instruction to produce changes in the proposed outcomes. While our intervention is grounded largely in a cognitive view of text comprehension components and processes, as detailed above, we recognize that the development of these within-reader competencies—like all school learning—is inherently an interactional process between learners and the practices and opportunities available in their environments (Collins & Greeno, 2010). The underlying theory of the K.L.I. intervention is that in order to build the knowledge and skills essential for reading comprehension, ML-ELs need sustained opportunities to engage in four recurring practices: (a) reflecting on and manipulating forms of language that they often encounter in school texts; (b) purposeful, accurate reading with teacher modeling and feedback; (c) discussing and synthesizing across multiple texts to gain new knowledge; and (d) practicing and explicating metacognitive processes that help build a mental model of a text. Our theory proposes that an educational intervention can only yield observable impacts on student outcomes by instigating stable, consistent changes in the culture of a learning community. This culture can be defined as the shared modes of reading, sharing, and discussing that evolve as the group spends time together (Gutiérrez & Rogoff, 2003). The ability of the intervention to immerse students in this theory-aligned culture of literacy learning is an essential step in the internal logic of K.L.I.

Instructional Components and Intervention Structure

We developed five instructional components that give teachers concrete routines to instantiate the desired recurring practices. We aimed to create resources that would foster students’ increasing independence in the practices through the repeated use of carefully scaffolded, fast-paced instructional routines. The K.L.I. components, described in the sections below, are organized in modules. The five components are interleaved across each module in a repeating sequence. Each module focuses on a different inquiry topic. The topics used in the current implementation are: Robotic Technology, Space Pollution, Animal Communication, A New Home (Immigrant Experiences), and Voting in a Democracy.

Each module begins with inquiry questions (e.g., how have humans solved challenges by advancing robotic technology?) that serve as a catalyst for student thinking. Topics were developed based on student and teacher input in previous work conducted by the first author (Davis et al., 2021), with the goal of choosing compelling topics related to upper elementary content area standards. As they move through the components in a module, students keep track of their learning by adding ideas to their group’s “Inquiry Space.” The Inquiry Space is the anchor that helps connect the lessons together. Teachers can choose to use a digital space (e.g., a Google Jamboard or Padlet wall), or they can create a bulletin board or large poster display in their classrooms. The group adds at least one new idea to this space during each lesson. On selected days spread throughout a module, they use these curated ideas to review what they learned in response to the big question guiding their inquiry. At the end of a module, students create presentations for teachers and peers using the ideas in the Inquiry Space as a starting point. Across all the components, teachers encourage students to leverage their full range of linguistic resources—for example, by linking new vocabulary to similar words in their home languages and explaining their learning to their peers in their shared first languages.

Discovery reading

Students read informational books and engage in structured discussion, framed around a guiding question that helps them develop new knowledge of their topic. The teacher uses scaffolds to help students read the text, one short chunk at a time (usually 1–2 paragraphs). At each stopping point, students use metacognitive discussion prompts to build a complete mental model of the text. The prompts are: “monitor and repair” (identify and resolve confusions); “tell what you see” (explain what you picture in your mind while reading); “tell what you learned” (paraphrase one new idea from the text in your own words); “quiz me” (check for understanding by asking peers a question about a central idea in the text); and “word in the spotlight” (discuss the meaning of pre-selected words in context). After reading, students collaborate to answer the guiding question.

Confident reading

Students follow a repeated reading routine to read and discuss a series of short expository texts that target core concepts related to the inquiry topic. The teacher coaches students to attend to specific aspects of language important for constructing a mental representation of the text, including linguistic features that bridge ideas across clauses and sentences. The texts are controlled for difficulty using a “stacking” approach. There are three versions of a text in each stack. Each version is slightly more complex (i.e., more low-frequency content words and multi-clause sentences) than the previous one but covers the same content.² The group begins with the least difficult text, and they move up the stack over three successive Confident Reading lessons. Then they start over with a new stack. The stacking approach is a scaffolding technique that eases children into the demands of complex texts, ensuring that they practice fluency with high word-recognition accuracy while maintaining access to topic-related vocabulary and connective words used in complex sentences.

Uncover the structure

Using a short text that was previously read during Confident Reading, the teacher guides the students in a discussion of the organization of the author’s ideas. The texts are written to exemplify common structures used in expository texts. Using explicit routines (e.g., Roehling et al., 2017; Williams et al., 2014), the teacher guides the students to notice the structure, map the ideas onto a graphic organizer that makes the structure explicit, and write a summary that adheres to the structure.

Breaking words

The teacher leads students through a structured word analysis routine using multisyllabic academic words that relate to the inquiry topic. The routine helps students build high-quality lexical representations of new words by focusing their attention on sounds, spelling, meanings, and morphological structure (Goodwin & Ahn, 2010; Perfetti, 2007). The routine includes: reading the word and using it in a sentence, breaking the word into syllables, writing and spelling the word, and transforming the word by removing/adding affixes and discussing the resulting changes in structure and meaning.

Sentence workshop

Students engage in a sentence-building routine designed to enhance their syntactic knowledge, in particular, their understanding of cohesive ties within multi-clause sentences (Silva & Cain, 2015). With teacher prompting, students use word cards to unscramble, build, expand, and combine multiple topic-related sentences of increasing length and grammatical complexity.

Purpose and Questions in the Current Study

In the current study, we focus on our examination of usability during the 1st and 2nd years of a 4-year project in which our research team iteratively designed and modified the components of the K.L.I. intervention. In subsequent years of the project, we examined the feasibility of the intervention in school-day contexts (Year 3) and tested the efficacy of the intervention to improve reading comprehension as proposed in the logic model (Year 4).

The 2-year design phase of the study was carried out using a design-based research (DBR) approach. The goal of DBR is to “directly impact practice while advancing theory that will be of use to others” (Barab & Squire, 2004, p. 8). To attain this goal, DBR involves several key procedures: (a) identifying a complex educational problem and developing a theoretical framework to address it; (b) designing an educational intervention based on this framework; (c) implementing the intervention in a real-world setting; and (d) collecting data about its usability, feasibility, and effectiveness (Joseph, 2004; Philippakos et al., 2021).

Testing the usability of an intervention is a crucial part of DBR. Usability testing provides feedback on various aspects of the intervention, such as its design, materials, instructions, and overall implementation (Poch et al., 2020). This feedback is useful in refining the initial design of the intervention materials, making them more effective, easier to use, and more satisfactory to teachers. Usability testing is a bridge between the intervention design and its implementation, ensuring that the intervention is not only theoretically sound but also practically usable in real-world educational settings, thereby increasing the likelihood that it will be adopted and sustained over time (Dede, 2005).

We operationalized usability for this study by establishing two criteria that informed data collection and analysis. First, the intervention must instantiate the underlying theory of reading comprehension development in observable ways when lessons are implemented. We examined this criterion by rating implementation of the intervention components using indicators keyed to the four recurring practices that informed the design of K.L.I. This criterion is represented by the arrow in Figure 1 between the lesson components and the proposed practices. Thus, our test of usability is a direct test of the first step in our logic model. Second, the intended users of the intervention must perceive it as useful and appropriate for supporting English reading comprehension in the target population. Evidence to examine this criterion was gathered through interviews with teacher collaborators who reviewed and provided feedback on K.L.I. We pursued research questions (RQ) aligned with the two criteria of usability:

RQ1: When teachers implement K.L.I. with small groups of ML-ELs, to what extent do the lessons create opportunities for students to engage in the four theorized practices needed for reading comprehension development?

RQ2: How do teachers perceive the usability of K.L.I. as an intervention that can support ML-ELs in reading comprehension?

Method

Participants and Context

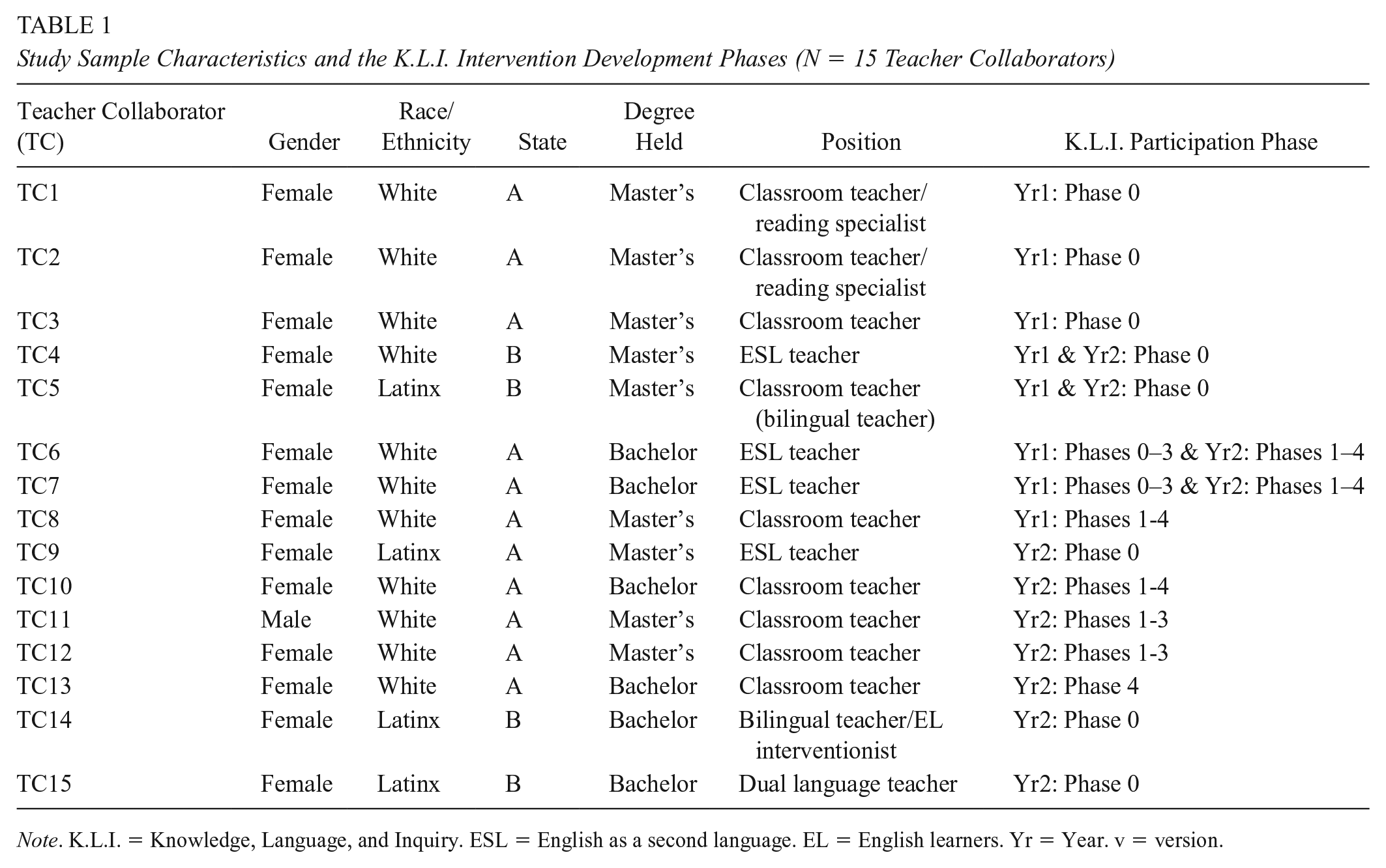

The primary participants were 15 elementary school teachers from two states in the southeastern and southwestern regions of the United States. We recruited teachers from school district partners and our professional networks. All teachers had at least 3 years of teaching experience and worked in roles that involved supporting ML-ELs in language and reading outcomes. These participants were involved in various phases of the study, as indicated in Table 1. Eight teachers (TC1-5; TC9; TC14-15) were only involved in Phase 0 activities, which involved reviewing the initial intervention materials. The remaining seven teachers implemented selected lessons with their students and provided feedback about their experiences with the intervention across Phases 1 to 4 (the study phases are described in more detail in a later section).

Table 1. Study Sample Characteristics and the K.L.I. Intervention Development Phases (N = 15 Teacher Collaborators)

Note. K.L.I. = Knowledge, Language, and Structured Inquiry. ESL = English as a second language. EL = English learners. Yr = Year. v = version.

Teachers implemented the intervention during supplemental afterschool instruction at two elementary schools in the southeastern United States. Both schools received Title I funding and had approximately 60% of students eligible for free/reduced lunch. Instruction was provided in small groups of 3 to 4 students (N = 24 in Year 1; N = 18 in Year 2) in Grades 3 to 5. The students recruited for the study had been designated as ML-ELs by their schools based on a home language survey and English language proficiency testing, and their teachers had identified them as needing additional English reading comprehension support. All spoke Spanish as their home language. They participated in afterschool K.L.I. lessons for 1 hour, twice a week.

We selected afterschool instruction as the preferred context for testing usability because this setting afforded us more freedom to make quick changes to the intervention across versions compared to school-day instruction. The administrators and teachers at the partner schools appreciated this approach because it created extended learning opportunities for their students without requiring changes to their regular curricula or schedules. We intentionally designed the multi-year study with the expectation that we would design and revise the intervention in this flexible afterschool context and then move the intervention into school-day instruction in subsequent years when our focus turned toward examining feasibility and effectiveness.

Design Phases

We used repeated design-implement-observe-revise cycles to develop the K.L.I. materials. Figure 2 displays the timeline of the iterative design process. During Year 1, we developed three components: Discovery Reading, Confident Reading, and Uncover the Structure. In Year 2, we developed the remaining two components: Breaking Words and Sentence Workshop.

Figure 2. Timeline of K.L.I. Intervention Component Development

Each component was developed individually through successive phases, enumerated as Phase 0 through Phase 4, corresponding to the version of the component under review at each phase (Version 0–Version 4). In Phase 0, teachers were presented with the initial lesson plans and implementation guides (Version 0; abbreviated as v0) for each component. They reviewed the materials and gave feedback to inform revisions, which produced Version 1 (v1) for Phase 1. In that phase, teachers implemented selected lessons during afterschool instruction. The data gathered during Phase 1 informed further revisions to the component. These revisions were subsequently implemented in Phase 2 (v2) and then again in Phase 3 (v3).³ Phase 4 took place at the end of Year 2. Using v4 materials that integrated all the components together, anchored by the Inquiry Space, each teacher implemented a complete K.L.I. module so we could gain insights about the usability of the intervention when fully implemented as intended.

The research team conducted professional development (PD) sessions over Zoom with teachers to introduce the materials prior to implementation of v1 of each component. The curricular materials (e.g., implementation guides, lesson plans, and student-facing materials) were sent to the teachers before the PD sessions to give them ample time to review and prepare. While the format of each PD session varied depending on the component, all sessions shared common features of modeling and demonstration by the research team, group analysis of example videos, and guided discussion. Each session lasted between 30 and 60 minutes, allowing time for teachers to ask questions. The majority of time was spent demonstrating the lesson components and showing teachers how to follow the implementation guidance provided in the written plans. We began the meetings with a brief overview of the rationale for each component and how the pieces work together as a system to create the desired learning opportunities according to our theory. The insights gained from the analysis reported in the present study helped us to create a fully online self-paced professional development course, which was used in subsequent years of the project.⁴

Data Sources

Teacher interviews

We designed semi-structured interview protocols to collect teachers’ feedback and perspectives on the usability of K.L.I. Following the review of v0 materials, we met individually with teachers to gather their feedback and suggestions for improvement. We asked questions pertaining to usability concerns based on the organization and presentation of the materials. During Phases 2 and 3, we again conducted individual, semi-structured interviews to gain insights into their experiences while implementing each component. We asked them to evaluate the organization of materials, their suitability for the target population, the clarity and appropriateness of lesson structures, and overall perceptions of usability within a school environment. Across the phases, trained research team members conducted 48 interviews via Zoom, each lasting 25 to 45 minutes. Interviews were audio recorded and transcribed. Because this project emphasized close collaboration with teachers, we had many informal conversations with them throughout the design cycles, but we limited our analyses to systematic data collected through interviews.

Implementation map ratings

To evaluate the extent to which each component facilitated sustained learning opportunities outlined in our theory, we developed a list of observable, low-inference indicators describing optimal implementation of each K.L.I. component. Each indicator was rated as either present (1) or absent (0), based on adherence to specific instructional expectations in K.L.I. The indicators were developed concurrently with each component and aligned with the recurring practices underlying the logic of the intervention. We referred to these as implementation “maps” because they served as roadmaps to guide our understanding of what it would look like if a component fully realized the theory on which it was based (Hall, 2013). The implementation maps included indicators describing teacher and group behaviors, allowing us to examine the implementation by teachers and the uptake by students as part of the culture of their small group during the intervention. Teachers submitted videos of all the lessons they implemented for a total of 240 videos across all phases and components. Videos were reviewed and rated by two research team members. Before rating, they underwent training using sample videos. The inter-rater reliabilities, based on independent ratings of a randomly selected 15% of the data, ranged from .83 to .95.
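The reliability check described above can be illustrated with a short sketch. The text does not name the statistic behind the reported range, so the choice of percent agreement and Cohen's kappa here is an assumption, and the two rating lists are invented for illustration rather than taken from the study.

```python
def percent_agreement(first, second):
    """Share of indicators on which two raters gave the same 0/1 rating."""
    if len(first) != len(second) or not first:
        raise ValueError("rating lists must be non-empty and of equal length")
    return sum(a == b for a, b in zip(first, second)) / len(first)


def cohens_kappa(first, second):
    """Chance-corrected agreement for two raters using binary (0/1) ratings."""
    observed = percent_agreement(first, second)
    rate_first = sum(first) / len(first)     # how often rater 1 marked "present"
    rate_second = sum(second) / len(second)  # how often rater 2 marked "present"
    expected = rate_first * rate_second + (1 - rate_first) * (1 - rate_second)
    if expected == 1:
        # Both raters used a single identical category throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical ratings of ten indicators by two raters (1 = present, 0 = absent).
first_rater = [1, 1, 1, 0, 0, 1, 1, 0, 1, 1]
second_rater = [1, 1, 0, 0, 0, 1, 1, 0, 1, 1]

print(f"agreement = {percent_agreement(first_rater, second_rater):.2f}")
print(f"kappa     = {cohens_kappa(first_rater, second_rater):.2f}")
```

Percent agreement is easier to interpret, whereas kappa discounts the agreement expected by chance when most indicators are rated present.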

Analyses

Data analysis was both concurrent and retrospective. The use of these two layers of analyses is typical in DBR studies. Concurrent analyses were conducted during the 2 years of the design-based work between each of the phases to inform revisions to subsequent versions of the intervention. Following the completion of the design cycles, we engaged in retrospective analysis with the goal of identifying overarching insights related to usability that could inform future work.

Concurrent Analysis

During the 2-year design phase portion of this study, the goal of the intervention was to maximize students’ exposure to the learning opportunities specified in the theory of K.L.I., as operationalized in the implementation maps. We used these ratings to identify areas of the intervention that needed adjustments and to monitor successive progress toward our goal across iterations. After each implementation phase, we calculated the proportion of lessons in which each indicator was marked as present. We established 80% as the minimum acceptable threshold for achieving the goals of the intervention. During our weekly research team meetings, indicators that were near or below this threshold were brought to the table for discussion. We developed refinements to improve their ratings in the next round of implementation. These analyses were completed rapidly in the few days or weeks separating each iteration so that new materials could be launched in time for teachers to integrate them into their instruction. This process repeated for each version of all five components of the intervention across 2 years.
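The threshold check described in this paragraph can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the research team's actual procedure: the indicator names and ratings are hypothetical, and because the text does not define how close to 80% counts as "near," the `NEAR_MARGIN` value is an invented placeholder.

```python
from collections import defaultdict

THRESHOLD = 0.80    # minimum acceptable proportion stated in the text
NEAR_MARGIN = 0.05  # hypothetical: the text does not define "near" the threshold


def indicator_proportions(lesson_ratings):
    """Proportion of lessons in which each indicator was rated present (1)."""
    present = defaultdict(int)
    rated = defaultdict(int)
    for ratings in lesson_ratings:
        for indicator, value in ratings.items():
            rated[indicator] += 1
            present[indicator] += value
    return {name: present[name] / rated[name] for name in rated}


def flag_for_discussion(proportions, threshold=THRESHOLD, margin=NEAR_MARGIN):
    """Indicators whose proportion falls near or below the threshold."""
    return sorted(name for name, p in proportions.items() if p < threshold + margin)


# Hypothetical ratings for five lessons of one component (1 = present, 0 = absent).
lessons = [
    {"teacher_models_reading": 1, "students_paraphrase": 1},
    {"teacher_models_reading": 1, "students_paraphrase": 0},
    {"teacher_models_reading": 1, "students_paraphrase": 1},
    {"teacher_models_reading": 1, "students_paraphrase": 0},
    {"teacher_models_reading": 1, "students_paraphrase": 1},
]

proportions = indicator_proportions(lessons)
for name in flag_for_discussion(proportions):
    print(f"{name}: present in {proportions[name]:.0%} of lessons")
```

In this invented example, only the paraphrasing indicator would be brought to a team meeting, since it was present in three of five lessons.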

We also flagged areas of concern raised by teachers. Teacher interviews were analyzed by research team members (trained graduate assistants) who were not involved in designing the intervention. After each round of interviews, they coded teachers’ comments using descriptive (naming and re-phrasing key ideas) and in vivo (using participants’ own words) coding (Saldaña, 2021). Then they grouped the codes into two predetermined categories (strengths of the intervention practices and materials; suggestions for improvement). They created summary tables displaying patterns and quotes from teachers organized in these categories (see Table 2 for examples). The tables were meant to capture all teachers’ suggestions for improvements as thoroughly and candidly as possible. We made the decision to partition the interview analysis team and the design team to mitigate the possibility that the design team might be too close to the intervention to accurately see weaknesses in the intervention. The analysis team presented the findings of these initial analyses at our meetings, which were held after each iteration point. In these meetings, we used their summary tables to come up with actionable next steps, organized in two categories shown in Table 2 (feedback about the intervention routines and practices; feedback about the teacher materials). For instance, one suggested improvement for the Uncover the Structure component was to provide more scaffolding for students when leading them in writing a summary at the end of that routine. This represented a suggested change to the intervention component itself. Other suggestions, such as “the meaning of connective words should be given to teachers” or “chunk out the teacher manual into smaller pieces,” were coded as feedback about the teacher-facing materials. The insights constructed through this process served as the roadmap for making improvements in the next iteration. We were particularly attuned to concerns raised by teachers that dovetailed with trends identified in the implementation map ratings. Often, the interviews helped us interpret the possible reasons for low indicator ratings.

Representative Data Examples for Coding of Teacher Interview Feedback

Retrospective Analysis

The purpose of this analysis was to look back on the full data set to establish design principles to inform future iterations of K.L.I. and similar multicomponent reading comprehension interventions. We began by retrospectively analyzing patterns in the implementation map ratings. We visually inspected the trends across the successive versions of the intervention for all the indicators (see Tables 3–7). We were particularly interested in flagging indicators that were not consistently implemented in the latest version (v4), which incorporated all the data-informed revisions from the repeated design cycles and had the best chance of creating all the sustained learning opportunities proposed in the theory. We also aggregated the indicators corresponding to the four recurring practices, which allowed us to describe improvements in the consistency of implementation of these practices from v1 to v4 (see Figure 3).

Implementation Map Ratings of the Discovery Reading Component by Version (N = 58 Lessons)

Note. v = version. A total of 58 Discovery Reading lessons (n = 15 for v1 in Year 1, n = 18 for v2 in Year 1, and n = 25 for v4 in Year 2) were observed and rated. Recurring practice 1 = Manipulating language in school texts; Recurring practice 2 = Purposeful, accurate reading; Recurring practice 3 = Synthesizing to build knowledge; Recurring practice 4 = Metacognitive processes.

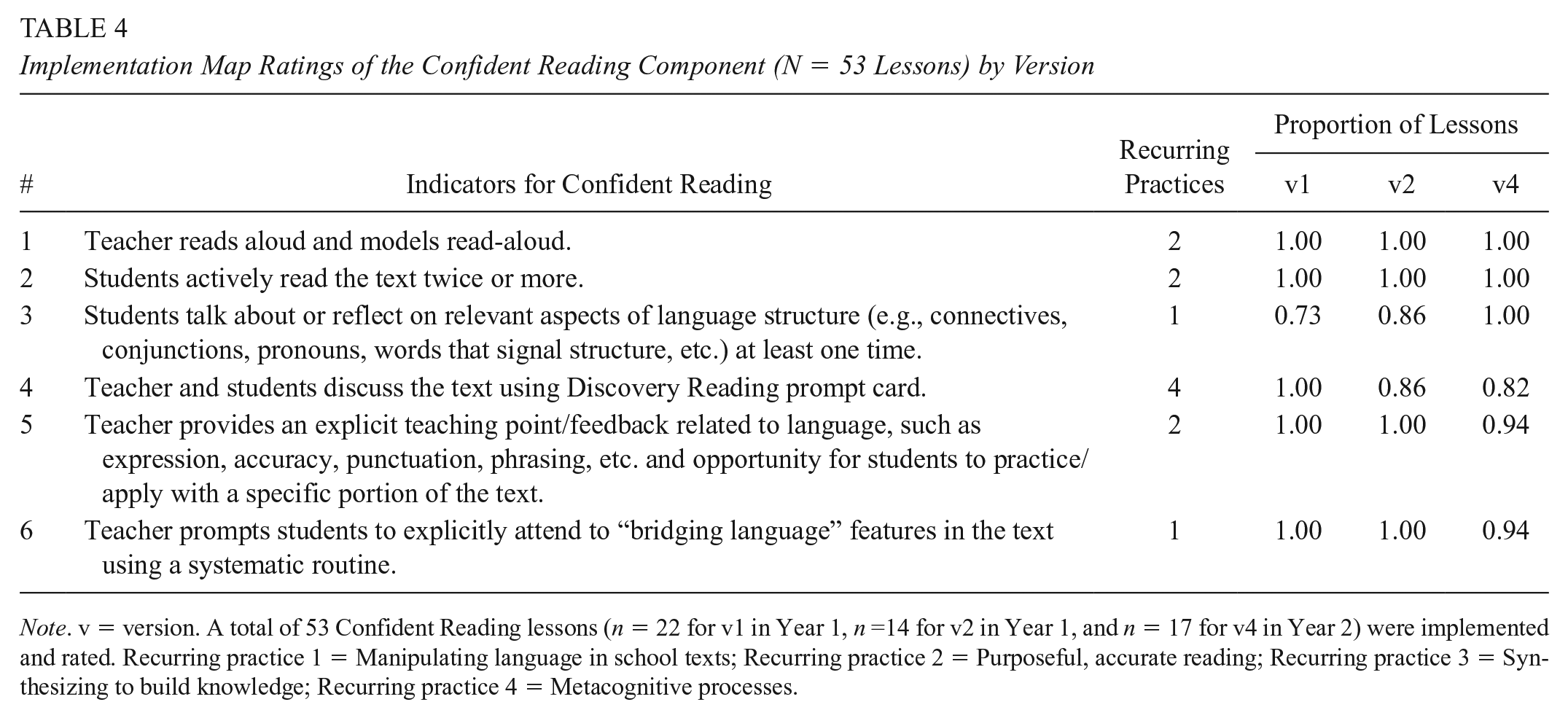

Implementation Map Ratings of the Confident Reading Component by Version (N = 53 Lessons)

Note. v = version. A total of 53 Confident Reading lessons (n = 22 for v1 in Year 1, n = 14 for v2 in Year 1, and n = 17 for v4 in Year 2) were observed and rated. Recurring practice 1 = Manipulating language in school texts; Recurring practice 2 = Purposeful, accurate reading; Recurring practice 3 = Synthesizing to build knowledge; Recurring practice 4 = Metacognitive processes.

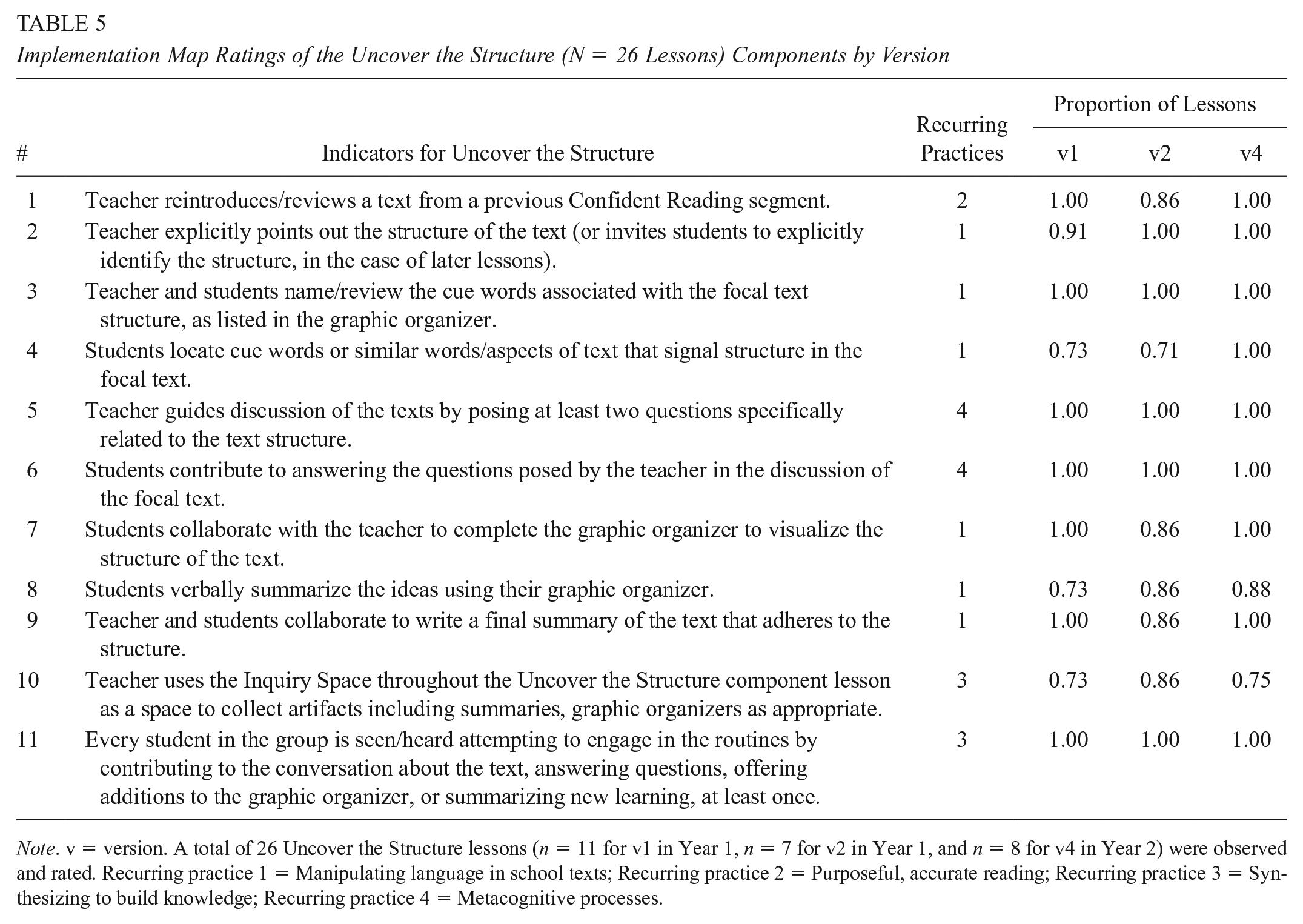

Implementation Map Ratings of the Uncover the Structure Component by Version (N = 26 Lessons)

Note. v = version. A total of 26 Uncover the Structure lessons (n = 11 for v1 in Year 1, n = 7 for v2 in Year 1, and n = 8 for v4 in Year 2) were observed and rated. Recurring practice 1 = Manipulating language in school texts; Recurring practice 2 = Purposeful, accurate reading; Recurring practice 3 = Synthesizing to build knowledge; Recurring practice 4 = Metacognitive processes.

Implementation Map Ratings of the Breaking Words Component by Version (N = 53 Lessons)

Note. v = version. A total of 53 Breaking Words lessons (n = 16 for v1, n = 16 for v2, n = 10 for v3, and n = 11 for v4, all in Year 2) were observed and rated. Recurring practice 1 = Manipulating language in school texts; Recurring practice 2 = Purposeful, accurate reading; Recurring practice 3 = Synthesizing to build knowledge; Recurring practice 4 = Metacognitive processes.

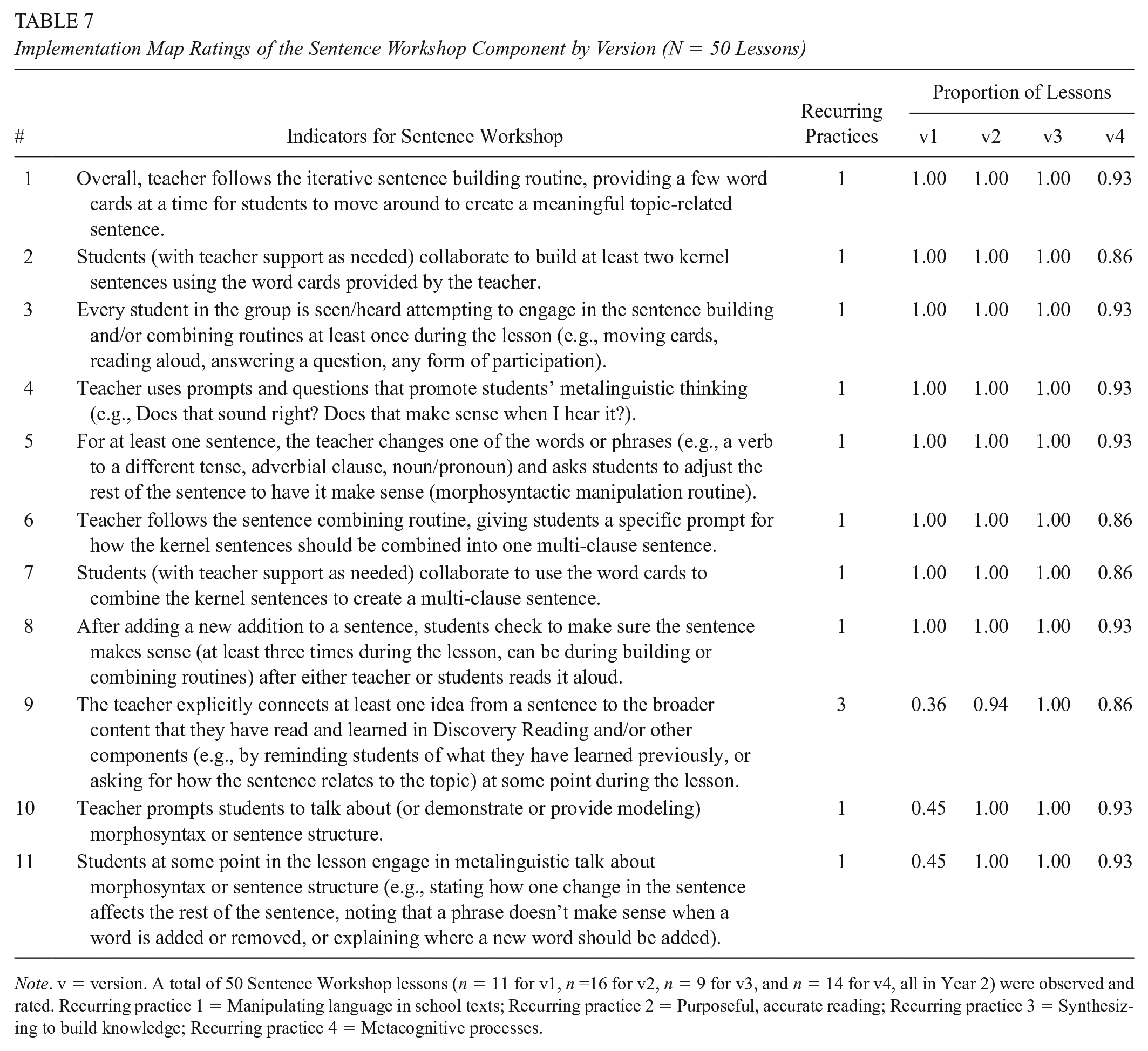

Implementation Map Ratings of the Sentence Workshop Component by Version (N = 50 Lessons)

Note. v = version. A total of 50 Sentence Workshop lessons (n = 11 for v1, n = 16 for v2, n = 9 for v3, and n = 14 for v4, all in Year 2) were observed and rated. Recurring practice 1 = Manipulating language in school texts; Recurring practice 2 = Purposeful, accurate reading; Recurring practice 3 = Synthesizing to build knowledge; Recurring practice 4 = Metacognitive processes.

Implementation Map Ratings for K.L.I. Recurring Practices at Versions 1 and 4

Additionally, we returned to the interview data to identify factors that teachers highlighted as important for maximizing usability. First, we re-read all the data from the teacher interviews and the feedback tables we constructed for all five components of the intervention. In our concurrent analyses, we had already closely engaged with teachers’ insights about each component separately, but, in this step, we familiarized ourselves with the dataset as a whole. Then we went through the data to code teachers’ suggestions and concerns. We relied on two analytic tools associated with grounded theory methodology (Corbin, 2021) to structure this process: asking questions (i.e., What does this suggestion tell us about usability?) and making comparisons (i.e., How does this suggestion relate to other suggestions we encountered in the data?). Through discussion, we refined and grouped the codes into broader categories reflecting our interpretation of the major concepts related to teachers’ perceptions of usability. At this stage, we also revisited our weekly team meeting notes from the 2 years of the study, which documented the insights and decisions made during the concurrent design phases. These notes helped us reconstruct a broader understanding of how the teachers’ insights aligned with our own observations and interpretations from the implementation map ratings.

We came to agreement on four concepts that encapsulated all the patterns in the data. We interpreted these concepts as “enhancing factors” that, when in place, enhance the usability of the K.L.I. intervention. To check the adequacy of the findings, we returned to the dataset to confirm that these four factors captured the major patterns in the data (to guard against missing other important factors) and that each factor was evident across multiple teachers (to guard against naming a factor that was idiosyncratic to one person). Finally, we selected quotes and examples for reporting that exemplified teachers’ ways of explaining their perceptions related to each of the concepts. Throughout these steps, we used accepted practices in qualitative research to ensure trustworthiness of our findings (Corbin & Strauss, 2015; Stahl & King, 2020). Our team constructed shared definitions of codes and categories at all stages of the analyses. All coding was done in teams, and consensus was achieved through discussion prior to finalizing any interpretations of the data. We triangulated our findings across multiple participants and data sources. We made sure our findings were confirmable by tracing them back to the raw data.

Findings: Concurrent Analysis

Our concurrent analyses were quicker and more tentative than the retrospective analysis. This was necessary because the ongoing analyses were completed between iterations to generate insights put into action in the upcoming versions, which were typically separated by only a few days or weeks. The retrospective analysis, on the other hand, was an opportunity to thoroughly re-examine the data sources without this time pressure. This distinction is not unique to our study but is an inherent feature of collaborative design-based research that is perhaps not frequently explicated in research reports. In this section we briefly describe how the concurrent analyses accomplished the purpose of moving K.L.I. successively closer to desired levels of usability based on implementation map ratings and teacher feedback. In the next section, we offer direct answers to our research questions based on the retrospective analysis.

The primary outcome of the ongoing analyses in DBR is a newly designed product. In our design phases, we created four iterations of the K.L.I. components resulting in a version of the intervention that adequately accomplished the recurring practices theorized to improve reading comprehension for ML-ELs. During the initial implementation phase (v1), several features of K.L.I. were not fully enacted. After collecting feedback and experiences from teachers and incorporating these into necessary modifications in subsequent versions (v2, v3, and v4), improvements were observed. Overall, implementation map ratings indicated an upward trend from v1 through v4 (Tables 3–7).

A summary of the major revisions made to each component across the iterations is shown in Table 8. As explained previously, these revisions were developed by the researchers during team meetings held between each iteration. Using our observation ratings and teacher feedback, we identified ways the intervention was not meeting our usability threshold and proposed ways to get closer to the designed goal. These changes were then designed into the subsequent iteration. To illustrate how the iterative design process produced improvements in the intervention across versions, we detail the changes made to the Discovery Reading (DR) component as an example. At each iteration point for DR, teachers were provided with lesson plans, an implementation guide describing the component, and all the materials needed for implementation. In our initial version of Discovery Reading (DRv0), we identified two or three words to preview before reading. We divided the reading into three chunks and provided a prompt card that included four metacognitive prompts to be used at all the stopping points.

Summary of Major Revisions to Each Component Across Iterations

Note. LP = lesson plan; IG = implementation guidance/teachers’ manual. Each column shows the changes incorporated in that version based on feedback and observations of the previous version, starting with Version 0.

Based on the initial teacher review, we made revisions when creating DRv1. Teachers pointed out ways to strengthen the format and content of the lessons and the implementation guidance. We made formatting changes to clarify the structure of a DR lesson. We added recommended times to each section of the lesson plan to facilitate pacing. We also began working toward a more realistic 20-minute time frame, based on the teachers’ assertions that they rarely have extended time for their supplemental instruction. To facilitate a shorter time frame, we recommended previewing only two words before reading and divided the text into only two stopping points.

After observing and rating the first set of lessons, we made further refinements in DRv2. We added annotations and additional pointers to the lesson plans to make them more educative for teachers. In particular, we gave clearer guidance to encourage every student to verbally contribute, in some form, at least once during the lesson. To help with efficient pacing, we clarified the role of teacher modeling/examples when using the prompt card and reduced our recommendation to only three routines from the prompt card per stopping point. We also decided to only ask teachers to preview one word before reading.

We used our observations of DRv2 to make changes to produce DRv3. We revised the prompt card to put the comprehension monitoring routine (“monitor and repair”) at the top so that teachers would use it to start the discussion for every chunk of text. We then recommended they choose one more metacognitive routine from the prompt card, rather than trying to do too many of them. We reduced the length of the recommended text chunks (to about one or two paragraphs) and increased the number of chunks to three. This change created more frequent opportunities for students to explain and expand their mental models. We also made minor editorial changes to the prompt card to make the wording clearer for each routine.

We gathered feedback on DRv3 through another round of teacher interviews. Teachers again provided a large amount of constructive feedback. Their insights led to the changes made for DRv4. This version clarified for teachers that it is acceptable for them to read aloud some of the text chunks while students follow along. This guidance was added in response to their concerns about the high level of complexity of some of the texts. Also, we gave clearer guidance on how to use the Inquiry Space at the beginning and end of each Discovery Reading lesson. We established a new lesson plan structure for each module that includes elaborative plans and blueprint plans. Elaborative plans are provided for the early lessons for a K.L.I. component. They include detailed examples of the language teachers might use at each step of the lesson. Recognizing that teachers do not need detailed examples or scripts once they gain familiarity with the structure of an intervention component, we provided blueprint plans for the remaining lessons in each module.

We followed a similar process for the remaining four components. The resulting version of K.L.I. consists of five modules, each on a different topic. Each module begins with an introductory lesson that provides an overview of the topic. Then the instruction alternates through the various components across 39 lessons, broken down among the components as follows: 12 Discovery Reading, 9 Confident Reading, 6 Breaking Words, 6 Sentence Workshop, 3 Uncover the Structure, and 3 Inquiry Space segments. Implementation of a full module takes around 17 hours, spread over multiple weeks. Detailed descriptions of the K.L.I. practices and sample materials are available on the project website (https://go.ncsu.edu/kli) or from the first author by request.

Findings: Retrospective Analyses

While the concurrent analyses supported the ongoing design work to produce the desired intervention, the retrospective analysis provided an opportunity to use teacher interviews and observations to examine usability trends that bear directly on our research questions. We operationalized usability using two criteria: (a) the intervention materials provide meaningful, theory-aligned learning opportunities during small-group implementation, and (b) teachers perceive the intervention as useful and appropriate. We present our retrospective findings for each of these criteria separately.

To What Extent Did K.L.I. Create Opportunities for Students to Engage in the Four Theorized Recurring Practices?

According to the theory of K.L.I., the intervention should create sustained opportunities for ML-ELs to engage in four proposed practices that cut across the intervention components. To evaluate our progress in meeting this goal, we clustered the implementation map indicators according to their corresponding recurring practices and visually compared differences between the v4 and v1 implementation cycles (Figure 3).
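The clustering of indicators by recurring practice can be sketched as below. This is a hedged illustration: the indicator labels, counts, and practice assignments are hypothetical, and the choice to pool ratings across indicators (rather than average the per-indicator proportions) is an assumption, since the article reports only an overall "proportion of affirmative ratings" per practice.

```python
from collections import defaultdict

THRESHOLD = 0.80  # minimum acceptable proportion stated in the article


def summarize_by_practice(
    counts: dict[str, tuple[int, int]],
    practice_of: dict[str, str],
    threshold: float = THRESHOLD,
) -> dict[str, dict[str, float]]:
    """Pool (present, rated) counts per practice and count indicators at threshold.

    `counts` maps indicator -> (lessons rated present, lessons rated), rated > 0.
    `practice_of` maps indicator -> the recurring practice it belongs to.
    """
    pooled_present: dict[str, int] = defaultdict(int)
    pooled_rated: dict[str, int] = defaultdict(int)
    meeting: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for indicator, (present, rated) in counts.items():
        practice = practice_of[indicator]
        pooled_present[practice] += present
        pooled_rated[practice] += rated
        total[practice] += 1
        if present / rated >= threshold:
            meeting[practice] += 1
    return {
        practice: {
            "pooled_proportion": pooled_present[practice] / pooled_rated[practice],
            "indicators_meeting_threshold": meeting[practice],
            "indicators_total": total[practice],
        }
        for practice in total
    }


# Hypothetical counts for one version of the intervention (not study data).
counts = {"IND_A": (16, 22), "IND_B": (22, 22), "IND_C": (9, 10)}
practice_of = {"IND_A": "practice_1", "IND_B": "practice_1", "IND_C": "practice_3"}

summary = summarize_by_practice(counts, practice_of)
for practice, stats in summary.items():
    print(practice, stats)
```

Running the same summary on v1 and v4 counts would yield the two values per practice that a side-by-side visual comparison needs.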

Trends for each of the recurring practices

The recurring practice of manipulating language structures found in written school texts (top left panel of Figure 3) comprised 33 indicators spanning all five K.L.I. components. Using our established 80% threshold, only 17 of these indicators were adequately present in v1. Overall, the proportion of affirmative ratings for this practice was .78. This improved to .93 at v4, at which point all the indicators exceeded the 80% threshold. Recurring Practice 2 (purposeful, accurate reading, top right panel in Figure 3) started off with high implementation in Version 1 and continued this pattern in Version 4. Recurring Practice 3 (synthesizing new knowledge) started with low implementation, with only two indicators above the 80% threshold. The indicators for this practice improved from an overall proportion of .70 to .93 from v1 to v4. Recurring Practice 4 (metacognitive processes) was slightly above the adequacy threshold at Version 1 overall (.86) and improved noticeably in Version 4 to .98.

Pressure points that characterize challenging indicators

While the patterns across the four recurring practices affirmed that v4 of the intervention was generally successful in producing the forms of talking and thinking that we hoped to see in K.L.I., we further explored the variability we observed across the indicators to identify the pressure points that could pose challenges in future versions of K.L.I. and similar interventions. Many (26 of 58) of the indicators were easily implementable under the controlled conditions of the present study. They were consistently present in v1 when teachers tried the component for the first time and remained that way in v2. For example, the first two indicators for Confident Reading (CR1-teacher reads aloud and models; CR2-students actively read the text twice or more) were present in all the observed Confident Reading lessons. However, other indicators were not implemented consistently at first and became consistent only after intentional design efforts. This was particularly true for the first and third recurring practices (manipulating language and synthesizing new knowledge). Also, a small number of indicators remained resistant to our repeated efforts to ensure their implementation and were inconsistent even in v4.

Based on the patterns evident in our implementation map ratings, we determined that K.L.I. indicators were more challenging to consistently implement when they: (a) required soliciting wide participation from all students in a group; (b) could not be fully routinized as a discrete procedure in the lesson plans (and thus, required the teacher to extrapolate or adapt their instruction based on their understanding of the logic of the intervention); or (c) involved explicitly connecting one part of the intervention to another.

For example, CR3, an indicator related to the practice of reflecting on language structure, was only observed in 73% of the v1 lessons. This indicator required that nearly all students in the group were observed engaging in the desired forms of talk. (Note: We operationalized “nearly all” to mean all but one student present in the group.) With revisions to the materials, this indicator was observed in 86% and 100% of v2 and v4 lessons, respectively. These revisions provided teachers a menu of suggestions for how to maximize student interaction in the Confident Reading component. The revisions also gave teachers more explicit guidance in how to prompt students to share their metalinguistic insights rather than leaving it to chance that teachers would notice these moments on their own (e.g., by clarifying how to point students to examples of function words that bridge ideas across sentences or clauses). In hindsight, we recognize that these revisions directly supported implementation along two of K.L.I.’s pressure points (wide participation and teacher extrapolation).

These pressure points are helpful in explaining the three indicators in our implementation maps that were resistant to our design improvements and remained below or close to the 80% threshold in v4. The first of these, DR15, was only observed in 17 of the 25 v4 Discovery Reading lessons (68%). This indicator was rated affirmatively if nearly all the students were seen or heard contributing ideas from the text to help the group construct an answer to the guiding question that had been introduced at the beginning of the lesson. It required wide participation from students and for students to pull ideas together from multiple chunks of text to answer a question that was referenced early in the lesson (connecting one part of the lesson with another part).

CR4 was another stubborn indicator that was not as consistent as desired in Version 4, having been rated as present in only 14 of 17 Confident Reading lessons (82%). This indicator was judged positively if someone in the group was observed explicitly using the Discovery Reading prompt card (which contained metacognitive thinking prompts) when discussing the stacked text during the Confident Reading lesson. In hindsight, we speculate that this form of intentional connection across the multiple components of the intervention will require additional design work to ensure that it takes hold in the culture of small-group instruction.

Finally, UtS10 was also inconsistently implemented in v4 (75%). This indicator was rated as present only when the teacher explicitly referenced the Inquiry Space during an Uncover the Structure lesson—for example, by adding the summary or graphic organizer the group constructed to the Inquiry Space or by asking students to consider how the ideas they were analyzing in the text were related to previously posted ideas. This indicator required the teacher to make adaptive decisions to choose an appropriate point in the discussion to support students in connecting what they were learning to their broader inquiry goals.

How Did Teachers Perceive the Usability of K.L.I.?

Based on our retrospective analysis of teacher feedback, we identified four factors that teachers perceived to enhance the usability of the intervention: cohesion across the components, scaffolds to support students at different levels of language and reading development, scaffolds to support teacher learning that fade in elaboration over time, and designing the components to fit in realistic time increments available for supplemental instruction. These factors were evident both in the feedback teachers gave regarding areas of the intervention that needed revision and in their positive comments about features they appreciated about the design of the components. These factors aligned with the trends and pressure points we identified in our objective ratings of implementation indicators.

Cohesion across components

Teachers perceived the intervention as usable when the components provided students with opportunities to apply and practice concepts from one component in other components. This included the use of the same texts in Confident Reading and Uncover the Structure, and the application of the key vocabulary words from Discovery Reading in the Breaking Words component. In particular, they appreciated how the Inquiry Space served to anchor the multiple components together, helping students draw connections across multiple lessons. As one teacher shared:

At the beginning of your lesson, you can refer back to [the Inquiry Space] and say “oh last time you know we talked about this” or “This is something that we learned, and now we’re going to continue on with robots.” (Teacher collaborator [TC]6)

Another teacher shared that the Inquiry Space helped make these overlapping components more cohesive:

I could easily see how students would be able to make those connections between their Discovery Reading and the vocabulary [in Breaking Words] and I like that it goes on to the Inquiry Space as part of that learning and helps connect it all. (TC2)

While teachers agreed that the Inquiry Space served as an anchor to connect the components together, they expressed concerns about the level of guidance provided in teacher-facing materials for implementing the Inquiry Space. They were skeptical about being able to fully leverage the Inquiry Space within the time constraints of typical small-group lessons.

Scaffolds for student learning

Teachers valued that the K.L.I. routines were intentionally designed to ensure that students could increase their participation and leadership in the group over time, even if they began by making small, structured contributions to the discussion. As one example, teachers found that the scaffolded design of the Sentence Workshop routine contributed to the highly engaging nature of this component. One teacher shared, “The students like being successful with that initial short sentence for each kernel, then building complexity” (TC7). Another commented that the Sentence Workshop routine is inherently differentiated by design and would be easy to scale up or down in complexity:

It felt like the activity itself is kind of differentiated. Some of the kids might get more out of the smaller segmented sentences and then some of them might actually be able to do the big long mystery one [mystery sentence] by themselves, maybe with just a little bit of scaffolding. (TC6)

Our implementation manuals included guidance for how teachers could adapt routines to make them more or less challenging for various groups of learners based on their individual needs. Many of our collaborators praised this guidance, describing it as “very helpful” (TC9), noting that it “tells teachers exactly what they can do” (TC1), and calling it “easy to read over and pick a scaffold right before teaching the lesson” (TC3). However, teachers indicated that there is still more design work to be done to ensure that adequate student scaffolds are in place. They suggested places where we can provide more examples of how to scaffold students’ thinking, such as making it clearer how to gradually give students more responsibility when analyzing text structure and summarizing in the Uncover the Structure routine.

Scaffolds for teacher learning

Recognizing that teachers would have different levels of experience and expertise with language- and knowledge-focused comprehension instruction, we also intentionally designed our teacher manuals and lessons to scaffold teacher learning. One method of scaffolding we employed was to provide two types of lesson plans for our teachers that varied in level of guidance and detail. As described previously, modules began with elaborative plans that included suggested verbiage, question strategies, and sample student answers; and these were replaced with blueprint lesson plans as the module proceeded. Teachers shared how helpful it was to have the different types of plans, as they relied more heavily on the elaborative plans early on during implementation and then were able to utilize the blueprint plans as they gained comfort and confidence executing the routines. They appreciated that the elaborative plans, although detailed, were not intended to be used as a script but rather to provide teachers a sense of the language and rhythm of a well-paced lesson. Echoing a common sentiment among the sample, one teacher shared:

Both plans are helpful. . . . I would still give teachers the elaborate lesson plan for the beginning, but then the teachers can individually go to the blueprint one where it’s right there—you get right to the meat of it because you know about the routines already. (TC1)

Teachers also suggested places where additional supports for teachers could be provided. For example, they wanted the implementation guides to provide more options for acceptable adaptations they could make when time runs short or when students are particularly engaged and want extra time on a concept, without sacrificing the integrity of the logic of the intervention.

Realistic time increments and pacing

Time limitations frequently emerged in teachers’ feedback as a major factor affecting usability. When reviewing Version 0, teachers expressed that the lesson plans contained too many steps for the allotted time. For example, one teacher noted:

When I think about implementing [Confident Reading] with my students, I feel like 10 minutes would be too fast, and I would be rushing to make it, when I think about my students and giving them good feedback and listening back to it. . . . Here it says you need to do these three activities before reading [in DR], that’s not going to happen. There’s no way. These activities take more time, they’re more complex. (TC5)

Many teachers shared that when they tried to promote discussion, their lessons often ran behind. One teacher described her struggles in soliciting participation during the “monitor and repair” routine in the Discovery Reading component:

I was only able to finish one lesson from this batch. I think again that perhaps students may have a hard time admitting they do not understand a concept in front of others in a small group. Hopefully by me continually modeling this it has helped spur some students to be willing to share and open up in front of others. (TC6)

As teachers and students became more familiar with the intervention and implemented guidance we added to the materials to address pacing, lessons became more efficient. One teacher shared:

I felt like the pace of the lessons picked up more with the tips that were provided for us during our second PD of the Discovery Reading. In my case, less of the teacher talking, like sharing examples or telling what I learned. I like how the lesson gave me prompts to ask students how they felt about another student’s answer and if they wanted to add or change anything to another student’s response. (TC7)

We are optimistic about teachers’ improvements in pacing over time, but we recognize that additional revisions are needed to maximize students’ learning opportunities within the short blocks of time available for supplemental reading comprehension support for ML-ELs.

Discussion and Implications

Drawing on insights from teachers and our own observations of implementation, we identified pressure points that created challenges for implementation and factors that enhanced the usability of K.L.I. Following precedent from previous DBR studies (Colwell & Reinking, 2016; Howell et al., 2017; Wilder et al., 2021), we summarize these challenges and enhancements in the form of five assertions that can inform future iterations of K.L.I. We propose these assertions as a humble theory (Cobb et al., 2015) of usability, one that is grounded in the particulars of our intervention and design process but has potential applicability to other multicomponent interventions. Given the multifaceted nature of reading, carefully orchestrated multicomponent interventions may offer the best chance for improvements in reading comprehension (Pearson et al., 2020). Such interventions are inherently complex and can face implementation challenges when introduced into real-world contexts. These five assertions may point researchers, developers, and intervention adopters to important considerations that can mitigate these challenges.

First, our findings suggest that usability is enhanced when the multiple components of the intervention are connected by an underlying logic that is clearly articulated in the intervention materials (Assertion 1). Second, the teacher-facing materials and accompanying PD should help teachers gain an understanding of the theory of the intervention so they can move beyond procedural adherence with the lesson plans and make informed instructional choices that preserve the underlying design logic (Assertion 2). Teachers’ understandings of the theory of an intervention can be supported by building intentional scaffolds for teacher learning into the design of the lesson plans (e.g., providing detailed guidance early on and then removing the guidance in later lessons; Assertion 3). Additionally, multicomponent interventions will inevitably place ambitious time demands on teachers; usability is maximized when these demands are kept as realistic as possible and when teachers are guided to streamline their implementation based on their deep knowledge of what really matters in the intervention (Assertion 4). Finally, we propose that usability is maximized when the intervention materials provide clear guidance for how teachers can solicit and scaffold active participation from all students during each lesson, acknowledging that students will enter the lessons at different starting points and will need varying levels of support to increase their ownership of the instructional routines (Assertion 5).

DBR differs from other methodologies in its focus on both products (i.e., the newly developed intervention) and processes (i.e., the decision-making used to guide successive iterations of the intervention). Given this dual emphasis, we outline the practical implications of K.L.I. as a designed product, and then we highlight how our detailed explication of the design process can inform future research.

Implications for Practice

The K.L.I. components can expand teachers’ instructional repertoires for supporting students in language comprehension. Such tools are needed given the recognized importance of augmenting effective literacy instruction with opportunities for language development for ML-ELs (Goldenberg, 2020; Goldenberg & Cárdenas-Hagan, 2023) and for upper-elementary students with difficulties in reading comprehension (Silverman et al., 2020). Teachers can adapt the components for multiple texts and topics, similar to other instructional routines that target reading development through structured collaboration (e.g., Collaborative Strategic Reading [Klingner et al., 2000], Reciprocal Teaching [Palincsar & Brown, 1984]). While we have not yet systematically studied how teachers adapt the components, we expect that once teachers become familiar with the logic and structure of K.L.I., they can implement the routines using any text or topic. For instance, the routines in the Discovery Reading component could be used with any text that teachers can divide into small pieces for collaborative reading and discussion. Breaking Words could be used anytime a teacher wants to explicitly introduce a new multisyllabic word. Sentence Workshop can be used with any complex sentence that teachers want students to analyze and discuss. We make these recommendations with caution, however, since our examination of the impact of the K.L.I. routines on student learning is still in progress.

In addition to providing versatile resources for language comprehension instruction, K.L.I. also fulfills a need for instructional tools that exploit the strong relationship between knowledge and reading comprehension (Hwang et al., 2023; O’Reilly et al., 2019). This intervention exemplifies how to provide meaningful opportunities for readers to become experts and sharers of new knowledge through their engagement with multiple texts on a common topic. This knowledge-focused approach creates rich opportunities for upper-elementary ML-ELs to experience and use forms of language often found in school texts, a well-theorized requirement for comprehension achievement in this population (Uccelli et al., 2015).

Implications for Research

A central tenet of DBR is the iterative development of an intervention across multiple cycles of implementation, with each iteration getting closer to the desired goal (Campanella & Penuel, 2021). The Institute of Education Sciences (IES) similarly mandates iteration in its Development and Innovation grants program, advocating for researchers to continuously refine an intervention to improve its usability and feasibility (Institute of Education Sciences [IES], 2017). However, despite the recognized significance of iteration as a crucial part of intervention design, we have found few examples in the existing literature that detail the specific processes of iteration used to develop new interventions in literacy. There is concern among researchers using DBR that design-oriented studies are underrepresented in high-impact journals (Hjalmarson et al., 2021), further contributing to the lack of guidance available for systematic, iterative development of new educational resources.

In this study, we counter this trend by explicating how we intentionally put each version of the K.L.I. components “in harm’s way” (Cobb et al., 2003, p. 10) to expose areas of needed improvement. As our findings show, we did not get the intervention right on the first try, and many improvements were needed to move the lesson plans, implementation guides, and student materials toward acceptable levels of usability. Our design process serves as an example of how close collaboration with teachers can inform initial design and revision along the full development cycle of an intervention. We also described our use of an observation tool to evaluate the capacity of the intervention to foster desired interactions among teachers and students. Our description of this observation tool, available at the project website (https://go.ncsu.edu/kli) or from the first author, can guide other researchers’ efforts to collect reliable, objective observations of practice to inform their decision-making processes when revising interventions. Moreover, this tool serves as the groundwork for developing additional measures, such as fidelity and implementation validity tools (Buckley et al., 2017), that will be needed to fully test the impact of K.L.I. on student outcomes in future studies.

Limitations and Conclusion

This study has important limitations that should be considered. We worked with a small sample of teachers who volunteered to participate with high interest in the intervention and in close partnership with the research team during afterschool instruction. Testing usability in this context has drawbacks. The high levels of implementation that were observed are unlikely to be representative of how this intervention will operate under less controlled conditions. In the next phases of the project, we will examine the feasibility and efficacy of K.L.I. in more authentic contexts. Obtaining empirical evidence of usability enables us to identify preliminary needs and opportunities to enhance the capacity of teachers to use the intervention within real-world instructional settings to accelerate reading comprehension among upper-elementary ML-ELs.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R305A200283 to North Carolina State University. The opinions expressed are those of the authors and do not represent views of the Institute or the U.S. Department of Education.

Authors

DENNIS S. DAVIS is an associate professor in the Department of Teacher Education and Learning Sciences in the College of Education at NC State University, Raleigh, NC.

JACKIE E. RELYEA is an assistant professor in the Department of Teacher Education and Learning Sciences in the College of Education at NC State University, Raleigh, NC.

COURTNEY SAMUELSON is an assistant professor of teacher education at Methodist University, Fayetteville, NC.

BECKY H. HUANG is a professor in the Department of Teaching and Learning at the Ohio State University, Columbus, OH.

CORRIE DOBIS is a doctoral candidate in the Literacy and English Language Arts (LELA) program at North Carolina State University, Raleigh, NC.