Abstract

Schools experienced unprecedented disruptions to instruction during the COVID-19 pandemic, largely driven by the abrupt transition to online learning in the spring of 2020. Often, this shift created a “black box” around remote learning and instruction. However, data generated by educational technology platforms can provide a window into instruction during this time. Here, we report on the amount and frequency of usage of an online platform for independent practice used by 58 grade 7 math teachers from seven school districts across multiple U.S. states between August 2019 and July 2021, providing insight into instruction just prior to and during COVID-19 disruptions. Results showed an increased proportion of teachers using the platform at least twice a week over the study period, from 19.2% to 44.1%. Further, platform usage was related to teachers’ level of experience and the amount of coach support received, suggesting areas for teacher support during remote instruction.

Introduction

The COVID-19 pandemic and resulting school closures caused an unprecedented disruption to education in the United States and around the world. The widespread and nearly instantaneous shift to either hybrid (i.e., both in-person and online) or entirely online instruction during the spring of 2020 led teachers to rely more heavily on online educational platforms and technologies (Engzell et al., 2021). In cases where there was a shift from in-person instruction to entirely remote instruction, it became difficult to know the extent to which teachers maintained their typical instruction as a result of this shift and, therefore, how to best support teachers’ practice during this period. However, close analysis of teacher and student usage data provided by online platforms used over the course of this transition can provide some insight into the extent of changes in instruction that occurred during this period of remote instruction.

Homework, typically defined as the schoolwork that students bring home to complete independently, is a central and long-standing instructional practice (Cooper & Valentine, 2001) that was likely to be impacted by COVID-19 and the shift to online learning. For example, some early research has shown large increases during COVID school shutdowns in the number of hours students reported doing homework (Fernández et al., 2022). With more students learning from home, differences in how homework is assigned and completed may also alter students’ opportunities to learn, potentially widening existing gaps in achievement (Chandra et al., 2020; Lewis & Kuhfeld, 2021; Ritzhaupt et al., 2020). Some studies have suggested that the frequency and length of assignments, the amount of classroom follow-up and feedback, and the use of homework for formative assessment may determine whether it improves academic achievement (Cooper et al., 2006, 2012) or has a negative effect on student attitudes in the long term (Pressman et al., 2015).

Educational technology holds promise to support teachers in remotely providing students with high-quality instruction (Cheung & Slavin, 2011; Olive & Makar, 2010). Specifically, online platforms that include features like immediate feedback and scaffolded hints have been demonstrated to be effective ways to provide students with opportunities for remote independent practice in mathematics (i.e., work students complete on their own with little to no direct intervention from their teacher, either during class time or as homework; Mendicino et al., 2009). However, prior research found that mathematics teachers were particularly resistant to adapting their routines to incorporate educational technology (Becker, 2000). For example, science and humanities teachers have been shown to be over 10 percentage points more likely to include technology in their instruction than mathematics teachers (Howard et al., 2014; Yuen et al., 2010). There is little research in more recent years directly leading up to the pandemic’s onset to understand how math instruction was further adapting to emerging education technologies. Therefore, there is an urgent need to examine how mathematics teachers used online platforms for student homework and other forms of independent practice just before and after COVID-19 began. This will help the field understand how teachers and students may use online platforms during events that necessitate remote learning and disruptions to common educational routines.

Literature Review

The Importance of Independent Practice in K–12 Mathematics

Evidence has consistently shown a positive association of homework completion with student achievement in mathematics. A synthesis of over 15 years of research examining the causal link between homework and academic achievement showed strong positive effects, with larger effects for upper middle and secondary (e.g., 7th–12th grade) students (Cooper et al., 2006; Ozyildirim, 2021). Homework shows particularly strong effects on achievement in mathematics, perhaps because mathematics homework often requires solving problems and not simple memorization (Eren & Henderson, 2011).

Improving students’ experiences with mathematics is particularly important in middle school. Overall, achievement and engagement in mathematics and other STEM disciplines tend to decline for all students over the middle school years (Collie et al., 2019; Juvonen et al., 2004; Maltese et al., 2014). Teacher feedback on homework and other forms of independent practice is related to positive student attitudes toward mathematics (Chen & Stevenson, 1989; Xu, 2008). Notably, an experimental study showed that students randomly assigned to receive immediate and tailored feedback on independent practice problems in mathematics demonstrated greater interest and more positive perceptions of their ability in mathematics than students in the comparison group (Nguyen et al., 2006), attitudes that have been related to continued participation in secondary and postsecondary mathematics (Maltese & Tai, 2011). Therefore, supporting middle school teachers in providing feedback on independent practice could have downstream consequences for students’ mathematics achievement and persistence.

The Role of Technology in Supporting Feedback for Students During Independent Practice

There is an abundance of evidence that using independent practice as a means of formative assessment can improve students’ content understanding (see Lee et al., 2020, for a review), and that individualized feedback provided immediately after independent practice can have a particularly large impact on academic achievement in mathematics (Klute et al., 2017; Shute, 2008). Yet, providing every student with rapid feedback on their independent practice is extremely time-consuming for teachers. Therefore, despite acknowledging the importance of providing timely and individualized feedback, studies show that mathematics teachers often report it is not feasible due to the time required to review individual student work (Rosário et al., 2015, 2018). Online platforms may be able to support teachers in delivering and reviewing homework and other independent practice assignments in ways that improve achievement and engagement. For example, a prior review of the literature suggested that online homework may have a more positive effect on student engagement than traditional homework (see Magalhães et al., 2020), and teachers who are able to provide students more autonomy over homework completion show increased student effort and more positive attitudes toward homework (Trautwein et al., 2009).

Technological innovations that support students’ independent practice, focus more on formative feedback, and reduce the amount of time required for teachers’ input can help to improve the experience of homework for both students and teachers. Some online platforms support this by automating the interpretation of student responses to help reduce the time required for teachers to use this information in their instruction or to provide formative feedback (Burch & Kuo, 2010). Others help teachers to individualize the questions that students are assigned, selecting them from teachers’ own set of materials or tailoring them to be more specific to the learning needs of a particular student (Arora, 2013). In particular, during periods when students are working remotely, online platforms that allow teachers to quickly and easily review students’ independent work and provide more immediate personalized feedback may be particularly impactful.

Factors Influencing Teachers’ Use of Educational Technology

Decades of research on teachers’ use of technology in schools has identified strong predictors of whether education technology is actually used in the classroom (see Marangunić & Granić, 2015, for a review). For example, several studies suggest that the perceived ease of use of the platform and teachers’ self-efficacy for using technology influence teachers’ adoption of educational technology (Holden & Rada, 2011; Joo et al., 2018). Teachers’ perceptions of their level of autonomy over their instructional process have also been shown to be positively and significantly correlated with their positive attitudes toward the use of technology in educational practice (Serin & Bozdag, 2020). While the technical features of a particular platform can influence teachers’ perception of its ease of use and their ability to use it successfully, a range of other personal and institutional factors have been shown to influence teachers’ adoption of new instructional technologies more generally (Buabeng-Andoh, 2012; Reid, 2014). For example, teachers’ perceptions of the utility of the tools to their practice and students’ learning (Backfisch et al., 2021), and the extent to which the tools integrate and support teachers’ current instructional routines (Martin et al., 2010; Penuel, 2006), have both been related to increased adoption of educational technologies. Across several studies, teachers’ experience level and the type of training with the platform that they receive have been found to be key factors related to teachers’ uptake of educational technologies, as described here.

Teacher Experience

Studies hypothesizing a relationship between teacher experience and technology use have shown mixed results. Some studies suggest that teaching experience is negatively correlated with teachers’ confidence and comfort with using technology (Liu et al., 2017), whereas others find no association (Bakar et al., 2020). While some studies find that teachers with five or fewer years of teaching experience are, on average, more confident in their use of educational technology (Ritzhaupt et al., 2012), others have found that they have lower technological pedagogical content knowledge (that is, knowledge about how to use technology to enhance their pedagogy within a particular subject matter; Jang & Tsai, 2012). Likewise, while teachers with six or more years of experience may hold stronger beliefs about the negative impacts of technology use on students and therefore may be less likely to have students use technology during class time (Russell et al., 2003), they may also be more likely to use technology for lesson preparation and to direct student use of technology (Russell et al., 2007). These conflicting findings suggest the need for additional research on the association between teaching experience and teacher technology use—particularly in situations like the COVID-19 pandemic where choices about technology use were less likely to be teacher-driven.

Coaching

Access to technical support, resources, and professional development can help increase the likelihood that teachers will use technology in their classrooms (Scrimshaw, 2004). Overall, coaching has been shown to be a powerful model for professional development that can influence teachers’ classroom practice and student achievement (Desimone & Pak, 2017; Garrett et al., 2019; Kraft et al., 2018). Specifically, features of coaching like the provision of sustained, individualized support focused on the skills most relevant to teachers’ practice can be more responsive to the immediate needs of teachers than traditional models of professional development (Kraft et al., 2018). Therefore, in the face of the numerous adaptations required by teachers during the COVID-19 pandemic, coaching that provides support for the use of technology could help teachers more quickly make the transition to remote learning and more seamlessly continue ongoing instructional practices like homework and other forms of independent study (Brown et al., 2021).

Coaching to support technology use has also been specifically shown to positively influence K–12 teachers’ behaviors toward the use of educational technology (Liao et al., 2021; Ottenbreit-Leftwich et al., 2020). A review of evaluations of professional development for technology integration has shown that coaching can improve teachers’ comfort with technology use (Lawless & Pellegrino, 2007). However, while coaching shows promise for encouraging technology use, the empirical research remains limited (Ehsanipour & Gomez Zaccarelli, 2017). Therefore, further research on the relationship between coaching and teachers’ use of technology is needed.

The Current Study

The current study is part of a broader evaluation of ASSISTments, an online platform for independent practice in mathematics, conducted in seventh-grade mathematics classrooms in seven school districts across six U.S. states and Washington, D.C. Schools were recruited and randomized to two groups: a treatment group that was offered use of the online platform and coaching supports with two cohorts of their seventh-grade math students during the 2019–2020 and 2020–2021 school years, and another delayed implementation comparison group that was offered use of the online platform and the same coaching supports in 2022–2023. Here, we report our analysis of the usage of this platform by the group of treatment teachers in the 26 schools that began implementation in the 2019–2020 school year.

The Online-Homework Platform

ASSISTments is a freely available web-based platform that was designed both to provide immediate feedback for students as they work through independent practice problems assigned by their teachers and to generate individualized assessment information for teachers to review, both key features of formative assessment (Klute et al., 2017). An initial efficacy study in the state of Maine found positive effects on end-of-year grade seven math achievement among students of teachers randomly assigned to use ASSISTments (Roschelle et al., 2016). Importantly, the design of ASSISTments incorporates a number of elements that would support teachers’ sense of autonomy over their use of the platform. In addition to an existing library of materials compiled from widely used textbooks and resources, ASSISTments developers can integrate a teacher’s own curricular materials into the platform, or teachers can add their own problems and materials. Teachers then create assignments by selecting problems from their curricula of choice. Teachers may choose problems from their existing curriculum or choose to search for material from other sources, including problems created by other teachers (see Figure 1).

Figure 1. Example view of teacher selection of problems from textbook and resource library.

This platform differs from other independent practice platforms, such as AI-directed platforms, where the bank of questions and the specific items selected are largely out of teachers’ direct control; instead, it allows teachers’ selection of items to be more personalized to the needs of their students (Arora, 2013). Moreover, by using a teacher’s own curriculum, ASSISTments aims to integrate platform use into teachers’ existing routines and enhance their instruction without requiring a substantial shift in instructional approach, elements of educational technology that are likely to support teacher uptake (Serin & Bozdag, 2020).

Students may access ASSISTments through their Google Classroom account. Depending on whether their curriculum is publicly available, students may either see and respond to full questions within the ASSISTments platform (as with open access resources such as EngageNY or Open Up; see Figure 2a) or refer to a question in their textbook and then enter their response in the platform (as with copyrighted textbooks such as Big Ideas Math; see Figure 2b).

Figure 2. Sample view of student problems from an open access (a) or copyrighted textbook (b).

When a student enters a response, they receive immediate feedback on the accuracy of their responses to closed-response questions. A review of the research suggests that providing immediate feedback after students have attempted a solution best supports student learning, particularly when learning new concepts or procedures (Shute, 2008). Students entering correct responses may advance to the next question, while those entering incorrect responses must continue attempting the problem until they enter the correct answer. Some problems are programmed with hints to help students who may be struggling, but all problems allow students who may be stuck to reveal the correct answer in order to proceed.

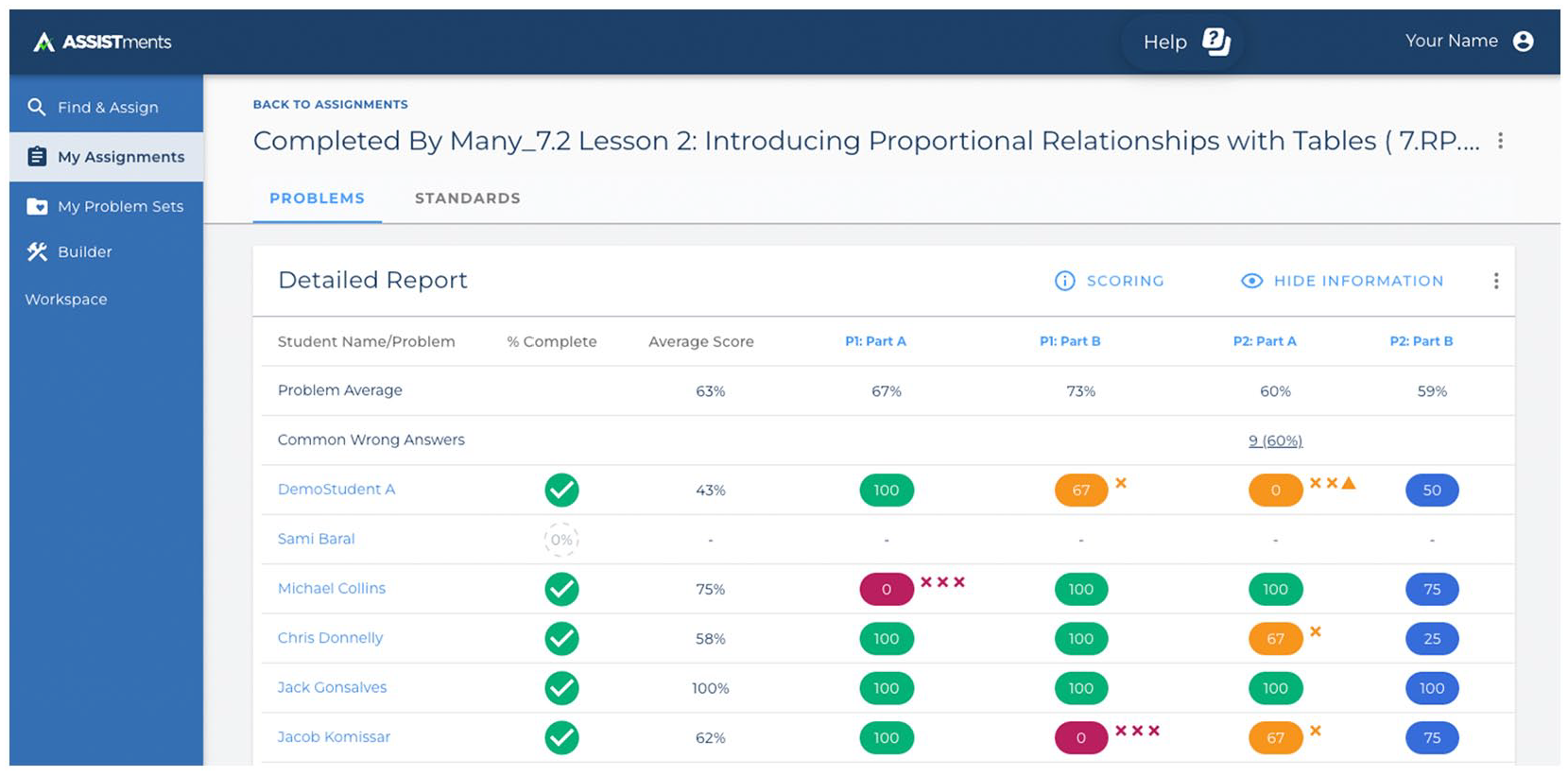

As students complete problems, the platform provides teachers with automated completion reports (see Figure 3). These reports include actionable class-level information, such as the total number of completed assignments, the percentage of students who got a given question correct, and the most common wrong answers to problems. They also provide student-level information, such as the number of attempts per problem and whether a student needed to reveal the answer to a problem in order to progress. Student responses to open-response questions are also collected and displayed in the reports, allowing teachers to provide feedback on problems unable to be automatically graded by the system. These reports provide insight into areas where students experience difficulties and enable teachers to target homework review, an important feature that can encourage teachers to use formative data to adapt their instruction (Bennett, 2011; U.S. Department of Education, 2011).

Figure 3. Example view of teacher automated completion report generated by the platform.

The Coaching Model

Teachers using ASSISTments as part of the study were asked to attend two one-day trainings and were also invited to participate in virtual coaching sessions over the course of the school year. Trainings were held 6 to 12 months apart, first at the beginning of a teacher’s participation and again before the start of the next school year, and organized regionally, with an ASSISTments coach either traveling in person to or connecting remotely with teachers from a district or cluster of nearby districts. The first one-day training was designed to introduce the platform to new teachers and get teachers comfortable with the technical aspects of the platform, such as finding content, creating assignments, and reading and using completion reports. The goal of this initial training was to familiarize teachers with the platform, provide technical support, and reduce their perception about the difficulty of using it, a key factor supporting teachers’ technology use (Marangunić & Granić, 2015; Scrimshaw, 2004). The second training provided returning teachers with an opportunity to share their experiences as a community of learners and discuss strategies for dealing with problems of practice that arose as they used the platform. Studies have shown that such professional learning communities can be effective in promoting technology use by promoting a teacher-centered approach (Paulus et al., 2020). Finally, teachers were invited to schedule virtual coaching sessions with a dedicated ASSISTments coach over a given school year. All coaches were either current or former teachers with prior experience using ASSISTments in their classrooms. During the coaching sessions, the teacher and coach would review the teacher’s usage data from the platform and discuss any areas of challenge teachers were experiencing with incorporating platform use into their instruction and routines. 
The coaching offered teachers more individualized, sustained support that was focused on their implementation context, all features that have been shown to positively influence teachers’ practice and to encourage a more positive stance toward technology use in their classrooms (Kraft et al., 2018; Liao et al., 2021; Ottenbreit-Leftwich et al., 2020). After meeting with teachers, coaches would record their impressions of teacher implementation and produce a rating for their level of readiness to use the program (see Appendix A). Because nearly all participating teachers received both training sessions, and the content of the training sessions was more focused on foundational knowledge for using the platform, our analysis focuses on differences in the number of coaching sessions that teachers received.

Research Questions

Based on a prior efficacy study of ASSISTments that found positive impacts on grade seven math achievement (Roschelle et al., 2016), teachers were expected to use the platform approximately two to three times per week to realize benefits for student math learning. However, the implementation timeline of the current study, which included the height of the COVID-19 pandemic, provided a number of challenges. First, in response to the pandemic, all of the participating schools closed during March of 2020. While many of those schools eventually transitioned to remote or hybrid learning models, this disruption and subsequent uncertainty led to a high degree of variation in school implementation during the spring semester of 2020. Second, in the following 2020–2021 school year, while some schools continued to provide remote learning, others began to transition to hybrid models or back to in-person learning. This natural disruption and variation in instructional setting provided an opportunity to examine differences in implementation during the 2019–2020 and 2020–2021 school years, allowing us to address the following research questions:

How did teachers’ use of an online platform for independent practice change between fall 2019 and spring 2021 as the COVID-19 pandemic disrupted teaching and learning?

Which factors might explain differences or changes in teachers’ usage of the platform between fall 2019 and spring 2021?

Methods

Descriptive statistics of teacher usage of the ASSISTments platform were analyzed using data tables automatically generated by the platform between the fall of 2019 and the spring of 2021. The complete dataset consisted of four data tables, each recording a different resolution of platform usage metrics from participating teachers (see supplemental materials for a complete list of the data tables used for this analysis and a description of the raw data and variables included in each data table). These data tables were combined into a single dataset used for analysis describing usage of the platform at the individual teacher level (e.g., unique assignments given, reports viewed).

Sample

The analytic sample is based on 58 unique teachers from the treatment group of the larger study who had not indicated that they left the study for any reasons unrelated to the platform (e.g., changed schools or no longer taught seventh grade) and who used the platform during any of the following four semesters: fall 2019, spring 2020, fall 2020, and spring 2021. Therefore, the analytic sample consisted only of those participants who generated usage data and had a similar opportunity for participation in study activities (i.e., coaching) and usage of the ASSISTments platform within each semester. The number of teachers contributing data varied from semester to semester, ranging from 26 unique teachers in fall 2019 to 34 unique teachers in spring 2021. This sample allows us to examine natural variation in platform usage across these semesters for a comparable cross-sectional sample of teachers and, in particular, to examine if there were any meaningful changes in usage of the platform corresponding to the large number of school closings in the spring 2020 semester.

Data Sources and Cleaning

As described in more detail later, the teacher platform usage data consisted of records of both the number of assignments they created and the number of times they viewed student reports. From the initial assignment and report data (N = 19,644), there were 241 report views that could not be matched to a study teacher, 131 assignments delegated by an automatic assigner program briefly used by a single teacher, 61 observations from a teacher in a comparison school, 1,623 observations from practice classes (e.g., classes used by teachers to familiarize themselves with the platform during training) or non-seventh-grade classes that were ineligible for this study, 3,238 observations from an outlier district, 3,536 observations from an account shared by two teachers that could not be disaggregated, and 1,965 observations from usage that occurred before fall 2019 or after spring 2021. After removing these cases, our final sample was 5,062 total assignments and 3,787 report views across 58 unique teachers with platform usage data.
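The exclusion counts above can be reconciled with the final sample by simple arithmetic. The short Python sketch below only restates the figures reported in the paragraph; the dictionary labels are shorthand introduced here for illustration and are not variable names from the study data.

```python
# Counts copied from the data-cleaning paragraph above.
initial_records = 19_644

# Labels are illustrative shorthand, not names from the study's data tables.
excluded = {
    "report views not matched to a study teacher": 241,
    "assignments from an automatic assigner program": 131,
    "observations from a comparison-school teacher": 61,
    "practice or non-seventh-grade classes": 1_623,
    "outlier district": 3_238,
    "shared account that could not be disaggregated": 3_536,
    "usage before fall 2019 or after spring 2021": 1_965,
}

final_assignments = 5_062
final_report_views = 3_787

total_excluded = sum(excluded.values())
remaining = initial_records - total_excluded

print(total_excluded)  # 10795
print(remaining)       # 8849
print(remaining == final_assignments + final_report_views)  # True
```

The 8,849 remaining records equal the 5,062 assignments plus the 3,787 report views reported for the final sample.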

Measures and Analyses

Within each year and semester, we examined the average amount of teacher platform usage during the weeks in which there was activity observed on the platform, as has been reported elsewhere (Murphy et al., 2020). This approach allows for a comparison of the frequency of usage of the platform by teachers in schools that may have had different numbers of noninstructional weeks, which was particularly likely to vary during COVID-19. “Usage” of the platform was defined as any time teachers either (a) gave an assignment to students or (b) viewed any of the student reports. To provide a comprehensive picture of teacher usage of the platform, we operationalized the amount, duration, and frequency of usage of the platform, as described later. For each of these measures, we reported the mean, standard deviation, and range for each semester (fall and spring) in both Year 1 (2019–2020 school year) and Year 2 (2020–2021 school year) to provide a sense of the variation in usage of the platform across each time-point.

Platform Usage Data

Total Number of Times Used

This measure reports the total number of times the platform was used by a teacher in a semester, averaged across all active users during that particular semester. This was calculated as the total number of times a teacher either read a report or gave an assignment and is reported here as the total usage of the platform averaged across all participants with active usage of the platform within each year and semester. We provide this measure to give a comprehensive measure of the total amount that a teacher interacted with the platform.

Total Weeks With Usage

This measure reports the total number of weeks in a semester that teachers were actively using the platform, averaged across all active users during that particular semester. This was calculated as the total number of unique weeks with any usage of the platform per teacher and is reported here as the average number of total weeks across all participants with active usage of the platform within each year and semester. We provide this measure to give a sense of the overall duration within which a teacher was using the platform.

Number of Times Used per Week

This measure reports the average number of times the platform was used per week (during weeks when there was usage of the platform), averaged across all teachers using the platform during that particular semester. This was calculated by first identifying the unique weeks during which there was any teacher usage of the platform, and finding the average number of observations of platform usage there were for each teacher within each of those weeks. As noted previously, this measure was designed to allow for comparison of the amount teachers used the platform each week across multiple districts with different constraints on the duration of implementation.
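The three measures defined so far (total times used, total weeks with usage, and times used per active week) can be illustrated with a minimal Python sketch. It is not the study's analysis code: the record layout (a teacher identifier paired with a date), the sample records, and the use of ISO calendar weeks to define a "week" are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# Hypothetical usage records for one semester: each tuple is one assignment
# given or one report viewed, as (teacher_id, date).
records = [
    ("t1", date(2019, 9, 2)),
    ("t1", date(2019, 9, 2)),
    ("t1", date(2019, 9, 4)),   # same week as the two records above
    ("t1", date(2019, 9, 17)),  # a later week
    ("t2", date(2019, 9, 3)),
    ("t2", date(2019, 10, 1)),
]

def summarize_usage(records):
    """Return {teacher: (total_uses, active_weeks, uses_per_active_week)}."""
    uses_by_week = defaultdict(lambda: defaultdict(int))
    for teacher, day in records:
        # Assumption: a "week" is an ISO calendar week (Monday to Sunday).
        iso_year, iso_week, _ = day.isocalendar()
        uses_by_week[teacher][(iso_year, iso_week)] += 1

    summary = {}
    for teacher, weeks in uses_by_week.items():
        total_uses = sum(weeks.values())
        active_weeks = len(weeks)  # only weeks with any usage are counted
        summary[teacher] = (total_uses, active_weeks, total_uses / active_weeks)
    return summary

summary = summarize_usage(records)
# Semester-level value: average across teachers with any usage that semester.
semester_mean_per_week = mean(s[2] for s in summary.values())

print(summary["t1"])           # (4, 2, 2.0)
print(summary["t2"])           # (2, 2, 1.0)
print(semester_mean_per_week)  # 1.5
```

Because the denominator is the number of weeks with any usage, a teacher whose school was closed for part of a semester is not penalized for the inactive weeks.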

Days per Week With Usage

This measure reports the average number of days within an instructional week (e.g., Monday to Friday) during which a teacher had any usage of the platform. This was calculated as the number of school days on average that there was a record of any usage of the platform by a teacher. We provide this measure to show the frequency of teachers’ usage of the platform, in units (days per week) that are easily interpretable and comparable to other instructional activities.

Percent Using at Least Twice a Week

This measure is based on the days per week with usage measure described previously, and it reports the proportion of teachers that used the platform at least two times per week. This was calculated by first identifying the unique weeks during which there was any usage of the platform, and then finding the number of days during those weeks when there was any usage of the platform. Based on a prior efficacy study that reported average platform usage as between two and three times a week (Roschelle et al., 2016), we set a threshold for “typical” usage of the platform to be defined as using the platform at least twice a week. That is, within the weeks where teachers were using the ASSISTments platform, and for teachers who were using the platform, we calculated the proportion of those teachers whose usage of the platform was at least twice a week on average. Because this measure is based on weeks when teachers were using the platform, it also provides a measure of frequency of usage of the platform that is robust to school closings when teachers were not using the platform.
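One way to read the two frequency measures (days per week with usage, and the share of teachers at or above the twice-a-week threshold) is sketched below in Python. This is an illustrative interpretation, not the authors' code: the sample dates, the ISO-week definition of a week, and the decision to ignore weekend activity are assumptions.

```python
from collections import defaultdict
from datetime import date

def days_per_active_week(usage_dates):
    """Mean number of distinct school days (Mon-Fri) with usage, over weeks with any usage."""
    days_in_week = defaultdict(set)
    for day in usage_dates:
        if day.weekday() < 5:  # assumption: weekend activity is not counted
            iso_year, iso_week, _ = day.isocalendar()
            days_in_week[(iso_year, iso_week)].add(day)
    if not days_in_week:
        return 0.0
    return sum(len(days) for days in days_in_week.values()) / len(days_in_week)

def share_at_least_twice_weekly(usage_by_teacher, threshold=2.0):
    """Proportion of active teachers averaging at least `threshold` days per active week."""
    rates = [days_per_active_week(dates) for dates in usage_by_teacher.values()]
    return sum(rate >= threshold for rate in rates) / len(rates)

# Hypothetical usage dates for four teachers in one semester.
usage_by_teacher = {
    "t1": [date(2019, 9, 2), date(2019, 9, 2), date(2019, 9, 4),
           date(2019, 9, 17), date(2019, 9, 19)],
    "t2": [date(2019, 9, 3), date(2019, 10, 1)],
    "t3": [date(2019, 9, 9), date(2019, 9, 10), date(2019, 9, 11),
           date(2019, 9, 16)],
    "t4": [date(2019, 9, 5)],
}

print(days_per_active_week(usage_by_teacher["t1"]))   # 2.0
print(share_at_least_twice_weekly(usage_by_teacher))  # 0.5
```

Repeated usage on the same day counts once, so the measure reflects how many days a teacher engaged with the platform rather than how many actions were logged.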

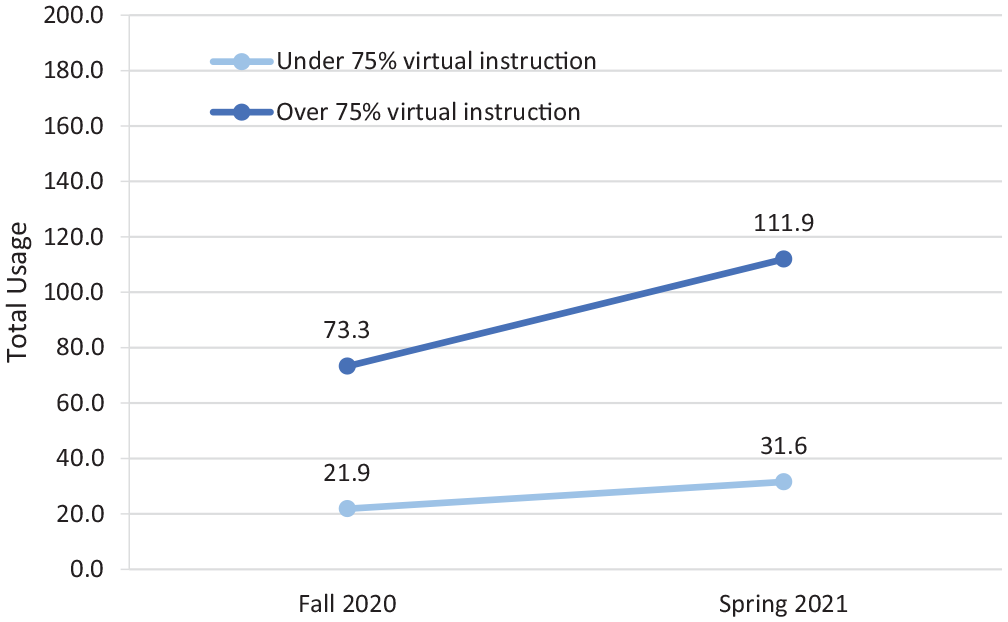

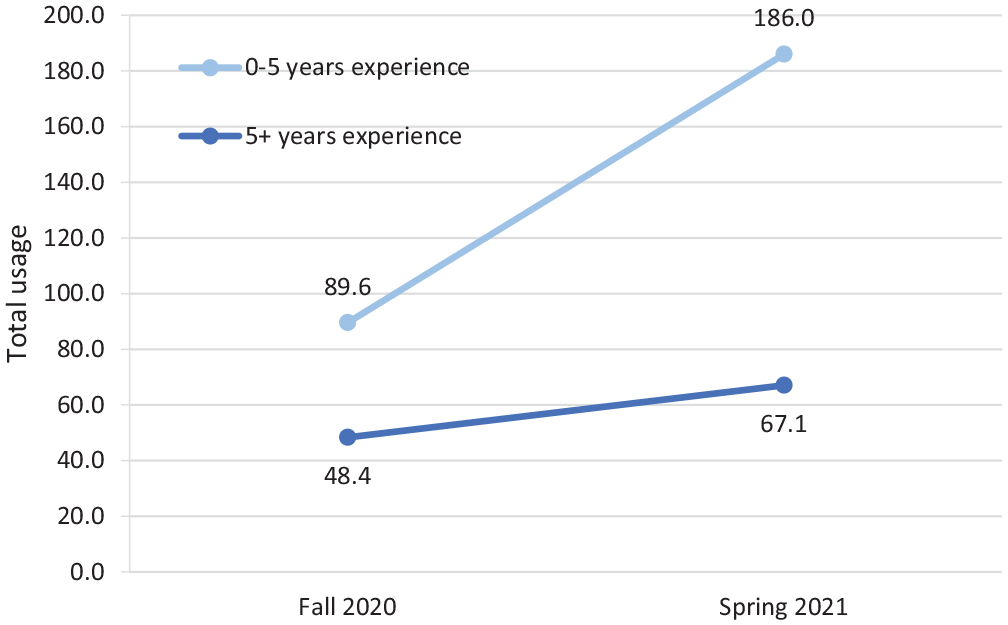

Survey Data

In addition to the usage data collected from the platform, teachers completed a short survey during the spring of 2021 containing a number of Likert-scale items. For the current study, we examined a single item asking teachers about their instructional context (e.g., “During the 2020–21 school year, what percentage of your time with seventh-grade math students was spent online/virtual?”). Given that the vast majority of schools had shifted to online learning due to COVID-19, we grouped this variable into teachers who reported that 75% or less of their time was spent online/virtual and teachers who reported that more than 75% of their time was spent online/virtual. A second item asked teachers about their teaching experience (e.g., “How many years of teaching experience do you have in total?”). Here, we divided these into teachers with 0 to 5 years of experience, and teachers with over 5 years of experience, which are common thresholds for different stages of teacher development that have shown differences in propensity to integrate technology (see Kini & Podolsky, 2016; Russell et al., 2003). Of the 34 total respondents who were using the platform in spring 2021, between 29 and 34 teachers responded to each item, for response rates of 85% and 100%, respectively.
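The survey groupings and response rates described above amount to two cut points and a simple proportion. The Python sketch below restates them; the function names and group labels are hypothetical and chosen only for readability.

```python
def online_time_group(percent_online):
    """Split on the 75% cut point described in the text."""
    return "more than 75% online" if percent_online > 75 else "75% or less online"

def experience_group(years_teaching):
    """Split into 0 to 5 years versus over 5 years of teaching experience."""
    return "0 to 5 years" if years_teaching <= 5 else "over 5 years"

def response_rate(responses, eligible=34):
    """Percentage of the 34 teachers active in spring 2021 who answered an item."""
    return round(100 * responses / eligible)

rates = [response_rate(29), response_rate(34)]

print(online_time_group(75))  # 75% or less online
print(experience_group(6))    # over 5 years
print(rates)                  # [85, 100]
```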

Coaching Session Data

Coaches reported the time and date of coaching sessions with each teacher and provided an initial rating of the teacher’s readiness to use the platform with fidelity, using a rubric (see Appendix A). For the current study, we divided teachers into groups based on whether teachers had no coaching sessions or only one coaching session per year, versus teachers who had two or more coaching sessions. The coaching rubric was designed to capture coaches’ initial evaluation of teachers’ readiness to use the platform with fidelity, scored as ratings from 0 to 3 on four elements of usage of the platform: (1) teachers knowing where to find content and creating assignments, (2) the proportion of their students completing assignments, (3) the proportion of assignments for which teachers viewed reports on student work, and (4) teachers engaging with data from the platform to review assignments with students. These rubric scores were calculated and reported as an overall proficiency score, with a score of 0–5 representing “Developing” teachers, 6–10 representing “On Track” teachers, and 11–12 representing “Above and Beyond” teachers. In our sample, no teachers received the “Above and Beyond” rating; therefore, for analysis, we divided teachers into “Developing” (i.e., a rating of 0–5) and “On Track” (i.e., a rating of 6 or more).
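The rubric scoring and grouping rules can be summarized in a short Python sketch. It assumes, consistent with the 0 to 12 range of the rating bands, that the overall proficiency score is the sum of the four element ratings; the function names and the coaching group labels are hypothetical.

```python
def proficiency_rating(element_scores):
    """Map four rubric ratings (each 0-3) to the overall rating band.

    Assumption: the overall score is the sum of the four element ratings.
    """
    if len(element_scores) != 4 or any(not 0 <= s <= 3 for s in element_scores):
        raise ValueError("expected four ratings, each between 0 and 3")
    total = sum(element_scores)
    if total <= 5:
        return "Developing"
    if total <= 10:
        return "On Track"
    return "Above and Beyond"

def analysis_group(rating):
    """Two-group split used for analysis: Developing (0-5) vs. On Track (6 or more)."""
    return "Developing" if rating == "Developing" else "On Track"

def coaching_group(sessions_per_year):
    """Zero or one coaching session per year vs. two or more."""
    return "0-1 sessions" if sessions_per_year < 2 else "2+ sessions"

print(proficiency_rating([1, 2, 1, 1]))                  # Developing
print(proficiency_rating([2, 2, 1, 1]))                  # On Track
print(analysis_group(proficiency_rating([3, 3, 3, 2])))  # On Track
print(coaching_group(1))                                 # 0-1 sessions
```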

Findings

Overall Teacher Usage of the Platform

Overall, average total usage of the platform for teachers was lower in the 2019–2020 school year (M = 72.7 platform usage records, SD = 101.0) than in the 2020–2021 school year (M = 140.9 platform usage records, SD = 167.9). The 2020–2021 school year also showed a larger variance in platform usage, perhaps reflecting the different approaches to online instruction taken by teachers and schools in response to disruptions to in-person instruction during COVID-19 (see Appendix B, Table B4). Usage of the platform also differed somewhat within each school year, between fall and spring semesters. In the 2019–2020 school year, there was a slight decrease across all measures of teacher usage of the platform from fall 2019 to spring 2020, including a decrease in the total number of weeks with teacher usage of the platform in each semester (from an average of 6.6 weeks to an average of 5.7 weeks). This decrease may be at least partially attributable to the period of time that many schools in the United States paused instruction due to the onset of COVID-19. In the 2020–2021 school year, weeks of usage of the platform increased from an average of 7.7 weeks in fall 2020 to 9.6 weeks in spring 2021 (see Appendix B, Table B1).

The weekly frequency of teachers’ usage of the platform followed a similar pattern, with a slight decrease in the number of times the platform was used per week between fall 2019 (M = 6.9, SD = 5.7) and spring 2020 (M = 5.1, SD = 3.5), followed by increases in fall 2020 (M = 6.0, SD = 4.6) and spring 2021 (M = 7.3, SD = 5.2). The proportion of participating teachers using the platform at least twice a week remained relatively stable between fall 2019 and spring 2020, at 19.2% and 19.4% of teachers, respectively. This proportion increased to 34.5% in fall 2020 and peaked at 44.1% in spring 2021 (see Figure 4). Together, these results suggest that usage of the platform across all teachers appeared to respond to the onset of the pandemic, showing no growth during the period of initial school closures but increasing steadily over the next two semesters.

Percentage of teachers using the online independent practice platform at least twice per week and average number of weeks with usage of the platform by semester.

Differences in Platform Usage by Coaching Sessions and Rating of Teacher Readiness

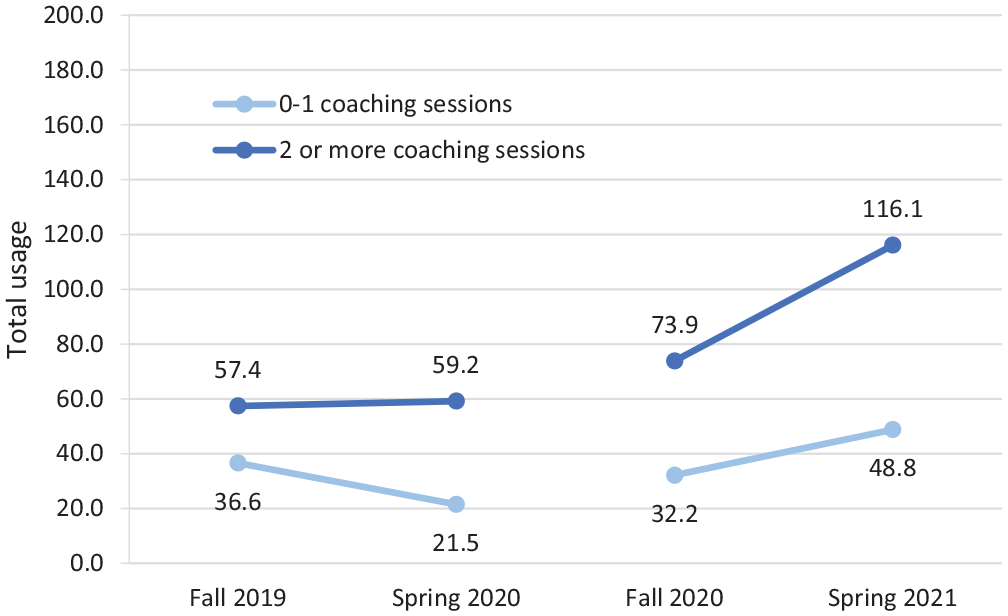

To determine if patterns of teacher usage of the platform across each semester were associated with either the number of coaching sessions a teacher experienced or the rating teachers received from their coaches on the coaching rubric, we first compared measures of platform usage for teachers who had participated in one or fewer coaching sessions and teachers who had participated in two or more coaching sessions (see Appendix B, Table B2). Overall, teachers with two or more coaching sessions used the platform an average of 86.3 times compared to an average of 33.7 times for teachers with one or no coaching sessions. Moreover, teachers with two or more coaching sessions did not reduce their usage of the platform when the COVID-19 pandemic first disrupted schools in spring 2020 (see Figure 5), whereas teachers with only one or no coaching sessions did reduce their usage. This difference in usage of the platform continued into the 2020–2021 school year: in fall 2020, teachers who received at least two coaching sessions were more likely to use the platform at least twice a week (47.1%) than teachers who had only one or no coaching sessions (16.7%). These results show that teachers with more coaching sessions maintained their use of the platform during the onset of COVID-19 disruptions and had greater increases in their usage of the platform over the next two semesters. Further, teachers who received more coaching tended to have higher variance in their usage (see Appendix B, Table B2). While data on the content of specific coaching interactions were limited, the larger variance observed for this group could suggest that differences in teacher uptake were reactive to individual coaches and coaching interactions, and that these differences compounded with additional coaching.

Average per semester total usage of teachers using the online independent practice platform from fall 2019 to spring 2021 by number of coaching sessions received in that school year.

We also examined usage of the platform separately for the coaches’ assessment of teacher readiness to use the platform as either “Developing” (i.e., teachers who had received a rating of five or less), or those who were rated as “On Track” (i.e., received a rating of six or more). While teachers had a similar number of observations of platform usage and frequency of platform usage regardless of rating in fall 2019, usage of the platform by teachers with a higher rating increased more than by those with lower ratings over the next three semesters (see Appendix B, Table B3).

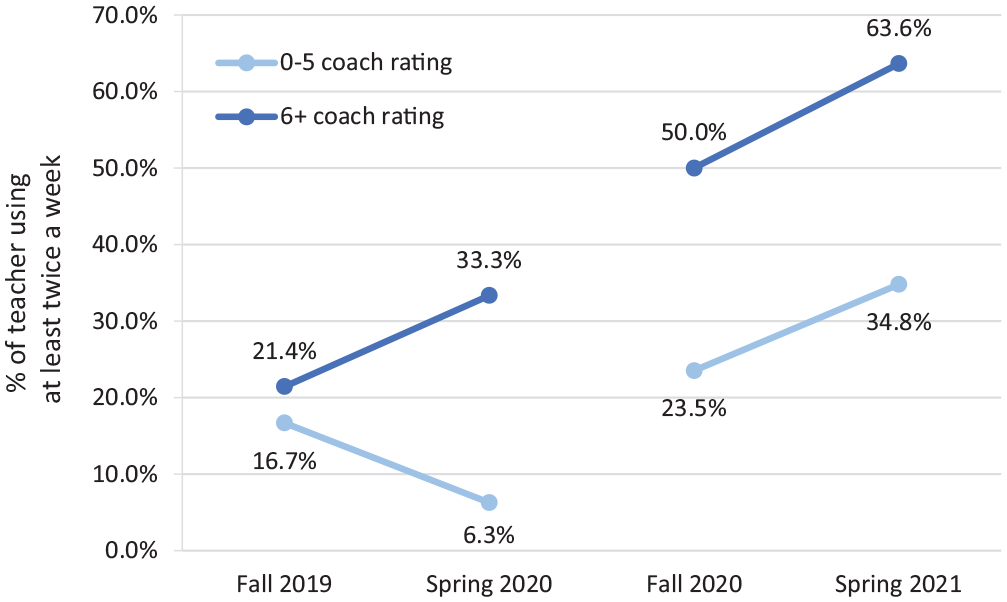

Usage of the platform declined in both frequency (from 16.7% to 6.3% using twice per week) and duration (from 5.3 weeks per semester to 4.0 weeks per semester) between fall 2019 and spring 2020 for teachers with lower initial ratings, whereas teachers with higher initial ratings saw no difference in the duration of usage of the platform (7.6 weeks in both semesters) and an increase in frequency (from 21.4% to 33.3% using twice per week) between fall 2019 and spring 2020 (see Figure 6). These results show that teachers who were identified by their coaches as more ready to use the platform prior to the onset of the COVID-19 pandemic increased their usage of the platform during the initial pandemic disruptions in spring 2020, while those who were less ready declined in their usage of the platform.

Percentage of teachers using the online independent practice platform at least twice per week by semester and by coach rating.

Differences in Usage of the Platform by In-Person Versus Virtual Instructional Setting

Using survey data collected from participating teachers in the 2020–2021 school year, we next examined whether the patterns of usage of the platform observed during fall 2020 and spring 2021 differed by the teachers’ primary instructional context, that is, whether the majority of instruction was taking place in an online/virtual setting. In terms of total platform usage, teachers who reported that more than 75% of their class time was online/virtual had more platform usage on average, and used the platform for more weeks, than teachers with a lower percentage of online/virtual time during fall 2020. This difference widened in spring 2021. Teachers with a higher percentage of online/virtual time used the platform 38.6 more times on average in spring 2021 than in fall 2020, while teachers with a lower percentage of online/virtual time used the platform only 9.7 more times on average (see Figure 7). Teachers with more virtual/online classes also used the platform for an average of 2.6 more weeks in spring 2021 than in fall 2020, while teachers with fewer online/virtual classes saw no difference in the average number of weeks used. This finding suggests that in both semesters after the onset of the pandemic, teachers with a higher proportion of instruction conducted virtually or online used the platform slightly more than those with a higher proportion of in-person instruction. Further, teachers with more virtual/online classes tended to have higher variance in their usage (see Appendix B, Table B4).

Average total usage of the online independent practice platform for teachers reporting over 75% online/virtual classes and teachers reporting 75% or less online/virtual classes in the 2020–21 school year by semester.

Differences in Usage of the Platform by Teacher Experience

We also used survey data to examine if teachers’ years of teaching experience moderated the amount of usage of the platform across the 2020–2021 school year. Overall, all teachers used the platform more in spring 2021 than in fall 2020 (see Appendix B, Table B5). Across both years, teachers with 0 to 5 years of experience used the platform more on average (M = 137.8, SD = 121.2) than teachers with over 5 years of experience (M = 58.4, SD = 76.2) and were more likely to use the platform at least twice a week (57.1% of teachers with 0 to 5 years of experience vs. 36.6% of teachers with over 5 years of experience).

Furthermore, between the fall 2020 and spring 2021 semesters, this gap in usage of the platform between teachers with fewer and more years of experience widened. Teachers with 0 to 5 years of teaching experience used the platform 96.4 more times on average in spring 2021 than in fall 2020, while teachers who had more than 5 years of teaching experience used the platform only 18.1 more times on average (see Figure 8). This finding shows that in the semesters immediately following the onset of the pandemic, and particularly in spring 2021, teachers with fewer years of teaching experience used the platform more than teachers with more years of teaching experience. Additionally, while variance in usage increased for both groups in spring 2021, teachers with less experience had a larger increase in variance than those with greater experience (see Appendix B, Table B5). This could suggest that with regard to the use of an educational technology platform, experienced teachers were more similar in their responses to COVID-19 disruptions, while the response of teachers with less experience varied more widely.

Average total usage of the online independent practice platform for teachers with 0 to 5 years of teaching experience and teachers with over 5 years of teaching experience in the 2020–21 school year by semester.

Discussion

This study examined teachers’ usage of an online platform during the year that educational systems experienced the historic disruption of COVID-19 and continued through the following year as school communities experienced ongoing challenges related to remote instruction. The data from this online platform provided unique insights into shifts in teachers assigning and reviewing homework and independent practice during that time. Importantly, this study combines extant platform data with other data sources that were available during remote instruction to offer suggestions of potential moderators of teacher platform usage. These findings both contribute theoretically to our understanding of factors that contribute to teacher uptake of educational technology and suggest key data points that developers of educational platforms might consider including to support schools and researchers in better understanding variation in teachers’ use of these technologies.

Teacher usage of the platform appeared to respond to the onset of the pandemic. Perhaps not surprisingly, we observed a decrease in the total number of weeks of platform usage during the period of school closures in spring 2020, when pandemic disruptions were most severe. Yet, the decrease from fall 2019 to spring 2020 was modest, followed by increased teacher usage of the platform in the two following semesters, fall 2020 and spring 2021. Similar to other studies on the use of educational technology during the COVID-19 pandemic (Sun et al., 2020), this finding suggests that teachers who had used the platform prior to the disruption were more likely to increase the rate at which they used the ASSISTments platform as schools shifted to fully remote learning. Our findings also show larger variance in usage during the pandemic, which could reflect differences in online instruction based on the range of approaches to dealing with disruptions to in-person instruction during COVID-19. Using our survey results, we show that teachers who reported that over 75% of their classes were online or virtual used the platform more, had a greater increase in usage in the two semesters following the onset of the pandemic, and had a larger variance in usage than those teachers who had 75% or less of their classes online. Even though ASSISTments was not designed for fully remote or hybrid instruction, it appeared to be used by teachers as they transitioned from in-person implementation. Teachers were able to leverage the platform to continue to provide students with opportunities for independent practice in spring 2020 and increased their use of the platform in the following two semesters, though this usage varied more widely for teachers with more online classes. While this study did not have information on what other types of opportunities for independent practice teachers may have offered in addition to ASSISTments, this difference is worth noting since studies have shown opportunities for independent practice to be a key factor in mathematics achievement (Cooper et al., 2006).

Our results also suggest that coaching helped support the level of teachers’ platform usage. Importantly, teachers with at least two coaching sessions maintained their level of usage of the platform during the beginning of the pandemic (i.e., spring 2020), while teachers who had only one session or no coaching declined in their platform use. A similar pattern was found for teachers who received higher ratings from coaches for their readiness to implement instruction using the platform. While platform usage for all teachers began to increase during the 2020–2021 school year, teachers with more coaching and higher ratings continued to have more usage overall, and teachers with additional coaching sessions had greater increases in usage of the platform than those with fewer coaching sessions. This suggests that while all teachers were eventually able to use the ASSISTments platform, teachers who were better prepared and received more coaching maintained or increased their use of ASSISTments in their classrooms during the onset of the pandemic. Specifically, teachers who received the additional coaching sessions designed to focus on responding to problems of practice showed more usage than those receiving only the training on how to use the platform. These differences were large enough (i.e., over 50 additional uses on average) to suggest that it may be not only the additional support or use during the additional coaching, but the nature of the support in the second training that encouraged teachers’ usage. It may be that teachers with additional one-on-one support from coaches with classroom experience using the tool were better able to utilize features that allowed them to adapt the tool to their own instructional approach and therefore feel more autonomy in its use (Lawless & Pellegrino, 2007). Further, this finding aligns with recent literature that suggests coaching can provide teachers with “just-in-time” information, critical in uncertain situations like those presented by the 2020–21 school year (Brown et al., 2021).

Finally, our results contribute to the knowledge base on the relationship between teacher experience and technology adoption, aligning with other studies showing that teachers with five or fewer years of teaching experience may be more likely to incorporate technology into their practice than those with more years of experience (Liu et al., 2017; Ritzhaupt et al., 2012). However, our data do not support strong conclusions as to the reasons why more experienced teachers chose to use the platform less. It may be that more experienced teachers already have well-established routines for providing independent practice and feedback and, therefore, see the platform as less useful or necessary (Sturdivant et al., 2009), while teachers with less experience found the platform useful in helping them establish those routines. More experienced teachers also could have had prior negative experiences with educational technology that led them to be wary of new innovations (Russell et al., 2003). If the latter is the case, demonstrating through support materials and coaching how the platform can be integrated into and support existing pedagogical approaches could help to increase platform usage among more experienced teachers (Lau & Yuen, 2013; Martin et al., 2010; Penuel, 2006). Research suggests that having other teachers share successful experiences with technology can help to demonstrate its value and show ways the technology can be used effectively (Zhao & Cziko, 2001). Future studies that collect qualitative interview or classroom observation data on the quality of the independent practice delivered with such online platforms and examine how teachers with varying levels of experience interact around their use of the platform (e.g., through a professional learning community or community of practice) would help to provide a more holistic picture of how and to what extent these measures of platform usage translate into mathematics learning opportunities for students.

Limitations

There are a number of limitations that should be considered when interpreting these findings. First, the analyses presented here are descriptive and based on multiple cross-sectional samples of teachers who began using the platform prior to the pandemic’s onset. In addition, disruptions caused by the pandemic and regular teacher turnover led to a relatively small number of teachers (N = 8) who participated across all semesters (see Appendix B, Table B6). Therefore, we are reporting on descriptive patterns of usage and associations between teacher characteristics and their usage based on a targeted, non-longitudinal sample. Second, the usage data collected may present imperfect or incomplete indicators of instructional patterns or educational experiences. For example, for this study we did not have direct measures of student participation in or engagement with teacher assignments. However, as a minimum prerequisite for student participation and engagement, teachers’ use of the platform (i.e., creating assignments and viewing student reports) provides information about the amount and frequency of opportunities for independent practice that students receive. Further, preliminary analyses of student use data suggest that teacher platform use is highly correlated with student platform use (r = .71). We have provided detailed descriptions of how these variables were created to allow other researchers to interpret and replicate these details in future studies. Finally, because of the nature of the pandemic and the online platform, we were unable to qualitatively observe how the platform was used with students, or how the teachers may have supplemented with other available platforms. Additional information such as administrative support, school culture around technology, and ongoing alternative technology initiatives could help contextualize these findings. For example, a survey item asking about other platforms used for providing homework showed that teachers using the ASSISTments platform also used on average between one and two other platforms (M = 1.8, SD = 1.0), such as Khan Academy, Zearn, and Study Island. However, we were not able to access usage data for these platforms, or information on whether coaching was provided, and therefore do not know the extent to which these other platforms were used compared to ASSISTments. Usage data from other similar platforms could provide a useful reference to understand how teachers leveraged technology more broadly during pandemic disruptions.

Conclusion

The COVID-19 pandemic has presented educators with unprecedented challenges, specifically when it comes to navigating remote instruction. Such challenges can lead to decreased opportunities for students to receive immediate and personalized formative feedback on their independent practice, which has been shown to be related to student achievement in mathematics. This study provides an example of how data automatically generated by educational technology platforms can be used to provide a picture of teaching and learning that took place during the COVID-19 pandemic that was otherwise difficult to observe due to the shift to remote instruction. Specifically, this study contributes to the knowledge base on how coaching, level of teaching experience, and online vs. in-person instruction may be related to mathematics teachers’ use of an online independent practice platform during this period. Findings suggest that having technology-supported routines already established may help make the transition to online instruction more seamless when disruptions to in-person instruction arise and point to the importance of coaching support to help teachers transition to online instruction during disruptions like COVID-19. Further, our findings underline the importance of better understanding why more experienced teachers may be less likely to adopt new technologies, and how to support them during specific events like COVID-19 that may necessitate the use of such technologies. Finally, conducting a study during a period of disruption to in-person instruction made evident the affordances and constraints of remote data collection on usage for understanding teachers’ use of educational technology, even with a platform that collects relatively fine-grained data. The shift to more online instruction is a phenomenon that is likely to continue beyond the pandemic; indeed, because of adaptations made during this period, incorporation of online platforms may very well become the “new normal” as teachers, students, and families adapt to these conditions and as educational technologies become more accessible and ubiquitous. It is therefore important for educational researchers and designers of educational platforms to consider what additional information can be collected remotely to better understand how teachers’ instruction may change as a result of such shifts, and how best to support all teachers in making this transition.

Supplemental Material

sj-docx-1-ero-10.1177_23328584241230054 – Supplemental material for Teacher Use of an Online Platform to Support Independent Practice in Middle School Mathematics During COVID-19 Disruptions

Supplemental material, sj-docx-1-ero-10.1177_23328584241230054 for Teacher Use of an Online Platform to Support Independent Practice in Middle School Mathematics During COVID-19 Disruptions by Eben B. Witherspoon, Max Pardo, Kirk Walters, Rachel Garrett, Matthew Hilbert, Jennifer Ford, Lisa B. Hsin, Melissa A. Rodgers, Dionisio Garcia Piriz, Lauren Burr and Leslie Thornley in AERA Open

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the U.S. Department of Education, Institute of Education Sciences; Grant number R305A170243.

Supplemental Material

Supplemental material for this article is available.

Authors

EBEN WITHERSPOON is a researcher at American Institutes for Research (AIR). His research focuses on the development and evaluation of educational technology interventions in STEM education.

MAX PARDO is a researcher at AIR. His primary responsibilities include collecting, cleaning, and analyzing quantitative data; assisting in the development of data collection instruments and protocols; and reporting findings.

KIRK WALTERS is a senior managing director of mathematics at WestEd. His research focuses on understanding ways to improve K–12 math teaching and learning.

RACHEL GARRETT is a managing researcher at AIR who conducts rigorous studies of teaching and learning programs, particularly those focused on teacher professional learning and mathematics.

MATTHEW HILBERT is a research assistant at AIR. His primary responsibilities include providing technical support, which has included survey development, data entry, data management, data analysis, and project documentation.

JENNIFER FORD is a researcher at AIR. She has more than 8 years of experience leading data collection and other major tasks on RCTs.

LISA B. HSIN is a senior researcher at AIR. Her major areas of focus include the teaching and learning of literacy skills, English learners’ education, and impact variation in educational interventions.

MELISSA A. RODGERS is a quantitative researcher at American Institutes for Research. Rodgers is the quantitative lead on multiple educational research projects including meta-analytic studies, evaluation of teacher preparation and training programs, and student web-based interventions to improve academic achievement.

DIONISIO GARCIA PIRIZ is a senior researcher at AIR. He has experience conducting and leading analysis for multiple evaluations of educational programs and interventions using experimental and quasi-experimental designs.

LAUREN BURR is a research associate at American Institutes for Research. Her primary interests are addressing equity issues in education and disseminating actionable education research findings to wide audiences.

LESLIE THORNLEY is a mathematics research associate at WestEd. Her research interest is in supporting communities that have historically been underserved.

References
