Abstract

The COVID-19 pandemic led to an abrupt shift from in-person to virtual instruction in the spring of 2020. We estimate the impact of this shift on the academic performance of Virginia’s community college students using two complementary difference-in-differences frameworks: one that leverages within-instructor-by-course variation in whether students started their spring 2020 courses in person or online and another that incorporates student fixed effects. With both approaches, we find modest negative impacts (3%–6%) on course completion. Our results suggest that faculty experience teaching a given course online does not mitigate the negative effects. In an exploratory analysis, we find minimal longer-term impacts of the switch to online instruction.

Introduction

The COVID-19 health crisis led to one of the largest disruptions in the history of American education. Beginning in March 2020, tens of millions of students attending school in person at all education levels abruptly shifted to online learning due to stay-at-home orders put in place to curb transmission of the virus. Although some teachers and faculty had experience teaching online, many had to pivot into online teaching for the first time, often using videoconferencing technology (e.g., Zoom) to deliver instruction and engage students.

There are various reasons why the COVID-19 crisis and the ensuing abrupt shift to virtual instruction may have led to worse outcomes for community college students (Office for Civil Rights, 2021). Students may have been dealing with health challenges associated with COVID-19 infection or have had family members who became sick. Many community college students and their family members were among the tens of millions of Americans who lost their jobs during the spring of 2020; the stress of these job losses may have reduced the cognitive bandwidth and attention that students could devote to class (Shah et al., 2015). Increased childcare responsibilities may have detracted from time that adult students could invest in their college course work (Office for Civil Rights, 2021).

A growing body of research on online learning reinforces the potential negative impacts that an abrupt shift to online courses could have had on students’ academic performance. Experimental and quasi-experimental analyses show that students in online courses have lower rates of course completion and final grades, lower rates of persistence, and increased course repetition (Alpert et al., 2016; Bettinger et al., 2017; Figlio et al., 2013; Hart et al., 2016; Jaggars & Xu, 2016; Xu & Xu, 2019). Moreover, this body of research suggests that negative impacts of online learning are most pronounced for students from lower socioeconomic and underrepresented backgrounds as well as for academically weaker students—populations that may have been disproportionately affected by COVID-19–related health, economic, and childcare challenges (Office for Civil Rights, 2021).

Specific to the COVID-19 context, several studies have investigated how this abrupt shift to online learning affected students. Most similar to our analysis is Altindag et al. (2021), who use data from a public university to compare the performance of students in in-person versus online courses during the pandemic. Using a student fixed-effects (FE) model, the authors find that students fared better academically in in-person courses than in online courses. In addition, Kofoed et al. (2021) study West Point cadets who were randomly assigned to an online or in-person introductory economics class during the fall 2020 semester and find that students in online courses performed worse than their peers in in-person courses. Aucejo et al. (2020) surveyed undergraduates at Arizona State University about how they expected COVID-19–induced learning disruptions to affect their academic performance. A sizable share of students reported that they anticipated needing to delay graduation, withdraw from classes, or change majors, with lower-income students more likely than their higher-income peers to report that they anticipated delaying graduation. 1

While research to date supports the likely negative impacts of the abrupt shift to online learning, there are also several reasons why this effect may have been smaller than some might expect. For instance, the combined shift to online learning, remote work, and even job loss may have substantially increased the time students had available to invest in their courses. Many colleges also implemented emergency grading policies that could have reduced the effort students needed to pass their courses and make further progress toward their degrees.

We build on this body of research by using two complementary identification strategies to estimate the impact of the abrupt shift to virtual instruction on students’ academic performance across the Virginia Community College System (VCCS). VCCS enrolls approximately 250,000 students per year and is broadly representative of open-access institutions across the country. Our primary identification strategy is a difference-in-differences (DiD) model with instructor-by-course FE in which we compare changes in course completion rates along two dimensions: (1) in-person versus online courses and (2) the spring of 2020 versus recent comparison terms. We classify students enrolled in in-person courses at the start of the spring of 2020 as “treated”; that is, they experienced the sudden shift to online instruction. In our secondary strategy, we estimate a student FE model using a similar DiD framework, which we describe in the “Research Design” section.

The advantage of the instructor-by-course FE model is that it retains a substantially larger (and therefore more generalizable) sample of VCCS students; for this reason, we privilege its results. While the parallel trends assumption generally appears to hold for the instructor-by-course FE model, we do see evidence that the gap in prior online experience between students who started the semester in person and those who started online diminished over time. To the extent that students starting the semester in person in the spring of 2020 had more previous online experience (relative to students starting the semester online) than their counterparts in prior terms, the abrupt shift to online learning during COVID-19 could have had a smaller impact on their academic performance. We discuss these and other threats to identification with the instructor-by-course and student FE models in greater detail in the “Research Design” section. Although we acknowledge that neither identification strategy on its own warrants the strongest causal claims, we believe that our study still makes a meaningful contribution for researchers and policymakers, given the scale of the COVID-19 disruption to higher education, the nascent literature examining its impact on student academic performance, and the consistency of our results across many alternative specifications.

Both identification strategies yield similar conclusions. Using the instructor-by-course FE model, we estimate that the move from in-person to virtual instruction resulted in a 4.9 percentage point (pp) decrease in course completion. This translates to a 6.1% decrease relative to the pre-COVID course completion rate of 80.7% for in-person students. This decrease was driven largely by relative increases in course withdrawal (2.7 pp) and course failure (1.3 pp). The negative impacts were largest for students with lower GPAs or no prior credit accumulation.
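The conversion from a percentage-point effect to a relative effect is simple arithmetic. The short Python sketch below reproduces it from the figures reported above and also checks how much of the completion effect the two reported components (withdrawal and failure) account for. The function and variable names are illustrative only.

```python
def relative_change_pct(effect_pp: float, baseline_pct: float) -> float:
    """Express a percentage-point (pp) effect as a percent of a baseline rate."""
    return 100.0 * effect_pp / baseline_pct

# Figures reported in the text (instructor-by-course FE model).
completion_effect_pp = -4.9   # change in course completion, in pp
baseline_completion = 80.7    # pre-COVID completion rate for in-person students (%)
withdrawal_pp, failure_pp = 2.7, 1.3

relative = relative_change_pct(completion_effect_pp, baseline_completion)
print(round(relative, 1))  # -6.1

# Withdrawal and failure together account for 4.0 of the 4.9 pp decline.
print(round(withdrawal_pp + failure_pp, 1))  # 4.0
```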

In exploratory analyses of students’ academic performance in the following academic year, we find that near-term reductions in academic performance do not appear to have translated into substantial reductions in longer-term persistence or academic performance. Students affected by the shift to virtual instruction in the spring of 2020 were 1.1 pp (1.2%) less likely to reenroll in the following year and earned 0.58 (4.7%) fewer credits.

While the point estimates from the student FE model are smaller than those from the instructor-by-course FE model, both sets of estimates indicate a statistically significant but modest negative impact on course performance from the abrupt shift to virtual instruction.

Our results contribute further evidence on how COVID-19, and specifically the abrupt shift to online education that occurred during the pandemic, affected community college students’ academic performance. Although both identification strategies yield negative estimated impacts of the switch to virtual instruction, these impacts are relatively modest, especially compared with the 19% decline in fall 2020 enrollment among first-year students at community colleges (National Student Clearinghouse, 2020). Our exploratory analysis suggests that these near-term reductions in performance did not translate into substantial declines in persistence or academic performance in the following academic year. Our findings therefore suggest that the highest priority for policy intervention may be to support postsecondary planning among students whose initial college entry was disrupted by COVID-19 as well as to support students whose postsecondary trajectories were interrupted by COVID-19, regardless of instructional delivery format.

Although it is situated in the COVID-19 context, our paper also contributes to the larger body of research on the efficacy of online education. One novel contribution relative to prior research is that we leverage plausibly exogenous variation in a midsemester shift to virtual instruction, whereas previous analyses estimate the impact of online versus in-person learning from the start of a semester. Although an abrupt, nationwide shift to virtual instruction will ideally remain rare, our findings may generalize to student-specific midsemester switches: many speculate that flexible hybrid offerings will continue to be available at many institutions (Anderson, 2021), and disability advocates have pointed to remote learning during COVID-19 as an example of accommodations that should continue to be offered in a postpandemic world (Morris & Anthes, 2021). Interestingly, our results suggest that instructor experience teaching the same class online does not mitigate the negative effects of midsemester shifts to online learning. This pattern implies that aspects of the student experience, more so than the pedagogical challenges that instructors face when teaching online, make the transition difficult. Consistent with prior work, we find that midsemester shifts to virtual instruction had the most pronounced negative effects on students with weaker prior academic performance. Efforts to increase student success in online education, whether from the start of or during the semester, will likely be most important for this population of students.

Literature Review

As we describe in the introduction, a sizable body of research has documented the generally worse learning outcomes that students experience in online versus in-person courses. One important distinction between this research and our paper is that most prior papers exploit variation in whether students enroll in online or in-person courses at the start of a term. Below, we briefly review research on factors that inhibit or promote success in online education, with attention to how a shift to virtual instruction in the middle of the semester may introduce unique challenges for students.

Several factors contribute to students’ academic struggle in online education. Online learning requires a higher degree of autonomy among learners than in-person courses, which may be challenging for academically weaker students or students with nontraditional enrollment trajectories (Corbeil, 2003; Dabbagh et al., 2019). The lack of in-person interaction in online courses can lead to a sense of isolation and disconnectedness from a learning community (Picciano, 2002) and can make it more difficult for students to engage with and learn from peers and instructors (Friesen & Kuskis, 2013; Xu & Jaggars, 2014).

In the face of these challenges, researchers and educators have developed several strategies to promote a greater sense of connection and more interaction in online courses. For instance, Project Compass increased the frequency of synchronous class sessions and promoted more frequent individual interaction between instructors and students (Edmunds et al., 2019). Cung et al. (2018) have investigated the impact of providing students with opportunities for in-person office hours and more frequent digital communication with instructors and have found that these enhancements to student interaction led to stronger performance.

With the abrupt shift to virtual instruction during the COVID-19 pandemic, however, students who had opted to start the semester in in-person courses were perhaps negatively selected on the autonomy required in online courses. Meanwhile, instructors who had to shift to online teaching did not have sufficient advance notice to put in place strategies to increase students’ sense of connectedness and interaction with instructors and peers. Both factors may have contributed to worse academic outcomes for students who started the semester in person than for those who started the term online.

Study Setting

Virginia Community College System

The Virginia Community College System (VCCS) comprises 23 colleges across the commonwealth and, in the 2019–2020 academic year, enrolled 218,985 students. 2 The demographic characteristics of VCCS students are similar to those of the broader community college landscape: at similar institutions, 49% of students are White or Asian and 37% are Black or Hispanic, while VCCS serves a slightly higher percentage of White and Asian students (58%), with 33% Black or Hispanic. 3 Thirty-five percent of students at similar institutions receive Pell grants, compared to 31% at VCCS. The graduation rate within 150% of the expected time to completion is 34% both at VCCS and at similar institutions.

VCCS Online Course Offerings

Online learning is a well-established practice within VCCS, dating back to 1996. Online instruction can take different forms, from synchronous formats in which instructors and students connect virtually in real time, to fully asynchronous instruction administered through a learning management system, to a “hybrid” approach in which the majority (50%–99%) of coursework is completed online synchronously or asynchronously but is coupled with some in-person instruction or assessment. 4 In the 2008–2009 academic year, 38.5% of the student population was enrolled in online learning, either exclusively or coupled with in-person courses. 5 By the 2018–2019 academic year, this number had increased to 55.9%. 6

Changes Within VCCS Due to COVID

In response to the COVID-19 crisis and the governor’s declaration of a state of emergency on March 12, 2020, in-person VCCS courses were moved to virtual instruction. The switch to virtual instruction happened on March 18, 2020, and courses remained virtual through the end of the spring semester, on May 11, 2020. On March 24, the VCCS chancellor announced that an emergency Pass/No Pass grading system would be instituted for the spring 2020 semester. The emergency grading system consisted of four options: P+, indicating that the course credit is transferable and counts toward VCCS degree requirements; P-, indicating that the course credit is not transferable but still counts toward VCCS degree requirements; incomplete; and withdrawal. There were no updates to the financial aspect of the withdrawal policy, meaning that students were not reimbursed for withdrawals after the January 29, 2020, deadline, well before the move to virtual instruction. Although the emergency grading system was the default, students had the option of receiving a traditional letter grade (A–F). In practice, 71% of students chose the traditional grading scale for at least one of their courses.

Research Design

Data

Data for this study come from systemwide administrative records for students enrolled in credit-bearing coursework at a VCCS college. For each term in which a particular student was enrolled, these records contain detailed academic information, including the program of study the student was pursuing (e.g., an associate of arts and sciences degree in liberal arts); the courses and course sections in which the student was enrolled (e.g., ENG 111 taught by Instructor X, MWF 9–10am); the grades the student earned; and any VCCS credentials awarded. The data also contain information about each course and course section, including the modality of instruction (online, in person), an instructor-specific identifier, and basic instructor characteristics (sex, race/ethnicity, full-time versus adjunct status). We also observe basic demographic information about each student as well as National Student Clearinghouse matches starting in 2005.

Analytic Samples

The basis for all sample specifications presented in this paper is student-by-course-level observations from the spring of 2020 and several recent pre-COVID comparison terms (beginning in the spring of 2016). 7 For most of our analyses, we make a set of core restrictions to the sample to focus our attention on college-level students and courses that either were affected by the switch to virtual instruction or that serve as an appropriate comparison. The core restrictions exclude the following observations:

Dual-enrollment students. The transition from in-person to virtual instruction may have been operationalized in a significantly different manner for dual-enrollment classes, as many of these courses are taught in high schools by high school faculty.

Courses offered outside the full session. Although the majority of VCCS courses are offered within the full session, which lasts 15 or 16 weeks and spans January through May (with exact start and end dates depending on the college), some courses are offered during shorter sessions. The shorter sessions during the first half of the spring of 2020 were largely or entirely unaffected by COVID because they ended during March 2020, while the shorter sessions during the second half of the spring of 2020 were fully online, and some students may have decided not to attempt these courses due to COVID.

Developmental courses. The vast majority of developmental courses, which are not credit-bearing, are offered during the abbreviated sessions. Additionally, many VCCS colleges have made meaningful changes to their developmental course policies in recent years, resulting in significant decreases in the share of students required to take such courses.

Courses that could not be switched to virtual instruction, such as clinical or on-site training courses.

Audited courses that students are not taking for credit; this situation is very rare.
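A minimal sketch of the five core restrictions listed above, written in Python over a hypothetical record type. The field names (`dual_enrollment`, `full_session`, and so on) and the course codes other than ENG 111 are illustrative assumptions, not the structure of the VCCS administrative files.

```python
from dataclasses import dataclass

@dataclass
class Enrollment:
    # Hypothetical student-by-course record; field names are illustrative only.
    student_id: str
    course: str
    term: str
    dual_enrollment: bool
    full_session: bool
    developmental: bool
    convertible_to_virtual: bool  # False for clinical or on-site training courses
    audited: bool

def passes_core_restrictions(r: Enrollment) -> bool:
    """Keep a row only if it survives all five exclusions."""
    return (
        not r.dual_enrollment
        and r.full_session
        and not r.developmental
        and r.convertible_to_virtual
        and not r.audited
    )

rows = [
    Enrollment("s1", "ENG 111", "SP2020", False, True, False, True, False),
    Enrollment("s2", "ENG 111", "SP2020", True, True, False, True, False),    # dual enrollment
    Enrollment("s3", "XYZ 135", "SP2020", False, True, False, False, False),  # clinical course
]
kept = [r for r in rows if passes_core_restrictions(r)]
print([r.student_id for r in kept])  # ['s1']
```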

After these core restrictions, the population of VCCS students in full-session, college-level, credit-bearing courses contains 2,159,200 student-by-course-by-semester observations, corresponding to 352,177 unique students. 8 As our samples are defined at the student-by-course level, individual students contribute multiple observations to the sample.

Instructor-by-Course FE Sample

For the instructor-by-course FE specification, we further restrict the sample to students who were enrolled in courses that were taught both online and in person during the spring of 2020 and during at least one of the pre-COVID comparison terms. We use the spring terms from 2016 through 2019 as the pre-COVID comparison terms; we focus on spring terms because the population of VCCS students varies meaningfully between the spring and fall terms, making observations from fall terms less suitable counterfactuals. 9 The instructor-by-course FE sample consists of 537,115 total student-by-course observations from the 2016 through 2020 spring semesters, corresponding to 218,624 unique students.
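The course-level restriction for the instructor-by-course FE sample can be sketched as a set operation over (course, term, modality) records. This is a sketch under stated assumptions: the term labels, the modality coding, and the course XYZ 101 are hypothetical, and the check is applied at the course level, as the text describes.

```python
from collections import defaultdict

# Hypothetical term labels; the text uses spring 2016-2019 as comparison terms.
COMPARISON_TERMS = ("SP2016", "SP2017", "SP2018", "SP2019")
COVID_TERM = "SP2020"
BOTH = {"online", "in_person"}

def instructor_course_sample_courses(rows):
    """Courses taught both online and in person in spring 2020 and in at least
    one pre-COVID spring comparison term.

    rows: iterable of (course, term, modality) tuples.
    """
    offered = defaultdict(set)  # (course, term) -> modalities offered
    for course, term, modality in rows:
        offered[(course, term)].add(modality)
    courses = {course for course, _ in offered}
    return {
        c for c in courses
        if offered.get((c, COVID_TERM), set()) >= BOTH
        and any(offered.get((c, t), set()) >= BOTH for t in COMPARISON_TERMS)
    }

rows = [
    ("ENG 111", "SP2020", "online"), ("ENG 111", "SP2020", "in_person"),
    ("ENG 111", "SP2018", "online"), ("ENG 111", "SP2018", "in_person"),
    ("XYZ 101", "SP2020", "online"), ("XYZ 101", "SP2020", "in_person"),
    ("XYZ 101", "SP2019", "online"),  # never offered in person before 2020
]
print(instructor_course_sample_courses(rows))  # {'ENG 111'}
```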

Student FE Sample

To identify the sample of students for the student FE model, we make the student-level restriction that the students must have been enrolled in online and in-person courses in the spring 2020 semester and at least one pre-COVID comparison term. 10 We use the spring 2018, fall 2018, spring 2019, and fall 2019 semesters as the comparison terms for the student FE sample; because all students in the sample were enrolled in the spring 2020 semester, there is not the same concern about the compositional differences between the spring and fall terms described above. We make the additional course-level restriction that the courses offered in the spring 2020 semester must have been offered in that modality in at least one prior semester. The student FE sample consists of 101,077 total student-by-course observations from the 2018 through 2020 spring and fall semesters, which corresponds to 9,164 unique students.
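The student-level restriction for the student FE sample follows the same pattern, with the student rather than the course as the unit. The sketch below covers only that student-level step; the additional course-level requirement (each spring 2020 course offered in that modality in at least one prior semester) would be a separate filter. Term labels, student IDs, and course codes other than ENG 111 are hypothetical.

```python
from collections import defaultdict

# Hypothetical labels for the student FE comparison terms named in the text.
COMPARISON_TERMS = ("SP2018", "FA2018", "SP2019", "FA2019")
COVID_TERM = "SP2020"
BOTH = {"online", "in_person"}

def student_fe_sample_students(rows):
    """Students who took both online and in-person courses in spring 2020 and in
    at least one comparison term.

    rows: iterable of (student_id, course, term, modality) tuples.
    """
    took = defaultdict(set)  # (student_id, term) -> modalities taken
    for student, _course, term, modality in rows:
        took[(student, term)].add(modality)
    students = {s for s, _ in took}
    return {
        s for s in students
        if took.get((s, COVID_TERM), set()) >= BOTH
        and any(took.get((s, t), set()) >= BOTH for t in COMPARISON_TERMS)
    }

rows = [
    ("s1", "ENG 111", "SP2020", "online"), ("s1", "XYZ 101", "SP2020", "in_person"),
    ("s1", "ENG 112", "FA2019", "online"), ("s1", "XYZ 102", "FA2019", "in_person"),
    ("s2", "ENG 111", "SP2020", "online"), ("s2", "XYZ 101", "SP2020", "in_person"),
    ("s2", "ENG 112", "FA2019", "online"),  # only one modality in the comparison term
]
print(student_fe_sample_students(rows))  # {'s1'}
```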

DiD Models

Our first specification is a DiD model with instructor-by-course FE, represented by the following regression equation:

where

One concern about the instructor-by-course FE approach is that students who started the spring 2020 semester in person versus online may have been differentially affected by COVID-19 in ways unrelated to the shift to virtual instruction. For instance, because in-person students are, on average, younger than online students (see Table 1), in-person students may have been less likely to experience childcare challenges or may have been more likely to experience job loss due to the types of jobs they held. Our complementary estimation strategy in which we use student FE does not suffer from this limitation.

Table 1. Summary Statistics of Students During the Spring 2020 Semester

Note. The “Core restrictions” sample excludes all observations corresponding to dual-enrollment students, developmental courses, audited courses, courses that could not be switched to virtual instruction, and courses offered outside the full session. The instructor-by-course FE sample includes all observations corresponding to courses that were offered online and in person during the spring 2020 semester and offered online and in person during at least one of the comparison terms. The student FE sample includes observations for students who were enrolled in online and in-person courses during the spring 2020 semester and at least one of the comparison terms. All information presented is for students enrolled during the spring 2020 term. In calculating these metrics, we use only one observation per student, as these characteristics are stable at the student level for a given semester. The “Other race” category includes students who identify as American Indian or Alaskan, Hawaiian or Pacific Islander, or two or more races, as well as students whose race is missing. If a student had no prior VCCS enrollment history, their values for previous online enrollment and share of previously attempted credits online are set to 0, but their value for prior cumulative GPA is left as missing.

Our empirical specification for the student FE model is the same as equation (1), except that it omits the student covariates and replaces the instructor-by-course FE with student FE. Our identifying variation for the DiD estimator comes from students who took courses in both modalities (online and in person) during the spring 2020 semester and at least one of the comparison terms, with each student serving as their own comparison along the in-person versus online and COVID versus pre-COVID dimensions.
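The sketch below illustrates the general logic of a DiD regression with fixed effects on simulated data; it is not the exact specification in equation (1). It assumes a generic two-way form: an outcome regressed on an in-person indicator, its interaction with a spring 2020 indicator, group fixed effects, and term fixed effects, all estimated by OLS with dummy variables. Passing instructor-by-course identifiers as the group mimics the first model; passing student identifiers mimics the second. Student covariates are omitted, and all names and parameter values are hypothetical.

```python
import numpy as np

def did_with_fixed_effects(y, in_person, post, group, term):
    """OLS coefficient on in_person * post, absorbing group and term fixed effects
    with dummy variables. `post` is constant within term, so the term dummies absorb it."""
    g_levels = np.unique(group)
    t_levels = np.unique(term)
    group_dummies = (group[:, None] == g_levels[None, :]).astype(float)
    # Drop the first term dummy to avoid collinearity with the full set of group dummies.
    term_dummies = (term[:, None] == t_levels[None, 1:]).astype(float)
    X = np.column_stack([in_person * post, in_person, group_dummies, term_dummies])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[0]

# Simulated data: 50 groups, 5 terms, 20 observations per group-term cell.
rng = np.random.default_rng(0)
n_groups, n_terms, per_cell = 50, 5, 20
group = np.repeat(np.arange(n_groups), n_terms * per_cell)
term = np.tile(np.repeat(np.arange(n_terms), per_cell), n_groups)
in_person = rng.integers(0, 2, size=group.size).astype(float)
post = (term == n_terms - 1).astype(float)  # last term stands in for spring 2020
true_beta = -0.05
y = (
    rng.normal(0.8, 0.05, n_groups)[group]   # group fixed effects
    + rng.normal(0.0, 0.02, n_terms)[term]   # term fixed effects
    + 0.03 * in_person                       # baseline in-person gap
    + true_beta * in_person * post           # DiD effect of interest
    + rng.normal(0.0, 0.01, group.size)      # idiosyncratic noise
)
beta_hat = did_with_fixed_effects(y, in_person, post, group, term)
print(round(float(beta_hat), 3))
```

Because the simulated model matches the regression, the printed estimate should land close to the simulated effect of -0.05; with real data, standard errors would also need to account for clustering, which this sketch does not attempt.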

Testing Model Assumptions

The key identifying assumption for our DiD model is parallel trends in the pre-COVID outcomes for the in-person and online observations. 11 In this context, the parallel trends assumption is that the trend in outcomes for students enrolled online serves as an appropriate counterfactual for students who began in person. 12 We provide evidence to support this assumption by using event studies to test whether the differences in outcomes between online and in-person students were stable across all the pre-COVID periods. 13
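An event study replaces the single in-person-by-spring-2020 interaction with one interaction per term, omitting a reference term; pre-COVID coefficients near zero on these columns are what support parallel trends. The sketch below builds those interaction columns. The reference term used here is an assumption, since the text does not state which term the event studies omit, and the term labels are hypothetical.

```python
def event_study_columns(in_person, terms, reference_term):
    """Build InPerson x 1[term == k] indicators for every term except the reference.

    in_person: list of 0/1 flags; terms: list of term labels of the same length.
    Returns {term label: interaction column}. Labels are sorted lexicographically,
    which orders same-season labels such as "SP2018" < "SP2019" correctly.
    """
    if len(in_person) != len(terms):
        raise ValueError("in_person and terms must have the same length")
    event_terms = sorted(set(terms) - {reference_term})
    return {
        k: [int(ip == 1 and t == k) for ip, t in zip(in_person, terms)]
        for k in event_terms
    }

cols = event_study_columns(
    in_person=[1, 0, 1, 1],
    terms=["SP2018", "SP2018", "SP2019", "SP2020"],
    reference_term="SP2019",  # hypothetical choice of omitted term
)
print(cols)  # {'SP2018': [1, 0, 0, 0], 'SP2020': [0, 0, 0, 1]}
```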

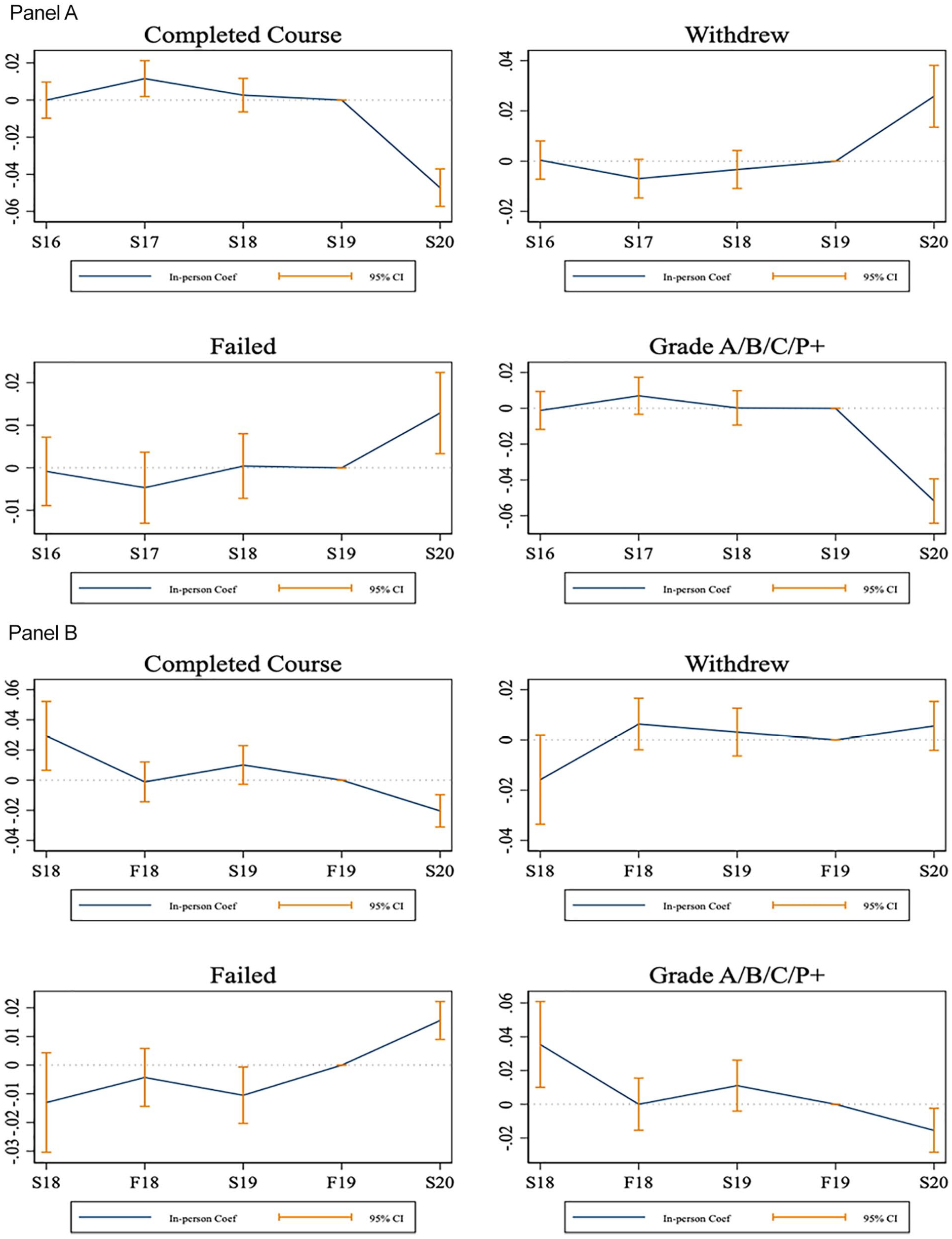

Figure 1, Panel A supports the parallel-trends assumption for the instructor-by-course FE model by showing that the pre-COVID estimates are generally statistically indistinguishable from 0 for the four outcomes. One exception is that the coefficient on

Event study outcome plots, instructor-by-course FE and student FE models.

Figure 1, Panel B shows the corresponding event study plots for the student FE model. We observe that students had better outcomes in in-person versus online courses during the spring 2018 semester. Note that 23% of students in the student FE sample have observations for the spring 2018 semester, and these observations correspond to courses taken considerably earlier in their academic progression. The fall 2018, spring 2019, and fall 2019 terms are more comparable to spring 2020. 14

We suggest two hypotheses for the differential trends in the event studies, which also highlight the complementarity of our two approaches. First, online course offerings increased over the sample period, and it is possible that more difficult courses that were not offered online earlier in the sample were offered online during more recent terms. The instructor-by-course FE model accounts for this potential source of bias, while the student FE model does not. Second, student preferences for online versus in-person courses may have changed over the sample period. For example, students may have been more willing to take more difficult courses online during more recent terms than earlier in the sample. The student FE model accounts for this potential source of bias (assuming time-invariant preferences within student), while the instructor-by-course FE model does not.

We explore these hypotheses in two ways. First, we estimate event studies that use student demographic and academic characteristics as the outcome variable to observe how the student composition in online versus in-person courses may have changed over the study period. In Online Appendix Figure A3, we do see some differential trends, in particular a growing age gap (approximately 0.2 years) between in-person and online students and differential trends in any prior online experience and in the share of previous credits attempted online. The fact that in-person students were more likely than in earlier terms to have online experience in the spring 2020 term calls into question whether earlier comparison terms are an appropriate counterfactual. For example, suppose that a student was deciding whether to take a certain course online or in person. Given the trend in online course enrollment, a student in the spring of 2020 would have been more likely to take that course online, while an otherwise similar student in the spring of 2016 would have been more likely to take it in person.

Second, we present event studies using course attributes as the outcome variable: specifically, an indicator for the course being 200 level, an indicator for the course being in the math department, and the course’s historic average grade and completion rate. 15 Online Appendix Figure A4, Panel A shows that, over time, students in the instructor-by-course sample became increasingly likely to take 200-level courses and math courses in person (relative to online) and increasingly less likely to take courses with higher historic average grades in person. In Panel B, we observe a similar increase in the likelihood that math courses and courses with lower historic completion rates are taken in person. Although these trends do not necessarily represent overall trends in course enrollment among VCCS students, due to the selected nature of the samples and the full set of covariates included in the event study models, they do suggest a relative shift in student preferences toward taking less difficult courses online instead of in person. While the student FE model accounts for any time-invariant unobservable student preferences, the instructor-by-course FE model does not. Therefore, the negative impact of the abrupt shift to online instruction that we estimate with the instructor-by-course FE model may be inflated by this potential source of bias.

Exploring Next-Year Impacts

As we describe above, the identifying variation in our models is defined at the student-by-course level. Because the longer-term outcomes that interest researchers and policymakers (e.g., reenrollment in subsequent terms) are defined at the student level, they are not compatible with the identification strategies described above, which rely on within-instructor-by-course or within-student variation in the modality of instruction.

Given the value of additional evidence on the longer-term impact of the switch to virtual instruction, we estimate the following student-level DiD model:

where we limit the sample to students who were enrolled either fully in person or fully online during term t. The outcomes that we consider are for the following academic year—for instance, for observations from the spring 2020 semester, we construct the outcomes by using records from the summer 2020, fall 2020, and spring 2021 terms. These outcomes include reenrollment, credits earned, whether the student earned a degree, and GPA (conditional on reenrollment). We interpret these results with caution given the event studies included in Online Appendix Figure A5, which shows a downward trend in differential reenrollment for in-person versus online students.
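The construction of the next-year outcomes and the fully-in-person or fully-online restriction can be sketched as follows. This is a minimal sketch assuming a hypothetical (season, year) term representation and a per-student mapping from enrolled terms to credits earned; it covers reenrollment and credits earned only, not degree receipt or GPA.

```python
# Hypothetical term representation: (season, calendar year).
def following_academic_year(season, year):
    """Terms whose records define next-year outcomes for a spring term, as in the
    text: spring 2020 -> summer 2020, fall 2020, spring 2021."""
    if season != "spring":
        raise ValueError("this sketch only handles spring terms")
    return [("summer", year), ("fall", year), ("spring", year + 1)]

def modality_group(term_t_modalities):
    """'online' or 'in_person' if all term-t courses share one modality; else None (excluded)."""
    kinds = set(term_t_modalities)
    return kinds.pop() if len(kinds) == 1 else None

def next_year_outcomes(credits_by_term, season, year):
    """Reenrollment indicator and credits earned over the following academic year.

    credits_by_term maps (season, year) -> credits earned, for terms enrolled only.
    """
    window = following_academic_year(season, year)
    enrolled = [t for t in window if t in credits_by_term]
    return {
        "reenrolled": int(bool(enrolled)),
        "credits_earned": sum(credits_by_term[t] for t in enrolled),
    }

student = {("spring", 2020): 12.0, ("fall", 2020): 6.0}
print(modality_group(["in_person", "in_person"]))   # in_person
print(next_year_outcomes(student, "spring", 2020))  # {'reenrolled': 1, 'credits_earned': 6.0}
```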

Exploring Grading Leniency

One major constraint in the interpretation of our results is that the switch to virtual instruction was coupled with a formal emergency grading policy and could in parallel have been coupled with more lenient grading practices by instructors. We explore the extent to which grading leniency took place during the spring 2020 term by comparing the grades assigned within courses taught by the same instructor online during the spring 2019 and the spring 2020 semesters. Specifically, we estimate the following version of equation (1):

Assuming that instructors extended the same degree of grading leniency to students who were already online as to those who switched to virtual instruction, the coefficient estimate for

Results

Summary Statistics

In Table 1, we present summary statistics for select student-level characteristics from the spring 2020 term for the full VCCS population (column 1); the sample after the core restrictions described above (column 2); the core-restrictions sample split into students enrolled in person and online (columns 3 and 4); and the analytic samples for the instructor-by-course and student FE models (columns 5 and 6). The data in this table are collapsed to the student level: if a student appears in a sample multiple times, we include only one of those observations, because these demographic and academic characteristics are constant for each student within a given semester. We present an alternative version in Online Appendix Table A2, which summarizes the data at the student-by-course level. Comparing the columns of Table 1, we see that students in the instructor-by-course and student FE samples are slightly younger than those in the overall samples; the instructor-by-course sample has slightly higher shares of Black and Hispanic students, while the student FE sample has a significantly higher share of White students. Students in the instructor-by-course FE sample have academic histories similar to those of students in the overall sample, with slightly lower cumulative GPAs and fewer accumulated credits, and they are slightly more likely to have previously taken online courses at VCCS. Because the sample construction requires prior enrollment, students in the student FE sample have significantly different academic histories from those in the overall and instructor-by-course samples: they have higher average GPAs, nearly double the number of accumulated credits, and a larger share of previously attempted credits taken online.
In terms of programs of study, students in the instructor-by-course and student FE samples are more likely to be pursuing liberal arts and transfer-oriented degree programs and less likely to be pursuing applied or vocational/technical programs. This pattern likely reflects differences across programs of study in course requirements and in the availability of online course offerings.
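The student-level collapse described above can be illustrated with a minimal sketch. The record layout, field names, and values below are hypothetical, not the VCCS data schema. The sketch assumes that, as stated, student characteristics are constant within the semester, so keeping the first row seen for each student loses no information. Filtering by modality before collapsing means a student enrolled in both modalities is counted once in each modality-specific column.

```python
# Hypothetical student-by-course records:
# (student_id, course_id, modality, age, cumulative_gpa)
records = [
    ("s1", "ENG111", "in_person", 19, 2.8),
    ("s1", "MTH154", "online", 19, 2.8),
    ("s2", "BIO101", "online", 34, 3.5),
    ("s3", "HIS121", "in_person", 22, None),  # no prior GPA
]


def collapse_to_students(rows, modality=None):
    """Keep one row per student, optionally restricted to one modality.

    Student-level traits are assumed constant within the semester, so the
    first row encountered for each student is kept and later ones are skipped.
    """
    students = {}
    for student_id, _course_id, mode, age, gpa in rows:
        if modality is not None and mode != modality:
            continue
        students.setdefault(student_id, {"age": age, "cum_gpa": gpa})
    return students


all_students = collapse_to_students(records)           # analogous to columns 1-2
in_person = collapse_to_students(records, "in_person")  # analogous to column 3
online = collapse_to_students(records, "online")        # analogous to column 4
```

Here student `s1` appears once in `all_students` but in both `in_person` and `online`.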

Columns (3) and (4) of Table 1 compare the characteristics of students who were enrolled in in-person versus online courses. Note that if a student was enrolled in both modalities in the spring 2020 term, they are represented in both columns. Online students are older, are more likely to be female and White, and have higher GPAs and more credits accumulated. Not surprisingly, online students are 53% more likely to have previously taken an online course at VCCS and have attempted a higher share of previous credits online. Finally, online students are slightly more likely to be pursuing applied degree and certificate programs.

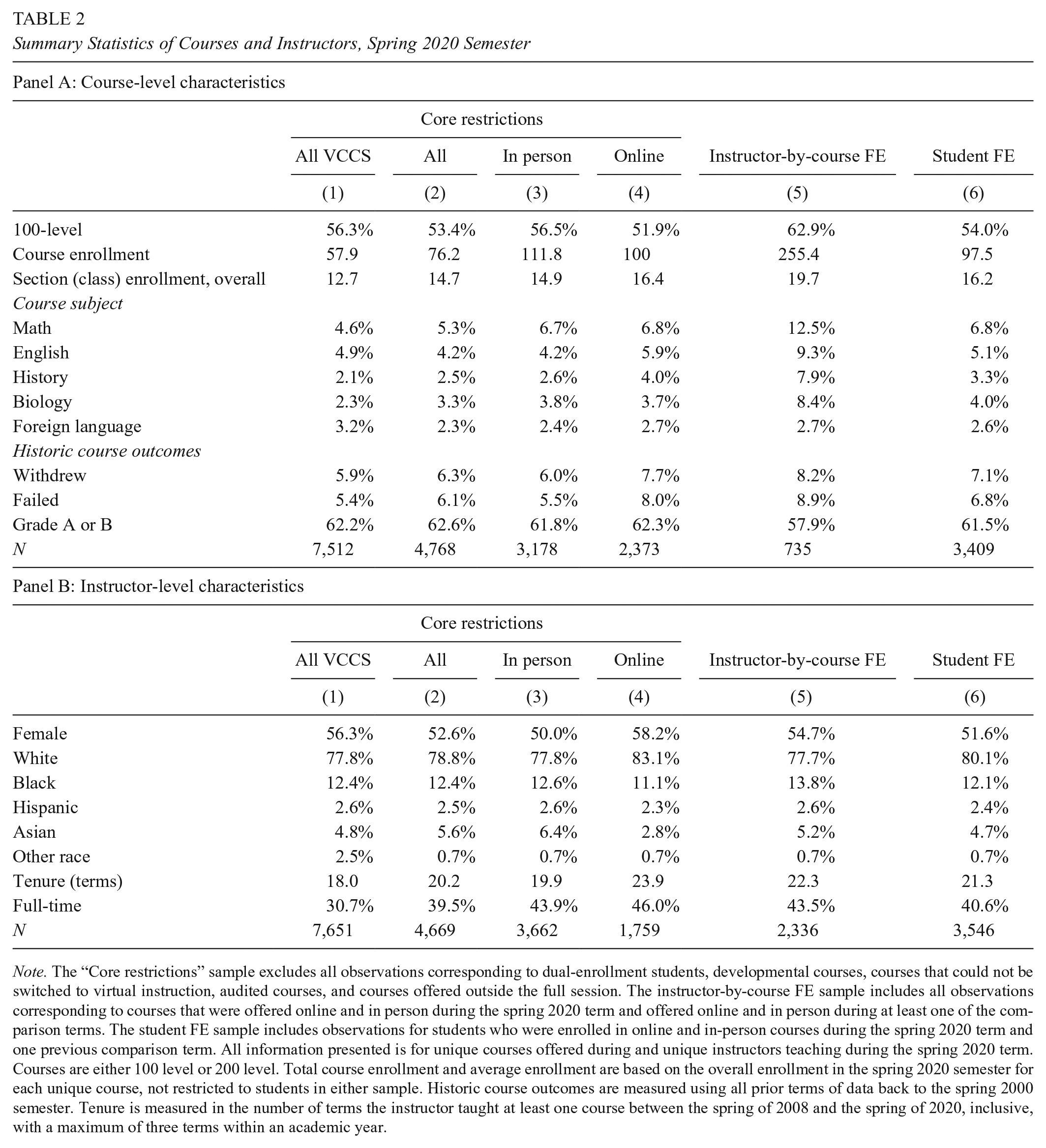

Table 2 compares the characteristics of the courses, including the characteristics of the instructors who taught them, in the overall samples with those in the instructor-by-course and student FE samples. As with Table 1, we present these statistics only for the unique course observations in each sample, and we include the student-by-course level summary in Online Appendix Table A2. The instructor-by-course FE sample contains a larger share of 100-level courses (versus 200-level), a larger share of “general education” courses (math, English, history, and biology), and courses with larger class sizes. Instructors in the instructor-by-course and student FE samples have slightly longer tenures than those in the overall samples but are otherwise similar.

Summary Statistics of Courses and Instructors, Spring 2020 Semester

Note. The “Core restrictions” sample excludes all observations corresponding to dual-enrollment students, developmental courses, courses that could not be switched to virtual instruction, audited courses, and courses offered outside the full session. The instructor-by-course FE sample includes all observations corresponding to courses that were offered both online and in person during the spring 2020 term and during at least one of the comparison terms. The student FE sample includes observations for students who were enrolled in both online and in-person courses during the spring 2020 term and one previous comparison term. All information presented is for unique courses offered and unique instructors teaching during the spring 2020 term. Courses are either 100 level or 200 level. Total course enrollment and average enrollment are based on the overall enrollment in the spring 2020 semester for each unique course, not restricted to students in either sample. Historic course outcomes are measured using all prior terms of data back to the spring 2000 semester. Tenure is measured as the number of terms in which the instructor taught at least one course between the spring of 2008 and the spring of 2020, inclusive, with a maximum of three terms within an academic year.
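The tenure definition in the note can be sketched as follows. Several details are assumptions not stated in the note: terms are represented as hypothetical `(calendar_year, season)` pairs, seasons are ordered spring < summer < fall within a calendar year, and an academic year is taken to begin in the fall (so spring 2020 belongs to the 2019–20 academic year). Duplicate entries for the same term, such as an instructor teaching several courses in one term, count once.

```python
SEASON_ORDER = {"spring": 0, "summer": 1, "fall": 2}


def term_key(year, season):
    """Sortable key for a (calendar_year, season) term."""
    return (year, SEASON_ORDER[season])


def academic_year(year, season):
    # Assumption: the academic year starts in fall, so spring and summer
    # terms belong to the academic year that began the previous fall.
    return year if season == "fall" else year - 1


def instructor_tenure(terms_taught, start=(2008, "spring"), end=(2020, "spring")):
    """Count distinct terms taught within [start, end], capped at 3 per academic year."""
    lo, hi = term_key(*start), term_key(*end)
    seasons_by_year = {}
    for year, season in set(terms_taught):  # set() drops repeated terms
        if lo <= term_key(year, season) <= hi:
            seasons_by_year.setdefault(academic_year(year, season), set()).add(season)
    return sum(min(len(seasons), 3) for seasons in seasons_by_year.values())


# Fall 2007 and summer 2020 fall outside the window; spring 2008 is listed twice.
example_terms = [(2007, "fall"), (2008, "spring"), (2008, "spring"),
                 (2019, "fall"), (2020, "spring"), (2020, "summer")]
tenure = instructor_tenure(example_terms)
```

With only three seasons per year the cap cannot bind in this sketch. It is kept so the code mirrors the stated rule if finer-grained term codes were used as input.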

Changes in Grading During COVID-19

An important contextual factor in interpreting the impact of the midsemester shift to online learning is how grading at VCCS institutions changed during COVID-19 relative to prior terms. Figure 2 shows the distribution of grades for student-by-course observations in the instructor-by-course and student FE samples across two dimensions: (1) online versus in-person courses and (2) the spring 2019 term versus the spring 2020 term. The pre-COVID distribution of grades for online students is more concentrated at the tails than that for in-person students, with a larger share of online students earning As, Fs, or Ws. In both samples, the spring 2020 term shows a significant reduction in failing grades and a significant increase in withdrawals for online and in-person students alike. The decrease in failing grades is likely due to a combination of positive selection into the A–F scale (students who expected to perform well were more likely to keep letter grades rather than take the emergency grades) and more lenient grading practices by VCCS instructors. The grades P+ and P- appear only in the spring 2020 semester, as part of VCCS’s emergency grading policy.
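The grade distributions in Figure 2 are shares of each grade within a modality-by-term cell. A minimal sketch of that tabulation follows, using hypothetical observations rather than VCCS data.

```python
from collections import Counter

# Hypothetical student-by-course observations: (modality, term, grade)
observations = [
    ("online", "spring2019", "A"), ("online", "spring2019", "B"),
    ("online", "spring2019", "F"), ("online", "spring2019", "W"),
    ("in_person", "spring2020", "A"), ("in_person", "spring2020", "P+"),
    ("in_person", "spring2020", "W"), ("in_person", "spring2020", "W"),
]


def grade_shares(rows):
    """Return {(modality, term): {grade: share}} with shares summing to 1 per cell."""
    counts = {}
    for modality, term, grade in rows:
        counts.setdefault((modality, term), Counter())[grade] += 1
    return {
        cell: {grade: n / sum(c.values()) for grade, n in c.items()}
        for cell, c in counts.items()
    }


shares = grade_shares(observations)
```

Emergency grades such as P+ show up only in spring 2020 cells, because no earlier observation carries them.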

Distribution of grades in the spring 2019 and spring 2020 terms, by instructional modality.

Online Appendix Table A3 shows the results from our exploratory grade leniency model in equation (3), which compares student outcomes in online courses taught by the same instructor in the spring 2019 and spring 2020 terms. Compared with students enrolled in the same courses in the spring 2019 term, online students in the spring 2020 term were similarly likely to complete the course or to earn at least a C (columns 1 and 4); 2 pp (23%) more likely to withdraw, although this estimate is not statistically significant (column 2); and significantly less likely to fail the course (3.2 pp, or 27%). Overall, this analysis suggests that grading leniency operated mainly at the fail / do-not-fail margin, whereas nonacademic COVID-related impacts operated mainly at the withdrawal margin. If instructors extended similar leniency to students who started the term in person, these results suggest that significantly more of those students would have failed in the absence of more lenient grading.
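The paper reports effects both in percentage points (pp) and as percentages. The arithmetic linking the two can be shown with a short sketch. It assumes the percentages are relative to the comparison-term mean of the outcome, and because the reported figures are rounded, the implied baselines are only approximate.

```python
def relative_change(pp_effect, baseline_pct):
    """Percent change implied by a pp effect, given the baseline rate in percent."""
    return 100 * pp_effect / baseline_pct


def implied_baseline(pp_effect, relative_pct):
    """Baseline rate (percent) implied by a pp effect and its relative size."""
    return 100 * pp_effect / relative_pct


# 3.2 pp fewer failures, reported as 27%: implies a baseline failure rate near 11.9%.
fail_baseline = implied_baseline(3.2, 27)
# 2 pp more withdrawals, reported as 23%: implies a baseline withdrawal rate near 8.7%.
withdraw_baseline = implied_baseline(2.0, 23)
```

The same conversion applies to the other estimates. For example, `implied_baseline(2.5, 2.8)` gives a pre-COVID completion rate of roughly 89% for the student FE result reported in Panel B.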

Impact Estimates of the Shift to Online Learning

We present our main results from equation (1) in Panel A of Table 3, focusing our discussion on the DiD estimator

DiD Estimates of the Impact of Switching to Virtual Instruction

Note. Within each panel, each column represents a separate regression by using the model specified in the text, with the outcome variable as noted in the column header. The course completion outcome is equal to 1 if the student earned a grade of A−D, P+, or P- and is equal to 0 if the student earned a grade of F, I, or W. ***p < 0.01. **p < 0.05. *p < 0.1.
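The course completion outcome defined in the note maps each final grade to a binary indicator. The sketch below assumes grades are stored as the plain strings "A" through "D", "F", "I", "W", "P+", and "P-". Any other code raises an error instead of being silently classified, because audited courses and other grades are outside the definition.

```python
COMPLETED = {"A", "B", "C", "D", "P+", "P-"}
NOT_COMPLETED = {"F", "I", "W"}


def course_completion(grade):
    """Return 1 if the grade counts as completing the course, 0 if not."""
    if grade in COMPLETED:
        return 1
    if grade in NOT_COMPLETED:
        return 0
    raise ValueError(f"grade {grade!r} is outside the completion definition")
```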

In Panel B, we present results from the student FE model. Here, we see a smaller negative impact on course completion (2.5 pp, or 2.8%) and no effect on course withdrawal. Instead, the effect on course completion is driven entirely by an increase in course failure (a 34% increase relative to the pre-COVID mean). One possible explanation for this pattern is that students in the student FE sample, who were enrolled in both online and in-person courses at the beginning of the spring 2020 term, were more likely to opt out of the emergency grading policy and “stick it out” until the end of the term because they felt more confident in their ability to navigate online coursework. Nevertheless, the transition to online learning still reduced these students’ likelihood of earning credit for the course.

The instructor-by-course FE estimates differ from those in the student FE model, but this is expected, because the two samples are quite different, as shown in Table 1. Students in the student FE sample had longer enrollment histories, which means that they are positively selected for higher performance: they have already demonstrated some persistence in college. These students also had current and prior experience with online coursework, which likely smoothed the transition to virtual instruction in their in-person courses.
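Both models share the same difference-in-differences logic. Students who began spring 2020 in person (and were switched online midsemester) are compared with students who were online from the start, before versus during spring 2020. A stylized 2×2 version using raw cell means is sketched below. The numbers are hypothetical, and the paper's regressions add instructor-by-course or student fixed effects and covariates, so their estimates will not equal this raw contrast.

```python
def did_2x2(means):
    """Difference-in-differences from cell means.

    means maps (group, period) to the mean outcome, where group is
    "in_person" (switched online midsemester) or "online" (comparison)
    and period is "pre" (a comparison term) or "post" (spring 2020).
    """
    change_switched = means[("in_person", "post")] - means[("in_person", "pre")]
    change_online = means[("online", "post")] - means[("online", "pre")]
    return change_switched - change_online


# Hypothetical course completion rates (shares, not VCCS estimates).
example_means = {
    ("in_person", "pre"): 0.85, ("in_person", "post"): 0.80,
    ("online", "pre"): 0.78, ("online", "post"): 0.79,
}
estimate = did_2x2(example_means)  # about -0.06, i.e., a 6 pp decline
```

Differencing out the online group's change removes term-wide shocks, such as the pandemic itself, that affected both groups. This is why the estimate isolates the modality switch rather than the pandemic's overall effect.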

Subgroup Impacts

We test for differential impacts across student subgroups according to prior academic history. Table 4 shows the impact estimates on course completion for the academic subgroups, with each column showing the results from a separate regression with the sample limited to students in the particular subgroup listed in the column heading. For both the instructor-by-course FE (Panel A) and student FE (Panel B) models, we observe the largest impacts for students with a baseline GPA in the bottom third of the distribution; the DiD estimates across GPA terciles are statistically distinguishable from each other. Similarly, we observe significantly larger impacts for students with fewer credits accumulated compared to students who had previously earned at least 30 credits. These first two comparisons show that higher-performing and more experienced students were less affected by the switch to virtual instruction compared to lower-performing and less experienced students. This result is in line with prior research that finds that random assignment to a hybrid course with an online component led to worse outcomes for lower-performing students but had no negative impact among higher-performing students (Joyce et al., 2015). One explanation is that higher-performing students typically have better self-regulatory behaviors, which are thought to be particularly important for success in an online learning environment (see Li et al., 2020, for a thorough review).
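The text does not specify how subgroup estimates were compared. One common approach, shown here only as an illustrative assumption and not necessarily the authors' procedure, is a z-test on the difference between two coefficients from separate regressions, treating the estimates as independent. Subgroups defined by GPA terciles are disjoint, but students in different subgroups share instructors and courses, so independence is only an approximation. A pooled model with subgroup interactions would avoid that assumption.

```python
import math


def diff_z_test(b1, se1, b2, se2):
    """Two-sided z-test of H0: b1 == b2, assuming independent estimates."""
    z = (b1 - b2) / math.sqrt(se1 ** 2 + se2 ** 2)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical estimates: bottom GPA tercile vs. top GPA tercile.
z, p = diff_z_test(-0.08, 0.01, -0.02, 0.01)
```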

Academic Subgroup-Specific Impacts on Course Completion

Note. Each column within each panel represents a separate regression using the models specified in the text, which use the outcome of course completion, restricted to the subgroup denoted by the column headers. Note that students with no prior cumulative GPA are not included in the first three columns. By construction of the sample, there are insufficient observations in the student FE sample with zero prior credits accumulated, and all observations had prior online experience. ***p < 0.01. **p < 0.05. *p < 0.1.

We also estimate impacts based on prior experience with online learning (for the instructor-by-course FE model only, because all students in the student FE sample had prior online experience). We observe larger impacts for students who had no online learning experience at VCCS before the spring 2020 semester than for those who did. This is intuitive: students who had prior experience with online learning may have found the abrupt transition during the spring 2020 semester somewhat easier than those who had never experienced an online learning environment.

Online Appendix Table A4 presents additional results for the demographic subgroups. We observe more negative impacts for male students and for students currently receiving Pell grants (both statistically distinguishable in the instructor-by-course model), although we do not find meaningful differential effects by age, race/ethnicity, or enrollment intensity.

Next-Year Impacts

Online Appendix Table A5 shows the results from our exploratory next-year DiD model in equation (2), which compares next-year outcomes for students enrolled fully in person versus fully online. We find statistically significant but substantively small effects on persistence: students affected by the virtual shift were 1.1 pp (1.2%) less likely to reenroll in the following year and earned 0.58 (4.7%) fewer credits. We see no impact on degree completion in the following year or on GPA (the latter conditional on enrollment). While we caution against interpreting these results too strongly given the patterns in Online Appendix Figure A5, they do suggest that the switch to online instruction had minimal next-year impacts on VCCS students. However, it is worth reiterating that this is not a statement about the pandemic’s overall impacts on college students’ outcomes; it concerns only the change in instructional modality.

Alternative Specifications

Given the selected nature of our analytic samples and the large set of covariates and FE in our regression models, we test the robustness of our estimates to different specifications. We present the robustness estimates of the DiD coefficient for the instructor-by-course FE and student FE models in Panels A and B, respectively, of Online Appendix Table A6.


We begin in Panel A, column (1) with the full sample of all VCCS students from the spring 2016 through the spring 2020 semesters (fall terms included) with no controls, other than

We test four additional sample definitions in the remaining columns of Online Appendix Table A6. First, we restrict the sample to instructors who taught the same course in both modalities in the spring 2020 term and at least one comparison term. The DiD estimate in column (15) is slightly larger, at 6.5 pp. Because this sample includes only instructors who had prior experience teaching the course online, this result suggests that the persistent negative impact is driven by students’ struggles with the shift to virtual learning rather than by instructors’. Next, column (16) excludes hybrid courses from the sample. The DiD estimate is slightly larger, at 6.2 pp (7.8%), suggesting that students in hybrid courses experienced some negative impact of the shift to virtual instruction. We caution against a strong interpretation of this result, however, because the classification of hybrid versus online courses in our sample differs across colleges and over time. When we restrict the main instructor-by-course analytic sample to students who were enrolled either fully online or fully in person (column 17), we find a similar result (5.6 pp, or 7%). Finally, when we estimate the fully specified model on the sample of all VCCS students (column 18), we obtain a coefficient similar to that in column (1). Panel B follows the same pattern, although some columns are not applicable to the student FE model.

Discussion

Using two complementary estimation strategies, we demonstrate that the abrupt shift to online learning as a result of the COVID-19 crisis led to a modest decrease in course completion among community college students in Virginia. This decrease in completion rates occurred despite suggestive evidence of more lenient grading in the context of the pandemic. This negative effect was particularly pronounced for lower-performing and less experienced students. The subgroup-specific patterns suggest that, consistent with prior research on the efficacy of online learning, institutions and instructors likely need to target outreach and support efforts after midsemester shifts to online learning to students who are most likely to struggle with virtual learning.

Moreover, our results indicate that instructors’ familiarity with online teaching did not mitigate the negative impact for in-person students. Instead, the impacts appear to have been driven by student struggles with the shift to online learning. Faced with a similar need to abruptly shift students to online learning in the middle of future semesters, colleges and instructors may want to prioritize strategies that ease the transition from in-person environments and that foster a stronger sense of community and connection. These efforts could include some of the approaches that researchers have tested for improving online students’ success and that we describe in our literature review, such as increasing the frequency of synchronous class sessions and promoting more frequent individual interaction between instructors and students.

The declines in spring 2020 performance resulting from the abrupt midsemester shift to online learning were modest compared with the large year-over-year declines in initial college enrollment, particularly among lower-income student populations. Our exploratory analysis also suggests that these near-term reductions in performance were not accompanied by substantial reductions in students’ longer-term persistence or academic performance. A higher priority for policy intervention coming out of the COVID-19 context may be to encourage initial postsecondary participation among students whose college entry was disrupted by COVID-19 and to provide reenrollment supports to students whose postsecondary progress was interrupted by COVID-19–related factors independent of the abrupt shift to online learning.

Supplemental Material


Supplemental material, sj-docx-1-jis-10.1177_23328584221081220 for Negative Impacts From the Shift to Online Learning During the COVID-19 Crisis: Evidence From a Statewide Community College System by Kelli A. Bird, Benjamin L. Castleman and Gabrielle Lohner in AERA Open

Footnotes

Acknowledgements

We are grateful for our partnership with the Virginia Community College System, and in particular Dr. Catherine Finnegan. We thank Alex Smith for valuable conversations, and we appreciate feedback from anonymous reviewers. Any errors are our own.

Notes

Authors

References
