Abstract

Cochlear implant (CI) users are usually poor at using timing information to detect changes in either pitch or sound location. This deficit occurs even for listeners with good speech perception and even when the speech processor is bypassed to present simple, idealized stimuli to one or more electrodes. This article presents seven expert opinion pieces on the likely neural bases for these limitations, the extent to which they are modifiable by sensory experience and training, and the most promising ways to overcome them in future. The article combines insights from physiology and psychophysics in cochlear-implanted humans and animals, highlights areas of agreement and controversy, and proposes new experiments that could resolve areas of disagreement.

Introduction

Cochlear implants (CIs) have proven remarkably successful at restoring speech perception to severely and profoundly deaf people, at least in quiet situations. Under such conditions the more successful listeners achieve good open-set speech perception, despite the rather coarse representation of the speech signal provided by their device(s). Most contemporary CI processing strategies extract the envelope in each frequency band and use it to amplitude-modulate a fixed-rate pulse train applied to the corresponding electrode of the implant, thereby discarding the temporal fine structure (TFS) in the waveform. Combined with the spread of current along the cochlea and across the auditory nerve (AN) array, this means that the brain must extract speech from a slowly varying and rather blurred neural excitation pattern. Hence, in addition to the substantial clinical benefits, the remarkable success of many CI listeners in this task informs our understanding of how the brain does, or at least can, process speech (cf. Shannon et al., 1995).

Unfortunately, even when CI listeners show good speech perception in quiet, they usually perform poorly on two tasks that depend on the processing of fine timing information. One task involves the use of temporal information to perceive pitch, which is important for the perception of melody, prosody, and for the comprehension of tonal languages. A second task concerns the use of interaural time differences (ITDs) to localize sounds, which is a major problem for bilaterally implanted listeners. Figure 1 illustrates how the removal of TFS cues by CI processors may impair pitch and ITD perception. Figure 1a shows the output of the Advanced Combination Encoder (ACE) processing strategy (Vandali et al., 2000) to a piano note having a fundamental frequency (F0) of 110 Hz. It can be seen that the F0 is encoded by the envelope in several frequency bands but that the envelopes are often quite shallow and not aligned across channels. The shallow modulation results from reverberation due to room acoustics and from the limited number of harmonics falling within each analysis band. This latter factor is illustrated in Figure 1b, which plots the output of a subset of channels to notes with F0s of 110, 220, and 440 Hz, and shows the reduced modulation depth with increasing F0; in addition, CI processors usually low-pass filter the envelope in each channel, thereby further contributing to the reduction in modulation depth with increasing F0.
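The contribution of envelope low-pass filtering to the loss of modulation depth can be illustrated with a minimal sketch. It assumes a first-order low-pass filter with a hypothetical 200 Hz cutoff (not taken from ACE or any other real processor) and treats the channel envelope as a single sinusoidal modulation at F0; the function names are illustrative only.

```python
import math

def lowpass_gain(freq_hz, cutoff_hz):
    """Magnitude response of a first-order low-pass filter at freq_hz."""
    return 1.0 / math.sqrt(1.0 + (freq_hz / cutoff_hz) ** 2)

def filtered_modulation_depth(f0_hz, input_depth=1.0, cutoff_hz=200.0):
    """Modulation depth at F0 after envelope smoothing.

    cutoff_hz is a hypothetical value chosen for illustration.
    The mean (DC) level passes unchanged, so the depth of a sinusoidal
    modulation is simply scaled by the filter gain at F0.
    """
    return input_depth * lowpass_gain(f0_hz, cutoff_hz)

# The three F0s plotted in Figure 1b.
for f0 in (110.0, 220.0, 440.0):
    depth = filtered_modulation_depth(f0)
    print(f"F0 = {f0:5.0f} Hz -> modulation depth {depth:.2f}")
```

Under these assumptions the modulation depth falls monotonically as F0 rises, which is the qualitative trend described for Figure 1b; the actual values depend on the filter used by a given processor.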

(a) Electrodogram showing the output of the ACE processing strategy to a piano note having an F0 of 110 Hz. The period of the waveform, equal to 9.1 ms, is shown by the solid horizontal line. (b) Zoomed-in versions of the outputs of channels 8–15 for notes with F0s of 110, 220, and 440 Hz. (c) Schematic of the output of one analysis filter of CIs in the left (blue) and right (red) ear of a bilaterally implanted listener, where each output consists of a single amplitude-modulated sinusoid. Note that the fine structure and envelope both lead on the left ear relative to the right ear. (d) Pulse trains in the two ears resulting from the filtered waveforms in part (c). Note that because the outputs of the two CIs are not synchronized, the fine structure can lead on the right ear, as illustrated here, even though the direction of the envelope ITD is conveyed correctly. ACE = Advanced Combination Encoder; CI = cochlear implant; ITD = interaural time difference.

Figure 1c illustrates the bandpass filtered waveform of one channel of a CI that passes only one frequency component of the input, together with the same plot for the contralateral CI of a bilaterally implanted listener; the resulting pulse trains are shown in Figure 1d. Although the envelope ITD is represented in the pulse train, the carrier pulse trains in the two ears are unsynchronized with their own arbitrary ITD. Furthermore, tiny differences between the clock rates of the two processors can cause this irrelevant carrier ITD to vary over time (not shown), confounding the CI patient's spatial perception.
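The drift of the carrier ITD caused by mismatched processor clocks can be sketched as follows. The pulse rate (900 pps) and clock mismatch (50 parts per million) are hypothetical values chosen for illustration, and the calculation is a first-order approximation in which one clock simply gains time linearly on the other.

```python
def carrier_itd_us(t_s, pulse_rate_pps=900.0, clock_mismatch_ppm=50.0,
                   initial_offset_us=0.0):
    """Carrier ITD (microseconds) at time t_s for two unsynchronized processors.

    All default values are hypothetical. One processor runs at the nominal
    rate and the other is faster by clock_mismatch_ppm parts per million.
    The result is wrapped into [-period/2, +period/2), because a carrier
    offset is only defined modulo the inter-pulse period.
    """
    period_us = 1e6 / pulse_rate_pps
    # A mismatch of 1 ppm accumulates 1 microsecond of offset per second.
    drift_us = initial_offset_us + clock_mismatch_ppm * t_s
    return (drift_us + period_us / 2.0) % period_us - period_us / 2.0

for t in (0.0, 1.0, 5.0, 10.0, 12.0, 20.0):
    print(f"t = {t:4.1f} s -> carrier ITD {carrier_itd_us(t):8.1f} us")
```

With these assumed values the carrier ITD grows by 50 µs every second and changes sign once it exceeds half the inter-pulse period, so the side that the carrier favors alternates over time while the envelope ITD stays fixed.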

The effects of contemporary CI processors on the cues necessary for pitch and ITD perception have prompted some companies to develop alternative strategies that preserve TFS on a subset of apical (low-frequency) channels (Büchner et al., 2008; Dhanasingh & Hochmair, 2021). Unfortunately, deficits in the temporal coding of pitch and ITDs by CI listeners persist even in experimental settings where the speech processor is bypassed and highly simplified stimuli are presented to one electrode (for pitch perception) or pair of electrodes (for ITD processing). These limitations have been the subject of experimental investigation for several decades (Shannon, 1983; Townshend et al., 1987; van Hoesel & Clark, 1997) and are described in detail in the following sections. Figure 2 compares pulse-rate discrimination thresholds as a function of the rate of a pulse train applied to a single CI electrode to pure-tone frequency discrimination thresholds for normally hearing (NH) listeners. The CI thresholds are not only much higher than the NH pure-tone thresholds, but also increase steeply with increasing pulse rate. Indeed, pitch-ranking studies reveal that, for most CI listeners, pitch does not increase with increases in pulse rate above some value, which is typically about 300 Hz but that varies across listeners, reaching 800–900 Hz in a small number of cases (dashed line with arrows at the bottom of Figure 2a; see also e.g., Kong & Carlyon, 2010). This “upper limit” raises the possibility that the CI rate-discrimination thresholds shown in Figure 2 at very high rates may be based on a percept other than pitch, and this point will be discussed in the next two sections of the present article.

Rate difference limens (DLs) for pulse trains presented to one CI electrode are shown before and after extensive training by the red and blue symbols, respectively. The yellow line shows pure-tone DLs in NH. The dashed line with arrows at the bottom of the plot shows the range of “upper limits” for rate discrimination in the study by Carlyon et al. (2019). The purple bar shows the range of DLs for rate discrimination of bandpass-filtered pulse trains observed in a range of NH studies. CI = cochlear implant; NH = normally hearing.

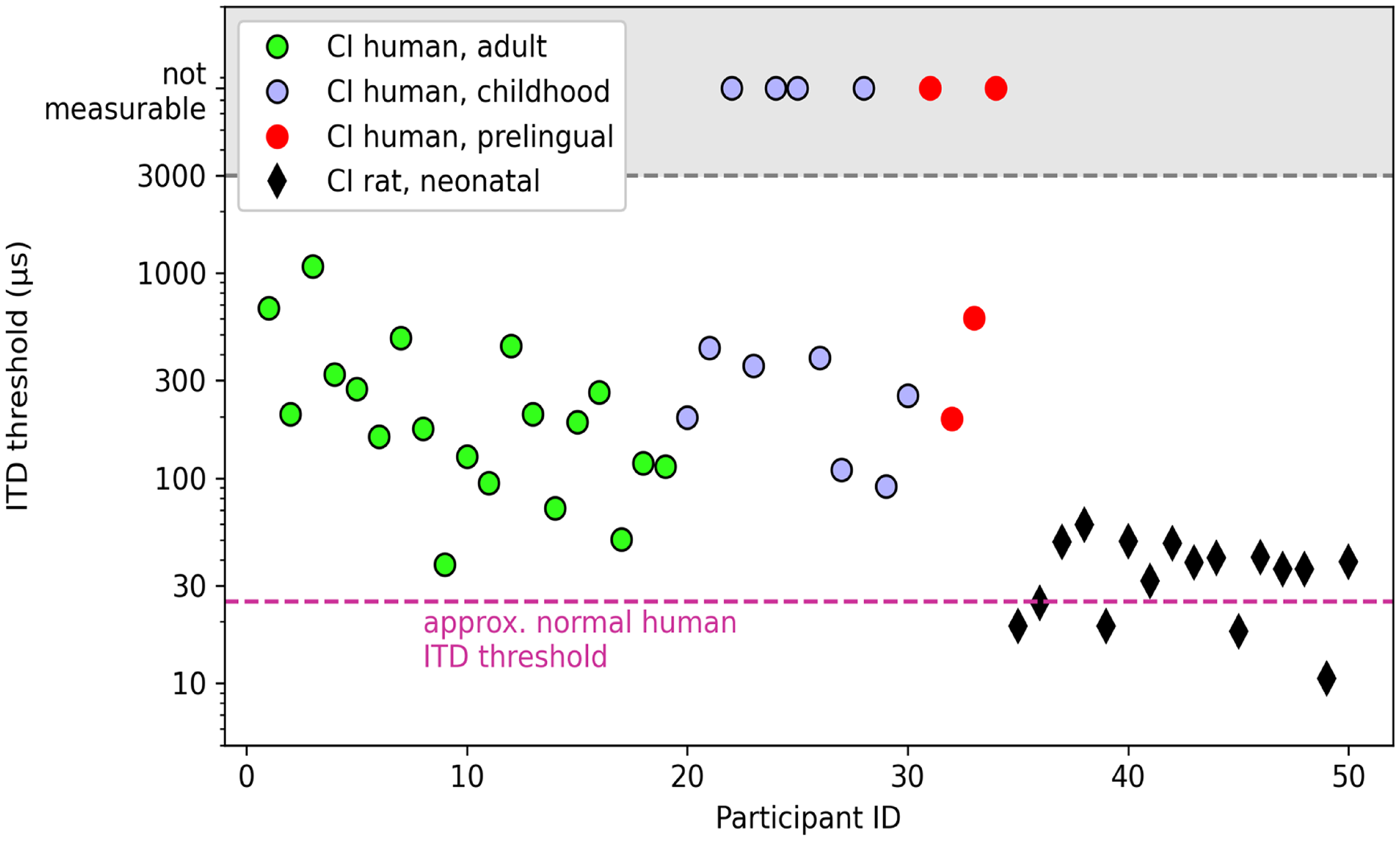

ITD processing by bilaterally implanted listeners is also worse than in NH listeners, again even for idealized stimuli, and deteriorates with increases in pulse rate, as illustrated in Figure 3 for data reported by van Hoesel et al. (2009), and summarized for a wide range of studies in the review by Laback et al. (2015). Also similar to monaural rate discrimination, ITD discrimination varies markedly across listeners, both in terms of the overall size of thresholds and of the upper limit. For example, although ITD discrimination thresholds typically increase markedly or become unmeasurable for rates above about 300 pulses per second (pps; Figure 3), some exceptional CI users are sensitive to ongoing ITDs at considerably higher rates (e.g., 600 pps for two listeners in van Hoesel et al., 2009 and one of the four listeners in Laback et al., 2007).

Blue lines: ITD thresholds as a function of pulse rate from a study by van Hoesel et al. (2009) that bypassed the speech processor and presented simple pulse trains to CI listeners. Red lines: ITD thresholds as a function of the rate of bandpass-filtered acoustic pulse trains presented to NH listeners (van Hoesel et al., 2009). Faint lines show data from individual participants; solid lines show broken-stick fits to the mean data from each study. Yellow shaded area: data obtained from pure tones presented to NH listeners (Brughera et al., 2013).

The data summarized in Figures 2 and 3 raise an interesting conundrum that, in a sense, is opposite to the issue of how CI listeners can achieve good speech perception from a highly degraded peripheral representation: Why is the sensitivity of CI listeners to changes in pulse rate and in ITD so poor, even when clear and unambiguous information is presented? To address this issue, each section of the present article provides an opinion piece from one of seven research groups with expertise in the temporal processing of CI stimulation. Each section provides a different perspective on the problem, focusing primarily either on physiological data from animals or on human psychophysics, and on either pitch or ITD processing. However, although the perspectives differ, each contributor was asked to address the same set of questions. This approach was inspired by the multi-author “lack of consensus” article by Verschooten et al. (2019) on the controversy surrounding the highest frequency at which phase-locking is important for pitch perception in NH. It differs from that article not only in addressing monaural and binaural temporal processing in response to electrical stimulation by CI listeners rather than to acoustic stimulation in NH, but also in focusing on the roots of the limitations in temporal processing both at low and at high stimulation rates. The result here was a combination of converging evidence from different disciplines and authors on some issues and disagreement on others.

The first question posed to all contributors was: To what extent are the limits on CI users’ use of purely temporal cues to perceive the pitch and spatial location of sounds (a) due to a fundamental biological limitation, and (b) modified by the presence and type of electrical stimulation that they have experienced? Answers to part (a) addressed questions such as the neural stage(s) at which limitations in TFS processing likely arise, and whether the limitations are specific to electrical stimulation per se. Part (b) addressed the important issue of plasticity and of whether the removal of TFS by speech processors has limited the processing of fine timing cues by CI listeners, even when those processors are bypassed. The answers to both parts of the question are intertwined, because the neural locus of the limitations may inform the likelihood of their modification by experience, and because the presence of neural plasticity may constrain the likely neural locus. In practical, clinical terms, the question could be rephrased as two thought experiments. First, if we provided newly implanted congenitally deaf infants with processors that accurately conveyed pitch and ITD TFS cues, how good would their perception of those cues be in adulthood, compared to people who had only ever been fitted with conventional processors, when the processor is removed and idealized stimuli are presented to the CI electrodes? Second, in adult CI listeners, to what extent could sensitivity to these cues, assessed using idealized stimuli, be restored either by continued exposure to TFS-preserving processors or by extensive training? As with the early research on speech perception, the answers to these questions not only have clear and important clinical implications, but also provide insights into auditory processing and into sensory plasticity in general.

We next invited authors to answer the question: What would change your mind? We expected some controversy concerning the role of plasticity in particular, and so considered it important, to quote Verschooten et al. (2019), “to put the authors on the spot: for them to demonstrate that their theoretical position is falsifiable (and hence is science rather than dogma), and to commit them to changing their mind, should the results turn against them.”

The last question asked of each author was: What if anything can be done to improve the temporal processing of pitch and localization cues by CI listeners? It is related to our question about plasticity to the extent that improvements can be achieved by early exposure to, or training with, TFS cues, but extends the debate to other methods, such as changes to speech-processing strategies or to the method of stimulating the electrode array. Here the authors bring their scientific knowledge to explore the reasons for the limited success of existing attempts to improve pitch and ITD processing, and to propose modifications or replacements to those attempts.

Our final section summarizes the areas of agreement and highlights the areas of controversy. To aid the reader we provide a short bullet-point summary of each contributor's main arguments, focusing on the issues where there is most disagreement, namely the role of auditory experience and the potential for overcoming these temporal limitations. We hope that both the consensus and controversy summarized here will prove informative both to academic research groups and to CI companies in their efforts to improve hearing outcomes for CI listeners.

Bob Carlyon and John Deeks

To What Extent Are the Limits on CI Users’ Use of Purely Temporal Cues to Perceive the Pitch and Spatial Location of Sounds

(a) Due to a Fundamental Biological Limitation?

As noted in the Introduction, processing of fine timing cues by CI listeners is impaired even when the speech processor is bypassed and idealized stimuli are presented to a single electrode. The pitches of such stimuli rise with increases in pulse rate only up to some “upper limit,” which varies across listeners but is typically in the range 300–500 pps (e.g., Kong et al., 2009; Townshend et al., 1987; dashed line with arrows in Figure 2a). Similar findings have been observed with other periodic electrical stimuli such as sinusoids and amplitude-modulated (AM) pulse trains (Kong et al., 2009; Shannon, 1983). This contrasts with the situation in NH where many researchers believe that phase-locking to pure tones contributes to pitch perception up to at least 1000 Hz, with some arguing that it does so up to about 8000 Hz (Verschooten et al., 2019; but see Oxenham et al., 2011). Even for pulse rates of about 100–150 pps, where CI listeners’ detection of rate changes is best, difference limens (DLs) are typically in the range 5%–20%, considerably larger than the DLs of less than 1% for 125 Hz pure tones that have been reported in NH (e.g., Moore, 1973; see summary in Figure 2). Processing of ITDs also deteriorates at high rates (Figure 3); here we focus on pitch perception and refer the reader to the sections of this article that focus on ITD limitations.

A useful source of information on the nature of the neural limitations on temporal pitch perception in CIs comes from experiments with NH listeners, using acoustic pulse trains that have been band-pass filtered so that their frequency spectra contain only the high-numbered harmonics of the pulse rate that are “unresolved” by the peripheral auditory system. Such stimuli share some significant features with electric pulse trains presented to a CI electrode, including stimulation of mid-to-basal regions of the cochlea and that changes in pulse rate do not produce detectable changes in the place of excitation. Experiments that manipulated the relative amplitude or timing of different pulses have reported very similar effects of these manipulations on pitch for acoustic (NH) and electric (CI) stimuli (Carlyon et al., 2002; van Wieringen et al., 2003). We therefore believe that filtered acoustic pulse trains presented to NH listeners provide a good model of “optimal” perception of electric pulse trains presented to a CI, in the absence of damage to the auditory system or of auditory deprivation.

As illustrated by the purple bar in Figure 2, rate DLs for low-rate (e.g., 100 pps) acoustic pulse trains that have been bandpass filtered into a high-frequency region are roughly similar (5%–10%) to those observed for electric pulse trains presented to the best-performing CI listeners (Carlyon & Deeks, 2002; Moore & Carlyon, 2005), showing that at these rates the limitations in temporal sensitivity are not specific to electrical stimulation. The comparison between electric and acoustic pulse trains for the upper limit of pitch is complicated by the fact that, for high pulse rates, acoustic pulse trains can contain harmonics that are resolved by the auditory system, leading to the presence of place-of-excitation cues. This complication can be partially overcome by summing the harmonics of pulse trains in so-called alternating (“ALT”) phase, which produces pulse rates equal to double the F0. Macherey and Carlyon (2014) required NH listeners to pitch-rank bandpass-filtered harmonic complexes of different F0s, summed in either sine or ALT phase. Pitch ranks for the ALT-phase stimulus increased up to an F0 of 315 Hz, at which point the pitch was equal to that of a sine-phase complex with F0 = 630 Hz. This shows that the highest pitch that can be produced by purely temporal cues in NH hearing is at least 630 Hz. At higher F0s, pitch ranks for the ALT-phase stimulus may have been affected by basilar-membrane filtering, and so the true limit may or may not be higher. The highest upper limit that is observed for the best-performing CI listeners, which is of course unaffected by basilar-membrane filtering, is about 800–900 pps (Kong & Carlyon, 2010). Note however that although pitch may increase with increases in rate up to 800–900 pps, this does not mean that CI listeners hear a pitch equal to 800–900 Hz. Rather, it is possible (and indeed likely) that over some range the slope of the function relating pitch to pulse rate decreases but is not zero.
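The statement that ALT-phase summation doubles the pulse rate can be checked with a small numerical sketch. It assumes the common convention that odd harmonics are in sine phase and even harmonics in cosine phase, and uses a hypothetical F0 of 100 Hz with equal-amplitude harmonics 20 to 39; neither value comes from Macherey and Carlyon (2014). The envelope is computed as the magnitude of the analytic signal, which can be written down directly because every component is known.

```python
import cmath
import math

def envelope(t_s, f0_hz, harmonics, phase="sine"):
    """Envelope (magnitude of the analytic signal) of a harmonic complex.

    phase="sine": all harmonics in sine phase.
    phase="alt":  odd harmonics in sine phase, even harmonics in cosine
                  phase (the convention assumed in this sketch).
    """
    total = 0j
    for k in harmonics:
        component = cmath.exp(2j * math.pi * k * f0_hz * t_s)
        if phase == "sine" or k % 2 == 1:
            component *= -1j  # sin(x) is the real part of -1j * exp(1j * x)
        total += component
    return abs(total)

f0 = 100.0                       # hypothetical F0
harmonics = list(range(20, 40))  # hypothetical passband of high harmonics
period = 1.0 / f0

for phase in ("sine", "alt"):
    at_zero = envelope(0.0, f0, harmonics, phase)
    at_half = envelope(period / 2.0, f0, harmonics, phase)
    print(f"{phase:>4} phase: envelope at t=0 is {at_zero:.2f}, "
          f"at half a period is {at_half:.2f}")
```

In this sketch the sine-phase envelope is large once per period and collapses half a period later, whereas the ALT-phase envelope is equally large at both instants, consistent with an envelope rate of twice the F0 (for example, 630 pps for an F0 of 315 Hz).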

We conclude that, at least at low rates, the limitations on the temporal processing of the best-performing CI listeners broadly resemble those observed in NH listeners presented with analogous stimuli. Hence, the limitations are not specific to electrical stimulation per se. However, performance can vary substantially between listeners and even between electrodes in the same listener, suggesting that there are additional factors that can degrade temporal processing, which we now consider with particular reference to the upper limit.

An important fact is that the upper limit differs between electrodes in the same CI (e.g., Cosentino et al., 2016), thereby demonstrating that poor performance cannot be attributed to a general problem with pitch perception. This conclusion is bolstered by the findings of Ihlefeld et al. (2015), who measured ITD discrimination as a function of pulse rate for three place-pitch-matched pairs of electrodes in each of seven bilaterally implanted listeners. They also measured rate discrimination for each of the six electrodes. Performance on both tasks deteriorated with increasing rate, as expected. Importantly, performance (d’) on the ITD task could to some extent be predicted by the lower of the d’ scores for monaural rate discrimination in the corresponding electrodes in each ear. They concluded that the variation in upper limit of pitch across electrodes at least partially shares a basis with that for a non-pitch task, namely lateralization. An obvious locus for this variation could lie in between-electrode differences in the temporal fidelity of the AN response. However, there is also evidence that the upper limit can be limited by processing central to the AN. Carlyon and Deeks (2015) measured electrically evoked compound action potentials (ECAPs) and rate discrimination with the same stimuli and same group of CI participants, and presented a preliminary analysis showing that the timing of the ECAPs was sufficiently accurate to support rate discrimination even for rates above the upper limit and for which discrimination was at chance. This finding, obtained with human CI listeners who had been deaf for many years, is broadly consistent with the excellent AN phase-locking to electrical stimulation in recently deafened animals, as discussed in the sections by Vollmer and Ohl, Delgutte and Chung, and Kral and Tillein.

(b) Modified by the Presence and Type of Electrical Stimulation That They Have Experienced?

Physiological data from animals, reviewed elsewhere in this article, suggest that the neural coding of pulse rate and of ITD can be significantly affected by auditory deprivation and by the presence and type of chronic electrical stimulation that has been provided, particularly early in life. However, data on the effects of early stimulation on monaural temporal coding in humans are quite sparse, and the effects have not been studied with enough participants for firm conclusions to be drawn. For example, Busby et al. (1993) tested four patients who had been deafened before the age of 3 and four adult-deafened patients on a rate-discrimination task, and found that three of the adult-deafened patients performed better than the early-deafened patients but that one did not.

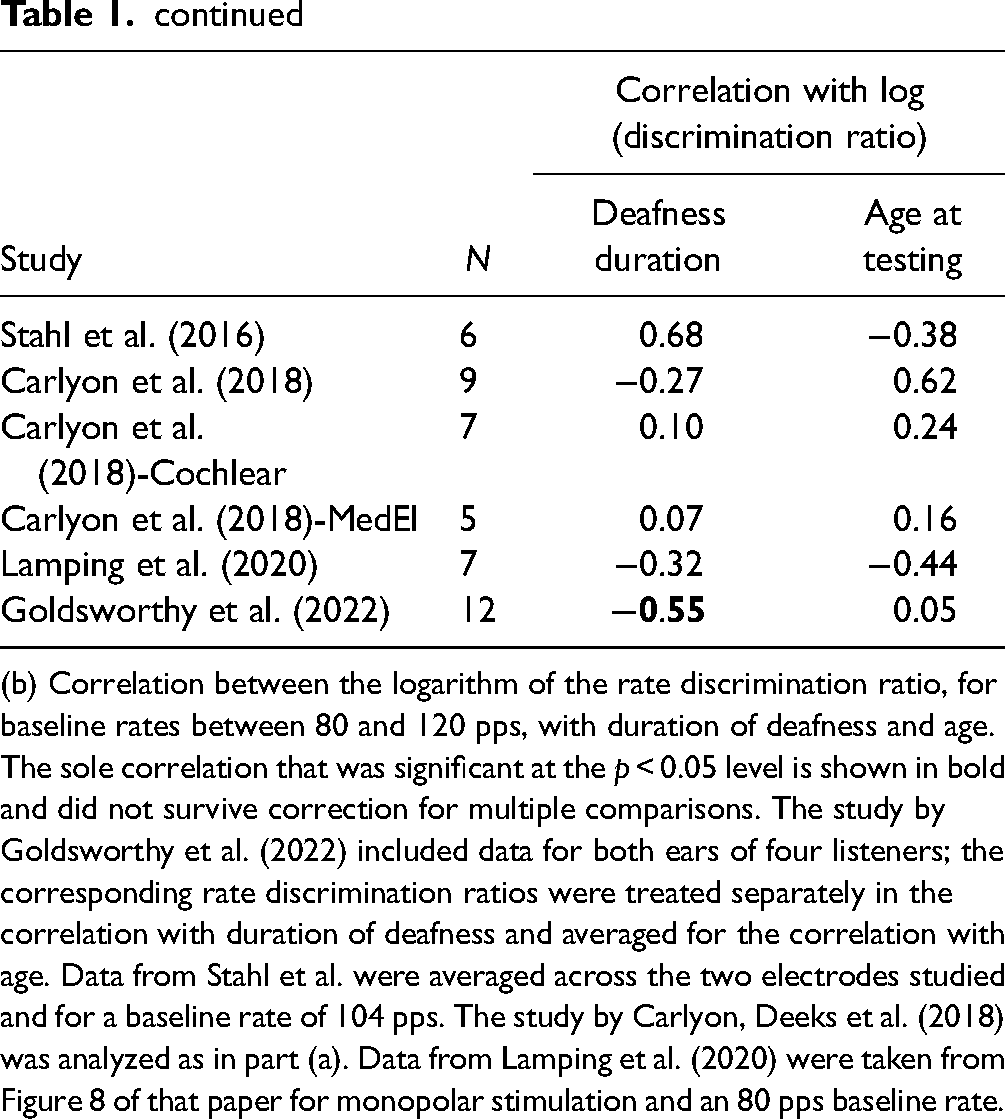

There are more data available on the effects of deprivation and stimulation in adulthood. Cosentino et al. (2016) reported a correlation between duration of deafness and the upper limit of temporal pitch in nine CI participants. This value of N is modest and so we combined data from six studies from our laboratory (total N = 50), all of which used the optimally efficient MidPoint Comparison (MPC) ranking procedure to estimate the upper limit of pitch, and for which we had also recorded the duration of deafness for each participant (Table 1). We then performed a univariate analysis of covariance with upper limit as the dependent variable, study as a fixed factor, and duration of deafness as a covariate. This allowed us to estimate the correlation between upper limit and duration of deafness whilst removing the across-study differences that may have arisen from variations in the stimuli, methods, and participants. The effect of study was significant (F[6, 42] = 3.73, p = .005), likely reflecting differences in the maximum pulse rate presented in the different studies, but the effect of duration of deafness was not significant (F[1, 42] = 0.21, p = .65, r = −.07). A similar analysis using age at testing as a covariate also failed to reveal an effect (F[1, 42] = 0.214, p = .65, r = −.07). Finally, we analyzed data on rate-discrimination thresholds for a pulse rate close to 100 pps in five studies from different laboratories (total N = 45). Again there was a significant effect of study (F[5, 38] = 2.58, p = .042) but not of duration of deafness (F[1, 38] = 0.82, p = .37). When we analyzed the four studies where participant age was reported, the effect of age was once more not significant (F[1, 34] = 0.00, p = .98). Hence, we do not have any evidence that either the upper limit of temporal pitch or rate discrimination at low rates in adult CI listeners varies reliably as a function of the duration of deafness. It remains possible, however, that such an effect would have been observed in experiments designed to examine these factors and that therefore included a wider range of deafness durations and ages.
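The logic of estimating a correlation while removing across-study differences can be sketched in a few lines. The sketch assumes a model with study as the only factor and a single covariate, in which case the partial correlation equals the Pearson correlation computed after subtracting each study's mean from both variables, and the covariate's F statistic follows from that correlation and the error degrees of freedom. The data below are invented purely for illustration and are not the values in Table 1; the function names are hypothetical.

```python
import math
from collections import defaultdict

def within_study_correlation(records):
    """Pearson correlation after subtracting each study's mean from both variables.

    records: iterable of (study, covariate, outcome) tuples.
    """
    by_study = defaultdict(list)
    for study, x, y in records:
        by_study[study].append((x, y))

    dx, dy = [], []
    for rows in by_study.values():
        mean_x = sum(x for x, _ in rows) / len(rows)
        mean_y = sum(y for _, y in rows) / len(rows)
        dx.extend(x - mean_x for x, _ in rows)
        dy.extend(y - mean_y for _, y in rows)

    sxy = sum(a * b for a, b in zip(dx, dy))
    sxx = sum(a * a for a in dx)
    syy = sum(b * b for b in dy)
    return sxy / math.sqrt(sxx * syy)

def covariate_f_from_partial_r(r, df_error):
    """F(1, df_error) for a single covariate, given its partial correlation."""
    return (r * r) / (1.0 - r * r) * df_error

# Invented data: (study, duration of deafness in years, upper limit in pps).
demo = [
    ("A", 1.0, 10.0), ("A", 2.0, 11.0), ("A", 3.0, 12.0),
    ("B", 11.0, 0.0), ("B", 12.0, 1.0), ("B", 13.0, 2.0),
]
print("within-study r:", within_study_correlation(demo))

# Consistency check on the values reported in the text (r = -.07, 42 error df).
print("F implied by r = -.07:", round(covariate_f_from_partial_r(-0.07, 42), 2))
```

In the invented data the pooled relationship is negative only because the two studies differ in their means, whereas the within-study relationship is positive, which is the kind of confound that including study as a factor removes. Applying the second function to the reported r of −.07 with 42 error degrees of freedom gives a value close to the reported F of 0.21.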

Summary of Studies Included in the Meta-Analyses Described in the Section by Carlyon & Deeks.

(a) Correlation between the upper limit of pitch and both duration of deafness and age for each study. The sole correlation that was significant at the p < 0.05 level is shown in bold and did not survive correction for multiple comparisons. The study by Carlyon et al. (2018) was a longitudinal study of a pharmaceutical intervention; data from sessions 1 and 3 (baseline and post wash-out) were averaged and analyzed separately for the Cochlear and MedEl participants. The study by de Groote et al. (2024) measured the upper limit for pulse trains applied to the most-apical electrode of the MedEl device. Data for the study by Lamping et al. (2020) were taken from the condition with monopolar stimuli presented to the most-apical electrode of the Advanced Bionics implant.


(b) Correlation between the logarithm of the rate discrimination ratio, for baseline rates between 80 and 120 pps, with duration of deafness and age. The sole correlation that was significant at the p < 0.05 level is shown in bold and did not survive correction for multiple comparisons. The study by Goldsworthy et al. (2022) included data for both ears of four listeners; the corresponding rate discrimination ratios were treated separately in the correlation with duration of deafness and averaged for the correlation with age. Data from Stahl et al. were averaged across the two electrodes studied and for a baseline rate of 104 pps. The study by Carlyon, Deeks et al. (2018) was analyzed as in part (a). Data from Lamping et al. (2020) were taken from Figure 8 of that paper for monopolar stimulation and an 80 pps baseline rate.

Correlational analyses are limited in that they cannot demonstrate causality. A longitudinal study by Carlyon et al. (2019) reported an increase in the upper limit between the day a participant's CI was first switched on compared to 2 months later. However, they noted that the stimulus level, equal to the most comfortable level at each session, had also increased, and so could not rule out the possibility that the improvement was due either to the increase in level or to practice. This latter issue is pertinent to another approach, adopted by Goldsworthy and colleagues (Bissmeyer et al., 2020; Goldsworthy & Shannon, 2014), who showed that rate discrimination by experienced CI listeners could be significantly improved by extensive training (Figure 2). Our view is that improvement with training has been reported for almost all tasks, can occur for many reasons (Ortiz & Wright, 2010), and does not necessarily reflect sensory plasticity. For example, participants may become more familiar with the experimental procedure, learn to use cues such as loudness or timbre that may co-vary with the temporal features of the stimulus, or become adept at “perceptual strategies” such as off-frequency listening in the measurement of psychophysical tuning curves observed in NH studies (Moore et al., 1984). Furthermore, if the especially poor rate discrimination at high rates (compared to lower rates) was due to speech processors failing to provide fast temporal fluctuations, then we might expect training effects to be larger at high than at low rates. This is because although CI speech processors do not preserve TFS, they do present pulse trains that are amplitude modulated at rates up to (but not beyond) a few hundred Hz (Figure 1b), and because listeners are sensitive to differences in those AM rates (e.g., Chatterjee & Oberzut, 2011). 
However, improvement on the rate discrimination task was either similar at all rates (Goldsworthy & Shannon, 2014; Figure 2a) or only significant at low pulse rates (Bissmeyer et al., 2020).

Finally, it is worth noting that variations in the upper limit and in ITD coding between electrodes, described above, reflect significant limitations on temporal processing that are unlikely to be driven by differences in exposure to CI processing strategies or to the duration of deafness, and that will likely limit the effectiveness of new attempts to improve sensitivity to these cues.

What Would Change My Mind?

We are not convinced that there is evidence that training or long-term exposure to TFS cues in adulthood can improve temporal pitch processing. An important consideration when evaluating the effects of any manipulation, including training, on pitch perception is the nature of the psychophysical task. This is especially the case when, as with temporal pitch at high rates in CI listeners, the pitch percept is weak, and the participant may learn to perform the task using other cues. Procedures involving forced-choice tasks are likely to be susceptible to the use of extraneous cues when the same or similar standard stimulus is presented on every trial, when correct-answer feedback is provided, and/or an odd-one-out trial structure is employed. Procedures such as the MPC that do not provide feedback and where different pairs of stimuli are presented on each trial are less susceptible to these effects, and so improvements in the upper limit with training/exposure are less likely to be attributable to the use of extraneous cues. Even so, one cannot completely rule out practice effects and either of two other approaches would be needed to convince us that training or extended exposure in adulthood can genuinely improve temporal pitch processing. One would be a change in an objective measure of phase-locking, for example, the electrically evoked frequency following response (Gransier et al., 2024). The other would be a selective transfer of training—for example, showing that improvements in a rate discrimination task transferred more strongly to ITD discrimination than to monaural electrode discrimination in bilaterally implanted listeners.

What If Anything Can Be Done to Improve the Temporal Processing of Pitch and Localization Cues by CI Listeners?

Although there are biological limits on temporal coding by CI listeners, it is also true that speech-processing strategies are far from optimized for pitch perception. Experimental approaches that enhance the modulations in each frequency channel and/or align the modulations across channels have produced modest but significant improvements in pitch perception with small groups of participants and may be worth more formal investigation in larger-scale trials (Francart et al., 2015; Lawrence, 1953; Milczynski et al., 2009; Vandali et al., 2019). Another approach, implemented commercially in some strategies, has been to present the TFS from the most-apical channels to the corresponding apical electrodes. This leads to different patterns of TFS being presented to each electrode, and so current spread could lead to apical neurons responding with a complex temporal pattern. We believe that a clearer pitch might emerge if the same pulse rate, perhaps derived from a real-time F0 tracker, was applied to these electrodes (de Groote et al., 2024).

Attempts to increase the upper limit and/or to improve pitch perception at low rates in CI listeners will depend on why performance is poor to begin with for these stimuli. One possible explanation arises from the observation that the traveling-wave delay for pure tones in NH is absent with electrical stimuli, and that the auditory system might “correct” for a delay that is not present with electrical stimulation. This would cause different parts of the excitation pattern to be processed with different delays, thereby blurring the temporal representation of each pulse (Šodan et al., 2024). If so, then stimulation methods that produce narrow excitation patterns might improve temporal coding. However, at least with existing technology, the maximum temporal processing expected from CI listeners is likely to be limited to that of NH listeners presented with analogous stimuli, which still falls short of that experienced by NH listeners in everyday situations. Finally, selective excitation of the apical AN might activate a pathway that is specialized for accurate temporal processing, as suggested by recordings from the cat inferior colliculus (IC; Middlebrooks & Snyder, 2010). Selective apical stimulation is unfortunately not available with existing CIs, because the only (MedEl) device that has an electrode array that reaches the apex only supports monopolar stimulation. Psychophysical studies that compared stimulation of the most-apical electrode of long MedEl arrays with more-basal stimulation reveal improved rate discrimination at low rates but no increase in the upper limit (de Groote et al., 2024; Stahl et al., 2016). A further limitation of traditional (intra-scalar) CIs in humans comes from the limited extent of Rosenthal's canal, meaning that the pattern of apical stimulation will significantly depend on the survival and trajectories of peripheral processes (Kalkman et al., 2014). 
However, further investigations using electrodes that directly contact the AN in cats (Richardson et al., 2024) and humans (Adams & Lenarz, 2023) are currently in progress.

Ray Goldsworthy

To What Extent Are the Limits on CI Users’ Use of Purely Temporal Cues to Perceive the Pitch and Spatial Location of Sounds

(a) Due to a Fundamental Biological Limitation?

Pitch perception and sound localization vary widely across CI users. For example, people born deaf who receive CIs after the age of 3 or 4 years generally have just noticeable differences for pitch of around 4 semitones (∼25%) (Zaltz et al., 2018). In contrast, many CI users with a history of normal hearing can discriminate pitches less than a semitone apart for a wide range of simple and complex sounds (Goldsworthy, 2015; Looi et al., 2012). This diversity in outcomes is consistent with animal studies of long- and short-term effects of deafness (Fallon et al., 2014a, 2014b). Specifically, severe abnormalities in the auditory pathways occur with early postnatal deprivation, whereas these effects are reduced in mature animals with previous auditory experience (Hancock et al., 2010; Powell & Erulkar, 1962; Webster, 1983). Consequently, individual differences in hearing loss history affect the physiological limits of pitch and sound localization based purely on temporal cues.

The neural circuitry for temporal processing is exquisite. Golding and Oertel (2012) described how dendritic filtering of octopus cells of the cochlear nucleus (CN) compensates for traveling wave delays across AN fibers responding to broadband sounds. They also described how principal cells of the medial superior olive detect coincident activation of tuned neurons from the two ears through separate dendritic tufts. Pajevic et al. (2014) summarized how conduction velocity, mediated by myelin, provides an additional mechanism of activity-dependent nervous system plasticity. These mechanisms of temporal processing, dendritic filtering, and regulation of conduction time along axons are sensitive to auditory deprivation (Long et al., 2018). Thus, while temporal encoding of electrical stimulation is highly synchronized in the AN, it is likely that downstream processing is degraded by synaptic degeneration and myelin pathology. Critically, however, there is evidence that neuronal activity promotes oligodendrocyte progenitor cell proliferation and myelin formation along axons throughout the mammalian lifespan (Chapman & Hill, 2020; Sinclair et al., 2017; Williamson & Lyons, 2018). The extent to which stimulus-driven plasticity across the lifespan can overcome deficits caused by hearing loss and sensory deprivation is unknown.

(b) Modified by the Presence and Type of Electrical Stimulation That They Have Experienced?

There is evidence that the neural mechanisms that support temporal processing in the auditory system degenerate with sensory deprivation (Fallon et al., 2014b), but there is also evidence that experience-driven plasticity persists throughout the lifespan (Long et al., 2018; Seidl, 2014; Seidl et al., 2010; Sinclair et al., 2017). The extent to which electrical stimulation can modify the biological limits of pitch and localization based on temporal cues depends on the fidelity of the cues provided. Most CIs do not use stimulation timing to convey acoustic TFS (Goldsworthy, 2022; Goldsworthy & Bissmeyer, 2023; Svirsky, 2017). Consequently, there remains much uncertainty whether timing cues can be learned, or relearned, as a cue for pitch and localization.

My Estimate of Best Outcomes

A frequently discussed limit of timing cues for CIs is the upper limit of pitch based on stimulation rate. Many studies have reported that CI users hear pitch only weakly, or not at all, for pulse rates above 300 Hz (Carlyon et al., 2010; Shannon, 1983; Tong et al., 1982; Zeng, 2002). This upper limit strongly contrasts with the upper limit of usable TFS in normal hearing described by Verschooten et al. (2019). In that article, expert opinions of the upper limit of usable TFS in normal hearing ranged from 1500 Hz to 10 kHz. If any of those experts is correct, then an upper limit of 300 Hz for CI users is a considerable and unfortunate loss of information. I was a graduate student when I first learned that CI users could not hear pitch associated with pulse rates above 300 Hz. As a CI user, I immediately wanted to hear this for myself. After I received the training to perform such experiments, I started exploring my own limits of pitch based on pulse rate. When I first started, I could not discriminate between pulse rates above 300 Hz. I built a training procedure, described in Goldsworthy and Shannon (2014), to provide practice listening to pitch comparisons in a pulse-rate range centered on an individual's upper limit. That study found that CI users could improve their ability to rank the pitch of pulse rates, and most of the participants attained just noticeable differences better than 3 semitones (∼20%) for pulse rates as high as 1760 Hz (A6 in the Western music tradition).

Because I am a CI user and a scientist leading studies of electrode psychophysics, I am in a unique position to describe the qualitative aspects of pulse-rate pitch. My implant, an N22 from Cochlear Corporation, has a technological upper limit around 3520 pps (A7). I routinely listen to pulse rates up to this technological limit when I am setting up new experiments. I can consistently discriminate pulse rates up to 3520 Hz with just noticeable differences of 2 semitones. I am often asked if the percept is pitch or some other percept. It is clearly pitch for pulse rates up to 440 Hz (A4), but the pitch salience diminishes between 440 and 880 Hz (A5), at which point the pitch is a weak, buzzy percept, but one that still allows distinction of pulse rates. Qualitatively, pulse rates above 880 Hz convey a sense of pitch height, but it is a weak percept. To make an estimate, the upper limit of temporal pitch in highly trained CI users is around 880 Hz with diminishing returns above that rate. Nevertheless, pulse rate provides a weak sense of pitch for rates as high as 3520 Hz (A7).
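The note names attached to the pulse rates above (A4, A5, A6, A7) follow from equal temperament, where each octave doubles the rate. As an illustration only, the sketch below maps a pulse rate to the nearest equal-tempered note. The function name `nearest_note`, the A4 = 440 Hz reference, and the MIDI-style numbering (A4 = 69) are assumptions introduced here and are not part of any study cited in this section.

```python
import math

# Pitch-class names within one octave, starting at C (MIDI-style ordering).
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def nearest_note(rate_hz, a4_hz=440.0):
    """Return (note name, offset in cents) for a pulse rate.

    Assumes 12-tone equal temperament with A4 = a4_hz (an assumption,
    not a property of any particular implant or study).
    """
    semitones_from_a4 = 12.0 * math.log2(rate_hz / a4_hz)
    nearest = round(semitones_from_a4)
    midi = 69 + nearest  # MIDI convention: note number 69 is A4
    name = NOTE_NAMES[midi % 12] + str(midi // 12 - 1)
    cents = 100.0 * (semitones_from_a4 - nearest)
    return name, cents


if __name__ == "__main__":
    for rate in (300.0, 440.0, 880.0, 1760.0, 3520.0):
        name, cents = nearest_note(rate)
        print(f"{rate:7.1f} pps -> {name} ({cents:+.0f} cents)")
```

Under these assumptions, the rates 440, 880, 1760, and 3520 pps land exactly on A4 through A7, while the commonly cited 300 pps limit falls between D4 and D#4.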

Perhaps more important than the upper limit is the corresponding lower limit of resolution. Studies have typically found that CI users can only discriminate rates that differ by 2 semitones or more (10%–20%) even for relatively low pulse rates between 100 and 300 Hz (Kong & Carlyon, 2010; Zeng, 2002). These lower limits of discrimination based on timing cues agree with observed limits in people with normal hearing listening to timing cues of varying temporal precision (Oxenham et al., 2004; Shackleton & Carlyon, 1994). Studies that use temporally less precise stimuli, such as AM sinusoids, typically find discrimination thresholds between two and three semitones (∼10%–20%), but those that use temporally precise acoustic pulse trains find thresholds of a half semitone (2%–3%) (Deeks et al., 2013; Kaernbach & Bering, 2001). Most studies of CI users find lower limits of resolution more like normal hearing for less precise timing cues (Kong & Carlyon, 2010; Zeng, 2002); however, there is evidence that CI users can take advantage of higher temporal precision provided by variable pulse rates compared to AM pulse trains (Baumann & Nobbe, 2006; Goldsworthy et al., 2021, 2022), and that discrimination for temporally precise stimuli improves to better than a semitone with training (Goldsworthy & Shannon, 2014). Given these considerations, I estimate that the lower limit of discrimination for CI users is about a half semitone (2%–3%) for pulse rates as high as 440 Hz.
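The semitone and percentage figures quoted in this section are related by the equal-tempered ratio of 2^(1/12) per semitone. The following minimal sketch shows the conversion; the function names are hypothetical and chosen only for this illustration.

```python
import math


def semitones_to_percent(semitones):
    """Percent change in rate corresponding to an interval in semitones."""
    return (2.0 ** (semitones / 12.0) - 1.0) * 100.0


def percent_to_semitones(percent):
    """Interval in semitones corresponding to a percent change in rate."""
    return 12.0 * math.log2(1.0 + percent / 100.0)


if __name__ == "__main__":
    for st in (0.5, 1.0, 2.0, 3.0, 4.0):
        print(f"{st:3.1f} semitones = {semitones_to_percent(st):5.2f}%")
```

With this conversion, a half semitone is just under 3%, 2 semitones is about 12%, 3 semitones is about 19%, and 4 semitones is about 26%, which matches the approximate ranges given in the text.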

In contrast to pitch based on stimulation rate, I have no experience listening to interaural timing differences, since I am unilaterally implanted with no residual hearing in my right ear. However, the literature describes CI users as typically having just noticeable differences for interaural timing differences around 200 μs or worse for pulse trains presented to pitch-matched electrode pairs (Kan & Litovsky, 2015; Laback et al., 2015). This notably poor detection of interaural timing differences worsens with increasing pulse rate. There is an upper limit associated with increasing pulse rate and a lower limit of resolution within the usable range. The best outcomes reported in the literature indicate that CI users attain lower limits less than 100 μs for pulse rates as high as 1000 Hz (van Hoesel et al., 2009). This resolution observed in laboratory assessments of interaural timing sensitivity is remarkable given that clinical devices do not synchronize stimulation. Best outcomes for interaural timing discrimination might improve to better than 20 μs for pulse rates up to 1000 Hz once CI users are provided coordinated and synchronized bilateral stimulation.

What Would Change My Mind?

To better characterize the limits of timing cues for pitch and sound localization, studies should provide CI users with timing cues in a clear and consistent manner while providing them extended exposure, familiarization, and training for these new cues. Deep longitudinal assessments, with participants followed over months and years, would characterize learning as participants approach their peak potential. This approach should incorporate engaging games to encourage attention and motivation for learning. A study that assesses learning of stimulation timing in a dozen participants, with timing cues precisely provided using psychophysical methods and synchronized hardware, with participants receiving hundreds of hours of familiarization and training, would demonstrate the extent that learning persists and thus could change my mind as to the limits of timing cues for pitch and localization.

Likewise, studies of new, fully implemented, stimulation strategies could also better characterize the limits of timing cues for pitch and localization. The problem with prior studies is that there is too much uncertainty as to how well new strategies encode timing cues. Future studies should provide better stimulation monitoring, for example, by recording stimulation patterns during everyday exposure. Similar tools already exist on clinical processors but with limited capacity. Future experiments could record daily stimulation as evidence that the new strategy does, in fact, encode timing cues with precision. A study that assesses pitch or localization with new stimulation strategies designed to encode acoustic TFS, while providing stronger evidence that the strategy effectively encodes timing cues, would demonstrate the limits of learning for these new cues, and thus could also change my mind.

What if Anything Can Be Done to Improve the Temporal Processing of Pitch and Localization Cues by CI Listeners?

My central hypothesis is that CI users can learn to use timing cues for pitch and localization if these cues are provided in a clear and consistent manner. I believe that existing stimulation strategies for CIs do not provide these cues in a clear and consistent manner. Specifically, timing cues of nearby electrodes are smeared by current spread, thus degrading neural representation, which was also suggested by van Hoesel (2007). If current spread is a primary limitation for converting acoustic TFS into a neural representation, then there are both short- and long-term solutions. A short-term solution would be stimulation strategies modeled after peak-derived timing (PDT) or fine structure processing (FSP) but that provide relatively sparse spectral stimulation for electrode regions where temporal cues are most important (i.e., low frequencies). Unlike existing implementations of PDT and FSP, which attempt to convey TFS for all harmonics of a periodic sound, a spectrally sparse representation would provide a single place of stimulation across two or three electrodes with a covarying stimulation rate to represent fundamental frequency. There is evidence that CI users have better discrimination when stimulation place and timing cues covary than with either cue alone (Bissmeyer & Goldsworthy, 2022). Though there is evidence that this advantage may be a combination of independent cues rather than a dependent synergy (McKay et al., 2000), we note that for a dependent synergy to arise, the covaried place-rate stimulation would need to be consistently provided, thus affording the listener the opportunity to learn (Keysers & Gazzola, 2014). The long-term solution may depend, ironically, on improving place precision of stimulation.
The many efforts to improve place of stimulation using intraneural, magnetic, and optic stimulation, perhaps combined with neurotrophic support, may lead to better specificity for place of excitation (Lee et al., 2022; Middlebrooks & Snyder, 2007; Moser & Dieter, 2020). In so doing, these solutions for providing better place of excitation might also provide independent neural channels for processing TFS.

The auditory system is justly celebrated for its remarkable tonotopic and temporal response properties. The importance of resolved harmonics for pitch and of low-frequency interaural timing differences for localization is clearly established. Many people might first think of place-of-excitation cues when considering resolved harmonics, but resolved harmonics also provide separate neural processing channels for TFS. Existing electrode arrays, combined with current spread in the cochlea, do not provide resolved place-of-excitation cues for densely spaced harmonics; consequently, they also do not provide separate processing channels for TFS for all harmonics of a complex sound. Recognizing this, I believe that stimulation strategies that provide focused delivery of the fundamental frequency of a complex sound into a clear and consistent combined place-rate stimulation cue have the best potential for improving both pitch and localization for CI users.

Ruth Litovsky

To What Extent Are the Limits on CI Users’ Use of Purely Temporal Cues to Perceive the Pitch and Spatial Location of Sounds

(a) Due to a Fundamental Biological Limitation?

Individuals with normal hearing (NH) are known to utilize binaural cues to determine the location of a sound source in the horizontal plane and to distinguish target speech from background noise (Litovsky et al., 2021). These cues consist of ITDs and interaural level differences (ILDs). NH listeners typically have excellent sensitivity to both ITDs and ILDs, but they rely more heavily on ITDs at low frequencies to localize broadband sound sources (Blauert, 1996; Macpherson & Middlebrooks, 2002). Early studies in bilaterally implanted patients demonstrated that, while two CIs result in better spatial hearing abilities than unilateral CIs, performance seen in bilateral CI users is worse than performance of NH listeners. Various factors have been considered to contribute to this gap in performance, with temporal coding being one of the most significant culprits. Research findings discussed below suggest that temporal coding is impacted both by today's clinical CI speech processors, which are not designed to preserve finely controlled low-frequency ITDs, and by alterations to the auditory system due to deprivation during periods of deafness.

In bilateral CI users, clinical speech processors pose several notable limitations. Each CI processor is fitted independently to each ear, without any obligatory coordination or synchronization of inputs to the two ears. The term “synchronization” used here denotes the timing of sampling by the analog-to-digital converter and the timing of electrically pulsed stimulation delivered to specific electrodes in the right and left ears. A related issue is the limited encoding of binaural cues. First, if the processors are not simultaneously activated, a constant offset can occur between the two processors, ranging from −550 to +550 μs for a stimulation rate of 900 pps, as illustrated in Figure 1d and demonstrated by van Hoesel et al. (2002). Second, jittered timing between the processors in the two ears could occur because the two processors have independent timing clocks that may drift over time. Third, some CI speech processing strategies (e.g., ACE) rely on “peak-picking,” in which acoustic inputs are used to determine which set of channels is activated at each moment in time; the two ears are thus likely to activate different channels, which minimizes binaural cues (Kan et al., 2018). Even if these issues did not present limitations, signal processing strategies used in today's CI processors are inherently problematic. In general, TFS of the acoustic input is replaced with fixed-rate stimulation that is typically around 1000 pps, a rate that is too high for CI users to extract usable low-frequency ITDs (Laback et al., 2015; van Hoesel et al., 2009). The limitations serve as an important lens through which we can view the impact of experience with temporally coded inputs, as discussed further below.
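The size of the constant interaural offset quoted above can be understood with simple arithmetic, sketched below. The sketch assumes that two free-running processors stimulate at the same nominal rate, so that the offset between nearest pulses can never exceed half of the inter-pulse period; it also assumes a purely hypothetical clock mismatch expressed in parts per million (ppm) to illustrate drift. Neither assumption is taken from the cited studies, and all function names are illustrative.

```python
def max_constant_offset_us(rate_pps):
    """Largest nearest-pulse offset (in microseconds) between two
    unsynchronized pulse trains at the same nominal rate.

    Assumption: the offset is bounded by half the inter-pulse period.
    """
    period_us = 1e6 / rate_pps
    return period_us / 2.0


def wrapped_offset_us(initial_us, mismatch_ppm, elapsed_s, rate_pps):
    """Offset after clock drift, wrapped into +/- half a period.

    mismatch_ppm is a hypothetical clock mismatch; a 1 ppm mismatch
    accumulates 1 microsecond of offset per second of operation.
    """
    period_us = 1e6 / rate_pps
    half = period_us / 2.0
    drifted = initial_us + mismatch_ppm * elapsed_s
    return (drifted + half) % period_us - half


if __name__ == "__main__":
    print(f"900 pps: +/- {max_constant_offset_us(900.0):.1f} us")
    # Hypothetical example: 10 ppm mismatch after one minute of use.
    print(f"after drift: {wrapped_offset_us(0.0, 10.0, 60.0, 900.0):.1f} us")
```

At 900 pps the inter-pulse period is about 1111 μs, so the half-period bound is about 556 μs, close to the range of roughly ±550 μs quoted above. The drift example shows how even a small clock mismatch could, under these assumptions, sweep the offset through that whole range within minutes.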

Using research processors that bypass the clinical processors, researchers can electrically stimulate selected pairs of electrodes in the right and left ears. The unique nature of such studies is that electrode pairs are deliberately coordinated with precisely controlled timing to the two ears. Studies to date show enormous variability in sensitivity to ITDs across groups of bilateral CI users (Kan et al., 2013; Laback et al., 2015; Litovsky et al., 2012; Thakkar et al., 2020). This variability has been shown for various stimuli, with much of the data focusing on a low stimulation rate of 100 pps, which is known to produce the best performance, that is, the lowest ITD discrimination thresholds. At 100 pps, the range of ITD thresholds found in adult bilateral CI listeners extends from a few tens of μs (within normal limits) to over 1000 μs (Cleary et al., 2022; Thakkar et al., 2020). The poor sensitivity of many CI listeners, even when presented with optimized stimuli, whereby the limitations imposed by the speech-processing strategy are bypassed, reflects a basic inability of the auditory system to process interaural timing cues. In the following section, I will argue that this reflects a basic biological limitation that arises from a combination of deprivation of binaural cues and exposure to suboptimal processing strategies.

(b) Modified by the Presence and Type of Electrical Stimulation They Have Experienced?

A number of studies have shown that the across-listener variability can be attributed to age- and experience-related factors. The age at which onset of deafness occurs is an especially important factor; individuals whose auditory system has received normal acoustic input during development are more likely to retain sensitivity to ITDs than individuals who were deprived of acoustic hearing early in life. Litovsky et al. (2010) identified age at onset of deafness as a potential factor to consider, albeit in a relatively small sample of patients. Thakkar et al. (2020) then measured sensitivity to ITDs and ILDs in the largest cohort known to date, 46 adult bilateral CI users who varied as to whether they had onset of deafness pre- or post-language acquisition. They found that binaural sensitivity was best in individuals who experienced shorter duration of bilateral hearing impairment, who had greater duration of experience with CIs, and who were younger at the time of testing. However, it is important to note that very few of these listeners show ITD sensitivity within the range of that observed in NH listeners. This is not surprising, given that in their daily lives bilateral CI users do not receive ITD cues with fidelity through their clinical processors. The impact of years of deprivation is also a likely factor. Notably, sensitivity to binaural cues is not affected uniformly: while ITD sensitivity in adults is clearly impacted and difficult to restore to CI users, sensitivity to ILDs might be less impacted (Litovsky et al., 2010; Thakkar et al., 2020). A deeper understanding of the extent to which ILD processing is impacted is needed, as some evidence suggests that even ILD processing is not on par with that of NH listeners, including both adults (Litovsky et al., 2010; Thakkar et al., 2020) and children (Easwar et al., 2017; Ehlers et al., 2017; Salloum et al., 2010).

Studies in children who are bilaterally implanted show that neural circuitry involved in binaural processing can fail to develop properly, as indicated by asymmetry of brainstem function shown by differences between the right and left auditory pathways in brainstem response latencies (Steel et al., 2015). Downstream effects on cortical asymmetries have also been shown (Lee et al., 2020; Polonenko et al., 2017). It is also possible that binaural neural circuits develop in early life but deteriorate after onset of deafness (Kral, 2013; Polonenko et al., 2018). Studies in animals that are deafened either neonatally or during early development have defined early periods involved in the maturation of auditory circuitry and pathways at the level of cellular morphology, molecular and synaptic properties, and tuning to ITD. Binaural processing depends on very precise timing of neural responses (spikes) from the left and right AN, in order for brainstem mechanisms to code ITDs with fidelity. Additionally, inhibitory synapses onto neurons in the brainstem are refined in substantial ways through synaptic and structural alterations during auditory development; importantly, these refinements in NH animals depend on auditory input and experience and are at risk for deterioration due to deafening (Kapfer et al., 2002; Werthat et al., 2008). Studies conducted in animals who receive CIs also suggest that binaural processing is impacted by degraded balance of inhibitory and excitatory inputs, and is associated with poor tuning of neuronal ITD properties in the auditory brainstem and cortex in deafened, implanted animals (Chung et al., 2016; Hancock et al., 2013; Jakob et al., 2019; Tillien et al., 2010). To date, little is known about how such disruptions and alterations are controlled or prevented. 
It stands to reason that in humans who are deaf and deprived of access to acoustic hearing, the neural mechanisms involved in processing ITD cues may be at risk for permanent disruption with limited potential for restoring processing to NH levels of functioning. This problem is intricately related to the fact that, if children grow up with bilateral CIs that fail to deliver low-rate, synchronized, and well-preserved ITDs, their auditory system is likely to eventually lose the capacity to have sensitivity to ITDs restored with future generations of signal processing strategies that provide access to ITDs. Furthermore, even in one ear alone, encoding of TFS in electrical stimulation by CI users breaks down at stimulation rates higher than about 300 pps. There is an extensive literature (covered elsewhere in this paper) discussing the problems with sensitivity to temporal properties of electrical stimulation, which deteriorates at much lower rates than seen in NH listeners. Rate limitations have been shown in monaural stimulation (e.g., Carlyon et al., 2008; Kong et al., 2009; Kong & Carlyon, 2010; McDermott & McKay, 1997; Shannon, 1983; Zeng, 2002) and in binaural stimulation (Carlyon et al., 2008; van Hoesel, 2007; van Hoesel et al., 2009). Critically, there is evidence to suggest that monaural rate sensitivity and binaural sensitivity for ITDs may be limited by a shared mechanism (Ihlefeld et al., 2015). The extent to which the shared mechanism reflects information transmission, health of neural elements, or integrity of electrode–neuron interface remains to be determined.

What Would Change My Mind?

Thus far, this section has focused on how limitations in today's CIs limit the ability of bilateral CI users to fully benefit from binaural hearing. For children, the greatest risk is disruption to the neural mechanisms involved in ITD processing and downstream effects on central processing of binaural information. My mind regarding this limitation would change if data suggested that infants and young children who are exposed to ITDs early in life do not achieve the same level of performance as peers with NH. That outcome would likely occur if electrical stimulation cannot achieve the same type of processing as acoustic stimulation and/or if the underlying neural infrastructure of deaf infants and children is differently wired and simply cannot decode and encode low-frequency ITDs in such a way that provides benefits observed in NH listeners. Such a finding would potentially place stronger pressures on advancement of genetic testing and biologically based treatment for deafness with approaches such as gene therapy and/or regeneration.

What, If Anything, Can Be Done to Improve CI Performance for Pitch and Binaural Time Processing?

The clearest potential approach to modifying and improving temporal coding by experience in childhood is to ensure that infants who are deaf and are implanted with bilateral CI devices can receive binaural cues with fidelity. That will mean engineering bilaterally synchronized devices that operate successfully in everyday environments. The devices must be able to minimally (1) capture binaural cues at multiple frequency channels, (2) preserve low-frequency onset and ongoing ITDs with precision known to occur in normal acoustic hearing, (3) preserve speech envelope cues in at least some of the channels, and (4) process multi-source information and reverberation. One possibility is the CCi-MOBILE device, which is a portable research device compatible with Cochlear Ltd. (Ghosh et al., 2022; Hansen et al., 2019), with potential to be extended to other CI manufacturers. The CCi-MOBILE is bilaterally synchronized; thus, it operates using a single time clock to simultaneously extract information from two microphones and deliver coordinated stimulation to two CI processors. The CCi-MOBILE is the only portable research processor that can operate without being tethered to a computer, and that is capable of real-time processing, with the potential to account for the hardware limitations described above. The device has been recently implemented with the use of envelope ITDs (Dennison et al., 2023) and has the potential to be further developed for coding TFS and very small ITDs at low frequencies. If these devices are not available in clinical applications, perhaps an interim step can be taken to offer take-home devices that allow listening through computer interfaces to stimuli that are processed with binaurally preserved cues. That would minimally provide the developing auditory system with daily exposure to the information that is needed for optimal coding of temporal cues. 
Such an approach will need to be investigated in clinical trials, with outcome measures that focus not only on temporal coding and binaural sensitivity, but downstream effects on cognitive abilities, listening effort, and more generalized aspects of speech understanding and language development. If these “interim” interventions were available, they could be used to prepare the brain to take advantage of improved temporal coding and binaural processing when those processors become clinically implementable.

The ultimate solution is to promote reengineering of CIs such that signal processing encodes and transmits TFS cues with fidelity, while preserving speech envelope cues. The general idea is to convey ITDs in the timing of electrical pulses on some channels by firing them at low stimulation rates but preserving high rates at other electrodes. By sending low-rate stimulation to some electrodes, and high-rate stimulation to other electrodes, it may be possible to transmit ITDs at the low rates and to preserve speech envelope cues at electrodes receiving high rates. Over the years, approaches have included the PDT strategy (van Hoesel, 2007) and the FSP/FS4 strategy, which is designed to slow down the repetition rate to follow the instantaneous TFS frequency by introducing a pulse at each positive-going zero crossing in the bandpass filter output of a channel (Hochmair et al., 2006; Zirn et al., 2016); these strategies have not yet been shown to benefit bilateral CI users. Litovsky et al. have been testing a mixed-rate strategy that deliberately sends low-rate stimulation to interaural pairs of electrodes that have been pre-tested and are known to produce good ITD sensitivity, and high rates to other electrodes to preserve speech cues (Thakkar et al., 2018, 2023). This approach has shown promising results thus far for preserving ITD sensitivity, but its efficacy for preserving speech cues remains to be seen. Another approach, a temporal limits encoder strategy, was suggested as a means of improving pitch discrimination and tone recognition in languages such as Mandarin (Zhou et al., 2022). By down-transposing mid-frequency channel information at restricted bands to lower frequencies, envelope modulations are slowed down, and when used bilaterally, this strategy has the potential to encode ITDs within the down-transposed envelope modulations (Kan & Meng, 2021). Again, studies to date have shown modest outcomes in bilateral CI listeners.
Importantly, while in acoustic hearing low-frequency ITDs are known to be processed in the apical region of the cochlea, when selecting electrodes for delivery of temporal information such as ITDs, stimulation need not be presented to apical electrodes; in fact, mid- or basal-stimulation can produce best ITD sensitivity in many listeners (Litovsky et al., 2010, 2012; Thakkar et al., 2020). This idea is critical in the future design of novel stimulation strategies, as it must consider the fact that neural health varies along the electrode arrays in each ear, and across individual CI users. The variation is a complex product of effects of auditory deprivation, trauma, and many factors that impact survival and function of the AN, as well as neural processes at the brainstem and beyond.
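The zero-crossing rule described above for FSP-type strategies, in which a pulse is introduced at each positive-going zero crossing of a channel's bandpass filter output, can be illustrated with a short sketch. This is not the manufacturer's implementation: a pure tone stands in for the bandpass filter output, the sampling rate and tone frequency are arbitrary choices, and the function names are hypothetical.

```python
import math


def tone(freq_hz, duration_s, fs_hz):
    """Sampled sine wave, used here as a stand-in for the output of one
    bandpass analysis channel (an assumption for illustration)."""
    n = int(duration_s * fs_hz)
    return [math.sin(2.0 * math.pi * freq_hz * i / fs_hz) for i in range(n)]


def positive_zero_crossing_times(signal, fs_hz):
    """Times (s) at which the signal crosses zero going upward.

    Each returned time would correspond to one stimulation pulse under
    the zero-crossing rule; linear interpolation between samples gives
    sub-sample timing.
    """
    times = []
    for i in range(1, len(signal)):
        prev, cur = signal[i - 1], signal[i]
        if prev < 0.0 <= cur:
            frac = -prev / (cur - prev)  # fraction of a sample past prev
            times.append((i - 1 + frac) / fs_hz)
    return times


if __name__ == "__main__":
    fs = 16000.0
    pulses = positive_zero_crossing_times(tone(150.0, 0.1, fs), fs)
    intervals = [b - a for a, b in zip(pulses, pulses[1:])]
    mean_rate = 1.0 / (sum(intervals) / len(intervals))
    print(f"{len(pulses)} pulses, mean rate {mean_rate:.1f} pps")
```

In this toy case the pulse rate follows the 150 Hz tone, so the inter-pulse interval carries the TFS period of the channel. With a real bandpass output containing several harmonics, the intervals would vary from cycle to cycle.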

Could Performance in CI Theoretically Match That of NH Listeners One Day?

Theoretically, yes! The key factor is providing CI users with stimulation that mimics acoustic hearing as much as possible. If signal processing strategies can be designed to do so, then infants and children who are congenitally deaf, or children who would receive appropriate binaural and pitch cues from a young age, have the potential to experience auditory development on par with that of NH listeners. Adults who experience deafness after they have already developed a normal auditory system through acoustic hearing would then benefit from the same engineering improvements, which would provide inputs akin to those enjoyed by NH listeners.

Bertrand Delgutte and Yoojin Chung

To What Extent Are the Limits on CI Users’ Use of Purely Temporal Cues to Perceive the Pitch and Spatial Location of Sounds

(a) Due to a Fundamental Biological Limitation?

The deficits in the perception of temporal cues in users of CIs are due to fundamental neural limitations that are influenced by auditory experience, especially during development. These limitations are not primarily of peripheral origin, but rather result from a reduced ability of the central processor to make effective use of the temporal cues delivered in the ANs. Our opinion derives from comparing data on responses of auditory neurons to electric stimulation in animal models of CIs with perceptual results in human CI users. We primarily discuss responses to constant-amplitude, electric pulse trains, which are the simplest stimuli for understanding fundamental limitations on temporal processing.

Exaggerated Temporal Coding in the Auditory Nerve with Electric Stimulation

In NH animals, AN fibers phase lock to sinusoidal acoustic stimuli (pure tones) for frequencies up to 3–5 kHz (Johnson, 1980; Palmer & Russell, 1986), and this limit is probably no higher in humans (Verschooten et al., 2018). In contrast, AN fibers phase lock to sinusoidal electric stimuli up to at least 10 kHz, more than an octave higher than for acoustic stimuli (Dynes & Delgutte, 1992; Hartmann et al., 1984). For pulse-train stimuli like those used in most CI processors, synchronization to pulse rates as high as 5000 pps has been reported in cat AN fibers (Miller et al., 2008). Moreover, AN fibers can entrain (fire one synchronized spike per pulse) to electric pulse trains for rates up to 800 pps, much higher than for acoustic stimulation (Javel & Shepherd, 2000; Shepherd & Javel, 1997).

The very high limit of synchronization of AN firings to electric pulse trains observed in experimental animals also applies to human CI users. The ECAP of the human AN has been isolated in response to individual pulses in a pulse train for rates as high as 3500–4000 pps (Hughes et al., 2012; Tejani et al., 2017). The presence of such synchronized responses in the ECAP implies not only that a large number of AN fibers synchronize to the pulse train, consistent with the single-fiber recordings in animals, but also that the AN firings are synchronized to each other (“across-fiber synchrony”). The precise synchrony of AN fibers is observed not only for constant-amplitude pulse trains, but also for the modulation waveform of AM pulse trains, in both animals (Jeng et al., 2009) and human CI users (Tejani et al., 2017). The exaggerated synchrony observed in the AN with electric stimulation also holds for neurons in the anteroventral CN and the medial nucleus of the trapezoid body (MNTB), two auditory brainstem nuclei involved in binaural processing (Müller et al., 2023). These results suggest that the perceptual limits on rate pitch (Carlyon & Deeks, 2002; Kong et al., 2009) and binaural interactions (Laback et al., 2015) with CIs are not caused by a lack of precise temporal information in the peripheral inputs.

The massive across-fiber synchrony occurring with electric stimulation results from several factors, including: (1) the spatial patterns of AN excitation along the tonotopic axis of the cochlea are broader for electric stimulation than for pure tone stimuli; (2) the cochlear traveling wave disperses the latencies of AN fibers to broadband acoustic stimuli such as clicks, while there is no traveling wave with electric stimulation; (3) AN fibers fire to electric stimuli more deterministically (less stochastically) than they do for acoustic stimuli (Kiang & Moxon, 1972). The massive across-fiber synchronization in electric stimulation may impair the ability of central circuits for binaural (ITD) and pitch processing to make use of the available temporal information.

Central Limitations on ITD Sensitivity with CIs

The initial stages of ITD processing in the lateral and medial superior olives (LSO and MSO) in NH animals are relatively well understood (Grothe & Pecka, 2014; Joris & van der Heijden, 2019; Yin et al., 2019). Unfortunately, no study has yet recorded responses of MSO and LSO neurons to bilateral electric stimulation through CIs; the available data are mostly from the IC in the midbrain, to which both LSO and MSO project. MSO and LSO neurons transform interaural differences in the timing of their spike inputs into changes in firing rate via a process of coincidence detection (or anticoincidence for LSO). Following this transformation, ITDs are primarily represented by a rate code rather than a temporal code in the IC and beyond (Fitzpatrick et al., 1997, 2000, 2002).
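
The conversion of a timing difference into a firing rate by coincidence detection can be illustrated with a toy simulation. The Python sketch below is a deliberately simplified assumption, not a model from the cited studies: each ear contributes one jittered spike per pulse, and an output spike is counted whenever the left and right spikes fall within a short coincidence window. All names and parameter values (jitter, window, pulse rate) are hypothetical.

```python
import random

def coincidence_count(itd_s, rate_pps=100.0, n_pulses=200,
                      jitter_s=50e-6, window_s=100e-6, seed=0):
    """Count left/right input coincidences for a given ITD (toy model).

    Each pulse evokes one spike per ear, with Gaussian timing jitter.
    A coincidence is scored when the two spikes evoked by the same
    pulse arrive within +/- window_s of each other.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    period = 1.0 / rate_pps
    count = 0
    for k in range(n_pulses):
        left = k * period + rng.gauss(0.0, jitter_s)
        right = k * period + itd_s + rng.gauss(0.0, jitter_s)
        if abs(left - right) <= window_s:
            count += 1
    return count

def itd_tuning_curve(itds_s):
    """Return (ITD, coincidence count) pairs: a rate code for ITD."""
    return [(itd, coincidence_count(itd)) for itd in itds_s]

# ITDs from -400 to +400 microseconds in 100-microsecond steps.
curve = itd_tuning_curve([i * 100e-6 for i in range(-4, 5)])
```

In this sketch the count peaks near zero ITD and falls off as the ITD exceeds the coincidence window, giving a peak-type tuning curve. Because only spikes evoked by the same pulse are compared, the sketch is meaningful only when the ITD is much shorter than the pulse period.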

For optimal stimulus conditions, many IC neurons in acutely deafened animals are sensitive to ITDs of bilateral electric pulse trains, in proportions comparable to those observed for broadband acoustic stimuli in NH animals, and the shapes of ITD tuning curves resemble those observed for acoustic stimulation (Chung et al., 2016; Smith & Delgutte, 2007; Sunwoo & Oh, 2022; Vollmer, 2018). However, good ITD sensitivity only occurs over a narrow range of pulse rates and stimulus levels. For most IC neurons in anesthetized preparations, ITD sensitivity is limited to the onset of electric pulse trains for pulse rates above 100 pps (Smith & Delgutte, 2007; Sunwoo & Oh, 2022). Sensitivity to ongoing ITDs at higher pulse rates is more common in the IC of unanesthetized animals (Chung et al., 2016) and in neurons that respond to apical stimulation of the cochlea (Sunwoo & Oh, 2022). The rate limitations observed in neural responses to electric stimulation are consistent with the low limit of perceptual ITD sensitivity in CI users (Laback et al., 2015) but contrast with the responses to isolated pairs of binaural pulses reported in the rat (Buck et al., 2021; see section by Schnupp & Rosskothen-Kuhl). These low limits contrast with the ∼2000 Hz limit of neural ITD sensitivity to the ongoing temporal fine structure in NH animals (Devore & Delgutte, 2010; Joris, 2003; Yin & Kuwada, 1983) and the ∼1400 Hz limit of perceptual ITD sensitivity in human NH listeners (Brughera et al., 2013).

It has been suggested that the poor ITD sensitivity with CIs results from a switch from MSO dominance in NH to LSO dominance with CI because CI devices may not reach sufficiently far into the cochlear apex to stimulate the MSO neurons, which are tuned primarily to low frequencies (e.g., Dietz, 2016; Müller et al., 2023). A further argument for this view is that perceptual ITD sensitivity in bilateral CI users is more in line with the sensitivity to envelope ITDs thought to be created in the LSO than with the sensitivity to ITDs in the TFS created in the MSO. While this view has the appeal of simplicity, it fails to account for important observations. Many IC neurons in deaf animals show peak-type ITD tuning for electric stimulation similar to the tuning of MSO neurons in NH animals (Chung et al., 2016; Smith & Delgutte, 2007; Sunwoo & Oh, 2022), and many neurons sensitive to ITD with electric stimulation in a preparation with preserved hearing are tuned to low acoustic frequencies in the range of MSO neurons (Vollmer, 2018). Whole-cell recordings in NH animals show that, contrary to the view that LSO neurons are sluggish compared to MSO neurons (Remme et al., 2014), LSO principal cells in fact display better ITD sensitivity than MSO neurons for click stimuli resembling the pulses used for electric stimulation (Franken et al., 2021). Thus, the low rate limit of ITD sensitivity with electric stimulation may not be caused by a failure to effectively stimulate the MSO circuit with CIs, but rather by a degraded sensitivity in both LSO and MSO.

We suggest that the abnormally broad spatial patterns of excitation and the excessive across-fiber synchrony produced in the AN by electric stimulation may engage inhibitory and other suppressive mechanisms in the brainstem more effectively than acoustic stimulation, thereby blocking excitatory responses at high pulse rates. Excessive synchrony in the inputs to MSO may also increase monaural coincidences, leading to degraded ITD sensitivity (Chung et al., 2015).

Central Limitations on the Coding of Temporal Pitch with CIs

In NH animals, the ability of central auditory neurons to phase lock to either the TFS or the temporal envelope of acoustic stimuli tends to degrade as one ascends the auditory pathway (Joris et al., 2004; Liu et al., 2006). As the temporal code degrades in the IC and beyond, the repetition rate of acoustic stimuli is increasingly represented by a rate code, whereby the firing rates are tuned to the envelope repetition rate of AM tones and harmonic complex tones (Joris et al., 2004; Lu et al., 2001; Nelson & Carney, 2007; Su & Delgutte, 2019). In principle, the stimulus repetition rate can be decoded from the across-neuron pattern of firing rates in a population of neurons whose firing rates are tuned to different repetition rates.
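
The idea of decoding repetition rate from an across-neuron pattern of firing rates can be made concrete with a small example. The Python sketch below assumes, purely for illustration, a handful of neurons with Gaussian rate tuning on a logarithmic repetition-rate axis and a template-matching decoder; the tuning shape, bandwidth, best rates, and function names are hypothetical and do not come from the cited recordings.

```python
import math

def tuning_response(rep_rate, best_rate, bandwidth_oct=1.0, peak_sps=50.0):
    """Firing rate (spikes/s) of one model neuron with bandpass rate tuning.

    Tuning is Gaussian on a log2 axis, centered on the neuron's best
    repetition rate (an assumed shape, chosen for simplicity).
    """
    distance_oct = math.log2(rep_rate / best_rate)
    return peak_sps * math.exp(-0.5 * (distance_oct / bandwidth_oct) ** 2)

def population_response(rep_rate, best_rates):
    """Across-neuron pattern of firing rates evoked by one repetition rate."""
    return [tuning_response(rep_rate, b) for b in best_rates]

def decode_rate(observed, best_rates, candidates):
    """Pick the candidate repetition rate whose predicted pattern is
    closest (least squared error) to the observed pattern."""
    def squared_error(candidate):
        template = population_response(candidate, best_rates)
        return sum((o - t) ** 2 for o, t in zip(observed, template))
    return min(candidates, key=squared_error)

best_rates = [50.0, 100.0, 200.0, 400.0]         # hypothetical best rates (Hz)
observed = population_response(160, best_rates)  # noise-free pattern for 160 Hz
estimate = decode_rate(observed, best_rates, range(40, 500, 10))
```

With noise-free responses the decoder recovers the stimulus rate exactly. In this sketch, the range of rates that can be decoded is set by the range of best rates in the population, so a population tuned to a lower range of rates would represent a lower range of repetition rates.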

Both the temporal code (Chung et al., 2014; Hancock et al., 2013; Middlebrooks & Snyder, 2010; Snyder et al., 1995, 2000; Vollmer et al., 1999, 2005) and the rate code (Chung et al., 2014; Hancock et al., 2012; Snyder et al., 1995) are also present for electric pulse trains in the IC of implanted animals, but the two codes are subject to different limitations.

Su et al. (2021) directly compared the limits of synchronization to electric pulse trains in the IC of unanesthetized rabbits with the limits for the most comparable acoustic stimulus, a click train. The synchronization limits were higher for electric stimuli (median 206 pps) than for acoustic stimuli (112 pps). Thus, in the IC, as in the AN, performance with CIs is not limited by a reduced availability of temporal information in the neural firing patterns. Importantly, in contrast to the temporal code, a lower range of pulse rates was represented by the rate code in the IC of CI animals compared to NH animals.

In most IC neurons, the limit of synchronization to electric pulse trains is lower than the ∼300 pps limit of temporal pitch perception in most human CI users (Carlyon & Deeks, 2002; Kong et al., 2009), suggesting that pitch perception at higher pulse rates is likely to rely on the rate code. The degradation in rate coding observed in the IC with electric stimulation is consistent with the lower limit of rate pitch perception for CI users compared to NH listeners. Still, the distribution of synchronization limits across the neuronal IC population is quite broad, so that some neurons still synchronize to pulse trains at 300 pps, and these synchronized neurons are particularly common in the IC region responsive to stimulation of the cochlear apex (Middlebrooks & Snyder, 2010).

The rate code for repetition rate is even more important in the ACx, where the limits of neural synchronization to electric pulse trains (Beitel et al., 2011; Fallon et al., 2014b; Johnson et al., 2017; Vollmer & Beitel, 2011) are much lower than in the IC, and below the range over which periodic pulse trains evoke pitch percepts.

The mechanisms for the transformation from a temporal code in the auditory periphery to rate codes in the IC and above are not fully understood. In one model, bandpass rate tuning is created via the interaction of fast excitation and slower, delayed inhibition (Hancock et al., 2017; Nelson & Carney, 2004; Smith & Delgutte, 2008). If so, the degradation in rate coding observed in the IC of deaf animals with CIs is consistent with the disrupted inhibition associated with hearing loss (Takesian et al., 2009).
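
A deliberately crude sketch can show how fast excitation combined with slower, delayed inhibition might yield a firing rate that is tuned to pulse rate. In the Python example below, every pulse triggers an immediate excitatory input and also an inhibitory window that opens after a delay and lasts for a fixed duration; a pulse evokes a spike only if it does not fall inside the inhibitory window of an earlier pulse. This is an assumption made for illustration, not the model of the cited studies, and the delay and duration values are hypothetical.

```python
def sustained_rate(rate_pps, inh_delay_s=0.002, inh_duration_s=0.008,
                   duration_s=1.0):
    """Firing rate (spikes/s) of a toy excitation-inhibition neuron.

    Each pulse excites the neuron at once and inhibits it from
    inh_delay_s to inh_delay_s + inh_duration_s after the pulse.
    Inhibition is feedforward: it is driven by every pulse, whether
    or not that pulse evoked a spike.
    """
    period = 1.0 / rate_pps
    n_pulses = int(duration_s * rate_pps)
    inh_end_s = inh_delay_s + inh_duration_s
    spikes = 0
    for k in range(n_pulses):
        suppressed = False
        # Check whether pulse k lands in the inhibitory window of any
        # earlier pulse (m periods back).
        m = 1
        while m <= k and m * period <= inh_end_s:
            if m * period >= inh_delay_s:
                suppressed = True
                break
            m += 1
        if not suppressed:
            spikes += 1
    return spikes / duration_s

# Firing rate as a function of pulse rate (hypothetical test rates).
rate_tuning = [(r, sustained_rate(r)) for r in (25, 50, 80, 200, 400)]
```

With these assumed parameters the model follows the pulse rate at low rates, then responds only to the first pulse once the pulse period becomes shorter than the end of the inhibitory window. The firing rate therefore rises and then collapses as pulse rate increases, and changing the strength or timing of inhibition in such a scheme would shift the range of rates that is represented.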

Unlike ITD sensitivity, which is ultimately limited by the temporal windows of coincidence detection in LSO and MSO, the limitations on the coding of rate pitch are likely to arise in the IC and beyond, where the transformations from a temporal code to a rate code take place. Because some of the inputs to the IC bypass MSO and LSO, the limitations on rate pitch may differ from those on ITD sensitivity. Consistent with this hypothesis, a new analysis of the data from Sunwoo et al. (2021) reveals no across-neuron correlation between the upper frequency limits of ITD sensitivity and synchronization to pulse trains in the IC of deaf rabbits. This result contrasts with the finding of a correlation between performance in monaural rate discrimination and performance in ITD discrimination in a modest number of CI subjects (Ihlefeld et al., 2015).

(b) Modified by the Presence and Type of Electrical Stimulation That They Have Experienced?

ITD Sensitivity

Deafness history and auditory experience with CIs can influence ITD sensitivity with bilateral electrical stimulation. A decreased incidence of ITD-sensitive units and poorer ITD sensitivity were observed in the IC of congenitally deaf cats (Hancock et al., 2010), and in neonatally deafened (ND) rabbits (Chung et al., 2019), cats (Thompson et al., 2021), and rats (Sunwoo, 2023) compared to animals with normal hearing during development. Similar trends have been observed in the auditory cortex (ACx) of congenitally deaf cats (Tillein et al., 2010). Modest improvements in ITD sensitivity have been reported in neonatally deafened animals that were provided with ITD cues through bilateral CIs during development (Sunwoo et al., 2021; Thompson et al., 2021).

Contrary to these trends, Rosskothen-Kuhl et al. (2021) reported behavioral ITD sensitivity comparable to normal in neonatally deafened rats that were implanted as adults. (The neurophysiological results also presented in that paper cannot be meaningfully compared with those from other studies because they used single electric pulses rather than pulse trains.) This result contrasts with the poor perceptual ITD sensitivity in prelingually deaf human bilateral CI listeners (Ehlers et al., 2017; Laback et al., 2015). The authors suggest that maladaptive plasticity to conventional continuous interleaved sampling (CIS) processing might cause the poor perceptual ITD sensitivity in CI listeners. While this view may be plausible for prelingually deaf CI users, it cannot explain the poor ITD sensitivity in subjects with normal auditory development who became deaf in adulthood. To directly test this hypothesis, ITD sensitivity should be compared between unstimulated adult-deafened animals and animals given stimulation lacking ITD cues.

Pitch Processing

The limits of neural synchronization to electric pulse trains in the IC and ACx of CI animals are also influenced by deafness history (Hancock et al., 2013; Vollmer et al., 2005) and auditory experience with the CI (Snyder et al., 1995; Vollmer et al., 1999, 2005, 2017a), but the effects in the IC are modest. These studies focused on temporal coding and did not analyze effects on the rate code, which is likely to be important at higher pulse rates.

What Would Change Your Mind?

Because we understand poorly how the abnormal spatiotemporal patterns of peripheral activity with CIs may result in poor temporal and ITD coding by central neurons, new experiments are needed to unravel these mechanisms. Direct recordings from the primary sites of binaural interaction in LSO and MSO are needed to test the hypothesis that the deficits result from ineffective stimulation of the MSO. Alternatively, recordings from the IC and ACx, combined with selective manipulation of neural activity in LSO and MSO by optogenetic or pharmacological techniques, would be valuable. More studies are also needed to compare temporal coding and ITD sensitivity between CI and NH in the same species, using the same methods and comparable stimuli. These include studies using electric stimulation in animals with preserved hearing, so that the same neurons can be studied with both forms of stimulation.