Abstract

Cochlear synaptopathy, a form of cochlear deafferentation, has been demonstrated in a number of animal species, including non-human primates. Both age and noise exposure contribute to synaptopathy in animal models, indicating that it may be a common type of auditory dysfunction in humans. Temporal bone and auditory physiological data suggest that age and occupational/military noise exposure also lead to synaptopathy in humans. The predicted perceptual consequences of synaptopathy include tinnitus, hyperacusis, and difficulty with speech-in-noise perception. However, confirming the perceptual impacts of this form of cochlear deafferentation presents a particular challenge because synaptopathy can only be confirmed through post-mortem temporal bone analysis and auditory perception is difficult to evaluate in animals. Animal data suggest that deafferentation leads to increased central gain, signs of tinnitus and abnormal loudness perception, and deficits in temporal processing and signal-in-noise detection. If equivalent changes occur in humans following deafferentation, this would be expected to increase the likelihood of developing tinnitus, hyperacusis, and difficulty with speech-in-noise perception. Physiological data from humans are consistent with the hypothesis that deafferentation is associated with increased central gain and a greater likelihood of tinnitus perception, while human data on the relationship between deafferentation and hyperacusis are extremely limited. Many human studies have investigated the relationship between physiological correlates of deafferentation and difficulty with speech-in-noise perception, with mixed findings. A non-linear relationship between deafferentation and speech perception may have contributed to the mixed results. When differences in sample characteristics and study measurements are considered, the findings may be more consistent.

Introduction

Cochlear synaptopathy, the loss of the synaptic connections between the inner hair cells (IHCs) and their afferent auditory nerve fiber targets, has been a topic of great interest ever since it was first described in mice (Kujawa & Liberman, 2009). Since the publication of that seminal paper, a large number of studies have been conducted in an attempt to answer two main questions: (1) Does cochlear synaptopathy occur in humans? and (2) What are the perceptual impacts of cochlear synaptopathy? It is now relatively well accepted that synaptopathy occurs in humans with age (Wu et al., 2019), and there are both temporal bone and physiological data suggesting that synaptopathy also occurs in response to military or occupational noise exposure (Bramhall et al., 2017, 2021, 2022; Wu et al., 2021). However, answering the question about the perceptual consequences of synaptopathy is particularly challenging because synaptopathy can only be confirmed in humans through post-mortem temporal bone analysis and perception is difficult to evaluate in animal models. We can address these challenges by carefully identifying links between the animal and human literature. In this review, we will summarize the research that has been conducted in both animals and humans to answer this important question.

Identification of Cochlear Synaptopathy in Humans

Two different approaches have been used to identify synaptopathy in humans: post-mortem temporal bone analysis and non-invasive physiological correlates of synaptopathy.

Post-mortem Temporal Bone Analysis

Human temporal bone analysis allows for post-mortem quantification of the number of auditory nerve fibers (ANFs) per inner hair cell (a measure of the degree of synaptopathy), outer hair cells (OHCs), inner hair cells, and spiral ganglion cells. These counts can then be analyzed relative to risk factors for synaptopathy, such as age (Wu et al., 2019) and noise exposure history (Wu et al., 2021). Wu et al. (2019) observed that age-related loss of ANFs outpaced OHC, IHC, and spiral ganglion cell loss, with approximately 70% of OHCs, 90% of IHCs, and 85% of spiral ganglion cells remaining at age 60 years, but only 50% of ANFs. Wu et al. (2021) evaluated ANF loss in temporal bones from adults with a significant history of occupational or military noise exposure. They found that temporal bone donors aged 50–74 years with occupational/military noise exposure showed a 25% reduction in ANF survival across the cochlear spiral compared with age-matched controls without a significant noise exposure history. Although temporal bone analysis has been helpful in assessing the impacts of age and noise exposure on ANF survival in humans, it is more difficult to draw conclusions about the perceptual impacts of synaptopathy from temporal bone data because perceptual measures from temporal bone donors may not exist or may have been collected many years prior to death.
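As a rough worked example of why ANFs per IHC is a sensitive index of synaptopathy, assume (for illustration only) that the survival percentages reported by Wu et al. (2019) are expressed relative to a common baseline. The ratio of surviving ANFs to surviving IHCs at age 60 years then falls to a little over half of its baseline value:

$$
\frac{\text{ANFs per surviving IHC at 60 years}}{\text{ANFs per IHC at baseline}} \approx \frac{0.50}{0.90} \approx 0.56
$$

Under this assumption, each remaining IHC would retain only about 56% of its original afferent connections (a loss of roughly 44%), even though only about 10% of IHCs themselves have been lost.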

Physiological Correlates of Cochlear Synaptopathy/Deafferentation

Non-invasive physiological measures that have been shown to be sensitive to synaptopathy in animal models include the amplitude of wave 1 of the auditory brainstem response (ABR; Hickox et al., 2017; Kujawa & Liberman, 2009; Lin et al., 2011; Parthasarathy & Kujawa, 2018), the envelope following response (EFR; Parthasarathy & Kujawa, 2018; Shaheen et al., 2015), and the middle ear muscle reflex (MEMR; Bharadwaj et al., 2022; Valero et al., 2018). These physiological measures can all be obtained in living humans, although some differences in stimulus parameters and methodology are required.

ABR wave 1 amplitude is a measure of the summed response of the auditory nerve fibers to a suprathreshold stimulus (see Bramhall, 2021 for a detailed review of the use of this measure for identifying synaptopathy). The EFR is a measure of how well the auditory system phase locks to the envelope of an amplitude modulated stimulus (Dolphin & Mountain, 1992). Van Der Biest et al. (2023) reviewed the complexities involved in translating EFR measurements from animal models to humans and used a computational model of the human auditory periphery to predict which EFR stimulus parameters will be the most effective for estimating cochlear synapse loss. The MEMR is an auditory-evoked reflex resulting in frequency-specific changes in middle ear impedance and is mediated by a reflex pathway that includes the cochlea and auditory nerve (see review by Trevino et al., 2023 for a discussion of the use of the MEMR as a measure of synaptopathy). Note that two different methods of measuring the MEMR have been used in human studies. The first is already part of the standard clinical audiometric test battery and uses a 226 Hz probe tone. The second uses a wideband probe, such as a click or chirp. A wideband probe was used in the animal studies relating MEMR strength to synapse numbers (Bharadwaj et al., 2022; Valero et al., 2018). The advantage of using the wideband probe is that it provides information about MEMR-related middle ear impedance changes across a broad range of frequencies. Because the frequency of maximal impedance change varies across individuals, conclusions about the magnitude of the MEMR can differ depending on whether a tonal or a wideband probe is used (see Figure 5 from Bharadwaj et al., 2019). Hereafter, we will refer to MEMR measurements obtained with a wideband probe as wideband MEMR.
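To make the EFR concept more concrete, the Python sketch below uses deliberately toy assumptions. It builds a sinusoidally amplitude modulated (SAM) tone and passes it through a hypothetical "response" consisting of half-wave rectification (which demodulates the envelope) scaled by the fraction of intact afferent input. It then reports the amplitude of the single DFT bin at the modulation frequency. The carrier frequency, modulation rate, sampling rate, and the linear scaling with afferent input are illustrative assumptions, not parameters or findings from the cited studies. Real EFR analyses operate on recorded EEG averaged over many presentations.

```python
import cmath
import math

FS = 16_000     # sampling rate in Hz (illustrative assumption)
FC = 1_000      # carrier frequency in Hz (illustrative assumption)
FM = 100        # modulation frequency in Hz (illustrative assumption)
DURATION = 1.0  # seconds; holds an integer number of FC and FM cycles


def sam_tone(depth: float) -> list[float]:
    """SAM tone: (1 + depth * sin(2*pi*FM*t)) * sin(2*pi*FC*t)."""
    n = int(FS * DURATION)
    return [
        (1 + depth * math.sin(2 * math.pi * FM * i / FS))
        * math.sin(2 * math.pi * FC * i / FS)
        for i in range(n)
    ]


def toy_response(stimulus: list[float], afferent_fraction: float) -> list[float]:
    """Hypothetical response: half-wave rectification scaled by the fraction
    of intact afferent input. A toy, not a physiological model."""
    return [afferent_fraction * max(x, 0.0) for x in stimulus]


def magnitude_at(signal: list[float], freq: float) -> float:
    """Single-sided amplitude of the DFT bin at `freq` Hz (valid for non-DC bins)."""
    n = len(signal)
    acc = sum(x * cmath.exp(-2j * math.pi * freq * i / FS) for i, x in enumerate(signal))
    return 2 * abs(acc) / n


stim = sam_tone(depth=1.0)
intact = magnitude_at(toy_response(stim, afferent_fraction=1.0), FM)
reduced = magnitude_at(toy_response(stim, afferent_fraction=0.5), FM)
print(f"EFR-like magnitude at {FM} Hz: intact={intact:.3f}, 50% input={reduced:.3f}")
```

The analysis window holds whole numbers of carrier and modulation cycles, so the rectified signal's energy falls exactly on multiples of 100 Hz. As a result, the bin at `FM` isolates the envelope-following component. In this toy, halving the afferent input halves the magnitude, and an unmodulated tone produces essentially no energy at `FM`.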

No animal study has explicitly compared all three physiological measures in the same animals to evaluate their relative value as indicators of cochlear synaptopathy. However, Shaheen et al. (2015) measured both ABR wave 1 amplitude and EFR strength in mice with noise-induced synaptopathy, Valero et al. (2018) measured both ABR wave 1 amplitude and wideband MEMR in mice with noise-induced synaptopathy, Parthasarathy and Kujawa (2018) measured ABR wave 1 amplitude and EFR strength in mice with age-related synaptopathy, and Bharadwaj et al. (2022) measured ABR wave 1/5 amplitude ratio and wideband MEMR in chinchillas with noise-induced synaptopathy. Shaheen et al. found larger noise-exposure-related effect sizes for EFR strength than for ABR wave 1 amplitude. Valero et al. observed that wideband MEMR metrics showed a stronger correlation with synapse numbers than ABR wave 1 amplitude. Parthasarathy and Kujawa found larger age-related effect sizes for the EFR than for the ABR at 30 kHz, but the opposite at 12 kHz. Finally, Bharadwaj et al. showed larger effect sizes for wideband MEMR magnitude/threshold than for ABR wave 1/5 amplitude ratio. Overall, these studies suggest that the EFR and wideband MEMR are more sensitive metrics of synaptopathy than the ABR.
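Comparing metrics expressed in different units requires a standardized effect size. One common choice is Cohen's d, the difference in group means divided by the pooled standard deviation. The sketch below assumes that definition, although the cited studies may have used other effect-size measures. The data are made-up values for illustration, not data from any study.

```python
import math
import statistics


def cohens_d(group_a: list[float], group_b: list[float]) -> float:
    """Standardized mean difference (Cohen's d) using the pooled sample SD."""
    na, nb = len(group_a), len(group_b)
    va, vb = statistics.variance(group_a), statistics.variance(group_b)  # n-1 denominators
    pooled_sd = math.sqrt(((na - 1) * va + (nb - 1) * vb) / (na + nb - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


# Hypothetical normalized metric values for control and noise-exposed animals.
control = [1.00, 0.95, 1.05, 1.10, 0.90]
exposed = [0.80, 0.75, 0.85, 0.90, 0.70]
print(f"d = {cohens_d(control, exposed):.2f}")
```

Because d is unitless, a metric measured in µV and one measured in dB can be ranked by how well each separates the same groups. This is the sense in which one measure is called "more sensitive" than another.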

Although synapse numbers cannot be confirmed in living humans, based on the assumption that military Veterans are at greater risk for noise-induced cochlear synaptopathy than non-Veterans, Bramhall et al. (2021, 2022) measured ABR wave I amplitude, EFR magnitude, and wideband MEMR strength in a sample of young Veterans and non-Veterans with normal audiograms. They found that of these three measures, EFR magnitude provided the clearest differences between Veterans with high noise exposure and non-Veterans with minimal noise exposure after statistical adjustment for DPOAE levels and sex. Bharadwaj et al. (2022) measured wideband MEMR and ABR wave I/V amplitude ratio in three groups: young adults with noise exposure from firearm use or playing in a marching band, middle-aged adults, and young adult controls. They found that the wideband MEMR was better than the ABR wave I/V ratio at differentiating the controls from the noise-exposed and middle-aged groups. These human studies suggest that the EFR and wideband MEMR are more sensitive to expected age- and noise exposure-related cochlear synaptopathy than the ABR, similar to what has been shown in animal models.

The optimal measure for assessing age-related versus noise exposure-related synaptopathy may differ if the subtypes of ANFs impacted by these two types of synaptopathy differ. For example, if low spontaneous rate ANFs are preferentially impacted by aging, but low, mid, and high spontaneous rate ANFs are all similarly impacted by noise exposure, the best metric for assessing age-related synaptopathy would be particularly sensitive to loss of low spontaneous rate ANFs, while the best metric for detecting noise-induced synaptopathy would be sensitive to loss of all ANF subtypes.

Additional factors, such as co-existing OHC loss, may also impact which measure is most appropriate for estimating synapse loss in a particular situation. The findings of Don and Eggermont (1978) indicate that the basal end of the cochlea contributes to ABR wave I amplitude and computational modeling suggests that OHC dysfunction can impact ABR wave I amplitude (Verhulst et al., 2018). Bharadwaj et al. (2019) showed that among people with clinically normal hearing, reduced ABR wave I amplitude was associated with poorer hearing thresholds in the extended high frequencies (9–16 kHz). Similarly, in mice with noise-induced permanent threshold shifts, ABR wave 1 amplitudes are reduced much more than would be expected given their synapse counts (Fernandez et al., 2020). This makes it difficult to disentangle the effects of OHC dysfunction and synaptopathy on ABR wave I amplitude. In contrast, computational modeling suggests that when hearing thresholds are within the normal range, subclinical variation in OHC function should have little impact on EFR magnitude (Encina-Llamas et al., 2019). Similarly, Bramhall et al. (2022) found that among individuals with normal hearing thresholds, there was no clear impact of average DPOAE level from 3–8 kHz on wideband MEMR magnitude. This suggests that in populations with clinically normal hearing, the EFR and wideband MEMR may be better metrics of cochlear synaptopathy than the ABR due to less confounding from subclinical OHC dysfunction. Further research will be necessary to determine which physiological measures perform best in populations with overt hearing loss.

It is important to understand that these three physiological measures are not specific to cochlear synaptopathy but will be impacted by any type of cochlear deafferentation. We use the term cochlear deafferentation to refer to any source of disruption of the afferent input from the cochlea to the central auditory system. This deafferentation could be due to loss of IHCs, cochlear synapses, or spiral ganglion cells. These types of damage cannot be differentiated physiologically with current diagnostics, but the functional consequences of these different types of reduced afferent input are expected to be similar.

Predicted Perceptual Consequences of Synaptopathy

Predicted perceptual consequences of cochlear synaptopathy include tinnitus, hyperacusis, and difficulty with speech-in-noise perception. Tinnitus and hyperacusis are predicted sequelae of synaptopathy because they are believed to be consequences of maladaptive gain in the central auditory system in response to decreased peripheral auditory input (Kujawa & Liberman, 2015). The central gain theory refers to the hypothesis that reduced peripheral auditory input leads to hyperactivity in downstream neurons in the central auditory system (central gain) and that this hyperactivity can result in the perception of hyperacusis or tinnitus (reviewed in Auerbach et al., 2014; Sedley, 2019). Reduced peripheral input following noise-induced cochlear damage has been shown to result in increased neuronal firing rates in multiple regions of the central auditory system, including the cochlear nucleus, inferior colliculus, medial geniculate body, and auditory cortex (reviewed in Sedley, 2019). The link between central gain and the perception of tinnitus/hyperacusis is harder to establish because central hyperactivity is not always associated with these abnormal percepts. This may be because secondary factors in addition to central gain, such as attention, are necessary to initiate these perceptions (reviewed in Sedley, 2019). As a source of reduced peripheral auditory input, cochlear synaptopathy would be expected to result in increased firing rates in the central auditory system. Accordingly, if the central gain theory is correct, synaptopathy-induced hyperactivity in the central auditory system should increase the likelihood of developing tinnitus and/or hyperacusis.

Synaptopathy is expected to have a negative impact on speech perception in noise due to the loss of synapses with low spontaneous rate (SR) auditory nerve fibers, which have a high response threshold and a large dynamic range (reviewed in Bharadwaj et al., 2014). Low SR fibers are thought to be important for the coding of suprathreshold sounds, particularly in the presence of background noise (Costalupes et al., 1984). The proportion of low versus high SR fibers varies across species (Liberman, 1980, 1982; Tsuji & Liberman, 1997), and the relative distribution of fiber subtypes is unknown in humans. Initial reports from gerbils and guinea pigs suggested that low and medium SR fibers were preferentially lost with age and noise-induced synaptopathy (Furman et al., 2013; Marmel et al., 2015; Schmiedt et al., 1996), but more recent data from mice suggest that there may be a more uniform loss of fiber subtypes in noise-induced synaptopathy (Suthakar & Liberman, 2021, 2022).

Perceptual Consequences of Cochlear Deafferentation in Animal Models

Tinnitus, Hyperacusis, and Central Gain

Several animal studies have evaluated changes in central gain following cochlear deafferentation. Central gain can be evaluated in animal models fairly easily by measuring neuronal firing rates at various stages of the auditory pathway. Chambers et al. (2016) used the neurotoxic drug ouabain to induce approximately 95% spiral ganglion cell loss in mice. The amount of damage increased over time, with greater spiral ganglion cell loss at 30 days post drug treatment than at seven days. As expected, ABR wave 1 amplitude was very small in animals seven days after drug treatment and even smaller at 30 days after treatment, reflecting decreased firing in the auditory nerve over time. However, this substantial impact on neuronal firing did not persist throughout the auditory pathway. Multi-unit recordings in the inferior colliculus indicated that neuronal firing, while reduced for ouabain-treated mice compared with controls, was greater for mice 30 days after drug treatment than it was for mice seven days after treatment. Given that the mice had greater spiral ganglion cell loss 30 days post-treatment, this result suggests the development of central gain in the inferior colliculus over time. Neuronal firing in the auditory cortex for the mice 30 days after ouabain treatment was so robust that it surpassed not only the firing observed in mice seven days after ouabain treatment but also, at higher stimulus levels, the firing in controls. This suggests excessive central gain occurring in the auditory cortex 30 days after spiral ganglion cell loss. In a similar study, Salvi et al. (2017) induced approximately 80% IHC loss in chinchillas by treating them with carboplatin, a chemotherapy drug. OHCs and behavioral thresholds were relatively unaffected, while the compound action potential, reflecting firing in the auditory nerve, was greatly diminished.
In the inferior colliculus, neuronal firing was slightly reduced compared to controls, while firing in the auditory cortex was considerably elevated relative to controls. This recovery and eventual overshoot of normal levels of neuronal activity, observed in both animal studies, is suggestive of central gain occurring in the central auditory system in response to cochlear deafferentation. Given the hypothesized relationship between central gain and tinnitus/hyperacusis, these findings suggest that cochlear deafferentation may increase the likelihood of developing tinnitus or hyperacusis. In addition, a recent study in mice suggests that some aspects of ANF coding are actually improved in surviving ANFs following synaptopathy (Suthakar & Liberman, 2021). Specifically, onset responses are enhanced and temporal jitter is reduced. The authors suggest that in addition to central gain, this peripheral hyperactivity at the level of the auditory nerve may also contribute to the perception of hyperacusis. Further research will be necessary to investigate this possibility.

Several studies in animals have evaluated possible relationships between cochlear synaptopathy and the perception of tinnitus or hyperacusis. However, it is important to note that there is no standardized method for confirming the perception of tinnitus or hyperacusis in animal models. Although several behavioral screening paradigms for these perceptual deficits have been developed for use in animal models (reviewed in Hayes et al., 2014), whether these assessments actually measure tinnitus or hyperacusis remains a topic of debate (reviewed in Brozoski & Bauer, 2016; Galazyuk & Brozoski, 2020).

One of the behavioral assessments of tinnitus perception used in animal studies of synaptopathy is prepulse inhibition of the acoustic startle response. When the acoustic startle stimulus is preceded by another stimulus (the prepulse), the amplitude of the startle reflex is reduced. The prepulse can also be delivered as a silent gap in a continuous sound stimulus. It has been hypothesized that animals with tinnitus will not detect the silent gap because it will be filled in by the tinnitus percept. This would then result in decreased prepulse inhibition for animals with tinnitus relative to animals without tinnitus (Turner et al., 2006). Hickox and Liberman (2014) evaluated prepulse inhibition of the acoustic startle reflex in CBA/CaJ mice with histologically-confirmed noise-induced synaptopathy. They found that the mice with synaptopathy demonstrated reduced prepulse inhibition for gaps occurring immediately before the onset of the startle stimulus, but not for gaps that ended 80 ms before the startle stimulus began. Tinnitus would be expected to fill in the silent gap regardless of when it occurs relative to the startle stimulus, so these results provide inconclusive evidence of tinnitus perception in the mice with synaptopathy. Tziridis et al. (2021) used prepulse inhibition of the acoustic startle reflex to evaluate tinnitus perception in gerbils with unilateral histologically-confirmed noise-induced cochlear synaptopathy. Out of 15 noise-exposed gerbils, five developed a temporary threshold shift and behavioral evidence of tinnitus, five developed a permanent threshold shift and behavioral evidence of tinnitus, and five developed a permanent threshold shift but did not exhibit evidence of tinnitus. In rats with noise-induced temporary threshold shifts, Mohrle et al. (2019) assessed perception of tinnitus using a behavioral task in which the animals were rewarded for responding to an external sound and received a foot shock if they responded to silence.
Following noise exposure, responding to silence was interpreted as evidence of tinnitus. Among 31 noise-exposed rats, they found behavioral evidence of tinnitus in 16 rats. Note that no histology was performed to confirm synaptopathy in this study.
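Gap-prepulse inhibition is typically summarized as the percentage by which a preceding gap reduces the startle amplitude. The sketch below assumes the common definition 100 × (1 − startle with gap / startle alone); some studies instead report the ratio of the two amplitudes. The amplitudes are hypothetical values chosen only to illustrate how a drop in inhibition after noise exposure would be expressed.

```python
def percent_ppi(startle_alone: float, startle_with_gap: float) -> float:
    """Percent inhibition of the startle response produced by a preceding gap (or prepulse)."""
    if startle_alone <= 0:
        raise ValueError("baseline startle amplitude must be positive")
    return 100.0 * (1.0 - startle_with_gap / startle_alone)


# Hypothetical mean startle amplitudes (arbitrary units) for one animal.
before = percent_ppi(startle_alone=1.0, startle_with_gap=0.60)
after = percent_ppi(startle_alone=1.0, startle_with_gap=0.85)
print(f"Gap-PPI before exposure: {before:.0f}%, after exposure: {after:.0f}%")
```

Under the gap-filling hypothesis, a post-exposure drop in inhibition like this one would be read as possible tinnitus. As the Hickox and Liberman (2014) results illustrate, however, the interpretation depends on whether the drop appears across gap timings.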

Hickox & Liberman (2014) assessed the acoustic startle reflex in CBA/CaJ mice with and without histologically confirmed noise-induced cochlear synaptopathy as a behavioral assessment of hyperacusis. They found that mice with synaptopathy demonstrated lower startle thresholds and enhanced startle amplitudes for moderate intensity sounds compared with control mice. In other words, the mice with synaptopathy reacted to moderate intensity sounds as a control mouse would react to higher intensity sounds. This suggests that the mice with synaptopathy were perceiving moderate intensity sounds as being abnormally loud (i.e., experiencing hyperacusis). Similarly, Peineau et al. (2021) found that C57BL/6J mice with histologically-confirmed age-related synaptopathy had enhanced startle responses to moderate intensity sounds relative to young mice. Note, however, that the aged mice also had elevated ABR thresholds and reduced DPOAE amplitudes compared with the young mice, which makes it difficult to confirm whether the enhanced startle responses were solely related to synapse loss. Pienkowski (2018) exposed CBA/Ca mice to 8–16 kHz noise at ∼75 dB SPL for 2 months (24 h/day), resulting in reduced ABR wave 1 amplitudes but no change in ABR or DPOAE thresholds. Although these physiological data are consistent with synaptopathy, no histology was performed to confirm synaptopathy. In contrast to Hickox & Liberman, acoustic startle amplitudes were similar pre- and post-exposure in these mice. Using the same behavioral task described above for tinnitus, Mohrle et al. (2019) also assessed perception of hyperacusis. Following noise exposure resulting in a temporary threshold shift, elevated response activity compared to sham controls was interpreted as evidence of hyperacusis. Among 31 noise-exposed rats, they found behavioral evidence of hyperacusis in 12 rats, eight of whom also had evidence of tinnitus.
Note that no histology was performed to confirm synaptopathy in this study.

These animal studies of cochlear deafferentation suggest that deafferentation can lead to enhanced central gain and may also increase the likelihood of developing tinnitus and/or hyperacusis.

Temporal Processing and Signal Detection in Noise

Although speech perception in noise cannot be directly evaluated in animal models, temporal processing and signal-in-noise detection ability can be measured in animals and both are expected to be important skills for speech-in-noise perception. Several animal studies have evaluated the impacts of cochlear deafferentation on temporal processing and signal detection in noise. Lobarinas et al. (2020) measured gap detection in broadband noise (a measure of temporal processing) in chinchillas with carboplatin-induced IHC loss. Following approximately 70% IHC loss, gap detection ability worsened, particularly for the lowest stimulus level (40 dB SPL broadband noise). Lobarinas et al. (2017) found that rats with presumed noise-induced cochlear synaptopathy (based on temporary threshold shift and reduced ABR wave 1 amplitude following noise exposure) had decreased ability to detect narrowband noise bursts presented in broadband background noise. In mice with approximately 70% ouabain-induced spiral ganglion cell loss, Resnik & Polley (2021) found that tone detection in noise was impaired while tone detection in quiet was preserved. These studies suggest that cochlear deafferentation is associated with temporal processing and signal-in-noise detection deficits that would be expected to negatively impact speech perception in noise.

Perceptual Consequences of Cochlear Deafferentation in Humans: Tinnitus

A number of human studies have investigated the relationship between physiological indicators of cochlear deafferentation (ABR, EFR, and MEMR) and tinnitus, summarized in Table 1. Studies were identified by entering the search terms “cochlear synaptopathy tinnitus” and “hidden hearing loss tinnitus” into PubMed. Only studies that explicitly compared a physiological correlate of cochlear deafferentation and perception of tinnitus were included. Studies that evaluated relationships between risk factors for cochlear synaptopathy (e.g., age and noise exposure history) and tinnitus were not included because genetic susceptibility to synaptopathy is expected to vary across individuals. For example, the degree of synaptopathy in two individuals of the same age or with the same noise exposure history could differ considerably depending on their genetics. For this reason, physiological measurements are a better indicator of cochlear deafferentation at an individual level than risk factors and are therefore more likely to show relationships with tinnitus. Studies that only compared tinnitus and non-tinnitus ears in participants with unilateral tinnitus and did not include a separate control group were also not included because tinnitus involves central auditory pathways that can have bilateral impacts. The majority of the studies listed in Table 1 recruited populations with clinically normal hearing to reduce the confounding impacts of OHC dysfunction, although some studies included broader populations. The range of hearing thresholds included in each study is indicated in the table.

Table 1. Studies that Have Investigated the Relationship Between Physiological Correlates of Cochlear Deafferentation and Tinnitus.

If a study included a population at increased risk of tinnitus, this is indicated in the “Tinnitus risk factor” column. ABR – auditory brainstem response; EFR – envelope following response; MEMR – middle ear muscle reflex; SAM – sinusoidally amplitude modulated; SP – summating potential; AP – action potential.

Human studies initially focused on the relationship between ABR wave I amplitude and the perception of tinnitus. While several studies showed that tinnitus was associated with lower ABR wave I amplitudes relative to controls (e.g., Bramhall et al., 2018; Gu et al., 2012; Schaette & McAlpine, 2011), others did not (e.g., Guest et al., 2017b; Shim et al., 2017). One explanation for the differing results across studies is that many of the studies had small sample sizes (e.g., 40 participants [20 with tinnitus, 20 controls] in the Guest et al. study, 36 participants [15 with tinnitus, 21 controls] in the Gu et al. study, and 33 participants [15 with tinnitus, 18 controls] in the Schaette & McAlpine study). To illustrate this point, we ran a power analysis based on data from Bramhall et al. (2018), which showed a mean ABR wave I amplitude of 0.29 µV (standard deviation 0.10 µV) for the tinnitus group and 0.38 µV (standard deviation 0.11 µV) for the control group. The results of the power analysis suggest that a sample of 25 participants per group (tinnitus and control) would be necessary to have at least an 80% chance of rejecting the null hypothesis of equal mean ABR wave I amplitude. To address the sample size issue, Bramhall et al. (2019) combined ABR wave I amplitude and tinnitus perception data collected from four different studies that included military Veterans into a single Bayesian logistic regression analysis of 193 participants (43 with tinnitus, 150 controls) that included statistical adjustment for sex and OHC dysfunction, as indicated by distortion product otoacoustic emissions (DPOAEs). The results suggest that among individuals with robust DPOAEs, lower ABR wave I amplitudes are associated with an increased likelihood of perceiving tinnitus (Figure 1).
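The power calculation described above can be approximated in a few lines. This sketch assumes a two-sided, two-sample comparison at α = 0.05 with equal group sizes and a pooled standard deviation. It uses a normal approximation rather than the noncentral t-distribution. The authors' exact method is not stated here, and this approximation slightly overstates power at small sample sizes, so its output need not match the published figure of 25 participants per group.

```python
import math
from statistics import NormalDist


def approx_power(mean1: float, sd1: float, mean2: float, sd2: float,
                 n_per_group: int, alpha: float = 0.05) -> float:
    """Normal-approximation power of a two-sided, two-sample comparison of means
    with equal group sizes and a pooled SD (omits the negligible opposite tail)."""
    pooled_sd = math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    d = abs(mean1 - mean2) / pooled_sd                # standardized effect size
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)      # about 1.96 for alpha = 0.05
    noncentrality = d * math.sqrt(n_per_group / 2)
    return 1 - NormalDist().cdf(z_crit - noncentrality)


# Group summaries quoted in the text for Bramhall et al. (2018): (mean, SD) in µV.
tinnitus = (0.29, 0.10)
control = (0.38, 0.11)
for n in (15, 20, 25):
    print(n, round(approx_power(*tinnitus, *control, n), 2))
```

With these summaries, the approximate power exceeds 80% at 25 participants per group but falls well short of it at 15 per group. This is consistent with the concern that studies with 15 to 20 participants with tinnitus may have been underpowered.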

Figure 1. Among individuals with robust DPOAEs, lower ABR wave I amplitudes are associated with an increased probability of reporting tinnitus. This plot shows posterior predictive comparisons derived from a Bayesian logistic regression model of the relationship between ABR wave I amplitude and the probability of reporting tinnitus. Each black dot and confidence interval corresponds to a single participant at their average DPOAE level (shown on the x-axis). The y-axis shows the average difference in the probability of reporting tinnitus for each individual participant when contrasting an ABR wave I amplitude that is 50% higher than average with an ABR wave I amplitude that is 50% lower than average. This is plotted separately for males and females at different average DPOAE levels (from 3–8 kHz). Positive values on the y-axis indicate that lower ABR wave I amplitudes are associated with an increased risk of reporting tinnitus, while negative values indicate that lower ABR wave I amplitudes are associated with a decreased risk of tinnitus. Thick lines show the interquartile range, while thin lines show the 90% Bayesian confidence interval. The vertical dashed line indicates the sample mean average DPOAE level (2.7 dB SPL). Reproduced from Naomi F. Bramhall, Garnett P. McMillan, Frederick J. Gallun, Dawn Konrad-Martin; Auditory brainstem response demonstrates that reduced peripheral auditory input is associated with self-report of tinnitus. J. Acoust. Soc. Am. 1 November 2019; 146 (5): 3849–3862. https://doi.org/10.1121/1.5132708, with the permission of the Acoustical Society of America.
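The predictive comparison plotted in Figure 1 can be illustrated with a plain (non-Bayesian) logistic model. For one participant, the sketch computes the predicted probability of tinnitus with ABR wave I amplitude set 50% below and 50% above average, then takes the difference. The model form and all coefficients below are hypothetical and chosen only for illustration. They are not the fitted values from Bramhall et al. (2019), whose model included additional terms such as sex and averaged over posterior draws rather than using point estimates.

```python
import math


def logistic(x: float) -> float:
    """Inverse logit: maps a linear predictor to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def predictive_comparison(intercept: float, beta_amplitude: float, beta_dpoae: float,
                          mean_amplitude: float, dpoae_level: float) -> float:
    """Difference in predicted P(tinnitus) between an amplitude 50% below and 50% above
    average at a fixed DPOAE level. Positive means lower amplitude raises the risk."""
    def p(amplitude: float) -> float:
        return logistic(intercept + beta_amplitude * amplitude + beta_dpoae * dpoae_level)
    return p(0.5 * mean_amplitude) - p(1.5 * mean_amplitude)


# Hypothetical coefficients (not fitted values from any study); amplitude in µV, DPOAE in dB SPL.
diff = predictive_comparison(intercept=0.5, beta_amplitude=-8.0, beta_dpoae=-0.05,
                             mean_amplitude=0.35, dpoae_level=2.7)
print(f"Change in P(tinnitus): {diff:+.2f}")
```

Because the logistic curve is non-linear, the same amplitude coefficient yields different probability differences at different DPOAE levels. This is one reason such comparisons are plotted per participant rather than summarized by a single coefficient.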

Unfortunately, the impacts of OHC dysfunction on ABR wave I amplitude may complicate the interpretation of this metric, even among adults with clinically normal hearing. In a sample of young military Veterans and non-Veterans with normal audiograms, Bramhall et al. (2018) showed a clear increase in the probability of reporting tinnitus as ABR wave I amplitude decreases, even after statistical adjustment for average DPOAE level from 3–8 kHz. In contrast, in a second study of young military Veterans and non-Veterans with normal audiograms, Bramhall et al. (2023) showed a reduction in mean ABR wave I amplitude for Veterans with tinnitus compared with non-Veteran controls in their raw data, but this reduction disappeared after statistical adjustment for average DPOAE level from 3–8 kHz. In the 2018 study, the DPOAE inclusion criteria were very strict, which restricted the sample to participants with robust DPOAEs, while the DPOAE inclusion criteria for the more recent study were less strict, resulting in a sample with greater subclinical OHC dysfunction. The differing results between these two studies after statistical adjustment for DPOAE levels suggest that in populations with OHC dysfunction, the relationship between ABR wave I amplitude and tinnitus may be difficult to interpret due to the non-linear effects of OHC dysfunction on ABR wave I amplitude (Verhulst et al., 2018), which are not well represented by linear regression models.

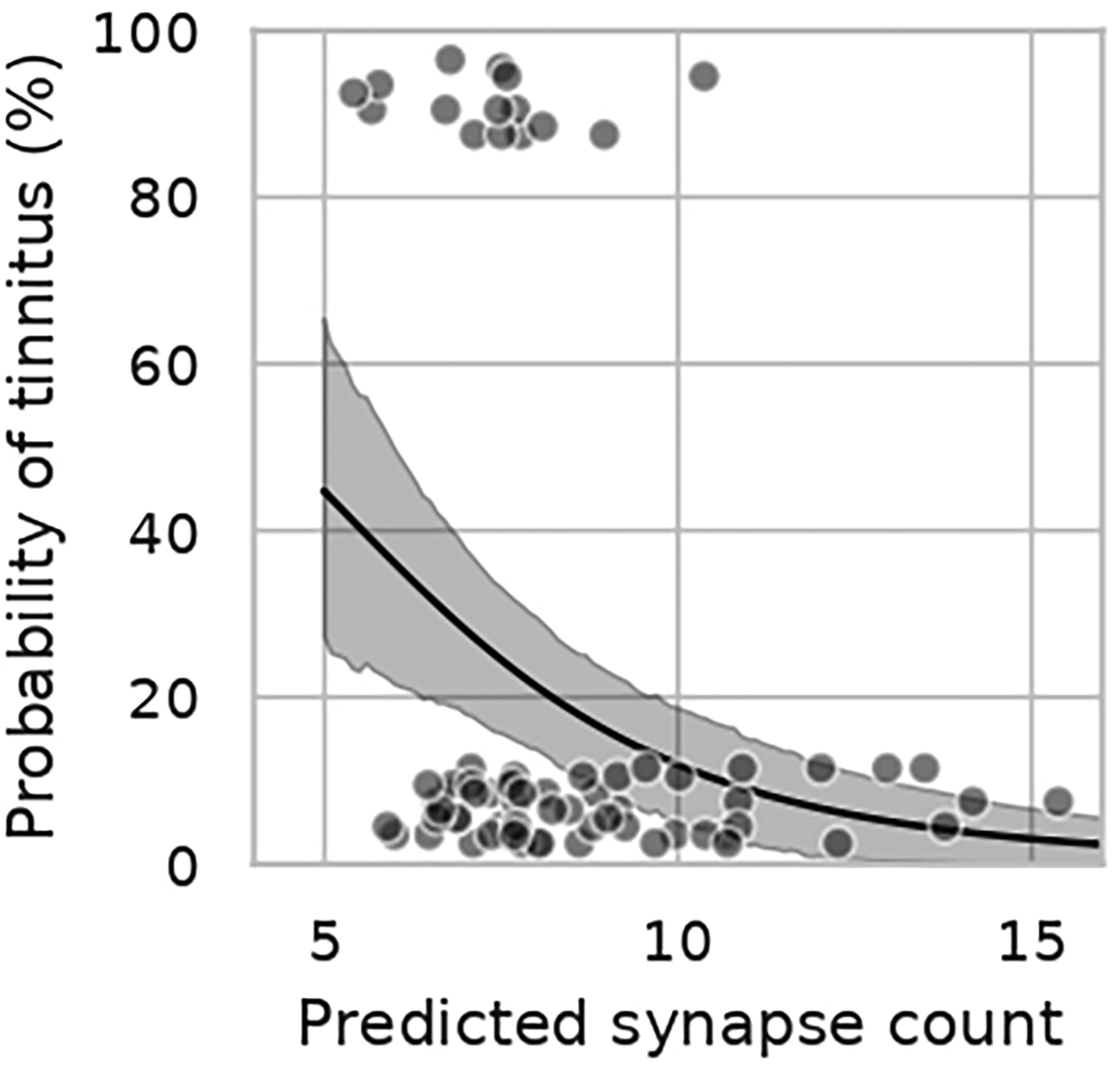

One alternative method of accounting for the impacts of OHC dysfunction on ABR wave I amplitude is to use computational modeling. Verhulst et al. (2018) developed a computational model of the human auditory periphery that provides estimates of cochlear gain based on measured otoacoustic emissions or pure tone thresholds and estimates the resulting ABR or EFR in response to any stimulus based on the input cochlear gain and number of intact afferent synapses with the auditory nerve. Buran et al. (2022) fit this computational model with Bayesian regression so that the number of cochlear synapses for individual human subjects could be predicted based on their ABR wave I amplitude measurements and their DPOAEs. This allowed for the impacts of OHC dysfunction to be incorporated into the synapse predictions. This modeling algorithm was used to predict synapse counts for the Bramhall et al. (2018) dataset of young Veterans and non-Veterans with normal audiograms based on their measured ABR wave I amplitudes and DPOAEs. Synapse counts per IHC were predicted across frequency for each participant, with maximum synapse counts occurring in the middle of the frequency range. If we look at predicted maximum synapse counts for the individuals in that dataset who reported tinnitus, it appears that young Veterans who report tinnitus tend to have fewer than 10 predicted synaptic connections per IHC in the middle of the cochlea (Figure 2). In comparison, the highest number of predicted synapses in the sample of young Veterans and non-Veterans was 16 synapses per IHC, which is similar to the maximum number of synapses per IHC observed in human temporal bone data from a neonate (Wu et al., 2019).

Relationship between predicted synapse count and the probability of reporting tinnitus. Veterans with tinnitus tend to have fewer than 10 predicted synapses per IHC. Individual participants are represented by gray circles; those who reported tinnitus are plotted at 100% (top), while those who denied tinnitus are plotted at 0% (bottom). Data are jittered on the y-axis to better allow for visualization of individual data points. The black line and shaded region show the mean and 90% Bayesian confidence interval, respectively, of a Bayesian linear regression model relating predicted synapse count to the probability of reporting tinnitus. Reproduced from Brad N. Buran, Garnett P. McMillan, Sarineh Keshishzadeh, Sarah Verhulst, Naomi F. Bramhall; Predicting synapse counts in living humans by combining computational models with auditory physiology. J. Acoust. Soc. Am. 1 January 2022; 151 (1): 561–576. https://doi.org/10.1121/10.0009238, with the permission of the Acoustical Society of America.

More recent studies have investigated the relationship between MEMR or EFR magnitude and the perception of tinnitus. These measures may be less affected by OHC dysfunction than ABR wave I amplitude, at least in individuals with normal audiograms (Bramhall et al., 2022; Encina-Llamas et al., 2019). In addition, as discussed earlier, previous animal and human studies of noise-induced and age-related synaptopathy/deafferentation have suggested that the EFR and wideband MEMR may be more sensitive metrics of deafferentation than the ABR. In a sample of 36 participants with normal hearing to mild hearing loss (18 with tinnitus, 18 controls), Wojtczak et al. (2017) found that the perception of tinnitus was associated with a reduction in wideband MEMR magnitude, even after statistically adjusting for hearing thresholds. In a sample of 59 participants with normal hearing thresholds (23 with tinnitus, 36 controls), Bramhall et al. (2022) observed a trend toward reduced wideband MEMR magnitude for military Veterans with tinnitus after adjusting for average DPOAE level from 3–8 kHz, consistent with the Wojtczak et al. findings. Similarly, in a sample of 201 participants with normal hearing thresholds (29 with chronic tinnitus, 64 with intermittent tinnitus, and 108 controls), Vasilkov et al. (2023) showed that chronic tinnitus was associated with weaker wideband MEMR strength and higher MEMR thresholds. However, in a sample of 38 participants (19 with tinnitus, 19 controls), Guest et al. (2019) observed a trend toward higher MEMR thresholds (measured with a 226 Hz probe) for controls than for participants reporting tinnitus, indicating weaker MEMRs in the control group. This is the opposite of the relationship that would be expected if cochlear deafferentation were associated with tinnitus. Note, however, that the Guest et al. study used a tonal MEMR probe, while the Wojtczak et al., Bramhall et al., and Vasilkov et al. studies used a wideband probe, which may explain the differing results. On the other hand, Casolani et al. (2022) used a wideband probe in a sample of 35 participants (20 with tinnitus, 15 controls), yet did not find a significant relationship between wideband MEMR magnitude and tinnitus.

It is possible that the MEMR is not the best indicator of noise-induced cochlear deafferentation. If Veterans are at increased risk of cochlear synaptopathy due to their history of military noise exposure, and cochlear deafferentation increases the likelihood of developing tinnitus, we would expect Veterans with tinnitus to have particularly high rates of cochlear deafferentation compared with non-Veterans with minimal noise exposure history. Bramhall et al. (2023) evaluated magnitude contrasts between young Veterans with tinnitus and non-Veteran controls for all three physiological indicators of cochlear deafferentation (ABR, EFR, and wideband MEMR) in the same sample. After statistical adjustment for average DPOAE level from 3–8 kHz and sex, only EFR magnitude provided a clear contrast between Veterans with tinnitus and controls, with a 2.4 dB reduction in EFR magnitude for Veterans with tinnitus in response to a 100% sinusoidally amplitude modulated (SAM) 4 kHz carrier tone. Bramhall et al. used two methods to relate this estimated mean reduction in EFR magnitude for Veterans with tinnitus (relative to controls) to age-related cochlear deafferentation. First, they compared it with the average age-related change in EFR magnitude observed across three previous human studies (after adjusting for pure tone thresholds and sex) and estimated that the reduction observed in Veterans with tinnitus was equivalent to 21 years of aging. Second, they used a computational model of the human auditory periphery (Verhulst et al., 2018) to estimate how many synapses would need to be lost to reduce EFR magnitude by the observed amount (2.8–3.3 synapses), and then used human temporal bone data (Wu et al., 2019) to estimate how many years of age-related synaptopathy it would take to lose that number of synapses (23–27 years). Although these are rough estimates of the equivalent age-related change, they suggest that the reduction in EFR magnitude observed in young Veterans with tinnitus relative to controls is similar to the reduction in EFR magnitude and cochlear synapse number associated with more than 20 years of aging. This comparison makes it clear that a tinnitus-related EFR reduction of this magnitude is cause for concern.
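Both conversions amount to dividing an observed change by an assumed constant rate of age-related change. The short sketch below back-calculates the rates implied by the figures quoted above (about 0.11 dB of EFR magnitude and about 0.12 synapses per year of age). These rates are derived from the reported numbers, not taken from the original studies, and the constant-rate assumption is a simplification; pairing the ends of the two reported ranges (2.8 synapses with 23 years, 3.3 with 27) gives nearly identical rates.

```python
def equivalent_years(change, change_per_year):
    """Years of aging that would produce `change` at a constant yearly rate."""
    if change_per_year <= 0:
        raise ValueError("change_per_year must be positive")
    return change / change_per_year

# Rates back-calculated from the reported figures (assumed constant with age).
efr_rate_db_per_year = 2.4 / 21     # ~0.11 dB EFR reduction per year of age
synapse_rate_per_year = 2.8 / 23    # ~0.12 synapses per IHC lost per year of age

print(round(equivalent_years(2.4, efr_rate_db_per_year)))   # 21
print(round(equivalent_years(3.3, synapse_rate_per_year)))  # 27
```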

Two other studies that investigated the relationship between EFR magnitude and the perception of tinnitus have shown a trend toward reduced EFR magnitude for individuals with tinnitus compared with controls (Guest et al., 2017a; Paul et al., 2017), but the contrasts were not as clear as for the Bramhall et al. (2023) study. The contrasts may be clearer in the Bramhall et al. study because they specifically investigated a population suspected to have noise-induced tinnitus (young military Veterans), whereas the other studies sampled from broader tinnitus populations and may have inadvertently included individuals with forms of tinnitus that were not related to cochlear deafferentation (e.g., tinnitus secondary to cardiovascular problems or thyroid disorder).

Bramhall et al. (2020) measured the middle latency response (MLR) and the late latency response (LLR) in a subset of the sample from Bramhall et al. (2023). The MLR and LLR are auditory evoked potentials with longer latencies than the ABR. The MLR occurs approximately 10–70 ms following stimulus onset, while the components of the LLR (P1, N1, and P2) occur roughly 50–200 ms after stimulus onset. The MLR is generated by multiple sources along the auditory thalamocortical pathway, with a potential contribution from the inferior colliculus (reviewed by Musiek & Nagle, 2018), while the LLR is generated by the auditory cortex and auditory association areas (reviewed by Martin et al., 2008; Näätänen & Picton, 1987).

The MLR results from Bramhall et al. (2020) indicated a reduction in MLR magnitude for Veterans with tinnitus compared with non-Veteran controls, which is not particularly surprising given the reduction in EFR magnitude observed for Veterans with tinnitus compared with controls in Bramhall et al. (2023). This is similar to the findings of a guinea pig study that showed a reduction in electrically evoked MLR magnitude following drug-induced spiral ganglion cell loss (Jyung et al., 1989). The MLR results are also consistent with the observation that mice with ouabain-induced spiral ganglion cell loss and chinchillas with carboplatin-induced IHC loss show reduced firing in the inferior colliculus compared with controls (Chambers et al., 2016; Salvi et al., 2017). Note that the observed reduction in MLR amplitude for Veterans with tinnitus does not necessarily mean that no central gain occurred in the brainstem, only that any gain was insufficient to produce MLR amplitudes similar to those observed in controls. In the future, to better estimate gain at the level of the brainstem, the MLR could be measured shortly after noise exposure and again at a later timepoint to determine whether MLR amplitude increases over time.

In contrast to the MLR results, mean LLR magnitude for the Veterans with tinnitus was not reduced relative to controls; in fact, it was greater than for controls. This pattern of reduced MLR strength but increased LLR strength for Veterans with tinnitus relative to controls remained even after statistical adjustment for sex and average DPOAE level from 3–8 kHz. This suggests that young Veterans with tinnitus who have normal audiograms experience central gain at the level of the auditory cortex that cannot be explained solely by OHC dysfunction. This is consistent with animal studies that show elevated neural firing in the auditory cortex of animals with cochlear deafferentation relative to controls (Chambers et al., 2016; Salvi et al., 2017).

It is important to note that cochlear deafferentation and resulting increases in central gain do not always lead to the perception of tinnitus. This is likely because additional secondary factors, triggered by central gain but not yet confirmed, are necessary for the perception of tinnitus. Attention, for example, is one potential secondary factor that may be necessary for the perception of tinnitus (reviewed in Sedley, 2019). In the Bramhall et al. (2023) study, Veterans without tinnitus exhibited mean EFR magnitudes for some modulation depths (63% and 40%) that were reduced relative to non-Veteran controls with minimal noise exposure history, but the contrasts were not as large or as consistent across modulation depths as they were between Veterans with tinnitus and controls. This suggests, on average, some degree of cochlear deafferentation in Veterans without tinnitus, although this tends to be less severe than for Veterans with tinnitus. Similarly, predicted synapse counts from Buran et al. (2022) suggest that while Veterans with tinnitus tend to have maximum predicted synapse counts of fewer than 10 per IHC, many individuals without tinnitus also have predicted synapse counts of fewer than 10 per IHC (Figure 2). Additionally, while mean MLR magnitude for Veterans without tinnitus was reduced in the Bramhall et al. (2020) study relative to controls, mean LLR magnitude for Veterans without tinnitus was slightly elevated compared with controls. This suggests that young Veterans without tinnitus who have normal audiograms also experience central gain at the level of the auditory cortex. These individuals may develop tinnitus in the future if the necessary secondary factors are present.

Taken together, the animal and human findings suggest that cochlear deafferentation is associated with increased central gain at the level of the auditory cortex. This central gain appears to increase the probability of developing tinnitus.

Perceptual Consequences of Cochlear Deafferentation in Humans: Hyperacusis

Unfortunately, few human studies have investigated relationships between physiological indicators of cochlear deafferentation and hyperacusis. Barriers to studying these relationships include the lack of a standard definition or screening tool for hyperacusis and difficulty recruiting sufficient study participants who report decreased sound tolerance. Several different sound tolerance conditions (e.g., hyperacusis, misophonia, phonophobia, and noise sensitivity) are often lumped together under the term “hyperacusis.” These conditions may differ in terms of their underlying etiology, but there is currently no consensus on their definition or assessment (reviewed in Henry et al., 2022). This complicates the identification of study participants who experience hyperacusis. Some cochlear deafferentation studies have used loudness discomfort level (LDL; the level at which a pure tone is judged to be uncomfortably loud) as an indicator of sound tolerance (e.g., Bramhall et al., 2018), but unfortunately, LDL by itself is not considered a reliable measure for identifying decreased sound tolerance (Sheldrake et al., 2015; Zaugg et al., 2016). Hyperacusis is also less prevalent than tinnitus. In the general population, the estimated prevalence of hyperacusis is 8–15%, with greater prevalence in older adults and individuals with more hearing loss (Smit et al., 2021). However, many previous human studies of cochlear deafferentation have focused on populations of young adults with normal hearing thresholds, further reducing the potential pool of study participants with hyperacusis.

Searching PubMed with the terms “hyperacusis synaptopathy,” “hyperacusis hidden hearing loss,” “hyperacusis deafferentation,” and “hyperacusis neural degeneration” identified only a single human study of the relationship between physiological indicators of deafferentation and hyperacusis. In a small sample of 12 adults with clinically normal hearing and an asymmetric summating potential (SP)/action potential (AP) ratio (a proposed indicator of cochlear deafferentation), Jahn et al. (2022) evaluated loudness growth perception in both ears. They found that, on average, the ear with greater estimated cochlear deafferentation (larger SP/AP ratio) showed elevated loudness perception compared with the contralateral ear of the same participant. This may indicate that cochlear deafferentation increases the likelihood of elevated loudness perception, but it is unclear whether any of these participants experienced clinically significant sound intolerance.

Further research with larger samples of individuals who experience hyperacusis will be necessary to confirm if cochlear deafferentation is associated with the development of hyperacusis.

Perceptual Consequences of Cochlear Deafferentation in Humans: Difficulty with Speech Perception in Noise

The prediction that cochlear synaptopathy will negatively impact speech perception in noise has been particularly difficult to confirm. A number of human studies have looked for relationships between physiological indicators of cochlear deafferentation and speech perception measures, with mixed results. These studies are summarized in Table 2. Studies were identified by entering the search terms “cochlear synaptopathy speech perception,” “hidden hearing loss speech perception,” “auditory brainstem response wave I amplitude speech perception,” “envelope following response speech perception,” and “middle ear muscle reflex speech perception” into PubMed. As for Table 1, only studies that explicitly compared a physiological correlate of cochlear deafferentation and a speech perception measure were included. Studies that only compared a physiological measure to a difference measure of speech perception (e.g., the difference in speech perception performance when speech is presented at two different intensity levels) were not included because difference measures of speech perception are an indicator of the relative impact of the different stimulus conditions, not the underlying speech perception performance. The majority of the studies listed in Table 2 restricted study participation to individuals with clinically normal hearing, although a handful included participants with some hearing loss. The range of hearing thresholds included in each study is indicated in the table.

Studies that Have Investigated the Relationship Between Physiological Correlates of Cochlear Deafferentation and Speech Perception.

If a study included a population at increased risk of cochlear deafferentation due to occupational/military noise exposure or age, this is indicated in the “High risk population included in sample” column. ABR – auditory brainstem response; EFR – envelope following response; MEMR – middle ear muscle reflex; SP – summating potential; AP – action potential; SAM – sinusoidally amplitude modulated; RAM – rectangular amplitude modulated; PLV – phase locking value; SNR – signal-to-noise ratio.

The speech perception measures vary considerably across these studies, as do the physiological measures, which makes it difficult to compare results across studies. It is possible that sensitivity to cochlear deafferentation-related speech perception deficits differs depending on the speech perception measure used. DiNino et al. (2022) proposed that the ideal speech perception measure for capturing cochlear deafferentation-related deficits would possess four features: it would be appropriately difficult for adults with normal hearing thresholds; it would have limited contextual, semantic, and syntactic content; it would emphasize temporal processing (e.g., by using time compression or reverberation); and it would allow competing speech streams to be differentiated only by fine spatial cues (rather than by fundamental frequency). They found that previous studies showing evidence of a relationship between a physiological correlate of cochlear deafferentation and speech perception performance included a speech perception metric with one or more of these features.

As mentioned earlier, some physiological measures may be more sensitive to cochlear deafferentation than others. In Bramhall et al. (2023), EFR magnitude showed stronger associations with tinnitus than ABR wave I amplitude or wideband MEMR strength. This could be interpreted as the EFR providing a better indication of the degree of cochlear deafferentation than the ABR or MEMR. If that is the case, EFR measurements may be more likely to show an association with speech perception measures than ABR or MEMR measurements. Some previous studies have shown relationships between EFR strength and speech perception performance (Garrett et al., 2020; Mepani et al., 2021), while others did not find a clear relationship (Carcagno & Plack, 2022; Guest et al., 2018; Prendergast et al., 2017). The two previous studies that showed a clear relationship between EFR strength and speech perception performance measured the EFR in response to a rectangular amplitude modulated (RAM) stimulus (Garrett et al., 2020; Mepani et al., 2021). Computational modeling suggests that use of a RAM stimulus for measuring the EFR allows for greater neural synchrony due to the sharp rise time compared with the more conventional sinusoidally amplitude modulated (SAM) stimulus (Vasilkov et al., 2021). This increased synchrony results in larger EFR responses and may increase the sensitivity to cochlear deafferentation.
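To make the SAM/RAM contrast concrete, the sketch below generates both envelopes on a 4 kHz carrier modulated at 110 Hz, with a 25% duty cycle for the RAM stimulus (parameters taken from the Figure 3 caption). The sample rate, the peak normalization, and the instantaneous one-sample on/off transitions of the RAM envelope are assumptions, and level calibration is not modeled. The largest sample-to-sample envelope step serves as a crude proxy for rise steepness: a full 0-to-1 jump for RAM versus well under 0.01 per sample for SAM at this sample rate.

```python
import numpy as np

FS = 48_000   # sample rate (Hz); an assumption, not stated in the text
FC = 4_000    # carrier frequency (Hz), from the Figure 3 caption
FM = 110      # modulation frequency (Hz), from the Figure 3 caption

def _time(dur, fs):
    """Sample times (s) for a stimulus of `dur` seconds."""
    return np.arange(int(round(dur * fs))) / fs

def sam_stimulus(dur=0.5, depth=1.0, fs=FS):
    """Sinusoidally amplitude-modulated tone; depth=1.0 is 100% modulation.
    The envelope is normalized to a peak of 1."""
    t = _time(dur, fs)
    env = (1.0 + depth * np.sin(2 * np.pi * FM * t)) / (1.0 + depth)
    return env, env * np.sin(2 * np.pi * FC * t)

def ram_stimulus(dur=0.5, duty=0.25, fs=FS):
    """Rectangular amplitude-modulated tone: the carrier is on for `duty`
    of each modulation cycle and off otherwise. On/off transitions are
    instantaneous (one sample), which is a simplifying assumption."""
    t = _time(dur, fs)
    cycle_phase = (t * FM) % 1.0               # position within each cycle, 0..1
    env = (cycle_phase < duty).astype(float)   # 1 during the "on" part of a cycle
    return env, env * np.sin(2 * np.pi * FC * t)

sam_env, sam = sam_stimulus()
ram_env, ram = ram_stimulus()
print("SAM max envelope step:", np.max(np.abs(np.diff(sam_env))))
print("RAM max envelope step:", np.max(np.abs(np.diff(ram_env))))
print("RAM on-fraction:", ram_env.mean())
```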

Previous studies that have investigated the relationship between physiological correlates of cochlear deafferentation and speech perception have assumed a linear relationship. However, data we collected from a sample of 88 Veterans and non-Veterans aged 18–59 years with no more than a mild hearing loss challenge that assumption. Scatterplots in Figure 3 show the relationship between EFR magnitude in response to a RAM stimulus and performance on the Words-in-Noise (WIN; Wilson & Burks, 2005) test. The data were fit with a Locally Weighted Scatterplot Smoothing (LOWESS) line. This line suggests a non-linear relationship between EFR magnitude and performance on WIN List 1: at very low EFR magnitudes, performance improves as EFR magnitude increases, but at higher EFR magnitudes the relationship plateaus and further increases in EFR magnitude are not associated with improved WIN performance. The relationship between EFR magnitude and performance on WIN List 2 was less clear. Across participants, performance on WIN List 2 tended to be poorer than performance on WIN List 1 (see Supplemental Data). This is likely due to an order effect, because all participants were tested on WIN List 1 first and WIN List 2 second. The test developers have previously reported order effects on the WIN, with a 0.5 dB drop in performance for the list tested second compared with the list tested first (Wilson & Burks, 2005). If performance on WIN List 2 was more negatively affected by fatigue, boredom, and/or inattention than performance on WIN List 1, this could obscure the relationship between EFR magnitude and WIN List 2 performance.
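For readers unfamiliar with LOWESS, the sketch below shows the basic mechanics: at each point, a straight line is fit to its nearest neighbors using tricube weights, and the fitted value at that point is kept. It is a simplified single-pass version without the robustness iterations of the full procedure, and the `frac` (neighborhood size) values are assumptions; the smoothing settings used for Figure 3 are not stated in the text. A full implementation is available as `statsmodels.nonparametric.smoothers_lowess.lowess`.

```python
import numpy as np

def lowess(x, y, frac=2/3):
    """Single-pass LOWESS (no robustness iterations).

    For each x[i], fit a straight line to the nearest frac * n points
    by weighted least squares with tricube weights, then evaluate the
    line at x[i].
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    k = min(n, max(3, int(np.ceil(frac * n))))   # neighborhood size
    fitted = np.empty(n)
    for i in range(n):
        dist = np.abs(x - x[i])
        idx = np.argsort(dist)[:k]               # k nearest neighbors of x[i]
        h = dist[idx].max()
        if h == 0:
            h = 1.0                              # guard against all-identical x
        w = (1.0 - (dist[idx] / h) ** 3) ** 3    # tricube weights in [0, 1]
        sw = np.sqrt(w)                          # WLS via row scaling by sqrt(w)
        X = np.column_stack([np.ones(k), x[idx]])
        beta = np.linalg.lstsq(X * sw[:, None], y[idx] * sw, rcond=None)[0]
        fitted[i] = beta[0] + beta[1] * x[i]
    return fitted

# Illustration on synthetic (not study) data with a plateau above x = 20.
rng = np.random.default_rng(0)
efr = np.sort(rng.uniform(0, 35, 88))
win = 6 - 0.2 * np.minimum(efr, 20) + rng.normal(0, 0.5, 88)
smooth = lowess(efr, win, frac=0.5)
```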

Raw data suggest a non-linear relationship between EFR magnitude and performance on the WIN. LOWESS smooth line (blue) suggests that at low EFR magnitudes, WIN List 1 performance improves as EFR magnitude increases, but this relationship plateaus at higher EFR magnitudes. Shaded region indicates the 95% confidence interval. Black circles show data from individual participants. The EFR was measured in response to a 4 kHz carrier rectangular amplitude modulated at 110 Hz with a 25% duty cycle and presented at 70 dB SPL. EFR magnitude, relative to the noise floor (dB signal-to-noise ratio [SNR]), was calculated by summing the power at the modulation frequency and the first four harmonics of the modulation frequency. The noise floor was estimated as described in Vasilkov et al. (2021). WIN performance was measured using WIN List 1 and List 2. Multi-talker babble was set at 66 dB HL, while the target speech was varied from 66 dB HL to 90 dB HL in 4 dB increments. The WIN score is the SNR representing the 50% correct recognition point. Higher WIN scores indicate poorer performance. The relationship between EFR magnitude and performance on WIN List 2 is less clear. Across participants, performance on WIN List 2 tended to be poorer than performance on WIN List 1 (see Supplemental Data). This is likely due to an order effect, because all participants completed WIN List 1 first and the test developers report a drop in performance for the WIN list tested second compared with the list tested first (Wilson & Burks, 2005). If performance on WIN List 2 was more negatively affected by non-auditory factors such as fatigue, boredom, and/or inattention, this could obscure the relationship between EFR magnitude and WIN List 2 performance. All participants provided written informed consent and were paid for their participation. All study procedures were approved by the VA Portland Health Care System Institutional Review Board.
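The two derived quantities in this caption can be sketched as follows. `win_score` applies the Spearman-Kärber equation to one WIN list, assuming the standard list structure of five words at each of seven SNRs from 24 to 0 dB in 4 dB steps. `efr_magnitude_db` sums spectral power at the modulation frequency and its harmonics up to 5 × fm and divides by a noise-floor estimate; that noise floor is a hypothetical stand-in (mean power of neighboring FFT bins), not the Vasilkov et al. (2021) method, and the input is assumed to be an already averaged time-domain response.

```python
import numpy as np

def win_score(words_correct, top_snr=24.0, step_db=4.0,
              words_per_level=5, n_levels=7):
    """Spearman-Kärber estimate of the 50% correct point (dB SNR) for one
    WIN list. Higher scores indicate poorer performance."""
    n_words = n_levels * words_per_level
    if not 0 <= words_correct <= n_words:
        raise ValueError("words_correct out of range")
    return top_snr + step_db / 2 - step_db * words_correct / words_per_level

def efr_magnitude_db(response, fs, fm=110.0, n_components=5, n_side=10):
    """EFR magnitude in dB SNR: summed power at fm, 2*fm, ..., n_components*fm
    divided by a summed noise-floor estimate at the same frequencies.

    HYPOTHETICAL noise floor: mean power of n_side bins on each side of the
    signal bin, skipping the immediately adjacent bins.
    """
    power = np.abs(np.fft.rfft(response)) ** 2
    freqs = np.fft.rfftfreq(len(response), d=1.0 / fs)
    signal_power = noise_power = 0.0
    for k in range(1, n_components + 1):
        b = int(np.argmin(np.abs(freqs - k * fm)))   # FFT bin nearest k*fm
        neighbors = np.r_[power[b - n_side:b - 1], power[b + 2:b + n_side + 1]]
        signal_power += power[b]
        noise_power += neighbors.mean()
    return 10.0 * np.log10(signal_power / noise_power)

print(win_score(20))  # 10.0
```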

To confirm that the observed relationship between EFR magnitude and WIN List 1 performance was not just due to differences in OHC function between participants, a semi-parametric model was used to evaluate the relationship between EFR magnitude, average DPOAE level from 3–8 kHz, and performance on the WIN. In contrast to a linear regression model, a semi-parametric model defines the change in average WIN score as a non-linear function of EFR magnitude or average DPOAE level (see Supplemental Data for additional analysis details). The results of the analysis for WIN List 1 are plotted in Figure 4. In this population with normal to near-normal hearing, average DPOAE level does not appear to affect WIN score. In contrast, for EFR magnitudes below 20 dB signal-to-noise ratio (SNR), WIN List 1 performance improves as EFR magnitude increases. However, this relationship plateaus at EFR magnitudes of approximately 20 dB SNR, with no further improvement in WIN performance with additional increases in EFR magnitude. One explanation for this non-linear relationship is that there is substantial redundancy in ANF innervation of the IHCs. Human temporal bone data suggest that, on average, neonates have approximately 16 auditory nerve synapses per IHC for frequencies from 1.4 to 8 kHz (Wu et al., 2019). While the information transmitted by these fibers will differ depending on their spontaneous firing rate (Bharadwaj et al., 2014), many fibers can likely be lost without a clear impact on speech perception. However, once all redundant fibers have been lost, each additional lost fiber would be expected to have a greater negative impact on speech perception. This could help explain the mixed results of previous studies of the relationship between physiological correlates of deafferentation and speech perception. If the relationship is only clear in individuals with significant cochlear deafferentation, studies that included participants at highest risk for synaptopathy (e.g., older adults and individuals with a history of military or occupational noise exposure) would be more likely to detect a relationship than studies that sampled from populations at low risk of synaptopathy (e.g., young people with and without recreational noise exposure). Supporting this view, Table 2 shows that studies that included populations at high risk for synaptopathy, particularly those that used the EFR or wideband MEMR, tended to find that weaker physiological measurements were associated with poorer speech perception performance.
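A plateau of this kind can be illustrated with a simpler, swapped-in technique than the semi-parametric model used in the analysis: a broken-stick (segmented) regression, y = a + b·min(x, c), in which the outcome changes linearly with x up to a breakpoint c and is flat above it. The sketch below finds c by grid search, fitting ordinary least squares at each candidate breakpoint. The data are synthetic and noise-free, constructed with a plateau at 20 dB SNR to mirror the shape described above; they are not study data, and the candidate grid is an assumption.

```python
import numpy as np

def fit_broken_stick(x, y, candidates):
    """Fit y = a + b * min(x, c) by OLS for each candidate breakpoint c and
    return (c, [a, b]) for the c with the smallest residual sum of squares.
    Above c the fitted curve is flat (the plateau)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    best = None
    for c in candidates:
        X = np.column_stack([np.ones_like(x), np.minimum(x, c)])
        coef, *_ = np.linalg.lstsq(X, y, rcond=None)
        rss = float(np.sum((y - X @ coef) ** 2))
        if best is None or rss < best[0]:
            best = (rss, c, coef)
    return best[1], best[2]

efr = np.arange(0.0, 36.0)                  # synthetic EFR magnitudes (dB SNR)
win = 2.0 - 0.25 * np.minimum(efr, 20.0)    # synthetic WIN scores, plateau at 20 dB
c, coef = fit_broken_stick(efr, win, candidates=np.arange(5.0, 31.0))
print(c)  # 20.0
```

With real, noisy data the residual curve over candidate breakpoints is usually shallow, so an interval for c would be more informative than the point estimate alone.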

The non-linear relationship between EFR magnitude and WIN List 1 performance persists after accounting for OHC function. The right-hand plot shows the effect of average DPOAE level from 3–8 kHz on WIN List 1 performance when EFR magnitude is held constant. The estimated relationship (black line) is relatively flat, suggesting little impact of OHC function (in a population with normal to near-normal hearing) on WIN List 1 performance. The left-hand plot shows that when average DPOAE level is held constant, WIN List 1 performance improves with increasing EFR magnitude up to an EFR magnitude of approximately 20 dB SNR, followed by a plateau. Note that positive values for the effect on WIN score indicate decreased WIN performance, while negative values indicate improved WIN performance. The blue shaded region shows the 95% confidence interval. EFR magnitude and WIN performance were measured as described in the caption for Figure 3. See Supplemental Data for a plot of the relationship between EFR magnitude and performance on WIN List 2.

To further evaluate the potential non-linear relationship between the degree of cochlear deafferentation and speech perception performance, we reanalyzed data from Buran et al. (2022), in which synapse numbers were predicted for individual human participants from their measured ABR wave I amplitudes using a computational modeling approach, and compared these synapse numbers with performance on the WIN. In that dataset, participants were tested with WIN List 1 first, followed by WIN List 2. Performance was poorer for WIN List 2 than for WIN List 1 (see Supplemental Data), again suggesting an order effect. As with the EFR dataset, a semi-parametric model was used to evaluate the relationship between predicted synapse number, average DPOAE level from 3–8 kHz, and performance on the WIN. The results of the analysis for WIN List 1 are plotted in Figure 5 (see Supplemental Data for the results for WIN List 2). Although there is considerably more uncertainty in the estimate than for the EFR analysis (indicated by the broad confidence intervals), the estimated relationship between synapse number and WIN performance is qualitatively similar to the non-linear relationship observed between EFR magnitude and WIN performance, with a plateau for synapse counts above approximately 10 per IHC. This provides additional evidence of a non-linear relationship between the extent of cochlear deafferentation and speech perception performance.

Potential non-linear relationship between predicted synapse number and WIN List 1 performance. In the left-hand plot, the black line shows the estimated relationship between predicted synapses per IHC and performance on WIN List 1 when average DPOAE level is held constant. There appears to be a non-linear relationship, with better performance as synapse numbers increase up to 10 synapses per IHC, followed by a plateau. However, the 95% confidence interval (blue shading) is quite broad, indicating uncertainty in the estimate. The right-hand plot shows the effect of average DPOAE level from 3–8 kHz on WIN List 1 performance when predicted synapse number is held constant. See Supplemental Data for a plot of the relationship between predicted synapse number and performance on WIN List 2.

Overall, although the relationship between physiological correlates of cochlear deafferentation and speech perception measures was not consistent across all studies, studies that used speech perception measures with some of the features suggested by DiNino et al. (2022), used the EFR with a RAM stimulus or the wideband MEMR as the physiological measure, and/or included populations at high risk for synaptopathy (due to age or military noise exposure) tended to find that weaker physiological measurements were associated with poorer speech perception performance. It is possible that this relationship is non-linear and that the negative impacts of cochlear deafferentation on speech perception only become evident once a particular threshold level of deafferentation is reached. This possibility should be explored in future research. If a non-linear relationship is confirmed, patients with degrees of cochlear deafferentation that exceed this threshold would be good candidates for future treatments.

Conclusions

Overall, results from animal and human studies suggest that cochlear deafferentation has negative consequences for auditory perception. While behavioral evidence of synaptopathy-related tinnitus in animal models is inconclusive, a number of human studies suggest that reduced magnitude of physiological correlates of cochlear deafferentation is associated with an increased probability of reporting tinnitus. Data from animal and human studies concerning the relationship between cochlear deafferentation and hyperacusis are limited, but there is some evidence from rodents that deafferentation can result in hyperacusis. Both animal and human studies indicate that cochlear deafferentation is associated with increased central gain in the auditory cortex, which is thought to contribute to the development of tinnitus and hyperacusis. Several animal studies indicate that cochlear deafferentation is associated with diminished temporal processing and signal-in-noise detection. Although the findings of human studies that have evaluated the relationship between physiological correlates of cochlear deafferentation and speech perception are mixed, this may be due to differences in the speech perception or physiological measures used and/or a non-linear relationship between cochlear deafferentation and speech perception performance. Impacts of cochlear deafferentation on speech perception may only become apparent once a certain threshold level of deafferentation is reached. This is more likely to occur in populations at high risk of cochlear synaptopathy, such as older adults and individuals with a history of military or occupational noise exposure. When only studies that included a population at high risk of cochlear synaptopathy are considered, 13 of the 18 such studies in Table 2 show poorer speech perception performance associated with reduced magnitude of physiological indicators of cochlear deafferentation. The five studies that included a high-risk population but did not show an association all used ABR metrics as their physiological correlate of cochlear deafferentation. Previous animal and human studies suggest that the ABR is a less sensitive metric of cochlear deafferentation than the EFR or wideband MEMR and may be more affected by the confounding effects of subclinical OHC damage. Further investigation will be necessary to confirm the association between cochlear deafferentation and hyperacusis and the non-linear relationship between cochlear deafferentation and speech perception performance.

Supplemental Material

sj-docx-1-tia-10.1177_23312165241239541 - Supplemental material for Perceptual Consequences of Cochlear Deafferentation in Humans

Supplemental material, sj-docx-1-tia-10.1177_23312165241239541 for Perceptual Consequences of Cochlear Deafferentation in Humans by Naomi F. Bramhall and Garnett P. McMillan in Trends in Hearing

Footnotes

Acknowledgements

The opinions and assertions presented are private views of the authors and are not to be construed as official or as necessarily reflecting the views of the Department of Veterans Affairs.

Data Availability

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institutes of Health, National Institute on Deafness and Other Communication Disorders – Award #R01DC020423 (to N.F.B.), Department of Veterans Affairs, Veterans Health Administration, Rehabilitation Research and Development Service – Award #C3804-R/I01 RX003804 (to N.F.B.), and by resources and facilities at the VA National Center for Rehabilitative Auditory Research (NCRAR) [Center Award #C2361C/I50 RX002361] at the VA Portland Health Care System in Portland, OR.


References
