Abstract

Several studies have demonstrated that extended high frequencies (EHFs; >8 kHz) in speech are not only audible but also have some utility for speech recognition, including for speech-in-speech recognition when maskers are facing away from the listener. However, the contribution of EHF spectral versus temporal information to speech recognition is unknown. Here, we show that access to EHF temporal information improved speech-in-speech recognition relative to speech bandlimited at 8 kHz but that additional access to EHF spectral detail provided an additional small but significant benefit. Results suggest that both EHF spectral structure and the temporal envelope contribute to the observed EHF benefit. Speech recognition performance was quite sensitive to masker head orientation, with a rotation of only 15° providing a highly significant benefit. An exploratory analysis indicated that pure-tone thresholds at EHFs are better predictors of speech recognition performance than low-frequency pure-tone thresholds.

The frequency range of human hearing extends up to approximately 20 kHz for young, healthy listeners. Speech perception research has generally focused on the frequency range below about 6–8 kHz, likely because key phonetic features of speech occur in this range (e.g., vowel formants), and it is therefore understood to have the greatest influence on speech perception. The prevailing viewpoint has been that extended high frequencies (EHFs; >8 kHz) provide little information useful for speech perception. Thus, whereas the audibility of speech frequencies below 8 kHz and corresponding effects on speech perception have been studied extensively over the past several decades (e.g., Ching et al., 1998; McCreery & Stelmachowicz, 2013), the audibility of higher frequency bands and corresponding effects have been studied far less (Monson et al., 2014a).

The EHF range in speech is audible and has some utility for speech perception (Hunter et al., 2020). For example, the average young, normal-hearing listener can detect the absence of speech energy beyond approximately 13 kHz, although listeners with better 16-kHz pure-tone thresholds can detect losses at even higher frequencies (Monson & Caravello, 2019). It has also been demonstrated that EHF audibility contributes to speech localization (Best et al., 2005), speech quality (Monson et al., 2014b; Moore & Tan, 2003), talker head orientation discrimination (Monson et al., 2019), and speech recognition in the presence of background speech (Monson et al., 2019) and noise (Motlagh Zadeh et al., 2019).

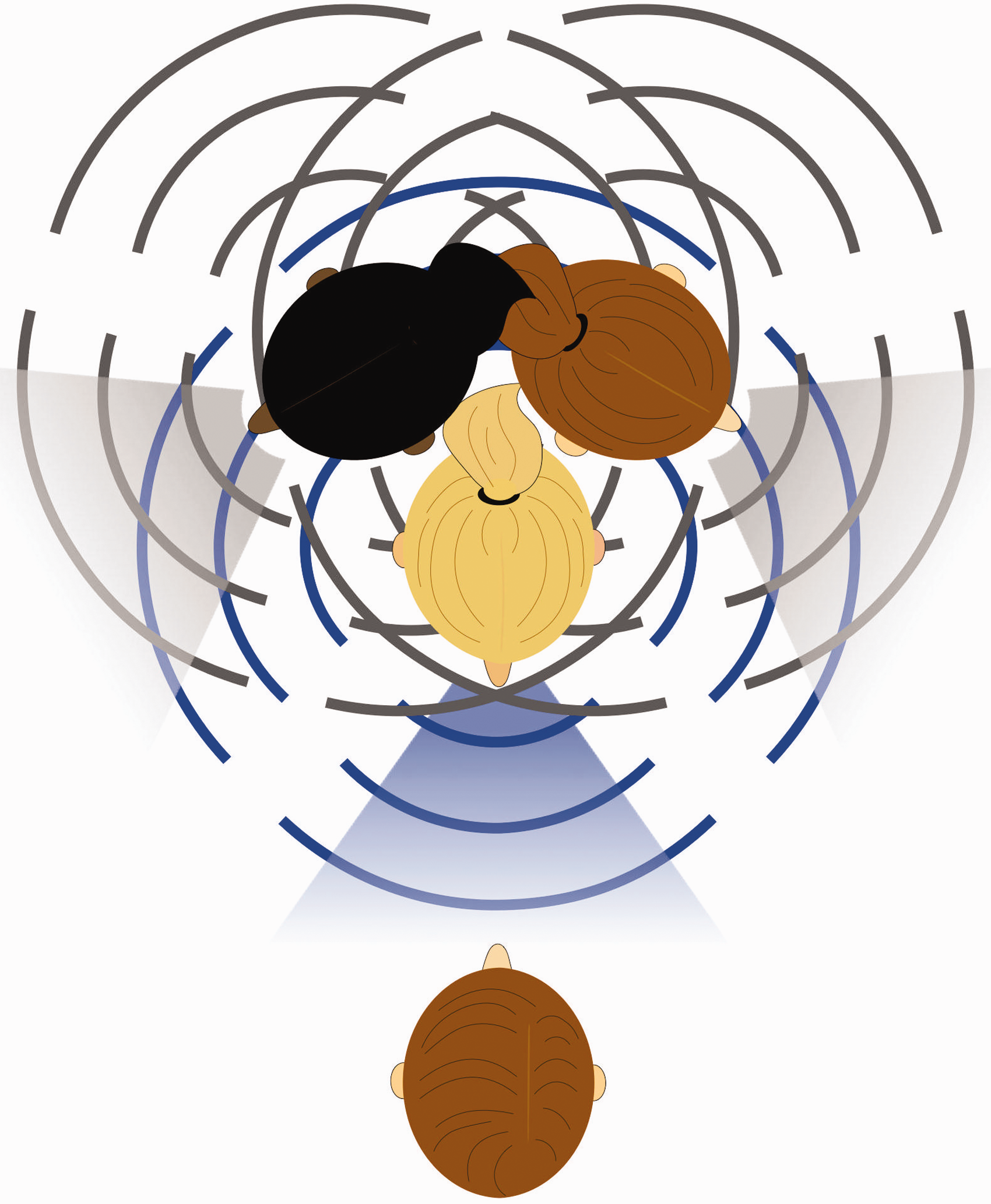

In a previous study, we showed that access to EHFs in speech supported speech-in-speech listening when the target talker was facing the listener while colocated maskers were facing away from the listener (Monson et al., 2019). This listening scenario departs from the traditional design but reflects a more realistic ‘cocktail party’ listening environment where the talker of interest is typically facing the listener and background talkers are typically facing other directions (Figure 1). Our approach followed from the hypothesis that rotated maskers result in less masking at the highest frequencies. This is because directivity patterns of speech radiation are frequency dependent, with low frequencies radiating more omnidirectionally around the talker’s head and high frequencies radiating more directionally (i.e., with less horizontal spread away from the front of the talker; Chu & Warnock, 2002; Halkosaari et al., 2005; Kocon & Monson, 2018; Monson et al., 2012a). Because of the increasing directionality at higher frequencies, rotating a masker’s head to face away from the listener effectively low-pass filters the masker speech signal received at the ear of the listener, providing potential spectral cues to the auditory system for detection and segregation of a target speech signal amidst masker speech signals. Under these conditions, we found that providing access to full-band speech (cutoff frequency of 20 kHz) improved normal-hearing listeners’ speech-in-speech recognition performance relative to speech bandlimited at 8 kHz.

The Target (Blue) and Masker (Gray) Arrangement Simulated in this Study. Due to the directionality of extended high-frequency radiation (shading) compared with low-frequency radiation (bars), this scenario results in substantial masking at low frequencies, but not at extended high frequencies. Target and masker were presented from a single loudspeaker in front of the listener.

The results of that study suggest that EHF energy in speech conveys information regarding the speech signal. However, the type of information provided by EHFs that benefits speech recognition remains unclear. One possibility is that EHF temporal information (e.g., the temporal envelope) serves as a segregation and grouping cue, facilitating segregation of low-frequency phonetic information. This is possible because high-frequency energy in speech is at least partially temporally coherent with low-frequency energy (Crouzet & Ainsworth, 2001; see Figure 2). Temporal coherence facilitates the grouping of sound features into a single stream, improving sound segregation for auditory scene analysis (Shamma et al., 2011), and it has been demonstrated that temporal (envelope) information becomes increasingly important for higher frequency bands in speech recognition in noise (Apoux & Bacon, 2004). Another possibility is that EHF spectral detail per se provides phonetic information. EHF spectral energy does provide information useful for phoneme identification when low-frequency information is absent (Vitela et al., 2015) or severely degraded (Lippmann, 1996). However, it may be that phonetic information provided by EHFs is redundant with phonetic information provided by lower frequencies and may not be useful when phonetic information at low frequencies is accessible. Indeed, the likelihood of this redundancy is supported by the history of speech intelligibility research, which resulted in models predicting negligible contribution from frequencies above 7 kHz for speech recognition when low and/or midrange frequencies are accessible (Monson et al., 2014a).

Cochleograms of the Female Target Talker Phrase, “The Clown Had a Funny Face.” The three filtering conditions are shown: the full-band signal (+EHF; left), the signal with EHF spectral detail removed, but EHF temporal envelope preserved (+EHFTemp; middle), and the signal low-pass filtered at 8 kHz (–EHF; right).

There is evidence that listeners with clinically normal audiograms but poorer pure-tone thresholds at EHFs have diminished speech-in-noise abilities. Badri et al. (2011) showed that listeners who self-reported and exhibited speech-in-noise difficulties had elevated EHF thresholds at 12.5 and 14 kHz compared with a control group. Motlagh Zadeh et al. (2019) also found group-level differences in self-reported speech-in-noise difficulty, with greater likelihood of reporting difficulty for groups with more severe EHF hearing loss (measured at 10, 12.5, 14, and 16 kHz). They also reported a correlation between EHF pure-tone averages (PTAs) and speech-in-noise scores when the noise masker was a broadband speech-shaped noise, although, surprisingly, no such relationship was observed when the noise masker was bandlimited to 8 kHz. Yeend et al. (2019) found that EHF PTAs (measured from 9 to 12.5 kHz) correlated with a composite speech score derived from both self-reported difficulty and objective speech-in-noise assessments.

These studies suggest that EHF hearing loss could potentially be a diagnostic or predictive factor for speech-in-noise difficulty. Others studies, however, have failed to find a relationship between EHF thresholds and speech-in-noise performance. Liberman et al. (2016) found that, although group-level differences in EHF thresholds (measured at 9, 10, 11.2, 12.5, 14, and 16 kHz) were present between individuals at high risk versus low risk for cochlear synaptopathy, EHF PTAs did not predict speech-in-noise performance. However, that study used speech materials that were bandlimited at 8.8 kHz. Similarly, Smith et al. (2019) found no relationship between EHF PTAs (measured at 10, 12.5, and 14 kHz) and speech-in-noise scores, although listeners in that study all had relatively good EHF thresholds. Prendergast et al. (2019) reported that speech-in-noise performance was predicted by statistical models that included 16-kHz thresholds as predictors, along with age and noise exposure. However, replacing the 16-kHz threshold with pure-tone thresholds at standard audiometric frequencies as predictors resulted in improved model predictions. Thus, there are mixed findings on the relationship between EHF pure-tone thresholds and speech-in-noise difficulty.

Another study provided data that might help resolve these mixed findings. Taking into consideration the effects of directivity of speech radiation, Corbin et al. (2019) demonstrated that better 16-kHz thresholds were associated with better speech-in-noise scores when maskers were facing away from the listener while the target talker was facing the listener. However, there was no relationship between 16-kHz thresholds and speech-in-noise scores when maskers and the target talker were all facing the listener. As described earlier, the rotating of the maskers’ heads introduces low-pass filtering effects, increasing the salience of EHF acoustic features for the target speech. This approach may have teased out the true relationship between EHF thresholds and speech-in-noise difficulty. Notably, listeners in that study had clinically normal audiograms but exhibited EHF pure-tone thresholds ranging from –20 to 60 dB HL.

To gain a better understanding of how spectral and temporal information contribute to the observed benefit of access to EHFs in speech, the present study assessed whether access to temporal information alone in the EHF speech band provided a benefit for speech-in-speech listening, and whether access to spectral detail provided any additional benefit. We hypothesized that access to both temporal information and spectral detail at EHFs would provide a benefit beyond that provided by temporal information alone. We assessed the effect of a change in masker head orientation, hypothesizing that maskers that were facing further away from the listener would lead to improved performance. In addition, we examined whether better pure-tone thresholds predicted better performance in our speech-in-speech task for a group of listeners who had normal hearing at both standard audiometric frequencies and EHFs.

Methods

Participants

Forty-one participants (six male), ages 19–25 years (mean = 21.3 years), participated in this experiment. Participants had normal hearing across the frequency range of hearing, as indicated by pure-tone audiometric thresholds better than 25 dB HL in at least one ear for octave frequencies between 0.5 and 8 kHz and EHFs of 9, 10, 11.2, 12.5, 14, and 16 kHz. All experimental procedures were approved by the Institutional Review Board at the University of Illinois at Urbana-Champaign.

Stimuli

The masker stimuli consisted of two-female-talker babble with both talkers facing 45° or both talkers facing 60° relative to the listener. Masker stimuli were generated using recordings made at angles to the right of the talkers, taken from a database of high-fidelity (44.1-kHz sampling rate, 16-bit precision) anechoic multichannel recordings (Monson et al., 2012a). Left-right symmetry in speech radiation from the talker was assumed during the recording process. A semantically unpredictable speech babble signal was created for each angle. Target speech stimuli were the Bamford-Kowal-Bench sentences (Bench et al., 1979) recorded by a single female talker in a sound-treated booth using a class I precision microphone located at 0°, with 44.1-kHz sampling rate and 16-bit precision.

Three filtering schemes were used. For the low-pass filtered condition, all stimuli were low-pass filtered using a 32-pole Butterworth filter with a cutoff frequency of 8 kHz. For the full-band condition, all stimuli were low-pass filtered at 20 kHz. For the third condition, designed to preserve temporal EHF information while removing EHF spectral detail, the amplitude envelope of the EHF band of each target and masker stimulus was extracted by (a) high-pass filtering at 8 kHz using a Parks-McClellan equiripple finite impulse response (FIR) filter, (b) computing the Hilbert transform of the high-pass filtered signal, and (c) low-pass filtering the magnitude of the Hilbert transform at 100 Hz. Each 8-kHz low-pass filtered target and masker stimulus was then summed with a spectrally flat EHF noise band (8–20 kHz) that was amplitude modulated using the envelope of the EHF band (i.e., a single-channel vocoded EHF band) corresponding to that stimulus (Figure 2).

Procedure

Stimuli were presented to listeners using a KRK Rokit 8 G3 loudspeaker at 1 m directly in front of the listener seated in a sound-treated booth. The level of the two-talker masker was set at 70 dB sound pressure level at 1 m, while the level of the target was adaptively varied. Two interleaved adaptive tracks were used, each incorporating a one-down, one-up adaptive rule. For one track, the signal-to-noise ratio (SNR) was decreased if one or more words were correctly repeated; otherwise, the SNR was increased. For the second track, the SNR was decreased if all words or all but one word were correctly repeated; otherwise, the SNR was increased. Both tracks started at an SNR of 4 dB. The SNR was initially adjusted in steps of 4 dB and then by 2 dB after the first reversal. Each of the two tracks comprised 16 sentences. Word-level data from the two tracks were combined and fitted with a logit function with asymptotes at 0 and 100% correct. The speech reception threshold (SRT) was defined as the SNR associated with 50% correct. Data fits were associated with r2 values ranging from .50 to .99, with a median value of .85.

Three filtering conditions were tested: full band (+EHF), full band with only EHF temporal information (+EHFTemp), and low-pass filtered at 8 kHz (–EHF). Two masker head orientation conditions were tested: both maskers facing 45° or both maskers facing 60° relative to the target talker. Following a single training block consisting of 16 sentences, the six conditions (three filtering conditions × two masker head angles) were tested in separate blocks with block order randomized across participants. The starting sentence list number was randomized for each participant and continued in numerical order of the Bamford-Kowal-Bench sentence lists.

Analysis

Statistical analysis consisted of a two-way repeated-measures analysis of variance (ANOVA) to assess the effect of filtering condition and masker head angle. Univariate Pearson’s correlation was used to assess the relationship between pure-tone thresholds and task performance. Statistical analyses were conducted using the ezANOVA and corr functions in R (R Core Team, 2018). Custom scripts written in MATLAB (MathWorks) were used for signal processing and experimental control. All recording materials and data for this study will be made available upon request.

Results

There was a main effect of filtering condition, with mean SRTs of –9.7, –9.2, and –8.3 dB (medians –9.9, –9.4, and –8.6 dB) for the +EHF, +EHFTemp, and –EHF conditions, respectively—two-way repeated-measures ANOVA, F(80, 2) = 15.8, p <.001 (Figure 3). Post hoc pairwise comparisons (Holm-Bonferroni corrected) revealed a significant difference between all EHF conditions (corrected p < .05 for all comparisons; see Figure 3). There was a main effect of masker head orientation, with mean SRTs of –8.4 and –9.7 dB (medians –8.7 and –10.2 dB) for the 45° and 60° conditions, respectively, F(40, 1) = 39.4, p < .001, and no interaction between filtering condition and masker head orientation (p = .2).

SRTs for the Three Filtering Conditions and Two Masker Head Orientations.

We conducted an exploratory analysis to assess whether pure-tone thresholds across the frequency range of hearing predicted performance in our full-band task (Figure 4). The 12.5-kHz, 16-kHz, and EHF PTA (9–16 kHz) exhibited the highest correlation coefficients (Pearson’s r > .3) between full-band (+EHF) task performance (averaged across masker head angles) and left-right-averaged pure-tone thresholds.

Mean SRTs for the +EHF Condition Plotted Against Pure-Tone Thresholds Averaged Across Both Ears. Shading represents 95% confidence intervals. Displayed p values are not corrected for multiple comparisons.

Discussion

We replicated our previous finding that access to EHFs in speech improves normal-hearing listeners’ speech-in-speech recognition performance relative to speech bandlimited at 8 kHz (Monson et al., 2019). The improvements observed in the present study between the +EHF and –EHF conditions were of similar magnitudes to those reported previously. These findings continue to support the use of high-fidelity speech materials when testing and/or simulating speech-in-speech environments as information at EHFs is audible and useful for speech recognition for normal-hearing listeners.

We hypothesized that spectral detail at EHFs provides benefit for listeners beyond that provided by EHF temporal information alone. Our results lend support for this hypothesis as a significant decrease in speech recognition was observed when spectral detail was removed and only temporal (i.e., envelope) information from the EHF band was provided to listeners. The size of this effect was small (0.5 dB on average), whereas EHF temporal information alone provided 0.9 dB of benefit, on average. Thus, our data suggest that EHF temporal information may account for a larger proportion of the EHF benefit, but the full complement of EHF benefit only occurs when additional spectral detail is also available. This finding highlights the exquisite sensitivity of the human auditory system to EHFs in speech, despite poorer frequency discrimination ability (Moore & Ernst, 2012), poorer pure-tone audibility (International Organization for Standardization, 2003), and larger widths of auditory filters beyond 8 kHz (Glasberg & Moore, 1990).

Although it has not been shown definitively here, our findings lend credence to the idea that EHFs provide phonetic information useful for speech-in-speech recognition rather than purely serving as a target speech segregation cue. This is possible because individual phonemes, such as voiceless fricatives, exhibit distinctive spectral features at EHFs (e.g., energy peak loci, spectral slopes; Monson et al., 2012b) sufficient to facilitate phoneme recognition, especially for consonants (Lippmann, 1996; Vitela et al., 2015). This finding is of importance for potential amplification of EHFs in hearing devices. For example, if EHFs were to be represented in cochlear implants, our data suggest that devoting more than a single electrode channel to EHFs may be useful to provide the intended EHF benefit.

The observed EHF benefit is also in line with previous reports that EHF hearing loss is correlated with both self-reported and objectively measured speech-in-noise difficulty (Badri et al., 2011; Barbee et al., 2018; Corbin et al., 2019; Motlagh Zadeh et al., 2019; Yeend et al., 2019). The inclusion of routine EHF examinations may help to identify listeners at risk of difficulties listening in noise with otherwise normal clinical audiograms. There are multiple reasons why EHF loss might lead to a speech-in-noise difficulty. As shown here, EHFs contribute to speech-in-speech recognition when maskers are facing different directions, which is typical for real-world cocktail party environments. Similar to how visual cues of a social partner’s head orientation and gaze can direct attention to that partner or other objects of interest (Frischen et al., 2007; Gamer & Hecht, 2007), highly directional EHFs could serve to herald the potential importance of an interlocutor’s speech signal, thereby drawing the listener’s attention to that signal. That is, high-amplitude EHF energy will only be received from a talker that is directly facing a listener, which likely indicates that this listener is the intended recipient of the talker’s utterance. In addition to this potential real-world cue, we have demonstrated here that spectral detail at EHFs provides information useful for speech-in-speech recognition. EHF hearing loss might lead to the degradation of these multiple sources of information.

Our exploratory analysis revealed that relationships between full-band SRTs and pure-tone thresholds across the frequency range of hearing only emerged at EHFs, in spite of our strict inclusion criterion for normal hearing (< 25 dB HL in at least one ear) at all frequencies, including EHFs. This finding should inform future hypotheses regarding the relationship between EHF thresholds and speech-in-noise performance. The rotating of the maskers’ heads in the present study introduces low-pass filtering effects, increasing the salience of EHF acoustic features for the target speech. This approach may elucidate the true relationship between EHF thresholds and speech-in-noise difficulty. We previously found that 16-kHz thresholds for normal-hearing listeners correlated with ability to detect EHF energy in speech (Monson & Caravello, 2019), and we found preliminary evidence here for a relationship with ability to use EHFs for speech-in-speech recognition.

We observed approximately 2 dB of improvement in SRT when the maskers were rotated from 45° to 60° for full-band speech. That this consistent and highly significant improvement occurs with a change of only 15° in head orientation is striking and highlights the sensitivity of the auditory system to talker/masker head orientation, particularly as it pertains to speech recognition (Corbin et al., 2019; Strelcyk et al., 2014). We have shown previously that the minimum audible change in a talker’s head orientation, relative to a 0° head orientation, is approximately 41° for the average normal-hearing listener (Monson et al., 2019). Although we have not tested the minimum audible change relative to a 45° head orientation, it is questionable whether this subtle 15° change in head orientation is detectable to the average listener. Nonetheless, it is clear that head orientation release from masking has a robust effect on speech-in-speech recognition for colocated maskers, although this effect may be reduced when maskers and target are spatially separated (Corbin et al., 2019).

In summary, we found that, despite the well-known decrease in sensitivity and acuity at EHFs for the human auditory system, spectral detail at EHFs conveys information useful for speech-in-speech recognition. EHF spectral detail provides additional gains beyond that provided by EHF temporal (i.e., envelope) information. Speech-in-speech performance is highly sensitive to masker head orientation, with a change of only 15° having a robust effect. We found evidence for a relationship between EHF pure-tone sensitivity and speech-in-noise scores when listeners have no substantial hearing loss at EHFs. Implications include that the preservation of spectral detail at EHFs may be beneficial in ongoing efforts to extend the bandwidth of hearing aids and other devices (Arbogast et al., 2019; Seeto & Searchfield, 2018; Van Eeckhoutte et al., 2020) or to restore audibility using frequency lowering or other amplification techniques (Alexander & Rallapalli, 2017; McCreery et al., 2014). Furthermore, the continued use of speech materials that are bandlimited by recording sampling rate and/or transducer frequency response for speech-in-noise testing in the clinic and the laboratory precludes the beneficial effects of EHF hearing. Finally, real-world speech signals include effects of talker head orientation, and incorporating these effects might improve the precision and predictive power of speech recognition measures.

Footnotes

Acknowledgments

The authors thank Andrew Oxenham for helpful discussions regarding the approach, Emily Buss for assistance with experimental software implementation, Sa Shen for biostatical advice, and Chris Plack and one anonymous reviewer for helpful comments on the article. Melanie Flores, Raquel Rojas, Ana Sabic, and Paige Valente assisted with data collection.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.