Abstract

Background:

Low back pain (LBP) is the leading cause of disability worldwide and is highly prevalent in Arabic-speaking countries. Many patients seek health information online, but the quality and reliability of Arabic resources remain unclear. This study evaluated the quality, reliability, and readability of Arabic websites on LBP.

Design and methods:

A cross-sectional study was conducted on July 15, 2025, using the Arabic keyword “low back pain” in Google, Yahoo, and Bing. The first 100 results per engine were screened in incognito mode. Eligible websites were Arabic, publicly accessible, and patient-oriented. After exclusions, 95 websites were included. Websites were classified by affiliation and assessed using the DISCERN instrument (quality), the Journal of the American Medical Association (JAMA) benchmarks (reliability), and automated readability indices (Flesch Reading Ease, Flesch–Kincaid Grade Level, and SMOG).

Results:

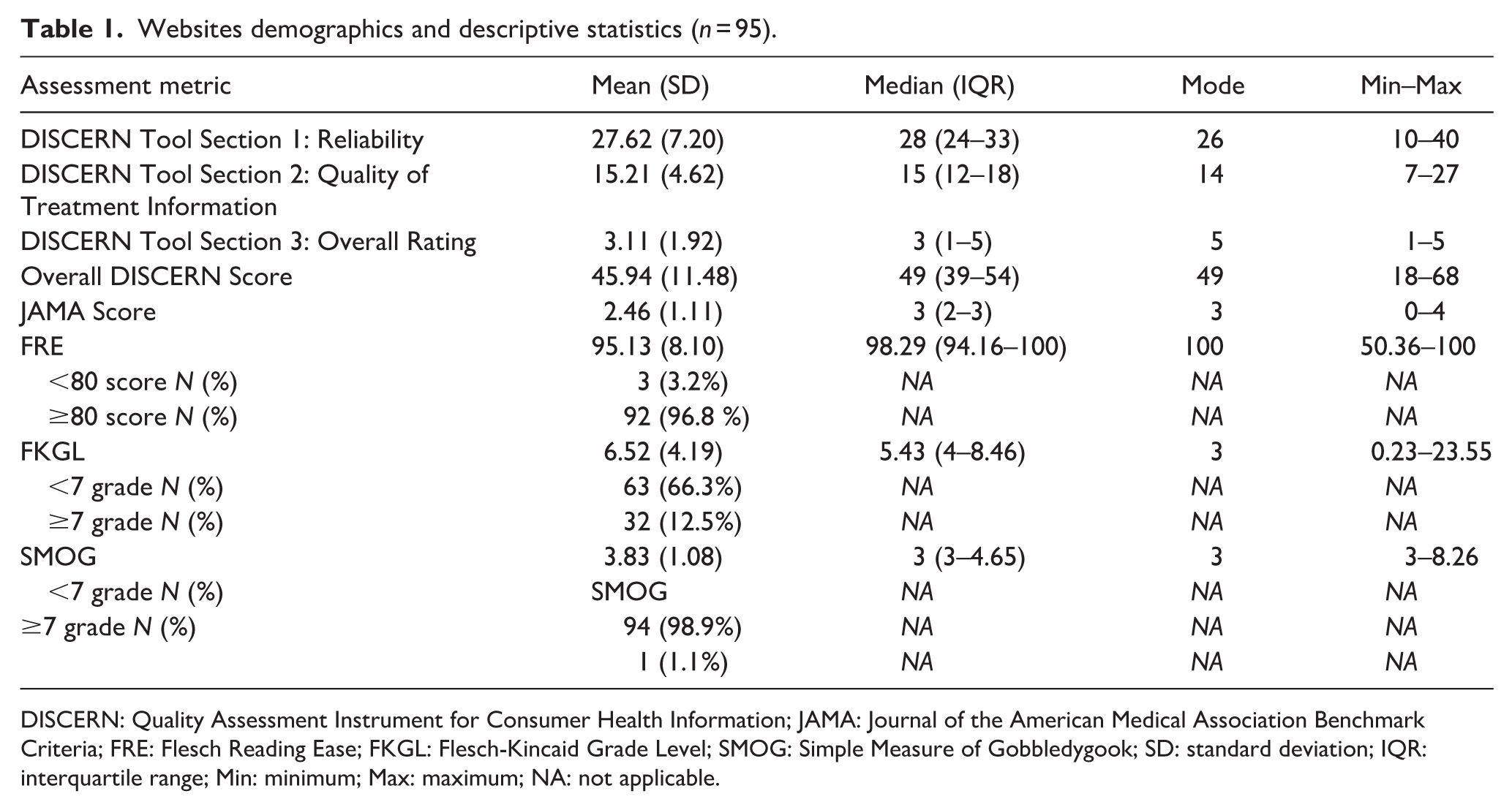

Of 300 screened websites, 95 met inclusion criteria. Health portals and educational sites comprised 46.3%. Overall quality was moderate (mean DISCERN 45.9 ± 11.5), with 82.1% rated as moderate and only 4.2% as good. Reliability was low (mean JAMA 2.46 ± 1.1); only 20% met all four benchmarks. Authorship and currency were present in 36.8% and 26.3% of sites, respectively. Readability was high, with 96.8% achieving FRE ≥ 80. Top-ranked websites showed higher quality and reliability (p < 0.001), though readability differences were minimal. DISCERN and JAMA correlated moderately (rho = 0.472, p < 0.001).

Conclusions:

Arabic websites on LBP are generally easy to read but often lack transparency, reliability, and evidence-based content. Strengthening online Arabic health resources through standardized quality frameworks is crucial to reduce misinformation and support informed decision-making.

Introduction

Low back pain (LBP) is defined as pain below the 12th rib extending to the gluteal folds, with or without leg radiation, lasting at least one day. 1 LBP is the leading cause of disability worldwide. 2 It affected an estimated 629 million people globally in 2021, with predictions indicating a rise to 890 million by 2050 due to population growth and aging. 3 This burden is pronounced in Arabic-speaking regions, where studies report high prevalence rates of LBP, such as 46.2% in Tabuk, Saudi Arabia, and 64.6% in Al-Ain, the United Arab Emirates, highlighting the substantial impact on individuals’ health and well-being.1,4

Given the significant burden of LBP, effective patient education is essential to enhance self-management and empower patients to participate actively in their care. It can also improve adherence to treatment plans and result in improved long-term outcomes. 5 Recent evidence demonstrates that combining structured health education with exercise therapy produces superior functional and psychosocial outcomes in patients with non-specific low back pain compared to usual medical care alone.6,7 This multimodal approach facilitates a significant reduction in disability and kinesiophobia by addressing fear-avoidance behaviors and reducing pain catastrophizing, while simultaneously improving pain-related cognitions and perceived functional capability. 8 These findings align with broader evidence from multidisciplinary rehabilitation programs across chronic musculoskeletal conditions, where exercise combined with cognitive-behavioral and self-management education consistently improves work participation, functional status, and long-term quality of life. 9 Despite the availability of numerous educational materials on LBP, misconceptions about its causes and prognosis remain widespread among patients. This highlights the need for clear, evidence-based information that provides accurate knowledge about the condition and treatment options, enabling patients to practice effective self-care and ultimately improve their quality of life. 5

To find health information, many patients turn to the internet, a widely used global source of health knowledge. Patients increasingly use web searches to learn about medical conditions, drawn by the abundance of online resources and databases and their easy accessibility. 10 This trend is evident in Arabic-speaking countries, where studies indicate that a substantial proportion of individuals seek health information online because of its convenience. For instance, a 2021 Saudi Arabian study reported that 92.6% of 1363 participants used the internet for medical information, while a 2017 Egyptian study found that 55.4% of 490 internet users regarded it as their primary source of health information.11,12 Yet this accessibility is a double-edged sword. While offering quick insights, it can expose users to unreliable content, leading to misguided self-management, delayed professional care, and unnecessary anxiety. 10 Moreover, poor readability is a key driver of misconceptions; even trustworthy websites with accurate information can cause misunderstandings if the writing is overly advanced or complex. 10

Consequently, a rigorous evaluation of the quality, reliability, and readability of online resources is crucial for empowering informed decision-making and effective self-care. In response, validated tools have been developed to standardize the evaluation of health information. These include the DISCERN instrument, a validated questionnaire for evaluating the quality of consumer health information, and the JAMA benchmarks, which are commonly used to assess the reliability of websites using criteria such as authorship and currency. Readability metrics, such as the Flesch-Kincaid Grade Level (FKGL) and Flesch Reading Ease (FRE), assess text complexity and help ensure that health information is accessible, reducing the risk of misunderstandings.13,14 Numerous studies have assessed the reliability of online LBP content, but primarily in languages other than Arabic. Despite the high prevalence of LBP in Arabic-speaking regions, evaluations of Arabic-language online materials are lacking.

Health literacy plays a key role in how individuals access, understand, and use health information to make informed decisions. Leading health authorities, including the National Institutes of Health (NIH), the U.S. Department of Health and Human Services (HHS), and the American Medical Association (AMA), recommend that patient education materials be written at or below a sixth-grade reading level to ensure broad accessibility. Materials written above this level may impair comprehension and patient engagement. Therefore, evaluating the readability of online health information is essential to determine its suitability for patient education and alignment with established health literacy standards. 15 Beyond readability, patient knowledge of disease causes, pathophysiology, treatment options, and prevention is closely linked to clinical outcomes. Well-informed patients are more likely to participate in care, adhere to treatment recommendations, and engage in preventive behaviors, ultimately improving treatment effectiveness. This highlights the importance of evaluating not only the readability but also the educational value of online health information. 16

To address this gap, the current study conducts a cross-sectional infodemiological analysis of Arabic-language online educational materials for low back pain, employing the DISCERN instrument for quality assessment, JAMA benchmarks for reliability evaluation, and readability metrics to elucidate the strengths and limitations of these resources for Arabic-speaking populations.

Methods

Search strategy

In this cross-sectional infodemiological study, we evaluated Arabic-language online educational materials related to LBP by simulating typical patient search behavior. The term “Low Back Pain” (translated into Arabic) was selected as the search keyword because of its relevance and high frequency of use, as corroborated by Google Trends data. Its appropriateness was further supported by input from a convenience sample of Arabic-speaking individuals without medical backgrounds and from expert physicians experienced in patient education. The search was conducted across three widely used search engines: Google (http://www.google.com), Yahoo (http://www.yahoo.com), and Bing (http://www.bing.com). This selection was guided by data from the Pew Research Center’s Internet & American Life Project, 17 which indicated that 79% of individuals searching for health-related information online predominantly use general search engines. In contrast, medical-specific search engines are generally less effective at providing accessible and user-friendly health information for the general public. 18 To minimize algorithmic and personalization bias, all searches were conducted in incognito (private browsing) mode without logging into any user accounts. This approach limited the influence of prior browsing history, stored cookies, and account-based personalization on search outcomes. Because incognito mode does not retain cookies or session data after exit, it helps standardize the search environment across engines and improves the reproducibility of the search results, although it does not remove all sources of personalization, such as location-based ranking.

Selection of websites

Searches were conducted on July 15, 2025, and completed within a 24-h window to ensure consistency in search results over time. The first 100 search results from each search engine were systematically screened to approximate typical patient information-seeking behavior; this sample size follows prior infodemiological research evaluating web-based health content and ensures methodological comparability with previous studies. 19 Websites were included if they were written in Arabic, publicly accessible, and primarily intended to educate patients or the general public about LBP. Websites not meeting these criteria were excluded, and additional exclusion criteria were applied. The eligible web pages were then categorized into six typologies to enable a structured comparison of content sources. In addition, the content of each website was assessed for the inclusion of key elements related to LBP: definition, signs and symptoms, causes, risk factors, complications, prognosis, treatment options, and prevention strategies.

Data extraction

Two reviewers abstracted and organized the data using a Google Form. The extracted variables included the website title, content coverage (e.g. causes, symptoms, and prevention), and quality and readability assessment scores. The unique web address (URL) of every website identified in the search was also recorded.

Readability assessment

The ease of reading and comprehending written material is referred to as readability, and it plays a crucial role in how well patients understand health-related information.20,21 All websites were evaluated for readability using an online calculator tool. 22 Although initially developed for English-language texts, the tool is adaptable and has been applied to other languages to provide general estimates of readability. 23 The readability assessment follows recommendations from the American Medical Association and the U.S. Department of Health and Human Services, which advise that patient education materials be written at or below a fifth- to sixth-grade reading level to ensure broad public understanding.21,24

The online tool computed several established metrics: the Flesch–Kincaid Grade Level (FKGL), the Simple Measure of Gobbledygook (SMOG), and the Flesch Reading Ease (FRE). Cut-off values for acceptable readability were defined based on previously published literature: an average FRE score of ≥80 was considered acceptable, as were FKGL and SMOG grade levels of ≤6, reflecting the recommended sixth-grade reading level for patient education materials. 25
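For reference, the three retained indices are usually computed from word, sentence, syllable, and polysyllable (three or more syllables) counts using the standard published formulas sketched below. This is a minimal illustration, not the online calculator's code: the tool's exact implementation, and in particular how it segments Arabic text into syllables, is not reported, so the counts are treated as assumed inputs and all function names are hypothetical. The threshold helper applies the cut-offs stated above (FRE ≥ 80; FKGL and SMOG ≤ 6).

```python
import math


def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch Reading Ease; higher scores mean easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch-Kincaid Grade Level (approximate US school grade)."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def smog_grade(polysyllables: int, sentences: int) -> float:
    """Standard SMOG grade; polysyllables are words with 3+ syllables.

    The polysyllable count is scaled to a 30-sentence sample.
    """
    return 1.0430 * math.sqrt(polysyllables * (30 / sentences)) + 3.1291


def meets_readability_targets(fre: float, fkgl: float, smog: float) -> bool:
    """Hypothetical helper applying the study's stated cut-offs."""
    return fre >= 80 and fkgl <= 6 and smog <= 6


# Hypothetical counts for a single web page (not study data).
fre = flesch_reading_ease(words=100, sentences=10, syllables=130)
fkgl = flesch_kincaid_grade(words=100, sentences=10, syllables=130)
smog = smog_grade(polysyllables=2, sentences=10)
print(fre, fkgl, smog)
print(meets_readability_targets(fre, fkgl, smog))  # True
```

Because FRE and FKGL combine the same two ratios (words per sentence and syllables per word) with opposite signs, they normally move in opposite directions; any error in syllable counting for Arabic script propagates into both.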

Although the Gunning Fog Index (GFI), Coleman–Liau Index (CLI), and Automated Readability Index (ARI) were available, they were excluded from the analysis because these measures rely on letter counts, which are not suitable for Arabic. Unlike English, Arabic words are formed by connecting characters in a continuous script, rather than being composed of clearly separated letters. This structural difference makes letter-based readability formulas inaccurate for Arabic text.21,24

According to established guidelines, acceptable readability thresholds were set at an FRE of ≥80 and FKGL and SMOG scores of <7. The FKGL index ranges from 0 to 18, with higher scores reflecting greater reading difficulty. The SMOG index estimates the educational level required to understand a given text; for example, scores of 7–8 indicate suitability for readers at the seventh- to eighth-grade level. FRE scores range from 0 to 100, with higher values denoting more accessible content: scores of 90–100 are considered “very easy,” 80–89 “easy,” 70–79 “fairly easy,” 60–69 “standard” (ideal for general audiences), 50–59 “fairly difficult,” and below 50 “difficult” (typically suitable for college-level or graduate readers). All assessments were conducted using the automated readability formulas embedded in the tool, which have been previously validated in peer-reviewed literature.26–28
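The FRE bands listed above amount to a simple lookup table. A minimal sketch follows (the function name is hypothetical). It assumes lower-bound bands, so a non-integer score that falls between the listed integer ranges, such as 89.5, is placed in the lower band, and it treats scores above 100 as "very easy"; both are assumptions of this sketch rather than rules stated in the text.

```python
def fre_category(score: float) -> str:
    """Map a Flesch Reading Ease score to the descriptive bands in the text.

    Bands are checked from the highest lower bound downward; anything
    below 50 is "difficult".
    """
    bands = [
        (90, "very easy"),
        (80, "easy"),
        (70, "fairly easy"),
        (60, "standard"),
        (50, "fairly difficult"),
    ]
    for lower_bound, label in bands:
        if score >= lower_bound:
            return label
    return "difficult"


# 95.12 is the mean FRE reported in the Results; the others are illustrative.
for s in (95.12, 86.7, 65.0, 42.0):
    print(s, fre_category(s))
```

With this mapping, the reported mean FRE of 95.12 falls in the "very easy" band.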

Quality assessment

The quality of the included websites was evaluated using two validated tools: the DISCERN instrument 29 and the Journal of the American Medical Association (JAMA) benchmarks. 30 The DISCERN tool is a 16-item questionnaire designed to evaluate the quality of consumer health information. It is divided into three sections: questions 1–8 assess the reliability of the content as a trustworthy source of treatment information; questions 9–15 evaluate the presentation of treatment choices; and question 16 provides an overall quality rating. Each question is scored from 1 (low quality) to 5 (high quality), giving a total score of 16 to 80. Scores of 65 or higher indicate high quality, 33–64 moderate quality, and 16–32 low quality. The JAMA benchmarks measure reliability against four criteria: (1) authorship: disclosure of authors, affiliations, and credentials; (2) attribution: presence of references and sources; (3) disclosure: transparency about ownership, funding, and conflicts of interest; and (4) currency: indication of the publication or update date. Each criterion met earns 1 point, giving a total score of 0 to 4. Websites scoring 3 or higher are considered highly reliable, whereas those scoring 2 or lower raise concerns about trustworthiness. Two reviewers (A.A. and M.A.) independently evaluated the included websites using the DISCERN and JAMA tools. To ensure consistency, the two reviewers first assessed five websites jointly and resolved any discrepancies through consensus discussion.
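The scoring rules above can be written out for a single rating of one website. In this minimal sketch, all function names are hypothetical. It does not model how the two reviewers' ratings were reconciled, which the text describes only as consensus discussion, and the example website is invented.

```python
from typing import Sequence


def discern_total(item_scores: Sequence[int]) -> int:
    """Sum the 16 DISCERN items, each scored 1 (low) to 5 (high)."""
    if len(item_scores) != 16:
        raise ValueError("DISCERN has exactly 16 items")
    if any(not 1 <= s <= 5 for s in item_scores):
        raise ValueError("each DISCERN item is scored 1-5")
    return sum(item_scores)  # possible range: 16-80


def discern_category(total: int) -> str:
    """Cut-offs from the text: >=65 high, 33-64 moderate, 16-32 low."""
    if total >= 65:
        return "high"
    if total >= 33:
        return "moderate"
    return "low"


def jama_score(authorship: bool, attribution: bool,
               disclosure: bool, currency: bool) -> int:
    """One point per JAMA benchmark met (0-4); True/False sum as 1/0."""
    return sum((authorship, attribution, disclosure, currency))


def jama_highly_reliable(score: int) -> bool:
    """Scores of 3 or higher are treated as highly reliable."""
    return score >= 3


# Hypothetical website: every DISCERN item rated 3; no update date shown.
total = discern_total([3] * 16)
jama = jama_score(authorship=True, attribution=True,
                  disclosure=True, currency=False)
print(total, discern_category(total), jama, jama_highly_reliable(jama))
# 48 moderate 3 True
```

This example site is "moderate" on DISCERN yet "highly reliable" on JAMA. The two instruments measure different things, which is consistent with the only moderate correlation between them reported in the Results.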

Ethical considerations and data accessibility

This study did not involve human or animal subjects, as only publicly accessible websites were analyzed. Consequently, ethical approval from our institutional review board was not necessary.

Statistical analysis

All statistical analyses were conducted using SPSS version 27. Statistical significance was set at a two-tailed α = 0.05. Continuous variables, such as the DISCERN score, JAMA score, and readability indices, were summarized as mean and standard deviation (SD), median with interquartile range (IQR), mode, and range. One-way analysis of variance (ANOVA) was applied to normally distributed continuous data, and the Kruskal–Wallis test to non-normally distributed variables. Spearman’s rho correlation analysis was conducted to assess the relationship between DISCERN and JAMA scores, as well as their associations with readability metrics (FRE, FKGL, and SMOG). No data were missing, as all included websites contained complete measurable information for the assessed variables. Sensitivity analyses were not performed owing to the descriptive and exploratory nature of the study design. The reporting of this study conforms to the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) statement. 31 The completed checklist is provided as Supplemental File 1.
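The analyses were run in SPSS. The sketch below illustrates what Spearman's rho computes, which matters for an ordinal score such as JAMA (0–4) with many tied values. Each variable is ranked, tied values receive the average of the ranks they span, and the Pearson correlation of the two rank vectors is taken. This is a minimal pure-Python illustration with hypothetical data. It omits p-values and is not the SPSS procedure.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with this one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho as the Pearson correlation of average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length samples of at least 2 values")
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical JAMA and DISCERN scores for six websites (not study data).
jama = [0, 1, 2, 2, 3, 4]
discern = [30, 35, 40, 50, 45, 60]
print(round(spearman_rho(jama, discern), 3))  # 0.899
```

Ranking makes the coefficient depend only on the ordering of scores, not their spacing, which is why it suits bounded ordinal scales like JAMA and the non-normal distributions described above.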

Results

Website search and demographics

A comprehensive web search initially identified 31,879,100 records across the three search engines. After retrieval, 300 websites were screened, and 103 duplicates were removed, leaving 197 unique websites for evaluation. Following application of the exclusion criteria, 102 websites were excluded, resulting in 95 eligible websites that met the full inclusion criteria (Supplemental Figure 1). These websites were subsequently evaluated for quality and readability using validated assessment tools. Table 1 summarizes the quality and readability scores of the included websites.
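The screening flow is simple arithmetic: 3 engines × 100 results = 300 screened, minus 103 duplicates = 197 unique, minus 102 exclusions = 95 included. The text does not describe how duplicates across engines were identified. The sketch below assumes one plausible approach: each URL is normalized by lower-casing the host, dropping a leading "www.", ignoring scheme, query string, and fragment, and trimming a trailing slash, and the first occurrence is kept. The helper names and example URLs are hypothetical.

```python
from urllib.parse import urlsplit


def normalize_url(url: str) -> str:
    """Hypothetical duplicate key: host without 'www.' plus trimmed path.

    Scheme, query string, and fragment are ignored (an assumption; the
    study does not report its de-duplication rule).
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[len("www."):]
    path = parts.path.rstrip("/") or "/"
    return host + path


def deduplicate(urls):
    """Keep the first occurrence of each normalized URL, preserving order."""
    seen = set()
    unique = []
    for url in urls:
        key = normalize_url(url)
        if key not in seen:
            seen.add(key)
            unique.append(url)
    return unique


# Screening flow reported in the text: 3 engines x first 100 results each.
screened = 3 * 100
unique_count = screened - 103   # duplicates removed
included = unique_count - 102   # excluded after applying the criteria
print(screened, unique_count, included)  # 300 197 95

print(deduplicate([
    "https://www.example.com/lbp/",
    "http://example.com/lbp",      # same page, found by another engine
    "https://example.com/other",
]))
# ['https://www.example.com/lbp/', 'https://example.com/other']
```

Ignoring query strings is a deliberate simplification. Some sites serve distinct articles via query parameters, so a stricter key may be preferable in practice.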

Website demographics and descriptive statistics (n = 95).

DISCERN: Quality Assessment Instrument for Consumer Health Information; JAMA: Journal of the American Medical Association Benchmark Criteria; FRE: Flesch Reading Ease; FKGL: Flesch-Kincaid Grade Level; SMOG: Simple Measure of Gobbledygook; SD: standard deviation; IQR: interquartile range; Min: minimum; Max: maximum; NA: not applicable.

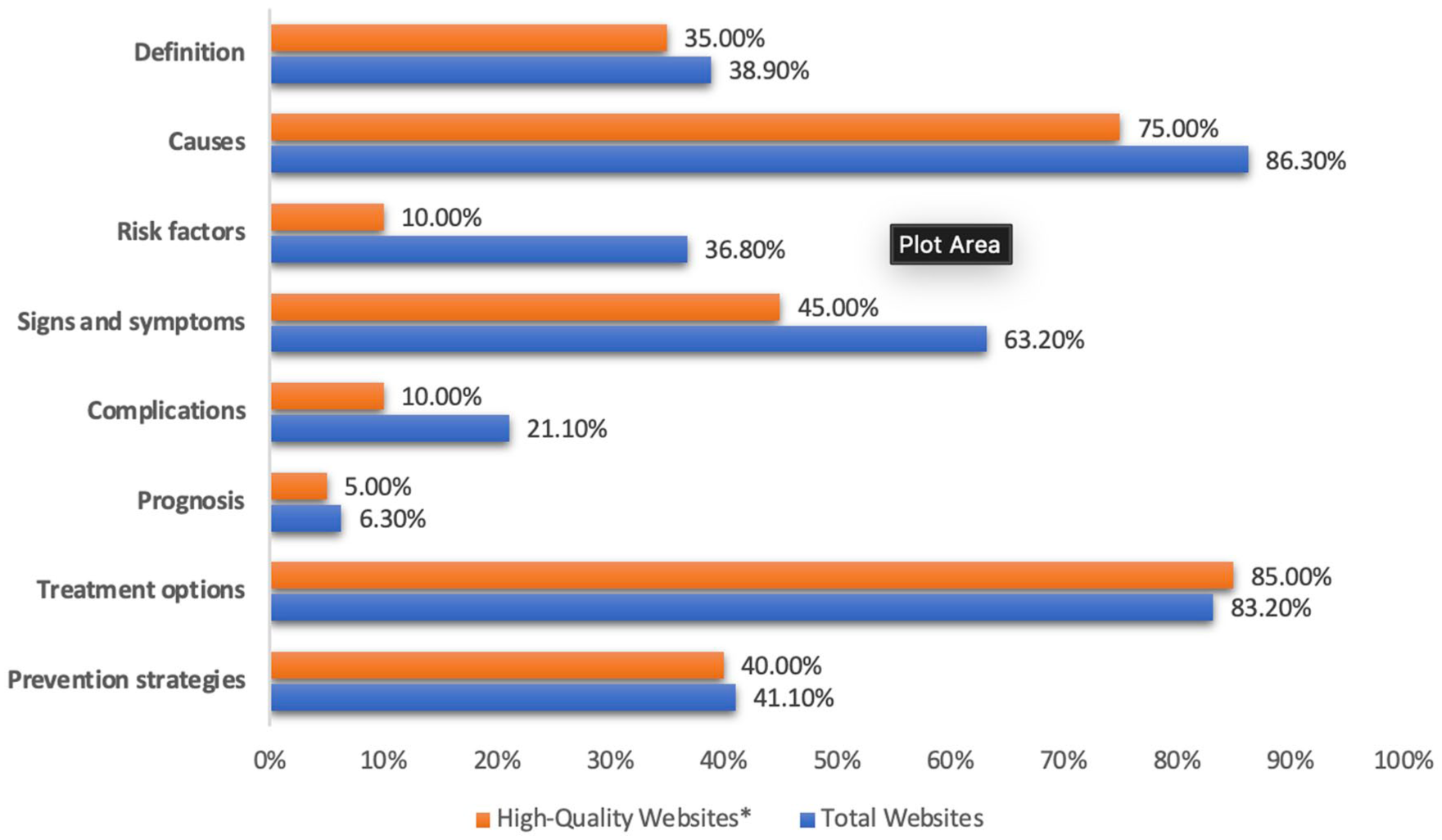

Regarding website typologies, health portals and general education sources formed the largest group, accounting for 44 websites (46.3%), highlighting their predominant role in disseminating online health information (Supplemental Figure 2). These websites were further examined for content coverage across key LBP subtopics. Figure 1 illustrates the subtopic analysis of the 95 included websites, among which 20 met the high-quality criteria (DISCERN score > 64 and JAMA score > 3).

Low back pain subtopic analysis.

Quality assessment

Regarding the overall JAMA benchmarks, 4 (4.2%) websites met none of the criteria, 15 (15.8%) met one, 28 (29.5%) met two, 29 (30.5%) met three, and only 19 (20.0%) met all four. According to the DISCERN classification, 13 (13.7%) websites were rated as poor quality (scores 16–32), 78 (82.1%) as moderate quality (33–64), and only 4 (4.2%) as good quality (>64) (Table 2). The distribution of individual DISCERN item scores is illustrated in the heatmap (Figure 2). Health portals and general education websites achieved higher JAMA compliance than medical institutions and hospitals: 14 of 44 (31.8%) met all four benchmarks, compared with only 1 (3.2%) medical institution or hospital website. Across all affiliations, most websites were rated as moderate in quality, and only a few achieved a “good” DISCERN rating (>64) (Table 3).
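The percentages in this paragraph follow directly from the category counts. The same JAMA frequency table also implies a mean score of (0·4 + 1·15 + 2·28 + 3·29 + 4·19)/95 = 234/95 ≈ 2.46, consistent with the reported mean JAMA score. Below is a small arithmetic check that uses only the counts given here; the helper names are hypothetical.

```python
# Counts reported in the text (n = 95).
jama_counts = {0: 4, 1: 15, 2: 28, 3: 29, 4: 19}          # benchmarks met -> websites
discern_counts = {"poor": 13, "moderate": 78, "good": 4}  # category -> websites


def percentages(counts: dict) -> dict:
    """Percentage of the total for each category, rounded to one decimal."""
    total = sum(counts.values())
    return {k: round(100 * v / total, 1) for k, v in counts.items()}


def mean_from_distribution(counts: dict) -> float:
    """Mean score implied by a frequency table of integer scores."""
    total = sum(counts.values())
    return sum(score * n for score, n in counts.items()) / total


print(sum(jama_counts.values()), sum(discern_counts.values()))  # 95 95
print(percentages(jama_counts))     # {0: 4.2, 1: 15.8, 2: 29.5, 3: 30.5, 4: 20.0}
print(percentages(discern_counts))  # {'poor': 13.7, 'moderate': 82.1, 'good': 4.2}
print(round(mean_from_distribution(jama_counts), 2))  # 2.46
```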

Quality assessment of the included websites based on JAMA and DISCERN (n = 95).

Heatmap for DISCERN Questionnaire.

Quality of the included websites based on their affiliation.

FRE: Flesch Reading Ease; FKGL: Flesch-Kincaid Grade Level; SMOG: Simple Measure of Gobbledygook; NA: not applicable.

Readability of LBP related websites

As shown in Table 4, the mean SMOG score was 3.82 ± 1.07, with only 1 (1.1%) website at or above the seventh-grade level, and the mean FRE score was 95.12 ± 8.09, with 92 (96.8%) websites achieving an FRE ≥ 80, indicating easy-to-read content. Overall, most Arabic-language LBP websites were within recommended readability levels for public health education. Among websites in the “difficult” FRE category, most were health portals or general education sites (66.7%), followed by medical institutions and hospitals (33.3%). Across search engines, JAMA scores differed significantly (p = 0.004), with Bing scoring highest (2.89 ± 1.05), followed by Yahoo (2.54 ± 0.66) and Google (1.92 ± 1.09).

Characteristics of all websites by search engine, with quality comparison.

DISCERN: Quality Assessment Instrument for Consumer Health Information; JAMA: Journal of the American Medical Association Benchmark Criteria; FRE: Flesch Reading Ease; FKGL: Flesch-Kincaid Grade Level; SMOG: Simple Measure of Gobbledygook.

Comparison of readability and quality parameters between the top 10 highest-ranked websites and the remaining 85.

The top 10 had significantly lower FRE scores (86.77 ± 14.18 vs 96.11 ± 6.52, p < 0.001) and higher FKGL scores (9.18 ± 6.06 vs 6.21 ± 3.85, p = 0.033), indicating more demanding text. SMOG scores were similar between groups (p = 0.728). Overall DISCERN scores were markedly higher for the top 10 (63.00 ± 3.59 vs 43.93 ± 10.36, p < 0.001), with 40% rated as “good” quality compared with none in the other group.
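The top 10 and remaining 85 websites together make up all 95 included sites, so the overall mean must equal the size-weighted average of the two subgroup means. Below is a minimal check using the reported DISCERN values (the function name is hypothetical).

```python
def pooled_mean(groups):
    """Size-weighted mean of subgroup means: sum(n_i * mean_i) / sum(n_i)."""
    total_n = sum(n for n, _ in groups)
    return sum(n * m for n, m in groups) / total_n


# DISCERN means reported for the top 10 and the remaining 85 websites.
discern_all = pooled_mean([(10, 63.00), (85, 43.93)])
print(round(discern_all, 1))  # 45.9, matching the overall mean DISCERN of 45.9

# 40% of the top 10 rated "good" is 4 websites, the same as the 4 "good"
# websites reported overall, so every "good" site is in the top 10.
good_in_top10 = round(0.40 * 10)
print(good_in_top10)  # 4
```

The same identity can be applied to any readability or quality index reported for both subgroups.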

Correlations of quality and readability

Spearman’s correlations between JAMA/DISCERN scores and readability indices are presented in Supplemental Table 1. The JAMA score showed a significant, moderate positive correlation with DISCERN (rho = 0.472, p < 0.001). The DISCERN score showed a weak but significant negative correlation with FRE (rho = –0.237, p = 0.020) and weak, non-significant positive correlations with FKGL (rho = 0.170, p = 0.100) and SMOG (rho = 0.174, p = 0.091). FRE and FKGL were essentially uncorrelated (rho = –0.007, p = 0.947), whereas FKGL and SMOG showed a moderate positive correlation (rho = 0.420, p < 0.001).

Discussion

This cross-sectional study examined whether freely available Arabic-language online resources about LBP meet accepted standards for transparency, reliability, and readability while remaining accessible to the general public. The results show a clear imbalance: most websites were written at an easy-to-read level (mean FRE ≈ 95; two-thirds below a seventh-grade FKGL) but achieved only moderate scores for quality (mean DISCERN ≈ 46) and transparency (mean JAMA ≈ 2.5/4). Authorship and attribution were inconsistent, and publication or update dates were particularly rare. Higher JAMA scores were moderately correlated with higher DISCERN scores, whereas easier readability was not associated with better quality; if anything, higher FRE scores were weakly associated with lower DISCERN scores. Health portals and general-education sites were most likely to meet all JAMA criteria, while medical institutions most often disclosed conflicts of interest. Additionally, Bing-sourced pages generally had higher JAMA scores than those from Google. Content coverage strongly favored causes and treatment options, while prognosis, complications, and the balance of treatment benefits and risks were infrequently addressed.

Placed in the context of the wider literature, our findings align with international evaluations of LBP web content, which reveal that guideline-concordant information is underrepresented, and transparency measures are lacking. For example, Ferreira et al. 32 found that fewer than half of the statements on LBP websites were accurate, and that core guideline recommendations for acute LBP, such as advice to remain active, were addressed on only around one-quarter of the sites. Hendrick et al. 33 similarly documented variability in quality and completeness across acute LBP resources. Importantly, a recent Turkish study evaluating internet-based patient education materials on low back pain using the Ateşman readability scale found that most resources were difficult to read, despite covering common LBP topics, and concluded that readability barriers may limit patient understanding even when content is clinically relevant. 34 The current results also align with repeated observations in spine-related patient education, where professional society websites and general health resources often present information at reading levels above recommended thresholds, yet still omit important details on treatment risks, prognosis, and shared decision-making.35–37 Notably, while many English-language resources are considered too difficult for the general public, the Arabic-language materials here were very easy to read but still lacked critical quality elements, reinforcing the principle that readability alone is not a reliable proxy for high-quality patient education.

Within Arabic-language online health information more broadly, similar patterns have been reported across dental and oral health topics—moderate DISCERN scores, incomplete JAMA adherence, and generally easy readability.38,39 These findings suggest a recurring regional pattern rather than a condition-specific issue. Two contextual factors may explain this. First, the FRE, FKGL, and SMOG formulas were developed for English and may misrepresent the complexity of Arabic text due to linguistic differences in morphology, orthography, and syntax.40,41 Second, the Arabic online information ecosystem is dominated by portal-style content designed for rapid consumption, which may prioritize readability and brevity over citation, comprehensive coverage, and transparent authorship.

Search engine differences are also relevant. Previous research has shown that ranking algorithms tend to prioritize popularity and freshness over credibility signals, resulting in high-traffic but lower-quality content ranking more prominently. 42 This study’s finding that Bing-sourced results had higher JAMA scores than Google-sourced results suggests that algorithmic differences may influence the visibility of more transparent sources. In Arabic-speaking populations, where eHealth literacy varies widely and internet search is often the first step in seeking care,43,44 such differences in search engine outputs may significantly affect the quality of information patients encounter. Beyond traditional search engines, AI-supported conversational platforms are increasingly used as primary sources of health information. These systems generate synthesized responses in natural language, often perceived as authoritative and personalized. While they have the potential to improve accessibility and comprehension, concerns remain regarding transparency of sources, citation practices, algorithmic bias, and the risk of confidently presented but outdated or non–guideline-concordant information. The opacity of training data and variability in response generation may further complicate quality assurance, particularly in languages where validated evaluation frameworks are limited. 45

Similarly, video-sharing platforms such as YouTube have become prominent channels for musculoskeletal health information. Their audiovisual format may enhance engagement and comprehension; however, prior evaluations of spine-related content on YouTube have demonstrated substantial variability in reliability, completeness, and disclosure of risks. Content is frequently produced by non-specialists, commercial entities, or influencers, and algorithmic promotion is driven primarily by engagement metrics rather than clinical accuracy. As a result, videos emphasizing quick fixes, passive modalities, or unproven interventions may achieve greater visibility than evidence-based guidance promoting exercise, reassurance, and active self-management. For Arabic-speaking users, where professionally produced guideline-concordant video resources remain limited, this imbalance may further amplify exposure to incomplete or misleading information.46,47

From a clinical perspective, these shortcomings are particularly concerning given the strong evidence base supporting exercise therapy combined with patient education for the management and prevention of non-specific low back pain. Systematic reviews and randomized controlled trials consistently demonstrate that interventions integrating exercise with structured health education are more effective than usual medical care in reducing pain, improving function, and preventing recurrence.6,7 Educational components have been shown to enhance patient understanding, engagement, and adherence when delivered alongside exercise-based therapy. In particular, randomized and controlled trials evaluating structured “back school”–type interventions and multimodal educational-therapeutic programs have reported significant improvements in pain intensity, functional outcomes, and self-management capacity among individuals with non-specific low back pain. These programs typically combine biomechanical education, cognitive-behavioral strategies, supervised exercise, and ergonomic counseling, reflecting a biopsychosocial model of care. Such interventions exemplify the type of structured, evidence-based educational content that is often underrepresented or insufficiently explained in freely accessible online materials.7,48 When online information omits or inaccurately represents these evidence-based approaches, patients may be less likely to adopt effective self-management strategies, potentially undermining the real-world implementation of interventions that are otherwise clinically effective. Therefore, the deficiencies identified in web-based Arabic resources may not merely represent informational gaps, but could translate into missed opportunities for prevention, delayed adoption of active management strategies, and persistence of maladaptive beliefs regarding rest, imaging, or passive treatments.

To improve Arabic-language online health information, developers should adopt validated quality frameworks such as DISCERN and the JAMA benchmarks,29,30 as well as tools for understandability and actionability like the PEMAT and CDC Clear Communication Index.49,50 Content should include named authorship, update dates, high-quality references, explicit statements of benefits and risks, potential consequences of not treating, and prompts for shared decision-making. 51 In addition, search engines and health authorities could collaborate to enhance the ranking of sources that meet these transparency and quality thresholds, in line with current infodemic management frameworks.

Study limitations and future directions

The study has some limitations. Search results are dynamic and influenced by personalization, so a single cross-sectional capture cannot reflect ongoing changes. Reliance on a single Arabic search term may have missed relevant multimedia or niche resources. Applying English-derived readability tools to Arabic likely introduced measurement bias. DISCERN and JAMA assess quality and transparency but not the accuracy of statements against current clinical guidelines. In addition, the quality of online information was assessed independently of patient behavior or clinical outcomes, so the study cannot determine how the identified deficiencies influence treatment adherence or health outcomes. Readability was also assessed with a single online calculator, which is a potential methodological limitation: previous research has shown that different readability formulas and calculators may produce variable estimates, reflecting differences in underlying algorithms and scoring approaches. Consequently, the readability findings should be interpreted with caution, and future studies may benefit from using multiple readability tools and averaging the results to improve robustness. 52

Future research should monitor Arabic-language search results longitudinally, compare algorithmic changes across search engines, and incorporate Arabic-specific readability metrics alongside human comprehension testing to enhance the understanding of Arabic search results. Automated credibility assessment tools, adapted to Arabic, could help flag missing transparency markers and facilitate large-scale monitoring. Co-designed, guideline-concordant patient education resources in Modern Standard Arabic and major dialects, developed with input from patients and clinicians, should be evaluated for their impact on knowledge, decisional conflict, and adherence to evidence-based care.

Strengths of the study

This study has several notable strengths. It is one of the first comprehensive infodemiological evaluations of Arabic-language online educational materials on low back pain, addressing a clear gap in the literature for Arabic-speaking populations. The study used a systematic, reproducible search strategy across three major search engines in incognito mode, reducing personalization effects while approximating real patient search behavior. Multiple established assessment tools were applied, including the DISCERN instrument for quality, the JAMA benchmarks for reliability, and standard readability formulas, allowing a multidimensional evaluation of online content. Additionally, websites were categorized by affiliation and search engine ranking, enabling comparisons across content sources and levels of visibility.

Conclusion

Arabic websites on LBP are generally easy to read, but they frequently lack transparency, reliability, and comprehensive evidence-based information, and only a few meet stringent quality standards. Strengthening Arabic online health resources through validated quality frameworks, transparent authorship, and balanced treatment content is crucial to mitigate misinformation and support informed patient decision-making.

Supplemental Material

sj-docx-3-phj-10.1177_22799036261441327 – Supplemental material for An infodemiological analysis of arabic online educational content on low back pain: Insights into quality and reliability

Supplemental material, sj-docx-3-phj-10.1177_22799036261441327 for An infodemiological analysis of arabic online educational content on low back pain: Insights into quality and reliability by Hassan Ali Alradhi, Mohammed Bader Altaha, Ayat Jassim Al Eid, Mohammad Hasan Alatiyah, Biam Saydo, Layan Saleh Baeesa, Abdulrahman Jafar Sabbagh and Saleh Salem Baeesa in Journal of Public Health Research

sj-docx-4-phj-10.1177_22799036261441327 – Supplemental material for An infodemiological analysis of arabic online educational content on low back pain: Insights into quality and reliability

sj-tiff-1-phj-10.1177_22799036261441327 – Supplemental material for An infodemiological analysis of arabic online educational content on low back pain: Insights into quality and reliability

sj-tiff-2-phj-10.1177_22799036261441327 – Supplemental material for An infodemiological analysis of arabic online educational content on low back pain: Insights into quality and reliability

Footnotes

Acknowledgements

The authors extend their sincere gratitude to all colleagues and affiliated institutions who generously provided valuable insights and support throughout the development of this manuscript.

Ethical considerations

Ethical approval was not required for this study because it did not involve human participants, animal experiments, or identifiable personal data.

Author contributions

Hassan Ali Alradhi, Mohammed Bader Altaha, Ayat Jassim Al Eid, Mohammad Hasan Alatiyah, Biam Saydo, and Layan Saleh Baeesa made substantial contributions to the conception, design, data collection, analysis, interpretation, and drafting. Abdulrahman Jafar Sabbagh and Saleh Salem Baeesa approved the manuscript. The project was supervised by the principal investigator, Saleh Salem Baeesa.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The datasets generated and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
