Abstract

Orchestrating meaningful classroom interaction and dialogue is a complex pedagogical challenge, requiring teachers to manage both cognitive and sociocultural dynamics. Many teachers, for various sociocultural reasons, may be hesitant to orchestrate interactive learning in the classroom. This theoretical paper proposes the ‘AI Co-Pilot’ framework to help teachers address this challenge systematically. The framework conceptualises AI as an intelligent assistant that functions as a cultural mediator, enabling teachers to guide a progression from passive reception to interactive co-construction. Theoretically, it integrates the cognitive pathway of the ICAP framework (Interactive, Constructive, Active, Passive) with the social principles of dialogic teaching. Practically, it leverages AI to create psychologically safe spaces (e.g., private Socratic dialogue, anonymous peer interaction) in which students can develop interactive competencies. This approach, illustrated within Confucian-heritage contexts, provides a theoretically grounded and culturally responsive pathway for pedagogical transformation and offers insights for any setting where social factors influence learning.

Keywords

Introduction

The Confluence of AI and East Asian Pedagogy

The integration of artificial intelligence (AI) technologies into education presents both opportunities and challenges, particularly in contexts where national policies promote interactive learning while teachers operate within Confucian-heritage educational cultures. This intersection is especially significant in East Asian countries, where substantial policy commitments and market investments reflect strategic priorities for AI-enhanced education.

China has established comprehensive national policies such as the New Generation Artificial Intelligence Development Plan (State Council, 2017) and the AI Innovation Action Plan for Colleges and Universities (Ministry of Education, 2018), which aim to cultivate AI talent and enhance educational quality (Knox, 2020; Miao et al., 2021; Song et al., 2022; Wu et al., 2020; Yang, 2019). This policy framework has enabled widespread deployment of AI-powered platforms. For example, Squirrel AI reports serving over 24 million students in China, illustrating the scale of current implementation (Elliott, 2024; Li & Towne, 2025). Similarly, Singapore’s Smart Nation strategy and South Korea’s Mid- to Long-Term Plan for an Intelligent Information Society reflect regional recognition of AI’s potential role in educational and economic development (Lake, 2023).

However, policy mandates and technological capabilities do not automatically translate into effective pedagogical practice. While these national commitments create infrastructure and resources for AI integration, implementation must address complex pedagogical, cultural, and ethical considerations that vary across contexts.

The Challenge of Passivity in Confucian-Heritage Classrooms

Simultaneously, the pedagogical environment in many East Asian classrooms has distinctive characteristics that complicate the adoption of Western-style interactive learning models. These classrooms are often characterised by what Western observers describe as student passivity: limited spontaneous verbal participation, a high degree of deference to teacher authority, and a preference for receptive learning modes. Recent AI-driven analysis of classroom dynamics in China confirms the persistence of this pattern, revealing that teacher-led presentation occupies the majority of instructional time (51.9%), while teacher-student interaction accounts for only 30.5% (Gao & Yang, 2025).

However, to label this phenomenon merely as passivity is to apply a potentially misleading Western lens. This classroom dynamic is not necessarily indicative of disengagement but rather an expression of a coherent and historically grounded educational philosophy rooted in Confucian traditions (Jin & Cortazzi, 2006; Li, 2012). This philosophy emphasises effortful, respectful, and pragmatic acquisition of established wisdom, a stark contrast to the Socratic ideal of learning through questioning, hypothesis generation, and critical challenge (Li, 2012).

This philosophical divergence manifests in two concrete barriers to the kinds of overt behaviours associated with interactive learning. First, the emphasis on social harmony and respect for hierarchy creates significant friction with Western-style interactive pedagogies. Such approaches, which often encourage direct questioning and debate, can be perceived as disrespectful and disruptive to the established classroom order. Indeed, empirical research has found that these methods can induce significantly higher levels of stress in students from Confucian-heritage backgrounds (Langen & Stamov Roßnagel, 2023).

Second, the powerful cultural concept of ‘face’ (mianzi), which relates to reputation and social standing, creates a strong aversion to public error or appearing foolish (Chan & Smith, 2024). This fear of losing face, coupled with concern about peer judgment in highly collectivist cultures, is a major inhibitor of the risk-taking and public participation that are prerequisites for interactive knowledge co-construction.

Therefore, the challenge is not simply to activate passive students but to bridge two distinct and valid educational paradigms in a way that cultivates the collaborative and critical skills required for 21st-century competence without invalidating deeply held cultural values.

The Promise and Peril of AI Integration

AI enters this complex cultural and pedagogical landscape as a technology with dual potential. On the one hand, AI offers affordances that appear well suited to mediating the tensions between traditional East Asian pedagogy and interactive learning goals. AI-powered platforms can create personalised learning pathways that cater to individual students’ needs and comfort levels, providing a form of cognitive scaffolding that can guide learners from receptive to more interactive modes of engagement (Strielkowski et al., 2025; Tu et al., 2025). The capacity of AI to deliver private, non-judgmental, and adaptive feedback is particularly salient: systems such as the Korbit intelligent tutoring platform can provide tailored hints and explanations, allowing students to practise, make mistakes, and ask questions without fear of public exposure or losing face (Kochmar et al., 2020). Indeed, studies of Chinese K-12 learners’ perceptions of AI show that pupils recognise AI’s potential to boost learning efficiency, strengthen higher-order thinking (e.g., critical and creative problem-solving), and increase motivation through personalised support and feedback (Chai et al., 2020; Chiu et al., 2022; Zhang & Zhu, 2022). Together, these findings suggest that AI can serve as a constructive learning partner that helps school-age learners practise and refine cognitive skills in ways that complement teacher guidance and classroom routines.

On the other hand, uncritical integration of AI carries significant risks that could exacerbate existing problems or create new ones. A primary concern is the potential for AI to reinforce passive learning if implemented merely as a sophisticated content delivery system. If AI platforms are used only to transmit information more efficiently, they risk deepening the very passivity they are intended to alleviate (Holmes et al., 2019). This would represent a form of technological solutionism that ignores the pedagogical transformation required.

Moreover, current AI systems have well-documented limitations including factual errors (hallucinations) (Ji et al., 2023), algorithmic bias reflecting societal inequities (Mehrabi et al., 2021), and inadequate understanding of social-emotional and cultural nuances (Khare et al., 2024; Tao et al., 2024). These limitations are particularly concerning in educational contexts where AI recommendations might inappropriately influence pedagogical decisions or where biased systems could disadvantage certain student groups.

Finally, there is a real risk that over-reliance on AI could diminish the irreplaceable human elements of teaching: the emotional connection, cultural interpretation, ethical judgment, and spontaneous responsiveness that characterise effective human pedagogy. If AI is positioned as a replacement for, rather than a complement to, human teachers, it could undermine the very aspects of education most essential for student development.

Therefore, the central question is not whether to integrate AI in East Asian classrooms, but how to do so in a manner that is both culturally responsive and pedagogically sound, that exploits AI’s unique affordances while acknowledging its limitations, and that positions technology as a partner to, rather than a substitute for, human teachers.

Research Focus

This paper addresses three interrelated dimensions of AI integration in Confucian-heritage classrooms. First, it examines how the ICAP framework of cognitive engagement can be adapted to account for the distinctive cultural barriers that arise in AI-mediated learning contexts. Second, it identifies specific mechanisms through which AI can facilitate culturally coherent transitions across engagement levels without undermining learner autonomy. Third, it explores implications for teacher professional development and for the cultivation of student agency in technology-enhanced learning environments.

In addressing these dimensions, this paper develops an integrated, culturally contextualised framework that extends ICAP theory to AI-mediated learning in East Asian contexts. The framework conceptualises AI not merely as a content delivery tool but as a dynamic cultural and cognitive mediator that can scaffold the development of behavioural and social norms while respecting existing cultural values.

Theoretical Foundations

The ICAP Framework: Cognitive Engagement and Learning Outcomes

The Interactive, Constructive, Active, Passive (ICAP) framework, articulated by Chi and Wylie (2014), offers a robust taxonomy for classifying modes of cognitive engagement. Its theoretical power lies in its capacity to link observable student behaviours to underlying cognitive processes and, consequently, to predictable learning outcomes. The framework posits a hierarchy of engagement in which learning benefits increase across four distinct modes: Passive, Active, Constructive, and Interactive. This hierarchy, often expressed as I > C > A > P, provides a valuable heuristic for educators and researchers to design and evaluate learning activities.

At the base of the hierarchy is the Passive mode, where the learner primarily receives information. This includes activities such as listening to a lecture, reading a text without annotation, or watching a demonstration. The underlying cognitive process is primarily one of storing information in memory. While essential for initial exposure to content, passive engagement offers limited opportunity for integration or generation of new knowledge.

The next level, Active engagement, involves the learner physically manipulating information or materials. Overt behaviours in this mode include highlighting text, taking verbatim notes, or repeatedly practising a procedural skill. The cognitive process associated with the Active mode is the selection and encoding of information, which represents a step beyond mere reception. However, the learner is still largely operating within the confines of the provided material, without adding new information or ideas. Studies have shown that active engagement can lead to better learning perceptions and fewer negative emotions than passive approaches, but its advantage for deep learning is often limited (Bavishi et al., 2022; Wekerle et al., 2024).

A significant qualitative leap occurs with Constructive engagement. In this mode, the learner generates outputs that go beyond information explicitly given. Characteristic behaviours include summarising in one’s own words, creating concept maps/mind maps, posing questions, or engaging in self-explanation. The key cognitive process is one of inferring and integrating, where the learner builds connections between new information and prior knowledge, thereby constructing a more robust mental model. Research consistently demonstrates that constructive activities yield significantly better learning outcomes than passive or active modes because they compel the learner to generate new knowledge structures (Chi et al., 2018; Vosniadou et al., 2023).

At the apex of the ICAP hierarchy is Interactive engagement. This mode is defined by dialogic, co-generative activity between two or more learners. It involves behaviours like debating, reciprocal teaching, co-building an artefact or explanation, or asking and answering deep questions. The theoretical advantage of the Interactive mode lies in its promotion of co-inferential cognitive processes, where partners build upon, challenge, and refine each other’s ideas to create shared understanding that is more advanced than what any individual could have achieved alone (Chi & Wylie, 2014). The superiority of this mode has been empirically supported in studies showing that interactive and constructive activities predict greater learning gains than active or passive ones, particularly in complex domains like STEM (Wiggins et al., 2017).
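To make the hierarchy concrete, the sketch below encodes the four modes as an ordered Python enumeration and classifies a set of observed behaviours by the highest mode they evidence. It is a minimal illustration, assuming that behaviours have already been coded into labels; the label names, the `BEHAVIOUR_TO_MODE` table, and the ‘highest mode wins’ rule are hypothetical simplifications, not a published ICAP coding scheme.

```python
from enum import IntEnum


class ICAPMode(IntEnum):
    """ICAP modes; a larger value means deeper engagement (I > C > A > P)."""
    PASSIVE = 1
    ACTIVE = 2
    CONSTRUCTIVE = 3
    INTERACTIVE = 4


# Hypothetical behaviour codes, following the examples given for each mode.
BEHAVIOUR_TO_MODE = {
    "listening_to_lecture": ICAPMode.PASSIVE,
    "reading_without_annotation": ICAPMode.PASSIVE,
    "highlighting_text": ICAPMode.ACTIVE,
    "taking_verbatim_notes": ICAPMode.ACTIVE,
    "summarising_in_own_words": ICAPMode.CONSTRUCTIVE,
    "self_explanation": ICAPMode.CONSTRUCTIVE,
    "posing_questions": ICAPMode.CONSTRUCTIVE,
    "debating_with_peer": ICAPMode.INTERACTIVE,
    "reciprocal_teaching": ICAPMode.INTERACTIVE,
}


def classify(behaviours: list[str]) -> ICAPMode:
    """Return the highest ICAP mode evidenced by the observed behaviours.
    Unrecognised labels are ignored; with no recognised behaviour, default to PASSIVE."""
    modes = [BEHAVIOUR_TO_MODE[b] for b in behaviours if b in BEHAVIOUR_TO_MODE]
    return max(modes, default=ICAPMode.PASSIVE)


print(classify(["highlighting_text", "self_explanation"]).name)  # CONSTRUCTIVE
```

Because the enumeration is ordered, comparisons such as `ICAPMode.INTERACTIVE > ICAPMode.CONSTRUCTIVE` express the I > C > A > P hierarchy directly.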

Recent scholarship has extended the ICAP framework by exploring its application in diverse settings. For instance, empirical work in authentic education contexts suggests that the optimal mode of engagement and its outcomes can be moderated by specific contextual factors (Wekerle et al., 2024). This indicates a valuable opportunity for adapting the framework to specific learning environments. The AI Co-Pilot framework builds on this insight by contextualising ICAP principles, designing an approach specifically tailored to the social and cultural dynamics of Confucian-heritage classrooms.

Dialogic Teaching and Interactive Learning

Dialogic teaching, as conceptualised by Alexander (2020) and elaborated in research on classroom discourse (e.g., Chen et al., 2020; Howe et al., 2019; Mercer & Dawes, 2014; Michaels et al., 2008), emphasises the role of talk and interaction in learning. It represents a shift from traditional monologic instruction, where the teacher transmits knowledge to passive recipients, towards a more collaborative and participatory model where meaning is co-constructed through dialogue. Dialogic teaching is characterised by five key principles: collective (addressing learning tasks together), reciprocal (listening to and building on each other’s ideas), supportive (articulating ideas freely without fear of embarrassment), cumulative (building on previous contributions), and purposeful (teachers plan and steer dialogue towards educational goals).

In Confucian-heritage contexts, dialogic teaching, which is fundamentally Socratic in nature, faces particular cultural barriers. This is supported by research from Langen and Stamov Roßnagel (2023), who found that Socratic communication methods, the very foundation of dialogic teaching, induce significantly higher levels of stress in students from Confucian-heritage backgrounds than in their Western peers. This finding provides a crucial evidence base for understanding why the reciprocal and supportive principles of dialogic teaching are so challenging to implement. In addition, the concept of face (mianzi) creates further barriers: students fear public correction or asking questions that might make them appear foolish, as individual performance reflects on the group in collectivist contexts (Fan et al., 2021). In collaborative settings, Nguyen et al. (2005) observed that the drive to maintain group harmony often suppresses the critical dialogue essential for interactive learning. These cultural dynamics are compounded by systemic pressures: examination-oriented education systems create institutional incentives for teacher-centred content delivery over process-oriented interactive learning (Huang & Asghar, 2018). These multifaceted barriers suggest that simply importing Western interactive pedagogies is likely to fail; a successful approach must be deeply contextualised and designed to work with, rather than against, these powerful cultural and systemic forces.

The AI Co-Pilot framework addresses these barriers by creating culturally buffered spaces for dialogue practice. AI-mediated interactions allow students to engage in dialogic behaviours—questioning, explaining, building on ideas—in contexts where cultural risks are reduced. Anonymous peer interaction platforms, private AI chatbots, and structured collaborative protocols provide scaffolding that helps students develop dialogic capabilities gradually, moving from culturally safer contexts towards more challenging face-to-face dialogue (Hwang & Chen, 2023).

Cultural-Historical Activity Theory and Teacher Orchestration

Cultural-Historical Activity Theory (CHAT), rooted in the work of Vygotsky (1978) and extended by Engeström (2001), provides a lens for understanding how tools mediate human activity within cultural contexts. CHAT posits that human cognition and learning are fundamentally social and culturally situated, mediated by both physical and psychological tools. In educational settings, these tools include not only traditional instruments like textbooks and calculators, but also symbolic systems like language and, increasingly, digital technologies including AI.

A central concept in CHAT is the zone of proximal development, the distance between what a learner can do independently and what they can achieve with guidance. Effective teaching involves providing scaffolding within this zone, gradually transferring responsibility to the learner as competence develops. AI systems can function as mediating tools within this framework, providing adaptive scaffolding that responds to individual learner needs.

The concept of teacher orchestration, which is consistent with CHAT principles, emphasises that effective technology integration requires teachers to coordinate multiple elements: learning objectives, student needs, technological affordances, and pedagogical strategies (Dillenbourg & Jermann, 2010). Teachers as orchestrators do not simply adopt technology but deploy it strategically to achieve educational goals, maintaining awareness of classroom dynamics and making real-time adjustments based on student responses.

The AI Co-Pilot framework positions AI as a mediating tool that teachers orchestrate strategically rather than a replacement for human instruction. The Teacher Orchestration Dashboard provides visibility into classroom dynamics, enabling teachers to make informed decisions about when to intervene directly, when to allow AI to provide scaffolding, and when to adjust instructional strategies (Kim et al., 2024; Possaghi et al., 2025; Yang et al., 2024). This maintains teacher agency while augmenting instructional capacity through intelligent technological support.

Synthesis: Toward Culturally-Responsive AI-Mediated Learning

The AI Co-Pilot framework synthesises these theoretical foundations to address a fundamental challenge: how to support progression through ICAP engagement levels in educational contexts where cultural values create barriers to interactive learning. By combining ICAP cognitive engagement theory with dialogic teaching principles and grounding implementation in CHAT understanding of mediated activity and teacher orchestration, the framework provides both theoretical coherence and practical guidance.

The framework recognises that effective AI integration in education requires more than technical capability. It demands careful attention to cultural context, systematic pedagogical design, and maintenance of human judgment and values at the centre of educational practice. The subsequent sections detail how these theoretical principles are operationalised in the AI Co-Pilot framework.

The AI Co-Pilot Framework

Core Conceptualisation

The AI Co-Pilot framework conceptualises an integrated system of AI technologies—including Natural Language Processing (NLP, encompassing both traditional techniques and large language model-based systems such as GPT-4), Machine Learning (ML), and Learning Analytics (LA)—not as teacher replacement but as an intelligent assistant that empowers teachers as ‘learning orchestrators.’ This positioning is deliberate: the metaphor of co-pilot emphasises that AI provides navigational support and handles specific technical functions, but the human teacher remains in command, making critical decisions about direction, priorities, and interventions.

The framework operates across three interconnected dimensions that work synergistically to support student progression through ICAP engagement levels while respecting Confucian-heritage cultural values.

First, the Cultural Bridge-Building Dimension ensures that all AI-mediated interactions are culturally coherent and sensitive. AI functions as a cultural interpreter, creating spaces where students can practise interactive behaviours while maintaining respect for traditional values. For instance, to mitigate the fear of losing face, AI facilitates initial interactive exercises through anonymous or pseudonymous channels. Students can practise the mechanics of argumentation, pose questions, and make errors without the high social stakes of public performance. Research specific to Chinese K-12 settings also indicates that AI is valued when it offers a psychologically safe, culturally attuned, and personalised space, one that nurtures critical thinking and creativity without threatening face or violating prevailing classroom norms (Huang, 2021; Ni & Cheung, 2023). In this sense, AI acts as a bridge that enables school-age learners to access the cognitive benefits of interactive learning while maintaining a familiar, low-risk climate for participation and experimentation (Casal-Otero et al., 2023; Huang, 2021).

Second, the Cognitive Scaffolding Progression Dimension operationalises the transition through the ICAP hierarchy as a series of managed, sequential phases. This progression respects the traditional emphasis on foundational knowledge and gradual mastery. The AI system continuously monitors student performance and engagement data to adjust scaffolding levels and the complexity of interactive demands dynamically. This progression can be conceptualised in five phases: (1) Foundation Building (Passive → Active), where AI enhances traditional receptive learning with personalised content and adaptive drills; (2) Guided Construction (Active → Constructive), where AI introduces constructive behaviours through private dialogues and self-explanation prompts; (3) Structured Collaboration (Constructive → Interactive), where AI facilitates small-group interactions with significant scaffolding; (4) Dialogic Co-construction (high-level Interactive), where students engage in more complex collaborative knowledge building; and (5) Independent Interactive Learning, where students work with minimal AI intervention and AI shifts to a metacognitive coaching role.
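The five phases can be sketched as a simple state machine. This is a hypothetical illustration, not a specification of any existing system: the names `Phase`, `update_phase`, and `scaffolding_level`, the 0-1 performance score, and the threshold values are all assumptions. Allowing a step back on a weak score reflects the spiral view of progression, in which learners may revisit earlier modes.

```python
from dataclasses import dataclass
from enum import IntEnum


class Phase(IntEnum):
    """The five progression phases, in order."""
    FOUNDATION_BUILDING = 1       # Passive -> Active
    GUIDED_CONSTRUCTION = 2       # Active -> Constructive
    STRUCTURED_COLLABORATION = 3  # Constructive -> Interactive
    DIALOGIC_CO_CONSTRUCTION = 4  # high-level Interactive
    INDEPENDENT_INTERACTIVE = 5   # minimal AI intervention, metacognitive coaching


# Hypothetical thresholds on a 0-1 performance/engagement score; in practice
# the teacher would set these when configuring the system.
PROMOTE_AT = 0.8
STEP_BACK_BELOW = 0.4


@dataclass(frozen=True)
class LearnerState:
    phase: Phase = Phase.FOUNDATION_BUILDING


def update_phase(state: LearnerState, score: float) -> LearnerState:
    """Move at most one phase per update: forward on a strong score,
    back on a weak one, otherwise stay put."""
    if score >= PROMOTE_AT and state.phase < Phase.INDEPENDENT_INTERACTIVE:
        return LearnerState(Phase(state.phase + 1))
    if score < STEP_BACK_BELOW and state.phase > Phase.FOUNDATION_BUILDING:
        return LearnerState(Phase(state.phase - 1))
    return state


def scaffolding_level(phase: Phase) -> float:
    """Task-level AI scaffolding, fading linearly from 1.0 (phase 1) to 0.0 (phase 5)."""
    span = Phase.INDEPENDENT_INTERACTIVE - Phase.FOUNDATION_BUILDING
    return (Phase.INDEPENDENT_INTERACTIVE - phase) / span


state = LearnerState()
for score in [0.9, 0.85, 0.3]:
    state = update_phase(state, score)
print(state.phase.name, scaffolding_level(state.phase))  # GUIDED_CONSTRUCTION 0.75
```

Moving at most one phase per update keeps transitions gradual, in line with the emphasis on managed, sequential progression; a real system would presumably smooth scores over several observations rather than react to a single data point.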

Third, the Social Interaction Facilitation Dimension addresses the challenges of peer collaboration in collectivist cultures. AI functions as an intelligent and neutral facilitator of group work, using data on students’ personality traits, knowledge profiles, and cultural comfort levels to form well-balanced peer groups, minimising potential friction and maximising productive synergy. AI provides real-time support for collaborative processes, offering structured conversational prompts, clarifying roles, and mediating disagreements in a non-confrontational manner. This function respects the cultural preference for harmony while enabling the productive dialogue essential for deep, interactive learning.
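One simple way to approximate the grouping step is a serpentine (‘snake’) assignment over a single prior-knowledge score, which yields mixed-ability groups of similar overall strength. This is a minimal sketch under stated assumptions: it uses only a hypothetical knowledge score and ignores the personality and cultural-comfort data mentioned above, which a fuller implementation would need to weigh; `form_groups` is an illustrative name.

```python
def form_groups(scores: dict[str, float], n_groups: int) -> list[list[str]]:
    """Deal students into mixed-ability groups in serpentine order.

    Students are ranked by score (highest first) and dealt to groups
    0, 1, ..., n-1, then n-1, ..., 1, 0, and so on, so each group gets a
    spread of stronger and weaker students.
    """
    if n_groups < 1:
        raise ValueError("n_groups must be at least 1")
    ranked = sorted(scores, key=scores.get, reverse=True)
    groups: list[list[str]] = [[] for _ in range(n_groups)]
    for i, student in enumerate(ranked):
        round_no, pos = divmod(i, n_groups)
        index = pos if round_no % 2 == 0 else n_groups - 1 - pos
        groups[index].append(student)
    return groups


scores = {"A": 0.9, "B": 0.8, "C": 0.7, "D": 0.6, "E": 0.5, "F": 0.4}
print(form_groups(scores, 2))  # [['A', 'D', 'E'], ['B', 'C', 'F']]
```

Reversing direction on alternate rounds prevents the first group from always receiving the strongest remaining student, which is what a plain round-robin deal would do.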

Visual Representation of the AI Co-Pilot Framework

The framework can be visualised as a dynamic system diagram with several interconnected components that illustrate the relationships among teacher, student, AI, and the learning process (see Figure 1, ‘The AI Co-Pilot Framework: System Architecture’).

At the centre is the Student’s Engagement Progression through the four ICAP stages (Passive → Active → Constructive → Interactive), represented as a spiral arrow to visualise learning as an iterative and deepening process rather than a linear path. This spiral metaphor captures the reality that students may revisit earlier engagement modes even as they develop more advanced capabilities, and that progression is rarely perfectly linear.

Encircling the student’s journey is an outer ring labelled ‘AI Co-Pilot,’ representing the integrated system of AI technologies. This ring interacts directly with each stage of student progression, with connections to key functions enabled by specific technologies. For example, at the Passive-Active transition, labels point to ‘Personalised Content Delivery (ML)’ and ‘Adaptive Quizzing (ML).’ At the Active-Constructive transition, ‘Socratic Prompts (NLP)’ and ‘Automated Feedback (NLP)’ are indicated. At higher ICAP levels, ‘Group Formation (ML)’ and ‘Collaborative Facilitation (NLP + LA)’ appear.

The Teacher is positioned outside this student-AI interaction loop, signifying their role as manager and overseer of the entire process. This external positioning does not mean the teacher is removed from learning; rather, it emphasises that the teacher operates at a meta-level, orchestrating the overall instructional environment rather than being embedded within every specific AI-student interaction.

Arrows flow from the Student/AI interaction to the teacher, passing through a layer labelled ‘Real-Time Learning Analytics Dashboard.’ This represents the flow of data that informs teacher decisions. The dashboard aggregates information about individual student engagement levels, group dynamics, common misconceptions, and pedagogical moments requiring intervention.

Arrows also flow from the Teacher back to the Student/AI interaction loop, labelled ‘Pedagogical Orchestration & Intervention.’ These represent teacher actions such as forming new groups, launching whole-class discussions, providing targeted support to specific students, or adjusting AI system parameters based on observed patterns.

This visual model clarifies that AI does not operate in isolation but is part of a human-in-the-loop system where the teacher retains ultimate pedagogical control, using AI-generated insights to orchestrate a more effective and responsive learning environment.

This diagram illustrates the integrated architecture of the AI Co-Pilot framework, showing relationships among teacher orchestration, AI-mediated support mechanisms, and student progression through ICAP engagement levels. The central spiral represents student learning progression (Passive → Active → Constructive → Interactive), visualising learning as an iterative and deepening process. The outer ring represents AI Co-Pilot mechanisms with specific technologies indicated at each ICAP transition point. The teacher is positioned outside the student-AI interaction loop at a meta-level, receiving data through the Real-Time Learning Analytics Dashboard (dashed arrows) and providing pedagogical orchestration (solid arrows). This human-in-the-loop design maintains teacher agency while augmenting instructional capacity through intelligent technological support.

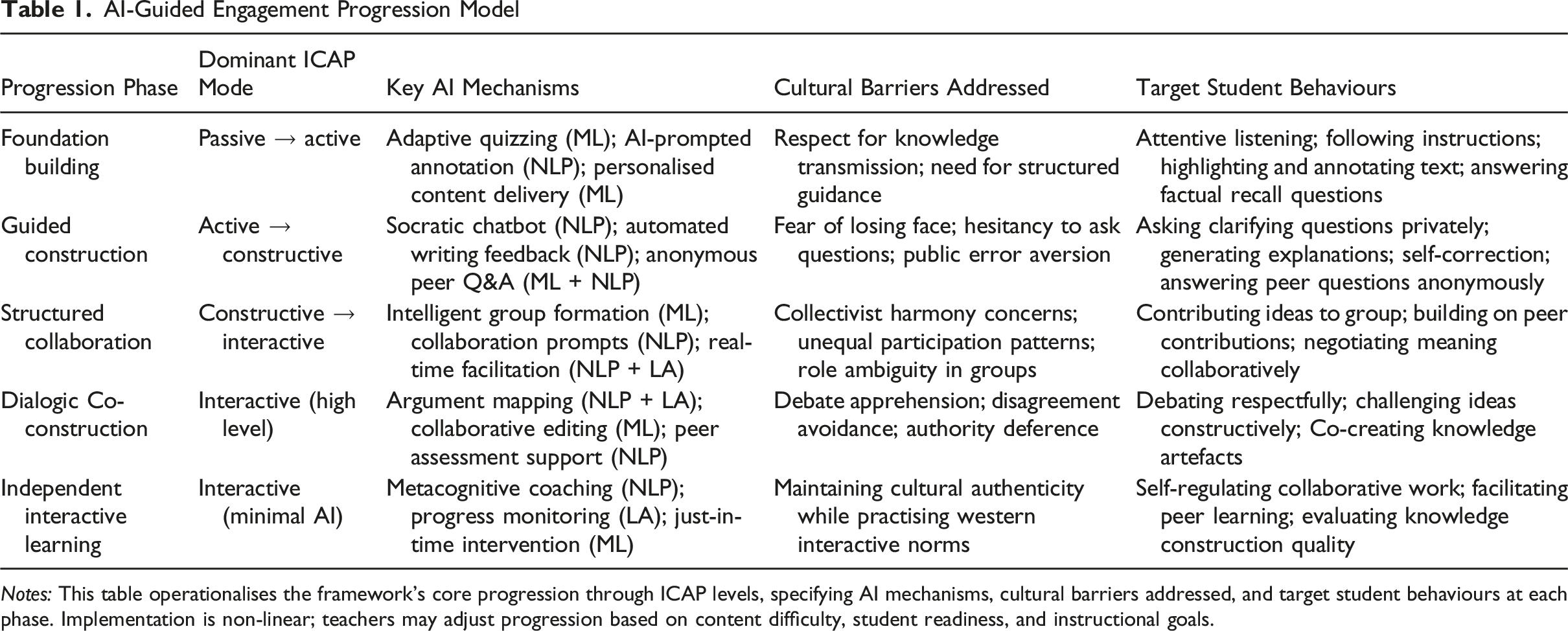

The AI-Guided Engagement Progression Model

AI-Guided Engagement Progression Model

Teacher Instructional Design Processes

The Learning Orchestrator Role and Dashboard Functions

The AI Co-Pilot framework positions teachers as ‘learning orchestrators’ who use AI-generated insights to make informed pedagogical decisions. This role transcends traditional content delivery, encompassing strategic coordination of learning resources, dynamic response to student needs, and maintenance of pedagogical coherence across diverse classroom activities. The Teacher Orchestration Dashboard serves as the primary tool enabling this role, providing three core functions that correspond to fundamental teacher needs: seeing, knowing when to act, and knowing what to do.

The Mirroring Function provides real-time visualisation of classroom dynamics, giving teachers detailed visibility into student cognitive engagement. Teachers can see which ICAP mode (Passive, Active, Constructive, or Interactive) each student is inferred to be in at any given moment, displayed through intuitive visual representations such as colour-coded student icons or engagement distribution graphs. The dashboard shows participation patterns, the distribution of concept understanding, and individual progress through ICAP levels. This mirroring capability addresses a fundamental teacher challenge: the difficulty of monitoring 30 to 40 students simultaneously in complex learning environments.
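The aggregation behind such a view can be very simple. The sketch below assumes that the system already produces a per-student ICAP mode label (itself an inference that may be wrong) and computes the class-wide distribution and a colour for each student icon; the function names and colour scheme are hypothetical.

```python
from collections import Counter

ICAP_MODES = ("Passive", "Active", "Constructive", "Interactive")

# Hypothetical colour scheme for student icons on the dashboard.
MODE_COLOUR = {"Passive": "grey", "Active": "blue", "Constructive": "green", "Interactive": "gold"}


def engagement_distribution(current_mode: dict[str, str]) -> dict[str, float]:
    """Return the share of students (0-1) currently inferred to be in each ICAP mode."""
    total = len(current_mode)
    counts = Counter(current_mode.values())
    return {mode: (counts[mode] / total if total else 0.0) for mode in ICAP_MODES}


def icon_colours(current_mode: dict[str, str]) -> dict[str, str]:
    """Map each student to the colour of their current mode (unknown modes shown as white)."""
    return {student: MODE_COLOUR.get(mode, "white") for student, mode in current_mode.items()}


snapshot = {"s1": "Passive", "s2": "Active", "s3": "Active", "s4": "Interactive"}
print(engagement_distribution(snapshot))
# {'Passive': 0.25, 'Active': 0.5, 'Constructive': 0.0, 'Interactive': 0.25}
```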

The Alerting Function implements automated detection of pedagogical moments requiring teacher intervention. Alerts are triggered when patterns emerge that suggest students need support or when opportunities arise for strategic instructional moves. For example, alerts might indicate students persistently stuck in Passive mode despite scaffolding, groups experiencing unproductive conflict, misconceptions spreading across multiple students, or students ready to progress to the next ICAP level but not yet challenged to do so. Critically, alerts are prioritised by urgency and pedagogical significance rather than generating a constant stream of notifications that overwhelms teachers.
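A possible prioritisation rule, sketched under assumptions: each alert carries hypothetical 1-3 urgency and significance scores, duplicate alerts about the same student or group are merged, and only the top few are shown. The `Alert` fields, scales, and `max_shown` cut-off are illustrative choices, not values taken from any existing dashboard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    kind: str          # e.g. "stuck_in_passive", "group_conflict"
    target: str        # student or group identifier
    urgency: int       # 1 (low) to 3 (high), hypothetical scale
    significance: int  # 1 (low) to 3 (high), hypothetical scale

    def priority(self) -> tuple[int, int]:
        return (self.urgency, self.significance)


def prioritise(alerts: list[Alert], max_shown: int = 3) -> list[Alert]:
    """Merge duplicate (kind, target) alerts, keeping the highest-priority copy,
    then return at most `max_shown` alerts, most urgent first."""
    best: dict[tuple[str, str], Alert] = {}
    for alert in alerts:
        key = (alert.kind, alert.target)
        if key not in best or alert.priority() > best[key].priority():
            best[key] = alert
    ranked = sorted(best.values(), key=Alert.priority, reverse=True)
    return ranked[:max_shown]


incoming = [
    Alert("ready_to_progress", "s7", 1, 2),
    Alert("group_conflict", "g2", 3, 2),
    Alert("stuck_in_passive", "s3", 2, 3),
    Alert("stuck_in_passive", "s3", 1, 1),  # duplicate, lower priority
    Alert("misconception_spread", "class", 3, 3),
]
print([a.kind for a in prioritise(incoming)])
# ['misconception_spread', 'group_conflict', 'stuck_in_passive']
```

Capping the visible list is one concrete way to avoid overwhelming teachers; the lower-priority alert is not discarded by the system, only withheld from the main view.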

The Advising Function provides AI-generated suggestions for instructional moves based on current classroom state and pedagogical principles. These suggestions are framed as options rather than directives, maintaining teacher agency while offering informed possibilities. For instance, the system might suggest forming small groups to transition students from Active to Constructive mode, offering targeted mini-lessons to address specific misconceptions, or providing extension challenges to students who have mastered current content. Teachers can accept, modify, or reject these suggestions based on their professional judgment and contextual knowledge that AI lacks.
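The advising step can be illustrated as a lookup from alert type to candidate moves, with the teacher’s decision recorded explicitly. This is a hypothetical sketch: the rule table, alert names, and `resolve` function are assumptions chosen to mirror the examples above, and a deployed system might generate suggestions with a language model rather than a fixed table.

```python
from typing import Optional

# Hypothetical rule table: each alert type maps to candidate instructional
# moves, offered as options rather than directives.
SUGGESTIONS = {
    "ready_to_progress": [
        "Form small groups for a constructive task",
        "Offer an extension challenge",
    ],
    "misconception_spread": [
        "Give a short targeted mini-lesson",
        "Pose a Socratic prompt to the whole class",
    ],
    "stuck_in_passive": [
        "Send a private self-explanation prompt",
        "Check in with the student in person",
    ],
}


def advise(alert_kind: str) -> list[str]:
    """Return candidate moves for an alert type (empty if the system has none)."""
    return list(SUGGESTIONS.get(alert_kind, []))


def resolve(options: list[str], decision: str, choice: int = 0,
            edited: Optional[str] = None) -> Optional[str]:
    """Record the teacher's decision: accept an option, modify it, or reject all.
    The returned move (or None) is what gets carried out."""
    if decision == "accept":
        return options[choice]
    if decision == "modify":
        if edited is None:
            raise ValueError("a modified suggestion needs the teacher's edited text")
        return edited
    if decision == "reject":
        return None
    raise ValueError(f"unknown decision: {decision!r}")


opts = advise("misconception_spread")
print(resolve(opts, "accept", choice=1))  # Pose a Socratic prompt to the whole class
```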

Adapted ADDIE Model for AI Integration

Teachers design AI-enhanced lessons using an adapted ADDIE (Analysis, Design, Development, Implementation, Evaluation) instructional design model (Branch, 2009) that explicitly accounts for AI capabilities and cultural considerations specific to Confucian-heritage contexts.

Analysis Phase: Teachers identify learning objectives aligned with curriculum standards and determine which ICAP engagement levels are appropriate for achieving these objectives. They assess students’ baseline ICAP engagement through classroom observation or preliminary assessments, identifying which ICAP transitions are most challenging for their specific student population. Cultural analysis considers which aspects of interactive learning may face particular resistance given student backgrounds and school culture. Teachers also analyse the capabilities of available AI systems, determining which AI functions are technically feasible and pedagogically appropriate for the planned activities.

Design Phase: Teachers map learning activities to ICAP progression phases using Table 1 as a guide, determining which phases will be emphasised and how students will transition between them. For each phase, teachers select AI mechanisms that provide the necessary scaffolding while maintaining cultural responsiveness. They design culturally appropriate scaffolding sequences that gradually reduce cultural barriers while building student confidence. Teachers specify decision rules for when AI should intervene automatically and when teacher intervention is preferable, balancing efficiency against the need to preserve human pedagogical judgment.
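Decision rules of this kind might be written down as an explicit routing function. The sketch below is hypothetical: the event categories, the assumption that the system reports a confidence value, and the default limits (two automated attempts, 0.7 confidence) are illustrative. It sends to the teacher anything involving the functions the framework reserves for human presence, such as emotional support, ethical guidance, and cultural interpretation.

```python
from dataclasses import dataclass

# Categories reserved for the teacher (hypothetical labels).
HUMAN_ONLY = {"emotional_support", "ethical_guidance", "cultural_interpretation"}


@dataclass(frozen=True)
class Event:
    category: str         # e.g. "content_hint", "emotional_support"
    ai_attempts: int      # automated interventions already made for this event
    ai_confidence: float  # system's confidence in its own response, 0-1 (assumed)


def route(event: Event, max_ai_attempts: int = 2, min_confidence: float = 0.7) -> str:
    """Return "ai" if the system may intervene automatically, else "teacher"."""
    if event.category in HUMAN_ONLY:
        return "teacher"
    if event.ai_attempts >= max_ai_attempts:
        return "teacher"  # automated help has not worked; escalate
    if event.ai_confidence < min_confidence:
        return "teacher"  # too uncertain to act without a human
    return "ai"


print(route(Event("content_hint", ai_attempts=0, ai_confidence=0.9)))        # ai
print(route(Event("emotional_support", ai_attempts=0, ai_confidence=0.95)))  # teacher
```

Writing the rules out this way makes the division of labour inspectable, so teachers can adjust the limits during the Development phase instead of relying on opaque system defaults.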

Development Phase: Teachers configure AI systems with appropriate content, prompts, and parameters. This includes loading subject-specific materials into adaptive platforms, scripting Socratic dialogue prompts for chatbots, and setting thresholds for dashboard alerts. Teachers draft intervention protocols for the alerts they anticipate, pre-planning responses to common scenarios so that they can act quickly and effectively during instruction. They also develop assessment criteria aligned with ICAP progression goals, specifying indicators of engagement quality as well as content mastery.
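
Alert thresholds and their pre-planned responses can be thought of as a small configuration table. The Python sketch below assumes two hypothetical metrics (minutes spent mostly in Passive mode and consecutive incorrect practice items); the metric names, threshold values and the fired_alerts function are illustrative, while the two responses echo examples used elsewhere in this paper (individual check-ins and targeted mini-lessons).

```python
# Hypothetical alert thresholds a teacher might set in the Development Phase,
# each paired with the pre-planned response the teacher intends to use.
ALERTS = {
    "passive_minutes": (10, "check in individually"),
    "wrong_in_a_row": (3, "offer a targeted mini-lesson"),
}

def fired_alerts(metrics: dict[str, float]) -> list[tuple[str, str]]:
    """Return (alert name, planned response) for every threshold the metrics reach."""
    return [
        (name, response)
        for name, (threshold, response) in ALERTS.items()
        if metrics.get(name, 0) >= threshold
    ]

print(fired_alerts({"passive_minutes": 12, "wrong_in_a_row": 1}))
# [('passive_minutes', 'check in individually')]
```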

Implementation Phase: Teachers launch AI-supported activities while monitoring the dashboard in real time, remaining aware of both system status and students who may need support beyond what AI provides. They make responsive instructional adjustments based on dashboard data and direct observation, balancing the planned lesson structure with adaptive flexibility. Throughout, teachers balance delegation to AI with direct instruction, recognising that some pedagogical functions (emotional support, ethical guidance, cultural interpretation) require human presence.

Evaluation Phase: Teachers analyse learning outcomes using AI-generated analytics while exercising critical judgment about which metrics meaningfully indicate educational success. Quantitative data (assessment scores, engagement time, ICAP level progression) is supplemented with qualitative observations (student attitudes, quality of discourse, social-emotional indicators). Evaluation examines not only academic outcomes but also impacts on student motivation, development of collaborative skills, equity of participation, and any unintended consequences such as technology dependency or reduced teacher-student relationship quality. This comprehensive evaluation informs iterative refinement of AI integration approaches.

AI Technologies and Mechanisms Supporting ICAP Transitions

Three Categories of AI Technologies

Drawing on Vygotsky’s (1978) zone of proximal development, AI systems can function as adaptive scaffolding tools that respond to individual learner needs. Jose et al. (2025) propose that effective educational AI must decrease extraneous cognitive load while sustaining engagement, support higher-order thinking, and promote learner autonomy. This theoretical framework guides the selection of AI technologies for the Co-Pilot system. The AI Co-Pilot framework employs three categories of AI technologies, each representing a core technological capability that serves a distinct function in supporting student engagement progression while operating within appropriate limitations and under teacher oversight.

Adaptive Learning Platforms use machine learning algorithms to personalise learning pathways based on continuous performance data (Kabudi et al., 2021). These systems adjust content difficulty, pacing, and instructional approach dynamically, responding to individual students’ mastery levels and learning patterns (Xie et al., 2019). Example: DreamBox Learning, which now serves more than six million students across the United States and Canada, offers mathematics and reading programmes; company statements report that its system analyses roughly 50,000 learner-interaction data points per student per hour to drive real-time personalisation. For empirical evidence, see a recent conceptual replication in early-elementary mathematics (Foster, 2023) and a randomised trial of Reading Plus (now distributed as DreamBox Reading Plus) showing gains in reading efficiency and achievement (Spichtig et al., 2019). In the AI Co-Pilot framework, such platforms support the Foundation Building phase (Passive → Active) by providing personalised practice opportunities with immediate feedback, allowing students to master foundational content at their own pace before progressing to more demanding interactive modes.

Conversational AI Systems use natural language processing (NLP) to engage students in dialogue, answer questions, and provide guidance (Ait Baha et al., 2024). These range from relatively constrained educational chatbots with scripted responses to more sophisticated systems built on large language models with greater flexibility (Baidoo-Anu & Owusu Ansah, 2023). Example: Khan Academy’s Khanmigo, built on GPT-4 (Khan, 2023), acts as both tutor and teaching assistant. It guides students through problems step by step, encouraging critical thinking rather than supplying direct answers. The system engages in Socratic dialogue, prompting students to explain their reasoning (Khan Academy, 2023). In the AI Co-Pilot framework, conversational AI primarily supports the Guided Construction phase (Active → Constructive) by facilitating private dialogue in which students practise explanation and question-asking without fear of public embarrassment. Research on intelligent tutoring systems demonstrates that machine learning models can generate personalised hints and explanations based on specific errors and knowledge gaps (Kochmar et al., 2020). However, current conversational AI faces significant limitations, including factual errors, cultural bias, and privacy concerns, which are addressed in the Technical Limitations and Risk Mitigation Strategies section.

Learning Analytics and Natural Language Processing Tools analyse student work, participation patterns, and discourse quality, providing teachers with insights through dashboards (Bhattacharya et al., 2024; Hasnine et al., 2023). NLP tools can analyse written explanations for conceptual understanding, identify common misconceptions, and detect patterns in student questioning. Learning analytics tools aggregate data across multiple students and time points, revealing trends that inform instructional decisions. By processing extensive interaction data, AI can build a more nuanced picture of cognitive engagement than classroom observation alone provides (Ouyang & Zhang, 2024). In the AI Co-Pilot framework, these tools support teacher orchestration across all phases by generating the metrics displayed in the Learning Analytics Dashboard, enabling informed pedagogical decisions.

Core Mechanisms Supporting ICAP Transitions

The framework employs a wide range of specific AI functions at each stage, as detailed in Table 1. This section focuses on six core, cross-cutting mechanisms that are fundamental to driving the overall engagement progression.

Mechanism 1: Adaptive Content Sequencing. The system personalises learning pathways based on continuous performance data, adjusting difficulty, pacing, and content emphasis to maintain an appropriate level of challenge. This mechanism operates primarily in the Foundation Building phase, ensuring that students master prerequisite knowledge before attempting more cognitively demanding tasks. By allowing individualised rates of progression, it respects the cultural emphasis on thorough foundational mastery while preventing students from remaining in Passive mode longer than necessary.
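
The core logic of adaptive sequencing can be illustrated with a deliberately simple rule-based controller. Commercial platforms such as those described above use machine learning models rather than a fixed rule, so the Python sketch below is a stand-in rather than a description of any real system; the StaircaseSequencer class, the five-level scale, the two-correct step-up rule and the 80% mastery window are all assumptions.

```python
from collections import deque

class StaircaseSequencer:
    """Hypothetical rule-based difficulty controller (not a machine-learning model).

    Difficulty steps up after `up_after` consecutive correct answers and steps
    down after any incorrect answer, staying within levels 1..`levels`.
    """

    def __init__(self, levels: int = 5, start: int = 1, up_after: int = 2, window: int = 5):
        self.levels = levels
        self.level = start
        self.up_after = up_after
        self.streak = 0
        self.recent = deque(maxlen=window)  # most recent outcomes, for the mastery check

    def record(self, correct: bool) -> int:
        """Update difficulty after one answer and return the level of the next item."""
        self.recent.append(correct)
        if correct:
            self.streak += 1
            if self.streak >= self.up_after:
                self.level = min(self.level + 1, self.levels)
                self.streak = 0
        else:
            self.streak = 0
            self.level = max(self.level - 1, 1)
        return self.level

    def mastered(self, threshold: float = 0.8) -> bool:
        """True once the window is full and at least `threshold` of it is correct."""
        full = len(self.recent) == self.recent.maxlen
        return full and sum(self.recent) / len(self.recent) >= threshold


seq = StaircaseSequencer()
print([seq.record(ok) for ok in [True, True, False, True, True]])  # [1, 2, 1, 1, 2]
print(seq.mastered())                                             # True (4 of 5 correct)
```

The mastery check corresponds to the gating described above: a student would move on from foundational practice only once recent performance is consistently strong, rather than after a fixed amount of time.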

Mechanism 2: Private Socratic Dialogue. AI chatbots engage students in one-on-one dialogue, using strategic questioning that promotes self-explanation and conceptual connection. Unlike public classroom questioning, which can trigger face concerns, private AI dialogue offers a psychologically safe space for exploratory thinking. Rather than replacing teachers, such chatbots can reduce learning anxiety (Chiu et al., 2023), easing the social pressures associated with public classroom interaction while students develop dialogic capabilities. The system asks questions such as ‘Can you explain why you think that?’ or ‘How does this connect to what we learned yesterday?’, prompting constructive cognitive processes with little social risk. This mechanism is central to the Guided Construction phase, building students’ confidence in generating explanations before they are asked to articulate them publicly.

Mechanism 3: Anonymous Peer Interaction. AI platforms facilitate anonymous or pseudonymous peer question-answering and discussion, reducing face concerns while enabling practice in collaborative knowledge construction. Students can pose questions they fear might seem foolish, answer peers’ questions without worrying about being publicly wrong, and engage in the tentative hypothesis-testing that precedes confident knowledge claims. This mechanism bridges individual constructive work and full collaborative interaction, allowing students to experience the benefits of peer learning while cultural safeguards remain in place.
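
One common way to implement pseudonymous participation is to derive each display name from the student ID with a keyed hash, so that peers cannot link a pseudonym to a classmate. The Python sketch below uses HMAC-SHA256 from the standard library; the pseudonym function, the ‘Learner-’ prefix, the per-session key and the option for the teacher to re-identify a student are assumptions for illustration, not features of any specific platform.

```python
import hashlib
import hmac
import secrets

def pseudonym(student_id: str, session_key: bytes, length: int = 8) -> str:
    """Derive a stable per-session pseudonym from a student ID with HMAC-SHA256.

    Peers see only the pseudonym and cannot recompute it without `session_key`.
    A teacher holding the key can recompute it for each student on the class
    roster if a safeguarding concern requires re-identification.
    """
    digest = hmac.new(session_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "Learner-" + digest[:length]

key = secrets.token_bytes(32)  # a fresh random key for each session or activity
print(pseudonym("S2025-014", key) == pseudonym("S2025-014", key))  # True: stable within a session
```

Generating a new key for each activity means that pseudonyms cannot be linked across sessions, which limits how much a persistent anonymous identity can reveal about an individual student over time.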

Mechanism 4: Intelligent Group Formation. Machine learning algorithms analyse student characteristics, including knowledge levels, personality traits, learning preferences, and cultural comfort with interaction, to form well-balanced collaborative groups. The system avoids both excessive homogeneity (which limits diversity of perspective) and excessive heterogeneity (which can create communication barriers or unequal participation). By composing groups deliberately, this mechanism increases the likelihood of productive collaboration while reducing common group-work problems.
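
A simple baseline for balanced group composition is ‘snake’ (serpentine) assignment by a single score, which spreads stronger and weaker students evenly across groups. The Python sketch below is a heuristic stand-in for the machine learning approach described above and considers only one characteristic (a knowledge score); the snake_groups function, the scores and the student names are hypothetical.

```python
def snake_groups(scores: dict[str, float], group_size: int = 4) -> list[list[str]]:
    """Form mixed-ability groups by serpentine ('snake') assignment.

    Students are ranked by score and dealt to groups 0, 1, ..., k-1, then
    k-1, ..., 0, and so on, so each group receives a spread of stronger and
    weaker members. Only one characteristic (the score) is considered here.
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    n_groups = max(1, -(-len(ranked) // group_size))  # ceiling division
    groups: list[list[str]] = [[] for _ in range(n_groups)]
    for i, student in enumerate(ranked):
        rnd, pos = divmod(i, n_groups)
        idx = pos if rnd % 2 == 0 else n_groups - 1 - pos
        groups[idx].append(student)
    return groups

scores = {"a": 90, "b": 85, "c": 80, "d": 75, "e": 70, "f": 65, "g": 60, "h": 55}
print(snake_groups(scores))  # [['a', 'd', 'e', 'h'], ['b', 'c', 'f', 'g']]
```

In this example both groups have the same total score (290). For a class of 35 with groups of four, the function returns nine groups, eight of four students and one of three. Balancing additional characteristics, such as comfort with interaction, would require a multi-criteria method rather than this single-score heuristic.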

Mechanism 5: Real-time Collaborative Facilitation. AI systems monitor group work through analysis of conversation patterns, contribution equity, and task progress. They provide structured conversational prompts that support productive dialogue, such as ‘What does everyone think about this approach?’ or ‘Can someone build on what Zhang just said?’ They clarify roles when ambiguity emerges and mediate disagreements by reframing conflicts in less confrontational terms. This mechanism respects cultural preferences for harmony while enabling substantive intellectual engagement that might otherwise be avoided.
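
Contribution equity can be approximated by comparing each member’s share of conversational turns with an equal share. The Python sketch below flags members whose share falls below half of an equal share; turn counts are only a crude proxy (word counts or speaking time are alternatives), and the function names, the 0.5 ratio and the student names other than Zhang are hypothetical.

```python
from collections import Counter

def participation_shares(turns: list[str], members: list[str]) -> dict[str, float]:
    """Fraction of conversational turns taken by each member (0.0 if silent)."""
    counts = Counter(turns)
    total = len(turns) or 1  # avoid dividing by zero before anyone has spoken
    return {m: counts[m] / total for m in members}

def quiet_members(turns: list[str], members: list[str], ratio: float = 0.5) -> list[str]:
    """Members whose share falls below `ratio` times an equal share (1 / group size)."""
    equal_share = 1 / len(members)
    shares = participation_shares(turns, members)
    return [m for m in members if shares[m] < ratio * equal_share]

turns = ["Zhang"] * 6 + ["Li"] * 3 + ["Chen"]
print(quiet_members(turns, ["Zhang", "Li", "Chen", "Wang"]))  # ['Chen', 'Wang']
```

A flag of this kind would prompt either an AI-generated invitation such as ‘What does everyone think about this approach?’ or, as in the classroom scenario below, a quiet check-in by the teacher.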

Mechanism 6: Dashboard-Mediated Teacher Orchestration. Learning analytics visualise real-time classroom dynamics, aggregating data from all the other mechanisms to provide teachers with actionable insights. The dashboard makes otherwise invisible cognitive processes visible as patterns, enabling teachers to identify students who need support, recognise suitable moments for transitions between ICAP levels, and evaluate whether intended learning is occurring. This mechanism keeps human judgment at the centre of pedagogical decision-making while enhancing teacher capability through AI-augmented perception.
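
At its simplest, a dashboard view of ICAP engagement aggregates each student’s most recently inferred mode into class-level counts and a short list of students to check on. The Python sketch below assumes that an upstream component has already classified each student’s activity into an ICAP mode, which is the genuinely difficult part and is not shown; the Mode enum and class_summary function are hypothetical, and the numbers mirror Ms. Wang’s Foundation Building example in the scenario that follows.

```python
from collections import Counter
from enum import Enum

class Mode(Enum):
    PASSIVE = "Passive"
    ACTIVE = "Active"
    CONSTRUCTIVE = "Constructive"
    INTERACTIVE = "Interactive"

def class_summary(latest: dict[str, Mode]) -> tuple[Counter, list[str]]:
    """Count students per ICAP mode and list those currently in Passive mode.

    `latest` maps each student to the mode inferred from their most recent
    activity; how that inference is made is assumed and not shown here.
    """
    counts = Counter(latest.values())
    passive = sorted(s for s, mode in latest.items() if mode is Mode.PASSIVE)
    return counts, passive

# 29 Active and 6 Passive students, as in the Foundation Building scenario.
latest = {f"s{i:02d}": Mode.ACTIVE for i in range(29)}
latest.update({f"s{i:02d}": Mode.PASSIVE for i in range(29, 35)})
counts, passive = class_summary(latest)
print(counts[Mode.ACTIVE], counts[Mode.PASSIVE], passive)
# 29 6 ['s29', 's30', 's31', 's32', 's33', 's34']
```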

Implementation Scenarios and Preliminary Practitioner Feedback

Scenario: Ms. Wang’s Tang Poetry Analysis Lesson

The following illustrative scenario demonstrates how the AI Co-Pilot framework might be implemented in practice. While based on authentic secondary classroom contexts and informed by preliminary practitioner feedback, this scenario represents a designed example rather than documented research data.

Ms. Wang, a Chinese language teacher at a Shanghai secondary school, implements the AI Co-Pilot framework in her unit on classical poetry analysis, specifically focusing on Tang Dynasty poems. The lesson illustrates how the framework supports progression through ICAP stages while respecting Confucian-heritage values.

Foundation Building Phase (Passive → Active): Students begin by reading selected Tang poems with AI-curated annotations explaining historical context, literary devices, and classical Chinese vocabulary. The adaptive platform adjusts content difficulty based on students’ prior performance in classical Chinese comprehension. Students are prompted to highlight key literary devices and mark unfamiliar vocabulary in their digital texts. Ms. Wang monitors her dashboard, observing that 29 of 35 students are successfully engaging in Active behaviours (highlighting, annotating), while six students show predominantly Passive patterns. She makes a note to check in with these students individually.

Guided Construction Phase (Active → Constructive): Students engage with an AI chatbot that poses Socratic questions about poetic imagery and themes: ‘What emotions does the poet convey through the image of falling autumn leaves? How does this connect to the poem’s historical context?’ Students type responses privately, receiving AI-generated feedback that prompts deeper interpretation: ‘You mentioned loneliness—can you identify specific word choices that create this mood?’ Ms. Wang monitors dashboard metrics showing which students successfully generate interpretive explanations versus those providing only literal paraphrasing. She observes that students who rarely volunteer interpretations in class discussion are producing thoughtful analytical responses in private AI dialogue.

Structured Collaboration Phase (Constructive → Interactive): The AI system forms small groups of four students, balancing demonstrated comprehension levels with collaborative work preferences identified from previous group activities. Groups receive a collaborative task: ‘Compare how three different Tang poets express the theme of homesickness. Create a comparative analysis identifying both commonalities and distinctive approaches.’ The AI provides structured prompts (‘What imagery does each poet use? What similarities do you notice?’) and monitors participation patterns. When the dashboard alerts Ms. Wang that one group has unequal participation, she approaches quietly to encourage balanced contribution.

Dialogic Co-construction Phase (Interactive - High Level): Groups engage in structured debate about whether Tang poetry’s value lies primarily in its historical documentation or its universal human emotions, with AI-generated argument maps visualising each group’s arguments and textual evidence. Ms. Wang facilitates whole-class discussion, using dashboard data to invite students who developed strong textual arguments in small groups but might not volunteer for public discussion. The AI’s argument mapping helps students see connections between different interpretative approaches.

Independent Interactive Learning: For homework, students collaboratively create annotated anthologies of self-selected Tang poems, with AI providing metacognitive prompts: ‘Have you considered alternative interpretations of this line? What textual evidence supports your analysis?’ The system monitors progress and alerts Ms. Wang only when groups encounter significant obstacles, maintaining minimal intervention that encourages student self-direction.

Practitioner Feedback on Framework Feasibility

During framework development, preliminary feedback was gathered from practising school teachers to inform conceptual refinement and assess perceived feasibility. This feedback collection was non-research in nature and involved no systematic data collection, recording of personally identifiable information, or formal research protocols. Teachers were invited to review the framework concept and share their professional perspectives on its practical applicability and alignment with classroom realities.

Practitioners consistently noted that the framework addresses genuine challenges they encounter in contemporary Chinese classrooms. Teachers emphasised the difficulty of managing the cognitive complexity of interactive learning in large classes, typically of 40–50 students. The tension between policy mandates for student-centred learning and the practical constraints of classroom management was identified as a persistent concern.

Cultural dynamics were recognised as significant factors shaping student participation patterns. Practitioners acknowledged that face-saving concerns, respect for hierarchy, and social harmony values influence willingness to engage in public questioning, debate, or the risk-taking behaviours central to interactive learning. The framework’s emphasis on creating psychologically safe spaces through private AI-mediated practice was viewed as a culturally appropriate approach to scaffolding participation.

The Learning Analytics Dashboard concept generated particular interest among practitioners. Teachers expressed that real-time visibility into student engagement levels and conceptual understanding would enable more responsive and equitable instruction. The capacity to identify students requiring support before summative assessments was noted as addressing a fundamental teacher challenge in large classroom contexts.

Practitioners also demonstrated awareness of AI system limitations, expressing appropriate caution about over-reliance on automated systems for complex pedagogical judgments. Teachers emphasised the importance of maintaining human oversight, particularly for assessing creative or original student thinking that may not conform to algorithmic patterns. This perspective aligns with the framework’s positioning of AI as an augmentation of, rather than a replacement for, teacher judgment.

Professional development needs were consistently identified as a prerequisite for successful implementation. Teachers acknowledged requiring training in dashboard data interpretation, AI-integrated lesson design, and strategies for maintaining pedagogical authority while collaborating with intelligent systems. Infrastructure concerns, including reliable internet connectivity, device availability, and technical support, were also noted as implementation considerations.

This initial practitioner feedback informed several framework design decisions, including the emphasis on the teacher’s orchestration role, the explicit acknowledgment of cultural barriers, the integration of professional development guidance through the adapted ADDIE model, and the transparent discussion of technical limitations and infrastructure requirements in the Ethical Considerations and Implementation Constraints section. While this feedback provides useful guidance for refining the framework, systematic empirical validation through rigorous research studies remains necessary to establish its effectiveness and identify necessary adaptations across diverse implementation contexts.

Ethical Considerations and Implementation Constraints

Age Recommendation and UNESCO Guidelines

UNESCO guidelines recommend against generative AI use by children under 13 years of age, reflecting concerns about developmental readiness, data privacy, and potential impacts on cognitive and social development (Miao & Holmes, 2023). Several considerations support this threshold. First, younger children are still developing the critical thinking skills necessary to evaluate AI-generated information for accuracy, detect bias, and distinguish reliable from unreliable sources. The cognitive demands of metacognitive monitoring (assessing one’s own understanding and the limitations of AI systems) are developmentally challenging for young children (Kasneci et al., 2023). Second, generative AI systems typically require substantial data collection to personalise interactions and improve performance. Young children lack the full capacity to understand the implications of data sharing and to provide informed consent, creating significant privacy risks.

Third, over-reliance on AI assistance during formative years may impede development of independent problem-solving capabilities, persistence through difficulty, and intrinsic motivation to learn. If children become habituated to AI providing answers whenever difficulty arises, they may not develop crucial self-regulatory learning skills. Fourth, young children are developing interpersonal skills best nurtured through direct human interaction. Excessive AI-mediated communication might compromise development of empathy, emotional regulation, and social understanding that emerge through face-to-face relationships with teachers and peers.

In line with these guidelines, the AI Co-Pilot framework is likely to be better suited to secondary education contexts, where students possess greater cognitive maturity and the digital literacy needed to engage with AI systems appropriately.

Any attempt to adapt the framework’s principles for elementary students (ages 5–12) would require a substantially different approach with minimal direct AI interaction: teachers would maintain complete control over AI content selection, students would view AI-generated materials only under direct supervision, and the primary emphasis would remain on human-facilitated rather than AI-mediated learning.

Technical Limitations and Risk Mitigation Strategies

Current AI systems have well-documented limitations requiring explicit mitigation strategies integrated throughout framework implementation.

Hallucinations and Factual Errors: Large language models occasionally generate plausible-sounding but factually incorrect information, a phenomenon termed ‘hallucination’ (Ji et al., 2023). In educational contexts, this poses serious risks, as students may learn incorrect information that is presented authoritatively. Mitigation strategies include: teachers verifying all AI-generated content before students are exposed to it; explicit instruction for students on cross-referencing AI information with textbooks, academic databases, and other authoritative sources; dashboard systems that flag potentially unreliable AI outputs based on confidence scores or consistency checks; and teachers modelling critical evaluation of AI-generated information, treating AI as a source to be questioned rather than an authority to be accepted uncritically.
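
A consistency check of the kind mentioned above can be sketched as follows: the same short factual question is answered several times independently, and the answer is flagged for teacher review when the samples disagree. The Python sketch below assumes the sampled answers are already available (how they are generated is not shown); the consistency_flag function and the 60% agreement threshold are hypothetical. Agreement does not guarantee correctness, since a model can be consistently wrong, so such a check can only supplement, not replace, teacher verification.

```python
from collections import Counter

def consistency_flag(answers: list[str], min_agreement: float = 0.6) -> tuple[str, float, bool]:
    """Flag an AI answer for teacher review when repeated samples disagree.

    `answers` holds several independently generated responses to the same short
    factual question (how they are obtained is assumed). Returns the most common
    normalised answer, its agreement rate, and whether it should be flagged.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    normalised = [a.strip().lower() for a in answers]
    top, count = Counter(normalised).most_common(1)[0]
    agreement = count / len(normalised)
    return top, agreement, agreement < min_agreement

print(consistency_flag(["Li Bai", "li bai ", "Du Fu", "Li Bai", "Li Bai"]))  # ('li bai', 0.8, False)
print(consistency_flag(["Li Bai", "Du Fu", "Wang Wei"])[2])                  # True
```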

Algorithmic Bias: AI systems trained on biased data can perpetuate or amplify societal inequities. Documented biases in AI systems include gender stereotypes, racial discrimination, and socioeconomic disadvantages (Sanderson et al., 2023). Mitigation strategies include regular bias audits of AI systems by independent reviewers, diverse representation in training data to reduce skewed patterns, teacher training to recognise and address AI bias when it occurs, student instruction on critically evaluating AI recommendations for potential bias, and explicit mechanisms for reporting and correcting identified bias, ensuring continuous improvement.

Social-Emotional Intelligence Gaps: AI lacks genuine empathy, a nuanced understanding of students’ emotional states, and the capacity for ethical reasoning about complex situations. Mitigation strategies include: assigning AI primarily to cognitive scaffolding while teachers address emotional support, motivation, and character development; protocols specifying when human intervention supersedes AI recommendations, particularly in situations involving student distress, conflict, or ethical dilemmas; continuous teacher monitoring to prevent over-reliance on AI for aspects of education requiring human judgment and emotional connection; and the explicit framework principle that AI augments rather than replaces human teaching, preserving the irreplaceable human elements of education.

Infrastructure Requirements and Equity Concerns: Effective AI implementation requires reliable internet connectivity, adequate computing devices, technical support infrastructure, and a reliable electricity supply. These requirements create potential equity barriers, particularly for rural schools and under-resourced districts. Mitigation strategies include: government investment in educational technology infrastructure as a prerequisite for AI integration; provisions for offline functionality where internet connectivity is unreliable; shared-device protocols ensuring that all students have access during school hours; technical support systems enabling rapid problem resolution; and explicit monitoring of whether AI implementation advantages already-privileged students and thereby exacerbates inequality.

Data Privacy and Security Protocols

Given that AI systems collect substantial student data, they require robust privacy protections aligned with relevant regulations, such as China’s Personal Information Protection Law (PIPL, 2021). These protections are guided by foundational principles, starting with data minimization. This principle mandates that only data essential for educational purposes be collected, avoiding unnecessary personal information. It also involves implementing automatic data deletion protocols after pedagogically relevant retention periods and resisting the temptation to collect data simply because technology enables it, thereby maintaining a strict focus on educational necessity.
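
Automatic deletion after a retention period can be expressed as a periodic filter over stored records. The Python sketch below assumes two hypothetical data categories with illustrative retention periods and drops any record whose category has no defined period, reflecting the minimisation principle; the Record type, the RETENTION table and the purge_expired function are assumptions. It only filters an in-memory list: an actual deployment would also have to delete data from storage and backups, and the sketch does not by itself establish compliance with the PIPL or any other regulation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical retention periods; actual values would be set by school policy and applicable law.
RETENTION = {
    "chat_transcript": timedelta(days=30),
    "practice_result": timedelta(days=365),
}

@dataclass
class Record:
    category: str
    created: datetime

def purge_expired(records: list[Record], now: datetime) -> list[Record]:
    """Keep only records that are still within their category's retention period.

    Records whose category has no defined retention period are dropped as well,
    since data without a stated educational purpose should not be kept.
    """
    return [
        r for r in records
        if r.category in RETENTION and now - r.created <= RETENTION[r.category]
    ]

now = datetime(2025, 6, 30)
records = [
    Record("chat_transcript", datetime(2025, 6, 1)),   # 29 days old: kept
    Record("chat_transcript", datetime(2025, 5, 1)),   # 60 days old: deleted
    Record("practice_result", datetime(2025, 1, 1)),   # 180 days old: kept
    Record("device_location", datetime(2025, 6, 29)),  # no stated purpose: deleted
]
print([r.category for r in purge_expired(records, now)])  # ['chat_transcript', 'practice_result']
```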

Equally critical are transparency requirements. This involves providing clear communication to students and parents about what data is collected, how it is used, who has access, and how long it is retained. Functionally, this requires implementing explicit opt-in consent procedures that offer a genuine opportunity to decline without educational penalty, creating accessible privacy policies in plain language, and ensuring students and parents can access collected data to verify its accuracy and understand system operations (Adams et al., 2023).

Security Measures: Implement encrypted data storage and transmission to prevent unauthorized access; establish restricted access controls limiting data viewing to those with legitimate educational need; conduct regular security audits identifying vulnerabilities; and maintain compliance with relevant data protection regulations, updating practices as regulations evolve.
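
Restricting data viewing to those with a legitimate educational need is often implemented as a deny-by-default table of roles and data categories. In the Python sketch below, the roles, categories and assignments are illustrative policy choices that a school would need to decide for itself, and the is_permitted function is hypothetical. A real system would also check that a student or parent is viewing only their own records, which this sketch omits.

```python
# Hypothetical need-to-know table: which roles may view each data category.
# The categories and role assignments are illustrative policy choices only.
ALLOWED_ROLES = {
    "engagement_metrics": {"class_teacher", "student_self"},
    "assessment_scores": {"class_teacher", "student_self", "parent"},
    "chat_transcript": {"class_teacher", "student_self"},
}

def is_permitted(role: str, category: str) -> bool:
    """Deny by default: allow access only if the role is listed for the category."""
    return role in ALLOWED_ROLES.get(category, set())

print(is_permitted("parent", "assessment_scores"))    # True
print(is_permitted("vendor", "chat_transcript"))      # False (role not listed)
print(is_permitted("class_teacher", "home_address"))  # False (category not listed)
```

Denying by default means that any new data category remains inaccessible until someone deliberately decides who needs it, which fits the principle of limiting access to legitimate educational need.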

Student Agency and Control: Enable students to understand what data AI systems collect about them through accessible dashboards showing the collected information; provide mechanisms for students to access, correct, or delete their data where appropriate; empower students to make informed decisions about their level of AI interaction rather than imposing mandatory participation; and teach students about digital privacy as a component of digital literacy, preparing them for the broader technology landscape.

Discussion: Implications, Limitations, and Future Directions

Theoretical Contributions

This paper makes three primary theoretical contributions to the educational technology and learning sciences literature. First, it extends the ICAP framework to AI-mediated learning in Confucian-heritage classrooms, proposing how AI can serve as a cultural mediator that supports cognitive engagement progression while respecting traditional values. While previous research has applied the ICAP framework to various learning contexts (Chi et al., 2018; Wekerle et al., 2024), little work has examined how cultural factors moderate ICAP transitions or how technology can address culturally specific barriers. The AI Co-Pilot framework argues that AI’s distinctive affordances (private practice spaces, anonymous interaction, adaptive scaffolding) can reduce cultural barriers to interactive learning without requiring students to abandon valued cultural norms.

Second, it integrates ICAP theory with dialogic teaching principles, showing how the two frameworks complement each other when AI provides scaffolding for dialogue practice. Previous scholarship has generally treated cognitive engagement frameworks and sociocultural learning theories as separate domains. This integration suggests that ICAP cognitive progression depends partly on the social infrastructure (psychological safety, turn-taking norms, collaborative protocols) that dialogic teaching emphasises, while the effectiveness of dialogic teaching depends on the quality of cognitive engagement that the ICAP framework specifies.

Third, it advances theory on human-AI collaboration in education (Holstein et al., 2019) by positioning teachers as learning orchestrators who use AI-generated insights to make informed pedagogical decisions, rather than as passive implementers of AI recommendations. This contrasts both with techno-optimistic narratives portraying AI as a replacement for human teaching and with techno-pessimistic narratives rejecting AI entirely. The framework illustrates how AI can augment teacher capability, by extending perceptual reach through dashboards, providing individualisation beyond human capacity, and enabling new pedagogical possibilities, while keeping human judgment, values, and relationships at the centre of educational practice.

Practical Implications for Policy and Practice

For policymakers, the framework provides structured guidance for AI integration that respects age guidelines while advancing educational innovation goals (e.g., Miao & Holmes, 2023). It suggests that effective AI integration requires substantial investment beyond technology acquisition, including: teacher professional development that prepares educators for an orchestrator role rather than traditional content delivery; infrastructure investment ensuring reliable internet, adequate devices, and technical support; systematic evaluation examining both benefits and risks rather than assuming that technology automatically improves learning; and regulatory frameworks that protect student privacy while enabling pedagogically valuable data use.

For practitioners, the framework offers concrete tools that teachers can implement systematically: the Teacher Orchestration Dashboard providing actionable classroom insights; the adapted ADDIE model guiding AI-enhanced lesson design; ICAP progression sequences structuring student engagement development; and explicit strategies for addressing cultural barriers to interactive learning. Preliminary practitioner feedback suggests teachers find the framework culturally appropriate and pedagogically valuable, though implementation requires significant initial time investment that must be supported through professional development and planning time allocation.

For technology developers, the framework specifies the AI capabilities most valuable in educational contexts: adaptive content sequencing that responds to individual learning patterns; Socratic dialogue facilitation that promotes self-explanation and conceptual connection; intelligent group formation that optimises conditions for collaborative learning; real-time collaborative support that scaffolds productive peer interaction; and dashboard analytics that visualise classroom dynamics. Critically, it emphasises the importance of designing AI systems that augment rather than replace human teaching, respecting teacher expertise and maintaining human relationships as the foundation of education.

For researchers, the framework generates testable hypotheses about mechanisms through which AI supports learning in specific cultural contexts. It provides structure for systematic investigation of questions including which AI capabilities most effectively support specific ICAP transitions, how cultural factors moderate AI effectiveness, what teacher practices optimise AI orchestration, and how AI-mediated learning affects long-term outcomes including skill development, motivation, and learning dispositions.

Limitations and Contextual Boundaries

Several limitations constrain this framework’s applicability and interpretability. First, it is designed specifically for Confucian-heritage educational contexts. Its application is most appropriate for learners at the secondary school level and above, in line with ethical guidelines for generative AI. Direct application to other cultural contexts or age groups requires substantial modification. The specific cultural barriers addressed (face concerns, authority deference, collectivist harmony preferences) may not be primary challenges in different cultural contexts, requiring identification of context-specific barriers and corresponding adaptations. Similarly, younger students require different developmental considerations that this framework does not address.

Second, the framework assumes availability of technological infrastructure (reliable internet, computing devices, technical support) that may not exist in all schools. In China, urban-rural digital divides are substantial, with rural schools often lacking resources necessary for effective technology integration. Global digital divides are even more pronounced. Framework implementation without adequate infrastructure risks creating new inequities rather than reducing existing ones.

Third, effective implementation requires teachers with both technological proficiency and pedagogical expertise in interactive learning methods. Many teachers were prepared for traditional instruction and may lack preparation for the orchestrator role. Substantial professional development investment is necessary, including not only technical training but also pedagogical preparation for facilitating collaborative learning, interpreting learning analytics, and maintaining appropriate AI-human boundaries.

Fourth, the framework is primarily theoretical. Preliminary practitioner feedback suggests that it is conceptually sound and practically feasible. However, systematic empirical validation is needed to establish its effectiveness and identify necessary adaptations. Rigorous studies using experimental designs, longitudinal data collection, and diverse implementation contexts are required. These studies should evaluate impacts on learning outcomes, skill development, and student motivation, as well as potential unintended consequences.

Fifth, because AI technology is developing rapidly, the specific AI capabilities described here may evolve or become obsolete. The framework’s principles (cultural responsiveness, pedagogical progressions, and teacher orchestration) are more durable than any particular technological implementation. Nevertheless, specific mechanisms may need updating as AI capabilities change.

Finally, the framework cannot address all the challenges of educational transformation. Broader systemic changes are also necessary. These include assessment practices that value collaborative knowledge construction rather than only individual test performance; curriculum design that allows time for interactive learning rather than prioritising coverage of excessive content; shifts in educational culture that value questioning and exploration rather than only correct answers; and professional norms that support teacher innovation rather than standardized compliance.

Future Research Directions

Future research should pursue several directions to extend theoretical understanding and establish an empirical evidence base.

Longitudinal studies examining sustained impacts of AI Co-Pilot implementation on student learning outcomes, engagement, and skill development are essential. Such studies should employ rigorous experimental or quasi-experimental designs with appropriate control groups, random or matched assignment, pre-post assessments, and multiple outcome measures including standardized achievement, collaborative skill development, critical thinking, motivation, and learning dispositions. Implementation periods should extend over full academic years or longer to examine sustained rather than only short-term effects.

Comparative studies examining framework effectiveness across different cultural contexts, age groups, and subject domains would clarify the boundaries of its generalisability and the adaptations it needs. Such research might implement the framework in other East Asian contexts (Japan, South Korea, Singapore) to examine whether similar cultural barriers emerge. It might also adapt the framework for Western contexts to identify which components are culturally specific and which are generally valuable. A further option is to test implementation across different age groups with appropriate developmental modifications.

Research on professional development approaches that effectively prepare teachers for AI-orchestrated learning would inform implementation strategies. Relevant questions include the following. What combination of technical training, pedagogical preparation, and ongoing support enables effective implementation? How do teachers develop orchestration expertise over time? What support structures sustain teacher innovation when implementation becomes difficult? How do teachers’ beliefs about the role of AI influence implementation quality?

Studies examining how AI-mediated learning affects students’ relationships with human teachers, peers, and learning itself would illuminate potential unintended social-emotional consequences. One question is whether AI-mediated dialogue builds confidence for human dialogue or instead creates dependency on technology-mediated communication. Research might also examine how students perceive the teacher’s role when AI provides substantial instructional support. Other questions include whether AI-facilitated peer collaboration transfers to non-AI contexts and how AI mediation shapes students’ identities as learners.

Investigating how specific AI capabilities affect learning would refine the framework’s mechanisms. Experimental studies might isolate individual components (adaptive sequencing, Socratic dialogue, and group facilitation). Such studies could then examine the independent and interactive effects of these components on engagement and learning outcomes.

Finally, critical examination of equity implications is essential for socially just implementation. Research should investigate who benefits most from AI-mediated learning and who might be disadvantaged, how existing achievement gaps are affected by AI integration, whether AI access creates new forms of educational inequality, and how to ensure equitable access and outcomes across diverse student populations.

Conclusion

The integration of AI in education presents a complex opportunity that demands careful, principled implementation. This paper has proposed the AI Co-Pilot framework as a theoretically-grounded and culturally-responsive approach to this challenge. The framework addresses the interplay of cognitive, social, and cultural dynamics in the classroom. Its central contribution is its conceptualisation of AI as a ‘co-pilot’ that functions as a cultural mediator, not as a replacement for the teacher. The teacher is positioned as a ‘learning orchestrator’. This illustrates how AI can augment pedagogical capacity by extending teachers’ perceptual reach and scaffolding complex interactions. At the same time, human judgment, relationships, and ethical reasoning remain at the centre of practice. The model offers a theoretical foundation for technology integration and shifts the focus from tool-centric adoption to the service of authentic pedagogical goals. As a theoretical framework, its principles must be validated through rigorous empirical research, including longitudinal and comparative studies, to establish a firm evidence base. The AI Co-Pilot framework thus represents a principled and adaptable starting point for the ongoing work of integrating AI responsibly, ensuring that human agency and values remain at the core of education.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Research Grants Council, University Grants Committee (Grant No.: 17605221) and the Standing Committee on Language Education and Research (Grant No.: 1047-2050-8080-9020-00053), Hong Kong, China.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.