Abstract

As media planning becomes increasingly data-intensive and algorithmically driven, advertising educators face the challenges of preparing students to operate effectively within hybrid human–Artificial Intelligence (AI) workflows. This paper introduces a human–AI collaborative model for teaching undergraduate media planning, centered on a custom GPT tool, the Media Planning AI Assistant, embedded within a semester-long course. Rather than functioning as an autonomous decision-maker, the Assistant serves as a generative partner that models industry-standard workflows while requiring students to construct the knowledge base, interpret research inputs, and critically evaluate outputs. Using a mock national campaign, the paper demonstrates how students engage in iterative human–AI collaboration across audience analysis, competitive assessment, media strategy, budgeting, and scheduling. The study offers a practical pedagogical framework for integrating generative AI into media planning education, designed to reduce cognitive load, scaffold strategic reasoning, and support ethical AI literacy without displacing human judgment.

Introduction

Human–machine collaboration is increasingly demonstrating how advertising and integrated communications workflows can be reimagined to enhance effectiveness, efficiency, and creative capacity across both technological systems and human practitioners (Li, 2019). This is particularly consequential in media planning, where sophisticated artificial intelligence (AI) technologies can evaluate behavioral patterns and shifting media trends to guide the development of more precise and audience-responsive solutions (Qin & Jiang, 2019). Media planning now requires evaluating far greater volumes of data than previously encountered (Katz, 2025), and most real-time data flows, including behavioral signals, pricing, bids, media options, and impressions, exceed human capabilities to support efficient and unbiased decision-making (Ugbaja et al., 2024).

Given this ongoing shift, it is essential that teaching align with emerging developments in AI and, where appropriate, anticipate them to ensure students are prepared for advanced roles in media planning (Huh et al., 2023). Reimagining student learning now requires a strategic reframing of the practices simulated in coursework. As media practice increasingly transitions toward collaborative human–AI planning workflows, instructional design must also shift to mirror the co-planning processes that define modern media and advertising research practices.

Despite growing recognition of AI’s influence on professional media planning, instructional approaches have not fully evolved to reflect the human–AI workflows that characterize industry practice. Although generative AI has entered higher education discussions (Kasneci et al., 2023; Zawacki-Richter et al., 2019), much of this work focuses on AI as a writing or tutoring aid rather than as part of discipline-specific workflows. Many media planning courses still emphasize human-driven research synthesis and strategy development, often relying on static datasets or simulations that do not reflect algorithmically mediated decision systems. As a result, students learn foundational concepts without fully experiencing how strategic reasoning operates in AI-augmented environments. The pedagogical challenge is not whether to introduce AI tools, but how to design structured learning experiences that model human–AI co-planning while preserving critical thinking and strategic accountability.

To address this gap, the purpose of this article is to introduce a human–AI collaborative model for teaching media planning, centered on a custom GPT tool, the Media Planning AI Assistant, integrated into a semester-long undergraduate media planning course. The tool simulates industry-standard workflows, requiring students to construct a knowledge base, interpret data, and critique AI outputs to reinforce conceptual and applied learning. By illustrating how a custom GPT supports research synthesis, strategic justification, and iterative refinement, we propose a pedagogical model to prepare students for the increasingly hybrid human–AI media planning environment. While this paper does not empirically test learning outcomes, we discuss anticipated instructional benefits, including reducing cognitive load and scaffolding strategic reasoning.

Background and Pedagogical Rationale

The rapid advancement of artificial intelligence (AI), computational advertising, and large-scale data systems has transformed media planning from a process focused on mass exposure to one centered on data-driven, individualized targeting (Araujo et al., 2020). Programmatic advertising, defined as the automated use of data to buy and place personalized digital ads, now dominates the digital ecosystem, with programmatic spending projected to account for more than 91% of all U.S. digital display advertising in 2024 (Lebow, 2024). As advertisers operate in this algorithmically managed environment, there is a growing consensus that professionals must possess strong analytical and computational thinking skills, including pattern recognition, logic-based modeling, and real-time decision-making (Brown-Devlin, 2021; Yang, 2023). However, most traditional media planning textbooks and simulation tools remain outdated, offering only limited coverage of programmatic systems or the strategic use of AI in campaign execution (Wu & Andrews, 2024).

In response to evolving industry demands, communication programs are increasingly integrating AI literacy and algorithmic thinking into their curricula. These approaches aim to demystify core AI concepts, such as machine learning, neural networks, and algorithmic bias, by making them accessible without requiring programming expertise (Brown-Devlin, 2021; Yang, 2023). Instruction focuses on helping students interpret how algorithms process data, recognize patterns, and generate insights—skills essential for navigating AI-driven environments. At the same time, students are encouraged to critically evaluate the ethical and societal implications of automation in advertising practice. Although these initiatives represent important progress in advancing AI literacy (Zawacki-Richter et al., 2019), much of the existing AI literacy pedagogy remains conceptually oriented, emphasizing awareness and interpretation rather than structured application within discipline-specific workflows (Kasneci et al., 2023). As a result, students may understand how algorithms function in theory but receive limited guidance on how to operationalize AI systems within the complex, multi-stage decision processes characteristic of media planning.

Parallel to this technical and ethical training, emerging models of human–AI collaboration reframe the role of advertising professionals not as competitors to AI, but as strategic partners. Human-in-the-loop and co-creation frameworks position students as managers of AI systems, responsible for directing generative tools, interpreting algorithmic outputs, and applying insights to campaign planning (Yang, 2023). Nevertheless, human–AI collaboration frameworks often emphasize role redefinition, positioning students as “managers” of AI systems, yet provide limited guidance for how collaboration unfolds in applied campaign contexts. Without structured scaffolding, AI tools risk being over-relied on as decision-makers or underutilized as superficial enhancements.

Experiential learning approaches, such as Kolb’s cycle, further support this shift by enabling students to actively experiment with AI tools, reflect on their outcomes, and iteratively refine their strategic decisions (Brown-Devlin, 2021). Collectively, these approaches emphasize knowledge integration, where students synthesize creative, technical, and strategic capabilities to work effectively and ethically alongside AI systems in modern advertising contexts.

Conversely, critics warn that AI might become a crutch that stifles critical thinking. Beyond eroding basic skills, there are deep-seated fears that algorithmic bias and unequal access to these tools will only worsen existing educational disparities (Matjie et al., 2026). Rather than overlooking these pitfalls, this approach uses a structured process to address them. Students are required to evaluate AI outputs and explain the logic behind their choices, ensuring they remain in control of the strategy. In this way, AI is reframed as a tool for deeper engagement and critical thinking in media planning.

Despite growing interest in AI-enhanced advertising education, significant gaps remain, particularly regarding media planning. Existing pedagogical research has largely concentrated on generative AI for creative production or analytics for consumer insights, offering limited guidance on how to teach the logic, sequencing, and interdependencies of media planning decisions themselves (Brown-Devlin, 2021; Yang, 2023). Even when hands-on simulations are introduced (e.g., Wu & Andrews, 2024), they often replicate technical interfaces without fully addressing how students develop strategic judgment within algorithmically mediated systems. Consequently, a key unresolved issue is how to design instructional models that both mirror contemporary programmatic workflows and intentionally scaffold higher-order reasoning, ethical evaluation, and strategic accountability. To address these limitations, our approach introduces a structured human–AI co-design model using a custom GPT tool embedded within a semester-long course. Rather than treating AI as a standalone assistant or autonomous decision-maker, we position it as a scaffolded generative partner within a defined planning workflow. This structure is intended to balance efficiency with accountability, ensuring that students remain responsible for knowledge construction, strategic justification, and ethical evaluation throughout the planning process.

Pedagogical Framework for Human–AI Collaboration in Media Planning Education

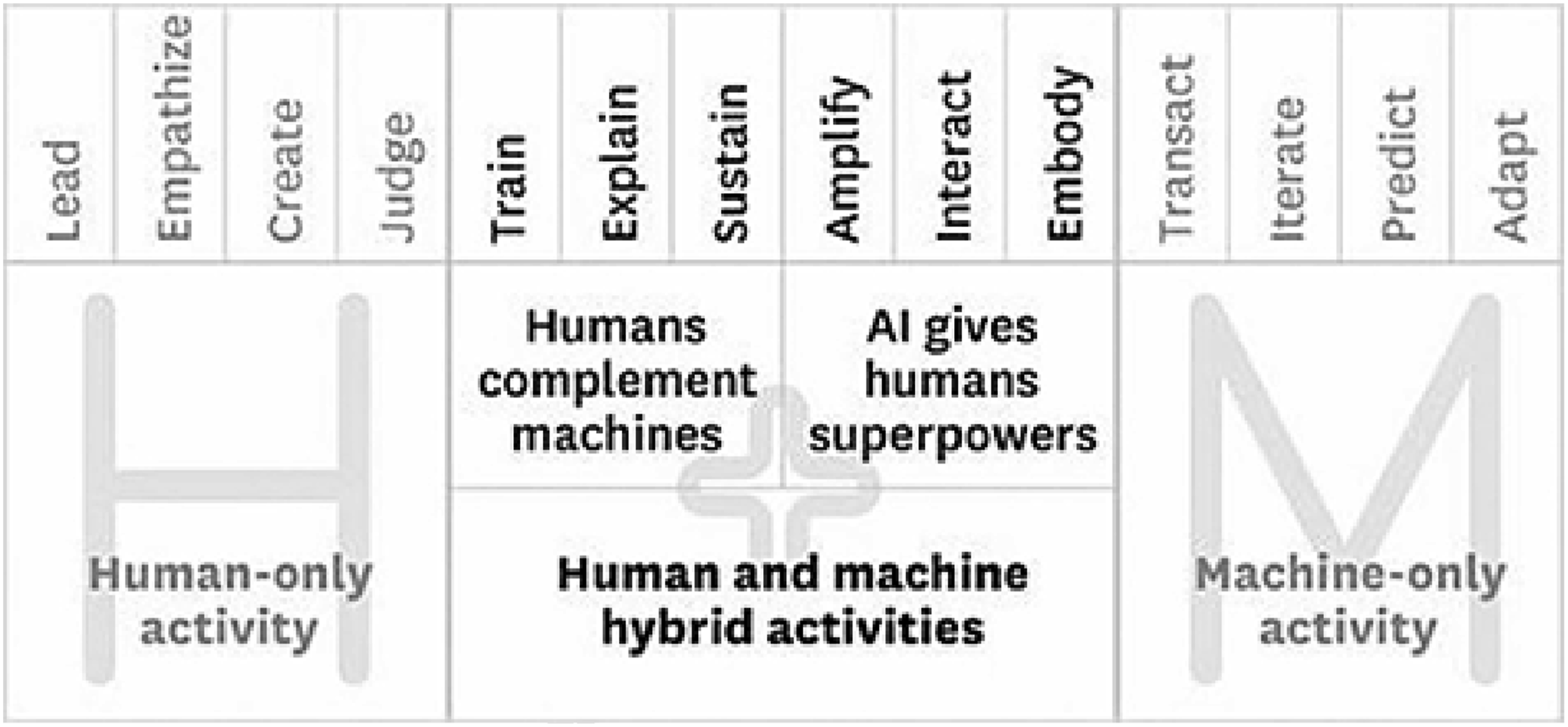

Building on this pedagogical rationale, custom GPTs offer a strategic opportunity to facilitate human–AI collaboration in ways that mirror contemporary media planning workflows. A custom GPT is especially critical because it enables the specific type of human–machine teaming depicted in the “Missing Middle,” where humans and AI contribute distinct but complementary strengths that neither can accomplish alone (Daugherty & Wilson, 2024; Masalaci et al., 2025). This conceptual grounding is illustrated in Figure 1, which presents the Human–Machine “Missing Middle” framework, adapted from Paul R. Daugherty and H. James Wilson’s “Missing Middle” framework.

In the media planning context, the “Missing Middle” makes clear that the future of planning does not depend on eliminating human expertise, but on designing workflows in which humans, and in this case students, serve as the domain experts who bring prior knowledge, empathy, and contextual judgment to the process alongside their machine counterparts (Lou et al., 2025). The student role aligns with the left side of the model, where humans lead, empathize, create, and judge, and within this framework students are responsible for training, guiding, critiquing, and directing the custom GPT’s recommendations rather than accepting its outputs as fact (Daugherty & Wilson, 2024).

In contrast, the machine-only tasks illustrate how custom GPTs can extend the limits of the domain expert’s traditional knowledge base and capabilities, given the model’s ability to process data and predict at scale using course-specific materials, client briefs, and other targeted inputs (Daugherty & Wilson, 2024). This alignment between the roles outlined in the “Missing Middle” and the capabilities of a custom GPT illustrates why a tailored model, rather than a generic AI system, is necessary for supporting conceptual learning (Masalaci et al., 2025) and industry-aligned media planning environments. This collaboration not only mirrors contemporary industry practice but also prepares students to enter the workforce with the skills needed to collaborate effectively with increasingly sophisticated AI systems, allowing them to contribute meaningfully from day one in professional media planning environments (Ouyang et al., 2022).

Human–AI Collaboration Model for Media Planning Education

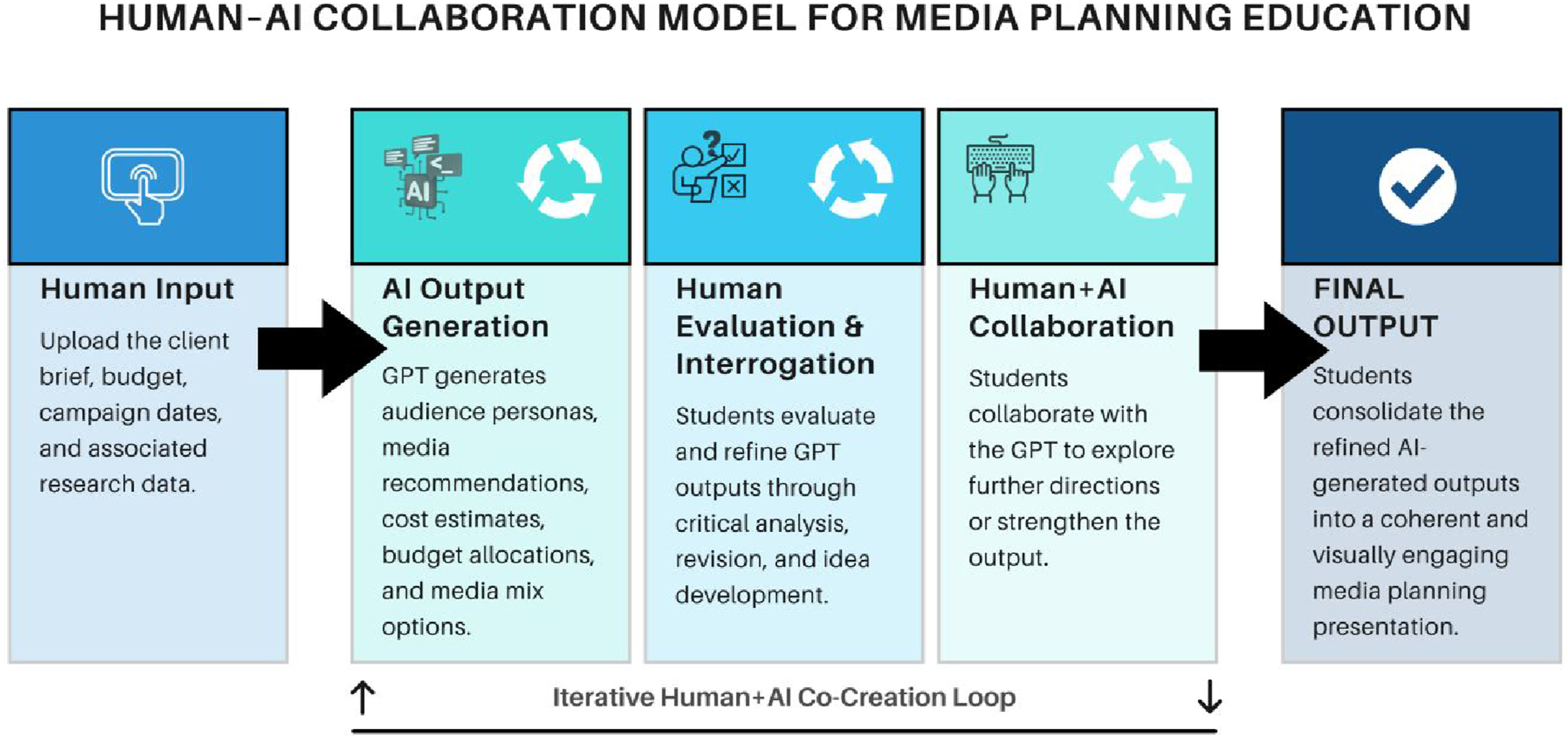

Building on this foundation, Figure 2 presents the model through which students engage in structured human–AI collaboration over the course of the semester. The model outlines a workflow in which collaboration begins with student input and progresses through iterative steps that mirror how agencies are embedding large language models into media planning workflows to increase planning precision, accelerate activation, and interpret fragmented performance data.

Figure 2. Human–AI collaboration model for media planning education

In modern media buying, LLM-powered systems, particularly custom GPTs and other configured applications, are increasingly embedded in professional workflows, with their greatest impact at key analytic decision points. Practitioners use these tools to accelerate scenario exploration, inform budget allocation and channel mix decisions, and support post-campaign performance analysis (Bradley, 2025). Prompts function as strategic engines to model alternatives, stress-test assumptions, and identify likely drivers of performance. At the same time, higher-order responsibilities such as positioning, objective setting, and client management remain human-led, with AI serving as decision support rather than an autonomous planner (Vespa, 2026).

This human–AI dynamic directly shapes how the “Missing Middle” framework is applied in the course. As in industry, students move between model-generated outputs and human judgment, refining prompts, evaluating outputs against briefs and constraints, and translating results into credible strategy. The approach reflects how AI is transforming analytic tasks while preserving human strategic control, but makes this interaction explicit and teachable, providing a structured model for human–AI collaboration that is often informal in practice (Häglund & Björklund, 2022; Wang et al., 2024).

In this model, the learning process becomes a structured cycle of collaboration that follows a defined sequence:

• Phase 1: Human input. In this phase, the student acts as the subject matter expert, guiding the media planning process by uploading the client brief, assigning a client budget and campaign timelines, and inputting supplementary research data. Students are also responsible for retrieving all essential external data, including SRDS rates for offline media (e.g., TV and print ads), DMA maps, competitive spending summaries, and any market-level information needed to construct a media-mix or share-of-voice analysis. These inputs train the custom GPT as an extended media planning partner and establish the essential foundation for the workflow. In doing so, students set the strategic parameters that shape the GPT’s reasoning and frame all downstream planning outputs.
• Phase 2: AI output generation. Based on the human inputs provided, the custom GPT generates key elements of the media planning process, including audience personas, recommended media outlets, projected costs, budget allocations, timing strategies, platform rationales, and preliminary media-mix scenarios. These outputs align with the strategic objectives defined in the brief and the additional materials supplied in Phase 1. The outputs also serve as the foundation for a continuous feedback loop that links Phases 2 through 4, proposing the initial recommendations that students will critically evaluate and refine in subsequent phases.
• Phase 3: Human evaluation and interrogation. Within this phase, students serve as domain experts and project leads, evaluating GPT outputs and identifying areas for refinement while articulating constraints the model may have overlooked. They question the reasoning behind AI-generated recommendations and assess alignment with the strategic requirements of the brief, including identifying omissions, inconsistencies, or undervalued insights. This process drives the human–AI feedback loop, as student evaluations inform subsequent GPT outputs.
• Phase 4: Human–AI iteration. Following student evaluation, students collaborate with the custom GPT to distill research, explore previously unexamined directions, and strengthen outputs through targeted prompting and structural adjustments. During this phase, students may conduct descriptive data analysis, such as interpreting MRI Simmons indices, reviewing competitive spending patterns, comparing CPM/CPP values, or examining trending tables, and translate these insights into channel investment guidance, media scenario forecasting, and planning decisions. This phase establishes an ongoing cycle of human synthesis and AI recalibration through which outputs become increasingly aligned with strategic objectives. Multiple cycles through Phases 2–4 continue until the work reaches a level of completeness and quality suitable for final presentation.
• Phase 5: Final output. In the final phase, students review the refined outputs and translate them into a coherent and strategically grounded media planning presentation. This involves developing compelling and logically structured deliverables and visuals that reflect the objectives, challenges, and opportunities outlined in the client brief, as well as the analytical insights generated throughout earlier phases of the model. During this phase, students create a unified plans book in which they, as human decision-makers, are responsible for presenting and justifying their final media planning recommendations to the simulated client (instructor).

In Appendix A, we propose weekly modules and associated deliverables, which provide a structured and replicable framework for integrating human–AI collaboration into media planning education. Importantly, the schedule operationalizes both the collaborative workflow and the step-by-step development of the Custom GPT, serving as a helpful starting point that instructors can further refine based on their course needs.

Teaching Critical AI Literacy

While custom GPTs introduce new opportunities for automating routine media tasks and expanding students’ conceptual capabilities, their integration into the curriculum requires an intentional focus on AI literacy (Zhou & Schofield, 2024). The objective is not simply to teach students how to use the tool as an extension of their workflow, but to ensure that they develop as domain experts who can critically evaluate and manage the AI’s strategic outputs (Ouyang et al., 2022) when shaping the final media strategy.

Achieving this objective requires reconfiguring the instructor role into one of strategic mediation, guiding the relationship between student reasoning and AI assistance. This structure helps students understand when to rely on AI, when to question outputs, and how to integrate them after human synthesis completes the iterative loop (Ciubotaru, 2025). Students also learn to detect and address AI inaccuracies, including inaccurate CPM estimates, incorrect assumptions, or invalid competitive insights (Kaufmann et al., 2024). These moments allow instructors to frame AI limitations as opportunities to strengthen verification practices, data proficiency, and evaluative reasoning (Bach et al., 2024). In doing so, instructors align classroom guidance with human–AI workflows, ensuring consistent expectations for interpreting, questioning, and refining AI-generated outputs (Amershi et al., 2019). In Appendix B, we present the Assessment Framework and AI Use Guidelines, offering practical tools for instructors to evaluate the effectiveness and responsible use of AI in the course.

Human–AI Media Planning Pedagogy: Development of the Media Planning AI Assistant

To integrate generative AI into an undergraduate media planning curriculum, we developed a custom GPT-based tool, the Media Planning AI Assistant (hereafter, “the Assistant”).

Pedagogical Rationales

Undergraduate students often struggle with synthesizing multiple data sources, including client briefs, consumer insights, competitive spend, and media rates, into a coherent planning framework. They also face challenges in determining where to begin and how decisions flow from one stage of planning to the next. A key pedagogical goal of this tool is not simply to supply answers but rather to scaffold the human reasoning process that students must ultimately master. When prompted to generate a strategic roadmap, the Assistant outlines a five-stage, agency-aligned workflow that helps students understand how discrete research components combine into a coherent plan. This structure supports students as they learn to move from raw data to actionable strategy, while still requiring them to evaluate, refine, and challenge the AI’s suggestions.

Knowledge-Based Construction and Prompt Architecture as Human–AI Co-Creation

A central design principle of the Assistant is that it does not function as an autonomous planning engine; instead, it operates through human–AI co-creation, where students generate, interpret, and curate the knowledge the Assistant relies on. Before the model can perform any media planning tasks, students must:

• Review the client brief with a clear understanding of the core marketing challenge or opportunity (e.g., new product launch, brand awareness objective, brand repositioning, market expansion, declining engagement, or a competitive threat). This includes assessing the client’s background, campaign budget and timeline, competitive landscape, and any additional factors relevant to the media planning process. The review should also include a preliminary consideration of key strategic implications, such as audience needs, marketplace dynamics, and potential strengths, weaknesses, opportunities, and threats (SWOT), which together establish the foundational context for all planning decisions.
• Interpret MRI-Simmons datasets and translate them into qualitative summaries of consumer values, media habits, and product-use patterns. These human-generated insights become the uploaded persona data the Assistant draws from when creating audience segments.
• Identify key competitors and top DMAs in the functional energy drink space and gather their monthly and annual spend data from MediaRadar 360. Because Custom GPTs cannot reliably ingest raw spreadsheets, students must restructure this information into clean, descriptive text files. This translation process reinforces their understanding of competitor dynamics and Share of Voice (SOV) calculations.
• Integrate industry media rate benchmarks by synthesizing information from SRDS, agency rate sheets, and scholarly sources such as Wu and Andrews (2024). Students convert these data into narrative summaries the AI can reference when calculating impressions or recommending mixes.
• Teach the Assistant core media planning terminology, such as GRPs, CPM, media mix, and SOV, by preparing a terminology glossary that defines each concept using industry conventions.
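The restructuring of competitive spend data into descriptive text can be illustrated with a brief sketch. The Python example below is not part of the course materials; it assumes a hypothetical `SpendRecord` structure and invented spend figures, and shows one possible way tabular rows could be rewritten as plain sentences suitable for upload. Students may equally perform this translation by hand, which is where much of the learning value lies.

```python
from dataclasses import dataclass


@dataclass
class SpendRecord:
    brand: str        # competitor name (placeholder values below)
    month: str        # reporting month, e.g. "2025-01"
    spend_usd: float  # reported media spend for that month


def summarize_spend(records: list[SpendRecord]) -> str:
    """Turn tabular spend rows into plain sentences, one paragraph per brand."""
    by_brand: dict[str, list[SpendRecord]] = {}
    for rec in records:
        by_brand.setdefault(rec.brand, []).append(rec)

    paragraphs = []
    for brand, rows in sorted(by_brand.items()):
        rows = sorted(rows, key=lambda r: r.month)
        total = sum(r.spend_usd for r in rows)
        monthly = "; ".join(f"{r.month}: ${r.spend_usd:,.0f}" for r in rows)
        paragraphs.append(
            f"{brand} spent a total of ${total:,.0f} across {len(rows)} months. "
            f"Monthly spend: {monthly}."
        )
    return "\n\n".join(paragraphs)


# Hypothetical rows for illustration only; not real MediaRadar 360 data.
rows = [
    SpendRecord("Brand A", "2025-01", 1_200_000),
    SpendRecord("Brand A", "2025-02", 800_000),
    SpendRecord("Brand B", "2025-01", 500_000),
]
summary = summarize_spend(rows)
print(summary)
```

Writing totals and monthly values as full sentences keeps each figure attached to its brand and period, which is the property the text-file format is meant to preserve.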

These human-produced files form the architectural backbone of the tool. Thus, the Custom GPT acts as an extension of students’ analytical labor, not a replacement for it, and its outputs are only as strong as the research students synthesize and upload. This human–AI collaboration makes the Assistant highly adaptable to different classes, datasets, and client briefs.

The prompt architecture reinforces this dynamic. Students are positioned as junior-level planners, and the Assistant continually requests clarification or additional human input where needed, helping them learn to diagnose missing information and recognize the dependencies across planning stages.

Step-by-step Interaction Flow

To illustrate how advertising instructors can integrate generative AI into media planning pedagogy, this section summarizes the step-by-step interaction flow of the Media Planning AI Assistant using a modified industry-style client brief for a mock brand, STRIDE Energy™, as the running example. In this scenario, STRIDE is positioned to enter the U.S. market through a fully integrated twelve-month national media campaign (January 1–December 31, 2026) with a total launch budget of $30 million. This simulated brief provides students with a realistic planning context and serves as the foundation for each stage of human–AI collaboration described below, with detailed excerpts provided in Appendix C.

Strategic Roadmap Generation

The planning process begins with a high-level prompt asking the Assistant to produce a strategic roadmap. The model responds with a structured outline of the media planning process (audience definition, competitive analysis, channel strategy, geographic prioritization, and flighting) while specifying what data students must analyze at each stage (e.g., an MRI-Simmons summary for audience insight and spend data for SOV analysis). This serves as a scaffold for students who often struggle to see the end-to-end logic of a media plan.

Persona Development Using MRI-Simmons Data

Next, students request primary and secondary target personas based on the uploaded MRI-Simmons consumer insights and the Stride Energy client brief. The Assistant generates detailed personas, including demographics, psychographics, motivations, barriers, media habits, purchase drivers, and explicit “media planning implications.” Instructors can use these persona drafts as starting points for classroom critique and refinement, strengthening students’ ability to connect research to strategy.

Competitive Analysis and SOV Interpretation

Students then ask the Assistant to interpret competitive spending data (uploaded from MediaRadar 360). The model calculates estimated category totals, derives Stride Energy’s SOV, and articulates the strategic implications of entering the market with a particular SOV. This step demonstrates how AI can model the analytical reasoning expected in professional media planning while giving students a framework for understanding competitor dynamics.
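For readers who want to verify the Assistant’s arithmetic, SOV is conventionally computed as a brand’s spend divided by total category spend. The sketch below is illustrative only: the competitor names and figures are hypothetical placeholders, and only the $30 million budget comes from the client brief.

```python
def share_of_voice(spend_by_brand: dict[str, float]) -> dict[str, float]:
    """Return each brand's spend as a percentage of total category spend."""
    total = sum(spend_by_brand.values())
    if total <= 0:
        raise ValueError("total category spend must be positive")
    return {brand: 100.0 * spend / total for brand, spend in spend_by_brand.items()}


# Competitor figures are hypothetical; only the $30M budget is from the brief.
category_spend = {
    "Stride Energy": 30_000_000,
    "Competitor A": 120_000_000,
    "Competitor B": 50_000_000,
}
sov = share_of_voice(category_spend)
for brand, pct in sov.items():
    print(f"{brand}: {pct:.1f}% SOV")
```

Because the category total depends entirely on which competitors are included, an incomplete competitor list will overstate the new entrant’s SOV, which is one reason students are asked to check the inputs before accepting the output.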

Channel Strategy Justification

With personas and competitive context established, students prompt the Assistant to evaluate potential media channels. The model produces channel-by-channel assessments, explaining why streaming audio, social video, connected TV (CTV)/over-the-top (OTT), retail media networks, programmatic display, and digital out-of-home (DOOH) align with the audience’s media habits and Stride Energy’s campaign objectives. This provides a clear example of how AI can generate strategy options that students must evaluate: they assess these proposals, adjust weights, and justify changes during critique sessions rather than accepting them uncritically.

Media Mix Development With CPM Benchmarks

Students then request a recommended media mix for the $30M campaign. Using the uploaded media rate benchmarks and personas, the Assistant outputs a professionally structured mix with channel budgets, CPM ranges, and estimated impressions. The mix also includes a tiered DMA strategy informed by competitor presence and audience distribution. Students then test alternative allocation scenarios and evaluate trade-offs. This demonstrates how students can move from qualitative insights to quantified decision-making.
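The relationship between channel budgets, CPM ranges, and estimated impressions can be checked with simple arithmetic, since CPM is the cost per thousand impressions. The following sketch assumes hypothetical allocation shares and CPM ranges; it is not the Assistant’s actual output, and only the $30 million total is taken from the brief.

```python
def estimated_impressions(budget_usd: float, cpm_usd: float) -> float:
    """Impressions bought at a given CPM (cost per thousand impressions)."""
    if cpm_usd <= 0:
        raise ValueError("CPM must be positive")
    return budget_usd / cpm_usd * 1000


TOTAL_BUDGET = 30_000_000  # from the client brief

# Allocation shares and CPM ranges are hypothetical placeholders.
media_mix = {
    "CTV/OTT": {"share": 0.40, "cpm_low": 25.0, "cpm_high": 40.0},
    "Social video": {"share": 0.35, "cpm_low": 8.0, "cpm_high": 14.0},
    "Streaming audio": {"share": 0.25, "cpm_low": 10.0, "cpm_high": 20.0},
}

plan = {}
for channel, row in media_mix.items():
    budget = TOTAL_BUDGET * row["share"]
    plan[channel] = {
        "budget": budget,
        # A higher CPM buys fewer impressions, so the bounds swap.
        "impressions_min": estimated_impressions(budget, row["cpm_high"]),
        "impressions_max": estimated_impressions(budget, row["cpm_low"]),
    }

for channel, row in plan.items():
    print(f"{channel}: ${row['budget']:,.0f}, "
          f"{row['impressions_min']:,.0f} to {row['impressions_max']:,.0f} impressions")
```

Changing the `share` values is a quick way to test alternative allocation scenarios and see how the impression range responds.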

Monthly Budget Allocation (Flighting Strategy)

To operationalize the annual media mix, students ask the Assistant to produce a monthly budget schedule aligned with category seasonality and competitive spending patterns. The model generates a pulsing plan with heavier allocations in priority windows (e.g., January, February, June, and August), illustrating how AI can model timing logic based on media consumption behaviors and competitive bursts. In this final step, students also prompt the Assistant to calculate the overall GRPs for the full campaign, as well as the GRPs allocated each month based on the CPM of the selected channels, budget, and estimated impressions. The resulting monthly flow chart provides a consolidated view of how total spend shifts across the year and serves as the final planning output that students can refine and integrate into their media plan books.
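A minimal sketch of the monthly logic follows, using the common definition of GRPs as impressions divided by the target population, multiplied by 100. The blended CPM, target population, and priority weighting are all hypothetical assumptions introduced for illustration; the priority months and total budget are those named in the text.

```python
MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]
PRIORITY = {"Jan", "Feb", "Jun", "Aug"}  # priority windows named in the text

TOTAL_BUDGET = 30_000_000       # from the client brief
BLENDED_CPM = 15.0              # hypothetical blended CPM across channels
TARGET_POPULATION = 50_000_000  # hypothetical size of the target audience
PRIORITY_WEIGHT = 2.0           # hypothetical: priority months get double weight


def monthly_schedule() -> dict[str, dict[str, float]]:
    """Split the annual budget by month weight and derive impressions and GRPs."""
    weights = {m: PRIORITY_WEIGHT if m in PRIORITY else 1.0 for m in MONTHS}
    total_weight = sum(weights.values())
    schedule = {}
    for m in MONTHS:
        budget = TOTAL_BUDGET * weights[m] / total_weight
        impressions = budget / BLENDED_CPM * 1000
        grps = impressions / TARGET_POPULATION * 100
        schedule[m] = {"budget": budget, "impressions": impressions, "grps": grps}
    return schedule


schedule = monthly_schedule()
total_grps = sum(row["grps"] for row in schedule.values())
for month, row in schedule.items():
    print(f"{month}: ${row['budget']:,.0f}, {row['grps']:.0f} GRPs")
print(f"Campaign total: {total_grps:.0f} GRPs")
```

A single blended CPM is a simplification; a fuller version would compute impressions per channel and sum them before converting to GRPs.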

Iterative Refinement to Encourage Strategic Thinking

The iterative cycle of human–AI collaboration illustrated in Figure 2 is central to the pedagogical design of this course. Importantly, the AI-generated outputs presented in this paper represent the result of multiple rounds of interaction and refinement rather than single-pass responses. Students are expected to interrogate AI outputs, challenge underlying assumptions, and revise recommendations through structured prompting and data verification.

In practice, iteration begins with identifying AI limitations, inaccuracies, and hallucinations. For example, the Assistant may generate inflated or generalized CPM estimates, miscalculate SOV due to incomplete competitor inputs, or overgeneralize audience behaviors. Students are instructed to treat all AI-generated metrics (e.g., CPM, impressions, and GRPs) as provisional estimates, requiring independent validation against SRDS, industry rate sheets, and course benchmarks. Although the Assistant is trained with industry-provided media rate benchmarks, AI hallucinations may still occur; therefore, students are required to write prompts that explicitly validate CPM assumptions against the uploaded benchmark files. If, for instance, the Assistant suggests a CPM of $40 for TikTok, well above typical industry ranges, students must flag the discrepancy, cross-check the value, and revise it with proper justification. This process is reinforced through assignments that require verified calculations and annotated corrections, supported by targeted prompts (e.g., “Validate these CPM assumptions against benchmarks and identify inconsistencies”).
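The cross-check described here amounts to comparing each proposed CPM with a benchmark range. The sketch below shows one way such a check could be expressed; the benchmark ranges are hypothetical placeholders rather than SRDS or industry figures, and the function name is introduced only for illustration.

```python
def flag_cpm_outliers(
    proposed: dict[str, float],
    benchmarks: dict[str, tuple[float, float]],
) -> list[str]:
    """Return readable flags for CPMs missing from or outside the benchmarks."""
    flags = []
    for channel, cpm in proposed.items():
        if channel not in benchmarks:
            flags.append(f"{channel}: no benchmark on file; verify manually")
            continue
        low, high = benchmarks[channel]
        if not low <= cpm <= high:
            flags.append(
                f"{channel}: proposed CPM ${cpm:.2f} is outside ${low:.2f}-${high:.2f}"
            )
    return flags


# Benchmark ranges are hypothetical placeholders, not SRDS or industry figures.
benchmarks = {"TikTok": (4.0, 12.0), "CTV/OTT": (25.0, 40.0)}
# The $40 TikTok CPM mirrors the example in the text; the others are invented.
proposed = {"TikTok": 40.0, "CTV/OTT": 30.0, "Podcast": 22.0}

flags = flag_cpm_outliers(proposed, benchmarks)
for line in flags:
    print(line)
```

A flagged value is a prompt for investigation rather than an automatic correction; students still need to decide, and justify, which figure belongs in the plan.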

This iterative process also mitigates the “garbage in, garbage out” risk. Because AI outputs depend on student-provided inputs, inaccurate early-stage research (e.g., misinterpreted MRI-Simmons data or incomplete competitive inputs) can propagate through the plan. To address this, the course incorporates instructor checkpoints after Phase 1 and early Phase 3, where key inputs (e.g., audience insights and SOV calculations) are reviewed and, if necessary, resubmitted before further development.

Through repeated cycles of student evaluation, instructor feedback, and AI recalibration, students progressively strengthen their strategies. Iterations thus function not only as a refinement mechanism but also as a core learning process that cultivates competencies in data validation, strategic reasoning, and critical evaluation of AI-assisted work.

Ethical and Pedagogical Considerations

The Assistant tool is framed explicitly as a draft generator rather than an autonomous planner, emphasizing that all outputs require human verification and interpretation. This positioning creates intentional opportunities to discuss the limitations of generative AI in media planning. Because the Assistant relies on uploaded data and often surfaces its own assumptions (e.g., estimating total category spending), students must critically evaluate its reasoning instead of accepting it at face value. Students must check all CPM assumptions, validate competitive totals, and compare AI personas to their own MRI-Simmons readings.

This framing supports classroom discussions about the limitations of generative AI, the importance of transparency, and the centrality of human expertise in media planning. Students are required to disclose use of the Assistant, cross-check outputs against uploaded files, and demonstrate independent strategic justification in their final campaign books. Interaction examples show how the tool models industry-style deliverables while requiring student oversight, such as assessing whether a proposed media mix aligns with Stride’s objectives or adjusting monthly spending. In this way, the Assistant functions as a catalyst for critical thinking, transparency, and ethical engagement with AI-assisted work rather than a shortcut around core learning goals.

Discussion

Implications for Media Planning Pedagogy

The Media Planning AI Assistant illustrates a human–AI collaborative model for media planning pedagogy. In contrast to traditional courses that emphasize static case studies and linear planning, this model embeds AI within research, budgeting, and scheduling to align with contemporary workflows. Students construct the knowledge base, provide inputs, and critically evaluate outputs, while the Assistant models industry workflows and decision structures. This division of labor reflects how media agencies use large language models and custom GPTs as decision-support tools that generate scenarios, inform budget trade-offs, and interpret performance rather than automate decisions. The custom GPT is thus positioned as a pedagogical tool that augments human learning.

One anticipated instructional benefit of this approach is the potential to reduce extraneous cognitive load. Framing AI as a way to offload routine, lower-level tasks aligns with cognitive load theory, which recommends minimizing unnecessary processing demands so that learners can devote more resources to core conceptual and strategic work. By automating routine drafting tasks, the Assistant is designed to allow more class time to be devoted to higher-order activities such as interrogating assumptions, comparing strategic alternatives, and justifying budget decisions. This shift aligns with experiential and practice-based approaches in advertising and marketing education, which emphasize applied reasoning and strategic justification over procedural tasks such as formatting or calculation (Kolb, 2014). In this way, skills such as visualization, ethical evaluation, and strategic storytelling may become more central within the learning process.

The tool also makes the long-standing art-science duality of media planning more explicit. AI can be structured to support the “science” (data synthesis, calculations, structure), while students contribute to the “art” (interpretation, persuasion, creative strategy). This reflects programmatic and computational advertising, where data systems handle large-scale optimization and human strategists interpret patterns into brand-relevant strategy. This human–AI collaboration mirrors real agency practice and helps students understand where human judgment is indispensable. Framing the Assistant as a co-designer, rather than a generator, foregrounds human judgment and aligns with emerging models of human–AI collaboration in education. It positions learners as active operators and evaluators who direct, monitor, and refine outputs, reflecting AI literacy frameworks that emphasize critical evaluation, bias detection, and verification of AI-generated content.

The Assistant offers benefits for educational accessibility and equity. Students with limited data-analysis experience or language challenges can use AI-generated scaffolds to engage more effectively with complex planning tasks. By standardizing drafting and lowering procedural barriers, the tool broadens participation and supports more inclusive learning environments. This aligns with equity-focused research in digital learning, which finds that thoughtfully designed tools can reduce disparities in participation and performance when access and support are equitably distributed (Warschauer, 2004).

Importantly, this collaborative model is structured to maintain methodological rigor. Because students generate the knowledge files, interpret the research, and verify the AI’s conclusions, they remain accountable for every strategic decision. AI outputs serve as starting points that students must critique and refine, reinforcing the role of human expertise in shaping media plans. This process also reflects the kind of “computational thinking” that media planning increasingly demands (pattern recognition, scenario modeling, and logic-based decision-making), positioning generative AI as a structured support for analytical reasoning in coursework.

The model incorporates safeguards to promote grading fairness, uphold academic integrity, and limit overreliance on AI. Rather than evaluating only the final deliverable, the framework emphasizes the iterative process, including research inputs and prompt rationale, providing a basis for assessing individual contribution. Academic integrity is reinforced by requiring students to critically evaluate and validate AI-generated content, ensuring final strategies reflect informed, human-led decision-making. Consequently, dependency is mitigated by situating AI within a scaffolded, process-driven workflow that requires active engagement and promotes strategic reasoning.

To provide preliminary insight into this approach, informal student reflections and instructor observations were collected across a mixed cohort, including students from the National Student Advertising Competition (NSAC), the Washington Media Scholars competition, and undergraduate media planning courses. Students reported that the Media Planning AI Assistant improved their ability to synthesize key inputs, such as client briefs, audience data, DMAs, and campaign timelines, into more cohesive strategies, while increasing efficiency by enabling them to process multiple variables and generate recommendations for channel selection and budget allocation.

The tool was particularly effective in supporting quantitative decisions, including budget distribution and pulsing strategies, areas students often find challenging. Students also emphasized that output quality depended on the research they provided, reinforcing the importance of human input. Importantly, students used the Assistant as a decision-support tool, critically evaluating and refining recommendations rather than accepting them at face value. These observations suggest the tool enhances efficiency and confidence while maintaining the central role of human judgment in media planning.

Limitations and Future Directions

While this approach offers clear pedagogical value, several limitations remain. AI-generated outputs are inherently dependent on input quality and may produce inaccuracies or hallucinations, requiring students to critically evaluate results and rely on strong foundational materials such as client briefs and research inputs (Akter et al., 2021; Paul et al., 2023). Bias embedded in training data may also surface in outputs, necessitating active student awareness and mitigation to avoid inequitable decision-making (Zhuo et al., 2023).

Additionally, GPT-generated recommendations may not reflect real-time media conditions, as cost benchmarks such as CPM and CPP fluctuate across markets, formats, and timing. Students must validate outputs against industry sources rather than rely exclusively on AI-generated estimates. Overreliance on AI remains a concern, as cognitive offloading and reduced engagement with manual planning may limit skill development without safeguards and instructor oversight (Klingbeil et al., 2024; Zhai et al., 2024). Access disparities also present challenges, as institutional support for AI tools varies, creating inequities in learning environments (Spivakovsky et al., 2023). Further, model behavior may shift due to updates, and privacy concerns remain when working with sensitive or proprietary materials (Vargas et al., 2025).

These limitations also inform future research and curriculum development. Expanding this model across advertising disciplines, including creative strategy, consumer research, and account planning, may support more integrated, full-funnel learning. Longitudinal research is needed to examine how sustained exposure to AI-assisted workflows influences students’ analytical capabilities, ethical awareness, and professional readiness over time.

The integration of AI also necessitates a rethinking of assessment, with greater emphasis on evaluating student reasoning, justification, and ability to critically interrogate AI outputs rather than focusing solely on final deliverables. Future work should explore assessment models that capture iterative thinking and compare outcomes across pre-AI and AI-integrated learning environments.

Finally, this framework holds strong potential for graduate-level and professional applications, where more complex datasets and client-facing scenarios can further bridge academic preparation and industry practice, positioning AI literacy as a core competency in contemporary advertising education.

Supplemental Material

Supplemental Material for Co-Designing Media Plans With Generative AI: Teaching Media Planning as Human–AI Collaboration in the Classroom by Taylor Jing Wen, Kristin Walker, and Carrie Jingyi Xiao in Journal of Advertising Education.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
