Abstract

The integration of generative artificial intelligence (GenAI) in business education is reshaping data analysis and decision-making. However, concerns remain about potential over-reliance on GenAI at the expense of students’ critical thinking. This article presents an assessment designed to develop students’ technical proficiency in AI-supported data analysis while encouraging critical reflection on AI-generated outputs. Using a real-world-inspired case study, students collaborated with GenAI tools to conduct regression, forecasting, and hypothesis testing, and compared AI-generated results with manual analysis in Excel. Thematic analysis, aligned with Kolb’s experiential learning model, reveals stratified engagement: middle and strong performers demonstrated deeper reflection, especially on ethical implications and the importance of human oversight. Strong students further explored GenAI’s adaptability across different contexts. These findings contribute to the growing discourse on GenAI literacy in management education, underscoring the need for balanced pedagogical strategies that cultivate both AI skills and ethical and professional judgment essential for AI-augmented management practice.

The integration of generative artificial intelligence (GenAI) into decision-making is transforming management education, making AI literacy a core competency for future managers and leaders (Surugiu et al., 2024; Zhou et al., 2024). In business data analysis, a core element of management education, GenAI tools support students in processing datasets, automating computations, generating insights, and simulating strategic decisions (Krause, 2023).

However, educators remain concerned about GenAI’s effects on students’ critical thinking and ethical awareness (Gerlich, 2025; Jose & Jose, 2024). Cognitive load theory (Sweller, 2020) suggests GenAI may reduce mental effort, yet such ease risks undermining deep engagement if students fail to critically interrogate AI-generated outputs. Moreover, algorithmic biases and lack of transparency may lead to flawed business decisions if AI is adopted uncritically (Aquino, 2023; Wang, 2021).

To address these concerns, the Human–AI Collaboration Model positions AI as a cognitive partner, with learners retaining responsibility for interpretation and judgment (Atchley et al., 2024). This approach encourages students to iteratively co-produce analysis with GenAI tools—generating outputs, questioning assumptions, and applying management knowledge. Such engagement aligns with Kolb’s experiential learning cycle, integrating experience, reflection, conceptualization, and application (Kolb, 2014).

Existing literature highlights both benefits and limitations of human–AI collaboration in education. GenAI can automate routine tasks, freeing students to focus on complex problem-solving, creativity, and reflection (Clegg & Sarkar, 2024; Padovano & Cardamone, 2024). It also supports personalized feedback and adaptive learning (Chiu & Rospigliosi, 2025; Dang et al., 2025; Edwards et al., 2025), and may enhance metacognitive and self-regulated learning (Hutson & Plate, 2023; Zhou & Schofield, 2024). However, unequal access and digital literacy gaps risk deepening the digital divide in business schools (Zhou et al., 2025). Concerns over AI’s transparency and reliability may undermine student trust and lead to flawed decisions (Chen, 2022; Karakose et al., 2023). Over-reliance on AI without scaffolding can also weaken independent judgment and ethical reflection (Essien et al., 2024; Larson et al., 2024; Valcea et al., 2024). Effective implementation requires deliberate instructional design and ongoing oversight to balance AI use with responsible learning goals (Dai et al., 2025; Padovano & Cardamone, 2024).

Within management education, several studies have examined GenAI’s use in supporting business students’ creativity, writing, or idea generation (Aure & Cuenca, 2024; Guha et al., 2023; Narang et al., 2025), and others explore student perceptions or conceptual models (Gonsalves, 2024; Hazari, 2025; Oliveira et al., 2025). However, most rely on surveys or self-reports rather than direct observation of AI use in discipline-specific tasks.

Our study fills this gap by embedding human–AI collaboration within a business data analysis assessment that simulates authentic managerial decision-making. Through student reflections, we analyze how students critically engage with AI-generated outputs, developing ethical awareness and reflective thinking. Drawing on student-authored qualitative reflections and observable performance patterns, we offer insights into how human–AI collaboration can support management education outcomes. This exercise suggests that GenAI can be effectively integrated into education while fostering, rather than undermining, critical thinking, consistent with the findings of Essien et al. (2024) and Rusandi et al. (2023).

Learning Objectives

After completing the exercise, students will be able to:

Collaborate with GenAI tools to conduct data analysis, forecasting, and decision-making in a business context, integrating AI outputs with traditional methods.

Communicate data-driven business decisions, justifying choices based on both AI-generated insights and independent reasoning.

Evaluate the effectiveness, reliability, and limitations of GenAI in business decisions, considering transparency, accuracy, and potential biases.

Apply critical thinking to interpret AI-assisted business recommendations, assess their validity, and align them with business objectives.

Reflect on the ethical and professional implications of GenAI use, including data privacy, automation, and human oversight.

Exercise Overview

This assessment develops students’ ability to apply statistical techniques and critically evaluate GenAI tools in business data analysis. Based on a case study of a hypothetical IT company that expanded GenAI investment after 2021, students analyze seasonal business performance data to assess impacts on sales, profits, staff satisfaction, customer satisfaction, and employee turnover.

Using data from 2001 to 2023, students conduct forecasting and hypothesis testing, selecting variables and interpreting results. They collaborate with GenAI tools (e.g., ChatGPT, Copilot) to support decision-making, comparing AI-generated outputs with manual Excel analysis to evaluate accuracy, reliability, and efficiency. Finally, students critically reflect on GenAI’s role as a decision-making partner, assessing its strengths, limitations, and real-world implications. The final deliverable is an individual report combining quantitative analysis and critical evaluation of human–AI collaboration.

Logistics

Participants

The assessment involved 276 students enrolled in the

Data and Context of the Case Study

The dataset contains 552 observations across six business metrics over 23 years. Details on dataset simulation are in Appendix A. It spans a pre-AI period (2001–2021) and post-AI period (2021–2023), allowing students to examine how AI investment relates to business performance.
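One way to read the observation count is sketched below. It assumes that "seasonal" means four quarters per year, which the article does not state explicitly; the metric names are placeholders, and the sixth metric is not named in the text, so `metric_6` is purely hypothetical. Under those assumptions, 23 years × 4 quarters × 6 metrics gives the reported 552 observations.

```python
from itertools import product

# Assumptions (not confirmed by the article): quarterly seasons and
# placeholder metric names; "metric_6" stands in for the unnamed sixth metric.
YEARS = range(2001, 2024)  # 2001 to 2023 inclusive, i.e. 23 years
QUARTERS = ("Q1", "Q2", "Q3", "Q4")
METRICS = (
    "sales",
    "profits",
    "staff_satisfaction",
    "customer_satisfaction",
    "employee_turnover",
    "metric_6",
)

# One row per (year, quarter, metric) combination, in long format.
rows = list(product(YEARS, QUARTERS, METRICS))
n_observations = len(rows)
print(n_observations)
```

A long-format layout like this is only one possibility; the same data could equally be stored as 92 quarterly rows with six metric columns.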

Preparation

Students receive guidance via lectures and the university’s learning platform, including the dataset, report template, and instructions on using GenAI tools and Excel. A worked example (airline passenger prioritization) demonstrates forecasting, hypothesis testing, and AI-supported analysis. Lectures also cover forecasting methods, hypothesis testing, and ethical issues. An AI policy statement is provided (Appendix B).

Required Materials

Seasonal business dataset (2001–2023).

GenAI tools (e.g., ChatGPT, Copilot). The base or free versions of GenAI tools are sufficient for the purpose of this exercise, which focuses on fundamental business data analytics tasks such as basic regression, hypothesis testing, and simple predictive modeling. In fact, the limitations of these tools can enrich student learning by prompting critical reflection on the imperfections, errors, and boundaries of GenAI outputs—an important aspect of developing responsible AI use in management education.

Excel for manual calculations and comparison with AI-generated results.

Structured report template for consistency in submissions.

Timing

Students complete the exercise independently over 2 weeks. The task requires around 22 hours. Final reports and Excel files are submitted via the university’s learning platform.

Step-by-Step Instructions

Data Analysis Using GenAI and Traditional Methods

Students begin by reading the assessment instructions (Appendix C). They load the dataset into GenAI tools and Excel to conduct:

forecasting on a selected variable;

hypothesis testing on relationships between variables;

comparative analysis of GenAI outputs versus manual Excel computations.

Throughout, students assess accuracy, efficiency, and computational logic.
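The manual side of such a comparison can be sketched in a few lines of code. The example below is a minimal illustration, not the method prescribed in the assessment: it assumes a simple linear trend forecast and a Welch two-sample test comparing a metric before and after a change, and it uses toy numbers rather than the case dataset. The function names are hypothetical.

```python
import math
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def trend_forecast(values, steps_ahead=1):
    """Extrapolate a linear trend fitted to equally spaced observations."""
    periods = list(range(len(values)))
    slope, intercept = fit_line(periods, values)
    return intercept + slope * (periods[-1] + steps_ahead)


def welch_t(before, after):
    """Welch's t statistic and degrees of freedom for mean(after) - mean(before)."""
    se2_before = statistics.variance(before) / len(before)
    se2_after = statistics.variance(after) / len(after)
    diff = statistics.fmean(after) - statistics.fmean(before)
    t_stat = diff / math.sqrt(se2_before + se2_after)
    df = (se2_before + se2_after) ** 2 / (
        se2_before ** 2 / (len(before) - 1) + se2_after ** 2 / (len(after) - 1)
    )
    return t_stat, df


# Toy numbers for illustration only; they are not taken from the case dataset.
toy_sales = [10, 12, 14, 16, 18, 20]
next_period = trend_forecast(toy_sales)

toy_before = [10, 12, 14, 16]
toy_after = [18, 20, 22, 24]
t_stat, df = welch_t(toy_before, toy_after)
print(f"forecast={next_period:.2f}, t={t_stat:.3f}, df={df:.1f}")
```

The sketch stops at the test statistic because the Python standard library has no t distribution; a p-value would come from a spreadsheet function such as Excel's `T.TEST` or from a statistics package. Having an independently computed figure like this is what lets a student judge whether a GenAI tool's reported forecast or test statistic is plausible.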

Critical Evaluation of GenAI Tools

After their analyses, students critically evaluate AI-generated results, considering:

accuracy and reliability of AI insights;

efficiency compared with manual computations;

embedded biases in GenAI decision-making;

the role of human oversight in business decisions;

risks of uploading sensitive or company data to public tools, and related privacy concerns.
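The accuracy criterion above can be made concrete by recording, statistic by statistic, how far a GenAI-reported value sits from the manually computed one. The helper below is a hypothetical sketch: the function name, the dictionary layout, and the 0.1% tolerance are all assumptions chosen for illustration, and the numbers are invented.

```python
def compare_results(manual, ai_reported, rel_tol=1e-3):
    """Compare AI-reported statistics against manually computed ones.

    Returns, for each manual statistic, either the relative difference and
    an agreement flag, or a note that the AI output did not include it.
    """
    report = {}
    for name, manual_value in manual.items():
        ai_value = ai_reported.get(name)
        if ai_value is None:
            report[name] = {"status": "missing from AI output"}
            continue
        # Fall back to an absolute difference when the manual value is zero.
        denominator = abs(manual_value) if manual_value != 0 else 1.0
        rel_diff = abs(ai_value - manual_value) / denominator
        report[name] = {"rel_diff": rel_diff, "agrees": rel_diff <= rel_tol}
    return report


# Invented values for illustration; not results from the assessment.
manual = {"slope": 2.0, "forecast": 22.0, "t_stat": 4.38}
ai_reported = {"slope": 2.0, "forecast": 23.1}
report = compare_results(manual, ai_reported)
for name, outcome in report.items():
    print(name, outcome)
```

A table like this does not explain why a discrepancy arose, but it gives students a specific starting point for questioning the tool's computational logic.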

Report Compilation and Submission

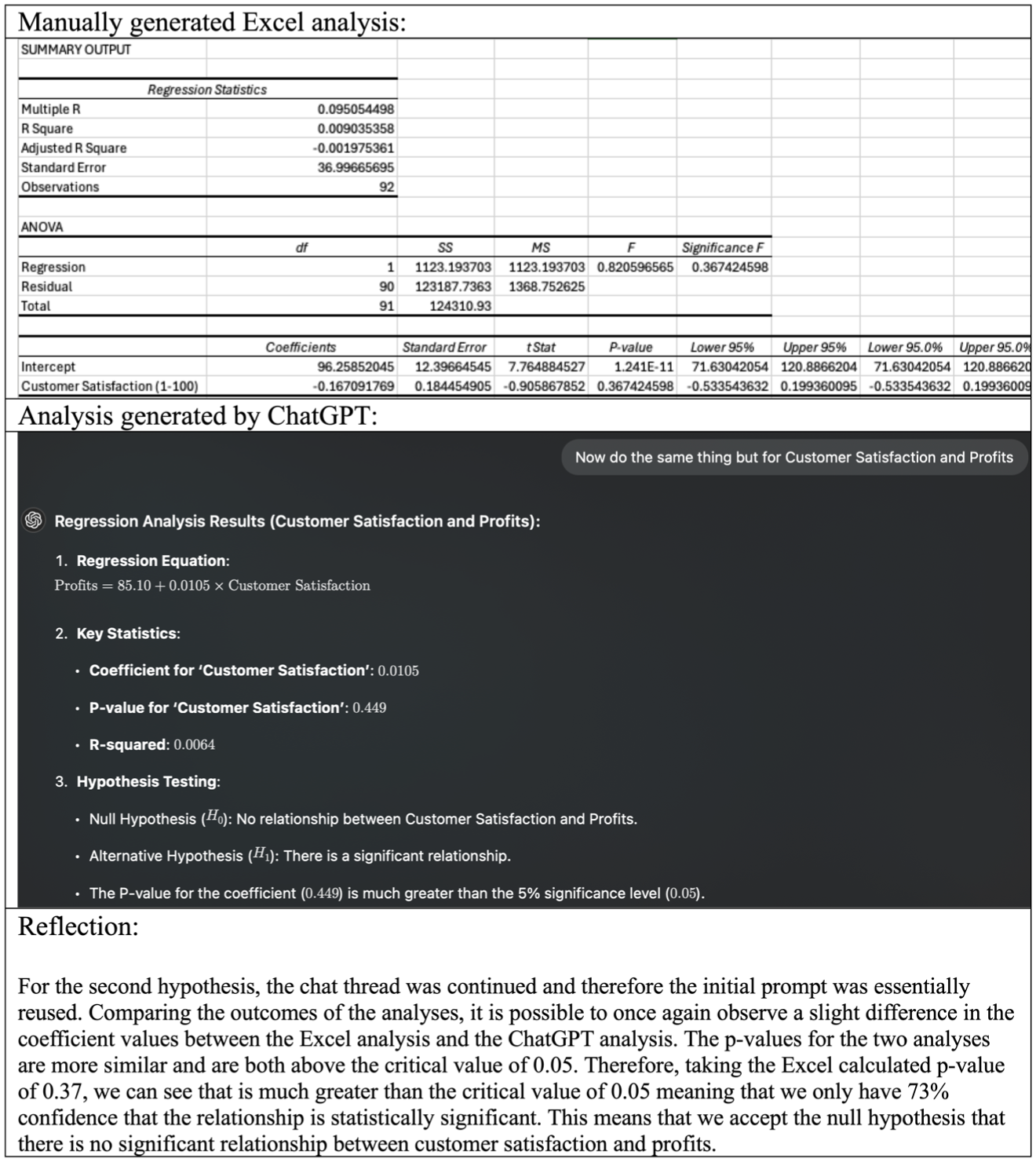

Students compile a structured report combining data analysis with reflection on GenAI’s role in this exercise. Figure 1 shows a sample comparing Excel and ChatGPT analyses, with student reflections on the comparison.

Figure 1. A Sample Student’s Comparison of a Manually Generated Analysis in Excel With a ChatGPT-Generated Analysis, Including a Reflection

Variations

Please see Appendix D for details of variations, including further examples of adapting this exercise to other management education contexts.

Debriefing

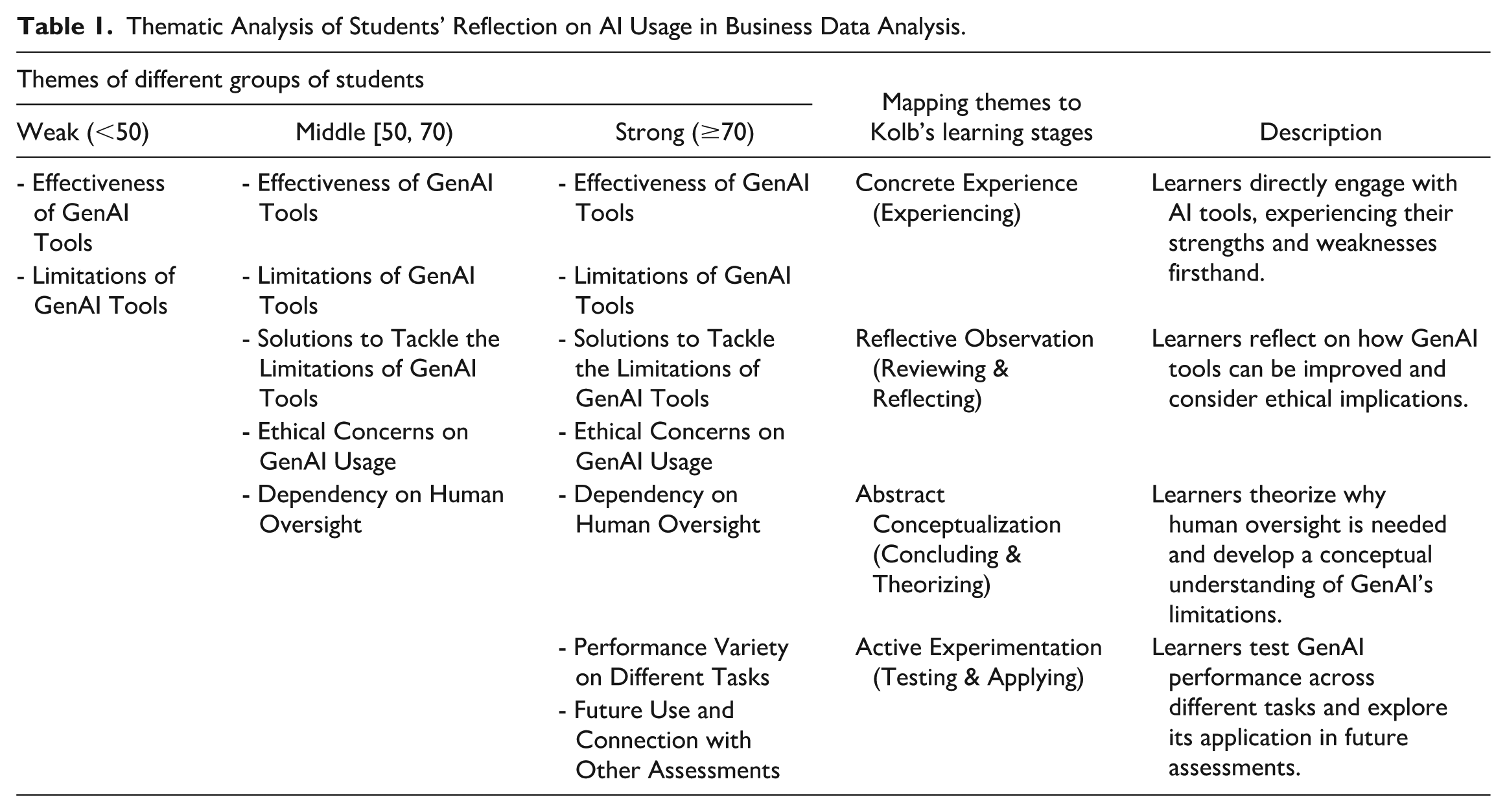

We conducted a thematic analysis of students’ reflections on AI tool usage, mapped to Kolb’s experiential learning cycle (see Table 1). Students were grouped into three performance bands: weak (score below 50), middle (score between 50 and 69), and strong (score of 70 or above). The module includes assessments and coursework tasks that go beyond the use of GenAI, so the final grade reflects students’ overall performance in the module, not only in this exercise. By mapping students’ reflections to Kolb’s framework, we identified distinct patterns in how students engaged with GenAI tools, from direct experience to conceptual understanding and application.
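The banding rule can be written as a small function. This is a sketch with a hypothetical function name, and it assumes scores on a 0 to 100 scale; the article gives the bands for whole-number scores only, so treating a fractional score such as 69.5 as "middle" is an assumption.

```python
def performance_band(score):
    """Map a final score to the performance band used in the thematic analysis."""
    if score < 50:
        return "weak"
    if score < 70:  # covers 50 to 69 for whole-number scores
        return "middle"
    return "strong"


bands = [performance_band(s) for s in (49, 50, 69, 70)]
print(bands)
```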

Table 1. Thematic Analysis of Students’ Reflection on AI Usage in Business Data Analysis.

Across all student groups, the initial interaction with GenAI tools centered on evaluating their effectiveness and limitations. All students engaged directly with GenAI applications, observing both their strengths and weaknesses in business data analysis.

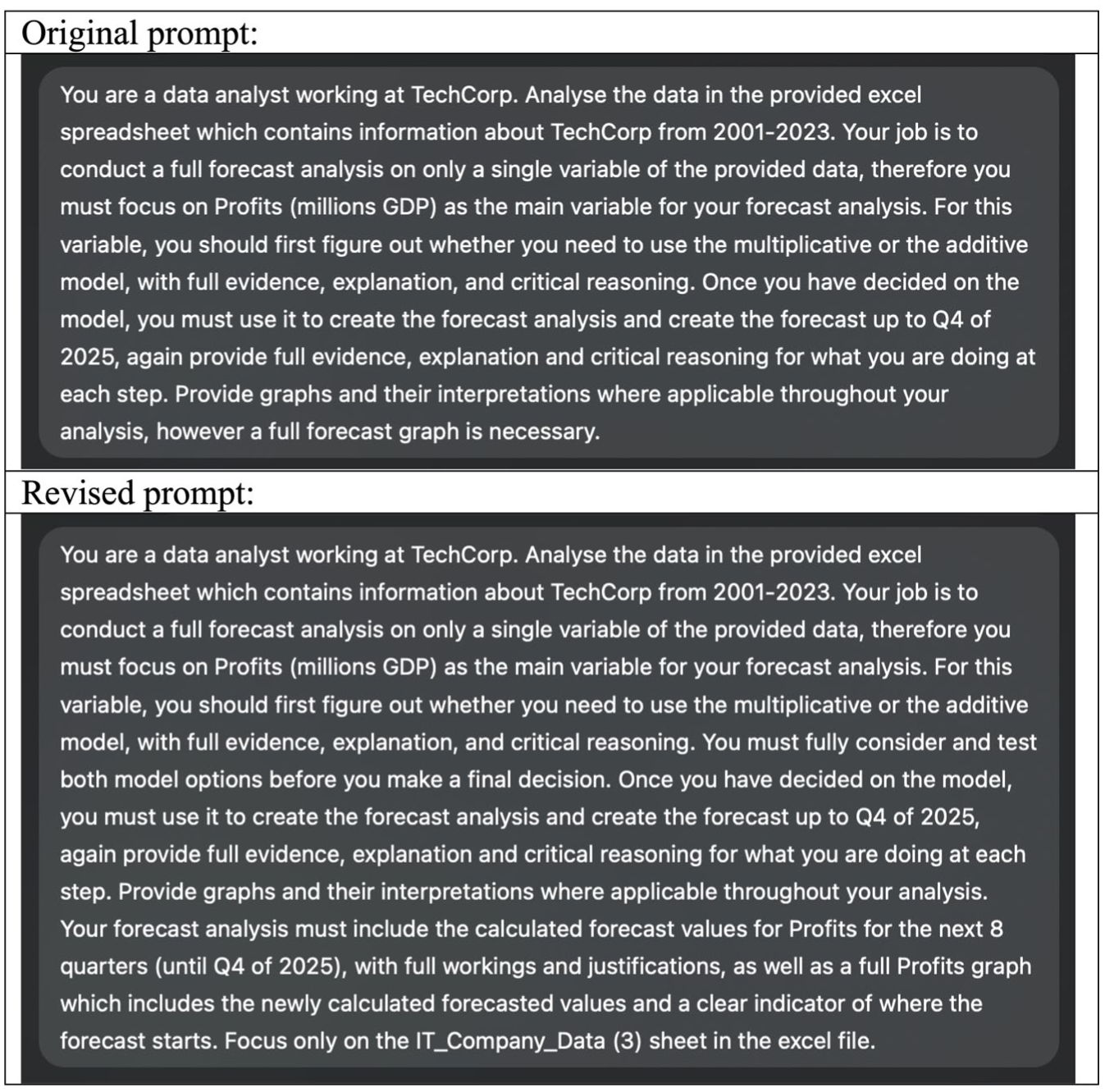

Middle and strong students progressed beyond experiencing, reflectively discussing solutions to GenAI’s limitations (e.g., “

Example of Iterating the Process by Coaching AI to Improve Its Responses Through Updated Prompts and Additional Instructions

Among strong students only, engagement extended further to exploring GenAI’s performance across different tasks (e.g., “

These findings suggest several tips for replication. First, the limitations of GenAI—such as vague or overly confident outputs—can be pedagogically useful. Allowing students to encounter and respond to these imperfections encourages critical thinking and iterative refinement, rather than passive acceptance.

Second, structuring the task around human–AI collaboration shifts focus from outputs to process. Asking students to explain how they used, modified, or rejected GenAI responses helps develop evaluative judgment and reinforces the importance of human oversight.

Third, the choice of dataset shapes ethical reflection. We used fictional data (see Appendix A), but when real company scenarios are included, students can be prompted to consider issues of privacy and data sharing—leading to valuable discussions about ethical responsibility in AI-assisted decision-making.

Conclusion

This exercise enabled students to engage with GenAI tools in business data analysis while critically evaluating their effectiveness. Mapped to Kolb’s experiential learning cycle, student reflections revealed distinct learning patterns. Weak students focused on effectiveness and limitations, while middle and strong students advanced to ethical concerns and human oversight. Strong students further explored GenAI’s performance across tasks and its broader applications. The findings align with Kolb’s theory, as students progress from experience to reflection, conceptualization, and application. The structured assessment ensured GenAI was used not just as a computational tool but as a subject of critical evaluation, reinforcing the necessity of human oversight in AI-driven decision-making. Student feedback on this teaching practice was very positive, and our planned future improvements are summarized in Appendix E.

While these findings have broader relevance for education generally, they are particularly significant for management education, where decision-making under uncertainty, ethical reasoning, and professional judgment are core competencies (Maclagan, 1995). Through engaging with GenAI tools in business data analysis, students not only develop technical data skills but are also exposed to managerial dilemmas that require critical evaluation of AI-generated outputs, balancing conflicting information, and recognizing where algorithmic limitations intersect with human responsibility. In particular, students reflected on issues such as when AI outputs are unreliable, when data require pre-processing, and when GenAI’s inability to handle ambiguity or contextual nuance creates risks in decision-making. These reflections help prepare students to lead organizations where GenAI tools may assist analysis but do not absolve managers from oversight and accountability.

The exercise also creates opportunities for management educators to facilitate deeper ethical discussions. For example, students debated responsibility for AI-generated mistakes, fairness in AI-informed business recommendations, transparency of AI processes, and the risks associated with over-reliance on opaque algorithms. These conversations mirror real organizational challenges managers face when adopting AI-driven decision support systems. In doing so, the exercise supports both technical development and the formation of ethical judgment and leadership capabilities necessary for AI-augmented management practice.

By integrating GenAI tools into a business case study, the assessment fostered both technical proficiency and critical thinking. Future work may explore ways to enhance support for weaker students while enabling stronger students to experiment more broadly with GenAI in management education contexts.

Supplemental Material

Supplemental material, sj-xlsx-1-mtr-10.1177_23792981251389862 for From Collaboration to Critique: Engaging With GenAI to Foster Critical Thinking in Business Analytics by Xue Zhou and Lei Fang in Management Teaching Review

Footnotes

Appendix A

Appendix B

Appendix C

Appendix D

Appendix E

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

References
