Abstract

Protein–protein interactions (PPIs) provide valuable insight into the inner workings of cells, and it is significant to study the network of PPIs. It is vitally important to develop an automated method as a high-throughput tool to timely predict PPIs. Based on the physicochemical descriptors, a protein was converted into several digital signals, and then wavelet transform was used to analyze them. With such a formulation frame to represent the samples of protein sequences, the random forests algorithm was adopted to conduct prediction. The results on a large-scale independent-test data set show that the proposed model can achieve a good performance with an accuracy value of about 0.86 and a geometric mean value of about 0.85. Therefore, it can be a usefully supplementary tool for PPI prediction. The predictor used in this article is freely available at http://www.jci-bioinfo.cn/PPI_RF.

Introduction

Proteins play a vital role in nearly all biological functions, such as composing the cellular structure and promoting chemical reactions. Many critical functions and processes in biology are sustained largely by different types of protein–protein interactions (PPIs), and PPIs are highly relevant to disease states. During the past few years, a vast amount of protein data has received a significant improvement with the rapid development of biotechnology. In recent years, various experimental techniques have been developed for large-scale PPI analysis, such as yeast two-hybrid systems,1,2 mass spectrometry,3,4 protein chips, 5 and so on. But these experimental approaches are tedious, time-consuming, labor-intensive, and expensive. 6 Only a small part of the PPIs’ pairs is analyzed by such methods. 6 Hence, it is important to develop a reliable computational model to relieve the difficulty of the identification of PPIs.

In biology, it is virtually axiomatic that the sequence specifies conformation, which implies an intriguing hypothesis: The amino acid sequence alone might be sufficient to determine the conformation of the protein. 7 Therefore, only the sequence information may be used to predict the interactions between two proteins via machine learning methods. Until now, a number of computational models have been proposed for predicting PPIs using simple protein sequence information alone,8–13 and some impressive performances have been reported. Some methods based on the genomic information, such as phylogenetic profiles,14,15 the gene neighborhood, 16 and gene fusion events,17,18 have been developed for prediction of PPIs by accounting for the pattern of the presence or absence of a given gene in a set of genomes. But they can be applied only to completely sequenced genomes and cannot be used for the essential protein that is common to most organisms. Sequence conservation 19,20 between interacting proteins also has been reported. Martin et al. 21 and Chou 22 et al. have developed computational methods for PPIs identification only via the sequence information and have had a prediction accuracy of 80%. Shen et al. 9 have developed an improved model that reaches a higher prediction accuracy of 83.5% when applied to human PPI identification. All of these methods account for the properties of one amino acid and its proximate two amino acids via a conjoint triad method. In fact, the PPIs may occur in the discontinuous amino acids segments in the sequence, and the prediction ability of these sequence-based methods may be beneficial from the consideration of these interactions. Furthermore, the prediction models used in these methods were developed via limited training samples (often, <3000 protein pairs) but with hundreds of variants. Therefore, they can easily encounter the overfitting problem, and the results are data dependent.

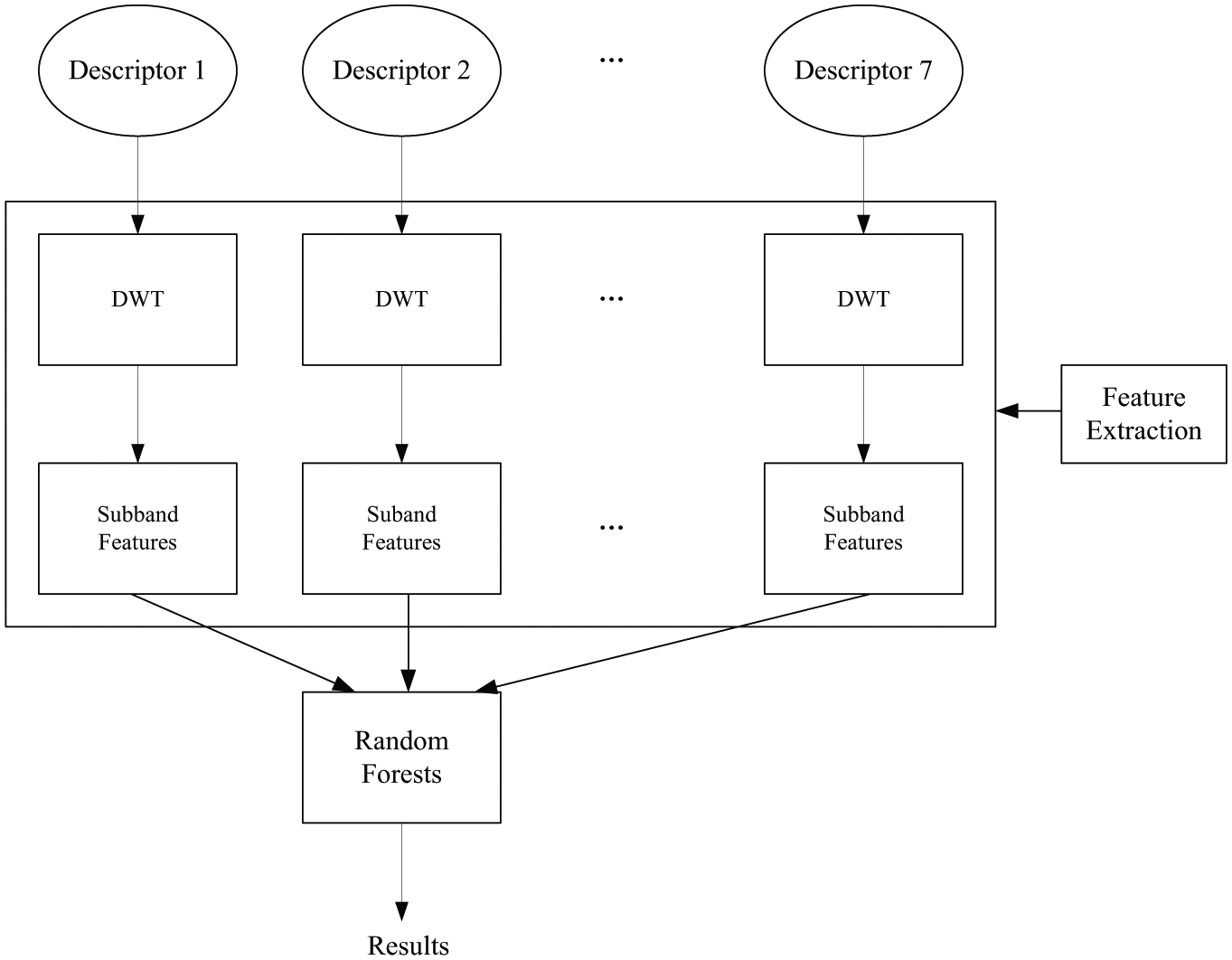

In this study, a new method based on the random forests algorithm and discrete wavelet transform (DWT) 23 with several physiochemical descriptors was proposed. To avoid the problem of overfitting, more than 10,000 PPIs were used to train the prediction model. The method was composed of three main steps. First, the protein sequences were converted into numerical signals by using the physicochemical properties of amino acids, and then these sequences were further analyzed by DWT, through which further relationships of the protein residues were considered. Second, the salient frequency-band features of DWT were extracted, and a series of statistical features was used to construct the feature vectors for representation of the protein sequence. Finally, the random forests algorithm 24 was applied to deal with the classified problem of PPI identification using these statistical feature vectors as inputs. The predictive results of the cross-validation test show that the proposed method is effective.

Materials and Methods

Data Collection and Data Set Construction

To develop a PPI prediction model, we need to construct or select a valid benchmark data set with which to train and test the predictor. The PPI data used in this article were collected from the publicly available Saccharomyces cerevisiae and Helicobacter pylori data sets.

In our experiments, a yeast (S. cerevisiae) data set is first used in independent study, which was downloaded from the Database of Interacting Proteins (DIP; version 20140703). 25 This data set contains 22,775 positive pairs. The protein pairs that contain a protein with fewer than 50 amino acids are removed, and then a nonredundant subset is generated at the sequence identity level of 40% by cluster analysis of the CD-HIT program. 26 After these pre-processing procedures, the total positive data set is reduced to 17,505.

Because the non-interacting pairs are not readily available from DIP, we construct them by using the following methods. The negative data set is generated based on the assumption that proteins locating different subcellular localizations do not interact. The subcellular location information of the proteins was extracted from Swiss-Prot (http://www.expasy.org/sprot/). The positive data may be divided into several types of localization—cytoplasm, nucleus, mitochondrion, endoplasmic reticulum, Golgi apparatus, peroxisome, and vacuole. The negative data were obtained by pairing proteins from one location with proteins from other ones. The strategies must meet the following requirements:9,10 (1) the non-interacting pairs cannot appear in the positive data set; and (2) the contribution of proteins in the negative set should be as harmonious as possible. A total of 5943 negative pairs were generated via this approach.

However, Ben-Hur and Noble 27 have pointed out that the restricting negative examples of different sublocation pairs lead to a biased estimate of the accuracy of a PPI predictor. So it is necessary to generate negative pairs with the same localization to reduce the effects of the bias. The protein pairs at the same localization are considered as the negative pairs if none of them has occurred in the yeast-positive pairs. From the seven sublocalizations, 27,204 negative pairs are generated (8000 at the cytoplasm, 8000 at the nucleus, 8284 at the mitochondrion, 1953 at the endoplasmic reticulum, 300 at the Golgi apparatus, 171 at the peroxisome, and 496 at the vacuole).

The H. pylori data set is composed of 2916 protein pairs (1458 positive pairs and 1458 negative pairs) as described by Maritin. 21 This data set was used to test the sensitivity of the parameters of the model, and it gives a comparison of our predictor with other ones.

Feature Extraction

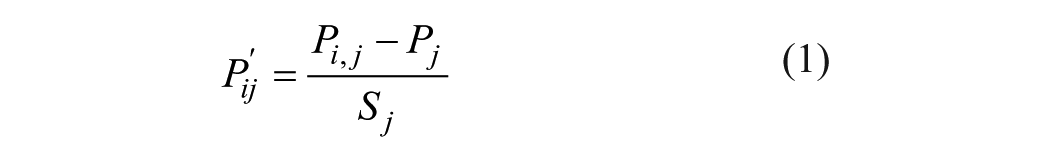

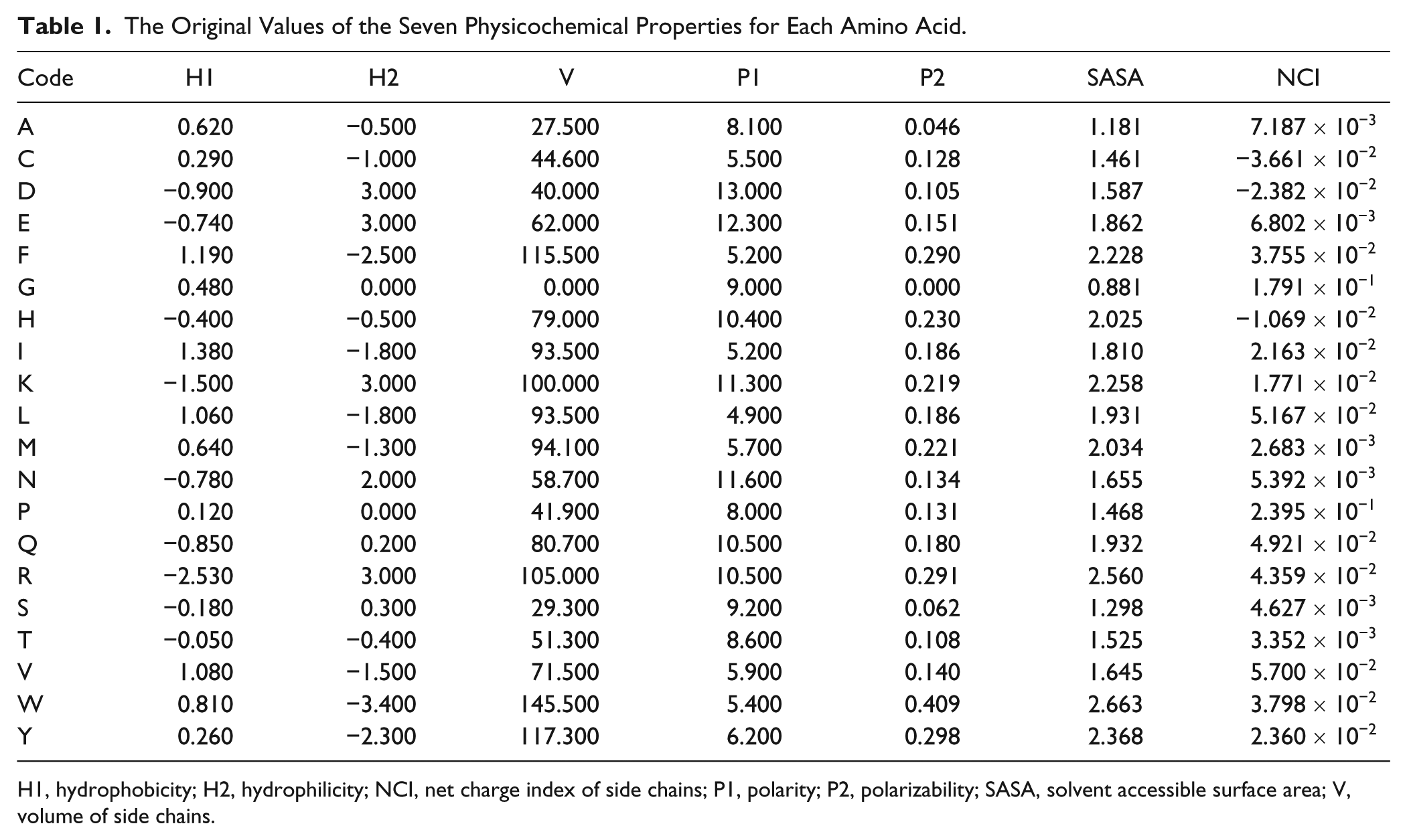

One important step to predict PPIs by using sequence information is to find a suitable encoding of the protein sequence. This means converting the protein sequence to a vector space. In this article, the physicochemical properties of amino acids were selected to translate a protein sequence to seven vectors. The seven physicochemical descriptors are hydrophobicity, 28 hydrophicility, 29 volumes of side chains of amino acids, 30 polarity, 31 polarizability, 32 solvent-accessible surface area (SASA), 33 and the net charge index (NCI) of side chains of amino acids. 34 The original values of the seven descriptors for each amino acid are listed in Table 1 . We first normalized them to zero mean and unit standard deviation according to the following equation:

where Pi,j is the jth descriptor value for the ith amino acid; Pj is the mean of the jth descriptor over the 20 amino acids; and Sj is the corresponding standard deviation.

The Original Values of the Seven Physicochemical Properties for Each Amino Acid.

H1, hydrophobicity; H2, hydrophilicity; NCI, net charge index of side chains; P1, polarity; P2, polarizability; SASA, solvent accessible surface area; V, volume of side chains.

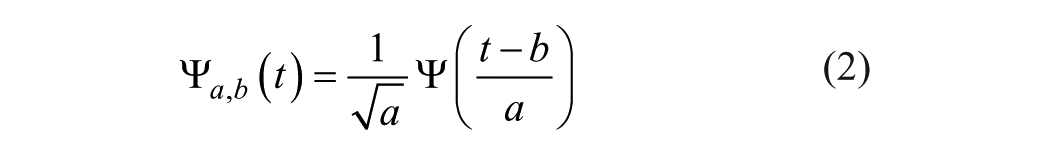

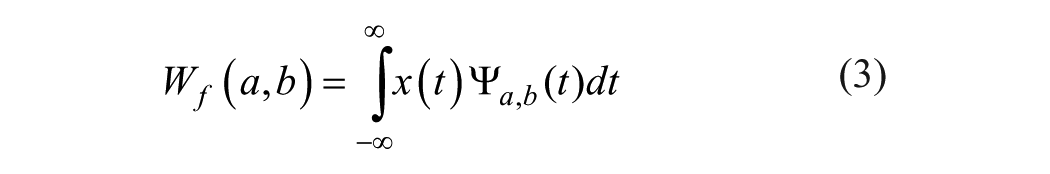

After we obtained these seven vectors, they were regarded as seven digital signals. Discrete wavelet transform was used to deal with them. Wavelet transform is a multiresolution analysis tool. 35 It has become very popular when it comes to analysis, and de-noising and compressing signals and images. Wavelet transform decomposes a signal into a set of basic functions. These basic functions are called wavelets. Wavelets are obtained from a single prototype wavelet-call mother wavelet by dilations and shifting:

where

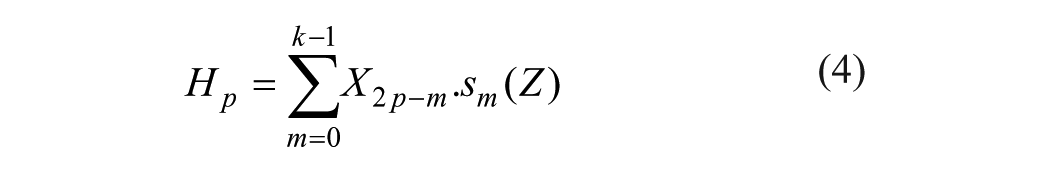

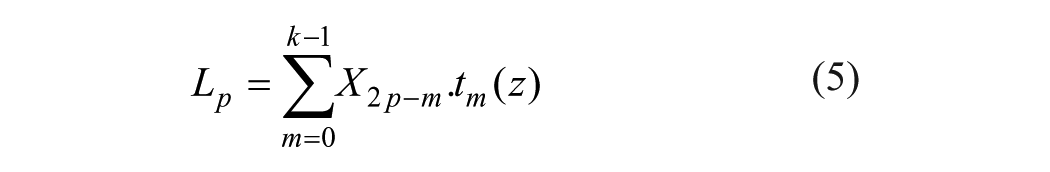

where x(t) is the decomposed signal. DWT transforms discrete time signals to a discrete wavelet representation. It converts an input series X0, X1,… Xn into one high-pass wavelet coefficient series and one low-pass wavelet coefficient series (of length m/2 each), given by, respectively:

where

Procedure of multilevel discrete wavelet transform (DWT). Three levels of DWT are shown in the figure, and we can get four subbands.

After the decomposition of the seven vectors via DWT, the wavelet coefficients can be used as the protein feature vector. But if we did it like this, an overfitting problem from the vast feature vector will occur. To further reduce the dimensionality of the feature vectors, statistics were used over the set of the wavelet coefficients of each subband. The following statistical features of each subband were used for the prediction of PPIs:

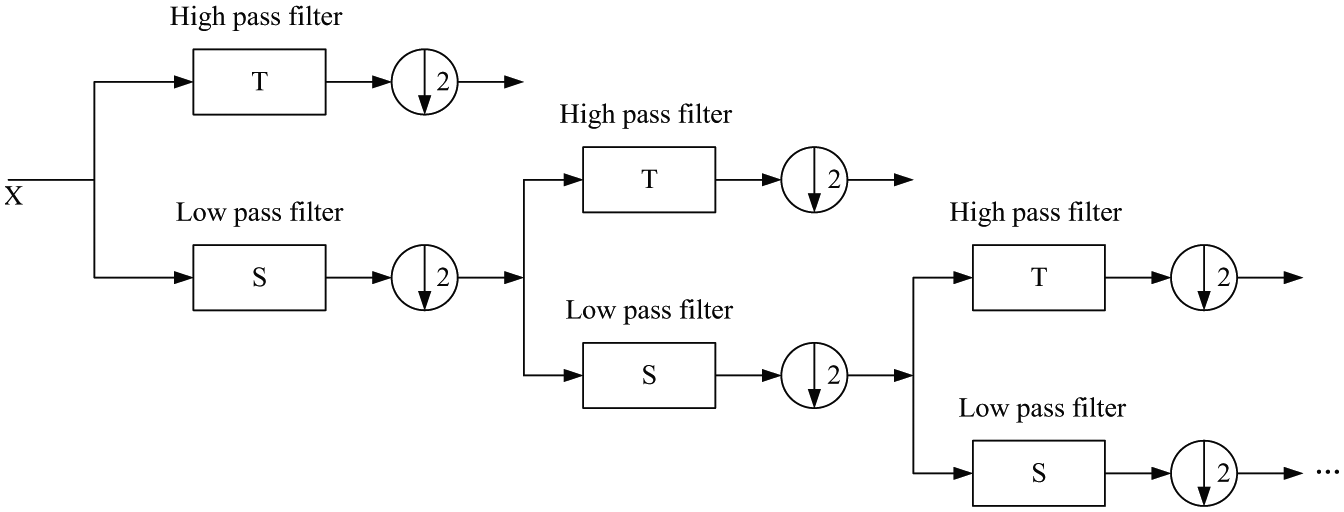

(1) Maximum of the wavelet coefficients in subband q

(2) Mean of the wavelet coefficients in subband q

(3) Minimum of the wavelet coefficients in subband q

(4) Standard deviation of the wavelet coefficients in subband q

where eq is the wavelet coefficients at the subband q; and nq is the number of wavelet coefficients at the subband q. This method is the same as the random subspace approach, especially in the aspect of selecting the optimal feature vector. In this article, the decomposition level λ = 4, which is similar to that in Ref. 37 , was selected to represent a protein for prediction of PPIs, and a 20-dimensional feature vector can be obtained for each protein descriptor vector. Finally, a 20×7×2 dimensional feature vector, where 7 was the number of physicochemical descriptors used in our study and 2 was the number of proteins included in PPI pairs, was input to the learning system for prediction.

Random Forests Algorithm

Random forests algorithm, developed by Leo Breiman, 24 is an ensemble of unpruned classification and regression trees that operates by constructing a multitude of decision trees at the training time and outputting the final class that is the majority vote of the classes output by individual trees. These trees are generated by bootstrap samples of the training data and by using random feature selection in the tree generation process. Random forests algorithm usually exhibits a remarkable improvement of performance compared to single decision tree classifiers such as CART and C4.5, 38 which are often used as the base learner in random forests algorithm. Furthermore, random forests algorithm shows a good generalization error rate when compared to Adaboost and is more robust to noise. In our experiments, 200 trees are used in our model as the computational cost and overfitting problem are considered. The schematic diagram of the proposed method is shown in Figure 2 .

Framework of the proposed framework. Seven physicochemical descriptors and discrete wavelet transform (DWT) are used to describe the protein. Random forests algorithm is used for prediction.

Evaluation Measures

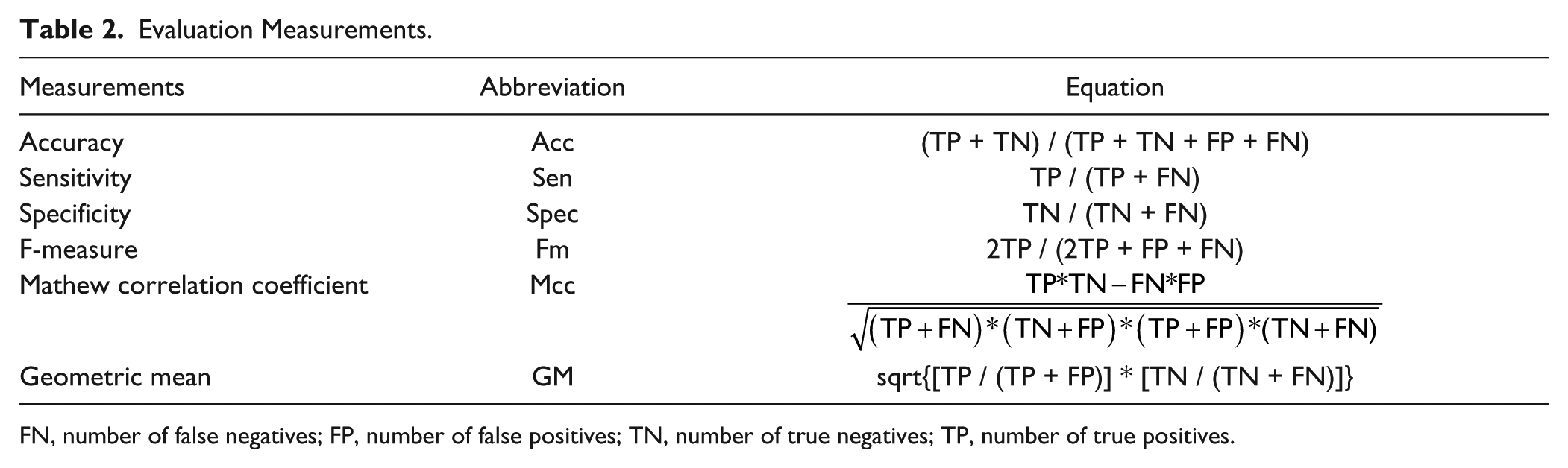

In the literature, six metrics were often used to score the quality of a predictor at seven different angles; these include accuracy (Acc), sensitivity (Sen), specificity (Spec), and the F-measure (Fm). These measures are defined in Table 2 . Sensitivity (Sen) and specificity (Spec) illustrate the correct prediction ratios of positive and negative data sets, respectively. The overall accuracy (Acc) is measured as the average of sensitivity (Sen) and specificity (Spec). But when the numbers of positive data and negative data differ too much from each other, the Mathew correlation coefficient (MCC) should be calculated to assess the prediction performance. The value of MCC ranges from −1 to 1, and a bigger MCC stands for better prediction performance.

Evaluation Measurements.

FN, number of false negatives; FP, number of false positives; TN, number of true negatives; TP, number of true positives.

With a set of clear and valid metrics as defined in Table 2 to measure the quality of a predictor, the next thing we need to consider is how to objectively derive the values of these metrics for a predictor.

In statistical prediction, the following three cross-validation methods are often used to calculate the metrics in Table 2 for evaluating the quality of a predictor: an independent data set test, a subsampling (e.g., 2-, 5-, or 10-fold cross-validation) test, and a jackknife test. The jackknife test was deemed the least arbitrary; it can always yield a unique result for a given benchmark data set. Therefore, the jackknife test has been increasingly and widely adopted by investigators to test the power of various prediction methods. However, to reduce the computational time, we adopted the 5-fold cross-validation or 10-fold cross-validation in this study that was performed by many investigators with random forests algorithm as the prediction engine. In k-fold cross-validation, the original sample is randomly partitioned into k subsamples. Of the k subsamples, a single subsample is retained as the validation data for testing the model, and the remaining subsamples are used as training data. The cross-validation process is then repeated k times (the folds), with each of the k subsamples used exactly once as the validation data. The advantage of this method is that all observations are used for both training and validation; it has high computational efficiency and thus has been used often in protein attributes prediction model checking. However, as can be seen, because the partition is random, the result is variable.

Web Server and User Guide

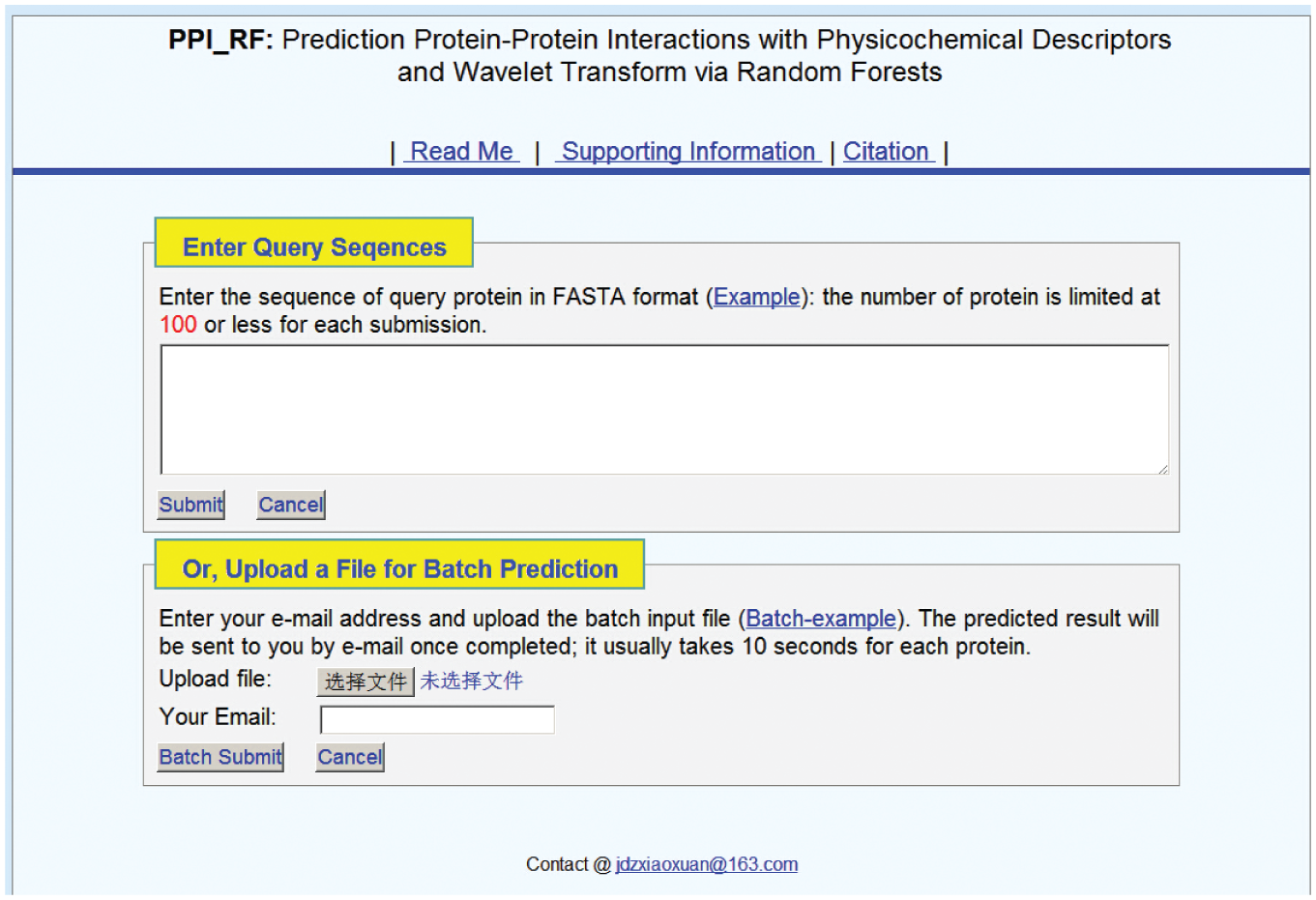

To enhance the value of the PPI predictor’s practical applications, a web server for it was established at http://www.jci-bioinfo.cn/PPI_RF. Moreover, for the convenience of the vast majority of experimental scientists, a step-to-step guide is provided here for how to use the web server predictor:

Step 1: Go to http://www.jci-bioinfo.cn/PPI_RF, and you will see the top page of the predictor on your computer screen, as shown in Figure 3 . Click on Read Me to see a brief introduction about the PPI predictor.

Step 2: When the predicted PPIs are only in several protein sequences, you can either type or copy/paste the query protein sequence into the input box at the top half of Figure 3 . It is important to note that the input sequence should be in the FASTA format. A sequence in FASTA format consists of a single initial line beginning with a greater-than symbol (>) in the first column, followed by lines of sequence data. The words right after the > symbol in the single initial line are optional and only used for the purposes of identification and description.

Step 3: To get the predicted result, you only need to click on the

Screenshot of the PPI Predictor Web Server.

As regards the computational time, the work will be accomplished within 15s in most cases. However, the length of the sequence is the key crucible for time consumption; the longer the query protein sequence is, the more time is usually needed.

Step 4: As shown in the lower panel of Figure 3 , you may also choose batch prediction by entering your e-mail address and your desired batch input file (in FASTA format) via the Browse button. To see a sample of a batch input file, click on the Batch-Example button.

Step 5: By clicking the Citation button, you will find the relevant papers that document the detailed development of the predictor.

Step 6: Click on the Supporting Information button to download the benchmark data set used to train and test the PPI predictor.

Results and Discussion

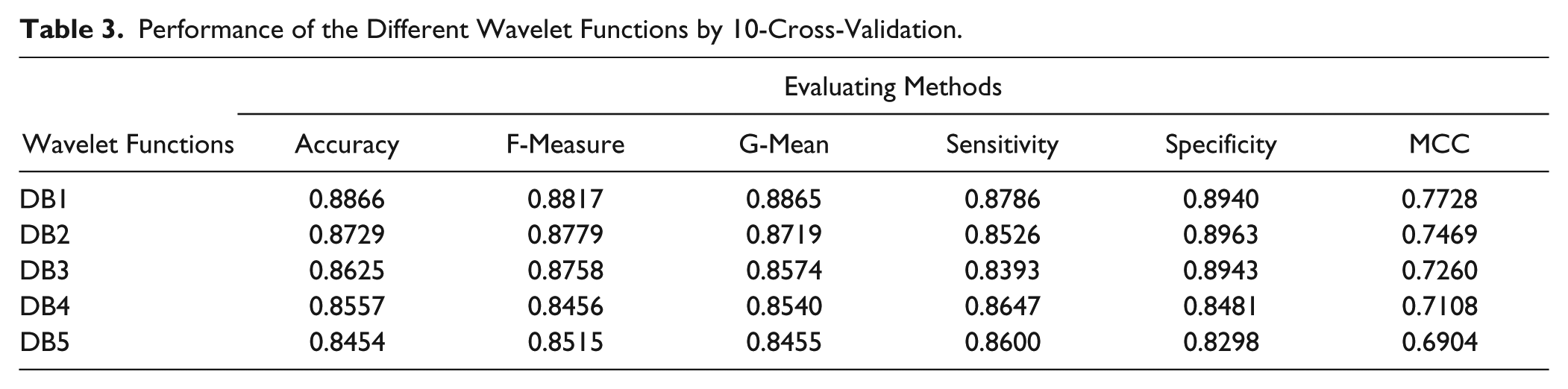

Effect of Wavelet Functions

In this section, the influence of the wavelet function was analyzed because a suitable wavelet basis can match the underlying structure of the signal better, and better features can be extracted from the original protein sequences. Some properties of the wavelet basis, such as compact support, orthogonality, symmetry, smoothness, and a high order of vanishing moments, must be considered for signal processing. It is hoped that the wavelet functions would own these mentioned properties. However, there are many conflicting conditions that restrict the selection of them. None of the wavelet basis functions possesses all of these desirable properties simultaneously. In recent decades, Daubechies constructed a class of orthonormal wavelet basis functions with compact support and smooth properties. In this study, five Daubechies 23 were tested: Daubechies of number 1 (Db1), number 2 (Db2), number 3 (Db3), number 4 (Db4), and number 5 (Db5). As seen in Table 3 , the training accuracy reached 0.8542 when using the random forests algorithm as the classifier with the DB1 wavelet function used to extract the features. The number of trees in random forests is 200, and the number of mtrys (dimension of subspace) is 45. However, when other wavelet functions are used, the training accuracies range from 0.8454 to 0.8866. Moreover, other performance measures, such as geometric means, sensitivity, specificity, F-measure, and MCC, were also investigated. Table 3 shows that DB1 was also the best one. These results may be caused by a property that the DB1 wavelet possesses: a lower vanish moment. More non-zero coefficients are generated after the decomposition, and the diverse trees used in random forests are easy obtained because the diversity of component learners is necessary. 40 But for a single learner, a higher-vanish-moment wavelet function such as DB4 is needed. In this study, the DB1 wavelet function was selected as the appropriate wavelet function in our experiments.

Performance of the Different Wavelet Functions by 10-Cross-Validation.

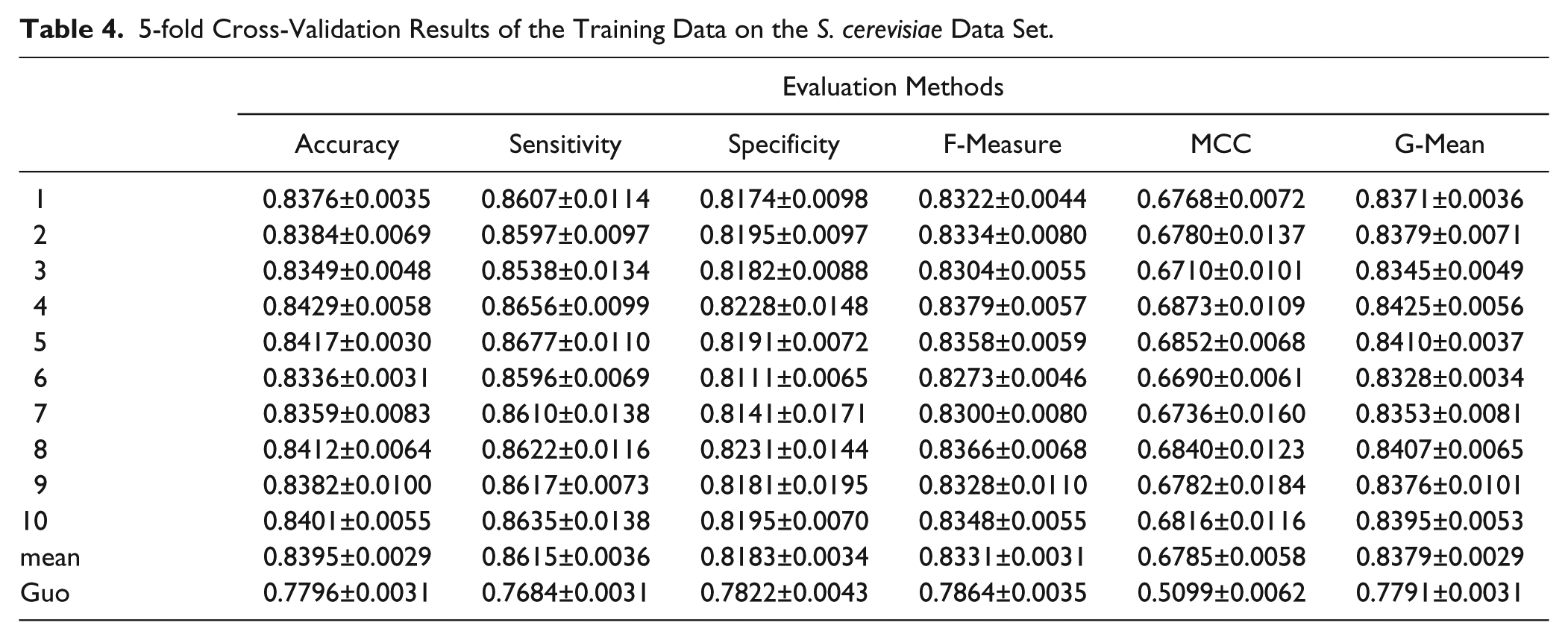

Performance on the S. cerevisiae Data Set

The proposed predictor was first applied to the S. cerevisiae data set. The data set consisted of 17,505 positive pairs and 27,204 negative pairs; 5943 positive pairs and 5943 negative pairs were randomly selected from S. cerevisiae as the training data set. The remained ones were used as the independent-test data set. A 5-fold cross-validation was used to evaluate the predictor on the training data set, and the procedure was repeated 10 times. The results from the training data set are shown in Table 4 .

5-fold Cross-Validation Results of the Training Data on the S. cerevisiae Data Set.

From the results shown in Table 4 , we can see that the proposed model achieves a good performance on the training data set. The average results of the model are 0.8395 for accuracy, 0.6785 for the MCC, 0.8379 for the geometric mean, 0.8331 for the F-measure, 0.8615 for sensitivity, and 0.8183 for specificity.

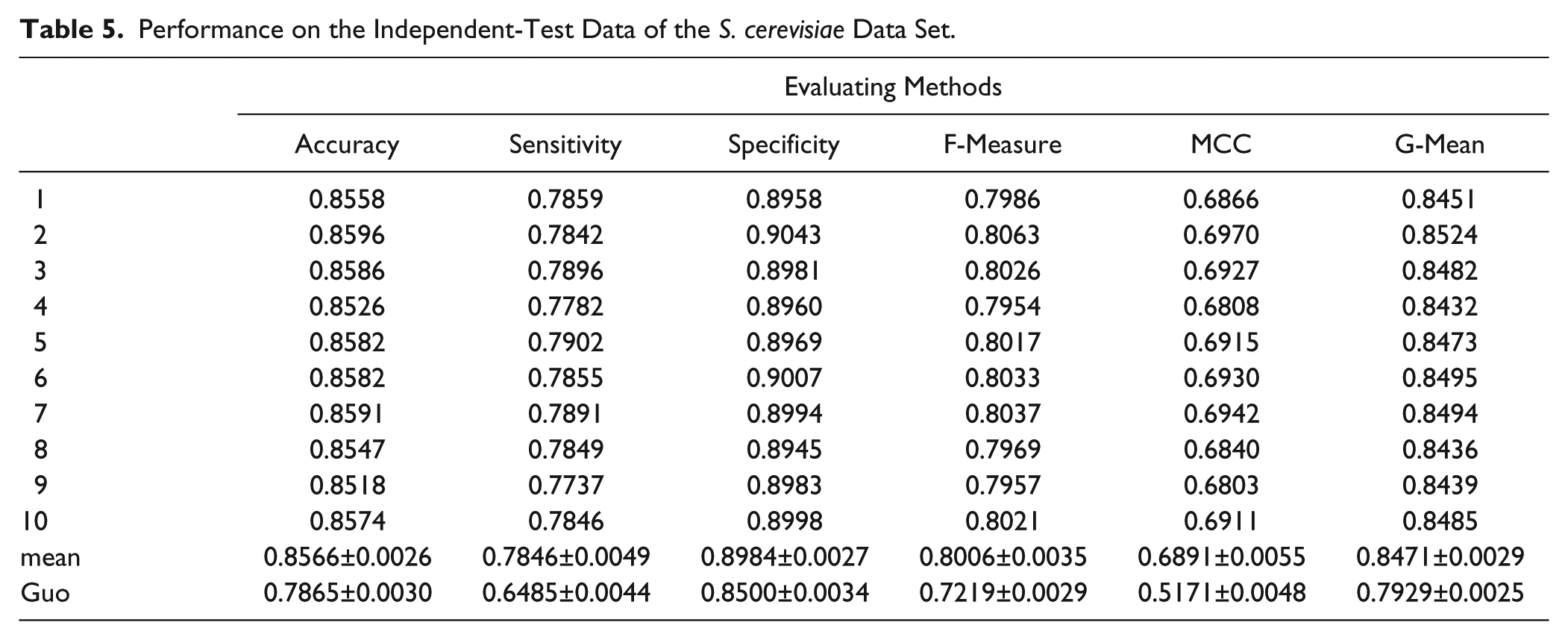

After the 5-fold cross-validation, the independent-test data set, the remaining pairs of the data set, was also applied to further evaluate the proposed predictors. In the test data, 11,562 positive samples and 21,261 negative samples are included. The experimental results are shown in Table 5 .

Performance on the Independent-Test Data of the S. cerevisiae Data Set.

From the results shown in Table 5 , we can see that the proposed model achieves a good performance on the testing data set. The average results of the predictor on the test data set are 0.8566 for accuracy, 0.6891 for the MCC, 0.8471 for the geometric mean, 0.8006 for the F-measure, 0.7846 for sensitivity, and 0.8984 for specificity.

Compared with Other Methods

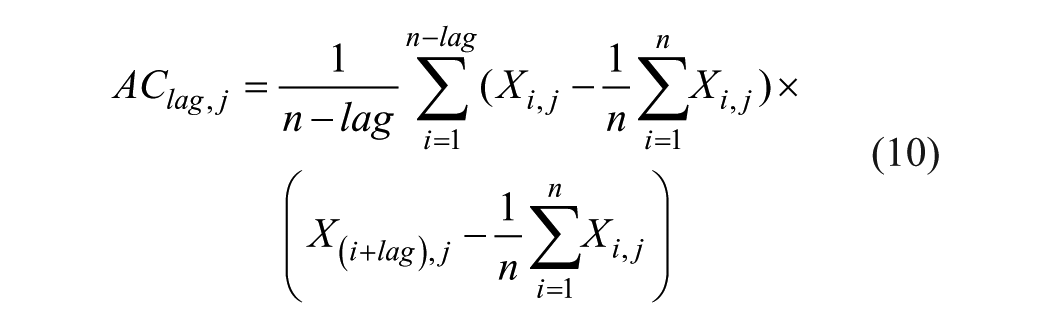

In addition, we compared the effectiveness of our proposed model with the method proposed by Guo. 10 The model also used only the sequence features to predict the PPIs. The auto-covariance (AC) features and support vector machine (SVM) are used for prediction. AC accounts for the interactions between residues that are a certain distance apart in the sequence, so this model mainly takes the neighboring effect into account. The definition of AC is as follows:

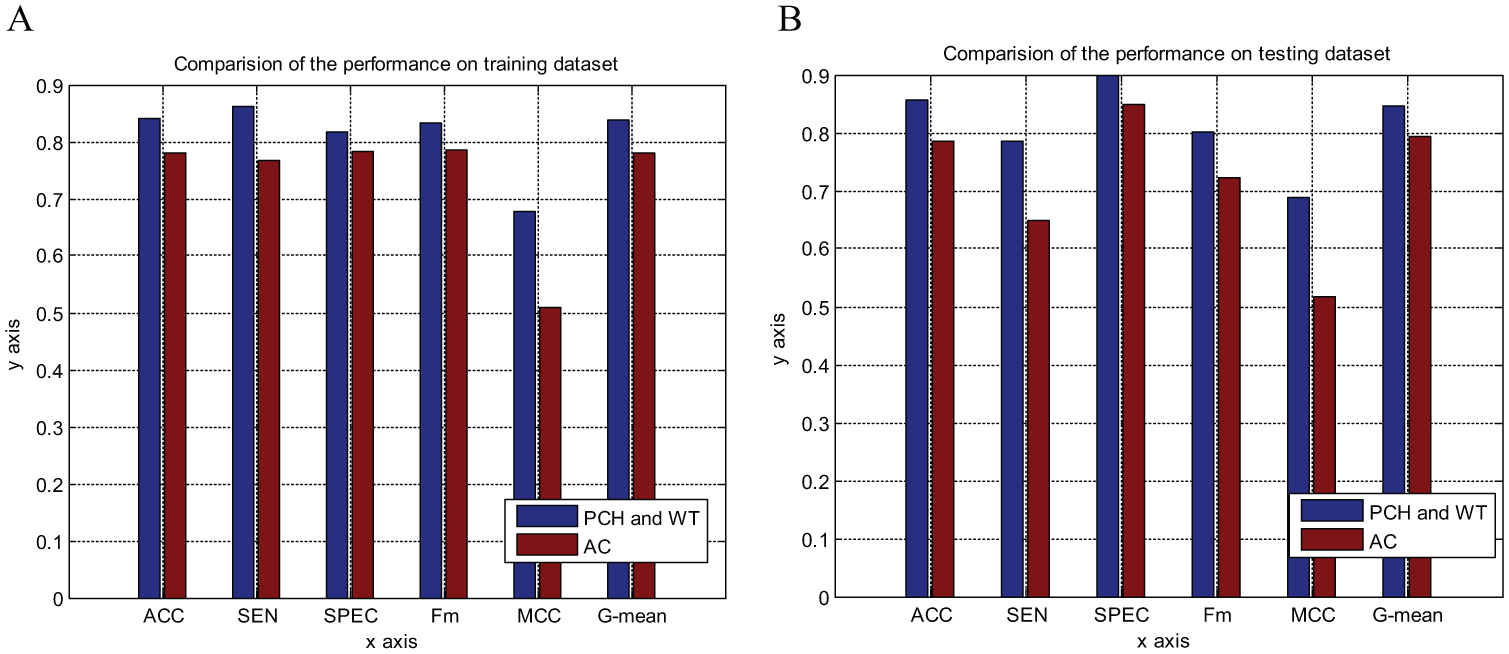

where j represents one descriptor such as the physiochemical descriptors of the amino acids, which composed the protein; i is the position in sequence X; n is the length of sequence X; and lag is the value of the lag (the maximum distance between an amino acid residue and its neighbor with a certain number of residues away). In this work, a protein pair is converted into a 420-dimensional (2 × 30 × 7) vector by AC with a lag of 30 amino acids, where 2 is the number of two protein sequences and 7 is the number of descriptors. The experimental results on training data and testing data are shown in Figure 4 .

Comparison of the proposed predictor with Guo’s predictor (

From Figure 4 , we can see that the performance of the proposed model is better than that of the AC model, especially regarding the MCC value. This means that the proposed model has higher accuracy with both negative and positive samples, and possesses better prediction ability. Furthermore, we must point out that the number of extracted features of our model is only 280, which is less than the AC model has (with 420 features). So we use less features and computational costs, but get a better performance.

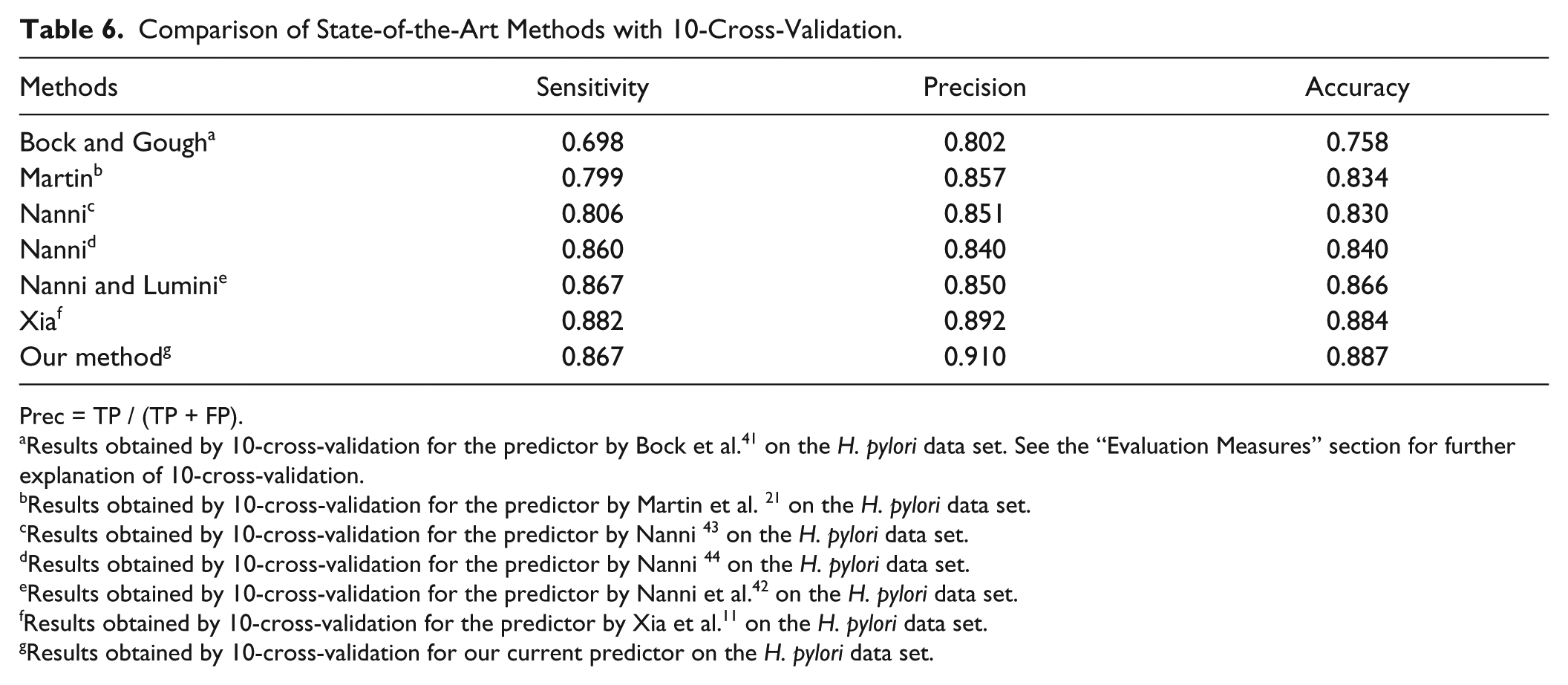

In this section, we compared the results of the proposed method with those of the existing methods on the H. pylori data set. The results of 10-fold cross-validation over several different methods11,21,41–44 on the H. pylori data set are shown in Table 6 . In Boch and Gough’s approach,41,45 several structural and physiochemical descriptors with SVM as the classifier were used to predict PPIs. And, in the method of Martin et al., 21 a novel descriptor called a signature product was developed, which is a product of subsequence and an expansion of a signature descriptor from chemical informatics to infer PPIs. Nanni developed a PPI predictor base on a K-local hyperplane. 44 In Nanni and Lumini’s paper, 42 they developed an ensemble of K-local hyperplanes for predicting PPIs. In another article, Nanni 43 designed a feature vector based on 2 g, and then input it into linear discriminant classifiers for the prediction of PPIs. Nanni and Lumini 42 fused some hyperplane distance nearest-neighbor classifiers to identify PPIs. Xia 11 developed a sequenced-based predictor based on an autocorrelation descriptor and rotation forests.

Comparison of State-of-the-Art Methods with 10-Cross-Validation.

Prec = TP / (TP + FP).

Results obtained by 10-cross-validation for the predictor by Bock et al. 41 on the H. pylori data set. See the “Evaluation Measures” section for further explanation of 10-cross-validation.

Results obtained by 10-cross-validation for the predictor by Martin et al. 21 on the H. pylori data set.

Results obtained by 10-cross-validation for the predictor by Nanni 43 on the H. pylori data set.

Results obtained by 10-cross-validation for the predictor by Nanni 44 on the H. pylori data set.

Results obtained by 10-cross-validation for the predictor by Nanni et al. 42 on the H. pylori data set.

Results obtained by 10-cross-validation for the predictor by Xia et al. 11 on the H. pylori data set.

Results obtained by 10-cross-validation for our current predictor on the H. pylori data set.

We can observe that our method clearly achieves the best results for accuracy and precision compared to the other four approaches. Only the sensitivity was slightly lower than with Xia’s methods. The results for the two data sets showed that the proposed predictor was a useful supplementary tool for PPI prediction.

Conclusion

In this work, a new PPI prediction model is proposed that uses only the primary sequences of proteins. The protein features are extracted by using the physicochemical descriptor and DWT, and random forests algorithm is used for prediction. We evaluate the model on large-scale test data. The prediction results clearly show that our model is effective in PPI prediction. Furthermore, fewer features are used in the model, but better performance can be achieved. The PPI predictor is available on a public server (http://www.jci-bioinfo.cn/PPI_RF).

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by the National Nature Science Foundation of China (Nos. 61261027, 61262038, 31260273, and 61202313); the Natural Science Foundation of Jiangxi Province, China (Nos. 20122BAB211033, 20122BAB201044, and 20132BAB201053); the Scientific Research Plan of the Department of Education of Jiangxi Province (GJJ14640); and the Young Teacher Development Plan of the Visiting Scholars Program, University of Jiangxi Province. The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.