Abstract

We present a software platform developed by Genentech and MathWorks Consulting Group that allows arbitrary MATLAB (MATLAB is a registered trademark of The MathWorks, Inc.) functions to perform supervisory control of process equipment (in this case, fermentors) via the OLE for process control (OPC) communication protocol, under the direction of an industrial automation layer. The software features automated synchronization and deployment of server control code and has been proven to be tolerant of OPC communication interruptions. Since deployment in the spring of 2010, this software has successfully performed supervisory control of more than 700 microbial fermentations in the Genentech pilot plant and has enabled significant reductions in the time required to develop and implement novel control strategies (months reduced to days). The software is available for download at the MathWorks File Exchange Web site at http://www.mathworks.com/matlabcentral/fileexchange/36866.

Many businesses employ advanced process modeling and control algorithms as a part of process development.1,2 It is uncommon to encounter laboratory fermentor controllers or industrial controllers that can readily accommodate sophisticated model-based control algorithms or online optimization.3,4 Recent Food and Drug Administration guidelines 5 encouraging the use of process modeling will result in greater adoption of these techniques by the biopharmaceutical industry; therefore, methods that smooth the linkage from model to product 6 will have an increasingly positive impact on future process development efforts.

As part of a recent overhaul of Genentech’s microbial pilot plant, the existing automation layer was replaced with an industrial control architecture based on DeltaV (Emerson Process Management, St. Louis, MO) that uses OLE for process control (OPC) to communicate with existing process controllers. The DeltaV automation system performs control loop monitoring and executes automated process sequences (e.g., sterilization, clean-in-place, etc.). This architecture provides a significant improvement in reliability and data monitoring relative to the previous system, but it initially left a functionality gap for control algorithm development. The previous SCADA 7 system provided a plug-in architecture that was exposed to the user. This plug-in system allowed process researchers to quickly design and implement supervisory control algorithms. It became apparent with the move to a DeltaV-based architecture that biochemical process researchers were unable to efficiently implement, debug, and deploy code using an industrial automation platform without intimate interaction with automation engineering staff. The introduction of additional interdepartment interaction and translation of concepts from the biological perspective to the automation perspective would unnecessarily slow development. As a result, Genentech set out to define a flexible, rapid, and researcher-serviceable supervisory layer to augment the industrial control system. Genentech chose to work with an outside vendor (MathWorks Consulting Group, Natick, MA) that developed such a supervisory control layer that transparently interfaced with the new DeltaV automation network.

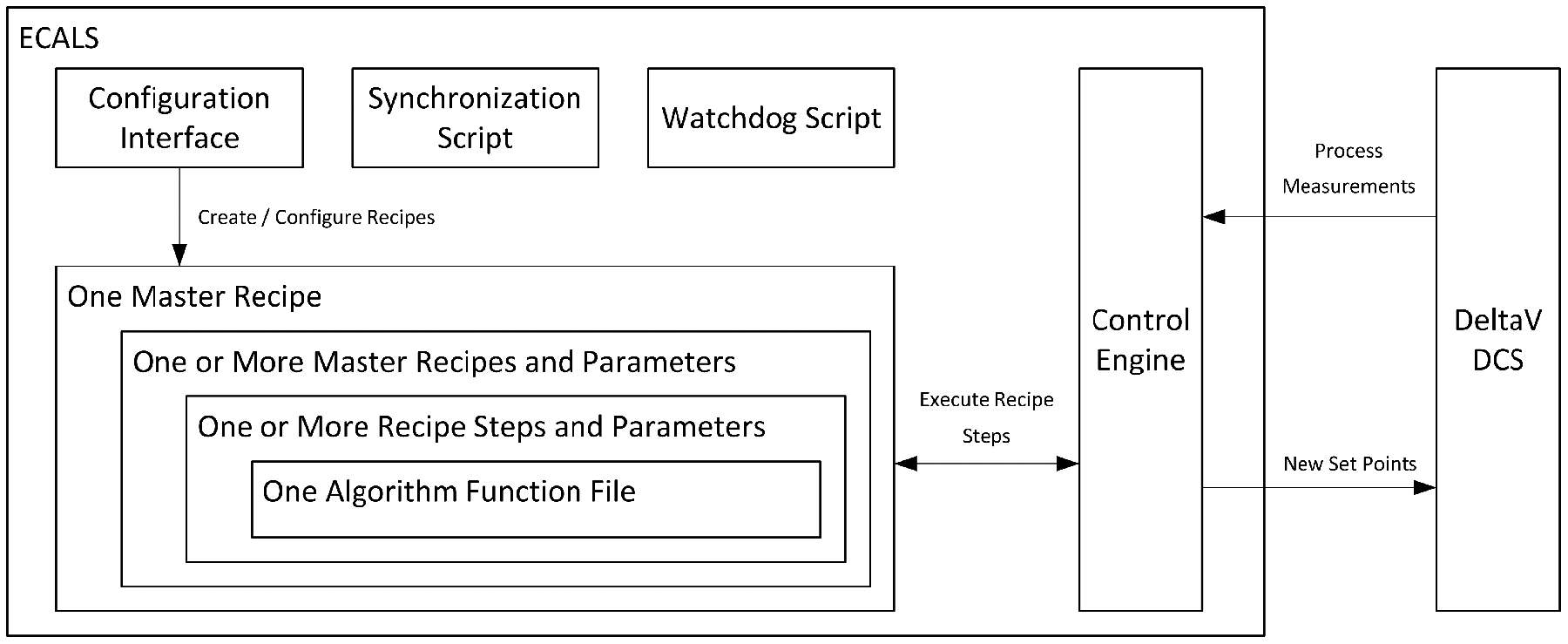

The objective of the present work was to develop a system that allows arbitrary functions to be written in MATLAB (a software application often used for control theory research), packed into recipes and recipe groups, deployed to a supervisory control engine running on a remote server, and then reliably executed during a fermentation (Fig. 1). The pilot plant operations staff interacts only with a standard DeltaV human-machine interface (HMI). The system uses an OPC communication layer to trigger the loading of the appropriate group of functions, the mapping of the inputs and the outputs of those functions to OPC data tags, and the execution of those functions at a customizable frequency. The software presented here is called ECALS.

Figure 1. Software components. Each fermentation executes a single master recipe, which controls execution of multiple recipes that execute in parallel. Each recipe may have multiple steps that execute in series. Recipe steps control execution of a single researcher-authored control algorithm as an arbitrary function file. The ECALS application manages communication with the automation network. System scripts outside of the ECALS application maintain synchronization of researcher-generated control functions and watch for unforeseen system failures.

System Overview

The overall system implemented in the Genentech microbial pilot plant consists of a series of Sartorius fermentors with working volumes of 10 L and 100 L, plus one with a working volume of 1000 L, each controlled by a digital control unit (DCU). The DCU is responsible for the basic I/O interface with the fermentors’ instrumentation and control loops (e.g., temperature, pH, etc.). The DeltaV software controls the DCU at a supervisory level by sending control loop set points and executing automated sequences.

The ECALS system consists of four components (Fig. 1). A graphical configuration interface is used to define the recipes, and a control engine is used to schedule and run the recipes. These two components were implemented using object-oriented programming concepts in the MATLAB software environment. In addition, there are two supervisory system shell scripts that ensure continuous uptime and perform plantwide code synchronization. These scripts were implemented as PowerShell (Microsoft, Redmond, WA) scripts. PowerShell is a system-scripting language developed as a modern replacement for batch files, and it is present by default on new servers and workstations running the Microsoft Windows operating system.

The design for the ECALS recipe structure was adapted from the ANSI/ISA-88 procedural model and was predicated on the use of modular design features. The supervisory algorithms are layered in three reusable organizational tiers (Fig. 1). The lowest level consists of control algorithms, which are MATLAB function files that have an arbitrary number of data streams, inputs, and outputs. The MATLAB files are also configured to have parameters that do not originate in the automation layer but are customizable at the next ECALS organizational layer, the recipe step. The recipe step encapsulates the function file and maps its data streams to OPC tags in the automation layer. A collection of recipe steps is organized into a recipe, and a group of recipes is a “master recipe,” the highest organizational level. The master recipe is executed during the course of a fermentation.

This multitiered organization allows for flexible reuse of components. For example, one might have an advanced alarming recipe to be used in every master recipe, but one could include different control recipes to implement specific control algorithms for each experiment. Furthermore, recipes may be defined to be “initialization” recipes that execute at the start of an experiment, “main” recipes that execute only after all initialization recipes complete, or “cleanup” recipes that execute only when a termination signal is received. This permits useful flexibility in experiment design.
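The tiers and recipe kinds described above can be pictured as a small data model. The sketch below is written in Python purely for illustration (ECALS itself is implemented in MATLAB), and every class, field, function, and recipe name in it is hypothetical rather than taken from the ECALS source.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class RecipeKind(Enum):
    INITIALIZATION = "initialization"
    MAIN = "main"
    CLEANUP = "cleanup"


@dataclass
class RecipeStep:
    """Wraps one control algorithm and maps its data streams to aliases."""
    algorithm: Callable[..., Dict[str, float]]   # researcher-authored function
    input_aliases: Dict[str, str]                # argument name -> alias
    output_aliases: Dict[str, str]               # result key -> alias
    parameters: Dict[str, float] = field(default_factory=dict)


@dataclass
class Recipe:
    name: str
    kind: RecipeKind
    steps: List[RecipeStep]                      # steps execute in series


@dataclass
class MasterRecipe:
    name: str
    recipes: List[Recipe]                        # recipes execute in parallel

    def of_kind(self, kind: RecipeKind) -> List[Recipe]:
        return [r for r in self.recipes if r.kind is kind]


def execution_phases(master: MasterRecipe) -> List[List[str]]:
    """Group recipe names by phase: initialization, then main, then cleanup."""
    order = [RecipeKind.INITIALIZATION, RecipeKind.MAIN, RecipeKind.CLEANUP]
    return [[r.name for r in master.of_kind(kind)] for kind in order]


def feed_rate(dissolved_oxygen: float, gain: float) -> Dict[str, float]:
    """Toy control algorithm used only to populate the example."""
    return {"feed": gain * dissolved_oxygen}


feed_step = RecipeStep(
    algorithm=feed_rate,
    input_aliases={"dissolved_oxygen": "Dissolved_Oxygen"},
    output_aliases={"feed": "Feed_Rate"},        # hypothetical alias
    parameters={"gain": 0.5},
)
demo = MasterRecipe("demo", [
    Recipe("nutrient_feed", RecipeKind.MAIN, [feed_step]),
    Recipe("load_config", RecipeKind.INITIALIZATION, []),
    Recipe("ramp_down", RecipeKind.CLEANUP, []),
])
```

Because a recipe carries its kind rather than its position in the master recipe, the same alarming or cleanup recipe can be dropped into any master recipe without reordering the others.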

As an example, a fermentation might execute a master recipe in which an initialization recipe retrieves researcher-specific configuration information from a network drive and parses it for use by later recipes or steps. A “main” recipe contains a step to initialize a control function based on set points retrieved from the local controller. A second, parallel main-type recipe executes at 1 Hz to set nutrient feed rates in response to bioreactor probe data. A third main recipe executing in parallel adjusts the agitation rate in the fermentor based on off-gas composition. At the completion of the fermentation, a “cleanup” recipe might ramp down the broth temperature set point prior to harvest.

A valuable facet of this structure is that the control algorithm functions are written as separate files in an interpreted language that supports off-line testing. One may build complex models and test harnesses in silico to develop control code that is then directly copied to the supervisory control system. There is no need to translate the control code to an industrial control language, and there is thus no requirement for retesting. A second significant benefit is the ability to introduce code break points in live, executing code. In a process development pilot plant, every fermentation is an experiment, and in some cases, there is a need to modify control logic while a fermentation is in process. This type of live development and debugging is best implemented by using a complete integrated development environment (in this case, MATLAB Editor) to execute the actual control system code.

Control code and recipes reference human-readable aliases rather than equipment-specific, fully resolved OPC tags. Each ECALS server has its own unique “alias map” text file that maps an alias such as “Dissolved_Oxygen” to an appropriate tag in the control system. The control system tags are equipment-based addresses such as “V7201_AIR/DO2_PV.CV.” Because all ECALS recipe and configuration information is based on these generic aliases, all control logic is machine independent: as long as the appropriate alias map file exists, any recipe can be reused on any ECALS server. This simplifies deployment of recipes, as the same recipe file is made available to all ECALS servers. Configuring a server for a new fermentor is done by editing only the alias map text file.
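A minimal sketch of alias resolution follows, written in Python for illustration only. The article does not describe the layout of the alias map file, so the `alias = tag` line format, the `#` comment convention, the function names, and the second tag `V7202_AIR/DO2_PV.CV` are all assumptions.

```python
from typing import Dict


def parse_alias_map(text: str) -> Dict[str, str]:
    """Parse 'alias = tag' lines; blank lines and '#' comments are skipped.

    The 'alias = tag' layout is assumed, not taken from the article.
    """
    aliases: Dict[str, str] = {}
    for line_number, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        alias, sep, tag = line.partition("=")
        if not sep or not alias.strip() or not tag.strip():
            raise ValueError(f"line {line_number}: expected 'alias = tag'")
        aliases[alias.strip()] = tag.strip()
    return aliases


def resolve(alias: str, aliases: Dict[str, str]) -> str:
    """Translate a recipe-level alias into this server's OPC tag."""
    try:
        return aliases[alias]
    except KeyError:
        raise KeyError(f"alias {alias!r} is not defined on this server") from None


# The same recipe alias resolves to a different tag on each server.
server_a = parse_alias_map("Dissolved_Oxygen = V7201_AIR/DO2_PV.CV\n")
server_b = parse_alias_map(
    "# hypothetical second fermentor\n"
    "Dissolved_Oxygen = V7202_AIR/DO2_PV.CV\n"
)
```

Failing loudly on an undefined alias is a deliberate choice in this sketch: a recipe that references a signal the fermentor does not have should not start silently.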

The core functionality of ECALS is encapsulated in the MATLAB code for the control engine. This code handles all communication to and from the automation network and has extensive error handling. The microbial pilot plant executes R&D fermentation experiments around the clock, 51 weeks per year, at a rate of 10 to 18 per week. An “always active” controller must automatically recover from the many error conditions that can originate in the automation network so that these experiments are not interrupted. OPC communication issues can arise from a temporary network interruption, the reconfiguration of an OPC tag, or the restart of one of the DeltaV automation servers. Several iterations of system error handling were required to arrive at the final system recovery approach. The desired behavior is that after failing to send or receive data, the software exits from the current scan (executed at 1 Hz) without executing any control functions and tries again at the next scan. It was necessary to confirm in every scan that an active OPC connection exists because a disconnection could be caused by restarting the OPC server. In this scenario, the OPC connection is broken but is repaired by a reconnection attempt. All read and write operations also make one attempt, and if any read or write operation returns an error from the server, the scan is aborted. This try/retry/reconnect/skip approach provides fault tolerance and ensures that the researcher control code does not execute with invalid values. A design consequence of this approach is that researcher control algorithms cannot require precise execution timing, as some execution scans may require a reconnection operation before a set point is written to the controller.
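The reconnect-and-skip behavior of a single scan can be sketched as follows. This is an illustrative Python outline, not the ECALS MATLAB code: the `OpcError` exception, the `FakeOpcClient` stand-in, its method names, and `run_scan` are hypothetical, and no real OPC library is used.

```python
from typing import Callable, Dict, Iterable


class OpcError(Exception):
    """Stand-in for any failure reported by the OPC layer."""


class FakeOpcClient:
    """In-memory stand-in for an OPC client (hypothetical interface)."""

    def __init__(self, values: Dict[str, float]):
        self.values = dict(values)
        self.connected = True
        self.fail_reads = False

    def is_connected(self) -> bool:
        return self.connected

    def connect(self) -> None:
        self.connected = True

    def read(self, tag: str) -> float:
        if self.fail_reads:
            raise OpcError(f"read failed: {tag}")
        return self.values[tag]

    def write(self, tag: str, value: float) -> None:
        self.values[tag] = value


def run_scan(client,
             input_tags: Iterable[str],
             control: Callable[[Dict[str, float]], Dict[str, float]]) -> bool:
    """Run one scan; return True if it completed, False if it was aborted."""
    try:
        if not client.is_connected():
            client.connect()                     # repair a broken connection
        inputs = {tag: client.read(tag) for tag in input_tags}
    except OpcError:
        return False      # skip: control code never sees missing inputs
    outputs = control(inputs)
    try:
        for tag, value in outputs.items():
            client.write(tag, value)
    except OpcError:
        return False      # abort; the next scan will try again
    return True
```

A scheduler would call `run_scan` once per second and simply ignore a `False` result, which is why control algorithms built on such a loop cannot assume evenly spaced executions.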

Given the decision to use the MATLAB software environment for the external control engine, it was necessary to mitigate the risk of using software that was not designed for continuous uptime. There are two systems in place that support this objective. The ECALS engine measures its own memory usage, and the ECALS application will restart itself in the event of runaway memory consumption. ECALS also updates its status counter in a small text file at a rate of 1 Hz. An external system script checks the counter value in this text file every 15 seconds. If it finds that the counter has not been incremented (implying that ECALS has stopped executing), it will force the ECALS engine to terminate and relaunch. This failsafe is intended to catch any unforeseen bugs or failures that might slip through the other internal error handlers. The ECALS software routinely saves its current state (including calculations) to the hard drive. When testing the software, it was confirmed that upon restart it reloads itself and resumes experiment control within 4 min. A 4-min supervisory control interruption is not desirable, but it is tolerable for a failure recovery scenario. Local controllers maintain their previous set points during the 4-min interruption, and the process impact of this feature was found to be negligible during testing.
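The external heartbeat check can be outlined as below. The production script is written in PowerShell; this Python version is only an illustrative sketch, and the function names, the plain-integer file format, and the choice to treat an unreadable status file as a stalled engine are assumptions.

```python
from pathlib import Path
from typing import Callable, Optional


def read_counter(status_file: Path) -> Optional[int]:
    """Return the counter stored in the status file, or None if unreadable."""
    try:
        return int(status_file.read_text().strip())
    except (OSError, ValueError):
        return None


def check_heartbeat(status_file: Path,
                    last_seen: Optional[int],
                    restart: Callable[[], None]) -> Optional[int]:
    """Restart the engine if the counter has not changed since the last check.

    Returns the counter value to remember for the next check.
    An unreadable file is treated like a stalled counter (an assumption).
    """
    current = read_counter(status_file)
    if current is None or current == last_seen:
        restart()         # terminate and relaunch the engine
    return current
```

A caller would invoke `check_heartbeat` every 15 seconds, passing back the value returned by the previous call. Because the engine increments the counter at 1 Hz, an unchanged value after 15 seconds means roughly 15 missed updates, which leaves a comfortable margin against a single slow scan.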

An additional feature that is valuable from an operations monitoring perspective is the integration of several diagnostic tags that provide real-time feedback and historical perspective on the performance of the ECALS server system. This diagnostic information is the result of several calculations that are executed at 1 Hz whether or not a fermentation is in process. The diagnostic tags are logged to the pilot plant’s enterprise historian server. For example, each copy of ECALS writes a value to the server indicating the number of floating-point days since the ECALS engine was last restarted. This was designed to allow tracking of overall system robustness but also allowed the implementation of an alarm in the automation system based on the rate of change of uptime. An audible alarm or beacon may be triggered based on a frozen uptime tag just as it may be triggered by any process excursion, allowing the system to leverage standard automation practices for responding to alarms. A second set of reported values tracks the overall performance of the server (memory usage, CPU load); these were implemented to mitigate the stability risk of using desktop software in a server role. Finally, the system reports the duration in milliseconds that ECALS must wait to retrieve the value of its status flag via OPC from the automation network. This value has proven to contain interesting information about the overall load on the automation network (network responsiveness varies in a diurnal pattern) and also permits alarming on a loss or degradation of communication.

The ECALS system was designed to be invisible to the technicians operating in the microbial pilot plant. As such, the only system that technicians interact with is the DeltaV controller interface. To execute a researcher-specified master recipe, the technician selects the required recipe name from a list displayed on the DeltaV HMI and then clicks a button to toggle ECALS to an active state. These two pieces of information (master recipe name and an activity toggle) are encoded into two OPC tags that are read by ECALS every second. When ECALS detects that the activity tag has changed to TRUE, it reads the recipe name from the second tag, loads the recipe, and executes the appropriate researcher code. By using the OPC standard to communicate with and control ECALS, we avoided the need for embedded MATLAB control graphics in the DeltaV graphics interface. Most importantly, it made ECALS general purpose: it can be used with any OPC-compatible automation network. As a result of this broad compatibility, the researcher control code would not need to be rewritten in the event of nearly any change to the microbial pilot plant automation system. The OPC communication standard is also widely accepted by the process control industry.8,9 Use of this standard communication protocol allows ECALS to be eminently transportable to another site, regardless of the destination automation platform.
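The two-tag handshake amounts to edge detection on the activity tag. The Python sketch below illustrates the idea with hypothetical names. It also assumes that the activity tag returning to FALSE acts as the termination signal, which the text does not state explicitly.

```python
from typing import Callable


class RecipeTrigger:
    """Detects changes of the activity tag between successive scans."""

    def __init__(self,
                 load_recipe: Callable[[str], None],
                 stop_recipe: Callable[[], None]):
        self._load = load_recipe
        self._stop = stop_recipe
        self._was_active = False

    def on_scan(self, active: bool, recipe_name: str) -> None:
        """Call once per scan with the current values of the two tags."""
        if active and not self._was_active:
            self._load(recipe_name)   # tag changed to TRUE: load and start
        elif self._was_active and not active:
            self._stop()              # tag changed to FALSE (assumed stop signal)
        self._was_active = active
```

Acting only on a change of the tag, rather than on its level, keeps the recipe from being reloaded on every one-second scan while the toggle stays TRUE.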

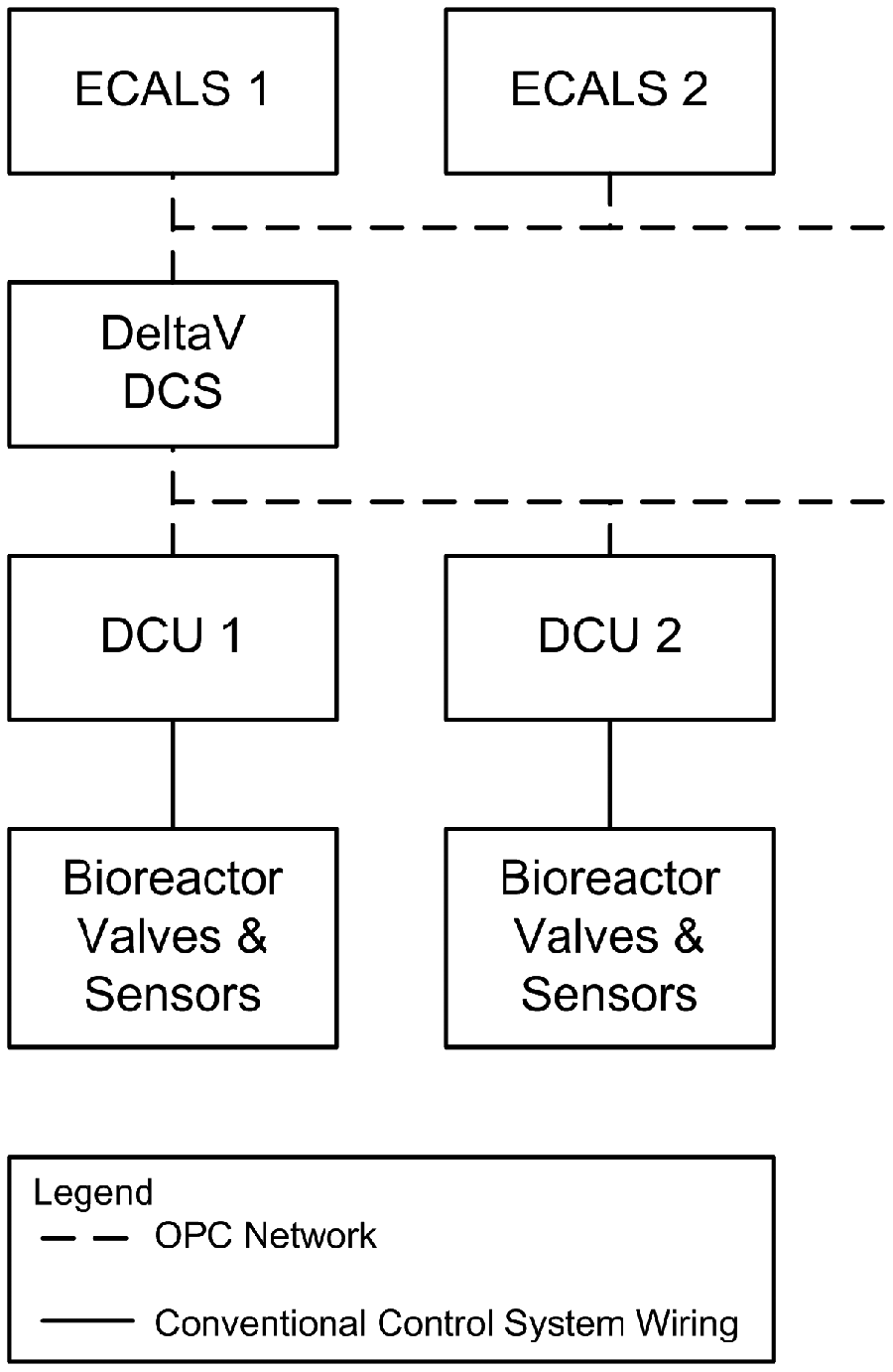

Each fermentor is associated with a single ECALS server, and each server runs on a separate virtual machine instance (VMWare Server 2008) in a redundant, distributed server farm (Fig. 2). This one-server-per-reactor design decision was made to mitigate two risks. The first was the undefined risk of software instability such as memory leaks and crashes. ECALS is based on the deployment of workstation software (MATLAB), rather than real-time control software that is intended for continuous uptime.10 A software failure would compromise any experiments controlled by that server. The second risk was that if multiple fermentors were controlled by a single application, it would be necessary to develop a robust method for sharing computational resources. If a given prototype control calculation demanded more resources than were available, it could not be allowed to interfere with experiments being conducted by another researcher in another fermentor. Both of these risks were mitigated by the design decision to have separate servers and installations of the software for each fermentor. This decision greatly simplified the software design but increased the support overhead and required the inclusion of additional features for easy deployment and maintenance.

Figure 2. System components. Each bioreactor has a digital control unit (DCU) that communicates with the central DeltaV distributed control system (DCS). There is a separate ECALS instance for each controlled bioreactor, and control signals are routed via the DCS network.

Software deployment and synchronization are handled with a scheduled script that executes hourly on each ECALS control server. This script triggers code synchronization to a version control server. The code to execute ECALS, the Genentech-specific control algorithm functions, and the configured recipes are all stored on a server running the version control software Subversion.11 Version control software is commonly used in the software development industry to synchronize developers’ efforts and to track changes. In such a system, every incremental change to a file is individually tracked and auditable so that any revision may be compared with any other and any change may be reverted. We have configured each ECALS server as a Subversion client computer with a local working copy of the master code. New control code is saved to the version control master server. On each ECALS server, a script is executed once per hour that checks whether an experiment is in progress. If no experiment is in progress, the ECALS server executes a standard Subversion command to synchronize its files with the master copy. This method ensures that all changes to control algorithm code are downloaded and made available automatically for the next experiment. A further benefit of this approach is that all control development work must be routed through version control software before it can be executed, ensuring a complete audit log. Only a single OPC tag list configuration file on each ECALS server is outside of the version control system, which is necessary because each server must look at a different set of equipment-based tags for its inputs and outputs.
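The hourly synchronization logic reduces to a guard followed by a working-copy update. The sketch below uses Python for illustration (the production script is a PowerShell script). The text says only that "a standard Subversion command" is used, so the choice of `svn update`, the function name, and the injected `experiment_active` check are assumptions.

```python
import subprocess
from typing import Callable, Sequence


def sync_if_idle(experiment_active: Callable[[], bool],
                 working_copy: str,
                 run: Callable[[Sequence[str]], int] = subprocess.call) -> bool:
    """Update the local working copy unless an experiment is in progress.

    Returns True if an update ran and exited with status 0, else False.
    'svn update' is an assumed choice of Subversion command.
    """
    if experiment_active():
        return False                  # never change code mid-experiment
    exit_code = run(["svn", "update", "--non-interactive", working_copy])
    return exit_code == 0
```

Passing the command runner as a parameter lets the guard logic be exercised without a Subversion installation, which is useful when testing the script away from the pilot plant network.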

Results and Discussion

Genentech has installed ECALS on 15 virtual machines, and the software successfully performs supervisory control on pilot plant fermentors ranging from 10 L to 1000 L scale. More than 700 fermentations have been successfully executed under control of ECALS since it was deployed in the spring of 2010.

The error handling built in to the ECALS code has proven capable of handling most network outages and interruptions experienced. Over 500 days, the network of 15 fermentors has averaged 0.08 unattended restarts per fermentor per day. One surprising issue early in development was the observation of daily script-based restarts of ECALS. We discovered that the fail-safe PowerShell script routinely detected that ECALS had not updated its status file and was thus forcing the MATLAB application to terminate and relaunch. Examination of the historian logs revealed that this was happening regularly at 8:00 p.m. and 8:30 a.m., which corresponded to the execution of corporate backup software and antivirus scans. It is worthwhile to point out that servers intended to run “desktop-grade” software may require more installed memory than standard desktop personal computers in order to run without experiencing slowdowns during maintenance operations. This also exposed the unintended consequence of implementing virtual offsite servers, which are subject to corporate information technology standards for application loading. Although the occasional sluggish behavior brought on by a virus scan is tolerable for a standard user, the same temporary performance limitation can severely compromise a control server. After the memory allocation of these virtual machines was upgraded from 512 MB to 2 GB, these restarts were eliminated. Prior to this upgrade, the supervisory Windows script always successfully terminated and relaunched ECALS, which resulted in an unintended but illustrative daily test of the fail-safe system.

It is worth noting that when developing an application intended for continuous uptime, there is great utility in recording software status values to a data historian or using verbose logging of status variables to disk. By overlaying reported “software uptime” values, we discovered that the ECALS servers were all restarting at the same time every day. Trending the available memory on each server allowed us to trace the system freezes to the fact that the system backup was exhausting the system resources. By trending the number of milliseconds it took to retrieve the “start/stop” signal, it was seen that diurnal loading of the automation network resulted in shifts in the responsiveness of the network. Such a metric may be more generally useful for monitoring the health of Ethernet communication networks. By trending the memory utilization over time, it was seen that a small memory leak occurs within the ECALS application, resulting in the loss of 0.2 MB per hour of uptime. A check was implemented within the ECALS code to trigger a safe restart in the event that the total memory usage reaches a predetermined threshold. This memory check has made this leak a practical nonissue.
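A leak of 0.2 MB per hour corresponds to 0.2 × 24 = 4.8 MB per day, so a threshold check only needs to fire rarely. The Python sketch below illustrates such a check. The function names are hypothetical, and the 600 MB and 1000 MB figures are made-up illustration values, since the article does not report the actual threshold.

```python
LEAK_MB_PER_HOUR = 0.2   # leak rate reported from trended memory utilization


def should_restart(memory_mb: float, threshold_mb: float) -> bool:
    """True once total memory usage has reached the restart threshold."""
    return memory_mb >= threshold_mb


def hours_until_threshold(memory_mb: float,
                          threshold_mb: float,
                          leak_mb_per_hour: float = LEAK_MB_PER_HOUR) -> float:
    """Estimate the uptime remaining before the threshold is reached."""
    if leak_mb_per_hour <= 0:
        raise ValueError("leak rate must be positive")
    return max(0.0, (threshold_mb - memory_mb) / leak_mb_per_hour)


# Hypothetical numbers: 600 MB in use now, restart threshold at 1000 MB.
remaining_hours = hours_until_threshold(600.0, 1000.0)
remaining_days = remaining_hours / 24.0
```

With these illustrative numbers the threshold is roughly 2000 hours (about 83 days) away, so a safe restart can be taken well in advance and at a convenient point rather than as a failure.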

The concept of automatic recovery was designed into ECALS. When experiment conditions change, each control algorithm can ensure that its current state is saved to disk, allowing critical algorithm state changes to be stored immediately in preparation for an unexpected restart. As a result, supervisory control algorithms are able to recover from any system failures encountered. This applies for both systematic errors as discussed above and coding errors in the control algorithms themselves (not uncommon in periods of rapid bioprocess development and iteration).

The most significant benefit of the ECALS system is the improvement in researcher productivity. Because the same robust, matrix-oriented mathematical platform is used to both develop and implement control strategies, a control algorithm that took 6 months to write and debug as a set of distributed control system (DCS) sequential function charts and function blocks was later implemented and tested in the MATLAB environment in 2 weeks. This time savings was realized because the control researcher could directly implement the algorithm using well-suited logic structures. In the case in which the algorithm was implemented using a commercial DCS, there was an additional need to work closely with an automation engineer to refactor the approach for a sequential function chart programming environment. Developing control code in a mathematical prototyping environment such as MATLAB has the additional benefit that it is much easier for researchers to integrate process models, written in the same language, both for model predictive control and algorithm verification.

Conclusion

We have found that using a full-featured desktop application for both control algorithm development and deployment provided a significant benefit in researcher productivity. In one case, a complex preexisting control algorithm was implemented in native DeltaV modules in an effort that took 6 months of work for two process researchers and an automation engineer. The implementation of the same preexisting algorithm for ECALS took one researcher only 2 weeks. Through care and focus during the design phase, it was possible to anticipate and mitigate many of the shortfalls associated with using desktop software in a continuous-uptime automation layer role, and these mitigation approaches are documented here. An external operating system script performs a heartbeat check on the application, which provides insurance that in the case of an unexpected application crash, the MATLAB process will be terminated and automatically restarted with little to no impact on process control. By developing a powerful, flexible, robust platform for supervisory control, we have enabled our microbial pilot plant to move to a modern automation control system without sacrificing the idea-to-implementation speed that is essential to process development.

Footnotes

Acknowledgements

We are grateful to the Genentech Pilot Plant for the execution of fermentations.

Declaration of Conflicting Interests

The authors are/were employees of Genentech or MathWorks, and the contribution was created while financially supported by their respective companies. Three coauthors are employees of MathWorks, the makers of some of the software that appears in the article.

Funding

The authors are/were employees of Genentech or MathWorks, and the contribution was created while financially supported by their respective companies. Three coauthors are employees of MathWorks, the makers of some of the software that appears in the article.