Abstract

Study Design

Survey-based study.

Objective

To identify how to effectively tailor spine care information to different educational backgrounds, beyond general readability.

Methods

An internet-based survey (Connect™, CloudResearch) recruited 600 U.S. adults evenly distributed across age brackets (18-80).

Results

Of the 600 participants (mean age 45 ± 15.7, 50.8% female), the most frequently selected reading levels were postgraduate (50.6%) and 8th-12th grade (22.1%). Bachelor’s degree holders significantly favored postgraduate-level content (55.3%), while postgraduate respondents predominantly preferred 8th-12th grade or simpler (56.2%). Participants with less than a bachelor’s degree similarly preferred 8th-12th grade or lower (53.5%). Standardized residual analysis revealed that bachelor’s participants selected postgraduate content more frequently than expected, whereas postgraduate and below-bachelor participants selected it less frequently than expected.

Conclusions

While postgraduate-level responses were the most frequently selected overall, preferences varied substantially by education level. Both postgraduate and below-bachelor participants tended to favor simpler content, while bachelor’s-level respondents consistently preferred more complex language. These findings underscore that simplifying medical information may improve accessibility across diverse educational backgrounds.

Introduction

Health literacy is a critical skill for navigating the healthcare system effectively. However, a recent study found that approximately one-third (33%) of patients in a multi-surgeon spine practice demonstrated limited health literacy, highlighting a significant barrier to informed decision-making and care outcomes.1 It is well established that patients presenting with spine pathology who have lower levels of health literacy experience worse patient-reported outcomes postoperatively than those with higher health literacy levels.

ChatGPT is an artificial intelligence chatbot based on large language models. Given a specific input, it can generate a coherent and appropriate answer using machine learning algorithms. Recently, patients have turned to ChatGPT as a source to address questions regarding diverse spine pathology and treatment recommendations. In prior studies, the answers generated were consistently written at a collegiate reading level.6,7 However, users can request that generated responses be presented at the reading level most suitable for each individual. The ability of ChatGPT to simplify complex medical information has been previously studied in otolaryngology. 8 However, the optimal reading level for patient education materials in spine care has yet to be clearly established.

To our knowledge, this is the first study to stratify patients by educational attainment and systematically assess their readability preferences for medical information. By linking preference to education level, we aim to provide more granular insights into how patient education materials can be better tailored. Specifically, this study evaluates preferred reading levels for spine surgery educational materials based on an individual’s level of formal education. By requesting that ChatGPT generate standardized answer choices at varying reading levels, we seek to explore how health literacy preferences align with educational background. This approach may improve tailored, patient-centered methods of delivering spine care information, potentially reducing barriers to equitable healthcare access. We hypothesize that while preferred reading levels will vary by education level, a general preference for simpler, more accessible language will emerge, which would be consistent with the American Medical Association’s emphasis on “clear” patient communication.

Methods

Data Collection and Study Design

This is a survey-based study in which individuals from the general American population completed surveys administered through the CloudResearch platform. Data collection was conducted in January 2025 using a combination of the Connect and Prime Panels platforms by CloudResearch. CloudResearch’s Connect platform is known for its two-way reputation system, which allows participants to rate the projects and the researchers who post them. This rating system encourages good behavior from both parties, thus enhancing the reliability of the data. Researchers are also able to set quotas for age, sex, race, and other demographics to match the US census, thereby improving capture of a representative sample. Participants are recruited by Connect via partnerships with established online panels that have large pools of pre-registered participants and via targeted recruitment (eg, social media). The CloudResearch team continuously monitors data quality and handles feedback to address poor-quality survey responses. They implement technical safeguards to ensure each participant uses only one account and does not submit multiple responses from the same IP address, while also verifying that participants are based in the U.S. 9 To ensure data quality, an attention check was included to detect and remove low-quality responses, defined as a failure to respond appropriately to the attention check question. The attention check asked participants to select the third answer choice, which contained the phrase “For this question, please select this answer choice to indicate you are paying attention”. Following survey completion and data approval from the authors, participants received a small financial incentive.

We included participants aged 18-79 across evenly distributed 10-year age brackets, with efforts to match U.S. Census demographics for age, sex, and race. This approach ensured a sample closely reflecting the national population. 10

A webpage on the Clinical Guidelines for Diagnosis and Treatment of Lumbar Disc Herniation with Radiculopathy through the North American Spine Society (NASS) (https://www.spine.org/Portals/0/assets/downloads/researchclinicalcare/guidelines/lumbardischerniation.pdf) was accessed in November 2024. Guideline information from this page was input into ChatGPT (OpenAI, San Francisco, CA; version 4.0) to generate answer choices for six different clinical scenarios (Appendix A). For each question, five answer choices were generated at different reading levels (Pre-Kindergarten-3rd grade, 4th-7th grade, 8th-12th grade, undergraduate, and postgraduate), based on prior work demonstrating that large language models can effectively produce content across a comparable range of Lexile scores (300L-1200L+), spanning early elementary to college-level readability.11

Demographic details were also collected in this study and included age, sex, race, highest level of education completed, and occupation. The survey had to be completed in full to be included in data analysis.

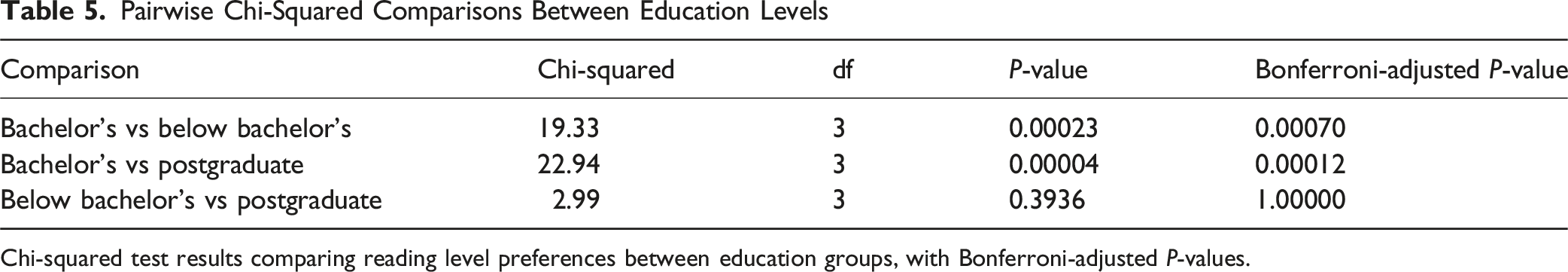

Response preferences were analyzed using a chi-squared test of independence to compare the distribution of selected reading levels across education groups. Post hoc pairwise chi-squared comparisons with Bonferroni correction were performed to assess between-group differences. Standardized residuals were also calculated to identify which specific education-reading level combinations deviated most from expected values under the null hypothesis.
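The analysis described above can be illustrated with a minimal sketch in Python. It is not the authors' analysis code. The counts are hypothetical placeholders rather than study data, the function and variable names are invented for illustration, and the residuals are assumed to be Pearson residuals, (observed - expected) / sqrt(expected), because the text does not state which standardization was used. The P value uses a closed form that holds only for even degrees of freedom, which covers both the 3 x 5 table (8 degrees of freedom) and each 2 x 5 pairwise table (4 degrees of freedom).

```python
from itertools import combinations
from math import exp, factorial, sqrt


def chi_squared_independence(table):
    """Return (statistic, dof, expected, residuals) for a contingency table.

    table: one row per education group, one column per reading level.
    Residuals are Pearson residuals (an assumption, see lead-in).
    Every column total must be nonzero, otherwise an expected count is 0.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    expected = [[r * c / n for c in col_totals] for r in row_totals]
    residuals = [
        [(o - e) / sqrt(e) for o, e in zip(obs_row, exp_row)]
        for obs_row, exp_row in zip(table, expected)
    ]
    # The chi-squared statistic is the sum of squared Pearson residuals.
    statistic = sum(res ** 2 for row in residuals for res in row)
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return statistic, dof, expected, residuals


def chi2_sf_even_dof(x, dof):
    """Upper-tail chi-squared probability; closed form valid for even dof only."""
    if dof <= 0 or dof % 2:
        raise ValueError("closed form used here needs a positive even dof")
    half = x / 2
    return exp(-half) * sum(half ** i / factorial(i) for i in range(dof // 2))


def pairwise_bonferroni(table, labels, alpha=0.05):
    """Pairwise chi-squared tests between rows with Bonferroni-adjusted P values."""
    pairs = list(combinations(range(len(table)), 2))
    results = {}
    for i, j in pairs:
        stat, dof, _, _ = chi_squared_independence([table[i], table[j]])
        # Bonferroni: multiply each raw P value by the number of comparisons.
        p_adj = min(1.0, chi2_sf_even_dof(stat, dof) * len(pairs))
        results[(labels[i], labels[j])] = (stat, p_adj, p_adj < alpha)
    return results


# HYPOTHETICAL counts, not study data. Columns: PreK-3rd, 4th-7th,
# 8th-12th, undergraduate, postgraduate.
group_labels = ["below bachelor's", "bachelor's", "postgraduate"]
hypothetical_counts = [
    [30, 120, 270, 120, 460],
    [20, 100, 240, 130, 610],
    [15, 60, 130, 60, 200],
]

stat, dof, expected, residuals = chi_squared_independence(hypothetical_counts)
print(f"overall: chi2={stat:.2f}, dof={dof}, p={chi2_sf_even_dof(stat, dof):.4g}")
for label, row in zip(group_labels, residuals):
    print(label, [round(r, 2) for r in row])
for pair, (s, p_adj, sig) in pairwise_bonferroni(hypothetical_counts, group_labels).items():
    print(pair, f"chi2={s:.2f}", f"adjusted p={p_adj:.4g}", "significant" if sig else "n.s.")
```

A positive residual marks an education group that chose a reading level more often than independence would predict, and a negative residual marks one that chose it less often. In practice a statistics library would typically replace the hand-written P value.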

Results

Participant Demographics and Sample Size

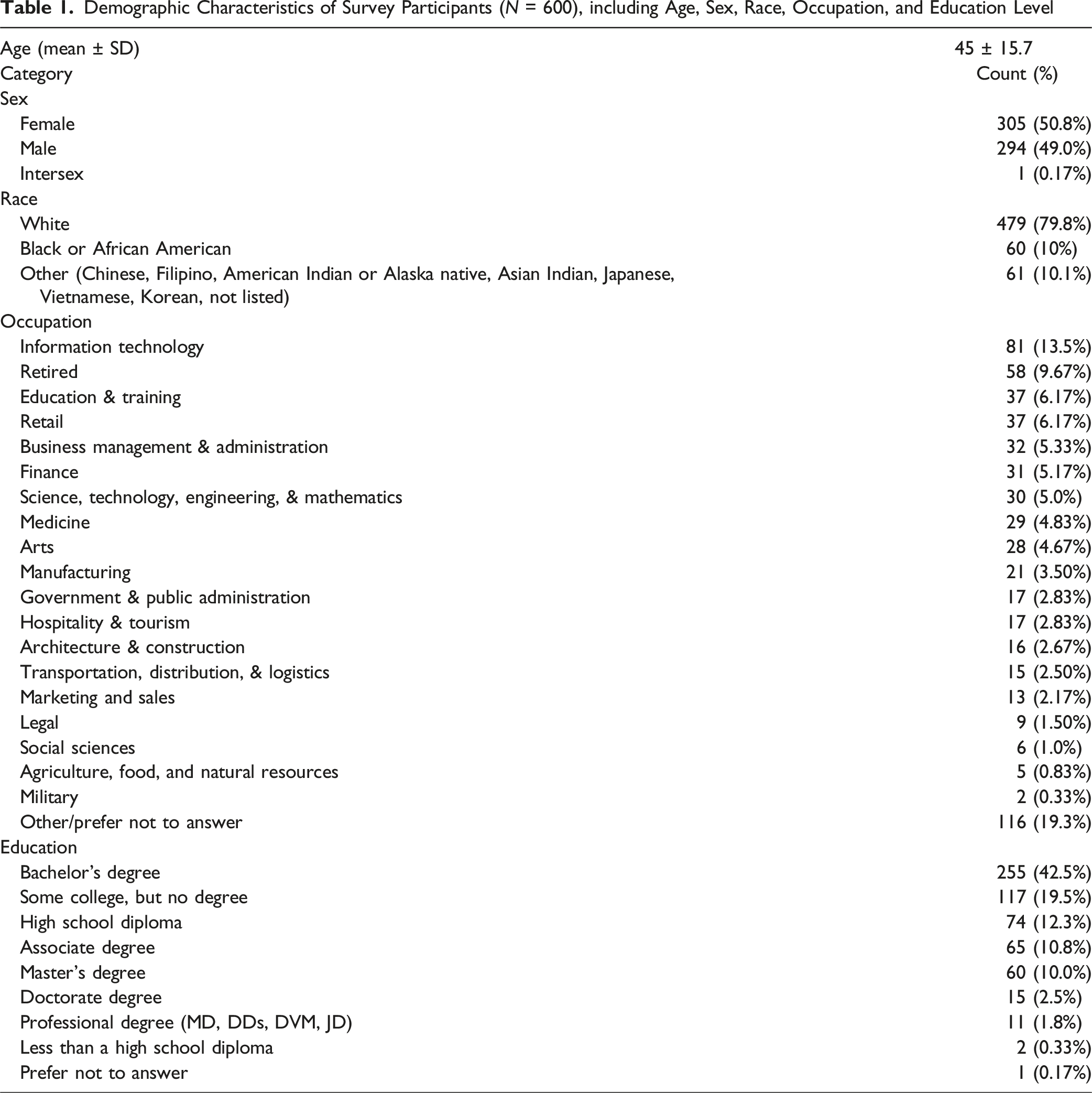

Demographic Characteristics of Survey Participants (

ChatGPT’s Readability Scores at Different Levels

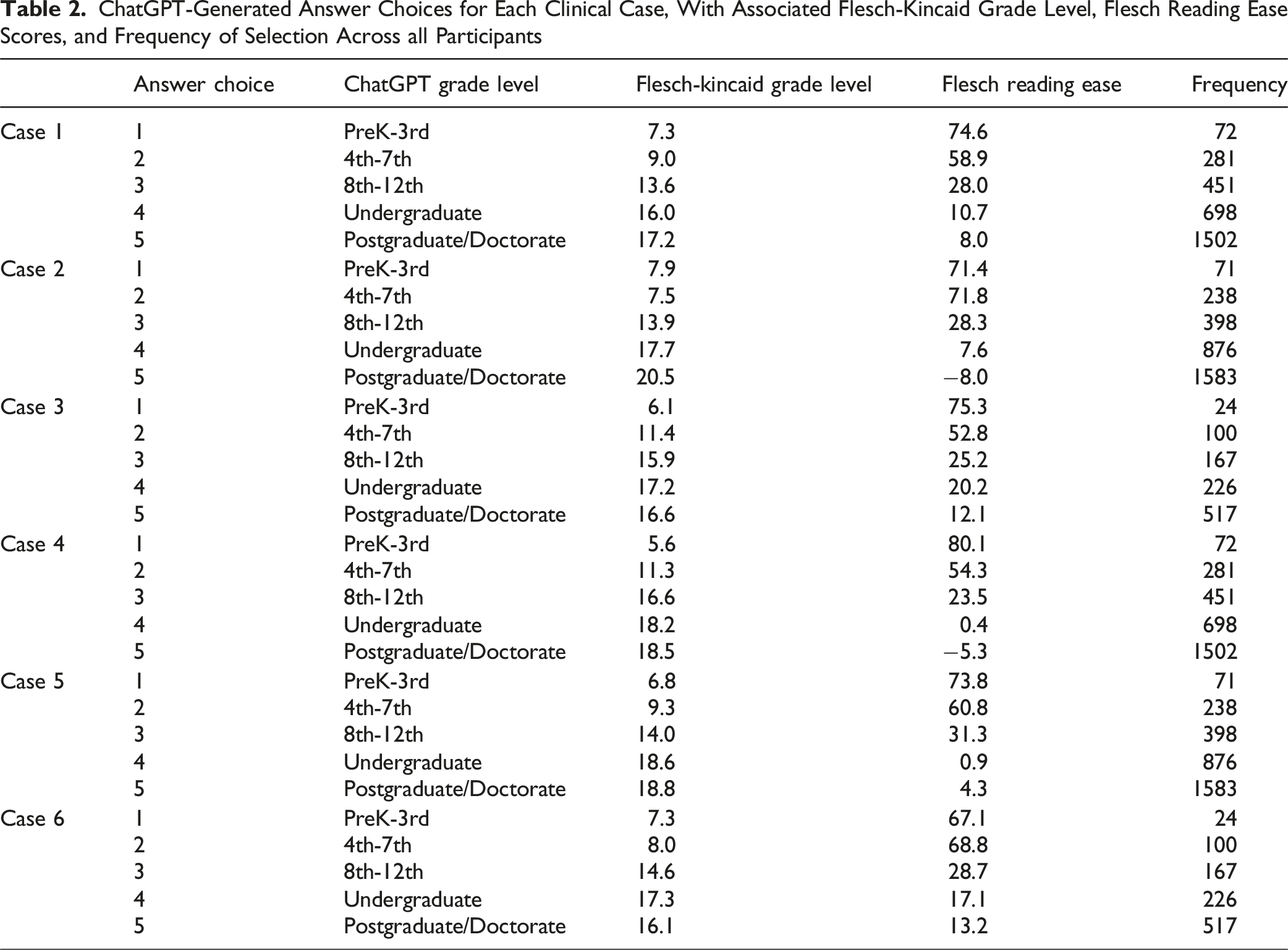

ChatGPT-Generated Answer Choices for Each Clinical Case, With Associated Flesch-Kincaid Grade Level, Flesch Reading Ease Scores, and Frequency of Selection Across all Participants

Preferred Reading Levels Among Respondents

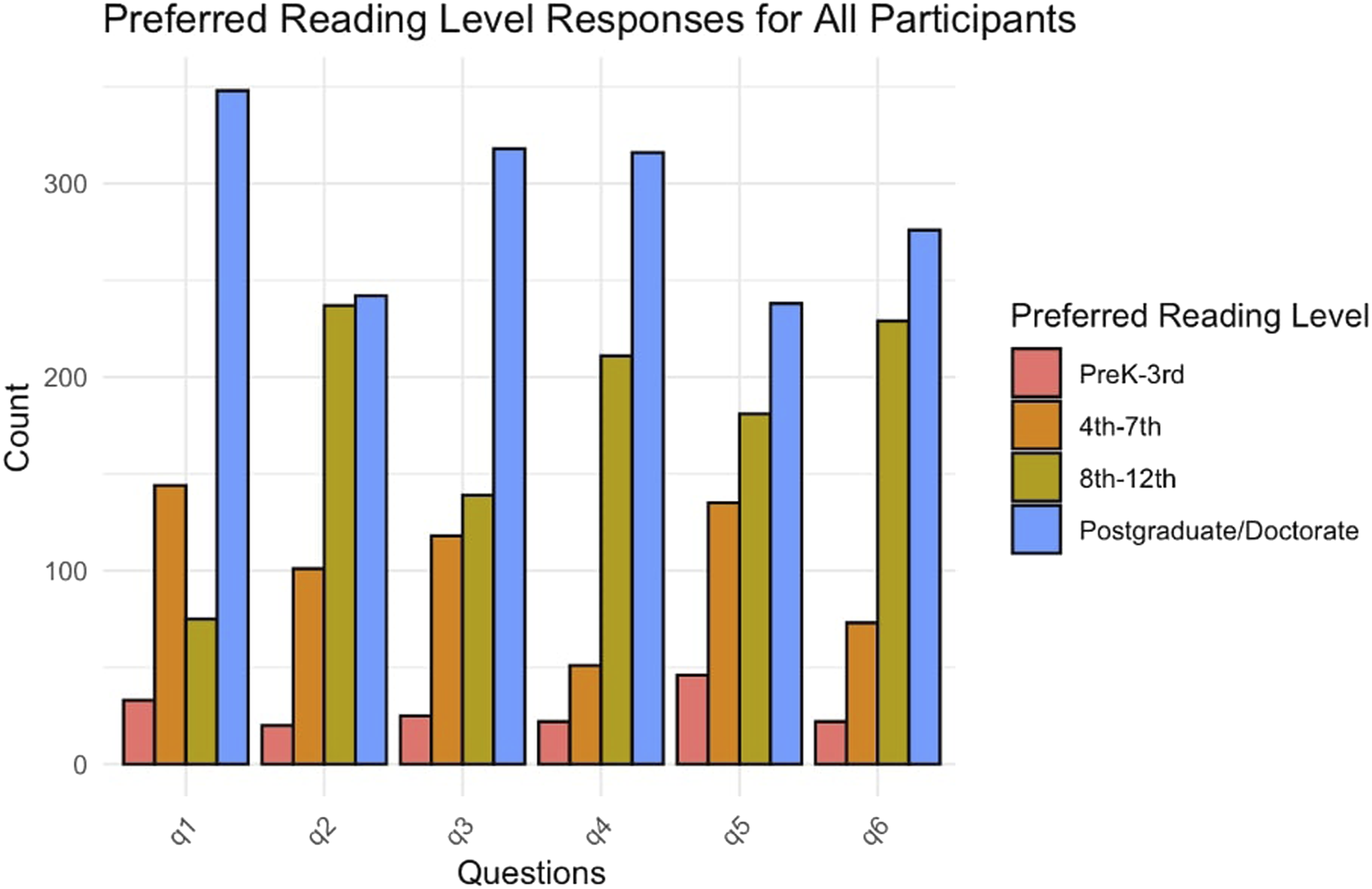

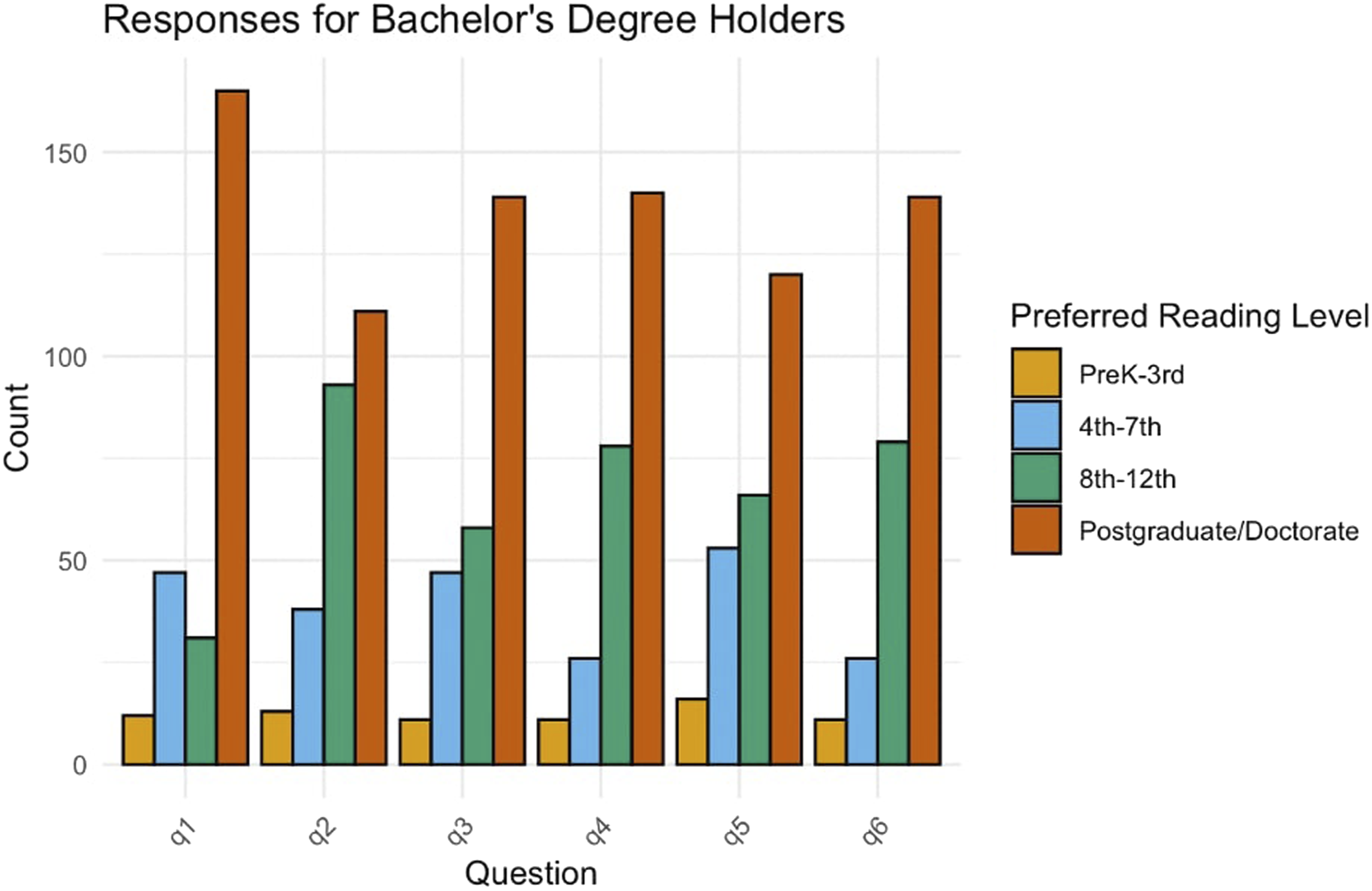

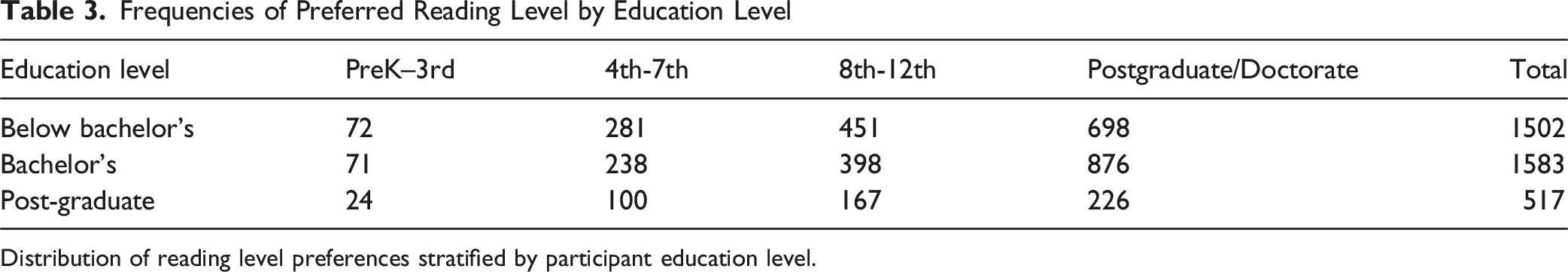

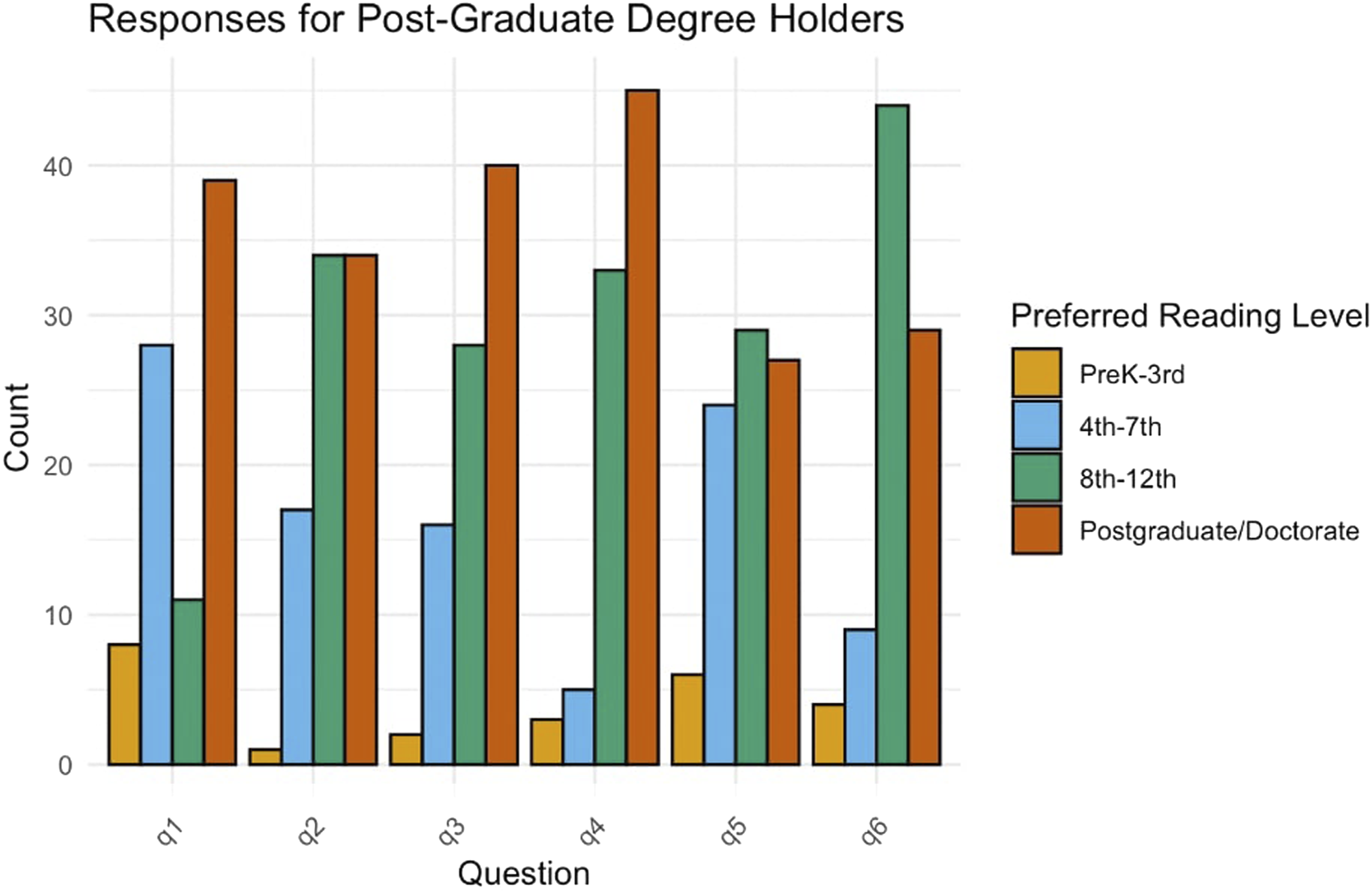

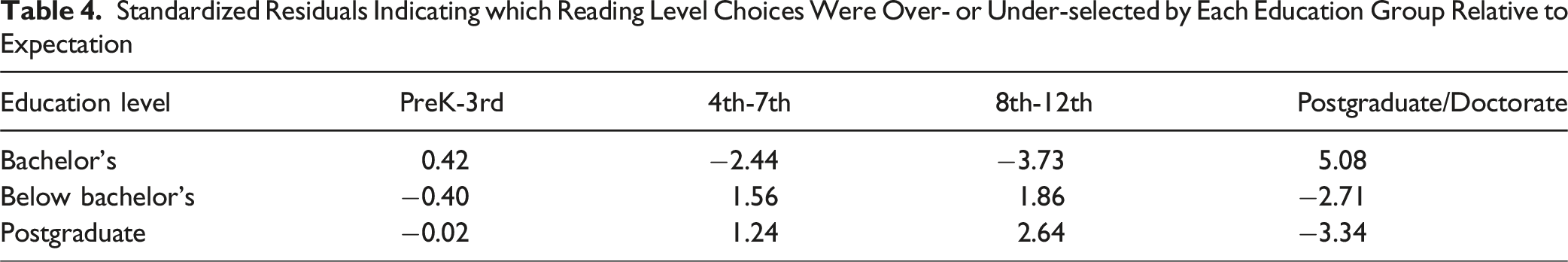

Across all respondents, the most commonly selected answer choices were at the Postgraduate/Doctorate (50.6%) and 8th-12th grade (22.1%) reading levels as generated by ChatGPT (Figure 1; Table 2). When stratified by education level, bachelor’s degree holders demonstrated the strongest preference for postgraduate-level responses (55.3%) (Figure 2; Table 3). Participants with less than a bachelor’s degree also frequently selected postgraduate-level content (46.5%) (Table 3). However, respondents with postgraduate degrees showed a different trend: they chose postgraduate-level content only 43.7% of the time and more often favored simpler alternatives. In fact, 57.1% of postgraduate-educated participants preferred responses written at or below the 8th-12th grade level, as did 53.5% of those with less than a bachelor’s degree (Figure 3; Table 3).

Preferred Reading Level Responses for all Participants. Bar Plot Illustrating the Distribution of Preferred Reading Levels Across Six Spine-Related Clinical Scenarios (q1-q6). While “Postgraduate/Doctorate” Responses Were Most Frequently Selected Overall, a Consistent Portion of Participants Preferred Simpler Reading Levels, Particularly “8th-12th” and “4th-7th” Grade Options, Across all Questions.

Preferred Reading Level Responses for Participants With a Bachelor’s Degree. Bar Chart Depicting the Distribution of Preferred Reading Levels Across Six Clinical Scenarios Among Participants With a Bachelor’s Degree. The Majority Consistently Favored Responses at the “Postgraduate/Doctorate” Level, With Notably Lower Selection of “PreK-3rd” and “4th-7th” Reading Levels.

Frequencies of Preferred Reading Level by Education Level. Distribution of reading level preferences stratified by participant education level.

Preferred Reading Level Responses for Participants With a Postgraduate Degree. Among Individuals With Postgraduate Degrees, Including Those With Master’s, Doctoral (PhD, EdD), or Professional (MD, DDS, DVM, JD) Credentials, Preferences for Simpler Reading Levels Remained Prevalent Across all Clinical Scenarios. While “Postgraduate/Doctorate” Responses Were Frequently Chosen, a Substantial Proportion of Participants Selected “8th-12th” or Even “4th-7th” Grade Options, Particularly for Cases 1, 3, and 6.

Standardized Residuals Indicating which Reading Level Choices Were Over- or Under-selected by Each Education Group Relative to Expectation

Discussion

Several studies have highlighted the persistent gap between the intended readability of medical content and its actual accessibility for diverse patient populations.15-17 However, few have explored how patients across educational backgrounds prefer to engage with content at different reading levels. Lumbar MRIs obtained to assess for pathology contributing to low back and leg symptoms have been shown to be puzzling and alarming to patients. This concern leads to a variety of internet searches for more information regarding these combinations of symptoms and MRI terminology. 18 Our findings address this gap, showing that a preference for simpler language may transcend educational attainment, which suggests that accessibility, rather than complexity, drives engagement.

Similar to previous work, we found that ChatGPT often generates content above the intended reading level.15-17,19 In our study, responses prompted at the “PreK-3rd grade” level averaged a Flesch-Kincaid (FK) score of 7.3, while “8th-12th grade” prompts averaged 13.4—paralleling findings by Hung et al., who reported that ChatGPT content intended for 7th-grade audiences instead yielded FK scores above 10. 16 Likewise, Covington et al 17 found that ChatGPT-generated medication guides averaged an FK grade of 8.6, overshooting recommended levels despite clear prompt directives. Nasra et al 15 reported similar limitations across AI models, emphasizing the need for improved prompt engineering and output validation. However, none of these studies assessed individual preference across education levels, which is where this study offers new insights.

Importantly, we found that participants across all education groups tended to prefer simpler content than what ChatGPT typically produced. Among respondents with postgraduate degrees, only 43.7% selected responses aligned with their own reading level, while the majority opted for content written at the 8th-12th grade level or below. This challenges the assumption that more advanced education equates to a desire for more complex language, and instead points toward a broader, cross-demographic preference for clarity, usability, and cognitive ease. Our findings are reinforced by those of Nasra et al 15 (2024), who reviewed 60 studies evaluating AI-generated patient education materials in academic writing, health care education, and health care practice/research. They found that users consistently expressed greater satisfaction with simplified outputs, regardless of their medical or educational background. In studies where AI-generated content was revised to meet lower reading levels, user engagement and preference ratings improved markedly. The authors emphasized that legibility, not technical depth, was the primary driver of usability. These conclusions align with our data, which show that even highly educated users often favor mid-level readability when given a choice. These findings may reflect the cognitive load patients experience when processing complex health information, especially in high-stress or unfamiliar clinical settings.

Pairwise Chi-Squared Comparisons Between Education Levels

Chi-squared test results comparing reading level preferences between education groups, with Bonferroni-adjusted

Taken together, these findings indicate that aiming for lower reading levels may increase comprehension, usability, and engagement across a diverse audience, regardless of formal education. In situations where tailoring is feasible, AI systems could be designed to offer real-time customization of readability based on user preference. Until such systems are widely implemented, targeting an 8th-12th grade reading level—or lower—may strike the best balance between accessibility and informational depth. Future studies should explore how aligning readability with preference impacts real-world outcomes such as knowledge retention, shared decision-making, and patient satisfaction.

Limitations

As with many survey-based studies, there are limitations to this study. First, the survey was distributed on a platform that allows participants to choose which surveys to participate in. Perhaps, we captured respondents with a special interest in this topic, thereby creating a potential selection bias. Second,

The evaluation of AI and its applicability in this study has many limitations. Many of these are related to the dynamic functionality of ChatGPT. While the program’s dynamism is what allows it to learn, it also hampers reproducibility of results. For example, if ChatGPT is prompted to translate a text multiple times, the output varies slightly with each request. Therefore, only the first translation was utilized for analysis purposes. While there are multiple large language models (LLMs) publicly available, including Claude, Gemini, and LLaMA, we elected to use ChatGPT. ChatGPT has become one of the most recognized and utilized generative AI tools in both consumer and academic settings, making it a relevant choice for studying user preferences and content readability in a real-world context. However, this decision may limit the generalizability of our findings across other LLM platforms, as different models may generate varying outputs even when prompted similarly.10,25 Additionally, ChatGPT often calculates different readability scores each time it is prompted to evaluate a unique text. This limitation might be overcome by teaching the program to calculate scores with a given formula. 8 Finally, our ChatGPT-generated grade-level specific answer choices did not align with Flesch-Kincaid grade level scores. This indicates that our answer choices were above the expected reading level yet followed the correct pattern of increasing reading level difficulty.
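As an illustration of scoring with a fixed formula, the sketch below computes the Flesch-Kincaid Grade Level and Flesch Reading Ease deterministically, so the same text always yields the same score. It is not the tool used in this study. The two formulas are the standard published ones, but the syllable counter is a rough vowel-group heuristic chosen for brevity, the function names are invented for illustration, and the sample sentences are hypothetical, so absolute values may differ from those of dedicated readability software.

```python
import re


def count_syllables(word):
    """Rough heuristic (an assumption): count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def readability(text):
    """Return (Flesch-Kincaid Grade Level, Flesch Reading Ease) for a text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    fk_grade = 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59
    reading_ease = 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
    return fk_grade, reading_ease


# Hypothetical sample texts, not the survey's answer choices.
samples = {
    "simpler": "Your back has soft discs. One disc can push on a nerve. This can hurt your leg.",
    "complex": "Lumbar disc herniation with radiculopathy involves displacement of disc "
               "material that compresses an adjacent nerve root.",
}
for name, text in samples.items():
    grade, ease = readability(text)
    print(f"{name}: FK grade {grade:.1f}, reading ease {ease:.1f}")
```

Because the calculation involves no language model, repeated runs on the same text cannot drift, which addresses the variability noted above.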

Conclusion

While postgraduate-level responses were the most frequently selected overall, preferences varied substantially by education level. Both postgraduate and below-bachelor participants tended to favor simpler content, while only bachelor’s-level respondents consistently preferred more complex language. These findings underscore that simplifying medical information may improve accessibility across diverse educational backgrounds.

Supplemental Material

Supplemental Material for Assessment of Preferences for Delivery of Spine Care Information: A ChatGPT and Survey-Based Study by Patricia Lipson, Kenneth Nguyen, Aiyush Bansal, Erika Castaneda, Jack Sedwick, and Philip K. Louie in Global Spine Journal

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data are available upon request.

Supplemental Material

Supplemental material for this article is available online.