Abstract

Study Design:

Systematic review.

Objectives:

To review the existing literature on prediction models in degenerative spinal surgery.

Methods:

Review of PubMed/Medline and Embase databases was conducted to identify articles between January 1, 2000 and March 1, 2020 that reported prediction model performance for outcomes following elective degenerative spine surgery.

Results:

Thirty-one articles were included. Twenty studies were of thoracolumbar, 5 were of cervical, and 6 included all spine patients. Five studies were externally validated. Prediction models were developed using machine learning (42%) and logistic regression (42%) as well as other techniques. Web-based calculators were included in 45% of published articles. Various outcomes were investigated, including complications, infection, length of stay, discharge disposition, reoperation, readmission, disability score, back pain, leg pain, return to work, and opioid dependence.

Conclusions:

Significant heterogeneity exists in methods used to develop prediction models of postoperative outcomes after degenerative spine surgery. Most studies internally validated their models, but only a few models have been externally validated. Areas under the curve for most models range from 0.6 to 0.9. Techniques for development are becoming increasingly sophisticated with different machine learning tools. With further external validation, these models can be deployed online for patient, physician, and administrative use, and have the potential to optimize outcomes and maximize value in spine surgery.

Introduction

Value-based care has become a manifest focus of American health care policy and is driven by efforts to improve outcomes while reducing costs. Hospital systems and policy makers continue to explore methods to reduce complications, improve patient education, and increase efficiency in perioperative and postoperative settings. Given substantial variability between surgeons in the indications and interventions used for given degenerative spinal pathologies, there is commensurate variability in outcomes. 1-4 Randomized controlled trials (RCTs) remain the gold standard for determining the efficacy of an intervention and a small number have been conducted for the management of degenerative spine pathology. 5-8 However, RCTs of surgical interventions have inherent challenges 9,10 and cannot be performed for every clinical question. Cost and comparative effectiveness studies have emerged as an alternative to identify operations that are more likely to yield high value outcomes. Another burgeoning approach is the development and validation of clinical prediction models.

Predictive analytics in clinical medicine has been enabled by the rapid adoption of electronic medical records, development of national registries and prospective multicenter databases, and increased awareness of machine and statistical learning methods. Clinical prediction models have the potential to provide patient-specific risk profiles and expected outcomes. With these tools, surgeons may be able to give a patient their expected likelihood of success for a given operation, as well as their chance for adverse outcomes and complications. On a hospital-wide and national level, these tools can help identify targets for quality improvement efforts and policy making.

Given the demonstrated variability in degenerative spinal surgery practice and outcomes, the application of more robust prediction models to this field may lead to substantial improvements in patient care. However, the studies of prediction model development for degenerative spinal surgery have been heterogeneous. These articles have focused on postoperative outcomes, length of stay (LOS), discharge disposition, and adverse events. They have also varied in terms of design, sample size, method of validation, and mode of deployment. The goal of this systematic review was to summarize the existing literature on prediction models in degenerative spinal surgery. We categorized the existing degenerative spinal surgery prediction models based on their respective outcomes and design and report the relative strengths and weaknesses of these studies to aid in interpretation and consideration for clinical deployment.

Methods

We performed a search of the English language literature using the PubMed/Medline and Embase databases to identify articles between January 1, 2000 and March 1, 2020 that reported prediction model performance for outcomes following elective degenerative spine surgery.

Search terms included (prediction OR predictive) AND (spine OR spinal OR “spine surgery” OR “laminectomy” OR “interbody fusion” OR “diskectomy” OR “discectomy” OR “spinal fusion”). We further queried the bibliographies of the included studies to identify additional relevant articles.
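The Boolean structure of the search string above can be sketched in code. This is only an illustration of how the two term groups combine; the helper name `build_query` is hypothetical, and, as a simplification, the sketch quotes only multi-word phrases, whereas the original string also quotes some single words.

```python
def build_query(concept_terms, anatomy_terms):
    """Join each term group with OR, then combine the two groups with AND."""
    def group(terms):
        # Quote multi-word phrases so they are treated as exact phrases.
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        return "(" + " OR ".join(quoted) + ")"
    return group(concept_terms) + " AND " + group(anatomy_terms)


# Terms taken from the search strategy described in the text.
concept_terms = ["prediction", "predictive"]
anatomy_terms = [
    "spine", "spinal", "spine surgery", "laminectomy",
    "interbody fusion", "diskectomy", "discectomy", "spinal fusion",
]

query = build_query(concept_terms, anatomy_terms)
print(query)
```

Keeping the term groups as lists makes it easier to report the search reproducibly and to add spelling variants (such as diskectomy and discectomy) without editing a long string by hand.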

Inclusion criteria were English language articles involving adult patients who underwent elective spine surgery for a degenerative spinal pathology. Studies involving tumor, infection, and deformity were excluded, as were nonclinical studies. All studies were required to have a description of a model that could facilitate inputting patient-level data to predict the outcome of interest. Prediction model outcomes could include functional/disability/pain scores or more objective measures such as LOS, reoperation, readmission, and complications.

Results

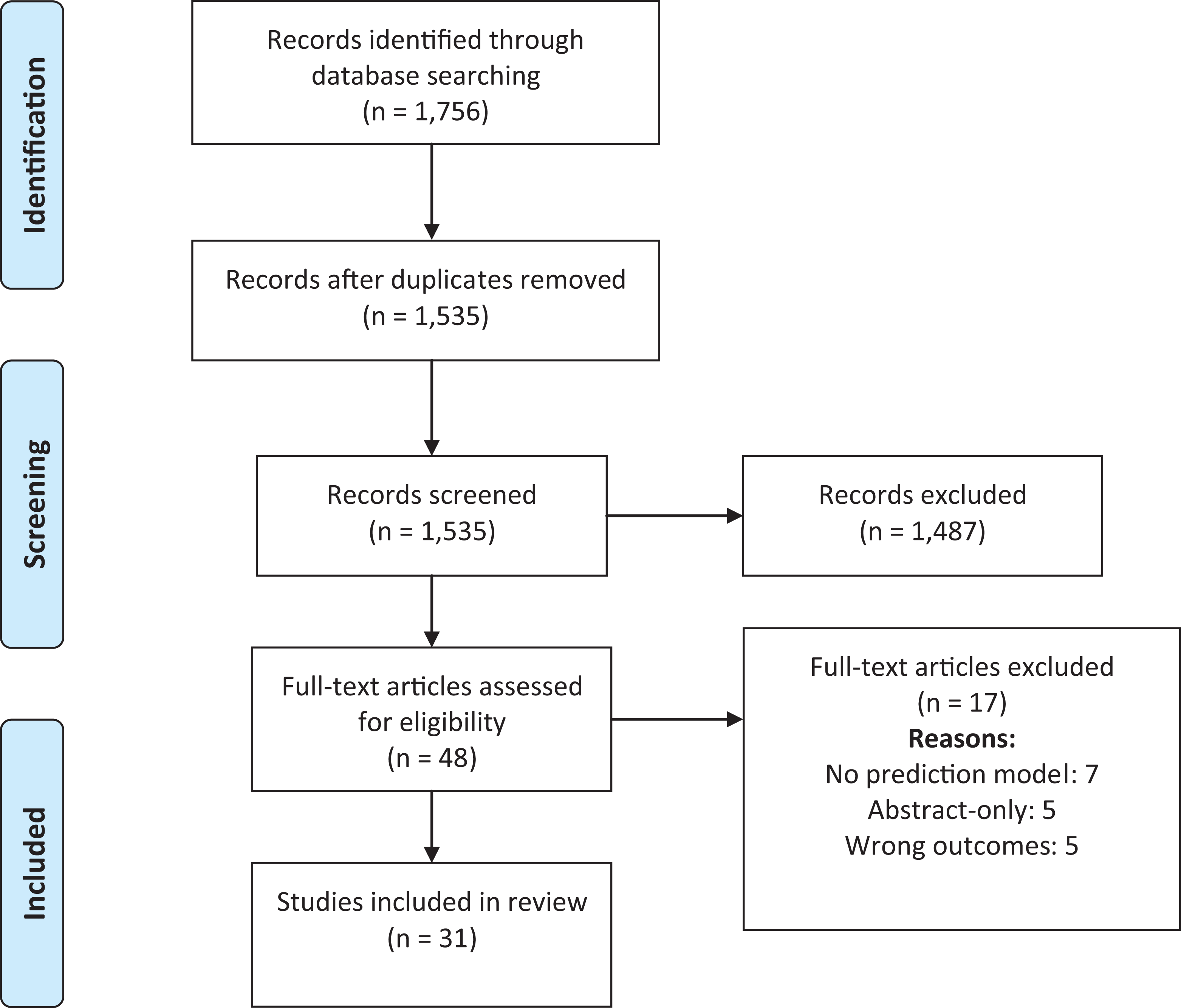

We identified 1535 unique articles (Figure 1), of which 48 underwent full-text review leading to the inclusion of 31 articles in this review. Reasons for exclusion included no mention of a prediction model (n = 7), outcomes not fitting inclusion criteria (n = 5), and only abstract available (n = 5). Of these 31 articles, 5 articles (16%) included external validation. Of the 31 included studies, 20 (65%) were of thoracolumbar surgeries, 5 (16%) were cervical surgeries, and 6 (19%) were inclusive of patients undergoing any spinal surgery.

Figure 1. PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flow diagram for articles with degenerative spine disease prediction models with 1-year outcomes after surgery.

There was heterogeneity in how the prediction models were developed. Thirteen (42%) used machine learning, 13 (42%) used logistic regression, 2 (6%) used linear regression, 1 (3%) used binomial regression, 1 (3%) used both logistic and linear regression, and 1 (3%) used Cox proportional hazards regression. For internal validation, 17 (55%) used cross-validation by splitting their cohort into training and validation sets, 9 (29%) used bootstrapping, 1 (3%) used random number generators, and 4 (13%) did not specify. Web-based calculators were included in 14 (45%) of the published articles. Various outcomes were investigated, including overall complications, infection, LOS, discharge disposition, reoperation, readmission, Oswestry Disability Index (ODI) score, back pain, leg pain, return to work, and opioid dependence.
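The two most common internal validation strategies named above, splitting a cohort into training and validation sets and bootstrapping, differ in how patients are resampled. The following is a minimal sketch of that difference using only patient indices; the function names, the 80/20 split, and the cohort size of 100 are illustrative assumptions and do not describe the procedure of any reviewed study.

```python
import random


def split_sample(n, train_fraction=0.8, seed=0):
    """Shuffle patient indices once and cut them into two disjoint sets."""
    rng = random.Random(seed)
    indices = list(range(n))
    rng.shuffle(indices)
    cut = round(n * train_fraction)
    return indices[:cut], indices[cut:]


def bootstrap_sample(n, seed=0):
    """Draw n indices with replacement; patients never drawn are out-of-bag."""
    rng = random.Random(seed)
    in_bag = [rng.randrange(n) for _ in range(n)]
    drawn = set(in_bag)
    out_of_bag = [i for i in range(n) if i not in drawn]
    return in_bag, out_of_bag


# Hypothetical cohort of 100 patients.
train, validation = split_sample(100)
in_bag, out_of_bag = bootstrap_sample(100)
print(len(train), len(validation))        # each patient is in exactly one set
print(len(set(in_bag)), len(out_of_bag))  # unique patients drawn vs never drawn
```

In a split-sample design the model is fit on `train` and evaluated once on `validation`. In a bootstrap design the resampling is typically repeated many times, with the model refit on each `in_bag` sample so that performance estimates can be averaged across repetitions.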

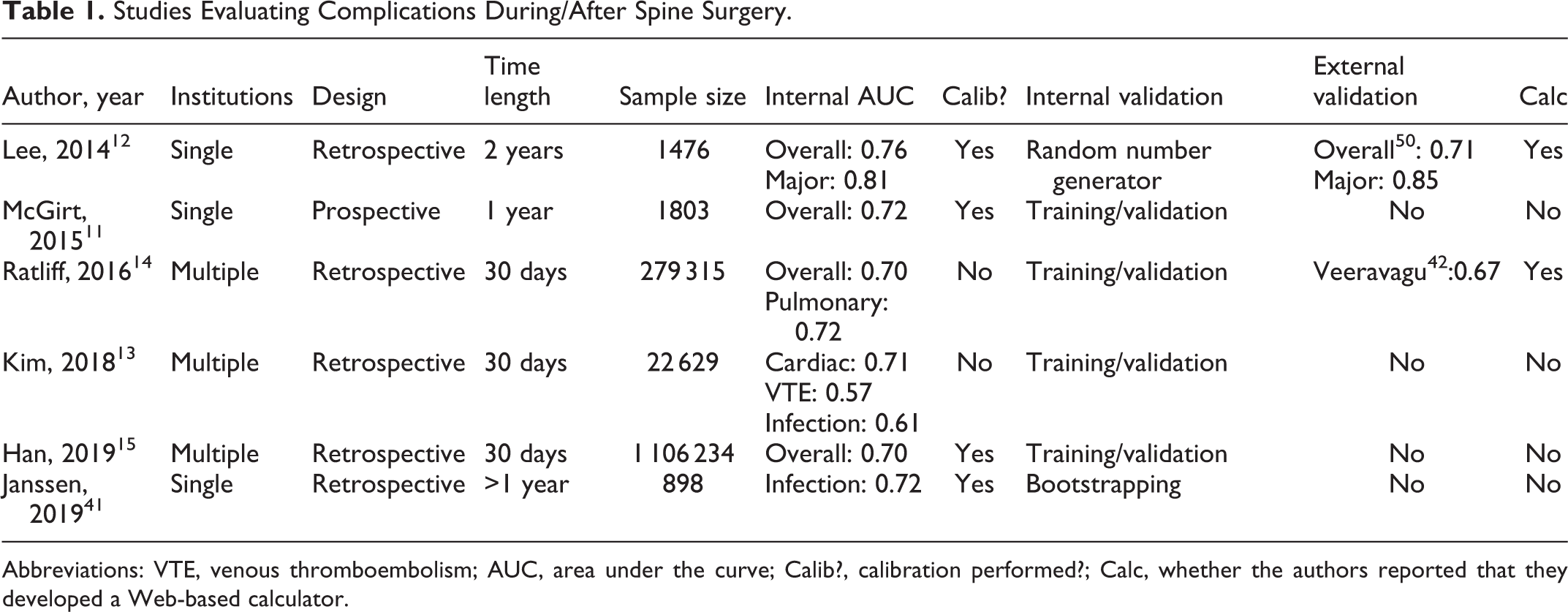

Six articles looked at complications (Table 1), which included infection (n = 3), all-inclusive complications (n = 4), pulmonary complications (n = 2), cardiac complications (n = 2), venous thromboembolism (n = 1), and neurologic complications (n = 1). 11-15,41 Of these, 3 were single institution studies, 1 used the American College of Surgeons National Surgical Quality Improvement Program (ACS-NSQIP) database, 1 used the Truven Health Analytics MarketScan database, and 1 used both the Truven database and the Centers for Medicare and Medicaid Services (CMS) Medicare database. One was prospective while 5 were retrospective. Study follow-up ranged from 30 days to 2 years. Area under the curve (AUC) ranged from 0.57 to 0.72.

Table 1. Studies Evaluating Complications During/After Spine Surgery.

Abbreviations: VTE, venous thromboembolism; AUC, area under the curve; Calib?, calibration performed?; Calc, whether the authors reported that they developed a Web-based calculator.

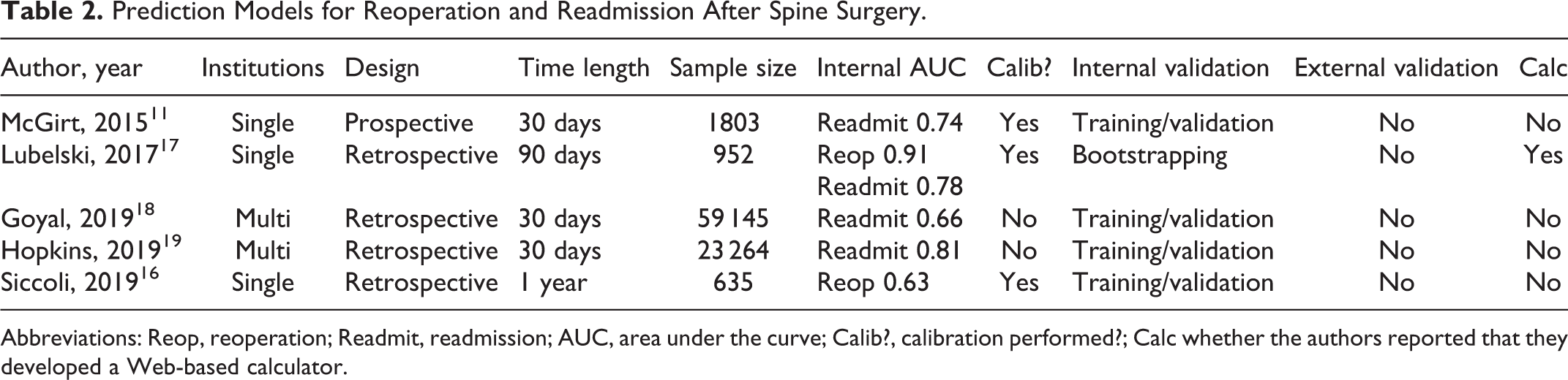

Reoperation (n = 2) and readmission (n = 4) were examined by 5 articles (Table 2). 11,16-19 Three were single institution studies and 2 used the ACS-NSQIP database. One was prospective while 4 were retrospective. Study follow-up ranged from 30 days to 1 year. AUC ranged from 0.63 to 0.91.

Table 2. Prediction Models for Reoperation and Readmission After Spine Surgery.

Abbreviations: Reop, reoperation; Readmit, readmission; AUC, area under the curve; Calib?, calibration performed?; Calc, whether the authors reported that they developed a Web-based calculator.

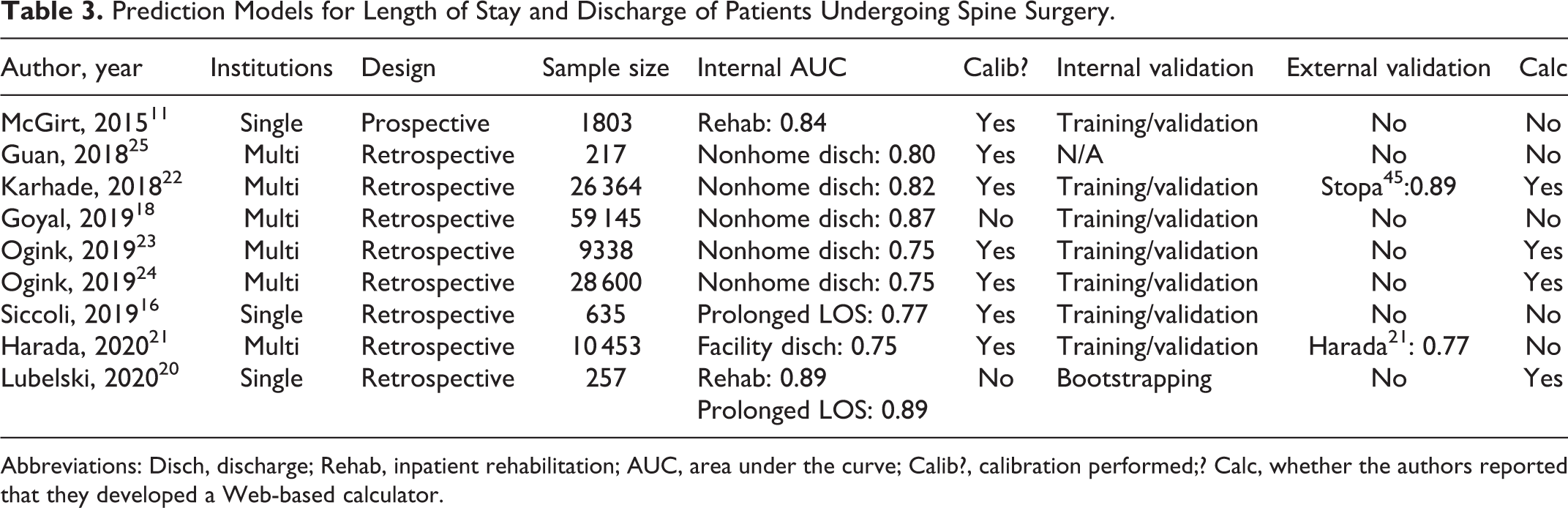

Nine studies examined the LOS and discharge disposition of patients (Table 3). 11,16,18,20-25 Of these, 2 examined discharge to a rehabilitation facility, 1 examined discharge to any facility, 5 examined nonhome discharge, and 2 examined prolonged LOS. Three were single institution, 5 used the ACS-NSQIP database, and 1 used the NeuroPoint Quality Outcomes Database (QOD). One was prospective while 8 were retrospective. AUC ranged from 0.75 to 0.89.

Table 3. Prediction Models for Length of Stay and Discharge of Patients Undergoing Spine Surgery.

Abbreviations: Disch, discharge; Rehab, inpatient rehabilitation; AUC, area under the curve; Calib?, calibration performed?; Calc, whether the authors reported that they developed a Web-based calculator.

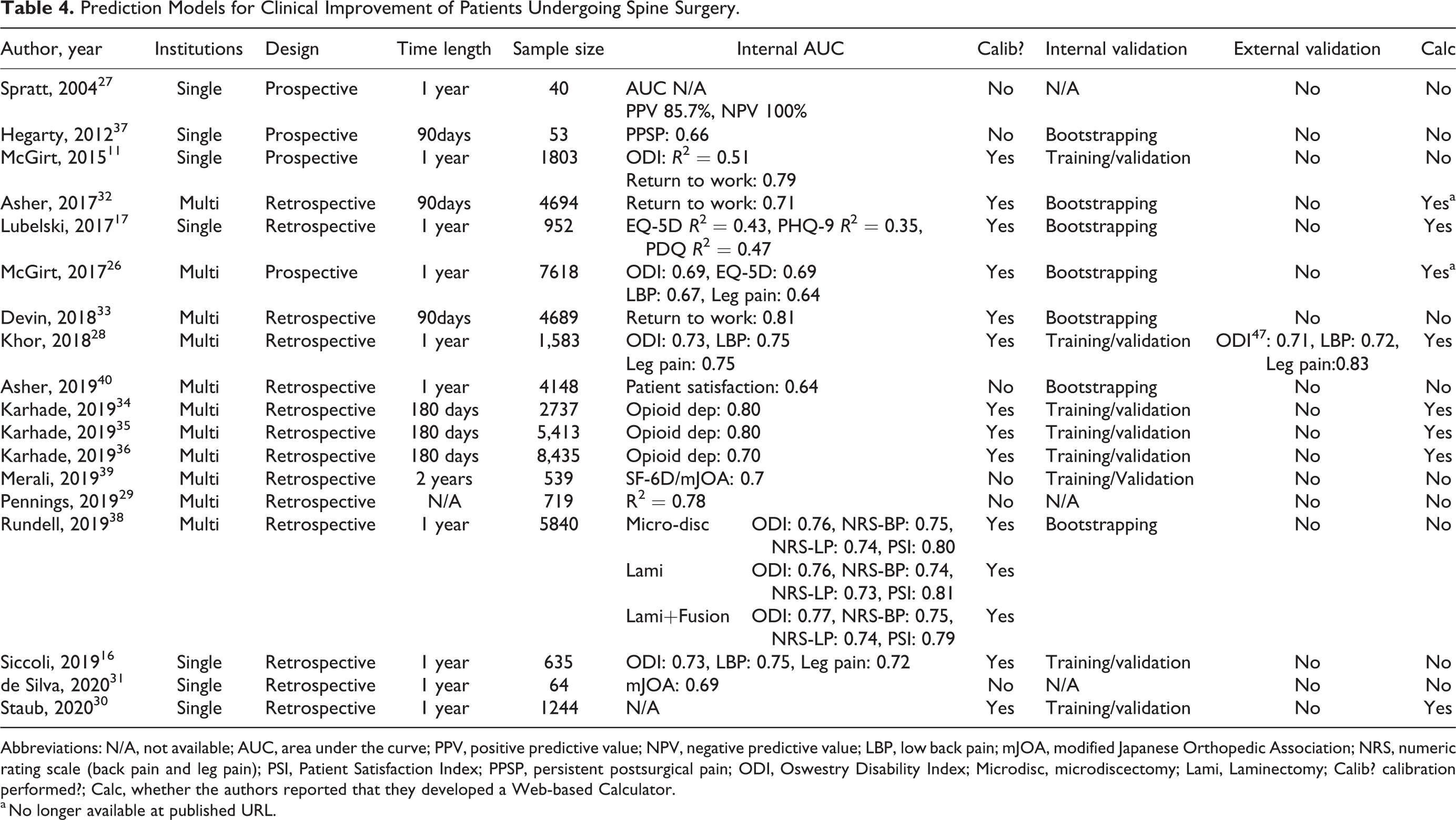

Eighteen articles examined functional outcomes (Table 4), which included quality-of-life measures (n = 11), opioid dependence (n = 3), returning to work (n = 2), patient satisfaction (n = 2), and persistent postsurgical pain (n = 1). 11,16,17,26-40 Quality-of-life outcome measures included scores on the following validated inventories: ODI, visual analog scale for leg and lower back pain, EuroQol 5-dimensions (EQ-5D), Patient Health Questionnaire-9 (PHQ-9), Pain and Disability Questionnaire (PDQ), Short Form 6-dimensions (SF-6D), and the modified Japanese Orthopedic Association (mJOA). Seven were single institution studies, 5 used the QOD database, and 6 were multi-institutional. Four were prospective while 14 were retrospective. Follow-up ranged from 90 days to 2 years. AUC ranged from 0.64 to 0.81. Specifically, AUC ranged from 0.64 to 0.81 for quality-of-life measures, 0.70 to 0.80 for opioid dependence, 0.71 to 0.81 for return to work, and 0.64 to 0.79 for patient satisfaction. AUC was 0.66 for persistent postsurgical pain.

Table 4. Prediction Models for Clinical Improvement of Patients Undergoing Spine Surgery.

Abbreviations: N/A, not available; AUC, area under the curve; PPV, positive predictive value; NPV, negative predictive value; LBP, low back pain; mJOA, modified Japanese Orthopedic Association; NRS, numeric rating scale (back pain and leg pain); PSI, Patient Satisfaction Index; PPSP, persistent postsurgical pain; ODI, Oswestry Disability Index; Microdisc, microdiscectomy; Lami, laminectomy; Calib?, calibration performed?; Calc, whether the authors reported that they developed a Web-based calculator.

a No longer available at published URL.

Discussion

We identified 31 studies reporting prediction models for degenerative spinal surgery. These have mainly focused on predicting complications, readmission, reoperation, and functional/quality-of-life outcomes. We found that while almost all studies attempted to internally validate their model, external validation was rare. AUC values ranged from as low as 0.6 to as high as 0.97, and only two-thirds of papers reported calibration of their models. Most articles reported discrimination, that is, the ability of a model to separate patients who will develop a given event from those who will not. Calibration, the agreement between predicted and observed risk, is equally important: one should not use a model whose absolute risk estimates are inaccurate. A model can also be well calibrated in certain risk groups yet overestimate or underestimate risk in other populations. For this reason, better models are those that report both measures. Furthermore, just under half the studies reported their model in the form of a web-based calculator. Model deployment in this format greatly enhances the ability of a clinician to incorporate such a model into their clinical workflow.
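The difference between discrimination and calibration discussed above can be made concrete with a small numerical sketch. The eight outcomes and predicted risks below are invented for illustration and do not come from any reviewed study. The AUC is computed as the proportion of event/non-event pairs in which the patient with the event received the higher predicted risk, and calibration is summarized crudely by comparing mean predicted risk with the observed event rate.

```python
def auc(y_true, y_score):
    """Proportion of (event, non-event) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean(values):
    return sum(values) / len(values)


# Hypothetical outcomes (1 = event occurred) and predicted risks.
outcomes = [0, 0, 0, 0, 1, 0, 1, 1]
model_a = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
# Model B halves every risk: patient ranking is unchanged, absolute risk is not.
model_b = [p / 2 for p in model_a]

observed_rate = mean(outcomes)
for name, preds in (("A", model_a), ("B", model_b)):
    print(f"Model {name}: AUC = {auc(outcomes, preds):.3f}, "
          f"mean predicted risk = {mean(preds):.3f}, "
          f"observed event rate = {observed_rate:.3f}")
```

Both hypothetical models rank patients identically and therefore share the same AUC, but Model B systematically underestimates risk. A report of AUC alone would not reveal this, which is why calibration needs to be assessed alongside discrimination.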

Complications

Models predicting complications after degenerative spine surgery were the most commonly published; however, the types of models and the datasets used to create them varied greatly. Lee et al 12 retrospectively evaluated 1476 patients undergoing degenerative spine surgery from a single institutional surgical registry to construct a predictive model of postoperative major complications, minor complications, surgical site infection, and durotomy. They reported an AUC of 0.76 for any complication and 0.81 for major complications and deployed their model at http://depts.washington.edu/spinersk/. McGirt et al 11 prospectively evaluated 1803 patients undergoing lumbar spine surgery at a single institution to produce a model that incorporated 45 baseline variables to predict postoperative complications with an AUC of 0.72. Most recently, Janssen et al 41 reported a single institution retrospective series predicting postoperative infection with an AUC of 0.72.

The other studies that published models of complications used multi-institutional data. Ratliff et al 14 retrospectively evaluated 279 315 patients from a longitudinal national claims database to construct a predictive model of complications after surgery. They produced a model with an AUC of 0.70 and deployed the algorithm in a freely available smartphone application (http://itunes.apple.com/app/ratool/id1087663216). The authors also externally validated this model using data from a single-institution prospective patient series (N = 246). 42

Kim et al 13 retrospectively evaluated 22 629 patients using the cross-sectional NSQIP database to develop machine learning models to identify risk factors for complications after posterior lumbar spine fusion. AUCs for logistic regression and artificial neural network models both outperformed benchmark American Society of Anesthesiologists (ASA) class for predicting complications. In their logistic regression model, the AUC for predicting cardiac complications was 0.66, for predicting venous thromboembolism was 0.59, for predicting wound infection was 0.61, and for predicting mortality was 0.7. Of note, several authors including Sebastian et al 43 attempted to validate the previously developed NSQIP Surgical Risk Calculator (riskcalculator.facs.org). They found that the calculator generally had relatively poor predictive performance across all outcomes measured, including an AUC of 0.56 for reoperation, 0.61 for any complication, 0.61 for serious complications, and 0.63 for surgical site infection.

Han et al 15 retrospectively evaluated 1 106 234 patients from the Truven MarketScan Commercial database, the Truven MarketScan Medicare database, and the CMS Medicare database to develop predictive models of adverse events 30 days after spine surgery. The predictors identified included patient demographics, medical comorbidities, surgical indication, and operative characteristics, and the resultant model had an AUC of 0.70 for predicting overall adverse events.

Reoperation and Readmission

Prediction models of readmission and reoperation are particularly apt for current CMS hospital quality metrics. The articles that have looked at this have been primarily retrospective, with the exception of the article by McGirt and colleagues, 11 who prospectively evaluated 1803 patients at a single institution to develop multiple predictive models, including one for readmission. Using 45 baseline variables, their model yielded an AUC of 0.74. They did not have external validation, and the large number of baseline variables relative to the overall number of readmission events (N = 108) may increase the risk of overfitting and thereby limit generalizability.
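The overfitting concern raised above can be expressed as an events-per-variable ratio. The short calculation below uses the two figures given in the text (108 readmission events and 45 baseline variables). The comparison threshold of roughly 10 events per candidate predictor is a commonly cited rule of thumb rather than a finding of this review, and it is itself debated, so it is included here only as an assumption for orientation.

```python
def events_per_variable(n_events, n_candidate_predictors):
    """Number of outcome events available per candidate predictor."""
    return n_events / n_candidate_predictors


# Figures reported in the text for the readmission model.
epv = events_per_variable(n_events=108, n_candidate_predictors=45)

# Assumed heuristic threshold (not from the source): about 10 events per variable.
RULE_OF_THUMB_EPV = 10

print(f"Events per variable: {epv:.1f}")
print(f"Below the assumed rule of thumb: {epv < RULE_OF_THUMB_EPV}")
```

A ratio this low does not prove that a model is overfit, but it does indicate that apparent performance should be confirmed in an independent cohort before the model is relied on.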

Of the models derived from retrospective analyses, Siccoli et al 16 evaluated 635 patients from a prospective registry using machine learning algorithms to predict need for reoperation and patient outcomes at 12 months. Their model for reoperation had an AUC of 0.63, which is on the lower end of the spectrum. Lubelski et al 17 retrospectively evaluated 952 patients from a single institution who underwent anterior or posterior cervical decompression/fusion and found that predictors of clinical outcomes included race, median income, body mass index, medical comorbidities, presenting symptoms, surgical indication, surgery type, and number of operated levels. They validated their cohort using bootstrapping and found an AUC of 0.91 for 90-day reoperation, 0.63 for 30-day emergency department visits, and 0.78 for 30-day readmission. A web-based calculator was deployed at https://riskcalc.org/PatientsEligibleforCervicalSpineSurgery/.

Two additional studies used the ACS-NSQIP database to generate calculators. Hopkins et al 19 retrospectively evaluated 23 264 patients who underwent posterior lumbar fusion and found that predictors of 30-day readmission included medical comorbidities and whether surgery was a reoperation or index case. Despite the limitations of the NSQIP database, their model achieved an AUC of 0.81. Though not included in the original article, the authors did later report that this model was adequately calibrated. 44 In contrast, the more inclusive study by Goyal et al, 18 which had cervical

The national administrative databases are readily accessible and have very large numbers, which may increase the power for statistical analysis. Predictive models that are calculated from these databases, however, may be subject to significant bias because of how the data is collected, completeness of the included variables, and how they are categorized based on billing diagnosis and procedure codes. Models based on smaller sample sizes may potentially be superior if the data is collected prospectively and if the data collection is more nuanced and accurate. Ultimately, when evaluating different prediction models, it is important to consider how the data was collected, the sample size, the number of institutions included, and both discrimination (eg, AUC) and calibration.

Length of Stay and Discharge

In addition to predicting adverse outcomes, predicting prolonged length of hospital stay and discharge disposition can improve patient experience, reduce health-facility associated complications, and reduce costs. Several authors have developed prediction models to determine expected length of stay as well as the likelihood of discharge to nonhome or inpatient rehabilitation destination.

Using their prospective data set, McGirt et al 11 developed a model with an AUC of 0.84 for predicting discharge to in-patient rehabilitation. Lubelski et al 20 retrospectively evaluated 257 patients from a single institution and published a model that had an AUC of 0.89 for likelihood of rehabilitation discharge as well as AUC of 0.89 for prolonged LOS (>7 days). The authors deployed this model as a web-based calculator at https://jhuspine1.shinyapps.io/RehabLOS/. Similarly, Siccoli et al 16 retrospectively evaluated a prospective registry of 635 patients undergoing lumbar decompression surgery using machine learning algorithms to predict extended length of stay (>28 hours) with an AUC of 0.77.

Guan and colleagues 25 used the Quality Outcomes Database (QOD), a multicenter prospective registry, to develop a prediction score of discharge needs for patients undergoing lumbar fusion. With an AUC of 0.81, their model could place a patient into the low- or high-score category, which would determine the likelihood of needing additional home services or acute rehabilitation.

The other publications on predictors of rehabilitation discharge all used the ACS-NSQIP database to generate prediction models. Harada et al 21 evaluated 10 453 patients from the ACS-NSQIP database who underwent open lumbar fusion (AUC of 0.75), and then externally validated the model using their institutional dataset (AUC of 0.77). Similarly, Karhade et al 22 evaluated 26 364 ACS-NSQIP patients who underwent lumbar surgery for degenerative disc disorders to generate a model with an AUC of 0.82. Their model was then externally validated by Stopa and colleagues 45 and the authors of the original article deployed a web-based calculator at https://sorg-apps.shinyapps.io/discdisposition/.

Ogink and colleagues 23 then published an evaluation of 9338 patients in the ACS-NSQIP database who underwent surgery for degenerative spondylolisthesis and found that their model predicted nonhome discharge with an AUC of 0.75 (https://sorg-apps.shinyapps.io/spondydisposition/). Then in a parallel publication, the same group 24 evaluated 28 600 patients in the ACS-NSQIP database who underwent surgery for lumbar spinal stenosis and generated a model predicting nonhome discharge with an AUC of 0.75 (https://sorg-apps.shinyapps.io/stenosisdisposition/). Last, analyzing 59 145 ACS-NSQIP patients who underwent either cervical or lumbar spinal fusion, Goyal et al 18 produced a model predicting nonhome discharge with an AUC of 0.87.

Pain, Disability, and Quality of Life

Functional and quality-of-life outcomes are critical to delivering patient-centered spine care. Therefore, these outcome metrics have also been the focus of clinical prediction models. The ODI is a widely used and extensively validated method for quantifying low back pain–associated disability and has been used by multiple prediction studies as an outcome. 46

McGirt et al 26 prospectively evaluated a larger cohort of 7618 patients from the NeuroPoint QOD one year after elective lumbar spine surgery and found that predictors of patient-reported outcomes (PROs) included employment status, baseline back pain, psychological distress, baseline ODI, level of education, workers’ compensation status, symptom duration, race, baseline leg pain, ASA score, age, primary symptom, smoking status, and insurance status. Internal validation yielded modest AUCs of 0.69 for ODI, 0.67 for numeric rating scale (NRS) for back pain, and 0.64 for NRS for leg pain. Siccoli et al 16 achieved comparable discriminative ability for these outcomes among patients undergoing single- or multilevel decompression for lumbar spinal stenosis, with data collected from retrospective review of a prospective registry. Khor et al 28 collected prospective, multi-institution registry data (N = 1583) for patients undergoing elective lumbar surgery and developed predictive models that achieved AUCs of 0.73 for ODI, 0.75 for NRS back pain, and 0.75 for NRS leg pain. A web-based calculator was deployed at https://becertain.shinyapps.io/lumbar_fusion_calculator. Importantly, these models were independently, externally validated by Quddusi et al. 47

An often-underestimated aspect in the development of clinical prediction models is variable selection. In an effort to address this, Rundell et al 38 retrospectively evaluated 5840 patients from multiple institutions to develop prognostic models of 1-year outcomes. The key finding of this study was that ODI at 3 months postsurgery was the strongest predictor of 12-month outcomes. 38 Future predictive studies should think carefully about variable selection and consider feature engineering, a term in machine learning that describes using domain knowledge to create variables that may drive improved predictive performance.

While the majority of predictive models in degenerative spine surgery have focused on lumbar spine surgery, early efforts in modeling quality-of-life outcomes for cervical spine surgery patients are emerging. In addition to predicting reoperation and readmission rates, Lubelski et al 17 used their single-institution cohort of patients undergoing cervical spine surgery to develop nomograms for quality-of-life outcomes (EuroQOL, EQ-5D; PHQ-9, PDQ). These nomograms predicted quality-of-life outcomes to varying degrees, with

A final outcome of interest is opioid use following degenerative spine surgery. Associations between spine surgery and opioid use are well established. 48,49 Karhade et al 34-36 endeavored to build predictive models of sustained opioid use after cervical and lumbar spine surgery, defined as >90 days of uninterrupted prescription filling. Their models had AUCs ranging from 0.7 to 0.8 and were deployed as web-based calculators to potentially enable a surgeon, at the bedside, to identify an individual’s specific risk.

Limitations and Future Directions

There is an increasing body of literature looking at predicting outcomes in degenerative spine surgery. Some focus on administrative outcomes such as readmission, emergency department visits, and reoperation, whereas others focus on patient-reported outcomes and complications. Heterogeneity also exists in how the data is collected, how the analyses are performed and models validated, and the mechanisms by which the data is reported. To be integrated into clinical practice, prediction models need to have the data collected in a systematic way, preferably prospectively, with detailed clinical information. Models based on the Current Procedural Terminology and diagnosis codes of administrative databases are therefore inherently limited. Models need to assess for discrimination and calibration and should preferably have AUC >0.7. Details of how the analysis is performed should be explicitly reported. Validation should be performed with a patient population that is different from the one in which the model was generated, ideally at another institution. If validation is performed on patients from the same institution, this limits the model’s generalizability outside of the primary hospital setting.

Future directions include the generation of a grading system to help clinicians determine the relative strengths of the different published models. Additionally, studies are needed to determine the usefulness of such prediction models. A better understanding is needed of whether the use of a prediction model leads to greater patient satisfaction, better outcomes, or greater value. Lastly, it is important to remember that regardless of how accurate the prediction model is, it cannot replace clinical judgment. There are innumerable clinical and social variables that are taken into account when helping patients decide on a treatment course. The goal is to create prediction calculators that can help the physician provide more accurate and individualized descriptions of the risk/benefit profile for a given patient.

Conclusion

The continued emphasis on value-based care in American health care and the variability in degenerative spine surgery outcomes present an important case for clinical prediction modeling. The current body of clinical prediction for degenerative spine surgery is heterogeneous with regard to data sets, outcome measures, and statistical learning methods. Importantly, external validation of proposed models must be emphasized and executed. While the promise of clinical prediction in degenerative spine surgery for patients, hospitals, and health systems is significant, further efforts are required before current models are appropriate for clinical deployment.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Daniel M. Sciubba is a consultant for Baxter, DePuy-Synthes, Globus Medical, K2M, Medtronic, NuVasive, Stryker, and receives unrelated grant support from Baxter Medical, North American Spine Society, and Stryker. The other authors have no disclosures to make.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This supplement was supported by a grant from AO Spine North America.