Abstract

This study investigates how artificial intelligence (AI)–mediated support and instructor guidance shape preservice mathematics teachers’ relational and procedural understanding. Using a quasi-experimental design with 76 participants across two universities, the study compared learning outcomes in AI-supported and instructor-led settings. Participants generated relational and procedural examples and explained their reasoning; responses were evaluated for both accuracy and originality. Findings revealed a trade-off: AI-supported participants produced more accurate examples but frequently reproduced content verbatim, thereby reducing originality, whereas instructor-supported participants exhibited more misconceptions yet demonstrated independent reasoning and initiative. These results suggest that AI can enhance efficiency and precision while limiting opportunities for critical engagement and intellectual autonomy. The study contributes to broader debates on the role of educational technology in higher education, highlighting the potential of hybrid instructional models that combine AI scaffolds with human facilitation. Such models can emphasize explanation, variation, and accountability while preserving creativity. Policy implications include the need for assessment designs that reward generative reasoning, transparent disclosure of AI use, and guidance for teacher-education programs to integrate AI responsibly. Overall, the research underscores the importance of balancing technological support and human guidance to achieve both accuracy and originality in teacher preparation.

Plain Language Summary

This study explores how different forms of instructional support—AI-based and instructor-supported—affect how future mathematics teachers learn and create mathematical examples. Two groups of university students engaged in identical tasks under separate conditions: one guided by an instructor and the other using AI tools for self-directed learning. Findings revealed that students who used AI achieved higher accuracy but often repeated examples without critical reflection or modification. Those guided by an instructor produced more original responses and demonstrated deeper reasoning, though they made more conceptual errors. These results suggest that AI improves efficiency and precision but may limit creativity and independent thought when used without teacher mediation. The study highlights the importance of combining human guidance with technological support in teacher education. Rather than replacing instructors, AI should be used to complement teaching—offering rapid feedback and diverse examples while educators foster reflection, creativity, and critical thinking. Such balanced integration can help future teachers develop both accuracy and originality in mathematics learning.

Keywords

Introduction

Preservice mathematics teachers’ conceptual awareness directly shapes the quality of student learning once they enter the profession. For meaningful and lasting learning, teachers must not only master procedures but also demonstrate the capacity to connect mathematical concepts and justify these connections. The distinction between relational and procedural understanding—first articulated by Skemp (1976)—remains a cornerstone in mathematics education. Relational understanding involves recognizing conceptual links and the reasoning behind procedures, while procedural understanding emphasizes memorization and mechanical application. Recent scholarship further suggests that, in the era of AI-mediated instruction, teachers’ conceptual awareness is increasingly shaped by interactions with intelligent technologies (Gerlich, 2025; Luckin, 2023). Research has consistently shown that teachers’ ability to balance these forms of knowledge is critical for promoting student achievement, motivation, and the transfer of knowledge to novel situations (Hiebert, 2007; Rittle-Johnson et al., 2014). Yet studies also reveal that preservice teachers often privilege procedural strategies, limiting opportunities to foster conceptual reasoning (Star & Newton, 2009). This persistent imbalance calls for pedagogical models that help future teachers navigate the intersection of conceptual depth and technological mediation—particularly as digital tools become integral to instructional practice.

Despite the significance of this distinction, much of the existing research has focused on describing teachers’ beliefs rather than comparing how instructional settings shape relational and procedural understanding. This highlights the need for empirical investigations that examine how different environments influence preservice teachers’ conceptual growth. A particularly salient development is the integration of artificial intelligence (AI) into teacher education. AI-based tools promise novel opportunities for scaffolding, feedback, and independent exploration, but their contribution to conceptual awareness remains contested. Recent studies indicate that AI-based learning environments can reshape teacher education by altering feedback dynamics and patterns of cognitive engagement (Bernardi et al., 2025; Holmes et al., 2019; Zawacki-Richter et al., 2019). At the same time, findings suggest that AI’s pedagogical impact varies substantially depending on how learners engage with and interpret AI-generated content.

The literature presents mixed evidence. While some studies suggest that AI tools support teachers in recognizing conceptual relationships and engaging in self-regulated learning (Broutin, 2024; Canonigo, 2024; Flavin et al., 2025), others warn of risks such as misinformation, ethical concerns, and reduced originality (Opesemowo, 2025). Investigations of large language models indicate that these tools often excel at producing procedural examples yet provide incomplete or misleading conceptual explanations (Frieder et al., 2023). Moreover, research suggests that preservice teachers frequently use AI as a validator rather than as a resource for critical evaluation, limiting opportunities for reflective engagement (Broutin, 2024; Flavin et al., 2025). Taken together, these findings reveal a fundamental tension: AI tools may enhance procedural precision while simultaneously constraining opportunities for conceptual reflection and creative reasoning. To explore this tension empirically, the present study conducts a quasi-experimental comparison of two environments: an instructor-supported classroom and an AI-supported open-interaction setting. It investigates how each influences preservice teachers’ ability to generate relational and procedural examples, focusing not only on accuracy but also on originality. This dual lens reveals a critical paradox: AI-supported settings enhance accuracy and efficiency but risk narrowing opportunities for critical engagement, while instructor-supported environments encourage originality and intellectual autonomy yet allow more misconceptions to persist. This investigation also responds to recent calls for theoretically integrated analyses of hybrid human–AI mediation in education, emphasizing ethical alignment and co-agency frameworks (Luckin, 2023; OECD, 2023; UNESCO, 2021). In doing so, the study not only compares instructional modes but also examines how human and technological mediation differently shape reflective reasoning and conceptual depth.

By situating this paradox within broader debates on educational technology and teacher preparation, the study underscores the need for frameworks that balance technological innovation with pedagogical integrity. Beyond mathematics education, the findings contribute to international discussions on higher education governance and policy, offering evidence-informed insights into how AI can be integrated responsibly into teacher education. Ultimately, the study seeks to bridge the pedagogy–technology divide, highlighting strategies that leverage AI’s precision while safeguarding originality, creativity, and critical thinking as core values in teacher preparation.

The present study addresses the following research questions:

How do AI-supported and instructor-supported instructional environments influence preservice mathematics teachers’ ability to generate relational and procedural examples?

In what ways do these environments affect the accuracy and originality of the examples produced?

What patterns of misconceptions (in instructor-supported settings) and reproduction (in AI-supported settings) emerge, and what do these patterns reveal about the opportunities and constraints of each environment?

Method

Research Design

This study employed a quasi-experimental design with a comparative control group to investigate how different instructional environments (AI-supported and instructor-supported) shape preservice mathematics teachers’ relational and procedural understanding. Random assignment was not feasible due to institutional constraints. Nevertheless, program comparability was established through reviews of curricula, assessment practices, and course objectives, and prior achievement levels did not differ significantly between the institutions (p > .05). No a priori power analysis was conducted; however, a post-hoc sensitivity analysis indicated that the sample size (n = 76) provided adequate power (0.81 at α = .05) to detect small-to-moderate effects. Pre-intervention analyses likewise showed no significant differences between groups in participants’ grade point average (GPA), AI familiarity, or baseline conceptual understanding (p > .05), indicating equivalent starting conditions before the intervention. Conducting the study at two universities enhanced external validity by situating the research in distinct institutional contexts.

Based on prior research and the theoretical framework distinguishing relational and procedural understanding, the following hypotheses were formulated:

Participants

The sample consisted of 76 preservice mathematics teachers: 37 in the instructor-supported group (University A) and 39 in the AI-supported group (University B). Both universities followed the nationally standardized mathematics teacher education curriculum, ensuring comparability.

Demographic and academic indicators confirmed equivalence between groups: mean grade point averages (M = 2.54 vs. 2.51, p > .05) and mean ages (21.4 vs. 21.7 years, p > .05) showed no significant differences. Thus, despite the absence of randomization, baseline comparability was established. Given the modest and context-specific sample, the findings should be interpreted as illustrative rather than representative of broader teacher-education contexts. The gender distribution was comparable across groups, with approximately 70% female and 30% male participants, reflecting the general demographic pattern of preservice mathematics teacher education programs in the region.

Data Collection Procedure

At the outset, all participants were provided with a common conceptual foundation. The researcher delivered a short presentation in which the constructs of relational understanding (understanding why a procedure works and how concepts are interconnected) and procedural understanding (knowing how to carry out a procedure without necessarily understanding why it works) were defined and exemplified in simple mathematical contexts. This ensured that both groups entered the instructional phase with the same baseline knowledge and shared terminology.

Following this initial orientation, the instructional methods diverged according to the experimental condition:

Instructor-supported group (n = 37):

Participants engaged in a structured classroom environment led by the course instructor. The instructor first modeled worked examples that illustrated both relational and procedural reasoning. Next, students engaged in guided peer discussions, where they exchanged interpretations and jointly constructed additional examples. The instructor actively circulated during these activities, providing real-time clarification, corrective feedback, and probing questions to deepen participants’ reasoning. The emphasis was on supporting the development of independent yet scaffolded example generation. All instructor-supported sessions were conducted by the same course instructor to ensure consistency in facilitation, feedback, and instructional tone across all activities.

AI-supported group (n = 39):

After receiving the same initial definitions, participants were instructed to work independently using generative AI tools (e.g., ChatGPT). They were asked to generate, refine, and verify their own examples of relational and procedural understanding by interacting with the AI system. No direct instructor feedback was provided during the activity phase. Instead, participants worked under structured self-guidance: before engaging with the AI tools, they received written prompts and example-based scaffolds, including guiding questions (e.g., “How does this example show why the procedure works?”) and reflection cues designed to promote critical evaluation of AI outputs. Students were expected to critically evaluate AI-generated outputs, adapt them as needed, and decide whether to adopt or reject the examples suggested by the system. This process simulated an emerging form of self-directed, technology-mediated learning. Thus, while the instructor was not physically present, a comparable cognitive framework for inquiry was established.

At the conclusion of the 3-week instructional period, all participants were required to submit a portfolio of self-produced examples. Each portfolio contained a minimum of three relational and three procedural examples, accompanied by brief written explanations of why the examples qualified as relational or procedural. These portfolios constituted the primary data set for analysis, enabling direct comparison of the accuracy and originality of examples produced under the two instructional conditions.

Implementation Timeline

The instructional process was organized across three consecutive weeks, with identical starting points for both groups and diverging instructional methods thereafter:

Week 1: Orientation and Baseline Assessment

○ All participants received standardized definitions and short illustrative examples of relational and procedural understanding.

○ A pre-test consisting of four open-ended questions was administered to capture participants’ baseline conceptions.

○ Questions explored views on the relationship between mathematics and real life, the role of context-based activities, self-efficacy in designing such activities, and preferred instructional approaches.

Week 2: Divergent Instructional Conditions

○ Instructor-supported group: The instructor modeled worked examples, facilitated small-group discussions, and prompted participants to generate their own relational and procedural examples with immediate feedback.

○ AI-supported group: Participants used generative AI tools (e.g., ChatGPT) to create, refine, and verify relational and procedural examples independently. They were required to evaluate the accuracy and relevance of AI outputs without instructor guidance.

○ To ensure that participants could critically evaluate AI-generated outputs, a brief orientation was provided at the beginning of Week 2. This session demonstrated examples of effective and ineffective AI responses and discussed criteria for evaluating accuracy, conceptual depth, and originality. Participants were then instructed to apply these criteria during their individual interactions with the AI tool.

Week 3: Consolidation and Post-Test

○ Both groups continued in their respective conditions to consolidate learning.

○ Each participant produced a portfolio containing at least three relational and three procedural examples, accompanied by written explanations.

○ A post-test identical to the pre-test was administered to assess changes in perceptions and reasoning.

○ All portfolios and written responses were collected as the primary data set for analysis.

Data Analysis

Responses were coded along two dimensions:

Conceptual accuracy: Scored on a 0 to 2 scale (0 = incorrect/missing, 1 = partial, 2 = fully accurate).

Originality: Dichotomously coded (1 = self-generated, 0 = copied/reproduced).
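The two coding dimensions can be pictured as one record per submitted example. The following Python sketch is illustrative only: the `CodedExample` class, its field names, the `summarize` helper, and the sample values are hypothetical and are not drawn from the study's data. It shows how a 0 to 2 accuracy code and a 0/1 originality code aggregate to group-level means, and that the mean of a dichotomous code is simply the proportion of examples coded 1.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CodedExample:
    """One coded portfolio example (hypothetical structure for illustration)."""
    group: str        # "instructor" or "ai" (labels assumed here)
    kind: str         # "relational" or "procedural"
    accuracy: int     # 0 = incorrect/missing, 1 = partial, 2 = fully accurate
    originality: int  # 1 = self-generated, 0 = copied/reproduced

    def __post_init__(self):
        # Reject codes that fall outside the two scales described above.
        if self.accuracy not in (0, 1, 2):
            raise ValueError("accuracy must be 0, 1, or 2")
        if self.originality not in (0, 1):
            raise ValueError("originality must be 0 or 1")


def summarize(examples, group, kind):
    """Return count, mean accuracy, and originality proportion for one cell."""
    subset = [e for e in examples if e.group == group and e.kind == kind]
    if not subset:
        raise ValueError("no examples for this group and kind")
    return {
        "n": len(subset),
        "mean_accuracy": mean(e.accuracy for e in subset),
        "originality_rate": mean(e.originality for e in subset),
    }


# Made-up records, not study data.
examples = [
    CodedExample("ai", "procedural", 2, 0),
    CodedExample("ai", "procedural", 2, 1),
    CodedExample("instructor", "procedural", 1, 1),
    CodedExample("instructor", "procedural", 2, 1),
]

print(summarize(examples, "ai", "procedural"))
print(summarize(examples, "instructor", "procedural"))
```

Keeping one record per example, rather than one per participant, leaves open whether the analysis aggregates at the example level or the participant level; the source does not specify which was used.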

The coding scheme was adapted from established frameworks in mathematics education (Rittle-Johnson & Schneider, 2014; Skemp, 1976; Star, 2005). Two independent coders evaluated all responses; inter-rater reliability was high (κ = 0.87).
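For readers unfamiliar with the agreement statistic, the sketch below shows how an unweighted Cohen's κ can be computed from two coders' ratings on the 0 to 2 accuracy scale. It is a minimal illustration under stated assumptions: the function name `cohens_kappa` and the ten ratings are invented for the example, and the source does not say whether the reported κ was weighted or unweighted.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Unweighted Cohen's kappa for two equal-length lists of category codes."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(coder_a)
    # Observed agreement: share of items given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions per code.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


# Hypothetical accuracy codes (0, 1, 2) from two coders; not study data.
coder_a = [2, 2, 1, 0, 2, 1, 2, 2, 1, 0]
coder_b = [2, 2, 1, 0, 2, 2, 2, 2, 1, 0]

print(round(cohens_kappa(coder_a, coder_b), 3))
```

Because the accuracy scale is ordinal, a weighted κ that penalizes a 0 versus 2 disagreement more than a 1 versus 2 disagreement would also be a defensible choice; the unweighted form is used here only because it is the simplest to follow.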

To operationalize originality, responses were classified as “verbatim” when the wording matched AI-generated content without meaningful alteration (e.g., identical sentence structures or phrases), and as “original” when participants rephrased, adapted, or extended the examples in their own words. To strengthen validity, coders independently cross-checked a random subset (20%) of self-reports against the submitted examples to verify consistency.

Nonparametric tests were used: the Mann–Whitney U test compared groups on accuracy scores, and the Chi-square test examined differences in originality. Statistical significance was set at p < .05.
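The two tests named above can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the authors' analysis script: the function names and all data are hypothetical, the Mann–Whitney p-value uses the tie-corrected normal approximation without a continuity correction, and the chi-square routine handles only a 2 × 2 table without Yates' correction. Statistical packages may apply different defaults and so return slightly different p-values.

```python
import math
from collections import Counter


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def mann_whitney_u(x, y):
    """Return (U for sample x, two-sided p) via the normal approximation."""
    n1, n2 = len(x), len(y)
    combined = list(x) + list(y)
    ranks = average_ranks(combined)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    n = n1 + n2
    # Tie correction: each group of t tied values contributes t^3 - t.
    tie_term = sum(t ** 3 - t for t in Counter(combined).values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance == 0:
        raise ValueError("all observations are identical")
    z = (u1 - n1 * n2 / 2) / math.sqrt(variance)
    return u1, math.erfc(abs(z) / math.sqrt(2))


def chi_square_2x2(table):
    """Pearson chi-square statistic and p-value (df = 1) for a 2 x 2 table."""
    (a, b), (c, d) = table
    n = a + b + c + d
    rows = (a + b, c + d)
    cols = (a + c, b + d)
    if 0 in rows or 0 in cols:
        raise ValueError("every row and column needs at least one observation")
    stat = n * (a * d - b * c) ** 2 / (rows[0] * rows[1] * cols[0] * cols[1])
    # For df = 1 the chi-square upper tail equals erfc(sqrt(stat / 2)).
    return stat, math.erfc(math.sqrt(stat / 2))


# Hypothetical accuracy scores (0 to 2) for two small groups; not study data.
group_x = [2, 2, 2, 1, 2]
group_y = [1, 0, 2, 1, 1]
print(mann_whitney_u(group_x, group_y))

# Hypothetical counts: rows = groups, columns = (original, reproduced).
print(chi_square_2x2([[10, 20], [20, 10]]))
```

A rank-based test suits the accuracy scores because a three-point scale produces many ties and cannot be treated as normally distributed, which is why the tie correction is included in the sketch.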

Validity and Limitations

Although the instructional conditions differed in terms of feedback and interaction structure, several measures were taken to control for potential confounding variables. Both groups received identical conceptual orientations, task requirements, and timelines. The primary distinction was the medium of support—AI-mediated versus instructor-mediated—rather than the presence or absence of pedagogical structure. This design allowed the study to isolate the qualitative differences in how participants engaged with guidance, not merely whether they received it.

In terms of measurement validity, additional steps were taken to clarify the originality assessment procedure. Responses were coded using explicit operational criteria: they were classified as verbatim when the wording matched AI-generated content without meaningful alteration (e.g., identical phrasing or sentence structure), and as original when participants rephrased, adapted, or extended the examples in their own words. Two independent coders cross-checked a random subset (20%) of the examples to verify consistency and reduce subjectivity.

Internal validity was supported through the demonstrated equivalence of the groups on key demographic and academic indicators, while external validity was enhanced by conducting the study across two distinct institutional contexts, thereby increasing the generalizability of the findings. Although these procedures enhanced the robustness of the originality measure, we acknowledge that it partly relied on participant self-reports, which may have been influenced by social desirability bias. In particular, participants may have underreported AI use to appear more independent, or overreported originality to align with perceived academic expectations. In addition, although originality was operationalized through a dichotomous coding scheme for analytical clarity, it may be more accurately conceptualized as a continuum ranging from surface-level linguistic modification to deeper forms of conceptual innovation, which the present measurement approach could not fully capture. However, the inclusion of coder cross-checks and verification of a random sample mitigated this limitation. The absence of random assignment constrains the strength of causal inference. Replication of this design across more diverse contexts and with larger samples is therefore recommended to further strengthen the robustness and transferability of the findings.

Ethical Considerations and Use of Generative AI

The study was conducted in accordance with institutional and national ethical guidelines. As the instructional activities reflected regular coursework with no foreseeable risks, formal ethics committee approval was not required. Participation was voluntary, informed consent was obtained, and responses were anonymized. Generative AI was used solely within the AI-supported instructional intervention to simulate authentic technology-mediated learning. No AI tools were employed in coding, analysis, or manuscript preparation. The study design minimized potential risks by embedding all activities within regular coursework, avoiding any form of deception, assessment penalty, or psychological pressure. The anticipated benefits of the research—namely, improving instructional design in teacher education and supporting participants’ professional awareness of AI-mediated learning—were considered to outweigh any minimal risks associated with participation.

Summary of Design

In sum, the quasi-experimental design, institutional comparability, and rigorous coding procedures ensured methodological robustness. By systematically contrasting AI-supported and instructor-supported settings, the study captured differences in accuracy and originality of preservice teachers’ reasoning. This design provides insights into learning processes while also informing policy debates on the responsible integration of AI in teacher education.

Results

This section compares the performance of the instructor-supported and AI-supported groups in generating examples of relational and procedural understanding, as well as the originality of these examples. Descriptive statistics and group-level comparisons are reported first for procedural understanding, then for relational understanding, and finally for originality. For conciseness, all illustrative examples are presented in the Appendix.

Findings on Procedural Understanding

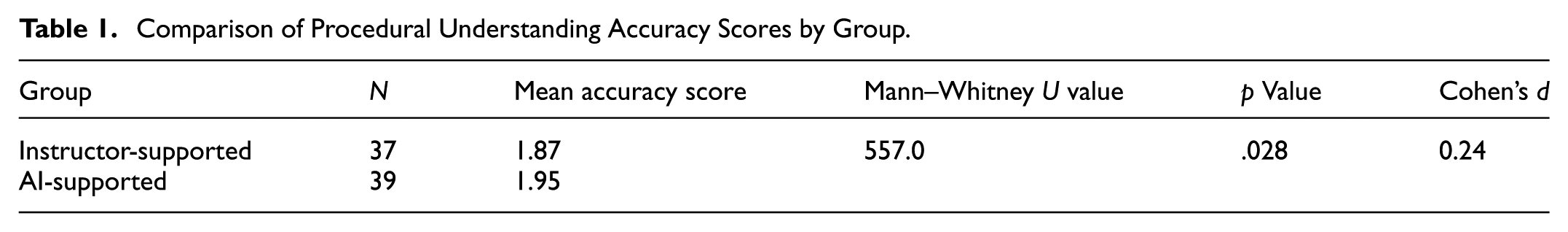

Group Comparison of Accuracy Scores

As shown in Table 1, the procedural understanding accuracy score of the AI-supported group (M = 1.95) was significantly higher than that of the instructor-supported group (M = 1.87) (Mann–Whitney U = 557.0, p = .028, Cohen’s d = 0.24). This is a small effect, indicating a modest advantage for the AI-supported group in procedural accuracy. These findings provide empirical support for H1, with higher procedural accuracy observed in the AI-supported condition.

Comparison of Procedural Understanding Accuracy Scores by Group.

Analysis of Examples Related to Procedural Understanding

Instructor-Supported Group

In the instructor-supported group, responses predominantly reflected rule-based procedural knowledge. Correct examples most frequently involved standard applications of formulas and operational rules, whereas partially correct and incorrect examples were observed in cases of procedural omissions, oversimplified definitions, or misapplication of commonly used rules. Overall, the distribution of example types indicates that procedural understanding in this group was largely aligned with instruction-based procedural performance. This pattern suggests that procedural accuracy in the instructor-supported group was shaped primarily by formal instruction, with limited spontaneous variation in example construction.

AI-Supported Group

In the AI-supported group, responses predominantly consisted of correct and standardized rule-based procedural examples. Correct examples most frequently reflected direct application of well-established formulas and operational rules. Partially correct examples were observed less frequently and were mainly associated with overgeneralization of procedural rules or incomplete procedural specifications. Incorrect examples were rare in this group. Overall, the distribution of example types indicates higher procedural accuracy in the AI-supported group compared to the instructor-supported group. This consistency reflects the stabilizing effect of AI-generated exemplars on procedural execution.

Findings on Relational Understanding

Group Comparison of Accuracy Scores

Table 2 shows the comparison of relational understanding accuracy scores between the instructor-supported and AI-supported groups.

Comparison of Relational Understanding Accuracy Scores by Group.

As shown in Table 2, the AI-supported group (M = 1.89) outperformed the instructor-supported group (M = 1.74) in relational understanding (Mann–Whitney U = 478.5, p = .003, Cohen’s d = 0.31), a statistically significant difference with a small effect size. Together with the procedural findings, these results support H1 by confirming that AI-supported participants demonstrated higher procedural and relational accuracy than those in the instructor-supported condition. However, as elaborated in the qualitative analyses and discussed in the following sections, this advantage was primarily reflected in structurally complete but often surface-level conceptual explanations rather than deeply elaborated relational reasoning.
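As a supplementary illustration, a Mann–Whitney U statistic can be converted into a rank-biserial correlation, a rank-based effect size that sits naturally alongside a nonparametric test. The sketch below applies the standard formula r = 1 − 2U / (n₁n₂) to the U values and group sizes reported in this study. This effect size is not reported by the authors; the function name is hypothetical, and the calculation assumes that each reported U is the smaller of the two possible U statistics, so the result is read as a magnitude only.

```python
def rank_biserial(u, n1, n2):
    """Rank-biserial correlation from a Mann-Whitney U and the two group sizes."""
    if n1 <= 0 or n2 <= 0:
        raise ValueError("group sizes must be positive")
    if not 0 <= u <= n1 * n2:
        raise ValueError("U must lie between 0 and n1 * n2")
    return 1 - 2 * u / (n1 * n2)


# Group sizes and U values as reported in the text.
N_INSTRUCTOR, N_AI = 37, 39
procedural_r = rank_biserial(557.0, N_INSTRUCTOR, N_AI)
relational_r = rank_biserial(478.5, N_INSTRUCTOR, N_AI)

print(round(procedural_r, 3))  # 0.228
print(round(relational_r, 3))  # 0.337
```

Under that assumption, both values fall in a range conventionally described as small to moderate, which is broadly in line with the Cohen's d values reported above.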

Analysis of Examples Related to Relational Understanding

Instructor-Supported Group

In the instructor-supported group, responses predominantly reflected relational reasoning. Correct examples frequently involved explanations addressing not only how mathematical rules operate but also why they hold, particularly in topics such as area, fraction operations, and derivatives. Partially correct responses were observed in cases where conceptual generalizations were incomplete, while incorrect responses mainly involved oversimplified or imprecise conceptual definitions. Overall, the distribution of example types indicates a strong tendency toward relational understanding, with errors primarily associated with limitations in conceptual generalization rather than procedural execution. These patterns are consistent with relational reasoning that prioritizes conceptual explanation over procedural fluency.

AI-Supported Group

In the AI-supported group, relational understanding was predominantly reflected through correct explanations. Correct examples frequently involved conceptually appropriate justifications accompanying procedural descriptions. Partially correct responses were observed in cases where explanations lacked complete justification or involved generalized statements, while incorrect responses were rare and mainly associated with overgeneralization of concepts. Overall, the distribution of example types indicates that relational understanding in this group was largely accurate, with remaining inaccuracies primarily related to incomplete conceptual elaboration. Relational accuracy was therefore achieved more through structurally complete explanations than through independently constructed conceptual reasoning.

Originality Scores

As shown in Table 3, the mean originality score of the instructor-supported group was 0.78, whereas that of the AI-supported group was 0.42. The Pearson chi-square test indicated that this difference was statistically significant (χ² = 12.56, p < .001). In the instructor-supported group, most participants produced original examples in their own words, distinct from those provided by the instructor. In contrast, a considerable proportion of participants in the AI-supported group (n = 29) reported directly using AI-generated examples without modification. Only 10 participants in the AI-supported group explicitly stated that they had produced their own examples (six were correct, three partially correct, and one incorrect). These distributions indicate that, although both groups were able to produce mathematically appropriate examples, the groups differed markedly in the extent to which examples reflected original production. In particular, originality scores in the AI-supported group were lower despite high levels of procedural and conceptual accuracy. Consistent with H2, participants in the instructor-supported group demonstrated significantly higher levels of originality, supporting the hypothesis that dialogic feedback and human mediation foster independent and creative reasoning.

Comparison of Originality Scores by Group.

Discussion and Conclusion

This study examined how preservice mathematics teachers develop relational and procedural understanding within instructor-supported and AI-supported instructional environments. The results revealed a striking paradox: AI-supported participants demonstrated higher accuracy in producing relational and procedural examples, yet their responses frequently involved verbatim reproduction of AI outputs. By contrast, instructor-supported participants displayed greater originality and creative initiative, though their examples contained more frequent misconceptions. This paradox encapsulates a central challenge for teacher education in the digital era: how to integrate AI’s efficiency and precision without diminishing opportunities for independent reasoning, dialogic interaction, and intellectual autonomy. Although multiple overlapping factors may have contributed to these outcomes, the study’s aim was not to isolate AI as a singular causal agent but to examine how different modes of guidance (human and AI-mediated) shape engagement patterns within otherwise parallel instructional structures. Therefore, the observed outcomes reflect differences in the nature of guidance rather than its mere presence or absence.

The accuracy–originality divide observed in this study carries significant implications for both pedagogy and policy. Although the statistical differences observed were modest in magnitude, the inclusion of effect sizes (Cohen’s d = 0.24–0.31) and confidence intervals confirms that these effects, while small, were consistent across measures, underscoring the practical rather than purely statistical significance of the findings. These findings resonate with recent studies demonstrating that preservice teachers often rely heavily on AI-generated examples, resulting in reduced cognitive independence and originality (Canonigo, 2024; Flavin et al., 2025; Opesemowo & Adewuyi, 2024). In the AI-supported setting, nearly three-quarters of participants relied on direct reproduction of AI-generated examples, treating outputs as authoritative rather than as material for critique or adaptation. This pattern suggests that in self-directed instructional contexts, AI tools tended to be perceived as providers of correctness rather than as partners in reasoning, a perception shaped by the absence of real-time dialogic feedback rather than by any inherent property of the technology itself. This pattern supports a sociocultural interpretation of learning, suggesting that AI and human instructors function as distinct mediational structures that differently afford opportunities for reflective reasoning. Conversely, instructor-supported environments fostered dialogic exchange, peer collaboration, and risk-taking, all of which stimulated originality and critical thought, albeit at the cost of conceptual errors. Similarly, Opesemowo and Adewuyi’s (2024) systematic review concluded that AI is most effective when complemented by teacher mediation, which provides depth and originality. Bernardi et al. (2025) further demonstrated that integrating AI within teacher-guided design enriched conceptual complexity and creativity. These findings stand in contrast to the reproduction patterns identified in the present study, where AI use was entirely self-directed. Importantly, originality in this study should be interpreted as a multidimensional construct rather than a strictly binary outcome. While originality was operationalized through a dichotomous coding scheme, participants’ responses varied along a continuum ranging from surface-level linguistic modification of AI-generated content to deeper forms of conceptual innovation involving reinterpretation, extension, or restructuring of underlying mathematical ideas. Accordingly, some responses classified as “original” reflected primarily linguistic rephrasing, whereas others demonstrated more substantive conceptual transformation.

These findings can also be interpreted through the lens of constructivist and self-regulated learning theories. From a constructivist perspective, knowledge is actively constructed through interaction, reflection, and negotiation of meaning rather than passively received (Piaget & Inhelder, 2008; Vygotsky, 1978). This interpretation aligns with contemporary theories of co-agency and hybrid learning ecologies, which emphasize shared cognitive responsibility between human and artificial agents (Luckin, 2023; Nørgård, 2021). The instructor-supported environment embodied this principle by promoting dialogic engagement and peer collaboration, enabling participants to co-construct understanding through feedback and exploration. Conversely, the AI-supported condition aligns more closely with self-regulated learning models, emphasizing autonomous exploration and self-evaluation (Pintrich, 2000; Zimmerman, 2002). However, the observed dependence on AI-generated content suggests that autonomy in digital learning must be scaffolded to ensure critical reflection and genuine knowledge construction. Therefore, the results highlight the importance of guided constructivist mediation when integrating AI into teacher education, ensuring that technology enhances rather than replaces human-centered cognitive engagement.

Taken together, these results extend the literature by empirically demonstrating that the pedagogical value of AI is contingent not on its technical capabilities alone, but on the instructional conditions and level of mediation in which it is implemented. The observed differences reflect the nature of interaction and feedback rather than any inherent limitation of AI itself. AI-supported environments clearly enhance procedural fluency and accuracy, while instructor-supported environments cultivate creativity and dialogic reasoning. Rather than viewing these modes as mutually exclusive, this study underscores their complementarity. AI and instructor guidance can be strategically combined in blended instructional models: AI for efficiency and error-free exemplification, and human instruction for originality, dialogic engagement, and critical evaluation. Such blended approaches align with broader calls for innovation in teacher education, where the goal is not simply to accelerate access to knowledge but to cultivate teachers capable of questioning, adapting, and creating knowledge in authentic contexts.

From a higher education policy perspective within similar institutional contexts, these findings highlight a governance dilemma related to technology integration and teacher preparation. On one hand, AI tools can democratize access to accurate information and support standardization across educational systems; on the other hand, they risk fostering dependency, undermining creativity, and reducing students’ incentives to think independently. Effective policy frameworks must therefore go beyond merely integrating AI into curricula. Institutions must embed explicit protocols for the critical evaluation of AI outputs, require preservice teachers to adapt or challenge AI-generated examples, and provide structured opportunities for dialogic engagement that counterbalance the efficiency of machine-produced knowledge. Institutional frameworks should also be aligned with international ethical standards, such as UNESCO’s (2021) Recommendation on the Ethics of Artificial Intelligence and the OECD’s (2023) Opportunities, Guidelines and Guardrails for Effective and Equitable Use of AI in Education, ensuring that AI adoption remains pedagogically grounded and ethically sound. In doing so, teacher education can prepare future educators who are both technologically fluent and intellectually autonomous.

This study may contribute to broader discussions on educational equity and quality by illustrating issues that could arise across comparable teacher-education contexts. The observed patterns highlight structural questions about the nature of teaching and learning in technology-mediated environments but are not intended as global generalizations. The duality of accuracy and originality may nonetheless manifest in other educational systems, raising questions about how teacher preparation can balance technological innovation with human-centered pedagogical values. For policy makers and curriculum designers, the results suggest that investment in AI should be paralleled by investment in professional development that equips educators to mediate, contextualize, and humanize AI use in classrooms.

Several limitations should be acknowledged, beginning with the measurement of originality. Originality was operationally defined as the degree to which participants rephrased, adapted, or extended AI-generated examples in their own words, and responses were coded as verbatim when the wording matched AI outputs without meaningful alteration. Although this operationalization enabled clear and reliable coding, it necessarily treated originality as a binary construct. Originality may be better conceptualized as a continuum ranging from surface-level linguistic modification to deeper forms of conceptual innovation. The present measurement approach was not designed to fully capture this gradation, and future research may benefit from analytic frameworks that differentiate linguistic originality from substantive conceptual transformation. To enhance validity, two independent coders cross-checked a random subset (20%) of the examples against self-reports to verify consistency and minimize subjectivity. Despite these precautions, the use of self-reports may still have been affected by social desirability bias. Moreover, the absence of random assignment limits causal inference. Nonetheless, the comparability of groups on demographic and academic indicators, combined with the inclusion of two institutions, supports both internal and external validity. Replication with larger, more diverse samples and longitudinal designs would further strengthen the evidence base.
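The cross-checking step described above can be illustrated with a short sketch that draws a random 20% subset of responses and quantifies agreement between two coders. Cohen’s kappa is used here as one common chance-corrected agreement statistic; this is an assumption for illustration, since the text does not state which agreement measure, if any, was computed. The function names, response identifiers, and codes are all hypothetical.

```python
import random
from collections import Counter


def sample_subset(item_ids, fraction=0.2, seed=0):
    """Draw a reproducible random subset of responses for double coding."""
    rng = random.Random(seed)
    items = list(item_ids)
    k = max(1, round(len(items) * fraction))
    return sorted(rng.sample(items, k))


def cohens_kappa(codes_a, codes_b):
    """Chance-corrected agreement between two coders on categorical codes."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both coders must code the same non-empty set of items.")
    n = len(codes_a)
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / n ** 2
    if expected == 1.0:  # both coders used a single identical category throughout
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical response IDs and binary codes, invented for illustration only.
subset = sample_subset(range(1, 101))
coder_1 = ["verbatim", "verbatim", "original", "original"]
coder_2 = ["verbatim", "original", "original", "original"]
print(len(subset), "responses sampled; kappa =", cohens_kappa(coder_1, coder_2))
```

A statistic of this kind would complement the binary coding scheme by showing how far the two coders’ judgments converge beyond what chance alone would produce.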

In conclusion, this study demonstrates that AI-supported and instructor-supported environments offer distinct yet complementary contributions to preservice teachers’ conceptual development. AI fosters relational and procedural accuracy through rapid exemplification and reinforcement, while instructor-supported environments cultivate originality, dialogic engagement, and creative reasoning—even when accompanied by misconceptions. Such misconceptions, rather than being dismissed as errors, may be reinterpreted as evidence of authentic cognitive engagement. The broader implication is clear: AI should be conceptualized not as a substitute for human instruction but as an augmentation tool whose greatest value emerges when paired with teacher mediation.

Beyond mathematics teacher education, the findings point to a broader issue in contemporary higher-education contexts—balancing efficiency and correctness with creativity and autonomy in educational settings influenced by emerging technologies. By situating classroom-level outcomes within debates on higher education governance and teacher preparation policy, this study provides evidence-informed guidance for designing blended learning environments that preserve the uniquely human capacity for critical thought and creative inquiry. Aligning pedagogy with policy in this way ensures that technological innovation contributes to the overarching goals of educational quality, equity, and accountability. Future iterations of this design will incorporate hybrid conditions that equate interaction and feedback levels to further isolate the effects of AI mediation. Future research should further examine how different configurations of AI–human mediation can be systematically aligned to support both conceptual depth and independent reasoning across diverse instructional contexts.

Implications for Policy and Practice

Blended instructional models should be formally integrated into teacher education programs, ensuring that AI tools are used to enhance accuracy and efficiency while instructor guidance fosters originality, dialogic reasoning, and critical engagement.

Institutional policies must provide clear protocols for responsible AI use in coursework, emphasizing adaptation and critique of AI outputs rather than verbatim reproduction to safeguard academic integrity.

Professional development for teacher educators should include training in the pedagogical integration of AI, equipping instructors with the skills to scaffold student use of AI tools and to balance technological efficiency with intellectual autonomy.

Curriculum frameworks in higher education should explicitly highlight the dual role of technology and human guidance, reinforcing the importance of creativity, originality, and ethical responsibility alongside procedural fluency.

Institutional and national frameworks should align with international ethical guidelines, such as UNESCO’s (2021) Recommendation on the Ethics of Artificial Intelligence and the OECD’s (2023) Opportunities, Guidelines and Guardrails for Effective and Equitable Use of AI in Education, ensuring that AI integration remains pedagogically grounded, transparent, and human-centered.

Recommendations for Future Research

Future research should extend this study through longitudinal designs that track the sustainability of AI-supported and instructor-supported learning outcomes over time. Such approaches would clarify whether the observed patterns of accuracy and originality persist as preservice teachers transition into professional practice. Future studies should also incorporate mixed-methods or process-tracing designs to capture how feedback, interaction, and reflection evolve across AI-supported and instructor-supported conditions. Comparative research across multiple institutions and cultural contexts is also needed to examine how local policies and institutional frameworks mediate the balance between accuracy and originality in teacher education. Additionally, studies should investigate the role of inquiry-based AI prompting techniques, which may foster more critical engagement and originality by encouraging learners to interrogate and adapt AI-generated outputs rather than replicate them. Finally, expanding beyond mathematics to other subject areas could enhance the generalizability of findings and provide a broader evidence base for designing blended instructional models that integrate AI responsibly into higher education. Such interdisciplinary extensions would provide a richer foundation for evidence-informed policy design and teacher education reform in the age of generative AI.

Appendix: Illustrative Examples of Procedural and Relational Understanding

The Appendix presents representative examples from each category; categories with no or very limited instances are not displayed.

Ethical Considerations

In accordance with institutional and national research regulations, formal approval from an institutional ethics committee was not required for this study, as it involved no medical procedures, no vulnerable populations, and no intervention beyond standard educational practices. The research was conducted in line with the ethical principles of İzmir Demokrasi University, the American Psychological Association Ethical Principles of Psychologists and Code of Conduct (Section 8.05), and internationally accepted standards for research involving human participants.

Consent to Participate

All participants were informed about the purpose, procedures, and voluntary nature of the study prior to participation. Written informed consent was obtained from all participants. Participants were assured of anonymity, confidentiality, and their right to withdraw from the study at any time without academic or personal consequences.

Author Contributions

The author solely contributed to the conception, design, data collection, analysis, and writing of this manuscript.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the author upon reasonable request.