Abstract

Digital government platforms play a crucial role in providing citizens with access to essential information and services, influencing their satisfaction and trust in governance. This research examines issue reporting and feedback mechanisms within Saudi Arabia’s digital government framework, aiming to identify and address challenges that hinder seamless user engagement and effective issue resolution. The study developed “Empower,” a unified platform that consolidates various reporting channels into a single, streamlined interface, enhancing user interaction and accessibility. By integrating user-centric design principles, “Empower” simplifies the reporting process, facilitates efficient issue management, and supports adherence to Service Level Agreements (SLAs), thereby improving government responsiveness and operational transparency.

Plain Language Summary

Digital government services often provide many ways for citizens to report problems, such as emails, websites, or social media, but these channels are usually fragmented and difficult to manage across government agencies. This study presents Empower, a unified digital platform designed to improve how issues related to government digital services are reported, tracked, and resolved. The platform brings citizen reporting, agency handling, and supervisory oversight together in one system, making responsibilities and follow-up clearer. Using a design science approach, the study developed and evaluated a working prototype with government stakeholders. The results show that integrating reporting with clear workflows, service-level agreements, and oversight dashboards can improve transparency, accountability, and coordination in digital government quality assurance.

Introduction

In the contemporary digital era, digital government serves as a key enabler of citizens’ access to critical information and public services (Garson & Khosrow-Pour, 2008; Heeks, 2006; Viet & Huntgeburth, 2014). The quality and effectiveness of digital government platforms are critical, with quality assurance serving as a central factor that enhances citizen satisfaction, strengthens trust in governance, and supports the effective delivery of services (Pressman & Maxim, 2015; Sommerville, 2016).

The current digital government framework encounters a complex set of challenges, most notably within its issue reporting and feedback mechanisms. These mechanisms are highly fragmented, dispersed across multiple government agencies, and consequently create a disjointed and inefficient environment for reporting and addressing issues (Mahlangu & Ruhode, 2021). This complexity hinders the swift resolution of reported concerns, significantly impeding the government’s efficacy in addressing societal issues.

Furthermore, the lack of user-centric design principles within these reporting channels serves as a major barrier to effective citizen engagement and participation (Chang et al., 2020; Alkhoori et al., 2023; Shalan, 2024). The absence of intuitive, user-friendly interfaces compounds this issue, making it difficult for citizens to report issues. Consequently, the combination of systemic fragmentation and the lack of user-centric approaches constitutes a substantial barrier to fostering meaningful citizen engagement and advancing effective governance strategies.

The primary objective of this research is to strengthen the existing issue reporting and feedback mechanisms within Saudi Arabia’s digital governance framework. This involves developing an integrated platform that consolidates the diverse and dispersed reporting channels found across multiple government agencies. This consolidation aims to simplify the user experience significantly, allowing citizens to report issues seamlessly without the complexities involved in navigating through various platforms, thereby enhancing service quality and user satisfaction.

The significance of this research lies in its potential to reshape the digital government landscape into a more inclusive, efficient, and responsive system that aligns with user needs and expectations. By addressing issues of poor performance and delayed response times, it enhances service quality, promotes user satisfaction, and strengthens the overall effectiveness of digital government service delivery.

This paper is organized as follows: Section 2 covers the related work; Section 3 presents the conceptual foundation of the research; Section 4 describes the research design. The three main phases of the solution are then presented in Sections 5 to 7, before the results are discussed in Section 8 and the paper is concluded in Section 9.

Related Work

Background

Digital government, or e-government, denotes the strategic integration of information and communication technologies (ICT) into governmental functions. This transformation redefines the interaction between state institutions and society, fostering more transparent, efficient, and mutually beneficial relationships (Viet & Huntgeburth, 2014).

In the past, citizens typically engaged with government agencies through face-to-face meetings or paperwork, processes that were often burdensome due to bureaucracy and time-consuming procedures. Digital government, by contrast, seeks to simplify these interactions by utilizing digital platforms, thereby improving the overall efficiency and effectiveness of service delivery. Citizens today can access a broad spectrum of government services online, ranging from permit and license applications to essential information, conveniently from their homes (Heeks, 2006).

The operational dynamics of digital government are centered on a diverse set of digital tools, including websites, mobile applications, social media platforms, and user-friendly e-portals. These digital interfaces are intricately connected to robust databases, enabling citizens to access government services, retrieve information, and engage in meaningful communication with government agencies. These digital platforms prioritize user-friendliness, accessibility, and rigorous data security, contributing to a seamless and effective user experience (Garson & Khosrow-Pour, 2008; Viet & Huntgeburth, 2014).

In the domain of digital government, Quality Assurance (QA), User Acceptance Testing (UAT), User Experience (UX), and User Interface (UI) are critical factors that shape the success of digital platforms. Together, they ensure reliability, efficiency, and user-centric design, strengthening the connection between government agencies and the citizens they serve.

Quality Assurance (QA) is a central practice for ensuring the reliability, efficiency, and overall performance of websites and e-services. It is a systematic approach that aligns the outcomes with specific quality criteria, encompassing functionality, usability, reliability, and security. This approach involves continuous monitoring, evaluation, and enhancement of processes critical to the development and delivery of digital solutions in the government sector (Pressman & Maxim, 2015; Sommerville, 2016). Within the domain of software development, QA sets the foundation for precise quality standards and expectations regarding the intended product or service. These standards cover a broad spectrum of requirements, spanning from functionality to user experience and security (Sommerville, 2016).

QA entails systematic verification and validation of the software to uncover defects, bugs, or deviations from specified requirements (Myers et al., 2012). This methodical verification process occurs at various levels, from testing individual components to comprehensive system-wide testing, ensuring the integrity of the entire system. Adopting such a structured approach is essential for detecting and resolving issues early in the development lifecycle, thereby minimizing rework, reducing costs, and saving valuable time and resources (Myers et al., 2012; Pressman & Maxim, 2015).

Furthermore, User Experience (UX) and usability principles are especially relevant to digital government issue reporting, where citizen engagement is voluntary and interaction breakdowns can discourage reporting and follow-up. Consistent usability and transparent interaction flows can reduce user effort and improve participation in feedback and reporting mechanisms. This relevance is particularly important when reporting channels are fragmented across agencies, increasing cognitive load and reducing trust in responsiveness (Garrett, 2010; Rubin & Chisnell, 2011).

An intuitive interface and seamless navigation remain important enablers of accessibility and inclusivity in digital government services. In the context of issue reporting, these qualities help ensure that users can submit reports and track progress without unnecessary complexity (Hassenzahl & Tractinsky, 2006; Nielsen & Loranger, 2006).

Current Methods for Issue Reporting in Digital Government Services

In the evolving landscape of digital government services, effective mechanisms for issue reporting and feedback are paramount in ensuring user-centric service improvement. This section explores key channels through which users can report issues and interact with government agencies.

Built-in Reporting and Feedback Features: these are commonly integrated into government websites to enable users to report issues during service interaction. While these mechanisms provide a convenient entry point for user feedback, they are typically implemented at the level of individual websites and operate independently across agencies. As a result, such features often lack standardized workflows for validation, follow-up, or escalation beyond the hosting platform. Although widely adopted in Saudi governmental websites, their isolated implementation limits their effectiveness as instruments for cross-agency quality assurance and coordinated issue resolution (Tafaul Platform, 2025).

Email Support: it is commonly used by government agencies as a direct channel for users to report software-related issues and request assistance. While this channel allows individualized communication, it typically relies on unstructured exchanges that vary across agencies and lack standardized processes for tracking, prioritization, or escalation. As a result, email-based reporting provides limited visibility into issue resolution progress and does not readily support cross-agency coordination or accountability. Although widely adopted within Saudi government services, its effectiveness as a quality assurance mechanism remains constrained by these structural limitations (Reddick, 2005).

Phone Support: it provides a direct communication channel through which users can report issues or seek assistance from government agencies. While this mode of interaction enables immediate, verbal clarification, it is inherently transient and difficult to document systematically. As a result, phone-based reporting often lacks formal records, standardized categorization, and transparent tracking of issue resolution. These characteristics limit its suitability for structured quality assurance and cross-agency accountability, particularly when compared to digital mechanisms that support traceability and auditability (Reddick, 2005).

Live Chat Support: it enables real-time, text-based interaction between users and government support representatives during service use. While this channel can facilitate timely clarification and immediate assistance, interactions are often handled in isolation and may not be systematically integrated into formal quality assurance or issue tracking workflows. Without standardized logging, categorization, and escalation mechanisms, live chat exchanges risk remaining ephemeral and difficult to audit. Consequently, despite improving responsiveness at the point of interaction, live chat support provides limited support for sustained accountability and cross-agency issue management (Elmorshidy, 2013).

Social Media Channels: these channels are increasingly used by government agencies as informal platforms for receiving user complaints and service-related feedback. While these channels enable visible and timely interaction, reports submitted through social media are typically unstructured and dispersed across multiple platforms. This fragmentation complicates systematic tracking, prioritization, and resolution of issues, and limits the ability to integrate such reports into formal quality assurance or accountability workflows. As a result, despite improving transparency and public responsiveness, social media-based reporting remains weakly suited for coordinated, auditable issue management across government services (Nam, 2012; Schrock, 2018).

Store Feedback and Ratings: these provide aggregated, user-generated assessments of digital services within application marketplaces. While these ratings can signal general satisfaction levels and highlight recurring usability concerns, they are typically coarse-grained and detached from formal issue resolution processes. Such feedback lacks contextual detail, standardized categorization, and mechanisms for agency response or escalation. Consequently, store-based ratings offer limited value for systematic quality assurance or accountable issue management within digital government services (Pagano & Maalej, 2013).

Surveys and Polls: these are commonly used to collect aggregated user feedback on digital government services over defined time periods. While these instruments provide valuable insights into user satisfaction and perceived service quality, they are typically retrospective and detached from real-time issue handling. As such, survey-based feedback lacks the immediacy, traceability, and procedural integration required for effective quality assurance and coordinated issue resolution. Consequently, although informative for strategic assessment, surveys and polls offer limited support for operational accountability in digital government contexts (Benlahcene et al., 2024; Susha & Grönlund, 2014).

Feedback Pop-ups: these provide in-context prompts that allow users to submit comments or report issues during service interaction. While these mechanisms can lower the barrier to feedback submission, the resulting input is often brief, unstructured, and weakly integrated into formal issue management workflows. Without standardized validation, prioritization, and tracking processes, feedback collected through pop-ups risks remaining isolated and difficult to act upon systematically. As a result, feedback pop-ups contribute to issue awareness but offer limited support for accountable quality assurance in digital government services (Bangor et al., 2008).

Page Content Rating: This mechanism allows users to provide lightweight assessments of content relevance or usefulness within digital government services. While such ratings can inform content refinement and identify general satisfaction trends, they are not designed to capture actionable service issues or support structured follow-up. The absence of contextual detail, validation workflows, and accountability mechanisms limits their usefulness for quality assurance and issue resolution. Consequently, page content ratings contribute to content optimization but offer minimal support for coordinated governance-oriented issue management (Pagano & Maalej, 2013).

While these channels improve accessibility and offer multiple entry points for citizen feedback, they are largely isolated from one another, limiting their effectiveness for coordinated quality assurance, cross-agency integration, and accountable issue resolution. A primary concern underscored in the literature revolves around the fragmented landscape of platforms dedicated to issue reporting and feedback mechanisms (Mahlangu & Ruhode, 2021). The growing number of diverse platforms across government agencies often leads to confusion and inefficiencies, discouraging active user reporting. Such fragmentation complicates the user experience and impairs the government’s capacity to address concerns promptly and effectively. Additionally, the current issue reporting channels lack a genuinely user-centric design (Chang et al., 2020; Shalan, 2024). Although literature emphasizes user-centric service delivery, existing reporting channels often fail to reflect this principle, leaving users to navigate fragmented systems and encounter suboptimal experiences. The absence of a seamless and intuitive design acts as a significant barrier to efficient issue reporting.

Literature Review

Although numerous systems exist to facilitate citizen reporting and feedback, they often remain fragmented, inconsistent, and insufficiently integrated into broader governance structures. For example, U.S. 311 systems have long provided centralized channels for reporting local issues, yet studies highlight persistent weaknesses in equitable participation, follow-up, and accountability across agencies (Schrock, 2018). Similarly, Belgium’s FixMyStreet platform improves accessibility through location-based reporting, but evidence reveals disparities in usage across socio-demographic groups and limited mechanisms for ensuring agency responsiveness (Pak et al., 2017). Similar concerns have also been noted in the use of civic apps in European cities, where adoption is high but long-term engagement and responsiveness from agencies remain uneven (Hilgers & Schmidthuber, 2018). These cases illustrate that while reporting tools can increase accessibility, they frequently fall short of creating unified, accountable environments for issue resolution.

Research in Asian contexts further underscores these challenges. In China, Ye et al. (2023) demonstrate that citizen feedback portals surface valuable insights into service dissatisfaction, yet they rarely embed mechanisms for follow-up or cross-agency coordination. A recent review of e-participation systems also emphasizes that global platforms typically measure adoption and satisfaction, but neglect deeper aspects such as operational reliability, SLA compliance, and supervisory oversight (Benlahcene et al., 2024). Broader reviews of e-government architectures confirm that fragmentation and interoperability remain systemic barriers, impeding governments’ ability to manage issues across multiple agencies efficiently (Baheer et al., 2020). Similar conclusions emerge in South Korea, where Nam (2012) observes that even advanced e-participation systems tend to prioritize technological solutions over institutional accountability and user empowerment.

Regional experiences illustrate similar patterns. The UAE’s unified digital platform policy (UAE Government, 2021) and Bahrain’s Tawasul initiative (Bahrain Government, 2020) represent important steps toward centralizing citizen feedback. However, these systems remain largely focused on providing service access rather than embedding quality assurance, SLA monitoring, or performance dashboards to ensure accountability. Similar findings are reported in Qatar, where early attempts to consolidate complaint channels were hindered by interoperability challenges and limited transparency in resolution processes (Shalan, 2024). Within Saudi Arabia, studies of digital governance highlight the importance of inclusivity, usability, and citizen trust in service adoption (Al-Mamary & Alshallaqi, 2023). Yet, despite initiatives such as the Absher system that streamline service access, practical implementations of comprehensive issue reporting platforms, those unifying fragmented channels, enabling agency validation workflows, and embedding structured feedback loops, are still limited.

At a broader level, systematic reviews of e-government performance (A. J. Meijer & Rodríguez-Bolívar, 2013; Susha & Grönlund, 2014) reveal that while digital tools enable governments to collect feedback more efficiently, they often fail to translate this input into accountability structures or quality assurance frameworks. This disconnect reinforces the observation that fragmentation, weak user-centricity, and insufficient oversight are not isolated to a single region but represent systemic limitations in digital governance worldwide.

Existing systems demonstrate the value of centralized access and citizen participation; however, prior studies consistently report fragmentation, limited user-centricity, and weak accountability associated with these systems. To date, the literature provides limited evidence of platforms that fully integrate the essential elements of intuitive citizen reporting, cross-agency unification, SLA-driven accountability, and supervisory oversight. Addressing this multidimensional gap constitutes the central contribution of the Empower platform, which consolidates dispersed reporting mechanisms into a unified system designed to strengthen efficiency, inclusivity, and trust in digital government.

Conceptual Foundation

In response to the limitations identified in current digital government issue reporting methods (Sections 2.2 and 2.3), the primary objective of this research is to strengthen existing issue reporting and feedback mechanisms embedded within digital governance frameworks through the development of a unified platform that consolidates disparate reporting channels and interfaces seamlessly with multiple government agencies.

This research adopts the Design Science Research (DSR) methodology (Peffers et al., 2007; vom Brocke et al., 2020) as the guiding paradigm. DSR is particularly suited for addressing complex real-world problems by developing and evaluating innovative artifacts that respond directly to empirically observed gaps. In this study, the artifact is the Empower platform, a user-centric solution for reporting Quality Assurance (QA) issues within digital government services.

To strengthen rigor and analytical generalizability, the design of the Empower platform is informed by established theories from digital government, information systems, and public administration research. In particular, User-Centered Design (UCD) theory emphasizes designing systems around users’ needs, capabilities, and contexts to achieve usability, learnability, and transparency of interaction (Garrett, 2010; Rubin & Chisnell, 2011). As demonstrated in prior digital government studies reviewed in Section 2.3, citizen engagement with reporting platforms is largely voluntary, and poorly designed interfaces, fragmented workflows, and unclear feedback mechanisms significantly reduce participation and trust (Chang et al., 2020; Nielsen & Loranger, 2006).

Prior research on e-government applications consistently shows that simplifying interaction flows, reducing cognitive load, and making system status visible are central factors influencing adoption, satisfaction, and sustained use (Chang et al., 2020; Husain, 2023). However, the literature also indicates that many existing reporting platforms fail to operationalize these principles consistently, requiring users to navigate fragmented channels and opaque processes (Hilgers & Schmidthuber, 2018; Mahlangu & Ruhode, 2021; Schrock, 2018).

In parallel, accountability theory in public administration conceptualizes accountability as the obligation of public institutions to explain, justify, and take responsibility for their actions to relevant stakeholders (Bovens, 2007). Subsequent work distinguishes accountability as a normative value from accountability as an operational mechanism, emphasizing the role of institutionalized processes, monitoring, and oversight in ensuring enforceability rather than symbolic compliance (Bovens, 2010).

Viewed through this accountability lens, the literature reviewed in Section 2.3 reveals that many digital government reporting platforms emphasize transparency and participation while providing limited support for enforceable follow-up, cross-agency coordination, or supervisory oversight (Benlahcene et al., 2024; Nam, 2012; Schrock, 2018; Ye et al., 2023). In response, accountability in digital government increasingly becomes embedded within information systems through traceable workflows, audit trails, performance indicators, and supervisory dashboards (A. Meijer & Bolívar, 2016). Platforms that merely collect citizen feedback without such mechanisms risk becoming symbolic rather than effective governance tools.

These theoretical perspectives directly inform the design of the Empower platform, where citizen-reported issues are operationalized as accountable cases governed by service-level agreements (SLAs), validation workflows, and escalation rules. The inclusion of supervisory dashboards for the Digital Government Authority reflects established findings that performance dashboards can function as accountability instruments by consolidating and visualizing cross-organizational data to support governance and oversight (Matheus et al., 2020).

Research Design

Research Approach

The methodology was organized into three main phases, reflecting both the DSR framework and the practical steps undertaken in the study.

Phase 1: Problem Understanding and Requirements Analysis

The first phase focused on understanding the challenges in existing QA reporting mechanisms across Saudi Arabia’s digital government platforms. A requirements analysis was conducted to highlight the fragmented nature of issue reporting systems, the lack of user-centric design, and limited responsiveness from government agencies.

To capture stakeholder needs, semi-structured interviews were carried out with representatives from the Digital Government Authority (DGA), quality assurance experts, business analysts, and UX/UI specialists. This process clarified both functional and non-functional requirements, ensuring that the envisioned platform aligned with citizen expectations as well as institutional constraints.

Phase 2: System Design and Development

Building on the elicited requirements, this phase involved the conceptualization, design, and development of the Empower platform. A system architecture was proposed, incorporating both a mobile application and a web portal to facilitate accessibility for citizens and government agencies.

The design prioritized user-centric principles, with emphasis on intuitive navigation, accessibility, and SLA-driven issue management. Iterative development was carried out using modern technologies such as React Native, ReactJS, and API-based back-end integration, ensuring modularity and scalability.

Phase 3: Demonstration and Evaluation

The third phase validated the effectiveness of the Empower platform through demonstration and evaluation activities. The platform was demonstrated to stakeholders in controlled settings, highlighting how issues could be reported, tracked, and resolved in real time. User feedback was collected from QA experts, business analysts, and DGA representatives to assess system usability, effectiveness, and alignment with predefined objectives. Performance measures included user satisfaction, efficiency in issue reporting, and compliance with Service Level Agreements (SLAs).

Stakeholders

The project entails active collaboration with a consortium of key stakeholders, each contributing invaluable insights and perspectives to enhance our understanding of the digital government landscape. These stakeholders include the Digital Government Authority (DGA), Quality Assurance (QA) professionals, Business Analysts (BAs) and UX/UI experts.

The roles played by these key stakeholders are outlined below:

DGA: As the supervisory agency, the DGA assumes a regulatory and oversight role in the digital government landscape. Their perspective is crucial in ensuring compliance with standards, regulations, and the overall governance of the digital government.

QA Professionals: Bringing a wealth of experience, QA professionals play an important role in assessing and validating the functionality and performance of digital government. Their role is to maintain the high standards of quality and reliability expected in the digital government domain.

BAs: BAs play an important role in understanding and defining the business processes and requirements underpinning digital government. Their perspective helps align digital solutions with organizational goals and user needs.

UX/UI Experts: UX/UI professionals focus on optimizing the user experience by refining the design and interface of digital government. Their contributions significantly influence the creation of user-friendly and accessible interfaces, enhancing the overall user experience.

An overview of the representatives from each stakeholder group who actively contributed to the interviews conducted in this project is presented in Table 1.

Table 1. An Overview of the Stakeholder Representatives.

Problem Understanding and Requirements Analysis

Semi-structured interviews with stakeholders provided deep insights into the challenges of quality assurance (QA) reporting in digital government platforms and the requirements for a unified solution. These interviews were designed to explore specific areas crucial for the platform’s development and functionality. The findings are presented below along three analytical dimensions (technical, organizational, and user-oriented) and are summarized in Table 2.

Table 2. Summary of Abstracted Requirements Derived From Problem Analysis.

Technical Dimension

Stakeholders emphasized the critical importance of reliable technical mechanisms to support issue reporting, documentation, classification, tracking, analytics, and security.

Issue Reporting: QA and BA professionals highlighted the need for an efficient reporting mechanism, suggesting a third-party platform to oversee issue validation and resolution. They emphasized user-friendly reporting forms, coupled with automated notifications via SMS and email to acknowledge submission and provide updates. UI/UX experts added perspectives focused on intuitive design: clear navigation, prominently placed reporting options, simplified forms with real-time previews, user guidance, and progress indicators. Transparent communication and clear categorization were also considered essential.

Issue Documentation: QA professionals underlined the need for comprehensive issue documentation, recommending detailed descriptions, reproduction steps, screenshots, and specific service links. Predefined issue categories, complemented by an “Other” option, were advised to balance structure with flexibility. From a UI/UX perspective, the emphasis was on ensuring that users could document issues easily and accurately through clearly visible reporting buttons, intuitive forms, immediate acknowledgment messages, and accessible language. Additional design considerations, such as consistent formatting, responsive layouts, search functionality, and inclusivity features, were highlighted to support diverse user needs and ensure reliable issue documentation.
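The documentation requirements above can be made concrete as a structured report record. The following is a minimal sketch only: the field names, the category labels, and the 20-character threshold for "Other" reports are illustrative assumptions and are not taken from the Empower implementation.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical predefined categories; "Other" keeps the form flexible.
CATEGORIES = {
    "Service unavailable",
    "Login failure",
    "Broken link",
    "Content error",
    "Other",
}


@dataclass
class IssueReport:
    service_url: str                 # link to the specific affected service
    category: str                    # one of CATEGORIES
    description: str                 # detailed description of the problem
    reproduction_steps: List[str] = field(default_factory=list)
    screenshots: List[str] = field(default_factory=list)  # file names or URLs


def validation_errors(report: IssueReport) -> List[str]:
    """Return human-readable problems with a report; an empty list means it is complete."""
    errors = []
    if not report.service_url.startswith(("http://", "https://")):
        errors.append("service_url must be a full link to the affected service")
    if report.category not in CATEGORIES:
        errors.append("category must be one of the predefined options or 'Other'")
    if not report.description.strip():
        errors.append("description is required")
    # Assumed rule: free-form "Other" reports need more detail to be actionable.
    if report.category == "Other" and len(report.description.strip()) < 20:
        errors.append("reports filed under 'Other' need a more detailed description")
    return errors
```

Returning a list of messages rather than raising on the first problem would let a reporting form show all missing details to the user at once, in line with the emphasis on user guidance.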

Issue Severity and Prioritization: QA professionals stressed that severity classification should not rely solely on user input, given the risk of misinterpretation. They recommended automated classification linked to predefined categories, with final validation by QA staff. UI/UX experts highlighted the importance of transparency for users, advocating for intuitive visual cues—such as color-coded urgency levels, symbols, status labels, and dashboards—to ensure users understand how their issues are being prioritized.
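One way to read the recommendation above is as a two-step process: the system proposes a severity from the selected category, and QA staff confirm or override it. The sketch below illustrates that reading under stated assumptions. The category names and their default severities are hypothetical, and the three severity levels follow those named in the SLA recommendations reported under the organizational dimension.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Severity(Enum):
    CRITICAL = "critical"
    MEDIUM = "medium"
    LOW = "low"


# Hypothetical mapping from predefined issue categories to a default severity.
# The category names are illustrative and are not taken from the study.
CATEGORY_DEFAULTS = {
    "service_unavailable": Severity.CRITICAL,
    "login_failure": Severity.CRITICAL,
    "broken_link": Severity.MEDIUM,
    "content_error": Severity.LOW,
}


@dataclass
class Classification:
    category: str
    proposed: Severity                    # set automatically from the category
    validated: Optional[Severity] = None  # set by QA staff after review

    @property
    def effective(self) -> Severity:
        """Severity used for prioritization: the QA decision if present, else the proposal."""
        return self.validated if self.validated is not None else self.proposed


def classify(category: str) -> Classification:
    """Propose a severity from the category; unknown categories default to MEDIUM (an assumption)."""
    proposed = CATEGORY_DEFAULTS.get(category, Severity.MEDIUM)
    return Classification(category=category, proposed=proposed)


def validate(c: Classification, qa_severity: Severity) -> Classification:
    """Record the QA reviewer's final severity, which overrides the automatic proposal."""
    c.validated = qa_severity
    return c
```

Keeping the automatic proposal and the QA decision as separate fields preserves a record of both, which may be useful for auditing how often the automatic classification is overridden.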

Notification and Tracking: QA professionals noted the importance of immediate confirmation messages and tracking dashboards. A dedicated dashboard for government agencies was suggested to enable statistical monitoring and quick identification of priority issues.

Data Analytics and Reporting: QA and BA professionals emphasized advanced analytics capabilities, including daily, weekly, and monthly metrics, SLA adherence monitoring, issue-type breakdowns, trend analysis, and escalation alerts. Interactive dashboards allowing drill-down into specific statistics were considered essential for agencies and the supervisory authority. The DGA required comprehensive reporting functions, including executive summaries, agency-specific performance reports, and metrics such as platform availability, satisfaction rates, resolution efficiency, and SLA compliance.
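Two of the metrics listed above, SLA adherence and issue-type breakdown, can be computed from resolved-issue records as sketched below. This is an illustrative calculation rather than the platform's reporting logic: the record fields and issue-type names are assumptions, and the resolution windows are those recommended by QA and BA professionals under the organizational dimension.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List

# Resolution windows in days per severity, as recommended by stakeholders.
SLA_DAYS = {"critical": 3, "medium": 5, "low": 10}


@dataclass
class ResolvedIssue:
    issue_type: str         # hypothetical issue-type label
    severity: str           # "critical", "medium", or "low"
    days_to_resolve: float  # elapsed days from report to closure


def sla_compliance_rate(issues: List[ResolvedIssue]) -> float:
    """Share of resolved issues closed within their SLA window (0.0 if there are none)."""
    if not issues:
        return 0.0
    met = sum(1 for i in issues if i.days_to_resolve <= SLA_DAYS[i.severity])
    return met / len(issues)


def issue_type_breakdown(issues: List[ResolvedIssue]) -> Counter:
    """Count of resolved issues per issue type."""
    return Counter(i.issue_type for i in issues)
```

The same functions could be applied to daily, weekly, or monthly subsets of records to produce the periodic metrics that stakeholders requested.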

Data Security and Privacy: DGA requirements focused on rigorous security and privacy controls. These included NAFATH-based consent verification, strict hosting of infrastructure within national borders, secure repositories for sensitive data, and full compliance with national data protection standards.

Organizational Dimension

Beyond technical mechanisms, stakeholders emphasized organizational frameworks to ensure accountability, compliance, and inter-agency collaboration.

Service Level Agreements (SLAs): QA and BA professionals recommended SLA frameworks that define specific timelines for issue resolution: critical-severity issues within 3 days, medium within 5 days, and low within 10 days. Stages such as “New,” “Under Study,” “In Progress,” and “Closed” were suggested for consistent tracking. The DGA further specified escalation tiers, ranging from supervisors to agency ministers, with defined timelines. KPIs such as first-contact resolution, SLA response rates, satisfaction scores, and resolution efficiency were considered essential for monitoring.
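As a worked example, the recommended resolution targets translate into a due date and a lateness check as sketched below. The sketch assumes calendar days, since the interviews did not state whether working days were intended, and the function names are hypothetical.

```python
from datetime import date, timedelta

# Resolution targets in days, as recommended by the QA and BA professionals.
SLA_DAYS = {"critical": 3, "medium": 5, "low": 10}


def due_date(reported_on: date, severity: str) -> date:
    """Date by which an issue of the given severity should be resolved.

    Assumes calendar days; a working-day calendar would need extra logic.
    """
    return reported_on + timedelta(days=SLA_DAYS[severity])


def is_late(reported_on: date, severity: str, today: date) -> bool:
    """True once the resolution target has passed."""
    return today > due_date(reported_on, severity)
```

For instance, a critical issue reported on 1 January would be due on 4 January, and an issue still open on 5 January would count as late and become a candidate for escalation.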

Regulatory Compliance: The DGA representative emphasized strict adherence to regulatory requirements, including audit trails, mandatory reporting, licensing, service availability, downtime protocols, security compliance, and adherence to NIC cloud regulations. These elements ensure alignment with national standards and reliable service delivery.

Collaboration with Government Entities: The DGA stressed the importance of structured integration processes: formal initiation through ministerial communication, assignment of contacts, training, and continuous support (e.g., dedicated email channels). Mechanisms such as newsletters, monthly reports, and competitive benchmarking among agencies were also proposed to promote accountability and cross-agency cooperation.

User-Oriented Dimension

A recurring theme across the interviews was the need to design the platform around the end-user experience, ensuring usability, transparency, and meaningful engagement.

Communication with Users: QA and BA professionals emphasized automated and timely communication, including acknowledgment messages with reference IDs, status updates, and resolution notifications. UX/UI experts expanded this vision, suggesting multi-channel communication (email, SMS, chat, in-app, and social media), clear resolution messages, and feedback invitations. Communication design was expected to be simple, concise, and user-friendly.

User Feedback: QA and BA experts highlighted post-resolution surveys to measure satisfaction with resolution, communication, and platform experience. UX/UI experts recommended multiple feedback channels, positive language, rewards, and follow-up mechanisms. Tools such as quick surveys, recommendation scores, app reviews, and behavioral monitoring were considered effective for capturing user sentiment.

User Engagement: BA professionals stressed showcasing the impact of user reports to motivate continued participation. QA experts recommended collaboration with agencies to endorse the platform. UX/UI experts proposed gamification (badges, rewards), user education (tooltips, guides), and community forums to encourage active participation. The DGA emphasized systematic collection and analysis of feedback, with closed-loop mechanisms to address dissatisfaction.

The requirements in Table 2 can be viewed as meta-requirements that transcend specific technologies or implementations. They define the problem class addressed in this study and serve as the basis for guiding subsequent design and development decisions.

System Design and Development

Design Principles

The system design of the Empower platform is guided by a set of design principles derived from the abstracted requirements summarized in Table 2. These principles translate stakeholder-derived technical, organizational, and user-centered requirements into prescriptive guidance that informs architectural decisions, workflow design, and interface development.

DP1: Unified Reporting Principle: The system should provide a single, unified reporting mechanism that enables users to submit quality assurance issues across multiple government agencies through one entry point, addressing fragmentation and reducing complexity.

DP2: Structured and Evidence-Supportive Reporting Principle: The system should support structured issue reporting that enables users to provide clear descriptions and supporting evidence while maintaining a simple and accessible reporting experience.

DP3: Transparency and Continuous Communication Principle: The system should ensure transparent communication with users by providing acknowledgment of issue submission, reference identifiers, and clear visibility into issue status throughout the resolution lifecycle.

DP4: Role-Based and Accountable Workflow Principle: The system should implement role-based workflows that support issue validation, prioritization, responsibility assignment, and traceable resolution within government agencies.

DP5: SLA-Driven Governance Principle: The system should embed service-level agreements (SLAs) into issue handling workflows, enabling monitoring of resolution timelines, escalation of delayed issues, and consistent enforcement of accountability.

DP6: Supervisory Oversight and Analytics Principle: The system should support supervisory oversight by providing cross-agency visibility into issue handling performance, SLA compliance, and operational metrics to enable monitoring and continuous improvement.

DP7: Security and Regulatory Compliance Principle: The system should ensure secure handling of user data and compliance with applicable national regulations, including identity verification, data protection, and infrastructure hosting requirements.

These design principles serve as the foundation for the subsequent system architecture, workflow, and prototype implementation described in the following subsections.
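To make the role-based workflow principle concrete, the following sketch shows one way role-specific permissions could be expressed. The roles follow the actors named in this paper, while the action names and the allocation of actions to roles (for example, that the agency manager assigns reports) are illustrative assumptions.

```python
from enum import Enum


class Role(Enum):
    CITIZEN = "citizen"
    AGENCY_MANAGER = "agency_manager"
    AGENCY_REPRESENTATIVE = "agency_representative"
    SUPERVISOR = "supervisor"  # the supervisory authority


# Hypothetical permission table; action names are illustrative only.
PERMISSIONS = {
    Role.CITIZEN: {"report_issue", "track_issue", "give_feedback"},
    Role.AGENCY_MANAGER: {"assign_report", "view_agency_dashboard"},
    Role.AGENCY_REPRESENTATIVE: {"validate_report", "resolve_issue"},
    Role.SUPERVISOR: {"view_cross_agency_dashboard"},
}


def can(role: Role, action: str) -> bool:
    """Return True if the role is allowed to perform the action."""
    return action in PERMISSIONS.get(role, set())
```

Centralizing permissions in a single table keeps responsibility assignment explicit and makes every workflow step traceable to a role.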

System Architecture and Workflow

The system design within this research project centers on a three-layer architecture: the presentation, application, and data layers. As illustrated in Figure 1, the design ensures a structured approach to managing user interface, functionality, and data flow. It is the framework guiding how the platform operates, aligning with project needs while ensuring user satisfaction and system efficiency.

Figure 1. System architecture diagram.

Stakeholder input identified the elements essential for an effective user-reported issue handling system. The process view captures the dynamic aspects of the system’s operation, showing the sequence of activities involved in reporting an issue. The process diagram is divided into swimlanes, one for each actor or role within the system: users, and the government agency in its two roles of Agency Manager and Agency Representative. Each swimlane contains a series of action states and decision nodes that describe the tasks the actor performs and the decisions the actor makes while interacting with the platform.

The process diagram in Figure 2 illustrates the sequence of activities involved in reporting and resolving an issue, divided into two main roles: Users and Government Agency.

User’s Role:

▪ Login: The user starts the process by logging into the system.
▪ Report an Issue: After logging in, the user reports an issue.
▪ Receive Updates: The user is kept informed about the status of the issue until it is resolved.

Government Agency’s Role:

▪ Assign Report to a Representative: Upon receiving a report, it is assigned to a representative for handling.
▪ Validate Report: The representative evaluates the report:
  ▪ If valid, update the issue’s status, priority, severity, and type, then proceed to resolve it.
  ▪ If not valid, close the report and inform the user.
▪ Resolve Issue: The representative works on the issue, aiming to resolve it promptly.
▪ Close Issue: Depending on whether the issue was resolved on time:
  ▪ If yes, close the report and notify the user.
  ▪ If no, mark the report as “Late” and take necessary follow-up actions.

Figure 2. Process diagram.
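The agency-side flow can be read as a small state machine. The sketch below uses the stage names suggested during the interviews ("New," "Under Study," "In Progress," "Closed"); the mapping of those stages onto the workflow steps, and the choice to keep a late report open with a flag, are assumptions made for illustration.

```python
# Allowed status transitions (assumed mapping of stages to workflow steps).
TRANSITIONS = {
    "New": {"Under Study"},                    # report assigned to a representative
    "Under Study": {"In Progress", "Closed"},  # valid: work on it; not valid: close
    "In Progress": {"Closed"},                 # issue resolved
    "Closed": set(),
}


def advance(status: str, new_status: str) -> str:
    """Move to new_status, rejecting transitions the workflow does not allow."""
    if new_status not in TRANSITIONS[status]:
        raise ValueError(f"cannot move from {status!r} to {new_status!r}")
    return new_status


def handle_report(is_valid: bool, resolved_on_time: bool):
    """Walk one report through the flow; returns (final status, late flag)."""
    status = advance("New", "Under Study")
    if not is_valid:
        return advance(status, "Closed"), False
    status = advance(status, "In Progress")
    if resolved_on_time:
        return advance(status, "Closed"), False
    # Not resolved on time: flagged as late and left open for follow-up.
    return status, True
```

Rejecting undefined transitions in one place is what makes the resolution path traceable: a report cannot, for example, jump from "New" to "Closed" without first being examined.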

System Prototyping

The Empower prototype was developed as a design science artifact to demonstrate how the requirements identified during the interviews can be translated into a functional platform. Its purpose is not to serve as a production-ready system, but to validate feasibility, illustrate core features, and provide a foundation for stakeholder feedback and iterative refinement.

To situate the prototype within the digital government ecosystem, the design is conceptualized as operating across three interconnected layers that reflect distinct governance roles (Table 3). At the citizen layer, the platform supports issue submission, progress tracking, and feedback in order to lower participation barriers and encourage consistent reporting. At the agency layer, government departments are responsible for validating submissions, classifying severity, and resolving issues in accordance with defined service-level agreements. At the supervisory layer, the Digital Government Authority (DGA) provides cross-agency oversight through consolidated monitoring mechanisms, enabling transparency and accountability across the issue resolution lifecycle.

Table 3. Stakeholder Requirements and Prototype Features.

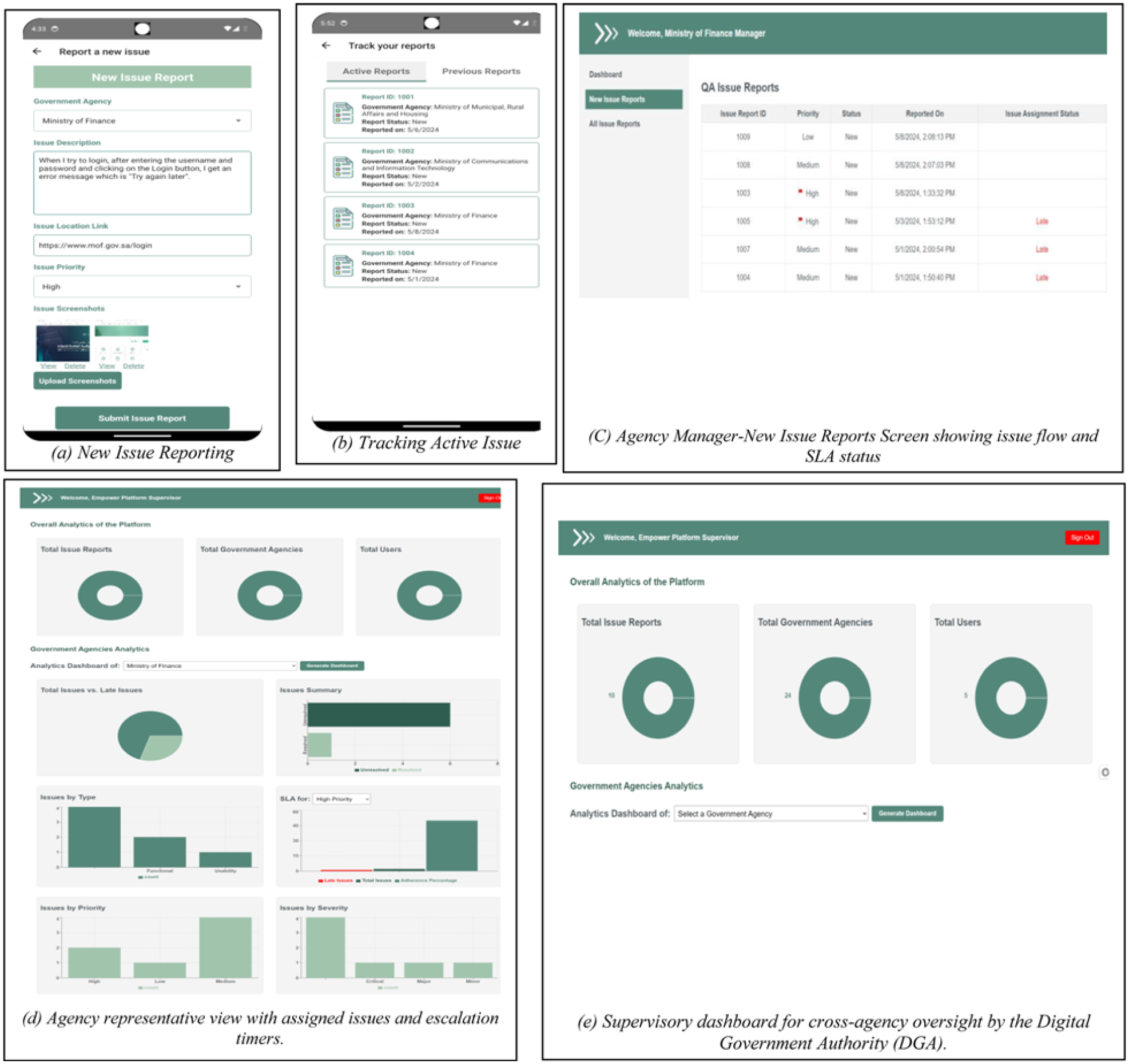

At the citizen layer, the prototype implements a unified reporting interface designed to prioritize simplicity and accessibility. The consolidated form allows users to describe issues, attach supporting evidence, and select predefined categories, thereby supporting clear documentation while minimizing interaction complexity (Figure 3a). This design reflects stakeholder requirements to reduce cognitive load and improve participation in issue reporting.

Figure 3. Snapshots of system screens. (a) New issue reporting. (b) Tracking an active issue. (c) Agency manager screen for new issue reports, showing issue flow and SLA status. (d) Agency representative view with assigned issues and escalation timers. (e) Supervisory dashboard for cross-agency oversight by the Digital Government Authority (DGA).

Transparency is operationalized through integrated status tracking and notification mechanisms. Citizens are able to monitor the progress of both active and previously submitted reports and receive updates via multiple communication channels, including SMS, email, and in-application notifications. As illustrated in Figure 3b, this functionality directly addresses stakeholder concerns regarding limited feedback and visibility in existing reporting processes.

At the agency layer, the prototype provides structured dashboards and workflows that support operational issue management. Agency managers are able to monitor issue volumes, track SLA compliance, and observe performance trends, while designated representatives handle assigned reports using role-specific views supported by escalation rules and resolution timelines (Figure 3c and d). These mechanisms embed accountability into routine agency operations by making delays and responsibilities explicit.

At the supervisory layer, the DGA is provided with oversight dashboards that aggregate data across all participating agencies. These views enable monitoring of compliance, comparative performance assessment, and identification of systemic bottlenecks at a national scale. As shown in Figure 3e, the supervisory dashboard supports regulatory alignment and inter-agency coordination by consolidating issue resolution data into a single governance interface.

The prototype therefore demonstrates how Empower translates stakeholder needs into a unified and transparent platform. By integrating citizen-facing reporting, agency-level workflows, and supervisory oversight, it provides a practical proof-of-concept for improving quality assurance in digital government services. Figure 3 shows the key functionalities, while the full visual representations of the React Native mobile application for citizens and the React portal for agency managers, agency representatives, and the supervisory agency are available at https://github.com/salyahya99/Empower.

It should be noted that the prototype does not cover every requirement identified during the requirements analysis. The implementation effort was intentionally focused on the core functionalities required to demonstrate the platform’s feasibility: citizen issue reporting and tracking, agency validation and resolution workflows, SLA-driven accountability, and supervisory dashboards. More advanced requirements, namely predictive analytics, gamification, and comprehensive accessibility compliance, were not included at this stage. Their omission reflects a deliberate design science strategy, where the first iteration provides a proof-of-concept and subsequent cycles progressively refine and expand the artifact.

Demonstration and Evaluation

The Empower prototype was evaluated through a structured demonstration with key stakeholders, following the Design Science Research (DSR) principle of naturalistic evaluation (Peffers et al., 2007). The objective was to validate the platform’s alignment with stakeholder needs and to assess its utility and usability as a formative step in the design science cycle.

The evaluation involved the same group of participants described in the Stakeholders section, including representatives from quality assurance (three participants), business analysis (three participants), UX/UI design (two participants), and the Digital Government Authority (one participant). In addition, two further e-government experts from the Digital Government Authority were included in the evaluation. This resulted in a total of 12 participants.

All participants took part in the scenario-based evaluation and provided feedback through interviews, usability assessment, and structured surveys. This purposive sample was selected to support a formative Design Science Research approach focused on assessing the feasibility, usability, and governance workflows of a proof-of-concept artifact; it is not intended to be statistically representative of the general population. In addition, all participants were regular users of digital government services in their personal lives, allowing them to provide feedback from a citizen-user perspective alongside their professional expertise. The results are presented as exploratory and indicative. Broader evaluation involving diverse citizen groups is identified as an area for future research.

Two sessions were conducted: one with the Digital Government Authority (DGA), representing the supervisory perspective, and another with QA professionals, business analysts, and UX/UI experts, representing operational and user-centered perspectives. This dual engagement ensured that both strategic oversight and practical workflows were examined.

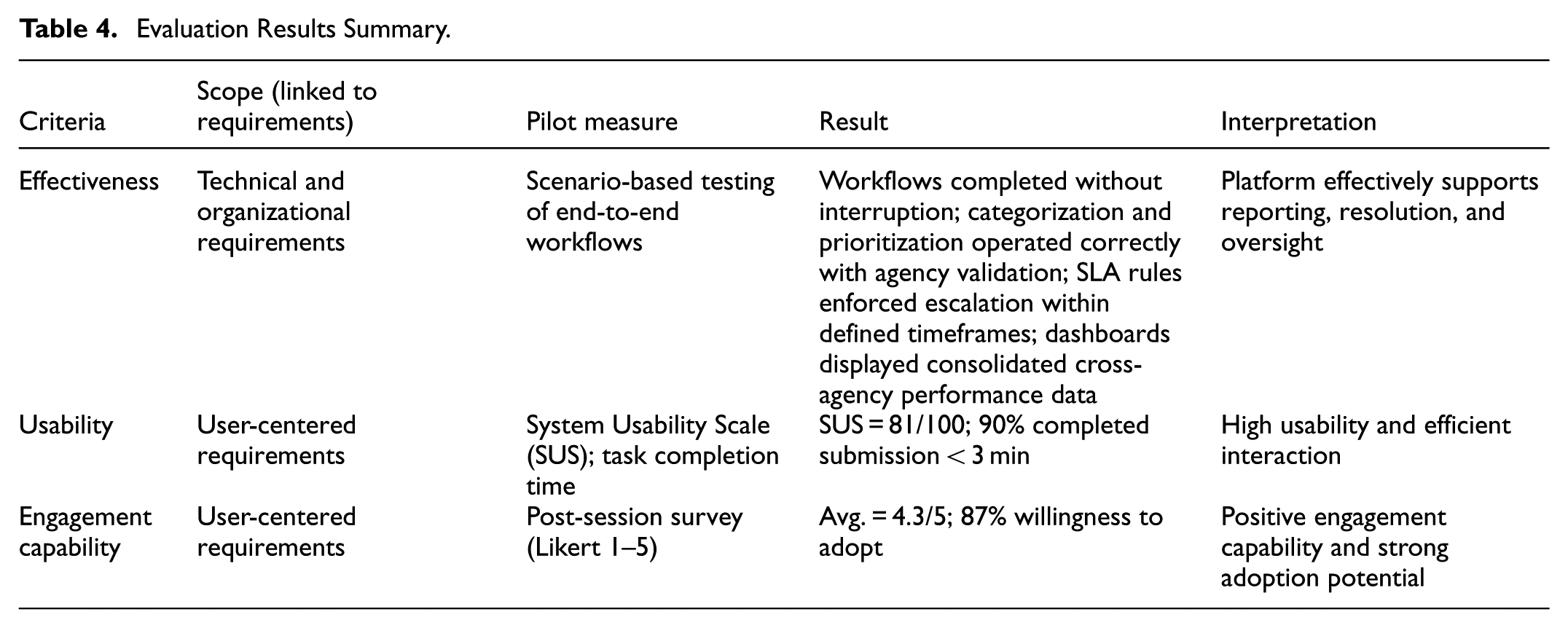

The evaluation was guided by three criteria:

Effectiveness: The extent to which the platform supports core reporting workflows, documentation, severity and prioritization, SLA-driven accountability, and supervisory oversight.

Usability: The quality of user interaction, including navigation, accessibility, efficiency, and satisfaction.

Engagement Capability: Stakeholder acceptance, willingness to adopt, and mechanisms that encourage participation and feedback.

Demonstration Results

The prototype was assessed using representative workflows, pilot measures, and post-session surveys (Table 4). The evaluation assesses whether the artifact satisfies predefined design criteria derived from stakeholder requirements, focusing on usability acceptability, task feasibility, and workflow validity rather than on statistical inference.

Table 4. Evaluation Results Summary.

Effectiveness: Out of 24 elicited requirements, 21 were implemented in the prototype, corresponding to 87.5% coverage. More importantly, stakeholders stated that the implemented features addressed the most critical pain points identified in the problem analysis: fragmented reporting channels, limited oversight, and weak accountability. Stakeholder feedback underscored the platform’s effectiveness in meeting organizational and user objectives. Stakeholders from QA and BA backgrounds confirmed that the platform improves clarity, reduces duplication, and ensures accountability through auditability and dashboards. DGA representatives emphasized that supervisory dashboards strengthen their oversight capacity, aligning the system with strategic goals of transparency and efficiency. Collectively, these findings indicate that the platform addresses the multidimensional gaps of fragmentation, limited user-centricity, and weak accountability, and can thereby support improvements in the quality of digital government services.

Usability: Participants performed reporting and tracking tasks through the prototype. Task efficiency was high: 90% of participants completed issue submission in under 3 minutes. A System Usability Scale (SUS) survey (Bangor et al., 2008) produced an average score of 81/100, placing the platform in the “Good–Excellent” usability range. Stakeholders highlighted intuitive navigation, consistency of design, and clarity of workflows, while recommending further enhancements for accessibility compliance and visual cues.
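For readers unfamiliar with how a SUS score on the 0 to 100 scale is obtained, the sketch below applies the standard SUS scoring rule to ten answers on a 1 to 5 scale. It assumes the standard questionnaire and scoring were used; the function names are hypothetical, and no participant data from this study is reproduced.

```python
def sus_score(responses):
    """Score one participant's ten SUS answers (each 1..5) on the 0..100 scale."""
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("SUS needs ten answers between 1 and 5")
    total = 0
    for idx, r in enumerate(responses):
        # Items 1, 3, 5, 7, 9 are positively worded: contribution is r - 1.
        # Items 2, 4, 6, 8, 10 are negatively worded: contribution is 5 - r.
        total += (r - 1) if idx % 2 == 0 else (5 - r)
    return total * 2.5


def mean_sus(all_responses):
    """Average SUS score across participants."""
    scores = [sus_score(r) for r in all_responses]
    return sum(scores) / len(scores)
```

A participant answering 3 to every item scores 50, which is why an average of 81 sits well above the scale midpoint.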

Engagement Capability: This criterion was assessed in terms of stakeholders’ perceptions of the platform’s ability to encourage active and sustained citizen participation in issue reporting. Post-session surveys revealed high levels of acceptance: on a five-point Likert scale, satisfaction and willingness to adopt averaged 4.3/5, with 87% of participants indicating that they would consider the platform useful in practice. Stakeholders also highlighted design features such as automated notifications, acknowledgment messages, and potential gamification elements as important enablers of continuous participation. These observations suggest that the platform has the potential to foster user engagement beyond initial adoption by reinforcing transparency and demonstrating the impact of user contributions.

Stakeholder Feedback and Observations

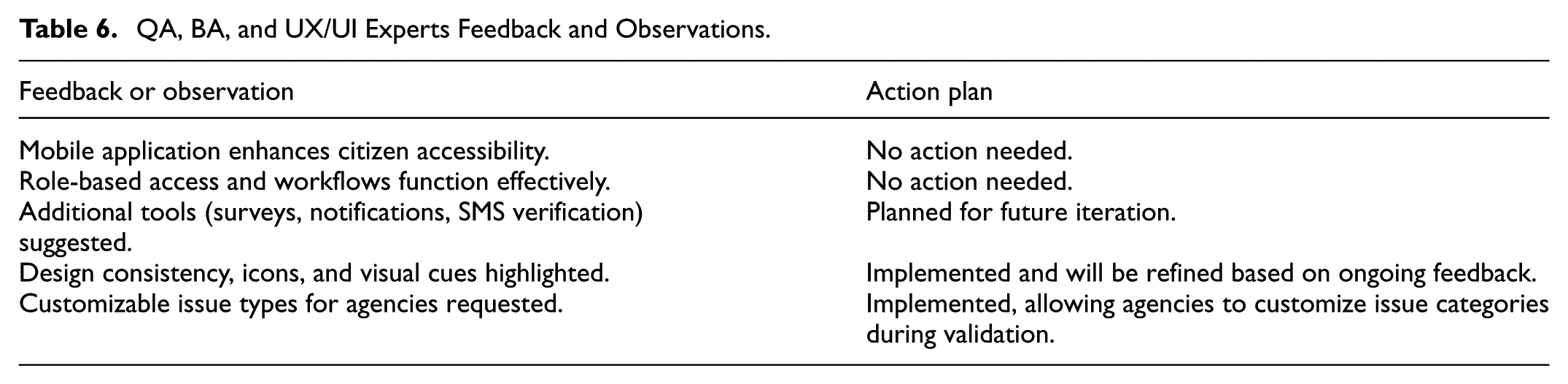

The quantitative results were reinforced by qualitative insights from both groups of stakeholders.

DGA stakeholders praised the analytics dashboards, SLA enforcement mechanisms, and the platform’s role in strengthening oversight and accountability. They requested additional capabilities, such as predictive analytics, agency-specific monthly reports, and accessibility audits, to further enhance supervisory functions.

QA, BA, and UX/UI experts confirmed the effectiveness of workflows and praised the mobile application, role-based access, and customizable issue types. They suggested enhancements such as expanded feedback tools, automated notifications, and further attention to design consistency to support a cohesive user experience.

Tables 5 and 6 summarize this feedback and corresponding action items.

Table 5. DGA Feedback and Observations.

Table 6. QA, BA, and UX/UI Experts Feedback and Observations.

Discussion

Interpretation of Evaluation Findings

The evaluation results provide evidence that the proposed platform addresses several limitations identified in prior digital government issue reporting approaches. Usability outcomes and task completion results indicate that unifying fragmented reporting channels into a single interface reduces user effort and supports more intuitive issue submission, directly responding to user-centricity concerns highlighted in the literature. Stakeholder feedback further suggests that the integrated workflows enable clearer responsibility assignment and reduce ambiguity in issue handling across agencies, a challenge frequently reported in existing platforms. Importantly, the inclusion of SLA targets, escalation mechanisms, and supervisory dashboards was consistently perceived as strengthening follow-up and accountability, addressing gaps where prior systems rely on external or informal enforcement processes. These findings do not imply population-level effectiveness, but they demonstrate the feasibility and coherence of embedding governance mechanisms directly within a digital reporting platform.

Taken together, the findings indicate that the design coherently integrates user-centric interaction with enforceable accountability structures. These insights form the basis for articulating the research contributions of the study in terms of design knowledge and governance integration.

Research Contributions

This study contributes to digital government research by advancing design-oriented and governance-oriented knowledge for issue reporting platforms, addressing limitations consistently identified in prior work. As demonstrated in the related work section, existing digital government reporting channels and platforms, such as built-in feedback tools, civic apps, and centralized portals, primarily emphasize accessibility, participation, and transparency, while remaining fragmented and weakly integrated into institutional accountability and quality assurance structures (Mahlangu & Ruhode, 2021; A. J. Meijer & Rodríguez-Bolívar, 2013; Nam, 2012; Schrock, 2018; Susha & Grönlund, 2014). The contributions of this study therefore lie not in introducing isolated technical features, but in providing generalizable design knowledge and an integrated governance configuration that responds directly to these documented gaps.

This study contributes reusable design knowledge in the form of design principles derived from stakeholder-informed requirements. Building on User-Centered Design (UCD) theory (Garrett, 2010; Rubin & Chisnell, 2011) and prior e-government usability research (Chang et al., 2020; Husain, 2023), the study abstracts a set of design principles from the requirements summarized in Table 2. These principles address challenges repeatedly highlighted in the literature, including fragmented reporting channels, high user effort, limited transparency, and weak feedback loops. Rather than being specific to a single platform or national implementation, the design principles provide transferable guidance for designing cross-agency issue reporting systems in digital government contexts where citizen engagement is voluntary and usability failures undermine participation and trust (Chang et al., 2020; Nielsen & Loranger, 2006).

Furthermore, the study contributes a governance-oriented reconceptualization of citizen issue reporting as an accountability mechanism. Drawing on accountability theory in public administration, which conceptualizes accountability as an enforceable relationship involving responsibility, monitoring, and oversight rather than transparency alone (Bovens, 2007, 2010), the proposed design embeds governance mechanisms directly into the digital workflow. In contrast to platforms reviewed in Section 2.3, where follow-up, responsiveness, and enforcement are often limited or handled outside the system (Benlahcene et al., 2024; Nam, 2012; Pak et al., 2017; Schrock, 2018; Ye, 2023), the Empower platform integrates service-level agreements (SLAs), role-based validation, escalation procedures, and supervisory dashboards as core system functions. This contribution extends prior digital government research that emphasizes participation and service access but does not systematically address enforceable accountability or quality assurance (Baheer et al., 2020; A. J. Meijer & Rodríguez-Bolívar, 2013; Susha & Grönlund, 2014).

Finally, the study contributes an integrated, end-to-end issue lifecycle model for cross-agency quality assurance. The literature consistently identifies fragmentation, limited interoperability, and weak coordination across government agencies as systemic barriers to effective issue resolution (Baheer et al., 2020; Mahlangu & Ruhode, 2021; Shalan, 2024). While existing platforms may support issue submission and, in some cases, status visibility, they rarely integrate submission, validation, prioritization, resolution, escalation, and oversight within a single, coherent workflow. The Empower platform instantiates such an end-to-end lifecycle, explicitly aligning citizen-facing reporting with agency-level operations and supervisory oversight. The novelty of this contribution lies in the systematic integration of technical workflows, organizational roles, and governance controls, rather than in any individual component such as dashboards or reporting interfaces.

From a Design Science Research perspective, these contributions represent analytically generalizable design knowledge rather than statistically generalizable findings (Matheus et al., 2020; Peffers et al., 2007). Although the artifact is empirically grounded in the Saudi digital government context, where regulatory frameworks and institutional roles provide a concrete setting, the underlying design principles, governance configuration, and lifecycle model address a problem class that the literature demonstrates to be global in nature: fragmented issue reporting combined with weak institutional accountability (A. J. Meijer & Rodríguez-Bolívar, 2013; Nam, 2012; Schrock, 2018; Susha & Grönlund, 2014). As such, the contributions of this study are transferable to other digital government environments with similar organizational and governance characteristics, subject to clearly defined boundary conditions.

Conclusions and Future Work

This research addressed significant challenges within the digital government framework, notably the fragmented and non-user-centric nature of existing issue reporting systems. Through the development of “Empower,” a unified platform enabling citizens to report QA issues on government agencies’ websites, the study consolidated various reporting channels into a single streamlined interface, enhancing accessibility and user interaction. The platform integrates user-centric design principles, simplifying the reporting process and enabling efficient tracking and management of issues.

“Empower” contributes to technological integration by implementing functionalities crucial for interaction between citizens and government agencies, such as issue reporting and real-time tracking of issue reports. Additionally, it enhances user experience by offering an intuitive interface that simplifies the reporting process and encourages active citizen participation. This improvement is essential for fostering a transparent and responsive environment between citizens and government agencies.

“Empower” has practical implications for both government agencies and citizens. For government agencies, the platform facilitates more efficient issue management and adherence to Service Level Agreements (SLAs), enhancing the government’s ability to respond swiftly and effectively to citizen concerns. For citizens, it provides an accessible and straightforward means to report issues, empowering them and fostering greater engagement, which contributes to more informed users.

This study presents a proof-of-concept prototype intended to demonstrate the feasibility and coherence of a governance-oriented issue reporting platform rather than a fully deployed production system. The evaluation was conducted as a formative assessment involving domain stakeholders, which provided early insights into usability, workflow integration, and governance feasibility, while broader citizen-centered evaluation remains a logical next step. Although the proposed architecture is designed to support cross-agency integration, scalability and long-term operational performance were not empirically examined at this stage. Therefore, the findings support analytical generalization as design knowledge, rather than statistical generalization across populations.

Looking forward, several enhancements are planned based on the feedback gathered during the demonstration and evaluation phase. Stakeholders expressed a keen interest in advanced functionalities such as predictive analytics to support proactive decision-making and the generation of monthly reports tailored to specific government agencies. In future iterations, such capabilities could be implemented by leveraging aggregated historical data and evaluated through their ability to support timely insights and management decision-making. These enhancements are aimed at leveraging data for continuous improvement and more effective governance. There was also a strong emphasis on ensuring the platform’s compliance with accessibility standards to cater to diverse user needs. Recommendations, emphasized particularly by the Digital Government Authority (DGA), included conducting regular accessibility audits, adhering to WCAG standards, and providing alternative content formats; these could be assessed through compliance checks and usability feedback from diverse user groups. Other suggestions from Quality Assurance professionals, Business Analysts, and UX/UI experts included implementing tools such as surveys, feedback forms, SMS-based verification, and automated notifications to further improve user interaction, security, and communication. These features are expected to enhance engagement and platform robustness and could be evaluated in future deployments through usage metrics and stakeholder feedback.

Future work will also extend the evaluation of the Empower platform through a large-scale, citizen-centered study to complement the formative, stakeholder-focused assessment presented in this paper. This will include recruiting a diverse sample of citizen users across age groups, digital literacy levels, and service usage patterns to evaluate usability, accessibility, and adoption intentions in real-world conditions. Planned methods include comparative usability testing against existing government reporting channels, larger-sample survey instruments to support stronger quantitative analysis, and longitudinal usage observation to assess sustained engagement. This next phase will enable broader empirical generalization while building directly on the validated governance workflows, design principles, and architectural foundations established in the current study.

Footnotes

Acknowledgements

The authors would like to extend their sincere appreciation to the Deanship of Scientific Research at King Saud University for funding this research through the Ongoing Research Funding Program (ORF-2025-1364).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Ongoing Research Funding Program (ORF-2025-1364), King Saud University, Riyadh, Saudi Arabia.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All data generated or analyzed during this study are included in this published article.